Abstract

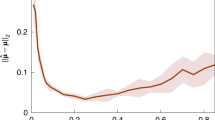

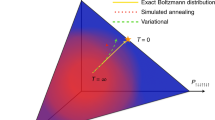

Reinforcement learning (RL) has become a proven method for optimizing procedures for which success has been defined but the specific actions needed to achieve it have not. Using a method we call ‘controlled online optimization learning’ (COOL), we apply this ‘black box’ approach to simulated annealing (SA), demonstrating that an RL agent based on proximal policy optimization can, through experience alone, arrive at a temperature schedule that outperforms standard heuristic temperature schedules for two classes of Hamiltonians. When the system is initialized at a low temperature, the RL agent learns to heat the system to ‘melt’ it and then slowly cool it in an effort to anneal to the ground state; when the system is initialized at a high temperature, the agent immediately cools the system. We investigate how well our RL-driven SA agent generalizes to all Hamiltonians of a specific class. When trained on random Hamiltonians of nearest-neighbour spin glasses, the RL agent is able to control the SA process for other Hamiltonians, reaching the ground state with a higher probability than a simple linear annealing schedule. Furthermore, the RL approach scales far more favourably with system size, achieving a performance improvement of almost two orders of magnitude on L = 14² systems. We also demonstrate the robustness of the RL approach when the system operates in a ‘destructive observation’ mode, an allusion to a quantum system in which measurements destroy the state of the system. The success of the RL agent could have far-reaching impacts, from classical optimization to quantum annealing and the simulation of physical systems.
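To make the role of the temperature schedule concrete, the following is a minimal sketch of Metropolis simulated annealing on a nearest-neighbour spin glass with a pluggable schedule. It is an illustration under stated assumptions, not the authors' implementation: the ±1 couplings, periodic boundaries, temperature values and the function names `random_couplings`, `anneal`, `linear_schedule` and `melt_then_cool` are all hypothetical choices made here. In the approach described above, the fixed `schedule` callback would in effect be replaced by the decisions of a learned RL policy; that part is not shown.

```python
import numpy as np

def random_couplings(side, rng):
    """Random +/-1 couplings on a periodic side x side lattice (assumed model)."""
    j_right = rng.choice([-1.0, 1.0], size=(side, side))  # bond (i, j)-(i, j+1)
    j_down = rng.choice([-1.0, 1.0], size=(side, side))   # bond (i, j)-(i+1, j)
    return j_right, j_down

def energy(spins, j_right, j_down):
    """H = -sum over nearest-neighbour bonds of J_ab * s_a * s_b."""
    return float(-np.sum(j_right * spins * np.roll(spins, -1, axis=1))
                 - np.sum(j_down * spins * np.roll(spins, -1, axis=0)))

def local_field(spins, j_right, j_down, i, j):
    """Sum of J_ab * s_b over the four neighbours b of site (i, j)."""
    side = spins.shape[0]
    return (j_right[i, j] * spins[i, (j + 1) % side]
            + j_right[i, (j - 1) % side] * spins[i, (j - 1) % side]
            + j_down[i, j] * spins[(i + 1) % side, j]
            + j_down[(i - 1) % side, j] * spins[(i - 1) % side, j])

def linear_schedule(step, sweeps, t_hot=3.0, t_cold=0.1):
    """Heuristic baseline: ramp the temperature linearly from t_hot to t_cold."""
    return t_hot + (t_cold - t_hot) * step / max(sweeps - 1, 1)

def melt_then_cool(step, sweeps, t_hot=3.0, t_cold=0.1, heat_frac=0.2):
    """Hand-written imitation of 'heat to melt, then cool' (not a learned policy)."""
    heat_steps = max(int(heat_frac * sweeps), 1)
    if step < heat_steps:
        return t_cold + (t_hot - t_cold) * (step + 1) / heat_steps
    remaining = max(sweeps - heat_steps - 1, 1)
    return t_hot + (t_cold - t_hot) * (step - heat_steps) / remaining

def anneal(spins, j_right, j_down, schedule, sweeps, rng):
    """Single-spin-flip Metropolis annealing; returns (spins, final E, best E)."""
    side = spins.shape[0]
    spins = spins.copy()
    e = energy(spins, j_right, j_down)
    best = e
    for step in range(sweeps):
        t = schedule(step, sweeps)  # temperature used for this sweep
        for _ in range(side * side):
            i, j = rng.integers(side, size=2)
            # Flipping s_ij changes the energy by 2 * s_ij * (local field).
            d_e = 2.0 * spins[i, j] * local_field(spins, j_right, j_down, i, j)
            if d_e <= 0 or rng.random() < np.exp(-d_e / t):
                spins[i, j] *= -1
                e += d_e
        best = min(best, e)
    return spins, e, best

if __name__ == "__main__":
    rng = np.random.default_rng(0)
    j_right, j_down = random_couplings(8, rng)
    start = rng.choice([-1.0, 1.0], size=(8, 8))
    for schedule in (linear_schedule, melt_then_cool):
        _, final_e, best_e = anneal(start, j_right, j_down, schedule, 200, rng)
        print(schedule.__name__, final_e, best_e)
```

Passing the schedule as a callback keeps the annealer itself unchanged while the schedule varies, which is the quantity the abstract says the agent learns to control.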

Data availability

The test datasets necessary to reproduce these findings are available at https://doi.org/10.5281/zenodo.3897413.

Code availability

The code necessary to reproduce these findings is available at https://doi.org/10.5281/zenodo.3897413.

Acknowledgements

I.T. acknowledges support from NSERC. K.M. acknowledges support from Mitacs. We thank B. Krayenhoff for valuable discussions in the early stages of the project and thank M. Bucyk for reviewing and editing the manuscript.

Author information

Contributions

All authors contributed to the ideation and design of the research. K.M. developed and ran the computational experiments and wrote the initial draft of the manuscript. P.R. and I.T. jointly supervised this work and revised the manuscript.

Ethics declarations

Competing interests

The authors declare no competing interests.

About this article

Cite this article

Mills, K., Ronagh, P. & Tamblyn, I. Finding the ground state of spin Hamiltonians with reinforcement learning. Nat Mach Intell 2, 509–517 (2020). https://doi.org/10.1038/s42256-020-0226-x

DOI: https://doi.org/10.1038/s42256-020-0226-x
