Abstract

Despite the remarkable successes of transfer learning in materials science, the practicality of existing transfer learning methods is still limited in real-world applications because they essentially assume the same material descriptors on source and target materials datasets. In other words, existing transfer learning methods cannot utilize the knowledge extracted from calculated crystal structures when analyzing experimental observations of real-world chemical experiments. We propose a transfer learning criterion, called cross-modality material embedding loss (CroMEL), to build a source feature extractor that can transfer knowledge extracted from calculated crystal structures to prediction models in target applications where only simple chemical compositions are accessible. The prediction models based on transfer learning with CroMEL showed state-of-the-art prediction accuracy on 14 experimental materials datasets in various chemical applications. In particular, the prediction models with CroMEL achieved R2-scores greater than 0.95 in predicting the experimentally measured formation enthalpies and band gaps of the experimentally synthesized materials.

Introduction

Machine learning has been widely used as an efficient method to predict the physical and chemical properties of crystalline materials in various applications, such as inorganic catalysts1,2, energy materials3,4, and optoelectronic devices5. However, since chemical experiments to collect training data for machine learning are time-consuming and labor-intensive, machine learning typically suffers from the lack of experimentally collected training data in materials science6,7. To address the problem caused by the lack of training data, various statistical and data-driven methods have been studied in materials science, such as descriptor engineering8, data augmentation9, and transfer learning10. In particular, transfer learning has shown remarkable accuracy improvements in predicting experimentally measured physical and chemical properties of the crystalline materials on small training datasets by transferring knowledge extracted from large and unbiased source materials datasets11,12,13,14.

Transfer learning aims to transfer knowledge extracted from large and unbiased source datasets to prediction models in target domains where large and unbiased training datasets are not available15. Transfer learning for building regression and classification models is formally defined by:

where \({{{\mathcal{D}}}}_{t}=\{({{{\bf{x}}}}_{t,1},{y}_{t,1}),({{{\bf{x}}}}_{t,2},{y}_{t,2}),\ldots ,({{{\bf{x}}}}_{t,N},{y}_{t,N})\}\) is a training dataset of the target domain, L is a metric to measure prediction errors, f is a trainable prediction model, and h is a trainable feature extractor optimized on a source dataset \({{{\mathcal{D}}}}_{s}=\{({{{\bf{x}}}}_{s,1},{y}_{s,1}),({{{\bf{x}}}}_{s,2},{y}_{s,2}),\ldots ,({{{\bf{x}}}}_{s,M},{y}_{s,M})\}\). Note that L can be implemented by the absolute errors or the squared errors16. In transfer learning, h trained on \({{{\mathcal{D}}}}_{s}\) is referred to as a source feature extractor, and a composite model f ∘ h re-trained on \({{{\mathcal{D}}}}_{t}\) is referred to as a target prediction model. The purpose of transfer learning is to find the model parameters of f ∘ h that minimize L on \({{{\mathcal{D}}}}_{t}\).
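The objective described above can be written compactly as follows. This is a reconstruction from the surrounding definitions rather than a verbatim copy of Eq. (1), so the starred optima and the explicit sum over the N target samples are assumptions about notation.

```latex
\[
f^{*}, h^{*} \;=\; \underset{f,\,h}{\arg\min}\; \sum_{n=1}^{N} L\big(y_{t,n},\, f(h({\bf{x}}_{t,n}))\big)
\]
```

Here h is initialized from the feature extractor trained on \({{{\mathcal{D}}}}_{s}\), and the sum runs over the target dataset \({{{\mathcal{D}}}}_{t}\) only.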

The basic assumption of existing transfer learning methods is that the modality of the input data must be the same on \({{{\mathcal{D}}}}_{s}\) and \({{{\mathcal{D}}}}_{t}\)11,14,15,17. For example, if the materials are described by the chemical compositions on \({{{\mathcal{D}}}}_{t}\), the materials on \({{{\mathcal{D}}}}_{s}\) should also be described by the chemical compositions. In other words, if xt,n in \({{{\mathcal{D}}}}_{t}\) is a chemical composition and xs,m in \({{{\mathcal{D}}}}_{s}\) is a crystal structure, we cannot transfer knowledge of \({{{\mathcal{D}}}}_{s}\) to composition-based prediction models trained on \({{{\mathcal{D}}}}_{t}\). As described in the example, the same modality assumption significantly limits the practicality of existing transfer learning methods in real-world chemical applications, since collecting informative material descriptors of experimentally synthesized materials beyond their chemical compositions is expensive and sometimes infeasible in real-world chemical experiments18,19. Therefore, despite the availability of a variety of public calculation databases that provide informative material descriptors and their associated physical properties, existing transfer learning methods are not able to leverage the benefits of the public calculation databases when performing transfer learning to build the prediction models on experimentally collected materials datasets.

We aim to transfer knowledge extracted from calculated crystal structures to the composition-based prediction models trained on experimentally collected materials datasets. In this paper, we refer to the transfer learning from calculated crystal structures to experimentally observed materials as cross-modality transfer learning. The main challenge of the cross-modality transfer learning is that the source feature extractor h trained on \({{{\mathcal{D}}}}_{s}\) cannot handle the input chemical composition xt,n in \({{{\mathcal{D}}}}_{t}\). To overcome this limitation, we propose cross-modality material embedding loss (CroMEL), which is an embedding criterion for training feature extractors (embedding networks) for the cross-modality transfer learning on materials datasets. CroMEL provides a training objective to train a composition encoder ψ so that it can generate latent material embeddings consistent with those of a structure encoder π. Formally, CroMEL enforces ψ to satisfy the following equation:

where \(P({{\mathcal{C}}};\psi )\) and P(S; π) are the probabilities of the latent embeddings of the chemical compositions \({{\mathcal{C}}}\) and crystal structures S in the embedding space generated by the composition and structure encoders ψ and π, respectively. As shown in Eq. (2), CroMEL does not make any assumptions on ψ and its training process, i.e., CroMEL is a universally applicable method regardless of the regression problems and model architectures of ψ. Furthermore, CroMEL is a nonparametric optimization criterion that can be calculated without the parametric forms of the probability distributions of \(P({{\mathcal{C}}};\psi )\) and P(S; π). Since we usually do not know the distributions of the chemical compositions and crystal structures in the material spaces, these characteristics of CroMEL make it practical in real-world chemical applications.

We conducted extensive experiments to evaluate the effectiveness of CroMEL in transfer learning on experimentally collected materials datasets. As the source datasets for the cross-modality transfer learning, we collected 13 calculation datasets containing calculated crystal structures and their physical properties. As the target datasets for evaluating transfer learning performances, we gathered 14 experimentally collected datasets containing experimentally measured chemical compositions and target properties of the materials from various chemical applications, such as thermoelectric materials, inorganic phosphors, and battery materials. We compared the prediction capabilities of the prediction models generated by the conventional machine learning and cross-modality transfer learning approaches on the 14 experimental datasets. In the experimental evaluations, the target prediction models trained by the cross-modality transfer learning based on CroMEL showed state-of-the-art prediction accuracy for all experimental datasets. In particular, the target prediction models of the cross-modality transfer learning achieved the R2-scores greater than 0.95 in predicting experimental formation enthalpies and band gaps of thousands of experimentally synthesized materials. Furthermore, CroMEL was robust to the calculation accuracy of the source calculation datasets. These results demonstrate the practical potential of CroMEL in real-world chemical applications where training data and informative features are insufficient to build a prediction model.

Results

Problem definition of cross-modality transfer learning

In this section, we formally define the cross-modality transfer learning on polymorphic crystal structures. To this end, we introduce a latent variable zs,m generated from a polymorphic crystal structure xs,m in the source dataset \({{{\mathcal{D}}}}_{s}\). Similarly, we define a latent variable zc,m that represents a chemical composition \({{{\mathcal{C}}}}_{m}\) corresponding to the polymorphic crystal structure xs,m. Note that we can easily obtain the chemical compositions of the crystal structures by reading their structure files. We refer to the latent variables zs,m and zc,m as structure and composition embeddings, respectively.

The latent variables zs,m and zc,m follow the probability distributions Pπ and Pψ parameterized by trainable structure encoder π and probabilistic composition encoder ψ, respectively. For the structure and composition encoders, the training problem of the source feature extractors on \({{{\mathcal{D}}}}_{s}\) for the cross-modality transfer learning is formally given by:

where g is a trainable prediction network on \({{{\mathcal{D}}}}_{s}\), and Ddiv is a statistical distance that measures the divergence between two distributions. If π* and ψ* make Ddiv sufficiently small, the latent variable zc,m will contain the latent information about the distribution of the polymorphic crystal structures derived from \({{{\mathcal{C}}}}_{m}\), even though zc,m is generated only from the chemical composition without the crystal structures.

In the cross-modality transfer learning, we employ the optimized probabilistic composition encoder ψ instead of the optimized structure encoder π, and the cross-modality transfer learning is formally defined as:

As shown in Eq. (4), the source feature extractor h in the conventional transfer learning is replaced with the probabilistic composition encoder ψ. As a result, by predicting the target experimental properties as yt = f(ψ(xt)), we can transfer knowledge extracted from the calculated crystal structures to the prediction models on \({{{\mathcal{D}}}}_{t}\) where only the chemical compositions of the materials are available. CroMEL is an optimization criterion for implementing Ddiv in Eq. (3). In the following section, we will mathematically define CroMEL based on Wasserstein distance20 and derive its computable form.

Cross-modality Material Embedding Loss (CroMEL)

To define Ddiv in Eq. (3) as a computable form, we revisit the probability distribution of polymorphic crystal structures derived from \({{{\mathcal{C}}}}_{m}\). For a given \({{{\mathcal{C}}}}_{m}\), a possible crystal structure Sm from \({{{\mathcal{C}}}}_{m}\) follows an unknown probability distribution Ω defined by the structure parameters as:

where \(\rho \in {{\mathbb{R}}}^{6}\) denotes the lattice parameters of the crystal system, U(0) denotes the initial coordinates of the atoms, and κ denotes the physics-informed criteria of the crystal structure optimization processes. Eq. (5) implies that the crystal structure Sm follows the probability distribution defined by a set {\({{{\mathcal{C}}}}_{m}\), κ}, and the probability of Sm is calculated through the probabilistic inference on Ω for the given inputs ρ and U(0). More simply, we can rewrite Eq. (5) based on the random variable Sm associated with \({{{\mathcal{C}}}}_{m}\) as:

Therefore, we can regard the polymorphic structures derived from \({{{\mathcal{C}}}}_{m}\) as the samples drawn from \(\Omega ({{{\mathcal{C}}}}_{m},\kappa )\). If the crystal structures in a database are generated under the same optimization criteria, we can omit κ because it is a constant through the crystal structure optimization processes in the same database. In this paper, we also assume that all crystal structures in the same database are generated under the same optimization criteria κ, i.e., we omit κ and denote the distribution of the random variable Sm by:

Based on Eq. (7), we can define the structure embedding zs,m as a latent variable that follows the probability distribution determined by \({{{\mathcal{C}}}}_{m}\) and π as:

If we have a universal function approximator such as deep neural networks21, we can approximate the unknown \(P({{{\mathcal{C}}}}_{m},\pi )\) as a normal distribution parameterized by θ, and the parameterized normal distribution is formally given by:

where \({\mu }_{\theta ,m}={\psi }_{\mu }({{{\mathcal{C}}}}_{m};\theta )\) and \({\sigma }_{\theta ,m}={\psi }_{\sigma }({{{\mathcal{C}}}}_{m};\theta )\) are parameterized mean and standard deviation of the normal distribution, respectively. Note that we assume an isotropic normal distribution in Eq. (9) due to optimization stability and sampling efficiency of the parameterized normal distribution22. However, approximation capabilities of parameterized isotropic normal distributions to simulate complex probabilistic processes have been widely demonstrated in various probabilistic models23,24,25,26. As a result, the composition encoder ψ to approximate \({{\mathcal{N}}}({\mu }_{\theta ,m},{\sigma }_{\theta ,m}^{2}{{\bf{I}}})\) is defined as a neural network that outputs μθ,m and \({\sigma }_{\theta ,m}^{2}\) for a given \({{{\mathcal{C}}}}_{m}\), and we can sample \({{{\bf{z}}}}_{{{\mathcal{C}}},m}\) based on a reparameterization trick27 as:

where \(\epsilon \sim {{\mathcal{N}}}({{\bf{0}}},{{\bf{I}}})\) is a sample uncertainty. Hence, ψ learns a probability distribution that can sample polymorphic crystal structures, rather than a function that deterministically connects an input chemical composition into a structure.
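The sampling step described above can be sketched in a few lines of NumPy. This is only an illustration of the reparameterization trick, not the authors' implementation: the function name `sample_composition_embedding` and the example values of `mu` and `sigma` are hypothetical stand-ins for the outputs of the composition encoder ψ.

```python
import numpy as np

def sample_composition_embedding(mu, sigma, rng):
    """Draw z = mu + sigma * eps with eps ~ N(0, I) (reparameterization trick)."""
    eps = rng.standard_normal(mu.shape)  # noise that does not depend on encoder parameters
    return mu + sigma * eps              # element-wise scale, i.e. a diagonal covariance

rng = np.random.default_rng(0)
mu = np.array([0.5, -1.0, 2.0])     # hypothetical encoder output: mean
sigma = np.array([0.1, 0.2, 0.3])   # hypothetical encoder output: standard deviation
samples = np.stack(
    [sample_composition_embedding(mu, sigma, rng) for _ in range(20000)]
)
print(samples.mean(axis=0), samples.std(axis=0))
```

Because the randomness enters only through `eps`, gradients with respect to `mu` and `sigma` remain well defined when the same expression is written in an automatic differentiation framework.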

For the latent representations zs,m and zc,m, CroMEL implements Ddiv in Eq. (3) as the p-Wasserstein distance20 between the data distribution of the polymorphic crystal structures and the parameterized normal distribution derived from the chemical compositions. CroMEL, denoted by J, is formally given by:

where τ is a set of the joint distributions of \(P({{{\mathcal{C}}}}_{m},\pi )\) and \({{\mathcal{N}}}({\mu }_{\theta ,m},{\sigma }_{\theta ,m}^{2}{{\bf{I}}})\), and d is a distance metric. One of the biggest advantages of the Wasserstein distance compared to the conventional KL-divergence28 is that we can empirically calculate the statistical distance between arbitrary distributions without their parametric forms. In other words, we can calculate CroMEL of Eq. (11) based on the calculated crystal structures in \({{{\mathcal{D}}}}_{s}\), even though a parametric form of \(P({{{\mathcal{C}}}}_{m},\pi )\) is unknown.
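To illustrate why no parametric form is needed, the sketch below computes a p-Wasserstein distance directly from two sets of samples. It is deliberately simplified and is not the computation used for CroMEL: it assumes one-dimensional embeddings and equal sample sizes, in which case the optimal coupling simply pairs the sorted samples. The function name `empirical_wasserstein_1d` is hypothetical.

```python
import numpy as np

def empirical_wasserstein_1d(a, b, p=1):
    """p-Wasserstein distance between two equal-size 1D samples.

    In one dimension the optimal coupling pairs the sorted samples,
    so no density model of either distribution is required.
    """
    a = np.sort(np.asarray(a, dtype=float))
    b = np.sort(np.asarray(b, dtype=float))
    if a.shape != b.shape:
        raise ValueError("this sketch assumes equal sample sizes")
    return float(np.mean(np.abs(a - b) ** p) ** (1.0 / p))

# Each sorted pair differs by exactly 3, so the distance is 3 for any p.
shifted = empirical_wasserstein_1d([0.0, 1.0, 2.0], [3.0, 5.0, 4.0], p=1)
print(shifted)
```

For multi-dimensional embeddings the sorting shortcut no longer applies, which is one motivation for the dual formulation introduced next.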

For an efficient calculation of CroMEL, we employ the Kantorovich-Rubinstein duality29 to convert Eq. (11) to a problem based on a trainable function ω instead of the joint distribution τ as:

where \(\omega :{{\mathbb{R}}}^{m}\to {\mathbb{R}}\) is a trainable function to map the m-dimensional latent variables into \({\mathbb{R}}\), and \({{\mathcal{F}}}\) is a set of functions satisfying the 1-Lipschitz constraint29.
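The dual form can be made concrete with a deliberately restricted critic. The sketch below assumes ω is linear, ω(z) = w·z with ‖w‖₂ ≤ 1, which is 1-Lipschitz under the Euclidean metric; in that case the supremum has a closed form and gives a lower bound on the 1-Wasserstein distance. The paper uses a trainable function ω, so this is an illustration of the idea only, and `linear_critic_gap` is a hypothetical name.

```python
import numpy as np

def linear_critic_gap(z_p, z_q):
    """Best value of mean(w.z_p) - mean(w.z_q) over linear critics with ||w||_2 <= 1."""
    diff = z_p.mean(axis=0) - z_q.mean(axis=0)
    norm = np.linalg.norm(diff)
    # A unit-norm weight vector keeps the critic 1-Lipschitz.
    w = diff / norm if norm > 0 else np.zeros_like(diff)
    gap = float((z_p @ w).mean() - (z_q @ w).mean())
    return gap, w

rng = np.random.default_rng(0)
z_s = rng.normal(size=(500, 4))               # stand-in structure embeddings
z_c = z_s + np.array([3.0, 0.0, 4.0, 0.0])    # same cloud shifted by a vector of length 5
gap, w = linear_critic_gap(z_c, z_s)
print(gap)
```

For a pure shift such as this one, the linear lower bound coincides with the true distance; for distributions that differ in shape rather than location, a richer critic is needed, which is why ω is trained in practice.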

Eq. (11) shows that the composition encoder ψ can generate zc,m reflecting the distribution of the polymorphic crystal structures derived from the chemical composition \({{{\mathcal{C}}}}_{m}\) if CroMEL is sufficiently minimized. In other words, we can predict the target experimental properties of the materials on \({{{\mathcal{D}}}}_{t}\) with consideration of their polymorphic crystal structures by feeding zc,m into the prediction models on \({{{\mathcal{D}}}}_{t}\), even if the crystal structures are not accessible on \({{{\mathcal{D}}}}_{t}\). Therefore, we can build more accurate prediction models on the experimentally collected materials datasets where the most informative material descriptors are not available.

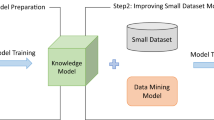

Cross-modality transfer learning based on CroMEL

Figure 1 illustrates the overall process of the cross-modality transfer learning based on CroMEL. The cross-modality transfer learning is performed through three steps: (1) A probabilistic composition encoder ψ is trained to minimize CroMEL in Eq. (12) for each source calculation dataset \({{{\mathcal{D}}}}_{s}\) so that the normal distribution \({{{\mathcal{N}}}}_{\phi ,m}\) parameterized by ψ approximates the unknown distribution Pπ,m of the polymorphic crystal structures. (2) The target experimental dataset \({{{\mathcal{D}}}}_{t}\) is divided into K non-overlapping subsets that will be used as the training, validation, and test datasets in the next step. On the validation dataset, the prediction error of the prediction model based on each ψ is measured, and the ψ achieving the minimum validation error is selected for the cross-modality transfer learning in the next step. (3) For the selected ψ, cross-modality knowledge transfer on \({{{\mathcal{D}}}}_{t}\) is performed by training the cross-modality prediction model f ∘ ψ to minimize the prediction loss on \({{{\mathcal{D}}}}_{t}\).
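The selection in step (2) amounts to taking the minimum over per-encoder validation errors. A minimal sketch follows, assuming the validation errors have already been measured; the function name, the source labels, and the numbers are all hypothetical placeholders, not results from the paper.

```python
def select_best_encoder(validation_errors):
    """Return the key of the source dataset whose encoder has the lowest validation error."""
    if not validation_errors:
        raise ValueError("no candidate encoders")
    return min(validation_errors, key=validation_errors.get)

# Placeholder labels and made-up validation errors, for illustration only.
validation_errors = {"source_A": 0.21, "source_B": 0.34, "source_C": 0.27}
best = select_best_encoder(validation_errors)
print(best)
```

Only the encoder selected this way is carried into step (3), where f ∘ ψ is trained on the target dataset.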

Step 1: Training of cross-modality probabilistic composition encoders on the source calculation dataset \({{{\mathcal{D}}}}_{s}\). Step 2: Selecting the best composition encoder based on validation errors. Step 3: Cross-modality knowledge transfer on the target experimental dataset \({{{\mathcal{D}}}}_{t}\).

Evaluation results on experimentally collected material datasets

We conducted extensive experimental evaluations on various experimental materials datasets to validate the effectiveness of the CroMEL-based cross-modality transfer learning in real-world chemical applications. For the cross-modality transfer learning, we collected 13 source calculation datasets from Materials Project (MP)30 and Computational Materials Repository (CMR)31, which provide the calculated crystal structures of a wide range of experimentally synthesizable materials. As the target experimental datasets, we employed 14 datasets containing experimentally observed chemical compositions and physical properties of the materials. Table 1 summarizes the characteristics of the collected source calculation and target experimental datasets. As indicated by the material types in the table, we collected the target experimental datasets from various applications of materials science for comprehensive evaluations.

For comparative evaluations, we implemented five competitor prediction models based on XGBoost32, feedforward deep neural network (FDNN)33, ElemNet34, residual feedforward network (RFN)35, and Roost14. XGBoost is a tree-based ensemble model that has shown state-of-the-art prediction accuracy in various scientific applications. FDNN is a simple neural network consisting of three feedforward fully-connected layers. ElemNet is a feedforward neural network for predicting material properties from chemical compositions of materials. Roost is a graph neural network for predicting material properties from an atom-wise graph representation of chemical compositions. In particular, Roost showed state-of-the-art prediction accuracy on experimentally collected materials datasets14.

In the experimental evaluations, we implemented a CroMEL-based prediction model by retraining a simple FDNN with the CroMEL-based cross-modality transfer learning. We refer to the CroMEL-based FDNN model as NN-CroMEL. The implementation details and transfer learning process of NN-CroMEL are provided in the Method Section. For a fair comparison, the competitor FDNN and NN-CroMEL were constructed with the same network architecture and hyperparameters. All hyperparameters of the competitor methods and NN-CroMEL were optimized by the grid search in the valid ranges of the hyperparameters. We selected the best composition encoders by measuring the validation error for each source calculation dataset, as illustrated in Fig. 1. Table 2 presents the source datasets of the selected composition encoders for each target experimental dataset. As shown in the table, NN-CroMEL consistently showed significant accuracy improvements by transferring knowledge on the selected source calculation datasets into the target experimental datasets. In the next section, we will conduct a qualitative analysis of the accuracy improvements and the selected source calculation datasets.

We compared the coefficient of determination (R2-score)36 and mean absolute error (MAE)16 of the competitor methods and NN-CroMEL on the 14 target experimental datasets. In this experimental evaluation, R2-scores and MAE were measured by 5-fold cross-validation. Table 3 presents the measured R2-scores on the target experimental datasets, and NN-CroMEL showed higher R2-scores than those of the competitor methods for all target experimental datasets. As shown in Table 4, NN-CroMEL also showed lower MAEs than those of the competitor methods for all datasets except for the IPOP-300-QE dataset. In particular, NN-CroMEL achieved the R2-scores greater than 0.95 in predicting experimentally measured formation enthalpies and band gaps, which are important properties determining the applications of the materials. These evaluation results of NN-CroMEL are remarkable because CroMEL enabled the improvements in the prediction accuracy of the machine learning models without additional chemical experiments for collecting experimentally generated training data, which is one of the most challenging problems of machine learning in materials science10,37,38,39.
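For reference, the two reported metrics can be computed as in the sketch below, which uses their standard definitions. The toy values of `y_true` and `y_pred` are invented for illustration and are unrelated to the datasets in the paper.

```python
import numpy as np

def r2_score(y_true, y_pred):
    """Coefficient of determination: 1 - SS_res / SS_tot."""
    y_true = np.asarray(y_true, dtype=float)
    y_pred = np.asarray(y_pred, dtype=float)
    ss_res = np.sum((y_true - y_pred) ** 2)          # residual sum of squares
    ss_tot = np.sum((y_true - y_true.mean()) ** 2)   # total sum of squares
    return float(1.0 - ss_res / ss_tot)

def mean_absolute_error(y_true, y_pred):
    """Average absolute deviation between targets and predictions."""
    y_true = np.asarray(y_true, dtype=float)
    y_pred = np.asarray(y_pred, dtype=float)
    return float(np.mean(np.abs(y_true - y_pred)))

y_true = [1.0, 2.0, 3.0, 4.0]   # toy targets
y_pred = [1.5, 2.0, 3.0, 3.5]   # toy predictions
r2 = r2_score(y_true, y_pred)
mae = mean_absolute_error(y_true, y_pred)
print(r2, mae)
```

In a 5-fold cross-validation, each metric would be computed on the held-out fold and then averaged over the five folds.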

Selected source calculation datasets in cross-modality transfer learning tasks

We conducted a qualitative analysis of the results of the cross-modality transfer learning based on CroMEL to investigate the reasons for the accuracy improvements by CroMEL. Table 2 presents the selected source calculation dataset (\({{{\mathcal{D}}}}_{s}^{* }\)) and the cross-modality transfer learning results for each target experimental dataset (\({{{\mathcal{D}}}}_{t}\)). In the table, the knowledge transfer \({{{\mathcal{D}}}}_{s}^{* }\to {{{\mathcal{D}}}}_{t}\) means the knowledge extracted from the selected source calculation dataset \({{{\mathcal{D}}}}_{s}^{* }\) is transferred to the prediction model on \({{{\mathcal{D}}}}_{t}\). For the comparative analysis, we presented the R2-scores of the baseline FDNN and the proposed NN-CroMEL together.

For the target experimental datasets derived from the ESTM dataset, NN-CroMEL consistently showed significant improvements in the R2-scores by transferring the knowledge related to the thermodynamic properties of the source calculation datasets. These knowledge transfer results are natural because the power factor and figure of merit of the thermoelectric materials are highly related to the thermodynamic properties of the materials. Furthermore, the knowledge transfer results on the Liverpool-RT, EFE, and EBG datasets are consistent with the domain knowledge in physical science because the target material properties of these target experimental datasets are directly related to the formation energy and band gap of the MP-FE and MP-BG datasets. However, the knowledge transfer results on the other datasets are not physically or chemically clear, and it may be one of the limitations of deep learning models.

The analysis results in Table 2 show that the best source dataset may not be consistent with the domain knowledge in physical science. Consequently, a trial-and-error approach on various source calculation datasets is essential to perform transfer learning on materials datasets, and CroMEL allows the machine learning models to exploit a wider range of source calculation datasets for transfer learning beyond the modality constraints imposed on the material descriptors.

Comparison with conventional transfer learning methods

For further experimental evaluations, we compared the R2-scores of NN-CroMEL with those of conventional transfer learning methods. However, we cannot apply conventional transfer learning methods to our cross-modality transfer learning tasks in Table 1, as conventional transfer learning methods assume that the input material descriptors in the source and target datasets have the same modality. For this reason, we followed well-known conventions in transfer learning on materials data11,14 to generate source datasets for conventional transfer learning methods. Specifically, we constructed new source datasets by extracting the chemical composition and target material property of each crystal structure from the original source calculation datasets in Table 1. Then, we trained the source feature extractors on the extracted source datasets. In this experimental evaluation, conventional transfer learning methods based on FDNN33 and Roost14 were employed as the competitor methods of NN-CroMEL. We refer to the transfer learning methods based on FDNN and Roost as FDNNtl and Roosttl, respectively. The hyperparameters of FDNNtl and Roosttl were optimized by the grid search in the valid ranges.

Figure 2 shows the measured R2-scores of FDNNtl, Roosttl, and NN-CroMEL on the target experimental datasets. In this experimental evaluation, Roosttl achieved some accuracy improvements over the conventional FDNNtl for most target experimental datasets by employing a sophisticatedly designed graph attention network40. However, NN-CroMEL showed further improvements over Roosttl as well as FDNNtl for all target experimental datasets, even though the prediction model of NN-CroMEL is the simple FDNN. In particular, the R2-scores of NN-CroMEL exceeded 0.6 on the SCMAT-BIN, IPOP-300-QE, Liverpool-RT, and MGTT datasets, where existing machine learning methods failed to construct valid regression models. The evaluation results in Fig. 2 support two conclusions: (1) As shown in the R2-scores of Roosttl and NN-CroMEL, a large and unbiased calculation dataset can be more important than sophisticatedly designed machine learning algorithms for successful transfer learning in materials science. (2) As shown in the SCMAT-BIN, IPOP-300-QE, Liverpool-RT, and MGTT datasets, CroMEL achieves breakthroughs over the conventional transfer learning methods in some tasks of predicting experimentally measured material properties.

Transfer learning performances on small training datasets

Lack of experimentally collected training data is one of the most challenging problems in machine learning for materials science6,7,12. In this experimental evaluation, we measured R2-scores of the conventional transfer learning methods and NN-CroMEL for different sizes of the training datasets to evaluate the robustness to small training datasets. We split the entire target experimental dataset into a training dataset of (100 × r)% data and a fixed test dataset of 20% data, where r ∈ {0.4, 0.5, 0.6, 0.7, 0.8} is a ratio of the training dataset. The target prediction models were trained on the (100 × r)% training dataset, and then R2-scores of the trained prediction models were measured on the fixed 20% test dataset. Since we randomly split the training datasets, we repeated the training and evaluation processes 10 times and reported the mean and standard deviation of the R2-scores. For the experimental evaluations, we selected four target experimental datasets from four different chemical applications as follows: ESTM-RT-ZT, SCMAT-TER, IPOP-300-DT, and EBG datasets.
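The splitting protocol can be sketched as follows. This is one plausible reading of the description, not the authors' code: the test indices are fixed once, and a random training subset of the requested size is drawn from the remaining samples in each repetition. The function names, the seeds, and the dataset size of 100 are hypothetical.

```python
import numpy as np

def make_fixed_test_split(n_samples, test_ratio=0.2, seed=0):
    """Fix the test indices once; everything else forms the training pool."""
    order = np.random.default_rng(seed).permutation(n_samples)
    n_test = int(round(test_ratio * n_samples))
    return order[n_test:], order[:n_test]   # (pool, test)

def sample_training_subset(pool, n_samples, train_ratio, rng):
    """Draw round(train_ratio * n_samples) training indices from the pool without replacement."""
    n_train = int(round(train_ratio * n_samples))
    return rng.choice(pool, size=n_train, replace=False)

n_samples = 100                               # hypothetical dataset size
pool, test_idx = make_fixed_test_split(n_samples)
rng = np.random.default_rng(1)
train_sizes = {
    r: len(sample_training_subset(pool, n_samples, r, rng))
    for r in (0.4, 0.5, 0.6, 0.7, 0.8)
}
print(train_sizes, len(test_idx))
```

Repeating the call to `sample_training_subset` with fresh random draws, training a model each time, and averaging the resulting R2-scores would yield the mean and standard deviation described above.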

Figure 3 shows the measured R2-scores of FDNNtl (green), Roosttl (red), and NN-CroMEL (blue) on the ESTM-RT-ZT, SCMAT-TER, IPOP-300-DT, and EBG datasets. On the ESTM-RT-ZT dataset, NN-CroMEL achieved the R2-score greater than 0.74, even though it was trained only on the 40% training dataset. For all other values of r, NN-CroMEL showed the R2-scores greater than those of FDNNtl and Roosttl on the ESTM-RT-ZT dataset. Besides the ESTM-RT-ZT dataset, NN-CroMEL outperformed FDNNtl and Roosttl for all values of r on the SCMAT-TER and EBG datasets. Although NN-CroMEL and Roosttl showed similar R2-scores for the 40% training dataset on the IPOP-300-DT dataset, NN-CroMEL finally outperformed Roosttl and achieved the R2-score greater than 0.85 for r = 0.6, 0.7, and 0.8. The evaluation results in Fig. 3 show the effectiveness of the cross-modality transfer learning based on CroMEL in constructing the prediction models on small training datasets, which is one of the most challenging problems in machine learning for materials science.

Integration with transferability estimators

One of the major limitations of conventional transfer learning is that we should re-train all source feature extractors on target datasets to select the best source feature extractor for a given target task10,11,37. In other words, if we have K source feature extractors, we need K times more computational cost to perform transfer learning compared to the computational cost of training a single prediction model11. For efficient transfer learning, several transferability estimators have been proposed to preliminarily estimate the fitness of source feature extractors on a given target dataset without retraining them41,42. However, they also assume the same modality of the input data on the source and target datasets. Although a cross-modality transferability estimator was proposed to overcome the limitation on the input data modality43, it still requires a specific architecture of the source feature extractor for estimating the cross-modality transferability. However, the source feature extractors trained with CroMEL naturally provide a way to estimate the cross-modality transferability between the source and target datasets with different input data modalities. As shown in Eq. (12), we can train the cross-modality composition encoder ψ based on CroMEL. The trained ψ can be used to estimate the cross-modality transferabilities by integrating a regression-based transferability estimator43 as follows.

where A and b are a trainable weight matrix and bias vector of the transferability estimator, respectively.
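As a generic illustration of a regression-based transferability score, the sketch below fits a linear map with weight matrix A and bias b on frozen embeddings and scores an encoder by its negative mean squared error. The exact form of Eq. (13) is not reproduced here, so this scoring rule, the function name `linear_transferability_score`, and the synthetic data are all assumptions.

```python
import numpy as np

def linear_transferability_score(embeddings, targets):
    """Fit y ~ A z + b by least squares on frozen embeddings; return the negative MSE."""
    z = np.asarray(embeddings, dtype=float)
    y = np.asarray(targets, dtype=float).reshape(len(z), -1)
    design = np.hstack([z, np.ones((len(z), 1))])   # last column carries the bias b
    coef, *_ = np.linalg.lstsq(design, y, rcond=None)
    residual = y - design @ coef
    return -float(np.mean(residual ** 2))           # higher means a better fit

rng = np.random.default_rng(0)
z_good = rng.normal(size=(200, 3))                   # embedding that explains the target
y = z_good @ np.array([1.0, -2.0, 0.5]) + 0.3        # synthetic targets, linear in z_good
z_bad = rng.normal(size=(200, 3))                    # unrelated embedding
score_good = linear_transferability_score(z_good, y)
score_bad = linear_transferability_score(z_bad, y)
print(score_good, score_bad)
```

Because only a linear fit is solved per encoder, ranking many source encoders this way avoids retraining each of them on the target dataset.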

Table 5 shows the R2-scores of the target prediction models generated through the transfer learning based on the conventional trial-and-error approach and the cross-modality transferability estimation. f* is the target prediction model selected by the trial-and-error approach for all source feature extractors, i.e., it was selected after retraining all source feature extractors for a given target experimental dataset. By contrast, fte is the target prediction model selected by the cross-modality transferability score in Eq. (13). As shown in Table 5, fte showed R2-scores similar to those of f* for all target experimental datasets, whereas the elapsed times of constructing fte were significantly shorter than those of constructing f*. Quantitatively, constructing fte was 6.496 to 12.373 times faster than constructing f*, as shown in the efficiency gain of Table 5. The efficiency gain was proportional to the number of samples in the target experimental datasets. In addition, theoretically, the efficiency gain also increases as the number of available source feature extractors increases43. The evaluation results on the computational efficiency demonstrate two advantages of CroMEL: (1) CroMEL can be easily combined with the cross-modality transferability estimators. (2) CroMEL significantly reduces the computational cost to find the best source feature extractor in transfer learning.

Transfer learning performances and calculation level of source materials datasets

As explained in the Results section, CroMEL makes it possible to leverage calculation datasets in transfer learning on materials data by providing an objective function for training the cross-modality composition encoders. Essentially, the representation capabilities of the cross-modality composition encoders depend on the volume and calculation accuracy of the source calculation datasets. However, there is a trade-off between the volume and the calculation accuracy of the source calculation datasets, as quantum mechanical methods that calculate a crystal structure at a more accurate calculation level require more computational cost44. In this section, we investigated the impacts of the volume and calculation accuracy of the source calculation datasets on the cross-modality transfer learning.

For a comparative analysis, we employed two additional source calculation datasets called CMR-2D-PBG and CMR-2D-GWBG from the CMR 2D Materials Database45. The CMR-2D-PBG dataset contains the crystal structures and band gaps calculated with the PBE functional46 for 8570 2D materials. Similarly, the CMR-2D-GWBG dataset contains the crystal structures and band gaps calculated with the G0W0 approximation47 for 339 2D materials. The CMR-2D-PBG dataset covers the largest range of 2D materials, but its calculated band gaps are relatively inaccurate. By contrast, the CMR-2D-GWBG dataset provides the most accurate band gaps for the smallest number of 2D materials. The volume and calculation accuracy of the CMR-2D-BG dataset in Table 1 lie between those of the CMR-2D-PBG and CMR-2D-GWBG datasets.

We compared the transfer learning performances of three source feature extractors trained on the CMR-2D-PBG, CMR-2D-BG, and CMR-2D-GWBG datasets to investigate the impact of source calculation datasets at different calculation levels. We denote the NN-CroMEL models derived from the source feature extractors trained on the CMR-2D-PBG, CMR-2D-BG, and CMR-2D-GWBG datasets by fpbe, fhse, and fgw, respectively. Table 6 shows the measured R2-scores of the three variants of NN-CroMEL on the target experimental datasets, together with those of the best competitors in Table 3 for comparison. In this analysis, fpbe, fhse, and fgw showed higher R2-scores than the best competitors for almost all target experimental datasets, which demonstrates that CroMEL is robust to the calculation level of the source calculation datasets.

In particular, it is remarkable that fpbe and fhse achieved prediction accuracy comparable to that of fgw in predicting the experimental band gaps on the EBG dataset, even though fpbe and fhse were trained on source calculation datasets generated with relatively inaccurate band gap calculations. This result suggests two conclusions: (1) The source feature extractors trained with CroMEL captured underlying statistical relationships between the theoretically calculated and experimentally measured band gaps beyond the calculation errors caused by the approximation methods of the quantum mechanical calculations. (2) fgw achieved the best R2-score despite being trained on the smallest source calculation dataset, which indicates that the volume and the calculation accuracy are complementary in the cross-modality transfer learning.

Algorithm 1

The Overall Process of the Cross-Modality Transfer Learning Based on CroMEL

Discussion

As shown in Tables 3 and 4, NN-CroMEL outperformed state-of-the-art deep learning models, such as RFN and Roost, on all target experimental datasets. However, the prediction accuracy of NN-CroMEL differed only marginally from that of the ensemble-based model XGBoost on the ESTM-HT-RT-PF, ESTM-HT-ZT, IPOP-300-QE, and IPOP-300-DT datasets. The remarkable prediction accuracy of XGBoost is essentially derived from the ensemble method32, and thus the accuracy of NN-CroMEL may be improved further by adopting an ensemble method. Therefore, a systematic approach for combining ensemble methods with the cross-modality transfer learning should be developed in future work.

In this work, since we focused on a practical transfer learning method, we assumed that only chemical compositions are accessible in the target experimental datasets. However, this assumption may be a crucial limitation of CroMEL for target experimental datasets where additional material descriptors (e.g., structural energy, X-ray diffraction patterns, and neutron scattering images) are available, because the probabilistic composition encoder cannot estimate the importance of polymorphic crystal structures for a given chemical composition. In future work, it is necessary to develop a prediction model that can handle chemical compositions and such informative material descriptors together.

Deep learning is generally regarded as an unexplainable black-box method48, and CroMEL is likewise not an explainable method. For this reason, although we qualitatively analyzed the knowledge transfer results in Table 2, we were not able to investigate why a particular source calculation dataset was selected for a given target experimental dataset. In future work, we need to improve the explainability of CroMEL by combining it with explainable machine learning techniques49,50.

Methods

The overall process of cross-modality transfer learning

Algorithm 1 formally describes the entire process of the cross-modality transfer learning based on CroMEL. The entire process consists of three steps: (Step 1) The source feature extractors are generated by minimizing the prediction loss and CroMEL on the given source calculation datasets \(\{{{{\mathcal{D}}}}_{s,1},{{{\mathcal{D}}}}_{s,2},\ldots ,{{{\mathcal{D}}}}_{s,K}\}\). (Step 2) The prediction network f and cross-modality composition encoder ψ are re-trained on the training dataset of \({{{\mathcal{D}}}}_{t}\). (Step 3) Among the K re-trained models, \({f}_{{k}^{* }}^{* }\) and \({\psi }_{{k}^{* }}^{* }\) with the smallest prediction error on the validation dataset of \({{{\mathcal{D}}}}_{t}\) are selected as the final target prediction model f* ∘ ψ*.
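The three steps above can be sketched as a short selection loop. This is a minimal sketch only: the callables `train_source`, `retrain_on_target`, and `validation_error` are hypothetical placeholders for the source training with the prediction loss and CroMEL, the re-training of f and ψ on the target training data, and the validation error, respectively. They are not names from the published code.

```python
from typing import Any, Callable, Sequence, Tuple


def cross_modality_transfer(
    source_datasets: Sequence[Any],
    target_train: Any,
    target_val: Any,
    train_source: Callable[[Any], Any],
    retrain_on_target: Callable[[Any, Any], Any],
    validation_error: Callable[[Any, Any], float],
) -> Tuple[Any, int]:
    """Return the selected target model and the index k* of its source dataset."""
    # Step 1: build one source feature extractor per source calculation dataset.
    extractors = [train_source(d) for d in source_datasets]
    # Step 2: re-train each candidate on the training split of the target dataset.
    candidates = [retrain_on_target(e, target_train) for e in extractors]
    # Step 3: keep the candidate with the smallest error on the validation split.
    errors = [validation_error(m, target_val) for m in candidates]
    best = min(range(len(candidates)), key=errors.__getitem__)
    return candidates[best], best


# Toy usage with stand-in callables: each "model" is just a constant prediction.
sources = [3.0, 1.2, 0.4]
model, k_star = cross_modality_transfer(
    sources,
    target_train=None,
    target_val=1.0,
    train_source=lambda d: d,
    retrain_on_target=lambda extractor, train: extractor,
    validation_error=lambda m, val: abs(m - val),
)
```

Passing the training routines as callables keeps the selection logic independent of any particular network architecture, which is a design choice of this sketch rather than of Algorithm 1.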

Implementations and hyperparameter settings

In the implementation of Algorithm 1, we employed a message passing neural network (MPNN)51 and a crystal graph convolutional neural network (CGCNN)52 to implement the structure encoder π. By contrast, we used simple FDNNs with two hidden layers to implement ψ and f. In the cross-modality transfer learning, a total of 26 source feature extractors were generated because of the two types of π based on MPNN and CGCNN. All machine learning models were optimized by the Adam optimizer53. The initial learning rate and weight regularization coefficient of the Adam optimizer were fixed to 5e-4 and 1e-6, respectively, for all source calculation and target experimental datasets. All source code and experiment scripts in this work are publicly available at https://github.com/ngs00/CroMEL.
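The settings described above can be collected in a small configuration object, as in the following sketch. The class and field names are illustrative assumptions and do not come from the published code. The count of 13 source datasets is inferred only from the stated total of 26 extractors with two encoder types, and the dataset names are placeholders.

```python
from dataclasses import dataclass
from itertools import product


@dataclass(frozen=True)
class TrainingConfig:
    structure_encoder: str        # "MPNN" or "CGCNN", used for the structure encoder π
    hidden_layers: int = 2        # depth of the FDNNs used for ψ and f
    optimizer: str = "Adam"
    learning_rate: float = 5e-4   # initial learning rate
    weight_decay: float = 1e-6    # weight regularization coefficient


# Placeholder names: 26 extractors / 2 encoder types implies 13 source datasets (inferred).
source_names = [f"source_{i}" for i in range(13)]
configs = {
    (name, encoder): TrainingConfig(structure_encoder=encoder)
    for name, encoder in product(source_names, ("MPNN", "CGCNN"))
}
```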

Data availability

All source calculation and target experimental datasets are available in the references presented in Table 1.

Code availability

All source code of CroMEL and the experiment scripts are publicly available at https://github.com/ngs00/CroMEL.

References

Toyao, T. et al. Machine learning for catalysis informatics: recent applications and prospects. ACS Catal. 10, 2260–2297 (2019).

Noordhoek, K. & Bartel, C. Accelerating the prediction of inorganic surfaces with machine learning interatomic potentials. Nanoscale (2024).

Na, G. S., Jang, S. & Chang, H. Predicting thermoelectric properties from chemical formula with explicitly identifying dopant effects. Npj Comput. Mater. 7, 106 (2021).

Tao, Q., Xu, P., Li, M. & Lu, W. Machine learning for perovskite materials design and discovery. Npj Comput. Mater. 7, 23 (2021).

Mayr, F., Harth, M., Kouroudis, I., Rinderle, M. & Gagliardi, A. Machine learning and optoelectronic materials discovery: A growing synergy. J. Phys. Chem. Lett. 13, 1940–1951 (2022).

Na, G. S. & Kim, H. W. Contrastive representation learning of inorganic materials to overcome lack of training datasets. ChemComm 58, 6729–6732 (2022).

Xu, P., Ji, X., Li, M. & Lu, W. Small data machine learning in materials science. Npj Comput. Mater. 9, 42 (2023).

Zhang, Y. & Ling, C. A strategy to apply machine learning to small datasets in materials science. Npj Comput. Mater. 4, 25 (2018).

Magar, R. et al. Auglichem: data augmentation library of chemical structures for machine learning. Mach. Learn.: Sci. Technol. 3, 045015 (2022).

Gupta, V. et al. Cross-property deep transfer learning framework for enhanced predictive analytics on small materials data. Nat. Commun. 12, 6595 (2021).

Yamada, H. et al. Predicting materials properties with little data using shotgun transfer learning. ACS Cent. Sci. 5, 1717–1730 (2019).

Kong, S., Guevarra, D., Gomes, C. P. & Gregoire, J. M. Materials representation and transfer learning for multi-property prediction. Appl. Phys. Rev. 8, 021409 (2021).

Jha, D. et al. Enhancing materials property prediction by leveraging computational and experimental data using deep transfer learning. Nat. Commun. 10, 5316 (2019).

Goodall, R. E. & Lee, A. A. Predicting materials properties without crystal structure: deep representation learning from stoichiometry. Nat. Commun. 11, 6280 (2020).

Weiss, K., Khoshgoftaar, T. M. & Wang, D. A survey of transfer learning. J. Big Data 3, 1–40 (2016).

Wang, Q., Ma, Y., Zhao, K. & Tian, Y. A comprehensive survey of loss functions in machine learning. Ann. Data Sci. 9, 187–212 (2020).

Zhuang, F. et al. A comprehensive survey on transfer learning. Proc. IEEE 109, 43–76 (2020).

Neumann, M. A., Leusen, F. J. & Kendrick, J. A major advance in crystal structure prediction. Angew. Chem., Int. Ed. 47, 2427–2430 (2008).

Li, J. & Sun, J. Application of X-ray diffraction and electron crystallography for solving complex structure problems. Acc. Chem. Res. 50, 2737–2745 (2017).

Panaretos, V. M. & Zemel, Y. Statistical aspects of Wasserstein distances. Annu. Rev. Stat. Appl. 6, 405–431 (2019).

Hornik, K., Stinchcombe, M. & White, H. Multilayer feedforward networks are universal approximators. Neural Netw. 2, 359–366 (1989).

Diakonikolas, I. et al. Robustly learning a Gaussian: Getting optimal error, efficiently. In ACM-SIAM SODA, 2683–2702 (SIAM, 2018).

Vahdat, A. & Kautz, J. Nvae: A deep hierarchical variational autoencoder. NeurIPS 33, 19667–19679 (2020).

Ho, J., Jain, A. & Abbeel, P. Denoising diffusion probabilistic models. NeurIPS 33, 6840–6851 (2020).

Han, X., Zheng, H. & Zhou, M. Card: Classification and regression diffusion models. NeurIPS 35, 18100–18115 (2022).

Zeni, C. et al. A generative model for inorganic materials design. Nature 639, 624–632 (2025).

Kingma, D. P., Salimans, T. & Welling, M. Variational dropout and the local reparameterization trick. NeurIPS Vol. 28 (Curran Associates, Inc., 2015).

Van Erven, T. & Harremos, P. Rényi divergence and Kullback-Leibler divergence. IEEE Trans. Inf. Theory 60, 3797–3820 (2014).

Arjovsky, M., Chintala, S. & Bottou, L. Wasserstein generative adversarial networks. In ICML, 214–223 (PMLR, 2017).

Jain, A. et al. The materials project: A materials genome approach to accelerating materials innovation. APL Mater. 1, 011002 (2013).

Landis, D. D. et al. The computational materials repository. Comput. Sci. Eng. 14, 51–57 (2012).

Chen, T. & Guestrin, C. Xgboost: A scalable tree boosting system. In SIGKDD, 785–794 (2016).

Rosenblatt, F. The perceptron: a probabilistic model for information storage and organization in the brain. Psychol. Rev. 65, 386 (1958).

Jha, D. et al. Elemnet: Deep learning the chemistry of materials from only elemental composition. Sci. Rep. 8, 17593 (2018).

Huang, K., Wang, Y., Tao, M. & Zhao, T. Why do deep residual networks generalize better than deep feedforward networks?—a neural tangent kernel perspective. NeurIPS 33, 2698–2709 (2020).

Nagelkerke, N. J. et al. A note on a general definition of the coefficient of determination. Biometrika 78, 691–692 (1991).

Lansford, J. L., Barnes, B. C., Rice, B. M. & Jensen, K. F. Building chemical property models for energetic materials from small datasets using a transfer learning approach. J. Chem. Inf. Model. 62, 5397–5410 (2022).

Morgan, D. & Jacobs, R. Opportunities and challenges for machine learning in materials science. Annu. Rev. Mater. Res. 50, 71–103 (2020).

Na, G. S. & Chang, H. A public database of thermoelectric materials and system-identified material representation for data-driven discovery. Npj Comput. Mater. 8, 214 (2022).

Veličković, P. et al. Graph attention networks. In ICLR (2018).

Nguyen, C., Hassner, T., Seeger, M. & Archambeau, C. Leep: A new measure to evaluate transferability of learned representations. ICML 7294–7305 (2020).

Nguyen, C. N. et al. Simple transferability estimation for regression tasks. UAI 1510–1521 (2023).

Na, G. S. One-shot heterogeneous transfer learning from calculated crystal structures to experimentally observed materials. Comput. Mater. Sci. 235, 112791 (2024).

Bursch, M., Mewes, J.-M., Hansen, A. & Grimme, S. Best-practice DFT protocols for basic molecular computational chemistry. Angew. Chem., Int. Ed. 61, e202205735 (2022).

Haastrup, S. et al. The computational 2D materials database: high-throughput modeling and discovery of atomically thin crystals. 2D Mater. 5, 042002 (2018).

Adamo, C. & Barone, V. Toward reliable density functional methods without adjustable parameters: The PBE0 model. J. Chem. Phys. 110, 6158–6170 (1999).

Reining, L. The GW approximation: content, successes and limitations. Wiley Interdiscip. Rev. Comput. Mol. Sci. 8, e1344 (2018).

Tjoa, E. & Guan, C. A survey on explainable artificial intelligence (XAI): Toward medical XAI. IEEE Trans. Neural Netw. Learn. Syst. 32, 4793–4813 (2020).

Datta, A. et al. Machine learning explainability and robustness: connected at the hip. In ACM SIGKDD, 4035–4036 (2021).

Caruana, R., Lundberg, S., Ribeiro, M. T., Nori, H. & Jenkins, S. Intelligible and explainable machine learning: Best practices and practical challenges. In ACM SIGKDD, 3511–3512 (2020).

Gilmer, J., Schoenholz, S. S., Riley, P. F., Vinyals, O. & Dahl, G. E. Neural message passing for quantum chemistry. In ICML, 1263–1272 (PMLR, 2017).

Xie, T. & Grossman, J. C. Crystal graph convolutional neural networks for an accurate and interpretable prediction of material properties. Phys. Rev. Lett. 120, 145301 (2018).

Kingma, D. P. & Ba, J. L. Adam: A method for stochastic optimization. In ICLR (2015).

Castelli, I. E. et al. New light-harvesting materials using accurate and efficient bandgap calculations. Adv. Energy Mater. 5, 1400915 (2015).

Moustafa, H. et al. Computational exfoliation of atomically thin one-dimensional materials with application to Majorana-bound states. Phys. Rev. Mater. 6, 064202 (2022).

Kuhar, K. et al. Sulfide perovskites for solar energy conversion applications: computational screening and synthesis of the selected compound LaYS3. Energy Environ. Sci. 10, 2579–2593 (2017).

Jørgensen, P. B., Garijo del Río, E., Schmidt, M. N. & Jacobsen, K. W. Materials property prediction using symmetry-labeled graphs as atomic position independent descriptors. Phys. Rev. B 100, 104114 (2019).

Rosen, A. S. et al. High-throughput predictions of metal–organic framework electronic properties: theoretical challenges, graph neural networks, and data exploration. Npj Comput. Mater. 8, 1–10 (2022).

Stanev, V. et al. Machine learning modeling of superconducting critical temperature. Npj Comput. Mater. 4, 29 (2018).

Jang, S., Na, G. S., Choi, Y. & Chang, H. Optical property dataset of inorganic phosphor. Sci. Rep. 14, 7639 (2024).

Hargreaves, C. J. et al. A database of experimentally measured lithium solid electrolyte conductivities evaluated with machine learning. Npj Comput. Mater. 9, 9 (2023).

Morgan, D. Machine Learning Materials Datasets (2018). https://figshare.com/articles/dataset/MAST-ML_Education_Datasets/7017254.

Kim, G., Meschel, S., Nash, P. & Chen, W. Experimental formation enthalpies for intermetallic phases and other inorganic compounds. Sci. Data 4, 1–11 (2017).

Zhuo, Y., Mansouri Tehrani, A. & Brgoch, J. Predicting the band gaps of inorganic solids by machine learning. J. Phys. Chem. Lett. 9, 1668–1673 (2018).

Acknowledgements

This work was supported in part by the National Research Foundation (NRF) grant funded by the Korea government (MSIT) (RS-2023-00283597).

Contributions

G.S.N. conducted all work in this research.

Ethics declarations

Competing interests

The author declares no competing interests.

Rights and permissions

Open Access This article is licensed under a Creative Commons Attribution-NonCommercial-NoDerivatives 4.0 International License, which permits any non-commercial use, sharing, distribution and reproduction in any medium or format, as long as you give appropriate credit to the original author(s) and the source, provide a link to the Creative Commons licence, and indicate if you modified the licensed material. You do not have permission under this licence to share adapted material derived from this article or parts of it. The images or other third party material in this article are included in the article’s Creative Commons licence, unless indicated otherwise in a credit line to the material. If material is not included in the article’s Creative Commons licence and your intended use is not permitted by statutory regulation or exceeds the permitted use, you will need to obtain permission directly from the copyright holder. To view a copy of this licence, visit http://creativecommons.org/licenses/by-nc-nd/4.0/.

About this article

Cite this article

Na, G.S. Cross-modality material embedding loss for transferring knowledge between heterogeneous material descriptors. npj Comput Mater 11, 235 (2025). https://doi.org/10.1038/s41524-025-01723-1

DOI: https://doi.org/10.1038/s41524-025-01723-1