Abstract

Noise is one of the main obstacles to realizing quantum devices that achieve a quantum computational advantage. A possible approach to minimizing the effect of noise is to employ shallow-depth quantum circuits, since noise typically accumulates as circuit depth grows. In this work, we investigate the complexity of shallow-depth linear-optical circuits under the effects of photon loss and partial distinguishability. By establishing a correspondence between a linear-optical circuit and a bipartite graph, we show that the effects of photon loss and partial distinguishability are equivalent to removing the corresponding vertices. Using this correspondence and percolation theory, we prove that for constant-depth linear-optical circuits with single photons, there is a threshold of loss (noise) rate above which the linear-optical system can be decomposed into smaller systems with high probability, which enables us to simulate the system efficiently. Consequently, our result implies that even in shallow-depth circuits, where noise has not accumulated much, its effect may be significant enough to make them efficiently simulable by classical algorithms, owing to the entanglement structure generated by shallow-depth circuits.


Introduction

Quantum optical platforms using photons are expected to play versatile roles in quantum information processing, such as quantum communication, sensing, and computing1,2,3,4,5,6. In particular, linear-optical circuits are experimentally more feasible while retaining the potential to provide a quantum advantage for quantum computing; representative examples are boson sampling7 and the Knill-Laflamme-Milburn (KLM) protocol for universal quantum computation8.

However, as in other experimental platforms, one of the main obstacles to implementing a large-scale quantum device for interesting quantum information processing is noise; in particular, photon loss and partial distinguishability of photons are typically the most crucial noise sources in photonic devices9,10,11,12. Through experimental realizations of intermediate-scale photonic quantum devices and thorough theoretical analyses of the effects of loss and noise, many recent results show that these can significantly reduce the computational power of quantum devices9,10,11,12,13,14,15,16,17,18,19,20,21,22,23. Hence, there has been significant interest and effort in reducing the effects of photon loss and partial distinguishability, such as developing quantum error correction codes24,25,26,27,28.

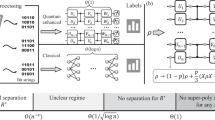

The key idea of most existing classical algorithms for simulating lossy systems is that the output state becomes classical when the loss rate increases with the system size13,16,17,19,21,22,23,29,30. In particular, refs. 13,16,17,23,29 show that when the total transmission rate scales as \(O(1/\sqrt{N})\), where N is the input photon number, the circuit can be approximately described by a nonnegative quasi-probability distribution31,32 and thus be efficiently simulated classically. Also, other classical algorithms from refs. 14,15,21,33, which consider various types of noise and approximate the output probability by low-degree polynomials, assume Haar-random linear-optical circuits to guarantee their efficiency, which inevitably requires deep circuits34 (see Supplementary Material (SM) for a more detailed discussion).

Hence, another promising path to quantum advantage is to employ shallow-depth quantum circuits to minimize the photon-loss effect; in this setting, the loss rate does not necessarily increase with system size, and the existing methods do not directly apply. In fact, a worst-case constant-depth linear-optical circuit with single photons is proven hard for classical computers to simulate exactly unless the polynomial hierarchy (PH) collapses to a finite level35. Furthermore, there have been many attempts to prove the average-case hardness of approximately simulating shallow-depth boson sampling circuits36,37,38. In addition, there have been proposals and proof-of-principle experiments that implement linear-optical circuits with high-degree connectivity, or even all-to-all connectivity39,40,41,42,43,44. Since one main reason to utilize shallow-depth circuits is to minimize the effect of noise and loss, a pertinent question is whether shallow-depth circuits remain hard to simulate classically under such noise, or whether noise again destroys the potential quantum advantage. It is worth emphasizing that since lossy boson sampling is hard to simulate exactly classically unless the PH collapses to a finite level (see the main text for more details), the appropriate notion of simulation here is approximate rather than exact simulation, which is also the usual notion for quantum advantage7.

To address this question, in this work, we analyze the computational complexity of constant-depth linear-optical circuits with all-to-all connectivity under photon loss and partial distinguishability, and prove that when the input state consists of single photons, there exists a threshold of noise rates above which the system can be approximately simulated efficiently by classical computers. The main idea is to associate a linear-optical circuit with a bipartite graph such that the single photons correspond to one part of the vertices and the output modes correspond to the other part, with an edge between a photon and an output mode if the photon can propagate to that mode through the linear-optical circuit. We then adapt a well-known result of percolation theory from the study of networks45,46 to bipartite graphs, which states that if vertices of a graph of bounded degree are randomly removed, the resultant graph is divided into disjoint, logarithmically small graphs with high probability. We then show that the effect of photon loss or partial distinguishability noise corresponds exactly to removing some of the vertices; thus, photon loss or partial distinguishability effectively transforms constant-depth linear-optical circuits into logarithmically small, independent linear-optical circuits. Consequently, we can simulate the entire circuit by simulating each small linear-optical circuit individually. Our result suggests that while shallow-depth circuits are often believed to be less subject to loss and noise, this may not always be true, because the entanglement generated by shallow-depth circuits may be more easily destroyed by loss and noise. Finally, we numerically analyze the effect for various architectures and discuss a general condition for our result to hold.

Results

Linear-optical circuits with single photons

Consider M-mode linear-optical circuits with N single-photon inputs and arbitrary local measurements. Linear-optical circuits consist of layers of beam splitters, which may be geometrically non-local, i.e., allow all-to-all connectivity. This setup is the basis of boson sampling7 and the KLM protocol8. While the latter typically requires deep linear-optical circuits, we mainly focus on constant-depth circuits, which are expected to be less subject to loss and noise. Here, we define depth as the number of layers of beam splitters that can be implemented in parallel. We emphasize that constant-depth boson sampling is proven hard to simulate exactly classically unless the PH collapses to a finite level35; thus, shallow-depth linear-optical circuits may be sufficient for quantum advantage. Note, however, that it is not yet resolved whether constant-depth linear-optical circuits can yield quantum advantage for approximate simulation, which, as mentioned in the introduction, is the usual notion for claiming quantum advantage (e.g., ref. 7).

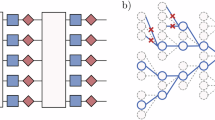

Let us associate a linear-optical circuit with a bipartite graph (see Fig. 1 (a)). To do so, let A and B be the set of input modes that are initialized by single photons and the set of output modes to which the input photons can propagate through the given linear-optical circuit, respectively. Thus, ∣A∣ = N, and ∣B∣ depends on the architecture and the circuit depth. We then introduce a bipartite graph G = (A, B, E) with A and B as the vertices on the left and right, respectively, and edges E ⊂ A × B between them. The edges of the bipartite graph are determined by the light cone of input photons through the linear-optical circuit; namely, if a photon from the input mode corresponding to v ∈ A can propagate to the output mode corresponding to w ∈ B, then the graph has an edge between the two vertices, i.e., (v, w) ∈ E. Here, we define the size of a bipartite graph as the number of vertices on the left-hand side, i.e., ∣G∣ = ∣A∣.

Fig. 1: a Linear-optical circuit with input photons on red dots and vacuum on empty dots on the left, and output modes on blue dots on the right. Input and output modes are coupled by beam-splitter arrays with depth d = 3, through which the input photons propagate along the dotted lines. b The corresponding bipartite graph. c When the second and third photons are lost, the corresponding vertices are removed from the graph. Consequently, the resultant bipartite graph is divided into two disconnected bipartite graphs.

Let us define Δ to be the maximum degree of the bipartite graph G, i.e., the maximum number of edges connected to a single vertex:

\[\Delta \equiv \mathop{\max }\limits_{v\in A\cup B}\deg (v).\]

We note that when the depth of a linear-optical circuit is d, the maximum degree of the associated bipartite graph is bounded as \(\Delta \le {2}^{d}\), because each beam splitter has two input and two output modes, so the light cone of each photon can at most double per layer. Hence, when the circuit depth is constant, i.e., d = O(1), the number of output modes relevant to each single-photon input is also O(1) for an arbitrary architecture. When the circuit is geometrically local, say a D-dimensional system, each single photon can propagate to at most \(\Delta =O({d}^{D})\) modes. For example, Δ ≤ 2d + 1 when D = 1, and \(\Delta \le 2{d}^{2}+2d+1\) when D = 2.
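To make the light-cone construction concrete, here is a minimal Python sketch. It assumes a hypothetical circuit encoding in which each layer is a list of disjoint mode pairs (i, j) coupled by beam splitters; the names `light_cone_graph` and `max_degree` are illustrative and not from the paper. Because each layer can at most double a photon's light cone, the computed Δ never exceeds 2^d.

```python
def light_cone_graph(input_modes, layers):
    """Edges of G = (A, B, E): input mode v -> set of output modes it can reach.

    `layers` is a hypothetical encoding of the circuit: a list of layers, each a
    list of disjoint (i, j) mode pairs acted on by beam splitters in parallel.
    """
    edges = {}
    for v in input_modes:
        reach = {v}                           # light cone starts at the input mode
        for layer in layers:
            new_reach = set(reach)
            for i, j in layer:
                if i in reach or j in reach:
                    new_reach.update((i, j))  # a beam splitter couples both modes
            reach = new_reach
        edges[v] = reach
    return edges


def max_degree(edges):
    """Delta = maximum degree over the left (A) and right (B) vertices."""
    deg_b = {}
    for outputs in edges.values():
        for w in outputs:
            deg_b[w] = deg_b.get(w, 0) + 1
    deg_a = max(len(outputs) for outputs in edges.values())
    return max(deg_a, max(deg_b.values()))


# Usage: depth-3 brickwork of nearest-neighbour beam splitters on 8 modes,
# with single photons in modes 0, 2, 4, 6.
even = [(0, 1), (2, 3), (4, 5), (6, 7)]
odd = [(1, 2), (3, 4), (5, 6)]
edges = light_cone_graph([0, 2, 4, 6], [even, odd, even])
print(edges[0], max_degree(edges), 2 ** 3)
```

In this depth-3 example, the photon in mode 2 reaches six output modes, so Δ = 6, within both the general bound 2^d = 8 and the 1D bound 2d + 1 = 7.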

Using the introduced relation between linear-optical circuits and bipartite graphs and percolation theory, we investigate the complexity of simulating the linear-optical circuits under the effect of photon loss or partial distinguishability noise.

Bipartite-graph percolation

Percolation theory describes the behavior of graphs when adding or deleting vertices or edges of graphs45,46. It has been used in quantum information theory to study entanglement in quantum networks47,48,49 and classical simulation of noisy quantum circuits50,51,52,53, including a very recent result that presents an efficient classical algorithm simulating constant-depth noisy IQP circuits53. Using a similar technique, we show that constant-depth linear-optical circuits with single photons are easy to classically simulate when the loss or noise rate is sufficiently high. The key idea for this, together with the percolation lemma below, is that if a single photon is lost or becomes distinguishable from others, the system essentially loses interference induced by the photon. This effect, from a graph perspective, is to remove or decouple the vertex from the graph for loss and distinguishability noise, respectively (see below for more details). To use this property, we adapt a result of percolation theory from refs. 53,54,55 to bipartite graphs (see Methods):

Lemma 1

Let G = (A, B, E) be a bipartite graph of maximum degree Δ with ∣A∣ = N. If we independently remove each v ∈ A, together with all edges incident to v, with probability 1 − η, where η < 1/Δ², the resultant bipartite graph is divided into m mutually disconnected bipartite graphs \({\{{G}_{i}\}}_{i = 1}^{m}\), and for any positive integer y,

\[\Pr \left[\mathop{\max }\limits_{i}|{G}_{i}| > y\right]\le N{\left(e\,\eta {\Delta }^{2}{e}^{-\eta {\Delta }^{2}}\right)}^{y}.\]

Hence, when η < 1/Δ², with high probability 1 − ϵ, the largest graph size in \({\{{G}_{i}\}}_{i = 1}^{m}\) is smaller than or equal to

\[{y}^{* }=\left\lceil \frac{\ln (N/\epsilon )}{\eta {\Delta }^{2}-1-\ln (\eta {\Delta }^{2})}\right\rceil =O(\log (N/\epsilon )).\]

From the physical perspective, this implies that for linear-optical circuits with maximum degree Δ, losing more than a 1 − 1/Δ² fraction of the input photons is so significant that the remaining system can be effectively described by independent small systems.

Photon loss effect

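Now, let us consider photon loss on input photons and observe the correspondence between photon loss and the removal of vertices in the associated bipartite graph, as in Lemma 1. When a single photon is subject to a loss channel with loss rate 1 − η, it transforms as

\[\left\vert 1\right\rangle \left\langle 1\right\vert \mapsto \eta \left\vert 1\right\rangle \left\langle 1\right\vert +(1-\eta )\left\vert 0\right\rangle \left\langle 0\right\vert .\]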

Thus, the photon is unaffected with probability η, and it is lost, i.e., its vertex is removed from A, with probability 1 − η. Consequently, when N input single photons are each subject to a loss channel with loss rate 1 − η, the effect is to remove each vertex independently with probability 1 − η, which is exactly the procedure in Lemma 1 (see Fig. 1 for an illustration).

By taking advantage of this relation and Lemma 1, we propose a classical algorithm that simulates linear-optical systems with N single-photon inputs when ηΔ² < 1. First, for the bipartite graph associated with the given linear-optical circuit, we remove each vertex in A with probability 1 − η. For the resultant graph, we identify the components \({\{{G}_{i}\}}_{i = 1}^{m}\) that are disconnected from each other. If any connected component G_i obtained in the first step has size larger than y*, we return to the first step. Otherwise, we now have \({\{{G}_{i}\}}_{i = 1}^{m}\) with \(| {G}_{i}| \le {y}^{* }=O(\log (N/\epsilon ))\) for all i. Since the number of photons in each connected component scales logarithmically, we can expect each component to be easy to simulate classically. Here, ϵ is chosen to be the desired total variation distance (TVD) (see below).
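The graph part of this algorithm (loss as vertex removal, component search, and restart) can be sketched as follows. This is a minimal illustration, assuming the edges are given as a dict from each input vertex to the set of output modes it reaches; `components` and `sample_components` are hypothetical helper names, and the per-component simulation step is deliberately left out.

```python
import random


def components(edges, kept):
    """Connected components (as sets of input vertices) of the bipartite graph
    restricted to the surviving inputs `kept`; two inputs are connected when
    they are linked through shared output modes."""
    by_output = {}
    for v in kept:
        for w in edges[v]:
            by_output.setdefault(w, []).append(v)
    seen, comps = set(), []
    for start in kept:
        if start in seen:
            continue
        seen.add(start)
        comp, stack = set(), [start]
        while stack:                      # depth-first search A -> B -> A
            v = stack.pop()
            comp.add(v)
            for w in edges[v]:
                for u in by_output[w]:
                    if u not in seen:
                        seen.add(u)
                        stack.append(u)
        comps.append(comp)
    return comps


def sample_components(edges, eta, y_star, rng=random, max_restarts=1000):
    """Keep each input photon with probability eta (loss = vertex removal) and
    restart whenever some component has more than y_star photons."""
    for _ in range(max_restarts):
        kept = [v for v in edges if rng.random() < eta]
        comps = components(edges, kept)
        if all(len(c) <= y_star for c in comps):
            return comps      # each component would then be simulated independently
    raise RuntimeError("too many restarts; y_star may be too small")
```

Each returned component lists the surviving photons that can interfere with one another; the restart loop mirrors the "return to the first step" rule, and its expected number of iterations is what the O(1) overhead estimate below refers to.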

More specifically, since the input photon number in each G_i is at most y* and the number of relevant output modes is at most Δy*, the dimension of the associated Hilbert space is upper-bounded by

\[\left(\begin{array}{c}\Delta {y}^{* }+{y}^{* }-1\\ {y}^{* }\end{array}\right)\le {\left[\frac{e(\Delta {y}^{* }+{y}^{* }-1)}{{y}^{* }}\right]}^{{y}^{* }}\le {[e(\Delta +1)]}^{{y}^{* }},\]

where we used \(\left(\begin{array}{c}n\\ k\end{array}\right)\le {(\frac{ne}{k})}^{k}\). Thus, when Δ = O(1), as for constant-depth circuits, the dimension is upper-bounded by poly(N/ϵ), because \({[e(\Delta +1)]}^{{y}^{* }}={(N/\epsilon )}^{O(1)}\) for \({y}^{* }=O(\log (N/\epsilon ))\). Therefore, since writing down the output state and the relevant operators takes polynomial time in N/ϵ for any local measurement, we can efficiently simulate the system. For photon-number detection, as in boson sampling7, one may simply use the Clifford-Clifford algorithm, whose complexity is given by \(\tilde{O}({2}^{{y}^{* }})=\,\text{poly}\,(N/\epsilon )\)56.
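As a quick numerical sanity check of this counting, the sketch below compares the exact dimension of a y-photon space on Δy modes with the bound [e(Δ + 1)]^y. `component_dimension` is an illustrative name, and the chosen values of Δ and y are arbitrary.

```python
from math import comb, e


def component_dimension(y, delta):
    """Dimension of the space of y photons in at most delta * y output modes."""
    return comb(delta * y + y - 1, y)


# Check comb(delta*y + y - 1, y) <= (e * (delta + 1))**y for a range of sizes.
for delta in (2, 4, 9):
    for y in range(1, 40):
        assert component_dimension(y, delta) <= (e * (delta + 1)) ** y

print(component_dimension(10, 4), (e * 5) ** 10)
```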

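If Δ scales with the system size N, i.e., beyond constant depth, and the measurement is not photon-number detection, the above counting gives a superpolynomially increasing dimension in N/ϵ. In this case, to be more efficient, consider y* single photons as an input and note that a linear-optical circuit \(\hat{U}\) transforms the creation operators of input modes \({\hat{a}}_{j}^{\dagger }\) as

\[{\hat{a}}_{j}^{\dagger }\to \sum _{i=1}^{{M}^{* }}{U}_{ij}{\hat{b}}_{i}^{\dagger }={\hat{u}}_{j}^{\dagger }+{\hat{d}}_{j}^{\dagger },\quad {\hat{u}}_{j}^{\dagger }\equiv \sum _{i=1}^{L}{U}_{ij}{\hat{b}}_{i}^{\dagger },\quad {\hat{d}}_{j}^{\dagger }\equiv \sum _{i=L+1}^{{M}^{* }}{U}_{ij}{\hat{b}}_{i}^{\dagger },\]

where U is the M* × M* unitary matrix characterizing the linear-optical circuit, with M* being the relevant number of modes, and we set a bipartition between output modes [1, …, L] and [L + 1, …, M*]. Then, the output state can be written as

\[\hat{U}\prod _{j=1}^{{y}^{* }}{\hat{a}}_{j}^{\dagger }\left\vert 0\right\rangle =\prod _{j=1}^{{y}^{* }}({\hat{u}}_{j}^{\dagger }+{\hat{d}}_{j}^{\dagger })\left\vert 0\right\rangle =\sum _{S\subseteq [{y}^{* }]}\Big(\prod _{j\in S}{\hat{u}}_{j}^{\dagger }\Big)\Big(\prod _{j\notin S}{\hat{d}}_{j}^{\dagger }\Big)\left\vert 0\right\rangle .\]

Each of the \({2}^{{y}^{* }}\) terms is a product of a state on modes [1, …, L] and a state on modes [L + 1, …, M*]. Therefore, for any bipartition, the output state can be described by at most \({2}^{{y}^{* }}=\,\text{poly}\,(N/\epsilon )\) singular values, implying that a matrix product state (MPS) with bond dimension poly(N/ϵ) can describe the state57, which can be found by time-evolving block decimation58. Note that if the circuit is not linear-optical, the above decomposition is no longer valid because output bosonic operators may contain a product of two operators from each partition (e.g., \({\hat{a}}_{1}^{\dagger }{\hat{a}}_{M}^{\dagger }\)).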
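The Schmidt-rank argument can be checked on a toy instance. The sketch below assumes the convention a_j† → Σ_i U_ij b_i†; it computes all Fock amplitudes of y single photons sent through a random M-mode unitary (built from a QR decomposition, which is not exactly Haar-distributed but is unitary, which is all that matters here) using brute-force permanents, and then measures the rank across the cut between modes [0, L) and [L, M). All helper names are hypothetical, and the brute-force approach is only meant for a handful of photons.

```python
import itertools
import math

import numpy as np


def permanent(a):
    """Brute-force permanent; adequate for the few photons used here."""
    n = a.shape[0]
    return sum(np.prod([a[i, s[i]] for i in range(n)])
               for s in itertools.permutations(range(n)))


def output_amplitudes(U, y):
    """Fock amplitudes for single photons in input modes 0..y-1, using the
    convention a_j^dag -> sum_i U[i, j] b_i^dag."""
    M = U.shape[0]
    amps = {}
    for rows in itertools.combinations_with_replacement(range(M), y):
        n = tuple(int(k) for k in np.bincount(rows, minlength=M))
        norm = math.sqrt(math.prod(math.factorial(k) for k in n))
        amps[n] = permanent(U[np.ix_(list(rows), list(range(y)))]) / norm
    return amps


def schmidt_rank(amps, L, tol=1e-10):
    """Number of nonzero singular values across the cut [0, L) | [L, M)."""
    left = sorted({n[:L] for n in amps})
    right = sorted({n[L:] for n in amps})
    li = {p: k for k, p in enumerate(left)}
    ri = {p: k for k, p in enumerate(right)}
    mat = np.zeros((len(left), len(right)), dtype=complex)
    for n, amp in amps.items():
        mat[li[n[:L]], ri[n[L:]]] = amp
    return int(np.linalg.matrix_rank(mat, tol=tol))


rng = np.random.default_rng(0)
M, y, L = 6, 3, 3
Q, _ = np.linalg.qr(rng.normal(size=(M, M)) + 1j * rng.normal(size=(M, M)))
amps = output_amplitudes(Q, y)
print(schmidt_rank(amps, L), "<=", 2 ** y)
```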

The remaining question is the algorithm's error caused by skipping the case where \(\mathop{\max }\limits_{i}| {G}_{i}| > {y}^{* }\). The output probability q of such an algorithm is given by

\[q(m)=p(m| E),\]

where p(m∣E) is the conditional probability on the case E where \(\mathop{\max }\nolimits_{i}| {G}_{i}| \le {y}^{* }\); hence, the true output probability is written as

\[p(m)=p(E)\,p(m| E)+p({E}^{\perp })\,p(m| {E}^{\perp }),\]

where E⊥ is the case where \(\mathop{\max }\limits_{i}| {G}_{i}| > {y}^{* }\). Then, the TVD between q(m) and p(m) can be shown to be smaller than ϵ (see Methods). Finally, the overhead per sample from restarting is 1/p(E) ≤ 1/(1 − ϵ) = O(1). Thus, we have

Theorem 1

For a given loss rate 1 − η and a linear-optical circuit of maximum degree Δ with N single-photon inputs, if η < 1/Δ², there exists a classical algorithm that can approximately simulate the circuit in time poly(N, 1/ϵ) within TVD ϵ.

Hence, for constant-depth linear-optical circuits, d = O(1) and Δ = O(1), there is a threshold of loss rate above which it becomes classically easy to simulate. Note that the actual threshold depends on the architecture (see below for further discussion). We emphasize that the measurement basis can be arbitrary as long as it is local; thus, our theorem can be employed beyond boson sampling.

Here, the notion of approximate simulation is crucial because exact classical simulation of constant-depth lossy linear-optical circuits is hard unless the PH collapses to a finite level. This can be shown by noting that postselecting lossy boson sampling on the no-loss event yields lossless boson sampling, and that constant-depth boson sampling with postselection has the power of PostBQP owing to measurement-based quantum computing35. Thus, if lossy constant-depth boson sampling could be exactly simulated efficiently by classical algorithms, we would have \({\rm{PH}}\subseteq {{\rm{P}}}^{{\rm{PP}}}={{\rm{P}}}^{{\rm{PostBQP}}}={{\rm{P}}}^{{\rm{PostBPP}}}\)59, which contradicts the fact that \({{\rm{P}}}^{{\rm{PostBPP}}}\) is in the PH60, assuming that the PH is infinite. Hence, there is a gap between the complexity of exact simulation and that of approximate simulation of constant-depth lossy boson sampling when the loss rate is above the threshold.

We also consider cases where each layer of beam splitters has transmission rate \({\eta }_{1} < 1\), so that \(\eta ={\eta }_{1}^{d}\). Since \(\Delta \le {2}^{d}\) for any architecture and a loss channel with a uniform loss rate commutes with beam splitters (see, e.g., refs. 16,19), we have the following corollary:

Corollary 1

For a linear-optical circuit with N single-photon inputs and a transmission rate per layer \({\eta }_{1}\), if \({\eta }_{1} < 1/4\), there exists a classical algorithm that can approximately simulate the circuit in time poly(N, 1/ϵ) within TVD ϵ.

For this case, the presented MPS method is crucial because the depth may not be constant.
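The per-layer threshold in Corollary 1 follows by combining η = η₁^d with the architecture-independent bound Δ ≤ 2^d in the condition ηΔ² < 1 of Theorem 1. Written out as a worked step:

```latex
\eta\,\Delta^{2} \;\le\; \eta_{1}^{d}\,\bigl(2^{d}\bigr)^{2} \;=\; \bigl(4\eta_{1}\bigr)^{d} \;<\; 1
\qquad\text{whenever}\qquad \eta_{1} < \tfrac{1}{4}\quad (d \ge 1).
```

Since the final inequality holds for every d ≥ 1, the condition itself places no restriction on the depth.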

Partial distinguishability noise

A similar observation can be used when input photons are partially distinguishable, which is another important noise model in optical systems14,15,61,62. The underlying physical mechanism that makes photons partially distinguishable is their other degrees of freedom, such as polarization and temporal shape. Consequently, when these other degrees of freedom do not match perfectly, the overlap of the wave functions of a pair of photons becomes less than 1 (see ref. 61 for more discussion). For simplicity, we assume that the overlap for any pair of photons is uniform and equal to x, with 0 < x < 1.

Reference 62 shows that such a model transforms an N single-photon state into the following density matrix:

\[\hat{\rho }=\sum _{k=0}^{N}{p}_{k}\sum _{I\subseteq [N],\,|I|=k}{\hat{\rho }}_{I},\]

where \({\hat{\rho }}_{I}\) is the state in which the photons in I are indistinguishable and the others are distinguishable, and \({p}_{k}\equiv {x}^{k}{(1-x)}^{N-k}\). Thus, the quantum state of N partially distinguishable single photons is equivalent to a mixture of N-particle states obtained by selecting k particles at random, with k following a binomial distribution with success probability x, and treating the selected particles as indistinguishable bosons and the others as fully distinguishable particles. Therefore, the remaining N − k photons do not interfere with any others. From the graph perspective, this corresponds to decoupling the corresponding vertices from the original graph, as illustrated in Fig. 2. Unlike photon loss, the decoupled vertices are not discarded; instead, each forms its own single-vertex bipartite graph. Lemma 1 still applies here because the resultant bipartite graphs still have maximum size \(O(\log (N/\epsilon ))\) with high probability. Thus,

Fig. 2: a Bipartite graph from Fig. 1. b When the second and third photons become distinguishable from the other photons, they are isolated from the other vertices. Therefore, the resultant system can be simulated by simulating the individual smaller systems.

Theorem 2

For partial distinguishability 1 − x and a linear-optical circuit of maximum degree Δ with N single-photon inputs, if x < 1/Δ², there exists a classical algorithm that can approximately simulate the circuit in time poly(N, 1/ϵ) within TVD ϵ.
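A minimal sketch of the sampling step behind Theorem 2, assuming a dict-of-sets edge representation (input vertex to the output modes it reaches): each photon independently joins the indistinguishable set I with probability x, the photons in I keep the shared bipartite graph, and every other photon is decoupled into its own single-vertex graph. The function names are hypothetical, and the subsequent component search and per-component simulation are not shown.

```python
import random
from math import comb, isclose


def sample_indistinguishable(inputs, x, rng=random):
    """Draw one term of the mixture over subsets I: each photon independently
    lands in I (indistinguishable) with probability x."""
    indist, dist = [], []
    for v in inputs:
        (indist if rng.random() < x else dist).append(v)
    return indist, dist


def graph_after_distinguishability(edges, x, rng=random):
    """Keep the shared bipartite graph only for photons in I; every
    distinguishable photon becomes its own single-photon graph (decoupled)."""
    indist, dist = sample_indistinguishable(list(edges), x, rng)
    shared = {v: edges[v] for v in indist}
    isolated = [{v: edges[v]} for v in dist]
    return shared, isolated


# Sanity check: the weights binom(N, k) * p_k with p_k = x^k (1 - x)^(N - k) sum to 1.
N, x = 12, 0.3
assert isclose(sum(comb(N, k) * x**k * (1 - x) ** (N - k) for k in range(N + 1)), 1.0)
```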

Numerical result

To clearly see the percolation effect, we numerically investigate the maximum size of components \(\mathop{\max }\limits_{i}| {G}_{i}|\) after randomly removing vertices from A. We consider two cases: linear-optical circuits (i) with non-local beam splitters and (ii) with 1D-local beam splitters. For simplicity of the numerical simulation, in the non-local case, for a given input photon number N and the number of modes M = 8N, we randomly generate Δ edges from each input, and, in the 1D case, we generate Δ edges from each input mode to the Δ closest output modes.

For a fixed Δ and different loss rates, we increase the number of input modes N and analyze the largest component size, \(\mathop{\max }\limits_{i}| {G}_{i}|\), as shown in Fig. 3. Clearly, the largest component size grows in distinct ways depending on the loss rate: when the loss rate is sufficiently low, it increases linearly, whereas when the loss rate is high enough, it increases logarithmically, as expected from Lemma 1 (see SM Section S2). We also emphasize that the transition point depends on the architecture. For instance, when Δ = 9, the transition occurs between η = 0.4 and η = 0.7 for 1D systems, whereas it occurs between η = 0.02 and η = 0.14 for non-local systems, implying that the non-local structure is much more robust against loss than the 1D structure. Therefore, while Lemma 1 presents the worst-case threshold, the actual threshold may depend on the details of the architecture, such as its geometry (e.g., non-local or geometrically local beam splitters) and how many output modes are relevant.

Fig. 3: The dashed lines indicate a component size of 56 vertices, corresponding to the largest permanent computed so far using a supercomputer71, i.e., the practical boundary of our classical algorithm. (See the main text for details and the Supplementary Material for different scales of each axis.)

Finally, we note that state-of-the-art (Gaussian) boson sampling experiments suffer from a loss rate of around 0.5–0.7, i.e., η ≈ 0.3–0.5 (refs. 9,10,11,12), and that the largest single-photon boson sampling so far, to the best of our knowledge, involves up to 20 photons63. Figure 3 shows that while a very shallow-depth non-local circuit with Δ = 4 is easy to simulate using our algorithm in this regime, even when the initial number of photons is as large as 10⁴, as the circuit depth increases (e.g., Δ = 9), our algorithm starts to require computational resources beyond currently available supercomputers. Similar behavior can be observed for 1D linear-optical circuits. Overall, unless the loss rate is very low, roughly a hundred initial photons are required for shallow-depth boson sampling to be beyond the reach of our classical algorithm. It is worth emphasizing that this boundary is not necessarily the boundary of all classical algorithms, because there may exist better classical algorithms than the presented one.
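A rough sketch of the kind of numerical experiment described above, assuming the stated constructions: for the non-local case, each input connects to Δ random outputs among M = 8N modes; for 1D, input v connects to outputs v, …, v + Δ − 1, a hypothetical stand-in for "the Δ closest output modes". Components are tracked with a union-find structure. This is not the authors' code, and its printed numbers are not meant to reproduce Fig. 3 exactly.

```python
import random


def nonlocal_graph(N, delta, rng):
    """Each input connects to delta distinct output modes drawn from M = 8N."""
    return {v: set(rng.sample(range(8 * N), delta)) for v in range(N)}


def local_1d_graph(N, delta):
    """Input v connects to the delta consecutive output modes v, ..., v+delta-1
    (a hypothetical placement standing in for 'the delta closest modes')."""
    return {v: set(range(v, v + delta)) for v in range(N)}


def largest_component(edges, eta, rng):
    """Size of the largest component after keeping each input w.p. eta."""
    kept = [v for v in edges if rng.random() < eta]
    parent = {v: v for v in kept}

    def find(v):
        while parent[v] != v:
            parent[v] = parent[parent[v]]   # path halving
            v = parent[v]
        return v

    first_owner = {}                        # output mode -> first kept input seen
    for v in kept:
        for w in edges[v]:
            if w in first_owner:
                parent[find(v)] = find(first_owner[w])   # merge the two components
            else:
                first_owner[w] = v
    sizes = {}
    for v in kept:
        root = find(v)
        sizes[root] = sizes.get(root, 0) + 1
    return max(sizes.values(), default=0)


rng = random.Random(0)
for eta in (0.02, 0.14, 0.4, 0.7):
    nonlocal_sizes = [largest_component(nonlocal_graph(N, 9, rng), eta, rng)
                      for N in (100, 1000, 10000)]
    local_sizes = [largest_component(local_1d_graph(N, 9), eta, rng)
                   for N in (100, 1000, 10000)]
    print(f"eta={eta}: non-local {nonlocal_sizes}, 1D {local_sizes}")
```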

Discussion on more general cases

We now discuss the possibility of generalizing the above results to more general setups. First of all, for photon-loss cases, it is not hard to see that a similar theorem holds even if we replace single photons with general Fock states \(\left\vert n\right\rangle\). This is because a Fock state with photon number n transforms under photon loss as

\[\left\vert n\right\rangle \left\langle n\right\vert \mapsto \sum _{k=0}^{n}\left(\begin{array}{c}n\\ k\end{array}\right){\eta }^{k}{(1-\eta )}^{n-k}\left\vert k\right\rangle \left\langle k\right\vert .\]

Therefore, we can follow the same procedure as in the single-photon case. A difference is that the input becomes vacuum, and thus the associated vertex is removed from the bipartite graph, with probability \({(1-\eta )}^{n}\), i.e., the k = 0 term of the binomial distribution above. Consequently, the percolation threshold becomes \([1-{(1-\eta )}^{n}]{\Delta }^{2} < 1\). In this case, since each input mode has at most n photons, the dimension of the associated Hilbert space for y* is at most

\[\left(\begin{array}{c}\Delta {y}^{* }+n{y}^{* }-1\\ n{y}^{* }\end{array}\right)\le {\left[\frac{e(\Delta +n)}{n}\right]}^{n{y}^{* }}.\]
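The Fock-state threshold and dimension bound can be evaluated directly. This is a minimal sketch; `retention_probability`, `below_fock_threshold`, and `fock_dimension` are hypothetical names, and the test values of η, n, and Δ are arbitrary. At fixed η, larger n makes the threshold condition harder to satisfy, since a vertex is removed only when all n photons are lost.

```python
from math import comb, e


def retention_probability(eta, n):
    """Probability that |n> keeps at least one photon after loss rate 1 - eta."""
    return 1 - (1 - eta) ** n


def below_fock_threshold(eta, n, delta):
    """Sufficient percolation condition [1 - (1 - eta)^n] * Delta^2 < 1."""
    return retention_probability(eta, n) * delta**2 < 1


def fock_dimension(y, n, delta):
    """Exact count comb(delta*y + n*y - 1, n*y) and the bound (e(delta+n)/n)^(n*y)."""
    exact = comb(delta * y + n * y - 1, n * y)
    return exact, (e * (delta + n) / n) ** (n * y)


print(below_fock_threshold(0.05, 1, 4), below_fock_threshold(0.05, 2, 4))
```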

Similarly, for more general input states than Fock states, if the largest photon number of each input state for each mode is constant, the Hilbert space’s dimension is at most polynomial in N/ϵ; more generally, as long as the largest total photon number is bounded by a linear function of y*, the Hilbert space’s dimension is still upper-bounded by poly(N/ϵ). For larger depth d = ω(1), we again need to use the MPS method (see Methods).

Hence, a sufficient condition for the percolation result to apply is that the lossy input state is written as \(\hat{\rho }=(1-p)\left\vert 0\right\rangle \left\langle 0\right\vert +p\hat{\sigma }\), where 0 < p < 1, \(\hat{\sigma }\ge 0\), \({\rm{Tr}}[\hat{\sigma }]=1\), and \(\hat{\sigma }\) can be written in the Fock basis with at most a constant photon number. As the Fock-state example implies, the threshold value depends on how the input state transforms under a loss channel. Thus, an analytic way to compute the threshold value for arbitrary input states has to be further studied. Also, we emphasize that the assumption that the circuit is linear-optical is important because otherwise, we may be able to apply an operation that generates photons in the middle of the circuit, such as a squeezing operation, after the loss channel on the input.

Discussion

We showed that a threshold of loss or noise rate exists above which classical computers can efficiently simulate constant-depth linear-optical circuits with certain input states. Thus, shallow-depth circuits may also be vulnerable to loss and noise because the entanglement in the system constructed by shallow-depth circuits may be more easily annihilated by noise.

An interesting direction for future work is to find a general condition on input states under which the percolation result gives the easiness result. Whereas the threshold for Fock states is easily found, it is not immediately clear how to find analytically the threshold for more general quantum states, such as Gaussian states64. Also, while our results hold for arbitrary architectures as long as the depth is constant, the depth limit might be pushed further depending on the details of the architecture, such as the geometry of the circuits and the input state configuration65,66,67. Conversely, investigating the possible hardness of constant-depth boson sampling with a loss rate below the threshold is another interesting direction, which could enable us to demonstrate quantum advantage even under practical loss and noise. Finally, the percolation lemma immediately applies to the continuous-variable erasure channel considered in refs. 68,69,70. Thus, we may be able to apply a similar technique to other noise models, such as more general Gaussian noise64.

Methods

Proof of Lemma 1

We provide the proof of Lemma 1 in the main text. The proof is based on refs. 53,54,55 and is adapted to bipartite-graph cases.

Proof

Suppose we have an N by M bipartite graph G = (A, B, E) with maximum degree Δ, and then remove some vertices on the left-hand side A with probability 1 − η and edges connected to them.

We present an algorithm that constructs a random graph \({G}^{{\prime} }=({A}^{{\prime} },{B}^{{\prime} },{E}^{{\prime} })\) distributed as described in the lemma. We initialize \({G}^{{\prime} }\) to be the empty graph. We then construct a set S by querying the vertices in A; a query to vertex v ∈ A succeeds with probability η, in which case v is added to S. When \(S={{\emptyset}}\), the algorithm initializes S by querying unqueried vertices in A until the first successful query. When \(S\,\ne\, {{\emptyset}}\), the algorithm queries all unqueried vertices in \({N}_{G}(S)\), the set of vertices in A that share a neighbor in B with some vertex in S. The size of \({N}_{G}(S)\) is upper-bounded by \(|S|{\Delta }^{2}\). Whenever the algorithm runs out of unqueried vertices in \({N}_{G}(S)\), it adds S, the vertices in B connected to S, and the corresponding edges to \({G}^{{\prime} }\), resets S to the empty set, and continues. The algorithm finishes when there are no more unqueried vertices in A. Note that each time the algorithm adds S together with its neighbors in B and the corresponding edges to \({G}^{{\prime} }\), the added graph is disconnected from the graphs added in previous steps, which results in the set of disjoint components \({\{{G}_{i}\}}_{i = 1}^{m}\), where m is the number of such steps.

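If there is a component G_i of size y + 1 or higher, ∣S∣ must have reached y + 1 at some point. At this point, let v be the most recent vertex added to S. To reach this point, the algorithm could have made at most \(|S\cup {N}_{G}(S\setminus \{v\})|\le {\Delta }^{2}(|S|-1)=y{\Delta }^{2}\) queries, exactly y + 1 of which were successful. Hence, the probability of forming a G_i of size y + 1 or higher is upper-bounded as

\[\Pr (|{G}_{i}|\ge y+1)\le \Pr ({\rm{Bin}}(y{\Delta }^{2},\eta ) > y).\]

Here, Pr(Bin(yΔ², η) > y) denotes the probability of obtaining more than y successes in yΔ² binomial trials with success probability η. Using the Chernoff bound with mean μ = ηyΔ²,

\[\Pr ({\rm{Bin}}(y{\Delta }^{2},\eta ) > y)\le \Pr ({\rm{Bin}}(y{\Delta }^{2},\eta )\ge (1+\delta )\mu )\le {\left(\frac{{e}^{\delta }}{{(1+\delta )}^{1+\delta }}\right)}^{\mu },\]

and setting 1 + δ = 1/(Δ²η), which gives (1 + δ)μ = y and δ > 0 because ηΔ² < 1,

\[\Pr ({\rm{Bin}}(y{\Delta }^{2},\eta ) > y)\le {e}^{y-\eta y{\Delta }^{2}}{(\eta {\Delta }^{2})}^{y}={\left(e\,\eta {\Delta }^{2}{e}^{-\eta {\Delta }^{2}}\right)}^{y}.\]

By applying the union bound over the at most N components,

\[\Pr \left[\mathop{\max }\limits_{i}|{G}_{i}| > y\right]\le N{\left(e\,\eta {\Delta }^{2}{e}^{-\eta {\Delta }^{2}}\right)}^{y},\]

which is at most ϵ when y ≥ ln(N/ϵ)/(ηΔ² − 1 − ln(ηΔ²)), i.e., for some y = O(log(N/ϵ)), because the base \(e\,\eta {\Delta }^{2}{e}^{-\eta {\Delta }^{2}}\) is strictly smaller than 1 when ηΔ² < 1.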
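The bound can be evaluated numerically. This sketch assumes the standard multiplicative Chernoff bound Pr[X ≥ (1 + δ)μ] ≤ (e^δ/(1 + δ)^(1+δ))^μ, which with μ = ηyΔ² and 1 + δ = 1/(ηΔ²) becomes (eηΔ²e^(−ηΔ²))^y. It compares that bound with the exact binomial tail and computes the smallest integer y (up to floating-point rounding) for which N times the bound is at most ϵ. The function names and the sample values η = 0.02, Δ = 4 are illustrative.

```python
from math import ceil, comb, e, exp, log


def chernoff_tail(y, eta, delta):
    """(e * r * exp(-r))**y with r = eta * Delta^2, bounding Pr[Bin(y*Delta^2, eta) > y]."""
    r = eta * delta**2
    return (e * r * exp(-r)) ** y


def exact_tail(y, eta, delta):
    """Exact Pr[Bin(y*Delta^2, eta) > y] for comparison."""
    trials = y * delta**2
    return sum(comb(trials, k) * eta**k * (1 - eta) ** (trials - k)
               for k in range(y + 1, trials + 1))


def y_star(N, eps, eta, delta):
    """Smallest integer y with N * chernoff_tail(y) <= eps (needs eta * Delta^2 < 1)."""
    r = eta * delta**2
    assert r < 1, "below-threshold condition eta * Delta^2 < 1 violated"
    return ceil(log(N / eps) / (r - 1 - log(r)))


print(y_star(10**3, 1e-2, 0.02, 4), y_star(10**6, 1e-2, 0.02, 4))
```

Multiplying N by 1000 raises y* only additively, reflecting the O(log(N/ϵ)) scaling.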

It is worth emphasizing that the lemma can also be understood from a previous result53,55 by defining a graph from the bipartite graph such that the graph's vertices are A and two vertices share an edge if they are connected through a vertex in B in the original bipartite graph. Physically, two input modes are connected if photons from them can be detected in the same output mode, i.e., they can interfere with each other. Since each vertex in A has at most Δ neighbors in B and each of these has at most Δ neighbors in A, the maximum degree of this new graph is upper-bounded by Δ².
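The construction of this auxiliary graph on A, and its degree bound, can be sketched as follows, assuming a dict from each input vertex to its set of output modes; the helper names and the random test graph are hypothetical. Each input reaches at most Δ outputs and each output is shared by at most Δ inputs, so no input has more than Δ(Δ − 1) < Δ² neighbors.

```python
import random


def interaction_graph(edges):
    """Graph on A: inputs u and v are adjacent if they share an output mode
    (photons from them can interfere)."""
    inputs_at = {}
    for v, outputs in edges.items():
        for w in outputs:
            inputs_at.setdefault(w, set()).add(v)
    adj = {v: set() for v in edges}
    for vs in inputs_at.values():
        for v in vs:
            adj[v] |= vs - {v}
    return adj, inputs_at


def max_degree(edges, inputs_at):
    """Delta over both sides of the bipartite graph."""
    return max(max(map(len, edges.values())), max(map(len, inputs_at.values())))


rng = random.Random(0)
edges = {v: set(rng.sample(range(60), 3)) for v in range(40)}
adj, inputs_at = interaction_graph(edges)
delta = max_degree(edges, inputs_at)
print(max(map(len, adj.values())), "<=", delta**2)
```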

Upper bound of total variation distance

We derive the upper bound of the approximation error caused by skipping the case where the size of a connected component is larger than y*. Recall that the output probability q of such an algorithm is given by

\[q(m)=p(m| E),\]

where p(m∣E) is the true conditional probability of the case E where the maximum size of the connected components is smaller than or equal to y*; hence, the true probability is written as

\[p(m)=p(E)\,p(m| E)+p({E}^{\perp })\,p(m| {E}^{\perp }),\]

where E⊥ is the case where the maximum size of the connected components is larger than y*. Then, the total variation distance between the suggested algorithm's output probability q(m) and the true output probability p(m) is smaller than ϵ:

\[\frac{1}{2}\sum _{m}|q(m)-p(m)|=\frac{p({E}^{\perp })}{2}\sum _{m}|p(m| E)-p(m| {E}^{\perp })|\le p({E}^{\perp })\le \epsilon ,\]

where the last inequality follows from Lemma 1 with y = y*.
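The identity above can be checked numerically with toy distributions. In this sketch, the conditional distributions p(m|E) and p(m|E⊥) are random placeholders rather than outputs of an actual linear-optical circuit, and Pr(E⊥) = 0.01 stands in for ϵ.

```python
import numpy as np

rng = np.random.default_rng(1)
K = 16                                        # number of outcomes m (toy size)
p_given_E = rng.dirichlet(np.ones(K))         # placeholder for p(m | E)
p_given_notE = rng.dirichlet(np.ones(K))      # placeholder for p(m | E_perp)
p_notE = 0.01                                 # Pr(E_perp), playing the role of epsilon

p = (1 - p_notE) * p_given_E + p_notE * p_given_notE   # true distribution p(m)
q = p_given_E                                          # algorithm's output q(m)

tvd = 0.5 * np.abs(q - p).sum()
print(tvd, "<=", p_notE)
```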

Matrix product state for more general states than single photons

In this section, we show that any linear-optical circuit with N input states that have a constant maximum photon number can be simulated by a matrix product state with bond dimension at most \({c}^{N}\) for a constant c; thus, the computational cost is at most exponential in N.

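Let us consider a linear-optical circuit \(\hat{U}\), which transforms the creation operators of input modes \({\hat{a}}_{j}^{\dagger }\) into the creation operators of output modes \({\hat{b}}_{j}^{\dagger }\) as

\[{\hat{a}}_{j}^{\dagger }\to \sum _{i=1}^{M}{U}_{ij}{\hat{b}}_{i}^{\dagger }={\hat{u}}_{j}^{\dagger }+{\hat{d}}_{j}^{\dagger },\quad {\hat{u}}_{j}^{\dagger }\equiv \sum _{i=1}^{L}{U}_{ij}{\hat{b}}_{i}^{\dagger },\quad {\hat{d}}_{j}^{\dagger }\equiv \sum _{i=L+1}^{M}{U}_{ij}{\hat{b}}_{i}^{\dagger },\]

where U is an M × M unitary matrix characterizing the linear-optical circuit \(\hat{U}\), and we split the output modes into the subsystems u = [1, …, L] and d = [L + 1, …, M]. When we prepare N input states with maximum photon number \({n}_{\max }\),

\[\left\vert \psi \right\rangle =\prod _{j=1}^{N}\sum _{n=0}^{{n}_{\max }}\frac{{c}_{j,n}}{\sqrt{n!}}{({\hat{a}}_{j}^{\dagger })}^{n}\left\vert 0\right\rangle ,\]

the total input state transforms as

\[\prod _{j=1}^{N}\sum _{n=0}^{{n}_{\max }}\frac{{c}_{j,n}}{\sqrt{n!}}\sum _{k=0}^{n}\left(\begin{array}{c}n\\ k\end{array}\right){({\hat{u}}_{j}^{\dagger })}^{k}{({\hat{d}}_{j}^{\dagger })}^{n-k}\left\vert 0\right\rangle .\]

Thus, the output state can be written as a linear combination of at most \({[({n}_{\max }+1)({n}_{\max }+2)/2]}^{N}\) vectors, one for each choice of (k, n − k) for every j, each of which is a product of a vector in u and a vector in d. Therefore, as long as \({n}_{\max }\) is constant, the output state has at most exponentially many (in N) singular values; hence, the matrix product state method can simulate the system.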
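The per-mode count (n_max + 1)(n_max + 2)/2 is the number of distinct monomials (û_j†)^k (d̂_j†)^l with k + l ≤ n_max produced by expanding (û_j† + d̂_j†)^n for n = 0, …, n_max. A tiny enumeration, using a hypothetical helper name, confirms it:

```python
def split_monomials(n_max):
    """Distinct (k, l) with k + l <= n_max: the monomials (u_j^dag)^k (d_j^dag)^l
    produced by expanding (u_j^dag + d_j^dag)^n for n = 0, ..., n_max."""
    return {(k, n - k) for n in range(n_max + 1) for k in range(n + 1)}


for n_max in range(10):
    assert len(split_monomials(n_max)) == (n_max + 1) * (n_max + 2) // 2

# n_max = 1 gives 3 monomials per input: vacuum, u_j^dag, and d_j^dag.
print(sorted(split_monomials(1)))
```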

Data availability

The data that support the findings of this study are available from the corresponding author upon reasonable request.

References

Duan, L.-M., Lukin, M. D., Cirac, J. I. & Zoller, P. Long-distance quantum communication with atomic ensembles and linear optics. Nature 414, 413–418 (2001).

Kok, P. et al. Linear optical quantum computing with photonic qubits. Rev. Mod. Phys. 79, 135 (2007).

Sangouard, N., Simon, C., De Riedmatten, H. & Gisin, N. Quantum repeaters based on atomic ensembles and linear optics. Rev. Mod. Phys. 83, 33 (2011).

Pirandola, S., Bardhan, B. R., Gehring, T., Weedbrook, C. & Lloyd, S. Advances in photonic quantum sensing. Nat. Photonics 12, 724–733 (2018).

Slussarenko, S. & Pryde, G. J. Photonic quantum information processing: A concise review. Appl. Phys. Rev. 6 (2019).

Bourassa, J. E. et al. Blueprint for a scalable photonic fault-tolerant quantum computer. Quantum 5, 392 (2021).

Aaronson, S. & Arkhipov, A. The computational complexity of linear optics. In Proceedings of the forty-third annual ACM Symposium on Theory of Computing, 333–342 (2011).

Knill, E., Laflamme, R. & Milburn, G. J. A scheme for efficient quantum computation with linear optics. Nature 409, 46–52 (2001).

Zhong, H.-S. et al. Quantum computational advantage using photons. Science 370, 1460–1463 (2020).

Zhong, H.-S. et al. Phase-programmable Gaussian boson sampling using stimulated squeezed light. Phys. Rev. Lett. 127, 180502 (2021).

Madsen, L. S. et al. Quantum computational advantage with a programmable photonic processor. Nature 606, 75–81 (2022).

Deng, Y.-H. et al. Gaussian boson sampling with pseudo-photon-number-resolving detectors and quantum computational advantage. Phys. Rev. Lett. 131, 150601 (2023).

Oszmaniec, M. & Brod, D. J. Classical simulation of photonic linear optics with lost particles. N. J. Phys. 20, 092002 (2018).

Renema, J., Shchesnovich, V. & Garcia-Patron, R. Classical simulability of noisy boson sampling. arXiv preprint arXiv:1809.01953 (2018).

Renema, J. J. et al. Efficient classical algorithm for boson sampling with partially distinguishable photons. Phys. Rev. Lett. 120, 220502 (2018).

García-Patrón, R., Renema, J. J. & Shchesnovich, V. Simulating boson sampling in lossy architectures. Quantum 3, 169 (2019).

Qi, H., Brod, D. J., Quesada, N. & García-Patrón, R. Regimes of classical simulability for noisy Gaussian boson sampling. Phys. Rev. Lett. 124, 100502 (2020).

Renema, J. J. Simulability of partially distinguishable superposition and Gaussian boson sampling. Phys. Rev. A 101, 063840 (2020).

Oh, C., Noh, K., Fefferman, B. & Jiang, L. Classical simulation of lossy boson sampling using matrix product operators. Phys. Rev. A 104, 022407 (2021).

Shi, J. & Byrnes, T. Effect of partial distinguishability on quantum supremacy in Gaussian boson sampling. npj Quantum Inf. 8, 54 (2022).

Oh, C., Jiang, L. & Fefferman, B. On classical simulation algorithms for noisy boson sampling. arXiv preprint arXiv:2301.11532 (2023).

Liu, M., Oh, C., Liu, J., Jiang, L. & Alexeev, Y. Simulating lossy Gaussian boson sampling with matrix-product operators. Phys. Rev. A 108, 052604 (2023).

Oh, C., Liu, M., Alexeev, Y., Fefferman, B. & Jiang, L. Classical algorithm for simulating experimental Gaussian boson sampling. Nat. Phys. 20, 1461–1468 (2024).

Michael, M. H. et al. New class of quantum error-correcting codes for a bosonic mode. Phys. Rev. X 6, 031006 (2016).

Chamberland, C. et al. Building a fault-tolerant quantum computer using concatenated cat codes. PRX Quantum 3, 010329 (2022).

Marshall, J. Distillation of indistinguishable photons. Phys. Rev. Lett. 129, 213601 (2022).

Sivak, V. et al. Real-time quantum error correction beyond break-even. Nature 616, 50–55 (2023).

Faurby, C. F. D. et al. Purifying photon indistinguishability through quantum interference. Phys. Rev. Lett. 133, 033604 (2024).

Brod, D. J. & Oszmaniec, M. Classical simulation of linear optics subject to nonuniform losses. Quantum 4, 267 (2020).

Oh, C. Recent theoretical and experimental progress on boson sampling. Curr. Opt. Photonics 9, 1–18 (2025).

Mari, A. & Eisert, J. Positive Wigner functions render classical simulation of quantum computation efficient. Phys. Rev. Lett. 109, 230503 (2012).

Rahimi-Keshari, S., Ralph, T. C. & Caves, C. M. Sufficient conditions for efficient classical simulation of quantum optics. Phys. Rev. X 6, 021039 (2016).

Kalai, G. & Kindler, G. Gaussian noise sensitivity and bosonsampling. arXiv preprint arXiv:1409.3093 (2014).

Reck, M., Zeilinger, A., Bernstein, H. J. & Bertani, P. Experimental realization of any discrete unitary operator. Phys. Rev. Lett. 73, 58 (1994).

Brod, D. J. Complexity of simulating constant-depth boson sampling. Phys. Rev. A 91, 042316 (2015).

van der Meer, R., Huber, S., Pinkse, P., García-Patrón, R. & Renema, J. Boson sampling in low-depth optical systems. arXiv preprint arXiv:2110.05099 (2021).

Go, B., Oh, C., Jiang, L. & Jeong, H. Exploring shallow-depth boson sampling: Toward a scalable quantum advantage. Phys. Rev. A 109, 052613 (2024).

Go, B., Oh, C. & Jeong, H. On computational complexity and average-case hardness of shallow-depth boson sampling. arXiv preprint arXiv:2405.01786 (2024).

Crespi, A. et al. Suppression law of quantum states in a 3D photonic fast Fourier transform chip. Nat. Commun. 7, 10469 (2016).

Imany, P., Lingaraju, N. B., Alshaykh, M. S., Leaird, D. E. & Weiner, A. M. Probing quantum walks through coherent control of high-dimensionally entangled photons. Sci. Adv. 6, eaba8066 (2020).

Hu, Y., Reimer, C., Shams-Ansari, A., Zhang, M. & Loncar, M. Realization of high-dimensional frequency crystals in electro-optic microcombs. Optica 7, 1189–1194 (2020).

Lau, H.-K. & James, D. F. Proposal for a scalable universal bosonic simulator using individually trapped ions. Phys. Rev. A 85, 062329 (2012).

Shen, C., Zhang, Z. & Duan, L.-M. Scalable implementation of boson sampling with trapped ions. Phys. Rev. Lett. 112, 050504 (2014).

Chen, W. et al. Scalable and programmable phononic network with trapped ions. Nat. Phys. 19, 877–883 (2023).

Shante, V. K. & Kirkpatrick, S. An introduction to percolation theory. Adv. Phys. 20, 325–357 (1971).

Sahimi, M. Applications of percolation theory (CRC Press, 1994).

Acín, A., Cirac, J. I. & Lewenstein, M. Entanglement percolation in quantum networks. Nat. Phys. 3, 256–259 (2007).

Cuquet, M. & Calsamiglia, J. Entanglement percolation in quantum complex networks. Phys. Rev. Lett. 103, 240503 (2009).

Pant, M., Towsley, D., Englund, D. & Guha, S. Percolation thresholds for photonic quantum computing. Nat. Commun. 10, 1070 (2019).

Aharonov, D. Quantum to classical phase transition in noisy quantum computers. Phys. Rev. A 62, 062311 (2000).

Skinner, B., Ruhman, J. & Nahum, A. Measurement-induced phase transitions in the dynamics of entanglement. Phys. Rev. X 9, 031009 (2019).

Trivedi, R. & Cirac, J. I. Transitions in computational complexity of continuous-time local open quantum dynamics. Phys. Rev. Lett. 129, 260405 (2022).

Rajakumar, J., Watson, J. D. & Liu, Y.-K. Polynomial-time classical simulation of noisy IQP circuits with constant depth. arXiv preprint arXiv:2403.14607 (2024).

Grimmett, G. Some basic techniques. In Percolation, 32–52 (Springer, 1999). https://doi.org/10.1137/1.9781611978322.

Krivelevich, M. The phase transition in site percolation on pseudo-random graphs. arXiv preprint arXiv:1404.5731 (2014).

Clifford, P. & Clifford, R. The classical complexity of boson sampling. In Proceedings of the Twenty-Ninth Annual ACM-SIAM Symposium on Discrete Algorithms, 146–155 (SIAM, 2018).

Vidal, G. Efficient classical simulation of slightly entangled quantum computations. Phys. Rev. Lett. 91, 147902 (2003).

Schollwöck, U. The density-matrix renormalization group in the age of matrix product states. Ann. Phys. 326, 96–192 (2011).

Aaronson, S. Quantum computing, postselection, and probabilistic polynomial-time. Proc. R. Soc. A: Math., Phys. Eng. Sci. 461, 3473–3482 (2005).

Han, Y., Hemaspaandra, L. A. & Thierauf, T. Threshold computation and cryptographic security. SIAM J. Comput. 26, 59–78 (1997).

Tichy, M. C. Sampling of partially distinguishable bosons and the relation to the multidimensional permanent. Phys. Rev. A 91, 022316 (2015).

Moylett, A. E., García-Patrón, R., Renema, J. J. & Turner, P. S. Classically simulating near-term partially-distinguishable and lossy boson sampling. Quantum Sci. Technol. 5, 015001 (2019).

Wang, H. et al. Boson sampling with 20 input photons and a 60-mode interferometer in a \(10^{14}\)-dimensional Hilbert space. Phys. Rev. Lett. 123, 250503 (2019).

Serafini, A. Quantum continuous variables: a primer of theoretical methods (CRC Press, 2017).

Deshpande, A., Fefferman, B., Tran, M. C., Foss-Feig, M. & Gorshkov, A. V. Dynamical phase transitions in sampling complexity. Phys. Rev. Lett. 121, 030501 (2018).

Oh, C., Lim, Y., Fefferman, B. & Jiang, L. Classical simulation of boson sampling based on graph structure. Phys. Rev. Lett. 128, 190501 (2022).

Qi, H. et al. Efficient sampling from shallow Gaussian quantum-optical circuits with local interactions. Phys. Rev. A 105, 052412 (2022).

Wittmann, C. et al. Quantum filtering of optical coherent states. Phys. Rev. A 78, 032315 (2008).

Lassen, M. et al. Quantum optical coherence can survive photon losses using a continuous-variable quantum erasure-correcting code. Nat. Photonics 4, 700–705 (2010).

Zhong, C., Oh, C. & Jiang, L. Information transmission with continuous variable quantum erasure channels. Quantum 7, 939 (2023).

Elbek, D., Taşyaran, F., Uçar, B. & Kaya, K. Superman: Efficient permanent computation on GPUs. arXiv preprint arXiv:2502.16577 (2025).

Acknowledgements

We thank Byeongseon Go and Senrui Chen for interesting and fruitful discussions. This research was supported by the National Research Foundation of Korea (NRF) Grants (Nos. RS-2024-00431768 and RS-2025-00515456) funded by the Korean government (Ministry of Science and ICT (MSIT)).

Author information

Contributions

C.O. developed the theory, implemented the numerical experiments, and wrote the paper.

Ethics declarations

Competing interests

The author declares no competing interests.

Additional information

Publisher’s note Springer Nature remains neutral with regard to jurisdictional claims in published maps and institutional affiliations.

Rights and permissions

Open Access This article is licensed under a Creative Commons Attribution 4.0 International License, which permits use, sharing, adaptation, distribution and reproduction in any medium or format, as long as you give appropriate credit to the original author(s) and the source, provide a link to the Creative Commons licence, and indicate if changes were made. The images or other third party material in this article are included in the article’s Creative Commons licence, unless indicated otherwise in a credit line to the material. If material is not included in the article’s Creative Commons licence and your intended use is not permitted by statutory regulation or exceeds the permitted use, you will need to obtain permission directly from the copyright holder. To view a copy of this licence, visit http://creativecommons.org/licenses/by/4.0/.

About this article

Cite this article

Oh, C. Classical simulability of constant-depth linear-optical circuits with noise. npj Quantum Inf 11, 126 (2025). https://doi.org/10.1038/s41534-025-01041-w

DOI: https://doi.org/10.1038/s41534-025-01041-w