Abstract

Travelable area boundaries not only constrain the movement of field robots but also indicate alternative guiding routes for dynamic objects. Publicly available road boundary datasets have outlined boundaries by binary segmentation labels. However, hard post-processes have to be done to extract from detected boundaries further semantics including the shapes of the boundaries and guiding routes, which poses challenges to a real-time visual navigation system without detailed prior maps. In addition, boundary detectors suffer from insufficient data collected from complex roads with severe occlusion and of different shapes. In this paper, a travelable area boundary dataset is semi-automatically built. 82.05% of the data is collected from bends, crossroads, T-shape roads and other irregular roads. Novel guiding semantics labels, shape labels and scene complexity labels are assigned to boundaries. With the support of the new dataset, travelable area boundary detectors could be trained, evaluated and fairly compared. The dataset can also be used to train, evaluate or test detectors for the road boundary detection task.

Similar content being viewed by others

Background & Summary

Travelable area boundaries as inherent elements of roads, can be reliably used for navigation1 and self-localization2,3 without additional assistance such as road markings or even detailed prior maps, which is essential in places where prior guidance is absent, for instance, parks and campuses. Road boundary detectors are applied to structured roads with stable differences in elevation, material or gradient between travelable areas and other functional areas4. Three-dimensional (3D) point clouds are used since accurate distance measurement under different weather conditions5,6 allows geometric properties such as gradients7,8 and height information9 to be easily obtained. Complex scenes still pose challenges to perception. As one of the common issues, the impact of occlusion has been paid attention and alleviated6,10, especially with the advancements of deep neural networks11, though. Due to the lack of targeted dataset collected from roads with severe occlusion and of different shapes, performance is affected, especially in bends and intersections12.

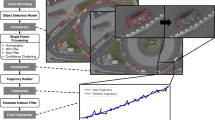

Binary segmentation labels provided by road boundary datasets9,11,13 outline and locate boundaries. They are the results of perception but far away from planning. After getting boundaries, as illustrated in Fig. 1, the shapes of the boundaries can be obtained by calculating and analyzing the gradients between adjacent curbs. Then the shape of the road can be obtained by analyzing the relationships among the boundaries. Recognizing the shapes of boundaries and roads is beneficial for field robots to move smoothly. Finally, guiding routes are available according to path planning. Such a post process should be elaborate so that further semantics, for instance, the shapes of boundaries or the shapes of roads could be timely fed into the next stage in a real-time visual navigation system without detailed prior maps. Fortunately, with the help of powerful feature extractors based on deep neural networks, some steps, such as recognizing the shapes mentioned above could be skipped and omitted from the process of perception to planning, as long as there is a dataset providing corresponding semantic labels.

A post process of perception to planning after detection. The first row shows some steps carried out after a road boundary detector detecting binary boundaries. The shapes of the detected boundaries and the shape of the road should be recognized. Finally, alternative guiding routes are obtained. The second row illustrates that guiding semantics provides the shape information of concern in a more direct way, which lightens the burden of the post process. The yellow arrows represent the position and direction of the robot.

To fill the gaps mentioned above, a travelable area boundary dataset (TAB14) is built in a semi-automatic way. Point clouds are collected in our campus and 82.05% of them are from bends and intersections. Scenes with multiple objects were carefully and specially selected. As illustrated in Fig. 2, not only straight roads, crossroads, T-shape roads, but also some uncommon roads of irregular shapes are recorded. Cars, pedestrians and cyclists, on the other hand, as common dynamic objects in the campus and cities15,16, are recorded in our dataset. They move either from far to near or vice versa. They gather in groups or columns. Some static objects such as parked cars, bicycles, barriers and traffic cones16 are also recorded. Objects may be crowded or sparse. On average, there are 13 objects in each consecutive point cloud sequence. And the maximum number of objects in a sequence can reach 51.

Typical scenes in the TAB14. Satellite images from the Google Earth (https://earth.google.com) and point cloud maps17 of the entire scenes are attached. The yellow arrows represent the position and direction of the robot. The bird’s eye view of the corresponding point cloud is displayed to the right of each group. Crossroads, T-shape intersections, right-angled bends and other irregular roads are included in the TAB14. Cars, pedestrians, cyclists, barriers and traffic cones are common objects and recorded.

Contrary to the methods devoting to improving perception and planning algorithms18, the TAB14 tackles the issue from a data-centric perspective. Boundaries are defined and reflect the semantics of roads in the TAB14. They are outlined in the way similar to the binary segmentation labels provided by road boundary datasets9,11,13. In addition, guiding semantics labels are assigned to the boundaries to narrow the gap between perception and planning since they infer the shapes of boundaries and roads and provide driving suggestions, as illustrated in Fig. 1. A boundary is labeled as turning, which means there is a bend or an intersection and robots and other dynamic objects are allowed or able to change their routes and turn with a high probability. A boundary is labeled as a straight-going side recommending robots to go straight along the boundary. Compared to the shapes of boundaries, guiding semantics can stably predict and claim in advance the shapes of boundaries and the shapes and trends of roads. Therefore, the provided annotations not only outline roads but also refer the shapes and trends of roads, and furtherly give alternative routes to robots and other dynamic objects.

Diverse and poorly maintained roads, interactions among robots and objects, and the sparsity of point clouds jointly enhance the complexity of scenes, which usually causes a boundary that needs to be observed to be partially or completely lost in the collected point clouds and hence not only poses challenges to the perception of robots, but also increases the difficulty and uncertainty of annotation. Scene complexity labels are proposed to indicate these affected parts of boundaries and assess the impact of the complexity of scenes. They directly play an important role during evaluation. Moreover, if these scene complexity labels can be put to good use in the training phase, powerful and efficient supervision could be accomplished.

The TAB14 provides a total of 42 consecutive point cloud sequences, in which 6,350 frame point clouds along with 15,315 boundaries are labeled. Table 1 shows publicly available road boundary datasets along with our TAB14. Due to the emphasis on bends and intersections where interactions and collisions are prone to occur, compared to the NRS13 containing similar number of point cloud frames, the TAB14 collects far more frames from bends and intersections (5,210 frames vs about 2k frames).

In recent years, the binary segmentation labels of boundaries can be extracted from semantic segmentation datasets19,20 by automatic or semi-automatic methods21,22 since boundaries are lines between roads and other functional areas. However, guiding semantics cannot be well extracted from semantic segmentation labels. Due to dynamic changes and interactions, the time-sensitive guiding semantics of the same boundary in two adjacent frames may be different. Therefore, a semi-automatic annotation method is carried out. Firstly, ground points are extracted by point cloud ground segmentation methods23,24. Then coarse curbs can be obtained as they are the points between the ground and non-ground areas. Since some objects in sparse point clouds may be difficult to recognize for annotators, the curbs serve as cues and indicate the potential boundaries.

In summary, our primary contribution is manifold.

-

A novel travelable area boundary dataset is built in a semi-automatic way. It collects 3D point clouds from roads with severe occlusion and of different shapes. As an extended road boundary dataset, it supports training and evaluating detection models for complex scenes.

-

The proposed guiding semantics explores the role of boundaries in visual navigation without detailed prior maps for field robots. It reduces the post process after detection and the gap from perception to planning in a data-centric perspective and enables boundaries to directly give alternative routes to robots and other dynamic objects so that visual navigation systems could be more efficient.

-

The parts of boundaries affected by the diversity of boundaries, the interactions among objects and the sparsity of point clouds are assessed and taken into account during evaluation to alleviate the impact on perception and annotation. Moreover, the proposed scene complexity labels indicating the affected parts can facilitate precise detection if they are put in good use during training.

-

A new visual task, travelable area boundary detection, is proposed that not only boundaries should be detected but also their guiding semantics should be predicted. The provided well-labeled data with evaluation metrics supports the research on the proposed task.

Methods

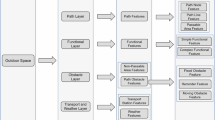

As illustrated in Fig. 3, the process of building the dataset includes collecting data, pre-processing point clouds, automatically labelling curbs, manually labelling and post-processing. In the pre-processing stage, the coordinate system is adjusted and the bird’s eye views (BEVs) of point clouds are prepared for labelling. Coarse curbs detected by a curb detection method indicate potential boundaries, which is auxiliary for annotators. The open-source graphical image annotation tool, LabelMe (https://www.labelme.io), is used when labelling. There are various labels, including binary segmentation labels, guiding semantics labels, shape labels and scene complexity labels. In the post-processing stage, ground truth files are created. All data is split into a training set, a validation set and a test set so that detectors can be trained, evaluated and fairly compared. As an important part of the TAB14, evaluation metrics are illustrated in details.

The process of building the TAB14.

Sensor setup

A LiDAR (Light Detection and Ranging), Velodyne VLP-16, is used for data collection. The Velodyne VLP-16 is a 16-beam spinning long-range LiDAR with a 360-degree horizontal field of view (FOV) and a 30-degree vertical FOV. Its horizontal resolution is set to 0.2 degrees and vertical resolution is 2 degrees.

As illustrated in Fig. 3(a), the LiDAR is tilted forward on the top of a field robot with limited load capacity. The tilt angle in the vertical direction θ is -15 degrees, which optimizes the LiDAR’s front FOV to minimize the blind area in front of the LiDAR and focus on the area below the top of the robot, particularly the ground. The LiDAR is about 0.7 meters above the ground.

Pre-processing

Given a raw point cloud Praw = {(xm, ym, zm, intensitym, beamm)|0≤m < Nraw}, where (xm, ym, zm) is the 3D coordinates of the point pm ∈ Praw, intensitym is the reflection intensity of the point pm ∈ Praw, beamm is the index of beam that the point pm ∈ Praw belongs to and Nraw is the number of the points that make up the point cloud Praw, the coordinate system of the point cloud Praw is adjusted through a rotation transform R firstly so that, in theory, the XOY plane of the adjusted point cloud Padj is parallel to the ground, as illustrated in Fig. 3(b). Given the 3D coordinates (xraw,m, yraw,m, zraw,m) of the point pm ∈ Praw, the adjust coordinates are

Considering the sparsity and visibility of point clouds and the tradeoff between computational load and task requirements, the region of interest is within ρ = 20 meters in front of the robot and between −1.5 meters and 1.5 meters in the vertical direction, which preserves 89.48% of the points ahead in average. The final point cloud P ⊆ Padj provided by the TAB14 is \(P=\{({x}_{n},{y}_{n},{z}_{n},intensit{y}_{n},bea{m}_{n})| \sqrt{{x}_{n}^{2}+{y}_{n}^{2}}\, < \,\rho ,\) \(-\pi /2\le \arctan ({y}_{n}/{x}_{n})\, < \,\pi /2,-1.5\le {z}_{n}\, < \,1.5,0\le n\, < \,N\le {N}_{raw}\}\), where N is the number of the points that make up the point cloud P.

BEVs of point clouds are generated by Algorithm 1 so that the point clouds can be annotated as images. The sizes of the BEVs are 2200 × 4200 where the paddings are 100 pixels on the four sides so that points located at the edge of the region of interest can be well observed and labeled. Therefore, points in a 0.01 × 0.01 × 3 m3 pillar are sampled and the highest point is picked out. The pixel value of the BEVs is the pseudo color calculated according to the reflection intensity of the highest point in the corresponding pillar. Obviously, compared to the resolutions of the LiDAR, the pillar is small enough so that the labelling is at centimeter level.

Algorithm 1.

Generating a bird’s eye view (BEV).

Curb detection

Annotation is conducted with the assistance of curbs which are detected and automatically labeled by a curb detection method. Ground points are extracted firstly by the fast point cloud ground detection method23. Then curbs are detected because they are between ground and non-ground points. Detectors25,26 based on deep neural networks are usually trained using dense point clouds from LiDARs with 64 or more beams16,19,20. They are not used here due to the point clouds collected from the 16-beam LiDAR are too sparse for them to perform well. After adjusting parameters, methods23,24 based on well-designed manual features could apply to the sparse point clouds and quickly meet the requirements of semi-automatic annotation.

Curbs are projected onto the BEVs and presented as points. Annotation files are automatically initialized and record these curb points. Therefore, these curb points can be shown as elements in the LabelMe.

Labelling

Binary segmentation labels

Polygonal lines, line segments and points are recommended to be used to manually outline boundaries. Therefore, binary segmentation labels indicating the positions of boundaries can be obtained according to these line-shape geometric figures. It is the primary goal for travelable area boundary detectors to detect boundaries which restrict the travelable areas of field robots.

Guiding semantics labels

Although some high-level semantics can be explored by analyzing the shapes of boundary lines, travelable area boundary detectors are expected to directly output these advanced semantics in an end-to-end way.

As illustrated in Fig. 4, guiding semantics labels are assigned to outlined boundaries. There are two types of guiding semantics labels:

-

A turning infers a bend or an intersection here or ahead where robots and other dynamic objects are allowed or able to change their routes and turn with a high probability. A boundary is assigned as a turning if 1) the shape of the boundary line is curve, 2) the visible ground surface is fan-shaped; 3) or the opposite boundary on the same side is present and visible.

-

A straight-going side recommends robots had better go straight along the boundary. A boundary is assigned as a straight-going side if it does not meet any of the requirements for being a turning.

Guiding semantics and shapes. The first line is the semantics, and the second line is the corresponding shapes. The shapes of some boundaries may be straight, but there are turnings which indicate bends or intersections. Guiding semantics can stably predict and claim in advance the shapes of boundaries and the trends of roads.

Detectors should predict the guiding semantics of boundaries along with the positions. A few boundaries are difficult to be accurately judge their semantics especially when a straight-going side turns into a turning. They are marked as fuzzy (0.15% of labeled boundaries) along with guiding semantics labels. During evaluation, these fuzzy boundaries are expected to be detected and located while their guiding semantics, regardless of which it is predicted to be, have no effect on the evaluation results.

Shape labels

The shape of a boundary line is one of criteria for the guiding semantics of the boundary as mentioned before. As illustrated in Fig. 4, a boundary could consist of straight parts or curve parts. Given a logical function IF() which checks whether the part is a specified attribute, curve parts are labeled as IF(curve) = true. Although detectors are not expected to analyze the shape of a boundary any more, shapes play a role in evaluation metrics.

Scene complexity labels

Cars, pedestrians and other objects are everywhere in the real world, especially in parks and campuses. Complex scenes pose challenges to detectors and annotators. The TAB14 assesses the influence of the complexity of scenes on observation and annotation, focusing on the diversity of boundaries, the interactions between the robot and objects, and the sparsity of point clouds. A boundary is divided into several parts here. Each part may be labeled with one or more scene complexity labels. Figure 5 shows various types of scene complexity labels. Polygonal lines, line segments and points are recommended again to be used to outline the affected and labeled parts as illustrated in the Fig. 6.

Diverse boundaries. For a boundary itself, its structure and regularity are assessed:

-

A structured road usually consists of curbs, fences and buildings which at least have a stable difference from the travelable area4. Boundaries of structured roads are almost structured. However, some parts of them may be unstructured due to damage, absence and vegetation coverage. On the contrary, boundaries of unstructured areas are almost unstructured. Unstructured parts are difficult to be located and outlined due to the loss of stable structure as illustrated in Fig. 5(b). They are labeled as IF(us) = true.

-

As illustrated in Fig. 5(c), some parts are irregular due to manhole covers4 leading to boundary lines being not smooth. Path planning algorithms should avoid guiding robots into these irregular and protruding areas which are actually travelable but not appropriate. Therefore, it is acceptable that detectors ignore these protruding areas and imprecisely locate these irregular parts. These irregular parts are labeled as IF(ir) = true, which loosens the criteria for determining the correctness of prediction during evaluation.

Interactions. The interactions between the robot and dynamic and static objects are inevitable.

-

Occlusion is a common impact on the observation of boundaries, which has been emphasized by prior works6,10,11,12. It is caused by the objects locating between the robot and boundaries. As a result, the occluded parts are lost in the collected point clouds and the affected boundaries are not continuous. Annotators have to fill in the occluded parts based on adjacent frames, which brings about annotation error. Figure 5(d) illustrates the effect of occlusion caused by some parked bicycles on the observation of a boundary. Occluded parts are marked and labeled as IF(occ) = true so that the annotation error can be taken into account during evaluation.

-

Blindness of a LiDAR also results in the lack of observation and annotation error, which is caused by the LiDAR’s FOV and the relative position between the robot and boundaries. As illustrated in Fig. 5(e), blind parts are always closest to the robot, which affects the relative position between the robot and boundaries and threatens the safety of the robot. Blind parts are marked and labeled as IF(bl) = true.

-

Point cloud distortion27,28 is an important issue in autonomous driving. Due to the working principle of spinning LiDARs, the points in a point cloud frame are not collected at the same time actually and the movement of LiDARs exacerbates the distortion. The faster a LiDAR moves, the more severe the distortion will be. As shown in Fig. 5(f), the boundary should have been straight but the ghost caused by distortion makes it discontinuous. A point in the real-world space is recorded twice in a point cloud frame and has two different coordinates. The two coordinates are correct at different times,but they are confusing for annotators and detectors. Severe distorted parts cannot clearly indicate where the boundary is and are marked and labeled as IF(dt) = true in the TAB14. The annotators are required to reserve the minimum travelable area to guarantee safe driving. Distortion removing methods usually requires the use of other sensors such as inertial measurement units27, which comes at a cost. Travelable area boundary detectors are expected to remove the effect of distortion via training. On the other hand, the evaluation metrics discussed later show tolerance for imprecise prediction caused by distortion.

Sparse point clouds. Although point clouds are able to describe 3D space, their drawback poses challenges to observation. The farther away from the LiDAR, the sparser the point clouds. Sparsity makes it impossible to observe continuous boundaries, which leads to annotators and detectors having to complete the missing parts.

-

Like blind parts, the closest parts of the boundaries may be missed and should be lengthened and completed. These lengthened parts are labeled as IF(len) = true.

-

Distant boundaries may only be scanned by a single beam which causes the locations of them are not accurate enough. These boundaries are labeled as IF(single) = true.

As mentioned above, the effects on observation are various. They may affect the same part. And it is hard to measure the weight of them. To simplify labelling, irregular parts, occluded parts, blind parts, distorted parts, lengthened parts are defined as being mutually exclusive. They are referred to as unclear parts, that is

Post-processing

After labelling guiding semantics, shapes and complexity, all labels are projected to the 3D point cloud space and share the coordinate system with the point cloud P. The i-th boundary Bi has been labeled with guiding semantics, a boolean value indicating whether its guiding semantics is fuzzy and a boolean value IF(single)i indicating whether it is only scanned by a single beam.

For the i-th boundary Bi, dense two-dimensional (2D) points are sampled every 0.01 meters along the X and Y axes. For the j-th point di,j, shape and scene complexity labels are assigned to it if it belongs to certain affected parts. In addition, if a dense point is closest to the end of a boundary line, it is identified as an endpoint and labeled as IF(end)i,j = true. Generally, a boundary line has two endpoints.

Split

In the TAB14, continuous point cloud frames are organized in the form of sequences. In order to promote the research on the travelable area boundary detection, all labeled point cloud sequences are split into a training-validation set and a test set in an approximate 7:3 ratio. About 25% of the ground truth files in the training-validation set are randomly sampled as the validation set. Table 2 shows some statistics regarding the training-validation set and the test set.

Evaluation metrics

A boundary is consist of a set of points and a scene includes some boundaries. The expected prediction result is a set of points with guiding semantics. A set of predicted points with a certain guiding semantics will be tried to match with ground truth boundaries with the same guiding semantics and fuzzy boundaries no matter which guiding semantics labels they have.

Since there are correlations among boundaries, often manifesting as parallel or intersecting and appearing in pairs, travelable area boundary detectors are assessed at point level and boundary level during evaluation. The point-level assessment evaluates the completeness of each boundary while the boundary-level assessment evaluates the completeness of a scene.

Keypoints. To reduce computational consumption, dense points are sampled by BEV sampling as illustrated in Algorithm 2. The size of the BEV is 64 × 128. Therefore, for each boundary, keypoints with an interval of about 0.3 meters are prepared for evaluation, which are not sparse for autonomous driving tasks. The interval is larger than the tolerant radii of 99.6% of keypoints that will be illustrated below, which makes the offset prediction significant. In detail, for each 0.3125 × 0.3125 m2 grid, a keypoint is generated by aggregating dense points projected in the grid. The coordinates of it are the average of the coordinates of all sampled points. Other attributes of the keypoint are identified as long as these attributes exist in the sampled dense points.

Algorithm 2.

Generating keypoints.

Tolerant radius. Each keypoint has a tolerant radius which is affected by some factors including scene complexity labels. The predicted points within the radius are correctly predicted points. Meanwhile, the corresponding keypoint is recalled. A tolerant radius ri,k of the k-th keypoint gi,k belonging to the i-th boundary Bi is comprised of four parts.

-

The base value rbase is 0.1 meters.

-

If the keypoint is an endpoint, the radius increases by 0.1 meters.

-

Since the working principle of the spinning LiDAR, the sparsity of point clouds is positively correlated with the distance from the origin. Therefore, the distance from the keypoint to the origin is a weight used to measure the sparsity.

-

Taking scene complexity into consideration, the number of affected parts the keypoint gi,k belongs to is a weight used to measure the complex impact on the observation of the keypoint. It is denoted as μi,k.

For the i-th boundary Bi, the set of keypoints is Gi = {(xi,k, yi,k, IF(curve)i,k, F(us)i,k, IF(ir)i,k, IF(occ)i,k, IF(bl)i,k, IF(dt)i,k, IF(len)i,k, IF(end)i,k)|0 ≤ k < Nkey,i}, where Nkey,i is the number of the keypoints. The tolerant radius ri,k of the k-th keypoint gi,k is

In theory, the minimum of the tolerant radius is 0.1 meters and the maximum is less than 0.436 meters. However, statistic shows the maximum is less than 0.42 meters and the average radius is about 0.15 meters.

Point-level assessment. F1-score is used to evaluate the completeness of a predicted point set. For the boundary Bi and the keypoint set Gi, given the number of predicted points Qpoint,i, the number of correctly predicted points Ncorrect,i≤Qpoint,i, the number of keypoints Nkey,i and the number of recalled keypoints Nrec,i, the point-level F1-score Fp,i can be calculated:

In practice, more than one boundary will be predicted at once. The point-level F1-scores between predicted boundaries and labeled boundaries are calculated. They are treated as similarity scores and fed into the Kuhn-Munkres algorithm29,30, an one-to-one matching method. Therefore, the final F1-scores of the boundaries are determined.

Boundary-level assessment. Three thresholds are set, which are 0.3, 0.5 and 0.8. For each threshold τ, the predicted boundaries who are matched with labeled boundaries and whose point-level F1-scores are larger than or equal to the threshold are true positive predicted boundaries. Therefore, given the number of predicted boundaries Qbound, the number of true positive predicted boundaries NTP ≤ Qbound and the number of labeled boundaries Nbound, the boundary-level F1-score Fτ can be calculated:

Data Records

The dataset, TAB14, is available at the GitHub repository (https://github.com/kaiopen/tab). It offers point cloud files in PCD (Point Cloud Data) format, ground truth files in JSON (JavaScript Object Notation) format, split files in JSON format and a Python script to initialize the dataset. The point cloud files and ground truth files are named with timestamps and saved in different paths based on sequence numbers. They can be read as same as text files. Split files record sequence numbers and timestamps of point cloud files belonging to the training-validation set and the test set. All files are packaged and compressed, and available in the GitHub repository. Extract the files and ensure that the file structures are as shown in the Fig. 7. It is recommended to run the Python script before using the TAB14.

A Python toolkit for quick access to the TAB14 is provided in the GitHub repository (https://github.com/kaiopen/tab_kit). It provides the friendly interfaces to visit and visualize point clouds and labels. The evaluation methods are available, too.

Technical Validation

Statistics and characteristics

The TAB14 provides 6,350 frame point clouds along with 15,315 labeled boundaries. As illustrated in Fig. 8(a), 59% of boundaries consist of curve parts and are curve while 68% are marked as turning, which proves that a turning may not a curve boundary. 0.15% of labeled boundaries are marked as fuzzy. And 52.17% of these fuzzy boundaries are straight-going sides. The proportion of fuzzy boundaries is very small. It is acceptable to ignore these fuzzy boundaries or ignore these fuzzy labels during training because the evaluation metrics are loose towards them.

Emphasizing bends and intersections. Interactions, including friction, usually occur at bends and intersections because turning is permitted. Hence, the TAB14 pays much attention to bends and intersections. As illustrated in Fig. 8(b), 82.05% scenes are bends and intersections.

Assessing complex scenes. The complexity of a scene with its impact on the observation of boundaries has been discussed before and labeled. As illustrated in the Fig. 8, 61% boundaries are affected.

Comparable sets. Although the training-validation set and the test set contain diverse scenes, the comparable statistics and distributions have been retained as illustrated in Figs. 9, 10 and 11.

Efficiency of the semi-automatic annotation method

The significant amount of manpower expended on manual annotation is disapproved of. Therefore, a semi-automatic annotation method is used to improve the efficiency of annotation. Coarse curbs are detected by a curb detection method inspired by prior works23,24 according to the differences in elevation and gradient between travelable areas and other functional areas. These coarse curbs are expected to serve as cues and indicate the potential boundaries so that annotators could quickly identify boundaries and reduce omissions.

100 frame point clouds are randomly sampled from the TAB14 for the two efficiency experiments here. Eight participants are involved in the experiments. Four annotators have participated in the annotation and the other four participants are inexperienced and do not engaged in point cloud processing.

Recognition accuracy. It is primary for annotators to correctly identify and recognize boundaries so that accurate and precise annotation could be done, which can reduce the workload of verification and calibration. The eight participants are asked to identify boundaries, including pointing out their approximate locations and recognizing their shapes in each frame within 10 seconds. Table 3 reports the missing rate and recognition accuracy of the eight participants. During the experiment, distant and short boundaries were easily overlooked by participants, while coarse curbs could provided good cues. With the assistance of coarse curbs, the average missing rate of the four annotators has been decreased by 43.61% (4.93% vs 2.78%). Meanwhile, the average missing rate of the four inexperienced participants has been decreased by 53.30% (42.42% vs 19.81%) which is significant. In terms of recognizing boundaries, the average accuracy of the four annotators and the four inexperienced participants has been increased by 4.48% (89.91% vs 93.94%) and 27.59% (43.93% vs 56.06%) respectively. It can be seen that the coarse curbs can effectively reduce the missing rate and improve the recognition accuracy. For inexperienced participants, in particular, the missing rate has dropped dramatically. The assistance can effectively indicate to annotators where boundaries exist.

Time efficiency. Although annotations will be further modified during verification and calibration, annotators are expected to outline boundaries as precisely as possible. The four annotators are asked to outline boundaries as precisely as possible. Table 4 shows the time efficiency including the average time they consumed to complete annotating one frame and the average F1 scores of their annotations. Since coarse curbs not only avoid omissions but also eliminate some ambiguous annotations which have taken much time from the annotators, the average annotation time per frame has been reduced by up to 25.83% (49.02 seconds vs 36.36 seconds). Meanwhile, the annotations have become more precise.

Performance among detectors

The UNet31, HRNet-w1832, DeepLabV3+33 are used as backbones to detect travelable area boundaries and verify the feasibility of the travelable area boundary detection task. The UNet31 is a typical semantic segmentation backbone used, especially in medical image processing. The HRNet-w1832 is a backbone for semantic segmentation and keypoint detection. The DeepLabV3+33 is an efficient convolution neural network for object detection and semantic segmentation. These backbones are combined with a pillar-based point cloud BEV encoder34 and two parallel heads. A multi-channel BEV image with a size of 256 × 512 is generated by the encoder and fed into the backbone. A heatmap and offsets35 constitute the prediction results after the tensor output by the backbone is respectively fed into the two heads. The sizes of the heatmap output by the UNet31 is the same as the input BEV while the sizes of the heatmaps output by the HRNet-w1832 and the DeepLabV3+33 are one quarter of the input BEV. All experiments use the same loss function, optimization algorithm and learning rate scheduler.

Detecting keypoints. Since boundaries are comprised of some keypoints during evaluation, the travelable area boundary detection is treated as a keypoint detection task36. The output heatmap has C channels, where C is the number of guiding semantics, and is of size H × W. Anchors are arranged densely and without overlap on the XOY plane of the point cloud space. One anchor corresponds to a pixel of the heatmap. Therefore, the heatmap models the probability of anchors being keypoints with specified semantics. In other words, given a keypoint gi,k from the set of keypoints Gi of the i-th boundary Bi whose guiding semantics is c, its coordinates in the point cloud space is (xi,k, yi,k) and its coordinates in the heatmap space is (ui,k, vi,k) and

where ρ is the radius of the region of interest. For this keypoint gi,k, there is one ground-truth positive anchor located at (ui,k, vi,k) whose penalty \({\widehat{s}}_{(c,{u}_{i,k},{v}_{i,k})}=1\), and all other anchors are negative. During training, the penalty given to negative anchors within a radius of the positive anchor is reduced while the penalty of other anchors is 0. Here, the radius is equal to the tolerance radius ri,k of the keypoint gi,k. Given an anchor located at (u, v) in the heatmap space, its distance \({d}_{(u,v)\to {g}_{i,k}}\) to the keypoint gi,k located at (xi,k, yi,k) in the point cloud space is

and its penalty \({\widehat{s}}_{(c,u,v)}\) is

Let s(c, u, v) be the probability score at location (u, v) for guiding semantics c in the predicted heatmap, the variant of focal loss35 is

where α = 2 and β = 4 are the hyper-parameters which control the contribution of each anchor.

Predicting which anchor contains keypoints is only a rough indication of positioning. Hence the offsets o(c,u,v) = (Δx(c,u,v), Δy(c,u,v)) between the anchor and the ground truth keypoint should be predicted. Let \({\widehat{o}}_{(c,u,v)}=(\Delta {\widehat{x}}_{(c,u,v)},\Delta {\widehat{y}}_{(c,u,v)})=({u}_{i,k}-\lfloor {u}_{i,k}\rfloor ,{v}_{i,k}-\lfloor {v}_{i,k}\rfloor )\) be the ground truth offsets, the smooth L1 loss at positive anchors where \({\widehat{s}}_{(c,u,v)}=1\) is applied as illustrated in equation (10).

Results and analysis. Table 5 shows the performance among the three models. Although the output of the UNet31 is more intensive than the other two, the F1 scores are not high. Most of boundaries have been predicted while the point-level F1-scores are almost less then 0.8. The performance has been improved as the model has been heavy. The DeepLabV3+33 gets higher point-level scores and boundary-level scores, especially in detecting straight-going sides. The performance of the HRNet-w1832 is the best not only on the point-level assessment but also on the boundary-level assessment. About three-quarters of the boundaries have been predicted with high point-level scores. More than half of the boundaries have a score larger than 0.8.

Some results are visualized in Fig. 12. The UNet31 has poor cognition of the integrity of a boundary, and it is easy to divide a complete boundary into multiple parts, which may be caused by shallow perception. Compare to the HRNet-w1832, the DeepLabV3+33 is poor recognition on guiding semantics and has insufficient grasp of detailed features. The frequent exchanges between multiple scales in the HRNet-w1832 may have a significant impact. The three models have trouble in dealing with occlusion and curves. The cognition of the integrity of boundaries and a scene needs to be enhanced. Although the performance can be further improved, the results show that the TAB14 can support training and evaluating travelable area boundary detection models.

Importance of scene complexity labels

Scene complexity labels mark the parts affected by the diversity of boundaries, the interaction between the robot and objects and the sparsity of point clouds. They directly play an important role in calculating tolerant radii when judging which predicted points are correct during evaluation. These variable tolerant radii result from the consideration of task requirements and the tolerance for annotation error. On the other hand, they have a direct impact on supervision in the supervised training phase as illustrated in equation (8).

Here, the HRNet-w1832 is used as a backbone to detect travelable area boundaries and verify the importance of scene complexity labels. Two detectors are trained. When training the first detector, the tolerant radii are variable as illustrated in equation (3), while when training another detector, the tolerant radii are fixed and set as rbase. During evaluation, the tolerant radii are variable or fixed as rbase too. Table 6 shows the performance of the two detectors evaluated with variable or fixed tolerant radii. Variable tolerant radii during training can reduce false positives as the most mFp and F0.3 are higher than those trained with fixed tolerant radii. On the other hand, the scores are decreased when the detectors are evaluated with fixed tolerant radii. In a word, it is beneficial for a detector to take scene complexity labels into account during training and evaluation.

Usage Notes

The proposed TAB14 has great potential for visual navigation. It provides guiding semantics of travelable area boundaries and can promote the development of new algorithms for both road boundary detection and travelable area boundary detection. Researches on robust detectors are expected. Visual navigation systems could be developed based on guiding semantics. End-to-end navigation could be improved with the help of guiding semantics.

Due to the fact that the selected scenes cannot cover all the diverse roads and traffic environments, applications developed based on this dataset are recommended to be further adapted, including transfer learning and parameter tuning using data collected from target scene.

As illustrated in Fig. 13, the provided point cloud files are in PCD format and the ground truth files are in JSON format. PCD readers and JSON readers can be used to load and read the files. Or the files can be read as text files. The data in the data area as shown in Fig. 13(a) are points in a point cloud frame. One line records a point and has 5 numbers including the 3D coordinates (x, y, z) in the point cloud space, the reflection intensity and the index of beam. The data encircled by the green rectangular is keypoints of a boundary in Fig. 13(b).

A Python toolkit (https://github.com/kaiopen/tab_kit) for quick access to the TAB14 is provided. After preparing the dataset, input the path to the dataset to the toolkit. Both point clouds and labels can be easily accessed via just one line of code. The frames in a sequence can be obtained chronologically through a loop so that detectors using temporal data can be developed. The provided point clouds are well adjusted and clipped, and can be directly fed into detectors. Labels and prediction results can be visualized via the toolkit. The interface of an evaluation method is provided too. Some examples have been released and are available along with the toolkit. With the help of the toolkit, point clouds can be loaded and fed into the detectors mentioned in the experiments. And then boundaries with their guiding semantics can be detected.

Code availability

The TAB14 has been released and available. The source codes for processing raw data, annotation, analysis and visualization are available in the GitHub repository (https://github.com/kaiopen/tab_creator). The codes to train, evaluate and test the travelable area boundary detectors, as well as the three well-trained models, are available in the GitHub repository (https://github.com/kaiopen/tabdet). The Python toolkit for quick access to the TAB14 is provided in the GitHub repository (https://github.com/kaiopen/tab_kit) too.

References

Ort, T., Paull, L. & Rus, D. Autonomous vehicle navigation in rural environments without detailed prior maps. In 2018 IEEE International Conference on Robotics and Automation, 2040–2047, https://doi.org/10.1109/ICRA.2018.8460519 (2018).

Qin, B. et al. Curb-intersection feature based monte carlo localization on urban roads. In 2012 IEEE International Conference on Robotics and Automation, 2640–2646, https://doi.org/10.1109/ICRA.2012.6224913 (2012).

Hata, A. Y., Osorio, F. S. & Wolf, D. F. Robust curb detection and vehicle localization in urban environments. In 2014 IEEE Intelligent Vehicles Symposium Proceedings, 1257–1262, https://doi.org/10.1109/IVS.2014.6856405 (2014).

Mi, X., Yang, B., Dong, Z., Chen, C. & Gu, J. Automated 3d road boundary extraction and vectorization using mls point clouds. IEEE Transactions on Intelligent Transportation Systems 23, 5287–5297, https://doi.org/10.1109/TITS.2021.3052882 (2022).

Yin, J., Shen, J., Guan, C., Zhou, D. & Yang, R. Lidar-based online 3d video object detection with graph-based message passing and spatiotemporal transformer attention. In 2020 IEEE/CVF Conference on Computer Vision and Pattern Recognition, 11492–11501, https://doi.org/10.1109/CVPR42600.2020.01151 (2020).

Wang, G., Wu, J., He, R. & Tian, B. Speed and accuracy tradeoff for lidar data based road boundary detection. IEEE/CAA Journal of Automatica Sinica 8, 1210, https://doi.org/10.1109/JAS.2020.1003414 (2021).

Rato, D. & Santos, V. Lidar based detection of road boundaries using the density of accumulated point clouds and their gradients. Robotics and Autonomous Systems 138, 103714, https://doi.org/10.1016/j.robot.2020.103714 (2021).

Ai, Y. et al. A real-time road boundary detection approach in surface mine based on meta random forest. IEEE Transactions on Intelligent Vehicles 9, 1989–2001, https://doi.org/10.1109/TIV.2023.3296767 (2024).

Zhang, Y., Wang, J., Wang, X. & Dolan, J. M. Road-segmentation-based curb detection method for self-driving via a 3d-lidar sensor. IEEE Transactions on Intelligent Transportation Systems 19, 3981–3991, https://doi.org/10.1109/TITS.2018.2789462 (2018).

Suleymanov, T., Kunze, L. & Newman, P. Online inference and detection of curbs in partially occluded scenes with sparse lidar. In 2019 IEEE Intelligent Transportation Systems Conference, 2693–2700, https://doi.org/10.1109/ITSC.2019.8917086 (2019).

Jung, Y., Jeon, M., Kim, C., Seo, S.-W. & Kim, S.-W. Uncertainty-aware fast curb detection using convolutional networks in point clouds. In 2021 IEEE International Conference on Robotics and Automation, 12882–12888, https://doi.org/10.1109/ICRA48506.2021.9561358 (2021).

Jung, Y., Seo, S.-W. & Kim, S.-W. Curb detection and tracking in low-resolution 3d point clouds based on optimization framework. IEEE Transactions on Intelligent Transportation Systems 21, 3893–3908, https://doi.org/10.1109/TITS.2019.2938498 (2020).

Gao, J. et al. Lcdet: Lidar curb detection network with transformer. In 2023 International Joint Conference on Neural Networks, 1–9, https://doi.org/10.1109/IJCNN54540.2023.10191580 (2023).

Zhang, K. et al. A travelable area boundary dataset for visual navigation of field robots. figshare https://doi.org/10.6084/m9.figshare.26057854 (2024).

Geiger, A., Lenz, P. & Urtasun, R. Are we ready for autonomous driving? the kitti vision benchmark suite. In 2012 IEEE Conference on Computer Vision and Pattern Recognition, 3354–3361, https://doi.org/10.1109/CVPR.2012.6248074 (2012).

Caesar, H. et al. nuscenes: A multimodal dataset for autonomous driving. In 2020 IEEE/CVF Conference on Computer Vision and Pattern Recognition, 11618–11628, https://doi.org/10.1109/CVPR42600.2020.01164 (2020).

Shan, T. & Englot, B. Lego-loam: Lightweight and ground-optimized lidar odometry and mapping on variable terrain. In 2018 IEEE/RSJ International Conference on Intelligent Robots and Systems, 4758–4765, https://doi.org/10.1109/IROS.2018.8594299 (2018).

Jia, X. et al. Driveadapter: Breaking the coupling barrier of perception and planning in end-to-end autonomous driving. In 2023 IEEE/CVF International Conference on Computer Vision, 7919–7929, https://doi.org/10.1109/ICCV51070.2023.00731 (2023).

Behley, J. et al. Semantickitti: A dataset for semantic scene understanding of lidar sequences. In 2019 IEEE/CVF International Conference on Computer Vision, 9296–9306, https://doi.org/10.1109/ICCV.2019.00939 (2019).

Liao, Y., Xie, J. & Geiger, A. Kitti-360: A novel dataset and benchmarks for urban scene understanding in 2d and 3d. IEEE Transactions on Pattern Analysis and Machine Intelligence 45, 3292–3310, https://doi.org/10.1109/TPAMI.2022.3179507 (2023).

Bai, D., Cao, T., Guo, J. & Liu, B. How to build a curb dataset with lidar data for autonomous driving. In 2022 International Conference on Robotics and Automation, 2576–2582, https://doi.org/10.1109/ICRA46639.2022.9811676 (2022).

Apellániz, J. L., García, M., Aranjuelo, N., Barandiarán, J. & Nieto, M. Lidar-based curb detection for ground truth annotation in automated driving validation. In 2023 IEEE 26th International Conference on Intelligent Transportation Systems, 5054–5059, https://doi.org/10.1109/ITSC57777.2023.10422558 (2023).

Himmelsbach, M., Hundelshausen, F. v. & Wuensche, H.-J. Fast segmentation of 3d point clouds for ground vehicles. In 2010 IEEE Intelligent Vehicles Symposium, 560–565, https://doi.org/10.1109/IVS.2010.5548059 (2010).

Wen, H., Liu, S., Liu, Y. & Liu, C. Dipg-seg: Fast and accurate double image-based pixel-wise ground segmentation. IEEE Transactions on Intelligent Transportation Systems 25, 5189–5200, https://doi.org/10.1109/TITS.2023.3339334 (2024).

Lee, S., Lim, H. & Myung, H. Patchwork++: Fast and robust ground segmentation solving partial under-segmentation using 3d point cloud. In 2022 IEEE/RSJ International Conference on Intelligent Robots and Systems, 13276–13283, https://doi.org/10.1109/IROS47612.2022.9981561 (2022).

Xiang, P. et al. Retro-fpn: Retrospective feature pyramid network for point cloud semantic segmentation. In 2023 IEEE/CVF International Conference on Computer Vision, 17780–17792, https://doi.org/10.1109/ICCV51070.2023.01634 (2023).

Zhang, B., Zhang, X., Wei, B. & Qi, C. A point cloud distortion removing and mapping algorithm based on lidar and imu ukf fusion. In 2019 IEEE/ASME International Conference on Advanced Intelligent Mechatronics (AIM), 966–971, https://doi.org/10.1109/AIM.2019.8868647 (2019).

Yang, W., Gong, Z., Huang, B. & Hong, X. Lidar with velocity: Correcting moving objects point cloud distortion from oscillating scanning lidars by fusion with camera. IEEE Robotics and Automation Letters 7, 8241–8248, https://doi.org/10.1109/LRA.2022.3187506 (2022).

Kuhn, H. W. The hungarian method for the assignment problem. Naval Research Logistics Quarterly 2, 83–97, https://doi.org/10.1002/nav.3800020109 (1955).

Munkres, J. Algorithms for the assignment and transportation problems. Journal of the Society for Industrial and Applied Mathematics 5, 32–38, https://doi.org/10.1137/0105003 (1957).

Ronneberger, O., Fischer, P. & Brox, T. U-net: Convolutional networks for biomedical image segmentation. In Medical Image Computing and Computer-Assisted Intervention, vol. 9351 of LNCS, 234–241, https://doi.org/10.1007/978-3-319-24574-4_28 (Springer, 2015).

Wang, J. et al. Deep high-resolution representation learning for visual recognition. IEEE Transactions on Pattern Analysis and Machine Intelligence 43, 3349–3364, https://doi.org/10.1109/TPAMI.2020.2983686 (2021).

Chen, L.-C., Zhu, Y., Papandreou, G., Schroff, F. & Adam, H. Encoder-decoder with atrous separable convolution for semantic image segmentation. In Ferrari, V., Hebert, M., Sminchisescu, C. & Weiss, Y. (eds.) Computer Vision – ECCV 2018, 833–851, https://doi.org/10.1007/978-3-030-01234-2_49 (Springer International Publishing, Cham, 2018).

Lang, A. H. et al. Pointpillars: Fast encoders for object detection from point clouds. In 2019 IEEE/CVF Conference on Computer Vision and Pattern Recognition, 12689–12697, https://doi.org/10.1109/CVPR.2019.01298 (2019).

Law, H. & Deng, J. Cornernet: Detecting objects as paired keypoints. In Proceedings of the European Conference on Computer Vision, https://doi.org/10.1007/s11263-019-01204-1 (2018).

Fang, H.-S. et al. Alphapose: Whole-body regional multi-person pose estimation and tracking in real-time. IEEE Transactions on Pattern Analysis and Machine Intelligence 45, 7157–7173, https://doi.org/10.1109/TPAMI.2022.3222784 (2023).

Author information

Authors and Affiliations

Contributions

K.Z., X.Y. and C.Z. designed the research. X.Y. had raised the question of how to make planned routes smooth and suggested that complex roads should be paid attention, which prompts the proposal of guiding semantics labels and scene complexity labels. K.W. collected data. K.Z., J.X., K.W. and S.W did annotation. K.Z. and J.X. did verification and calibration. K.Z. and C.Z. conceived the semi-automatic annotation method. K.W. provided the point cloud ground segmentation method. K.Z. and K.W. conducted the experiments and analyzed the results. All authors reviewed the manuscript.

Corresponding author

Ethics declarations

Competing interests

The authors declare no competing interests.

Additional information

Publisher’s note Springer Nature remains neutral with regard to jurisdictional claims in published maps and institutional affiliations.

Rights and permissions

Open Access This article is licensed under a Creative Commons Attribution 4.0 International License, which permits use, sharing, adaptation, distribution and reproduction in any medium or format, as long as you give appropriate credit to the original author(s) and the source, provide a link to the Creative Commons licence, and indicate if changes were made. The images or other third party material in this article are included in the article’s Creative Commons licence, unless indicated otherwise in a credit line to the material. If material is not included in the article’s Creative Commons licence and your intended use is not permitted by statutory regulation or exceeds the permitted use, you will need to obtain permission directly from the copyright holder. To view a copy of this licence, visit http://creativecommons.org/licenses/by/4.0/.

About this article

Cite this article

Zhang, K., Yuan, X., Xu, J. et al. A travelable area boundary dataset for visual navigation of field robots. Sci Data 12, 164 (2025). https://doi.org/10.1038/s41597-025-04457-3

Received:

Accepted:

Published:

Version of record:

DOI: https://doi.org/10.1038/s41597-025-04457-3