Abstract

There is a need to advance research on using machine learning and data mining techniques in physics education research (PER), which may still be hindered by the lack of datasets designed specifically for PER. SPHERE (Students’ Performance Dataset in Physics Education Research) is presented as an educational dataset of physics learning collected through research-based assessments (RBAs) established by PER scholars. In this study, the physics performance of students at four public high schools was probed in three learning domains: conceptual understanding, scientific ability, and learning attitude toward physics. The RBAs were selected based on the physics curriculum taught to eleventh-grade students in the academic year of data collection. In this paper, we provide an example showing that SPHERE can be used to train machine learning models that predict students’ performance at the end of the learning process. We also show that these models outperformed the former method of performance prediction, in which physics teachers labeled students’ expected performance.


Background & Summary

Nurturing physics learning with responsive and adaptive feedback from assessment results and processes has been studied consistently as a way to enhance the effectiveness of physics education. Research has addressed students’ learning differences and diversity in line with the principle of educational equity in physics1,2,3,4,5,6,7,8,9. These lines of research are grounded in a departure from the classical paradigm, in which assessment is defined from a purely summative perspective (assessment of learning, AoL), toward perspectives that are more constructive for students’ learning, namely assessment for learning (AfL) and assessment as learning (AaL)10. From the AfL perspective, teachers should actively monitor students’ learning and dynamically adjust instruction to address students’ differing needs. From the AaL perspective, students should progressively regulate their own learning and actively increase their learning efforts to achieve their goals in physics class. PER scholars have advanced this vision by developing an adaptive tutoring system1,2, studying computerized adaptive testing for physics3, and modeling students’ learning using the predictive power of machine learning techniques4,5,6,7,8,9.

Essentially, recent PER studies aim to monitor the progress of students’ learning using data extracted from the learning environment, an approach associated with educational data mining (EDM) and learning analytics (LA)11,12. One data mining technique that PER scholars have recently focused on is the use of machine learning (ML) to predict students’ learning performance. For instance, PER scholars in the United States (US) developed ML models using random forest (RF) and logistic regression (LR) techniques4,7,8,9 to predict university students’ performance in introductory physics based on demographic and in-class assessment data measured by the Force Concept Inventory (FCI)13 and the Force and Motion Conceptual Evaluation (FMCE)14. ML prediction is a promising way to improve on the conventional approach in which physics teachers predict students’ performance, an approach that still faces challenges in practice15. Despite this promise, applying ML in physics education remains challenging, particularly for educators. In this study, we include random forest analyses16 as a working example and a secondary focus, to demonstrate how the SPHERE dataset can be used for predictive modeling specifically for PER purposes.

EDM studies inevitably require data to train ML models for their designated purposes17. To predict students’ performance in physics, the training data should predominantly capture features of physics learning. However, currently published shared datasets rarely address the particular features of physics education. For example, the well-known Open University Learning Analytics Dataset (OULAD)18 may be less suitable as a source of features for training ML models in the physics education domain. OULAD is limited in representing the physics education environment because it was designed to assess students’ learning delivered through an online platform in general, and it mainly supports the analysis of student behavior, represented by students’ actions. Physics education, however, is assessed not only through students’ behavior but also through the cognitive domain. Moreover, to the best of our knowledge, the aforementioned PER studies4,7,8,9 provide only limited access to their training datasets.

With this in mind, there is a need to advance research on using ML and EDM techniques in PER, which may be hindered by the scarcity of datasets designed specifically for PER. To bridge this gap, we present the students’ performance in physics education research (SPHERE) dataset for PER scholars who wish to use ML and EDM techniques in physics education. The acronym “SPHERE” combines the key terms “students’ performance”, “physics”, and “physics education research”, reflecting its specific dedication to the PER community. The SPHERE dataset measures students’ physics ability and competence across multiple domains: conceptual understanding, scientific ability in the physics laboratory, and learning attitude toward physics. Some PER scholars argue that the combination of these indicators is essential to success in physics19,20,21. Therefore, describing students’ physics learning through these multi-domain measures should help build better ML models than those trained on existing datasets in the literature. This work is the first to create, validate, and publish this dataset, which is made publicly available for the first time. Although the random forest approach demonstrated here builds on machine learning methods used in previous work, the dataset itself is a novel contribution to physics education research, offering a unique resource for predictive modeling.

Over recent decades, the PER community has established research-based assessments (RBAs), which were used to produce the SPHERE dataset in the present study. These assessment tools are built from, by, and for researchers, educators, and practitioners in physics education, who can therefore adopt them in their own studies or classes. RBAs have been developed based on research evidence and are collectively disseminated on the PhysPort platform22. Because the SPHERE dataset is collected through RBAs from the PER community, its assessment constructs carry established validity evidence, which increases the dataset’s reliability for sustained use in advancing PER and related fields. Additionally, we expect SPHERE to be a valuable dataset for supporting the development of the PER field, primarily in quantitative studies. For example, researchers may establish more robust validity evidence for the employed RBAs from the perspective of classical test theory (CTT) or item response theory (IRT), study the psychometric behavior of the RBAs, or examine the associations among the multivariate domains assessed by SPHERE through latent variable analysis, such as structural equation modeling (SEM).
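As a hedged illustration of the CTT route mentioned above, the Python sketch below computes two classical item statistics from a scored response matrix: item difficulty (proportion correct) and the corrected item-total correlation (each item correlated with the sum of the other items). It assumes responses have already been scored 0/1 against the answer keys, which SPHERE does not publish; the function names and the toy data are hypothetical.

```python
from statistics import mean, pstdev


def item_difficulty(scores):
    """Proportion correct per item (CTT difficulty index).

    `scores` is a list of per-student lists of 0/1 item scores.
    """
    n_items = len(scores[0])
    return [mean(row[j] for row in scores) for j in range(n_items)]


def _pearson(x, y):
    """Pearson correlation; returns 0.0 when either variable is constant."""
    mx, my = mean(x), mean(y)
    sx, sy = pstdev(x), pstdev(y)
    if sx == 0 or sy == 0:
        return 0.0
    cov = mean((a - mx) * (b - my) for a, b in zip(x, y))
    return cov / (sx * sy)


def corrected_item_total(scores):
    """Correlation of each item with the total score of the remaining items."""
    n_items = len(scores[0])
    result = []
    for j in range(n_items):
        item = [row[j] for row in scores]
        rest = [sum(row) - row[j] for row in scores]
        result.append(_pearson(item, rest))
    return result


# Hypothetical scored responses: 4 students x 3 items.
scores = [[1, 1, 0], [1, 0, 0], [1, 1, 1], [0, 0, 0]]
print(item_difficulty(scores))  # [0.75, 0.5, 0.25]
print(corrected_item_total(scores))
```

Removing the item from the total before correlating avoids inflating the discrimination estimate, which matters for short instruments.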

Methods

Ethics statements

The research procedures applied in this study were reviewed and approved by the ethics committee at the Institute of Research and Community Service of Universitas Negeri Yogyakarta under ethical approval number T/32.1/UN34.9/KP.06.07/2023, dated 1st October 2023. All necessary permissions were obtained systematically and ethically, as outlined in the research project proposal. The research aim of publishing an open dataset of students’ responses to questionnaires on physics conceptual understanding, scientific ability, and learning attitude underwent a full review. School heads, physics teachers, and students (with parental consent) discussed the study with the researchers, including the practical objectives and methods of the research project, and provided their consent to participate in the survey. Physics teachers have full access to the SPHERE dataset to evaluate their instruction, and they consented to the use of demographic data to the extent necessary for the research project. Students’ and schools’ privacy was protected by fully anonymizing the specific identities of students and schools in the test administrations and in the records reported in this dataset.

Participants and contexts

A total of 497 students (age: M = 16.2, SD = 0.6; gender: male = 225, female = 272) were recruited from three large public high schools and one small public high school located in a suburban district of a highly populated province in Indonesia. Although schools A, B, and D were larger than school C (Table S1), current policy requires schools to implement a zoning-based enrollment system that distributes students more diversely among schools15. Consequently, we assumed that all schools had comparable student intake, even though schools can differ in status, teachers, size, facilities, and location. Before this policy, schools A and D were regarded as the most popular schools in the district. Most parents wished to enroll their children there because of the schools’ reputation, mainly motivated by the greater likelihood that graduates of these schools would be accepted at prestigious Indonesian universities. In recent years, the Indonesian government introduced the zoning-based system to reduce the status gap between the most and least popular schools in society. Regardless of parents’ preferences, students must enroll in the school closest to their domicile, although small quotas are available for high-achieving students from outside the zoning area. We represent this context through the domicile variable in Table S1; most students reported living inside the zoning area.

Table S1 summarizes variables related to demographics, accessibility of literature resources, and students’ physics identity. We included literature accessibility and physics identity because some PER scholars suggest that they may affect students’ learning in physics23,24. Physics identity is expected to align with students’ intended occupations, particularly for those planning to pursue a STEM field after high school. In summary, our participants were predominantly female, aged 15–16 years, and living with parents educated to high school level. In Indonesian society, the father is commonly regarded as more responsible than the mother for securing stable employment to cover the family’s expenses. The descriptive statistics in Table S1 show that our students had good access to digital facilities but still faced some difficulty accessing printed books. Participants also reported that they studied physics on the night before their scheduled physics class and perceived that their families supported them positively when they studied physics.

Data collection

The students were in the eleventh grade, corresponding to the “F phase” of the Indonesian curriculum. In this phase, students were taught high school physics on measurement, vectors, linear and rotational kinematics, linear dynamics, and projectile motion in the first semester. In the second semester, they studied rotational dynamics, fluids, light and mechanical waves, and heat and thermodynamics. Previously, the physics teachers had conducted their own assessments in each school. Although they agreed on the concepts taught under the F phase curriculum, each school might use a separate set of test items or testing platforms. To create the SPHERE dataset, the researchers and teachers agreed to standardize the measures across schools by adopting RBAs established by the PER community. The RBAs used in the SPHERE dataset were carefully selected to match the content the students had learned under the curriculum. Before test administration, the original RBAs were translated into Indonesian. The translations were then validated by five PER experts, each with more than ten years of teaching and research experience in PER.

Data collection was conducted during the 2023/2024 academic year of high school physics (Fig. 1), with specific activities taking place as follows:

First semester

The Force Concept Inventory (FCI)13 and the Force and Motion Conceptual Evaluation (FMCE)14 were administered in November 2023, after students had been taught the relevant content.

Second semester

The remaining conceptual assessments, namely the Rotational and Rolling Motion Conceptual Survey (RRMCS)25, the Fluid Mechanics Concept Inventory (FMCI)26, the Mechanical Waves Conceptual Survey (MWCS)27, the Thermal Concept Evaluation (TCE)28, and the Survey of Thermodynamics Processes and First and Second Laws (STPFaSL)29, were administered throughout the second semester (January to June 2024) in separate sessions according to the students’ learning schedules. A light diffraction laboratory experiment was carried out after the midterm. Students’ activity in the laboratory was observed using the Scientific Abilities Assessment Rubrics (SAAR)20, and their attitude toward physics was measured using the Colorado Learning Attitudes about Science Survey (CLASS)21.

The survey began in the first semester (November–December 2023) on the physics teachers’ recommendation, because they first needed to teach their students the physics content examined in the survey. The FCI and FMCE were administered first, on separate days. The final test of the first semester (FINTEST1) used teacher-developed items, and the researchers only collected the resulting scores from the teachers (Fig. 1). In the second semester (January–June 2024), we continued the survey with the remaining RBAs. We conducted a laboratory experiment on light diffraction, chosen in light of the measuring tools and materials available in the participating schools. The order of the RBAs in the second semester could differ between teachers, because the curriculum grants physics teachers the right to arrange their learning sequence independently based on their analysis of students’ needs. For instance, test administration started with the RRMCS in one school but with the MWCS in others. Therefore, each RBA was administered on the teachers’ recommendation to ensure that students were ready for the physics content being examined. Students were allocated approximately 60 minutes to complete most conceptual inventories. Exceptions were made for assessments requiring extended responses or reasoning, such as the FMCE and RRMCS, which included items with textual reasoning.

The experiment aimed to measure the wavelengths of visible light. Students observed an LED light through a diffraction grating and saw the resulting rainbow pattern (see Fig. 2 for a schematic of the experimental setup). Three diffraction gratings were used, with 100, 300, and 600 lines/mm, and the same gratings were used in every school. Readers interested in the experimental design can consult Krulj and Nesic30. The researchers provided an experiment cookbook to communicate the technical setup and tasks clearly to the students. In the laboratory, teachers divided students into groups of at most four (Fig. 3). The experiment and report writing took two weeks: the first week for conducting the experiment and the second week for completing and submitting the report. This light diffraction experiment was the students’ first laboratory activity in the second semester. A different laboratory activity had been conducted by the physics teachers in the first semester, but this took place before we obtained permission from the schools to collect the SPHERE dataset.
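The wavelength measurement rests on the grating equation d sin θ = mλ, where d = 1/N is the slit spacing of a grating with N lines per millimetre and m is the diffraction order. The sketch below assumes a geometry in which the order-m maximum appears at distance y from the central maximum on a screen at distance L from the grating, so that tan θ = y/L; the actual measurement procedure in the cookbook may differ, and the numbers are illustrative rather than SPHERE data.

```python
import math


def grating_spacing_nm(lines_per_mm):
    """Slit spacing d in nanometres (1 mm = 1e6 nm)."""
    return 1e6 / lines_per_mm


def wavelength_nm(lines_per_mm, y, L, order=1):
    """Wavelength from d*sin(theta) = m*lambda, assuming tan(theta) = y / L.

    y: distance of the order-m maximum from the central maximum
    L: grating-to-screen distance (same length unit as y)
    """
    theta = math.atan2(y, L)
    return grating_spacing_nm(lines_per_mm) * math.sin(theta) / order


# Hypothetical reading with the 600 lines/mm grating and L = 50 cm:
# a first-order red maximum about 21.2 cm from the centre.
print(wavelength_nm(600, 21.2, 50.0))
```

Because d shrinks as N grows, the 600 lines/mm grating spreads the spectrum over larger angles than the 100 lines/mm grating, which makes y easier to read but limits the number of visible orders (mλ must stay below d).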

Students’ performance during the experiment was observed using the SAAR rubrics. Within the week after the experiment, students wrote laboratory reports based on the data measured by their group; these reports were then collected and evaluated by the researchers using SAAR. The second-semester conceptual assessments (RRMCS, FMCI, MWCS, TCE, and STPFaSL) were administered separately on different days, according to schedules provided by the physics teachers (Fig. 1). Following the teachers’ suggestion, the CLASS was administered in a single session to gauge students’ learning attitudes toward physics. We used the Google Form platform to record students’ data, ensuring efficient data collection and standardization across schools; students were therefore allowed to use smartphones to complete the online tests. Because the RBAs used in this study are essentially conceptual, a calculator was not needed for them, whereas students could use a calculator for data analysis in the light diffraction experiment. The researchers shared the Google Form links with the physics teachers in each school, and the teachers administered the tests to their students during physics classes. During the tests, physics teachers were instructed to minimize opportunities for cheating.

The constructs measured in the SPHERE dataset are briefly described below; the origin of the instruments, example items, and response options are detailed in the supplementary information. The first RBA employed in this study was the Force Concept Inventory (FCI) (Fig. S1, Table S2), which measures students’ conceptual understanding of Newtonian mechanics. It was developed by Hestenes, Wells, and Swackhamer in 199213 and is still used today31. As an alternative conceptual inventory on Newtonian mechanics, the Force and Motion Conceptual Evaluation (FMCE) was also employed14 (Fig. S2, Table S3). Unlike the FCI, the FMCE includes items designed to probe students’ ability to interpret graphs. The FCI and the FMCE were used in the first semester. In the second semester, five conceptual RBAs were administered, together with an attitudinal survey and a physics laboratory assessment.

The first test in the second semester was the Rotational and Rolling Motion Conceptual Survey (RRMCS) (Fig. S3, Table S4), a two-tier multiple-choice test that probes students’ understanding of rotational motion and related notions25. The next topic taught to the students was fluid mechanics, and we examined their conceptual understanding using the Fluid Mechanics Concept Inventory (FMCI) (Fig. S4, Table S5), which was developed to explore students’ ideas about fluid mechanics concepts26. Students then took the Mechanical Waves Conceptual Survey (MWCS) (Fig. S5, Table S6), which evaluates students’ understanding of the main topics in mechanical waves. To assess students’ understanding of heat concepts, the TCE was used28 (Fig. S6, Table S7). The last conceptual assessment in the SPHERE dataset examined students’ understanding of thermodynamics using the Survey of Thermodynamics Processes and First and Second Laws (STPFaSL)29 (Fig. S7, Table S8). Students’ scientific abilities in the physics laboratory described above and their attitudes toward physics were probed using the Scientific Abilities Assessment Rubrics (SAAR) (Fig. S8, Table S9) and the Colorado Learning Attitudes about Science Survey (CLASS) (Fig. S9, Table S10), respectively. These RBAs can be downloaded directly from the PhysPort platform (https://www.physport.org). Interested readers must register as physics educators to gain access, as required by the PhysPort policy.

Labeling process of the students’ performance in physics

The multi-domain assessment in SPHERE measured students’ performance in physics learning in terms of three learning outcomes: conceptual understanding, scientific abilities, and learning attitude toward physics. We argue that these variables can be analyzed from a machine learning perspective to predict students’ performance at the end of the semester4,7,8,9. Machine learning predictions could provide immediate feedback on students’ learning progress and help teachers monitor individual students’ needs for future learning. In SPHERE, students’ performance at the end of the semester was classified dichotomously (1 = high achiever, 0 = low achiever). These labels were obtained in two ways. First, we collected the final test scores of the first and second semesters administered by the physics teachers (FINTEST1 and FINTEST2) and used them to classify students into the two groups; the machine learning models were trained to predict these classes. Second, before the second semester began, the physics teachers were surveyed and asked to make an early judgment: they classified each student’s predicted status on the second-semester final test based on their ongoing observations, assessments from prior learning, and experience interacting with the students (TEACHPRED). This task follows the student-oriented judgment method proposed by Zhu and Urhahne32, which has also been used by researchers in physics education33. We collected the teachers’ predictions because the aims of this study were to train machine learning models and compare their performance with human judgment.

Data Records

The SPHERE dataset is publicly available in the Mendeley Data repository34 at https://doi.org/10.17632/88d7m2fv7p.2. The dataset is open access and licensed under the Creative Commons Attribution 4.0 International (CC BY 4.0) license. All data are structured as a single table, provided as SPHERE dataset.xlsx and SPHERE dataset.csv for ease of use. The XLSX file contains two sheets: the first sheet (“data_raw”) stores the primary data, and the second sheet (“Codes”) describes the variable names and their corresponding values or categories. Students’ identities have been anonymized using a code consisting of a letter followed by four digits. The letter indicates the student’s school (A, B, C, or D), the first digit indicates the student’s cohort within the school, and the last three digits denote the alphabetical order of the student’s name within the cohort. Responses to the conceptual RBAs are recorded as the option letters chosen by the students. These raw cognitive responses support further analysis of the RBAs at the item and distractor levels. The records of students’ scientific abilities and attitudes are presented as ordinal numbers on the Likert-type scales described above. The spreadsheet contains 331 data columns and 497 records, one for each participating physics student. There are no missing values in the SPHERE dataset because incomplete responses were excluded.
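The anonymized identifier format described above can be decoded mechanically. The sketch assumes the code is stored as a five-character string such as "B2017" (a hypothetical example); the name of the identifier column in “data_raw” is not assumed here, so check the “Codes” sheet before use.

```python
import re
from typing import NamedTuple


class StudentCode(NamedTuple):
    school: str  # A, B, C, or D
    cohort: int  # first digit: cohort within the school
    order: int   # last three digits: alphabetical position within the cohort


_CODE = re.compile(r"^([ABCD])(\d)(\d{3})$")


def parse_student_code(code):
    """Split an anonymized SPHERE-style ID (letter + four digits) into its parts."""
    match = _CODE.match(code.strip())
    if match is None:
        raise ValueError(f"unexpected student code: {code!r}")
    school, cohort, order = match.groups()
    return StudentCode(school, int(cohort), int(order))


print(parse_student_code("B2017"))  # StudentCode(school='B', cohort=2, order=17)
```

Decoding the school letter this way makes it easy to add a school factor to an analysis, for example to check whether model performance differs across schools A to D.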

Technical Validation

Research-based assessments (RBAs)

All data presented in this study were collected through research-based assessments (RBAs) established by PER scholars35. An RBA is a standardized assessment of teaching and learning that is developed based on research into student thinking, for use by the wider physics education community. SPHERE relies on these community-standardized assessments, which have been rigorously developed and revised using student ideas and interviews, expert input, and statistical analyses. Validity evidence for each RBA is available from prior work using classical test theory (CTT)36, exploratory factor analysis (EFA)21, the Rasch model37, and item response theory (IRT)38. In addition, related RBA validation studies are available in the “Research” tab of the PhysPort platform (Fig. 4), categorized into three levels (gold, silver, and bronze). Sample items for each RBA can be found in the “Examples” tab of the platform.

While the psychometric properties of these measures are grounded in prior work, we conducted technical checks to ensure their applicability in the Indonesian context. In this paper, we present evidence of content validity from expert judgments, factorial validity, and reliability in terms of internal consistency. As described above, a panel of five PER experts reviewed the translated RBAs for conceptual accuracy and cultural appropriateness. Aiken’s content validity index was used to quantify the experts’ judgments of the content39. At a minimum, an item was taken as showing evidence of content validity when more than three of the five experts agreed. Overall, the experts reached a consensus that the content of the instruments adapted to the Indonesian context was valid and adequately matched the constructs of the original versions.
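Aiken’s V for a single item is V = S / [n(c − 1)], where S is the sum over raters of (rating − lowest category), n is the number of raters, and c is the number of rating categories; V ranges from 0 to 1. The sketch below assumes a five-point scale (1 to 5) purely for illustration, since the rating scale used by the five experts is not stated here, and the ratings are hypothetical.

```python
def aikens_v(ratings, lowest=1, categories=5):
    """Aiken's V = sum(r - lowest) / (n * (categories - 1)) for one item."""
    highest = lowest + categories - 1
    if any(r < lowest or r > highest for r in ratings):
        raise ValueError("rating outside the scale")
    n = len(ratings)
    s = sum(r - lowest for r in ratings)
    return s / (n * (categories - 1))


# Hypothetical ratings of one translated item by five experts on a 1-5 scale.
print(aikens_v([4, 5, 4, 5, 3]))  # 0.8
```

The resulting V is then compared with a critical value that depends on n and c; that threshold is not reproduced here.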

Factorial validity was evaluated using confirmatory factor analysis (CFA). Table 1 summarizes the fit indices obtained from the CFA, which was estimated with the weighted least squares mean- and variance-adjusted (WLSMV) estimator for ordinal data40 using the lavaan package in R41. Overall, the CFA indicated that the Indonesian versions of the RBAs employed in this study have acceptable factorial validity according to the criteria CFI > 0.90, TLI > 0.90, RMSEA < 0.08, and SRMR < 0.0842.
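For readers less familiar with these indices, the sketch below computes the textbook point estimates of RMSEA, CFI, and TLI from model and baseline chi-square statistics and checks them against the cutoffs quoted above. It is only illustrative: lavaan’s WLSMV output reports scaled (robust) statistics, and software may use N rather than N − 1 in RMSEA, so values will not match Table 1 exactly. SRMR needs the residual correlation matrix and is taken as an input here. All numbers in the usage lines are hypothetical.

```python
import math


def rmsea(chi2, df, n):
    """Textbook RMSEA point estimate: sqrt(max(chi2 - df, 0) / (df * (n - 1)))."""
    return math.sqrt(max(chi2 - df, 0.0) / (df * (n - 1)))


def cfi(chi2, df, chi2_base, df_base):
    """Comparative fit index relative to the baseline (independence) model."""
    num = max(chi2 - df, 0.0)
    den = max(chi2_base - df_base, chi2 - df, 0.0)
    return 1.0 if den == 0 else 1.0 - num / den


def tli(chi2, df, chi2_base, df_base):
    """Tucker-Lewis index (non-normed fit index)."""
    base_ratio = chi2_base / df_base
    return (base_ratio - chi2 / df) / (base_ratio - 1.0)


def meets_cutoffs(cfi_v, tli_v, rmsea_v, srmr_v):
    """Criteria quoted in the text: CFI > .90, TLI > .90, RMSEA < .08, SRMR < .08."""
    return cfi_v > 0.90 and tli_v > 0.90 and rmsea_v < 0.08 and srmr_v < 0.08


# Hypothetical statistics for one instrument with N = 497 students.
fit = (cfi(120, 100, 2000, 120), tli(120, 100, 2000, 120), rmsea(120, 100, 497))
print([round(v, 3) for v in fit], meets_cutoffs(*fit, srmr_v=0.05))
```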

Internal consistency was used to assess the reliability of the data, measured by Cronbach’s alpha43, which indicates how closely related a set of items is as a group. The results of the reliability analysis of the adapted RBAs are summarized in Table 2. All adapted instruments obtained acceptable reliability coefficients (α > 0.7). High alpha values indicate that each participant’s responses across a set of items are consistent.
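Cronbach’s alpha for k items is α = k/(k − 1) · (1 − Σσᵢ² / σₓ²), where σᵢ² is the variance of item i and σₓ² the variance of the total score. The following is a minimal sketch, assuming a complete matrix of numeric item scores (rows = students); population variances are used throughout, which gives the same α as sample variances because the n − 1 factor cancels. The toy matrix is hypothetical.

```python
from statistics import pvariance


def cronbach_alpha(scores):
    """Cronbach's alpha for a complete matrix (rows = respondents, columns = items)."""
    k = len(scores[0])
    if k < 2:
        raise ValueError("alpha needs at least two items")
    item_vars = [pvariance([row[j] for row in scores]) for j in range(k)]
    total_var = pvariance([sum(row) for row in scores])
    return (k / (k - 1)) * (1 - sum(item_vars) / total_var)


# Hypothetical 4 respondents x 2 items.
print(round(cronbach_alpha([[2, 3], [4, 3], [3, 5], [5, 4]]), 4))  # 0.2286
```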

Example of machine learning implementation using SPHERE dataset

To demonstrate a working example using the dataset, the random forest (RF) technique was used to predict students’ performance in high school physics at the end of the semester. Following former PER studies4,7,8,9, we implemented random forest, which has been reported as the most robust classification algorithm in physics education17. The prediction target was students’ performance on the teacher-administered final test of the second semester (FINTEST2). Based on this score reported by the physics teachers, students’ performance was dichotomized: a student scoring at or above the cutoff value was labeled 1, and a student scoring below it was labeled 0. The cutoff value was decided by consensus according to the standard practice of the participating physics teachers. Finally, the predictions made by physics teachers (TEACHPRED), based on their summative assessment in the first-semester final test (FINTEST1) and their student-oriented judgment32, were compared with those of the RF models.
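The dichotomization step can be sketched as follows. The cutoff of 75 in the usage line is a hypothetical value, since the consensus cutoff used by the teachers is not reported here; checking the class balance afterwards is a suggested extra step, because a strongly unbalanced split makes raw accuracy less informative.

```python
from collections import Counter


def label_performance(final_scores, cutoff):
    """1 = high achiever (score >= cutoff), 0 = low achiever (score < cutoff)."""
    return [1 if score >= cutoff else 0 for score in final_scores]


def class_balance(labels):
    """Proportion of students in each class."""
    counts = Counter(labels)
    return {cls: counts[cls] / len(labels) for cls in (0, 1)}


labels = label_performance([60, 75, 74.9, 90], cutoff=75)  # hypothetical cutoff
print(labels, class_balance(labels))  # [0, 1, 0, 1] {0: 0.5, 1: 0.5}
```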

In preprocessing the SPHERE dataset, the researchers computed the score on each conceptual test (FCI, FMCE, RRMCS, FMCI, MWCS, TCE, and STPFaSL) as the number of correct answers submitted by the student. The SPHERE dataset does not include the answer keys of the RBAs; these must be accessed through an educator account on the PhysPort platform. Potential users seeking access to the answer keys for scoring the SPHERE dataset are advised to consult the PhysPort administrator. Nevertheless, for transparency, the percentage of each response option submitted by the participants is reported in the supplementary information (Tables S11–S19). Note that a higher proportion of a given response does not necessarily indicate the correct answer.
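A minimal number-correct scoring sketch is shown below. Because SPHERE does not include answer keys, the key in the usage line is a hypothetical placeholder; real keys must come from PhysPort. It implements only the plain sum of correct answers described above; two-tier instruments such as the RRMCS may call for tier-specific scoring rules documented with the instrument.

```python
def score_responses(responses, key):
    """Number-correct score per student.

    responses: per-student lists of chosen option letters
    key: correct option letters in item order (hypothetical here)
    """
    key = [k.strip().upper() for k in key]
    scores = []
    for answers in responses:
        if len(answers) != len(key):
            raise ValueError("response length does not match the key")
        # Case- and whitespace-insensitive comparison of each letter with the key.
        scores.append(sum(a.strip().upper() == k for a, k in zip(answers, key)))
    return scores


placeholder_key = ["A", "B", "C"]  # NOT a real RBA key
print(score_responses([["A", "b", "C"], ["B", "B", "D"]], placeholder_key))  # [3, 1]
```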

Students’ work in the physics experiment, including their laboratory reports, was observed and assessed by the researchers using the SAAR. The laboratory score for the light diffraction experiment was computed as the sum of the scores on each scientific-ability element of the SAAR. The CLASS was scored by the researchers on a five-point Likert scale, with “strongly disagree” and “strongly agree” coded as 1 and 5, respectively. Negatively worded CLASS items were recoded so that higher scores consistently reflect more positive attitudes toward learning physics; the published SPHERE dataset in the repository already contains these recoded values.
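The CLASS recoding and SAAR summation can be sketched as below, assuming a 1 to 5 scale and hypothetical item identifiers and rubric scores. On a five-point scale, reverse coding maps a response x to 6 − x (in general, low + high − x). Because the published SPHERE file already contains recoded values, applying this again to SPHERE would undo the recoding; it is only needed when working from raw responses.

```python
def reverse_code(value, low=1, high=5):
    """Reverse a Likert response so that low <-> high (6 - x on a 1-5 scale)."""
    return low + high - value


def recode_class(responses, negative_items, low=1, high=5):
    """Reverse-code the negatively worded items in one student's responses.

    responses: dict mapping a (hypothetical) item id to a Likert value
    negative_items: set of item ids that are negatively worded
    """
    return {
        item: reverse_code(value, low, high) if item in negative_items else value
        for item, value in responses.items()
    }


def saar_total(element_scores):
    """Laboratory score as the sum of the SAAR element scores."""
    return sum(element_scores)


print(recode_class({"Q1": 2, "Q2": 4}, negative_items={"Q2"}))  # {'Q1': 2, 'Q2': 2}
print(saar_total([3, 2, 3]))  # 8
```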

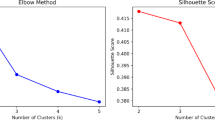

Four RF models were trained in this example using the SPHERE dataset to provide a simple machine learning exercise in this paper. The first model (RF1) included all features provided by the SPHERE dataset. The second model (RF2) contained multi-dimensional students’ performance as probed by the RBAs. The third model (RF3) only explored the demographic variables, literature accessibility, and physics identity. Based on the trained RF2 and RF3 models, the five most important features from the second (RF2) and third (RF3) models were studied using the method of variable importance analysis as illustrated in Fig. 5. From the RF2, we obtained that FMCI, TCE, FMCE, MWCS, and CLASS scores were the most important features to predict students’ performance. From the RF3, we revealed that students’ socioeconomic statuses as described by parents’ occupation, income, and education (FATHINC, FATHOCC, MOTHINC, MOTHOC, and MOTHEDU) were the most important variables for students’ performance prediction model. Based on the mixture of these ten variables, the fourth model (RF4) was developed. The performance of each model is described in Table 3, including the students’ performance prediction performed by physics teachers using their judgement at the start of the second semester (TEACHPRED). Four predictive performance measures are reported in Table 3 including area under the receiver operating characteristic curve (AUROC), accuracy, sensitivity, and specificity. For the purpose of simple explanation, we employed the default hyperparameter tuning facilitated by the randomForest package in R44. The train and test splitting technique was performed using k-fold cross validation method. This practice is recommended for relatively small dataset as reported by this study45. This technique is so popular because it effectively ‘recycles’ the sample by using each of the k splits one time as test set, so that also each observation is tested exactly once. 
Table 3 summarizes the predictive performance of the trained RF models, averaged over ten repetitions of the train and test splitting of the SPHERE dataset.
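The splitting scheme described above, k-fold cross-validation repeated ten times, can be sketched in plain Python. This is an illustrative sketch, not the authors’ R code: the function names, the non-stratified round-robin folds, and the seeds are assumptions, and this section does not report the value of k. With an imbalanced outcome, a stratified variant that preserves class proportions in each fold is often preferred.

```python
import random


def k_fold_indices(n: int, k: int, seed: int = 0) -> list[tuple[list[int], list[int]]]:
    """Return k (train, test) index splits; every index 0..n-1 is tested exactly once."""
    if not 2 <= k <= n:
        raise ValueError("k must satisfy 2 <= k <= n")
    order = list(range(n))
    random.Random(seed).shuffle(order)       # reproducible shuffle for a given seed
    folds = [order[i::k] for i in range(k)]  # round-robin: fold sizes differ by at most 1
    splits = []
    for i, test in enumerate(folds):
        train = [idx for j, fold in enumerate(folds) if j != i for idx in fold]
        splits.append((sorted(train), sorted(test)))
    return splits


def repeated_k_fold(n: int, k: int, repeats: int = 10,
                    base_seed: int = 0) -> list[list[tuple[list[int], list[int]]]]:
    """Repeat the k-fold split with a different shuffle each time (e.g. ten repetitions)."""
    return [k_fold_indices(n, k, seed=base_seed + r) for r in range(repeats)]


if __name__ == "__main__":
    runs = repeated_k_fold(n=12, k=5, repeats=10)
    first_run = runs[0]
    tested = sorted(idx for _, test in first_run for idx in test)
    print(tested == list(range(12)))             # True: each observation tested once
    print([len(test) for _, test in first_run])  # [3, 3, 2, 2, 2]
    print(len(runs))                             # 10
```

Each (train, test) pair would then be used to fit a model on the training indices and evaluate it on the test indices, with the resulting metrics averaged across folds and repetitions.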

Table 3 shows that RF4, which combines features from conceptual assessments, scientific ability, learning attitude, and demographic variables, is the best-performing model in terms of AUROC and specificity. The RF2 model, which involves only the variables measured by the RBAs, achieves the highest sensitivity (recall), suggesting that the RBAs provide useful predictors for RF models trained on the SPHERE data. Conversely, the teachers’ predictions perform the poorest of all the methods compared. This may be unsurprising, because the RF models are trained on empirical data using a statistical technique. In this example, the ML method therefore outperforms the physics teachers, relying on a more robust statistical technique rather than on subjective human judgement.
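The comparison above rests on the four measures in Table 3. As a hedged sketch of how they are commonly defined, the Python below computes them from observed binary outcomes and predicted probabilities and averages them over repeated runs. It assumes the outcome is coded 1 for the class treated as positive and uses a 0.5 decision threshold; both are assumptions, not details stated in this section. AUROC is computed as the probability that a randomly chosen positive case scores higher than a randomly chosen negative one (the Mann-Whitney formulation, with ties counted as one half). For a predictor that outputs only hard 0/1 labels, this formulation reduces to the mean of sensitivity and specificity.

```python
from statistics import mean


def auroc(y_true: list[int], scores: list[float]) -> float:
    """Probability that a random positive outranks a random negative (ties count 0.5)."""
    pos = [s for y, s in zip(y_true, scores) if y == 1]
    neg = [s for y, s in zip(y_true, scores) if y == 0]
    if not pos or not neg:
        raise ValueError("AUROC needs at least one positive and one negative case")
    wins = sum(1.0 if p > q else 0.5 if p == q else 0.0 for p in pos for q in neg)
    return wins / (len(pos) * len(neg))


def classification_metrics(y_true: list[int], scores: list[float],
                           threshold: float = 0.5) -> dict[str, float]:
    """AUROC, accuracy, sensitivity (recall of class 1), and specificity (recall of class 0)."""
    y_pred = [1 if s >= threshold else 0 for s in scores]
    tp = sum(1 for y, p in zip(y_true, y_pred) if y == 1 and p == 1)
    tn = sum(1 for y, p in zip(y_true, y_pred) if y == 0 and p == 0)
    fp = sum(1 for y, p in zip(y_true, y_pred) if y == 0 and p == 1)
    fn = sum(1 for y, p in zip(y_true, y_pred) if y == 1 and p == 0)
    return {
        "AUROC": auroc(y_true, scores),  # raises first if a class is missing
        "accuracy": (tp + tn) / len(y_true),
        "sensitivity": tp / (tp + fn),
        "specificity": tn / (tn + fp),
    }


def average_runs(runs: list[dict[str, float]]) -> dict[str, float]:
    """Average each metric over repeated train/test runs (e.g. ten repetitions)."""
    return {name: mean(run[name] for run in runs) for name in runs[0]}


if __name__ == "__main__":
    y = [1, 1, 0, 0]
    run1 = classification_metrics(y, [0.9, 0.4, 0.6, 0.1])
    run2 = classification_metrics(y, [0.8, 0.7, 0.2, 0.3])
    print(run1)  # {'AUROC': 0.75, 'accuracy': 0.5, 'sensitivity': 0.5, 'specificity': 0.5}
    print(average_runs([run1, run2]))
    # {'AUROC': 0.875, 'accuracy': 0.75, 'sensitivity': 0.75, 'specificity': 0.75}
```

Because sensitivity and specificity depend on the threshold while AUROC does not, a model can lead on AUROC and specificity yet trail on sensitivity, as the RF4 and RF2 results illustrate.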

As a final remark, ML-based prediction, as demonstrated with the random forest technique above, arguably offers three advantages. First, it may provide early warning of students’ future performance, helping to reduce failure in physics learning. Second, machine learning can estimate in advance the probability that students will encounter learning obstacles, and this information can be used to adjust ongoing instruction; differentiated instruction could then be implemented to address students’ individual needs. Third, machine learning and data mining can extract hidden patterns from learners’ data for prediction tasks. This may improve on the teacher-judgement method, which tends to classify students’ performance subjectively, as described above. Admittedly, using the SPHERE dataset to build, train, and evaluate machine learning models has limitations. The SPHERE dataset may be relatively small by the standards of common statistical practice. Nevertheless, to the best of our knowledge, predictive modeling in machine learning does not necessarily require a minimum training-set size17. The multi-domain measures of students’ performance in the SPHERE dataset also add to its value. We acknowledge that a larger dataset would further enhance model replicability. To address this issue, we plan to keep the dataset updated and to collect new data to expand it beyond the current version. A learning management system (LMS) could be developed for this purpose and used as the data acquisition system once all participating schools are registered in a single system. A larger dataset is expected to improve the predictive performance of machine learning models, since students from different contexts bring unique patterns that would be invaluable for informing the trained models.
For example, physics identity might be aligned with the occupation that students intend to pursue in the future, which could help predict whether they go on to STEM fields at the undergraduate level after high school. Students’ intended future occupation was not included in the SPHERE dataset and would be an interesting variable for future studies.

Usage Notes

The SPHERE dataset was prepared by the researchers to serve as a basis for further studies in physics education research. Students’ responses to each conceptual assessment item, scientific-ability element, and attitudinal statement are preserved in raw format. To score students’ performance on each instrument, users must download the answer key of each conceptual RBA from PhysPort. However, PhysPort’s policy grants access to these keys only to verified educators through a secure authorization protocol. We have therefore excluded the answer keys of the RBAs from the dataset to prevent potential misuse arising from this publication. Additionally, some RBAs in SPHERE (particularly the FMCE and the RRMCS) capture students’ written reasoning for the items they answered, in Indonesian. Potential users are advised to consult Indonesian PER scholars or Indonesian language experts familiar with processing textual data when using these responses in future studies.
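Because the answer keys are not distributed with SPHERE, scoring a conceptual RBA means joining the raw responses with a key that each user obtains separately from PhysPort. The sketch below assumes that responses are stored as option letters per item and that the user supplies the key as a mapping from item label to correct option; all item labels and letters shown are hypothetical placeholders, not real answers to any RBA. It awards one point per matching item, and some instruments may use scoring rules beyond this one-key-per-item scheme, which should be checked in each instrument’s documentation.

```python
def score_multiple_choice(responses: dict[str, str], answer_key: dict[str, str]) -> int:
    """Count items whose response matches the user-supplied key (1 point each).

    Unanswered items (absent from `responses`) score 0. Comparison ignores
    letter case and surrounding whitespace.
    """
    score = 0
    for item, correct in answer_key.items():
        given = responses.get(item)
        if given is not None and given.strip().upper() == correct.strip().upper():
            score += 1
    return score


def score_cohort(cohort: dict[str, dict[str, str]], answer_key: dict[str, str]) -> dict[str, int]:
    """Score every student in a {student_id: {item: response}} mapping."""
    return {sid: score_multiple_choice(resp, answer_key) for sid, resp in cohort.items()}


if __name__ == "__main__":
    key = {"Q1": "B", "Q2": "D", "Q3": "A"}  # hypothetical placeholder key
    cohort = {
        "S001": {"Q1": "b", "Q2": "D", "Q3": "C"},
        "S002": {"Q1": "C"},
    }
    print(score_cohort(cohort, key))  # {'S001': 2, 'S002': 0}
```

Keeping the key as a separate input, rather than embedding it in code or data files, is consistent with the decision not to redistribute PhysPort’s answer keys.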

The SPHERE dataset34 was last updated on 17 January 2025, and further records may be added after this date. We plan to maintain the SPHERE dataset and keep it up to date by collecting data from students across various geographical regions, thereby increasing the heterogeneity of the student population it captures. New data records will be made available in the Mendeley Data repository with date-based versioning. As data from students in more diverse contexts become available through our forthcoming research agenda, SPHERE could yield further insights to inspire advances in the field. Finally, we invite scholars, primarily from the PER community, to reuse the SPHERE dataset presented in this paper. Researchers and instructors can use it freely and with confidence, knowing that SPHERE has been rigorously built from several validated and reliable research-based assessments (RBAs) established in the PER community.

The SPHERE dataset has been made available under the Creative Commons Attribution (CC BY) license, facilitating educational and research applications. To date, no prior publications have used the SPHERE dataset or reported findings based on it. This paper represents the first comprehensive description and introduction of the dataset to the academic community. Users are encouraged to cite this paper when using the dataset in future work.

Code availability

The random forest models described above were implemented on the SPHERE dataset in R version 4.4.1. The R code is available in our GitHub repository (https://github.com/santosoph/SPHERE-random-forest) under the MIT open-source license.

References

Katz, S. et al. Linking Dialogue with Student Modelling to Create an Adaptive Tutoring System for Conceptual Physics. Int J Artif Intell Educ 31, 397–445 (2021).

Nakamura, C. M., Murphy, S. K., Christel, M. G., Stevens, S. M. & Zollman, D. A. Automated analysis of short responses in an interactive synthetic tutoring system for introductory physics. Phys Rev Phys Educ Res 12, 010122 (2016).

Yasuda, J. I., Mae, N., Hull, M. M. & Taniguchi, M. A. Optimizing the length of computerized adaptive testing for the Force Concept Inventory. Phys Rev Phys Educ Res 17, 010115 (2021).

Aiken, J. M., Henderson, R. & Caballero, M. D. Modeling student pathways in a physics bachelor’s degree program. Phys Rev Phys Educ Res 15, 010128 (2019).

Mikkelsen, N. J., Young, N. T. & Caballero, M. D. Investigating institutional influence on graduate program admissions by modeling physics Graduate Record Examination cutoff scores. Phys Rev Phys Educ Res 17, 010109 (2021).

Stewart, J., Hansen, J. & Burkholder, E. Visualizing and predicting the path to an undergraduate physics degree at two different institutions. Phys Rev Phys Educ Res 18, 020117 (2022).

Zabriskie, C., Yang, J., Devore, S. & Stewart, J. Using machine learning to predict physics course outcomes. Phys Rev Phys Educ Res 15, 020120 (2019).

Yang, J. et al. Using machine learning to identify the most at-risk students in physics classes. Phys Rev Phys Educ Res 16, 020130 (2020).

Pace, J., Hansen, J. & Stewart, J. Exploring techniques to improve machine learning’s identification of at-risk students in physics classes. Phys Rev Phys Educ Res 20, 010149 (2024).

McMillan, J. H. Classroom Assessment: Principles and Practice That Enhance Student Learning and Motivation (7th Ed.). (Pearson, New York, 2018).

Grimm, A., Steegh, A., Kubsch, M. & Neumann, K. Learning Analytics in Physics Education: Equity-Focused Decision-Making Lacks Guidance! Journal of Learning Analytics 10, 71–84 (2023).

Romero, C. & Ventura, S. Educational data mining and learning analytics: An updated survey. Wiley Interdiscip Rev Data Min Knowl Discov 10, e1355 (2020).

Hestenes, D., Wells, M. & Swackhamer, G. Force concept inventory. Phys Teach 30, 141–158 (1992).

Thornton, R. K. & Sokoloff, D. R. Assessing student learning of Newton’s laws: The Force and Motion Conceptual Evaluation and the Evaluation of Active Learning Laboratory and Lecture Curricula. Am J Phys 66, 338–352 (1998).

Santoso, P. H., Istiyono, E. & Haryanto. Physics Teachers’ Perceptions about Their Judgments within Differentiated Learning Environments: A Case for the Implementation of Technology. Educ Sci (Basel) 12, 582 (2022).

Breiman, L. Random Forests. Mach Learn 45, 5–32 (2001).

Aiken, J. M., De Bin, R., Lewandowski, H. J. & Caballero, M. D. Framework for evaluating statistical models in physics education research. Phys Rev Phys Educ Res 17, 020104 (2021).

Kuzilek, J., Hlosta, M. & Zdrahal, Z. Open University Learning Analytics dataset. Sci Data 4, 170171 (2017).

Docktor, J. L. & Mestre, J. P. Synthesis of discipline-based education research in physics. Phys Rev Spec Top Phys Educ Res 10, 020119 (2014).

Etkina, E. et al. Scientific abilities and their assessment. Phys Rev Spec Top Phys Educ Res 2, 020103 (2006).

Adams, W. K. et al. New instrument for measuring student beliefs about physics and learning physics: The Colorado Learning Attitudes about Science Survey. Phys Rev Spec Top Phys Educ Res 2, 010101 (2006).

McKagan, S. B. et al. PhysPort Use and Growth: Supporting Physics Teaching with Research-based Resources Since 2011. Phys Teach 58, 465–469 (2020).

Hazari, Z., Sonnert, G., Sadler, P. M. & Shanahan, M. C. Connecting high school physics experiences, outcome expectations, physics identity, and physics career choice: A gender study. J Res Sci Teach 47, 978–1003 (2010).

Träff, U., Olsson, L., Skagerlund, K., Skagenholt, M. & Östergren, R. Logical reasoning, spatial processing, and verbal working memory: Longitudinal predictors of physics achievement at age 12–13 years. Front Psychol 10, 458416 (2019).

Rimoldini, L. G. & Singh, C. Student understanding of rotational and rolling motion concepts. Phys Rev Spec Top Phys Educ Res 1, 010102 (2005).

Martin, J., Mitchell, J. & Newell, T. Development of a concept inventory for fluid mechanics. in Proceedings of the 33rd Annual Frontiers in Education 2003 vol. 1 T3D (IEEE, 2003).

Barniol, P. & Zavala, G. Mechanical waves conceptual survey: Its modification and conversion to a standard multiple-choice test. Phys Rev Phys Educ Res 12, 010107 (2016).

Yeo, S. & Zadnik, M. Introductory thermal concept evaluation: assessing students’ understanding. Phys Teach 39, 496–504 (2001).

Brown, B. & Singh, C. Development and validation of a conceptual survey instrument to evaluate students’ understanding of thermodynamics. Phys Rev Phys Educ Res 17, 010104 (2021).

Krulj, I. & Nešić, L. Convenient reflective diffraction gratings in physics teaching. Phys Educ 54, 025015 (2019).

Santoso, P. H. et al. Exploring gender differences in the Force Concept Inventory using a random effects meta-analysis of international studies. Phys Rev Phys Educ Res 20, 010601 (2024).

Zhu, C. & Urhahne, D. Temporal stability of teachers’ judgment accuracy of students’ motivation, emotion, and achievement. European Journal of Psychology of Education 36, 319–337 (2021).

Li, Q., Xu, S., Chen, Y., Lu, C. & Zhou, S. Detecting preservice teachers’ visual attention under prediction and nonprediction conditions with eye-tracking technology. Phys Rev Phys Educ Res 18, 010134 (2022).

Santoso, P. H. et al. SPHERE: Students’ Performance Dataset of Conceptual Understanding, Scientific Ability, and Learning Attitude in Physics Education Research (PER). Mendeley Data https://doi.org/10.17632/88d7m2fv7p.2 (2025).

Madsen, A., McKagan, S. B. & Sayre, E. C. Resource Letter RBAI-1: Research-Based Assessment Instruments in Physics and Astronomy. Am J Phys 85, 245–264 (2017).

Ishimoto, M., Thornton, R. K. & Sokoloff, D. R. Validating the Japanese translation of the Force and Motion Conceptual Evaluation and comparing performance levels of American and Japanese students. Phys Rev Spec Top Phys Educ Res 10, 020114 (2014).

Planinic, M., Ivanjek, L. & Susac, A. Rasch model based analysis of the Force Concept Inventory. Phys Rev Spec Top Phys Educ Res 6, 010103 (2010).

Stewart, J., Zabriskie, C., Devore, S. & Stewart, G. Multidimensional item response theory and the Force Concept Inventory. Phys Rev Phys Educ Res 14, 010137 (2018).

Penfield, R. D. & Giacobbi, P. R. Applying a Score Confidence Interval to Aiken’s Item Content-Relevance Index. Meas Phys Educ Exerc Sci 8, 213–225 (2004).

Li, C. H. Confirmatory factor analysis with ordinal data: Comparing robust maximum likelihood and diagonally weighted least squares. Behav Res Methods 48 (2016).

Rosseel, Y. lavaan: An R package for structural equation modeling. J Stat Softw 48 (2012).

Hair, J. F., Black, W. C., Babin, B. J. & Anderson, R. E. Multivariate Data Analysis. (Pearson, US, 2018).

Taber, K. S. The Use of Cronbach’s Alpha When Developing and Reporting Research Instruments in Science Education. Res Sci Educ 48, 1273–1296 (2018).

Breiman, L., Cutler, A., Liaw, A. & Wiener, M. randomForest: Breiman and Cutler’s Random Forests for Classification and Regression. R package https://cran.r-project.org/web/packages/randomForest (2022).

Hilbert, S. et al. Machine learning for the educational sciences. Review of Education 9 (2021).

Acknowledgements

This publication is supported by Beasiswa Pendidikan Indonesia (BPI), a scholarship program from the Indonesian government for pursuing a graduate degree. This program is organized by the Pusat Pembiayaan dan Asesmen Pendidikan Tinggi (PPAPT) as a body of the Kementerian Pendidikan Tinggi, Sains, dan Teknologi (Kemendiktisaintek) and funded by the Lembaga Pengelola Dana Pendidikan (LPDP). We also express our deepest appreciation to Prof. Dr. Edi Istiyono, M.Si., and Dr. Haryanto, M.Pd., M.T., who provided invaluable suggestions and insights for this research.

Author information

Contributions

P.H.S.: Conceptualization, Software, Formal analysis, Investigation, Data curation, Visualization, Writing - Original Draft, Writing - Review & Editing. B.S., Y.K., W., S.B., F., M.K., I.U.W., N.D.W., A.D.K., J.S.: Data curation, Validation, Writing - Review & Editing, Funding acquisition. All authors reviewed and approved the published manuscript.

Ethics declarations

Competing interests

The authors declare no competing interests.

Additional information

Publisher’s note Springer Nature remains neutral with regard to jurisdictional claims in published maps and institutional affiliations.

Rights and permissions

Open Access This article is licensed under a Creative Commons Attribution-NonCommercial-NoDerivatives 4.0 International License, which permits any non-commercial use, sharing, distribution and reproduction in any medium or format, as long as you give appropriate credit to the original author(s) and the source, provide a link to the Creative Commons licence, and indicate if you modified the licensed material. You do not have permission under this licence to share adapted material derived from this article or parts of it. The images or other third party material in this article are included in the article’s Creative Commons licence, unless indicated otherwise in a credit line to the material. If material is not included in the article’s Creative Commons licence and your intended use is not permitted by statutory regulation or exceeds the permitted use, you will need to obtain permission directly from the copyright holder. To view a copy of this licence, visit http://creativecommons.org/licenses/by-nc-nd/4.0/.

About this article

Cite this article

Santoso, P.H., Setiaji, B., Kurniawan, Y. et al. Students’ performance dataset for using machine learning technique in physics education research. Sci Data 12, 987 (2025). https://doi.org/10.1038/s41597-025-04913-0

DOI: https://doi.org/10.1038/s41597-025-04913-0