Abstract

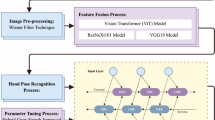

Sign Language Recognition (SLR) is a critical component of human-machine interaction, enabling more inclusive technologies for the deaf and hard-of-hearing community. However, current datasets often suffer from data sparsity and a bias toward right-handed signs. To address these gaps, we present Sign4all, a dataset for Spanish Sign Language (LSE) specifically designed for Isolated Sign Language Recognition (ISLR). The dataset comprises 7,756 high-resolution RGB video recordings and their corresponding skeletal keypoints, covering 24 signs related to daily activities, specifically a vocabulary centered on the catering domain. Unlike sparse lexicons, Sign4all adopts a high-density approach, providing an average of 323 samples per sign to support data-intensive deep learning models. Moreover, the dataset is balanced for handedness, with left- and right-handed versions of every sign equally represented to support handedness invariance. Each sample was manually segmented and then temporally and spatially normalized to ensure consistency and compatibility with different deep learning pipelines. Technical validation with Transformer and skeletal models demonstrates the dataset's integrity and the need to provide pre-computed augmentation splits. All data are distributed in widely supported file formats (AVI for video, HDF5 for keypoints), enabling direct use in machine learning frameworks such as TensorFlow or PyTorch.
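The abstract states that every sample is temporally and spatially normalized but does not spell out the procedure here. As a minimal sketch, assuming each frame is a list of (x, y) keypoints, temporal normalization can be done by sampling a fixed number of evenly spaced frames, and spatial normalization by centering each frame on the midpoint of two reference keypoints (for instance the shoulders, which are landmarks 11 and 12 in MediaPipe Pose) and dividing by their distance. The function names, the nearest-index resampling and the two-reference-point scaling are illustrative assumptions, not the authors' documented method.

```python
import math
from typing import List, Tuple

Point = Tuple[float, float]   # (x, y) coordinates of one keypoint
Frame = List[Point]           # all keypoints of one video frame


def resample_frames(frames: List[Frame], target_len: int) -> List[Frame]:
    """Temporal normalization (illustrative): keep target_len evenly spaced frames.

    Shorter clips repeat frames and longer clips drop frames, using
    nearest-index sampling between the first and the last frame.
    """
    if not frames:
        raise ValueError("sequence has no frames")
    if target_len < 1:
        raise ValueError("target_len must be at least 1")
    if target_len == 1:
        return [frames[0]]
    step = (len(frames) - 1) / (target_len - 1)
    # int(v + 0.5) rounds half up, so the last sampled index is len(frames) - 1.
    return [frames[int(i * step + 0.5)] for i in range(target_len)]


def normalize_frame(frame: Frame, ref_a: int, ref_b: int) -> Frame:
    """Spatial normalization (illustrative): center on the midpoint of two
    reference keypoints and scale by the distance between them."""
    (ax, ay), (bx, by) = frame[ref_a], frame[ref_b]
    cx, cy = (ax + bx) / 2, (ay + by) / 2
    scale = math.hypot(ax - bx, ay - by)
    if scale == 0:
        raise ValueError("reference keypoints coincide; cannot scale")
    return [((x - cx) / scale, (y - cy) / scale) for x, y in frame]


def preprocess(frames: List[Frame], target_len: int, ref_a: int, ref_b: int) -> List[Frame]:
    """Resample a clip to a fixed length, then normalize every kept frame."""
    return [normalize_frame(f, ref_a, ref_b) for f in resample_frames(frames, target_len)]
```

With MediaPipe Pose landmarks, a call such as `preprocess(frames, target_len, 11, 12)` would make clips of different durations and signers at different distances from the camera directly comparable; the choice of `target_len` is a free, hypothetical parameter.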

Data availability

The complete Sign4all dataset is available at Science Data Bank [43]. Because the dataset contains identifiable facial and body features (including characteristics from which gender may be inferred), participants are exposed to a potential risk of re-identification. For this reason, access to the dataset is restricted and subject to manual request. To obtain the data, researchers must agree to a Data Usage Agreement (DUA) and provide contact information such as name, email address and affiliation. Once the request is verified, access is granted via a secure download link sent by email.

Code availability

None of the six variants of the dataset requires custom code for access or processing, since all data are provided in widely supported formats (AVI for video, HDF5 for keypoints). The dataset was processed with Blender 4.0 for video editing, MediaPipe 0.10.1 for keypoint extraction and TensorFlow 2.12.0 for model training and testing, all running under Arch Linux with Python 3.8. The recordings were captured with Azure Kinect SDK 1.3 [45], using PyKinectAzure [46] as the Python wrapper, under Ubuntu 18.04 LTS.
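Because no custom code is needed, a quick sanity check after downloading can rely on the Python standard library alone. The sketch below is not part of the dataset distribution; it only inspects leading bytes: AVI files are RIFF containers whose form type is `AVI `, and HDF5 files carry an 8-byte format signature at offset 0, or at 512, 1024, 2048 and so on when a user block precedes the superblock. The extension-to-format map (`.avi`, `.h5`, `.hdf5`) and the function names are hypothetical, since the exact file naming used by Sign4all is not given in this section.

```python
import io
from pathlib import Path
from typing import BinaryIO, List

HDF5_SIGNATURE = b"\x89HDF\r\n\x1a\n"  # 8-byte HDF5 format signature

# Hypothetical extension-to-format map; adjust to the actual file names.
EXPECTED_FORMAT = {".avi": "avi", ".h5": "hdf5", ".hdf5": "hdf5"}


def sniff_stream(fh: BinaryIO) -> str:
    """Return 'avi', 'hdf5' or 'unknown' for a seekable binary stream."""
    fh.seek(0)
    head = fh.read(12)
    # AVI is a RIFF container: b"RIFF", a 4-byte little-endian size, then b"AVI ".
    if len(head) == 12 and head[:4] == b"RIFF" and head[8:12] == b"AVI ":
        return "avi"
    # HDF5 places its signature at offset 0, 512, 1024, 2048, ... (after an optional user block).
    size = fh.seek(0, io.SEEK_END)
    offset = 0
    while offset + len(HDF5_SIGNATURE) <= size:
        fh.seek(offset)
        if fh.read(len(HDF5_SIGNATURE)) == HDF5_SIGNATURE:
            return "hdf5"
        offset = 512 if offset == 0 else offset * 2
    return "unknown"


def find_mismatches(root: Path) -> List[Path]:
    """List files under root whose leading bytes do not match their extension."""
    mismatches = []
    for path in sorted(root.rglob("*")):
        expected = EXPECTED_FORMAT.get(path.suffix.lower())
        if expected is None or not path.is_file():
            continue
        with path.open("rb") as fh:
            if sniff_stream(fh) != expected:
                mismatches.append(path)
    return mismatches
```

An empty result from `find_mismatches` on the download folder would only indicate that each file has the expected header; it does not validate the video stream or the HDF5 contents, which still require a video decoder and an HDF5 library.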

References

1. Duda, R. O., Hart, P. E. & Stork, D. G. Pattern Classification (2nd Edition) (Wiley-Interscience, USA, 2000).

2. Tao, T., Zhao, Y., Liu, T. & Zhu, J. Sign language recognition: A comprehensive review of traditional and deep learning approaches, datasets, and challenges. IEEE Access, https://doi.org/10.1109/ACCESS.2024.3398806 (2024).

3. Adaloglou, N. et al. A comprehensive study on deep learning-based methods for sign language recognition. IEEE Transactions on Multimedia 24, 1750–1762, https://doi.org/10.1109/tmm.2021.3070438 (2022).

4. Koller, O., Forster, J. & Ney, H. Continuous sign language recognition: Towards large vocabulary statistical recognition systems handling multiple signers. Computer Vision and Image Understanding 141 (Pose and Gesture), 108–125, https://doi.org/10.1016/j.cviu.2015.09.013 (2015).

5. Camgoz, N. C., Hadfield, S., Koller, O., Ney, H. & Bowden, R. Neural sign language translation. In Proceedings of the IEEE Conference on Computer Vision and Pattern Recognition (CVPR) (2018).

6. Duarte, A. et al. How2Sign: A large-scale multimodal dataset for continuous American Sign Language. arXiv:2008.08143 (2021).

7. Sanabria, R. et al. How2: A large-scale dataset for multimodal language understanding. arXiv:1811.00347 (2018).

8. Zhou, H., Zhou, W., Qi, W., Pu, J. & Li, H. Improving sign language translation with monolingual data by sign back-translation. arXiv:2105.12397 (2021).

9. Armstrong, D. F., Stokoe, W. C. & Wilcox, S. E. Gesture and the Nature of Language (Cambridge University Press, 1995).

10. Li, D., Opazo, C. R., Yu, X. & Li, H. Word-level deep sign language recognition from video: A new large-scale dataset and methods comparison. arXiv:1910.11006 (2020).

11. Caselli, N., Sehyr, Z., Cohen-Goldberg, A. & Emmorey, K. ASL-LEX: A lexical database of American Sign Language. Behavior Research Methods 49, https://doi.org/10.3758/s13428-016-0742-0 (2016).

12. Sehyr, Z. S., Caselli, N., Cohen-Goldberg, A. M. & Emmorey, K. The ASL-LEX 2.0 project: A database of lexical and phonological properties for 2,723 signs in American Sign Language. The Journal of Deaf Studies and Deaf Education 26, 263–277, https://doi.org/10.1093/deafed/enaa038 (2021).

13. Joze, H. R. V. & Koller, O. MS-ASL: A large-scale data set and benchmark for understanding American Sign Language. arXiv:1812.01053 (2018).

14. Jin, P. et al. A large dataset covering the Chinese national sign language for dual-view isolated sign language recognition. Scientific Data 12 (2025).

15. ASL Alphabet. https://www.kaggle.com/dsv/29550, https://doi.org/10.34740/KAGGLE/DSV/29550.

16. MNIST. Sign Language MNIST. https://www.kaggle.com/datasets/datamunge/sign-language-mnist Accessed: January 2025 (2018).

17. Al-Barham, M. et al. RGB Arabic alphabets sign language dataset. https://doi.org/10.48550/arXiv.2301.11932 (2023).

18. Sincan, O. M. & Keles, H. Y. AUTSL: A large scale multi-modal Turkish sign language dataset and baseline methods. IEEE Access 8, 181340–181355, https://doi.org/10.1109/access.2020.3028072 (2020).

19. Kumwilaisak, W., Pannattee, P., Hansakunbuntheung, C. & Thatphithakkul, N. American sign language fingerspelling recognition in the wild with iterative language model construction. APSIPA Transactions on Signal and Information Processing 11, https://doi.org/10.1561/116.00000003 (2022).

20. Sunuwar, J., Borah, S. & Kharga, A. NSL23 dataset for alphabets of Nepali sign language. Data in Brief 53, 110080, https://doi.org/10.1016/j.dib.2024.110080 (2024).

21. Kumar, P., Saini, R., Roy, P. P. & Dogra, D. P. A position and rotation invariant framework for sign language recognition (SLR) using Kinect. Multimedia Tools and Applications 77, 8823–8846, https://doi.org/10.1007/s11042-017-4776-9 (2018).

22. Boulesnane, A., Bellil, L. & Ghiri, M. ASLAD-190K: Arabic sign language alphabet dataset. https://doi.org/10.31219/osf.io/n236q (2024).

23. El Kharoua, R. & Jiang, X. Deep learning recognition for Arabic alphabet sign language RGB dataset. Journal of Computer and Communications 12, 32–51, https://doi.org/10.4236/jcc.2024.123003 (2024).

24. Wikipedia. List of sign languages. https://en.wikipedia.org/wiki/List_of_sign_languages Accessed: March 2025 (2025).

25. Wikipedia. List of sign languages by number of native signers. https://en.wikipedia.org/wiki/List_of_sign_languages_by_number_of_native_signers Accessed: March 2025 (2025).

26. Ethnologue. Ethnologue. https://www.ethnologue.com Accessed: March 2025 (2025).

27. Ethnologue. Spanish Sign Language by Ethnologue. https://www.ethnologue.com/language/ssp/ Accessed: March 2025 (2025).

28. Docío-Fernández, L. et al. LSE_UVIGO: A multi-source database for Spanish Sign Language recognition. In Efthimiou, E. et al. (eds.) Proceedings of the LREC2020 9th Workshop on the Representation and Processing of Sign Languages: Sign Language Resources in the Service of the Language Community, Technological Challenges and Application Perspectives, 45–52 (European Language Resources Association (ELRA), Marseille, France, 2020).

29. Vázquez Enríquez, M., Castro, J. L. A., Fernandez, L. D., Jacques Junior, J. C. S. & Escalera, S. ECCV 2022 sign spotting challenge: Dataset, design and results. In Karlinsky, L., Michaeli, T. & Nishino, K. (eds.) Computer Vision – ECCV 2022 Workshops, 225–242 (Springer Nature Switzerland, Cham, 2023).

30. Rodríguez-Moreno, I., Martinez-Otzeta, J. M. & Sierra, B. A Hierarchical Approach for Spanish Sign Language Recognition: From Weak Classification to Robust Recognition System, 37–53 (2022).

31. Morillas-Espejo, F. & Martinez-Martin, E. A real-time platform for Spanish sign language interpretation. Neural Computing and Applications (2024).

32. Martinez-Martin, E. & Morillas-Espejo, F. Deep learning techniques for Spanish sign language interpretation. Computational Intelligence and Neuroscience, https://doi.org/10.1155/2021/5532580 (2021).

33. Stokoe, W. C. Sign Language Structure: An Outline of the Visual Communication Systems of the American Deaf. Studies in Linguistics. Occasional Papers (University of Buffalo, 1960).

34. Azure Kinect DK official page. https://azure.microsoft.com/es-es/products/kinect-dk/ Accessed: February 2025 (2021).

35. Blender's page. https://www.blender.org/ Accessed: May 2025 (2025).

36. Sarge, V., Andersch, M., Fabel, L., Micikevicius, P. & Tran, J. Tips for Optimizing GPU Performance Using Tensor Cores. https://developer.nvidia.com/blog/optimizing-gpu-performance-tensor-cores/ Accessed: February 2025 (2019).

37. He, K., Zhang, X., Ren, S. & Sun, J. Deep residual learning for image recognition. arXiv:1512.03385 (2015).

38. Tan, M. & Le, Q. V. EfficientNet: Rethinking model scaling for convolutional neural networks. arXiv:1905.11946 (2020).

39. Arnab, A. et al. ViViT: A video vision transformer. arXiv:2103.15691 (2021).

40. Tan, M., Pang, R. & Le, Q. V. EfficientDet: Scalable and efficient object detection. arXiv:1911.09070 (2020).

41. Lugaresi, C. et al. MediaPipe: A framework for building perception pipelines. arXiv:1906.08172 (2019).

42. Papakipos, Z. & Bitton, J. AugLy: Data augmentations for robustness. arXiv:2201.06494 (2022).

43. Morillas-Espejo, F. & Martinez-Martin, E. Sign4all: a Spanish Sign Language dataset. Science Data Bank, https://doi.org/10.57760/sciencedb.28304 (2025).

44. Dosovitskiy, A. et al. An image is worth 16 × 16 words: Transformers for image recognition at scale. arXiv:2010.11929 (2021).

45. Azure Kinect Sensor SDK official documentation. https://microsoft.github.io/Azure-Kinect-Sensor-SDK/master/index.html Accessed: February 2025.

46. Gorordo, I. PyKinectAzure GitHub page. https://github.com/ibaiGorordo/pyKinectAzure?tab=readme-ov-file Accessed: February 2025 (2020).

47. Joo, H. et al. Panoptic studio: A massively multiview system for social interaction capture. arXiv:1612.03153 (2016).

48. Athitsos, V. et al. The American sign language lexicon video dataset. 2008 IEEE Computer Society Conference on Computer Vision and Pattern Recognition Workshops, 1–8, https://doi.org/10.1109/CVPRW.2008.4563181 (2008).

49. Mavi, A. & Dikle, Z. A new 27 class sign language dataset collected from 173 individuals. arXiv:2203.03859 (2022).

50. Albanie, S. et al. BBC-Oxford British Sign Language dataset. arXiv:2111.03635 (2021).

51. Efthimiou, E. et al. Sign language recognition, generation, and modelling: A research effort with applications in deaf communication. In Stephanidis, C. (ed.) Universal Access in Human-Computer Interaction. Addressing Diversity, 21–30 (Springer Berlin Heidelberg, Berlin, Heidelberg, 2009).

52. Bhatia, P. & Wadhawan, A. Deep learning-based sign language recognition system for static signs. Neural Computing and Applications, https://doi.org/10.1007/s00521-019-04691-y (2021).

53. Chai, X., Wang, H. & Chen, X. The DEVISIGN large vocabulary of Chinese sign language database and baseline evaluations (2014).

54. Radakovic, M. et al. The Serbian sign language alphabet: A unique authentic dataset of letter sign gestures. Mathematics 12, 525, https://doi.org/10.3390/math12040525 (2024).

55. Kapitanov, A., Karina, K., Nagaev, A. & Elizaveta, P. Slovo: Russian Sign Language Dataset, 63–73 (Springer Nature Switzerland, 2023).

56. Azure Kinect DK hardware specifications. https://learn.microsoft.com/en-us/previous-versions/azure/kinect-dk/hardware-specification#depth-camera-supported-operating-modes Accessed: February 2025 (2021).

Acknowledgements

This work has been partially funded by a PhD grant (reference UAFPU21-78) from the University of Alicante (Spain). In addition, this work has been funded by the Spanish State Research Agency (AEI) and ERDF/EU under grant GEMELIA PID2024-161711OB-I00.

Author information

Contributions

F.M.E. and E.M.M. defined the vocabulary; F.M.E. recorded, filtered and processed the data and performed the technical validation; E.M.M. supervised the experiments. All authors reviewed the manuscript.

Ethics declarations

Competing interests

The authors declare no competing interests.

Additional information

Publisher’s note Springer Nature remains neutral with regard to jurisdictional claims in published maps and institutional affiliations.

Rights and permissions

Open Access This article is licensed under a Creative Commons Attribution-NonCommercial-NoDerivatives 4.0 International License, which permits any non-commercial use, sharing, distribution and reproduction in any medium or format, as long as you give appropriate credit to the original author(s) and the source, provide a link to the Creative Commons licence, and indicate if you modified the licensed material. You do not have permission under this licence to share adapted material derived from this article or parts of it. The images or other third party material in this article are included in the article’s Creative Commons licence, unless indicated otherwise in a credit line to the material. If material is not included in the article’s Creative Commons licence and your intended use is not permitted by statutory regulation or exceeds the permitted use, you will need to obtain permission directly from the copyright holder. To view a copy of this licence, visit http://creativecommons.org/licenses/by-nc-nd/4.0/.

About this article

Cite this article

Morillas-Espejo, F., Martinez-Martin, E. Sign4all: a Spanish Sign Language dataset. Sci Data (2026). https://doi.org/10.1038/s41597-026-06872-6

DOI: https://doi.org/10.1038/s41597-026-06872-6