Abstract

The grasshopper optimization algorithm (GOA), proposed in 2017, mimics the behavior of grasshopper swarms in nature to solve optimization problems. The basic GOA does not consider the influence of the gravity force on the updated position of each grasshopper, which possibly slows its convergence. To address this, this paper proposes an improved GOA (IGOA) with two ways of updating the position of each grasshopper. In the first, the gravity force is introduced into the position update of the basic GOA. In the second, a velocity is introduced, and the new position is obtained as the sum of the current position and the velocity. Each grasshopper then chooses between the two update rules according to a probability. IGOA is first tested on 23 classical benchmark functions and is then combined with a BP neural network, whose parameters it optimizes, to build the prediction model IGOA-BPNN for predicting the closing prices of the Shanghai Stock Exchange Index and the air quality index (AQI) of Taiyuan, Shanxi Province. The experimental results show that IGOA is superior to the compared algorithms in terms of the average values and that IGOA-BPNN has the smallest prediction errors. Therefore, the proposed IGOA is an effective and efficient algorithm for optimization.

Introduction

Depending on whether constraint conditions exist, optimization models are divided into unconstrained and constrained optimization models, which arise widely in computer science [1], artificial intelligence [2], pattern recognition [3], energy consumption [4], truss structural systems [5], engineering [6], nonlinear time series [7], and so on.

Recently proposed swarm intelligence algorithms have been applied to solve optimization models arising from different practical problems. The genetic algorithm (GA) by Holland in 1992 [8] and particle swarm optimization (PSO) by Eberhart and Kennedy in 1995 [9] inspired researchers to propose many other swarm intelligence algorithms, such as the Moth-flame optimization algorithm (MFO) [6], Multi-verse optimizer (MVO) [10], Sine cosine algorithm (SCA) [11], Whale optimization algorithm (WOA) [12], Grey wolf optimizer (GWO) [13], Bird mating optimizer (BMO) [14], Harris Hawks Optimizer (HHO) [15], Grasshopper Optimization Algorithm (GOA) [16], Dragonfly Algorithm (DA) [17], Salp Swarm Algorithm (SSA) [18], Arithmetic Optimization Algorithm (AOA) [19] and Aquila Optimizer (AO) [20]. These algorithms have an exploration phase and an exploitation phase. Depending on the behavior it simulates, each swarm intelligence algorithm has its own characteristic way of updating individuals and its own application background. Benchmark functions are generally used to test the performance of these algorithms. Experimental results show that no single swarm intelligence algorithm can solve all optimization problems.

Machine learning has been used to solve prediction and classification problems. For example, Support Vector Regression (SVR) and a Long Short-Term Memory (LSTM) based deep learning model were combined to predict AQI values accurately, which helps in planning a metropolitan city for sustainable development [21]. An LSTM recurrent neural network was also used to perform stock market prediction [22]. However, the randomness of the parameters of machine learning models leads to unstable prediction and classification results: the better suited the parameters are, the better the results. Swarm intelligence algorithms can be used to find suitable parameters, since optimizing the parameters of a neural network with a swarm intelligence algorithm is itself an optimization problem. For example, SCA and GA were used to optimize the parameters of a BP neural network for predicting the direction of stock market indices [23, 24]; an improved Exponential Decreasing Inertia Weight-Particle Swarm Optimization Algorithm was used to optimize the parameters of a generalized radial basis function neural network with the AdaBoost algorithm for stock market prediction [25]; a least squares support vector machine (LSSVM) with parameters optimized by the Bat algorithm (BA) was used to forecast the air quality index (AQI) [26]; and MVO and PSO were combined to optimize the parameters of an Elman neural network for classifying endometrial carcinoma from gene expression [27]. Swarm intelligence algorithms have also been hybridized with artificial neural networks to predict the carbonation depth of recycled aggregate concrete [28], and swarm intelligence based techniques have been employed for sparse signal reconstruction [29].

In particular, GOA, proposed in 2017, mimics the behaviour of grasshopper swarms in nature to solve optimization problems [16]. In [16], GOA was first evaluated qualitatively and quantitatively on a set of test problems including CEC2005 and was then applied to find the optimal shape of a 52-bar truss, a 3-bar truss, and a cantilever beam. Since then, GOA has been improved and applied in different fields. A novel periodic learning ontology matching model based on interactive GOA was proposed in [30]; it considers periodic feedback from users during the optimization process, uses a roulette wheel method to select the most problematic candidate mappings to present to users, and applies a reward and punishment mechanism to candidate mappings to propagate the user feedback. The model was evaluated on two interactive tracks from the Ontology Alignment Evaluation Initiative. In [31], a dynamic population quantum binary grasshopper optimization algorithm based on mutual information and rough set theory was proposed for feature selection and tested on twenty UCI datasets. In [32], GOA was employed to design linear phase finite impulse response (FIR) low pass, high pass, band pass, and band stop filters. In [33], GOA was first used to find optimal parameters for fusing low-frequency components, and the Kirsch compass operator was then used to create an efficient rule for fusing high-frequency components, which allows the fused image to preserve much of the detail transferred from the input images. In [34], the main controlling parameter of GOA was replaced by a new adaptive function to enhance the exploration and exploitation capability; the resulting improved GOA was used to optimize the hyperparameters of support vector regression while simultaneously performing feature selection, and was tested on four datasets. In [35], the theoretical aspects of GOA were presented through its modified, hybridized, binary, chaotic, and multi-objective versions, together with its application areas, such as test functions, machine learning, engineering, image processing, networks, and parameter control.

Neither the basic GOA nor its improvements consider the influence of the gravity force and the velocity, which possibly slows the convergence of GOA. Based on this observation, two ways of updating the position of each grasshopper are proposed in this paper. In the first, the gravity force is introduced into the position update of the basic GOA. In the second, which is inspired by PSO, a velocity is introduced and the new position is obtained as the sum of the current position and the velocity. Each grasshopper then chooses between the two update rules according to a probability. This yields the improved GOA (IGOA). On the 23 classical benchmark functions, IGOA is superior to the compared algorithms GOA, PSO, MFO, SCA, SSA, MVO and DA in terms of the average values and the convergence speed. IGOA is then used to optimize the parameters of a BP neural network for predicting the closing prices of the Shanghai Stock Exchange Index and the air quality index (AQI) of Taiyuan, which gives the prediction model IGOA-BPNN. The experimental results show that IGOA has the potential to optimize the parameters of a BP neural network for prediction. Therefore, the proposed IGOA is an effective and efficient algorithm for optimization.

The paper is organized as follows. The original GOA and the improved GOA are introduced in “Improved grasshopper optimization algorithm”. Section “The function optimization” compares IGOA with GOA, PSO, MFO, SCA, SSA, MVO and DA on 23 benchmark functions. In “Applications”, IGOA is used to optimize the parameters of a BP neural network (BPNN) for predicting the closing prices of the Shanghai Stock Exchange Index and the air quality index (AQI) of Taiyuan, Shanxi Province. Conclusions and discussion are presented in “Conclusions and discussion”.

Improved grasshopper optimization algorithm

The basic grasshopper optimization algorithm

GOA, proposed in 2017, mimics the behaviour of grasshopper swarms in nature to solve optimization problems [16]. The mathematical model simulating the behaviour of grasshopper swarms is as follows [36]:

\[ X_{i} = S_{i} + G_{i} + A_{i} \tag{1} \]

where \(X_{i}\), \(S_{i}\), \(G_{i}\) and \(A_{i}\) denote the position, the social interaction, the gravity force and the wind advection of the ith grasshopper, respectively. To account for the randomness of the grasshoppers' positions, Eq. (1) can be written as \(X_{i} = r_{1} S_{i} + r_{2} G_{i} + r_{3} A_{i}\), where \(r_{1}, r_{2}, r_{3}\) are random numbers in the interval [0, 1]. \(S_{i}\) is defined by

\[ S_{i} = \sum_{j = 1,\, j \ne i}^{N} s\left( d_{ij} \right) \hat{d}_{ij} \tag{2} \]

where \(N\) denotes the number of grasshoppers in the swarm, \(d_{ij} = \left| X_{j} - X_{i} \right|\) is the distance between the ith grasshopper and the jth grasshopper, \(\hat{d}_{ij} = \frac{X_{j} - X_{i}}{d_{ij}}\) is the unit vector from the ith grasshopper to the jth grasshopper, and the social force \(s(r)\) is defined by

\[ s(r) = f e^{-r/l} - e^{-r} \tag{3} \]

where \(f\) indicates the intensity of attraction and \(l\) is the attractive length scale. In Ref. [16], \(l = 1.5\) and \(f = 0.5\).

Let \(d\) be the distance between two grasshoppers. The distance \(d=2.079\) is called the comfort zone or comfortable distance, where there is neither attraction nor repulsion between two grasshoppers. When \(d<2.079\), there is repulsion between two grasshoppers; when \(d>2.079\), there is attraction. In particular, as \(d\) increases from 2.079 to nearly 4, \(s\) increases; when \(d > 4\), \(s\) decreases; and when \(d > 10\), \(s\) tends to 0 and no longer has any effect. Therefore, the distance between grasshoppers is mapped into the interval [1, 4]. Thus the space between two grasshoppers is divided into the repulsion region, the comfort zone, and the attraction region.
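
As a quick numerical check of the distances quoted above, the following minimal Python sketch evaluates the social force of the original GOA, \(s(r) = f e^{-r/l} - e^{-r}\), with \(f = 0.5\) and \(l = 1.5\). The function name `social_force` and the closed-form root used for the comfortable distance are illustrative choices, not part of the paper.

```python
import math

def social_force(r, f=0.5, l=1.5):
    """Social force s(r) = f * exp(-r / l) - exp(-r)."""
    return f * math.exp(-r / l) - math.exp(-r)

# Setting s(r) = 0 gives r = l * ln(1 / f) / (l - 1),
# i.e. 3 * ln 2 for f = 0.5 and l = 1.5.
comfort = 1.5 * math.log(1 / 0.5) / (1.5 - 1.0)

for r in (1.0, comfort, 3.0, 10.0):
    print(f"d = {r:6.3f}   s(d) = {social_force(r):+.6f}")
```

With these parameters the sign of \(s\) changes from negative (repulsion) to positive (attraction) at \(d \approx 2.079\), and \(s\) is already close to zero at \(d = 10\).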

\(G_{i}\) in Eq. (1) is defined by

\[ G_{i} = - g\,\hat{e}_{i} \tag{4} \]

where \(g\) is the gravitational constant and \(\hat{e}_{i}\) is a unit vector towards the center of the earth.

\(A_{i}\) in Eq. (1) is defined by

\[ A_{i} = u\,\hat{w}_{i} \tag{5} \]

where \(u\) is a constant drift and \(\hat{w}_{i}\) is a unit vector in the direction of the wind.

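Substituting Eqs. (2)–(5) for \(S\), \(G\) and \(A\) in Eq. (1) gives

\[ X_{i} = \sum_{j = 1,\, j \ne i}^{N} s\left( \left| X_{j} - X_{i} \right| \right) \frac{X_{j} - X_{i}}{d_{ij}} - g\,\hat{e}_{i} + u\,\hat{w}_{i} \tag{6} \]

However, the grasshoppers quickly reach the comfort zone and the swarm does not converge to a specified point. Therefore, Eq. (6) cannot be used to solve the optimization model directly.

To solve the optimization model, Eq. (6) is modified to

\[ X_{i}^{d} = c\left( \sum_{j = 1,\, j \ne i}^{N} c\,\frac{ub_{d} - lb_{d}}{2}\, s\left( \left| X_{j}^{d} - X_{i}^{d} \right| \right) \frac{X_{j} - X_{i}}{d_{ij}} \right) + \hat{T}_{d} \tag{7} \]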

where \(ub_{d}\) and \(lb_{d}\) are the upper and lower bounds of the dth component of the ith grasshopper, \(\hat{T}_{d}\) is the \(d\)th component of the optimal grasshopper \(\hat{T}\), and the adaptive parameter \(c\) is a decreasing coefficient that shrinks the comfort zone, repulsion zone, and attraction zone. In Eq. (7), the gravity force is not considered, that is, there is no \(G\) component, and the wind direction (\(A\) component) is assumed to always point towards the target \(\hat{T}_{d}\).
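
The position update of the basic GOA in Eq. (7) can be sketched in Python as follows. This is a minimal illustration under stated assumptions rather than the authors' implementation: it assumes the social force \(s(r) = f e^{-r/l} - e^{-r}\) of the original GOA, omits the mapping of distances into a fixed interval, and clips out-of-bound positions to the bounds. The names `goa_update` and `eps` are hypothetical.

```python
import numpy as np

def social_force(r, f=0.5, l=1.5):
    """Social force of the original GOA, applied element-wise."""
    return f * np.exp(-r / l) - np.exp(-r)

def goa_update(X, target, c, lb, ub, eps=1e-12):
    """One update of the whole swarm in the spirit of Eq. (7).

    X      : (N, D) array of current positions
    target : (D,) best position found so far (T hat)
    c      : current value of the shrinking coefficient
    lb, ub : (D,) lower and upper bounds
    """
    N, D = X.shape
    X_new = np.empty_like(X)
    for i in range(N):
        social = np.zeros(D)
        for j in range(N):
            if j == i:
                continue
            diff = X[j] - X[i]
            dist = np.linalg.norm(diff)      # d_ij
            unit = diff / (dist + eps)       # unit vector from i to j
            # inner c * (ub - lb) / 2 * s(|x_j^d - x_i^d|) * unit vector
            social += c * (ub - lb) / 2.0 * social_force(np.abs(diff)) * unit
        X_new[i] = c * social + target       # outer c and target term
    # Assumption: out-of-bound components are clipped to the bounds.
    return np.clip(X_new, lb, ub)

rng = np.random.default_rng(0)
lb, ub = np.full(3, -5.0), np.full(3, 5.0)
X = rng.uniform(lb, ub, size=(5, 3))
target = np.array([1.0, -2.0, 0.5])
print(goa_update(X, target, c=0.5, lb=lb, ub=ub))
```

When \(c\) approaches zero the social term vanishes and every grasshopper collapses onto the target, which is the exploitation behaviour the decreasing coefficient is meant to produce.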

To balance exploration and exploitation, the parameter \(c\) is defined by

\[ c = c_{\max} - t\,\frac{c_{\max} - c_{\min}}{T} \tag{8} \]

where \(c_{\max}\) and \(c_{\min}\) are the maximum and the minimum of the parameter \(c\), respectively, \(t\) denotes the current iteration and \(T\) denotes the maximum number of iterations. In GOA, \(c_{\max} = 1\) and \(c_{\min} = 0.000001\).
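
For illustration, the coefficient can be computed as below, assuming the linear schedule of the original GOA, \(c = c_{\max} - t (c_{\max} - c_{\min})/T\), and the values of \(c_{\max}\) and \(c_{\min}\) stated above. The function name `shrink_coefficient` is hypothetical.

```python
def shrink_coefficient(t, T, c_max=1.0, c_min=1e-6):
    """Linearly decrease c from c_max (t = 0) to c_min (t = T)."""
    return c_max - t * (c_max - c_min) / T

T = 200
for t in (1, 50, 100, 150, 200):
    print(f"t = {t:3d}   c = {shrink_coefficient(t, T):.6f}")
```

Large values of \(c\) early in the run favour exploration, while values close to \(c_{\min}\) near the end restrict the swarm to a small neighbourhood of the target.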

The improved GOA

Because the basic GOA does not consider the influence of the gravity force, the sum of the products of the gravitational constant \(g\) and the unit vectors from the ith grasshopper to the jth grasshopper is subtracted from the right-hand side of Eq. (7), which gives the new position update of the grasshopper, Eq. (9).

During the hunting process, the position of the ith grasshopper is also updated through its velocity, according to Eqs. (10) and (11),

where \(a\) is the acceleration coefficient and \(rand\) is a random number between 0 and 1.

Therefore, the ith grasshopper has two ways of updating its position, Eq. (9) and Eq. (11). According to the selection probability \(p\), the position of the ith grasshopper is updated by Eq. (12),

where \(c\) is the same as that in the basic GOA.

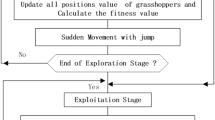

Based on the above, the GOA is improved; the resulting algorithm is denoted IGOA. The concrete steps of IGOA are as follows.

Step 1. Initialization. Initialize the grasshopper swarm \(X_{i} (i = 1,2, \ldots ,N)\), \(c_{\max}\), \(c_{\min}\), the minimum and maximum velocity, and the maximum number of iterations \(T\).

Step 2. Calculate the fitness of every grasshopper and find the optimal grasshopper \(\hat{T}\). Let \(t = 1\).

Step 3. Update the parameter \(c\) using Eq. (8).

Step 4. For every grasshopper, first map the distances between grasshoppers into the interval [1, 4], then apply the selection probability \(p\). If \(p < 0.5\), update the position of the grasshopper using Eq. (9), that is, the corresponding case of Eq. (12). Otherwise, update it using Eqs. (10) and (11), that is, the other case of Eq. (12). If the new position lies outside the bounds, reset it using the upper bound and the lower bound.

Step 5. Calculate the fitness value of every grasshopper. Update \(\hat{T}\) if there is a better grasshopper. Let \(t = t + 1\).

Step 6. Check whether the termination condition is satisfied. If yes, return the optimal grasshopper \(\hat{T}\). Otherwise, go to Step 3.
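
The control flow of Steps 1 to 6 can be sketched in Python as below. This is only a skeleton under several assumptions: the two position updates are passed in as callables because the gravity-based and velocity-based rules are not reproduced here, `placeholder_rule` is a hypothetical stand-in and not one of the paper's update equations, \(p\) is assumed to be a uniform random number drawn for each grasshopper, the coefficient \(c\) is assumed to decrease linearly, the velocity bounds of Step 1 are not modelled, and the termination condition is assumed to be the iteration limit.

```python
import numpy as np

def igoa_skeleton(fitness, lb, ub, rule_a, rule_b,
                  n=30, max_iter=200, c_max=1.0, c_min=1e-6, seed=0):
    rng = np.random.default_rng(seed)
    lb, ub = np.asarray(lb, dtype=float), np.asarray(ub, dtype=float)
    # Step 1: random initial swarm inside the bounds.
    X = rng.uniform(lb, ub, size=(n, lb.size))
    # Step 2: evaluate the swarm and remember the best grasshopper.
    fit = np.array([fitness(x) for x in X])
    best, best_fit = X[fit.argmin()].copy(), float(fit.min())
    for t in range(1, max_iter + 1):
        # Step 3: linearly decreasing coefficient c (assumed schedule).
        c = c_max - t * (c_max - c_min) / max_iter
        X_old = X.copy()
        for i in range(n):
            # Step 4: choose one of the two update rules via p, then
            # clip the new position to the bounds.
            rule = rule_a if rng.random() < 0.5 else rule_b
            X[i] = np.clip(rule(i, X_old, best, c, rng), lb, ub)
        # Step 5: re-evaluate and update the best grasshopper.
        fit = np.array([fitness(x) for x in X])
        if fit.min() < best_fit:
            best, best_fit = X[fit.argmin()].copy(), float(fit.min())
    # Step 6: stop at the iteration limit and return the best grasshopper.
    return best, best_fit

def placeholder_rule(i, X, best, c, rng):
    # Hypothetical stand-in, NOT the paper's gravity or velocity update:
    # a random step around the best position, scaled by c.
    return best + c * rng.normal(size=best.size) * (X[i] - best)

sphere = lambda x: float(np.sum(x ** 2))
best, value = igoa_skeleton(sphere, [-10.0] * 3, [10.0] * 3,
                            placeholder_rule, placeholder_rule,
                            n=10, max_iter=50)
print(best, value)
```

Passing the two rules as arguments keeps the selection logic of Step 4 separate from the update equations, so either rule can be replaced without touching the loop.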

The function optimization

In this section, we use 23 benchmark functions to test the performance of the proposed IGOA by comparing it with GOA, PSO, MFO, SCA, SSA, MVO and DA.

23 benchmark functions

In this subsection, the 23 benchmark functions are taken from Ref. [15]. Tables 1, 2 and 3 list the expression, the dimension, the range and the minimum value of the seven unimodal functions \(F_{1}(x)\)–\(F_{7}(x)\) with \(n\) dimensions (Table 1), the six multimodal functions \(F_{8}(x)\)–\(F_{13}(x)\) with \(n\) dimensions (Table 2) and the ten functions \(F_{14}(x)\)–\(F_{23}(x)\) with fixed dimension (Table 3), respectively. 3D plots of some of these 23 benchmark functions are shown in Fig. 1.
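
As an illustration of what such benchmark functions look like in code, the sketch below implements one unimodal and one multimodal function. It assumes that the numbering follows the widely used 23-function suite, in which \(F_{1}\) is the sphere function and \(F_{9}\) is the Rastrigin function; the tables themselves are not reproduced here, and the dimension 30 in the example is likewise an assumption.

```python
import numpy as np

def f1_sphere(x):
    """Unimodal sphere function: sum of squares, minimum 0 at the origin."""
    x = np.asarray(x, dtype=float)
    return float(np.sum(x ** 2))

def f9_rastrigin(x):
    """Multimodal Rastrigin function, minimum 0 at the origin."""
    x = np.asarray(x, dtype=float)
    return float(np.sum(x ** 2 - 10.0 * np.cos(2.0 * np.pi * x) + 10.0))

origin = np.zeros(30)
print(f1_sphere(origin), f9_rastrigin(origin))   # prints 0.0 0.0
```

Unimodal functions such as the sphere mainly test exploitation, whereas the many local minima of functions such as Rastrigin test exploration.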

3D version of the benchmark functions \(F_{{1}} (x),F_{3} (x),F_{8} (x),F_{9} (x),F_{14} (x),F_{{{18}}} (x)\) (using Matlab R2018a and www.mathworks.com).

The setup of the parameters

To verify the validity of the proposed IGOA, we choose GOA, PSO, MFO, SCA, SSA, MVO and DA as the comparison algorithms. In the experiments, the parameters of these eight algorithms are set as shown in Table 4.

Experimental results

IGOA, GOA, PSO, MFO, SCA, SSA, MVO and DA are each run 30 times independently. The average values and the standard deviations of the optimal function values of the 23 benchmark functions are shown in Table 5. In this section, we first compare IGOA with GOA and PSO and then compare IGOA with MFO, SCA, SSA, MVO and DA.

IGOA vs. (GOA, PSO)

For the unimodal functions \(F_{1}(x)\)–\(F_{5}(x)\) and \(F_{7}(x)\), the average optimal values obtained by IGOA are 4.9537E−03, 2.9389E−01, 3.5429E−02, 1.9125E−02, 2.9610E+01 and 1.6040E−02, respectively, which are all less than those obtained by GOA and PSO. For the unimodal function \(F_{6}(x)\), however, the average optimal value obtained by IGOA is 2.0048E+00, which is less than that obtained by GOA and greater than that obtained by PSO.

For the multimodal functions \(F_{9}(x)\)–\(F_{13}(x)\), the average optimal values obtained by IGOA are 4.2445E−01, 5.2213E−02, 2.9202E−04, 2.2805E−01 and 1.5727E+00, respectively, which are all less than those obtained by GOA and PSO. For the multimodal function \(F_{8}(x)\), however, the average optimal value obtained by IGOA is −7.1112E+03, which is greater than that obtained by GOA and less than that obtained by PSO.

For the functions \(F_{14}(x)\) and \(F_{20}(x)\), the average optimal values obtained by IGOA are 9.9816E−01 and −3.2475E+00, respectively; their closeness to the optimal values 9.9800E−01 and −3.32 is lower than that of GOA and higher than that of PSO. For the functions \(F_{16}(x)\) and \(F_{17}(x)\), the average optimal values obtained by IGOA are −1.0313E+00 and 3.9851E−01; their closeness to the optimal values −1.0316E+00 and 0.3978 is lower than that of both GOA and PSO. For the functions \(F_{18}(x)\) and \(F_{19}(x)\), the average optimal values obtained by IGOA are 3.0152E+00 and −3.8583E+00; their closeness to the optimal values 3 and −3.86 is lower than that of PSO and higher than that of GOA. For the functions \(F_{15}(x)\) and \(F_{21}(x)\)–\(F_{23}(x)\), the average optimal values obtained by IGOA are 1.9673E−03, −8.9507E+00, −9.4709E+00 and −9.3486E+00; their closeness to the optimal values 0.00030, −10.1532, −10.4028 and −10.5363 is higher than that of GOA and PSO.

Figure 2 shows the convergence curves of IGOA, GOA and PSO over the first 200 iterations on the benchmark functions \(F_{1}(x)\), \(F_{3}(x)\)–\(F_{5}(x)\), \(F_{10}(x)\)–\(F_{11}(x)\), \(F_{13}(x)\) and \(F_{22}(x)\)–\(F_{23}(x)\). It can be seen from Fig. 2 that IGOA converges faster than GOA and PSO. Table 5 and Fig. 2 show that IGOA outperforms GOA and PSO.

IGOA vs. (MFO, SCA, SSA, MVO, DA)

For the unimodal functions \(F_{1}(x)\) and \(F_{6}(x)\), the average optimal values obtained by IGOA are 4.9537E−03 and 2.0048E+00, which are less than those obtained by MFO, SCA, MVO and DA and greater than those obtained by SSA. For the unimodal function \(F_{2}(x)\), the average optimal value obtained by IGOA is 2.9389E−01, which is less than that obtained by MFO, SSA, MVO and DA and greater than that obtained by SCA. For the unimodal functions \(F_{3}(x)\)–\(F_{5}(x)\) and \(F_{7}(x)\), the average optimal values obtained by IGOA are 3.5429E−02, 1.9125E−02, 2.9610E+01 and 1.6040E−02, which are less than those obtained by MFO, SCA, SSA, MVO and DA.

For the multimodal functions \(F_{9}(x)\)–\(F_{13}(x)\), the average optimal values obtained by IGOA are 4.2445E−01, 5.2213E−02, 2.9202E−04, 2.2805E−01 and 1.5727E+00, which are all less than those obtained by MFO, SCA, SSA, MVO and DA. For the multimodal function \(F_{8}(x)\), the average optimal value obtained by IGOA is −7.1112E+03, which is greater than that obtained by SCA, SSA, MVO and DA and less than that obtained by MFO.

For the function \(F_{14}(x)\), the average optimal value obtained by IGOA is 9.9816E−01; its closeness to the optimal value 9.9800E−01 is lower than that of MVO and higher than that of MFO, SCA, SSA and DA. For the function \(F_{15}(x)\), the average optimal value obtained by IGOA is 1.9673E−03; its closeness to the optimal value 0.00030 is lower than that of MVO and DA and higher than that of MFO, SCA and SSA. For the function \(F_{16}(x)\), the average optimal value obtained by IGOA is −1.0313E+00; its closeness to the optimal value −1.0316E+00 is lower than that of MFO, SCA, SSA, MVO and DA. For the function \(F_{17}(x)\), the average optimal value obtained by IGOA is 3.9851E−01; its closeness to the optimal value 0.398 is lower than that of MFO, SSA, MVO and DA and higher than that of SCA. For the function \(F_{18}(x)\), the average optimal value obtained by IGOA is 3.0152E+00; its closeness to the optimal value 3 is lower than that of MFO, SCA, SSA, MVO and DA. For the function \(F_{20}(x)\), the average optimal value obtained by IGOA is −3.2475E+00; its closeness to the optimal value −3.32 is lower than that of MVO and higher than that of MFO, SCA, SSA and DA. For the functions \(F_{19}(x)\) and \(F_{21}(x)\)–\(F_{23}(x)\), the average optimal values obtained by IGOA are −3.8583E+00, −8.9507E+00, −9.4709E+00 and −9.3486E+00, which are closer to the optimal values −3.86, −10.1532, −10.4028 and −10.5363 than those obtained by MFO, SCA, SSA, MVO and DA.

Therefore, Table 5 shows that IGOA outperforms MFO, SCA, SSA, MVO and DA overall. From the comparisons in “IGOA vs. (GOA, PSO)” and “IGOA vs. (MFO, SCA, SSA, MVO, DA)”, IGOA can be used for function optimization and is superior overall to GOA, PSO, MFO, SCA, SSA, MVO and DA. That IGOA is not the best on every function is consistent with the no free lunch theorem.

Applications

In this section, the proposed IGOA is used to optimize the weights and biases of the BP neural network, which gives the prediction model IGOA-BPNN. IGOA-BPNN is then used to predict the closing prices of the Shanghai Stock Exchange Index and the air quality index (AQI) of Taiyuan, Shanxi Province.

Predicted model

The IGOA proposed in this paper is used to optimize the connection weights and biases of the BP neural network, and the prediction model IGOA-BPNN is established. The concrete steps of IGOA-BPNN are as follows:

Step 1: Input the data and divide them into the training set and the testing set. Normalize the training set and the testing set.

Step 2: Initialize the size of the grasshopper swarm, \(c_{\max}\), \(c_{\min}\), the minimum and maximum velocity, the maximum number of iterations of IGOA, the number of nodes in the hidden layer of the BP neural network and the maximum number of iterations of the BP neural network. Choose the Mean Square Error (MSE)

\[ \mathrm{MSE} = \frac{1}{n}\sum_{s = 1}^{n} \left( y_{s} - t_{s} \right)^{2} \]

as the fitness function of IGOA, where \(y_{s}\) and \(t_{s}\) are the predicted value and the target value of the \(s\)th sample and \(n\) is the number of samples. Let \(t = 1\).

Step 3: Initialize the grasshopper swarm.

Step 4: Map every grasshopper to the connection weights between the input layer and the hidden layer, the biases of the hidden layer, the connection weights between the hidden layer and the output layer, and the biases of the output layer of the BP neural network. Then train the BP neural network and obtain the predicted values of the training set. From the predicted values and the target values, calculate the fitness value of this grasshopper. Find the optimal grasshopper.

Step 5: Update the grasshopper swarm using IGOA. Then let \(t = t + 1\).

Step 6: If the termination condition is satisfied, go to Step 7; otherwise, return to Step 4.

Step 7: Output the optimal grasshopper. Map the optimal grasshopper to the connection weights between the input layer and the hidden layer, the biases of the hidden layer, the connection weights between the hidden layer and the output layer, and the biases of the output layer of the BP neural network. Then train the BP neural network and obtain the predicted values of the training set, which gives the trained BP neural network. Finally, input the testing set into the trained BP neural network and obtain the predicted values of the testing set.

The flowchart of IGOA-BPNN is shown in Fig. 3.
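
The mapping from a grasshopper to the network parameters, and the resulting MSE fitness, can be sketched as follows. This is a simplified illustration with several assumptions: a single hidden layer with a tanh activation and a linear output layer, no backpropagation training after decoding (the decoded network is evaluated directly), and hypothetical helper names `position_size`, `decode`, `forward` and `fitness`.

```python
import numpy as np

def position_size(n_in, n_hidden, n_out):
    """Length of the flat vector that encodes all weights and biases."""
    return n_in * n_hidden + n_hidden + n_hidden * n_out + n_out

def decode(vec, n_in, n_hidden, n_out):
    """Split a flat position vector into (W1, b1, W2, b2)."""
    i = 0
    W1 = vec[i:i + n_in * n_hidden].reshape(n_in, n_hidden)
    i += n_in * n_hidden
    b1 = vec[i:i + n_hidden]
    i += n_hidden
    W2 = vec[i:i + n_hidden * n_out].reshape(n_hidden, n_out)
    i += n_hidden * n_out
    b2 = vec[i:i + n_out]
    return W1, b1, W2, b2

def forward(X, W1, b1, W2, b2):
    H = np.tanh(X @ W1 + b1)     # hidden layer (tanh is an assumption)
    return H @ W2 + b2           # linear output layer (assumption)

def fitness(vec, X, t, n_hidden):
    """MSE between network outputs and targets for one grasshopper."""
    n_in, n_out = X.shape[1], t.shape[1]
    y = forward(X, *decode(vec, n_in, n_hidden, n_out))
    return float(np.mean((y - t) ** 2))

# Synthetic data purely for demonstration: 20 samples, 7 features, 1 target.
rng = np.random.default_rng(0)
X_demo, t_demo = rng.random((20, 7)), rng.random((20, 1))
dim = position_size(7, 5, 1)
grasshopper = rng.uniform(-1.0, 1.0, size=dim)
print(dim, fitness(grasshopper, X_demo, t_demo, n_hidden=5))
```

A fitness function of this form can be handed to any swarm optimizer that minimizes a function of a real-valued vector, which is what allows the same BP neural network to be paired with each of the compared algorithms.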

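In this section, MSE, the Mean Absolute Error (MAE)

\[ \mathrm{MAE} = \frac{1}{n}\sum_{s = 1}^{n} \left| y_{s} - t_{s} \right| \]

the Root Mean Square Error (RMSE)

\[ \mathrm{RMSE} = \sqrt{\frac{1}{n}\sum_{s = 1}^{n} \left( y_{s} - t_{s} \right)^{2}} \]

and the Mean Absolute Percentage Error (MAPE)

\[ \mathrm{MAPE} = \frac{1}{n}\sum_{s = 1}^{n} \left| \frac{y_{s} - t_{s}}{t_{s}} \right| \times 100\% \]

are taken as the evaluation criteria of the model, where \(y_{s}\) and \(t_{s}\) are the predicted value and the target value of the \(s\)th sample and \(n\) is the number of samples.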
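
The four criteria can be computed as in the sketch below, which assumes their standard definitions (MAPE expressed in percent and requiring nonzero targets). The function names and the three-sample example are illustrative only.

```python
import numpy as np

def mse(y, t):
    """Mean square error."""
    return float(np.mean((y - t) ** 2))

def mae(y, t):
    """Mean absolute error."""
    return float(np.mean(np.abs(y - t)))

def rmse(y, t):
    """Root mean square error, the square root of the MSE."""
    return float(np.sqrt(mse(y, t)))

def mape(y, t):
    """Mean absolute percentage error in percent (targets must be nonzero)."""
    return float(np.mean(np.abs((y - t) / t)) * 100.0)

t = np.array([100.0, 200.0, 400.0])   # target values
y = np.array([110.0, 190.0, 400.0])   # predicted values
print(mse(y, t), mae(y, t), rmse(y, t), mape(y, t))
```

In this toy example the absolute errors are 10, 10 and 0, so the MAE is about 6.67, the MSE about 66.67 and the MAPE 5%.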

Prediction of Shanghai Stock Index

Data source

In this subsection, the data of the Shanghai Stock Index 000001 from December 19, 1990 to June 28, 2021, downloaded from the website http://quotes.money.163.com/trade/lsjysj_zhishu_000001.html, cover 7459 days. Figure 4 shows the trend of the closing prices over these 7459 days. The features of every data sample consist of the closing price, the highest price, the lowest price, the opening price, the previous closing, the rise and fall amount, the rise and fall range, the trading volume and the transaction amount. In this paper, we choose the closing price, the highest price, the lowest price, the opening price, the previous closing, the trading volume and the transaction amount as the features of the samples and use the features of the current day to predict the closing price of the next day. The 7383 data samples from December 19, 1990 to March 5, 2021 are taken as the training set and the remaining 75 data samples from March 6, 2021 to June 27, 2021 are taken as the testing set.
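
The construction of the samples described above can be sketched as follows. The sketch uses synthetic random numbers in place of the real index data, and it assumes a chronological split and min-max normalization with statistics taken from the training set only; the paper does not state the normalization method, and the function names are hypothetical.

```python
import numpy as np

def make_next_day_samples(features, close):
    """Pair the features of day k with the closing price of day k + 1."""
    return features[:-1], close[1:]

def split_and_normalize(X, y, n_test):
    """Chronological split, then min-max scaling of the inputs."""
    X_tr, X_te = X[:-n_test], X[-n_test:]
    y_tr, y_te = y[:-n_test], y[-n_test:]
    lo, hi = X_tr.min(axis=0), X_tr.max(axis=0)   # training statistics only
    span = np.where(hi > lo, hi - lo, 1.0)        # guard constant columns
    return (X_tr - lo) / span, (X_te - lo) / span, y_tr, y_te

# Synthetic stand-in for 7459 trading days with 7 features per day.
rng = np.random.default_rng(0)
features = rng.random((7459, 7))
close = features[:, 0]                            # pretend column 0 is the close

X, y = make_next_day_samples(features, close)
X_tr, X_te, y_tr, y_te = split_and_normalize(X, y, n_test=75)
print(X.shape, X_tr.shape, X_te.shape)            # (7458, 7) (7383, 7) (75, 7)
```

Pairing each day with the following day's closing price turns 7459 days into 7458 samples, which matches the 7383 training samples plus 75 testing samples used here.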

Experimental results

In this subsection, we use the prediction model IGOA-BPNN to predict the closing price of Shanghai Stock Index 000001. To verify the performance of IGOA-BPNN, GOA, PSO, MFO, SCA, SSA, MVO and DA are each combined with the BP neural network (BPNN) to build the comparison models GOA-BPNN, PSO-BPNN, MFO-BPNN, SCA-BPNN, SSA-BPNN, MVO-BPNN and DA-BPNN, respectively. Meanwhile, the plain BPNN is also used as a comparison model.

For BPNN and for the BPNN part of the models IGOA-BPNN, GOA-BPNN, PSO-BPNN, MFO-BPNN, SCA-BPNN, SSA-BPNN, MVO-BPNN and DA-BPNN, the maximum number of iterations is set to 5000 and the momentum factor to 0.95. IGOA-BPNN, GOA-BPNN, PSO-BPNN, MFO-BPNN, SCA-BPNN, SSA-BPNN, MVO-BPNN, DA-BPNN and BPNN are each run independently 30 times, and the MSE, MAE, RMSE and MAPE of the average predicted values of the 75 testing samples are shown in Table 6.

Figure 5 compares the actual values with the values predicted by IGOA-BPNN, GOA-BPNN, PSO-BPNN and BPNN for the 75 testing samples.

From Table 6, the prediction errors MSE = 828.95, MAE = 21.70, RMSE = 3.32, MAPE = 0.62% obtained by IGOA-BPNN are less than those obtained by GOA-BPNN, PSO-BPNN, MFO-BPNN, SCA-BPNN, SSA-BPNN, MVO-BPNN, DA-BPNN and BPNN, which shows that the proposed IGOA is more suitable for optimizing the parameters of the BP neural network for predicting Shanghai Stock Index 000001. Table 6 also shows that the BP neural network optimized by swarm intelligence algorithms outperforms the plain BP neural network.

Prediction of air quality index in Taiyuan, Shanxi

Data source

The 1273 days of data from January 1, 2018 to June 26, 2021 used in this subsection are obtained from the website https://www.aqistudy.cn/historydata/monthdata.php?city=%e5%a4%aa%e5%8e%9f. The features of every data sample consist of the current air quality index (AQI), the quality grade, PM2.5, PM10, SO2, CO, NO2 and O3. The relation between AQI and quality grade is shown in Table 7. After deleting the samples with missing data, 1264 data samples remain. Figure 6 shows the number of days and the proportions of excellent, good, light pollution, moderate pollution, heavy pollution and serious pollution in these 1264 days. Figure 7 shows the trend of AQI over these 1264 days.

In this subsection, we use the current AQI, PM2.5, PM10, SO2, CO, NO2 and O3 to predict the AQI of the next day, taking the 1241 data samples from January 1, 2018 to June 3, 2021 as the training set and the 22 data samples from June 4, 2021 to June 25, 2021 as the testing set.

Experimental results

In this subsection, the proposed IGOA-BPNN is used to predict the AQI in Taiyuan, Shanxi. As in the “Experimental results” subsection for the Shanghai Stock Index, for BPNN and for the BPNN part of the models IGOA-BPNN, GOA-BPNN, PSO-BPNN, MFO-BPNN, SCA-BPNN, SSA-BPNN, MVO-BPNN and DA-BPNN, the maximum number of iterations is set to 5000 and the momentum factor to 0.95. IGOA-BPNN, GOA-BPNN, PSO-BPNN, MFO-BPNN, SCA-BPNN, SSA-BPNN, MVO-BPNN, DA-BPNN and BPNN are each run independently 10 times, and the MSE, MAE, RMSE and MAPE of the average predicted values of the 22 testing samples are shown in Table 8. Figure 8 compares the actual values with the values predicted by IGOA-BPNN, GOA-BPNN, PSO-BPNN and BPNN for the 22 testing samples.

From Table 8, the prediction errors MSE = 134.43, MAE = 32.51, RMSE = 7.81, MAPE = 31.91% obtained by IGOA-BPNN are less than those obtained by GOA-BPNN, PSO-BPNN, MFO-BPNN, SCA-BPNN, SSA-BPNN, MVO-BPNN, DA-BPNN and BPNN, which shows that the proposed IGOA is more suitable for optimizing the parameters of the BP neural network for predicting the AQI in Taiyuan, Shanxi. Table 8 also shows that the BP neural network optimized by swarm intelligence algorithms outperforms the plain BP neural network.

Conclusions and discussion

The velocity and position update rules of PSO are introduced into GOA, which gives the improved GOA, denoted IGOA. Then 23 benchmark functions are used to verify the effectiveness of IGOA, and the experimental results show that IGOA is superior to MFO, SCA, SSA, MVO and DA. Finally, IGOA is used to optimize the connection weights and biases of the BP neural network, and the prediction model IGOA-BPNN is established. IGOA-BPNN is applied to the prediction of the Shanghai stock index and the AQI of Taiyuan, Shanxi. The results show that IGOA-BPNN is better than GOA-BPNN, PSO-BPNN, MFO-BPNN, SCA-BPNN, SSA-BPNN, MVO-BPNN, DA-BPNN and BPNN.

However, the size of the initial population of IGOA, the parameters of the BP neural network, and the choice of other machine learning methods may lead to different experimental results. Therefore, it is necessary to study combinations of swarm intelligence algorithms with different machine learning methods for building prediction models that solve practical problems.

Acknowledgements

This work was supported by the Research Project Supported by Shanxi Scholarship Council of China (No. 2020-104) and the National Sciences Foundation of China (No. 11971278).

Ethics declarations

Competing interests

The authors declare no competing interests.

Rights and permissions

Open Access This article is licensed under a Creative Commons Attribution 4.0 International License, which permits use, sharing, adaptation, distribution and reproduction in any medium or format, as long as you give appropriate credit to the original author(s) and the source, provide a link to the Creative Commons licence, and indicate if changes were made. The images or other third party material in this article are included in the article's Creative Commons licence, unless indicated otherwise in a credit line to the material. If material is not included in the article's Creative Commons licence and your intended use is not permitted by statutory regulation or exceeds the permitted use, you will need to obtain permission directly from the copyright holder. To view a copy of this licence, visit http://creativecommons.org/licenses/by/4.0/.

About this article

Cite this article

Qin, P., Hu, H. & Yang, Z. The improved grasshopper optimization algorithm and its applications. Sci Rep 11, 23733 (2021). https://doi.org/10.1038/s41598-021-03049-6
