Abstract

Many complex systems involve interactions between more than two agents. Hypergraphs capture these higher-order interactions through hyperedges that may link more than two nodes. We consider the problem of embedding a hypergraph into low-dimensional Euclidean space so that most interactions are short-range. This embedding is relevant to many follow-on tasks, such as node reordering, clustering, and visualization. We focus on two spectral embedding algorithms customized to hypergraphs which recover linear and periodic structures respectively. In the periodic case, nodes are positioned on the unit circle. We show that the two spectral hypergraph embedding algorithms are associated with a new class of generative hypergraph models. These models generate hyperedges according to node positions in the embedded space and encourage short-range connections. They allow us to quantify the relative presence of periodic and linear structures in the data through maximum likelihood. They also improve the interpretability of node embedding and provide a metric for hyperedge prediction. We demonstrate the hypergraph embedding and follow-on tasks—including quantifying relative strength of structures, clustering and hyperedge prediction—on synthetic and real-world hypergraphs. We find that the hypergraph approach can outperform clustering algorithms that use only dyadic edges. We also compare several triadic edge prediction methods on high school and primary school contact hypergraphs where our algorithm improves upon benchmark methods when the amount of training data is limited.

Similar content being viewed by others

Introduction

A typical graph-based data set captures pairwise interactions between nodes. There is growing interest in understanding higher-order, group-level, interactions, with different paradigms being proposed1,2. In this work, we represent such interactions with a hypergraph formulation; here each hyperedge involves two or more nodes. This framework is discussed in3,4,5 and has found application in real-world problems such as epidemic spread modelling6, image classification7, and the study of biological networks8.

A fundamental learning task on graph-based data is to embed nodes into a low-dimensional Euclidean space9,10. The learned embedding could be used in follow-on tasks such as clustering, classification, and structure recovery. There are various types of learning algorithms for graphs; some design and analyze Laplacian matrices related to the graph11, some solve maximum likelihood problems associated with random graph models12,13, and others involve more complex machine learning frameworks14,15.

In this work, we build on the use of spectral methods which derive node embeddings from eigenvectors of a Laplacian matrix16. Such spectral algorithms are popular, since they can be implemented efficiently on large sparse graphs and they are backed up by accompanying consistency theory17. Two main approaches have also recently been proposed for spectral clustering on hypergraphs. One approach is to employ higher-order Laplacian tensors18. Tensors in general contain richer information, however, their use can require considerably more computational expense than matrix algorithms, and the results can be difficult to visualize and interpret. A second approach is to “flatten” the higher order information into a representative node-level matrix. Some matrix-based approaches analyze the vertex-edge incidence matrix associated with a random walk interpretation9, other frameworks utilise motif-based Laplacian matrices that could be generalized to various motifs and time steps10,19. The methodology that we develop here fits into this second category by building a node-based matrix, using an intermediate step that looks over all hyperedge dimensions in order to incorporate higher order information.

A second aspect of our work is the connection between spectral methods and random models. Many graph embedding20, re-ordering12, clustering21, and structure recovery22,23 techniques solve maximum-likelihood problems on graphs assuming specific generative models. Besides their application in these inverse problems, random graph models are useful inference tools for quantifying structure, predicting new or missing links, and improving the interpretability of learning algorithms by relating node embeddings to edge probabilities24,25.

Many spectral algorithms are naturally related to optimization problems. This is the case when the Laplacian matrix is Hermitian, so that its eigenvectors are critical points of a quadratic form26. For example, spectral embedding for undirected graphs using the standard combinatorial Laplacian is related to minimizing the unnormalized cut11,27.

Furthermore, such optimization formulations may lead to interesting random graph interpretations of spectral algorithms. When the quadratic form can be expressed as the log-likelihood of the graph under a suitable model, the optimization problem may be restated as a node reordering. Such connections have been investigated for undirected graphs28 and directed graphs25, and here we extend these ideas to the hypergraph setting.

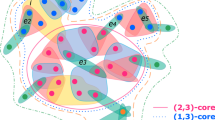

In particular, we associate customized spectral embedding algorithms with generative models that belong to a new class of range-dependent random hypergraphs that encourages short-range connections between nodes, generalizing existing graph models12,29. These range-dependent random hypergraphs offer flexibility that is not available in stochastic block models21 which require block sizes to be pre-specified or inferred.

The rest of the paper is structured as follows. Our notation is introduced in the “Notation” section. In the “Linear hypergraph embedding” and “Periodic hypergraph embedding” sections we define the linear and periodic hypergraph embedding algorithms and derive associated optimization problems. We propose random models associated with the hypergraph embedding algorithms in the “Generative hypergraph models” section, which leads to a model comparison workflow that quantifies the relative strength of linear versus periodic structures. Numerical studies on synthetic and real-world hypergraphs using the proposed models are presented in the “Experiments” section.

The main contributions of this work are as follows.

-

We propose new range-dependent generative models for hypergraphs that generate linear and periodic cluster patterns.

-

We establish their connection with linear and periodic spectral embedding algorithms.

-

We demonstrate on synthetic and real data that, after tuning model parameters to the data, these models can quantify the relative strength of linear and periodic structures.

-

We perform prediction of triadic hyperedges (triangles) using the proposed linear model and show that it outperforms the existing average-score based method30 on synthetic hypergraphs, and also on high school and primary school contact data when the amount of training data is limited.

Notation

We consider undirected, unweighted hypergraphs \(G = (V, E)\) on the vertex set V containing n nodes and the hyperedge set E. We let \(R \in \mathscr {R}\) be an unordered set of nodes, where \(\mathscr {R}\) denotes the collection of all such sets. We use \(|R |\) to denote the number of nodes in tuple R, that is, its cardinality, and we assume \(2 \le |R |\le T\) for all \(R \in E\).

Let \(A_R\) indicate the presence of a hyperedge, so that \(A_R = 1\) if \(R \in E\) and \(A_R = 0\) otherwise. We define the t-th order n by n adjacency matrix \(W^{[t]}\) such that \(W^{[t]}_{ij}\) counts the number of hyperedges with cardinality t that contain distinctive nodes i and j; hence, \(W^{[t]}_{ij} = \sum _{R \in \mathscr {R}: |R| =t} A_R {{\,\mathrm{\mathbbm {1}}\,}}{( i \in R)}{{\,\mathrm{\mathbbm {1}}\,}}{( j \in R )}\) if \(i \ne j\), and \(W^{[t]}_{ij} = 0\) otherwise, where \({{\,\mathrm{\mathbbm {1}}\,}}\) is the indicator function. Similarly, we define the corresponding t-th order diagonal degree matrix \(D^{[t]}\) such that \(D^{[t]}_{ii} = \sum _{j\in V} W^{[t]}_{ij}\), and the t-th order Laplacian matrix \(L^{[t]} = D^{[t]} - W^{[t]}\). We use \(\pmb {\textrm{i}}\) to denote \(\sqrt{-1}\) and \(\pmb {1}\) to denote the vector in \({{\,\mathrm{\mathbb {R}}\,}}^n\) with all entries equal to one. We let \(\pmb {a}'\) represent the transpose of a real-valued vector \(\pmb {a}\) and let \(\pmb {b}^H\) denote the conjugate transpose of a complex-valued vector \(\pmb {b}\). We use \(\mathscr {P}\) to denote the set of all permutation vectors, that is, all vectors in \({{\,\mathrm{\mathbb {R}}\,}}^n\) that contain each of the integers \(1,2,\ldots ,n\). We will focus on one-dimensional embedding. We let \(x_i \in {{\,\mathrm{\mathbb {R}}\,}}\) be the location to which node i is assigned, and \(\pmb {x}= [x_1, x_2,\ldots , x_n]' \in {{\,\mathrm{\mathbb {R}}\,}}^n\) .

Linear hypergraph embedding

Given a hypergraph, suppose we wish to find node embeddings \(\pmb {x}\in {{\,\mathrm{\mathbb {R}}\,}}^n\) such that hyperedges tend to contain nodes that are a small distance apart. To formalize this idea, we can define a linear incoherence function \(I_{\text {lin}}(\pmb {x}, R)\) that sums up the squared Euclidean distance between all nodes pairs in tuple R:

We may then define the total linear incoherence of the hypergraph, \(\eta _{\text {lin}}(G,\pmb {x})\), by aggregating the linear incoherence over all node tuples. Furthermore, we may wish to tune the weights of hyperedges of different cardinalities through a coefficient \(c_{|R |} \ge 0\) for node tuple R; that is,

One justification for these tuning parameters \(c_{|R |}\) is that they allow us to avoid the case where high-cardinality hyperedges dominate the expression. For example, we could choose \(c_{t} = \frac{1}{t(t-1)}\) to balance the contributions from hyperedges with different sizes. A suitable choice of \(c_{t}\) may also depend on the relative importance of hyperedges in the application.

In Proposition 3.1 we show that the total linear incoherence maybe be written as a quadratic form involving the hypergraph Laplacian matrix

Proposition 3.1

For any \(\pmb {x}\in {{\,\mathrm{\mathbb {R}}\,}}^n\) with L defined in (3), and \(\eta _{\text {lin}}(G,\pmb {x})\) defined in (2) we have

Proof

It is straightforward to show that \(\pmb {x}'L^{[t]}\pmb {x}= \sum _{i,j\in V} x_i (D^{[t]}_{ij} - W^{[t]}_{ij}) x_j= {\textstyle {{\frac{1}{2}}}}\sum _{i,j\in V} W^{[t]}_{ij}(x_i - x_j)^2\). Therefore,

\(\square\)

We note that each Laplacian \(L^{[t]}\) is symmetric and positive semi-definite with smallest eigenvalue 0.

Assumption 3.1

We assume that the unweighted, undirected graph associated with the binarized version of L is connected. It then follows that L has a single eigenvalue equal to 0 with all other eigenvalues positive. We further assume that there is a unique smallest positive eigenvalue, \(\lambda _2\) (the eigenvector \(\pmb {v}^{[2]}\) corresponding to \(\lambda _2\) is a generalization of the classic Fiedler vector).

In minimizing the total linear incoherence (2) we must avoid the trivial cases where (a) all nodes are located arbitrarily close to the origin and (b) all nodes are assigned to the same location. Hence it is natural to impose the constraints \(\Vert \pmb {x}\Vert _2 =1\) and \(\pmb {x}' \pmb {1}= 1\). It then follows from the Rayleigh-Ritz Theorem31, Theorem 4.2.2] that the quadratic form in Proposition 3.1 is solved by \(\pmb {x}= \pmb {v}^{[2]}\). This leads us to Algorithm 1 below, which could also be considered as a special case of the algorithm in18 where the motifs considered are hyperedges.

Remark 3.1

Algorithm 1 could be extended to higher dimensional embeddings where node i is assigned to \(\pmb {x}^{[i]} \in {{\,\mathrm{\mathbb {R}}\,}}^{d}\) for \(d >1\). In this case we could generalize (1) to

If we require the coordinate directions to be orthogonal, then the embedding is found via the eigenvectors corresponding to the d-smallest non-zero eigenvalues; see27 for details in the graph case.

Periodic hypergraph embedding

In this section, we look at the periodic analogue of linear hypergraph embedding. Here nodes are embedded into the unit circle rather than along the real line. Such a periodic structure formed the basis of the classic “small world” model of Watts and Strogatz32. Results in24 showed that certain real networks are better represented via this type of “wrap-around” notion of distance. Hence, it is of interest to develop concepts that apply to the hypergraph case.

We may position nodes on the unit circle by mapping them to phase angles \(\pmb {\theta }= \{ \theta _i \}_{i=1}^{n} \in [0, 2\pi )\). We may then use a periodic incoherence function to quantify the distance between node pairs in the tuple R:

Then the total periodic incoherence of the hypergraph becomes

In Proposition 4.1 below, we relate the total periodic incoherence to a quadratic form involving the hypergraph Laplacian matrix (3).

Proposition 4.1

Let \(\pmb {\psi }\in \mathbb {C}^n\) be such that \(\psi _j = e^{\pmb {\textrm{i}}\theta _j}\). Then

Proof

We have

Then the proof may be completed in a similar way to the proof of Proposition 3.1. \(\square\)

Appealing again to the Rayleigh-Ritz theorem31, Theorem 4.2.2], the quadratic form in (8) is minimized over all \(\pmb {\psi }\in \mathbb {C}^n\) with \(\Vert \pmb {\psi }\Vert _2 = 1\) and \(\pmb {\psi }^H \pmb {1}= 1\) by taking \(\pmb {\psi }= \pmb {v}^{[2]}\). However, this real-valued eigenvector cannot be proportional to a vector with components of the form \(e^{\pmb {\textrm{i}}\theta _j}\). Hence, following the approach in24 we will use the heuristic of setting

defined as \(v^{[2]}_i+ \pmb {\textrm{i}}v^{[3]}_i = |v^{[2]}_i+ \pmb {\textrm{i}}v^{[3]}_i |\cdot e^{i\theta _i}\), where \(\pmb {v}^{[3]}\) is an eigenvector corresponding to the next-smallest eigenvalue of L. Such a heuristic assumption converts two real eigenvectors into a complex vector, which gives an approximate solution to the minimization problem. The resulting workflow is summarized in Algorithm 2.

We also note that for simple unweighted, undirected graphs, finding \(\pmb {\theta }\) that minimizes \(\eta _{\text {per}}(G,\pmb {\theta })\) is equivalent to the formulation proposed in24. This may be shown by letting \(u_i = \cos \theta _i\) and \(z_i = \sin \theta _i\) and expanding (7) as

which simplifies to

This is essentially equation (3.1) in24, derived from a slightly different perspective.

We then arrive at Algorithm 2 below.

Generative hypergraph models

We now discuss a connection between the minimization of total incoherence and generative models. Let us consider finding a node embedding \(\pmb {x}\in {{\,\mathrm{\mathbb {R}}\,}}^n\) that minimizes a generic total graph incoherence expression

for a non-negative incoherence function \(I(\pmb {x}, R)\). We consider the case where the \(x_i \in {{\,\mathrm{\mathbb {R}}\,}}\) must take distinct values from a discrete set \(\{ \nu _i \}_{i=1}^n\), where \(\nu _i \in {{\,\mathrm{\mathbb {R}}\,}}\); that is, we must have \(x_i = \nu _{p_i}\), where \(p \in {\mathscr {P}}\) is a permutation vector. In the linear case, this set may be the integers from 1 to n and in the periodic case this set may be equally spaced angles in \([0,2\pi )\).

Now consider a random hypergraph model where each hyperedge involving node tuple \(R\in \mathscr {R}\) is generated independently with probability

for a function \(f_{R}\) that takes values between 0 and 1. We have the following connection.

Theorem 5.1

Suppose \(\pmb {x}\in {{\,\mathrm{\mathbb {R}}\,}}^n\) is constrained to take values from a discrete set such that \(x_i = \nu _{p_i}\), where \(p \in {\mathscr {P}}\) is a permutation vector. Then minimizing the total incoherence (11) over all such \(\pmb {x}\) is equivalent to maximizing over all such \(\pmb {x}\) the likelihood that the hypergraph is generated by a model of the form (12), where

for any positive \(\gamma\).

Proof

Using (12), the likelihood of the whole hypergraph is

which leads to the log-likelihood

The second term on the right-hand side, which is the probability of the null hypergraph, is independent of the the permutation. Hence, with (13), maximizing the log-likelihood of the hypergraph is equivalent to minimizing

\(\square\)

Remark 5.1

Theorem 5.1 could be extended to the case where node i is assigned to \(\pmb {x}^{[i]} \in {{\,\mathrm{\mathbb {R}}\,}}^{d}\) for \(d >1\), and a higher-dimensional incoherence function in (5) is considered. In this scenario, we constrain \(\pmb {x}^{[i]} \in {{\,\mathrm{\mathbb {R}}\,}}^{d}\) to take values from a discrete set \(\{ \pmb {\nu }^{[i]} \}_{i=1}^n\) where \(\pmb {\nu }^{[i]} \in {{\,\mathrm{\mathbb {R}}\,}}^d\), such that \(\pmb {x}^{[i]} = \pmb {\nu }^{[p_i]}\) for a permutation vector \(p \in {\mathscr {P}}\). Then we could follow the same arguments as in Theorem 5.1 to derive a model described by (12) and (13), where \(\pmb {x}\in {{\,\mathrm{\mathbb {R}}\,}}^{n\times d}\) and \(I(\pmb {x}, R) = \sum _{i,j\in R} \Vert \pmb {x}^{[i]} -\pmb {x}^{[j]}\Vert _2^2\).

For a hypergraph generated by model (13) the number of hyperedges connecting the node tuple R follows a Bernoulli distribution with probability \(1/1+e^{\gamma c_{|R |} I(\pmb {x}, R)}\). The log-odds of the hyperedge decay linearly with the incoherence of the node tuple since

where the factor \(\gamma c_{|R |}\) determines the decay rate. The probability of a hyperedge is highest when all nodes overlap, i.e., \(I(\pmb {x}, R)=0\), which gives a 1/2 likelihood. If we generate hyperedges in repeated trials for the node tuple R, the variance of the number of hyperedges is \(e^{c_{|R |}\gamma I(\pmb {x}, R)}/(1+e^{c_{|R |}\gamma I(\pmb {x}, R)})^2\). When \(I(\pmb {x}, R)=0\), the largest variance of 1/4 is achieved. The expected total number of hyperedges of the whole hypergraph G can be expressed as

We note that Theorem 5.1 introduces the extra scaling parameter \(\gamma\). This parameter plays no direct role in Algorithms 1 and 2. However, a value for \(\gamma\) is needed if we wish to compare the likelihoods of the two models having inferred the embeddings. In principle, we may fit the parameter \(\gamma\) to a given hypergraph by matching the observed number of hyperedges with their expectation. However, from a computational point of view, this is rather challenging in general, since the computational complexity of the expectation calculation is \(\mathcal{O}\,(n^T)\) when the maximum cardinality of a considered hyperedge is T. Hence, given an embedding, in practice we prefer to pick \(\gamma\) by maximizing the likelihood, as described in the following subsection.

Model comparison

Under the assumption that a given hypergraph arose from a mechanism that favours connections between “nearby” nodes (in some latent, unobservable configuration), it is of interest to know whether a linear or periodic distance provides a better description.We may address this question using a model comparison approach. As in the “Linear hypergraph embedding” and “Periodic hypergraph embedding” sections, we consider one-dimensional embeddings, such that both the linear and periodic version have \(n+1\) parameters given the Laplacian coefficients: n node embeddings and a decay parameter \(\gamma\). The node embeddings will be estimated from Algorithms 1 and 2. For any choice of \(\gamma\), we may then calculate the corresponding likelihood for each type of hypergraph, given the embedding. We may then compare the models by reporting plots of likelihood versus \(\gamma\) or by reporting the maximum likelihood over all \(\gamma\). We note that Theorem 5.1 states that node embeddings that minimize the incoherence also maximize the graph likelihood under the given discrete constraints. We note that Algorithms 1 and 2 minimize linear and periodic incoherence after relaxing the discrete constraints in Theorem 5.1 for computational feasibility. Such heuristics are often used in discrete programming. Therefore instead of the exact maximum likelihood, we get an estimated maximum likelihood. An overall workflow is shown below in Algorithm 3.

Experiments

Model comparison

Synthetic hypergraphs

In this section we test the performance on Algorithms 1, 2 and 3 in a controlled setting. To do this, we generate hypergraphs with either linear or periodic clustered structure using the proposed random model. For simplicity, we only consider dyadic and triadic edges, although the experiments could be extended to include higher-order hyperedges.

Linear hypergraph with clustered nodes

We first generate hypergraphs with K planted clusters \(C_1, C_2,\ldots , C_K\) of size m, and \(n = m K\) nodes. We embed the nodes using \(x_{i} = \frac{2(l-1)}{K} + \sigma\) if \(i\in C_l\), where \(\sigma \sim \textrm{unif}(-a,a)\) is an additive uniform noise. Hyperedges are then drawn randomly according to model (13) with the linear incoherence (1).

We note that, in practice, the embedding algorithms must choose values \(c_2\) and \(c_3\) in order to form the hypergraph Laplacian, and the model comparison algorithm must choose a value for \(\gamma\). We are therefore interested in the sensitivity of the process with respect to \(c_2\) and \(c_3\), and in the accuracy with which \(\gamma\) can be estimated. We use \(c_2\), \(c_3\) and \(\gamma _0\) to denote parameters used by the generative model to create the synthetic data; we also let \(c^*_2\) and \(c^*_3\) denote the corresponding parameters used in the spectral embedding algorithms and let \(\gamma ^*\) represent an inferred value of \(\gamma _0\). We choose \(c_2 =1\) and \(c_3 = 1/3\) so that the weight of a hyperedge is inversely proportional to the number of node pairs involved. We let \(m = 50\), \(K=5\), \(a = 0.05\), and vary the decay parameter \(\gamma _0\) from 0 to 10. Figure 1a shows an example of the dyadic adjacency matrix, \(W^{[2]}\), with \(\gamma _0 = 4\), where dots represent non-zeros. A corresponding triadic adjacency matrix, \(W^{[3]}\), is shown in Fig. 1b. In all our tests we discard hypergraphs that do not satisfy Assumption 3.1.

For each synthetic hypergraph, we estimate the maximum log-likelihood assuming a linear or a periodic structure using Algorithm 3. For each input decay parameter \(\gamma _0\), 40 hypergraphs are generated independently and the average maximum log-likelihood is plotted in Fig. 1c. The shaded regions represent the estimated \(80\%\) confidence interval. In this case, the linear model correctly achieves a higher average maximum log-likelihood. The tight bound of the confidence interval suggests that the result is consistent across random trials.

We then perform K-means clustering using the periodic and linear embeddings assuming 5 clusters and plot the Adjusted Rand Index (ARI)33,34,35 in Fig. 1d. Here, a larger ARI indicates a better clustering result . The dotted line shows the average over 40 independently trials for each \(\gamma _0\) value and the shaded area is the estimated \(80\%\) confidence interval. The plot suggests that the clustering from the linear embedding outperforms the clustering from the periodic embedding.

We are interested in the effect of parameters \(c_3\) and \(c_3^*\) that control the weight of triadic edges in the random graph model and spectral embedding algorithm respectively. To conduct an experiment, we fix the weight of dyadic edges \(c_2 = 1\), \(c_2^*=1\), and decay parameter \(\gamma _0 = \gamma ^*= 1\), while varying \(c_3\) and \(c_3^*\). The maximum log-likelihood of the linear model (Fig. 1e) and the ARIs using the linear embedding (Fig. 1f) are shown as heat-maps over \(c_3\) and \(c_3^*\). Values are the average over 40 random trials. Overall, choosing \(c_3^* = c_3\), gives the highest maximum likelihood. Therefore, when the true \(c_3\) is not known, it could be estimated using a maximum likelihood method. In terms of the clustering result we note that when \(c_3\) is large, for example, when \(c_3 > 0.3\), using information from triadic edges by setting \(c_3^*>0\) achieves a better ARI than using only diadic edges, i.e., \(c_3^*=0\). This is because a large \(c_3\) encourages more triadic edges to be formed within clusters, whereas a small \(c_3\) leads to more triadic edges between clusters. In general the larger the \(c_3\), the less sensitive the ARI is to the choice of \(c_3^*\)

Model comparison experiments on synthetic linear hypergraphs. (a) Black pixels in the dyadic adjacency matrix (\(\gamma _0 = 4\)) represent edges, and they reveal 5 clusters in the diagonal blocks. (b) In the triadic adjacency matrix, colors reflect the number of triangles shared between nodes (\(\gamma _0 = 4\)). (c) The linear embedding achieves higher log-likelihoods than the periodic version for hypergraphs generated with different \(\gamma _0\). (d) Adjusted Rand Indices of K-means clustering based on the linear and periodic embedding are plotted against \(\gamma _0\). (e) Values in the heatmap represent the maximum likelihoods of the linear model \(\times 10^{-6}\) from Algorithm 3. The maxima are found along the diagonal when \(c_3^* = c_3\). (f) Values represent the Adjusted Rand Indices of K-means clustering based on the linear embedding for different values of \(c_3^*\) and \(c_3\).

Periodic hypergraph with clustered nodes

To generate hypergraphs with periodic clusters, we use a node embedding based on a vector of angles \(\varvec{\theta } = (\theta _1, \theta _2,\ldots , \theta _n)^T\) in \([0,2\pi )\), forming K clusters \(C_1, C_2, \ldots , C_K\) of size m. In particular, we let \(\varvec{\theta }_i = \frac{2 \pi (l-1)}{K}+\sigma\) if \(i\in C_l\) for \(1\le l \le K\), where \(\sigma \sim \textrm{unif}(-a,a)\) is the added noise. The hyperedges are generated using model (13), where the incoherence function is defined in (6). We choose \(a=0.05 \pi\), \(c_2 =1\), \(c_3 = 1/3\) and vary the decay parameter \(\gamma _0\). Examples of the dyadic and triadic adjacency matrices with \(\gamma _0 = 1\) are shown in Fig. 2a,b.

Using the same approach as in the previous section, we compare the maximum log-likelihood and ARIs assuming linear and periodic structures in Fig. 2c,d. We see that the periodic model achieves a higher maximum, and on average the periodic embedding produces higher ARIs.

Heat-maps in Fig. 2e,f show results for different combinations of \(c_3\) and \(c^*_3\) for the periodic embedding algorithm. These results were generated in the same way as for Fig. 1c,d. Higher maximum likelihoods are achieved near the diagonal where \(c_3^* = c_3\), hence the true parameters \(c_3\) for the underlying hypergraph could be estimated using the maximum likelihood method. As in the previous example, when \(c_3 \ge 0.3\), using the triadic edges (\(c_3^*\)) improves the ARI. When \(c_3 < 0.3\), increasing \(c_3^*\) leads to an inferior clustering result. However when \(c_3 \ge 0.3\), ARI becomes less sensitive to the choice of \(c_3^*\) as long as it is positive.

In summary, these tests indicate that the algorithms are able to correctly distinguish between linear and periodic range-dependency when one such structure is present in the data. We observed that setting \(c_3^* >0\) improves the ARI when the triadic edges have a strong structural pattern; that is, when \(c_3\) is large. Moreover, when the true parameter \(c_3\) is unknown we recommend choosing \(c_3^*\) based on a maximum likelihood estimation, that is, finding the value \(c_3^*\) that returns the largest maxima in Algorithm 3. Such a choice also achieve reasonable ARIs in our synthetic examples as shown in the diagonal entries in Figs. 1f and 2f.

Model comparison experiments on synthetic periodic hypergraphs. (a) The bottom-left and top-right blocks in the dyadic adjacency matrix (\(\gamma _0 = 1\)) reveal a periodic clustered structure. (b) Similar periodic clustered pattern is shown in the triadic adjacency matrix (\(\gamma _0 = 1\)). (c) The periodic embedding achieves higher log-likelihoods than the linear embedding for different values of \(\gamma _0\). (d) Adjusted Rand Indices of K-means clustering based on the linear and periodic embedding are plotted against \(\gamma _0\). (e) Values in the heatmap indicate the maximum likelihoods of the periodic model \(\times 10^{-6}\) from Algorithm 3 and the maxima lie on the diagonal where \(c_3^* = c_3\). (f) Values represent the Adjusted Rand Indices of K-means clustering based on the periodic embedding for different values of \(c_3^*\) and \(c_3\).

Real hypergraphs

High school contact data

The high school contact data from36 records the frequency of student interaction. Students are represented as nodes, and contacts between two or three students are registered as dyadic or triadic edges. We retrieved the hypergraph from21 containing 327 nodes, and only studied its dyadic and triadic edges considering the computational complexity. We construct the hypergraph Laplacian \(L = c_2^* L^{[2]} + c_3^* L^{[3]}\) and perform linear and periodic spectral embedding. For the linear embedding we map nodes into 3-dimensional Euclidean space using the eigenvectors corresponding to the three smallest eigenvalues that are larger than 0.01. We make this choice because the eigenvector associated with the smallest non-zero eigenvalue has only a few non-zero entries and leads to trivial clusters. We fix \(c_2^* = 1\) and vary \(c_3^*\) since only the relative weight \(c_3^*/c_2^*\) matters in node embedding.

The the maximum likelihoods and ARIs evaluated using various \(c_3^*\) are shown in the left column in Fig. 3. The true clusters are defined by the classes the students came from. Overall the periodic embedding achieves higher likelihoods and ARIs despite the linear embedding involving more parameters. Since linear clusters tend to have more marginalized groups that are far from other clusters, our results may suggest a lack of marginalisation driven by class membership.

We note that setting \(c^*_3=0\) causes the algorithm to ignore triangles, and hence to reduce to classical spectral clustering. For the linear algorithm, we see that incorporating triadic edges by using a positive \(c^*_3\) can improve the ARI by up to around 0.09. We note that in21, modularity maximization-based clustering achieved ARI=1 on the same data. However, those methods have more parameters, which makes the ARI not directly comparable.

Primary school contact data

The primary school contact hypergraph21 is constructed from the contact pattern between primary students from 10 classes37. Nodes represent students or teachers, and hyperedges represent their physical contact. Each node is labelled by the class of the students or as a teacher. The hypergraph contains 242 nodes and 11 classes of labels. We extracted the dyadic and triadic edges from the hypergraph and performed likelihood comparison and clustering with four eigenvectors associated with the four smallest eigenvalues that are greater than 0.01. The middle column in Fig. 3 suggests the periodic embedding achieves the maximum likelihood at \(c_3^* = 0.7\) and overall performs better than the linear embedding in the clustering task. These results may be related to the existence of a teacher group that connects with all student groups. When we arrange dyadic and triadic adjacency matrices by node classes, these connections will appear as off-diagonal entries. As we have shown in Fig. 2a,b, the periodic model tends to produce more off-diagonal connections than the linear model.

Senate bills data

In the senate bills hypergraph21,38,39, nodes are US Congresspersons and hyperedges are the sponsor and co-sponsors of Senate bills. There are in total 294 nodes, and each node is labelled as either Democrat or Republican. We performed likelihood comparison and clustering with only the dyadic and triadic edges. Since the node degree distribution is highly inhomogeneous, we observe many trivial eigenvectors that are close to indicator functions. To address this issue we trimmed off the top and bottom 2% nodes by node degree, and use the eigenvector associated with the smallest eigenvalue that is greater than 0.01. The linear and periodic models have similar maximum likelihoods and clustering ARIs, as shown in the right column in Fig. 3. In contrast with previous examples, there are only two clusters present in this data set. Hence the difference between the periodic and linear models, which could be reflected in the connection (or disconnection) pattern between the first and the last group, is less prominent.

Model comparison experiments on the high school contact data (left), the primary school contact data (middle), and the senate bill data (right). Top row shows the maximum log-likelihoods of the linear and periodic embedding for different values of \(c_3^*\) where pentagrams indicate maxima. Bottom row plots the Adjusted Rand Indices of K-means clustering based on the linear and periodic embedding for various \(c_3^*\).

Hyperedge prediction

Once the node embeddings are estimated from the spectral algorithms, the probability of hyperedges may be computed from the proposed models. The hyperedge probability can naturally serve as a score for hyperedge prediction. We implement and test such triadic edge prediction on timestamped high school contact data30,36 , primary school contact data30,37, and synthetic linear hypergraphs. The results will be compared against approaches based on average-scores proposed in30. Other hyperedge prediction methods include feature-based prediction40, model-based prediction41, and machine learning-based prediction42.

For high school and primary school contact data, we used three and four eigenvectors respectively corresponding to the smallest eigenvalues that are greater than 0.01 to be consistent with the previous section, and only consider dyadic and triadic edges. The hyperedges are sorted by time stamps and split into training and testing data. For example, an 80:20 training/testing splitting ratio means we use the first \(80\%\) of the hyperedges to train the model and the last \(20\%\) to test the predictions. When the training ratio is low, the subgraph for training may be disconnected thus violating Assumption 3.1. Therefore, we only consider nodes in the largest connected component of the graph associated with the binarized version of L of the training subgraph, and test the prediction on the same set of nodes. Note that in real data, the parameters \(\gamma _0\), \(c_2\) and \(c_3\) for the hypergraph model are unknown. We fix \(c_2^*=1\) and choose \(c_3^*\) and \(\gamma ^*\) using a maximum likelihood estimate through a grid search on the training data.

On the training set, we assign scores to each triplet using five methods: a random score as baseline, hyperedge probability from the linear model, arithmetic mean, harmonic mean, and geometric mean from30. On the test set, we measure the prediction performance with the area under precision-recall curve (AUC-PR)43. A Precision-Recall (PR) curve traces the Precision = True Positive/(True Positive + False Positive) and Recall = True Positive/(True Positive + False Negative) for different thresholds. The AUC-PR is a measure that balances both Precision and Recall where 1 means perfect prediction at any threshold. Setting \(c_3^* = 0\) will assign probability of 0.5 to all triplets, which is equivalent to the random score approach if we break ties randomly.

On the high-school contact data shown in Table 1, the harmonic and geometric mean attain the highest AUC-PR for large amounts of training data, see, for example the 80:20 data split; while the linear model predictions achieve the best results for small amounts of training data, as seen in the 20:80 data split. This could be because when training data is insufficient, there are more unobserved “missing” dyadic and triadic edges. In this case, the node embedding algorithm can infer node proximity based on common neighbours. In other words, it can place nodes with common neighbours nearby even if they haven’t been directly linked before. On the other hand, the geometric and harmonic mean will assign a score of zero to a triplet if none of the nodes has been connected previously, and therefore will predict no triadic edges.

We also test the triadic edge prediction on the synthetic linear hypergraphs, generated in the manner described in the “Experiments” section, with \(K = 4\), \(m=60\), \(\gamma = 10\), \(c_2 = 1\), and \(c_3 = 0.3\), such that the clustered pattern resembles the high-school contact data. We consider three eigenvectors associated with the smallest eigenvalues that are greater than 0.01 for the synthetic linear model. Since defining a periodic model with more than one eigenvector is beyond the scope of this work, we only test the linear hypergraphs. We randomly select a portion of the hyperedges as the training set, while ensuring the sampled hypergraph is connected, and test the performance on the rest of the hyperedges. The AUC-PR averaged over 20 random hypergraphs is shown in Table 1. We observe that the linear model outperforms random score and average scores for various training data sizes.

Conclusion

In this work we have developed new random models and embedding algorithms for hypergraphs, and investigated their equivalence. In particular, we focused on two spectral embedding algorithms customized for hypergraphs, which aim to reveal linear and periodic structures, respectively. We also described random hypergraph models associated with these algorithms, which allow us to quantify the relative strength of linear and periodic structures based on maximum likelihood. We demonstrated the model comparison approach on synthetic linear and periodic hypergraphs, showing that the results are consistent with the generating mechanism. When applied to high school and primary school contact hypergraphs, the model comparison suggests the periodic structure is more prominent. On this data set we also showed that the “spectral embedding plus random hypergraph” approach gives a useful strategy for predicting new hyperedges.

In future work, it would be interesting to investigate how these linear and periodic hypergraph models compare with other versions that use alternative assumptions, including those based on core-periphery23 and stochastic block model13,21 structures.

Data availability

This research made use of public domain data that is available over the internet, as indicated in the text.

Code availability

Code for the experiments is available at https://github.com/OpalGX/hypergraph_model.

References

Bianconi, G. Higher Order Networks: An Introduction to Simplicial Complexes (Cambridge University Press, 2021).

Lambiotte, R., Rosvall, M. & Scholtes, I. From networks to optimal higher-order models of complex systems. Nat. Phys. 15, 313–320 (2019).

Benson, A. R., Gleich, D. F. & Leskovec, J. Higher-order organization of complex networks. Science 353, 163–166 (2016).

Benson, A. R., Gleich, D. F. & Higham, D. J. Higher-order network analysis takes off, fueled by classical ideas and new data. SIAM News (2021).

Torres, L., Blevins, A. S., Bassett, D. S. & Eliassi-Rad, T. The why, how, and when of representations for complex systems. SIAM Rev. 63, 435–485 (2021).

Higham, D. J. & De Kergorlay, H.-L. Epidemics on hypergraphs: Spectral thresholds for extinction. Proc. R. Soc. A 477, 20210232 (2021).

Yu, J., Tao, D. & Wang, M. Adaptive hypergraph learning and its application in image classification. IEEE Trans. Image Process. 21, 3262–3272. https://doi.org/10.1109/TIP.2012.2190083 (2012).

Ramadan, E., Tarafdar, A. & Pothen, A. A hypergraph model for the yeast protein complex network. In 18th International Parallel and Distributed Processing Symposium, 2004. Proceedings, 189, https://doi.org/10.1109/IPDPS.2004.1303205 (2004).

Schölkopf, B., Platt, J. & Hofmann, T. Learning with hypergraphs: clustering, classification, and embedding. In Advances in Neural Information Processing Systems 19: Proceedings of the 2006 Conference, 1601–1608 (2007).

Rossi, R. A. et al. A structural graph representation learning framework. In Proceedings of the 13th International Conference on Web Search and Data Mining, WSDM 2020, 483–491, https://doi.org/10.1145/3336191.3371843 (Association for Computing Machinery, 2020).

Luxburg, U. A tutorial on spectral clustering. Stat. Comput. 17, 395–416 (2007).

Grindrod, P. Range-dependent random graphs and their application to modeling large small-world proteome datasets. Phys. Rev. E 66, 066702 (2002).

Peixoto, T. P. Ordered community detection in directed networks. Phys. Rev. E 106, 024305. https://doi.org/10.1103/PhysRevE.106.024305 (2022).

Hamilton, W. L. Graph representation learning. Synth. Lect. Artif. Intell. Mach. Learn. 14, 1–159 (2020).

Hamilton, W. L., Ying, R., & Leskovec, J. Representation learning on graphs: Methods and applications. IEEE Data Eng. Bull. (2017).

Chung, F. Spectral Graph Theory. Regional conference series in mathematics; no. 92 (American Mathematical Society, 1997).

De Kergorlay, H.-L. & Higham, D. J. Consistency of anchor-based spectral clustering. Inf. Inference J. IMA 11, 801–822 (2022).

Galuppi, F., Mulas, R. & Venturello, L. Spectral theory of weighted hypergraphs via tensors. Linear Multilinear Algebra https://doi.org/10.1080/03081087.2022.2030659 (2022).

Lucas, M., Cencetti, G. & Battiston, F. Multiorder Laplacian for synchronization in higher-order networks. Phys. Rev. Res. 2, 033410. https://doi.org/10.1103/PhysRevResearch.2.033410 (2020).

Hoff, P. D., Raftery, A. E. & Handcock, M. S. Latent space approaches to social network analysis. J. Am. Stat. Assoc. 97, 1090–1098 (2002).

Chodrow, P. S., Veldt, N. & Benson, A. R. Generative hypergraph clustering: From blockmodels to modularity. Sci. Adv. 7, eabh1303. https://doi.org/10.1126/sciadv.abh1303 (2021).

Cimini, G., Mastrandrea, R. & Squartini, T. Reconstructing Networks (Cambridge University Press, 2021).

Tudisco, F. & Higham, D. J. Core-periphery detection in hypergraphs. To appear in SIAM J. Mathematics of Data Science (2023).

Grindrod, P., Higham, D. J. & Kalna, G. Periodic reordering. IMA J. Numer. Anal. 30, 195–207 (2010).

Gong, X., Higham, D. J. & Zygalakis, K. Directed network Laplacians and random graph models. R. Soc. Open Sci. 8, 211144. https://doi.org/10.1098/rsos.211144 (2021).

Lütkepohl, H. Handbook of Matrices (Wiley, 1996).

Higham, D. J., Kalna, G. & Kibble, M. J. Spectral clustering and its use in bioinformatics. J. Comput. Appl. Math. 204, 25–37 (2007).

Higham, D. J. Unravelling small world networks. J. Comput. Appl. Math. 158, 61–74 (2003).

Higham, D. J. Spectral reordering of a range-dependent weighted random graph. IMA J. Numer. Anal. 25, 443–457 (2005).

Benson, A. R., Abebe, R., Schaub, M. T., Jadbabaie, A. & Kleinberg, J. Simplicial closure and higher-order link prediction. Proc. Natl. Acad. Sci. 115, E11221–E11230 (2018).

Horn, R. & Johnson, C. Matrix Analysis (Cambridge University Press, 1985).

Watts, D. J. & Strogatz, S. H. Collective dynamics of ‘small-world’ networks. Nature 393, 440–442. https://doi.org/10.1038/30918 (1998).

Rand, W. M. Objective criteria for the evaluation of clustering methods. J. Am. Stat. Assoc. 66, 846–850 (1971).

Hubert, L. & Arabie, P. Comparing partitions. J. Classif. 2, 193–218. https://doi.org/10.1007/BF01908075 (1985).

McComb, C. Adjusted Rand index. GitHub (2022). Accessed 29 June 2022.

Mastrandrea, R., Fournet, J. & Barrat, A. Contact patterns in a high school: A comparison between data collected using wearable sensors, contact diaries and friendship surveys. PLoS ONE 10, e0136497 (2015).

Stehlé, J. et al. High-resolution measurements of face-to-face contact patterns in a primary school. PLoS ONE 6, e23176. https://doi.org/10.1371/journal.pone.0023176 (2011).

Fowler, J. H. Connecting the congress: A study of cosponsorship networks. Polit. Anal. 14, 456–487. https://doi.org/10.1093/pan/mpl002 (2006).

Fowler, J. H. Legislative cosponsorship networks in the US house and senate. Soc. Netw. 28, 454–465. https://doi.org/10.1016/j.socnet.2005.11.003 (2006).

Yoon, S., Song, H., Shin, K. & Yi, Y. How much and when do we need higher-order information in hypergraphs? A case study on hyperedge prediction. In Proceedings of The Web Conference vol. 2020, 2627–2633 (2020).

Scholtes, I. When is a network a network? multi-order graphical model selection in pathways and temporal networks. In Proceedings of the 23rd ACM SIGKDD International Conference on Knowledge Discovery and Data Mining, 1037–1046 (2017).

Yadati, N. et al. Nhp: Neural hypergraph link prediction. In Proceedings of the 29th ACM International Conference on Information & Knowledge Management, 1705–1714 (2020).

Davis, J. & Goadrich, M. The relationship between precision-recall and ROC curves. In Proceedings of the 23rd International Conference on Machine Learning, 233–240 (2006).

Acknowledgements

X.G. acknowledges support of MAC-MIGS CDT under EPSRC grant EP/S023291/1. D.J.H. was supported by EPSRC grant EP/P020720/1. K.C.Z. was supported by the Leverhulme Trust grant 2020-310 and EPSRC grant EP/V006177/1.

Author information

Authors and Affiliations

Contributions

X.G. carried out the numerical experiments and drafted the manuscript. All authors contributed to the theoretical understanding, development of research, and the writing of the manuscript. All authors gave final approval for publication.

Corresponding author

Ethics declarations

Competing interests

The authors declare no competing interests.

Additional information

Publisher's note

Springer Nature remains neutral with regard to jurisdictional claims in published maps and institutional affiliations.

Rights and permissions

Open Access This article is licensed under a Creative Commons Attribution 4.0 International License, which permits use, sharing, adaptation, distribution and reproduction in any medium or format, as long as you give appropriate credit to the original author(s) and the source, provide a link to the Creative Commons licence, and indicate if changes were made. The images or other third party material in this article are included in the article's Creative Commons licence, unless indicated otherwise in a credit line to the material. If material is not included in the article's Creative Commons licence and your intended use is not permitted by statutory regulation or exceeds the permitted use, you will need to obtain permission directly from the copyright holder. To view a copy of this licence, visit http://creativecommons.org/licenses/by/4.0/.

About this article

Cite this article

Gong, X., Higham, D.J. & Zygalakis, K. Generative hypergraph models and spectral embedding. Sci Rep 13, 540 (2023). https://doi.org/10.1038/s41598-023-27565-9

Received:

Accepted:

Published:

Version of record:

DOI: https://doi.org/10.1038/s41598-023-27565-9