Abstract

Emotion recognition based on Electroencephalogram (EEG) has been applied in various fields, including human–computer interaction and healthcare. However, for the popular Valence-Arousal-Dominance emotion model, researchers often classify the dimensions into high and low categories, which cannot reflect subtle changes in emotion. Furthermore, there are issues with the design of EEG features and the efficiency of transformer. To address these issues, we have designed TPRO-NET, a neural network that takes differential entropy and enhanced differential entropy features as input and outputs emotion categories through convolutional layers and improved transformer encoders. For our experiments, we categorized the emotions in the DEAP dataset into 8 classes and those in the DREAMER dataset into 5 classes. On the DEAP and the DREAMER datasets, TPRO-NET achieved average accuracy rates of 97.63%/97.47%/97.88% and 98.18%/98.37%/98.40%, respectively, on the Valence/Arousal/Dominance dimension for the subject-dependent experiments. Compared to other advanced methods, TPRO-NET demonstrates superior performance.

Similar content being viewed by others

Introduction

Emotions play a significant role in daily life, reflecting the connection between subjective needs and the objective external world1. Positive emotions have a beneficial impact on people's physical and mental health, while negative emotions have the opposite effect2. In recent years, there has been a growing interest in applications related to emotion recognition, such as human–computer interaction3 and psychological disease rehabilitation4. Due to the excellent characteristics of Electroencephalogram (EEG) signals in terms of their inability to be falsified, high time resolution, and sensitivity to emotional changes5,6, emotion recognition based on EEG signals has received attention from both the academic and industrial communities.

Discrete and continuous emotion models are commonly used to measure emotions7. Discrete emotion models divide emotions into finite categories, such as happiness, sadness, surprise, fear, anger, and disgust8. Continuous emotion models measure emotions using dimensions and can describe more complex emotions7. The VAD (Valence-Arousal-Dominance) emotion model9, which is currently popular, uses valence, arousal, and dominance to measure emotions. Valence corresponds to the degree of pleasure, arousal corresponds to the degree of intensity, and dominance corresponds to the degree of subjective control7. The lower the values of these dimensions, the lower the degree of the corresponding emotion and vice versa7.

Emotion recognition based on EEG signals involves two stages: feature extraction and classification algorithms. Various features for EEG signals have been proposed, including fractal dimension features10, high-order cross features11, power spectral density12, differential entropy (DE)13, Riemannian manifold features14, differential causality15, and the discrete wavelet transform16. Deep learning classification algorithms, such as transformer7,17,18,19, graph neural network20,21, and convolutional neural network2,22, are commonly used for emotional category classification.

Although these efforts have yielded convincing results, there are still some issues. Firstly, due to the time asymmetry, instability, and low signal-to-noise ratio of EEG signals23, researchers often use various complex methods to design robust features24,25, which may not be conducive to practical applications. Secondly, although transformer performs well on EEG-based emotion classification tasks7,13,18,19, it requires a large amount of computation26,27. Finally, in VAD emotion models, researchers typically categorize dimensions into high- and low-state labels2,7,17,20,21,22, which may not capture nuanced changes in emotion.

To address these issues, we propose TPRO-NET to recognize complex emotions. The model takes differential entropy features and enhanced differential entropy features as inputs, and outputs emotion categories through convolutional neural networks and improved transformer encoders. The main contributions of this article are as follows:

-

(1)

A Simple nonlinear transformation is proposed to enhance the robustness of differential entropy features.

-

(2)

By improving the transformer encoder, we reduce the amount of computation while ensuring the similar performance as the transformer encoder does.

-

(3)

TPRO-NET achieved state-of-the-art results by completing an 8-class emotion classification task on the DEAP dataset and a 5-class emotion classification task on the DREAMER dataset.

This article is organized as follows: Section "Related works" reviews previous work on feature extraction and transformer. Section "Materials" presents the materials used in this study. Section "Methods" describes the proposed EEG-based emotion recognition algorithm TPRO-NET. Section "Experiments results and analysis" presents the experimental results and analysis of the DREAMER and DEAP datasets. Section "Discussion" discusses the experiments. Finally, Section "Conclusion" provides a summary of the paper and discusses future work.

Related works

Researchers have made significant progress in the field of emotional recognition based on EEG signals. Meanwhile, there are still some existing flaws and issues that require attention.

Wei, et al.7 proposed the transformer capsule network to address the challenges of capturing global contextual information across the temporal, frequency, intra-channel, and inter-channel domains in convolutional neural networks. Subject-dependent binary classification experiments were conducted on the DEAP (DREAMER) dataset, achieving accuracies of 98.76% (98.59%), 98.81% (98.61%), and 98.82% (98.67%) on the dimensions of Valence, Arousal and Dominance, respectively. However, the model's performance was average in cross-subject EEG emotion recognition tasks, indicating that it may not fully account for the inter-individual differences in EEG patterns, which could affect its universality and applicability.

Chang, et al.16 put forward an automatic transformer neural architecture search framework based on multi-objective evolutionary algorithm to avoid the time-consuming and resource-intensive process of designing neural networks. This framework achieved an accuracy rate of over 95% in subject-dependent binary classification experiments on the DEAP and the DREAMER datasets. Nevertheless, the model parameters of this method amount to 6.98M, which is not lightweight enough. Still, the model's performance in cross-subject EEG emotion recognition is average.

Yin, et al.21 aimed to achieve breakthroughs in model performance metrics. They employed graph neural networks to extract non-Euclidean spatial features and utilized long short-term memory to search for temporal features in order to perform binary classification of emotions. Conducting subject-dependent experiments on the DEAP dataset, they achieved an accuracy of 90.45% and 90.60% on the Valence and Arousal dimensions, respectively. In cross-subject experiments, they obtained accuracies of 84.81% and 85.27% on Valence and Arousal, respectively. It is worth pointing out that the method was validated using only the DEAP dataset, which may limit the model's effectiveness. Other limitations of this model include its inability to recognize multiple emotions, high computational complexity, and the requirement for a long-time window for data truncation that potentially hinders its real-time application.

Cheng, et al.2 proposed a random convolutional neural network to reduce the computational complexity of the backpropagation process, inspired by the successes of random vector functional link and convolutional random vector functional link. Binary classification experiments were conducted on the DEAP dataset, demonstrating that the model achieved accuracies of over 99% on the dimensions of valence and arousal. Being validated by only one dataset cannot demonstrate its robustness in different contexts.

Choo, et al.28 investigated the effectiveness of multi-task learning for emotion recognition using raw EEG-based convolutional neural networks (CNNs) with auxiliary context information. The study utilized temporal and spatial filtering layers from raw EEG-based CNNs as shared and task-specific layers for emotion and context classification tasks. The experiments were conducted using the authors’ own dataset, on which promising results were achieved. Noteworthy, their dataset only includes negative emotions, and the proposed model has limited generalization ability.

Due to the nonlinearity and non-stationarity of EEG signals, extracting effective non-stationary and valid features can be challenging. To address this issue, Zhong, et al.24 developed a new feature extraction method called tunable Q-factor wavelet transform (TQWT), a spatiotemporal representation method for multichannel EEG signals, and a hybrid convolutional recurrent neural network. The model achieved an accuracy of 95.33% on the SEED dataset. Besides only tested by one dataset, the bottlenecks also include the complex computation of TQWT, which requires numerous floating-point operations.

Liu, et al.29 focused on enhancing the performance of the model, proposing an EEG emotion recognition model based on the attention mechanism and a pre-trained convolution capsule network to recognize various emotions more effectively. This model utilizes coordinate attention to endow the input signal with relative spatial information, and subsequently maps the EEG signal to a higher dimensional space, thereby enriching the emotion-related information within the EEG. They conducted experiments using only the DEAP dataset, achieving good performance in subject-independent experiments and moderate performance in subject-dependent experiments. Still, only one dataset was applied for the model validation, and cross-subject results are not ideal.

Lin, et al30 proposed the dual-scale EEG-Mixer to address the limitation of CNNs in extracting global information. This model fuses spatial and frequency domain features of EEG signals, achieving an accuracy of over 95% on the DEAP dataset and 93.69% on the SEED dataset. The model, nonetheless, does not effectively utilize the temporal information of EEG signals and performs moderately in cross-subject experiments. This work demonstrates experimentally that transformers require significant computational resources.

Tang, et al.31 proposed the spatial-temporal information learning network to extract discriminative features from EEG signals. The network captures spatial correlations and temporal contexts using a combination of a convolutional neural network, convolutional block attention module, and bidirectional long short-term memory. Subject-independent experiments were conducted using only one dataset, and moderate results were achieved.

Several shortcomings have been identified in previous works, including validating model effectiveness using only one dataset, difficulty in cross-subject emotion recognition from EEG signals, challenges in incorporating temporal, spatial, and frequency domain features into EEG signal characteristics simultaneously, high computational complexity in feature extraction, low computational efficiency of the transformer model, and a limited number of emotional categories recognized by the VAD model. In addition to the literature described in detail above, there are other works in EEG fields that have also mentioned these issues, such as32,33,34.

Standing on the shoulders of predecessors’ work, this article focuses on improving model performance by utilizing simplified features, enhancing the efficiency of the transformer, and developing algorithms to recognize subtle changes in emotions. Due to this, we have designed TPRO-NET, a neural network that takes differential entropy and enhanced differential entropy features as input and outputs emotion categories through convolutional layers and improved transformer encoders.

Materials

The open-source DEAP35 and the DREAMER36 datasets are commonly used for EEG-based emotion recognition. Both datasets induce emotion-related EEG signals through video stimuli and determine emotion labels through subjective scoring by participants.

The DEAP dataset includes 40 videos that induce emotions in 32 subjects and collects EEG signals from 32 channels. For each video stimulus, participants must provide a floating-point score ranging from 1 to 9 for the four dimensions of valence, arousal, dominance, and liking to measure their emotions. Each elicitation produces 3 s of baseline signals and 60 s of experimental signals, resulting in a total of 2520 ((60 + 3) × 40) seconds of EEG signals per subject. The DEAP dataset provides two types of signals: the original signals with a sample rate of 512 Hz and the signals that have been downsampled to 128 Hz and have undergone independent component analysis to remove EOG artifacts. We conducted experiments using the processed signals with experimental signals. The general scheme of video-induced emotion is depicted in Fig. 1.

The DREAMER dataset includes 18 videos that induce emotions in 23 subjects and collects EEG signals from 14 channels. For each video stimulus, the participant was asked to provide an integer score ranging from 1 to 5 on three dimensions: valence, arousal, and dominance, to measure their emotions. The length of each elicitation video ranges from 65 to 393 s. The Dreamer dataset provides signals that have been downsampled to 128 Hz and have undergone eye artifact removal using a linear phase finite impulse response filter. The general scheme of video-induced emotion is depicted in Fig. 1.

Methods

The proposed EEG emotion recognition algorithm, TPRO-NET, comprises four parts: feature extraction, convolutional layers and reshaping, improved transformer encoder, and emotion classification layer. Figure 2 illustrates the detailed structure of TPRO-NET.

Feature extraction

The processed EEG signals from the datasets mentioned in Section "Materials" are segmented into non-overlapping one-second segments. Immediately following that, we apply a fourth-order band-pass Butterworth filter to each of the four frequency bands (\(\theta (4-8Hz),\alpha (8-13Hz),\beta (13-31Hz),\gamma (31-45Hz\)))37,38 to obtain the filtered signals. To improve computational efficiency, the differential entropy feature can be approximated as a Gaussian distribution between 4 and 45 Hz11. The calculation formula for this approximation is as follows.

where X represents a one-second EEG signal segment, σ represents the standard deviation of the Gaussian distribution, and \(\uppi\), \(\text{e}\) are constants.

To improve feature robustness, we normalize the differential entropy features calculated for each channel using the following formula:

where \(x_{i}\) represents the value of the differential entropy feature within a channel, and m represents the total number of differential entropy features in a channel.

Similarly to previous studies22,39, we map the differential entropy feature to a two-dimensional matrix with dimensions of \(H\times W (H=9, W=9)\) based on the spatial location of the channel to include spatial information. The values in the locations that are not mapped are set to 0 by default. The detailed spatial mapping method is shown in Fig. 3. Currently, a one-second EEG signal segment can produce four two-dimensional matrices based on the four different frequency bands (\(\theta , \alpha , \beta , \gamma\)).

To obtain more robust features, we applied nonlinear transformation to the four two-dimensional matrices we calculated. The calculation formula for nonlinear transformation is as follows.

where M represents the matrix after spatial mapping, and \({M}_{1}\) represents the matrix after nonlinear transformation. In addition, to improve the calculation accuracy of single precision floating point numbers, we multiplied the coefficient by 255. Through this step, four differential entropy feature matrices and four enhanced differential entropy feature matrices can be obtained. These two-dimensional matrices are organized into a three-dimensional structure with a size of \(C\times H\times W\) to obtain the input of the neural network, where \(C=8\) represents the number of matrices.

Convolutional layers and reshaping

By feeding the features obtained in 4.1 into convolutional layers to learn global features, tensor \(x\in {R}^{C\times H\times W}\) can be obtained. Table 1 shows the hyperparameters used in the convolutional layers. Afterwards, in order to match the input of the improved transformer encoder, we reshape x using formula 4.

where \(z_{0} \in R^{{C \times \left( {H \times W} \right)}}\) represents the input of improved transformer encoder.

Improved transformer encoder

The improved transformer encoder mainly consists of two parts: the attention mechanism ISA and the feedforward neural network (FFN). The structure of the improved transformer is presented in Fig. 4. Attention mechanism ISA first calculates Q, K, and V using the method provided in40, and then learns local features. The relevant calculation formulas are shown below.

where Q, K and V represent queries, keys, and values40, respectively, D represents a trainable dynamic scaling matrix with an initial value of 1, \({U}_{sum}, {U}_{copy}\), \({U}_{qkv},{U}_{proj}\) represent linear transformation matrices, \(Scal{e}_{K}, Scal{e}_{V}\) represent the L2 norms of the last dimension of keys and values, respectively, and ‘\(\odot\)’ represents the operation of the Hadamard product.

The FFN is composed of four components: layer normalization (LN), linear layer (Linear), GELU activation function, and residual connection structure. The relevant calculation formulas are presented below.

where \({z}_{l}{\prime},{z}_{l}\in {R}^{\left(C+2\right)\times \left(H\times W\right)}\) represent the intermediate variables and the output of the \(lth\) improved transformer encoder, respectively, and \(L=15\) represents the number of sequentially connected improved transformer encoders.

Classification layers

For the tensor \({z}_{L}\in {R}^{\left(C+2\right)\times \left(H\times W\right)}\) outputed by the improved transformer encoder in series, we perform linear transformation to obtain \({z}_{D}\in {R}^{\left(H\times W\right)}\), and then pass it through a fully connected layer and a SoftMax layer to obtain the final classification result. Figure 2 shows the detailed process.

Experiments results and analysis

Experiment setup

The performance of TPRO-NET was tested on a NVIDIA RTX 4090 using the DEAP dataset35 and the DREAMER dataset36 with the PyTorch framework. The batch size was set to 240, the learning rate was set to 0.001, and the optimizer was AdamW. Additionally, the number of epochs was set to 100 for the DEAP dataset and 50 for the DREAMER dataset. We conducted subject-dependent experiments, where training data and testing data from the same subjects, with five-fold cross-validation, as in many past works1,7,31,39,41,42,43,44,45.

Two types of subject-dependent experiments, where training data and testing data from the same subjects, with five-fold cross-validation1,7,31,39,41,42,43,44,45 were conducted: binary classification and multi-classification. In the first type of subject-dependent experiment, the labels were divided into high and low categories. A threshold of 5 was used for the DEAP dataset (low: < 5, high: \(\ge\)5) and a threshold of 3 was used for the DREAMER dataset (low: < 3, high: \(\ge\)3). The strings ‘lv’, ‘la’, ‘ld’, ‘hv’, ‘ha’ and ‘hd’ represent low valence, low arousal, low dominance, high valence, high arousal and high dominance, respectively. In the second type of experiment, the labels are divided into as many categories as possible. For the DEAP dataset, floating-point labels in the range [1,9] are equally divided into 8 parts to obtain 8 categories. The characters '1', '2', '3', '4', '5', '6', '7', '8' represent the eight intervals [1,2], (2,3], (3,4], (4,5], (5,6], (6,7], (7,8], (8,9] respectively. For the DREAMER dataset, the 5 categories provided by the dataset are directly used. The characters '1', '2', '3', '4', '5' represent the integer labels 1, 2, 3, 4, 5, respectively. Tables 2 and 3 show the sample size of each category for binary classification and multi-class classification in the DEAP and the DREAMER datasets.

We use macro average of accuracy, precision, specificity, recall, and F1-score due to the imbalanced sample distribution to evaluate the model's performance. The formulas of accuracy, precision, specificity, recall and F1-score with parameters of true positives (TP), false positives (FP), true negatives (TN), false negatives (FN) are as follows:

Subject-dependent experiments

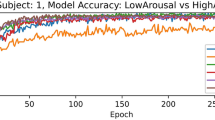

Two types of subject-dependent experiments were conducted: binary classification and multi-classification. Table 4 lists the average experimental outcomes of TPRO-NET on the DEAP and the DREAMER datasets. The binary classification results of TPRO-NET on the DEAP and the DREAMER datasets for each subject are shown in Figs. 5 and 6, respectively, by the blue lines. Similarly, the multiple classification results of TPRO-NET on the DEAP and the DREAMER datasets for each subject are shown in Figs. 7 and 8, respectively, by the blue lines. The confusion matrices presented in Figs. 9, 10, 11, and 12 demonstrate that TPRO-NET has exceptional discrimination capabilities for each category in both two-class and multi-class classifications on the DEAP and the DREAMER datasets.

The experimental results demonstrate that TPRO-NET effectively matches the characteristics of EEG signals to accomplish both multi-classification tasks that reflect subtle changes in emotion and conventional binary classification tasks.

Influence of different types of features on the results

In addition to DE features, we also use a nonlinear transformation as shown in Equation 4 to enhance DE features for better results. To demonstrate the effectiveness of the enhanced DE features, we conducted subject-dependent experiments using the DE features and the enhanced DE features, respectively. In the relevant tables of the experimental results, the expressions"Feature_1" and "Feature_2" represent the DE feature and the enhanced DE feature, respectively.

The results are shown in Table 5, as well as the orange and green lines of Figs. 5, 6, 7, and 8, indicating that there is no significant performance gap between the DE features and the enhanced DE features, and that there is a complementary relationship between them. By comparing the experimental results in Section "Subject-dependent experiments", using both DE features and enhanced DE features can improve the performance of TPRO-NET in most cases.

Ablation study

In order to improve efficiency while maintaining similar performance to transformer encoders, we have made improvements to transformer encoders. To verify the effectiveness of the improved transformer encoder, we conducted ablation experiments. The ablation experiment was conducted using the original transformer encoder instead of the improved transformer encoder. In the tables related to the experimental results, "Original_Transformer" is used to represent the original transformer encoder40.

The results of subject-dependent experiments are shown in the red line of Figs. 5, 6, 7, and 8, as well as Table 6. These results demonstrate that the improved transformer encoder achieves performance comparable to the original transformer encoder and even surpasses it in certain cases. Additionally, we measured the model parameters (Params) and Floating Point Operations (FLOPs) of the original transformer encoder and the improved transformer encoder on TPRO-NET to compare efficiency. When utilizing the original transformer encoder, TPRO-NET has Params of 1.20M and FLOPs of 9.90M. However, when utilizing the improved transformer encoder, TPRO-NET has Params of 1.10M and FLOPs of 9.11M. The results indicate that the improved transformer encoder can enhance computational efficiency by reducing Params and FLOPs by 8.33% and 7.98%.

Experiments across all dimensions

Compared to running the algorithm separately in three dimensions to obtain emotions, running the algorithm once can significantly enhance practicality. To obtain the labels, a binary classification approach (Section 5.1) is used to divide the Valence, Arousal, and Dominance dimensions into high and low categories. The VA model uses two dimensions, Valence and Arousal, to create four labels by combining high and low labels from each dimension. Similarly, the VAD model employs three dimensions of Valence, Arousal, and Dominance to generate eight different labels by combining the high and low labels of different dimensions.

The experimental results of the subject-dependent experiment and the comparison with other advanced methods are shown in Table 7. The symbol '-' signifies that the pertinent data is not reported in the references. The experimental results show that TPRO-NET performs better than the existing advanced methods.

Model efficiency

The Params and FLOPs are used to measure the model efficiency. After the measurement, TPRO-NET has FLOPs of 9.11M and Params of 1.10M. Table 8 shows the results of the comparison with other advanced models in FLOPs and Params. Although the Params in TPRO-NET is not the smallest, the number of FLOPs is the smallest, which can significantly improve efficiency.

Comparison with other advanced peer methods

We compare our method with other advanced methods using the accuracy and standard deviation of the three dimensions of Valence, Arousal, and Dominance. When conducting binary classification, TPRO-NET outperforms other advanced methods. Furthermore, TPRO-NET exhibits strong classification ability in multi-classification scenarios. Table 9 shows the comparison results of advanced algorithms on the DEAP dataset and the DREAMER datasets.

Discussion

In this section, we first summarize the experiments and then discuss some details of the experiment.

The experimental results in Section "Experiments results and analysis" lead to three conclusions:

-

Firstly, using an improved transformer encoder can achieve similar or higher performance with higher efficiency compared to the original transformer encoder.

-

Secondly, the simultaneous use of enhancing DE features and DE features is complementary and can improve the performance of TPRO-NET.

-

Finally, TPRO-NET can recognize more emotions compared to the current advanced methods, reflecting subtle changes in emotion.

Based on these conclusions, TPRO-NET has potential for practical application.

Validation of emotion recognition algorithms based on EEG signals can be subject-dependent or subject-independent. Our article uses the former to verify the effectiveness of TPRO-NET. However, for the latter, when using known emotional data from subjects to predict the emotions of unknown subjects, TPRO-NET performed commonly. We speculate different individuals have different feelings towards the same induced material, which leads to different emotions. TPRO-NET is currently difficult to infer the emotions of unknown individuals using the emotional data of known individuals.

In Eq. 4, we use a simple logarithmic transformation to enhance the differential entropy feature. The results of the experiments in Section "Influence of different types of features on the results" demonstrate that utilizing both features concurrently can enhance performance. Moreover, we utilized the exponential function with a base of e and the linear transformation of 1-M to improve the differential entropy feature. The former result in a decrease in TPRO-NET's performance, while the latter enhance performance but also has the potential to cause training crashes at unpredictable times. Although the use of simple transformations may decrease the computational efficiency of the algorithm, the performance of the algorithm is constantly improving with the development of deep neural networks. It is worth studying how to improve performance by using simple linear and nonlinear transformations. As the reasonable utilization of these transformations is a complex topic, further research will be conducted in the future.

Conclusion

In this article, we propose an EEG signal-based emotion recognition algorithm, TPRO-NET, which uses differential entropy features and enhanced differential entropy features as inputs and obtains the final emotion category through convolutional layers and improved transformer encoders. Experiments on the DEAP and the DREAMER datasets demonstrate that TPRO-NET achieves state-of-the-art results in completing complex multiple emotion classification tasks, distinguishing subtle emotional changes. In addition, the experiments demonstrate the effectiveness of the differential entropy feature enhancement method and the improved transformer encoder for complex sentiment classification tasks. We plan to investigate the issue of TPRO-NET's generally average performance in subject-independent experiments, the impact of simple transformations on existing features and the practical application of TPRO-NET.

Data availability

The DEAP dataset and the DREAMER dataset are both publicly available. They can be accessed from https://www.eecs.qmul.ac.uk/mmv/datasets/deap/ and https://zenodo.org/records/546113, respectively.

Code availability

To enhance academic mutual assistance and benefit, the source code of this work can be shared with the requester for non-commercial, pure scientific research upon reliable inquiry.

References

Li, J. et al. Cross-subject EEG emotion recognition combined with connectivity features and meta-transfer learning. Comput. Biol. Med. 145, 105519 (2022).

Cheng, W. X. et al. EEG-based emotion recognition using random Convolutional Neural Networks. Eng. Appl. Artific. Intell. 116, 105349 (2022).

Bian, Y. et al. Understanding naturalistic facial expressions with deep learning and multimodal large language models. Sensors 24(1), 126 (2014).

Huang, H. et al. An EEG-based brain computer interface for emotion recognition and its application in patients with disorder of consciousness. IEEE Trans. Affect. Comput. 12(4), 832–842 (2019).

Hämäläinen, M. et al. Magnetoencephalography—theory, instrumentation, and applications to noninvasive studies of the working human brain. Rev. Modern Phys. 65(2), 413 (1993).

Liu, S. et al. 3DCANN: A spatio-temporal convolution attention neural network for EEG emotion recognition. IEEE J. Biomed. Health Inf. 26(11), 5321–5331 (2021).

Wei, Y. et al. TC-Net: A transformer capsule network for EEG-based emotion recognition. Comput. Biol. Med. 152, 106463 (2023).

Batty, M. & Taylor, M. J. Early processing of the six basic facial emotional expressions. Cogn. Brain Res. 17(3), 613–620 (2003).

Verma, G. K. & Tiwary, U. S. Affect representation and recognition in 3D continuous valence–arousal–dominance space. Multimed. Tools Appl. 76, 2159–2183 (2017).

Liu, Y. & Sourina, O. Real-time fractal-based valence level recognition from EEG. Transactions on computational science XVIII: Special issue on cyberworlds (Springer, Berlin, 2013).

Petrantonakis, P. C. & Hadjileontiadis, L. J. Emotion recognition from EEG using higher order crossings. IEEE Trans. Inf. Technol. Biomed. 14(2), 186–197 (2009).

Zhang, X. et al. Emotion recognition from multimodal physiological signals using a regularized deep fusion of kernel machine. IEEE Trans. Cybern. 51(9), 4386–4399 (2020).

Duan, R. N., et al. Differential entropy feature for EEG-based emotion classification. In 2013 6th International IEEE/EMBS Conference on Neural Engineering (NER). IEEE (2013).

Gao, Y. et al. EEG emotion recognition based on enhanced SPD matrix and manifold dimensionality reduction. Comput. Biol. Med. 146, 105606 (2022).

Zheng, W. L. & Lu, B. L. Investigating critical frequency bands and channels for EEG-based emotion recognition with deep neural networks. IEEE Trans. Auton. Mental. Dev. 7(3), 162–175 (2015).

Sbargoud, F. et al. WPT-ANN and belief theory based EEG/EMG data fusion for movement identification. Traitement du Signal 36(5), 383–391 (2019).

Li, C. et al. EEG-based emotion recognition via transformer neural architecture search. IEEE Trans. Ind. Inf. 19(4), 6016–6025 (2022).

Xu, Y. et al. AMDET: Attention based multiple dimensions EEG transformer for emotion recognition. IEEE Trans. Affect. Comput. https://doi.org/10.1109/TAFFC.2023.3318321 (2023).

Gong, L. et al. EEG emotion recognition using attention-based convolutional transformer neural network. Biomed. Signal Process. Control 84, 104835 (2023).

Lin, X. et al. EEG emotion recognition using improved graph neural network with channel selection. Comput. Methods Programs Biomed. 231, 107380 (2023).

Yin, Y. et al. EEG emotion recognition using fusion model of graph convolutional neural networks and LSTM. Appl. Soft Comput. 100, 106954 (2021).

Li, C. et al. EEG-based emotion recognition via efficient convolutional neural network and contrastive learning. IEEE Sensors Journal 22(20), 19608–19619 (2022).

Song, T. et al. EEG emotion recognition using dynamical graph convolutional neural networks. IEEE Trans. Affect. Comput. 11(3), 532–541 (2018).

Zhong, M. et al. EEG emotion recognition based on TQWT-features and hybrid convolutional recurrent neural network. Biomed. Signal Process. Control 79, 104211 (2023).

Guo, W. et al. Multi-source domain adaptation with Spatio-temporal feature extractor for EEG emotion recognition. Biomed. Signal Process. Control 84, 104998 (2023).

Sun, Y., et al. Retentive network: A successor to transformer for large language models (2023). http://arxiv.org/abs/2307.08621v1.

Ren, H. et al. Combiner: Full attention transformer with sparse computation cost. Adv. Neural Inf. Process. Syst. 34, 22470–22482 (2021).

Choo, S. et al. Effectiveness of multi-task deep learning framework for EEG-based emotion and context recognition. Expert Syst. Appl. 227, 120348 (2023).

Liu, S. et al. EEG emotion recognition based on the attention mechanism and pre-trained convolution capsule network. Knowl-Based Syst. 265, 110372 (2023).

Lin, K. et al. DSE-Mixer: A pure multilayer perceptron network for emotion recognition from EEG feature maps. J. Neurosci. Methods 401, 110008 (2024).

Tang, Y. et al. STILN: A novel spatial-temporal information learning network for EEG-based emotion recognition. Biomed. Signal Process. Control 85, 104999 (2023).

Guo, W. et al. Horizontal and vertical features fusion network based on different brain regions for emotion recognition. Knowl-Based Syst. 247, 108819 (2022).

Yan, Y., et al. Topological EEG nonlinear dynamics analysis for emotion recognition. IEEE Trans. Cogn. Dev. Syst. (2022).

Liu, J., et al. CR-GAT: Consistency regularization enhanced graph attention network for semi-supervised EEG emotion recognition. In 2022 IEEE International Conference on Bioinformatics and Biomedicine (BIBM). IEEE (2022).

Koelstra, S. et al. Deap: A database for emotion analysis; using physiological signals. IEEE Trans. Affect. Comput. 3(1), 18–31 (2011).

Katsigiannis, S. & Ramzan, N. DREAMER: A database for emotion recognition through EEG and ECG signals from wireless low-cost off-the-shelf devices. IEEE J. Biomed. Health Inf. 22(1), 98–107 (2017).

Mert, A. & Akan, A. Emotion recognition based on time–frequency distribution of EEG signals using multivariate synchrosqueezing transform. Digit Signal Process. 81, 106–115 (2018).

Aftanas, L. I. et al. Analysis of evoked EEG synchronization and desynchronization in conditions of emotional activation in humans: temporal and topographic characteristics. Neurosci. Behav. Physiol. 34, 859–867 (2004).

Liu, S. et al. GLFANet: A global to local feature aggregation network for EEG emotion recognition. Biomed. Signal Process. Control 85, 104799 (2023).

Ashish, V. et al. Attention is all you need. Adv. Neural Inf. Process. Syst. 30, 6000 (2017).

Vempati, R. & Sharma, L. D. EEG rhythm based emotion recognition using multivariate decomposition and ensemble machine learning classifier. J. Neurosci. Methods 393, 109879 (2023).

Rao, W. & Zhong, S. H. EEG model compression by network pruning for emotion recognition. In 2023 International Joint Conference on Neural Networks (IJCNN). IEEE (2023).

Li, W. et al. Fractal spiking neural network scheme for EEG-based emotion recognition. IEEE J. Trans. Eng. Health Med. 12, 106 (2024).

Li, C. et al. Emotion recognition from EEG based on multi-task learning with capsule network and attention mechanism. Comput. Biol. Med. 143, 105303 (2022).

Li, C. et al. EEG-based emotion recognition via neural architecture search. IEEE Trans. Affect. Comput. 19, 6016–6025 (2021).

Funding

Open Access funding enabled and organized by Projekt DEAL. The APC was funded by the Open Access Initiative of the University of Bremen and the DFG via SuUB Bremen. This research was also supported by National Key R&D Program of China (2023YFC3604804) and Key R& D Program of Jiangsu Province (BE2022064-2).

Author information

Authors and Affiliations

Contributions

X.Z.: Conceptualization, Methodology, Formal analysis, Writing—Original, Visualization. Draft. X.C.: Supervision, Funding acquisition. H.L.: Writing—Review & Editing, Funding acquisition.

Corresponding authors

Ethics declarations

Competing interests

The authors declare no competing interests.

Additional information

Publisher's note

Springer Nature remains neutral with regard to jurisdictional claims in published maps and institutional affiliations.

Rights and permissions

Open Access This article is licensed under a Creative Commons Attribution 4.0 International License, which permits use, sharing, adaptation, distribution and reproduction in any medium or format, as long as you give appropriate credit to the original author(s) and the source, provide a link to the Creative Commons licence, and indicate if changes were made. The images or other third party material in this article are included in the article's Creative Commons licence, unless indicated otherwise in a credit line to the material. If material is not included in the article's Creative Commons licence and your intended use is not permitted by statutory regulation or exceeds the permitted use, you will need to obtain permission directly from the copyright holder. To view a copy of this licence, visit http://creativecommons.org/licenses/by/4.0/.

About this article

Cite this article

Zhang, X., Cheng, X. & Liu, H. TPRO-NET: an EEG-based emotion recognition method reflecting subtle changes in emotion. Sci Rep 14, 13491 (2024). https://doi.org/10.1038/s41598-024-62990-4

Received:

Accepted:

Published:

Version of record:

DOI: https://doi.org/10.1038/s41598-024-62990-4

Keywords

This article is cited by

-

Video-dominant emotion recognition for portable EEG-based devices

Scientific Reports (2026)

-

A deep learning approach to emotionally intelligent AI for improved learning outcomes

Scientific Reports (2026)

-

A multi-task EEG emotion recognition method based on emotion-dimension coupling constraints

Scientific Reports (2026)

-

Analysis of the generalization ability of graph neural networks in cross-subject EEG emotion recognition

Neurological Sciences (2026)

-

EEG-EmotionActivityNet: a deep learning approach for emotion and human activity classification

International Journal of Information Technology (2025)