Abstract

Human-Computer Interaction (HCI) is a multidisciplinary field focused on designing and utilizing computer technology, underlining the interaction interface between computers and humans. HCI aims to generate systems that allow consumers to relate to computers effectively, efficiently, and pleasantly. Multiple Spoken Language Identification (SLI) for HCI (MSLI for HCI) denotes the ability of a computer system to recognize and distinguish various spoken languages to enable more complete and handy interactions among consumers and technology. SLI utilizing deep learning (DL) involves using artificial neural networks (ANNs), a subset of DL models, to automatically detect and recognize the language spoken in an audio signal. DL techniques, particularly neural networks (NNs), have succeeded in various pattern detection tasks, including speech and language processing. This paper develops a novel Coot Optimizer Algorithm with a DL-Driven Multiple SLI and Detection (COADL-MSLID) technique for HCI applications. The COADL-MSLID approach aims to detect multiple spoken languages from the input audio regardless of gender, speaking style, and age. In the COADL-MSLID technique, the audio files are transformed into spectrogram images as a primary step. Besides, the COADL-MSLID technique employs the SqueezeNet model to produce feature vectors, and the COA is applied to the hyperparameter range of the SqueezeNet method. The COADL-MSLID technique exploits the SLID process’s convolutional autoencoder (CAE) model. To underline the importance of the COADL-MSLID technique, a series of experiments were conducted on the benchmark dataset. The experimentation validation of the COADL-MSLID technique exhibits a greater accuracy result of 98.33% over other techniques.

Similar content being viewed by others

Introduction

The information in the HCI is performed in two ways: through images and audio. For instance, users interact with graphical user interfaces (GUIs) through visual elements such as icons and menus. At the same time, voice-activated assistants like Siri and Alexa employ audio input for user commands and responses. The user implements numerous tasks simultaneously1. When multiple tasks require simultaneous user resources, it can result in interference that improves psychological pressure. For instance, an interface depending on natural language (refers to human language used for communication) to retrieve data from a database can ease this strain by allowing users to input data verbally. Subsequently, it presents the processed data through a visual interface, effectively reducing cognitive load and enhancing user experience2. This method permits more interaction between the user and the computer. The challenges in allowing these regular communications frequently resulted in these ways being considered, at best, error-prone substitutes to “traditional” input or output methods3. However, this should lead to continuing speech interaction (communication with devices using spoken language), as people increasingly find themselves in situations where hands- and eyes-free interaction with computational devices is necessary. Additionally, accomplishing highly accurate speech processing, which involves analyzing and interpreting spoken language, is a great objective that will often be nothing small of a fairy tale4. There is essential research showing that correct interaction strategy can match speech processing in a manner that rewards for its lesser than precise accuracy. In several tasks where users communicate with spoken data, verbatim speech transcription will only sometimes be relevant5. SLI is the method of recognition of the language spoken in an utterance. Automated language recognition is the difficulty of detecting the language spoken from an instance of speech by a speaker. According to speech identification, humans have primary accurate language identification (LID) methodologies6.

Numerous applications of SLI include making the front ends for multi-language speech recognition methods, automatic customer routing in web data retrieval, monitoring, and call centres. The SLID model has three essential processes: language classification, feature removal, and data collection. Various techniques have been developed to resolve the issues of automated LID using the acoustic phonetics method7. Reinforcement in the DL domain has permitted research workers to employ Generative Adversarial Networks (GANs) for LID strength (are DL models for realistic data generation, while LID comprises determining the spoken language from audio samples) in semi-supervised and unsupervised tasks. Support vector machine (SVM) methods need to be performed better under short statements, providing decreased accuracy. Traditional recognition systems are maintained in i-vector systems, which is a probabilistic speaker representation technique for SLI tasks to be ineffective8. DL and ANN have recently become the best in class for pattern recognition problems. Numerous computer vision (CV) tasks, such as Image Classification, demonstrate improved performance employing Deep NNs (DNNs). SLI could be represented as the task of SLI in some specified utterance. While exploring advances in ASR, an LID method must be significant for some multilingual speech recognition techniques. When there is multilingual speech recognition, the precision of the speech recognizer model will be increased by employing the LID method at the front end9. It minimizes complexity by directly processing speech using the recognized language instead of executing speech in numerous languages. The motivation stems from detecting gaps or challenges in existing knowledge or practices, driving studies to seek novel solutions or enhancement. This comprises addressing practical problems, enhancing efficiency or effectiveness in a specific field, contributing to theoretical frameworks, or improving understanding in growing areas. Motivation often includes the desire to make meaningful impacts, solve real-world problems, or explore novel concepts that can lead to practical applications or theoretical enhancements in the chosen discipline10.

This paper develops a novel Coot Optimizer Algorithm with a DL-Driven Multiple SLI and Detection (COADL-MSLID) technique for HCI applications. The COADL-MSLID approach aims to detect multiple spoken languages from the input audio regardless of gender, speaking style, and age. In the COADL-MSLID technique, the audio files are transformed into spectrogram images as a primary step. Besides, the COADL-MSLID technique employs the SqueezeNet model to produce feature vectors, and the COA is applied to the hyperparameter range of the SqueezeNet method. The COADL-MSLID technique exploits the SLID process’s convolutional autoencoder (CAE) model. To underline the importance of the COADL-MSLID technique, a series of experiments were conducted on the benchmark dataset. The novel contributions of the COADL-MSLID technique are as follows:

-

The COADL-MSLID approach converts audio into spectrogram images, which enables the capture of intricate time-frequency representations needed for robust language detection. This transformation accommodates several speech characteristics, enhancing the ability of the model to discriminate subtle linguistic changes across several speakers and environmental conditions. By employing spectrogram images, the approach efficiently preprocesses audio data to extract informative features that enhance the accuracy and reliability of language detection tasks.

-

The utilization of SqueezeNet for feature extraction enables the creation of compact and discriminative feature vectors, enhancing computational efficiency and conserving significant linguistic attributes across various input scenarios. This approach streamlines complex data processing, ensuring the model’s effectiveness captures significant features essential for accurate analysis and classification of spoken languages. By utilizing SqueezeNet, the study improves the speed and accuracy of language feature extraction, which is crucial for robust performance in diverse linguistic environments.

-

Implementing a CAE model improves MSLID by employing unsupervised learning to learn hierarchical language features, enhancing generalization across languages and speaker characteristics. This method strengthens the approach’s capability to discriminate and classify spoken languages precisely, adapting dynamically to various linguistic discrepancies and speaker profiles. Using CAE, the COADL-MSLID method improves the robustness and reliability of MSLID models, achieving superior performance in real-world language recognition tasks.

-

The COADL-MSLID model presents a novel approach to optimize the SqueezeNet method for language identification tasks by harnessing the COA technique for hyperparameter tuning. COA innovatively adapts the method’s parameters to achieve peak performance among various linguistic and acoustic environments, outperforming conventional tuning techniques. This novel methodology improves the adaptability and accuracy of the model, ensuring robust performance in detecting spoken languages across a wide range of conditions and scenarios.

Literature works

Exarchos et al.11 introduced an innovative technique by integrating 3D-CNNs and LSTM networks to undertake the complex word identification process from lip actions. The introduced method leverages an accurately indexed dataset called MobLip, which includes ecological conditions, speakers, and numerous speech patterns. In12, a dataset and CNN technique-based sign language interface model was designed to interpret gestures of sign language and hand position to natural language. The NN was created with the CNN method, which improves the predictableness of the American Sign Language alphabet (ASLA). Islam et al.13 introduced a solution that can be a stacked encoded system, incorporating AI with the IoT that improves feature extraction and classification for overcoming such problems. The system leverages a lightweight backbone system for the primary feature extractor and utilizes a stacked autoencoder (SAE) to enhance such features further. The introduced method connects the scalability of big data. In14, three stages of a CNN method-based Qur’anic sign LID approach were developed. Images have been trained for dynamic and static gesture identification training at the primary stage. At the secondary stage, making images expands databases. During the last stage, the CNN-based DL method was utilized to extract and categorize the features.

Yirtici and Yurtkan15 introduced an innovative technique for identifying Turkish Sign Language (TSL) types. The method was examined using captured images comprising signs that must be removed from video data. In this method, Alexnet was deployed as a pre-trained network. An R-CNN object detector was employed to train the new system. The knowledge of the NN was forwarded by using the transfer learning (TL) model and tuned to identify TSL. In16, an automatic method was developed, proficient in recognizing particular Bangla Sign Language (BdSL) words in overcoming the critical gap in the analysis of sign language recognition and detection. The introduced method leverages DL techniques and TL values to produce the textual representations in real-time through webcam-captured hand gestures. Bansal et al.17 projected an automatic hashtag recommendation model termed TAGALOG. The technique implements language- and user-guided attention mechanisms for extracting significant features at lower-resource tweets by the user’s contemporary and language preferences. A graph-based NN was also developed to extract the posting behaviour of users by relating previous tweets of specific clients and language relationships by connecting tweets.

Das et al.18 presented a hybrid method containing a deep-TL (DTL)-based CNN (DL-CNN) technique with an RF method for the automated identification of BdSL (alphabets and numeric values). Also, this developed technique suggested a background removal method, which could remove undesired features in the sign images. Alshehri et al.19 introduce a model by combining DL with the Hopfield Recurrent NN-Grasshopper Optimization Algorithm (HRNN-GOA) for sequential learning of digit recognition. Traditional Haralick features are also extracted to complement texture-based data from input images. Subramanian and Aruchamy20 present a model to classify diverse speech emotions. Initially, preprocessing is performed to remove noise. The model also incorporates speech attributes depending on energy and phase with existing features to evaluate emotional behaviours. Furthermore, statistical techniques utilize a threshold-based feature selection (TFS) model to optimize feature selection. Aslam, Abid, and Munir21 developed a method employing DL and BERT frameworks. The NLP model serves as a mechanism to generate frequent and precise responses. Al Khuzayem et al.22 proposes a mobile application. The prototype is an Android-based mobile application that utilizes DL models for translating isolated SSL to text and audio and comprises unique features not available in other associated applications targeting ArSL.

The existing studies present novel approaches and models with specific limitations such as combining 3D-CNNs and LSTM for word detection from lip actions, a CNN-based sign language interface model facing difficulties in detecting subtle gesture discrepancies, stacked encoded systems for AI and IoT facing complexity in real-time feature extraction, a CNN-based Qur’anic sign LID model lacking scalability to other sign languages, detection of TSL constrained by dataset diversity, automatic BdSL word detection potentially missing gesture variability, TAGALOG for hashtag recommendation lacking adaptability to various social media trends, DTL-CNN and RF for BdSL facing challenges in handling linguistic complexity, DL with HRNN-GOA for digit recognition restricted by computational resources, speech emotion classification potentially missing cultural complexities, DL and BERT techniques restricted by dataset quality, and a proposed mobile app facing threats in user adoption and technology integration. The research gap is in effectively addressing the variability and complexity intrinsic in real-world applications of language and gesture detection systems, specifically in diverse and dynamic environments.

The proposed method

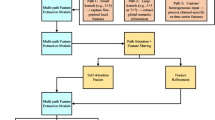

This paper presents an innovative COADL-MSLID approach for HCI applications. The COADL-MSLID approach aims to detect multiple spoken languages from the input audio regardless of gender, speaking style, and age. The COADL-MSLID technique uses different procedures, such as SqueezeNet-based feature extraction, COA-based hyperparameter tuning, and CAE-based classification process. Figure 1 depicts the complete workflow of the presented COADL-MSLID technique.

SqueezeNet model

The COADL-MSLID technique employs the SqueezeNet model to produce feature vectors23. As a lightweight convolutional NN (CNN), SqueezeNet was modernized on the foundation of AlexNet. SqueezeNet is a compact CNN approach designed to achieve high performance with fewer parameters, making it efficient for applications with limited computational resources. Its key innovation is utilizing fire modules, substantially reducing model size while maintaining accuracy in image classification tasks. Its capability to balance computational efficiency with competitive performance justifies the technique’s suitability over others. It is ideal for scenarios where computational resources are constrained, or rapid inference is significant, such as embedded systems or real-time applications. Figure 2 illustrates the architecture of the SqueezeNet model.

A technique with fewer parameters has been constructed to certify similar accuracy. Fire Module was presented as the simple network unit, and then the feature map was compacted and merged in size, creating the model parameters compacted well.

Simultaneously, the network model is robust. The basic building block of the SqueezeNet network is a modularized convolutional system recognized as the fire module. The Fire module mainly contains dual layers of convolutional processes. The squeeze is the first layer using a 1 × 1 convolution kernel. The expand is the second layer, which integrates the usage of 1 × 1 and 3 × 3 convolutional kernels. The Fire module is an essential module of the SqueezeNet structure, intended to remove valuable features from input data well. It contains a mixture of squeeze and expands processes designed to decrease the number of input networks and then increase the backbone to take more complex patterns. The squeezing process uses 1 × 1 convolutional to an input, efficiently decreasing the channel numbers. Then, the expanding process contains 1 × 1 and 3 × 3 convolutional that upsurge the channel numbers, permitting more complete and discriminative features. The SqueezeNet structure integrates complex bypass links to simplify data flow over the system. These connections bypass manifold layers, allowing the straight transmission of data across dissimilar stages of generalization. The complex bypass connections are expressed below: Assume that X is the input feature map, and F() is the transformation functional to X. The connection of bypass is conveyed as:

Here, Y signifies the output, and + represents element-wise addition. This formula certifies that the original input features are joined with the altered feature, maintaining significant data while allowing for the combination of more difficult representations. By employing the Fire Module and inserting complex bypass connections, the SqueezeNet structure can effectively feature extraction.

Hyperparameter tuning using COA

At this step, the COA is applied to the hyperparameter range of the SqueezeNet model. The most commonly identified meta-heuristic optimizer algorithm is called the COOT method24. Figure 3 shows the steps involved in COA.

Steps involved in COA

The COA technique begins by initializing a population of potential solutions, which are later computed based on a specified fitness function (FF) to determine their efficiency in solving the optimization problem. Through iterative processes inspired by the behaviours of coots, COA explores and refines these solutions, balancing between exploration to discover various areas of the solution space and exploitation to concentrate on promising regions for optimal outcomes. This iterative refinement continues until a stopping criterion is met, such as achieving a satisfactory level of solution quality or attaining a maximum number of iterations. The objective of the COA model is to effectively navigate intrinsic optimization landscapes, giving a robust performance compared to conventional algorithms by employing insights from natural behaviours.

Also, the selection of the COA model over other techniques is due to the method’s efficiency in effectively exploring and exploiting the search space with inspiration from natural behaviours, which can lead to finding global optima efficiently. The iterative enhancement process of the COA and adaptive mechanisms make it robust against getting trapped in local optima, giving a competitive performance in optimization tasks related to conventional methodologies such as genetic algorithms or gradient-based methods. Also, metaphorical descriptions of coot bird behaviour in COA improve solution diversity and global search capabilities, yet empirical validation is significant for performance metrics, namely convergence speed and scalability. This makes COA particularly appropriate for complex optimization problems where conventional methods may struggle to converge or require extensive computational resources.

Hyperparameter tuning

This model imitates the movement and behaviour of coot birds on the water surface in search of foodstuff or a place. Generally, coots travel at an incline near the way of effort and arise with comfort from sea scoters and an area of conflict. The coot group action contains three movements: random action, chain action, and coordinated movement. The coot birds concentrate on the food; some work as leaders by stepping in front of the cluster. The four dissimilar performances of coots on the aquatic are given as follows.

-

Random movement.

-

Chain movement.

-

Altering the location by following the group leader.

-

The leader must guide the group to the best position.

In all meta-heuristic optimizer models, the procedure starts with the stochastic population. Equation (2) is employed to create an early random population.

Here, \(CootPos \left(j\right)\) specifies the location of the coot, \(d\) denotes the dimension, \({x}_{\text{m}\text{i}\text{n}}\) is the minimum, and \({X}_{\text{m}\text{a}\text{x}}\) represents the maximum of the search space.

By monitoring growth, every coot’s fitness value is defined, and then NLC group leaders are nominated randomly. The following are descriptions of fourcoots’ actions on the water.

(1) Random movement

This movement originated utilizing Eq. (4) in the search area and transports the effort randomly.

Coot examines the search area in numerous positions. Such movement will permit the optimizer model to evade the local minima when it gets stuck. The Eq. (5) is employed to define the coot’s novel location.

Here, \({R}_{2}\) belongs to \(\left[\text{0,1}\right],\)\({and A}_{1}\) is estimated by Eq. (6).

On the other hand, \(t\) and \(T\) denote the present and total iteration count.

(2) Chain movement

As per the current research, the average position is concluded through chain movement. They were employed to upgrade the coot’s location, which was completed utilizing Eq. (7).

\(CootPos\left(j\right)\) is \({j}^{th}\) the coot location, and \(CootPos\left(j-1\right)\) is the previous coot of \(CootPos\left(j\right)\).

(3) Changing the position following the group leaders

Equation (8) is employed to upgrade the coot’s movement through this stage.

Whereas \(j\) denotes the index amount of the existing coot, \(NLC\) is the leader coot count, and \(K\)indicates the index. During this movement, the \({i}^{th}\) coot must upgrade its place, dependent upon the foremost coot, \(K\). The upgraded form is given below:

Here, \(CootPos \left(j\right)\) designates the existing location of the coot, \(LCP\left(k\right)\) is the chosen leader, \({R}_{1} \in \left[{0,1}\right],\pi =3.14\), and \(R\in \left[-{1,1}\right].\)

(4) The leaders should direct the group to the perfect location

To create a group of coots to travel near the best location, the leader coots must upgrade their place near the target. Equation (10) is used to attain the last objective of upgrading the leader coot locations.

Whereas \(Gbest\) denotes the finest location of the coot, \({R}_{3}\) and \({R}_{4}\) are random numbers among \(\left[{0,1}\right],\)\(\pi =3.14,\)\(R\) is in the domain1, and \({B}_{1}\) is defined by employing Eq. (11).

Here, \(T\) and \(t\) denote the total and current iterations count.

The COA model originates an FF to conquer upgraded classifier solutions. It decides a positive number to indicate the greater accomplishment of the candidate’s result. This paper measures the classifier error rate minimization as FF is assumed in Eq. (12).

CAE-based classification process

For the SLID process, the COADL-MSLID technique exploits the CAE model25. This model was chosen over other approaches for its efficiency in capturing spatial dependencies and hierarchical patterns inherent in data. Unlike conventional autoencoders, CAEs utilize convolutional layers appropriate for handling complex data structures, namely images, where local correlations between pixels are significant. This architecture allows the CAE to effectively learn meaningful representations by exploiting the spatial locality of features.

In the context of various language characteristics, CAEs can be adapted to process textual data by treating text as sequences of characters or words and applying convolutional operations across these sequences to capture syntactic and semantic patterns effectively. This ability makes CAEs adaptable for text generation, sentiment analysis, or machine translation tasks. Moreover, CAEs portray robust noise resilience in audio data processing. CAEs can denoise signals and extract relevant features despite background noise or distortions by encoding raw audio spectrograms or waveforms into latent representations. This capability is substantial for speech recognition, audio classification, and enhancement applications.

Furthermore, CAEs exhibit scalability to real-world HCI applications by effectually processing and analyzing visual, textual, or auditory inputs. This scalability enables CAEs to support several HCI tasks, such as gesture recognition from video streams, natural language understanding in virtual assistants, or emotion detection from speech signals. Thus, the CAE’s ability to handle several data types, resilience to noise, and scalability make it a powerful option for applications demanding robust feature extraction and representation learning.

AE is a self-supervised learning system comprising a decoder and an encoder for extracting deep features. This includes NNs. The AE is an ANN that contains a successively linked 3-layer planning, namely the hidden layer (HL), input, and output layer, where entire layers function in an unsupervised learning model. The AE is frequently employed for data groups or as a generative method, where its training process contains dual stages: first is encoding, where the input data are mapped into the HL, whereas second is decoding, where the input data are rebuilt from the HL. In encoding, the model absorbs a compacted representation or hidden variables of the input. Meanwhile, in decoding, the method rebuilds the objective from the compacted representation throughout the encoding phase. Figure 4 demonstrates the infrastructure of CAE.

Set an unlabelled input database \({X}_{n}\), whereas \(n={1,2},\dots ,N\) and \({x}_{n}\in {R}^{m}\), the dual stages can be expressed as below:

Meanwhile, \(h\left(x\right)\) signifies the encoder vector intended from input vector \(x\), and X refers to the decoder. Furthermore, \(g\) represents the decoding function, \(f\) denotes the encoding function, \({W}_{1}\),\({ W}_{2}\), and \({b}_{1}, and {b}_{2}\)refers to the encoder and decoder’s weight matrix and bias vector. The dissimilarity between the output and input is generally called reconstruction error. This technique attempts to decrease it during training, e.g., to diminish \(\left|\right|x-\widehat{x}|{|}^{2}\). Stacking many \(AE\) layers is probable such that beneficial higher-level features are attained, with few abilities like invariance and abstraction. A low error reconstruction will be achieved, so superior simplification is estimated.

CAE is a category of AE that integrates Conv kernels with NNs26. 1D CAE has robust reconstruction capability and efficiently extracts deep features in the higher-dimension data.

Convolutional layer: In a 1D input variable \(X\in {R}^{L}\), the 1D Conv layer employs \(K\) convolutional kernels \({\omega }_{i}\in\)\({R}^{w}(i={1,2}, \dots , K)\) of width \(w\) to execute Conv functions on it, given in Eq. (15).

Now,\(b\) denotes the bias, \(\odot\) indicates the Conv computation of the convolution kernel and input variable\(, and f\) describes the activation function.

Pooling layer: In 1D input variable \(T\in {R}^{K\odot L}\), the wide-ranging usage of\(\text{m}\text{a}\text{x}\)pooling leads to Eq. (16) next to the pooling method.

whereas\(S\) refers to the stride, \(W\) describes the pooling window width, and \({T}_{i}\) denotes the \(i‐th\) feature tensor.

Result analysis and discussion

In this section, the SLID outcomes of the COADL-MSLID methodology are studied under the Kaggle dataset27. It has 300 samples with three classes, as described in Table 1.

Figure 5 displays the confusion matrices achieved by the COADL-MSLID approach at 80:20 and 70:30 of TRAPH/TESPH. These results denote that the COADL-MSLID approach has effective recognition under all three classes.

The SLID results of the COADL-MSLID technique with 80%TRAPH and 20%TESPH are portrayed in Table 2; Fig. 6. These experimentation outcomes underlines that the COADL-MSLID method effectually recognizes multiple spoken languages. With 80%TRAPH, the COADL-MSLID approach gains an average at \(acc{u}_{y}\) of 98.33%, \(pre{c}_{n}\) of 97.46%, \(sen{s}_{y}\) of 97.45%, \(spe{c}_{y}\) of 98.76%, and \({F}_{score}\) of 97.45%. Additionally, with 20%TESPH, the COADL-MSLID approach provides an average at \(acc{u}_{y}\) of 97.78%, \(pre{c}_{n}\) of 96.39%, \(sen{s}_{y}\) of 97.33%, \(spe{c}_{y}\) of 98.43%, and \({F}_{score}\) of 96.76%.

The SLID outcomes of the COADL-MSLID method with 70%TRAPH and 30%TESPH have been reported in Table 3; Fig. 7. These experimentation outputs highlight that the COADL-MSLID method successfully recognizes numerous spoken languages. According to 70%TRAPH, the COADL-MSLID method achieves an average at \(acc{u}_{y}\) of 97.46%, \(pre{c}_{n}\) of 96.13%, \(sen{s}_{y}\) of 96.13%, \(spe{c}_{y}\) of 98.11%, and \({F}_{score}\) of 96.13%. Additionally, with 30%TESPH, the COADL-MSLID approach offers an average \(acc{u}_{y}\) of 96.30%, \(pre{c}_{n}\) of 94.59%, \(sen{s}_{y}\) of 94.49%, \(spe{c}_{y}\) of 97.26%, and \({F}_{score}\) of 94.39%, respectively.

The effectiveness of the COADL-MSLID method at 80%TRAPH and 20%TESPH is graphically demonstrated in Fig. 8 under training accuracy (TRAA) and validation accuracy (VALA) curves. The figure exhibits the behaviour of the COADL-MSLID method over diverse epochs, indicating its learning process and generalization capabilities. Notably, the figure depicts a constant enhancement in the TRAA and VALA with an epoch surge. It ensures the adaptive characteristics of the COADL-MSLID model in the pattern detection process under TRA/TES data. The growth in VALA depicts the ability of the COADL-MSLID model to adapt to the TRA data and excel in providing correct classification of unnoticed data, accentuating robust generalization capabilities.

Figure 9 illustrates the training loss (TRLA) and validation loss (VALL) results of the COADL-MSLID method at 80%TRAPH and 20%TESPH over distinct epochs. The progressive reduction in TRLA emphasizes the COADL-MSLID method, which enhances the weights and minimizes the classification error under the TRA/TES data. The figure comprehends the COADL-MSLID technique associated with the TRA data, highlighting its superiority in capturing patterns within both datasets. The COADL-MSLID model constantly enhances its parameters to reduce the differences between the prediction and real TRA classes.

Inspecting the PR curve, as displayed in Fig. 10, the results ensured that the COADL-MSLID model at 80%TRAPH and 20%TESPH increasingly obtains boosted PR values at all classes. It verifies the COADL-MSLID model’s improved abilities in identifying distinct classes, showing proficiency in the recognition of classes.

Likewise, in Fig. 11, ROC curves created by the COADL-MSLID model at 80%TRAPH and 20%TESPH outperformed in classifying distinct labels. It thoroughly explains the trade-off between TPR and FRP over distinct recognition threshold values and epoch counts. The figure emphasized the enhanced classifier results of the COADL-MSLID method with each class, outlining the effectiveness in addressing several classification issues.

In Table 4, a detailed comparative outcome is studied well28. Figure 12 exhibits a comparison assessment of the COADL-MSLID technique for \(acc{u}_{y}\) and \(pre{c}_{n}\). These obtained outcomes infer that the COADL-MSLID technique gains superior performance. Based on \(acc{u}_{y}\), the COADL-MSLID technique reaches an increased \(acc{u}_{y}\) of 98.33% while the PLDA LR, CNN, Context-aware, ConvNets, Inceptionv3 CRNN, and CapsNet techniques have obtained reduced \(acc{u}_{y}\) values of 91.20%, 96.00%, 97.35%, 97.00%, 95.40%, 96.00%, and 98.20%, respectively. Meanwhile, based on \(pre{c}_{n}\), the COADL-MSLID method gets a higher \(pre{c}_{n}\) of 97.46%, whereas the PLDA LR, CNN, Context-aware, ConvNets, Inceptionv3 CRNN, and CapsNet techniques provide minimized \(pre{c}_{n}\) values of 96.54%, 92.52%, 96.73%, 95.13%, 96.08%, 96.40%, and 95.11%, correspondingly.

An extensive comparative outcome of the COADL-MSLID method to \(sen{s}_{y}\) and \(spe{c}_{y}\) is described in Fig. 13. These experimentation findings denote that the COADL-MSLID method achieves excellent performance. According to \(sen{s}_{y}\), the COADL-MSLID technique gets an improved \(sen{s}_{y}\) of 97.45%, although the PLDA LR, CNN, Context-aware, ConvNets, Inceptionv3 CRNN, and CapsNet models acquired decreased \(sen{s}_{y}\) values of 95.99%, 92.78%, 94.72%, 96.54%, 95.51%, 93.96%, and 94.48%. Similarly, based on \(spe{c}_{y}\), the COADL-MSLID technique provides a higher \(spe{c}_{y}\) of 98.76%. At the same time, the PLDA LR, CNN, Context-aware, ConvNets, Inceptionv3 CRNN, and CapsNet models have attained diminished \(spe{c}_{y}\) values of 97.09%, 97.62%, 95.97%, 97.35%, 94.76%, 95.30%, and 94.26%, respectively. Thus, the COADL-MSLID technique can be applied to improve the SLID process.

Conclusion

In this paper, an innovative COADL-MSLID model is presented for HCI applications. The COADL-MSLID technique aims to detect multiple spoken languages from the input audio regardless of gender, speaking style, and age. To accomplish that, the COADL-MSLID technique has different procedures, such as a SqueezeNet-based feature extractor, COA-based hyperparameter tuning, and CAE-based classification process. Besides, the COADL-MSLID approach employs the SqueezeNet method to produce feature vectors, and the COA is applied to the hyperparameter range of the SqueezeNet technique. For the SLID process, the COADL-MSLID technique exploits the CAE model. To underline the importance of the COADL-MSLID technique, a series of experiments were made on the benchmark dataset. The experimentation values emphasized that the COADL-MSLID approach attains enhanced performance over other methods in dissimilar procedures. The CAE model for language detection may face challenges with scalability and generalization across various linguistic characteristics, genders, speaking styles, and ages. Another limitation of the COADL-MSLID approach could be its dependency on spectrogram resolution and quality, which may affect its accuracy across diverse recording conditions. Future studies may concentrate on developing adaptive methods for spectrogram preprocessing to improve robustness and performance consistency in varied acoustic environments. Moreover, exploring TL models could further enhance the ability of the model to generalize across various speaking styles and demographic factors. Future enhancements could explore more robust preprocessing methods for spectrograms, consider ensemble models for model fusion, and address potential biases in the training data to enhance comprehensive accomplishment and adaptability in real-world scenarios.

Data availability

The data that support the findings of this study are openly available in Kaggle repository at https://www.kaggle.com/datasets/toponowicz/spoken-language-identification.

References

Štuikys, V. & Burbaitė, R. Speech recognition technology in K–12 STEM-driven computer science education. In Evolution of STEM-Driven Computer Science Education: The Perspective of Big Concepts 275–309 (Springer Nature Switzerland, 2024).

Borch, C. & Hee Min, B. Toward a sociology of machine learning explainability: Human–machine interaction in deep neural network-based automated trading. Big Data Soc. 9(2), 20539517221111361 (2022).

Tesema, F. B. et al. Addressee detection using facial and audio features in mixed human–human and human–robot settings: a deep learning framework. IEEE Syst. Man. Cybern. Mag. 9(2), 25–38 (2023).

Ding, Z., Ji, Y., Gan, Y., Wang, Y. & Xia, Y. Current status and trends of technology, methods, and applications of human–computer Intelligent Interaction (HCII): a bibliometric research. Multimedia Tools Appl.83, 69111–69144 (2024).

Lu, Y. et al. Decoding lip language using triboelectric sensors with deep learning. Nat. Commun. 13(1), 1401 (2022).

Siddique, S. et al. Deep learning-based Bangla sign language detection with an edge device. Intell. Syst. Appl. 18, 200224 (2023).

Chu, L., Liu, Y., Zhai, Y., Wang, D. & Wu, Y. The use of deep learning integrating image recognition in language analysis technology in secondary school education. Sci. Rep. 14(1), 2888 (2024).

Wang, X. & Smith, S. Design of network English autonomous learning education system based on human-computer interaction. Front. Psychol. 13, 989884 (2022).

Ma, Y., Zhang, L. & Wang, X. February. Natural language understanding and interaction engine oriented to human-computer interaction based on neural network. In Third International Conference on Computer Vision and Data Mining (ICCVDM 2022) (Vol. 12511, 781–786) (SPIE, 2023).

Mohsin, S., Salim, B. W., Mohamedsaeed, A. K., Ibrahim, B. F. & Zeebaree, S. R. American sign language recognition based on transfer learning algorithms. Int. J. Intell. Syst. Appl. Eng. 12(5s), 390–399 (2024).

Exarchos, T. et al. Lip-reading advancements: a 3D convolutional neural network/long short-term memory fusion for precise word recognition. BioMedInformatics 4(1), 410–422 (2024).

Kasapbaşi, A., Elbushra, A. E. A., Omar, A. H. & Yilmaz, A. DeepASLR: A CNN based human computer interface for American Sign Language recognition for hearing-impaired individuals. Comput. Methods Programs Biomed. Update 2, 100048 (2022).

Islam, M. et al. Toward a vision-based intelligent system: A stacked encoded deep learning framework for sign language recognition. Sensors 23(22), 9068 (2023).

AbdElghfar, H. A. et al. QSLRS-CNN: qur’anic sign language recognition system based on convolutional neural networks. Imaging Sci. J. 72(2), 254–266 (2024).

Yirtici, T. & Yurtkan, K. Regional-CNN-based enhanced Turkish sign language recognition. Signal, Image and Video Processing, 1–7 (2022).

Haque, A. et al. Recognition of Bangladeshi Sign Language (BdSL) words using deep convolutional neural networks (DCNNs). Emerg. Sci. J. 7(6), 2183–2201 (2023).

Bansal, S., Gowda, K. & Kumar, N. Multilingual personalized hashtag recommendation for low resource Indic languages using graph-based deep neural network. Expert Syst. Appl. 236, 121188 (2024).

Das, S., Imtiaz, M. S., Neom, N. H., Siddique, N. & Wang, H. A hybrid approach for Bangla sign language recognition using deep transfer learning model with random forest classifier. Expert Syst. Appl. 213, 118914 (2023).

Alshehri, M. K., Sharma, S. K., Gupta, P. & Shah, S. R. Empowering the visually impaired: translating handwritten digits into spoken language with HRNN-GOA and Haralick features. J. Disabil. Res. 3(1), 20230051 (2024).

Subramanian, R. & Aruchamy, P. An effective speech emotion recognition model for multi-regional languages using threshold-based feature selection algorithm. Circ. Syst. Signal. Process. 43(4), 2477–2506 (2024).

Aslam, N., Abid, K. & Munir, S. Robot assist sign language recognition for hearing impaired persons using deep learning. VAWKUM Trans. Comput. Sci. 11(1), 245–267 (2023).

Al Khuzayem, L., Shafi, S., Aljahdali, S., Alkhamesie, R. & Alzamzami, O. Efhamni: A deep learning-based Saudi sign language recognition application. Sensors 24(10), 3112 (2024).

Shi, F., Wang, J. & Govindaraj, V. SGS: SqueezeNet-guided Gaussian-kernel SVM for COVID-19 Diagnosis 1–14 (Mobile Networks and Applications, 2024).

Naruei, I. & Keynia, F. A new optimization method based on COOT bird natural life model. Expert Syst. Appl. 183, 115352 (2021).

Sagheer, A. & Kotb, M. Unsupervised pre-training of a deep LSTM-based stacked autoencoder for multivariate time series forecasting problems. Sci. Rep. 9(1), 19038 (2019).

Chen, S. et al. NOx formation model for utility boilers using robust two-step steady-state detection and multimodal residual convolutional auto-encoder. J. Taiwan Inst. Chem. Eng. 155, 105252 (2024).

https://www.kaggle.com/datasets/toponowicz/spoken-language-identification

Singh, G. et al. Spoken language identification using deep learning. Computational Intelligence and Neuroscience, 2021 (2021).

Author information

Authors and Affiliations

Contributions

Conceptualization: E.A.; Methodology: E. A. and M.M.; Software: G.M.; Validation: R.C. and G.R.J.; Formal analysis: G.M. and M.M.; Investigation: R.S.; Resources: M.M.; Data curation: R.S.; Writing-original draft: E.A.; Writing-review & editing: G.P.J.; Visualization: G.M.; Supervision: G.P.J. and W.C.; Project administration: W.C.; Funding acquisition: W.C..

Corresponding authors

Ethics declarations

Competing interests

The authors declare no competing interests.

Ethics approval

This article contains no studies with human participants performed by any authors.

Consent to participate

Not applicable.

Informed consent

Not applicable.

Additional information

Publisher’s note

Springer Nature remains neutral with regard to jurisdictional claims in published maps and institutional affiliations.

Rights and permissions

Open Access This article is licensed under a Creative Commons Attribution-NonCommercial-NoDerivatives 4.0 International License, which permits any non-commercial use, sharing, distribution and reproduction in any medium or format, as long as you give appropriate credit to the original author(s) and the source, provide a link to the Creative Commons licence, and indicate if you modified the licensed material. You do not have permission under this licence to share adapted material derived from this article or parts of it. The images or other third party material in this article are included in the article’s Creative Commons licence, unless indicated otherwise in a credit line to the material. If material is not included in the article’s Creative Commons licence and your intended use is not permitted by statutory regulation or exceeds the permitted use, you will need to obtain permission directly from the copyright holder. To view a copy of this licence, visit http://creativecommons.org/licenses/by-nc-nd/4.0/.

About this article

Cite this article

Akhmetshin, E., Meshkova, G., Mikhailova, M. et al. Enhancing human computer interaction with coot optimization and deep learning for multi language identification. Sci Rep 14, 22963 (2024). https://doi.org/10.1038/s41598-024-74327-2

Received:

Accepted:

Published:

Version of record:

DOI: https://doi.org/10.1038/s41598-024-74327-2

Keywords

This article is cited by

-

Multi-scale feature fusion of deep convolutional neural networks on cancerous tumor detection and classification using biomedical images

Scientific Reports (2025)

-

A novel deep transformer based CvT model for sign language recognition in visual communication

Scientific Reports (2025)

-

Prediction of strata settlement in undersea metal mining based on deep forest

Scientific Reports (2024)