Abstract

Meibomian Gland Dysfunction (MGD) and Dry Eye Disease (DED) comprise two of the most significant eye diseases, impacting millions of sufferers worldwide. Several etiological factors influence the early symptoms of DED. Early diagnosis and treatment of erectile dysfunction may significantly improve the Quality of Life (QoL) for people. The current study introduces the ESAE-ODNN, an improved stacked autoencoder-aided optimised deep neural network, as a new way to predict DED using feature selection (FS), feature extraction (FE), and classification. The approach described here is novel because it merges chaotic maps into FS, employs SLSTM-STSA for improved classification accuracy (CA), and optimizes with the adaptive quantum rotation of the Enhanced Quantum Bacterial Foraging Optimisation Algorithm (EQBFOA). The present study enhances prediction functions by extracting MGD-related features and complicated relationships from the DED dataset. To ensure essential feature identification, the ESAE minimizes irrelevant and redundant features. To predict the DED, the ESAE first applies FE and then implements an ODNN classifier. This method fine-tunes the ODNN framework to enhance the effectiveness of the classification. The proposed ESAE-ODNN classification system efficiently assists in the early diagnosis of DED. Combining advanced Deep Learning (DL) methods with optimization can help us understand MGD features better and sort the data with the best accuracy (96.34%). The experimental evaluation with relevant performance metrics indicates that the proposed method is efficient in diverse aspects: accurate identification, reduced complexity, and fine-tuned performance. The ESAE-ODNN’s robustness in handling intricate feature indications and high-dimensional data outperforms the existing state-of-the-art techniques.

Similar content being viewed by others

Introduction

Individuals with visual issues have a far worse Quality of Life (QoL). Among the eye conditions are glaucoma, diabetes, retinopathy, and others. In 2013, 64.3 million individuals worldwide suffered from glaucoma1. Early identification of eye disorders may help ensure appropriate treatment or, at the very least, prevent further deterioration of the symptoms. But it’s limited because of low awareness, a dearth of ophthalmologists, and expensive consultation fees. Automated screening is thus essential2. Depending on the research population and diagnostic criteria used, the prevalence of Dry Eye Disease (DED), one of the most prevalent eye conditions globally, may range from 5 to 50%3.

DED is considered one of the most underdiagnosed and undertreated disorders in ophthalmology4 despite being the most common cause for seeking medical attention for eye problems5. DED symptoms include photophobia, fluctuating vision, and inflammation of the eyes. The illness may cause excruciating pain and cause the cornea’s surface to become irritated, which may lead to long-term damage. According to epidemiological research, DED is more common in women6 and rises with age7. However, projections indicate that longer screen times and increased contact lens use, both risk factors, will increase the incidence of DED in all age groups in the coming years8. Diabetes mellitus9 and exposure to air pollution10 are other risk factors.

DED can significantly impact the QoL, leading to significant direct and indirect public health costs and personal financial hardship due to lower productivity at work.

The disease’s underlying mechanisms distinguish two types of DED:

-

(i)

The condition known as aqueous-deficient DED is marked by insufficient tear production from the lacrimal gland.

-

(ii)

Meibomian glands (MG) in the eyelids typically malfunction, causing Evaporative DED, the most common form.

MG produces meibum, a concentrated material that typically coats the cornea’s surface to provide a protective superficial lipid layer that prevents the underlying tear film from evaporating. One must accurately differentiate between evaporative and aqueous-deficient DED, their severity levels, and mixed aqueous and evaporative forms to determine the best treatment technique. In addition to saving patients excessive expenditure and exposure to possible adverse effects connected with various therapies, a prompt and correct diagnosis reduces suffering in patients.

A customized treatment plan may increase health provider efficiency and provide a better treatment response. Reduced tear volume, faster tear film break-up (measured by fluorescein tear break-up time, or TBUT), and ocular surface micro-wounds11. After 10 s, the tear film in a healthy eye “breaks up” spontaneously, and blinking causes the tear film to rebuild. The degree of clinical symptoms that a patient reports is often uncorrelated with the diagnostic tests that are now available. No single clinical trial is considered conclusive in diagnosing DED12. Consequently, the clinic typically combines and enhances various tests with data from patient symptoms documented via questionnaires. The clinic must devote much time and money to these examinations.

Tear osmolarity, meniscus height, TBUT, and Schirmer’s test are all used to measure the physical characteristics of tears. These tests can help determine if someone has DED: aberrometry, corneal sensitivity, interblink frequency, corneal surface topography, ocular surface staining, and imaging methods like meibography and in vivo confocal microscopy (IVCM). In 1955, someone described Artificial Intelligence (AI) as “the science and engineering of making intelligent machines”13. Intelligence is the “ability to achieve goals in a wide range of environments”14. ML, as used in AI, refers to a class of algorithms that can learn from data instead of having explicit rules written into them.

In particular, AI—Machine Learning (ML) is becoming a crucial component of healthcare systems. The ML subfield known as “Deep Learning (DL),” which has garnered more attention recently due to its proficiency in text and picture recognition, employs deep artificial neural networks15. DL in ophthalmology has primarily processed retinal data to automate diagnosis, predict disease outcomes, and segment areas of interest in pictures16. For retinal diseases, DL fusion with Optical Coherence Tomography (OCT) has led to improved diagnosis and reliable detection. Also, ML has been shown to help diagnose and treat anterior segment disorders such as DED. The personal evaluation of visions by the person who watches is an integral part of numerous DED monitoring and diagnosis tests, which raises ethical issues17.

The deployment of DL algorithms to the analysis of medical imaging has significantly enhanced the diagnosis and treatment of eye diseases and, notably, the treatment of dry eye syndrome. A recent research investigation attempted to develop a DL18 model that could distinguish between the tarsus and MG regions in meibography images collected from people who suffer from dry eye (cite). Using a U-net model based on Convolutional Neural Networks (CNNs), investigators trained the model to divide those regions accurately. Furthermore, not only did the model’s sensitivity and specificity metrics attract attention, but they also attained significant Area Under the Curve (AUC) values.

Diagnostic retina imaging is also vital in diagnosing retinal disorders resulting in microvascular abnormalities. Using colour fundus scans, the present investigation developed an automated DL-based framework superior to benchmark models in diagnosing multiple eye diseases. Using this novel system, EyeDeep-Net19, eye doctors can improve the treatment of patients and their outcomes through enhanced early diagnosis and management of several eye diseases.

The Tear Film Breakup Time (TBUT) test is commonly used to diagnose DED. It monitors how long it requires for the tear film to demonstrate its preliminary breakup signal. However, computer-aided diagnostic methods are required since this test is personal, time-consuming, and costly20. Current computer-assisted methods are based on expensive tools and cannot provide information about the tear film breakup area or time; they do not evaluate the severity of DED, which is essential for management. Automated DED detection difficulties include low video resolution, blinking eyes, and the absence of public databases. Another new approach uses TBUT video to determine the presence and degree of DED within a standard, moderate, or severe classification. This method demonstrated 83% accuracy in differentiating TBUT frames and was 90% consistent with the ophthalmologist’s rating21.

The related research emphasizes Meibomian Gland Dysfunction (MGD), which is vital for diagnosing and treating dry eye. The MGD-1k dataset consists of 1k infrared images of the MG with gland and eyelid masks and multiple Meiboscore assessments. The investigation collected images from 320 patients with different age distributions over one year using LipiView II. This dataset, whose mean aggregated Jaccard index is 0.4718 for the gland class and 0 for the eyelid class, helps train DL models for MGS. The eyelid class has an average of 8470 generated numbers. Some of the difficulties noted in the study include gland overlap and image sharpness, and the authors recommend future improvement22.

AI-based techniques for MG assessment have achieved 80% instance-wise accuracy, leading to further developments. The accuracy in distinguishing between dry eye and non-DED is 8%. Eyelid detection and tarsal plate segmentation improve meibography feature extraction (FE) and classification23.

Additionally, researchers have created a fully automated DL framework for DED severity grading, achieving a Dice coefficient of 0.962 and a correlation of 0.868 with expert grading24. A similar study presented an AI-assisted TFOD for dry eye subtype classification using video keratography, with a total classification rate of 78.40%25. This review also highlights the increasing use of AI in ophthalmology, especially in diagnosing ocular surface diseases26.

This paper27 has revealed that DL has revolutionized medical imaging by increasing its diagnostic precision and speed. Such models like CNNs illustrated by CG-Net help diagnose and treat GIT diseases further. This approach helps in early diagnosis and has vast uses in various specialties, including ophthalmology, oncology, and neurology, to name but a few.

DL models for evaluating eye condition, particularly for segmenting images from medical devices into tarsus and MG regions, require further analysis into feature selection (FS) and optimization approaches. Because of the emphasis on personal feature engineering or limited optimization methods, conventional techniques frequently do not capture the complex shapes and structures discovered in ocular images. Optimal performance and the ability to be generalized across numerous data sets could be equally challenging.

This is solved by the recommended Enhanced Quantum Bacterial Foraging Optimisation Algorithm (EQBFOA), which integrates the Assisted Stacked LSTM Sequence-to-Sequence Autoencoder (SLSTM-STSA) with the Optimal Deep Learning Technique (ESAE-ODNN). By changing quantum rotation angles and probability amplitudes employing theories based on the theory of quantum mechanics, the EQBFOA improves FS. As a result, local convergence is prevented, and the human population remains unique; for linear data such as healthcare images, a successful model for representation learning and FE can be developed by combining EQBFOA with SLSTM-STSA.

Furthermore, ESAE-ODNN optimizes the DL model parameters, ensuring efficient training and improved performance on ocular image segmentation tasks. The proposed method fills in the gaps in research by improving the accuracy, robustness, and generalisability of DL models for assessing eye health by combining advanced FS and optimization techniques.

The article is organized as follows: Sect. "Introduction" provides an overview of DED, identifies the research gap, and outlines rectification measures. Section "Proposed methodology - enhanced stacked auto-encoder assisted optimized deep neural network (ESAE-ODNN)" provides the detailed methodology of ESAE-ODNN, Sect. EQBFOA assisted stacked LSTM sequence-to-sequence autoencoder (SLSTM-STSA) with optimal deep neural network (ESAE-ODNN) presents a comparative analysis with an illustration of the result, and Sect. Result and discussion concludes the article.

Proposed methodology - enhanced stacked auto-encoder assisted optimized deep neural network (ESAE-ODNN)

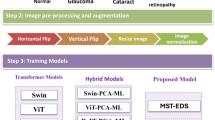

This section explains the detailed methodology of the proposed system, ESAE-ODNN. This study pre-processed the images, performed segmentation using UNet++28, and selected features using Chaotic Map. ESAE-ODNN performs the classification, and Fig. 1 displays the system architecture.

MGD significantly contributes to DED by manipulating the oily layer of the tear film, which in turn affects the stability of the tear film and various ocular symptoms. Dysfunctional MG impacts tear features, ocular surface inflammation, and visual comfort, so its management is critical in ameliorating dry eye syndromes and further complications. Proper identification of the MG is crucial for diagnosing DED and determining the disease’s stage. UNet + + is a complex method for segmenting MG from ocular images.

The architecture of UNet + + is that of a deeply supervised encoder-decoder network with nested dense skip pathways between the encoder and decoder. These skip pathways help minimize the semantic gap between the feature maps and help with better learning. This architecture improves segmentation results through better multi-scale feature extraction and better alignment of the feature maps.

UNet + + extends this by incorporating hierarchical features into the architecture to process multi-scale features, thereby directly enhancing segmentation accuracy. This combined method effectively uses the best parts of both models: UNet + + for further segmentation to improve diagnosis and DED treatment with sequential data.

Chaotic version of optimal foraging algorithm (COFA) based feature selection

This paper introduces COFA, a novel, chaotic variation of the optimal foraging approach. This work utilizes the recommended COFA to extract FS from DED images. This paper subjects it to the multi-level image thresholding optimization problem. COFA uses chaotic maps to regulate the trade-off between intensification (exploitation) and diversification (exploration) in the fundamental OFA. Exploration is considered the primary goal of all optimizers because it can potentially reveal new, improved solutions in the search space. Following exploration, exploitation is the act of using a promising place or location. Determining the appropriate balance between these two elements is the most significant issue for any meta-heuristic algorithm aiming to approach the global optimum.

This is due to the intrinsic stochastic nature of bio-inspired optimization approaches.

-

Initialization Step: For N individuals, start the initialization for the group of \({p}^{1}\)with uniform distribution based on the Eq. (1).

A computer for each individual \({x}_{j}^{1}\) in group, \({p}^{1}\)the fitness value \({F}_{j}^{1}\). Order \({F}_{j}^{1}\) from the best value to the worse, considering the corresponding sequence \({x}_{j}^{1}\:\)by formulating \({F}_{j}^{1}\) where (j = 1; 2;…; N).

-

Iteration step: set t = 1 with termination criteria of the maximum number of iterations ‘tMax’. set \({F}_{best}\) = \({F}_{1}^{t}\) =min (\({F}_{1}^{t}\). . .\(\:{F}_{n}^{t}\)), \({F}_{n}^{t}\:\)= min (\({F}_{1}^{t}\). . .\({F}_{n}^{t}\)) check if (\({F}_{j}^{t}\) = \({F}_{best}\)), then update \({x}_{ji}^{t+1}\) by the Eq. (2).

where,\(\:{x}_{1ji}\)=rand (0,1),\(\:{x}_{2ji}\)=rand (0,1), k is defined as k = \(\frac{t}{tmax}\). The parameter ‘k’ is decreasing linearly in each iteration.

If condition (\({F}_{j}^{t}={F}_{best}\)) is failed, use the sorted values in \({F}_{j}^{t}\), pick randomly a value as variable b, then obtain \({x}_{b}^{t}\) and \({F}_{b}^{t}<{F}_{j}^{t}\). update \({x}_{ji}^{t+1}\) by the Eq. (5).

Test on the boundary limits based on the Eqs. (6) and (7).

Get the objective function value \({F}_{j}^{t+1}of{x}_{j}^{t+1}\), check for the following to determine by the Eqs. (8) to (10).

Then,

Else

Compute \({F}_{j}^{t+1}\) with (j = 1,2,…N) by ordering \({F}_{j}^{t+1}\) from the best to the worse, considering the corresponding sequence \({x}_{j}^{t+1}\), check if (\({F}_{j}^{t+1}<{F}_{best}\)), then \({F}_{best}\:\)= \({F}_{1}^{t+1};\:{X}_{best}={X}_{1}^{t+1}\).

-

Termination step: Terminate the iteration when reaching ‘tMax’ by obtaining \({F}_{best}\) and \({X}_{best}\) as the final output, the entire process of FS is given in Fig. 2, and the process is given in Algorithm 1.

The description of prominent chaotic maps is given in Table 1. The sequence of the chaotic map is given by ‘o’, and its index is given in ‘s’. The sth count of the chaotic sequence is specified by ‘os’, and a collection of control parameters (c, d, l) may be applied to assess chaotic actions inside the dynamical framework. Table 1 gives chaotic maps that only exist in a definite form and do not include any random components. It is important to note that all of the chosen chaotic maps in this research start at ‘Oo’ with 0.7 at the beginning.

The FS process using the COFA for analyzing DED images resulted in the selection of 16 features. Various Chaotic Maps, such as the Chebyshev Map, Circle Map, Mouse/Gauss Map, Iterative Map, Logistic Map, Piecewise Map, Sine Map, Singer Map, Sinusoidal Map, and Tent Map, derived from the FS. These Chaotic Maps make finding and optimizing the dataset’s critical features easier, improving the DL model’s performance for DED analysis.

Enhanced Quantum Bacterial foraging Optimisation Algorithm (EQBFOA)

The traditional QBFO technique uses a look-up table to find the quantum rotation angle, which restricts population diversity development and fails to adequately capture the solution space’s primary conditions. Thus, this work proposes an improved quantum rotation angle that is continuously and adaptively adjustable. This paper implements a modified operator to prevent the quantum gate updating process from encountering invariant solutions. The modified operator could increase the diversity of the population and fortify the algorithm’s ability to search globally. Assuming that ‘Size’, ‘N’, and ‘Q(t)’ represent the population size, chromosomal length, and population, respectively, the kth individual in the population ‘Q(t)’ has the following coding in Eq. (11).

The QBFO initializes the initial values of the two Size ‘N’ probability amplitudes as 1/√2. This selection guarantees that all possible superposition states have identical probability and preserves the unitary typical of the quantum rotation gate. Since the probability amplitudes are initialized with the same value, the angles ‘\({\theta\:}_{i}^{\prime\:}\) and \(^{\prime\:}{\theta\:}_{b}^{\prime\:}\) are also identical. Consequently, the optimal rotation angle is equivalent to the rotation angle of each qubit. The symbol \(^{\prime\:}{\theta\:}_{i}^{\prime\:}\) denotes the angle of a quantum bit for the present bacteria, whereas ‘\({\theta\:}_{b}^{\prime\:}\) Indicates the angle of a quantum bit for the ideal bacterium. As mentioned earlier, the scenario facilitates the algorithm’s production of invariant solutions during the first stages of development, leading to local convergence or even outright failure. Considering this, a novel concept known as modified probability of optimum rotation angle is introduced, as seen in Eq. (12).

The interval of 0,1 is assigned with the random numbers ‘µ’ and ‘ε’. The suggested optimum rotation angle aims to avoid the creation of invariant solutions, hence eliminating the need for a significant alteration of the optimal rotation angle. According to Eq. (12), the value of ε is greater than \({^{\prime\:}P}_{\theta\:}\)’ where it is assigned with the value of 0.75.

In order to increase the diversity of the population and strengthen the algorithm’s capacity to search globally, the modified probability amplitude operator is used. Equation (12) defines the modified operator for probability amplitude.

where the minimal positive number is specified as in \(^{\prime\:}\gamma\:^{\prime\:}\), and the quantum gate is applied in updating the probability amplitude in (\({\alpha\:}_{i}^{*}\) ,\(\:{\beta\:}_{i}^{*}\))T.

The enhanced quantum rotation angle is defined in Eqs. (14) to (16).

where \({\theta\:}_{i}\) represents the angle quantum bit for the current bacterium;\({x}_{i}\) is the binary solution measured by the quantum bit of the current bacterium;\({\theta\:}_{i}\) is the initial value of the rotation angle; η = \(\:\frac{gen}{Maxgen}\); gen is the number of iterations; Maxgen is the maximum number of iterations, and sgn(·) is the sign function. In Eq. (15), \(\:{M}_{1}\) and \(\:{M}_{2}\) are used to control the direction of the rotation angle, and η and \(\:{\theta\:}_{0}\) are used to control the size of the rotation angle. Due to the continuous rotation angle obtained from Eq. (15), the improved QBFO algorithm based on this rotation angle can thoroughly search the solution space and find the global optimal solution quickly.

Convergence analysis of the EQBFOA algorithm

Theorem 1

The EQBFOA algorithm is a finite and homogeneous Markov chain.

Proof

In the EQBFOA algorithm, the set of Q(t) ={} is a finite set. For any two states and ’ in state space ‘S’, the probability transferred from to ’ is only related to and has nothing to do with the time ‘k’. Thus, the EQBFOA algorithm is a finite and homogeneous Markov chain.

In the state space ‘S’, the modified probability of the optimal rotation angle, the modified operator of probability amplitude, reproduction, and elimination-dispersal operations. The corresponding probability matrix is R, A, C, and E; thus, the transfer probability matrix of the Markov chain of EQBFOA can be labelled as F = RACE.

Theorem 2

The transfer probability matrix R, A, and E are both random and strictly positive,and the matrix C is random.

Proof

Taking the transfer probability matrix ‘R’ of rotation angle modified operation as an example, the state is mapped to the state ’ according to a certain probability The transfer probability is defined in Eqs. (17) and (19).

where h(\(\:{T}_{i}\),\(\:\:{T}_{j}\)) is the hamming distance between \(\:^{\prime\:}{t}_{i}^{\prime\:}\) and \(\:^{\prime\:}{t}_{j}\)’, and \(\:{^{\prime\:}g}_{ik}^{\prime\:}\) is the kth gene of the stage, \(\:^{\prime\:}{T}_{i}^{\prime\:}.\) Due to pτ ∈ (0, 1) and \(\:{m}_{ij}\ge\:0\), it was evident that ‘R’ is strictly positive. For all states \(\:{T}_{i}\), \({T}_{j} \in\)S, there is \(\:{m}_{ij}\ge\:0\) and \(\:{\sum\:_{{t}_{j}\in\:\:S}m}_{ij}\)= 1, so ‘R’ is random. Similarly, this method can prove that the matrix A and E are random and strictly positive, and the matrix ‘C’ is random.

Lemma 1

If A and B are n×n random matrices, then AB is also an n×n random matrix.

Theorem 3

The transfer probability matrix F in the Markov chain of EQBFOA is strictly positive.

Proof

Set B = RAC, according to Lemma 1, matrix.

B is random. \(\forall\)i, j ∈ [1, N], there is \(\:{c}_{ij}\)=\(\:\sum\:_{k=1}^{N}{b}_{ik}{e}_{kj}>o,\) so we get C = BE > 0, which completes the proof.

Lemma 2

Suppose that P is a reducible random matrix, P = T is an m-order random matrix, and Y, Q ≠ 0, then in Eq. (20).

where \(\:{P}^{\infty\:}\)= \(\:{P}^{0}\) whose value is independent of the initial state and satisfies {\(\:\begin{array}{c}{p}_{i}^{\infty\:}>\text{0,1}\le\:i\le\:m\\\:{p}_{i}^{\infty\:}=0,i>m\end{array}\).

Lemma 3

If A = [aij] ≥ 0 is an n×n square matrix, and is established for any ‘i’, then in Eq. (21).

Lemma 4

Suppose that U is a transfer probability matrix of a regular homogeneous Markov chain, then.

-

(i)

There is a limit probability matrix that satisfies lim \(\:\begin{array}{c}lim\\\:k\to\:\infty\:\end{array}{U}^{K}\check{U}\) .

-

(ii)

A unique probability vector satisfies ρTU = ρT.

Theorem 4

EQBFOA is globally convergent.

Proof

Suppose that the state of transfer probability matrix F satisfies the following properties:

-

(i)

f1 is the global optimal solution;

-

(ii)

fi(i = 2, 3, . .,N – 1) is the global suboptimal solution;

-

(iii)

fN is the global worst solution. Then, matrix F can be expressed as a lower triangular matrix, as shown in Eq. (22).

From Eq. (23), it can be understood that the matrix F is a reducible random matrix. Let T = [1],

And, Eq. (24).

According to Lemma 2, it is assumed in Eq. (25).

Let \(\:{H}_{K}=\sum\:_{i=1}^{k}{Q}^{i-1}Y{T}^{k-i}\), and we have \(\:{H}_{k+1}={H}_{k}T+{Q}^{k}Y\). Take the limit for k on both sides of the equation, and the result is assumed in Eq. (26).

By Lemma 4, the row vector is a probability vector of \(\:\begin{array}{c}lim\\\:k\to\:\infty\:\end{array}{H}_{K}\), and each element is greater than 0. From Eq. (27), we conclude that the state can eventually reach f1, wherever the state starts, which implies Theorem 4.

Long short-term memory

Recent studies have focused on Recurrent Neural Networks (RNNs) for prediction tasks. This is because deep learning (DL) and computer power have improved, and traditional machine learning (ML) has problems with time-series data. Due to their recurrent nature, RNNs inherently possess short-term memory but suffer from gradient-related issues that hinder long-term memory retention. Time-series prediction has been extensively proposed and used in LSTM networks to address this. LSTMs incorporate memory cells and gate mechanisms to manage information flow within the network, facilitating short-term and long-term memory. The LSTM architecture includes input, output, and forget gates, allowing for effective information retention and dissemination. Equations (28) to (35) detail the implementation and updating of LSTM cell states, enabling the computation of LSTM outputs.

where Xt=input vector; Yt=output vector; It=input gate outcome; Ft=forget gate outcome; Ot = output gate outcome; Ct=finishing state in memory block; C¯t=temporary; σ = sigmoid function; Wxf, Wxi, Wxc, and Wxo are input weight matrices; Whf, Whi, Whc, and Who are recurrent weight matrices; Why is output weight matrix; and Bf, Bi, Bc, Bo, and By are the related bias vectors.

EQBFOA assisted stacked LSTM sequence-to-sequence autoencoder (SLSTM-STSA) with optimal deep neural network (ESAE-ODNN)

The ESAE is an advanced stacked auto-encoder version that enhances FS and FE. This mainly removes the features that are irrelevant or contain redundant information, increasing the efficiency and accuracy of the model. It demonstrates that ESAE is less sensitive to the application’s conditions and is, therefore, suitable for real-life use. Thus, using EQBFOA, ESAE has a higher classification accuracy, making it an effective tool for solving tasks that require recognizing complex and deep features and high-dimensional data analysis.

The research presented here proposes a DL hybrid model, SLSTM-STSA, which includes an RNN encoder-decoder system, as a first phase towards a sequence-to-sequence (Seq2seq) paradigm. Seq2seq, which comprises an encoder and a decoder, is currently prominent in machine translation. The decoder employs the context vector, which the encoder reduces in size, to generate an output sequence from what is input. There is a hidden state vector in the encoder generated from the input, and the output vector demonstrates the hidden state of the earliest recent RNN cell. The encoded context vector has been employed as the decoder network’s initial hidden state, and the output, compared to the prior time step, will be transmitted to the next LSTM unit for linear prediction. Mathematically, the encoder ‘φ’ compresses input data ‘x’ into a low-dimensional representation ‘Z’, while the decoder ‘Ψ’ reconstructs the input data ‘x0’. A standard NN function mathematically represents these transitions in Seq2seq learning passed through a sigmoid activation function σ Eqs. (36) and (37).

The Optimal Deep Neural Network (ODNN) approach is implemented in the present research. Figure 3 exhibits the networks employed by the LSTM Seq2seq model to predict GSRs. By overlapping LSTM layers on both the encoder and decoder parts, the stacked LSTM sequence-to-sequence autoencoder (SLSTM-STSA) may enhance GSR prediction using Seq2seq learning. This layering intends to improve the model’s prediction capability by integrating multi-level hidden models that represent time-series data. As demonstrated by Eqs. (38) and (39), the symbols ‘x’ and ‘o’ represent input and output data, ‘c’ for the encoder context vector, and ‘ht’ and ‘st’ for hidden states in the encoder and decoder, appropriately. Algorithm 2 provides detailed procedures for DED classification.

In the SLSTM-STSA structure, the encoder’s loss function is usually determined by the reconstruction loss used in the Optimal Deep Learning Technique (ESAE-ODNN). Autoencoder-based Seq2seq models are employed in case of the loss function, and MSE or MAE represents the difference between the input sequence and the decoded output sequence. This loss function quantifies the extent to which the decoder can replicate the input data after encoding them, and hence, the model learns the correct data representation.

This study computes the total quantity of parameters trained in the EQBFOA-Assisted Stacked LSTM Sequence-to-Sequence Autoencoder (SLSTM-STSA) with Optimal Deep Learning Technique (ESAE-ODNN) by analyzing the network structure. Each of these LSTM layers in this model, with 3 LSTM layers, 128 units, and an input dimension of 64, has parameters equal to 98,816. Therefore, the total parameters of the three LSTM layers sum up to 296,448. Also, if the network has a dense layer with 64 nodes connected to the last LSTM layer, it adds 8,256 parameters. Thus, this model learns a total of 304,704 parameters. A standard UNet + + model, based on the configuration and a certain number of layers, has approximately 30–50 million parameters. In the case of the proposed MGST + UNet + + architecture, the parameter count increases even more due to incorporating the Transformer-based features, which have helped enhance the segmentation accuracy.

The dataset presents some challenges, including the high dimensionality of the features, class imbalance, noise, and missing data. The proposed model solves these challenges using several main approaches. Chaotic maps improve FS by handling high dimensionality and accelerating computations specifically for FS. The proposed model employs the EQBFOA optimization algorithm to minimize class imbalance and noise while improving robustness and accuracy. The proposed model’s temporal dependencies, SLSTM-STSA, handle temporal dependencies and irregular data patterns, thereby improving classification accuracy. The proposed model uses augmentation and preprocessing methods to address the issue of missing and noisy data, thereby ensuring improved results and reliability.

Result and discussion

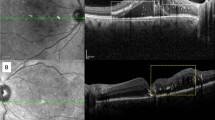

The study did many tests to see how well the UNet + + method worked for Meibomian Gland Segmentation (MGS) in eye images. The assessment included a discussion of the clinical consequences, visual comparisons, and quantitative data. The experimental comparison uses performance measurements, including Intersection over Union (IoU), dice coefficient, and pixel-wise accuracy. These metrics frequently assess the quality of segmentation results in image analysis tasks. They provide information on the degree to which the projected segmentation matches the actual segmentation, considering both area overlap and pixel-level classification accuracy.

MGD specialists and professional ophthalmologists manually annotate all 1,000 infrared images of the MG in the MGD-1k dataset. This dataset contains 1000 gland images with corresponding gland masks, 1000 eyelid masks, and 6 Meiboscore evaluations per image. The dataset consists of images of 320 patients; the average age distribution is 32. The dataset includes 2% male patients (mean age = 51, standard deviation 19) and 67.8% female patients (mean age = 55, standard deviation 19). It has 467 upper and 533 lower eyelid images, of which 94.1% are gradable. The current study uses single-channel grayscale images obtained from the LipiView II Ocular Surface Interferometer for over a year. This rich and highly documented data source is valuable for understanding MGD in people of all ages and is crucial for improving ML algorithms for diagnosing and studying MGD.

The research utilizes the dataset20, allocating 60% for testing and 40% for training. This work contrasts the proposed method, ESAE-ODNN, with DL18, CNN18, and EyeDeep-Net29. Figure 4 displays the input image, eyelid, and MG labels.

Performance metrics such as accuracy, precision, recall, sensitivity, and F1-score evaluate the classification process. The DED classification categorizes individuals based on the presence and severity of dry eye symptoms and associated clinical findings. Accuracy refers to the overall correctness of the classification, measuring the proportion of correctly classified cases among all cases. Precision represents the fraction of true positive cases among all predicted positive cases, indicating the model’s ability to avoid false positives. Recall, also known as sensitivity, quantifies the proportion of true positive cases correctly identified by the model out of all positive cases, indicating the model’s ability to detect positive instances.

Sensitivity is significant in medical diagnosis because it determines the model’s ability to identify individuals with the condition correctly. The F1-score, the harmonic mean of precision and recall, balances precision and recall as a comprehensive metric for evaluating classification performance. These metrics collectively assess the classification model’s effectiveness in accurately identifying individuals with dry eye symptoms in DED classification, aiding in developing robust diagnostic and management strategies (Table 2).

Table 6 describes the significant advantages of segmenting the data using the SL-FRST method. The increase in accuracy with segmentation is not only remarkable, but it also increases with sample size, underscoring the method’s effectiveness for working with larger and more complex data sets. In all practical applications, especially when the dataset is large, segmentation is a practical preprocessing step that improves the accuracy and robustness of the SL-FRST classification model (Table 7).

Discussion and complexity analysis

The comparative analysis across different evaluation metrics reveals notable performance variations among the DL, CNN, EyeDeep-Net, and SL-FRST models. Across all sample counts, SL-FRST consistently outperforms the other models regarding accuracy, precision, F1-Score, sensitivity, and recall (Tables 2, 3, 4, 5 and 6). More specifically, when tested versus DL, CNN, and EyeDeep-Net, SL-FRST enhances accuracy by about 2–12%. Similarly, compared to the other models, SL-FRST demonstrates higher precision values, with variation in percentage ranging from about 5–11%. Additionally, the F1-Score results indicate that SL-FRST is better than DL, CNN, and EyeDeep-Net, with around 3 to 9% improvements (Table 7).

Figures 5, 6, 7, 8, 9 and 10 indicate that SL-FRST is an exceptionally reliable model for precisely detecting positive cases, as it consistently demonstrates higher sensitivity and recall percentages than the other models, regardless of the number of sample counts. But DL consistently performs lower than the others across all metrics, indicating that it isn’t particularly effective at accurately identifying cases in the data set. A range of metrics for assessment demonstrates that the SL-FRST model is superior to the other models, confirming that it is successful for classification tasks on this dataset relative to the empirical analyses.

The computational cost of the proposed framework is primarily impacted by the chaotic map and adaptive optimization procedure employed by the EQBFOA algorithm. For an identified number of features, the FS has a time level of complexity O(n2). The EQBFOA optimization, including quantum rotation, increases the complexity by approximately O(m.k), where ‘m’ is the number of iterations and ‘k’ is the number of quantum bits. The time complexity of the SLSTM-STSA model is O(T.H2), where T is the sequence length, and H is the dimension of the hidden state. In summary, the integrated strategy guarantees the appropriate choice of features and their optimization; however, the computational complexity remains realistic for big data analysis.

The time complexity depends on the following components of the proposed approach. The preprocessing here is done by integrating the chaotic maps into the FS, and EQBFOA’s adaptive quantum rotation optimizes. The complexities are, therefore, as follows: The time complexity of the preprocessing is O(n log n), while that of the optimization is O(n2). In the SLSTM-STSA model’s advanced classification, a time complexity of O(n.m) is added for FE and time complexity for sequence processing. The ESAE method used in FE and dimensionality reduction performs O(n.m) operations, allowing the discarding of unimportant features. The next classifier is the ODNN, which requires O(n2.m) for training and O(f.h.w.c) for inference. Classification accuracy improves via improvements to the ODNN framework, but the computational cost is higher. By fixing problems with feature complexity, training models, and prediction, the ESAE-ODNN system performs an excellent job of early DED diagnosis.

Conclusion and future work

Early detection is imperative for successful treatment and improved patient quality of life in diagnosing and managing Dry Eye Disease (DED), an eye disease prevalent globally and frequently related to Meibomian Gland Dysfunction (MGD). Class imbalance, data noise, and high-dimensional feature spaces represent a few of the challenges the present research aims to eliminate to enhance Motor Imagery DED image classification. A novel standard in DED image processing is being indicated, the Enhanced Stacked Auto Encoder (ESAE) for FS and FE, when used with an ODNN for classification, which promises to enhance Classification Accuracy (CA) and reliability by the inclusion of chaotic maps, optimization methods, and improved frameworks. A phenomenal accuracy of 96.34% is attained by ESAE-ODNN through enhanced detection of features and comprehension of the complex relationships with MGD. The proposed approach is easy to navigate optimized, and demonstrates merit in early DED diagnosis. Experiments demonstrate that it superiors state-of-the-art methods, primarily in processing high-dimensional data and producing accurate FS. Regarding medical diagnosis and management, ESAE-ODNN demonstrates immense potential as another significant step in anticipating DED prediction.

Improvements to the quality in future research might include researching methods that render the DL and CNN models more efficient. Additional research into novel frameworks or including novel techniques like attention mechanisms or transfer learning could potentially enhance these algorithms’ performances. Also, in order to improve the models’ overall accuracy while addressing possible presumptions, the dataset could be improved by boosting the number of samples or balancing the class distributions. Additional improvements in classification performance may be feasible with the study of ensemble methods that integrate the positive features of several models. Overall, the accuracy and effectiveness of model-based classification for a particular field could be improved with regular attempts to refine models while improving datasets.

Data availability

The datasets used and/or analysed during the current study available from the corresponding author on reasonable request.

Change history

08 May 2025

A Correction to this paper has been published: https://doi.org/10.1038/s41598-025-99886-w

References

Storås, A. M. et al. Artificial intelligence in Dry Eye Disease. Ocul. Surf. 23, 74–86 (2022).

Nam, S. M., Peterson, T. A., Butte, A. J., Seo, K. Y. & Han, H. W. Explanatory model of Dry Eye Disease using health and nutrition examinations: machine learning and network-based factor analysis from a national survey. JMIR Med. Inf. 8(2), e16153 (2020).

Brahim, I., Lamard, M., Benyoussef, A. A. & Quellec, G. Automation of Dry Eye Disease quantitative assessment: A review. Clin. Exp. Ophthalmol. 50(6), 653–666 (2022).

Curia, F. Features and explainable methods for cytokines analysis of Dry Eye Disease in HIV-infected patients. Healthc. Anal.. 1, 100001 (2021).

Yang, H. K., Che, S. A., Hyon, J. Y. & Han, S. B. Integration of Artificial Intelligence into the Approach for diagnosis and monitoring of Dry Eye Disease. Diagnostics. 12(12), 3167 (2022).

Malik, S. et al. Data-driven approach for eye disease classification with machine learning. Appl. Sci. 9(14), 2789 (2019).

Abdelmotaal, H. et al. Detecting dry eye from ocular surface videos based on deep learning. Ocul. Surf. 28, 90–98 (2023).

Lu, C. W. et al. Impacts of air pollution and meteorological conditions on Dry Eye Disease among residents in a northeastern Chinese metropolis: A six-year crossover study in a cold region. Light: Sci. Appl. 12(1), 186 (2023).

Urbanski, G. et al. Tear metabolomics highlights new potential biomarkers for differentiating between Sjögren’s syndrome and other causes of dry eye. Ocul. Surf. 22, 110–116 (2021).

Rodriguez, D. A., Galor, A. & Felix, E. R. Self-report of severity of ocular pain due to light as a predictor of altered central nociceptive system processing in individuals with symptoms of Dry Eye Disease. J. pain. 23(5), 784–795 (2022).

Heidari, M., Noorizadeh, F., Wu, K., Inomata, T. & Mashaghi, A. Dry Eye Disease: Emerging approaches to disease analysis and therapy. J. Clin. Med. 8(9), 1439 (2019).

Raza, A. et al. Classification of eye diseases and detection of cataract using digital fundus imaging (DFI) and inception-V4 deep learning model. In 2021 International Conference on Frontiers of Information Technology (FIT) (pp. 137–142). IEEE. (2021), December.

Vyas, A. H. & Khanduja, V. A Survey on Automated Eye Disease Detection using Computer Vision Based Techniques. In 2021 IEEE Pune Section International Conference (PuneCon) (pp. 1–6). IEEE. (2021), December.

Arias, E. D., Fernández, E., Peral, A. & Gómez-Pedrero, J. A. Classification of Dry Eye Disease with machine learning techniques. Programa de Doctorado en Óptica, Optometría y Visión Facultad de Óptica y Optometría, 26. (2021).

Dharani, C., Tamilarasi, A., Chitra, K., Karthick, T. J. & Jeevitha, S. Eye Disease Prediction Among Corporate Employees Using Machine Learning Techniques (No. 10623). EasyChair. (2023).

Ren, X., Wang, Y., Wu, T., Jing, D. & Li, X. Binocular dynamic visual acuity in Dry Eye Disease patients. Front. NeuroSci. 17, 1108549 (2023).

Elsawy, A. et al. Multidisease deep learning neural network for the diagnosis of corneal diseases. Am. J. Ophthalmol. 226, 252–261 (2021).

Wang, Y., Shi, F., Wei, S. & Li, X. A deep learning model for evaluating Meibomian glands morphology from Meibography. J. Clin. Med. 12(3), 1053 (2023).

Sengar, N., Joshi, R. C., Dutta, M. K. & Burget, R. EyeDeep-Net: A multi-class diagnosis of retinal diseases using deep neural network. Neural Comput. Appl. 35(14), 10551–10571 (2023).

Dataset received from https://mgd1k.github.io/index.html

Vyas, A. H. et al. Tear film breakup time-based Dry Eye Disease detection using convolutional neural network. Neural Comput. Appl. 36(1), 143–161 (2024).

Swiderska, K. et al. A deep learning approach for meibomian gland appearance evaluation. Ophthalmol. Sci. 3(4), 100334 (2023).

Yeh, C. H., Graham, A. D., Stella, X. Y. & Lin, M. C. Enhancing meibography image analysis through artificial intelligence–driven quantification and standardization for Dry Eye Research. Transl. Vis. Sci. Technol. 13(6), 16–16 (2024).

Kim, S. et al. Deep learning-based fully automated grading system for Dry Eye Disease severity. Plos One, 19(3), e0299776. (2024).

Yokoi, N. et al. Dry Eye Subtype classification using Videokeratography and Deep Learning. Diagnostics. 14(1), 52 (2023).

Ji, Y. et al. Advances in artificial intelligence applications for ocular surface disease diagnosis. Front. Cell. Dev. Biol. 10, 1107689 (2022).

Siddiqui, S. et al. CG-Net: A novel CNN framework for gastrointestinal tract disease classification. Int. J. Imaging Syst. Technol. 34(3), e23081. (2024).

Zhou, Z., Rahman Siddiquee, M. M., Tajbakhsh, N. & Liang, J. Unet++: A nested u-net architecture for medical image segmentation. In Deep Learning in Medical Image Analysis and Multimodal Learning for Clinical Decision Support: 4th International Workshop, DLMIA 2018, and 8th International Workshop, ML-CDS 2018, Held in Conjunction with MICCAI 2018, Granada, Spain, September 20, 2018, Proceedings 4 (pp. 3–11). Springer International Publishing. (2018).

Spoorthi, K., Prasad, D. K., Pal, S., Venkatakrishnan, S. & Kulkarni, M. S. A Novel method using deep learning technique for automatic grading and classification (of Interferometry Video Frames for Dry Eye Analysis, 2023).

Acknowledgements

NA.

Funding

Not Applicable.

Author information

Authors and Affiliations

Contributions

Conceptualization, Methodology, Software, Validation, Formal analysis, Investigation, Resources, Data CurationWriting - Original Draft, Writing - Review & Editing, Visualization : R. Steffi and P. Suresh.

Corresponding author

Ethics declarations

Competing interests

The authors declare no competing interests.

Additional information

Publisher’s note

Springer Nature remains neutral with regard to jurisdictional claims in published maps and institutional affiliations.

The original online version of this Article was revised: In the original version of this Article the authors were incorrectly affiliated. Full information regarding this can be found in the correction published with this article.

Rights and permissions

Open Access This article is licensed under a Creative Commons Attribution-NonCommercial-NoDerivatives 4.0 International License, which permits any non-commercial use, sharing, distribution and reproduction in any medium or format, as long as you give appropriate credit to the original author(s) and the source, provide a link to the Creative Commons licence, and indicate if you modified the licensed material. You do not have permission under this licence to share adapted material derived from this article or parts of it. The images or other third party material in this article are included in the article’s Creative Commons licence, unless indicated otherwise in a credit line to the material. If material is not included in the article’s Creative Commons licence and your intended use is not permitted by statutory regulation or exceeds the permitted use, you will need to obtain permission directly from the copyright holder. To view a copy of this licence, visit http://creativecommons.org/licenses/by-nc-nd/4.0/.

About this article

Cite this article

Rajan, S., Ponnan, S. An efficient enhanced stacked auto encoder assisted optimized deep neural network for forecasting Dry Eye Disease. Sci Rep 14, 24945 (2024). https://doi.org/10.1038/s41598-024-75518-7

Received:

Accepted:

Published:

Version of record:

DOI: https://doi.org/10.1038/s41598-024-75518-7