Abstract

Neural networks are reported to be vulnerable under minor and imperceptible attacks. The underlying mechanism and quantitative measure of the vulnerability still remains to be revealed. In this study, we explore the intrinsic trade-off between accuracy and robustness in neural networks, framed through the lens of the “uncertainty principle”. By examining the fundamental limitations imposed by this principle, we reveal how neural networks inherently balance precision in feature extraction with susceptibility to adversarial perturbations. Our analysis highlights that as neural networks achieve higher accuracy, their vulnerability to adversarial attacks increases, a phenomenon rooted in the uncertainty relation. By using the mathematics from quantum mechanics, we offer a theoretical foundation and analytical method for understanding the vulnerabilities of deep learning models.

Similar content being viewed by others

Introduction

The impact of deep neural networks (DNNs) spans a wide array of applications, from image classification1 and speech recognition2 to strategic gameplay3,4, protein folding5, semiconductor design6, particle discovery7, and quantum system analysis8,9. Despite their remarkable success, these models have recently come under scrutiny due to their susceptibility to adversarial attacks-subtle perturbations imperceptible to humans that can dramatically degrade performance10,11.

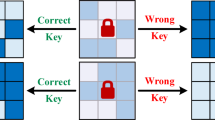

Empirical12,13,14,15,16,17,18,19,20 and theoretical21,22,23,24 studies have shown that these networks can be easily fooled by minor, non-random perturbations, leading to erroneous predictions. A notable example is the Fast Gradient Sign Method (FGSM) attack22 (Fig. 1 ), highlighting the vulnerabilities of DNNs. If such slight disruptions can undermine their performance, the reliability of technologies relying on state-of-the-art deep learning could be at risk. To make the neural networks more robust, the perturbed inputs are often reused as additional training data. These strategies are known as adversarial training23,25,26,27,28,29,30,31, where the robustness is strengthened in sacrifice of the test accuracy, suggesting a fundamental trade-off between accuracy and robustness in neural networks. Therefore, understanding the trade-off between accuracy and robustness in neural networks is crucial, especially given their increasing role in critical decision-making systems, from autonomous vehicles to medical diagnostics and secure authentication systems.

Adding an imperceptible non-random noise to the images, the network will fail to predict the correct label. The trained Googlenet32 on Cifar-1033 gives a 90.78% accuracy on the test set and only 3.67% accuracy on the slightly attacked images. The attack, called FGSM22, is achieved via the transformation \(x = x_{0}+\epsilon \cdot \text {sign}(\nabla _{x}l(f(x,\theta ),Y)| _{x=x_0})\), where \(x_{\text {0}}\) denotes the input images of the training set and and Y is the true label for image \(x_{0}\). x denotes the image to be attacked, \(\epsilon =8/255\) and \(\text {sign}(\nabla _{x}l(f(x,\theta ),Y)| _{x=x_0})\) gives the non-random noise with \(l(f(x,\theta ),Y)\) denoting the loss function. \(\theta\) denotes the weights of the trained network.

In our previous work, we uncovered an intrinsic characteristic of neural networks: their vulnerability shares a mathematical equivalence with the uncertainty principle in quantum physics34,35, which demonstrates the inherent trade-off between the network accuracy and its robustness. This is observed when gradient-based attacks22,36,37,38,39,40 on the inputs are identified as their conjugates. Despite classical approximation theorems asserting that neural networks can approximate continuous functions with arbitrary precision41,42,43,44, we have found that this uncertainty principle is a natural result of minimizing the loss function, which is inevitable. Since modern network structures always include a loss function with respect to some inputs, we can “design” the conjugates by taking the gradient of the loss function with respect to the input variables. These conjugates, when involved in the inputs, can drastically decrease prediction accuracy.

However, the theoretical nature and complexity of our previous findings have made it confusing for readers to fully grasp the implications. To address this, we present a new study in the present work, which aims to elucidate the concept of the “uncertainty principle of neural networks” through a simple example. Here, we focus on a binary classification task where the input is a random number between [−1, 1]. If the number is greater than 0, it is classified as category 1; otherwise, it is classified as category 0. By training a neural network, we investigate the network’s behavior in terms of the loss values and the corresponding frequency domain across different epoch numbers.

Through this straightforward example, we reveal that the complementary principle45, a cornerstone of quantum physics, applies to neural networks, imposing a limit on their simultaneous accuracy and robustness. Furthermore, by integrating the mathematics developed in physics into the study of neural networks, we illuminate new pathways to investigate and explain the various properties of neural networks. This intersection of disciplines provides the potential to enhance our understanding of the theoretical underpinnings of these black-box networks.

Results

Explanation of uncertainty relation in neural networks

In this section, we draw an analogy to the uncertainty principle in signal processing via Fourier transforms46 to provide an intuitive understanding. However, for neural networks, a more rigorous mathematical formalism of the uncertainty relation can be conveniently expressed using Dirac notations from quantum mechanics, which is detailed in the Methods section for interested readers.

Consider a loss function \(l(f(x,\theta ),Y)\) of a trained neural network with a given label \(Y\). We introduce a new function \(\psi _{Y}(x)\), named as neural packet:

where the normalization coefficient \(\beta\) ensures that the integral of the squared neural packet over its input space equals one. The Fourier transform converts the function \(\psi _{Y}(x)\) into its conjugate space as:

where \(p\) is the conjugate variable of \(x\), and vise versa.

The uncertainty principle in Fourier transform primarily refers to the resolution limit between the time domain (here in neural networks, it is the domain of the input x) and the frequency domain (i.e., \(p\), which is the Fourier transform of input) of a signal: for a signal \(\psi _{Y}(x)\), the standard deviations in the time domain and frequency domain are constrained by the relation (see derivations in Methods):

where the explicit formulas for calculating \(dx\) and \(dp\) can be found in Methods. This uncertainty principle has important applications in both signal processing and quantum mechanics, reflecting the inherent limitations when converting between different domains.

In the context of a neural network, Eq. (3) implies that for a trained network with loss function \(l(f(x,\theta ),Y)\), it is impossible for the network to extract features of both \(x\) and \(p\) with arbitrary high precision simultaneously. Here, the term “feature” is an abstract concept that depends on the specific scenarios. For instance, in classification tasks, inputs belonging to the same category share common features. These inputs typically form a distribution representing the features, with some inputs at the center of the distribution and others at the boundaries. During training, we aim for \(dx\) associated with label \(Y\) to be as small as possible to achieve high test accuracy. This analogy holds for other tasks as well, where categories are replaced by abstract concepts.

In a standard classification task, the neural network primarily extracts features of the input \(x\) without considering its conjugate \(p\). However, the scenario changes when gradient-based attacks are involved. To illustrate this, we perform the Fourier transform of the gradient of \(\psi _{Y}(x)\) with respect to \(x\):

where we observe that the gradient involves the distribution \(\hat{\psi }_{Y}(p)\) and the conjugate variable \(p\), indicating that the neural network need to extract the features of p in order to accurately extract the features of the gradient, i.e., the gradient-based “attack”. Thus, when the gradient is added to the input variables, the network is compelled to identify both \(x\) and \(p\) with high accuracy, which is prohibited by the uncertainty relation in Eq. (3). Hence, if the network is trained to have higher accuracy on input x, it will be less accurate in extracting features on p.

In practice, the gradient term can appear either in the input or in the label, with the former contributing to adversarial attacks and the latter to generation tasks. In this paper, we focus solely on the former, leaving the discussion of generation tasks for future work.

Illustration of the neural packet in the binary case

Since the mathematical proof of the uncertainty principle is achieved via the Dirac notations in quantum theory, analogous to the wave packet, we use the term “neural packet” to denote the normalized loss function (see the comparison table in Methods). To elucidate the concept of the “neural packet” and its relationship with the uncertainty principle, we use a simple binary classification task. In the binary classification task, the input domain is from -1 to 1. If the input \(-1 \le x \le 0\), we tabulate it to be class “0”. If the input \(0 \le x \le 1\), we tabulate it to be class “1”. The loss function of a neural network measures the difference between the predicted and true outputs and is integrable over the input space. Figure 2(A) exhibits the loss function \(l(f(x,\theta ),Y)\) of a 1-D binary classification network under different training epochs, where \(f(x, \theta )\) denotes the output of the network with input x, and weight parameters \(\theta\). Y refers to the label corresponding to input x. The value of the loss function reaches a minimum at the correctly classified input regions and starts to increase as inputs approach the classification interfaces, resembling the wave packet in quantum mechanics.

Here, since the input space of type “0” is in the range \([-1, 0]\), \(\psi _{Y=``0''}\) is normalized in range \([-1, 0]\). Similarly, \(\psi _{Y=``1''}\) is normalized in range [0, 1]. In the case we have obtained the two wave packets, we can calculate the corresponding averaged deviation of input x measured by the probability density \(\psi _{Y}(x)\), i.e., \(dx_1\) and \(dx_2\) (the explicit formulas are provided in Methods).

As shown in Fig. 2(B), we can observe that with the increase of the test accuracy, \(dx_1\) and \(dx_2\) both decrease, corresponding to a growing slope of the neural packet at the interface. Hence, the prediction ability of the network increases with the decrease of dx.

Accurate networks are vulnerable

When training a neural network, the test accuracy of the classifier typically increases gradually before reaching a plateau. Concurrently, we can evaluate the variation in robust accuracy throughout the training process. Analogous to the FGSM attack, we introduce an attack without the sign function:

where \(x^\prime\) represents the perturbed input, and \(\epsilon\) is a constant controlling the magnitude of the perturbation. \(Y^{*}\) denotes the specific type. For the purposes of this discussion, we refer to the “+” sign as an “attack” and the “-” sign as an “anti-attack.”

As illustrated in Eq. 5, the added term induces the two attacks. Focusing on the neural packet of category “0” in Fig. 2, whose neural packet is defined in the range [-1, 0], we observe that near the boundary at \(x=0\), the gradient of the loss function with respect to the input x is always positive. Consequently, adding the gradient will shift the points towards other classes. Similarly, for category “1,” the gradient is negative near the boundary, and adding the negative gradient will also shift the points towards class “0.”

Therefore, in the context of simple binary classification, we can generally conclude that the more accurately the model is trained, the steeper the boundary becomes. If we apply the “+” sign in Eq. (5), the network will be attacked, resulting in decreased accuracy. Conversely, if we apply the “-” sign, the network will be “anti-attacked,” leading to increased accuracy.

Figure 3(A) shows the evolution of test, attacked, and anti-attacked accuracies throughout the training process. In the initial epochs, we observe an increase in all three accuracies, indicating that the network is fitting the data distribution. As training progresses, a discrepancy emerges between test and attacked accuracies, while the anti-attacked accuracy continues to increase until it reaches a plateau. This observation aligns with the descriptions in literature.

The phenomena presented in Fig. 3(A) can be attributed to a deeper and more fundamental mechanism, which we term the “uncertainty principle of neural networks”. To elucidate how the uncertainty relation manifests, we also compute the average deviation of the conjugate variable p, denoted as \(dp_1\) and \(dp_2\), measured by the probability density \(\hat{\psi }_{Y}(p)\), where \(Y=0 \text { or } 1\) corresponding to the two categories. As shown in Fig. 3(B), a decrease in \(dx_1\) and \(dx_2\) corresponds to an increase in \(dp_1\) and \(dp_2\). This inverse relationship highlights the network’s inherent limitation in simultaneously extracting features of both x and p with arbitrary precision, similar to the uncertainty principles observed in signal processing and quantum mechanics.

To understand the meaning of dp, we use the idea of the Fourier transform on the “neural packet” (i.e., the normalized loss function). In Fourier transform, input x is transformed into a new space of p. In Figs. 2 and 3(B) we see that dx measures the accuracy of x (where a smaller dx indicates higher classification accuracy), and similarly dp measures the accuracy of p (where a smaller dp indicates higher accuracy in measuring p with \(\hat{\psi }_{Y}(p)\)). Since both x and p cannot be measured accurately simultaneously, a trade-off between x and p is evident. In the simple binary case, dp aligns with robust accuracy, where a larger dp indicates lower robust accuracy. Therefore, dp can be viewed as a manifestation of the network’s vulnerability: larger value of dp indicates more vulnerable network.

Building on this understanding, we can interpret the attacks through the lens of the uncertainty principle. During an attack, all data points are perturbed by “adding” the gradient terms via Eq. (5). These gradient terms, as conjugates of the inputs in terms of the normalized loss function \(\psi _{Y}(x)\), forces the network to accurately discern both the input and the added gradients (atttacks). This process, however, is strictly limited by the uncertainty relation, leading to a decrease of the test accuracy. On the other hand, during anti-attack scenarios, points are shifted towards their correct categories, all data points are adjusted by “subtracting” the conjugates, eliminating the need for the network to identify the gradient terms, which in turn improves accuracy. Therefore, a larger dp value indicates a higher susceptibility of the network to gradient-based attacks. This relationship underscores the trade-off between the network’s precision in feature extraction and its vulnerability to perturbations.

Why the uncertainty principle to understand neural network vulnerabilities?

In our simple binary classification task, the trade-off between accuracy and robustness can be understood through the slope at the decision boundaries, i.e., the direction of the attack. If this vulnerability phenomenon can be explained in such a straightforward manner, one might question the necessity of adopting the concept of the uncertainty principle.

The response to this question can be divided into three key points. Firstly, in a simple binary scenario, the alignment of the slope at the interface with the uncertainty phenomenon is readily apparent. In contrast, in more complex real-world cases, such as the classification of the ImageNet dataset, network accuracy often significantly declines due to minor, imperceptible perturbations. This suggests that a substantial portion of images lie near classification boundaries, indicating that there exist many singularities like in Fig. 2(A) leading to the network to be unstable. This complex phenomenon cannot be easily understood quantitatively through the lens of the slopes alone. However, by applying the more fundamental concept of the uncertainty principle, these phenomena become more intuitive. Introducing conjugates into the inputs inherently leads to a decrease in network performance, aligning with the principles of uncertainty which can be quantified by dx and dp.

Secondly, the uncertainty principle provides a complementary perspective in terms of the input \(x\) and its conjugate \(p\), and asserts that a neural network cannot extract features of both \(x\) and \(p \sim \frac{\partial l(f(x,\theta ),Y)}{\partial x}\) with arbitrary precision. This is a general and profound conclusion in physics that applies to all relevant tasks, not limited to adversarial attacks. For example, the uncertainty principle is also valid in generative networks. In a generative task, if the labels of the dataset include gradient information of the trained neural network, the network’s accuracy will be inherently limited, a point we will demonstrate in our future work.

Thirdly, in practical scenarios, inputs are typically defined in high-dimensional spaces. For instance, in the MNIST dataset, each input image resides in a 28\(\times\)28-dimensional space, which is too large to visualize effectively. Additionally, different classes often intersect, blurring both the boundaries and the gradients. These complexities make it difficult to understand why highly accurate networks are so vulnerable to tiny perturbations. The uncertainty principle, however, does not rely on the dimensions of the input space and the network, making it a unuversal property for all neural networks.

By adopting the concept of the uncertainty relation, we know that the accuracy-robustness trade-off is an inherent property of all neural networks. Readers are encouraged to refer to our previous work for empirical verification of the uncertainty relation on the MNIST and CIFAR-10 datasets34.

Conclusion and future directions

In our previous works34,35, we mathematically proved the uncertainty principle in neural networks. However, the theoretical nature and complexity of those studies made the concept difficult to grasp intuitively. In this new work, we aim to provide a clearer and more accessible explanation through a simple binary classification task. By training a neural network to classify random numbers between -1 and 1, we illustrate how the trade-off between accuracy and robustness manifests in a tangible example.

Our findings reveal that as the neural network becomes more accurate, its susceptibility to adversarial attacks increases. This phenomenon is quantified using the concept of a “neural packet,” which provides a normalized representation of the error distribution in the input space. The binary classification task serves as a straightforward yet powerful demonstration of this trade-off, making the underlying principles more accessible and easier to understand.

The implications of this uncertainty principle are profound, particularly for the development and deployment of large AI models47,48. These models learn deep features and their interrelations, which are crucial for tasks such as natural language processing, image recognition, and autonomous decision-making (e.g., self-driving cars). Since these features can form neural packets, they are limited by the uncertainty principle - accurate models are vulnerable. Therefore, it is essential for the community to recognize the universality of this principle and explore ways to optimize it.

In conclusion, our study provides an analysis of the uncertainty principle in neural networks through the lens of binary classification. By highlighting the intrinsic trade-off between accuracy and robustness, we pave the way for understanding the resilience of the neural networks.

Understanding the underlying mechanisms that contribute to the vulnerability of neural networks opens avenues for developing more robust network structures in future research. One promising approach is inspired by the work of Frank Tipler49, who demonstrated how eliminating singularities in classical physics can formally reproduce Bohm’s global quantum potential. In the realm of neural networks, this concept translates to the formation of distinct boundaries between different classes or concepts. In high-dimensional spaces, these boundaries become more pronounced, contributing to the networks’ vulnerability. Our research suggests that this phenomenon is inherent and unavoidable due to the underlying uncertainty principle. Although it may not be possible to completely eliminate this vulnerability, we can mitigate it using the methods proposed by Tipler. By applying the relevant mathematical frameworks, we may be able to develop more robust neural networks without relying heavily on extensive adversarial training.

Methods

Uncertainty relation of neural networks

Formulas and notations for neural networks

Without loss of generality, we can assume that the loss function \(l(f(X,\theta ),Y)\) is square integrableFootnote 1,

Eq. (6) allows us to further normalize the loss function as

so that

For convenience, we refer \(\psi _{Y}(X)\) as a neural packet in the later discussions. Note that under different labels Y, a neural network will be with a set of neural packets.

An input \(X=(x_{1},...,x_{i},...,x_{M})\) with M components can be seen as a point in the multi-dimensional space, where the numerical values of \((x_{1},...,x_{i},...,x_{M})\) correspond to the input values. The input and attack operators of the neural packet \(\psi _{Y}(X)\) can then be defined as:

The average input value at \(x_{i}\) associated with neural packet \(\psi _{Y}(X)\) can be evaluated as

Since \(\psi _{Y}(X)\) corresponds to a purely real number without imaginary part, the above equation is equivalent to:

Besides, the attack operator \(\hat{p}_{i}=\frac{\partial }{\partial x_{i}}\) corresponds to the conjugate variable of \(x_{i}\). And we can obtain the average value for \(\hat{p_{i}}\) as

Derivation of the uncertainty relation

The uncertainty principle of a trained neural network can then be deduced by the following theorem:

Theorem 1

The standard deviations \(\sigma _{{p}_{i}}\) and \(\sigma _{{x}_{i}}\) corresponding to the attack and input operators \(\hat{p_{i}}\) and \(\hat{x_{i}}\), respectively, are restricted by the relation:

Proof

We first introduce the standard deviations \(\sigma _{a}\) and \(\sigma _{b}\) corresponding to two general operators \(\hat{A}\) and \(\hat{B}\). Then it follows that:

In general, for any two unbounded real operators \(\langle \hat{a}\rangle\) and \(\langle \hat{b}\rangle\), the following relation holds

If we further replace \(\hat{a}\) and \(\hat{b}\) in Eq. (15) by operators \(\hat{a}\langle \hat{a}^{2}\rangle ^{-1/2}\) and \(\hat{b}\langle \hat{b}^{2}\rangle ^{-1/2}\), we can then obtain the property \(2\langle \hat{a}^{2}\rangle ^{1/2}\langle \hat{b}^{2}\rangle ^{1/2} \ge i\langle \hat{a}\hat{b}-\hat{b}\hat{a}\rangle\), which gives the basic bound for the commutator \([\hat{a},\hat{b}]\equiv \hat{a}\hat{b}-\hat{b}\hat{a}\),

Seeing the fact that \([\hat{a},\hat{b}]=[\hat{A},\hat{B}]\), we finally obtain the uncertainty relation

In terms of the neural networks, we can simply replace operators \(\hat{A}\) and \(\hat{B}\) by \(\hat{p}_{i}\) and \(\hat{x}_{i}\) introduced in Eq. (9), and this leads to

where we have used the relation

\(\square\)

Note that for a trained neural network, \(\psi _{Y}(X)\) depends on the dataset and the structure of the network.

Equation (18) is a general result for general neural networks (see extension to the generation network in supplementary material). For convenience, we compare the formulas in quantum physics with those used in neural networks in Table 1 to facilitate easy understandings for readers.

The binary classifier

Data generation

To generate the dataset for the binary classification, we created a function that produces data points randomly distributed between −1 and 1. The labels are binary, with values less than 0 labeled as 0 and values greater than or equal to 0 labeled as 1. This simple binary classification task allows us to evaluate the performance and robustness of our neural network model.

Neural network architecture

The neural network architecture consists of four fully connected layers with batch normalization and dropout layers to prevent overfitting. The network uses Leaky ReLU activation functions. The architecture is designed to balance complexity and performance, ensuring that the model can learn effectively from the data while avoiding overfitting.

Training procedure

The network was trained using stochastic gradient descent (SGD) with a learning rate of 0.01. The training process involved 1000 epochs, and the model was evaluated every 10 epochs. During each epoch, the model parameters were updated to minimize the cross-entropy loss between the predicted and true labels. The training process was monitored by evaluating the model’s performance on a validation set at regular intervals.

Evaluation metrics

We evaluated the model using several metrics, including loss, accuracy, attacked accuracy (robustness to gradient-based attacks), and anti-attacked accuracy. The loss and accuracy were computed by comparing the model’s predictions with the true labels.

To evaluate the model’s resilience to attacks, we implemented a modified version of the Fast Gradient Sign Method (FGSM) attack (Eq. (5)). By introducing both the plus and minus effect of the gradient without the “sign” function, we test the model’s ability to maintain performance under different types of adversarial inputs.

Quantum inspired metrics

At some training epochs, we compute the quantum metrics such as dx and dp to gain insights into the model’s behavior from a quantum perspective. These metrics were derived from the loss function and its gradients, providing a unique perspective on the model’s performance. The dx metric measures the spread of the input data, while the dp metric quantifies the resilience of the model under attacks.

The calculation of dx and dp in the binary example involves several steps:

-

1.

Normalization Constant We first compute the normalization constant, \(\beta\), for the loss function over a specified range. This ensures that the loss function is properly scaled.

$$\begin{aligned} \beta = \sqrt{\int _{a}^{b} l(f(x, \theta ), Y)^2 \, dx} \end{aligned}$$ -

2.

Neural Packet Using the normalization constant, we define the neural packet, \(\psi (x)\), which represents the normalized loss function.

$$\begin{aligned} \psi (x) = \frac{l(f(x,\theta ),Y)}{\sqrt{\beta }}, \end{aligned}$$where the label \(Y\in {0,1}\).

-

3.

Calculation of dx The dx metric is calculated as the standard deviation of the input (i.e., the input operator34 \(\hat{x}\)) weighted by the neural packet.

$$\begin{aligned} dx = \sqrt{\int _{a}^{b} \psi (x)(\hat{x} - \langle \hat{x} \rangle )^2 \psi (x) \, dx} \end{aligned}$$where

$$\begin{aligned} \hat{x} = x, \text { and } \langle x \rangle = \int _{a}^{b} x \psi (x)^2 \, dx. \end{aligned}$$ -

4.

Calculation of dp: The dp metric is calculated as the standard deviation of the gradient (i.e., the attack operator34 \(\hat{p}\)) of the neural packet.

$$\begin{aligned} dp = \sqrt{\int _{a}^{b} \psi (x) (\hat{p} - \langle \hat{p} \rangle )^2\psi (x) \, dx} = \sqrt{\int _{a}^{b} \psi (x) (\hat{p}^2 - 2\hat{p}\langle \hat{p} \rangle + \langle \hat{p} \rangle ^2)\psi (x) \, dx}, \end{aligned}$$where

$$\begin{aligned} \langle \hat{p} \rangle = \int _{a}^{b} \frac{d\psi (x)}{dx} \psi (x) \, dx{\textbf {, }}\hat{p}\psi (x)=\frac{\partial \psi (x)}{\partial x}\text {, and }\hat{p}^2\psi (x)=\frac{\partial ^2\psi (x)}{\partial x^2} \end{aligned}$$

These calculations provide a quantitative measure of the model’s accuracy and robustness, allowing us to assess the trade-off between these two aspects.

Model evaluation

We evaluated the model every 10 epochs over a total of 1000 epochs. For each evaluation, we generated 100 uniformly distributed points in the range [-1, 1] to compute the loss curves. We also performed gradient-based and anti-gradient-based attacks with an epsilon value of 0.05 to assess the model’s resilience under attacks. The results were averaged over 50 independent runs to ensure robustness and reliability.

Data availability

The datasets and codes used and/or analyzed during the current study are available from the corresponding author on reasonable request.

Change history

16 October 2025

The original online version of this Article was revised: The original version of this Article contained errors in References 16, 27, 30 and 41. The References have been updated.

Notes

In practical applications, it is rational to only consider the loss function in a limited range \(l(f(X,\theta ),Y)<C\) under a large constant C, since samples out of this range can be seen as outliers and meaningless to the problem. The loss function can then be generally guaranteed to be square integrable in this functional range.

References

Krizhevsky, A., Sutskever, I. & Hinton, G. E. Imagenet classification with deep convolutional neural networks. Commun. ACM 60, 84–90. https://doi.org/10.1145/3065386 (2017).

Hinton, G. et al. Deep neural networks for acoustic modeling in speech recognition: The shared views of four research groups. IEEE Signal Process. Mag. 29, 82–97. https://doi.org/10.1109/MSP.2012.2205597 (2012).

Silver, D. et al. Mastering the game of go with deep neural networks and tree search. Nature 529, 484–489. https://doi.org/10.1038/nature16961 (2016).

Schrittwieser, J. et al. Mastering atari, go, chess and shogi by planning with a learned model. Nature 588, 604–609. https://doi.org/10.1038/s41586-020-03051-4 (2020).

Senior, A. W. et al. Improved protein structure prediction using potentials from deep learning. Nature 577, 706–710. https://doi.org/10.1038/s41586-019-1923-7 (2020).

Mirhoseini, A. et al. A graph placement methodology for fast chip design. Nature 594, 207–212. https://doi.org/10.1038/s41586-021-03544-w (2021).

Baldi, P., Sadowski, P. & Whiteson, D. Searching for exotic particles in high-energy physics with deep learning. Nat. Commun. 5, 4308. https://doi.org/10.1038/ncomms5308 (2014).

Carleo, G. & Troyer, M. Solving the quantum many-body problem with artificial neural networks. Science 355, 602–606. https://doi.org/10.1126/science.aag2302 (2017).

Pang, L.-G. et al. An equation-of-state-meter of quantum chromodynamics transition from deep learning. Nat. Commun. 9, 210. https://doi.org/10.1038/s41467-017-02726-3 (2018).

Szegedy, C. et al. Intriguing properties of neural networks. In International Conference on Learning Representations, Banff: Canada, 14–16 January (2014).

Ren, K., Zheng, T., Qin, Z. & Liu, X. Adversarial attacks and defenses in deep learning. Engineering 6, 346–360. https://doi.org/10.1016/j.eng.2019.12.012 (2020).

Su, D. et al. Is robustness the cost of accuracy? – a comprehensive study on the robustness of 18 deep image classification models. In European Conference on Computer Vision, Munich: Germany, 8–14 September (2018).

Eykholt, K. et al. Robust physical-world attacks on deep learning visual classification (2018). In Conference on Computer Vision and Pattern Recognition, Salt Lake City: USA, 18–23 June (2018).

Jia, R., Liang, P. Adversarial examples for evaluating reading comprehension systems. In Conference on Empirical Methods in Natural Language Processing, Copenhagen: Denmark, 9–11 September (2017).

Chen, H., Zhang, H., Chen, P.-Y., Yi, J., Hsieh, C.-J. Attacking visual language grounding with adversarial examples: A case study on neural image captioning. Annual Meeting of the Association for Computational Linguistics, Melbourne: Australia, 15–20 July (2018).

Carlini, N. and Wagner, D. "Audio Adversarial Examples: Targeted Attacks on Speech-to-Text," IEEE Security and Privacy Workshops (SPW), San Francisco, CA, USA, 1–7, https://doi.org/10.1109/SPW.2018.00009 (2018).

Xu, H., Caramanis, C. & Mannor, S. Sparse algorithms are not stable: A no-free-lunch theorem. IEEE Trans. Pattern Anal. Mach. Intell. 34(1), 187–193 (2012).

Benz, P., Zhang, C., Karjauv, A., Kweon, I. S. Robustness may be at odds with fairness: An empirical study on class-wise accuracy. In Neural Information Processing Systems, Virtual, 6–12th December (2020).

Morcos, S.A., Barrett, G.T.D., Rabinowitz, C.N., Botvinick, M. On the importance of single directions for generalization. In International Conference on Learning Representations, Vancouver: Canada, 30 April–5 May (2018).

Springer, J., Mitchell, M. & Kenyon, G. A little robustness goes a long way: Leveraging robust features for targeted transfer attacks. Neural Inf. Process. Syst., Virtual 34, 9759–9773 (2021).

Zhang, H. et al. Theoretically principled trade-off between robustness and accuracy. In International Conference on Machine Learning, California: USA, 9–15 June (2019).

Goodfellow, J. I., Shlens, J. Szegedy, C. Explaining and harnessing adversarial examples. In International Conference on Learning Representations, San Diego: USA, 7–9 May (2015).

Tsipras, D., Santurkar, S., Engstrom, L., Turner, A., Madry, A. Robustness may be at odds with accuracy. In International Conference on Learning Representations, Vancouver: Canada, 30 April–3 May (2018).

Colbrook, J. M., Antun, V. & Hansen, C. A. The difficulty of computing stable and accurate neural networks: On the barriers of deep learning and smale’s 18th problem. PNAS 119(12), e2107151119 (2021).

Yang, Y.-Y., Rashtchian, C., Zhang, H., Salakhutdinov, R. R. & Chaudhuri, K. A closer look at accuracy versus robustness. Neural Inf. Process. Syst. 33, 8588–8601 (2020).

Arani, E., Sarfraz, F., Zonooz, B. Adversarial concurrent training: Optimizing robustness and accuracy trade-off of deep neural networks. In British Machine Vision Conference, Virtual, 7–10 September (2020).

Arcaini, P., Bombarda, A., Bonfanti, S. and Gargantini, A. "ROBY: a Tool for Robustness Analysis of Neural Network Classifiers," 14th IEEE Conference on Software Testing, Verification and Validation (ICST), Porto de Galinhas, Brazil, 442–447, https://doi.org/10.1109/ICST49551.2021.00057 (2021).

Sehwag, V. et al. Improving adversarial robustness using proxy distributions. In International Conference on Learning Representations, Virtual, 3–7 May (2021)

Leino, K., Wang, Z., Fredrikson, M. Globally-robust neural networks. In International Conference on Machine Learning, Virtual, 18–24 July (2021).

Antun, V., Renna, F., Poon C., Adcock, B. & Hansen., A.C. On instabilities of deep learning in image reconstruction and the potential costs of AI, Proceedings of the National Academy of Sciences, 117(48) 30088–30095, https://doi.org/10.1073/pnas.1907377117 (2020).

Rozsa, A., Günther, M., Boult, E.T. Are accuracy and robustness correlated? In International Conference on Machine Learning and Applications, Anaheim: USA, 18–20 December (2016)

Zhang, J.-J., Zhang, D.-X., Chen, J.-N., Pang, L.-G., Meng, D. Robust and Test accuracy of the IFA pipeline and the frequencies related https://doi.org/10.7910/DVN/FKFJZQ (2021).

Krizhevsky, A. Learning multiple layers of features from tiny images. https://www.cs.toronto.edu/kriz/cifar.html (2009).

Zhang, J.-J. & Meng, D. Quantum-inspired analysis of neural network vulnerabilities: The role of conjugate variables in system attacks. Natl. Sci. Rev. https://doi.org/10.1093/nsr/nwae141 (2024).

Zhang, J.-J., Zhang, D.-X., Chen, J.-N., Pang, L.-G. On the uncertainty principle of neural networks. arXiv:abs/2205.01493 (2022).

Kurakin, A., Goodfellow, J.I., Bengio, S. Adversarial examples in the physical world. In International Conference on Learning Representations, San Juan: Puerto Rico, 2–4 May (2016).

Madry, A., Makelov, A., Schmidt, L., Tsipras, D., Vladu, A. Towards deep learning models resistant to adversarial attacks. In International Conference on Learning Representations, Vancouver: Canada, 30 April–3 May (2018).

Papernot, N. et al. The limitations of deep learning in adversarial settings. In European Symposium on Security and Privacy, Saarbrücken: Germany, 21–24 March (2016).

Moosavi-Dezfooli, S.-M., Fawzi, A., Frossard, P. Deepfool: A simple and accurate method to fool deep neural networks. In Conference on Computer Vision and Pattern Recognition, Las Vegas: USA, 27–30 June (2016).

Modas, A., Moosavi-Dezfooli, S.-M. & Frossard, P. Sparsefool: A few pixels make a big difference. In Conference on Computer Vision and Pattern Recognition, Long Beach: USA, 15–20 (2019).

Cybenko, G. Approximations by superpositions of a sigmoidal function. Math Control Signals Syst. 2, 303–314 (1989).

Hornik, K., Stinchcombe, M. & White, H. Multilayer feedforward networks are universal approximators. Neural Netw. 2, 359–366. https://doi.org/10.1016/0893-6080(89)90020-8 (1989).

Gelenbe, E. Random neural networks with negative and positive signals and product form solution. Neural Comput 1, 502–510. https://doi.org/10.1162/neco.1989.1.4.502 (1989).

Gelenbe, E., Mao, Z.-H. & Li, Y.-D. Function approximation with spiked random networks. IEEE Trans. Neural Netw. 10, 3–9. https://doi.org/10.1109/72.737488 (1999).

Bohr, N. On the notions of causality and complementarity. Science 111, 51–54. https://doi.org/10.1126/science.111.2873.51 (1950).

Bracewell, R. N. The Fourier transform and its applications (McGraw-Hill, London, 1986).

Devlin, J., Chang, M. W., Lee, K., Toutanova, K. Bert: Pre-training of deep bidirectional transformers for language understanding (2019). arXiv:1810.04805.

OpenAI et al. Gpt-4 technical report. (2024). arXiv:2303.08774.

Tipler, F. J. Quantum nonlocality does not exist. In Proceedings of the National Academy of Sciences, Vol. 111, 11281–11286, https://doi.org/10.1073/pnas.1324238111 (2014). https://www.pnas.org/doi/pdf/10.1073/pnas.1324238111.

Acknowledgements

The work is partly supported by the National Natural Science Foundation of China (NSFC) under the grant number 12105227, 12405318 and 12405318.

Author information

Authors and Affiliations

Contributions

Jun-Jie Zhang and Jian-Nan Chen designed and analysed the results and wrote the manuscript, Jun-Jie Zhang and Xiu-Cheng Wang conducted the numerical experiments, and De-Yu Meng supervised the work. All authors reviewed the manuscript.

Corresponding author

Ethics declarations

Competing interests

The authors declare no competing interests.

Additional information

Publisher’s note

Springer Nature remains neutral with regard to jurisdictional claims in published maps and institutional affiliations.

Rights and permissions

Open Access This article is licensed under a Creative Commons Attribution-NonCommercial-NoDerivatives 4.0 International License, which permits any non-commercial use, sharing, distribution and reproduction in any medium or format, as long as you give appropriate credit to the original author(s) and the source, provide a link to the Creative Commons licence, and indicate if you modified the licensed material. You do not have permission under this licence to share adapted material derived from this article or parts of it. The images or other third party material in this article are included in the article’s Creative Commons licence, unless indicated otherwise in a credit line to the material. If material is not included in the article’s Creative Commons licence and your intended use is not permitted by statutory regulation or exceeds the permitted use, you will need to obtain permission directly from the copyright holder. To view a copy of this licence, visit http://creativecommons.org/licenses/by-nc-nd/4.0/.

About this article

Cite this article

Zhang, JJ., Chen, JN., Meng, DY. et al. Exploring the uncertainty principle in neural networks through binary classification. Sci Rep 14, 28402 (2024). https://doi.org/10.1038/s41598-024-79028-4

Received:

Accepted:

Published:

Version of record:

DOI: https://doi.org/10.1038/s41598-024-79028-4

Keywords

This article is cited by

-

Automatic generation of Chinese mural line drawings via enhanced edge detection and CycleGAN-based denoising

npj Heritage Science (2025)