Abstract

For generations, hand-drawn diagrams have been a common graphical means of communication in fields such as engineering, architecture, and education. Because human-made graphics are inherently complicated and variable, hand-drawn diagram recognition is a challenging task, and there is a growing demand for effective methods for recognizing and understanding such diagrams. This research develops an integrated approach that combines the strengths of multiple machine learning methods to enhance overall performance and compensate for the weaknesses of individual methods. Deep learning techniques, which are well known for their ability to find intricate patterns and features in data, are also incorporated into the proposed system. The research proposes improved methods, namely Fossum Soergel k-means clustering, the morphological Canny Bessel radial basis contour shape factor, Fisher kernel k-nearest neighbor, Sing-Scurve fuzzy rule generation, and a wide context faster regional convolutional neural network, to enhance the system’s performance. The system is evaluated experimentally on benchmark datasets of hand-drawn flowcharts, finite automata, and business process models. Testing is also done on the combined datasets to assess how well the approach generalizes. The results are compared with state-of-the-art methods and show that the proposed system can digitize various diagrams automatically. Future research directions for offline hand-drawn diagrams are also explored.

Introduction

Offline hand-drawn diagrams are diagrams that are not connected to immediate electronic data entry; they are created physically on paper or other surfaces. Hand-drawn diagrams have served as a primary form of communication from the beginning of human history to the present, technologically developed age. They usually convey complex concepts, opinions, and patterns in an easy-to-understand manner, and they are used in applications related to health care1,2,3,4, education5,6, business7,8, human-computer interaction9,10, and ancient communication11, as shown in Figure 1.

Digitization of offline hand-drawn diagrams is the need of the day. Recognizing offline hand-drawn diagrams facilitates effective digitization and visualization for the applications shown in Figure 1 and enables automatic analysis and understanding of the diagrams. A natural question is why one would draw by hand and digitize the material afterwards; the reason is that handwriting has several advantages12. Drawing by hand improves concentration on the material, the ability to prioritize, memory, and the organization of information. Handwriting also improves comprehension and information handling. Learners who take notes by hand interpret conceptual questions better than those who type them13.

Manual digitization of hand-drawn diagrams is tedious and error-prone, which emphasizes the need for automated recognition methods. Conventional image analysis approaches, including morphological operations and edge detection, have been used extensively for diagram recognition. However, these techniques frequently have trouble with irregular and imprecise hand-drawn diagrams: uneven line width, differing sketching styles, and noise can significantly reduce their performance. Recent developments in deep learning have shown considerable potential. Convolutional neural networks (CNNs) have demonstrated exceptional effectiveness in various fields by learning hierarchical features directly from raw visual data. Even with these advances, there remains room to increase the precision and effectiveness of hand-drawn diagram recognition14,15.

This research proposes an integrated approach that combines conventional image processing techniques with enhanced machine learning and deep learning to overcome these difficulties. By considering various appearance and structural features, integrated machine learning techniques such as hybrid CNN architectures perform very well at pattern identification, improving accuracy in applications like drawing identification16. The capacity of deep learning methods to derive features from the input automatically, without human intervention, produces reliable systems and further improves identification accuracy17. To recognize hand-drawn schematics, the input sketch must be segmented and clustered so that the individual strokes are separated into conceptually significant elements18; this factor is also considered in this research. By combining these complementary approaches, the proposed methodology aims for high precision in hand-drawn diagram recognition, even under distortion and variation.

Analysing and digitising sketches created by hand is the focus of the computer vision and pattern recognition area. This field of study has attracted attention because of its many real-world applications as well as its difficult technical issues. Some of the main goals and driving forces for this study are listed below.

Objectives

1. The main objective is to take unstructured, hand-drawn inputs and turn them into structured, relevant information. This entails recognising and categorising different elements, including text, lines, symbols, and forms, as well as comprehending how they relate.

2. Create methods that can identify and detect simple architectural primitives even when they are drawn in an informal style. These techniques must be resilient to changes in design, drawing pace, and line consistency.

3. Understanding how these components work together to create a full diagram is a crucial goal that goes beyond simply identifying individual components. This entails identifying the relationships, levels of hierarchy, and spatial configurations that establish the overall framework of the diagram.

4. Develop systems that can handle the chaos and unpredictability that come with hand-drawn inputs, like overlapping elements, uneven stroke lengths, and unfinished lines.

Motivation

1. Hand-drawn drawings are the first step in generating ideas for numerous experts, instructors, and architects. By eliminating the time and effort needed to reproduce these drawings digitally, automatic digitisation can streamline their work.

2. It is simpler to share, modify, and develop ideas collaboratively across systems and among multiple teams when drawings are converted to digital formats.

3. Hand-drawn schematic identification can be applied in educational settings to provide interactive learning resources that assist students in turning their drawings into academic schematics, which will help them comprehend difficult ideas.

4. Enabling machines to comprehend and analyse people’s drawings can result in more natural and simple interaction, letting users express ideas without the need for specialised software or technical expertise. This is a component of a larger study on human-computer interaction.

Research questions

1. How can computer vision and machine learning methods be enhanced to identify the various elements of hand-drawn diagrams more precisely?

2. Which preprocessing methods can be applied to hand-drawn diagrams to minimise noise while maintaining key characteristics?

3. Which feature extraction techniques work best for distinguishing between distinct hand-drawn elements?

4. How can the text, mathematical expressions, and symbols included in diagrams, which pose the biggest challenge, be handled?

5. Can a generalized model be developed to recognize different types of diagrams?

6. How can the system concentrate on the arrowheads that indicate the direction of flow between elements?

7. How can adaptive techniques generalize to a variety of inputs, given that variations in individual drawing style present a big problem?

The remainder of the article is structured as shown in Figure 2.

Literature review

The literature on hand-drawn diagram recognition is reviewed in this section. The benefits are explored, and then the limitations are consolidated at the end of the section.

Schafer et al. (2021)19 presented Arrow R-CNN, a deep learning technique based on the region-based convolutional neural network (R-CNN) for joint symbol and structure detection in offline diagrams. Arrow R-CNN extends the Faster R-CNN architecture with an arrow keypoint estimator and diagram-aware postprocessing methods. This work introduced a network architecture designed to handle small diagrams effectively. Additionally, specific data augmentation techniques were introduced to enhance the model’s generalization capabilities for recognizing handwritten diagrams.

Deufemia & Risi (2020)18 used hierarchical interpretation to accomplish multi-domain identification for hand-drawn diagrams. Here, the geometry of the elements and the conceptual language of graphical annotations were modeled using sketch grammar (SkG). The original drawings were then analyzed using identification frameworks built automatically from SkG. The participant’s strokes were initially divided into segments and processed as simple forms. Later, they were grouped into domain symbols by taking advantage of the domain context. Lastly, a view of the entire image was created. Thus, the domain-based hand-drawn diagrams were effectively recognized.

Yun et al. (2022)20 developed a practical framework based on graph neural networks (GNNs) for offline handwritten diagram recognition. The GNN was used to model and learn complex contextual relationships within the handwritten diagrams. Using stroke visualizations, this learning approach structured symbol identification and separation as component grouping and categorizing tasks. The method was specifically designed to provide both symbol instance labels and semantic labels, thereby making recognition more comprehensive.

Roy et al. (2020)21 automatically recognized hand-drawn electrical and electronic circuit components. Cleaned-up images of the circuit components were used to train and evaluate a recognition system. To identify the shape and structure of circuit components in offline hand-drawn diagrams, a feature set was composed of a texture-based descriptor, the histogram of oriented gradients (HOG), and structure-based features such as centroid distance, tangent angle, and chain code histogram. The ReliefF algorithm was employed to optimize the texture-based feature and reduce its dimensionality.

Fang et al. (2022)22 presented DrawnNet, a CNN-based keypoint detector for offline hand-drawn diagram recognition. DrawnNet was built upon the CornerNet architecture and extended it with two novel keypoint pooling modules and an arrow orientation prediction branch. The pooling modules extract and aggregate the geometric properties found in the polygonal outlines of hand-drawn shapes such as diamonds, squares, and rectangles, which allows handwritten diagrams to be identified efficiently.

Chongyu Pan et al.11 developed an ensemble method combining BiLSTM and HMM for recognizing diagrams in handwritten documents. The technique attained high recognition accuracy owing to its compositionality, data efficiency, and knowledge representation, and correlating spatial feature information further improved performance. However, the model suffered from interpretability and scalability problems that affected training.

Lei Zhang23 established a dual-channel CNN model for hand-drawn diagram recognition, in which a contour extraction algorithm provided the contour representation of the diagram. These techniques significantly enhanced the recognition rate. However, the recognition accuracy of the model was reduced while performing the diagram recognition process.

Basheer Alwaely and Charith Abhayaratne24 developed a spectral domain representation on a graph basis to recognize hand-drawn diagrams and identify shapes. The method utilized eigenvalues to capture robust results of a feature vector. Additionally, the model evaluated the graph matrix by executing a normalized Laplacian operator. However, the model was not utilized for deaf people and was affected by scalability issues.

Hanning Otto Brinkhaus et al.25 introduced a model that converts depictions of chemical molecular structures into a machine-readable representation. The model effectively recognized and standardized hand-drawn chemical structure depictions. For large training samples, however, the recovered structure information was not satisfactory, which degraded performance.

Sardar Ali et al.26 established a DeepNet model combined with sketch representation to recognize the hand-drawn diagram tasks. The model achieved recognition accuracy when compared to other conventional methods. During feature extraction evaluation, the model suffered from extinct fusion problems, potential issues, and optimization problems.

Samuelle Bourgault and Jennifer Jacobs27 employed a sketch-based interaction representation enabled by audiovisual performance termed megafauna. In this context, the real-time animation and sketch-matching function appliances were effectively identified and provided robust outcomes. Moreover, the poor visual appearance of hand-drawn representation under real-time environment scenarios caused complexity issues and high error rate issues.

Limitations

The following limitations have been found in the reviewed literature.

1. Existing works found it challenging to identify the arbitrary borders, ambiguous words, and overlapping parts of hand-drawn diagrams. This reduces the efficiency of existing techniques for offline hand-drawn diagram recognition.

2. Another challenge was localizing the arrowheads that indicate the flow direction between elements, because arrowheads may be inadvertently left out or not clearly drawn in hand-drawn diagrams.

3. The reviewed works did not handle elements that are crossed out or removed during the iterative refinement of a diagram.

4. Few works address ink-on-paper drawings, in which ink seeps through the paper and affects adjacent layers; this bleed-through makes the diagram less legible.

5. Existing preprocessing changes the size and position of image content, blurs details or removes vital information, and may produce unrealistic contrast, resulting in ineffective detection.

To overcome these limitations, a system is proposed in which:

1. The Fossum Soergel k-means clustering (FSkMC) algorithm is introduced to group the text and shapes.

2. The morphological Canny Bessel radial basis contour shape factor (MCBRBCS) is developed to detect the exact boundaries and segmented regions.

3. Stroke width transform-based optical character recognition (SWT-OCR) is introduced to improve the effectiveness of stroke identification and text detection.

4. Fisher kernel k-nearest neighbor (FKkNN) is used to pinpoint the location of arrowheads.

5. Sing-Scurve fuzzy (\(\textrm{S}^2\)Fuzzy) is used to analyze the connectivity between the shapes.

6. A wide context faster regional convolutional neural network (WC-FRCNN) is proposed for effective handwritten content recognition.

Proposed methodology

The block diagram of the proposed system is presented in Figure 3. The proposed work starts by collecting input images of hand-drawn diagrams from publicly available sources. First, image preprocessing is carried out to enhance the quality of the image for effective recognition. The preprocessing steps are image resizing28, grayscale conversion, noise reduction using a Gaussian filter29, and image binarization. Resizing brings all images in the dataset to the same size. Grayscale conversion then removes the color information from a color image and retains only the light intensity. After that, unwanted artifacts or noise introduced by the acquisition instruments are removed using a Gaussian filter (GF). Finally, the grayscale image is converted into a binary image, in which each pixel takes one of only two values, 0 or 1.
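The preprocessing chain described above can be sketched in plain Python as follows. This is only a minimal illustration under assumptions that the text does not specify: BT.601 luma weights for the grayscale conversion, a fixed 3x3 Gaussian kernel, a fixed global threshold of 128, and ink mapped to 1. Resizing is omitted, and all function names are illustrative.

```python
# Integer approximation of a 3x3 Gaussian kernel (weights sum to 16).
GAUSS_3X3 = [[1, 2, 1], [2, 4, 2], [1, 2, 1]]


def to_grayscale(rgb):
    """Convert rows of (r, g, b) tuples to luminance values.

    Assumption: BT.601 luma weights; the paper does not state its weights.
    """
    return [[0.299 * r + 0.587 * g + 0.114 * b for (r, g, b) in row] for row in rgb]


def gaussian_blur(gray):
    """Smooth with the 3x3 kernel, replicating pixels at the image border."""
    h, w = len(gray), len(gray[0])
    out = [[0.0] * w for _ in range(h)]
    for y in range(h):
        for x in range(w):
            acc = 0.0
            for dy in (-1, 0, 1):
                for dx in (-1, 0, 1):
                    yy = min(max(y + dy, 0), h - 1)
                    xx = min(max(x + dx, 0), w - 1)
                    acc += GAUSS_3X3[dy + 1][dx + 1] * gray[yy][xx]
            out[y][x] = acc / 16.0
    return out


def binarize(gray, threshold=128):
    """Map dark (ink) pixels to 1 and light (paper) pixels to 0.

    Assumption: a fixed global threshold; an adaptive one may suit scans better.
    """
    return [[1 if v < threshold else 0 for v in row] for row in gray]


def preprocess(rgb, threshold=128):
    """Grayscale conversion, Gaussian smoothing, then binarization."""
    return binarize(gaussian_blur(to_grayscale(rgb)), threshold)
```

Note that smoothing lowers the contrast of thin strokes, so the threshold and the kernel size have to be chosen together; the values here are placeholders.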

Next, ink spread is detected in the preprocessed image using pixel density reduction. The following step uses Fossum Soergel k-means clustering (FSkMC) to group text and shapes. This research uses k-means clustering (kMC)30 because it effectively distinguishes the area of interest from the other regions of the image based on shape characteristics. However, standard k-means is not guaranteed to find the global optimum, and some data points may be assigned to the wrong cluster. This is due to the use of Euclidean distance, which cannot always find accurate cluster boundaries. The Fossum Soergel distance is used instead to solve this issue.
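To make the idea of swapping the distance inside k-means concrete, the sketch below runs Lloyd-style k-means with the standard Soergel distance, d(x, y) = Σ|x_i − y_i| / Σ max(x_i, y_i). The text does not define the "Fossum Soergel" variant, so the plain Soergel distance is used here as an assumption; the feature vectors are assumed to be non-negative, and the function names are illustrative.

```python
def soergel(x, y):
    """Standard Soergel distance for non-negative feature vectors."""
    denom = sum(max(a, b) for a, b in zip(x, y))
    if denom == 0:
        return 0.0  # both vectors are all zeros
    return sum(abs(a - b) for a, b in zip(x, y)) / denom


def kmeans_soergel(points, centroids, iterations=20):
    """Lloyd-style k-means that assigns points by Soergel distance.

    Assumption: centroids are still updated with the arithmetic mean, which
    is a heuristic here because the mean does not minimize Soergel distance.
    """
    centroids = [list(c) for c in centroids]
    labels = [0] * len(points)
    for _ in range(iterations):
        # Assignment step: nearest centroid under the Soergel distance.
        labels = [
            min(range(len(centroids)), key=lambda k: soergel(p, centroids[k]))
            for p in points
        ]
        # Update step: mean of each cluster; empty clusters keep their centroid.
        new_centroids = []
        for k in range(len(centroids)):
            members = [p for p, lab in zip(points, labels) if lab == k]
            if members:
                new_centroids.append([sum(col) / len(members) for col in zip(*members)])
            else:
                new_centroids.append(centroids[k])
        if new_centroids == centroids:
            break  # converged
        centroids = new_centroids
    return labels, centroids


# Hypothetical 2-D features per connected component, e.g. (height, stroke width).
components = [(1, 1), (1, 2), (2, 1), (9, 9), (9, 10), (10, 9)]
labels, centroids = kmeans_soergel(components, centroids=[(1, 1), (10, 9)])
```

Because the Soergel distance is normalized by the magnitude of the features, it is relative rather than absolute, which is one plausible reason it separates small text components from large shape components differently than the Euclidean distance does.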

Then, the morphological Canny Bessel radial basis contour shape factor (MCBRBCS) is developed to detect the exact boundaries and segmented regions from which features are extracted. First, the binary images undergo the morphological operations of erosion and dilation. Erosion is used to reduce noise, and dilation then fills in the holes in the image. Next, the image borders are detected in the morphologically processed image using a Canny Bessel radial basis edge detector. Canny edge detection (CED) is a popular approach that significantly decreases the quantity of data to be analyzed while extracting valuable structural information from visual objects. A drawback of CED is that its two standard Sobel operators are fixed at a 3-by-3 window. Therefore, the convolution kernel that CED conventionally uses to calculate the horizontal and vertical gradients is replaced with a Bessel radial basis kernel, which reduces the time consumed by CED. Contour formation is then applied to the edge-detected image. Edge detection focuses on drastic changes in brightness between pixels, while contour detection focuses on outlining closed objects. Finally, the shape factor is computed for each shape.
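Two of the steps above, erosion followed by dilation and the per-shape shape factor, can be illustrated with a short sketch. The text does not give the structuring element or the shape factor formula, so this example assumes a 3x3 square structuring element and the common circularity definition 4πA/P²; the Bessel radial basis kernel itself is not reproduced here. All names are illustrative.

```python
import math


def _neighbourhood(img, y, x):
    """Yield the 3x3 neighbourhood of (y, x); pixels outside the image count as 0."""
    h, w = len(img), len(img[0])
    for dy in (-1, 0, 1):
        for dx in (-1, 0, 1):
            yy, xx = y + dy, x + dx
            yield img[yy][xx] if 0 <= yy < h and 0 <= xx < w else 0


def erode(img):
    """Keep a pixel only if its whole 3x3 neighbourhood is ink."""
    return [[int(all(_neighbourhood(img, y, x))) for x in range(len(img[0]))]
            for y in range(len(img))]


def dilate(img):
    """Set a pixel if any pixel in its 3x3 neighbourhood is ink."""
    return [[int(any(_neighbourhood(img, y, x))) for x in range(len(img[0]))]
            for y in range(len(img))]


def opening(img):
    """Erosion followed by dilation: removes specks smaller than the element."""
    return dilate(erode(img))


def shape_factor(polygon):
    """Circularity 4*pi*A / P**2 of a closed, non-degenerate polygon.

    Assumption: this is one common definition of "shape factor"; it equals 1
    for a circle and pi/4 for a square. `polygon` is a list of (x, y) vertices.
    """
    twice_area = 0.0
    perimeter = 0.0
    n = len(polygon)
    for i in range(n):
        x0, y0 = polygon[i]
        x1, y1 = polygon[(i + 1) % n]
        twice_area += x0 * y1 - x1 * y0  # shoelace formula
        perimeter += math.hypot(x1 - x0, y1 - y0)
    return 4.0 * math.pi * (abs(twice_area) / 2.0) / perimeter ** 2
```

A 3x3 element would also erase strokes thinner than three pixels, so in practice the element has to be smaller than the stroke width of the drawing.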

Overlapping shapes are then detected using the Hough transform, which detects geometric shapes in images even when they are distorted, incomplete, or partially obscured. Next, arrowheads are localized using Fisher kernel k-nearest neighbor (FKkNN). kNN31 is straightforward to understand and easy to implement; it labels new data points directly from their nearest neighbors in the previously seen data. However, kNN assumes uniform density across the feature space. To solve this issue, Fisher kernel density estimation is used to estimate the local density around data points.
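The voting idea behind the Hough transform, which is what makes it tolerant of gaps and partial occlusion, can be sketched for straight lines as follows. This is a minimal illustration rather than the detector used in the paper: it assumes the normal parameterization ρ = x cos θ + y sin θ with 1-degree and 1-pixel bins, and the function names are illustrative.

```python
import math
from collections import Counter


def hough_lines(points, theta_steps=180):
    """Accumulate votes in (theta index, rho) space for each ink pixel (x, y)."""
    votes = Counter()
    for x, y in points:
        for t in range(theta_steps):
            theta = math.pi * t / theta_steps
            rho = round(x * math.cos(theta) + y * math.sin(theta))
            votes[(t, rho)] += 1
    return votes


def strongest_line(points, theta_steps=180):
    """Return (theta in degrees, rho, votes) of the most supported line."""
    votes = hough_lines(points, theta_steps)
    (t, rho), count = votes.most_common(1)[0]
    return math.degrees(math.pi * t / theta_steps), rho, count


# A vertical stroke at x = 3 with a gap, as if another shape overlapped it.
stroke = [(3, y) for y in range(100) if not 40 <= y < 60]
angle, rho, count = strongest_line(stroke)
```

Every pixel votes independently, so the missing segment only lowers the peak height; the peak location (θ = 0, ρ = 3) is unchanged.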

Then, Sing-Scurve fuzzy (\(\textrm{S}^2\)Fuzzy) is used to analyze the connectivity between the shapes, that is, to associate arrows with their corresponding shapes. Fuzzy algorithms are straightforward and understandable and can provide effective solutions to complex problems. However, tuning the membership function, scaling factor, and control rules of a fuzzy algorithm is difficult. To solve this issue, the Sing-Scurve fuzzy membership function is used in the existing fuzzy algorithm.
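As an illustration of how an S-shaped membership function can drive the arrow-to-shape association, the sketch below uses the classical S-curve of Zadeh. The exact form of the Sing-Scurve function is not given in the text, so the classical curve stands in for it as an assumption; the breakpoints of 5 and 20 pixels and the single "nearest shape wins" rule are likewise hypothetical.

```python
def s_curve(x, a, b):
    """Classical S-shaped membership function rising from 0 at a to 1 at b."""
    if x <= a:
        return 0.0
    if x >= b:
        return 1.0
    mid = (a + b) / 2.0
    if x <= mid:
        return 2.0 * ((x - a) / (b - a)) ** 2
    return 1.0 - 2.0 * ((x - b) / (b - a)) ** 2


def nearness(distance, a=5.0, b=20.0):
    """Degree to which an arrow endpoint is 'near' a shape.

    Assumption: 'near' is the complement of an S-curve on the pixel distance,
    with hypothetical breakpoints a and b.
    """
    return 1.0 - s_curve(distance, a, b)


def attach_arrow(distances):
    """Pick the shape id with the highest nearness.

    `distances` maps a shape id to the distance (in pixels) between the arrow
    endpoint and that shape's boundary.
    """
    return max(distances, key=lambda shape: nearness(distances[shape]))


source_shape = attach_arrow({"decision_1": 3.0, "process_2": 12.0})
```

The smooth transition between the two breakpoints is what lets a slightly detached arrow still receive a non-zero degree of attachment instead of being rejected by a hard distance cutoff.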

In parallel, stroke identification and text detection are done using the stroke width transform-based optical character recognition (SWT-OCR) technique. Optical character recognition (OCR) converts an image of text into a machine-readable text format. During recognition, segmentation errors can occur when characters are touching, overlapping, or poorly defined, leading to incorrect recognition results. The stroke width transform (SWT) is included in OCR to solve this issue. SWT analyzes stroke widths in the image and detects regions with similar stroke widths; because characters tend to have consistent stroke widths, this aids segmentation.
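The notion of a per-pixel stroke width can be illustrated with a deliberately simplified approximation. The full SWT follows gradient directions across each stroke; the sketch below instead takes, for every ink pixel, the shorter of the horizontal and vertical ink runs through it. This run-length shortcut is an assumption made for brevity, not the transform used in the paper, and the names are illustrative.

```python
from statistics import median


def _runs_along_rows(img):
    """For each pixel, the length of the horizontal run of 1s containing it."""
    out = []
    for row in img:
        lengths = [0] * len(row)
        x = 0
        while x < len(row):
            if row[x] == 1:
                start = x
                while x < len(row) and row[x] == 1:
                    x += 1
                for i in range(start, x):
                    lengths[i] = x - start
            else:
                x += 1
        out.append(lengths)
    return out


def stroke_width_map(img):
    """Approximate stroke width per pixel as min(horizontal run, vertical run)."""
    horiz = _runs_along_rows(img)
    transposed = [list(col) for col in zip(*img)]
    vert = [list(col) for col in zip(*_runs_along_rows(transposed))]
    return [[min(h, v) for h, v in zip(hr, vr)] for hr, vr in zip(horiz, vert)]


def typical_stroke_width(img):
    """Median stroke width over all ink pixels (image must contain ink)."""
    widths = [w for row in stroke_width_map(img) for w in row if w > 0]
    return median(widths)
```

Pixels at stroke junctions receive inflated values, which is why the median rather than the mean is used as the summary; regions whose median width matches can then be grouped as candidate text.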

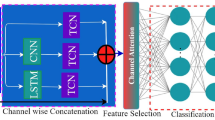

Next, features are extracted from the shapes and text using the principal component analysis (PCA) algorithm. Finally, a wide context faster regional convolutional neural network (WC-FRCNN) recognizes the offline handwritten diagram. Faster R-CNNs (FRCNNs) are well suited to recognizing diagrams in images because they learn hierarchical features directly from the input images. The input image passes through a CNN backbone to extract feature maps, which capture various levels of visual information from the image. Faster R-CNN introduces a region proposal network (RPN) that generates region proposals for potential object locations, significantly reducing the time required for object detection. These proposals are stored in memory during training and inference, so the memory requirements increase with the number of proposals, which limits the model’s scalability. A wide context (WC) block is used to solve this issue.
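The PCA step can be illustrated with a small, dependency-free sketch that finds the first principal component by power iteration on the sample covariance matrix. The feature layout and the function name are hypothetical, and a practical system would more likely use a linear algebra library and keep several components.

```python
import math


def first_principal_component(rows, iterations=100):
    """Return (unit direction, projected scores) of the first principal component.

    `rows` is a list of equal-length feature vectors (at least two rows).
    Assumption: the start vector of all ones is not orthogonal to the
    dominant eigenvector; otherwise power iteration would not converge to it.
    """
    n, d = len(rows), len(rows[0])
    means = [sum(r[j] for r in rows) / n for j in range(d)]
    centered = [[r[j] - means[j] for j in range(d)] for r in rows]
    # Sample covariance matrix of the centered data.
    cov = [[sum(c[i] * c[j] for c in centered) / (n - 1) for j in range(d)]
           for i in range(d)]
    v = [1.0] * d
    for _ in range(iterations):
        w = [sum(cov[i][j] * v[j] for j in range(d)) for i in range(d)]
        norm = math.sqrt(sum(x * x for x in w))
        if norm == 0.0:
            break  # data has no variance
        v = [x / norm for x in w]
    scores = [sum(c[j] * v[j] for j in range(d)) for c in centered]
    return v, scores


# Hypothetical per-shape features, e.g. (shape factor scaled, bounding-box area scaled).
features = [(1.0, 2.0), (2.0, 4.0), (3.0, 6.0), (4.0, 8.0)]
direction, scores = first_principal_component(features)
```

In this toy input the two features are perfectly correlated, so a single component captures all of the variance and the second dimension can be dropped without loss.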

Thus, the model integrates enhanced machine learning techniques such as FKkNN and FSkMC with deep learning techniques such as WC-FRCNN for generalized hand-drawn diagram recognition. Each stage is explained in detail below.

1. Input

The input for hand-drawn diagram recognition is collected from the hand-drawn diagram dataset, which is expressed mathematically as

$$\begin{aligned} F = \{f_1,f_2,\dots ,f_z\} \end{aligned} \tag{1}$$

where \(F\) indicates the input data source and \(f_1,f_2,\dots ,f_z\) represent the individual images.

2. Pre-processing

The input obtained from the hand-drawn dataset is passed to the pre-processing phase, which enhances the quality of the image by eliminating noise, distortions, redundancies, and imbalance issues. These obstacles are eliminated by applying pre-processing techniques such as resizing, noise reduction, grayscale conversion, and image binarization, which are briefly explained below.

(a) Resizing

The original input image is resized for easier analysis, which reduces the computational cost and gives the uniformity needed for the recognition tasks.

(b) Noise Reduction

Noise reduction techniques eliminate the noise in the input image. In this research, salt-and-pepper noise is reduced by comparing the intensity of the center pixel with that of its neighboring pixels. Furthermore, a Gaussian filter removes the high-frequency noise present in the input image and smooths the image for further evaluation.

(c) Grayscale conversion

In grayscale conversion, the color image is converted into a grayscale representation to reduce the computational requirements. The conversion is based on the pixel intensity information of the image.

(d) Image Binarization

After grayscale conversion, the image is converted into binary form, in which every pixel is represented as 0 or 1. Binarization segments the image by comparing the contrast of each pixel with a threshold value, and the resulting binary image is used in the subsequent stages. After these pre-processing techniques have been applied, the quality of the input image is enhanced significantly, and the outcome is denoted as

$$\begin{aligned} F^* = \{f_1^*,f_2^*,\dots ,f_z^*\} \end{aligned} \tag{2}$$

3. Segmentation

The quality-enhanced image is then fed into the segmentation process, in which the FSkMC model extracts the significant regions of the hand-drawn diagram. FSkMC segments the text and shapes of the hand-drawn diagram by capturing the complicated cluster structures that arise from object, shape variation, size, and luminance differences. Based on these evaluations, the entire pictorial structure of the diagram is segmented appropriately. Generally, morphological image segmentation is performed on binary images, in which the unlabeled data points are organized into clusters or groups for effective image processing. k-means clustering30 provides reliable and precise results in image segmentation tasks for diverse, complicated structures. After clustering, the segmented text and shape regions are passed separately to SWT-OCR and MCBRBCS, respectively, for further recognition.

(a) Morphological Canny Bessel radial basis contour shape factor

In this research, the shape regions of the hand-drawn diagram are passed to the MCBRBCS model to detect the boundaries of the segmented regions. The morphological operations of erosion and dilation are performed on the binary image. While identifying the border region, the erosion process eliminates the noise in the binary image, and the dilation process then fills in the remaining holes. The exact boundaries of the regions in the resulting image are identified using the Canny Bessel radial basis edge detection method. In conventional Canny detection, Sobel operators are used to extract the structural information of visual objects. Here, the vertical and horizontal gradients of the image are instead extracted by a convolution kernel obtained from the Bessel radial basis function. In this way, the structural information of the image is extracted efficiently with reduced time complexity. Moreover, the shape factor is obtained from the contours, in which the contour detection function evaluates changes in image contrast and brightness. Thus, the shape of every object in the hand-drawn diagram is characterized accurately by its shape factor.

Furthermore, the shapes are analyzed using the Hough transform, FKkNN, and the \(\textrm{S}^2\)fuzzy model. The Hough transform is applied when the objects in the hand-drawn diagrams are distorted, partially obscured, incomplete, or overlapping, and it extracts the geometric features and shapes of the image appropriately. The \(\textrm{S}^2\)fuzzy model detects the connectivity among the shapes, which makes the complex structure easier to understand and evaluate. In this context, the fuzzy algorithm tunes the scaling factor, membership function, and control rules to resolve the computational issues. Therefore, the \(\textrm{S}^2\)fuzzy model extracts the connections between shapes precisely. Meanwhile, arrowheads are localized by FKkNN, which uses the local density information in the feature map instead of the uniform density assumed by kNN31. Based on this analysis, the model extracts the arrowheads in the image effectively.

(b) Stroke width transform-based optical character recognition

The textual content of the hand-drawn diagram is fed into the SWT-OCR process, which identifies the strokes of the text and converts the text image into a machine-readable format. During this conversion, segmentation errors caused by touching or overlapping characters can produce inaccurate recognition results. The SWT step mitigates this limitation by evaluating stroke widths in the image and detecting regions with the same stroke width.

4. Feature Extraction

The detected text and shape factors are then forwarded to the feature extraction phase, in which the most informative features are extracted by the PCA method. PCA is a multivariate technique that is applied here to both the text and the shape data. It captures the common patterns of variation in the data and is used for multi-factor analysis. PCA yields linear combinations of the original variables that have high variance and are mutually orthogonal. The features extracted from the textual content and the shape factors are then passed to the recognition model to attain accurate recognition outcomes.

5. Recognition model

The extracted features are then fed into the recognition model, where the detection outcome is produced by the WC-FRCNN classifier. The Faster R-CNN classifier is widely used to detect objects in diverse environmental conditions. However, it incurs a high computational cost when performing recognition tasks. To tackle this limitation, WC-FRCNN integrates a wide context block that reduces the computational cost while providing accurate recognition results. The proposed WC-FRCNN method performs detection with two major components, the region proposal network (RPN) and the CNN, which locate and identify objects in diverse, complex image structures. The RPN generates region proposals, which reduces the computational cost and operational time of detection. The CNN provides the feature maps and captures visual information at various levels of context. Bounding box regression is applied at the final stage to attain accurate object localization. Thus, the wide context block effectively interpolates the regions of visual artifacts, and the proposed WC-FRCNN produces accurate recognition results.

Experimental setup

The main focus of the proposed system is on generalization. Hence, experimentation is done on a generalized dataset created by combining images from the different benchmark datasets of flowcharts, finite automata, and business process model and notation diagrams. The efficiency is assessed by comparing the proposed system’s performance with state-of-the-art methods.

Dataset

The datasets used for the experimentation consist of various hand-drawn diagrams such as flowcharts, finite automata, and business process models. The datasets can be accessed from https://github.com/bernhardschaefer/handwritten-diagram-datasets/tree/main/datasets.

The hand-drawn flowchart dataset FC_A32 contains 419 images, which are divided into 248 samples for training and the remaining 171 for testing. A sample image from the dataset is shown in Figure 4.

The hand-drawn finite automata (FA)33 dataset is a collection of schematic representations in the field of finite automata. It has 300 images created by 25 different people. Every participant was requested to draw twelve images using the provided patterns. The Lenovo X61 tablet PC, a regular model, was used to gather the data. Figure 5 displays an example image from the dataset.

FC_B34 is a collection of hand-drawn flowcharts. It has 672 diagrams created by 24 different people; every participant was instructed to sketch 28 diagrams following the provided patterns. The data were gathered on a standard Lenovo X61 tablet PC. Figure 6 shows an example from the dataset.

The hand-drawn business process model and notation (hd-BPMN) dataset35,36 consists of 502 hd-BPMN images and covers 25 different BPMN components. An example from the dataset is shown in Figure 7.

Performance metrics

Different metrics are used on the generalized dataset for the different parts of the proposed system. The silhouette score indicates how effective a clustering process is: it compares each point’s cohesion with its own cluster against its separation from the nearest other cluster. Hence, it is used to assess the quality of the proposed FSk-means technique.
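As a reminder of what the silhouette score measures, the following minimal sketch computes the mean silhouette coefficient with plain Euclidean distance. It is an illustration only: the function name, the toy points, and the choice of Euclidean distance are assumptions, and the evaluation in this work may use a different distance or implementation.

```python
import math

def silhouette_score(points, labels):
    """Mean silhouette coefficient over all points (Euclidean distance)."""
    clusters = {}
    for idx, lab in enumerate(labels):
        clusters.setdefault(lab, []).append(idx)
    if len(clusters) < 2:
        raise ValueError("silhouette needs at least two clusters")

    scores = []
    for i, lab in enumerate(labels):
        own = [j for j in clusters[lab] if j != i]
        if not own:
            # Singleton cluster: the coefficient is defined as 0.
            scores.append(0.0)
            continue
        # a: mean distance to the other members of the same cluster.
        a = sum(math.dist(points[i], points[j]) for j in own) / len(own)
        # b: smallest mean distance to the members of any other cluster.
        b = min(
            sum(math.dist(points[i], points[j]) for j in members) / len(members)
            for other, members in clusters.items()
            if other != lab
        )
        denom = max(a, b)
        scores.append((b - a) / denom if denom > 0 else 0.0)
    return sum(scores) / len(scores)

# Toy data: two tight, well-separated groups.
points = [(0.0, 0.0), (0.0, 1.0), (10.0, 0.0), (10.0, 1.0)]
good = silhouette_score(points, [0, 0, 1, 1])   # compact, separated clusters
bad = silhouette_score(points, [0, 1, 0, 1])    # clusters mixed together
```

Values close to 1 indicate compact, well-separated clusters, while values near 0 or below indicate overlapping or wrongly assigned points; in the toy data the first labelling scores about 0.90 and the second is negative.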

For the segmentation method, the Dice score measures the consistency between the prediction and the annotation, and the Jaccard index measures how similar two sets of segmented data are. Hence, these two measures are used to assess the efficacy of the MCBRBCS segmentation method.
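Both measures can be computed directly from a predicted and an annotated binary mask. The sketch below is a minimal illustration; the function name, the nested-list mask format, and the convention of returning 1.0 when both masks are empty are assumptions.

```python
def dice_and_jaccard(pred, truth):
    """Return (dice, jaccard) for two equally sized 2-D binary masks."""
    p = [v for row in pred for v in row]
    t = [v for row in truth for v in row]
    if len(p) != len(t):
        raise ValueError("masks must have the same number of pixels")

    intersection = sum(1 for a, b in zip(p, t) if a and b)
    p_count = sum(1 for a in p if a)
    t_count = sum(1 for b in t if b)
    union = p_count + t_count - intersection

    if union == 0:
        # Both masks empty: treated here as perfect agreement (a convention).
        return 1.0, 1.0

    dice = 2.0 * intersection / (p_count + t_count)
    jaccard = intersection / union
    return dice, jaccard

# Toy masks: 3 foreground pixels each, 2 of them shared.
pred = [[1, 1, 0],
        [0, 1, 0]]
truth = [[1, 0, 0],
         [0, 1, 1]]
dice, jaccard = dice_and_jaccard(pred, truth)
```

For these toy masks the Dice score is 2/3 and the Jaccard index is 0.5. The two are monotonically related by J = D / (2 - D), so they rank methods identically but on different scales.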

Rule generation for connectivity analysis is done using the \(\textrm{S}^2\)fuzzy algorithm. Its performance is assessed using fuzzification time, defuzzification time, and rule generation time.

Results and discussion

The proposed FSk-means clustering algorithm achieved a silhouette score greater than 0.9, as shown in Figure 8. This is higher than the scores of other clustering algorithms such as k-means, fuzzy c-means (FCM), density-based spatial clustering of applications with noise (DBSCAN), and clustering large applications (CLARA). The improvement is attributed to replacing the Euclidean distance with the Fossum Soergel distance, which helps find accurate cluster boundaries.
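To make the change of distance concrete, the sketch below shows the standard Soergel distance and a nearest-centroid assignment step that uses it in place of the Euclidean distance. This section does not define the Fossum variant, so only the plain Soergel form is shown; the function names and toy values are assumptions, and this is not the FSk-means implementation.

```python
def soergel_distance(x, y):
    """Soergel distance: sum|x_i - y_i| / sum max(x_i, y_i).

    Defined for non-negative feature vectors; the result lies in [0, 1].
    """
    if len(x) != len(y):
        raise ValueError("vectors must have the same length")
    if any(v < 0 for v in x) or any(v < 0 for v in y):
        raise ValueError("Soergel distance expects non-negative features")
    denom = sum(max(a, b) for a, b in zip(x, y))
    if denom == 0:
        return 0.0  # both vectors are all zeros
    return sum(abs(a - b) for a, b in zip(x, y)) / denom

def assign_to_nearest(points, centroids, distance=soergel_distance):
    """Assignment step of a k-means-style loop with a pluggable distance."""
    return [
        min(range(len(centroids)), key=lambda k: distance(p, centroids[k]))
        for p in points
    ]

# Toy example with non-negative features (e.g. pixel intensities).
d = soergel_distance((1, 2, 3), (2, 2, 1))       # 3 / 7
points = [(1, 1), (9, 8), (2, 1)]
centroids = [(1, 1), (9, 9)]
labels = assign_to_nearest(points, centroids)     # [0, 1, 0]
```

Because the distance is normalized by the element-wise maxima, it is bounded and scale-aware, which is one plausible reason it could behave differently from the Euclidean distance near cluster boundaries.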

The performance of the enhanced edge detection algorithm MCBRBCS is evaluated using the Dice score and the Jaccard index, as shown in Figure 9 and Figure 10, respectively. MCBRBCS performs better than edge detection algorithms such as Canny, Sobel, Prewitt, and Roberts. The better scores are achieved by integrating morphological operations and introducing the Bessel radial basis kernel.

The connectivity analysis is done using the \(\textrm{S}^2\)fuzzy algorithm. Fuzzification time, defuzzification time, and rule generation time are used to check the efficiency of the \(\textrm{S}^2\)fuzzy algorithm, as shown in Figures 11, 12, and 13, respectively. The \(\textrm{S}^2\)fuzzy algorithm takes less time than other rule generation algorithms, such as basic fuzzy, temporal fuzzy logic (TFL), and adaptive neuro-fuzzy inference system (ANFIS).

The main focus of the research was generalization and, as per the authors’ survey, this research is the first work on generalized hand-drawn diagrams. Hence, all the results above are on a generalized dataset created by combining images from different datasets. To compare individual dataset performance with state-of-the-art methods, the focus was placed on the flowchart datasets FC_A and \(\mathrm{FC\_B}_{scan}\). The comparative results on the FC_A dataset are shown in Table 1. Overall, the proposed system achieves consistent results for all types of flowchart elements. The achieved performance is much better than that of Wu37 and Bresler38 and comparable to that of Schäfer19. Arrow recognition improved by approximately 2% compared to the Schäfer19 method.

The performance of the proposed system on the offline hand-drawn flowchart dataset \(\mathrm{FC\_B}_{scan}\)38 is shown in Table 2. The proposed system could distinguish between the different shapes because of the Hough transform. Arrow detection also improves compared to other methods; this is attributed to the FKkNN, which concentrates on the local density and results in better performance.

The stepwise output on the flowchart dataset is shown in Figure 14.

Conclusion and future scope

In this research, an integrated system was developed to recognize offline hand-drawn diagrams by combining image processing, machine learning, and deep learning techniques. The integrated approach draws on the strengths of multiple methodologies: conventional methods for efficient noise cancellation and preprocessing; machine learning techniques, specifically improvised k-means clustering with Fossum Soergel distance and k-nearest neighbor with Fisher kernel, for segmentation; and deep learning techniques, particularly a CNN with wide-context features, to enhance recognition performance. The performance comparison shows that the integrated model performs on par with other state-of-the-art methods. The framework demonstrated a notable improvement in handling the variations and noise associated with hand-drawn inputs, and it proved effective across various hand-drawn diagram formats, such as flowcharts, finite automata, and BPMN.

The research also noted certain drawbacks, such as the system’s reliance on image resolution and the need for large datasets to train the CNN. Future work will address these constraints by investigating semi-supervised and unsupervised learning to reduce dependence on labeled data and to improve the system’s resilience to diverse drawing styles and input attributes. Explainability techniques can also be used in the future to understand the behavior of the proposed system on other types of hand-drawn diagrams, such as electrical circuits and class diagrams.

To conclude, the proposed integrated approach is a noteworthy development in generalized offline hand-drawn diagram recognition. It offers a more precise, dependable, and effective method for digitizing different types of hand-drawn diagrams by fusing the best aspects of recent and established techniques. The knowledge gathered from this study opens up new avenues for diagram recognition technology, which could be used in fields such as engineering, business, and healthcare. Future work can enhance recognition accuracy and generalization through multi-modal learning and adaptive feature extraction techniques. Other directions include improving methods for eliminating accidental strokes while keeping relevant schematic elements, creating algorithms that learn continually from user feedback, and extending recognition algorithms to biological drawings, chemical patterns, and healthcare schematics. By concentrating on these aspects, further studies can improve the precision, usefulness, and relevance of hand-drawn diagram recognition, making it more reliable, effective, and broadly available across disciplines.

Data availability

The datasets analyzed during the current study are available in the GitHub repository https://github.com/bernhardschaefer/handwritten-diagram-datasets/tree/main/datasets.

References

Chen, J. & Takagi, N. A pattern recognition method for automating tactile graphics translation from hand-drawn maps. In Proceedings - 2013 IEEE International Conference on Systems, Man, and Cybernetics, SMC 2013, 4173–4178 (2013).

Ali, S. et al. Context awareness based sketch-deepnet architecture for hand-drawn sketches classification and recognition in aiot. PeerJ Computer Science 9 (2023).

Li, Z. et al. Early diagnosis of parkinson’s disease using continuous convolution network: Handwriting recognition based on off-line hand drawing without template. Journal of Biomedical Informatics 130 (2022).

Ferdib-Al-Islam & Akter, L. Early identification of parkinson’s disease from hand-drawn images using histogram of oriented gradients and machine learning techniques. In ETCCE 2020 - International Conference on Emerging Technology in Computing, Communication and Electronics (2020).

Altun, O. & Nooruldeen, O. Sketrack: Stroke-based recognition of online hand-drawn sketches of arrow-connected diagrams and digital logic circuit diagrams. Scientific Programming 2019 (2019).

Aruleba, K., Ewert, S., Sanders, I. & Raborife, M. Pre-processing and feature extraction technique for hand-drawn finite automata recognition. In 2018 IST-Africa Week Conference, IST-Africa 2018 (2018).

Schäfer, B., van der Aa, H., Leopold, H. & Stuckenschmidt, H. Sketch2bpmn: Automatic recognition of hand-drawn bpmn models. Lecture Notes in Computer Science (including subseries Lecture Notes in Artificial Intelligence and Lecture Notes in Bioinformatics) 12751 LNCS, 344–360 (2021).

Polančič, G., Jagečič, S. & Kous, K. An empirical investigation of the effectiveness of optical recognition of hand-drawn business process elements by applying machine learning. IEEE Access 8, 206118–206131 (2020).

Berari, R. et al. Overview of the 2021 imageclefdrawnui task: Detection and recognition of hand drawn and digital website uis. In CEUR Workshop Proceedings 2936, 1121–1132 (2021).

Fichou, D. et al. Overview of the 2020 imageclefdrawnui task: Detection and recognition of hand drawn website uis. In CEUR Workshop Proceedings, vol. 2696 (2020).

Pan, C., Huang, J., Gong, J. & Chen, C. Teach machine to learn: hand-drawn multi-symbol sketch recognition in one-shot. Applied Intelligence 50, 2239–2251 (2020).

Alonso, M. A. P. Metacognition and sensorimotor components underlying the process of handwriting and keyboarding and their impact on learning. an analysis from the perspective of embodied psychology. Procedia - Social and Behavioral Sciences 176, 263–269, https://doi.org/10.1016/j.sbspro.2015.01.470 (2015).

Mueller, P. A. & Oppenheimer, D. M. The pen is mightier than the keyboard: Advantages of longhand over laptop note taking. Psychological Science 25(6), 1159–1168. https://doi.org/10.1177/0956797614524581 (2014).

G, A., S, C., Math, K. B. N. & A, L. Automatic generation of html code from handdrawn images using machine learning techniques. International Journal of Engineering Research & Technology 11(8) (2023).

Wang, H., Pan, T. & Ahsan, M. K. Hand-drawn electronic component recognition using deep learning algorithm. International Journal of Computer Applications in Technology 62, 13–19. https://doi.org/10.1504/ijcat.2020.103905 (2020).

Zhang, X. et al. A hybrid convolutional neural network for sketch recognition. Pattern Recognition Letters 130, 73–82. https://doi.org/10.1016/j.patrec.2019.01.006 (2020).

Weir, H. et al. Chempix: automated recognition of hand-drawn hydrocarbon structures using deep learning. Chemical Science 12, 10622–10633. https://doi.org/10.1039/D1SC02957F (2021).

Deufemia, V. & Risi, M. Multi-domain recognition of hand-drawn diagrams using hierarchical parsing. Multimodal Technologies and Interaction 4, https://doi.org/10.3390/mti4030052 (2020).

Schäfer, B., Keuper, M. & Stuckenschmidt, H. Arrow r-cnn for handwritten diagram recognition. International Journal on Document Analysis and Recognition 24, 3–17. https://doi.org/10.1007/s10032-020-00361-1 (2021).

Yun, X.-L., Zhang, Y.-M., Yin, F. & Liu, C.-L. Instance gnn: A learning framework for joint symbol segmentation and recognition in online handwritten diagrams. IEEE Transactions on Multimedia 24, 2580–2594. https://doi.org/10.1109/TMM.2021.3087000 (2022).

Roy, S., Bhattacharya, A., Sarkar, N., Malakar, S. & Sarkar, R. Offline hand-drawn circuit component recognition using texture and shape-based features. Multimedia Tools and Applications 79, 31353–31373. https://doi.org/10.1007/s11042-020-09570-6 (2020).

Fang, J., Feng, Z. & Cai, B. Drawnnet: Offline hand-drawn diagram recognition based on keypoint prediction of aggregating geometric characteristics. Entropy 24, https://doi.org/10.3390/e24030425 (2022).

Zhang, L. Hand-drawn sketch recognition with a double-channel convolutional neural network. Eurasip Journal on Advances in Signal Processing 2021, https://doi.org/10.1186/s13634-021-00752-4 (2021).

Alwaely, B. & Abhayaratne, C. Graph spectral domain feature learning with application to in-air hand-drawn number and shape recognition. IEEE Access 7, 159661–159673. https://doi.org/10.1109/ACCESS.2019.2950643 (2019).

Brinkhaus, H., Zielesny, A., Steinbeck, C. & Rajan, K. Decimer-hand-drawn molecule images dataset. Journal of Cheminformatics 14, https://doi.org/10.1186/s13321-022-00620-9 (2022).

Ali, S. et al. Context awareness based sketch-deepnet architecture for hand-drawn sketches classification and recognition in aiot. PeerJ Computer Science 9, https://doi.org/10.7717/PEERJ-CS.1186 (2023).

Bourgault, S. & Jacobs, J. Preserving hand-drawn qualities in audiovisual performance through sketch-based interaction. Journal of Computer Languages 74, https://doi.org/10.1016/j.cola.2022.101186 (2023).

Thangakrishnan, M. S. & Ramar, K. Automated hand-drawn sketches retrieval and recognition using regularized particle swarm optimization based deep convolutional neural network. Journal of Ambient Intelligence and Humanized Computing 12, 6407–6419 (2021).

Pavithra, S., Shreyashwini, N., Bhavana, H., Nikhitha, G. & Kavitha, T. Hand-drawn electronic component recognition using orb. In Procedia Computer Science 218, 504–513 (2022).

Rachala, R. R. & Panicker, M. R. Hand-drawn electrical circuit recognition using object detection and node recognition. SN Computer Science 3 (2022).

Gupta, M. & Mehndiratta, P. Analysis and recognition of hand-drawn images with effective data handling. Lecture Notes in Computer Science (including subseries Lecture Notes in Artificial Intelligence and Lecture Notes in Bioinformatics) 11932 LNCS, 389–407 (2019).

Awal, A. M., Feng, G., Mouchère, H. & Viard-Gaudin, C. First experiments on a new online handwritten flowchart database. Document Recognition and Retrieval XVIII 7874, 1–10 (2011).

Bresler, M., Phan, T. V., Průša, D., Nakagawa, M. & Hlaváč, V. Recognition system for on-line sketched diagrams. In 2014 14th International Conference on Frontiers in Handwriting Recognition, 563–568 (2014).

Bresler, M., Průša, D. & Hlaváč, V. Online recognition of sketched arrow-connected diagrams. International Journal on Document Analysis and Recognition 19, 253–267 (2016).

Schäfer, B., van der Aa, H., Leopold, H. & Stuckenschmidt, H. Sketch2bpmn: Automatic recognition of hand-drawn bpmn models. Lecture Notes in Computer Science (Springer International Publishing, 2021).

Schäfer, B. & Stuckenschmidt, H. Diagramnet: Hand-drawn diagram recognition using visual arrow-relation detection. In 2021 International Conference on Document Analysis and Recognition (ICDAR) (2021).

Wu, J., Wang, C., Zhang, L. & Rui, Y. Offline sketch parsing via shapeness estimation. In Proceedings of the 24th International Conference on Artificial Intelligence, 1200–1206 (2015).

Bresler, M., Průša, D. & Hlaváč, V. Recognizing off-line flowcharts by reconstructing strokes and using on-line recognition techniques. In 2016 15th International Conference on Frontiers in Handwriting Recognition (ICFHR), 48–53, https://doi.org/10.1109/ICFHR.2016.0022 (2016).

Funding

Open access funding provided by Symbiosis International (Deemed University).

Author information

Contributions

V.A. wrote the main manuscript text and prepared figures. All authors reviewed the manuscript.

Ethics declarations

Competing interests

The authors declare no competing interests.

Rights and permissions

Open Access This article is licensed under a Creative Commons Attribution-NonCommercial-NoDerivatives 4.0 International License, which permits any non-commercial use, sharing, distribution and reproduction in any medium or format, as long as you give appropriate credit to the original author(s) and the source, provide a link to the Creative Commons licence, and indicate if you modified the licensed material. You do not have permission under this licence to share adapted material derived from this article or parts of it. The images or other third party material in this article are included in the article’s Creative Commons licence, unless indicated otherwise in a credit line to the material. If material is not included in the article’s Creative Commons licence and your intended use is not permitted by statutory regulation or exceeds the permitted use, you will need to obtain permission directly from the copyright holder. To view a copy of this licence, visit http://creativecommons.org/licenses/by-nc-nd/4.0/.

About this article

Cite this article

Agrawal, V., Kantipudi, M.P. & Jagtap, J. Enhancing hand-drawn diagram recognition through the integration of machine learning and deep learning techniques. Sci Rep 15, 17311 (2025). https://doi.org/10.1038/s41598-025-01823-4
