Abstract

Multiphysics, multiscale climate models, such as the Energy Exascale Earth System Model (E3SM), generate massive volumes of data over extended time periods to support long-term climate analysis. Data compression methods, both lossy and lossless, have been extensively used to manage these datasets. Implicit Neural Representation (INR) has recently emerged as a promising lossy compression technique. While INRs offer good compression rates, they often suffer from reconstruction errors that may impede downstream climatic analysis. To address this, we propose a Context-Aware Implicit Neural Representation (CA-INR), which is based on a multi-layer perceptron (MLP) architecture and takes both spatiotemporal coordinates and auxiliary physical variables, referred to as context, as inputs. The model is trained to memorize the data with the explicit goal of overfitting, thereby enabling accurate reconstruction of the original data. The inclusion of context allows the model to better capture the underlying structures and correlations in Earth system data. We evaluate different architectures of CA-INR using the surface temperature variable from the E3SM dataset and investigate the impact of incorporating different types, qualities, and numbers of contextual inputs, specifically, topography, mean climatological temperature, and their combination, on compression gain and reconstruction error. Our results demonstrate that incorporating contextual information reduces reconstruction error while maintaining a high compression rate, outperforming standard INR models. The resulting increase in peak signal-to-noise ratio (PSNR) is substantial, elevating the reconstructed data quality with CA-INR to a level suitable for downstream climate analysis.

Introduction

Earth system modeling (ESM) is essential for understanding and projecting Earth’s complex climate systems, informing policy decisions, and addressing environmental challenges1,2,3. Advances in high-performance computing have led the Earth system modeling community to develop climate models that can be run at high resolution, for longer simulation periods, and for larger ensemble collections, resulting in petabytes of multi-variable, spatiotemporally correlated fields that overwhelm both disk quotas and input/output (I/O) bandwidth4,5. This has created data storage and transfer challenges for computing and data centers such as the National Center for Atmospheric Research (NCAR), the German Climate Computing Center, and others4,6.

Data reduction hinges on two algorithmic families, mathematically lossless and error-bounded lossy compression, each exploiting different aspects of data redundancy7,8. Lossless codecs (e.g., DEFLATE/ZIP, FPC, LZ4) apply entropy or dictionary coding, predictive differencing, and bit-shuffling to remove statistical redundancy while guaranteeing bit-exact recovery of the original field9,10,11,12,13. Although essential for reproducibility and verification workflows, their asymptotic compression ratios are modest, typically smaller than an order of magnitude for high-entropy floating-point climate data, limiting their utility in exascale archives4,12. Lossy compressors, such as ZFP and SZ, adopt transform or predictive quantization with user-specified error tolerances, trading negligible, quantifiable distortion for one to two orders-of-magnitude reduction in footprint4,6,10,13. These algorithms exploit coherency across spatial grids and temporal snapshots, employ bit-plane or block-floating-point representations, and integrate seamlessly with netCDF-4/HDF5 filter stacks, enabling on-the-fly compression during parallel I/O.

Recent advances aim to push error-bounded lossy compression toward the long-sought combination of near-lossless fidelity and aggressive bit-rate reduction. One strategy is to inject richer data semantics directly into the compression pipeline. For example, Adaptive-Hierarchical Geospatial Field Data Representation (Adaptive-HGFDR) couples blocked hierarchical tensor decomposition with adaptive rank selection to capture multi-scale, multi-dimensional correlations in climate fields14. When applied to Community Earth System Model (CESM) outputs, Adaptive-HGFDR delivered both higher compression ratios and flatter, more homogeneous error spectra than canonical schemes such as ZFP and the earlier Blocked-HGFDR, thereby reducing bias accumulation in downstream analyses14. A complementary line of research focuses on constraint-aware compression to better align the reconstructed data with scientific analysis needs. Liu et al.15 introduced a constraint-based extension of the SZ error-bounded lossy compression framework, enabling users to specify diverse constraints during compression. These constraints include isolating irrelevant values, preserving global value ranges, setting multiple precision levels across different value intervals, applying spatially varying error bounds, and masking irregular regions using bitmap techniques. The framework redesigns the quantization stage to respect these constraints efficiently without substantially degrading compression performance. Evaluations across six real-world datasets, including climate and cosmology simulations, demonstrated that constraint-aware compression can significantly improve data fidelity for quantities of scientific interest, with comparable or even superior compression ratios relative to traditional SZ compression. 
For example, in the Nyx cosmology simulation, the method preserved dark matter halo properties with much lower distortion, achieving compression ratios up to \(78\times\) without compromising key post hoc analyses15. Collectively, these developments illustrate a shift from one-size-fits-all lossy coding toward domain-aware, precision-adaptive compressors that maximize storage savings without compromising the scientific integrity of datasets. Nevertheless, the achieved compression gains, while meaningful, remain modest relative to the demands of exascale ESMs, highlighting the need for further innovations to meet future storage and fidelity challenges.

To substantially improve compression gains, neural-driven lossy techniques, including autoencoders10,16,17 and implicit neural representations18,19, have been actively investigated. Autoencoder-based methods operate by mapping high-dimensional input data into lower-dimensional latent representations, from which the original data are subsequently reconstructed20. Liu et al.16 proposed AE-SZ, a hybrid approach that integrates convolutional autoencoders with the SZ error-bounded lossy compression framework. Applied across five scientific datasets, including climate data, AE-SZ demonstrated a compression gain improvement of 100% to 800% relative to SZ 2.1 and ZFP, while maintaining comparable levels of data distortion16. In a similar vein, ref. 17 introduced an autoencoder-based compression model tailored for high-resolution Community Earth System Model outputs, achieving compression ratios as high as \(240\times\) for sea surface datasets, accompanied by a reconstruction peak signal-to-noise ratio (PSNR) of 50.16 dB. Despite their promising performance, autoencoder-based compression methods impose practical challenges, notably the requirement of a decoder during inference, which can be prohibitively large, sometimes surpassing the size of the original uncompressed data (e.g., an image)21,22. Dupont et al.18 also proposed a neural compression framework that transmits the weights of a neural network overfitted to the data. Specifically, they introduced COIN (Compression with Implicit Neural representations), wherein a multilayer perceptron (MLP) is trained to map spatial coordinates to corresponding RGB values for images. The compression process entails storing the quantized weights of this MLP as the compressed representation18. This implicit neural representation (INR) approach leverages the function-approximation capability of MLPs, rather than directly encoding samples.
To mitigate challenges arising from fitting high-frequency components in natural data, the method employs sinusoidal activations (SIREN) to enhance the expressivity and convergence of the networks23. Experimental results show that COIN can outperform JPEG at low bit rates, even without the use of entropy coding or learned weight distributions18. However, at higher bit rates, its PSNR tends to be lower compared to other compression methods, and the encoding process remains computationally expensive due to the requirement of per-instance network optimization18. Nevertheless, the flexibility of INR-based compression to achieve a wide range of PSNR values and compression gains, depending on the MLP model size, makes it a promising technique for ESM data.

This work introduces context-aware implicit neural representations (CA-INR) for compressing ESM datasets while preserving data integrity. CA-INR extends traditional implicit neural representation (INR) methods by incorporating auxiliary physical information into the input layer to facilitate more effective overfitting. In this study, we focus on compressing surface temperature fields from the Energy Exascale Earth System Model dataset24, using supplementary physical variables such as topography and mean climatological temperature, which are known to be strongly correlated with surface temperature fields25,26. By enriching the MLP inputs with physically meaningful context, the proposed CA-INR framework enhances reconstruction quality and compression efficiency. Our primary objective is to achieve high compression ratios across a range of PSNR targets tailored for different downstream applications of ESM data. For instance, downstream applications such as visualization can typically tolerate PSNR levels around 35–40 dB, whereas reanalysis workflows and data assimilation require fidelity above 40–50 dB to avoid introducing significant bias into physical models. This approach enables flexible trade-offs between compression gain and reconstruction fidelity, offering a promising pathway for scalable storage of exascale climate datasets.

E3SM data source

This study utilizes monthly outputs from the Energy Exascale Earth System Model (E3SM), a high-resolution Earth system model developed by the U.S. Department of Energy to simulate coupled interactions across the atmosphere, oceans, land surface, and sea ice27,28. We specifically analyze data from the 1950-control simulation spanning a 30-year period, using the high-resolution configuration with a global grid spacing of \(0.25^{\circ } \times 0.25^{\circ }\)24. The original model output, generated on a non-orthogonal cubed-sphere grid, has been bilinearly interpolated to a regular latitude-longitude grid to facilitate processing and analysis5. We extract and compress consecutive months of surface temperature (ST) fields, with each monthly snapshot comprising \(720 \times 1440\) grid points. To enhance compression performance, we incorporate auxiliary physical fields as additional input context to the neural network. Specifically, we use topography and mean climatological surface temperature fields, individually and in combination, as supplementary inputs alongside spatial coordinates. Both topography and mean temperature are physically meaningful variables that exhibit strong correlations with ST patterns25,26, providing valuable priors that can improve the efficiency and fidelity of learned neural representations. While this study focuses on using static (topography) and climatological (mean temperature) fields as context, other cross-modality strategies, such as leveraging monthly precipitation fields to assist in compressing monthly ST, could further enhance compression and will be explored in future work.

Methodology

The main goal of the proposed CA-INR framework is to overfit the model to the data, enabling accurate reconstruction and compression of E3SM fields. The method leverages contextual physical information to better capture underlying spatial structures and correlations. In the following sections, we first describe the CA-INR architecture and its integration of contextual variables, followed by the optimization procedure used to train the overfitted models. We then present the statistical metrics used to evaluate reconstruction accuracy and, finally, explore different variants of CA-INR incorporating various physical context inputs.

Context-aware implicit neural representations (CA-INR)

We introduce a context-aware variant of INR-based compression18, tailored for multi-dimensional climate data stored in tensor form. Let ST denote the target tensor we aim to compress, representing values at spatial locations \(\varvec{x} = (x, y)\) and time t. We define a neural function \(f_\theta\) with learnable parameters \(\theta\), which maps coordinates and auxiliary physical context to scalar outputs,

\[ f_\theta : (\varvec{x}, t, \varvec{c}) \mapsto ST(\varvec{x}, t), \tag{1} \]

where \(\varvec{c}\) denotes one or more context variables introduced to guide the reconstruction. In our study on compressing ST from E3SM, we incorporate topography and/or the climatological mean of ST as contextual features (Section “Contextual variants of CA-INR”). These variables are strongly correlated with ST fields and have been shown to improve predictive performance in related geospatial learning tasks. The model is trained by minimizing the mean squared error between the predicted and true values over the \(N_{\text {point}}\) spatiotemporal samples,

\[ \mathcal {L}(\theta ) = \frac{1}{N_{\text {point}}} \sum _{\varvec{x}, t} \left( f_\theta (\varvec{x}, t, \varvec{c}) - ST(\varvec{x}, t) \right) ^2, \tag{2} \]

where the sum is taken over all spatial locations and time steps. The function \(f_\theta\) is parameterized as a multilayer perceptron (MLP) with sine activation functions (SIREN)18,23, which enables the capture of high-frequency spatial patterns. To maximize compression efficiency, we follow the architecture optimization and quantization strategies of ref. 18, exploring different layer depths and widths, and reducing weight precision from 64-bit to 32-bit. The decoder consists solely of evaluating \(f_\theta\) at each \((\varvec{x}, t)\), together with the corresponding context \(\varvec{c}\), using the stored quantized weights.
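
As an illustration of the mapping described above, the following is a minimal NumPy sketch of a sine-activated MLP that takes coordinates and context as inputs. It is not the authors' implementation: the function names `init_siren` and `ca_inr_forward`, the frequency factor `w0 = 30`, the bias initialization, and the random inputs are all assumptions made for the example.

```python
import numpy as np

def init_siren(n_in, width, depth, w0=30.0, seed=0):
    """Return (W, b) pairs for `depth` sine layers plus one linear output layer."""
    rng = np.random.default_rng(seed)
    sizes = [n_in] + [width] * depth + [1]
    params = []
    for i, (fan_in, fan_out) in enumerate(zip(sizes[:-1], sizes[1:])):
        # SIREN-style uniform bounds; the first layer uses a different bound.
        # Using the same bound for the biases is an assumption of this sketch.
        bound = 1.0 / fan_in if i == 0 else np.sqrt(6.0 / fan_in) / w0
        W = rng.uniform(-bound, bound, size=(fan_in, fan_out))
        b = rng.uniform(-bound, bound, size=fan_out)
        params.append((W, b))
    return params

def ca_inr_forward(params, coords, context, w0=30.0):
    """Evaluate f_theta on coords (N, 3) = (x, y, t) and context (N, C)."""
    # Context is simply concatenated with the coordinates at the input layer.
    h = np.concatenate([coords, context], axis=1)
    for W, b in params[:-1]:
        h = np.sin(w0 * (h @ W + b))
    W, b = params[-1]
    return (h @ W + b)[:, 0]

# Hypothetical usage: 8 sample points with one context channel (e.g. topography).
rng = np.random.default_rng(1)
coords = rng.uniform(-1.0, 1.0, size=(8, 3))
topo = rng.uniform(-1.0, 1.0, size=(8, 1))
params = init_siren(n_in=4, width=5, depth=7)
pred = ca_inr_forward(params, coords, topo)
n_params = sum(W.size + b.size for W, b in params)
```

Decoding in this sketch needs only `params` and the context values, which is why the stored weights act as the compressed representation.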

Optimization procedure

The optimization process is designed to progressively refine the network parameters toward an optimal configuration. Training begins with an initial model and learning rate, using the Adam optimizer29 to minimize the mean squared error between the network predictions and the reference data. At each iteration, the prediction error is evaluated, and performance metrics are tracked to monitor improvements. Throughout the training, the current best-performing model, based on the lowest loss value observed so far, is recorded to prevent the optimization from drifting away from previously achieved good solutions. A key aspect of the training strategy is the scheduled adjustment of the learning rate. After a fixed number of iterations, the learning rate is reduced by a predefined factor. Rather than continuing from the latest model parameters, training is resumed from the best model checkpoint, ensuring that subsequent updates refine the most promising solution. At each adjustment point, the optimizer is reinitialized with the restored model and the updated learning rate to maintain training stability and efficiency.

This approach prevents reinforcing suboptimal paths and promotes consistent progress toward better prediction. By combining a stepwise learning rate decay with model resetting, the training procedure achieves a balanced trade-off between exploration in the early stages and fine-tuning during later phases. In the initial steps, a larger learning rate allows the optimizer to move quickly across the loss landscape, while subsequent decay phases focus on careful refinement. This structure improves the reliability of convergence and reduces the risk of common issues such as overfitting to noise or entrapment in sharp local minima. A summary of the complete training procedure applied to the CA-INR architecture is provided in Algorithm 1, where the model is initialized, trained, and periodically updated through a step-decay learning rate schedule combined with optimizer reinitialization to ensure smooth and stable convergence. This design ultimately leads to a network that generalizes well and maintains high performance across different stages of training.
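
The schedule described above (step decay of the learning rate, restart from the best checkpoint, and optimizer reinitialization) can be sketched on a toy problem. This is a hedged illustration, not the paper's Algorithm 1: the function name `train_step_decay`, the hand-written Adam update, the hyperparameter values, and the linear least-squares objective are all assumptions chosen to keep the example self-contained.

```python
import numpy as np

def train_step_decay(loss_and_grad, theta0, lr0=0.1, decay=0.5,
                     steps_per_stage=200, n_stages=4,
                     beta1=0.9, beta2=0.999, eps=1e-8):
    """Adam with step-decay learning rate and restart from the best checkpoint."""
    best_theta = theta0.copy()
    best_loss, _ = loss_and_grad(best_theta)
    lr = lr0
    for _ in range(n_stages):
        # Resume from the best checkpoint and reinitialize the optimizer state.
        theta = best_theta.copy()
        m = np.zeros_like(theta)
        v = np.zeros_like(theta)
        for k in range(1, steps_per_stage + 1):
            _, grad = loss_and_grad(theta)
            m = beta1 * m + (1 - beta1) * grad
            v = beta2 * v + (1 - beta2) * grad ** 2
            m_hat = m / (1 - beta1 ** k)
            v_hat = v / (1 - beta2 ** k)
            theta = theta - lr * m_hat / (np.sqrt(v_hat) + eps)
            loss, _ = loss_and_grad(theta)
            # Keep the lowest-loss parameters seen so far.
            if loss < best_loss:
                best_loss, best_theta = loss, theta.copy()
        lr *= decay  # reduce the learning rate by a fixed factor per stage
    return best_theta, best_loss

# Toy stand-in for the network: fit a 3-parameter linear model with MSE loss.
rng = np.random.default_rng(0)
X = rng.normal(size=(64, 3))
y = X @ np.array([1.0, -2.0, 0.5])

def mse_and_grad(theta):
    r = X @ theta - y
    return float(np.mean(r ** 2)), 2.0 * X.T @ r / len(y)

theta, loss = train_step_decay(mse_and_grad, np.zeros(3))
```

Because the best checkpoint is only replaced when the loss decreases, the returned loss can never exceed the initial loss, whatever the learning rate does within a stage.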

Statistical metrics

To assess the performance of CA-INR, we employ several statistical metrics. The first two are the Absolute Point Error (APE), also referred to as the point-wise error \(E(x, y)\), and the Mean Squared Error (MSE), both following the definitions in ref. 30. The APE is defined as,

\[ E(x, y) = \left| X_1(x, y) - X_2(x, y) \right| , \tag{3} \]

where \((x, y)\) denotes a spatial location (e.g., a grid point or pixel), \(X_1(x, y)\) is the reconstructed or predicted value at that location, and \(X_2(x, y)\) is the corresponding ground truth value. The Mean Squared Error (MSE) is given by,

\[ \text {MSE} = \frac{1}{N} \sum _{i=1}^{N} \left( X_{1i} - X_{2i} \right) ^2, \tag{4} \]

where \(X_{1i}\) and \(X_{2i}\) are the predicted and ground truth values at the \(i^{\text {th}}\) grid point (e.g., pixels in an image or nodes in a computational domain), and \(N\) is the total number of grid points. We also compute the Mean Absolute Error (MAE), which calculates the average of the absolute differences between predicted and true values. Mathematically, it is defined as,

\[ \text {MAE} = \frac{1}{N} \sum _{i=1}^{N} \left| X_{1i} - X_{2i} \right| , \tag{5} \]

where \(N\) is the total number of samples, and \(X_{1i}\) and \(X_{2i}\) are the predicted and true values, as defined above, respectively. All three metrics, APE, MSE, and MAE, are used to quantify the point-wise error between the predicted and ground truth surface temperature. Furthermore, we incorporate the Peak Signal-to-Noise Ratio (PSNR) metric31,32, which quantifies the ratio between the maximum possible signal power and the power of the corrupting noise that affects the accuracy of the reconstructed signal. It is computed as,

where \(X_1(x, y)\) and \(X_2(x, y)\) represent the predicted and ground truth profiles, respectively, over the spatial domain, and MSE is defined in Eq. (4). The PSNR is expressed in decibels (dB), with higher values indicating better reconstruction fidelity.
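
The four metrics can be sketched in a few lines of NumPy. The function names are hypothetical, and the PSNR uses the generic form \(10 \log _{10}(\text {peak}^2 / \text {MSE})\) with the peak value passed explicitly, since the choice of peak (for example, the maximum or the range of the ground truth) is an assumption not fixed by the text above.

```python
import numpy as np

def ape(pred, truth):
    """Absolute point error: one value per grid point."""
    return np.abs(pred - truth)

def mse(pred, truth):
    """Mean squared error over all grid points."""
    return float(np.mean((pred - truth) ** 2))

def mae(pred, truth):
    """Mean absolute error over all grid points."""
    return float(np.mean(np.abs(pred - truth)))

def psnr(pred, truth, peak):
    """PSNR in dB; `peak` is supplied by the caller (assumed convention)."""
    return float(10.0 * np.log10(peak ** 2 / mse(pred, truth)))

# Toy 2 x 2 field in kelvin with a uniform 1 K reconstruction error.
truth = np.array([[280.0, 290.0], [300.0, 310.0]])
pred = truth + np.array([[1.0, -1.0], [1.0, -1.0]])
errors = ape(pred, truth)
quality = psnr(pred, truth, peak=float(truth.max()))
```

Keeping `peak` as an explicit argument makes it easy to compare PSNR values computed under different peak conventions.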

To quantify the reduction in storage achieved by context-aware implicit neural representations, we define the compression gain (CG) as the ratio between the size of the initial ST dataset and the sum of the sizes of the compressed neural model and its associated contextual information,

\[ \text {CG} = \frac{N_{\text {point}} \times b_{\text {org}}}{N_{\theta } \times b_{\theta } + (N \times b)_c}, \tag{7} \]

where \(N_{\text {point}}\) and \(b_{\text {org}}\) represent the number of spatiotemporal ST points (e.g., a \(720 \times 1440\) grid per time step multiplied by the number of time steps) and the number of bits per point in the original dataset (e.g., 64-bit precision floating point), respectively. For the storage cost of the CA-INR model, \(N_{\theta }\) denotes the number of weights and biases, \(b_{\theta }\) is the number of bits per parameter (set to 32 bits after quantization), and the term \((N \times b)_c\) accounts for the storage cost associated with the contextual information. In this study, we neglect the contribution of \((N \times b)_c\) for two reasons. First, the ultimate objective is to compress the full ST archive, over which static or slowly varying fields such as topography or mean climatological temperature are reused across time steps, rendering their amortized storage cost negligible. Second, these context fields can themselves be efficiently represented using separate INR models, further reducing their effective storage cost toward zero. For these reasons, we report compression gain based only on the original ST data size and the network model parameters, while acknowledging that including the context would lower the gain when considering short-term subsets.
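
A small worked example may help make the compression gain concrete. The sketch below assumes a fully connected network with biases, counts "layers" as hidden layers, and uses hypothetical helper names (`mlp_param_count`, `compression_gain`); the exact parameter-counting convention used for the reported results is not stated in the text, so the numbers are indicative only.

```python
def mlp_param_count(n_in, width, n_hidden, n_out=1):
    """Weights and biases of an MLP with `n_hidden` hidden layers of equal width."""
    first = n_in * width + width
    hidden = (n_hidden - 1) * (width * width + width)
    last = width * n_out + n_out
    return first + hidden + last

def compression_gain(n_point, b_org, n_theta, b_theta, context_bits=0):
    """Original size divided by model size plus optional context size (in bits)."""
    return (n_point * b_org) / (n_theta * b_theta + context_bits)

# Six monthly snapshots on a 720 x 1440 grid, stored as 64-bit floats.
n_point = 6 * 720 * 1440
# Assumed small network: inputs (x, y, t), 7 hidden layers of 5 neurons.
n_theta = mlp_param_count(n_in=3, width=5, n_hidden=7)
cg = compression_gain(n_point, b_org=64, n_theta=n_theta, b_theta=32)
# Charging one uncompressed 64-bit context field against the model lowers the gain.
cg_with_context = compression_gain(n_point, 64, n_theta, 32,
                                   context_bits=720 * 1440 * 64)
```

Under these assumptions the network has 206 parameters and the gain is on the order of 60,000, while a non-zero `context_bits` term in the denominator reduces it.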

Contextual variants of CA-INR

In this study, we extend the INR framework by incorporating additional contextual information to enhance its predictive capabilities on physical datasets. Specifically, to compress the ST data, we introduce two forms of spatial context, topography and mean climatological temperature, resulting in two variants of the model: CA-INR (TP) and CA-INR (MT), respectively. These contextual variants are designed to provide the model with additional structure-informed cues that are not directly captured by spatiotemporal coordinates alone, helping the network to represent complex physical processes better.

Topographic information25,26 is particularly relevant in climate modeling, as elevation and terrain shape significantly influence surface temperature distribution. For example, regions with high altitudes tend to have cooler temperatures due to lower atmospheric pressure and reduced longwave radiation absorption. In this work, topography is provided at the same spatial resolution as the ST field, making it directly usable as a contextual input without additional pre-processing. We include this topographic data as a spatially aligned scalar field: for each input location (x, y), we construct an input vector by concatenating the spatiotemporal coordinates with the corresponding topographic value TP(x, y), and this composite vector is passed to the CA-INR model. This allows the model to learn spatial variations in temperature that are driven by underlying geographic features.

The mean climatological temperature (MT) serves as a second form of context that encodes averaged long-term thermal behavior across the dataset. It is computed by taking the temporal average of the ST field at each spatial location over the considered temporal domain. Mathematically, for each point (x, y), MT is defined as \(\text {MT}(x, y) = \frac{1}{M} \sum _{t=1}^{M} ST(x, y, t)\), where ST(x, y, t) represents the surface temperature at location (x, y) and time t. This averaged value captures the stationary component of temperature over time33 and reflects broad climatological trends such as regional warming or cooling34,35. Incorporating MT as contextual information enables the model to distinguish between short-term fluctuations and fundamental long-term trends, thereby enhancing its ability to reconstruct dynamic temperature distributions.

In addition to evaluating each context independently, we also examine their combined effect in the CA-INR (TP + MT) variant. This model variant receives both topography and mean climatological temperature as inputs, allowing it to leverage complementary physical signals during training. By jointly incorporating these two types of context, the model can gain a richer understanding of spatial and climatological variability, leading to more accurate data reconstruction, particularly in regions where both terrain and long-term thermal patterns play important roles. Through comparative evaluation of these contextual variants, we aim to highlight the impact of incorporating physical context into INR models for climate data representation.
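
The construction of the inputs for the three contextual variants can be sketched as follows. This is an illustrative example rather than the authors' preprocessing: the function name `build_inputs`, the normalization of coordinates to \([-1, 1]\), and the absence of any context scaling are assumptions.

```python
import numpy as np

def build_inputs(st, topo=None, use_mt=False):
    """Build per-point input vectors from st (M, H, W) and optional context.

    Columns are (x, y, t), then TP(x, y) if `topo` is given, then MT(x, y)
    if `use_mt` is True. Returns (features, targets).
    """
    M, H, W = st.shape
    # Normalized coordinates; the [-1, 1] range is an assumed convention.
    t, y, x = np.meshgrid(np.linspace(-1, 1, M), np.linspace(-1, 1, H),
                          np.linspace(-1, 1, W), indexing="ij")
    cols = [x, y, t]
    if topo is not None:
        # Static field: the same value is reused at every time step.
        cols.append(np.broadcast_to(topo, st.shape))
    if use_mt:
        # Mean climatological temperature: temporal average at each location.
        mt = st.mean(axis=0)
        cols.append(np.broadcast_to(mt, st.shape))
    features = np.stack([c.ravel() for c in cols], axis=1)
    return features, st.ravel()

# Hypothetical tiny example: 2 time steps on a 3 x 4 grid.
st = np.arange(24, dtype=float).reshape(2, 3, 4)
topo = np.ones((3, 4))
feats, targets = build_inputs(st, topo=topo, use_mt=True)  # TP + MT variant
```

Calling `build_inputs(st)` gives the baseline INR inputs, while passing only `topo` or only `use_mt=True` gives the single-context variants.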

Computational experiments

This section evaluates the performance of the proposed context-aware implicit neural representation framework, trained with step decay learning rates. We conduct two experiments using consecutive 6- and 12-month subsets of ST data from the E3SM high-resolution dataset. To assess the impact of physical context on compression quality, we compare CA-INR variants incorporating topography (at different qualities), mean climatological temperature, or both, against a baseline INR model without contextual input. Quantitative comparisons across methods are performed using the evaluation metrics defined in Section “Statistical metrics”, with the goal of identifying the most effective configuration for compressing ST fields.

All experiments are performed on a Linux-based workstation equipped with an NVIDIA RTX 6000 Ada GPU (48 GB VRAM) and 256 GB of system memory. The models are developed and trained using Python 3.10 and PyTorch 2.1.0. Multi-GPU training is enabled via the torch.nn.DataParallel module to accelerate computation. Data preprocessing and numerical operations are handled using NumPy 1.26.4 and Pandas 2.2.2. Figures and visualizations are generated using Matplotlib 3.8.4 and Cartopy 0.22.0.

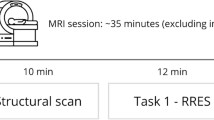

Fig. 1. PSNR performance comparison of (a) the INR model, (b) CA-INR with topography (TP), and (c) CA-INR with mean climatological temperature (MT) across different network architectures by varying the number of layers and neurons per layer. The red-colored bars in each plot indicate the architecture configuration that achieved the highest PSNR value.

Case 1: 6-month surface temperature data

For our first set of analyses, we use a dataset comprising six consecutive months of ST data from the E3SM output described in Section “E3SM data source”. The vanilla INR and the CA-INR (TP) and CA-INR (MT) variants are trained using the step-decay learning rate (SDLR) schedule for 30,000 epochs. We investigate a variety of network architectures, varying the number of hidden layers between 5 and 14 and the number of neurons in each layer from 5 to 70 in increments of 5.

In Fig. 1, the PSNR performance of the three models is illustrated. The vanilla INR model achieves its best result with 12 layers and 70 neurons, reaching a PSNR of 52.33 dB. The CA-INR (TP) model, which incorporates topography as auxiliary input, performs slightly better, achieving a PSNR of 52.47 dB with 12 layers and 65 neurons. The CA-INR (MT) model, which integrates mean climatological temperature, significantly outperforms both vanilla INR and the CA-INR (TP), achieving the highest PSNR of 56.11 dB with 11 layers and 65 neurons. These results demonstrate that enriching the input with context can lead to notable improvements in the reconstruction quality of ST fields.

To further illustrate the effectiveness of the CA-INR models, Fig. 2 presents the predicted ST fields and their corresponding absolute error maps for the second month in the dataset, using the best-performing architecture for each method. The comparison includes vanilla INR, CA-INR (TP), and CA-INR (MT). The optimal configuration for each model is selected based on the highest PSNR obtained during the training and architecture tuning. Specifically, INR achieves a PSNR of 52.33 dB with a CG of 230, CA-INR (TP) reaches 52.47 dB with CG 257, and CA-INR (MT) achieves the best performance with a PSNR of 56.11 dB and CG 292. Although all models effectively capture the general temperature distribution, the CA-INR variants, and especially CA-INR (MT), deliver reconstructions with higher accuracy and better spatial coherence, while achieving a compression gain that exceeds that of INR by 26.96%. The improvement is especially evident in regions with complex terrain or strong temperature gradients, where contextual inputs, such as topography and mean climatological temperature, provide valuable information that guides the model toward more precise approximations. These results show the importance of integrating relevant contextual information into the implicit representation framework to enhance reconstruction accuracy and compression gain.

To assess the performance of the models under specific compression limitations, we examine predictions made by an architecture achieving a compression gain of 60,000. This architecture is composed of 7 layers, each containing 5 neurons. As shown in Fig. 3, the vanilla INR model produces noisy reconstructions with a PSNR of 25.99 dB. In contrast, both CA-INR variants, which incorporate contextual information, significantly improve reconstruction accuracy. CA-INR (TP) achieves a PSNR of 30.35 dB, while CA-INR (MT) slightly improves performance to 30.50 dB. These results demonstrate that even under tight compression requirements, integrating relevant auxiliary information enables CA-INR models to produce more accurate and physically consistent data reconstructions than the baseline INR. Figures 2 and 3 thus demonstrate the benefits of incorporating contextual information: CA-INR consistently achieves higher accuracy and maintains robustness even under limited model capacity. This indicates that contextual cues during training enhance both learning efficiency and prediction accuracy, particularly in constrained scenarios.

Fig. 4. A comparison between the ST data compressed and reconstructed using CA-INR (TP) with different levels of contextual data quality. Results are illustrated for the second month in the dataset. Panel (a) shows different levels of contextual data quality and corresponding reconstructed absolute error. Panel (b) shows a line graph comparing the generated surface temperatures at various context levels to the ground truth along a diagonal line from (90\(^\circ\)N, 180\(^\circ\)W) to (90\(^\circ\)S, 0\(^\circ\)). Panel (c) shows a line graph comparing the generated surface temperatures at various context levels to the ground truth along a diagonal line from (90\(^\circ\)N, 180\(^\circ\)E) to (90\(^\circ\)S, 0\(^\circ\)).

We further examine how the quality of contextual information affects the data compression and reconstruction accuracy of the CA-INR (TP) model. This evaluation is crucial because obtaining comprehensive contextual data is often difficult or impossible in many scenarios. To investigate this, we analyze four variations of the CA-INR (TP) model, each possessing different levels of topographic context quality but all sharing the same resolution. We determine the effect of context quality on reconstruction error in comparison to actual temperature data. Quality levels are defined by the PSNR between the generated topography and the real topography. Ground truth topography is referred to as Context 1. Context 2 is derived from Context 1 with a Mean Absolute Error (MAE) of 12% and a PSNR of 33.35 dB. Context 3 originates from Context 1 with a 72% MAE and 23.06 dB PSNR. Lastly, Context 4, representing the lowest quality, is derived from Context 1 with a 369% MAE and 16.67 dB PSNR.

Figure 4 illustrates how the quality of topographic context influences the performance of CA-INR (TP) in reconstructing ST data. Panel (a) presents four levels of topographic context (Context 1-4), along with the corresponding ST reconstruction absolute errors for the second month in the dataset. As we move from Context 1 (ground truth topography) to Context 4 (highly degraded topography), the context becomes progressively less similar to the true terrain. This degradation directly impacts the fidelity of the generated surface temperatures and discrepancies increase as context quality declines. Panels (b) and (c) quantify this effect through line plots of ST along two diagonal slices: from (90\(^\circ\)N, 180\(^\circ\)W) to (90\(^\circ\)S, 0\(^\circ\)) and from (90\(^\circ\)N, 180\(^\circ\)E) to (90\(^\circ\)S, 0\(^\circ\)), respectively, shown as dark blue lines on the inset maps. For each context level, the reconstructed temperatures are compared against the ground truth. The trend is clear: as the quality of the context drops, the reconstruction error increases. In panel (b), the mean absolute error rises from 0.52 to 0.90; in panel (c), the mean absolute error increases from 0.53 to 0.71. Despite this, even the model using the weakest context (Context 4) keeps most predictions within a 5 K confidence interval, showing a strong robustness. These findings show that high-quality contextual information significantly enhances reconstruction accuracy, and CA-INR remains effective even under degraded conditions, making it a powerful approach for physical data compression, particularly when precise context data is scarce or incomplete.

Fig. 5. A comparative evaluation of CA-INR variants by examining the PSNR (dB) in relation to the compression gain under CA-INR (TP) and CA-INR (MT). The black markers, located at compression gains of approximately 20,000 and 60,000, denote the CA-INR (TP \(+\) MT) model, which integrates two types of contextual information. It is evident that incorporating additional contextual information enhances the PSNR for the reconstructed temperature data.

In the last experiment in this section, we evaluate how increasing the amount of contextual information affects the reconstruction quality of CA-INR models. Instead of using a single context input, this test explores the impact of using two complementary types of context simultaneously: topography and mean climatological temperature. By comparing the results of CA-INR (TP), CA-INR (MT), and the combined CA-INR (TP \(+\) MT), we aim to quantify the benefits of multi-context conditioning at different compression gain (CG) levels. Figure 5 demonstrates a significant improvement in reconstruction quality, measured by PSNR, when both context types are used together. At a CG of around 20,000, the PSNR of the dual-context model is 13.68% higher than that of the TP variant and 10.44% higher than that of the MT variant. At a CG of approximately 60,000, the improvement is even more pronounced, with gains of 14.66% over TP and 14.10% over MT. These consistent increases in PSNR indicate that richer contextual signals help the CA-INR model generate higher-quality reconstructions, especially under high compression. At a CG of approximately 20,000, the PSNR achieved with dual context approaches 40 dB, bringing the reconstructed data close to the 40–50 dB range considered suitable for downstream analysis and data assimilation. At a CG of about 60,000, the PSNR achieved with dual context nears 35 dB, the lower edge of the 35–40 dB range considered suitable for downstream visualization. It is important to acknowledge, although not considered in our analysis, that increasing the amount of context can reduce the compression gain, owing to the increase in the denominator of Eq. (7).
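To make the two quantities used above concrete, the following sketch computes a PSNR in dB and the relative PSNR improvement in percent. It assumes the common definition in which the peak is the value range of the reference field; the paper's own PSNR equation is not reproduced in this section, so this choice, the function names, and the toy numbers are assumptions for illustration only.

```python
import numpy as np

def psnr_db(truth, recon):
    """PSNR in dB, with the range of the reference field as the peak value.

    Using max - min of the reference as the peak is an assumption; other
    conventions (for example, a fixed peak) shift every PSNR by a constant.
    """
    truth = np.asarray(truth, dtype=float)
    recon = np.asarray(recon, dtype=float)
    mse = np.mean((truth - recon) ** 2)
    peak = truth.max() - truth.min()
    return float(10.0 * np.log10(peak ** 2 / mse))

def relative_gain_percent(psnr_single, psnr_dual):
    """Relative PSNR improvement of the dual-context model, in percent."""
    return 100.0 * (psnr_dual - psnr_single) / psnr_single

# Toy example: range 10, mean squared error 1, hence PSNR = 20 dB.
toy_truth = np.array([0.0, 10.0])
toy_recon = np.array([1.0, 9.0])
toy_psnr = psnr_db(toy_truth, toy_recon)

# Hypothetical PSNR values (not taken from the paper) to show the percentage.
toy_gain = relative_gain_percent(35.0, 38.5)
print(f"PSNR = {toy_psnr:.2f} dB, relative gain = {toy_gain:.2f}%")
```

Because the percentages are ratios of dB values, the same absolute gain in dB corresponds to a larger percentage when the single-context PSNR is lower.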

Reconstruction accuracy over 12 months for four model variants under two compression gains: (a) CG 20,000 and (b) CG 60,000. In each panel, the top row shows monthly MAE for INR, CA-INR (TP), CA-INR (MT), and CA-INR (TP \(+\) MT) and the bottom row shows spatial absolute error for the second month in the dataset.

Case 2: 12-month ST data

In this section, we extend the temporal coverage from 6 months to 12 months to investigate how a longer time span affects compression efficiency and reconstruction error. The goal of this case is to examine whether using more temporal data increases the model complexity required to maintain PSNR levels similar to those of the 6-month case. We follow a setup consistent with Case 1, training the INR model and the proposed CA-INR variants, CA-INR (TP), CA-INR (MT), and CA-INR (TP + MT), with the same step-decay learning rate strategy. To ensure comparability, we evaluate model performance under two compression gain targets: approximately 20,000 and 60,000. For the CG of 20,000, we use an architecture with 10 hidden layers and 10 neurons per layer. For the CG of 60,000, we employ a deeper but narrower architecture with 14 hidden layers and 5 neurons per layer. This setup allows us to directly assess how the models scale with data volume and whether additional context continues to provide benefits under increased temporal complexity. The results are analyzed using PSNR and compression gain, providing insight into the trade-offs between model size, temporal span, and reconstruction accuracy.
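The size of the two architectures can be estimated by counting the weights and biases of a fully connected network. The sketch below assumes three coordinate inputs (two spatial and one temporal), one extra input per context variable, a scalar output, and a bias on every layer; these choices and the helper `mlp_param_count` are assumptions made for illustration, not details confirmed by the text.

```python
def mlp_param_count(n_inputs, n_hidden_layers, width, n_outputs=1):
    """Number of weights and biases in a fully connected MLP."""
    sizes = [n_inputs] + [width] * n_hidden_layers + [n_outputs]
    return sum(fan_in * fan_out + fan_out
               for fan_in, fan_out in zip(sizes[:-1], sizes[1:]))

N_COORDS = 3  # assumed: two spatial coordinates and time

# (hidden layers, neurons per layer) for the two compression gain targets.
architectures = {"CG ~20,000": (10, 10), "CG ~60,000": (14, 5)}

counts = {}
for label, (depth, width) in architectures.items():
    for n_context in (0, 1, 2):  # INR, single context, TP + MT
        n_params = mlp_param_count(N_COORDS + n_context, depth, width)
        counts[(label, n_context)] = n_params
        print(f"{label}, {n_context} context input(s): {n_params} parameters")
```

Under these assumptions, each additional context input adds only one row of first-layer weights (10 or 5 parameters), so the network itself grows very little when context is added.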

In Fig. 6, the reconstruction performance of four model variants is compared over a 12-month period under two compression gain settings. The top row in each panel reports the monthly mean absolute error, while the bottom row visualizes the spatial distribution of absolute error for the second month in the dataset. In Panel (a), CA-INR (TP + MT) consistently delivers the best performance, achieving the lowest MAE across all months, with values ranging from 2.08 (Month 12) to 2.49 (Months 7 and 8). In contrast, the standard INR method shows the worst performance, with MAE values between 8.56 (Month 2) and 9.78 (Month 10). Among the two single-branch CA-INR variants, CA-INR (MT) performs slightly better than CA-INR (TP) in most months. The spatial error maps for the second month in the dataset clearly show that CA-INR (TP + MT) leads to significantly lower and more uniformly distributed errors compared to the other methods. In Panel (b), when compression is increased, the overall error rises, but CA-INR (TP + MT) still maintains the best accuracy. It achieves its lowest MAE of 5.70 in Month 1 and a peak of 6.88 in Month 7. On the other hand, the INR method again performs the worst, with errors ranging from 11.8 (Month 12) to 14.87 (Month 1). Notably, CA-INR (TP + MT) outperforms both CA-INR (TP) and CA-INR (MT) throughout all months. The error maps for the second month in the dataset show a visible reduction in spatial error for CA-INR (TP + MT), confirming its robustness even under higher compression.

Table 1 summarizes the performance of the different compression models over both 6-month and 12-month time spans, evaluated under two compression gain targets: approximately 20,000 and 60,000. Across all cases, the proposed CA-INR (TP + MT) consistently achieves the best reconstruction quality, measured by PSNR, while maintaining competitive or even lower compression gains compared to other models. For the 6-month scenario, CA-INR (TP + MT) reaches a PSNR of 39.15 dB at CG \(\approx\) 20,000 and 34.80 dB at CG \(\approx\) 60,000, clearly outperforming the standard INR model, which yields 32.48 dB and 25.99 dB, respectively. A similar improvement is observed for the 12-month case, where CA-INR (TP + MT) attains PSNR values of 41.10 dB and 33.24 dB, compared to 29.64 dB and 26.79 dB achieved by INR. This improvement highlights the effectiveness of incorporating both topography (TP) and mean climatological temperature (MT) as context in the CA-INR framework. Notably, while extending the time span from 6 to 12 months increases the complexity of the data to be represented, CA-INR (TP + MT) remains robust and continues to deliver high-fidelity reconstructions at high compression gains. These results support the use of CA-INR for spatiotemporal data compression, particularly in settings that require both high accuracy and aggressive compression.
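The PSNR values quoted above can be turned into gains over the standard INR with a few lines of arithmetic. The sketch below uses only the numbers stated in the text; the conversion of a dB gain into an RMSE ratio additionally assumes that PSNR is defined as \(20\log_{10}(\text{peak}/\text{RMSE})\) with the same peak for both models, which is an assumption about the metric rather than something stated in this section.

```python
# PSNR values (dB) quoted in the text for INR and CA-INR (TP + MT).
psnr_db_table = {
    ("6-month", 20000): {"INR": 32.48, "CA-INR (TP + MT)": 39.15},
    ("6-month", 60000): {"INR": 25.99, "CA-INR (TP + MT)": 34.80},
    ("12-month", 20000): {"INR": 29.64, "CA-INR (TP + MT)": 41.10},
    ("12-month", 60000): {"INR": 26.79, "CA-INR (TP + MT)": 33.24},
}

gains = {}
for (span, cg), values in psnr_db_table.items():
    gain_db = values["CA-INR (TP + MT)"] - values["INR"]
    # Assumed PSNR definition: a gain of g dB scales the RMSE by 10**(-g / 20).
    rmse_ratio = 10.0 ** (-gain_db / 20.0)
    gains[(span, cg)] = (gain_db, rmse_ratio)
    print(f"{span}, CG ~{cg}: +{gain_db:.2f} dB, RMSE ratio {rmse_ratio:.2f}")
```

The largest gains are 11.46 dB (12-month, CG \(\approx\) 20,000) and 8.81 dB (6-month, CG \(\approx\) 60,000). Under the assumed definition, an 11.46 dB gain corresponds to an RMSE of roughly 27% of the INR value.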

Conclusion

In this study, we proposed a context-aware implicit neural representation (CA-INR) framework for compressing and representing Earth system model data, focusing on the Energy Exascale Earth System Model (E3SM) surface temperature fields. Our goal was to overfit the network and achieve high compression gains (CG) while maintaining low reconstruction errors. To this end, we introduced two forms of physically related contextual information, namely topography (TP) and the mean climatological temperature (MT), to be encoded as inputs along with the spatiotemporal coordinates in the CA-INR compression algorithm. We evaluated their individual and combined impacts, i.e., CA-INR (TP), CA-INR (MT), and CA-INR (TP + MT), on compression gain and reconstruction error. We conducted experiments on 6- and 12-month subsets of E3SM’s high-resolution surface temperature data. Compared to vanilla INR, incorporating TP improved PSNR by up to 5.20 dB (at \(\approx\) 20,000 CG) and 4.36 dB (at \(\approx\) 60,000 CG), while incorporating MT improved PSNR by up to 6.40 dB (at \(\approx\) 20,000 CG) and 4.51 dB (at \(\approx\) 60,000 CG). These results show that adding contextual information, such as TP or MT, reduces reconstruction error and improves PSNR compared to INR. The gains in PSNR are larger when the added context is more closely related to the target variable, as MT is to surface temperature in this case. Furthermore, combining TP and MT leads to additional improvements, yielding up to 11.46 dB (at \(\approx\) 20,000 CG) and 8.81 dB (at \(\approx\) 60,000 CG) higher PSNR compared to vanilla INR. As the number of context sources increases, the PSNR continues to improve, indicating that including multiple sources of contextual information enhances model performance for surface temperature reconstruction. This increase in PSNR is substantial, bringing the reconstructed data quality to a level suitable for downstream climate analysis.

We also investigated how the quality of contextual information, such as topography, affects the reconstruction accuracy of the CA-INR (TP) model. On average, using lower-quality context leads to a slight increase in the mean absolute error. Nevertheless, even with the weakest context quality, the model maintains most predictions within a small confidence interval, demonstrating strong robustness in reconstruction performance. Finally, we showed that CA-INR maintains its superior performance as the temporal domain (e.g., the number of months) expands, demonstrating its robustness in long-term data reconstruction.

Looking ahead, we envision three key directions to expand this work. First, the framework can be extended beyond single-modality compression, such as surface temperature fields, to multi-modality representation scenarios36,37. This requires careful consideration of the type and number of contextual features that effectively compress diverse climate variables and of how these features influence reconstruction fidelity and compression efficiency. Second, alternative network architectures can be explored within CA-INR. For example, Kolmogorov-Arnold Networks38,39, whose functional-decomposition structure can yield better generalization than multilayer perceptrons, could be employed in both data-driven and physics-informed modes. Third, CA-INR reconstructions should be examined in downstream climate-analysis tasks. By feeding the reconstructed fields into workflows such as uncertainty quantification40,41,42, statistical downscaling43,44, or extreme-event assessment, we can track how reconstruction errors, especially those tied to fine-scale or rare phenomena, propagate through the pipeline. This assessment will clarify the practical reliability and limitations of the CA-INR compression scheme.

Data availability

The temperature data are available at https://esgf-node.llnl.gov/projects/e3sm/ and the topography data are available at https://www.temis.nl/data/gmted2010/index.php.

Code availability

The code is available at https://github.com/sfaroughi3/Pub_CA_INR.

References

Flato, G. M. Earth system models: An overview. Wiley Interdiscip. Rev. Clim. Change 2(6), 783–800 (2011).

Wagener, T. & Pianosi, F. What has global sensitivity analysis ever done for us? A systematic review to support scientific advancement and to inform policy-making in earth system modelling. Earth Sci. Rev. 194, 1–18 (2019).

Bonan, G. B. & Doney, S. C. Climate, ecosystems, and planetary futures: The challenge to predict life in earth system models. Science 359(6375), eaam8328 (2018).

Hammerling, D. M., Baker, A. H., Pinard, A. & Lindstrom, P. A collaborative effort to improve lossy compression methods for climate data. In IEEE/ACM 5th International Workshop on Data Analysis and Reduction for Big Scientific Data (DRBSD-5) Vol. 2019, 16–22 (IEEE, 2019).

Passarella, L. S., Mahajan, S., Pal, A. & Norman, M. R. Reconstructing high resolution esm data through a novel fast super resolution convolutional neural network (fsrcnn). Geophys. Res. Lett. 49(4), e2021GL097571 (2022).

Hübbe, N., Wegener, A., Kunkel, J. M., Ling, Y., & Ludwig, T. Evaluating lossy compression on climate data. In Supercomputing: 28th International Supercomputing Conference, ISC 2013, Leipzig, Germany, June 16–20, 2013. Proceedings 28 343–356 (Springer, 2013).

Underwood, R., Bessac, J., Di, S. & Cappello, F. Understanding the effects of modern compressors on the community earth science model. In IEEE/ACM 8th International Workshop on Data Analysis and Reduction for Big Scientific Data (DRBSD) Vol. 2022, 1–10 (IEEE, 2022).

Kunkel, J., Jumah, N., Novikova, A., Ludwig, T., Yashiro, H., Maruyama, N., Wahib, M., & Thuburn, J. Aimes: Advanced computation and i/o methods for earth-system simulations. In Software for Exascale Computing-SPPEXA 2016–2019 61–102 (Springer International Publishing, 2020).

Huang, X. et al. Czip: A fast lossless compression algorithm for climate data. Int. J. Parallel Prog. 44, 1248–1267 (2016).

Kuhn, J. P. & Lüttgau, J. Domain-specific compression using auto-encoders for climate data (2022).

Hübbe, N. & Kunkel, J. Reducing the hpc-datastorage footprint with mafisc-multidimensional adaptive filtering improved scientific data compression. Comput. Sci. Res. Dev. 28, 231–239 (2013).

Baker, A. H., Xu, H., Dennis, J. M., Levy, M. N., Nychka, D., Mickelson, S. A., Edwards, J., Vertenstein, M. & Wegener, A. A methodology for evaluating the impact of data compression on climate simulation data. In Proceedings of the 23rd International Symposium on High-Performance Parallel and Distributed Computing, 2014 203–214.

Liu, S. et al. In IEEE International Symposium on Parallel and Distributed Processing with Applications Vol. 2014, 68–77 (IEEE, 2014).

Yu, Z. et al. Lossy compression of earth system model data based on a hierarchical tensor with adaptive-hgfdr (v1.0). Geosci. Model Dev. 14(2), 875–887 (2021).

Liu, Y. et al. Optimizing error-bounded lossy compression for scientific data with diverse constraints. IEEE Trans. Parallel Distrib. Syst. 33(12), 4440–4457 (2022).

Liu, J., Di, S., Zhao, K., Jin, S., Tao, D., Liang, X., Chen, Z. & Cappello, F. Exploring autoencoder-based error-bounded compression for scientific data. In 2021 IEEE International Conference on Cluster Computing (CLUSTER) 294–306 (IEEE, 2021).

Le, H., Santos, H. & Tao, J. Hierarchical autoencoder-based lossy compression for large-scale high-resolution scientific data. arXiv preprint arXiv:2307.04216 (2023).

Dupont, E., Goliński, A., Alizadeh, M., Teh, Y. W. & Doucet, A. Coin: Compression with implicit neural representations. arXiv preprint arXiv:2103.03123 (2021).

Dupont, E., Loya, H., Alizadeh, M., Goliński, A., Teh, Y. W. & Doucet, A. Coin++: Neural compression across modalities. arXiv preprint arXiv:2201.12904 (2022).

Lee, J., Cho, S. & Beack, S.-K. Context-adaptive entropy model for end-to-end optimized image compression. arXiv preprint arXiv:1809.10452 (2018).

Ballé, J., Minnen, D., Singh, S., Hwang, S. J. & Johnston, N. Variational image compression with a scale hyperprior. arXiv preprint arXiv:1802.01436 (2018).

Cheng, Z., Sun, H., Takeuchi, M. & Katto, J. Learned image compression with discretized Gaussian mixture likelihoods and attention modules. In Proceedings of the IEEE/CVF Conference on Computer Vision and Pattern Recognition, 2020 7939–7948.

Sitzmann, V., Martel, J., Bergman, A., Lindell, D. & Wetzstein, G. Implicit neural representations with periodic activation functions. Adv. Neural. Inf. Process. Syst. 33, 7462–7473 (2020).

D. E3SM Project. Energy exascale earth system model v1.0. [Computer Software]. https://doi.org/10.11578/E3SM/dc.20180418.36 (2018).

Oyama, N., Ishizaki, N. N., Koide, S. & Yoshida, H. Deep generative model super-resolves spatially correlated multiregional climate data. Sci. Rep. 13(1), 5992 (2023).

Körner, C. & Paulsen, J. A world-wide study of high altitude treeline temperatures. J. Biogeogr. 31(5), 713–732 (2004).

Caldwell, P. M. et al. The doe e3sm coupled model version 1: Description and results at high resolution. J. Adv. Model. Earth Syst. 11(12), 4095–4146 (2019).

Golaz, J.-C. et al. The doe e3sm coupled model version 1: Overview and evaluation at standard resolution. J. Adv. Model. Earth Syst. 11(7), 2089–2129 (2019).

Jais, I. K. M., Ismail, A. R. & Nisa, S. Q. Adam optimization algorithm for wide and deep neural network. Knowl. Eng. Data Sci. 2(1), 41–46. https://doi.org/10.17977/um018v2i12019p41-46 (2019).

Pawar, N. M., Soltanmohammadi, R., Faroughi, S. & Faroughi, S. A. Geo-guided deep learning for spatial downscaling of solute transport in heterogeneous porous media. Comput. Geosci. 188, 105599 (2024).

Deng, X. Enhancing image quality via style transfer for single image super-resolution. IEEE Signal Process. Lett. 25(4), 571–575 (2018).

Soltanmohammadi, R. & Faroughi, S. A. A comparative analysis of super-resolution techniques for enhancing micro-CT images of carbonate rocks. Appl. Comput. Geosci. 20, 100143. https://doi.org/10.1016/j.acags.2023.100143 (2023).

Portmann, R. W., Solomon, S. & Hegerl, G. C. Spatial and seasonal patterns in climate change, temperatures, and precipitation across the united states. Proc. Natl. Acad. Sci. 106(18), 7324–7329 (2009).

Nath, R., Luo, Y., Chen, W. & Cui, X. On the contribution of internal variability and external forcing factors to the cooling trend over the humid subtropical indo-gangetic plain in india. Sci. Rep. 8(1), 18047 (2018).

Wang, K. & Zhou, C. Regional contrasts of the warming rate over land significantly depend on the calculation methods of mean air temperature. Sci. Rep. 5(1), 12324 (2015).

Huang, L. & Hoefler, T. Compressing multidimensional weather and climate data into neural networks. arXiv preprint arXiv:2210.12538 (2022).

Ioannides, G., Chadha, A. & Elkins, A. Gaussian adaptive attention is all you need: Robust contextual representations across multiple modalities. In CoRR (2024).

Mostajeran, F. & Faroughi, S. A. Epi-ckans: Elasto-plasticity informed Kolmogorov-Arnold networks using Chebyshev polynomials. arXiv preprint arXiv:2410.10897 (2024).

Mostajeran, F. & Faroughi, S. A. Scaled-cpikans: Spatial variable and residual scaling in Chebyshev-based physics-informed Kolmogorov-Arnold networks. J. Comput. Phys. 537, 114116. https://doi.org/10.1016/j.jcp.2025.114116 (2025).

Mahjour, S. K. & Faroughi, S. Uncertainty quantification and spatiotemporal downscaling in earth system models. In AGU Fall Meeting Abstracts Vol. 2022, A52F–01 (2022).

Mahjour, S. K., Liguori, G. & Faroughi, S. A. Selection of representative general circulation models under climatic uncertainty for western North America. J. Water Clim. Change 15(2), 686–702 (2024).

Mahjour, S. K., Tiefenbacher, J. P. & Faroughi, S. A. Select representative general circulation model-runs using enveloped-based technique. J. Clim. 38(7), 1627–1650 (2025).

Pawar, N. M., Soltanmohammadi, R., Mahjour, S. K. & Faroughi, S. A. ESM data downscaling: A comparison of super-resolution deep learning models. Earth Sci. Inf. 17(4), 3511–3528 (2024).

Zeraatkar, E., Faroughi, S. & Tešić, J. Visir: Vision transformer single image reconstruction method for earth system models. arXiv preprint arXiv:2502.06741 (2025).

Acknowledgements

S.A.F. would like to acknowledge support from the Department of Energy’s Biological and Environmental Research (BER) program (award no. DE-SC0023044).

Author information

Contributions

F.M.: Methodology, Implementation, Investigation, Validation, Visualization, Writing – original draft; N.M.P.: Implementation, Visualization, Writing – original draft; J.M.V.: Implementation, Writing – original draft; S.A.F.: Conceptualization, Methodology, Visualization, Supervision, Writing – review & editing, Funding acquisition. All authors discussed the results and commented on the manuscript.

Ethics declarations

Competing interests

The authors declare no competing interests.

Additional information

Publisher’s note

Springer Nature remains neutral with regard to jurisdictional claims in published maps and institutional affiliations.

Rights and permissions

Open Access This article is licensed under a Creative Commons Attribution-NonCommercial-NoDerivatives 4.0 International License, which permits any non-commercial use, sharing, distribution and reproduction in any medium or format, as long as you give appropriate credit to the original author(s) and the source, provide a link to the Creative Commons licence, and indicate if you modified the licensed material. You do not have permission under this licence to share adapted material derived from this article or parts of it. The images or other third party material in this article are included in the article’s Creative Commons licence, unless indicated otherwise in a credit line to the material. If material is not included in the article’s Creative Commons licence and your intended use is not permitted by statutory regulation or exceeds the permitted use, you will need to obtain permission directly from the copyright holder. To view a copy of this licence, visit http://creativecommons.org/licenses/by-nc-nd/4.0/.

About this article

Cite this article

Mostajeran, F., Pawar, N.M., Villarreal, J.M. et al. Context-aware implicit neural representations to compress Earth systems model data. Sci Rep 15, 25932 (2025). https://doi.org/10.1038/s41598-025-11092-w

DOI: https://doi.org/10.1038/s41598-025-11092-w