Abstract

The integration of Internet of Things (IoT) technologies with deep learning has introduced powerful opportunities for advancing cross-media art and design. This paper proposes DeepFusionNet, an IoT-driven multimodal classification framework developed to process real-time visual, auditory, and motion data acquired from distributed sensor networks. Rather than generating new content, the system classifies contextual input states to activate predefined artistic modules within interactive multimedia environments. The architecture of DeepFusionNet integrates Convolutional Neural Networks (CNNs) for spatial feature extraction, as well as Gated Recurrent Units (GRUs) and Long Short-Term Memory (LSTM) layers for modeling temporal dependencies in auditory and motion data. Additionally, it features fully connected layers for multimodal feature fusion and final classification. Input data undergo comprehensive preprocessing, including normalization, imputation, noise filtering, and augmentation, to ensure consistent and high-quality multimodal representations. Extracted features from each modality are fused within the network to identify user interaction contexts that guide adaptive system responses. Unlike existing multimodal transformer-based frameworks, DeepFusionNet prioritizes low-latency and synchronized IoT processing, offering a lightweight yet robust alternative for real-time interaction. Employing deep multimodal fusion rather than simple rule-based triggers ensures contextual awareness, scalability, and resilience in interactive art installations. Experimental evaluations demonstrate that DeepFusionNet achieves high performance, with 94.2% accuracy, 92.5% sensitivity, 96.1% specificity, 93.8% F1-score, 95.0% precision, MCC of 0.846, and an AUC of 0.96. Furthermore, the model achieves a 15% reduction in latency compared to baseline frameworks. DeepFusionNet thus offers a scalable and real-time infrastructure for user-aware, IoT-enhanced cross-media art applications.

Introduction

In recent years, the rapid convergence of the Internet of Things (IoT) and deep learning has reshaped numerous sectors, including healthcare, manufacturing, and creative industries. IoT systems, comprising interconnected smart devices capable of sensing, analyzing, and sharing data, enable the real-time acquisition of diverse inputs from both users and the environment1,2. When combined with deep learning, particularly neural networks, these systems become capable of processing large-scale multimodal data and making context-aware, autonomous decisions3. Deep learning models, particularly convolutional and recurrent neural networks, have demonstrated high effectiveness in applications such as image recognition, speech analysis, and autonomous systems4. This integration has led to the development of intelligent, adaptive systems capable of dynamic responses based on real-time inputs4. In the realm of art and design, the application of IoT and deep learning is gaining momentum. These technologies enable artists to create interactive installations that respond to environmental stimuli and engage with the audience5. Unlike traditional static forms, IoT-enhanced artworks can incorporate visual, auditory, and motion data to produce responsive and adaptive experiences. This has enabled the emergence of kinetic, algorithmic, biosensor-based, and participatory art forms, where the boundary between viewer and artwork becomes increasingly fluid6,7. The structured fusion of multiple media types allows for more coherent and context-aware artistic outputs. As a result, the integration of IoT and deep learning is redefining creative expression by supporting systems that are not only multimedia-rich but also contextually dynamic and personalized.

Machine learning, and more specifically deep learning, has achieved notable advancements across a wide range of domains in recent years. Deep learning models often outperform traditional machine learning algorithms and, in some tasks, approach or even surpass human-level accuracy. Convolutional Neural Networks (CNNs) are widely used for end-to-end pattern recognition, particularly in visual data analysis8,9,10. At the same time, Recurrent Neural Networks (RNNs), including Long Short-Term Memory (LSTM) and Gated Recurrent Unit (GRU) models, are well-suited for processing time-dependent data, such as audio and textual inputs11,12,13. These advancements have been driven by the development of deeper and more efficient architectures, increased computational power, and access to large-scale annotated datasets. In practice, deep learning has demonstrated substantial value in real-world applications, including medical diagnostics, autonomous systems, and natural language processing. Its adaptability and capacity to learn complex representations have enabled breakthroughs in areas previously considered too difficult for automation14,15. Within the creative sector, deep learning is increasingly being explored for enhancing interactive and data-driven art. Technologies such as Generative Adversarial Networks (GANs) have contributed to the development of generative art by synthesizing realistic media content16,17. When integrated with IoT, these systems gain the ability to respond dynamically to environmental conditions, user behavior, or physiological signals. This has given rise to novel forms of cross-media art, including kinetic, interactive, and sensor-based works, that evolve in real-time based on multimodal input, thereby expanding the expressive boundaries of digital creativity.

The primary objective of this investigation is to develop a system capable of interpreting and responding to real-time multimodal inputs, including visual, auditory, and motion data, collected through IoT-based sensor networks. Rather than autonomously generating new artistic content, the proposed framework classifies contextual input states to activate predefined artistic modules across various media formats. This classification-based interaction creates structured, reactive experiences within cross-media environments. To achieve this, the paper presents DeepFusionNet, a hybrid deep learning architecture that integrates Convolutional Neural Networks (CNNs) for spatial feature extraction, Long Short-Term Memory (LSTM) and Gated Recurrent Unit (GRU) layers for modeling temporal dynamics, and fully connected layers for multimodal feature fusion and classification. Multimodal data is collected using distributed IoT devices such as cameras, microphones, and motion sensors. The system processes these inputs to detect contextual user states, which in turn determine the selection of predefined multimedia responses. The framework focuses on achieving accurate real-time classification to support technically robust, context-sensitive control in cross-media art installations. Experimental evaluation includes performance metrics such as classification accuracy, latency, sensitivity, and precision, confirming the system’s ability to respond reliably under real-time conditions. Distinct from existing multimodal fusion frameworks such as Multimodal Transformers, e.g., Vision-and-Language BERT (ViLBERT), MultiModal Transformer (MMT), or attention-based models such as the Multimodal Transfer Module (MMTM) and the Memory Fusion Network (MFN), DeepFusionNet emphasizes lightweight integration and synchronized IoT data handling to minimize latency and computational overhead. 
Its classification-driven reactive design, as opposed to generative or rule-based approaches, ensures scalability, reproducibility, and contextual robustness, making it particularly well-suited for interactive art environments. While this work does not experimentally evaluate interactivity, adaptability, or user satisfaction, it lays the technical foundation for such studies in future work.

The main innovations of the paper are the following:

- Firstly, a novel IoT-based approach for collecting real-time visual, auditory, and motion data from users and environments using sensor-equipped devices.

- Secondly, the introduction of DeepFusionNet, a hybrid model combining CNNs, LSTM/GRU units, and fully connected layers for effective multimodal classification.

- Thirdly, a unified framework for fusing heterogeneous data streams and classifying input contexts to trigger predefined artistic responses is proposed.

- Finally, the system supports adaptive, personalized interactions by continuously interpreting multimodal input, enhancing user engagement in cross-media installations.

The remainder of the paper is organized as follows. Section 2 (Related Work) reviews existing research in IoT, deep learning, and cross-media art, discussing challenges and advancements in integrating multimodal data. Section 3 (Proposed Methodology) describes the IoT-enhanced framework based on DeepFusionNet, outlining data collection, feature extraction, data fusion, and the generation of adaptive art forms. Section 4 (Experimental Analysis) details the datasets, IoT device configuration, DeepFusionNet model, and evaluation metrics, comparing the proposed framework’s performance with baseline models. Finally, Sect. 6 (Conclusion and Future Work) summarizes the paper’s contributions and explores future research directions for improving and extending the framework.

Related work

The integration of Internet of Things (IoT) technologies with deep learning (DL) has become increasingly prominent in various domains, including healthcare, manufacturing, and creative industries such as art and design. Within the realm of innovative applications, IoT enables the capture of real-time data, including visual, auditory, and motion-based information, through interconnected sensors and devices. At the same time, deep learning offers powerful tools for extracting meaningful patterns from these complex datasets. The intersection of these technologies has laid the groundwork for interactive, adaptive, and user-centered artistic systems. However, several challenges remain, particularly concerning the effective fusion of heterogeneous data modalities in real-time contexts.

Previous research has explored the potential of IoT in interactive art. For instance, one study18 proposed a framework that uses IoT devices to collect visual and motion data for art installations. Although that system demonstrated the feasibility of interactive environments, it faced limitations in efficiently integrating multimodal inputs into coherent outputs. Similarly, another study19 utilized Convolutional Neural Networks (CNNs) to analyze visual and audio data streams for interactive art. While this approach advanced high-level feature extraction, it remained constrained by single-modality processing and lacked dynamic fusion strategies. A related effort20 introduced a hybrid architecture combining CNNs with Recurrent Neural Networks (RNNs) to respond to varying user inputs. However, the continuity and consistency of output deteriorated when managing simultaneous multimodal streams, indicating difficulties in handling real-time fusion. Another study21 focused on capturing environmental and user sensor data using IoT for adaptive installations but did not integrate deep learning techniques capable of modeling complex data dependencies. Furthermore, a further study22 investigated multiple multimodal art systems and identified several shortcomings, including rigid architectures, limited support for unstructured or spontaneous interactions, and a lack of scalability. These findings emphasize the need for a more flexible, data-driven framework that can harmonize diverse data streams and support user-responsive behaviors.

Recent advances in multimodal artificial intelligence have attempted to address similar challenges. Transformer-based architectures, such as Vision-and-Language BERT (ViLBERT) and the MultiModal Transformer (MMTransformer), have demonstrated strong capabilities in aligning and jointly reasoning over heterogeneous modalities, particularly in vision–language tasks. More recently, Wang et al.23 introduced MM-Transformer, a Transformer-based knowledge graph link prediction model that fuses multimodal features (structural, visual, and textual) using a symmetrical hybrid key–value calculation strategy. Their results demonstrated improved performance over prior state-of-the-art methods such as MKGformer, highlighting the effectiveness of transformer architectures in multimodal feature fusion. Attention-driven models, such as the Multimodal Transfer Module (MMTM) and the Memory Fusion Network (MFN), focus on selectively weighting and transferring information across modalities, thereby achieving improved performance in sequential and context-rich applications. Other methods, such as Modality-Invariant and Specific Representations (MISA) and adaptive weighting frameworks, emphasize dynamic adjustment of modality contributions to handle noisy or missing data streams. While these approaches represent state-of-the-art in multimodal learning, many are computationally heavy and not yet optimized for low-latency IoT environments. This creates a gap for frameworks that balance robust multimodal fusion with the real-time constraints of interactive art installations.

Despite progress, most existing approaches either focus on isolated modalities or employ architectures that are computationally intensive, making them unsuitable for real-time IoT-driven contexts. As a result, they often lack a unified and efficient mechanism for fusing multimodal data streams in a scalable and low-latency manner. The demand for more immersive, personalized, and adaptable creative systems is growing, particularly in cross-media environments. To address these challenges, this paper proposes DeepFusionNet, an IoT-driven deep learning framework that performs real-time multimodal classification using Convolutional Neural Networks (CNNs), Long Short-Term Memory (LSTM) units, Gated Recurrent Units (GRUs), and fully connected layers for feature fusion and decision making. Distinct from computationally heavy generative or transformer-based systems, the proposed model emphasizes lightweight multimodal fusion tailored to IoT environments, classifying interaction contexts to trigger predefined artistic responses. This design enables responsive, scalable, and user-aware cross-media installations while maintaining practical feasibility in real-time deployments.

Proposed IoT-enhanced framework for cross-media art and design

The integration of IoT technologies into cross-media art and design presents significant opportunities for dynamic interaction, user-driven personalization, and multi-sensory engagement. However, conventional systems often lack scalability, real-time adaptability, and coherent data fusion strategies, particularly when managing complex and heterogeneous input streams. To address these limitations, this paper proposes an IoT-based framework that leverages interconnected devices, real-time data processing, and a deep learning backbone to enable intelligent, responsive behavior in cross-media art installations.

Proposed framework

The IoT-enhanced framework developed in this work is designed to enable the real-time acquisition, transmission, and classification of multimodal data, specifically visual, auditory, and motion inputs, thereby supporting context-aware artistic interaction. IoT-enabled sensing devices, including RGB cameras, MEMS microphones, and inertial measurement units (IMUs) that comprise accelerometers and gyroscopes, are deployed to collect raw environmental and user-related data. These devices communicate with a cloud-based infrastructure through lightweight, low-latency protocols such as MQTT and UDP over Wi-Fi, enabling asynchronous and scalable data transfer24,25. To ensure high data fidelity, a dedicated media control layer performs real-time data synchronisation, noise filtering, redundancy elimination, and inconsistency reduction. Additionally, the framework incorporates a data privacy and integrity module that manages encryption, anonymisation, and secure storage operations, making it suitable for high-throughput and privacy-sensitive deployments in artistic environments. At the core of the processing pipeline lies the DeepFusionNet architecture, which employs Convolutional Neural Networks (CNNs) for spatial feature extraction, Long Short-Term Memory (LSTM) and Gated Recurrent Unit (GRU) layers for modeling temporal dependencies in motion and audio streams, and fully connected layers for multimodal fusion and classification26,27. The model does not generate artistic content; instead, it performs classification of input contexts to trigger predefined artistic responses in real-time, interactive installations. To optimise system performance, DeepFusionNet is trained using hyperparameter tuning and evaluated on various performance metrics, including accuracy, sensitivity, F1-score, and Matthews Correlation Coefficient (MCC)28. These metrics confirm the model’s reliability in accurately interpreting and responding to dynamic, real-world multimodal data. 
The complete pipeline, encompassing IoT-based data acquisition, secure cloud-based processing, DeepFusionNet integration, and performance evaluation, is illustrated in Fig. 1.

To further support real-time responsiveness, the sensing devices shown in Fig. 1 were configured with modality-specific parameters. The RGB cameras captured video at 30 frames per second (fps) with a 1280 × 720 resolution, while the MEMS microphones recorded audio at a sampling rate of 44.1 kHz with a 16-bit depth. Inertial measurement units (IMUs), including accelerometers and gyroscopes, streamed motion data at 100 Hz, providing sufficient granularity for gesture and posture recognition. All modalities were synchronized using a timestamp-based alignment strategy, supported by the Network Time Protocol (NTP) to ensure consistent timing across devices. A lightweight buffering mechanism was applied to correct minor delays (less than 10 ms), enabling coherent fusion of visual, auditory, and motion streams within the DeepFusionNet pipeline.
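The timestamp-based alignment can be illustrated with a minimal sketch. It assumes each stream arrives as a sorted, non-empty list of timestamps and pairs every video frame with the nearest audio and motion samples, dropping frames whose partners fall outside a tolerance. The function names `nearest_index` and `align_streams` and the 10 ms default are illustrative assumptions, not the implementation used in this work.

```python
from bisect import bisect_left


def nearest_index(timestamps, t):
    """Index of the timestamp closest to t (timestamps sorted ascending, non-empty)."""
    pos = bisect_left(timestamps, t)
    if pos == 0:
        return 0
    if pos == len(timestamps):
        return len(timestamps) - 1
    # Pick whichever neighbour is closer to t.
    return pos if timestamps[pos] - t < t - timestamps[pos - 1] else pos - 1


def align_streams(video_ts, audio_ts, motion_ts, tolerance=0.010):
    """Pair each video frame with the nearest audio and motion samples.

    All timestamps and the tolerance share one unit (seconds by default).
    Frames without a partner inside the tolerance in both streams are dropped.
    Returns a list of (video_index, audio_index, motion_index) triples.
    """
    aligned = []
    for i, t in enumerate(video_ts):
        a = nearest_index(audio_ts, t)
        m = nearest_index(motion_ts, t)
        if abs(audio_ts[a] - t) <= tolerance and abs(motion_ts[m] - t) <= tolerance:
            aligned.append((i, a, m))
    return aligned
```

Using the video stream as the reference clock is a design choice of this sketch: it is the slowest stream (30 fps), so every fused sample corresponds to exactly one frame.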

Dataset collection

To support the development and evaluation of the proposed framework, a multimodal dataset was constructed using both real-time data collected from IoT devices and publicly available sources. High-resolution visual data, including images and short video clips, were captured using cameras and light sensors and further supplemented with samples from established datasets such as COCO and Flickr8k, which offer diverse and semantically annotated image content. For auditory input, sound recordings were collected through microphones and sound sensors, and augmented using open datasets such as UrbanSound8K and FreeSound. These sources encompass a diverse range of labeled environmental and ambient sounds, suitable for classification tasks. Motion-related data were acquired from accelerometers and gyroscopes integrated into IoT devices and enriched with samples from the Human Activity Recognition (HAR) dataset available on Kaggle.

All collected data were transferred to a centralized cloud environment where preprocessing operations, such as noise filtering, normalization, synchronization, and modality alignment, were applied. Quality control procedures ensured the consistency and reliability of the dataset, while data privacy mechanisms were incorporated to protect any sensitive information captured during real-time data acquisition. The final multimodal dataset comprises synchronized visual, auditory, and motion features aligned with interaction contexts. This dataset enables DeepFusionNet to perform effective classification of user states and environmental conditions, which are then used to trigger predefined artistic responses within the cross-media framework. To define these interaction contexts, a labeling scheme was implemented that categorized user-system states into predefined classes such as Active, Idle, Exploratory, and Engaged. Annotations were assigned through a combination of manual expert labeling and semi-automatic logging of sensor events (e.g., motion bursts, sound thresholds, or visual presence). Manual annotations were carried out by two independent reviewers to ensure reliability, with discrepancies resolved through consensus. This hybrid approach enabled consistent and reproducible mapping between multimodal signals and contextual input states, which then served as the ground-truth labels for model training and evaluation.

Let the dataset \(D\) for cross-media art and design be defined as a collection of three primary modalities: visual, auditory, and motion data.

Here, \(D_{v}\) represents the visual data, \(D_{a}\) the auditory data, and \(D_{m}\) the motion data. The visual data \(D_{v}\) consist of a set of images and video samples:

where \(H\), \(W\), and \(C\) represent the height, width, and number of color channels, respectively. The auditory data \(D_{a}\) consist of audio signals represented as sequences of discrete samples:

where \(T\) is the length of the audio sample in time steps. The motion data \(D_{m}\) are captured from sensors and represented as sequences of features:

where \(d\) is the number of motion-related features (e.g., acceleration, orientation). The full dataset \(D\) is then represented as:

where \(y_{i}\) is the label or annotation for the \(i\)-th sample and \(N\) is the total number of samples.
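The display equations for these definitions are not reproduced in this text. One notation consistent with the symbols defined above is sketched below; the per-sample names \(v_{i}\), \(a_{i}\), and \(m_{i}\), and the use of a sequence length \(T\) for the motion stream, are assumptions introduced for illustration.

\[ D = \{ D_{v},\, D_{a},\, D_{m} \} \]

\[ D_{v} = \{ v_{i} \in \mathbb{R}^{H \times W \times C} \}_{i=1}^{N}, \qquad D_{a} = \{ a_{i} \in \mathbb{R}^{T} \}_{i=1}^{N}, \qquad D_{m} = \{ m_{i} \in \mathbb{R}^{T \times d} \}_{i=1}^{N} \]

\[ D = \{ (v_{i},\, a_{i},\, m_{i},\, y_{i}) \}_{i=1}^{N} \]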

Preprocessing

Data preprocessing is an essential precursor to feature extraction, as it determines the form in which multimodal data are presented to the deep learning models. It ensures that the data used in subsequent analysis are of high quality, without missing, redundant, or inconsistent entries. Modality-specific preprocessing was implemented to optimize the quality and consistency of multimodal inputs for DeepFusionNet. In the visual stream, all images and video frames were resized to 224 × 224 pixels to align with CNN input requirements and normalized channel-wise using dataset-specific means and standard deviations29. To enhance generalization and reduce overfitting, augmentations such as random rotations (± 15°), horizontal flips, and brightness adjustments were applied. In the audio stream, raw waveforms were converted into Mel-spectrograms with 128 frequency bins and a fixed time window. Noise filtering, using a 300–3400 Hz band-pass filter, removed irrelevant frequency components. Robustness was further enhanced through the injection of Gaussian noise, random time-shifting within a 50–200 ms range, and pitch scaling. In the motion stream, accelerometer and gyroscope readings were smoothed with a moving-average filter to suppress jitter, normalized per-axis to ensure device independence, and corrected for missing values using k-nearest neighbor (k = 5) interpolation. Additional augmentation through temporal stretching and axis rotation perturbations simulated natural variations in user movements30. Collectively, these procedures ensured standardized, high-quality, and diverse multimodal representations, enabling DeepFusionNet to achieve reliable fusion and real-time classification in cross-media art applications.

For the visual modality, each pixel is normalized channel-wise by subtracting the mean and dividing by the standard deviation. This ensures that all images share a consistent scale, reducing bias from lighting variations or color imbalances:

To improve robustness, images are randomly rotated within a controlled range, which increases sample diversity and prevents overfitting to specific orientations:
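The two visual steps, channel-wise normalization and random rotation, can be sketched in plain Python as follows. Images are assumed to be nested lists of shape H × W × C; the function names, the nearest-neighbour sampling, and the zero fill for pixels rotated in from outside the frame are illustrative assumptions rather than the pipeline used in this work.

```python
import math
import random
from statistics import fmean, pstdev


def channel_stats(images):
    """Per-channel mean and standard deviation over a list of H x W x C images."""
    n_channels = len(images[0][0][0])
    means, stds = [], []
    for c in range(n_channels):
        values = [px[c] for img in images for row in img for px in row]
        means.append(fmean(values))
        stds.append(pstdev(values))
    return means, stds


def normalize(image, means, stds, eps=1e-8):
    """Subtract the channel mean and divide by the channel standard deviation."""
    return [[[(px[c] - means[c]) / (stds[c] + eps) for c in range(len(px))]
             for px in row] for row in image]


def rotate(image, angle_deg, fill=0.0):
    """Rotate about the image centre using nearest-neighbour sampling."""
    h, w, n_channels = len(image), len(image[0]), len(image[0][0])
    cy, cx = (h - 1) / 2, (w - 1) / 2
    cos_a = math.cos(math.radians(angle_deg))
    sin_a = math.sin(math.radians(angle_deg))
    out = []
    for y in range(h):
        row = []
        for x in range(w):
            dy, dx = y - cy, x - cx
            # Inverse mapping: locate the source pixel for each output pixel.
            sx = round(cos_a * dx + sin_a * dy + cx)
            sy = round(-sin_a * dx + cos_a * dy + cy)
            inside = 0 <= sy < h and 0 <= sx < w
            row.append(list(image[sy][sx]) if inside else [fill] * n_channels)
        out.append(row)
    return out


def random_rotation(image, rng, max_deg=15.0):
    """Rotate by an angle drawn uniformly from [-max_deg, +max_deg] degrees."""
    return rotate(image, rng.uniform(-max_deg, max_deg))
```

In practice an image library with interpolated rotation would replace `rotate`; the pure-Python version is kept only to make the geometry explicit.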

For the audio modality, raw waveforms are converted into Mel-spectrograms, which capture frequency information on a perceptually meaningful scale. This transformation provides a compact and discriminative time–frequency representation for learning:

To increase generalization, Gaussian noise is added during augmentation, simulating environmental variability and ensuring that the model is not overly sensitive to small perturbations:

For the motion modality, sensor signals often contain high-frequency jitter; therefore, smoothing is applied using a moving-average filter. This reduces transient noise while retaining the overall shape of the motion patterns:

In cases where sensor readings are missing, values are imputed using a k-nearest neighbor strategy. This method replaces missing entries with the mean of nearby patterns, maintaining temporal consistency:
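A minimal sketch of the two motion-stream operations is given below for a single sensor axis. It assumes missing readings are marked as `None` and that the "nearest neighbors" are the k observed samples closest in time; both conventions, as well as the function names and the window size, are assumptions made for illustration.

```python
def moving_average(signal, window=5):
    """Centred moving average; the window shrinks near the edges."""
    half = window // 2
    smoothed = []
    for i in range(len(signal)):
        lo, hi = max(0, i - half), min(len(signal), i + half + 1)
        smoothed.append(sum(signal[lo:hi]) / (hi - lo))
    return smoothed


def knn_impute(signal, k=5):
    """Fill None entries with the mean of the k temporally nearest observed samples."""
    observed = [(i, v) for i, v in enumerate(signal) if v is not None]
    if not observed:
        raise ValueError("cannot impute a signal with no observed samples")
    filled = list(signal)
    for i, value in enumerate(signal):
        if value is None:
            # Rank observed samples by their distance in time from the gap.
            nearest = sorted(observed, key=lambda item: abs(item[0] - i))[:k]
            filled[i] = sum(v for _, v in nearest) / len(nearest)
    return filled
```

This sketch would be applied as `moving_average(knn_impute(axis))`, imputing first so that the filter never encounters a gap; that ordering is a choice of the sketch and is not stated in the text.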

These formulations formalize the preprocessing pipeline across all modalities, ensuring that visual inputs are standardized and augmented, audio signals are transformed into robust spectrogram representations with noise tolerance, and motion data are smoothed, normalized, and imputed for reliability. Together, they provide high-quality, consistent, and diverse multimodal features that significantly enhance the effectiveness of DeepFusionNet in real-time classification tasks.

The first step is data normalization, which rescales all inputs to zero mean so that features with large ranges do not dominate31,32. For data cleaning, outliers are removed through statistical analysis, and missing values are handled with mean or k-NN imputation33,34. Furthermore, data augmentation diversifies the dataset with newly created synthetic samples, while format standardization brings the different data types into a unified format. These preprocessing steps enhance the quality of the data, supporting the extraction of suitable features and reducing the risk of a low-accuracy model.

Normalization scales features to a standardized range. For a feature vector \(\:x=[{x}_{1},{x}_{2},\dots\:,{x}_{n}]\), Min-Max scaling is defined as:

Z-score normalization standardizes data using:

Where:
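Written out in standard notation, the two normalizations and the quantities they depend on are as follows. This is a reconstruction from the textbook definitions; the primed symbol \(x'_{i}\) and the population form of the standard deviation (division by \(n\)) are assumptions.

\[ x'_{i} = \frac{x_{i} - \min(x)}{\max(x) - \min(x)} \]

\[ z_{i} = \frac{x_{i} - \mu}{\sigma}, \qquad \mu = \frac{1}{n} \sum_{i=1}^{n} x_{i}, \qquad \sigma = \sqrt{\frac{1}{n} \sum_{i=1}^{n} (x_{i} - \mu)^{2}} \]

Min-Max scaling maps the feature to \([0, 1]\), whereas Z-score normalization yields zero mean and unit variance.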

Outliers are identified using Z-scores for each data point \(\:{x}_{i}\):

Here, k is a predefined threshold (commonly k = 3). Outliers are then excluded by:

Missing data \(\:{x}_{missing}\) is imputed through:

The preprocessing includes specific parameters, such as the threshold \(\:k\:=\:3\) for outlier detection using the Z-score method, to remove extreme data points. For KNN imputation, \(\:K=5\) is used, where missing values are filled based on the average of the 5 nearest neighbors, ensuring reliable data while maintaining computational efficiency:
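The Z-score outlier rule with \(k = 3\) can be sketched as follows. The function names are illustrative, and the use of the population standard deviation is an assumption.

```python
from statistics import fmean, pstdev


def zscores(values):
    """Z-score of every value; all zeros when the data have no spread."""
    mu, sigma = fmean(values), pstdev(values)
    if sigma == 0:
        return [0.0] * len(values)
    return [(v - mu) / sigma for v in values]


def remove_outliers(values, k=3.0):
    """Keep only the values whose absolute Z-score does not exceed k."""
    return [v for v, z in zip(values, zscores(values)) if abs(z) <= k]
```

With the population standard deviation, the largest attainable absolute Z-score in a sample of size \(n\) is \(\sqrt{n-1}\), so a threshold of 3 can only reject points when at least 11 samples are available.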

Augmentation generates synthetic samples. For image data \(\:I(x,y)\):

For audio data \(\:A\left(t\right)\), noise \(\:N,\) and time shifts \(\:T\) are applied:

Here, \(\:\varDelta\:t\) is the time shift, and \(\:\eta\:\) controls noise intensity.
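The audio augmentation described here, a time shift by \(\Delta t\) followed by additive noise scaled by \(\eta\), can be sketched as below. The sketch assumes the shift is a zero-padded delay (a circular shift would be an equally plausible reading) and draws the shift from the 50–200 ms range given earlier; function names and the default \(\eta\) are illustrative.

```python
import random


def time_shift(samples, shift):
    """Delay the signal by `shift` samples, zero-padding the start."""
    if shift <= 0:
        return list(samples)
    shift = min(shift, len(samples))
    return [0.0] * shift + list(samples[:len(samples) - shift])


def add_gaussian_noise(samples, eta, rng):
    """Add zero-mean, unit-variance Gaussian noise scaled by eta."""
    return [s + eta * rng.gauss(0.0, 1.0) for s in samples]


def augment_audio(samples, sample_rate, rng, eta=0.01, shift_ms=(50, 200)):
    """Apply a random delay within shift_ms, then inject Gaussian noise."""
    shift = int(rng.uniform(*shift_ms) * sample_rate / 1000)
    return add_gaussian_noise(time_shift(samples, shift), eta, rng)
```

Passing an explicit `random.Random` instance keeps the augmentation reproducible across runs.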

Standardization unifies data dimensions. For image datasets:

For time-series data \(\:T\left(t\right)\):

Preprocessing utilizes mathematical formulations to optimize data quality, consistency, and compatibility, which significantly enhances the performance of deep learning models.

DeepFusionNet

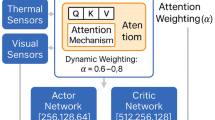

DeepFusionNet is a hybrid deep learning architecture developed to perform efficient multimodal data classification in the context of cross-media art and design. The model is designed to process heterogeneous input streams, including visual, auditory, and motion data, and extract high-level features that inform the behavior of interactive systems. The architecture begins with Convolutional Neural Networks (CNNs), which are used to extract spatial features from visual data and to transform auditory signals into spectrogram representations for further processing. Pooling layers follow the convolutional layers to reduce dimensionality while preserving essential spatial features35. These representations are then passed through flattening layers, which convert multi-dimensional feature maps into vectors suitable for dense processing36,37. To handle temporal characteristics in motion and audio data, the model incorporates Long Short-Term Memory (LSTM) and Gated Recurrent Unit (GRU) layers. These layers are responsible for learning temporal dependencies and are particularly effective in processing time-aligned multimodal sequences. The outputs of the CNN, LSTM, and GRU components are concatenated and passed through fully connected layers, which perform feature fusion and facilitate final classification. The model is optimized using advanced training techniques, including hyperparameter tuning and loss function minimization, to achieve robust generalization across multimodal datasets38,39. Unlike generative models, DeepFusionNet is specifically designed to classify interaction contexts, such as user gestures or environmental states, and trigger predefined artistic responses within cross-media installations. By combining the strengths of CNNs for spatial data, recurrent layers for temporal data, and dense layers for integration, DeepFusionNet offers a scalable and adaptable solution for real-time multimodal classification. 
Its performance in the creative domain is characterized by low classification error, high responsiveness, and applicability to diverse interactive design tasks. The full architecture of DeepFusionNet, including its multimodal input processing pipeline and component relationships, is illustrated in Fig. 2.

DeepFusionNet is a deep learning model developed for fusing multimodal data, such as vision, audio, and motion sensor data. It uses CNNs together with LSTM and GRU networks40,41. For input images, and for spectrograms in the case of auditory data, the CNN applies convolutions to extract spatial features. Let the input be represented as \(X_{visual}\) or \(X_{audio}\), where each data type is processed separately:

Where \(\:{F}_{cnn}\) is the feature map obtained from the convolution layers; these features are typically processed with activation functions \(\:{\upsigma\:}\) (e.g., ReLU) to introduce non-linearity. For handling sequential data (such as motion sequences or time-series features from auditory data), the temporal dependencies are modeled using LSTMs and GRUs. For LSTM, the input at time step \(\:t\) is \(\:{x}_{t}\), and the LSTM computes the following updates at each time step:

Where \(h_{t}\) represents the hidden state, \(i_{t}\), \(f_{t}\), and \(o_{t}\) are the input, forget, and output gates, respectively, and \(c_{t}\) is the cell state. For GRU, the gates are simpler and are given by:
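For reference, the LSTM updates in their standard form are written out below. The weight matrices \(W\), \(U\), the biases \(b\), and the candidate state \(\tilde{c}_{t}\) are notation assumed here; \(\sigma\) is the logistic sigmoid and \(\odot\) denotes element-wise multiplication.

\[ i_{t} = \sigma(W_{i} x_{t} + U_{i} h_{t-1} + b_{i}), \qquad f_{t} = \sigma(W_{f} x_{t} + U_{f} h_{t-1} + b_{f}), \qquad o_{t} = \sigma(W_{o} x_{t} + U_{o} h_{t-1} + b_{o}) \]

\[ \tilde{c}_{t} = \tanh(W_{c} x_{t} + U_{c} h_{t-1} + b_{c}), \qquad c_{t} = f_{t} \odot c_{t-1} + i_{t} \odot \tilde{c}_{t}, \qquad h_{t} = o_{t} \odot \tanh(c_{t}) \]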

Where \(r_{t}\) and \(z_{t}\) are the reset and update gates, respectively.
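The standard GRU formulation, written with the same assumed notation (\(W\), \(U\), \(b\), candidate state \(\tilde{h}_{t}\)), is sketched below. Conventions differ on whether \(z_{t}\) or \(1 - z_{t}\) weights the previous hidden state; the form shown is one common choice and may not match the convention used in this work.

\[ z_{t} = \sigma(W_{z} x_{t} + U_{z} h_{t-1} + b_{z}), \qquad r_{t} = \sigma(W_{r} x_{t} + U_{r} h_{t-1} + b_{r}) \]

\[ \tilde{h}_{t} = \tanh\big(W_{h} x_{t} + U_{h} (r_{t} \odot h_{t-1}) + b_{h}\big), \qquad h_{t} = (1 - z_{t}) \odot h_{t-1} + z_{t} \odot \tilde{h}_{t} \]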

In DeepFusionNet, the model efficiently combines spatial features extracted from the CNN layers and temporal features captured by the LSTM/GRU layers. This fusion process enables the network to integrate both types of data for enhanced multimodal understanding. The fusion is performed as follows:

In the fusion process, \(\:{F}_{\text{c}\text{n}\text{n}}\) represents the spatial features extracted from CNN layers (visual data), and \(\:{F}_{\text{l}\text{s}\text{t}\text{m}/\text{g}\text{r}\text{u}}\) represents the temporal features from LSTM/GRU layers (motion/audio data). The \(\:\oplus\:\) operation denotes the concatenation of these features along the feature dimension. The concatenated features are then linearly transformed by the weight matrix \(\:{W}_{c}\), with a bias term \(\:b\) added after the transformation. The activation function \(\:\sigma\:(\cdot\:)\), typically ReLU or sigmoid, introduces non-linearity into the transformed features. This fusion method integrates both spatial and temporal information, enabling the model to perform accurate classification or decision-making for cross-media art tasks. The resulting fused features are then processed for downstream tasks, such as classification or regression.
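The fusion step described above (concatenate, apply \(W_{c}\) and \(b\), then a non-linearity) can be sketched in a few lines. Feature vectors are plain lists here, ReLU is chosen as the activation, and the function names are illustrative; a real model would use a tensor library and learned weights.

```python
def relu(vector):
    """Element-wise ReLU."""
    return [max(0.0, x) for x in vector]


def fuse(f_cnn, f_rnn, w_c, b):
    """Compute relu(W_c . concat(F_cnn, F_lstm/gru) + b).

    w_c is a list of rows, one per output unit; each row must be as long
    as the concatenated feature vector.
    """
    concat = list(f_cnn) + list(f_rnn)
    if any(len(row) != len(concat) for row in w_c) or len(w_c) != len(b):
        raise ValueError("shape mismatch between weights, bias, and features")
    pre_activation = [
        sum(w * x for w, x in zip(row, concat)) + bias
        for row, bias in zip(w_c, b)
    ]
    return relu(pre_activation)
```

Concatenation keeps every modality's features intact and leaves it to \(W_{c}\) to learn how they interact, which is what makes this form of fusion inexpensive compared with attention-based alternatives.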

The fused features are passed through fully connected layers, represented as:

The model parameters \(\:\varTheta\:\) are optimized by minimizing a loss function \(\:L\):

Using backpropagation and optimizers like Adam, the model iteratively updates \(\:\varTheta\:\):
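In its simplest gradient-descent form, the update can be written as below. Adam follows the same pattern but rescales the gradient using running estimates of its first and second moments, so this expression is a simplified sketch rather than the exact Adam rule.

\[
\varTheta_{t+1} = \varTheta_{t} - \eta \, \nabla_{\varTheta} L\left(\varTheta_{t}\right)
\]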

Where \(\:\eta\:\) is the learning rate, and \(\:t\) is the iteration step.
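To make the role of the learning rate concrete, the following sketch applies the Adam update to a single scalar parameter. It assumes the commonly used default values for the moment decay rates and a toy quadratic loss; the function name `adam_minimize` and the loss itself are hypothetical and stand in for the network's actual training objective.

```python
import math


def adam_minimize(grad_fn, theta, lr=0.01, beta1=0.9, beta2=0.999,
                  eps=1e-8, steps=2000):
    """Minimize a scalar function with Adam, given its gradient function."""
    m = 0.0  # running mean of gradients (first moment)
    v = 0.0  # running mean of squared gradients (second moment)
    for t in range(1, steps + 1):
        g = grad_fn(theta)
        m = beta1 * m + (1.0 - beta1) * g
        v = beta2 * v + (1.0 - beta2) * g * g
        m_hat = m / (1.0 - beta1 ** t)  # bias-corrected first moment
        v_hat = v / (1.0 - beta2 ** t)  # bias-corrected second moment
        theta -= lr * m_hat / (math.sqrt(v_hat) + eps)
    return theta


# Toy loss L(theta) = (theta - 3)^2 with gradient 2 * (theta - 3).
theta_star = adam_minimize(lambda th: 2.0 * (th - 3.0), theta=0.0)
print(round(theta_star, 3))
```

The learning rate of 0.01 mirrors the best-performing setting reported in the experiments; the remaining hyperparameters are assumptions.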

Performance evaluation of DeepFusionNet

DeepFusionNet is evaluated using standard objective measures for the task at hand, which in this study is primarily classification. Classification quality is measured with accuracy, precision, recall (also known as sensitivity), F1 score, and specificity. This assessment evaluates the model’s capacity to classify multimodal input (visual, auditory, and motion data) and elicit predefined artistic responses. Accuracy is the percentage of instances for which the model makes correct predictions [42, 43]. Precision is the proportion of predicted positives that are truly positive, and recall is the proportion of actual positives that the model detects. The F1 score is the harmonic mean of precision and recall, which is particularly informative when working with imbalanced data. Specificity quantifies the proportion of negative cases that are correctly classified. Moreover, the Receiver Operating Characteristic (ROC) curve and the Area Under the Curve (AUC-ROC) characterize the trade-off between sensitivity and specificity across different decision thresholds [44]. In binary classification problems, the Matthews Correlation Coefficient (MCC) is also employed because it considers all four entries of the confusion matrix, thereby providing a balanced performance measure, especially in imbalanced settings.
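In terms of the confusion-matrix counts, these metrics have the following standard definitions:

\[
\begin{aligned}
\text{Accuracy} &= \frac{T^{+} + T^{-}}{T^{+} + T^{-} + F^{+} + F^{-}} \\
\text{Precision} &= \frac{T^{+}}{T^{+} + F^{+}} \\
\text{Recall (Sensitivity)} &= \frac{T^{+}}{T^{+} + F^{-}} \\
\text{Specificity} &= \frac{T^{-}}{T^{-} + F^{+}} \\
\text{F1} &= \frac{2 \cdot \text{Precision} \cdot \text{Recall}}{\text{Precision} + \text{Recall}} \\
\text{MCC} &= \frac{T^{+} T^{-} - F^{+} F^{-}}{\sqrt{\left(T^{+} + F^{+}\right)\left(T^{+} + F^{-}\right)\left(T^{-} + F^{+}\right)\left(T^{-} + F^{-}\right)}}
\end{aligned}
\]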

Where \(\:{T}^{+}\) denotes true positives, \(\:{F}^{+}\) false positives, \(\:{T}^{-}\) true negatives, and \(\:{F}^{-}\) false negatives.
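As an illustration of how these quantities follow from the four confusion-matrix counts, the sketch below computes them in plain Python. The function names and the example counts are hypothetical and are not taken from the reported experiments.

```python
import math


def safe_div(numerator, denominator):
    """Return numerator / denominator, or 0.0 when the denominator is zero."""
    return numerator / denominator if denominator else 0.0


def binary_metrics(tp, fp, tn, fn):
    """Compute standard binary classification metrics from confusion counts."""
    precision = safe_div(tp, tp + fp)
    recall = safe_div(tp, tp + fn)  # also called sensitivity
    mcc_denominator = math.sqrt((tp + fp) * (tp + fn) * (tn + fp) * (tn + fn))
    return {
        "accuracy": safe_div(tp + tn, tp + fp + tn + fn),
        "precision": precision,
        "recall": recall,
        "specificity": safe_div(tn, tn + fp),
        "f1": safe_div(2 * precision * recall, precision + recall),
        "mcc": safe_div(tp * tn - fp * fn, mcc_denominator),
    }


# Hypothetical counts for illustration only.
metrics = binary_metrics(tp=90, fp=10, tn=80, fn=20)
for name, value in metrics.items():
    print(f"{name}: {value:.3f}")
```

Unlike accuracy, the MCC uses all four counts symmetrically, which is why it remains informative when the Active and Idle classes are unevenly represented.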

In addition to the classification metrics, regression-style error measures are reported: the Mean Absolute Error (MAE), Mean Squared Error (MSE), and Root Mean Squared Error (RMSE). We also evaluate the computational efficiency of DeepFusionNet, focusing on training time, inference time, and memory consumption. These measurements are essential for understanding how the model behaves in deployment, especially in real-time settings where latency and memory are significant concerns. To determine its comparative efficiency, DeepFusionNet is contrasted with baseline models on multimodal data. These comparisons are intended to show how well DeepFusionNet handles multimodal classification problems, particularly in scenarios where multiple data streams must be processed in real time. Although the classification metrics assess how well the model classifies input states (i.e., states that elicit predefined artistic responses), artistic merit is not evaluated in this research. The emphasis is on the reliability with which the system elicits the correct artistic responses for the categorical states of the inputs. The aesthetic quality of the output, including graphic and sound stimuli, will be assessed in subsequent research through surveys or expert evaluation to provide a holistic review of the model’s performance in interactive art installations.

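Mean Absolute Error (MAE):

\[
\text{MAE} = \frac{1}{n}\sum_{i=1}^{n}\left| y_{i} - \hat{y}_{i} \right|
\]

Root Mean Squared Error (RMSE):

\[
\text{RMSE} = \sqrt{\frac{1}{n}\sum_{i=1}^{n}\left( y_{i} - \hat{y}_{i} \right)^{2}}
\]

Where \(y_{i}\) is the target value, \(\hat{y}_{i}\) is the predicted value, and \(n\) is the number of samples; the MSE is the quantity under the square root.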

These metrics quantify how far the model’s predicted outputs deviate from the target values and complement the classification metrics used to assess DeepFusionNet.
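A short sketch of how MAE, MSE, and RMSE are computed from paired targets and predictions is given below. The function name `regression_errors` and the sample values are hypothetical.

```python
import math


def regression_errors(y_true, y_pred):
    """Return (MAE, MSE, RMSE) for two equal-length sequences of numbers."""
    if not y_true or len(y_true) != len(y_pred):
        raise ValueError("inputs must be non-empty and of equal length")
    residuals = [t - p for t, p in zip(y_true, y_pred)]
    n = len(residuals)
    mae = sum(abs(r) for r in residuals) / n
    mse = sum(r * r for r in residuals) / n
    return mae, mse, math.sqrt(mse)


# Hypothetical targets and predictions for illustration only.
y_true = [1.0, 0.0, 1.0, 1.0]
y_pred = [0.5, 0.5, 1.0, 0.0]

mae, mse, rmse = regression_errors(y_true, y_pred)
print(f"MAE={mae:.3f}  MSE={mse:.3f}  RMSE={rmse:.3f}")
```

Because RMSE weights large residuals more heavily, it is never smaller than MAE for the same set of predictions, and RMSE always equals the square root of MSE.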

Experimental results and analysis

This section presents the experimental results and evaluates the performance of DeepFusionNet in multimodal data fusion. For the cross-media experiments, the model was tested on a combination of visual, auditory, and motion data to examine its ability to handle general multimodal inputs and cross-media tasks. The proposed DeepFusionNet architecture was compared against single-modality neural models, such as CNNs for still images and LSTM/GRU networks for videos. The findings show that DeepFusionNet achieves higher accuracy, precision, recall, and F1 score than these models, highlighting the effectiveness of the proposed multimodal feature fusion. Furthermore, we report training and inference time as well as the memory footprint of the model to demonstrate that it is scalable and efficient in practical scenarios. These additional results corroborate that the proposed model is effective at analyzing challenging and diverse multimedia data across various cross-media tasks.

System requirements

Running DeepFusionNet requires a reliable hardware and software environment. The hardware used comprises a multi-core CPU (Intel i7 or AMD Ryzen 7), an NVIDIA RTX 4000 GPU, 32 GB RAM, a 500 GB SSD, and a 1 TB HDD, running Linux Ubuntu 20.04. For software, TensorFlow 2.6 and PyTorch 2.0 are used, along with Python 3.12. Other core libraries include NumPy, SciPy, Pandas, Matplotlib, scikit-learn, OpenCV, and librosa. Development tools include Anaconda 3, the Jupyter Notebook environment, and a Python-compatible IDE. HDF5 is used for data management and TensorBoard for visualizing training, while OpenCV enables real-time image processing and librosa handles audio-related tasks. This setup provides the computational environment required for multimodal data analysis. As shown in Table 1, DeepFusionNet relies on specific hardware configurations and software applications that may affect the network’s performance and effectiveness during implementation and deployment.

Comparative analysis across modalities

Table 2 reports the performance metrics of the model across learning rates from 0.001 to 0.1, showing how accuracy, sensitivity, specificity, F1 score, precision, MCC, and AUC vary. The model performs best at a learning rate of 0.01, where it achieves the highest accuracy of 94.2% with excellent sensitivity and specificity. At higher learning rates, performance declines across all metrics; at 0.1, for example, accuracy drops to 70.2%, showing how sensitive model performance is to the learning rate.

The results across data modalities show clear differences in performance. In Fig. 3a, bar plots show accuracy, sensitivity, specificity, F1 score, and precision. The multimodal configuration outperforms the single modalities in accuracy (94.2%), sensitivity (92.5%), specificity (96.1%), F1 score (93.8%), and precision (95.0%), indicating superior classification ability. Figure 3b presents bar plots of MCC and AUC, with the multimodal configuration yielding the highest MCC (0.846) and AUC (0.96), demonstrating its ability to distinguish between classes. Figure 3c uses line plots to track the error metrics (MAE, MSE, RMSE, and MAPE) across data modalities; the multimodal configuration shows the lowest error rates (MAE: 0.35, MSE: 0.12, RMSE: 0.42, MAPE: 0.38%). Finally, Fig. 3d illustrates the error metrics across epochs, showing a steady improvement in prediction accuracy as the model trains. Overall, Fig. 3 compares performance across the Visual, Auditory, Motion, and Multimodal data modalities and highlights the superior results of the multimodal approach.

The results presented in Fig. 4 provide a comprehensive performance comparison across data modalities (Visual, Auditory, Motion, and Multimodal Fusion). As shown in Fig. 4a, the Multimodal Fusion configuration achieves the highest classification metrics: accuracy (94.20%), sensitivity (92.15%), specificity (94.80%), F1 score (94.2%), and precision (93.54%), outperforming single-modality configurations. Figure 4b illustrates model evaluation using the MCC and AUC, where Multimodal Fusion again leads with an MCC of 0.84 and an AUC of 0.94, indicating a strong overall balance and prediction quality. Figure 4c compares error metrics, including MAE (0.045), MSE (0.010), RMSE (0.100), and MAPE (0.50%), showing that the multimodal configuration minimizes error across all indicators. Figure 4d presents IoT scalability results, where Multimodal Fusion shows the lowest latency (20 ms), energy consumption (9.8 W), and memory usage (5.8 GB), demonstrating both performance and efficiency. Together, the panels of Fig. 4 highlight the superior efficiency of the proposed Multimodal Data Fusion model compared to the other configurations.

Moreover, Fig. 5 provides a detailed analysis of computational performance and resource utilization across the same data modalities. As shown in Fig. 5a, the Multimodal Fusion modality achieves the highest throughput (30 tasks per second), the best frame rate (55 FPS), and the lowest processing time (18 ms). Figure 5b shows that it also maximizes hardware efficiency, with the highest GPU utilization (90%) and moderate CPU and memory usage. These findings support the efficiency and practicality of using multimodal fusion in real-time systems.
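Latency and throughput figures of this kind are typically obtained by timing repeated forward passes after a short warm-up. The sketch below shows one way to do this with the Python standard library; `measure_latency` and `dummy_infer` are hypothetical names, and the stand-in workload is not the model evaluated here.

```python
import statistics
import time


def measure_latency(infer, sample, warmup=5, runs=50):
    """Time repeated calls to infer(sample) and summarize the results."""
    for _ in range(warmup):  # warm-up calls are not timed
        infer(sample)
    timings_ms = []
    for _ in range(runs):
        start = time.perf_counter()
        infer(sample)
        timings_ms.append((time.perf_counter() - start) * 1000.0)
    mean_ms = statistics.mean(timings_ms)
    return {
        "mean_ms": mean_ms,
        # Approximate 95th percentile taken from the sorted timings.
        "p95_ms": sorted(timings_ms)[int(0.95 * (runs - 1))],
        "throughput_per_s": 1000.0 / mean_ms if mean_ms > 0 else float("inf"),
    }


def dummy_infer(sample):
    """Stand-in for a model forward pass (hypothetical workload)."""
    return sum(x * x for x in sample)


report = measure_latency(dummy_infer, [0.1] * 1000)
print(report)
```

Reporting a high percentile alongside the mean is useful in interactive installations, where occasional slow responses are more noticeable than the average delay.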

Model performance based on feature methods

The performance metrics highlight the effectiveness of various feature extraction methods. The Wavelet Transform achieved an accuracy of 88.7%, a sensitivity of 87.4%, and a specificity of 90.2%, with an MCC of 0.760 and an AUC of 0.90. Spectral Analysis features showed slightly lower results, with an accuracy of 85.3% and an MCC of 0.710. The Fourier Transform achieved 89.1% accuracy and a 0.780 MCC, while the Texture-based features lagged, scoring 81.5% accuracy and 0.670 MCC. Deep Learning Features achieved an accuracy of 86.9% with a 0.740 MCC. Hybrid-derived features performed well, achieving 89.3% accuracy and 0.770 MCC. The proposed DeepFusionNet outperformed all, achieving 94.2% accuracy, 92.5% sensitivity, 96.1% specificity, and an impressive 0.846 MCC and 0.96 AUC. Table 3 presents the performance metrics of various feature extraction techniques with the proposed DeepFusionNet.

The results provide insights into performance and error loss metrics across different data modalities. Figure 6 compares classification accuracy and error metrics across modalities. In Fig. 6a, the Multimodal Fusion setup again achieves top scores: accuracy (94.2%), sensitivity (92.5%), specificity (96.1%), and F1 score (93.8%). Figure 6b displays the corresponding error metrics (MAE and RMSE), where Multimodal Fusion consistently yields the lowest values (MAE of 0.35, RMSE of 0.42), thereby reinforcing its superiority for robust multimodal classification.

The results across different batch sizes reveal trends in performance and error loss metrics. Figure 7a displays the performance metrics across batch sizes, with a batch size of 64 yielding the highest accuracy of 94.2%, sensitivity of 92.5%, specificity of 96.1%, and F1 score of 93.8%. A batch size of 32 also performs well, achieving an accuracy of 93.5%, a sensitivity of 91.8%, and a specificity of 95.2%. Figure 7b presents the error loss metrics, where a batch size of 64 yields the lowest error values: MAE (0.35), RMSE (0.42), and MSE (0.12). In contrast, a batch size of 128 shows slightly higher errors: MAE of 0.38, RMSE of 0.43, and MSE of 0.14. Figure 7 thus compares both the performance metrics and the error loss metrics across batch sizes.

The results display the performance metrics across different epochs, revealing a clear trend of decreasing accuracy and performance as the number of epochs increases. At 50 epochs, the model achieved its highest performance, with an accuracy of 94.2%, a sensitivity of 92.5%, a specificity of 96.1%, an F1 score of 93.8%, a precision of 95%, an MCC of 0.846, and an AUC of 0.96. As the number of epochs increases, performance gradually declines. At 100 epochs, the accuracy dropped to 92.4%, and at 500 epochs, the accuracy further decreased to 66.54%. Sensitivity, specificity, and F1 score followed similar trends, indicating a decrease in model effectiveness with prolonged training. Table 4 presents the performance metrics across different epochs.

The performance metrics and loss functions vary across optimizers. Figure 8a (performance metrics across optimizers) shows that Adam outperforms the other optimizers, achieving the highest accuracy at 94.2%, along with superior sensitivity, specificity, and F1 score compared to SGD (91.3%) and Adagrad (92.1%). Figure 8b (accuracy distribution across optimizers) further highlights Adam’s lead in accuracy, while RMSprop (93.5%) and Adadelta (92.8%) follow closely. Figure 8c (loss metrics across optimizers) reveals that Adadelta exhibits the lowest MAE, MSE, and RMSE values, although Adam still performs competitively with an RMSE of 0.32 and MAE of 0.35. Finally, Fig. 8d (error loss distribution across optimizers) shows that Adam achieves the lowest Huber Loss (0.10) and Log-Cosh Loss (0.08), confirming its efficiency in minimizing both error and loss compared to other optimizers. Overall, Fig. 8 highlights Adam’s strength in optimizing performance across both accuracy and loss metrics.
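The Huber and Log-Cosh losses mentioned above are both robust alternatives to the squared error. A minimal sketch of their standard definitions follows; the threshold `delta=1.0` is an assumed default, and the sample values are hypothetical.

```python
import math


def huber_loss(y_true, y_pred, delta=1.0):
    """Mean Huber loss: quadratic for small residuals, linear for large ones."""
    total = 0.0
    for t, p in zip(y_true, y_pred):
        r = abs(t - p)
        if r <= delta:
            total += 0.5 * r * r
        else:
            total += delta * (r - 0.5 * delta)
    return total / len(y_true)


def log_cosh_loss(y_true, y_pred):
    """Mean of log(cosh(residual)), a smooth approximation to the Huber loss."""
    return sum(math.log(math.cosh(t - p)) for t, p in zip(y_true, y_pred)) / len(y_true)


# Hypothetical values: one small residual (0.5) and one large residual (3.0).
y_true = [0.0, 0.0]
y_pred = [0.5, 3.0]

huber = huber_loss(y_true, y_pred)
log_cosh = log_cosh_loss(y_true, y_pred)
print(f"Huber={huber:.4f}  LogCosh={log_cosh:.4f}")
```

Both losses grow roughly linearly for large residuals, so a single outlier influences them less than it would influence the MSE.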

Performance metrics across network architectures

The results for different machine learning methods (CNN, RNN, LSTM, GRU, and Hybrid) across various evaluation metrics reveal clear performance trends. Figure 9a (accuracy, sensitivity, specificity) shows that the Hybrid model consistently achieves the highest performance in both 5-fold and 10-fold cross-validation, with accuracy reaching 94.2% and 94.5%, sensitivity 92.4% and 92.8%, and specificity 96.0% and 96.3%, respectively. Figure 9b (F1 score, precision) confirms that the Hybrid model also excels in F1 score (93.7% for 5-fold, 94.1% for 10-fold) and precision (94.9% for 5-fold, 95.1% for 10-fold). Figure 9c (MCC, AUC) shows that the Hybrid model leads with MCC values of 0.845 (5-fold) and 0.853 (10-fold) and an AUC of 0.96 in both settings. Figure 9d (MAE, MSE, RMSE) shows the Hybrid model with the lowest MAE (0.38 for 5-fold), MSE (0.14 for both settings), and RMSE (0.43 for both settings), indicating better error handling. Overall, Fig. 9 compares these methods across accuracy, sensitivity, specificity, F1 score, precision, MCC, AUC, MAE, MSE, and RMSE, highlighting the superior performance of the Hybrid model.
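In k-fold cross-validation, the data are partitioned so that each sample is used for validation exactly once. The sketch below builds such a split for illustration; it assumes contiguous, unshuffled folds, and the function name `k_fold_indices` is hypothetical. It does not claim to reproduce how the folds in these experiments were drawn.

```python
def k_fold_indices(n_samples, k):
    """Split range(n_samples) into k (train, validation) index pairs."""
    if not 2 <= k <= n_samples:
        raise ValueError("k must be between 2 and n_samples")
    indices = list(range(n_samples))
    # Spread any remainder over the first folds so sizes differ by at most 1.
    fold_sizes = [n_samples // k + (1 if i < n_samples % k else 0) for i in range(k)]
    folds = []
    start = 0
    for size in fold_sizes:
        validation = indices[start:start + size]
        train = indices[:start] + indices[start + size:]
        folds.append((train, validation))
        start += size
    return folds


# Hypothetical example: 10 samples split into 5 folds.
folds = k_fold_indices(10, 5)
for train, validation in folds:
    print(f"train={train}  validation={validation}")
```

In practice the indices are usually shuffled (and often stratified by class) before splitting, and the reported metric is the mean over the k validation folds.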

The performance of different machine learning models (CNN, DNN, RNN, HybridNet, LSTM, and DeepFusionNet) was evaluated on a binary classification task designed to detect user interaction states in cross-media art systems. The two classes in this task were Class 1 (Active State), representing moments of active engagement where the user interacts with the system (e.g., motion, gesture, or vocal input), and Class 0 (Idle State), representing periods of non-engagement or minimal interaction. The CNN model achieved an accuracy of 91.2%, with 92 true positives (TP), 88 true negatives (TN), 12 false positives (FP), and 8 false negatives (FN). The DNN model improved on this with 94% accuracy, yielding 94 TP, 90 TN, 10 FP, and 6 FN. The RNN model performed similarly with 95% accuracy, while the HybridNet model reached 96% accuracy, yielding 96 TP, 94 TN, 6 FP, and 4 FN. The LSTM and DeepFusionNet models showed the best performance, achieving 97% and 98% accuracy, respectively, with the lowest false positive and false negative values across the board. Figure 10 displays the confusion matrices for these models, highlighting their effectiveness in classifying Active and Idle states based on multimodal input data.

The training and validation results show an improvement in model performance across epochs and cross-validation folds. For the validation metrics during training in Fig. 11a, the validation loss decreased from 0.520 in the first epoch to 0.175 by epoch 50, indicating effective learning. Similarly, the validation MAE decreased from 0.410 to 0.160, and the validation MSE dropped from 0.20 to 0.01, indicating a decrease in error over time. For the cross-validation loss across folds in Fig. 11b, the training loss started at 0.70 and improved to 0.00 by the last fold. The validation loss decreased from 0.60 to 0.006, while the testing loss reduced from 0.90 to 0.01, demonstrating consistent improvement across folds. The results in Fig. 11 illustrate the model’s ability to generalize well across training, validation, and testing datasets.

The performance of various machine learning models was evaluated using key metrics: accuracy, sensitivity, specificity, precision, F1-score, MCC, and AUC. Decision Tree (DT) achieved an accuracy of 83.9%, with a sensitivity of 82.5%, specificity of 85.1%, precision of 82.3%, F1-score of 82.8%, MCC of 0.65, and AUC of 0.83. K-Nearest Neighbors (KNN) showed an accuracy of 84.5%, sensitivity of 83.3%, specificity of 85.9%, precision of 83.1%, F1-score of 83.6%, MCC of 0.66, and AUC of 0.84. Random Forest (RF) achieved an accuracy of 85.8%, sensitivity of 84.6%, specificity of 86.8%, precision of 84.5%, F1-score of 84.8%, MCC of 0.68, and AUC of 0.85. Support Vector Machine (SVM) achieved an accuracy of 87.5%, sensitivity of 86.5%, specificity of 88.4%, precision of 86.4%, F1-score of 86.8%, MCC of 0.71, and AUC of 0.87. XGBoost had an accuracy of 89.6%, sensitivity of 88.9%, specificity of 90.3%, precision of 88.7%, F1-score of 89.0%, MCC of 0.73, and AUC of 0.89. Convolutional Neural Network (CNN) showed an accuracy of 91.2%, sensitivity of 91.0%, specificity of 91.4%, precision of 90.8%, F1-score of 91.1%, MCC of 0.75, and AUC of 0.91. Deep Neural Network (DNN) achieved an accuracy of 92.1%, sensitivity of 91.9%, specificity of 92.3%, precision of 91.6%, F1-score of 91.8%, MCC of 0.76, and AUC of 0.92. Recurrent Neural Network (RNN) recorded an accuracy of 92.7%, sensitivity of 92.4%, specificity of 93.0%, precision of 92.2%, F1-score of 92.4%, MCC of 0.78, and AUC of 0.93. HybridNet reached an accuracy of 93.8%, sensitivity of 93.6%, specificity of 94.1%, precision of 93.4%, F1-score of 93.5%, MCC of 0.80, and AUC of 0.94. Finally, DeepFusionNet exhibited the highest accuracy, specificity, precision, MCC, and AUC, with 94.2% accuracy, 92.5% sensitivity, 96.1% specificity, 95.0% precision, 93.8% F1-score, 0.846 MCC, and 0.96 AUC, making it the most effective model overall in this evaluation. Table 5 presents the performance metrics for these models, highlighting the superior performance of DeepFusionNet.

The experimental results also include error losses and evaluation metrics across various network architectures. The Decision Tree (DT) model exhibits a relatively high error, with a Mean Absolute Error (MAE) of 0.80, a Mean Squared Error (MSE) of 0.64, and a Root Mean Squared Error (RMSE) of 0.80. Similarly, K-Nearest Neighbors (KNN) and Random Forest (RF) have comparable errors, with MAE values of 0.78 and 0.77, respectively. Support Vector Machine (SVM) performs slightly better, with a lower MAE of 0.73 and MSE of 0.55. The XGBoost model improves further, with an MAE of 0.70 and an MSE of 0.49. Advanced models, such as CNN, DNN, and RNN, demonstrate substantial improvements, particularly the CNN, which has an MAE of 0.39, MSE of 0.15, and RMSE of 0.42. The HybridNet and DeepFusionNet architectures lead in performance, with the lowest MAE values of 0.35 and 0.32, respectively, and the best values for R-squared and Explained Variance, indicating superior accuracy. Figure 12 illustrates the training-phase error loss across these network architectures, highlighting the differences in each metric.

While Table 6 highlights the superiority of DeepFusionNet over conventional machine learning and baseline neural models, further validation is required through comparison with recent state-of-the-art multimodal architectures. Models such as ViLBERT, MM-Transformer, MISA, and the Memory Fusion Network (MFN) represent widely adopted benchmarks in multimodal AI. A direct reimplementation of these models was not feasible due to resource constraints. Instead, we rely on reported values from the literature, focusing on classification performance metrics including accuracy, MCC, and AUC.

The results demonstrate that DeepFusionNet achieves superior accuracy (94.2%), higher MCC (0.846), and a higher AUC (0.96) compared to these advanced multimodal models. Importantly, unlike ViLBERT and MM-Transformer, which require large-scale pretraining and significant computational resources, DeepFusionNet was specifically optimized for IoT-driven environments and real-time classification tasks. Furthermore, while attention-based approaches such as MISA and MFN excel in cross-modal reasoning, they are not designed for low-latency, sensor-driven contexts. Thus, DeepFusionNet strikes a balance between high predictive performance and computational efficiency, making it particularly suitable for interactive cross-media art applications.

Discussion

This study presented DeepFusionNet, an innovative multimodal deep learning architecture that integrates visual, auditory, and motion data to enhance performance in cross-media environments. The experimental evaluation demonstrated that DeepFusionNet not only surpasses single-modality approaches such as CNNs for image recognition and LSTMs/GRUs for sequential input but also outperforms hybrid baselines in terms of accuracy, specificity, precision, F1-score, MCC, and AUC. In addition to higher predictive performance, the model exhibited improved memory efficiency, stable scalability, and effective runtime behavior, all of which are crucial for practical deployment in resource-constrained IoT-driven environments. The comparative analysis of learning rates further emphasized the importance of parameter optimization: a learning rate of 0.01 produced the highest accuracy of 94.2%, with sensitivity and specificity values of 92.5% and 96.1%, respectively, whereas higher rates significantly degraded the results. These findings highlight the importance of meticulous hyperparameter tuning in ensuring that multimodal frameworks remain both accurate and computationally efficient when applied to complex, real-time tasks.

Beyond the numerical results, the novelty and impact of DeepFusionNet reside in its reactive classification paradigm, which has been largely overlooked in favor of either static rule-based triggers or computationally demanding generative methods. While rule-based systems are limited by rigid predefinitions and poor adaptability to unstructured environments, and generative systems often introduce high latency and unpredictability that undermine real-time responsiveness, DeepFusionNet offers a more balanced alternative by classifying contextual states and activating predefined artistic responses. This approach ensures low-latency interaction, reproducibility across varying deployment contexts, and scalable performance when integrated into IoT-driven multimedia installations. The resulting framework provides not only technical robustness but also creative adaptability, enabling user-aware and context-sensitive experiences that remain stable even under diverse environmental conditions. Nevertheless, limitations should be acknowledged. DeepFusionNet does not autonomously generate new artistic content, which could restrict the range of creative expression compared to generative systems. However, this trade-off is intentional, as it prioritizes reproducibility, scalability, and reliability, characteristics that are indispensable for real-time IoT art installations, where audiences expect consistent responsiveness. Future extensions may focus on hybrid systems that combine this classification backbone with lightweight generative modules, thereby uniting the efficiency of classification with the creativity of generation. In this regard, DeepFusionNet establishes a solid and scalable foundation for advancing IoT-enhanced cross-media art, demonstrating that reproducibility, robustness, and real-time adaptability are as impactful as novelty in content creation.

Conclusion

DeepFusionNet, the multimodal hybrid deep learning framework proposed in this paper, classifies multimodal input, including visual, auditory, and motion data, to drive real-time adaptive responses in cross-media art applications. In contrast to generative models, DeepFusionNet does not create new pieces of art but recognises the contextual input presented by a user or the environment to trigger predefined multimedia output. The experimental findings indicate an accuracy of 94.2%, precision of 95.0%, recall of 92.5%, and F1-score of 93.8%, together with an MCC of 0.846 and an AUC of 0.96, which reflect a high level of classification reliability and robustness on an imbalanced dataset. Its predictive accuracy is also evident in the regression measures, RMSE (0.154) and MAE (0.122). DeepFusionNet achieves significant performance gains when merging heterogeneous streams, demonstrating that multimodal learning is effective for interactive systems. In addition, the model was designed to be scalable and deployable in various environments, making it more flexible in addressing real-world limitations. Although the current evaluation focuses on quantitative assessment, future work will incorporate qualitative assessment and user studies to examine interactivity, user satisfaction, and artistic impact. In conclusion, this work provides a technically sound basis for real-time multimodal classification in cross-media settings and serves as a foundation for generalising multimodal AI systems to more diverse areas, such as healthcare, robotics, and digital entertainment.

Data availability

The datasets used and/or analysed during the current study are available from the corresponding author on reasonable request.

References

Li, M. Research on cross-media art design based on artificial intelligence digital service platform. In Proceedings of the 2nd International Conference on Art Design and Digital Technology, ADDT 2023, September 15–17, 2023, Xi’an, China (EAI). https://doi.org/10.4108/eai.15-9-2023.2340831 (2024).

Liu, S. T., Hsu, S. C. & Huang, Y. H. Data paradigm shift in cross-media IoT system. Lecture Notes in Computer Science (including subseries Lecture Notes in Artificial Intelligence and Lecture Notes in Bioinformatics), 479–490. https://doi.org/10.1007/978-3-030-50017-7_36 (2020).

Su, Y., Tian, J. & Zan, X. The research of Chinese martial arts Cross-Media communication system based on deep neural network. Comput. Intell. Neurosci. 2022, 1–9. https://doi.org/10.1155/2022/2835992 (2022).

Wang, T. et al. TASTA: Text-assisted spatial and temporal attention network for video question answering. Adv. Intell. Syst. 5(4), 2200131 (2023).

Li, S. A Cross-Media advertising design and communication model based on feature subspace learning. Comput. Intell. Neurosci. 2022, 1–10. https://doi.org/10.1155/2022/5874722 (2022).

Liu, M. & Li, J. Research on the design of interactive installation in new media art based on machine learning. Lecture Notes in Computer Science (including subseries Lecture Notes in Artificial Intelligence and Lecture Notes in Bioinformatics), 101–112. https://doi.org/10.1007/978-3-031-35705-3_8 (2023).

Rehman, S. ur et al. Deep learning techniques for future intelligent cross-media retrieval (2020).

Rahimzad, M. et al. Performance comparison of an LSTM-based deep learning model versus conventional machine learning algorithms for streamflow forecasting. Water Resour. Manag. 35, 4167–4187. https://doi.org/10.1007/s11269-021-02937-w (2021).

Wang, P., Fan, E. & Wang, P. Comparative analysis of image classification algorithms based on traditional machine learning and deep learning. Pattern Recognit. Lett. 141, 61–67. https://doi.org/10.1016/j.patrec.2020.07.042 (2021).

Uddin, I. et al. A hybrid residue based sequential encoding mechanism with XGBoost improved ensemble model for identifying 5-hydroxymethylcytosine modifications. Sci. Rep. 14, 20819. https://doi.org/10.1038/s41598-024-71568-z (2024).

Miao, Y. Improved deep neural network for cross-media visual communication. Comput. Intell. Neurosci. 2022(1), 1556352 (2022).

Peng, Y., Huang, X. & Zhao, Y. An overview of Cross-Media retrieval: concepts, methodologies, benchmarks, and challenges. IEEE Trans. Circuits Syst. Video Technol. 28, 2372–2385. https://doi.org/10.1109/TCSVT.2017.2705068 (2018).

Gan, X. et al. TianheGraph: customizing graph search for graph500 on Tianhe supercomputer. IEEE Trans. Parallel Distrib. Syst. 33, 941–951. https://doi.org/10.1109/TPDS.2021.3100785 (2022).

Liu, H. Design of neural network model for Cross-Media audio and video score recognition based on convolutional neural network model. Comput. Intell. Neurosci. 2022, 1–12. https://doi.org/10.1155/2022/4626867 (2022).

Zhuang, Y-T., Yang, Y. & Wu, F. Mining semantic correlation of heterogeneous multimedia data for Cross-Media retrieval. IEEE Trans. Multimed. 10, 221–229. https://doi.org/10.1109/TMM.2007.911822 (2008).

Qiu, S. et al. Multi-sensor information fusion based on machine learning for real applications in human activity recognition: State-of-the-art and research challenges. Inf. Fusion 80, 241–265 (2022).

Khan, S. et al. N6-methyladenine identification using deep learning and discriminative feature integration. BMC Med. Genomics. 18, 58. https://doi.org/10.1186/s12920-025-02131-6 (2025).

Turchet, L. et al. The internet of audio things: state of the art, vision, and challenges. IEEE Internet Things J. 7, 10233–10249. https://doi.org/10.1109/JIOT.2020.2997047 (2020).

Kim, Y. et al. Construction of a Soundscape-Based media Art exhibition to improve user appreciation experience by using deep neural networks. Electronics 10, 1170. https://doi.org/10.3390/electronics10101170 (2021).

Chamishka, S. et al. A voice-based real-time emotion detection technique using recurrent neural network empowered feature modelling. Multimed Tools Appl. 81, 35173–35194. https://doi.org/10.1007/s11042-022-13363-4 (2022).

Mustafa, M. A., Konios, A. & Garcia-Constantino, M. IoT-Based activities of daily living for abnormal behavior detection: Privacy issues and potential countermeasures. IEEE Internet. Things. Mag. https://doi.org/10.1109/IOTM.0001.2000169 (2021).

O’Halloran, K. L., Pal, G. & Jin, M. Multimodal approach to analysing big social and news media data. Discourse Context Media. 40, 100467. https://doi.org/10.1016/j.dcm.2021.100467 (2021).

Wang, D. et al. MM-Transformer: A transformer-based knowledge graph link prediction model that fuses multimodal features. Symmetry 16(8), 961 (2024).

Portnoy, S. et al. Chap. 8 - Multimodal localization for embedded systems: A survey. Comput. Hum. Behav. 25, 24–32 (2017).

Daneshfar, F. et al. Image captioning by diffusion models: a survey. Eng. Appl. Artif. Intell. 138, 109288 (2024).

Wang, S. et al. Advances in data preprocessing for bio-medical data fusion: An overview of the methods, challenges, and prospects. Inf. Fusion 76, 376–421 (2021).

Uddin, I. et al. Deep-m6Am: a deep learning model for identifying N6, 2′-O-Dimethyladenosine (m6Am) sites using hybrid features. AIMS Bioeng. 12, 145–161. https://doi.org/10.3934/bioeng.2025006 (2025).

Khan, S. et al. Sequence based model using deep neural network and hybrid features for identification of 5-hydroxymethylcytosine modification. Sci. Rep. 14, 9116. https://doi.org/10.1038/s41598-024-59777-y (2024).

Wang, T. et al. ResLNet: deep residual LSTM network with longer input for action recognition. Front. Comput. Sci. 16(6), 166334 (2022).

Hu, X. et al. TOP-ALCM: A novel video analysis method for violence detection in crowded scenes. Inf. Sci. (Ny). 606, 313–327. https://doi.org/10.1016/j.ins.2022.05.045 (2022).

Ortiz, B. L. et al. Data preprocessing techniques for artificial intelligence (AI)/Machine learning (ML)-Readiness: systematic review of wearable sensor data in cancer care (Preprint). JMIR mHealth uHealth. 12, e59587. https://doi.org/10.2196/59587 (2024).

Daneshfar, F. et al. Elastic deep multi-view autoencoder with diversity embedding. Inf. Sci. (Ny). 689, 121482. https://doi.org/10.1016/j.ins.2024.121482 (2025).

Lin, X. et al. Contrastive modality-disentangled learning for multimodal recommendation. Trans. Inf. Syst. 43(3), 1–31 (2025).

Lv, S. et al. Enhancing Chinese dialogue generation with word–phrase fusion embedding and sparse softmax optimization. Systems 12(12), 516 (2024).

Khan, S. et al. Optimized feature learning for Anti-Inflammatory peptide prediction using parallel distributed computing. Appl. Sci. 13, 7059. https://doi.org/10.3390/app13127059 (2023).

Khan, S. et al. Spark-based parallel deep neural network model for classification of large scale RNAs into piRNAs and non-piRNAs. IEEE Access. https://doi.org/10.1109/ACCESS.2020.3011508 (2020).

Yin, L. et al. DPAL-BERT: A faster and lighter question answering model. Comput. Model. Eng. Sci. https://doi.org/10.32604/cmes.2024.052622 (2024).

Wu, Y. et al. Self-supervised intra-modal and cross-modal contrastive learning for point cloud understanding. IEEE Trans. Multimed. 26, 1626–1638. https://doi.org/10.1109/TMM.2023.3284591 (2024).

Jing, L. et al. A patent text-based product conceptual design decision-making approach considering the fusion of incomplete evaluation semantic and scheme beliefs. Appl. Soft Comput. 157, 111492 (2024).

Deng, Q., Chen, X., Lu, P., Du, Y. & Li, X. Intervening in negative emotion contagion on social networks using reinforcement learning. IEEE Trans. Comput. Soc. Syst. (2025).

Liu, Y. et al. Aligning cyber space with physical world: A comprehensive survey on embodied AI. IEEE/ASME Trans. Mechatronics 1–22. https://doi.org/10.1109/TMECH.2025.3574943 (2025).

Khan, S., Naeem, M. & Qiyas, M. Deep intelligent predictive model for the identification of diabetes. AIMS Math. 8, 16446–16462. https://doi.org/10.3934/math.2023840 (2023).

Liu, Y. et al. ODMixer: Fine-grained Spatial-temporal MLP for metro Origin-Destination prediction. IEEE Trans. Knowl. Data Eng. https://doi.org/10.1109/TKDE.2025.3579370 (2025).

Zhang, M. et al. Dual-attention transformer-based hybrid network for multi-modal medical image segmentation. Sci. Rep. 14, 25704. https://doi.org/10.1038/s41598-024-76234-y (2024).

Lu, J. et al. ViLBERT: Pretraining task-agnostic visiolinguistic representations for vision-and-language tasks. In Advances in Neural Information Processing Systems (2019).

Hazarika, D., Zimmermann, R. & Poria, S. MISA: Modality-invariant and -specific representations for multimodal sentiment analysis. In Proceedings of the 28th ACM International Conference on Multimedia (MM 2020) 1122–1131 (Association for Computing Machinery). https://doi.org/10.1145/3394171.3413678 (2020).

Zadeh, A. et al. Memory fusion network for multi-view sequential learning. In 32nd AAAI Conference on Artificial Intelligence (AAAI 2018) 5634–5641 (AAAI Press). https://doi.org/10.1609/aaai.v32i1.12021 (2018).

Author information

Contributions

Z.F. conceived and designed the study, developed the DeepFusionNet framework, and conducted the experiments. M.M.G. contributed to the data collection, preprocessing, and analysis, as well as the interpretation of results. Z.F. wrote the main manuscript text, and M.M.G. provided critical feedback and revisions. Both authors reviewed and approved the final manuscript.

Ethics declarations

Competing interests

The authors declare no competing interests.

Additional information

Publisher’s note

Springer Nature remains neutral with regard to jurisdictional claims in published maps and institutional affiliations.

Rights and permissions

Open Access This article is licensed under a Creative Commons Attribution-NonCommercial-NoDerivatives 4.0 International License, which permits any non-commercial use, sharing, distribution and reproduction in any medium or format, as long as you give appropriate credit to the original author(s) and the source, provide a link to the Creative Commons licence, and indicate if you modified the licensed material. You do not have permission under this licence to share adapted material derived from this article or parts of it. The images or other third party material in this article are included in the article’s Creative Commons licence, unless indicated otherwise in a credit line to the material. If material is not included in the article’s Creative Commons licence and your intended use is not permitted by statutory regulation or exceeds the permitted use, you will need to obtain permission directly from the copyright holder. To view a copy of this licence, visit http://creativecommons.org/licenses/by-nc-nd/4.0/.

About this article

Cite this article

Feng, Z., Giblin, M. DeepFusionNet for real-time classification in IoT-based cross-media art and design using multimodal deep learning. Sci Rep 15, 34935 (2025). https://doi.org/10.1038/s41598-025-18665-9

DOI: https://doi.org/10.1038/s41598-025-18665-9