Abstract

The identification of defects on transmission line insulators is a pivotal task in UAV (Unmanned Aerial Vehicle) inspection, which is essential for ensuring the reliable operation of transmission lines. To mitigate missed detections and improve detection accuracy on UAV-acquired imagery, this paper proposes RSP-YOLOv11n (RCSOSA-SEA-P2 YOLOv11n). First, the conventional C3K2 module in the backbone is replaced with the RCSOSA unit, enabling more effective multi-scale feature extraction and representation learning. Second, the SEA attention mechanism, which combines axial attention with detail enhancement, is used to improve the detection of surface defects on insulators. Finally, a P2 detection head is added to the network; by capturing finer features from high-resolution feature maps, it improves the accuracy of small target detection. Experimental results on the self-constructed insulator dataset show that RSP-YOLOv11n performs better overall than other YOLO series models. Compared with the baseline YOLOv11n model, RSP-YOLOv11n improved precision from 89.9 to 92.3%, recall from 82.6 to 85.9%, F1-score from 86.1 to 89.0%, mAP@0.5 from 88.7 to 91.2%, and mAP@0.5:0.95 from 58.9 to 61.7%. Furthermore, the proposed RSP-YOLOv11n framework was evaluated on three benchmark insulator datasets: CPLID, IDID, and SFID. Across these datasets, it consistently achieved better detection performance than other models in the YOLO family. In addition, RSP-YOLOv11n exhibited competitive advantages over recent state-of-the-art detectors, including DINO and RT-DETR. The experimental results highlight the framework’s strong capability in small object detection, showing notable improvements in accuracy and generalization. These findings suggest that RSP-YOLOv11n holds considerable potential for meeting the practical requirements of insulator defect detection in real-world UAV inspection scenarios.

Introduction

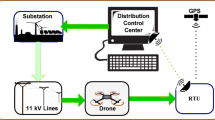

By the end of 2024, China’s transmission and distribution lines of 220 kV and above had extended to a total length of 952,270 kilometers1. Transmission line networks have gradually expanded with the ongoing growth of China’s power industry. Insulators are crucial components of transmission lines: they support overhead wires and prevent current leakage. However, insulators are exposed to severe weather conditions, such as wind erosion, ice buildup, lightning strikes, and extremely high or low temperatures, which can cause damage such as flashover and breakage, as shown in Fig. 1. Such damage poses a serious threat to the reliability and safety of transmission lines. Therefore, to guarantee the efficacy of transmission line inspections, precise identification of the insulators’ state is essential2,3.

Manual inspection, the earliest approach to detecting defective insulators, consumes a considerable amount of human resources and is prone to both false and missed inspections. It also suffers from safety risks, slow detection, and other limitations. Owing to recent developments in UAV and image processing technologies, traditional human patrols are gradually being replaced by UAV-based inspection. This transition is driven by the advantages that UAV-based inspection offers, including reduced costs, enhanced efficiency, and improved safety4,5. Nevertheless, UAV image processing still faces a number of challenges, including complex backgrounds, heavily disturbed targets, the difficulty of small target detection, and target overlap6.

Traditional insulator defect detection methods mainly rely on image processing and handcrafted feature extraction techniques, such as edge detection, threshold segmentation, grayscale statistics, and morphological operations7,8,9,10. These methods can achieve preliminary defect identification under specific conditions. However, they are highly sensitive to environmental variations and prone to performance degradation due to uneven lighting, complex backgrounds, and meteorological factors such as rain, snow, and haze. Furthermore, the complex morphology and semantic information of defects cannot be fully captured by traditional approaches because they rely on shallow, local image features. Additionally, they are not able to adjust to different kinds of defects, which leads to comparatively poor generalization and robustness. In practical applications, these methods often require manual redesign and parameter tuning for different scenarios, and their computational efficiency is generally low, making them unsuitable for large-scale, real-time inspections. In contrast, deep learning techniques have significantly improved the accuracy and stability of insulator defect detection through their end-to-end feature learning capabilities. Convolutional Neural Networks (CNNs), by extracting multi-level and multi-scale features, can automatically capture rich information in images, thereby more effectively identifying defects in complex backgrounds. Deep learning models exhibit strong noise resistance and interference robustness, adapting well to varying illumination, weather conditions, and imaging environments, demonstrating excellent robustness and generalization performance. Furthermore, recent advancements such as multi-scale feature fusion, attention mechanisms, and Transformer architectures have further enhanced detection performance, driving widespread adoption and rapid development of deep learning methods in the field of insulator defect detection.

Object detection techniques based on deep learning are typically categorized into two main types: one-stage and two-stage methods11. Two-stage methods achieve high accuracy and efficient object category separation by first generating candidate bounding boxes and then performing classification and localization. Representative models include R-CNN12, Fast R-CNN13, Faster R-CNN14, FPN-Net15, and Mask R-CNN16. However, due to their two-step processing approach, these algorithms have limited real-time detection capabilities, leading to slower detection speeds and challenges in balancing accuracy and efficiency.

The key distinction between one-stage and two-stage object detection methods is that the former does not include a region proposal phase. One-stage algorithms simplify the training process by simultaneously predicting the target class and generating the bounding box, making the approach more efficient. Common examples of one-stage algorithms are the SSD and YOLO models. Bao et al.17 optimized YOLOv5 by integrating coordinate attention mechanisms and replacing PANet with a dual-directional feature pyramid network, leading to improved insulator defect detection, especially in terms of small target accuracy. Zeng et al.18 introduced an improved YOLOv5-based algorithm for insulator detection, integrating CSP-SCConv, RFB, and LSKBlock modules to enhance feature extraction and fusion.

Hong et al.19 enhanced the YOLOv7 algorithm by substituting the original activation function with the Mish activation function, thereby improving the network’s ability to represent complex features.

Deng et al.20 proposed an insulator defect detection framework for adverse weather conditions, integrating PReNet and Dehaze Former for image enhancement and an improved YOLOv7 with SIoU loss and SimAM attention, achieving robust performance on newly developed CPLID_Rainy and CPLID_Hazy datasets. He et al.21 enhanced YOLOv8 by optimizing target feature extraction in complex backgrounds. They replaced the C2F network with a fusion of GhostNet and multi-scale asymmetric convolution, improving feature representation. Tan et al.22 developed a self-checkout system based on an improved YOLOv10, aiming to enhance efficiency and reduce labor costs. Li et al.23 introduced IF-YOLO, an optimized YOLOv10n variant incorporating Haar Wavelet Upsampling, Haar Wavelet Downsampling, Group Collaborative Attention, and Hybrid Spatial Pyramid Pooling to enhance feature extraction and detection accuracy. Zhang et al.24 proposed IL-YOLO, addressing challenges in complex backgrounds and multi-target detection for insulator defect detection. To improve small-target detection and processing efficiency, Gao et al.25 introduced a YOLOv11-based lightweight anti-UAV detection method that integrates CCFM and HWD structures. Liu et al.26 refined the SSD algorithm by optimizing network parameters and leveraging multi-scale training and horizontal mirroring augmentation, enhancing detection accuracy. Additionally, Li et al.27 proposed a dual-network framework integrating MnasNet and MobileNetv3, enabling multi-level feature extraction and overcoming the limitations of single convolutional backbones. Chen et al.28 introduced the CLS-YOLO model, an improved YOLOv11n variant, integrating a Cross-Scale Feature Fusion Module (CCFM) and Large Separable Kernel Attention (LSKA) to enhance defect detection in micro-motor commutators. 
Wang et al.29 proposed PC-YOLOv11s, an optimized YOLOv11 model for small object detection, achieving improved accuracy and reduced parameters, outperforming other YOLO-series models.

Collectively, these advancements contribute to the enhancement of object detection across a range of applications, underscoring the efficacy of integrating advanced feature extraction techniques and multi-scale learning strategies. Two-stage detection models generally require substantial memory resources during deployment, which may limit their capacity to achieve real-time object recognition in practical engineering applications. On the other hand, while one-stage models facilitate real-time detection, they still encounter challenges related to recognition accuracy.

In this research, YOLOv11n serves as the reference model to assess the effectiveness of the latest YOLO-series model in detecting insulator defects. To improve the model’s capability in extracting small-target features while balancing detection accuracy and computational efficiency, we introduce an enhanced method, RSP-YOLOv11n (RCSOSA-SEA-P2 YOLOv11n). The main contributions of this work are as follows:

1. Optimized Backbone Network: By substituting the traditional C3K2 module with the RCSOSA module in the backbone network, the model enhances its ability to capture critical features while minimizing redundant data.

2. Improved Attention Mechanism: Four SEA Attention modules are introduced at the P2, P3, P4, and P5 levels to address the problem of finding defective objects in complicated backgrounds, where poor saliency frequently results in misdetection. This integration effectively strengthens multi-scale feature extraction, improves the model’s attention to both key local information and global context, and enhances detection robustness.

3. Enhanced Small Target Detection: A P2 detection head is integrated into the network to facilitate finer feature extraction at higher-resolution stages, thereby substantially enhancing the detection performance for small targets.

Three publicly available benchmarks and a self-constructed insulator dataset were used for extensive testing. The findings show that RSP-YOLOv11n continuously outperforms current YOLO-based methods for identifying small-scale targets, attaining greater recall and precision while preserving computational efficiency. These results demonstrate the usefulness of the model for UAV-based insulator inspection tasks and offer encouraging avenues for further study in real-world small object detection.

The remainder of the paper is organized as follows. The Methodology section outlines the core YOLOv11n model and describes the enhancements made in the proposed object detection method. The subsequent sections present the experimental setup and the insulator dataset, followed by a comprehensive analysis and discussion based on ablation studies and comparisons with other algorithms. The paper concludes with a summary of the results and suggestions for future research directions.

Methodology

YOLOv11n baseline model

YOLOv11, the most recent model in the Ultralytics YOLO series, is intended to raise the bar for real-time object detection. It makes a substantial contribution to the field of object recognition by achieving exceptional performance in terms of accuracy, processing speed, and computational efficiency. By implementing notable improvements in network architecture and training methodologies, YOLOv11 expands on the progress made in earlier iterations. Because of these improvements, YOLOv11 can now provide better performance and dependability, making it a flexible option for a variety of computer vision applications30.

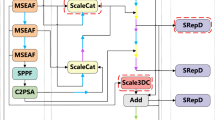

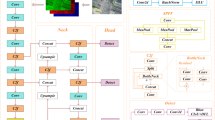

To increase feature extraction and overall efficiency, YOLOv11 makes a number of improvements to the model architecture (see Fig. 2). One of the most important changes is the substitution of the C3k2 module for the original C2f module, which greatly increases the model’s adaptability to a variety of application scenarios. Following the SPPF module, a C2PSA module has been added to improve the attention mechanism in feature extraction. This module greatly enhances the model’s capacity to capture important features by extending the C2f structure and integrating the PSA mechanism. The C2PSA module optimizes the feature extraction process by combining a multi-head attention mechanism with a feedforward neural network (FFN). By converting features into a higher-dimensional space, the FFN improves the representation of important features and captures complex nonlinear interactions. In the meantime, the multi-head attention mechanism enhances the model’s capacity to efficiently process complex and varied data by enabling it to concurrently attend to several aspects of the input features. The C2PSA module also has residual connections, which improve gradient propagation and speed up network training. To increase computational efficiency and reduce redundant calculations while maintaining high performance, YOLOv11 integrates depth-wise separable convolution in the detection head. Accuracy and processing speed are both improved by this optimization. Furthermore, the model’s feature extraction capabilities are greatly strengthened by the integration of an optimized neck architecture and an advanced backbone network, which results in more accurate object detection.

Despite significant advancements, object detection still has problems, particularly with small objects. As model depth increases, there is a risk of producing background noise interference or losing small-target characteristics. Making sure the network effectively takes in and retains small-object features is another important problem that requires more focus.

RCSOSA-SEA-P2 YOLOv11n (RSP-YOLOv11n) model

The RSP-YOLOv11n model is introduced to address the challenges encountered in detecting insulator defects on power transmission lines. It is based on the baseline YOLOv11n model and, as illustrated in Fig. 3, involves several modifications to it. First, the RCSOSA module replaces the C3K2 module in the backbone. Second, four SEA Attention modules are incorporated. Finally, a P2 detection head is added to the network. The RCSOSA module improves feature extraction and fusion by integrating Channel Shuffle, Re-parameterization, and the One-Shot Aggregation (OSA) structure. The Channel Shuffle mechanism strengthens inter-channel information exchange, improving feature representation and detection accuracy. Re-parameterization maintains multi-branch learning capabilities during training while merging the computation paths during inference to enhance computational efficiency. The OSA structure aggregates cross-layer features, optimizing deep feature utilization and enabling high-level features to fuse effectively with low-level information.

This design greatly improves the robustness of the model, especially for small-object detection tasks and complex backgrounds. The SEA module improves insulator detection accuracy in YOLOv11 by strengthening global information modeling, local detail enhancement, and feature representation, and by lowering false detections. To better separate insulators from complex backgrounds, SEA uses Axial Attention to capture long-range dependencies with less computational complexity. The Detail Enhancement mechanism, which combines local convolution and self-attention, lets the model learn fine-grained surface features, which is essential for spotting minute flaws. Furthermore, in difficult environments, the Lightweight Gated Unit (LGU) enhances target distinction and optimizes feature fusion by dynamically controlling feature flow across various scales. By simultaneously enhancing local features and improving global information modeling, SEA reduces false positives and missed detections, which ultimately increases the model’s accuracy. To improve the localization and classification of small targets, such as insulator defects, the P2 detection head adds an extra prediction layer that captures fine-grained spatial details. Shallow-layer features increase the model’s sensitivity to minute changes, lowering the number of missed detections. Although the additional head slightly increases the computational load, the gain in detection accuracy, especially in complex environments, outweighs this cost, yielding higher mAP and more reliable performance in real-world scenarios. The working mechanisms of the RCSOSA module, the SEA attention module, and the P2 detection head are explained in detail in the following sections.

RCSOSA module

In recent years, researchers have increasingly explored lightweight convolutional architectures to enhance computational efficiency and improve feature representation. One notable approach is Reparameterized Convolution based on channel Shuffle (RCS), which was proposed by Kang et al.31 and draws inspiration from ShuffleNet. RCS incorporates channel splitting and shuffling mechanisms to reduce computational complexity while preserving efficient inter-channel information exchange. As shown in Fig. 4, RCS operates on an input feature tensor with dimensions \(C\times H\times W\), which is split into two equal-sized channel-wise tensors. During the training phase, one of these tensors is processed using a multi-branch structure that includes an identity branch, a \(1\times 1\) convolution, and a \(3\times 3\) convolution, forming the training-time RCS module. In the inference phase, these components (identity mapping, \(1\times 1\) convolution, and \(3\times 3\) convolution) are consolidated into a single \(3\times 3\) RepConv through structural reparameterization, simplifying the network while retaining its feature extraction capabilities. After one tensor has been processed by the multi-branch structure, it is concatenated channel-wise with the other tensor. A channel shuffle operation is then applied to improve feature fusion between the two tensors, enhancing cross-channel feature interaction while maintaining low computational complexity. Compared to conventional \(3\times 3\) convolution, RCS reduces computational complexity by a factor of two during inference while preserving inter-channel information exchange. Furthermore, structural reparameterization enables the model to learn deep feature representations during training while optimizing memory usage in the inference stage. The result is a significant increase in processing speed and computational efficiency, which makes RCS particularly well-suited for high-performance object detection tasks.
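The two mechanisms described above, branch fusion through structural reparameterization and channel shuffle, can be illustrated with a minimal pure-Python sketch. It is a single-channel toy example rather than the authors' implementation: batch normalization and biases are omitted, and the helper names `conv3x3_same`, `fuse_branches`, `multi_branch`, and `channel_shuffle` are hypothetical names introduced only for this illustration.

```python
def conv3x3_same(image, kernel):
    """Single-channel 3x3 cross-correlation with zero padding and stride 1."""
    h, w = len(image), len(image[0])
    out = [[0.0] * w for _ in range(h)]
    for y in range(h):
        for x in range(w):
            acc = 0.0
            for dy in (-1, 0, 1):
                for dx in (-1, 0, 1):
                    yy, xx = y + dy, x + dx
                    if 0 <= yy < h and 0 <= xx < w:
                        acc += image[yy][xx] * kernel[dy + 1][dx + 1]
            out[y][x] = acc
    return out


def multi_branch(image, k3, k1):
    """Training-time form: 3x3 branch + 1x1 branch + identity branch."""
    y3 = conv3x3_same(image, k3)
    return [[y3[r][c] + k1 * image[r][c] + image[r][c]
             for c in range(len(image[0]))] for r in range(len(image))]


def fuse_branches(k3, k1):
    """Inference-time form: fold the 1x1 weight and the identity into the 3x3 centre."""
    fused = [row[:] for row in k3]
    fused[1][1] += k1 + 1.0  # 1x1 branch weight plus identity branch
    return fused


def channel_shuffle(channels, groups):
    """Interleave channels across groups (reshape, transpose, flatten)."""
    if len(channels) % groups:
        raise ValueError("channel count must be divisible by groups")
    per_group = len(channels) // groups
    return [channels[g * per_group + i]
            for i in range(per_group) for g in range(groups)]


image = [[1.0, 2.0, 3.0], [4.0, 5.0, 6.0], [7.0, 8.0, 9.0]]
k3 = [[0.0, 1.0, 0.0], [1.0, -4.0, 1.0], [0.0, 1.0, 0.0]]
k1 = 0.5

train_out = multi_branch(image, k3, k1)
infer_out = conv3x3_same(image, fuse_branches(k3, k1))
max_diff = max(abs(a - b)
               for row_a, row_b in zip(train_out, infer_out)
               for a, b in zip(row_a, row_b))

# Two halves "a" and "b" after concatenation, then shuffled with 2 groups.
shuffled = channel_shuffle(["a0", "a1", "b0", "b1"], groups=2)
```

Because convolution is linear, the fused kernel reproduces the three-branch output, so `max_diff` is zero up to floating-point error. The shuffle interleaves the processed and unprocessed halves so that later layers see channels from both.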

The One-Shot Aggregation (OSA) module was proposed to address the inefficiency of the dense connections in DenseNet. This module captures diverse features through multiple receptive fields and consolidates them only once in the final feature maps, thereby enhancing computational efficiency and feature representation32. Building on this concept, Kang et al. proposed the RCSOSA module, which integrates RCS with OSA, as shown in Fig. 5. To enhance feature reuse and improve information flow between adjacent layers, RCS modules are repeatedly stacked, with different numbers of stacked modules placed at different locations in the network. To mitigate network fragmentation, the one-shot aggregation path is designed to preserve only three feature cascades, which helps streamline computation and significantly decreases memory consumption while maintaining model efficiency. To improve feature representation across various scales, the RCSOSA + Upsampling and RCSOSA + RepVGG Downsampling procedures are introduced for multi-scale feature fusion, modeled after the Path Aggregation Network (PANet). These mechanisms align feature maps across different scales, facilitating the exchange of information between prediction feature layers. This approach improves recognition accuracy while ensuring fast inference in object recognition tasks. Furthermore, the RCSOSA module minimizes the number of output channels while maintaining a constant number of input channels, which lowers memory access costs (MAC). These optimizations contribute to improved computational efficiency, making RCSOSA a robust and efficient module for high-performance object detection.

We have introduced the RCSOSA module into the YOLOv11 algorithm for the first time, enhancing the interaction of information between channels and improving feature diversity. This integration optimizes the use of deep features, allowing high-level features to fuse effectively with low-level information, thereby increasing the robustness of object detection.

SEA attention module

Wang et al.33 proposed the Squeeze-Enhanced Axial (SEA) Attention module, which effectively balances segmentation accuracy and inference speed. Equations (1) and (2) compute the query features in Axial Attention by applying attention-weighted summation separately along the horizontal and vertical directions, thereby achieving the squeeze operation to extract global information and enhance computational efficiency. Compared to traditional transformers that compute full global attention, this method reduces the time complexity from \(O\left(H*W\right)\) to \(O\left(H+W\right)\) through a decomposed computational approach, making it suitable for high-resolution inputs such as object detection and semantic segmentation tasks.

In these equations, \(q\left(h\right)\) and \(q\left(v\right)\) represent the query features extracted along the horizontal and vertical directions, respectively. These features are computed separately to capture directional information essential for the model’s performance. The parameter \(q\) is a linear transformation of the input features, with a shape of (\(H\), \({C}_{qk}\), \(W\)) (height \(H\), channels \({C}_{qk}\), width \(W\)). \({A}_{W}\) and \({A}_{H}\) are adaptive weight matrices corresponding to the horizontal and vertical directions, respectively. These matrices dynamically modulate the attention mechanism, allowing the model to effectively capture spatial dependencies and enhance feature representation. During transformation, \(q\) undergoes tensor dimension permutation to align with the respective directional computation, followed by multiplication with \({A}_{W}\) or \({A}_{H}\) to achieve global information compression (Squeeze). Finally, the result is reshaped back to (\(W\), \({C}_{qk}\)) or (\(H\), \({C}_{qk}\)), effectively reducing computational complexity while retaining essential global information.
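The squeeze operation described above can be sketched for a single channel as a weighted sum along each axis. This is only an illustration under stated assumptions: the weight vectors stand in for \({A}_{W}\) and \({A}_{H}\), uniform averaging is assumed when no weights are supplied, and `squeeze_axes` and `token_counts` are hypothetical helper names, not part of the SEA implementation.

```python
def squeeze_axes(feature, weights_w=None, weights_h=None):
    """Squeeze an H x W map into one length-H vector and one length-W vector.

    weights_w / weights_h play the role of A_W / A_H. Uniform averaging is an
    assumption used here when they are not supplied.
    """
    h, w = len(feature), len(feature[0])
    if weights_w is None:
        weights_w = [1.0 / w] * w
    if weights_h is None:
        weights_h = [1.0 / h] * h
    # Collapse the width axis: one value per row.
    per_row = [sum(feature[r][c] * weights_w[c] for c in range(w))
               for r in range(h)]
    # Collapse the height axis: one value per column.
    per_col = [sum(feature[r][c] * weights_h[r] for r in range(h))
               for c in range(w)]
    return per_row, per_col


def token_counts(h, w):
    """Number of positions before squeezing (H*W) and after squeezing (H+W)."""
    return h * w, h + w


feature = [[1.0, 2.0, 3.0, 4.0],
           [5.0, 6.0, 7.0, 8.0]]
per_row, per_col = squeeze_axes(feature)
full_tokens, squeezed_tokens = token_counts(160, 160)
```

For a 160 × 160 feature map, the squeezed representation holds 320 positions instead of 25,600, which is where the saving in attention cost comes from.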

On the right side of Fig. 6, the schematic illustrates the proposed Squeeze-Enhanced Axial Transformer (SEA-T) layer, which comprises the SEA attention mechanism and a Feed-Forward Network (FFN). Meanwhile, the left side of Fig. 6 provides a detailed visualization of the SEA-T layer, emphasizing the integration of the Detail Enhancement Kernel and the Squeeze Axial Attention mechanism. In the diagram, the ⊕ symbol denotes element-wise addition, while “Mul” signifies multiplication. By integrating global receptive field modeling with the retention of local details, the SEA attention mechanism strikes an effective balance between processing efficiency and detection accuracy, making it especially suitable for tasks that demand both comprehensive analysis and detailed feature extraction.

This work marks the first integration of the SEA attention module into the YOLOv11 algorithm. The SEA attention module employs the Axial Attention mechanism, which has lower computational complexity than traditional global self-attention. It also captures long-range dependencies effectively, enabling the model to distinguish insulator targets from complex backgrounds. In addition, the SEA module uses the Detail Enhancement mechanism to strengthen local feature expression while retaining global information, which increases sensitivity to subtle defects on the insulator surface, particularly in small target detection. Finally, to optimize feature flow, SEA employs a Lightweight Gating Unit (LGU) that dynamically regulates the information flow of different channels during multi-scale feature fusion, enabling the model to focus more effectively on the insulator region and to better recognize objects under occlusion, blur, and varying lighting conditions.

Optimization of detection layer structure

Located in the shallow layers of the YOLOv11 network, the P2 detection head plays a crucial role in extracting detailed feature information. In object detection applications, small objects typically exhibit low resolution and limited distinguishing features, so the network must preserve essential fine details when extracting low-level features. This ensures enhanced recognition accuracy, particularly for small-scale objects that are otherwise challenging to detect. Traditional object detection approaches primarily prioritize high-level feature abstraction, which is effective for detecting larger objects but often insufficient for capturing the finer details needed to accurately detect small targets, leading to suboptimal detection performance. Functioning as a low-level feature extractor, the P2 detection head processes high-resolution feature maps, preserving crucial local details such as edges, textures, and shapes, which are fundamental to accurately detecting small targets. By integrating the P2 detection head, YOLOv11 enhances its ability to capture and recognize small objects at earlier stages of the network, leading to improved detection precision while effectively reducing false positives. This architectural refinement significantly strengthens the model’s capability in handling fine-grained object detection tasks.

With the P2 head added, the detection heads (P2, P3, P4, and P5) are designed to facilitate multi-scale object detection, each operating at a distinct feature map resolution to enhance detection accuracy across varying object sizes. P2, positioned in the lower layers, captures fine-grained details and is optimized for small object detection, corresponding to approximately 1/4 of the input image resolution. P3 strikes a balance between fine details and higher-level abstract features, making it particularly effective for detecting small to medium-sized objects at 1/8 resolution. P4 focuses on extracting more abstract features, catering to medium-sized objects at 1/16 resolution, while P5, situated in the deeper layers, specializes in detecting large objects by leveraging high-level semantic features at 1/32 resolution. This hierarchical multi-scale detection framework significantly enhances the robustness and accuracy of YOLOv11, ensuring effective object recognition across a wide range of scales.
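As a worked example of these resolution ratios, the following sketch computes the feature map size that each head sees for the 640 × 640 input used in the experiments of this paper. The stride values follow directly from the 1/4, 1/8, 1/16, and 1/32 ratios stated above; the names `HEAD_STRIDES` and `head_grid_sizes` are hypothetical.

```python
# Downsampling factor of each detection head relative to the input image.
HEAD_STRIDES = {"P2": 4, "P3": 8, "P4": 16, "P5": 32}


def head_grid_sizes(input_size, strides=None):
    """Return the side length of each head's square feature map."""
    strides = HEAD_STRIDES if strides is None else strides
    return {name: input_size // stride for name, stride in strides.items()}


grids = head_grid_sizes(640)
# Number of spatial positions each head predicts over.
cells = {name: side * side for name, side in grids.items()}
```

At this input size the P2 head predicts over a 160 × 160 grid, four times as many positions as P3, which is consistent with the slight increase in computational load noted for the added head.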

Integration strategy and overall improvements

In summary, the RSP-YOLOv11n model integrates the RCSOSA modules into the backbone to enhance channel interaction and multi-scale feature fusion, effectively improving feature representation for small and ambiguous targets. Meanwhile, the SEA attention modules embedded in the neck refine fused features by capturing long-range dependencies and enhancing local details, thereby improving defect discrimination in complex backgrounds. The addition of the P2 detection head further strengthens the model’s sensitivity to small targets by leveraging high-resolution shallow features. Together, these components form a cohesive architecture that significantly boosts the model’s robustness, accuracy, and detection performance across diverse and challenging scenarios.

Experimental process

Self-built insulator dataset

The quality and variety of the training samples determine the model’s efficacy, and a robust model cannot be achieved without high-quality training data. To create a comprehensive dataset, this research processes insulator photos taken from several transmission lines, an open-source insulator image collection, and drone-acquired data from power inspection companies. The dataset includes images of insulators in flashover, fracture, and normal conditions. As shown in Fig. 7, the self-constructed insulator dataset (ZB) consists of 4192 images in total. The dataset was divided into training, validation, and test subsets in a 7:2:1 ratio to facilitate the evaluation of the network model. Since each image can contain several targets, a total of 28,163 targets were annotated across all subsets. Specifically, the training set includes 19,791 targets, the validation set contains 5,514 targets, and the test set holds 2,858 targets. This distribution ensures a comprehensive assessment of the model’s performance across different data partitions, enhancing the reliability of the evaluation results.
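A 7:2:1 partition of the 4192 images can be sketched as follows. The paper does not state the per-subset image counts or the splitting procedure, so the seeded shuffle and the rounding rule (floor for training and validation, remainder to test) are assumptions, and `split_indices` is a hypothetical helper.

```python
import random


def split_indices(n_images, seed=0):
    """Shuffle image indices and split them 7:2:1 into train/val/test."""
    indices = list(range(n_images))
    random.Random(seed).shuffle(indices)  # seeded for reproducibility
    n_train = n_images * 7 // 10
    n_val = n_images * 2 // 10
    train = indices[:n_train]
    val = indices[n_train:n_train + n_val]
    test = indices[n_train + n_val:]  # remainder goes to the test subset
    return train, val, test


train, val, test = split_indices(4192)
sizes = (len(train), len(val), len(test))

# Consistency check on the annotated target counts reported in the text.
annotated_targets = 19791 + 5514 + 2858
```

Under these assumptions the subsets contain 2934, 838, and 420 images, and the three reported target counts sum to the stated total of 28,163.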

Experimental details

The proposed model was developed and validated using the PyTorch deep learning framework. For optimization, Stochastic Gradient Descent (SGD) was selected and applied consistently throughout the experiments. The training process spanned a total of 200 epochs, with a batch size set to 16 and an input image resolution of 640 × 640. As shown in Table 1, all experiments were carried out on the same computing platform, ensuring uniformity in training procedures and environmental factors across all tests to maintain consistency and accuracy in the evaluation.

Performance metrics

This paper evaluates the model’s detection performance using a variety of metrics, including Precision, Recall, F1-score, mAP@0.5, and mAP@0.5:0.95. Precision measures the proportion of true positive predictions among all positive predictions, whereas Recall indicates the ability to detect all relevant instances within the dataset. The F1-score is the harmonic mean of Precision and Recall, offering a balanced evaluation of both metrics. The mAP@0.5 metric computes the mean Average Precision at an Intersection over Union (IoU) threshold of 0.5, while mAP@0.5:0.95 averages the mAP over ten IoU thresholds from 0.5 to 0.95 in steps of 0.05, providing a comprehensive and reliable assessment of model performance at varying localization strictness. The formulas for computing the aforementioned metrics are provided as follows:

In the calculations provided, \(TP\) refers to the number of true positives, representing the correctly identified positive samples. \(FP\) indicates the count of false positives, or incorrectly predicted positive instances, while \(FN\) stands for false negatives, which are relevant instances that were not detected. The smoothed Precision-Recall (PR) curve is denoted as \(P\left(r\right)\), capturing the relationship between precision and recall across various thresholds. Additionally, \(C\) represents the total number of categories within the dataset, and \({P}_{i}\) is the precision value for the i-th category, reflecting the accuracy of positive predictions for each specific class.
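The metrics can be sketched in code, assuming the standard definitions \(P = TP/(TP+FP)\), \(R = TP/(TP+FN)\), \(F1 = 2PR/(P+R)\), \(AP = \int_{0}^{1} P\left(r\right)dr\), and mAP as the mean of AP over the \(C\) categories. The trapezoidal integration used for AP is an assumption made for brevity; evaluation toolkits typically use an interpolated PR curve instead. All function names are hypothetical.

```python
def precision(tp, fp):
    """Share of predicted positives that are correct: TP / (TP + FP)."""
    return tp / (tp + fp) if (tp + fp) else 0.0


def recall(tp, fn):
    """Share of actual positives that are found: TP / (TP + FN)."""
    return tp / (tp + fn) if (tp + fn) else 0.0


def f1_score(p, r):
    """Harmonic mean of precision and recall."""
    return 2 * p * r / (p + r) if (p + r) else 0.0


def average_precision(recalls, precisions):
    """Area under a PR curve by trapezoidal rule (recalls must be ascending)."""
    area = 0.0
    for i in range(1, len(recalls)):
        width = recalls[i] - recalls[i - 1]
        area += width * (precisions[i] + precisions[i - 1]) / 2
    return area


def mean_average_precision(ap_per_class):
    """Mean of the per-category AP values."""
    return sum(ap_per_class) / len(ap_per_class)


# F1 recomputed from the precision and recall reported in the abstract.
baseline_f1 = round(f1_score(89.9, 82.6), 1)
proposed_f1 = round(f1_score(92.3, 85.9), 1)
```

Recomputing F1 from the reported precision and recall reproduces the reported F1-scores of 86.1 for YOLOv11n and 89.0 for RSP-YOLOv11n.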

Results

Ablation experiment

To evaluate the effect of each modification and verify the performance of the individual components in the improved YOLOv11n model, a series of ablation experiments were conducted using the Insulators Dataset (ZB). To maintain consistency and ensure a fair comparison, identical parameters were applied across all experimental setups. The results of these experiments are presented in Table 2, where a "✓" denotes the inclusion of a specific module, and a "✘" indicates its exclusion. This approach allows for a clear evaluation of how each enhancement contributes to the overall model performance.

Starting from the baseline YOLOv11n model, the RCSOSA, SEA, and P2 detection head modules were first integrated and evaluated individually. As presented in Table 2, the proposed RSP-YOLOv11n model, which incorporates all three modules, improves clearly on the baseline: P, R, F1, mAP50, and mAP50:95 increase by 2.4, 3.3, 2.9, 2.5, and 2.8 percentage points, respectively. Among the single-module variants (YOLOv11n + RCSOSA, YOLOv11n + SEA, and YOLOv11n + P2 head), the best improvements in P, R, F1, mAP50, and mAP50:95 are 1.8, 2.1, 1.2, 1.7, and 1.7 percentage points, respectively. From the data in Table 2, we draw the following observations. The RCSOSA module enhances feature-level parallelization, thereby improving feature extraction efficiency and enriching the representation of feature information. The SEA attention mechanism integrates axial and channel attention, effectively strengthening local detail modeling; even at low computational complexity, it can capture long-range dependencies, which improves target identification accuracy. The P2 detection head improves the network’s ability to detect small insulator targets by capturing fine-grained features at the high-resolution stage of the network. This enables the model to better detect and localize small-scale targets, which are often difficult to resolve at lower-resolution stages.
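The reported gains over the baseline can be reproduced from the headline values given in the abstract. The short sketch below does this arithmetic; the dictionary keys and function names are illustrative only, and the relative gain is an additional view that is not reported in Table 2.

```python
# Headline values (in percent) as reported in the abstract.
baseline = {"P": 89.9, "R": 82.6, "F1": 86.1, "mAP50": 88.7, "mAP50:95": 58.9}
proposed = {"P": 92.3, "R": 85.9, "F1": 89.0, "mAP50": 91.2, "mAP50:95": 61.7}


def absolute_gain(base: dict, new: dict) -> dict:
    """Difference in percentage points, rounded to one decimal place."""
    return {k: round(new[k] - base[k], 1) for k in base}


def relative_gain(base: dict, new: dict) -> dict:
    """Gain expressed as a percentage of the baseline value."""
    return {k: round(100 * (new[k] - base[k]) / base[k], 2) for k in base}


gains = absolute_gain(baseline, proposed)
print(gains)  # {'P': 2.4, 'R': 3.3, 'F1': 2.9, 'mAP50': 2.5, 'mAP50:95': 2.8}
print(relative_gain(baseline, proposed))
```

The distinction matters when reading the tables: a gain of 2.4 percentage points in precision is a smaller number when expressed relative to the baseline value of 89.9%.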

The outcomes of the ablation experiments, including the recall curve, precision curve, mAP@0.5 curve, and mAP@0.5–0.95 curve, are presented in Fig. 8. These results demonstrate that the enhanced RSP-YOLOv11n model outperforms the baseline model across all performance metrics, reflecting substantial improvements. Specifically, the enhanced model not only shows faster convergence during the training process but also achieves superior accuracy in both recall and precision. When compared to other existing models, the proposed RSP-YOLOv11n excels in both convergence speed and overall detection accuracy, underscoring its advanced capabilities in tackling the insulator detection challenge.

Visual analysis is an important tool for intuitively comparing the detection results of different algorithms. It uses color changes to show how much attention the network pays to different regions of the image, making the results easy to interpret. In addition, by highlighting critical areas, it helps to identify potential problems or anomalies and supports targeted inspection of key targets. To evaluate how different algorithms detect targets and prioritize important information, we performed a visual analysis using feature heatmaps generated by the Grad-CAM algorithm, as shown in Fig. 9.

Visual analysis of the heatmaps for insulator defect images on six different backgrounds revealed several key findings. The proposed RSP-YOLOv11n algorithm localized defects better than the baseline YOLOv11n algorithm, focusing more closely on insulator defect locations across the different backgrounds. RSP-YOLOv11n was also more accurate in localizing small targets, which supports reliable defect identification. Overall, the algorithm improves localization and concentrates attention more tightly on target details. These results highlight the advantages of RSP-YOLOv11n in object detection and indicate that it can meet detection requirements in a variety of scenarios.

To further validate the superiority of RSP-YOLOv11n, a comparative analysis was conducted on the detection results from six different models (A, B, C, D, E, and F). Five images were randomly selected from the insulator dataset (ZB) as test samples, and the detection results obtained from each of the six networks are presented in Fig. 10.

As shown in Fig. 10, the detection results of six different models are compared. In (A), the YOLOv11n model is presented, which demonstrates relatively high detection accuracy but is affected by noticeable false detections. (B) displays the results for the YOLOv11n + RCSOSA model, which achieves a reduction in false detections compared to YOLOv11n, though overall detection accuracy slightly decreases. In (C), the YOLOv11n + SEA model shows a significant improvement in detection accuracy across all defect types when compared to the previous two models. (D) presents the YOLOv11n + P2 head model, which excels in detecting small targets with higher precision. (E) illustrates the YOLOv11n + RCSOSA + SEA model, which outperforms the previous four models in detection accuracy, with no false detections. Finally, (F) showcases the RSP-YOLOv11n model, which excels in addressing challenges such as background similarity, small target detection, and multiple target scenarios, outperforming all other models. This visual validation confirms that the integrated modules in RSP-YOLOv11n contribute synergistically to both precision and robustness, making it a superior solution for complex UAV-based inspection environments.

Figure 11 shows the confusion matrix of RSP-YOLOv11n on the Insulators Dataset (ZB). Each element \({C}_{i j}\) of the confusion matrix is the number of samples that actually belong to category \(i\) but are predicted by the model as category \(j\). This analysis provides a quantitative view of how accurately the model detects targets of each category. A minimal number of insulator targets are missed (i.e., predicted as “background”), and a negligible number of background regions are misidentified as insulators. Importantly, no defect targets are misclassified, and the number of missed defect targets is extremely low. This outcome is significant for insulator inspection projects, because erroneous defect detections carry a higher risk than confusion between background and insulators.
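Under the convention stated above (row \(i\) is the true category, column \(j\) is the predicted category), per-category precision and recall follow from the column and row sums of the matrix. The sketch below illustrates this; the counts in `example` are hypothetical and are not the values shown in Fig. 11.

```python
from typing import List, Sequence, Tuple


def per_class_precision_recall(
    cm: Sequence[Sequence[int]],
) -> Tuple[List[float], List[float]]:
    """cm[i][j] = number of samples of true category i predicted as category j."""
    n = len(cm)
    precisions, recalls = [], []
    for k in range(n):
        tp = cm[k][k]
        predicted_k = sum(cm[i][k] for i in range(n))  # column sum: predicted as k
        actual_k = sum(cm[k])                          # row sum: truly of category k
        precisions.append(tp / predicted_k if predicted_k else 0.0)
        recalls.append(tp / actual_k if actual_k else 0.0)
    return precisions, recalls


# Hypothetical counts, category order: insulator, defect, background.
example = [
    [95, 0, 5],   # 5 insulators missed (predicted as background)
    [0, 48, 2],   # 2 defects missed, none confused with insulators
    [3, 0, 0],    # 3 background regions misidentified as insulators
]

precisions, recalls = per_class_precision_recall(example)
```

In this hypothetical matrix the defect column contains no off-diagonal entries, which corresponds to the situation described above in which no other category is predicted as a defect.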

Comparison with other models on the Insulators Dataset (ZB)

To verify the effectiveness of the proposed RSP-YOLOv11n algorithm, it was compared with YOLOv8n, YOLOv10n, YOLOv11n, SSD, DINO, and RT-DETR. All experiments were performed on the same Insulators Dataset (ZB) under identical conditions, with the results presented in Table 3.

As shown in Table 3, the proposed RSP-YOLOv11n model outperforms YOLOv8n, YOLOv10n, and YOLOv11n on all evaluation metrics, giving the best results among the YOLO series algorithms. Compared with SSD, its precision is marginally lower, but its recall, F1, mAP50, and mAP50:95 are higher by 21.5, 12.2, 9.5, and 15.9 percentage points, respectively. Although DINO and RT-DETR are among the more advanced object detection algorithms of recent years and perform strongly across the metrics, RSP-YOLOv11n remains highly competitive overall. Specifically, it achieves an F1-score of 89.0% and an mAP50 of 91.2%, outperforming DINO (87.3% and 88.5%) and approaching RT-DETR (91.2% and 92.6%). Its mAP50:95 (61.7%) is slightly lower than that of RT-DETR (63.2%) but surpasses that of DINO (59.5%), suggesting better small object detection and overall regression accuracy than DINO. Figure 12 presents the prediction results of the seven algorithms summarized in Table 3. These results indicate that RSP-YOLOv11n achieves detection accuracy comparable to that of Transformer-based models, and higher than that of DINO, while maintaining a smaller model size and higher inference efficiency, which makes it practical for real-world deployment.

Robustness testing analysis

In practical UAV-based detection tasks, complex environmental factors such as weather variations and lighting differences can significantly affect image quality, so robustness testing of the model is essential. Starting from the self-constructed dataset, this study used image processing techniques to synthesize five types of image effects: Synthetic Brightness (Fig. 14, Column A), Synthetic Saturation (Fig. 14, Column B), Synthetic Fog (Fig. 14, Column C), Synthetic Rain (Fig. 14, Column D), and Synthetic Snow (Fig. 14, Column E). The augmented dataset contains a total of 25,152 images. To further evaluate the performance of the proposed RSP-YOLOv11n algorithm, this experiment compared it with several mainstream object detection algorithms, including CO-DETR, RTMDet, DINO, and RT-DETR. In addition to the conventional evaluation metrics, inference speed (FPS) was included as a key indicator. The detailed results are presented in Table 4.
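The text does not specify how the synthetic effects were produced. As a rough illustration only, the sketch below shows one common way to implement three of them on RGB pixels: brightness scaling, saturation adjustment by blending with the gray level, and fog by blending with a white airlight. All function names and parameters are hypothetical, and rain and snow synthesis are not covered.

```python
from typing import Callable, List, Tuple

Pixel = Tuple[int, int, int]   # (R, G, B), each in 0..255
Image = List[List[Pixel]]      # rows of pixels


def _clip(value: float) -> int:
    """Round to the nearest integer and clamp to the valid 8-bit range."""
    return max(0, min(255, int(round(value))))


def adjust_brightness(px: Pixel, factor: float) -> Pixel:
    """factor > 1 brightens, factor < 1 darkens."""
    return tuple(_clip(c * factor) for c in px)


def adjust_saturation(px: Pixel, factor: float) -> Pixel:
    """factor = 0 gives grayscale, factor = 1 leaves the pixel unchanged."""
    gray = 0.299 * px[0] + 0.587 * px[1] + 0.114 * px[2]
    return tuple(_clip(gray + factor * (c - gray)) for c in px)


def add_fog(px: Pixel, transmission: float, airlight: int = 255) -> Pixel:
    """Simple haze model: I = J * t + A * (1 - t), with t in [0, 1]."""
    return tuple(_clip(c * transmission + airlight * (1 - transmission)) for c in px)


def apply(image: Image, fn: Callable[..., Pixel], *args: float) -> Image:
    """Apply a per-pixel effect to every pixel of an image."""
    return [[fn(px, *args) for px in row] for row in image]


# Tiny 1 x 2 example image with hypothetical pixel values.
image = [[(100, 150, 200), (10, 20, 30)]]
foggy = apply(image, add_fog, 0.6)
brighter = apply(image, adjust_brightness, 1.5)
```

Because the bounding-box annotations are unchanged by these photometric effects, the original labels can be reused for the synthesized images.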

As shown in Table 4, after augmenting the dataset with various lighting conditions and weather scenarios, the detection performance of most models declined to some extent. Nevertheless, the proposed RSP-YOLOv11n algorithm demonstrates competitive performance. Specifically, although its mAP50 and mAP50:95 scores (89.3% and 61.3%, respectively) are slightly lower than those of RT-DETR (92.5% and 62.2%), RSP-YOLOv11n exhibits clear advantages in inference speed, achieving 78 FPS compared to RT-DETR’s 60 FPS. This substantial improvement in speed makes RSP-YOLOv11n more suitable for deployment on edge devices with limited computational resources, such as UAV platforms requiring real-time processing. Furthermore, the proposed algorithm maintains high detection precision (92.2%) and F1-score (87.9%), indicating strong robustness and practical value in complex outdoor environments.
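To put the speed difference in concrete terms, the reported frame rates can be converted into an approximate per-frame time budget. The sketch below assumes frames are processed one at a time, so that latency is simply the reciprocal of FPS; the function names are illustrative.

```python
def latency_ms(fps: float) -> float:
    """Approximate time per frame in milliseconds, assuming one frame at a time."""
    return 1000.0 / fps


def speedup(fps_a: float, fps_b: float) -> float:
    """How many times faster model A is than model B in frames per second."""
    return fps_a / fps_b


rsp_fps, rtdetr_fps = 78.0, 60.0   # values reported in Table 4

print(round(latency_ms(rsp_fps), 2))     # 12.82
print(round(latency_ms(rtdetr_fps), 2))  # 16.67
print(round(speedup(rsp_fps, rtdetr_fps), 2))  # 1.3
```

Under this assumption, RSP-YOLOv11n processes a frame in roughly 12.8 ms against roughly 16.7 ms for RT-DETR, a throughput about 1.3 times higher. Actual on-board figures would depend on the embedded hardware used.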

The visualization results, as shown in Fig. 13, further demonstrate that the RSP-YOLOv11n model exhibits certain advantages in detection accuracy, robustness, and generalization ability. It is capable of delivering more stable and accurate detection outcomes in practical applications and is better suited for UAV-based insulator defect detection scenarios.

Comparison with other models on public insulator datasets

The mainstream publicly available insulator datasets currently include the China Power Line Insulator Dataset (CPLID), the Insulator Defect Image Dataset (IDID), and the Synthetic Foggy Insulator Dataset (SFID), all of which have been widely used in insulator defect detection research. The CPLID dataset [34] contains 600 normal insulator images captured by UAVs and 248 synthetic defective insulator images, featuring diverse aerial backgrounds such as urban areas, rivers, fields, and mountains. The defective images are generated through segmentation and affine transformations to simulate various defect scenarios. The IDID dataset [35] comprises 1,596 high-resolution images of insulator chains, categorized into flashover damaged insulators, broken insulators, and intact insulators, providing detailed real defect samples. The SFID dataset [36] is an augmented dataset based on UPID, containing 13,718 images enhanced with random brightness and fog effects to simulate challenging environmental conditions. Together, these datasets offer comprehensive benchmarks for evaluating insulator defect detection algorithms under varied scenarios. To further validate the detection performance of RSP-YOLOv11n, we compared it with other mainstream object detection algorithms on these three public datasets, as shown in Table 5.

The results on the three public datasets are as follows. On CPLID, which contains both authentic UAV-captured normal insulator images and synthetically generated defective images against diverse backgrounds, RSP-YOLOv11n achieves a precision of 96.9%, recall of 96.1%, F1-score of 96.5%, and mAP50 of 98.4%, outperforming several state-of-the-art methods including YOLOv7 and ID-YOLO. The IDID dataset, consisting of high-resolution images of real insulator defects, poses greater detection challenges; here RSP-YOLOv11n attains an F1-score of 88.6% and mAP50 of 93.5%, closely matching or exceeding other advanced models such as RT-DETR and YOLOv8 variants. On SFID, which simulates foggy environmental conditions, RSP-YOLOv11n achieves an F1-score of 99.0% and mAP50 of 99.4%, demonstrating excellent robustness in adverse weather scenarios. Across all datasets, the algorithm maintains a high inference speed (up to 74.1 FPS on SFID), balancing detection accuracy and real-time applicability. These results indicate that RSP-YOLOv11n combines strong detection capabilities with practical deployment potential, particularly in complex aerial inspection environments requiring reliable and efficient insulator defect detection.

Figure 14 presents the visualization of detection results of the RSP-YOLOv11n algorithm on three publicly available insulator datasets: CPLID, IDID, and SFID. It can be clearly seen from the figure that RSP-YOLOv11n demonstrates certain advantages in detecting small targets and handling complex backgrounds.

Discussion

The RSP-YOLOv11n algorithm presented in this paper shows clear performance improvements in detecting insulator defects through drone-based inspections. Its higher detection accuracy and efficiency make it a promising tool for real-time monitoring and assessment of electrical infrastructure. By integrating the RCSOSA modules, SEA attention mechanism, and P2 detection head, RSP-YOLOv11n attains high accuracy on the self-built insulator dataset and generalizes well to the public CPLID, IDID, and SFID datasets. Despite this performance, the model has several limitations. First, the existing dataset primarily comprises images captured under clear weather conditions, so it does not adequately reflect real-world deployments in more challenging or adverse weather, which may limit the model’s generalization in broader application scenarios. Second, although the model’s recognition results are good, the number of model parameters is relatively large and needs further optimization to meet the stricter computational and storage constraints of devices with more limited resources. Third, although drones are increasingly used for image acquisition in insulator surface defect detection, the direct deployment of detection models on drones remains insufficiently explored. Several critical factors that could substantially affect both the performance and operational efficiency of drones, including battery life, image processing speed, and signal transmission, have not been comprehensively investigated. These factors directly influence flight time, model inference speed, and overall system reliability, and are therefore essential for effective operation in real-world conditions.
Further research into these aspects is needed to optimize the deployment of detection models on drones and to improve their dependability in the field. Future studies will focus on several areas to overcome these obstacles and improve the model’s practical applicability. First, to improve the model’s robustness and adaptability, the dataset will be enlarged to include failure types found in complex situations. Second, the algorithm will be further optimized to maintain or increase detection accuracy while reducing complexity. Third, efforts will continue to optimize the UAV’s embedded system, focusing on controlling power consumption, maximizing hardware resource utilization, and achieving an optimal balance between weight and battery life. Finally, comprehensive testing will be conducted across diverse domains, such as highway defect detection, industrial manufacturing defect identification, and forest fire monitoring, to assess the adaptability of the RSP-YOLOv11n model to various tasks.

Conclusions

To enhance the algorithm’s ability to process information in complex backgrounds, we propose the RSP-YOLOv11n algorithm, specifically designed for detecting insulator defects in UAV images. Firstly, the RCSOSA modules effectively integrate both channel and spatial information, thereby improving the model’s feature extraction capability and allowing it to better capture relevant details. Secondly, the SEA attention mechanism is incorporated to sharpen the model’s focus on critical information during the feature extraction and fusion processes, ensuring that the most relevant features are emphasized. Lastly, the inclusion of the P2 detection head optimizes the model’s performance in detecting small targets, improving detection accuracy even in challenging scenarios with limited resolution. Together, these enhancements enable the RSP-YOLOv11n algorithm to achieve superior performance in insulator defect detection within complex and varied UAV-captured environments.

Experimental results indicate that, relative to the baseline YOLOv11n, RSP-YOLOv11n improves Precision (P) from 89.9% to 92.3%, Recall (R) from 82.6% to 85.9%, F1-score from 86.1% to 89.0%, mAP50 from 88.7% to 91.2%, and mAP50:95 from 58.9% to 61.7%. Future work will concentrate on a more lightweight design, using pruning and quantization to accelerate detection without compromising accuracy. Edge deployment strategies will also be investigated by adapting the network for integration with unmanned aerial vehicles (UAVs), with the goal of real-time and efficient power line inspection.

Furthermore, practical deployment scenarios were considered to evaluate the algorithm’s suitability for edge computing platforms, such as UAVs equipped with embedded devices. Although RSP-YOLOv11n is lightweight, constraints such as limited onboard computational power, battery life, and payload capacity must be addressed for seamless integration. Future research will focus on network pruning, quantization, and hardware-aware optimization to reduce inference latency and energy consumption, thereby facilitating real-time deployment on UAVs under field conditions. In addition, although the model has shown good generalization under normal conditions, extreme scenarios such as low illumination, partial occlusion, and adverse weather (e.g., fog, rain, and snow) remain challenging. Future work will involve expanding the training dataset with adverse-condition samples, introducing data augmentation strategies, and integrating adaptive enhancement modules to improve the model’s robustness and detection reliability under such extreme environments.

Data availability

The datasets used and/or analyzed during the current study are available from the corresponding author on reasonable request.

References

National Energy Administration of China. Official website of the NEA. https://www.nea.gov.cn/.

Alhassan, A. B., Zhang, X., Shen, H. & Xu, H. Power transmission line inspection robots: A review, trends and challenges for future research. Int. j. electr. power energy Sys. 118, 105862 (2020). https://doi.org/10.1016/j.ijepes.2020.105862.

El-Hag, A. Application of machine learning in outdoor insulators condition monitoring and diagnostics. IEEE instrum. meas. mag. 24 (2), 101–108 (2021). https://doi.org/10.1109/MIM.2021.9400959.

Liu, Y., Liu, D., Huang, X. & Li, C. Insulator defect detection with deep learning: A survey. IET Gener. Transm. Distrib. 17 (16), 3541–3762 (2023). https://doi.org/10.1049/gtd2.12916.

Luo, Y., Yu, X., Yang, D. & Zhou, B. A survey of intelligent transmission line inspection based on unmanned aerial vehicle. Art. Int. Rev. 56, 173–201 (2023). https://doi.org/10.1007/s10462-022-10189-2.

Xia, H., Yang, B., Li, Y. & Wang, B. An improved CenterNet model for insulator defect detection using aerial imagery. Sensors. 22 (8), 2850 (2022). https://doi.org/10.3390/s22082850.

Yu, Y., Cao, H., Wang, Z., Li, K. & Xie, S. Texture-and-shape based active contour model for insulator segmentation. IEEE Access. 7, 78706–78714 (2019). https://doi.org/10.1109/ACCESS.2019.2922257.

Tan, P., Li, X., Xu, J. et al. Catenary insulator defect detection based on contour features and gray similarity matching. J. Zhejiang Univ., Sci. A. 21 (1), 64–73 (2020). https://doi.org/10.1631/jzus.A1900341.

Wang, B., Dong, M., Ren, M. et al. Automatic fault diagnosis of infrared insulator images based on image instance segmentation and temperature analysis. IEEE Trans. instrum. Measure. 69 (8), 5345–5355 (2020). https://doi.org/10.1109/TIM.2020.2965635.

Zheng, H., Sun, Y., Liu, X. et al. Infrared image detection of substation insulators using an improved fusion single shot multibox detector. IEEE Trans. Power Delivery. 36 (6), 3351–3359 (2020). https://doi.org/10.1109/TPWRD.2020.3038880.

Taghizadeh, M. & Chalechale, A. A comprehensive and systematic review on classical and deep learning based region proposal algorithms. Expert Syst. Appl. 189, 116105 (2022). https://doi.org/10.1016/j.eswa.2021.116105.

Girshick, R., Donahue, J., Darrell, T. & Malik, J. Region-based convolutional networks for accurate object detection and segmentation. IEEE Trans. Pattern Anal. Mach. Intell. 38 (1), 142–158 (2015). https://doi.org/10.1109/TPAMI.2015.2437384.

Girshick, R. Fast R-CNN. In Proceedings of the IEEE International Conference on Computer Vision (ICCV), 1440–1448 (2015).

Ren, S., He, K., Girshick, R. & Sun, J. Faster R-CNN: Towards real-time object detection with region proposal networks. IEEE Trans. Pattern Anal. Mach. Intell. 39(6), 1137–1149 (2017). https://doi.org/10.1109/TPAMI.2016.2577031.

Hao, K., Chen, G., Zhao, L. et al. An insulator defect detection model in aerial images based on multiscale feature pyramid network. IEEE Trans. Instrum. Measure. 71, 3522412 (2022). https://doi.org/10.1109/TIM.2022.3200861.

Tan, P., Li, X., Ding, J. et al. Mask R-CNN and multifeature clustering model for catenary insulator recognition and defect detection. J. Zhejiang Univ. Sci. A. 23, 745–756 (2022). https://doi.org/10.1631/jzus.A2100494.

Bao, W., Du, X., Wang, N., Yuan, M. & Yang, X. A defect detection method based on BC-YOLO for transmission line components in UAV remote sensing images. Remote Sen. 14 (20), 5176 (2022). https://doi.org/10.3390/rs14205176.

Zeng, B., Zhou, Z., Zhou, Y. et al. An insulator target detection algorithm based on improved YOLOv5. Sci. Rep. 15, 496 (2025). https://doi.org/10.1038/s41598-024-84623-6.

Hong, X., Wang, F. & Ma, J. Improved YOLOv7 model for insulator surface defect detection. In 2022 IEEE 5th Advanced Information Management, Communicates, Electronic and Automation Control Conference (IMCEC), 5, 1667–1672 (2022). https://doi.org/10.1109/IMCEC55388.2022.10019873.

Deng, S., Chen, L. & He, Y. Insulator defect detection from aerial images in adverse weather conditions. Appl. Intell. 55, 365 (2025). https://doi.org/10.1007/s10489-025-06280-0.

He, M., Qin, L., Deng, X. & Liu, K. MFI-YOLO: multi-fault insulator detection based on an improved YOLOv8. IEEE Trans. Power Del. 39 (1), 168–179 (2023). https://doi.org/10.1109/TPWRD.2023.3328178.

Tan, L., Liu, S., Gao, J. et al. Enhanced self-checkout system for retail based on improved YOLOv10. J. Imag. 10 (10), 248 (2024). https://doi.org/10.3390/jimaging10100248.

Li, Y., Zhu, C., Zhang, Q., Zhang, J. & Wang, G. IF-YOLO: An efficient and accurate detection algorithm for insulator faults in transmission lines. IEEE Access. 12, 167388–167403 (2024). https://doi.org/10.1109/ACCESS.2024.3496514.

Zhang, Q., Zhang, J., Li, Y., Zhu, C. & Wang, G. IL-YOLO: an efficient detection algorithm for insulator defects in complex backgrounds of transmission lines. IEEE Access. 12, 14532–14546 (2024). https://doi.org/10.1109/ACCESS.2024.3358205.

Gao, Y., Xin, Y., Yang, H. & Wang, Y. A lightweight anti-unmanned aerial vehicle detection method based on improved YOLOv11. Drones. 9(1), 11 (2024). https://doi.org/10.3390/drones9010011.

Liu, W., Anguelov, D., Erhan, D. et al. SSD: Single shot multibox detector. In Comput. Vis.–ECCV 2016, 21–37 (2016). https://doi.org/10.1007/978-3-319-46448-0_2.

Li, Y., Wei, S., Liu, X. et al. An improved insulator and spacer detection algorithm based on dual network and SSD. IEICE Trans. Infor. Sys. 106 (5), 662–672 (2023). https://doi.org/10.1587/transinf.2022DLP0062.

Chen, Q., Xiong, Q., Huang, H. et al. An efficient and lightweight surface defect detection method for micro-motor commutators in complex industrial scenarios based on the CLS-YOLO network. Electronics. 14(3), 505 (2025). https://doi.org/10.3390/electronics14030505.

Wang, Z., Su, Y., Kang, F. et al. PC-YOLO11s: a lightweight and effective feature extraction method for small target image detection. Sensors. 25(2), 348 (2025). https://doi.org/10.3390/s25020348.

Khanam, R. & Hussain, M. YOLOv11: An overview of the key architectural enhancements. arXiv:2410.17725 (2024). https://doi.org/10.48550/arXiv.2410.17725.

Kang, M., Ting, C. M., Ting, F. F. et al. RCS-YOLO: A fast and high-accuracy object detector for brain tumor detection. In Medical Image Computing and Computer Assisted Intervention–MICCAI 2023. 600–610 (2023). https://doi.org/10.1007/978-3-031-43901-8_57.

Lee, Y., Hwang, J., Lee, S. et al. An energy and GPU-computation efficient backbone network for real-time object detection. In Proceedings of the IEEE/CVF Conference on Computer Vision and Pattern Recognition (CVPR) Workshops, (2019).

Wan, Q., Huang, Z., Lu, J. et al. SeaFormer++: Squeeze-enhanced axial transformer for mobile visual recognition. Inter. J. Comp. Vision. 133, 3645–3666 (2025). https://doi.org/10.1007/s11263-025-02345-2.

Tao, X., Zhang, D., Wang, Z., Liu, X., Zhang, H. & Xu, D. Detection of power line insulator defects using aerial images analyzed with convolutional neural networks. IEEE Trans. Syst. Man Cybernetics: Syst. 50 (4), 1486–1498 (2020). https://doi.org/10.1109/TSMC.2018.2871750.

Lewis, D. & Kulkarni, P. Insulator defect detection. IEEE Dataport (2021). https://doi.org/10.21227/vkdw-x769.

Zhang, Z. D., Zhang, B., Lan, Z. C. et al. FINet: An insulator dataset and detection benchmark based on synthetic fog and improved YOLOv5. IEEE Trans. Instrum. Measure. 71, 1–8 (2022). https://doi.org/10.1109/TIM.2022.3194909.

Zhang, Q., Zhang, J., Li, Y. et al. ID-YOLO: A multi-module optimized algorithm for insulator defect detection in power transmission lines. IEEE Trans. Instrum. Measure. 74, 1–11 (2025). https://doi.org/10.1109/TIM.2025.3527530.

Zhang, T., Zhang, Y., Xin, M. et al. A lightweight network for small insulator and defect detection using UAV imaging based on improved YOLOv5. Sensors. 23 (11), 5249 (2023). https://doi.org/10.3390/s23115249.

Yu, Z., Lei, Y., Shen, F. et al. Research on identification and detection of transmission line insulator defects based on a lightweight YOLOv5 network. Remote Sens. 15 (18), 4552 (2023). https://doi.org/10.3390/rs15184552.

Huang, L., Li, Y., Wang, W. et al. Enhanced detection of subway insulator defects based on improved YOLOv5. Appl. Sci. 13 (24), 13044 (2023). https://doi.org/10.3390/app132413044.

Souza, B. J., Stefenon, S. F., Singh, G. & Freire, R. Z. Hybrid-YOLO for classification of insulator defects in transmission lines based on UAV. Inter. J Elect. Power Ener. Sys. 148, 108982 (2023). https://doi.org/10.1016/j.ijepes.2023.108982.

Huang, S., Dong, X., Wang, Y. & Yang, L. Detection of insulator burst position of lightweight YOLOv5. In Proceedings of the 8th International Conference on Computing and Artificial Intelligence. 573–578 (2022). https://doi.org/10.1145/3532213.3532300.

Yang, Z., Xu, Z. & Wang, Y. Bidirection-fusion-YOLOv3: An improved method for insulator defect detection using UAV images. IEEE Trans. Instrum. Measure. 71, 1–8 (2022). https://doi.org/10.1109/TIM.2022.3201499.

Lv, W., Xu, S., Zhao, Y. et al. DETRs beat YOLOs on real-time object detection. In Proceedings of the IEEE/CVF Conference on Computer Vision and Pattern Recognition (CVPR), 16965–16974 (2024).

Wang, C. Y., Bochkovskiy, A. & Liao, H. Y. M. YOLOv7: Trainable bag-of-freebies sets new state-of-the-art for real-time object detectors. In Proceedings of the IEEE/CVF conference on computer vision and pattern recognition. 7464–7475 (2023).

Chen, Y., Liu, H., Chen, J. et al. Insu-YOLO: An insulator defect detection algorithm based on multiscale feature fusion. Electronics. 12 (15), 3210 (2023). https://doi.org/10.3390/electronics12153210.

Farooq, U., Yang, F., Shahzadi, M. et al. YOLOv8-IDX: Optimized deep learning model for transmission line insulator-defect detection. Electronics. 14 (9), 1828 (2025). https://doi.org/10.3390/electronics14091828.

Li, D., Lu, Y., Gao, Q. et al. LiteYOLO-ID: A lightweight object detection network for insulator defect detection. IEEE Trans. Instrum. Measure. 73, 1–12 (2024). https://doi.org/10.1109/TIM.2024.3418082.

You, X. & Zhao, X. An insulator defect detection network based on improved YOLOv7 for UAV aerial images. Measurement. 253, 117410 (2025). https://doi.org/10.1016/j.measurement.2025.117410.

Pradeep, V., Baskaran, K. & Evangeline, S. I. An improved transfer learning model for detection of insulator defects in power transmission lines. Neural Comput. Appl. 37, 6951–6976 (2025). https://doi.org/10.1007/s00521-025-11011-0.

Liao, Y., Peng, C., Li, X. et al. HRGA-Net: Hierarchical rotation Gaussian attention network for accurate insulator detection from UAV images. IEEE Trans. Power Deliv. 1–9 (2025). https://doi.org/10.1109/TPWRD.2025.3586984.

Lu, G., Li, B., Chen, Y. et al. Precision in aerial surveillance: Integrating YOLOv8 with PConv and CoT for accurate insulator defect detection. IEEE Access. 13, 49062–49075 (2025). https://doi.org/10.1109/ACCESS.2025.3551289.

Tian, Y., Ahmad, R. B. & Abdullah, N. A. B. Accurate and efficient insulator maintenance: A DETR algorithm for drone imagery. PLoS One. 20 (2), e0318225 (2025). https://doi.org/10.1371/journal.pone.0318225.

Acknowledgements

This research project was financially supported by Mahasarakham University, Thailand.

Author information

Contributions

B.Z. Conceptualization, Writing—review and editing, Resources. L.H. Methodology, Software, Validation, Writing—original draft preparation. N.A. writing—review and editing. S.S. Visualization, Writing—original draft preparation. All authors reviewed the manuscript.

Ethics declarations

Competing interests

The authors declare no competing interests.

Additional information

Publisher’s note

Springer Nature remains neutral with regard to jurisdictional claims in published maps and institutional affiliations.

Rights and permissions

Open Access This article is licensed under a Creative Commons Attribution-NonCommercial-NoDerivatives 4.0 International License, which permits any non-commercial use, sharing, distribution and reproduction in any medium or format, as long as you give appropriate credit to the original author(s) and the source, provide a link to the Creative Commons licence, and indicate if you modified the licensed material. You do not have permission under this licence to share adapted material derived from this article or parts of it. The images or other third party material in this article are included in the article’s Creative Commons licence, unless indicated otherwise in a credit line to the material. If material is not included in the article’s Creative Commons licence and your intended use is not permitted by statutory regulation or exceeds the permitted use, you will need to obtain permission directly from the copyright holder. To view a copy of this licence, visit http://creativecommons.org/licenses/by-nc-nd/4.0/.

About this article

Cite this article

Zheng, B., Angkawisittpan, N., Huang, L. et al. RSP-YOLOv11n multi-module optimized algorithm for insulator defect detection in UAV images. Sci Rep 15, 35426 (2025). https://doi.org/10.1038/s41598-025-19059-7

DOI: https://doi.org/10.1038/s41598-025-19059-7