Abstract

Daily products (DPs) are frequently used in synergy due to lifestyle preferences and usage habits, evolving in response to shifting living needs. Given this dynamic, the integration of existing product functionalities emerges as a sustainable approach to innovative development. In this study, a novel user-habit-oriented hybrid Taguchi method is proposed, coupled with experimental frameworks designed to assess usability. Through rigorous experimentation, significant design parameters that enhance usability are identified and utilized to optimize the integration of multiple DPs. Validation experiments confirm that the proposed hybrid Taguchi method effectively integrates and optimizes the functions of multiple daily products, offering a promising pathway for sustainable product innovation. The design scheme determined by this method not only has high usability performance, but also suits the user’s lifestyle habits.

Introduction

Interaction between daily products and users

The diverse needs of users have led to everyday products with multiple functions, including reconfigurable and multifunctional systems, which offer a wider range of functional utility than traditional single-system products do (Lewis & Kalyanasundaram, 2011). The main purpose of this type of design is to combine two or more single-system products into one integrated product, on the reasonable assumption that the original products share some functional configurations, components, or structures. Beyond physical similarities in materials, structures, or functions, many functions do not exist in isolation but interact, especially during use1. Behaviour, personal characteristics, and the environment play important roles in usability, and individuals’ behaviours are guided by the goals they pursue in life2. Moreover, social and cultural norms define the domains that promote or constrain users’ behaviours, which shape the perception, use, and improvement of the potential usability of products3,4. Users generate usage patterns and behavioural characteristics of products based on physical conditions, behavioural abilities, cognition, understanding, and cultural norms. User behaviour habits create inherent correlations between different products, offering opportunities to integrate functional configurations across multiple products and develop innovative concept products (Fig. 1). However, current research remains insufficient in leveraging user habits to infer functional correlations and applying these insights for functional integration and optimization.

Product design based on usability quality

Usability has become an important factor when designers and users judge and choose among many products5,6. It is also considered one of the most important factors in purchasing decisions, alongside attributes such as features, price, and after-sales service performance, which are key to a product’s success in the marketplace7,8. The functional configuration of a single product has multiple design parameters; whether a given combination of parameters can be used correctly, and how efficiently, affects the quality of the design outcome. Therefore, the optimization of parameters based on usability performance is particularly important for integrated multifunctional designs. When there is only a single function, the representative task is obvious, whereas for a complex multifunctional product, users will evaluate only those features that they can easily understand and use according to their own life habits and will avoid all features that are difficult to use. The degree of usability is not constant: the more the user experiences the product, the higher the user’s evaluation of its usability9. What truly matters is whether users achieve their desired goals when using the product. This depends on usability, usefulness, reliability, and efficiency; therefore, a trade-off between these attributes is needed for users to achieve their desired goals10,11. User-centred usability experiments measure the extent to which users interact with a given product (or service) and are highly useful for parameter optimization through experimental data and statistical algorithms12.
The user expectations, motivations, and feelings that arise from lifestyle habits when an object (or system) is used in a context are summarized as pragmatism (functionality and utility)13. Pursuing pragmatic goals in the parameter design phase optimizes usage performance, and user testing then supports the selection of the best design parameters, either to ensure that the development is on the right track or to measure and evaluate whether the final product meets the set usability goals. Usability research bridges the gap between science and culture, striking a balance between empirical observation and meaningful expression that best respects the knowledge-creation practices of various disciplines.

Product optimization design using a hybrid Taguchi method

User research using prototypes is a key step in product development for effectively capturing user needs14. Prototypes are important tools for designers to gain knowledge: making physical prototypes, regardless of fidelity (e.g., paper, wood, or 3D printed models), helps designers identify ‘unknown unknowns’ and is used to answer questions or test hypotheses about the design, thus improving the understanding of complex systems15. The goals, processes, and strategies of prototyping are dominated by design research16, while the advantages that user behaviour brings to prototype iteration are ignored. However, the number of prototypes needed to cover every combination of design parameters is large, which makes the experiment too costly. In addition, if only a random selection of combination patterns is tested, the experimental method may become more complex and less reliable, making the results inaccurate.

In the product development process, just the right amount of effort needs to be expended on prototyping to achieve the best results17. Therefore, the Taguchi method is proposed to reduce the number of experimental prototypes: orthogonal arrays (OAs) set the parameter combination pattern of each variable so that the system (i.e., product design or engineering) can produce immediate and effective results without additional cost, and usability experiments can thus be conducted efficiently. The Taguchi method has also been used in various fields, such as materials research18,19, mechanical engineering20, usability engineering21, product appearance design22, application programming interface design23 and many other areas, to achieve robust performance. Although the Taguchi technique is simple and efficient in optimizing parameters, one of its major drawbacks is that it can be used only for single-objective optimization. To overcome this drawback and facilitate the solution of multiobjective optimization problems, combining grey relational analysis (GRA) with Taguchi methods has become widely popular24,25,26,27,28. In recent years, a hybrid approach combining GRA with the Taguchi method has been applied to the optimization of user experience and design patterns23, but it is suitable only for optimizing the parameter combination patterns of a single product or system and lacks integrated design optimization for multiple products or systems.

Research purpose

This study introduces a hybrid Taguchi method to develop an integrated approach for innovation and functional parameter optimization of daily products (DPs) based on user habits. By incorporating usability metrics, user experiences with prototypes, and their influence on product redesign, this study employs hybrid Taguchi methods, the analytic hierarchy process (AHP), and grey relational analysis to predict significant design variables and optimal parameter combinations. Validation experiments confirm the accuracy and reliability of the predicted results, demonstrating the effectiveness of the proposed methodology.

To achieve the research objectives, this study sets the following three goals:

1. Using the integrated and optimized design of shoe-changing and disinfection products in residential living environments as a case study, develop a prototype usability experiment based on users’ behavioural habits to improve usability performance.

2. Predict optimal design parameter integration patterns based on experimental results and perform functional integration and optimization design for the two products: disinfection spray and a shoe-changing bench.

3. Conduct validation experiments on the integrated and optimized design to confirm its good usability quality.

Through these objectives, the study demonstrates the effectiveness of the proposed hybrid Taguchi method in integrating multiple daily products (DPs) based on user habits and enhancing usability quality.

Materials and methodology

Prototype usability experiment of the hybrid Taguchi method

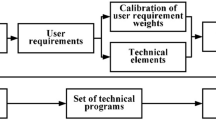

This study combines the usability experimental method of ‘postuse measurement’ with Taguchi design of experiments (DOE) to measure user responses to the performance characteristics of a set of product prototype parameters. Video recording was also used to analyse task time and evaluate effectiveness. The Taguchi method is a robust design process comprising system, parameter, and tolerance design to achieve optimal product performance29; the key stage is parameter design, an engineering approach to product or process design. This approach can be used to derive optimal processes, technologies, and products, as well as to identify control factors that significantly affect specific performance characteristics22. With the fastest and most economical experimental approach, the system (product design or engineering) can be designed to deliver immediate and effective results, breaking through design bottlenecks or improving manufacturing processes at no additional (or even reduced) cost. The value of the output response y is influenced by the signal factor (M), the control factor (Z), and the noise factor (X) (Fig. 2). The signal factor is a design variable that is determined by the designer or engineer and affects the integration of the two products; the control factor is the parameter value that the designer or engineer sets for each design variable, usually a high or low value, also called the ‘level’; the noise factor is a parameter that is beyond the control of the designer or engineer and, in this study, is set to constitute the usability indices of effectiveness, efficiency, and satisfaction.

The specific steps of the prototype usability experiment of the hybrid Taguchi method used in this study are as follows:

1. Determine the design variables that primarily affect functional integration, i.e., signal factors.

2. Determine the usability indicators, i.e., noise factors, and usability performance response values.

3. Set the parameters for integrating the scenario variables through a fishbone diagram and morphological analysis, i.e., control factors.

4. Select the appropriate orthogonal array.

5. Perform prototyping and usability experiments based on the orthogonal array.

6. Collect statistical data and perform GRA conversion to predict optimal levels and performance.

7. Conduct validation experiments to determine the best prototype and plan future actions.

Analysis of design variables

The Taguchi method is a design and experimental technique that relies on the most compact combination of factors (variables or inputs), thus eliminating the need to test all possible combinations of variables in complex and expensive experimental procedures. It is a powerful method for obtaining the best combination of design (or process) parameters, and an important step before the experiment is to determine the effect of the selected design (or process) parameters on the quality of the final output. Fishbone diagram analysis is often used to analyse the design variables that primarily affect quality characteristics. When problems are identified through observation, customer surveys, or statistical process control, a template of possible causes of the effects is generated by brainstorming; because the number of possible causes in a process may be very large, the diagram helps to identify root causes in a structured and precise manner30. Fishbone diagram analysis can also be used as a fuzzy front-end to experimental design to explore all factors that may contribute to changes in the response variables31.

This study focuses on the evaluation and experimentation of usability in functional manipulation by decomposing the design variables of electronic products into specific forms with reference to the system framework construction approach used by Han et al.32. Based on the above approach, existing and commonly used products in the market are collected at this stage, and the main factor parameters corresponding to the subfactor analysis are then morphologically decomposed and summarized, using simple diagrams or textual labels. Finally, a focus group is used to determine the important design variables.

Index of usability measurement

This study is based on the three usability indices (effectiveness, efficiency, and satisfaction) proposed in the widely recognized ISO model, combined with the metrics compiled by the MUSiC model, Hornbæk33 and Zhou and Chan34, to define a usability model and indices that are appropriate for the tasks set in this study (see Fig. 3).

In the usability model, ‘effectiveness’ relates to the accuracy and completeness with which users carry out the target tasks, and these two factors are defined as the components of ‘effectiveness’. ‘Efficiency’ includes both user-use efficiency and user-learning efficiency (user efficiency compared with that of experts). User-use efficiency is defined in MUSiC as the relationship between the effectiveness of users in achieving target tasks and the time taken to achieve them35, whereas user-learning efficiency is defined in MUSiC as the ratio (expressed as a percentage) of the efficiency of a given user to the efficiency of an expert in the same context; it measures the rate at which users learn how to use a given product in a given context, reflecting how efficiently the product is learned10,11. Using the System Usability Scale (SUS) to measure subjective user satisfaction does not require additional information about interface details or test protocol details, and the SUS can be combined with the three ISO model metrics to give usability experts a better understanding of user performance and the overall usability of the product being tested36. The SUS is considered one of the most reliable and valid measures of user-perceived usability37,38, and it correlates with other subjective measures of usability (e.g., the SUMI scale provided in the MUSiC model).

Because the indicators were not equally important for the usability experiment, AHP was used to determine the weights of the main indices and subindices. First, a comparison matrix was established for the three usability indices, and then separate comparison matrices were established for the two subindicators of effectiveness and the two subindicators of efficiency; a focus group evaluated the importance of each index. The focus group consisted of seven experts who were familiar with the usability experiment and had many years of engineering and design expertise. Each expert completed the three comparison matrices, giving 21 comparison matrices in total. Each matrix was first checked for consistency, and after passing the check (C.R. = 0), the evaluation data of the seven experts were synthesized by arithmetic averaging and then checked for consistency again; the result C.R. = 0 indicated complete consistency. Finally, the weight value w assigned to each indicator was calculated by normalizing the geometric mean (Fig. 3).
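
The weighting step can be illustrated with a minimal sketch, assuming the common geometric-mean form of AHP and Saaty's random index values for the consistency ratio. The function name `ahp_weights` and the example judgements are hypothetical and are not taken from the study's data.

```python
import numpy as np

# Saaty's random index (RI) for matrix orders 1 to 5 (standard published values).
RANDOM_INDEX = {1: 0.0, 2: 0.0, 3: 0.58, 4: 0.90, 5: 1.12}


def ahp_weights(matrix):
    """Return (weights, consistency_ratio) for a pairwise comparison matrix."""
    a = np.asarray(matrix, dtype=float)
    n = a.shape[0]
    # Row geometric means, normalized so that the weights sum to 1.
    gm = np.prod(a, axis=1) ** (1.0 / n)
    w = gm / gm.sum()
    # Estimate the principal eigenvalue as the mean of (A w)_i / w_i.
    lambda_max = float(np.mean((a @ w) / w))
    ci = (lambda_max - n) / (n - 1) if n > 1 else 0.0
    ri = RANDOM_INDEX[n]
    cr = ci / ri if ri > 0 else 0.0
    return w, cr


# Hypothetical, perfectly consistent judgements for three indices.
example = [[1.0, 2.0, 4.0],
           [0.5, 1.0, 2.0],
           [0.25, 0.5, 1.0]]
weights, cr = ahp_weights(example)
print(weights, cr)
```

A 2 × 2 reciprocal matrix is always perfectly consistent, so a C.R. of 0 is expected for any matrix that compares only two subindicators.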

Grey relational analysis of usability performance response values

Grey relational theory, originally proposed as a system science theory by Deng39 and continuously developed by Yang and John (2012) and Liu et al. (2012), refers to the uncertain relationship between variables and has been widely applied to uncertainty problems such as decision-making and pattern recognition, especially with discrete data and fuzzy information (Sun et al., 2018). It is one of the important results in the field of uncertain systems research, and its basic idea is to determine dominance based on the data sequence of variables by calculating the correlation between multiple variables and the same reference sequence; if the geometry of the sequence curves is closer, the grey relation between them is greater, and vice versa40 (Li et al., 2019). The responses of each variable are first converted to a common scale (0–1) by normalizing all response values. The degree of similarity or dissimilarity of development trends between variables, also known as the grey relational grade (GRG), is subsequently used as a measure of the degree of association between variables.

The grey relational analysis (GRA) method consists of steps such as grey relational generation, reference sequence definition, grey relational grade (GRG) calculation and grey relational rank formation (Zhang et al., 2013). After the GRA process is complete, alternatives with multiple attributes can be effectively compared (Kuo et al., 2006). In this study, multiple usability metrics are combined into a single value through a GRA process, and Taguchi’s method and ANOVA are combined to help solve the design parameter optimization problem. A brief description of the computational process of the GRA method is as follows.

First, the analysis attributes or metric system are determined according to the purpose of the analysis, and the analysis data are collected. The set of n usability metrics is set as C = {c0, c1, c2, …, cn}. c0 is the reference sequence, and ci is the comparison sequence, thus forming the following matrix in Eq. (1).

The reference sequence in this matrix is c0 = (c0 (j), j = 1, 2, …, m), and the comparison sequences are ci = (ci (j), i = 1, 2, …, n; j = 1, 2, …, m). The reference series should be an ideal standard for comparison. The best (or worst) value of each indicator can be used to form the reference data column, or other reference values can be chosen according to the purpose of evaluation. The comparison series ci (j) is the corresponding value of the jth experimental prototype for usability index i. The GRG γ (c0 (j), ci (j)) of the usability index can thus be obtained.

The different units of measurement and meaning in each usability index make it difficult to compare the data or to draw correct conclusions when comparing them. Therefore, for GRA, the data should usually be preprocessed by normalizing the raw data into a decimal series between 0 and 1 for comparison (Hsiao et al., 2008). This study expects that the larger the value of the usability metric is, the better, and the signal-to-noise ratio (S/N) of all the metrics is of the larger-the-better (LTB) type, so only the LTB type of standardization (Eq. 2) is used to convert individual metric measurements to values in [0, 1] (refs. 23, 39).

where ηi (j) is the S/N of the response value of the performance characteristic of the jth experimental prototype in the ith index of the metric, maxj ηi (j) is the maximum value of ηi (j), and minj ηi (j) is the minimum value of ηi (j). ci (j) is the standardized S/N.
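
The standardization described above can be sketched as follows, assuming the usual larger-the-better min-max form, ci (j) = (ηi (j) − minj ηi (j)) / (maxj ηi (j) − minj ηi (j)). The function name `normalize_ltb` and the S/N values are hypothetical.

```python
import numpy as np


def normalize_ltb(sn):
    """Scale each index (row) of S/N values to [0, 1], larger-the-better.

    sn has shape (n_indices, m_prototypes).
    """
    eta = np.asarray(sn, dtype=float)
    lo = eta.min(axis=1, keepdims=True)   # min over prototypes j, per index i
    hi = eta.max(axis=1, keepdims=True)   # max over prototypes j, per index i
    span = hi - lo
    if np.any(span == 0):
        raise ValueError("an index with identical S/N values cannot be scaled")
    return (eta - lo) / span


# Hypothetical S/N values for two indices measured on three prototypes.
sn_example = [[-6.0, -2.0, -4.0],
              [10.0, 30.0, 20.0]]
scaled = normalize_ltb(sn_example)
print(scaled)
```

Under this scaling the best prototype on each index maps to 1 and the worst to 0, so a reference sequence of all ones represents the ideal prototype.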

After standardizing the raw data, the absolute difference between each indicator sequence of the measured object (comparison sequence) and the corresponding element of the reference sequence is calculated one by one according to Eq. (3).

In the formula, maxi |c0 (j) − ci (j)| denotes the maximum difference in the absolute difference series, and mini |c0 (j) − ci (j)| denotes the minimum difference. ρ is the discrimination coefficient, 0 ≤ ρ ≤ 1, which depends on the requirements of the actual system. ρ generally takes values between 0.1 and 0.5 (refs. 23, 41). The smaller the value of ρ is, the greater the difference between the correlation coefficients. In this study, ρ was set to 0.1 to increase the significance of the factors in the ANOVA with the Taguchi method. The larger the value of ξi (j), the greater the degree of relation between the ith comparison series ci and the reference series c0 in the jth experimental prototype.
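
The effect of the discrimination coefficient can be illustrated with a short sketch. It assumes the commonly used form of the grey relational coefficient, ξi (j) = (Δmin + ρ Δmax) / (Δi (j) + ρ Δmax) with Δi (j) = |c0 (j) − ci (j)|, and a reference value of 1 for larger-the-better data. The function name and the inputs are hypothetical.

```python
import numpy as np


def grey_relational_coefficients(c, rho=0.1, reference=1.0):
    """Grey relational coefficients for normalized data c (any shape)."""
    delta = np.abs(reference - np.asarray(c, dtype=float))
    d_min, d_max = delta.min(), delta.max()
    if d_max == 0:
        # Every value equals the reference, so every coefficient is 1.
        return np.ones_like(delta)
    return (d_min + rho * d_max) / (delta + rho * d_max)


normalized = [[0.0, 0.5, 1.0]]  # hypothetical normalized S/N values
xi_rho_01 = grey_relational_coefficients(normalized, rho=0.1)
xi_rho_05 = grey_relational_coefficients(normalized, rho=0.5)
print(xi_rho_01)
print(xi_rho_05)
```

With ρ = 0.1 the coefficients are spread more widely than with ρ = 0.5, which matches the statement that a smaller ρ increases the differences between coefficients.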

The average of the relational coefficients between each subject indicator (comparison series) and the corresponding elements of the reference series was calculated separately to reflect the relation between each subject indicator and the reference series and is referred to as the relational sequence24,25,26. As usability attributes and indices play different roles in comprehensive evaluation, the weighting values of individual attributes and indices are obtained using the AHP method, and a weighted average of the correlation coefficients can be obtained34,42,43. Equation (5) is as follows:

In the equation, γ (c0 (j), ci (j)) is the weighted GRG of the quality characteristic of the jth experimental prototype usability measure, n is the number of usability measures, wi is the aggregated weight of the ith usability measure, and \(\sum_{i=1}^{n} w_i = 1\) (j = 1, 2, …, m). The values of γ (c0 (j), ci (j)) are in the range of 0–1, which can be combined with Taguchi’s method to optimize the design.
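
A minimal sketch of the weighted grade follows, assuming it is the weighted sum of the coefficients over the n usability measures, γ(j) = Σ wi ξi (j). The function name, coefficients, and weights are hypothetical and do not reproduce the study's AHP results.

```python
import numpy as np


def weighted_grg(xi, weights):
    """Weighted grey relational grade per prototype.

    xi has shape (n_indices, m_prototypes); weights has length n_indices.
    """
    xi = np.asarray(xi, dtype=float)
    w = np.asarray(weights, dtype=float)
    if not np.isclose(w.sum(), 1.0):
        raise ValueError("weights must sum to 1")
    return w @ xi


# Hypothetical coefficients for two indices on two prototypes.
xi_example = [[1.0, 0.2],
              [0.5, 0.4]]
grg = weighted_grg(xi_example, [0.6, 0.4])
print(grg)
```

Because the weights sum to 1 and each coefficient lies in (0, 1], the resulting grade also lies in that range.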

Orthogonal array

Taguchi developed the orthogonal array (OA) technique to study the entire parameter space with a small number of experimental samples. An OA is a balanced fractional factorial matrix in which every level of every factor, and every pairwise combination of levels, appears equally often; it allows all parameters to be tested with a minimum number of balanced runs and is the basis of the experimental design. The OA determines the combination of the levels of the control factors used in each experimental sample.

In this study, the usability indices are defined as noise factors, the response values of the usability measures are performance characteristics, and the control factors are transformed into design variables. Through the identification of subjective perceived usability responses and design variables, relationships between them can be established, and experiments with combinations of design variables can be conducted to derive the best combination of design variables that best meets the usability index.
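
The balance property of an orthogonal array can be checked with a short sketch. The standard L9 array (four factors at three levels) is used purely as an illustration; this section does not state which array the study selected, and the helper name `is_orthogonal` is hypothetical.

```python
from itertools import combinations, product

# Standard L9 orthogonal array: 9 runs, 4 factors, 3 levels each.
L9 = [
    [1, 1, 1, 1],
    [1, 2, 2, 2],
    [1, 3, 3, 3],
    [2, 1, 2, 3],
    [2, 2, 3, 1],
    [2, 3, 1, 2],
    [3, 1, 3, 2],
    [3, 2, 1, 3],
    [3, 3, 2, 1],
]


def is_orthogonal(array, levels):
    """True if every pair of columns contains each level pair equally often."""
    columns = list(zip(*array))
    expected = len(array) // (levels ** 2)
    for col_a, col_b in combinations(columns, 2):
        counts = {pair: 0 for pair in product(range(1, levels + 1), repeat=2)}
        for pair in zip(col_a, col_b):
            counts[pair] += 1
        if any(count != expected for count in counts.values()):
            return False
    return True


full_factorial_runs = 3 ** 4
print(len(L9), "runs instead of", full_factorial_runs, "->", is_orthogonal(L9, 3))
```

In this illustration nine prototypes stand in for the 81 combinations of a full factorial design while keeping every pair of factor levels balanced.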

Signal-to-noise ratio

The Taguchi method uses the signal-to-noise ratio (S/N) as the selection criterion for performance characteristics, and because the standard deviation decreases as the mean value decreases, the S/N is used as the measurable value rather than the standard deviation44. The S/N is a measure of the difference between response and expectation, where ‘Signal’ represents the desirable component (what is needed) and ‘Noise’ represents the undesirable component (what is not needed) and can be expressed by Eq. (6).

The preferred value of the signal-to-noise ratio depends on the desired response. This study aims to obtain an output performance characteristic value (y) that is as large as possible (ideally y → ∞), such as the experience of and satisfaction with the product or system. The corresponding larger-the-better S/N can be calculated using Eq. (7)22.
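
A minimal sketch of the larger-the-better S/N follows, assuming the standard Taguchi form η = −10 log10((1/n) Σ 1/yi²), where the yi are the repeated measurements of one prototype. The function name and the measurements are hypothetical.

```python
import math


def sn_larger_the_better(values):
    """Larger-the-better S/N (in dB) for positive repeated measurements."""
    ys = [float(v) for v in values]
    if not ys or any(y <= 0 for y in ys):
        raise ValueError("larger-the-better S/N needs positive measurements")
    mean_inverse_square = sum(1.0 / (y * y) for y in ys) / len(ys)
    return -10.0 * math.log10(mean_inverse_square)


# Hypothetical repeated scores for two prototypes.
sn_a = sn_larger_the_better([10.0, 10.0, 10.0])
sn_b = sn_larger_the_better([20.0, 20.0, 20.0])
print(sn_a, sn_b)
```

Higher and more consistent measurements give a larger S/N, so the prototype with the largest value is preferred.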

In the process of calculating the response values for the performance characteristics of each experiment, the maximum S/N is the best response value, i.e., the most desirable treatment or design response, regardless of the range of S/Ns of the responses45. Determining the response table and response graph from the S/N enables the best combination of design pattern types23.

Based on the S/N, the response value for the performance characteristic can be obtained via Eq. (8).

where R is the response value for the level of the design variable, ηi is the S/N for the same level of the design variable and m is the number of experimental samples with the same level of the design variable. The response table and graph can then be determined, and the optimal combination of design patterns can be derived.
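
The response table can be sketched as the mean S/N of the runs that share a level, following the definition of R above. The L4 array and the S/N values are hypothetical, and the helper names are illustrative only.

```python
def response_table(oa, sn):
    """Mean S/N per level for each factor (one dict per OA column)."""
    table = []
    for factor in range(len(oa[0])):
        by_level = {}
        for row, eta in zip(oa, sn):
            by_level.setdefault(row[factor], []).append(eta)
        table.append({level: sum(v) / len(v)
                      for level, v in sorted(by_level.items())})
    return table


def best_levels(table):
    """Level with the largest mean S/N for each factor."""
    return [max(levels, key=levels.get) for levels in table]


# Hypothetical L4 array (three two-level factors) with one S/N per run.
L4 = [[1, 1, 1],
      [1, 2, 2],
      [2, 1, 2],
      [2, 2, 1]]
sn_values = [10.0, 12.0, 14.0, 20.0]

table = response_table(L4, sn_values)
print(table)
print(best_levels(table))
```

Reading the best level of each factor from the table gives the predicted optimal combination, which need not be one of the runs that was actually tested.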

To verify the performance of the optimized design, a validation experiment of the initial and optimal designs is performed to obtain usability metric values and to calculate the GRG of the S/N. In addition, the GRG (ηopt) of the parametric level combination model of the optimal design variables can be predicted as follows.

where \(\overline{\eta }\) is the total mean GRG of the S/N, ηk is the mean GRG of the S/N at the kth design pattern optimal type, and q is the number of design patterns. Finally, the GRG of the S/N can be compared to verify the resulting optimal design pattern.
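
The prediction can be written out as a short sketch, assuming the usual additive model ηopt = η̄ + Σ (ηk − η̄) over the q design patterns at their optimal types. The numbers are hypothetical.

```python
def predict_optimum(grand_mean, best_level_means):
    """Predicted response at the optimal levels under an additive model."""
    return grand_mean + sum(mean - grand_mean for mean in best_level_means)


# Hypothetical values: overall mean 14, best-level means for three factors.
eta_opt = predict_optimum(14.0, [17.0, 16.0, 15.0])
print(eta_opt)
```

A validation experiment would then compare the measured value of the optimal prototype with this prediction.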

Variance analysis

The purpose of analysis of variance (ANOVA) is to synthesize the response values of performance characteristics from an orthogonal array experiment, to use statistical methods to identify factors that have a significant effect on the overall system, and to determine the contribution of each factor46,47. Combining signal-to-noise ratios (S/Ns) with ANOVA makes it possible to find the optimal combination of levels of controllable factors and to identify important factors affecting performance characteristics45. Several studies have applied S/N and ANOVA to subjective assessment data in usability and user experience research21,22, using a significance level of 0.05, which corresponds to a 95% confidence level.

Analysis of variance (ANOVA) was used to identify statistically significant design variables. In cases where there are zero degrees of freedom of error26 or low values of the sum of squares (SS) of variables48 and where the p value of a variable is greater than 0.05 (ref. 23), pooling is necessary in the ANOVA: insignificant variables are ignored, and their contributions and degrees of freedom are added to the error term. Pooling is often used to re-estimate and revise incomplete variance analysis tables26. Some researchers have argued that pooling of variance has the benefit of simplifying the model assumptions and the analysis and interpretation of the data; however, it may reduce the power of the analysis (Hines, 1996). Therefore, to pool errors, this study followed Taguchi’s suggestion of pooling design variables until the degrees of freedom of error were approximately half of the total experimental degrees of freedom29.
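
Pooling can be illustrated on a saturated design, where the factors use all degrees of freedom and none are left for error. The sketch below assumes a hypothetical L4 array and hypothetical responses, and it computes F ratios only (no p values); the helper names are illustrative.

```python
def factor_sums_of_squares(oa, y):
    """Sum of squares of each OA column and the total sum of squares."""
    grand = sum(y) / len(y)
    ss = []
    for factor in range(len(oa[0])):
        groups = {}
        for row, value in zip(oa, y):
            groups.setdefault(row[factor], []).append(value)
        ss.append(sum(len(g) * (sum(g) / len(g) - grand) ** 2
                      for g in groups.values()))
    total = sum((value - grand) ** 2 for value in y)
    return ss, total


def pooled_f_ratios(ss, dof, total_ss, total_dof, pooled):
    """F ratios of the kept factors after pooling the others into the error."""
    kept = [i for i in range(len(ss)) if i not in pooled]
    err_ss = total_ss - sum(ss[i] for i in kept)
    err_dof = total_dof - sum(dof[i] for i in kept)
    if err_dof <= 0:
        raise ValueError("no error degrees of freedom; pool at least one factor")
    err_ms = err_ss / err_dof
    return {i: (ss[i] / dof[i]) / err_ms for i in kept}


L4 = [[1, 1, 1], [1, 2, 2], [2, 1, 2], [2, 2, 1]]
y = [10.0, 12.0, 14.0, 20.0]          # hypothetical responses
ss, total = factor_sums_of_squares(L4, y)
f_ratios = pooled_f_ratios(ss, [1, 1, 1], total, 3, pooled={2})
print(ss, total, f_ratios)
```

Here the third factor has the smallest sum of squares, so it is pooled into the error term, which gives the remaining two factors an error estimate to be tested against.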

Experimental program and methodology

This study was approved by the academic committee of the School of Design and Fashion at Zhejiang University of Science and Technology in March 2023 (NO.20230210). Informed consent was obtained from all study participants. We confirmed that all the experiments were performed in accordance with relevant guidelines and regulations. The subjective assessment data were obtained via expert group interviews and a web-based questionnaire. Before data collection, we verbally informed the participants that the assessment was anonymous, stated that it was for research purposes only, informed them of the main content of the study, and obtained their consent to begin the assessment measurements. The same instructions were also given in the web-based questionnaire, and consent was obtained to begin the assessment measurements. The experiments in this study were conducted in accordance with the informed consent provisions of the Declaration of Helsinki. Additionally, the participants whose physical characteristics are shown in photographs included in the article consented to their publication and signed a declaration form.

Test experiment participants

To ensure that usability testing could be conducted smoothly and efficiently, this study assigned roles to participants before testing and specified the job requirements for each role. Dumas and Redish49 and Rubin and Chisnell50 provide several ways of classifying the roles of test participants; however, most test teams do not require a full-role assignment, and most tests (especially informal problem-finding tests) do not require every role51. The actual distribution of skills among test participants may differ from these roles, but standard role job descriptions are useful for organizing effective usability testing.

Based on the characteristics of this study and Lewis’s51 classification of usability testing roles, the types of roles and functions of the participants were defined. The four main categories were experiment manager, data collector, prototype assembler and maintainer, and test subject.

Testing experimental participants

The usability experiment involved two groups, researchers and participants. Eighteen researchers led the experiment: one experimental leader, seven data collectors, and ten prototype builders, installers, and maintainers. The 17 researchers were first asked to conduct a prototype use test and to complete the SUS scale to compile preliminary data to provide an initial estimate of the variance. The appropriate way to estimate population parameters is to construct confidence intervals52, and the sample size of participants required for this study was estimated following the methodology of Salvendy53 and Lewis51, as given in Eq. (10).

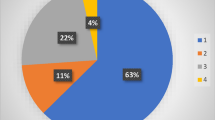

In Eq. (10), z is the reliability coefficient, s² is the variance, d is the expected error, and n is the sample size. A rapid pilot study can be run to obtain an estimate of the variance51; therefore, this study conducted a small-sample questionnaire based on the SUS satisfaction scale and then used the result of Eq. (10), combined with recommendations from the relevant literature, to estimate the appropriate number of respondents. A 90% confidence level (z = 1.645, z² = 2.706) was first chosen51, and the expected error was then defined as 10% of the mean, indicating that the upper or lower bounds of the confidence interval were not expected to deviate from the observed mean by more than 10% (refs. 23, 51). For the 17 researchers, s² = 358.75 and the mean was 55, so d = 55 × 10% = 5.5 and d² = 30.25. Therefore, the required sample size was n = (358.75 × 2.706)/30.25 = 32.092, and at least 33 participants were needed for this usability test. Of the participants, 11 were expert participants (i.e., researchers familiar with the experimental process and involved in prototype design and production), five of whom were male and six of whom were female. The other 23 were general participants, comprising 12 males and 11 females.
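
The arithmetic above can be reproduced with a short sketch, assuming Eq. (10) has the form n = z²s²/d² implied by the worked numbers. The function name is hypothetical.

```python
import math


def required_sample_size(z, variance, margin):
    """Return the raw estimate n = z^2 * s^2 / d^2 and its rounded-up value."""
    n = (z ** 2) * variance / (margin ** 2)
    return n, math.ceil(n)


pilot_mean = 55.0
margin = 0.10 * pilot_mean            # d = 5.5
n_raw, n_needed = required_sample_size(z=1.645, variance=358.75, margin=margin)
print(round(n_raw, 3), n_needed)
```

Rounding up is used because a fractional participant is not possible and the estimate is a minimum.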

The profiles of the participants are summarized in Table 1.

Experimental tasks and laboratories

For this usability test, a laboratory was constructed to simulate a residential foyer and to set up the test tasks and scenarios. As shown in Fig. 4, the site consisted of a number of functional areas, as suggested in the literature54,55. The two main sections, the experimental area and the observation area, were clearly separated: the test prototype was placed in the middle of the experimental area, and the observation area was directly in front of the experimental area for video recording and observation. The test subject preparation area was located to the side of the experimental area and the prototype, and the prototype assembly and maintenance area was to the side and rear of the observation area. The purpose of the site plan was to allow the experiment manager, observers, data collectors, prototype assemblers and repairers, and participants to conduct the tests without getting in one another’s way, allowing for a smooth and time-saving process. To simulate a basic residential foyer scene, the experimental area was equipped with an entrance and a wall so that the participants could perform the test based on their personal life experiences (Figs. 4 and 5).

Experimental data statistics of the usability indices

Effectiveness

Effectiveness measures assess the quality of a user’s implementation of a specific task goal and include indicators such as the quality of the completed task and the error rate35. ‘Completeness’ is defined as the proportion of the target task that is performed, and ‘Accuracy’ is defined as the degree to which the performed task achieves the target. A modified validity scale is proposed, which is also divided into five levels, where each level is expressed as a percentage. Because this study requires that all participants perform the target task, the extreme score of 0 is removed so that there are two scores in each level, and the scores lie in the range [0.1, 1]. The ranges and descriptions of the completeness and accuracy ratings are distinguished so that the purpose of the validity assessment process is clearer and the results are more refined (see Table 2).

The effectiveness of the performance of the target task is assessed separately for completeness and accuracy, and validity values are then calculated according to Eq. (11).

Efficiency

Timoshenko & Young56 defined the efficiency of a machine as the ratio of the useful work performed (output) to the total energy consumed (input). In this study, efficiency is defined as the relationship between the effectiveness (completeness and accuracy) of a user in achieving an objective task and the time spent in achieving these objectives and is divided into two indicators: user-use efficiency and user-learning efficiency.

User efficiency is defined as the effectiveness of the user in performing the target task divided by the task time and is calculated via Eq. (12) as follows:

‘Relative user efficiency’, also known as user efficiency compared with expert efficiency, is defined as the rate at which a user learns how to use a given product in a given context and can provide an indication of the learning efficiency of a user in performing a target task by comparing the efficiency of a given user to that of an expert10,11. The specific Eq. (13) is as follows.
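The two efficiency indicators defined above can be sketched as follows. This is an illustration under stated assumptions, not the study's implementation: user efficiency is taken as effectiveness divided by task time, and relative user efficiency as the mean efficiency of general users divided by the mean efficiency of expert users. The function names and the sample values are hypothetical.

```python
from statistics import mean

def user_efficiency(effectiveness: float, task_time_s: float) -> float:
    """Effectiveness achieved per second of task time."""
    if task_time_s <= 0:
        raise ValueError("task time must be positive")
    return effectiveness / task_time_s

def relative_user_efficiency(general: list[float], expert: list[float]) -> float:
    """Mean general-user efficiency divided by mean expert efficiency
    (averaging before dividing is an assumption of this sketch)."""
    return mean(general) / mean(expert)

# Hypothetical measurements: (effectiveness, task time in seconds).
general_users = [user_efficiency(0.16, 40), user_efficiency(0.15, 30)]
expert_users = [user_efficiency(0.17, 68)]

ratio = relative_user_efficiency(general_users, expert_users)
print(f"relative user efficiency = {ratio:.3f}")
```

A ratio above 1 means that, on these hypothetical numbers, general users completed the task more efficiently than the experts did.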

Satisfaction

Satisfaction was measured using a standardized SUS scale for the data, and as the scale contains both positive and negative questions, the raw scores needed to be converted to contribution scores separately.

The positive questions are SUSi (i ∈ {1, 3, 5, 7, 9}), and the contribution score for each is the original score minus 1. The negative questions are SUSj (j ∈ {2, 4, 6, 8, 10}), and the contribution score for each is 5 minus the original score. The transformed score for each question lies in the range [0, 4]; the sum of the ten contribution scores is then multiplied by 2.5, giving a total score in the range [0, 100]. The calculation follows Eq. (14)57:
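The conversion described above can be written as a few lines of code. This sketch assumes raw responses on the standard 1 to 5 SUS scale, listed in questionnaire order; the function name and the sample response are illustrative.

```python
def sus_score(responses: list[int]) -> float:
    """Convert ten raw SUS responses (1-5) into a 0-100 SUS score."""
    if len(responses) != 10:
        raise ValueError("SUS requires exactly ten responses")
    total = 0
    for item, raw in enumerate(responses, start=1):
        if not 1 <= raw <= 5:
            raise ValueError("each response must be between 1 and 5")
        # Odd (positive) items contribute raw - 1; even (negative) items 5 - raw.
        total += (raw - 1) if item % 2 == 1 else (5 - raw)
    return total * 2.5

# Hypothetical participant: agrees with positive items, disagrees with negative ones.
example = sus_score([4, 2, 4, 2, 4, 2, 4, 2, 4, 2])
print(example)
```

Each item contributes between 0 and 4 points, so the ten items sum to at most 40, and the factor 2.5 rescales this to 100.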

Results

Analysis of design variables for prototype experiments

This study builds on the behaviours of shoe changing and daily disinfection in a residential foyer space58. A prototype experiment was constructed to develop and validate an optimized model for the integration of two target products: a disinfectant spray and a shoe-changing stool. Three collaborative focus groups were formed to analyse and select patterns and corresponding types of design factors that influence the integration of the shoe-changing stool and disinfectant functions based on both literature and practice. Each team was composed of three postgraduate students in the design discipline and was guided by an expert in the field of design practice and education who has been working in the field for more than ten years. The research process began with each team being asked to collect a wide range of commonly available shoe-changing stools and disinfectant sprays through online shopping platforms (e.g., Taobao, Shopee, and Amazon) and then to produce a preliminary typology and fishbone diagram to analyse the main variables affecting the integrated design.

Through group discussions and expert guidance, three rounds of analysis and revision were carried out. In the first round, each group was led by a team leader to collect a wide range of products and perform a preliminary summary analysis, which emphasized divergent, multidirectional and multiangle thinking. After the three groups had completed the analysis of the main causes, they continued to subdivide the subcauses according to the main causes. Finally, the team members revised and summarized the main factors according to individual, integrated, and interaction attributes by referring to the system framework construction method used by Han et al.32. This resulted in six main factor types, namely, shape, scale, spraying status, manipulation, placement and combination position, which were refined into the 10 main factors used in this study.

Daily products were collected from the market and aggregated. The products were then categorized according to the 10 main factors and selected according to the principles of high frequency of occurrence, high frequency of use, moderate price, and average or median values. Finally, each of the 10 attributes (principal factors/design variables) was decomposed into its parameter range (subfactors/parameters). Table 3 lists the 10 principal factors, labelled A–J, and their characteristic parameters. This parameter list provided the control factors for the subsequent orthogonal array design.

Experimental design

Orthogonal array

According to Table 3, the integration of the shoe-changing stool and disinfectant spray involved 10 main design factors, each with 2 subfactors/parameters (levels). The 18 basic orthogonal arrays (OAs) provided by Dr. Taguchi44 were referenced, and the relevant data were imported into Minitab 17 software to set up the design of experiments (DOE) orthogonal array, which offered two candidate OAs, L12 (2¹⁰) and L16 (2¹⁰). For this experiment, the 12-run orthogonal array L12 (2¹⁰) was chosen, as shown in Table 4, where 1 and 2 represent the two subfactors/parameters of each main factor. The responses recorded for each run of the orthogonal array are the metrics of the usability model defined in this study (Fig. 5), namely effectiveness, efficiency and satisfaction.
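To illustrate what a 12-run, two-level orthogonal array looks like, the sketch below builds one from the standard Plackett-Burman generator row for 12 runs and checks its balance. It is an assumption-labelled illustration: the row and column order need not match Table 4 or the array produced by Minitab, and the factor labels A–J are simply assigned to the first 10 of the 11 available columns.

```python
from collections import Counter
from itertools import combinations

# Plackett-Burman generator for 12 runs (+ + - + + + - - - + -), coded as 2 = "+", 1 = "-".
GENERATOR = [2, 2, 1, 2, 2, 2, 1, 1, 1, 2, 1]

def build_l12(n_factors: int = 10) -> list[list[int]]:
    """Return a 12-run two-level array with n_factors columns (at most 11)."""
    if not 1 <= n_factors <= 11:
        raise ValueError("an L12 array has at most 11 two-level columns")
    rows = [[1] * 11]  # one run with every factor at level 1
    for shift in range(11):  # eleven cyclic shifts of the generator row
        rows.append(GENERATOR[shift:] + GENERATOR[:shift])
    return [row[:n_factors] for row in rows]

l12 = build_l12(10)
columns = list(zip(*l12))

# Balance: every column holds each level six times.
column_balanced = all(Counter(col) == Counter({1: 6, 2: 6}) for col in columns)

# Orthogonality: every pair of columns shows each level combination three times.
pair_balanced = all(
    set(Counter(zip(a, b)).values()) == {3} and len(Counter(zip(a, b))) == 4
    for a, b in combinations(columns, 2)
)

for run, row in enumerate(l12, start=1):
    print(run, dict(zip("ABCDEFGHIJ", row)))
```

The pairwise balance is what allows each factor's effect to be estimated from only 12 of the 2¹⁰ = 1024 possible combinations, provided interactions between factors are negligible.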

Prototyping

The aim was to ensure that the prototypes could be quickly machined, installed and dismantled while maintaining their functionality. As some of the prototypes needed to be wall-mounted, a 70 cm × 60 cm × 2 cm piece of laminated wood was used to simulate a wall surface, to which the prototypes could be screwed to simulate a place of use. As shown in Fig. 6, the three focus groups designed and produced 12 sets of prototypes for the usability test over one week, following the 12 combinations of design variables and parameters.

Experimental data results

Each of the 34 participants was assigned a numbered label, attached to the right arm, which served as their identifier (Fig. 7). On the first day, the 11 expert participants were allowed to experience the prototypes within the scope of the task. The participants combined the requirements and steps of the task with their habitual behaviour of changing shoes in the foyer to test the usability of the prototypes. On the second day, the test was conducted by the 23 general participants, who had not been involved in the design and production of the prototypes and had not previously completed the SUS scale; they were therefore briefed on the task requirements and process before the experiment began. There was no specific time set for the test, and each subject made behavioural responses based on their observations and subjective experience of the designed prototype. When the task was completed, the subject was asked to immediately evaluate it using the SUS scale based on their full experience with the prototype and to make an overall assessment of the usability of the product.

After the two usability test sessions were completed, an expert focus group was set up to assess validity by reviewing the experimental videos and rating the completeness and accuracy of each test subject’s usage behaviour during testing.

Results of the effectiveness measurements

In this study, the effectiveness (C1) measure of the usability model was defined as the accuracy and completeness of the user’s implementation of the target activity. The focus group members assigned completeness and accuracy scores to each participant’s behavioural performance based on the validity assessment scale (see Table 2), the test task, and a review of the video of the participant’s test.

The effectiveness value was calculated by multiplying the assessed completeness value by the accuracy value. The two indicators were assigned different weights, as shown in Fig. 1: completeness was assigned a weight of 0.659 and accuracy a weight of 0.341. The completeness and accuracy values given by the 5 experts were first integrated by arithmetic averaging. Each integrated value was then multiplied by its weight to obtain the weighted completeness and accuracy values for the 34 participants (for specific calculations, please refer to the effectiveness measurement data of the lamp and towel rack usability experiments). Finally, the two weighted values were multiplied to obtain the weighted effectiveness values.

As shown in Table 5, C11 is the weighted completeness of the integrated participants, C12 is the weighted accuracy of the integrated participants, and the two were multiplied together to form the weighted validity values of the 12 prototype experiments. The completeness values ranged from 0.497 to 0.590, with the highest value for Group 5, followed by Group 7, and the lowest value for Group 5. The accuracy values ranged from 0.263 to 0.298, with the highest value for Group 5, followed by Groups 3, 7 and 11, and the lowest value for Group 4. The effectiveness values ranged from 0.135 to 0.176, with the highest value for Group 5, followed by Groups 3 and 7, and the lowest value for Group 4. The highest values for all three of these indicators were for Group 5.
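The weighting steps for effectiveness can be sketched as below. The weights 0.659 and 0.341 are those stated in the text; the five expert ratings are hypothetical, and the function name is illustrative. The final line checks that the reported maxima for Group 5 are mutually consistent (0.590 × 0.298 ≈ 0.176).

```python
from statistics import mean

W_COMPLETENESS = 0.659  # weight stated in the text
W_ACCURACY = 0.341      # weight stated in the text

def weighted_effectiveness(completeness: list[float], accuracy: list[float]):
    """Average the expert ratings, weight each indicator, then multiply.

    Returns (C11, C12, C1): weighted completeness, weighted accuracy,
    and weighted effectiveness.
    """
    c11 = mean(completeness) * W_COMPLETENESS
    c12 = mean(accuracy) * W_ACCURACY
    return c11, c12, c11 * c12

# Hypothetical ratings from five experts for one participant on one prototype.
c11, c12, c1 = weighted_effectiveness(
    completeness=[0.9, 0.9, 0.8, 0.9, 1.0],
    accuracy=[0.8, 0.9, 0.9, 0.8, 0.9],
)
print(f"C11 = {c11:.3f}, C12 = {c12:.3f}, C1 = {c1:.3f}")

# Consistency check on the reported Group 5 values.
group5_effectiveness = round(0.590 * 0.298, 3)
```

Because both weights are below 1 and the two weighted values are multiplied, the resulting effectiveness values are small in absolute terms, which is why the reported range is only 0.135 to 0.176.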

Results of the efficiency measurements

The efficiency (C2) in the usability model consists of two components: user efficiency (C21) and relative user efficiency (C22). The C21 value is calculated according to Eq. (12), and the C22 value is calculated according to Eq. (13).

The total time consumed by the participants in using the prototype includes the preparation time, operation time and exit time. In this study, the time spent in operation is used as the main indicator of user efficiency. The test task included observation, contact use and adjustment time; therefore, all three were combined and defined as the operating time. Video playback was conducted to measure the operating time of 34 participants using 12 sets of design prototypes.

The efficiency data for the 34 participants were then obtained by dividing the effectiveness values from the five expert evaluations by the operating time. The efficiency values of the 34 participants were integrated using the arithmetic mean to obtain the C21 and weighted efficiency values. As shown in Table 6, the C21 values ranged from 0.003 to 0.008, and the weighted values ranged from 0.002 to 0.005, with the highest value in Group 7, followed by Group 5, and the lowest value in Group 4.

The relative user efficiency (C22) is obtained by dividing the general user efficiency value by the dedicated (expert) user efficiency value; the weighted C22 value is then obtained by applying the corresponding weight.

As shown in Table 7, the relative user efficiency values ranged from 1.065 to 2.175, and the weighted values ranged from 0.345 to 0.705, with the highest value for Group 2, followed by Group 1, and the lowest value for Group 5.

Results of satisfaction measurements

Satisfaction in the usability index of this study was measured subjectively using the SUS scale. A total of 34 participants took part in the usability test, and the experimental design comprised 12 groups of prototypes; thus, each participant was required to complete 12 behavioural tests and 12 SUS scales based on their subjective perceptions. A total of 408 raw SUS scales were collected, and the raw scores were converted into contribution scores according to Eq. (14). The contribution scores of the 34 participants for each of the 12 groups were combined into a satisfaction (C3) score by calculating the arithmetic mean, as shown in Table 8.

The mean SUS contribution score of each of the 12 groups of design prototypes was defined as the satisfaction (C3). The scores ranged from 33.750 to 77.941, with the highest score for Group 3, followed by Group 5 (77.059), and the lowest score for Group 9. A product is considered to have good usability if its overall SUS score is equal to or higher than 68, whereas a score lower than 50 indicates poor usability (Sharfina & Santoso, 2016). Thus, four of the 12 groups of design prototypes scored below 50 (Groups 1, 2, 4 and 9), and six groups scored above 68 (Groups 3, 5, 6, 7, 8 and 10), indicating that the latter had good usability satisfaction.

GRA transformation of usability index data

This study defines the model of the usability index as consisting of three components: effectiveness (C1), efficiency (C2) and satisfaction (C3), where effectiveness is obtained from the product of completeness (C11) and accuracy (C12), with corresponding weight values assigned. Efficiency (C2) is divided into user efficiency (C21) and relative user efficiency (C22) and is assigned a corresponding weight value. Satisfaction (C3) is measured by the SUS scale and is converted into a contribution score. The four indicators are combined into a GRG through GRA to create a sequence set and matrix, where the reference sequence is C0, set as the best value of 1, and the comparison sequences are C1, C2, C3, and C4, corresponding to the usability metrics C1, C21, C22 and C3, respectively (Table 9).

Because the units of measurement and the meaning of each usability index are different, the data of each index must be standardized into a sequence ranging from 0 to 1 for comparison, using the larger-the-better (LTB) standardization type, which is calculated according to Eq. (7), as shown in Table 9. The absolute difference between each metric series (C1, C2, C3, C4) and the corresponding element of the reference series (C0) is calculated according to Eq. (12). The absolute differences are then substituted into Eq. (13), and a discrimination coefficient of ρ = 0.1 is used to enhance the significance of the factors in the ANOVA. Finally, the weights of the indicators are substituted into Eq. (14) to obtain the GRG. As shown in Table 9, the mean GRG values ranged from 0.032 to 0.215, and the S/N of the GRG ranged from −29.980 to −13.370, with the highest value in Group 5, followed by Group 7, and the lowest value in Group 4. The GRG was defined as a measure of usability and used as the response variable in the Taguchi method for data analysis and prototype optimization.
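The GRA steps described above can be sketched in code. The equations referenced in the text are not reproduced in this section, so the sketch assumes the commonly used forms: min-max normalization for the LTB case, a grey relational coefficient of (Δmin + ρΔmax)/(Δ + ρΔmax), a weighted sum for the GRG, and an LTB signal-to-noise ratio of −10·log10(mean(1/y²)). The data table and the equal weights are hypothetical; only ρ = 0.1 comes from the text.

```python
import math

def normalize_ltb(column: list[float]) -> list[float]:
    """Larger-the-better min-max normalization to the range [0, 1]."""
    lo, hi = min(column), max(column)
    if hi == lo:
        raise ValueError("column has no spread; cannot normalize")
    return [(x - lo) / (hi - lo) for x in column]

def grey_relational_grades(table, weights, rho=0.1):
    """Return one grey relational grade (GRG) per row of the metric table."""
    columns = [normalize_ltb(list(col)) for col in zip(*table)]
    # Deviation from the reference sequence C0, whose ideal value is 1.
    deviations = [[abs(1.0 - x) for x in col] for col in columns]
    d_min = min(min(col) for col in deviations)
    d_max = max(max(col) for col in deviations)
    grades = []
    for i in range(len(table)):
        coeffs = [(d_min + rho * d_max) / (dev[i] + rho * d_max) for dev in deviations]
        grades.append(sum(w * c for w, c in zip(weights, coeffs)))
    return grades

def sn_larger_the_better(values: list[float]) -> float:
    """Taguchi LTB signal-to-noise ratio in decibels."""
    return -10.0 * math.log10(sum(1.0 / (y * y) for y in values) / len(values))

# Hypothetical metrics for three prototypes: C1, C21, C22, C3.
metrics = [
    [0.18, 0.008, 2.0, 78.0],
    [0.13, 0.003, 1.0, 34.0],
    [0.15, 0.005, 1.5, 60.0],
]
weights = [0.25, 0.25, 0.25, 0.25]  # equal weights assumed for illustration

grgs = grey_relational_grades(metrics, weights, rho=0.1)
sn_single = sn_larger_the_better([0.312])
print([round(g, 3) for g in grgs], round(sn_single, 3))
```

A small ρ stretches the gap between good and poor prototypes: with ρ = 0.1, a prototype that is worst on every metric receives a GRG of only about 0.09, whereas one that is best on every metric receives 1. For a single GRG of 0.312, the LTB formula gives roughly −10.1 dB.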

Results of prototype optimization

The data were imported into Minitab 17 software to perform a design of experiments (DOE) Taguchi analysis, using the S/N as the selection criterion for the performance characteristic. The larger-the-better (LTB) type was used, as a larger value indicates a higher degree of usability (ideal value y = ∞). In this experiment, the control factors were assumed to be independent of one another, with no interactions between them.

Significant design variables were identified by analysis of variance (ANOVA) of the S/N, and the percentage contribution of each design variable to the GRG was determined. A statistical significance level of 0.05, equivalent to a 95% confidence level, was used for the analysis. Preliminary analysis of the variance showed a good model fit, with R² (adjusted) = 72.9%, but only C and J had high sum of squares (SS) values and contribution percentages among the 10 variables, whereas those of the other 8 variables were low, with p > 0.05, indicating a lack of statistical significance. It is therefore necessary to pool the design variables, following the method suggested by Dr. Taguchi: starting with the variables with the largest p values, the less important variables are ignored and their degrees of freedom are added to the error term until the error degrees of freedom (DOF) are approximately half of the total DOF of the experiment.

Table 10 shows the pooling results of the GRG variance analysis, with R² (adjusted) = 93.0%, an improvement of 20.1 percentage points in model fit. These results clearly indicate that the design variables A (shape of shoe-changing stool), C (operation of shoe-changing stool), and J (placement of disinfectant spray) were statistically significant (p < 0.05), while the p values for F (spraying of disinfectant spray) and H (size of disinfectant spray) were close to 0.05. In addition, C was the most significant design variable and contributed the most to the design prototype, followed by J.

The relative effects between the various prototype experiments can be verified by the signal-to-noise ratio (S/N) response tables and response plots, which provide good insight into the design and allow the optimal pattern of variable combinations to be derived. As shown in Table 11, the response table for the S/N of the GRG gives, for each design variable, the difference between the maximum and minimum response values. The design variables were ranked according to these effects, with the most influential design variable, C, ranked 1 and the least influential, I, ranked 10.
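The construction of a response table can be illustrated with a small example. The sketch below computes, for each factor, the mean S/N at each level, the difference between the largest and smallest level mean (delta), the rank by delta, and the level with the highest mean. It uses a hypothetical four-run array with three factors and made-up S/N values rather than the study's Table 11 data.

```python
def response_table(array, sn_values, factor_names):
    """Mean S/N per factor level, with delta, rank, and best level per factor."""
    table = {}
    for col, name in enumerate(factor_names):
        level_means = {}
        for level in sorted({row[col] for row in array}):
            vals = [sn for row, sn in zip(array, sn_values) if row[col] == level]
            level_means[level] = sum(vals) / len(vals)
        table[name] = {
            "means": level_means,
            "delta": max(level_means.values()) - min(level_means.values()),
            # Larger-the-better: pick the level with the highest mean S/N.
            "best_level": max(level_means, key=level_means.get),
        }
    ranked = sorted(table, key=lambda n: table[n]["delta"], reverse=True)
    for rank, name in enumerate(ranked, start=1):
        table[name]["rank"] = rank
    return table

# Hypothetical L4 array (three two-level factors) and S/N values in dB.
l4 = [[1, 1, 1], [1, 2, 2], [2, 1, 2], [2, 2, 1]]
sn = [-20.0, -18.0, -30.0, -26.0]

result = response_table(l4, sn, ["A", "B", "C"])
best_combination = "-".join(f"{n}{result[n]['best_level']}" for n in ["A", "B", "C"])
print(best_combination, {n: result[n]["rank"] for n in result})
```

Reading off the best level of every factor in this way gives a combination that need not be one of the runs actually tested, which is why a separate validation experiment is needed.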

The overall mean S/N of the GRG for the 12 prototype sets was −23.015. To maximize the usability of the prototypes, the best combination of parameters was determined by taking the level with the highest S/N for each design variable. The design variable C clearly had the strongest effect on the S/N of the GRG, followed by J, A and F, whereas the design variable I had the weakest effect. Therefore, based on the response table and response graph, the best combination of parameter types was determined as the one with the highest S/N of the GRG for every design variable.

The final combination of parameter types for the design variables that resulted in the best S/N of the GRG was A1–B1–C2–D1–E1–F2–G2–H2–I1–J2. The materials and components of the original design prototype were reconstructed according to this combination, and an optimized prototype was produced (Fig. 8).

Prototype optimization for validation testing

The combination of parameter types identified as optimal through the usability experiments must be verified by a validation experiment, both to guard against potential adverse effects of ignoring interactions between control factors and to check the accuracy of the overall analysis. The validation experiment is designed and conducted after the optimal prototype has been identified and its predicted performance estimated, with a new experiment run on the optimal configuration.

The validation experiment was carried out using an initial prototype (Fig. 9) and an optimized prototype (Fig. 8). The initial prototype was the best configuration predicted from the mean GRG, with the combination of parameter types A1–B2–C2–D1–E2–F2–G2–H2–I1–J2. As neither the initial nor the optimized prototype was in the original set of 12 prototypes, validation tests were required to examine the degree of improvement in usability performance characteristics.

The validation experiment was conducted with 35 participants, 34 of whom were the same as the previous participants and 10 expert participants numbered 22–31. The participants completed the usability tests, the index statistics were computed as before, and the same five focus group members performed the validity assessments.

Table 12 shows the weighted usability index values, statistics and GRG values for the validation experiment, from which the S/N was calculated. The mean GRG of the optimized prototype was 0.312, with an S/N of −10.124, whereas the GRG of the initial prototype was 0.028, with an S/N of −30.952.

The results of the validation experiment are shown in Table 13. The S/N of the GRG for the initial design prototype was −30.952, and the predicted S/N of the GRG for the optimal design prototype, calculated by Eq. (24), was −12.771, an increase of 18.181 over the initial design prototype. The S/N obtained in the validation experiment (−10.124) was higher than the value given by the prediction equation (−12.771); therefore, the optimal design prototype was valid, and the method was shown to be effective and feasible.
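The comparison between predicted and confirmed improvement can be reproduced numerically. Eq. (24) is not shown in this section, so the prediction function below assumes the usual additive Taguchi form, in which the predicted S/N equals the overall mean plus the sum of the deviations of the selected factor-level means from that mean. The three level means passed to it are hypothetical; the overall mean and the initial, predicted and confirmed S/N values are those reported in the text.

```python
def predicted_sn(overall_mean: float, best_level_means: list[float]) -> float:
    """Additive prediction: mean plus the gains of the selected factor levels."""
    return overall_mean + sum(m - overall_mean for m in best_level_means)

def sn_gain(sn_after: float, sn_before: float) -> float:
    """Improvement in S/N (dB) relative to a baseline prototype."""
    return sn_after - sn_before

# Values reported in the text.
SN_INITIAL = -30.952
SN_PREDICTED = -12.771
SN_CONFIRMED = -10.124
OVERALL_MEAN_SN = -23.015

predicted_gain = round(sn_gain(SN_PREDICTED, SN_INITIAL), 3)
confirmed_gain = round(sn_gain(SN_CONFIRMED, SN_INITIAL), 3)

# Hypothetical level means for three significant factors, for illustration only.
example_prediction = predicted_sn(OVERALL_MEAN_SN, [-19.0, -20.5, -21.0])

print(predicted_gain, confirmed_gain, round(example_prediction, 3))
```

On the reported values, the confirmed gain (20.828) exceeds the predicted gain (18.181), which is the basis for regarding the optimized prototype as validated.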

We uploaded the data from the study into the figshare database and generated a DOI (https://doi.org/10.6084/m9.figshare.29301410). This data file is an Excel document containing the calculation formulas and data of the Hybrid Taguchi Method, which can be used to reproduce the experimental results. The data include original experimental data, standardized data, revised data and validation experimental data.

Discussion

Problem analysis of the experimental process

In this study, we established design prototype patterns for 10 design variables at 2 levels each. Owing to the prohibitive material and time costs associated with constructing all possible combinations, Taguchi’s orthogonal array (OA) table was employed to reduce the parameter combinations to a minimum. This approach utilized 12 sets of models, specifically L12 (2¹⁰). A total of 34 participants were recruited to perform 12 tests evaluating the prototypes. The experiment was divided into 2 sessions, each separated by 1 week and lasting approximately 4 h. The participants were required to move to the next set of prototypes immediately after completing each test, and this cycle was repeated 12 times. During the experiment, three distinct attitudes towards the tasks were observed: (1) a cautious type, characterized by slow speed and repeated operations; (2) a careless and impatient type, marked by fast speed and eagerness to complete the task; and (3) an ordinary type, representing a natural and smooth condition between the first two. The first two types were deemed neither natural nor habitual, potentially leading to extreme satisfaction evaluations. However, only a small proportion of participants belonged to these categories, and their impact on the overall results was negligible. Although extreme cases required expert distinction during observational evaluation, the predominance of ordinary-type participants allowed for the integration of satisfaction evaluations through averaging. This approach effectively neutralized the influence of extreme evaluations, yielding data closer to the natural state of habitual use.

The test was divided into two sessions, one comprising 132 trials (11 people × 12 prototype groups) and the other 276 trials (23 people × 12 prototype groups), because it was difficult to organize the test with a large number of people and the accuracy of the data might be affected if the test lasted too long. The average time for each group ranged from 11 to 133 s. The times in this test were relatively long because the participants had to take off and put on their shoes; the operation time was therefore affected by the type of shoe: high-top shoes took more time to take off and put on than low-top shoes did, and shoes with laces to tie took more time than those without. In addition, the total time required for each session was approximately 4 h, and the participants were required to stay in the experimental area as much as possible during this time; therefore, participants were prone to fatigue when testing the later prototype groups. It was observed from the two sessions that approximately 4 h was the limit: a longer test would increase participant fatigue, so that the satisfaction assessment might reflect fatigue rather than the experience of the prototype. Appropriate timing therefore affects the efficiency and accuracy of the experiment.

Discussion of users’ habits in the experiment

The difference between the initial design prototype (Fig. 9) and the optimized design prototype (Fig. 8) is due mainly to the parameters of design variables B and E, namely, the scale of the shoe-changing stool and the position at which the two products are combined. Although these two variables were not significant factors, the experiment revealed that a smaller-scale shoe-changing stool was more suitable for participants of different heights and that combining the disinfectant spray with the seat surface was also more suitable for use. The validation experiment involved 35 participants, one more than the 34 in the previous test; most had participated in the 12 sets of prototype tests, and a few were new participants. The results of the validation experiments revealed that the optimized prototype was more usable than the initial prototype and exceeded the predicted optimization value in user testing. After the two comparison experiments, the participants were briefly asked to describe their perceptions and preferences regarding the two prototypes. Most of the participants thought that the two prototypes did not differ significantly in functional awareness, convenience, ease, or perceived complexity; however, there were some differences regarding the height of the seat. Because of height differences, most women thought that a lower seat was better, whereas men preferred a higher seat; therefore, a low scale was suitable for most people, as both tall and short people could use it. In addition, owing to their height and arm length, most of the test participants thought that the nozzle of the disinfectant spray was better suited to hand control because it was easy to use one hand to press the nozzle while using the other hand to catch the disinfectant liquid flowing from the nozzle.
However, if it was positioned lower than half the seat surface, then two-handed operation required a greater degree of physical activity, with one arm requiring a strong twist of the waist and shoulder joints to assist in the operation. It was not possible to determine the preference of the participants for two-handed versus one-handed operation, but from the behavioural observations, two-handed operation was more stable in catching the liquid and was easier to manipulate delicately and adequately to catch sufficient disinfectant, whereas one-handed operation was unstable, and the liquid was more likely to splash (Fig. 10). In summary, the user’s habits had a significant impact on the design; thus, not only the consistency of use but also the user’s body scale and physiology should be considered.

Usability experiments analyse and algorithmically measure combinations of product design parameters through user testing. They depend mainly on the usage behaviour and perceptual feedback of the test subjects but also rely on the experts’ understanding of behaviour and data analysis. The subjects’ intuitive feelings and physical and mental tolerance, as the basis for judgement, do not allow for a clear description of concepts such as effectiveness, efficiency, and satisfaction; therefore, the experts’ repeated deliberation is heavily relied upon when judging the subject’s state in performing the test task and when analysing the SUS questionnaire measurements. For example, subjects should perform the prototype manipulation task in a natural and relaxed manner and provide feedback on their true state; if they do not perform the task adequately or exaggerate their performance, it will be difficult for the experts to analyse the results. The researcher also assisted the experts in making appropriate judgements about the tested behaviours.

Discussion of hybrid Taguchi methods for product integration innovation and functional parameter optimization

The hybrid Taguchi method offers significant advantages in product integration innovation and functional parameter optimization. The method aims to recombine multiple design variables and functional parameters and then optimize their combinations through user test experiments. Key concepts include recombination and optimization, particularly when multiple prototypes are optimized based on usability results. First, the method efficiently explores the optimization space of functional parameters within a limited number of experiments by integrating Taguchi’s systematic experimental design with user feedback from prototype testing. Second, it balances user experience with technical parameters, providing a scientific basis for implementation through Taguchi’s parameter optimization and validating the effects of parameter combinations through prototype experiments. Additionally, the hybrid Taguchi method is particularly suitable for multidisciplinary product integration and innovation, as it effectively integrates parameters from different functional modules and prevents overall performance degradation caused by single-module optimization.

However, the method has limitations. First, the complexity of experimental design is high, particularly in multidimensional parameter optimization, where the difficulties of experimental design and computational demands escalate significantly, placing high demands on the designer’s expertise and computational tools. Second, prototype experiments rely on subjective user feedback, and variations in user operating habits and preferences may lead to unstable results, especially when the user sample size is limited, potentially affecting the generalizability and reliability of findings. Third, the method’s applicability depends on the decomposability of the product and the quantifiability of the parameters, which may limit its implementation for complex products with highly integrated functions or nonquantifiable parameters.

Despite these challenges, the prototype usability experiment of the hybrid Taguchi method offers advantages in product integration innovation and functional parameter optimization, including high efficiency and systematicity. To fully leverage its benefits and address its limitations, the experimental program must be carefully designed in alignment with research objectives and resource constraints.

Conclusions

In this paper, we propose a hybrid Taguchi-based prototype usability experiment, which uses the residential foyer space as a living scenario and is guided by user usage habits, to design the functional integration of two daily-use products. The method has been validated through specific experiments, demonstrating strong operability, validity, and reliability. By combining the systematic nature of experimental design with the practicality of prototype usability experiments, this approach provides a scientific solution for product integration innovation and functional parameter optimization. Using Taguchi’s parameter and orthogonal experimental design strategies, the method efficiently identifies factors that significantly influence product performance. Prototype experiments further validate the practical effects of parameter combinations. The hybrid Taguchi method has significant advantages in product integration and innovation, particularly in multidisciplinary, cross-cutting scenarios. It effectively integrates the parameters of different functional modules, mitigating the overall performance degradation caused by single-module optimization. The incorporation of user feedback further enhances the method’s ability to balance the user experience with technical parameters, offering directional guidance for product design.

However, the current study focuses solely on function combinations and parameter settings tailored to user needs, without delving into hedonic quality aspects such as morphological creativity, cultural symbols, emotional interest, or aesthetic attributes. This limitation restricts the method’s applicability to CMF (colour, material, and finish) design. Additionally, the method’s computational complexity and associated costs make it challenging to implement directly in corporate design practice or university teaching, so further research into simplified approaches for designers is needed.

In conclusion, the method proposed in this study is a design decision-making and thinking mode that relies on statistical parametric evaluation and converts the design analysis process into visual diagrams for discussion. It helps design students, designers, and technicians sustain their creative work and makes design development simpler and more efficient. In the future, the method can be combined with computer software to strengthen its interactivity and make the algorithm and its operation more convenient. Designers could then enter the functional configuration parameters of a product, combine them with internet-based evaluation mechanisms, and quickly carry out the functional integration design of a variety of daily products.

Data availability

All data generated or analysed during this study are included in this published article and its supplementary information files. The data related to the experimental results have also been deposited in the Figshare database (https://doi.org/10.6084/m9.figshare.29301410).

References

Otto, K. & Wood, K. Product design: Techniques in reverse engineering and new product development (Prentice-Hall International Inc, 2001).

Meesters, J. The meaning of activities in the dwelling and residential environment: A structural approach in people-environment relations Vol. 27 (IOS Press, 2009).

Kyttä, M. Affordances of children’s environment in the context of cities, small towns, suburbs and rural villages in Finland and Belarus. J. Environ. Psychol. 22(1–2), 109–123 (2002).

Reed, E. S. The intention to use a specific affordance: A conceptual framework for psychology. In Development in context 61–92 (Psychology Press, 2014).

Friesen, E. L., Theodoros, D. & Russell, T. G. Usability-for-one. Work 52(1), 211–213 (2015).

Kernot, J., Olds, T., Lewis, L. K. & Maher, C. Usability testing and piloting of the Mums Step It Up program: A team-based social networking physical activity intervention for women with young children. PLoS ONE 9(10), e108842 (2014).

Dumas, J. S. & Redish, J. C. A practical guide to usability testing revised edn. (Ablex, 1994).

Hass, C. & Edmunds, M. Understanding usability and human-centered design principles. In Consumer informatics and digital health 89–105 (Springer, 2019).

MacDorman, K. F., Whalen, T. J., Ho, C. & Patel, H. An improved usability measure based on novice and expert performance. Int. J. Hum.-Comp. Interact. 27, 280–302 (2011).

Bevan, N. Measuring usability as quality of use. Softw. Qual. J. 4, 115–150 (1995).

Bevan, N. Usability is quality of use. In Proceedings of the Sixth International Conference on Human Computer Interaction Vol. 20 (eds Anzai & Ogawa) 349–354 (Elsevier, 1995).

Kortum, P. T. & Bangor, A. Usability ratings for everyday products measured with the System Usability Scale (SUS). Int. J. Hum. Comp. Interact. 29, 67–76. https://doi.org/10.1080/10447318.2012.681221 (2013).

Allam, A. H. & Dahlan, H. M. User experience: Challenges and opportunities. J. Inf. Syst. Res. Innov. 3(1), 28–36 (2013).

Kang, B., Crilly, N., Ning, W. & Kristensson, P. O. Prototyping to elicit user requirements for product development: Using head-mounted augmented reality when designing interactive devices. Des. Stud. 84, 101147 (2023).

Nelson, J. & Menold, J. Opening the black box: Developing metrics to assess the cognitive processes of prototyping. Des. Stud. 70, 100964 (2020).

Kent, L., Snider, C., Gopsill, J. & Hicks, B. Mixed reality in design prototyping: A systematic review. Des. Stud. 77, 101046 (2021).

McElroy, K. Prototyping for designers: Developing the best digital and physical products (O’Reilly Media, 2016).

Sivapragash, M., Kumaradhas, P., Retnam, B. S. J., Joseph, X. F. & Pillai, U. T. S. Taguchi based genetic approach for optimizing the PVD process parameter for coating ZrN on AZ91D magnesium alloy. Mater. Des. 90, 713–722 (2016).

Uzun, M. & Armagan, K. Optimization of compressive strength of concrete added glass powder using Taguchi methods. Optimization 7(12), 864–868 (2018).

Huang, H. C., Xu, S. S. D. & Wu, C. H. A hybrid swarm intelligence of artificial immune system tuned with Taguchi-genetic algorithm and its field-programmable gate array realization to optimal inverse kinematics for an articulated industrial robotic manipulator. Adv. Mech. Eng. 8(1), 1687814015626380 (2016).

Ling, C. & Salvendy, G. Optimizing heuristic evaluation process in E-commerce: Use of the Taguchi method. Int. J. Hum.-Comp. Interact. 22(3), 271–287 (2007).

Li, Y. & Zhu, L. Optimisation of product form design using fuzzy integral-based Taguchi method. J. Eng. Des. 28(7–9), 480–504 (2017).

Li, Y. & Zhu, L. Optimization of user experience in mobile application design by using a fuzzy analytic-network-process-based Taguchi method. Appl. Soft Comp. 79, 268–282 (2019).

Achuthamenon Sylajakumari, P., Ramakrishnasamy, R. & Palaniappan, G. Taguchi grey relational analysis for multi-response optimization of wear in co-continuous composite. Materials 11(9), 1743 (2018).

Bademlioglu, A. H., Canbolat, A. S. & Kaynakli, O. Multi-objective optimization of parameters affecting Organic Rankine Cycle performance characteristics with Taguchi-Grey Relational Analysis. Renew. Sustain. Energy Rev. 117, 109483 (2020).

Deepanraj, B., Sivasubramanian, V. & Jayaraj, S. Multi-response optimization of process parameters in biogas production from food waste using Taguchi-Grey relational analysis. Energy Convers. Manage. 141, 429–438 (2017).

Prakash, K. S., Gopal, P. M. & Karthik, S. Multi-objective optimization using Taguchi based grey relational analysis in turning of Rock dust reinforced Aluminum MMC. Measurement 157, 107664 (2020).

Younas, M. et al. Multi-objective optimization for sustainable turning Ti6Al4V alloy using grey relational analysis (GRA) based on analytic hierarchy process (AHP). Int. J. Adv. Manuf. Technol. 105(1), 1175–1188 (2019).

Taguchi, G. Taguchi on Robust Technology Development Bringing Quality Engineering Upstream (ASME Press, 1993).

Shinde, D. D., Ahirrao, S. & Prasad, R. Fishbone diagram: Application to identify the root causes of student-staff problems in technical education. Wireless Pers. Commun. 100(2), 653–664 (2018).

Radziwill, N. Creating ishikawa (fishbone) diagrams with R. Softw. Qual. Pro. 20(1), 47–48 (2017).

Han, S. H., Yun, M. H., Kim, K. J. & Kwahk, J. Evaluation of product usability: Development and validation of usability dimensions and design elements based on empirical models. Int. J. Ind. Ergon. 26(4), 477–488 (2000).

Hornbæk, K. Current practice in measuring usability: Challenges to usability studies and research. Int. J. Hum. Comp. Stud. 64(2), 79–102 (2006).

Zhou, R. & Chan, A. H. Using a fuzzy comprehensive evaluation method to determine product usability: A proposed theoretical framework. Work 56(1), 9–19 (2017).

Frøkjær, E., Hertzum, M. & Hornbæk, K. Measuring usability: Are effectiveness, efficiency, and satisfaction really correlated? In Proceedings of the SIGCHI Conference on Human Factors in Computing Systems 345–352 (Association for Computing Machinery, 2000).

Kortum, P. & Peres, S. C. The relationship between system effectiveness and subjective usability scores using the System Usability Scale. Int. J. Hum.-Comp. Interact. 30(7), 575–584 (2014).

Brooke, J. SUS: A retrospective. J. Usability Stud. 8(2), 29–40 (2013).

Revythi, A. & Tselios, N. Extension of technology acceptance model by using system usability scale to assess behavioral intention to use e-learning. Educ. Inf. Technol. 24(4), 2341–2355 (2019).

Deng, J. L. Introduction to grey system theory. J. Grey Syst. 1(1), 1–24 (1989).

Huang, Y., Shen, L. & Liu, H. Grey relational analysis, principal component analysis and forecasting of carbon emissions based on long short-term memory in China. J. Clean. Prod. 209, 415–423 (2019).

Naqiuddin, N. H. et al. Numerical investigation for optimizing segmented micro-channel heat sink by Taguchi-Grey method. Appl. Energy 222, 437–450 (2018).

Chang, C. S. Multi-level grey relation comprehensive evaluation method for universal design. J. Grey Syst. 17(4), 229–237 (2014).

Dubey, S. K., Mittal, A. & Rana, A. Measurement of object oriented software usability using fuzzy AHP. Int. J. Comp. Sci. Telecom. 3(5), 98–104 (2012).

Karna, S. K., Singh, R. V. & Sahai, R. Application of Taguchi method in Indian industry. Int. J. Emerg. Technol. Adv. Eng. 2(11), 387–391 (2012).

Chen, H. C., Tu, J. C. & Guang, S. S. Research on application of Taguchi method into optimal green Kansei form design. Bull. Japanese Soc. Sci. Des. 62(5), 5_21-5_30 (2016).

Ross, P. J. Taguchi Techniques for Quality Engineering: Loss Function, Orthogonal Experiments, Parameter and Tolerance Design 2nd edn. (McGraw-Hill, 1996).

Roy, R. K. Design of Experiments using the Taguchi Approach: 16 Steps to Product and Process Improvement (John Wiley & Sons Inc, 2001).

Findiani, R. Improvement of rHDPE plastic quality using Six Sigma and Taguchi methods. J. Eng. Manage. Ind. Syst. 7(2), 77–89 (2019).

Dumas, J. & Redish, J. C. A practical guide to usability testing (Intellect, 1999).

Rubin, J. & Chisnell, D. Handbook of usability testing: How to plan, design, and conduct effective tests 2nd edn. (John Wiley & Sons, 2008).

Lewis, J. R. Usability testing. Handb. Hum. Factors Ergon. 12, e30 (2006).

Bradley, R. A. Science, statistics, and paired comparisons. Biometrics 32(2), 213–239 (1976).

Salvendy, G. Handbook of human factors and ergonomics 4th edn. (John Wiley & Sons Inc., 2012).

Marshall, C., Brendan, M. & Prail, A. Usability of product X - lessons from a real product. Behav. Info. Technol. 9, 243–253 (1990).

Nielsen, J. Usability testing. In Handbook of Human Factors and Ergonomics (ed. Salvendy, G.) (John Wiley & Sons, 1997).

Timoshenko, S. & Young, D. H. Engineering Mechanics - Statics (Tata McGraw-Hill Education, 1937).

Longo, L. Subjective usability, mental workload assessments and their impact on objective human performance. In IFIP Conference on Human-Computer Interaction 202–223 (Springer, 2017).

Fu, Y. L. & Liang, K. C. Fuzzy logic programming and adaptable design of medical products for the COVID-19 anti-epidemic normalization. Comp. Methods Prog. Biomed. 197, 105762 (2020).

Acknowledgements

This study was supported by the Youth Science Foundation of Zhejiang University of Science and Technology (Project No. 2025QN097). We sincerely thank the participants of the prototype-usability experiment for their active participation and valuable feedback. We are also grateful for the research funding provided by Zhejiang University of Science and Technology and Suzhou Gold Mantis Construction Decoration Co.

Funding

Youth Science Foundation of Zhejiang University of Science and Technology, 2025QN097.

Contributions

You-Lei Fu: Conceptualization; Data curation; Formal analysis; Funding acquisition; Investigation; Methodology; Visualization. Kuei-Chia Liang: Conceptualization; Data curation; Project administration; Supervision. Linxin Zheng: Funding acquisition; Resources.

Ethics declarations

Competing interests

The authors declare no competing interests.

Additional information

Publisher’s note

Springer Nature remains neutral with regard to jurisdictional claims in published maps and institutional affiliations.

Rights and permissions

Open Access This article is licensed under a Creative Commons Attribution-NonCommercial-NoDerivatives 4.0 International License, which permits any non-commercial use, sharing, distribution and reproduction in any medium or format, as long as you give appropriate credit to the original author(s) and the source, provide a link to the Creative Commons licence, and indicate if you modified the licensed material. You do not have permission under this licence to share adapted material derived from this article or parts of it. The images or other third party material in this article are included in the article’s Creative Commons licence, unless indicated otherwise in a credit line to the material. If material is not included in the article’s Creative Commons licence and your intended use is not permitted by statutory regulation or exceeds the permitted use, you will need to obtain permission directly from the copyright holder. To view a copy of this licence, visit http://creativecommons.org/licenses/by-nc-nd/4.0/.

About this article

Cite this article

Fu, YL., Liang, KC. & Zheng, L. Experimental optimization of user-habit-oriented daily products via integrated innovation and functional parameter analysis. Sci Rep 15, 36452 (2025). https://doi.org/10.1038/s41598-025-20376-0

DOI: https://doi.org/10.1038/s41598-025-20376-0