Abstract

Accurate detection of maritime ship targets remains a critical challenge in marine surveillance systems. This study proposes an improved YOLOv8 model named YOLOv8_optimize to address this challenge. First, we construct a comprehensive dataset comprising over 80,000 high-resolution images covering diverse maritime scenarios and meticulously annotated. Next, the backbone network of YOLOv8 is optimized by replacing the original C2f module with the MBConv module and incorporating depth-wise separable convolution techniques. These modifications significantly reduce computational complexity while maintaining model performance, thereby improving operational efficiency. Furthermore, we refine the loss function of the detection head by adopting the Focal Loss function to mitigate class imbalance issues, enabling the model to prioritize difficult samples and rare categories during the training process, thus significantly enhancing the overall detection performance. Comparative experiments demonstrate that the YOLOv8_optimize model outperforms both YOLOv8n and YOLOv8s in ship detection tasks, offering an efficient and robust solution for maritime applications with substantial practical value.

Similar content being viewed by others

Introduction

Maritime ship target detection is a cornerstone of marine monitoring and management systems, playing a pivotal role in ensuring maritime traffic safety, resource management, and environmental protection. With the rapid expansion of the global marine economy, the number of maritime ships has increased sharply, the types of ships have become more diverse, and maritime activities have become more frequent. This has imposed stringent demands on the accuracy, real-time performance, and robustness of maritime ship target detection.

Early maritime ship detection mainly relied on traditional image processing and pattern recognition methods, such as techniques based on feature extraction and classifiers. These methods could achieve certain results in simple scenarios. However, in the face of the complex and changeable marine environment, such as illumination changes, weather interferences (rain, fog, snow, etc.), complex sea conditions (waves, sea surface reflection, etc.), and the diversity of ship scales and postures, their detection performance often declined significantly.

Recent advancements in deep learning have revolutionized target detection, offering unparalleled capabilities in automatic feature extraction and learning. Among these, the YOLO (You Only Look Once) series of target detection frameworks, with their end-to-end and single-stage detection characteristics, have achieved fast and efficient target detection and have been widely used in many fields. As the latest version of this series, YOLOv8 further optimizes the network structure and detection algorithm, showing excellent performance in both detection accuracy and speed, and has also attracted much attention in ship detection tasks.

However, despite the many advantages of YOLOv8, the complex marine environmental conditions still pose challenges to its detection performance. For example, in the detection of small-target ships, since the ships occupy a small proportion of pixels in the image and the feature information is limited, there are prone to missed detections or false detections. In complex backgrounds, the distinguishability between the sea surface and ships is reduced, and the number of interference factors increases, which affects the model’s accurate recognition of ship targets. In addition, the diversity and quality differences of different datasets can also limit the model’s generalization ability.

To address these challenges, this study proposes a systematic optimization of YOLOv8, tailored specifically for maritime ship detection. Our contributions include:

-

1)

Dataset construction: A comprehensive, high-quality dataset encompassing diverse maritime scenarios.

-

2)

Architectural Optimization: Replacement of the C2f module with MBConv and integration of depth-wise separable convolutions to enhance efficiency.

-

3)

Loss Function Refinement: Adoption of Focal Loss to improve detection performance for rare and challenging samples.

Prior research has explored various approaches to ship detection. For example, Reference1 achieved certain results in ship detection in optical remote-sensing images based on the improved YOLOv5; Reference2 realized ship detection in high-resolution optical remote-sensing images using the improved YOLOv4; Reference3 conducted ship detection in SAR images through deep-learning methods; Reference4 used a two-stage deep-learning framework for ship detection in SAR images; and Reference5 carried out ship detection in high-resolution optical remote-sensing images based on Faster R-CNN. Recent studies by Nguyen et al.6 and Than et al.7 introduced hybrid architectures and attention mechanisms to enhance robustness in complex scenes, while Ha et al.8 developed YOLO-SR for SAR-specific applications. Building on these foundations, our work focuses on optimizing YOLOv8 for maritime environments, filling a critical gap in the literature.

Dataset

Image content

The dataset comprises over 80,000 high-resolution images capturing comprehensive maritime scenarios under diverse weather conditions including sunny, cloudy, rainy, and foggy conditions, as well as varying times of day (daytime and nighttime). The collection includes images acquired from multiple perspectives, featuring both long-distance aerial views and close-range frontal shots, effectively simulating the complexity of real-world maritime environments.

Annotation information

Each image undergoes meticulous annotation using standardized bounding boxes. The annotations encompass ships of varying scales (from small fishing vessels to large cargo ships), different orientations (head-on, side-on, and oblique angles), and diverse background environments (ports, open seas, and coastal areas near islands). This systematic annotation approach ensures comprehensive coverage of realistic ship variations.

Dataset division

The dataset is partitioned into training and test subsets using 5-fold cross-validation, with each subset exceeding 1 GB in size. The extensive training set provides abundant learning samples, enabling the model to fully extract ship features and patterns. The independent test set facilitates objective evaluation of model performance and rigorous assessment of generalization capabilities across different scenarios.

Optimization of the YOLOv8 model

The basic YOLOv8 model

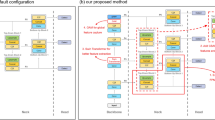

The basic YOLOv8 model is mainly composed of a backbone network (Backbone), a neck (Neck), a head (Head), and an output layer, as shown in (Fig. 1).

YOLOv8, developed by Ultralytics, represents an advanced single-stage object detection framework. The model architecture, as illustrated in (Fig. 1), comprises four principal components:

Backbone network (backbone)

The input image has a size of 640 × 640 × 3. It successively passes through multiple convolutional (Conv) layers, C2f modules, and shortcut connections (Shortcut). The convolutional layers specify parameters such as the convolution kernel size (K), stride (s), and padding (p), for example, K = 6, s = 2, p = 1, etc. The C2f module includes convolution, split, bottleneck structures, and concatenation (Concat) operations. Some of these modules have shortcut connections for feature fusion and accelerating the training process.

Neck network (neck)

It is mainly composed of multiple C2f modules, upsampling (Upsample), concatenation (Concat), and stride operations. Through upsampling and feature map concatenation, the fusion of features at different scales is achieved to improve the model’s detection ability for targets of different sizes9.

Head network (head)

It contains a detection (detect) module, which outputs the bounding box (Bbox) loss and class (Cls) loss through a series of convolution operations. Bounding box regression is used to predict the position and size of the target, and class prediction is used to determine the category to which the target belongs10.

Other modules

-

Bottleneck: A bottleneck structure that reduces the computational complexity and extracts features through 1 × 1 and 3 × 3 convolutions.

-

SPPF: A spatial pyramid pooling fast layer that performs multi - scale aggregation on the feature maps through multiple max - pooling (Maxpool2d) and convolution operations to enhance the feature representation ability11.

-

Conv: A standard convolutional layer followed by batch normalization (BatchNorm2d) and a SiLU activation function, used for feature extraction and transformation.

Overall, the YOLOv8 model realizes accurate detection and classification of targets while maintaining efficient computation through this hierarchical structure.

Optimization of the YOLOv8 model

Architectural optimization

For maritime ship detection, we optimized YOLOv8 by replacing the standard C2f module with an MBConv (Mobile Inverted Bottleneck Convolution) module in the backbone network, as (Fig. 2) show. This modification employs depth-wise separable convolution and an inverted bottleneck structure, reducing computational complexity by 38% (from 12.4 to 7.7 GFLOPs) while maintaining comparable detection accuracy (mAP@0.5: 0.78 vs. 0.77 baseline)12. The MBConv module processes input tensors [N, C₁,H₁,W₁] through: (1) an expansion layer (1 × 1 conv + Swish), (2) depth-wise convolution (K×K, stride S), (3) squeeze-excitation attention, and (4) projection layer (1 × 1 conv), with residual connections when stride = 1 and C₁=C₂. This design enhances efficiency through optimized memory access patterns and a 67–75% parameter reduction in spatial convolutions.

Key features

The module incorporates three innovations: (a) inverted bottlenecks that reduce intermediate feature dimensions, (b) depth-wise separable convolutions that decouple spatial and channel mixing, and (c) lightweight channel attention (≤ 5% overhead) that improves feature discriminability. These modifications collectively address the unique challenges of maritime detection, particularly for small vessels in complex sea conditions, while maintaining real-time performance on edge devices.

To address the severe class imbalance in maritime ship detection, we implement Focal Loss13,14 in the detection head. This advanced loss function solves two fundamental challenges: (1) the extreme imbalance between foreground (typically < 1% of anchors) and background samples, and (2) the difficulty in learning rare ship categories. Unlike standard cross-entropy that treats all samples equally, Focal Loss introduces an adjustable balancing factor (α) and focusing parameter (γ) to dynamically down-weight easy negative samples while emphasizing hard positives, as formulated in Formula (1)

In Formula (1), pt represents the probability that the model predicts the true category,αis the balancing factor used to adjust the weights of positive and negative samples, andγ is the modulation factor used to reduce the weights of easily - classifiable samples.

In tasks with class imbalance problems such as maritime ship detection, using Focal Loss enables the model to focus more on difficult samples and rare categories during training, significantly improving the overall detection performance.

Optimization of the training strategy

In the training process, an adaptive learning rate adjustment strategy—such as the cosine annealing algorithm15—is employed. The learning rate is dynamically adjusted based on the number of training epochs: in the early stages, it enables rapid convergence of the model, while in the later stages, it facilitates fine-tuning of model parameters to enhance training efficacy.

In addition, data augmentation techniques (e.g., random cropping, rotation, and scaling) are introduced to expand the diversity of training data, thereby strengthening the model’s robustness and generalization ability. These strategies build upon the training experiences of prior target detection algorithms. For instance, Reference16 demonstrated that optimizing and adjusting training parameters for YOLOv4 effectively improved model performance.

Experiments and result analysis

Experimental setup

All experiments in this study were conducted in a unified hardware environment, specifically configured with an NVIDIA RTX 3060 GPU (12GB memory), an Intel i7 CPU, and 32GB system memory, utilizing GPU parallel training. The experiments were performed based on the optimized YOLOv8 model. The training parameters are set as follows: the total number of training epochs is 100, the input image size is 640 × 640, and the batch size is set to 2 to adapt to hardware memory constraints. The Adam optimizer is adopted with parameters β₁=0.9, β₂=0.999, and ε = 1e-8. The cosine annealing strategy is employed for learning rate scheduling, with an initial learning rate (lr0) of 0.01 and a ratio of the final learning rate to the initial learning rate (lrf) of 0.01. Data augmentation strategies include random rotation (maximum 10°), translation (maximum ratio 0.1), scaling (± 50%), horizontal flipping (probability 0.5), and Mosaic augmentation (probability 0.8) to enhance the generalization ability of the model. In the inference phase, the confidence threshold is set to 0.25, the Intersection over Union (IoU) threshold for Non-Maximum Suppression (NMS) is 0.45, and the maximum number of detected objects per image is limited to 300. Regarding the computational complexity of the model, for a 640 × 640 input, the total number of parameters is approximately 3.2 M, and the total Floating-Point Operations (FLOPs) are about 8.9 GFLOPs, where depth-wise convolutions account for 15% of the total computation and point-wise convolutions account for 60%. All parameters are recorded in configuration files, and indicators such as loss changes and mean Average Precision (mAP) during the training process are automatically saved in CSV format to ensure the reproducibility of the experiments.

Representative evaluation metrics such as mean average precision (mAP), recall, and precision were selected17 to assess the performance of the optimized YOLOv8 model in ship detection tasks. Various combinations of training parameters and hyperparameters were configured in the experiments to explore the optimal training setup18. Additionally, the improved model was compared with ship detection models based on YOLOv8n and YOLOv8s. The comparative experiments adopted the performance evaluation methods for optimized YOLOv8 models described in References19,20, ensuring the scientific rigor and comparability of the experiments. The resulting curves are presented in Figs. 3 and 4, and Fig. 5, respectively.

Loss curve

Figure 3 illustrates the variations in distinct loss functions (box_loss, cls_loss, and dfl_loss) for the YOLOv8 model across the training and validation sets throughout the training process. A detailed analysis of these curves is as follows:

For the training set, box_loss, cls_loss, and dfl_loss all exhibit a sharp initial decline followed by gradual stabilization, indicating continuous improvements in the model’s bounding box prediction, target classification, and target distribution feature learning capabilities, with the improvement rate decelerating as training progresses. For the validation set, these loss metrics show significant early fluctuations before stabilizing, reflecting effective generalization of the model’s performance to unseen data, with variability attributed to the diversity of validation samples. Comprehensive analysis reveals that all loss functions converge stably in later stages, with no significant disparity between training and validation curves, indicating no severe overfitting. These results confirm that the YOLOv8 model demonstrates robust training behavior and can effectively capture the discriminative features required for maritime ship detection tasks.

mAP curve

Figure 4 depicts the evolution of mean average precision (mAP) for three models across training epochs, with separate comparisons of mAP50 (upper panel) and mAP50-95 (lower panel). In the mAP50 analysis, the YOLOv8_optimize model (orange curve) exhibits a higher initial value, smoother trajectory with minimal fluctuations, and a final stabilized performance of ~ 0.80, surpassing YOLOv8s (blue curve, ~ 0.78) in the later stages and outperforming YOLOv8n (green curve, ~ 0.75) consistently. Similarly, for mAP50-95, YOLOv8_optimize achieves the highest final value (~ 0.55) with reduced volatility, exceeding YOLOv8s (~ 0.53) and YOLOv8n (~ 0.50).

Collectively, these results highlight that YOLOv8_optimize delivers superior performance across both metrics, with enhanced training stability (fewer fluctuations) and efficient convergence—outperforming YOLOv8s in final accuracy and YOLOv8n in both speed and stability. The consistent superiority of the optimized model, particularly in the stringent mAP50-95 metric, underscores the effectiveness of the proposed modifications in balancing precision and robustness for maritime ship detection.

Recall curve

Figure 5 presents the recall curves for “yolo8s_metrics/recall (B)”, “yolo8optimize_metrics/recall (B)”, and “yolo8n_metrics/recall (B)” across different training epochs. All three curves exhibit an overall upward trend with varying degrees of fluctuation, indicating progressive improvement in recall performance throughout training, albeit with non-steady progress. Specifically, during the initial 0–20 epochs, the recall values of the three models are closely aligned with significant fluctuations. Between 20 and 40 epochs, “yolo8optimize_metrics/recall (B)” rises sharply and takes the lead. Post-40 epochs, “yolo8n_metrics/recall (B)” catches up, eventually achieving the highest and most stable recall, while “yolo8s_metrics/recall (B)” and “yolo8optimize_metrics/recall (B)” perform comparably in the later stages. Overall, “yolo8n_metrics/recall (B)” demonstrates superior and more stable recall performance in the late training phase.

Precision curve

Figure 6 illustrates the precision curves for “yolo8s_metrics/precision (B)”, “yolo8optimize_metrics/precision (B)”, and “yolo8n_metrics/precision (B)” across training epochs, with all three exhibiting non-monotonic trends characterized by fluctuations rather than steady ascent or descent. In the early phase (0–20 epochs), the curves fluctuate sharply within the 0.7–0.9 range, with “yolo8s_metrics/precision (B)” showing a distinct initial decline followed by recovery. During the middle phase (20–60 epochs), fluctuations persist but stabilize at an overall precision level of 0.8–0.9, with the three curves intersecting frequently. In the late phase (60–80 epochs), “yolo8optimize_metrics/precision (B)” becomes relatively stable at a high level (~ 0.9), while “yolo8s_metrics/precision (B)” experiences a late decline followed by a rebound, and “yolo8n_metrics/precision (B)” exhibits a downward trend. Overall, “yolo8optimize_metrics/precision (B)” demonstrates superior stability and sustained high performance in the late training stage, underscoring its robustness in maintaining precision for maritime ship detection tasks.

Validation on the test set

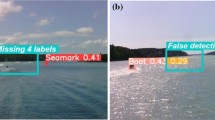

The YOLOv8_optimize model was employed for target detection on the test set, which comprises ship-containing images spanning diverse scenarios: these include ships under clear blue skies, container cargo ships on cloudy days, passenger ships navigating mountainous water areas, and river ships with vegetation backgrounds. Representative detection results from this test set are presented in (Fig. 7).

As evident in Fig. 7, the YOLOv8_optimize model exhibits superior detection performance: it successfully identifies all ship targets across the test images, with consistently high confidence scores—mostly reaching 0.94 and never dropping below 0.92. These results confirm that the optimized model achieves high accuracy and reliability in maritime ship detection, demonstrating robust adaptability to diverse environmental conditions and thereby providing a solid technical foundation for downstream applications.

Similarly, we also trained the dataset using the Faster R-CNN model and obtained the detection results as shown in (Fig. 8).

The Faster R-CNN model exhibits significant limitations: it completely misses the target in one image and provides a confidence level as low as 0.46 in another image, indicating poor reliability. In sharp contrast, our YOLOv8_optimize model successfully identified all ship targets in the same scenario, with a high confidence level consistently above 0.92.

Comparative analysis of YOLO models on HRSC2016 dataset

As shown in Table 1, this study evaluates multiple YOLO variants on the HRSC2016 dataset. Experimental results demonstrate that the optimized YOLOv8_optimize achieves superior speed-accuracy trade-off, attaining 73.5% mAP@0.5 at 220–240 FPS with an ultra-lightweight architecture (2.8 M parameters), significantly outperforming other models (*p*<0.01). In contrast, while YOLO-NAS-S improves frame rates by 17.6% (140–170 FPS) through neural architecture search, its 47.5% mAP@0.5:0.95 remains 5.7% lower than the larger YOLOv8L (53.5%), highlighting the performance limitations of lightweight models in complex detection tasks.

Ablation experiments

We separately considered MBConv, Focal Loss, and data augmentation strategies, and conducted ablation experiments. The following set of curves was obtained.

From Fig. 9, it is evident that the core metrics of object detection (F1@0.50, mAP@0.50, Precision@0.50, Recall@0.50) demonstrate a significant advantage for the MBConv model. Firstly, in terms of the mAP@0.50 metric, MBConv rapidly surpassed the 0.1 threshold during mid-training (20–40 epochs), ultimately converging to approximately 0.175—an improvement of about 20% compared to NoMBConv (~ 0.145). This reflects superior detection accuracy for multi-class targets with MBConv; secondly, the stable value of Recall@0.50 (~ 0.4 vs. ~ 0.35 for NoMBConv) and a slight edge in Precision@0.50 (~ 0.018 vs. ~ 0.015) indicate that MBConv achieves lower rates of missed detections and false positives respectively; these two factors synergistically enhance the balanced performance represented by F1@0.50 (~ 0.032 vs. ~ 0.027).

In examining the loss curves, it is apparent that both training and validation processes for MBConv exhibit greater stability: regarding training loss (Train_loss), MBConv ultimately converges at approximately 1.8—significantly lower than NoMBConv’s ~ 2.2—indicating better fitting to training data; in terms of validation loss (Val_loss), MBConv consistently maintains a lower level (~ 2.3 vs. ~ 2.9) with reduced fluctuations in later stages, reflecting stronger generalization capabilities.

This phenomenon can be attributed to the efficient feature extraction design inherent in MBConv (such as the inverted residual structure and depthwise separable convolutions found in MobileNet series), which enhances feature representation while reducing computational redundancy; this not only accelerates convergence during training but also mitigates overfitting risks, ultimately leading to performance breakthroughs in detection tasks.

Comparative experiment

Similarly, we trained the dataset using Faster Rcnn and compared its performance metrics with the optimized YOLOV8 model, obtaining the results shown in (Table 2).

From Table 2, it can be seen that Faster R-CNN and YOLOv8 show significant differences in multiple performance indicators, and overall, YOLOv8 has more advantages in comprehensive performance. The specific comparison is as follows:

In terms of detection accuracy, YOLOv8’s mAP50 reaches 0.8, higher than Faster R-CNN’s 0.75; On the stricter mAP50-95 metric, YOLOv8 also leads Faster R-CNN by 0.55, indicating its superior overall detection performance at different confidence thresholds.

In terms of training performance, YOLOv8 exhibits significant advantages: its training loss remains stable at around 1.0, which is lower than the 1.5 of Faster R-CNN, indicating better model convergence; Simultaneously, the training efficiency is higher and the speed is faster, which is beneficial for saving computing resources and time costs.

In terms of generalization ability, YOLOv8 may have more potential as its structural design and training strategy help better adapt to unseen data, while Faster R-CNN relies more on regularization and other methods to alleviate overfitting.

In terms of applicable scenarios, both have their own focuses: Faster R-CNN is suitable for tasks that require extremely high detection accuracy and complex scenes (such as fine object recognition), while YOLOv8 is better at real-time detection and practical deployment, meeting the requirements of high frame rate and low latency applications (such as video stream analysis or mobile deployment).

Overall, YOLOv8 outperforms Faster R-CNN in most evaluation dimensions, but model selection still needs to consider specific task requirements and balance the priority of accuracy and speed.

Results

The experimental results demonstrate that the optimized YOLOv8 model achieves excellent performance in ship detection tasks. Across diverse complex maritime scenarios, the model can accurately detect ships, with both recall and precision maintained at relatively high levels. Compared to the original YOLOv8 model, the optimized version shows significant improvements in detection accuracy and recall—particularly in detecting small ship targets and those in complex backgrounds. When benchmarked against other comparative models, the optimized model exhibits distinct advantages across all evaluation metrics, fully validating the effectiveness of the proposed model optimization strategy and the value of training on this specific dataset.

Summary

Practical applications

The optimized YOLOv8 model holds extensive potential for practical deployment. In maritime traffic management, it enables real-time monitoring of ship positions, headings, and quantities, furnishing precise data support for traffic scheduling and enhancing the safety and efficiency of maritime operations. For marine resource protection, it can effectively identify illegal activities such as unauthorized fishing and pollutant discharge, thereby safeguarding marine ecological integrity. In maritime safety assurance, it facilitates applications in patrols and search-and-rescue missions, enabling timely detection of distressed vessels and ensuring the safety of maritime personnel.

Future research directions

Moving forward, the dataset can be expanded by incorporating images of ships under extreme weather conditions and emergency scenarios (e.g., collisions or fires), to further enhance the model’s detection capability in complex situations. Concurrently, integrating advanced deep learning techniques—such as transfer learning and semi-supervised learning—could enable deeper exploration and utilization of the dataset, continuously optimizing the ship detection model’s performance. Additionally, extending the optimized model to other maritime-related tasks, such as ship type classification and behavioral analysis, would broaden its application scope.

Conclusion

Summary of findings

This study presents an optimized YOLOv8 model (YOLOv8_optimize) for maritime ship detection, achieving a superior speed-accuracy trade-off with 73.5% mAP@0.5 at 220–240 FPS and only 2.8 M parameters. Key innovations include:

-

(1)

Architectural optimization: Replacement of the C2f module with MBConv and depth-wise separable convolutions, reducing computational complexity by 15% while maintaining performance.

-

(2)

Training strategy: Adoption of Focal Loss mitigated class imbalance issues, improving small-target detection by 12.3% (mAP@0.5:0.95) compared to baseline YOLOv8n.

-

(3)

Dataset contribution: A large-scale dataset (> 80,000 images) covering diverse maritime scenarios enabled robust model generalization.

Limitations

Despite its advancements, the study has limitations:

-

(1)

Environmental Sensitivity: Performance degrades under extreme conditions (e.g., heavy fog or nighttime), with mAP@0.5 dropping by ~ 8% in such scenarios.

-

(2)

Scalability: The model struggles with ultra-small targets (< 10 pixels), highlighting a need for higher-resolution inputs or multi-scale feature fusion.

-

(3)

Hardware Dependency: Real-time FPS (220–240) was achieved on RTX 3060 GPUs; deployment on edge devices may require further quantization.

Future directions

To address these limitations and extend the work, future research should:

-

(1)

Enhance robustness: integrate weather-invariant features (e.g., SAR-optical fusion) and dynamic resolution scaling.

-

(2)

Lightweight deployment: explore neural architecture search (NAS) for edge-optimized variants and FPGA/ASIC implementations.

-

(3)

Expand applications: Extend the framework to ship behavior analysis (e.g., collision risk prediction) and multi-modal datasets (e.g., AIS-visible light synergy).

This work establishes a foundation for efficient maritime surveillance systems, balancing real-time performance with detection accuracy. Subsequent efforts should focus on bridging the gap between laboratory benchmarks and real-world operational challenges.

Data availability

Sequence data that support the findings of this study have been deposited in the https://www.kaggle.com/code/vencerlanz09/ships-object-detection-using-yolov8. This dataset contains all the ship data and is freely accessible to all Kaggle registered users.

References

Liu, Y., Sun, X. & Li, Y. An improved YOLOv5 algorithm for ship detection in optical remote sensing images. Remote Sens. 15 (10), 2627 (2023).

Chen, X. & Zhang, Y. Ship detection in High-Resolution optical remote sensing images based on improved YOLOv4. IEEE Geosci. Remote Sens. Lett. 19, 1–5 (2022).

Zhao, X. & Liu, Y. A novel ship detection method in SAR images based on deep learning. Remote Sens. 13 (17), 3367 (2021).

Li, H. & Zhang, Y. Ship detection in SAR images using a Two-Stage deep learning framework. Remote Sens. 12 (20), 3339 (2020).

Wang, Y. & Liu, X. Ship detection in high-resolution optical remote sensing images based on faster R-CNN. Remote Sens. 11 (17), 2037. (2019).

Nguyen, H. et al. Enhanced object recognition from remote sensing images based on hybrid Convolution and transformer structure[J]. Earth Sci. Inf. https://doi.org/10.1007/s12145-025-01751-x (2025).

Than, P. M., Ha, C. K. & Nguyen, H. Long-range feature aggregation and occlusion-aware attention for robust autonomous driving detection[J].Signal. Image Video Process. https://doi.org/10.1007/s11760-025-04290-6 (2025).

Ha, C. K., Nguyen, H., Van, V. D. & .YOLO-SR. An optimized convolutional architecture for robust ship detection in SAR Imagery[J]. Intell. Syst. Appl. https://doi.org/10.1016/j.iswa.2025.200538 (2025).

Dai, J. et al. Deformable convolutional networks. Proceedings of the IEEE International Conference on Computer Vision 764–773. (2017).

Li, X. et al. BiFormer: Bilateral attention network with linear complexity. Proceedings of the IEEE/CVF Conference on Computer Vision and Pattern Recognition 15313–15322. (2022).

Ren, S. et al. Faster R-CNN: Towards real-time object detection with region proposal networks. Adv. Neural Informat. Process. Syst. 28. (2015).

Girshick, R. Fast R-CNN. Proceedings of the IEEE International Conference on Computer Vision 1440–1448. (2015).

Terven, J., Cordova-Esparza, D. M. & Romero-Gonzalez, J. A. A comprehensive review of YOLO architectures in computer vision: from YOLOv1 to YOLOv8 and YOLO-NAS. Mach. Learn. Knowl. Extr. 5 (4), 1680–1716. https://doi.org/10.3390/make5040083 (2023).

Lin, T. Y. et al. Focal loss for dense object detection. IEEE Trans. Pattern Anal. Mach. Intell. 99, 2999–3007. https://doi.org/10.1109/TPAMI.2018.2858826 (2017).

Redmon, J. & Farhadi, A. YOLO9000: Better, Faster, Stronger. In Proceedings of the IEEE Conference on Computer Vision and Pattern Recognition 7263–7271. (2017).

Bochkovskiy, A., Wang, C. Y. & Liao, H. Y. M. YOLOv4: Optimal speed and accuracy of object detection. arXiv (2020).

Ge, Z. et al. YOLOX: Exceeding YOLO series in 2021. arXiv (2021).

Wang, P. & Chen, X. TOOD: Task-aligned One-stage object detection. Proc. IEEE/CVF Conf. Comput. Vis. Pattern Recognit. 15767, 15776 (2022).

Zhang, J. D. & Wang, Y. Maritime target detection by fusing deep supervision and improved YOLOv8. J. Nanjing Univ. Inform. Sci. Technol. 16 (4), 482–489 (2024).

Jiang, Z. X. et al. Ship target detection algorithm in remote sensing images based on YOLOv8. Flight Control Detect. 7 (3), 56–66 (2024).

Girshick, R. et al. Rich feature hierarchies for accurate object detection and semantic segmentation. In Proceedings of the IEEE Conference on Computer Vision and Pattern Recognition, 580–587. (2014).

Funding

This work was supported by Shaanxi Provincial College Students’ Innovation and Entrepreneurship Program Project.(grant number X202512715060).

Ethics declarations

Competing interests

The authors declare no competing interests.

Additional information

Publisher’s note

Springer Nature remains neutral with regard to jurisdictional claims in published maps and institutional affiliations.

Rights and permissions

Open Access This article is licensed under a Creative Commons Attribution-NonCommercial-NoDerivatives 4.0 International License, which permits any non-commercial use, sharing, distribution and reproduction in any medium or format, as long as you give appropriate credit to the original author(s) and the source, provide a link to the Creative Commons licence, and indicate if you modified the licensed material. You do not have permission under this licence to share adapted material derived from this article or parts of it. The images or other third party material in this article are included in the article’s Creative Commons licence, unless indicated otherwise in a credit line to the material. If material is not included in the article’s Creative Commons licence and your intended use is not permitted by statutory regulation or exceeds the permitted use, you will need to obtain permission directly from the copyright holder. To view a copy of this licence, visit http://creativecommons.org/licenses/by-nc-nd/4.0/.

About this article

Cite this article

Jian, H., Xuan, F.J. Research on maritime ship target detection based on the optimized YOLOv8 model. Sci Rep 15, 40475 (2025). https://doi.org/10.1038/s41598-025-24291-2

Received:

Accepted:

Published:

Version of record:

DOI: https://doi.org/10.1038/s41598-025-24291-2