Abstract

The sense of agency (SoA), the subjective feeling of causing and controlling action outcomes, is fundamental to how we engage with the world. In the present study, we investigated how SoA is influenced when individuals unintentionally elicit emotional expressions in others. In Experiment 1, participants freely selected actions that triggered either happy or sad facial expressions in the humanoid robot iCub. However, in 20% of the trials in which participants intended to elicit a happy expression, the robot unexpectedly displayed a sad one. Results showed reduced SoA when participants accidentally made iCub display a sad expression, compared with when they elicited it intentionally. Experiment 2 replicated Experiment 1 with design adjustments to improve experimental control. The results mirrored those of Experiment 1, with SoA again lower for unexpected sad expressions than for intentional ones. In Experiment 3, to determine whether the effect was due to the emotional content or simply to outcome predictability, we replaced the expressions with emotionally neutral color changes of iCub’s facial LED lights. The results showed no significant difference in SoA between accidental and intentional outcomes, suggesting that emotional content played a key role in Experiments 1 and 2. These findings indicate that SoA is specifically affected when emotional expressions occur as action outcomes, in ways that go beyond the role of outcome predictability.


Introduction

In daily life, people navigate environments in which their actions lead to a variety of outcomes. The sense of agency, the subjective experience that ‘I’ am the one generating an action and its subsequent effects1,2, plays a central role in shaping our interactions with the physical and social environment. This perceived control influences our sense of authorship and efficacy in determining outcomes, whether we are manipulating objects or influencing the behavior of others. To assess the sense of agency, researchers often employ implicit measures to avoid the potential biases inherent in explicit judgments3. A widely used implicit measure of the sense of agency is the intentional binding effect, which captures the perceived temporal compression between a voluntarily generated action and its outcome2,4,5. Stronger binding (a higher degree of temporal compression) is thought to indicate an increased sense of agency, suggesting a closer perceived connection between an individual’s action and its effect (for a review, see6). However, recent studies have also argued that intentional binding might reflect sensory or causal processing rather than agency per se7,8,9,10.

Humans are sensitive to the effects of their actions from early in development. Social development theories suggest that infants quickly learn the contingencies between their actions and external responses, fostering social engagement and positive affect11,12. This early sensitivity to action-outcome relations aligns with models of action control, such as forward and comparator models13,14,15. These models propose that the sense of agency depends on the match between predicted and actual outcomes: when outcomes unfold as expected, the sense of agency is enhanced; when they deviate, it diminishes.

In social contexts, our actions can elicit emotional reactions in others, such as a smile or a frown. These responses serve as feedback and can influence how we perceive control over the interaction. Research suggests that emotionally charged outcomes can shape the sense of agency, depending on whether they are positive or negative (see16,17 for a review). However, the findings are mixed. Some studies indicate that negative affective outcomes reduce the sense of agency, potentially due to a self-serving bias, where individuals distance themselves from negative outcomes and take ownership of positive or neutral ones18,19,20,21. For example, Yoshie and Haggard21 found that participants’ sense of agency diminished when their actions produced negative emotional vocalizations, which was interpreted as a reluctance to accept negative responsibility. Similarly, Gentsch et al.19 observed that participants were more likely to attribute positive emotional expressions (i.e., happy expressions) to their own actions, while attributing negative outcomes (i.e., angry expressions) to external sources.

Other studies, however, suggest that outcomes with negative valence might be associated with an enhanced experience of agency. For example, Beck et al.22 found that choices with different probabilities increased implicit agency when the outcome was painful heat but not when it was a non-painful electric stimulus. Di Costa et al.23 reported a post-error boost in agency, highlighting the role of negative feedback in learning. Caspar et al.24 found that participants showed stronger intentional binding when they freely chose to induce financial or physical harm to others, compared to when they acted under coercion. On the other hand, Tanaka and Kawabata25 showed that the influence of outcome valence on the sense of agency might depend on the interaction between action choice and predictability. Further complicating the picture, some studies found no effect of outcome valence on intentional binding26,27,28.

Importantly, intentions themselves might also modulate the sense of agency in these contexts. For example, in a series of experiments, Sarma & Srinivasan29 investigated how explicit intentions influence sense of agency. Participants were asked to form intentions and choose actions that resulted in either a negative (e.g., disgust or angry faces) or positive (e.g., happy) outcome. The actual result was unpredictable and could therefore differ from the initial intention. Their findings showed that negative emotional expressions, when explicitly intended, increased the sense of agency compared to positive ones.

These contrasting results might be attributed to various factors, such as the fluency of action selection (i.e., the ease and smoothness with which participants made decisions between different action options, possibly facilitated by cues or priming)30, the number of action choices26, the identity of action effects31, or the influence of predictive and postdictive processes32,33 among others. However, one common feature of many studies is that, even when outcome occurrence is probabilistic (e.g., 75%), such as in Christensen et al.34, outcome valence is typically either fully predictable (e.g., always negative) or unpredictable (e.g., 50/50), with no conditions in which valence is mostly expected but occasionally violated. This binary approach overlooks a key question: What happens when we accidentally elicit an outcome that is opposite to what we intended? For example, if one aims to make someone happy but accidentally causes sadness, how does this mismatch between the desired and actual outcome affect the sense of agency?

Aim of the study

The present study aimed to investigate how the sense of agency is impacted when accidental (unintended) outcomes manifest as the emotional facial expressions of others. We chose to use emotional facial expressions as outcomes because they can act as salient affective signals, serve as intrinsic rewards35, and can significantly influence decision-making processes and the affective states of others36. Emotional facial expressions are highly relevant in everyday interactions, whether these occur in physical or digital contexts. Compared to simple stimuli such as a sound elicited by a button press, emotional expressions serve as socially meaningful feedback. Indeed, emotional expressions of others are among the most ubiquitous action outcomes – from birth onward, we become attuned to observing and interpreting others’ expressions as feedback to our own actions.

In this study, we employed the humanoid robot iCub37 to systematically examine the impact of unintentional emotional expressions on the sense of agency. Using a humanoid robot provides several advantages over other approaches. In comparison to traditional experimental paradigms that typically present emotional faces on screens, using a robot allows participants to interact with a physical agent in a shared environment. This offers higher ecological validity compared to observing virtual faces on a monitor. Humanoid robots can physically occupy shared spaces and engage in lifelike social interactions, mimicking the dynamics of real-world human exchanges. In comparison with human-human interactions, on the other hand, the use of robots like iCub ensures greater controllability over experimental variables, as the robot’s responses can be precisely programmed and timed, allowing for consistent and repeatable expressions across trials. Moreover, research has shown that humans might perceive, and are likely to respond to, the emotional facial expressions of robots in ways that resemble their responses to human expressions38,39,40,41, making it feasible to study social-cognitive processes in an innovative, yet controlled, setting.

In Experiment 1, participants were given the choice of showing one of two objects to iCub, which would elicit either a happy or a sad facial expression in the robot. However, unbeknownst to the participants, there was a 20% probability that the robot would display a sad expression when a happy one was intended. This manipulation allowed us to compare participants’ sense of agency when they elicited an intended versus an unintended sad expression. We hypothesized that participants would experience a reduced sense of agency when they accidentally made iCub sad compared to when this outcome was elicited intentionally. To assess intentional binding and hence implicit sense of agency, we employed the interval estimation method, wherein participants judged the perceived duration between their action and the resulting effect.

In Experiment 2, we sought to replicate the findings of Experiment 1 with an improved experimental design. In Experiment 3, we aimed to determine whether the effect of intended versus unintended sad expressions was due to the emotional content or simply to the outcome being unexpected.

Experiment 1

Method and materials

Participants

Thirty-four volunteers participated in the study, with an average age of 23.79 years (SD = 4.19); 8 were male and 1 was left-handed. An a priori power analysis was conducted using G*Power 3.1.9.7 (F-tests, repeated-measures ANOVA, within-subjects design). Assuming a medium effect size (f = 0.25) and α = 0.05, the analysis indicated that a minimum of 22 participants would be required to achieve 95% power. All participants provided informed consent and reported having normal or corrected-to-normal vision. The study was conducted in accordance with the Code of Ethics of the World Medical Association (Declaration of Helsinki) and was approved by the local ethical committee (Comitato Etico Regione Liguria). Participants were naïve to the purpose of the study before the experiment and were debriefed afterward. They were all compensated with 10 euros for their participation.
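The logic of such a power analysis can be sketched with the noncentral F distribution. This is a minimal sketch, assuming the within-factors formula λ = f²·m·N·ε/(1 − ρ) with df1 = (m − 1)ε and df2 = (N − 1)(m − 1)ε, as we understand G*Power to implement it. The number of repeated measurements m, the correlation among them ρ, and the nonsphericity correction ε are not reported above, so the defaults below are placeholders, and the sketch is not claimed to reproduce the reported minimum of 22 participants.

```python
from scipy import stats


def rm_anova_power(n, f=0.25, alpha=0.05, m=3, rho=0.5, eps=1.0):
    """Power of a within-subjects F test for n participants.

    m, rho and eps are illustrative assumptions (number of repeated
    measurements, correlation among them, nonsphericity correction).
    """
    lam = f ** 2 * m * n * eps / (1.0 - rho)    # noncentrality parameter
    df1 = (m - 1) * eps
    df2 = (n - 1) * (m - 1) * eps
    f_crit = stats.f.ppf(1.0 - alpha, df1, df2)  # critical F under H0
    return stats.ncf.sf(f_crit, df1, df2, lam)   # P(F > f_crit) under H1


def min_sample_size(target_power=0.95, max_n=1000, **kwargs):
    """Smallest n whose power reaches target_power, or None."""
    for n in range(2, max_n + 1):
        if rm_anova_power(n, **kwargs) >= target_power:
            return n
    return None


if __name__ == "__main__":
    n = min_sample_size()
    print(n, round(rm_anova_power(n), 3))
```

Changing m or ρ changes the required sample size substantially, which is why these inputs would need to be reported for the calculation to be reproducible.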

Apparatus and stimuli

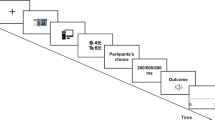

The experimental setup (Fig. 1) employed an adapted version of the iCub humanoid robot, consisting of iCub’s head mounted on a 3D-printed torso and placed on an 82-cm-high support platform. The participants’ workspace included two 21-inch monitors with a refresh rate of 60 Hz and a resolution of 1920 × 1080, positioned laterally on either side of the table. The left monitor was oriented vertically and faced iCub, aligned with the robot’s gaze during the experiment to simulate the robot’s engagement with the displayed stimuli. The right monitor was used by participants for viewing instructions and performing the interval estimation task.

Participant input was collected with two devices: a button box for selecting actions and a mouse for the interval estimation task. iCub’s facial expressions and the LED colors on its face were controlled using the YARP framework42, specifically through the faceExpression module, which managed the movements and color changes of iCub’s mouth and eyebrows. The displayed expressions (happy, sad, and neutral) were all rendered with red LEDs to enhance salience. Experiment control and data collection were conducted with PsychoPy (v2022.2.4)43.

Procedure

In a dimly lit room, participants were seated directly across from iCub, approximately 80 cm away, at eye level, to facilitate naturalistic interaction. While instructions were given, iCub’s expression was neutral and its gaze was directed towards the participant. When the training session started, iCub’s gaze shifted towards its screen, while its head remained fixed.

Participants were informed that iCub “loves screwdrivers” and thus displays happy expressions when it sees one, and that it “is not a fan of hammers,” which leads to sad expressions when it is presented with a hammer. In this context, participants were asked to choose one of two objects to show iCub on its screen. After their selection, there was a programmed delay before iCub’s expression changed from neutral to either happy or sad. The delays were set at 300 ms, 500 ms, or 800 ms and randomized across trials. The expression change lasted 1250 ms, to support late-stage emotional processing and reduce variability due to individual differences in attentional biases (see44). Participants were then presented with an interval estimation slider on their screen, and iCub’s expression returned to neutral. The slider ranged from 0 to 1000 ms, with demarcations only at the start, middle, and end, to avoid biasing participants’ judgments with numeric labels. Participants were instructed not to count but to intuitively estimate the elapsed time and indicate it on the slider. The positions of the objects on the screen (left/right) were swapped on every trial.
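A trial schedule of this kind could be generated as follows. This is a hypothetical sketch: the text states only that delays were randomized and that object positions changed on every trial, so the balanced shuffle of delays, the strict left/right alternation of the screwdriver, the linear slider mapping, and all function names are our assumptions.

```python
import random

DELAYS_MS = (300, 500, 800)  # action-outcome delays used in the task


def make_delay_schedule(n_trials, seed=None):
    """Shuffled list of delays, each used (near-)equally often.

    Balancing the three delays is an assumption; the text only says
    that delays were randomized across trials.
    """
    reps, remainder = divmod(n_trials, len(DELAYS_MS))
    delays = list(DELAYS_MS) * reps + list(DELAYS_MS[:remainder])
    random.Random(seed).shuffle(delays)
    return delays


def screwdriver_side(trial_index):
    """Screen side of the screwdriver, swapped on every trial (assumed)."""
    return "left" if trial_index % 2 == 0 else "right"


def slider_to_ms(position, max_ms=1000):
    """Map a normalized slider position in [0, 1] onto 0 to max_ms ms."""
    if not 0.0 <= position <= 1.0:
        raise ValueError("slider position must lie in [0, 1]")
    return position * max_ms
```

With 204 trials, each delay would occur 68 times under this balanced scheme.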

The acquisition phase, presented to participants as a training phase, consisted of nine trials in which object selections consistently produced the expected outcomes: selecting the screwdriver resulted in happy expressions, whereas selecting the hammer led to sad expressions. After the acquisition phase, the procedure remained the same (see Supplementary Video 1); however, an accidental outcome condition was introduced: on average, in 20% of the trials in which participants chose the screwdriver, expecting to elicit a happy expression from iCub, the robot instead displayed a sad facial expression (see Fig. 2 for the trial sequence). The occurrence of these deviations was computed dynamically during the experimental session, ensuring that the unexpected response occurred only in the specified proportion of relevant trials. We did not, however, include a condition in which participants intended a sad facial expression but saw an unintended happy one. This decision was based on two main considerations. (1) In a pilot study without accidental outcomes, in which the robot displayed only happy and sad expressions, participants significantly preferred to elicit happy rather than sad expressions (analysis provided in Supplementary Materials). Including a condition with accidental happy expressions would therefore have further reduced the number of trials with intended sad expressions available for analysis. (2) We wanted participants who chose to elicit a sad expression to always see one, so that the design could specifically test how experiencing an unintended sad expression (i.e., when a happy one was intended) influences the sense of agency compared with an intentionally produced sad expression. Keeping sad expressions fully predictable established a consistent baseline for intended negative outcomes, which allowed us to manipulate whether sad expressions were expected or unexpected.
We chose not to introduce accidental happy expressions, as doing so could have reduced the salience of unexpected sad outcomes. Furthermore, had accidental happy expressions been introduced, participants could have chosen the object that mostly elicited sad expressions in the hope of nonetheless eliciting a happy one. Finally, we reasoned that this design choice more closely reflects real-world interactions, in which unintended negative outcomes are generally more disruptive and emotionally salient than unintended positive ones. The experimental session was completed after 204 trials and lasted approximately 30 min. After completing the trials, participants were debriefed about the purpose of the experiment.
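The text does not specify how the 20% proportion was computed dynamically. One possible scheme, sketched below under that caveat, draws each accidental outcome with a probability equal to the current shortfall relative to the target, clipped to [0, 1]. This keeps the running count of accidental outcomes within one trial of 20% of the screwdriver trials so far while leaving individual trials unpredictable. The class and method names are hypothetical.

```python
import random


class AccidentalOutcomeScheduler:
    """Decides, trial by trial, whether a happy-intended choice yields a sad face.

    Hypothetical scheme: the probability of an accidental outcome equals the
    current shortfall relative to the target proportion, clipped to [0, 1].
    The running count therefore stays within one trial of target * n_trials.
    """

    def __init__(self, target=0.2, seed=None):
        self.target = target
        self.n_trials = 0       # happy-intended (screwdriver) trials so far
        self.n_accidental = 0   # accidental sad outcomes so far
        self.rng = random.Random(seed)

    def next_is_accidental(self):
        # How many accidental outcomes are "owed" if this trial is included.
        shortfall = self.target * (self.n_trials + 1) - self.n_accidental
        p = min(max(shortfall, 0.0), 1.0)
        accidental = self.rng.random() < p
        self.n_trials += 1
        self.n_accidental += int(accidental)
        return accidental
```

The scheduler would be called only on trials in which the screwdriver was chosen; hammer trials always produce a sad expression and do not update it.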

Trial sequence of Experiment 1. At the beginning of and between trials, iCub maintained a neutral facial expression. Participants were asked to select between two objects (i.e., screwdriver or hammer) to show iCub. After a delay (300, 500, or 800 ms), iCub displayed the corresponding expression: happy (screwdriver) or sad (hammer). In 20% of the trials in which participants chose the screwdriver, an accidental outcome occurred: iCub displayed a sad expression instead of the intended happy one. Participants then estimated the perceived time interval between their action and iCub’s facial expression using a slider.

Data analysis

Data from participants who chose the sad-expression outcome in fewer than 5% of trials were removed from the analysis. This exclusion criterion ensured that both outcome levels were sufficiently represented in the data, as an underrepresentation of intentional sad expressions would undermine the reliability and validity of comparisons between outcome categories. Three participants were excluded based on this criterion. Additionally, one participant was removed because they reported during debriefing that they had not noticed the accidental sad facial expressions. Thus, the final analysis was conducted with 30 participants (Mean age = 23.83, SD = 4.38, 8 males).
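The 5% criterion can be expressed as a simple filter. This is a minimal sketch assuming a hypothetical data format in which each participant’s choices are stored as a list of "happy"/"sad" labels; a participant at exactly 5% is kept, matching the criterion as stated.

```python
def apply_sad_choice_criterion(choices, min_sad_prop=0.05):
    """Split participants by the proportion of sad-expression choices.

    choices: dict mapping participant id -> list of chosen outcomes,
             each "happy" or "sad" (hypothetical data format).
    Returns (kept_ids, excluded_ids), preserving input order.
    """
    kept, excluded = [], []
    for pid, trials in choices.items():
        prop_sad = sum(c == "sad" for c in trials) / len(trials)
        if prop_sad < min_sad_prop:
            excluded.append(pid)
        else:
            kept.append(pid)
    return kept, excluded
```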

To analyze participants’ preferences for eliciting positive (happy) or negative (sad) expressions, we calculated the proportion of trials in which participants chose the action associated with happy expressions. To determine whether participants significantly preferred happy expressions over the chance level of 50%, we first assessed normality using the Shapiro-Wilk test. Due to a violation of normality (p = .005), a non-parametric Wilcoxon signed-rank test with continuity correction was conducted. This test assessed whether the proportion of choices intended to elicit happy expressions significantly exceeded 50%.

The number of observations differed between conditions because the accidental condition occurred much less frequently than the intentional conditions. We therefore used linear mixed models to analyze the effects of action outcomes (i.e., emotional expressions) on interval estimations, because they can accommodate unequal numbers of observations across conditions. The analysis was conducted in RStudio using the lme445 and lmerTest46 packages, with t-tests based on Satterthwaite’s degrees-of-freedom approximation for p-value calculation and 95% Wald confidence intervals to estimate the precision of fixed effects. To examine the influence of outcome type and delay on interval estimation, we fitted a model with outcome, delay, and their interaction as fixed effects, and a random intercept and a random slope for outcome per participant:

Model

Interval Estimation ∼ Outcome * Delay + (1 + Outcome ∣ Participant).

Outcome has three levels (intentional happy, intentional sad, and accidental sad), with accidental sad as the reference level. This model tests the hypothesis that accidental outcomes reduce participants’ sense of agency relative to intentional outcomes, and in particular relative to intentional sad outcomes, which would be reflected in longer interval estimates. The predictor delay represents the actual interval (300 ms, 500 ms, 800 ms) between the participant’s action and the robot’s expression change, and captures how the delay influences participants’ interval estimations. The interaction term assesses whether the effect of outcome type on interval judgments varies across delays. A random intercept and a random slope for outcome per participant were included to account for individual differences in baseline estimates and in sensitivity to outcome type. Because the model coefficients compare each outcome level only against the reference level (accidental sad), we conducted additional pairwise comparisons using the emmeans package47 in R. These comparisons tested all pairwise contrasts between outcome types and their interaction with delay, with multivariate t adjustment for multiple comparisons (as in48). Detailed output of the model can be found in Supplementary Materials.
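To make the treatment coding and the follow-up contrasts concrete, the sketch below builds the nine fixed-effect columns implied by Outcome * Delay and computes a contrast between marginal means averaged equally over delays, which is how emmeans averages over a factor by default. Treating delay as categorical with 300 ms as the baseline is an assumption consistent with the separate 500 ms and 800 ms coefficients reported in the Results. The level labels and function names are hypothetical, the coefficient values in the test are invented for illustration, and the multivariate t adjustment is not reproduced.

```python
OUTCOMES = ("accidental_sad", "intentional_happy", "intentional_sad")  # first = reference
DELAYS = (300, 500, 800)  # first = reference (assumed categorical coding)


def fixed_effect_names():
    """Column names of the treatment-coded design for Outcome * Delay."""
    names = ["(Intercept)"]
    names += [f"Outcome[{o}]" for o in OUTCOMES[1:]]
    names += [f"Delay[{d}]" for d in DELAYS[1:]]
    names += [f"Outcome[{o}]:Delay[{d}]" for o in OUTCOMES[1:] for d in DELAYS[1:]]
    return names


def design_row(outcome, delay):
    """Dummy-coded row for one trial; the reference cell is all zeros."""
    o = [int(outcome == level) for level in OUTCOMES[1:]]
    d = [int(delay == level) for level in DELAYS[1:]]
    return [1] + o + d + [a * b for a in o for b in d]


def marginal_mean(beta, outcome):
    """Predicted mean for one outcome, averaged equally over delays."""
    preds = [sum(x * b for x, b in zip(design_row(outcome, d), beta)) for d in DELAYS]
    return sum(preds) / len(preds)


def contrast(beta, level_a, level_b):
    """Difference of equally weighted marginal means (a minus b)."""
    return marginal_mean(beta, level_a) - marginal_mean(beta, level_b)
```

With an outcome coefficient of -30 for intentional sad and an intentional sad × 800 ms interaction of -9, the accidental minus intentional sad contrast is 30 + 9/3 = 33, showing that the marginal contrast averages the interaction over the three delays.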

As a manipulation check to examine whether the unexpected nature of the outcomes affected response times (i.e., the time it took participants to make an interval estimation after seeing the robot’s expression), we fitted a linear mixed model with outcome, delay and their interaction as fixed effects, and a random intercept for participant:

Model

Response Time ∼ Outcome * Delay + (1 | Participant).

This simplified model was selected because including outcome as a random slope resulted in a singular fit.

Results and discussion

Participants’ choices

The analysis revealed a clear preference for choices that participants believed would lead to positive expressions (see Fig. 6), with actions intended to elicit a happy expression accounting for 64.8% of all trials. Given that participants could only choose between eliciting a happy or a sad expression on each trial, we tested whether happy choices occurred significantly more often than the 50% chance level. The proportion of choices intended to generate a happy expression significantly exceeded 50% (V = 450.5, p < .001), showing that participants were reliably more likely to choose the action expected to lead to the positive outcome.

Effects of outcome on interval estimations

The analysis (see Supplementary Table S1) demonstrated a significant main effect of delay on interval estimations, as expected (500 ms: β = 65.93, t = 4.06, p < .001, 95% CI [34.09, 97.77]; 800 ms: β = 142.35, t = 8.69, p < .001, 95% CI [110.25, 174.44]), indicating that participants were able to perceptually distinguish between the different delay levels. Regarding outcome type, pairwise comparisons (see Fig. 3) showed a significant difference between accidental sad and intentional sad expressions, β = 30.0, z = 2.59, p = .026, 95% CI [2.86, 57.1], with longer interval estimations for accidental than for intentional sad expressions. However, neither the difference between accidental sad and intentional happy, β = 15.5, z = 1.25, p = .423, 95% CI [-13.55, 44.6], nor that between intentional happy and intentional sad, β = 14.5, z = 1.05, p = .545, 95% CI [-17.88, 46.8], reached significance. Regarding the interaction between outcome and delay, pairwise comparisons (see Supplementary Table S2) did not reveal any significant differences between outcomes at the same delay level (all ps > .27).

Experiment 1: Raincloud plot shows the participant-level interval estimates across the three outcome conditions (Accidental Sad, Intentional Sad, Intentional Happy). Each dot represents an individual participant’s mean estimate; half-violins show the distribution of participant means, boxplots indicate the median and interquartile range, and grey lines connect each participant’s values across conditions. Black diamonds with error bars represent the model-estimated marginal means with 95% confidence intervals. * indicates statistical significance at p < .05.

Effects of outcome on response times

The results (see Fig. 8) showed that accidental sad expressions significantly increased response times compared to both intentional happy, β = 0.48, z = 13.34, p < .0001, 95% CI [0.40, 0.57], and intentional sad expressions, β = 0.51, z = 13.36, p < .0001, 95% CI [0.42, 0.60]. The difference between intentional happy and intentional sad was not significant, β = 0.03, z = 1.15, p = .476, 95% CI [-0.03, 0.09]. These elevated response times in the accidental condition suggest that participants were likely surprised by the unexpected outcomes.

Discussion

Experiment 1 aimed to examine how the sense of agency is impacted when one’s own actions unexpectedly produce sad facial expressions in others, compared to when the same sad expressions are intentionally generated. To this end, participants were asked to choose objects that would trigger a specific emotional facial expression in the humanoid robot iCub. However, when participants selected the object associated with happiness, there was a 20% chance that the robot would instead display a sad expression (i.e., accidental sad). Participants then estimated the delay between their action and the robot’s response (its emotional expression), which allowed us to assess intentional binding as an implicit measure of the sense of agency.

Results showed that interval estimations were significantly longer when participants encountered accidental sad outcomes than when they encountered intentional sad outcomes. This suggests that when participants failed to achieve their intended emotional expression, their sense of agency was attenuated. This result is consistent with a comparator model of the sense of agency, which proposes that a mismatch between predicted and actual outcomes decreases the sense of agency. In our study, participants were explicitly told that choosing certain objects would lead to specific emotional expressions (i.e., screwdriver leading to happiness, hammer leading to sadness), and when this prediction failed (i.e., an accidental sad expression was displayed), the sense of agency was disrupted. This disruption also aligns with Brandi et al.’s49 concept of social agency, which posits that agency is shaped not only by motor actions and their outcomes but also by one’s control over social interactions. In the accidental sad condition, the robot’s emotional expression violated participants’ expectations, leading to a diminished sense of agency. In addition, participants responded more slowly when judging the delay between action and outcome after accidental sad expressions. This increase in response times can be interpreted as a consequence of the mismatch between expected and observed outcomes, with unexpected outcomes requiring longer processing. Reassessing one’s actions and their consequences likely consumes additional cognitive resources, slowing responses and possibly involving an adjustment of internal models of causality50,51.

In addition, results regarding action selection revealed a clear preference for eliciting happy expressions (i.e., choosing the screwdriver over the hammer). This preference can be explained not only by social desirability and prosocial behavior, but also by emotional contagion. As Hatfield et al.52 suggest, exposure to others’ emotional displays can induce a corresponding emotional state in the observer; seeing a happy face might in turn elicit happiness. Moreover, this strong preference suggests that happy expressions might hold greater subjective value for participants. As proposed by Antusch et al.53, outcomes with higher subjective value tend to enhance intentionality, which might, in turn, reinforce the sense of agency.

Interestingly, despite participants’ clear preference for outcomes with positive valence (i.e., happy facial expressions), the analysis revealed no significant difference in interval estimations between intentional happy and accidental sad outcomes. This result may reflect the uncertainty inherent in the intentional happy condition, that is, its lower predictability. When participants chose the screwdriver, there was an 80% chance of eliciting a happy expression, but the remaining 20% chance of a sad outcome likely created a persistent sense of unpredictability. Even when their intention was fulfilled, participants might not have felt entirely in control, because they had learned that their action could always produce an unintended outcome. This uncertainty might have diminished their overall sense of agency in this condition, even when the intended happy expression appeared.

By contrast, the significant difference between the intentional sad and accidental sad outcomes may reflect the fact that the intentional sad outcome always matched participants’ expectations. When participants chose the hammer, it always produced a sad expression, providing them with a more consistent sense of control over the outcome. This predictability likely reinforced their sense of agency, as the outcome was entirely aligned with their intention and expectations. An alternative explanation, as demonstrated in the study by Sarma & Srinivasan29, is that negative facial expressions might enhance the sense of agency when they are intentionally elicited and successfully achieved. The authors suggest that in socially relevant contexts, particularly when negative intentions are involved, the sense of agency might be driven less by seeing the expected outcome and more by the anticipation of the social or emotional consequences (e.g., retaliation) of intentionally eliciting negative expressions. In these situations, prediction might become less central, as the anticipation of negative social consequences might play a more prominent role in shaping the sense of agency29. However, this interpretation seems less applicable to our experiment, in which the choice that elicited a negative expression in iCub carried no possibility of further social consequences.

In conclusion, the findings from Experiment 1 suggest that participants’ sense of agency was primarily shaped by the congruence between their intended actions and the resulting outcomes. When their intended positive outcome was disrupted by an accidental sad expression, participants experienced a reduced sense of agency compared to when they intentionally elicited a sad expression, underscoring the role of intentionality and predictability in modulating agency.

To build on these findings and account for potential alternative explanations, we conducted a second experiment to replicate the main effect found in Experiment 1 while varying object-emotion pairings, adding eyebrow movement to the happy expression for visual comparability with the sad expression, and changing object positions across blocks.

Experiment 2

Methods and materials

Participants

A new sample of thirty-five volunteers participated in Experiment 2 (age: M = 32.28 years, SD = 11.19; 20 females, 3 left-handed). Sample size was determined using the same approach as in Experiment 1. All participants had normal or corrected-to-normal vision and were naïve to the study’s purpose. Informed consent was obtained, and the study complied with the same approved ethics protocol as in Experiment 1. Participants received 10 euros for their participation.

Apparatus, stimuli and procedure

The experimental setup was similar to that of Experiment 1; however, we introduced three specific adjustments to refine the design and to control for possible influences of factors outside our research interest. First, because tools such as hammers and screwdrivers can afford different types of actions, and tool-related affordances have been shown to influence action selection, perceptual processing, and anticipatory mechanisms54,55,56, we counterbalanced the object-outcome mappings across participants to control for potential biases that might be introduced by object identity. For half of the participants, the hammer triggered a happy expression and the screwdriver a sad one; for the other half, this mapping was reversed. Second, in Experiment 1, the robot’s sad expression involved both eyebrow and mouth movement, whereas the happy expression relied solely on mouth movement. To ensure that both expressions engaged comparable facial features, the happy expression was modified to also include upward eyebrow movement (see Supplementary Video 2). Third, instead of swapping the object positions on the left and right side of the screen on every trial, which might induce spatial compatibility effects, their positions were blocked. Each object remained on the same side for 15 consecutive trials before switching, and this alternation continued throughout the experiment.
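The counterbalancing and blocked positions can be sketched as two small functions. This is a hypothetical sketch: the rule assigning mappings by participant parity, the starting side, and the function names are assumptions; only the two mappings and the 15-trial blocks come from the text. With 210 trials, this yields 14 blocks and 13 position switches.

```python
def object_mapping(participant_index):
    """Counterbalanced object-outcome mapping (parity rule is assumed).

    Even-indexed participants: hammer -> happy; odd: screwdriver -> happy.
    """
    if participant_index % 2 == 0:
        return {"hammer": "happy", "screwdriver": "sad"}
    return {"hammer": "sad", "screwdriver": "happy"}


def hammer_side(trial_index, block_len=15, first_side="left"):
    """Screen side of the hammer; positions switch every block_len trials."""
    other = "right" if first_side == "left" else "left"
    return first_side if (trial_index // block_len) % 2 == 0 else other
```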

All other aspects of the apparatus, stimuli, and procedure were kept consistent with those used in Experiment 1. The experimental session consisted of 210 trials and lasted approximately 30 min. At the end of the experiment, participants completed a brief questionnaire with open-text responses exploring their subjective experience of the task (see Supplementary Materials). The questions assessed whether they noticed unexpected emotional expressions from iCub, their interpretations of these expressions, object choice preferences and motivations, emotional reactions to iCub’s expressions, and the level of empathy they felt toward iCub, rated on a scale from 0 to 10. Participants were fully debriefed after completing the experiment.

Data analysis

Participants who selected sad expressions in fewer than 5% of trials were excluded to ensure adequate representation of both outcome types across conditions (N = 3). One participant was excluded because they indicated in the questionnaire and during debriefing that they had not noticed any unexpected expressions. Another participant was excluded after reporting post-experiment that they had not understood the interval estimation task and found the robot’s facial expressions too fast to follow, resulting in noncompliant responses (i.e., consistently selecting the same range on the interval slider). Additionally, one participant deviating by more than three standard deviations from the group mean on two of the main contrasts was excluded from the primary analyses. Analyses including this participant are reported in the Supplementary Materials. The final sample consisted of 29 participants (age: M = 31.9 years, SD = 10.71; 18 females).
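The two data-driven exclusion rules can be sketched as follows. This is a minimal illustration, not the authors’ analysis code: the input layout (a per-participant proportion of sad-intended choices and per-participant values for each main contrast), the function name `flag_exclusions`, and the choice to compute group means and SDs after removing low-sad participants are all assumptions.

```python
from statistics import mean, stdev

def flag_exclusions(sad_choice_prop, contrasts, min_sad=0.05, z_cut=3.0, min_flags=2):
    """Hypothetical helper applying the two data-driven exclusion rules.

    sad_choice_prop: {participant_id: proportion of trials choosing the sad action}
    contrasts: {contrast_name: {participant_id: participant-level contrast value}}
    Returns (low_sad, outliers) as sets of participant ids.
    """
    # Rule 1: too few sad choices to represent the sad conditions adequately.
    low_sad = {pid for pid, p in sad_choice_prop.items() if p < min_sad}

    # Rule 2: count on how many contrasts each remaining participant lies more
    # than z_cut sample SDs from the group mean (assumption: group statistics
    # are computed after removing the low-sad participants).
    flags = {}
    for values in contrasts.values():
        kept = {pid: v for pid, v in values.items() if pid not in low_sad}
        vals = list(kept.values())
        m, s = mean(vals), stdev(vals)
        for pid, v in kept.items():
            if s > 0 and abs(v - m) > z_cut * s:
                flags[pid] = flags.get(pid, 0) + 1
    outliers = {pid for pid, k in flags.items() if k >= min_flags}
    return low_sad, outliers
```

Setting `min_flags=2` mirrors the criterion of deviating on two of the main contrasts; the questionnaire-based exclusions rely on self-report and are not captured by this sketch.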

The data analysis approach followed the same procedure as in Experiment 1. We used the same linear mixed-effects models to analyze participants’ interval estimations and response times. Regarding the pairwise comparisons, as we aimed to replicate the result from Experiment 1, we had a directional hypothesis a priori that the estimated interval for intentional sad would be shorter than for accidental sad. Since this was our primary comparison of interest, we used a one-tailed test for this contrast.
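For the directional contrast, a one-tailed test places the whole rejection region in the predicted tail (accidental sad minus intentional sad > 0). A minimal sketch, assuming a standard normal reference distribution for the z statistic and no multiple-comparison adjustment; the helper names are illustrative, and the p-values reported in this paper come from the mixed-model contrasts and are not reproduced here.

```python
import math

def upper_tail_p(z):
    """One-tailed p-value, P(Z >= z), for H1: effect > 0 under a standard normal."""
    return 0.5 * math.erfc(z / math.sqrt(2))

def two_tailed_p(z):
    """Two-tailed p-value, P(|Z| >= |z|), under a standard normal."""
    return math.erfc(abs(z) / math.sqrt(2))
```

When the estimate lies in the predicted direction, the one-tailed p is half the two-tailed p; when it lies in the opposite direction, the one-tailed p exceeds .5, so an effect in the unexpected direction can never reach significance under this test.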

Additionally, since participants were assigned one of two possible action-outcome pairings, where either selecting the screwdriver resulted in a happy expression and the hammer in a sad one, or the opposite pairing was used, we tested whether this object-outcome mapping influenced interval estimates. To examine this, we ran an additional model including mapping (i.e., screwdriver-happy vs. hammer-happy) as a fixed factor:

Model

Interval Estimate ∼ Outcome * Delay * Mapping + (1 + Outcome | Participant).

Results and discussion

Participants’ choices

We examined whether participants in Experiment 2, like those in Experiment 1, preferred to elicit happy expressions. A Wilcoxon signed-rank test showed that the proportion of choices intended to generate a happy expression significantly exceeded 50% (V = 406, p < .001), with participants choosing to elicit happy expressions on 63.1% of trials, consistent with the pattern found in Experiment 1 (see Fig. 6 and Supplementary Table S7).

Effects of outcome on interval estimations

The analysis (see Supplementary Table S3) revealed a significant main effect of delay on interval estimation. Interval estimations were significantly longer at 500 ms, β = 72.48, t = 4.84, p < .001, 95% CI [43.15, 101.82], and 800 ms, β = 202.13, t = 13.47, p < .001, 95% CI [172.70, 231.55], compared to 300 ms. Comparisons between outcome types (see Fig. 4) showed that accidental sad led to significantly longer interval estimations than intentional sad, β = 20.4, z = 2.52, p = .014, 95% CI [1.78, 38.9]. The differences between intentional sad and intentional happy, β = 19.4, z = 1.92, p = .120, 95% CI [-3.8, 42.7], and between accidental sad and intentional happy, β = 0.94, z = 0.07, p = .997, 95% CI [-30.47, 32.4], were not significant. To examine whether delay modulated outcome effects, we conducted direct pairwise comparisons between conditions at each delay (see Supplementary Table S4). At 300 ms, interval estimations for accidental sad were significantly longer than those for intentional sad, β = 55.21, SE = 12.9, z = 4.28, p = .002, 95% CI [15.9, 94.5]. No other comparisons were significant (all ps > .25).

Additionally, to evaluate the consistency of our findings, we performed a meta-analysis combining the mean differences and standard errors for the accidental sad versus intentional sad contrast from Experiments 1 and 2, as obtained from the linear mixed models (see Supplementary Table S8). This analysis indicated that the results were highly consistent across both experiments, with no evidence of heterogeneity (I² = 0%; see Supplementary Figure S1).
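The pooling step can be illustrated with an inverse-variance, fixed-effect meta-analysis and the I² statistic computed from Cochran’s Q. This is a sketch under assumptions: the text does not state whether a fixed- or random-effects model was used, and the input numbers below are hypothetical, not the estimates in Supplementary Table S8.

```python
import math

def fixed_effect_meta(estimates, ses):
    """Inverse-variance fixed-effect pooling with Cochran's Q and I^2.

    estimates: per-study mean differences (e.g., accidental sad - intentional sad, in ms)
    ses: their standard errors
    Returns (pooled estimate, pooled SE, Q, I^2 as a fraction in [0, 1]).
    """
    weights = [1.0 / se ** 2 for se in ses]
    total_w = sum(weights)
    pooled = sum(w * b for w, b in zip(weights, estimates)) / total_w
    pooled_se = math.sqrt(1.0 / total_w)
    # Cochran's Q: weighted squared deviations of each study from the pooled estimate.
    q = sum(w * (b - pooled) ** 2 for w, b in zip(weights, estimates))
    df = len(estimates) - 1
    # I^2 = (Q - df) / Q, truncated at 0 when Q does not exceed its degrees of freedom.
    i2 = max(0.0, (q - df) / q) if q > 0 else 0.0
    return pooled, pooled_se, q, i2

# Hypothetical inputs for two experiments (ms), for illustration only.
pooled, pooled_se, q, i2 = fixed_effect_meta([25.0, 20.0], [9.0, 8.0])
```

Here Q ≈ 0.17 on 1 degree of freedom, so I² is truncated to 0%. In general, an I² of 0% indicates that Q did not exceed its degrees of freedom, i.e., the between-experiment variation is no larger than expected from sampling error alone.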

Experiment 2: Raincloud plot shows the participant-level interval estimates across the three outcome conditions (Accidental Sad, Intentional Sad, Intentional Happy). Each dot represents an individual participant’s mean estimate; half-violins show the distribution of participant means, boxplots indicate the median and interquartile range, and grey lines connect each participant’s values across conditions. Black diamonds with error bars represent the model-estimated marginal means with 95% confidence intervals. * indicates statistical significance at p < .05.

Effects of action-outcome mapping on interval estimations

The results showed no significant main effect of the between-participants factor action-outcome mapping, β = 48.87, p = .297, 95% CI [-41.62, 139.37], and no significant interactions with outcome or delay (all ps > .05), suggesting that the specific pairings between objects and emotional expressions did not influence participants’ interval estimates.

Effects of outcome on response times

The results (see Fig. 8) demonstrated that, consistent with Experiment 1, accidental outcomes elicited significantly longer response times. Accidental sad responses were significantly slower than both intentional happy, β = 0.60, z = 15.44, p < .0001, 95% CI [0.51, 0.68], and intentional sad responses, β = 0.62, z = 15.29, p < .0001, 95% CI [0.52, 0.71]. In contrast, response times for intentional happy and intentional sad outcomes did not differ significantly, β = 0.02, z = 0.84, p = .676, 95% CI [-0.04, 0.09].

Discussion

Experiment 2 was conducted to replicate the findings of Experiment 1 while improving experimental control. Specifically, the object-emotion mappings were counterbalanced between participants so that for half of the participants, selecting the screwdriver produced a happy expression and the hammer a sad one, while for the other half this mapping was reversed. In addition, the happy facial expression was adjusted to be more perceptually comparable to the sad expression by adding eyebrow movement, which was not present in Experiment 1. Finally, to reduce potential spatial biases, the presentation of object images was modified so that each object remained in a fixed position within a block of trials, rather than swapping positions on every trial.

The results confirmed the main pattern observed in Experiment 1. Accidental sad outcomes led to longer interval estimates than intentional sad outcomes, replicating the weaker implicit sense of agency, indexed by reduced intentional binding, when the emotional expression did not match participants’ intentions. Response times were also longer following accidental sad expressions than following intentional happy or intentional sad expressions, consistent with Experiment 1.

As in Experiment 1, participants in Experiment 2 predominantly selected the action associated with a high probability (80%) of eliciting a happy expression. Specifically, this action was chosen on 64.8% of trials in Experiment 1 and 63.1% in Experiment 2. However, since 20% of these selections still resulted in a sad expression, the overall distribution of happy and sad expressions was approximately balanced. This pattern might raise the question of whether “accidental” and “intentional” outcomes were fully distinct from the participants’ perspective, which could in turn influence how outcome intentionality relates to the sense of agency.
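The arithmetic behind the approximately balanced outcome distribution can be made explicit. This sketch uses the group-level choice rates reported above and the 20% reversal probability; applying it to group means rather than to each participant’s data is a simplifying assumption, and the function name is illustrative.

```python
def expected_outcome_shares(p_choose_happy, p_reversal=0.2):
    """Expected share of each outcome type given the rate of happy-intended choices.

    Happy-intended choices yield a happy face with probability 1 - p_reversal
    and an accidental sad face otherwise; sad-intended choices always yield sad.
    """
    intentional_happy = p_choose_happy * (1 - p_reversal)
    accidental_sad = p_choose_happy * p_reversal
    intentional_sad = 1 - p_choose_happy
    return {
        "intentional_happy": intentional_happy,
        "accidental_sad": accidental_sad,
        "intentional_sad": intentional_sad,
        "all_sad": accidental_sad + intentional_sad,
    }

exp1 = expected_outcome_shares(0.648)
exp2 = expected_outcome_shares(0.631)
```

For Experiment 2 this gives about 50.5% happy and 49.5% sad outcomes (for Experiment 1, about 51.8% and 48.2%), even though sad was the intended outcome on only 36.9% of trials in Experiment 2.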

Yet, while participants might have recognized the probabilistic nature of the action-outcome associations, open-text responses collected after the experiment suggest that their choices were often guided by a desire to elicit happy expressions from the robot. Several participants expressed disappointment, surprise, or discomfort when the robot unexpectedly displayed a sad expression. Although the degree of emotional engagement and intentionality varied across participants, the overall pattern supports the interpretation that sad expressions were frequently experienced as unintended within the affective and motivational context of the task.

In Experiment 2, a significant difference between accidental and intentional sad outcomes emerged at the shortest delay (300 ms), whereas no interaction with delay was observed in Experiment 1. This delay-specific effect should be interpreted with caution, as the delay factor was included only to introduce variability in action-outcome timing and was not designed to test specific delay effects. Nevertheless, prior research has shown that when outcomes follow actions closely in time, intentional binding tends to be stronger4,57. The design refinements introduced in Experiment 2 might have supported this effect by encouraging more consistent attention to the temporal link between action and outcome. However, this interpretation remains speculative, as the design modifications limit direct comparisons across experiments.

Moreover, we did not find any effect of action-outcome mapping on interval estimations, suggesting that whether participants used the screwdriver or the hammer to elicit specific emotional expressions did not significantly influence their sense of agency. Although this mapping was not controlled in Experiment 1, the replication of results in Experiment 2 suggests that the observed effects were not driven by the action-outcome pairings.

Overall, the core outcome effect observed in Experiment 1 was replicated in Experiment 2. However, the results of Experiments 1 and 2 did not resolve whether the observed effects on the sense of agency were primarily driven by the mismatch between participants’ internal predictions and the outcomes or by the affective nature of those outcomes. To address this, we conducted a third experiment in which the emotional expressions were replaced with neutral perceptual outcomes, specifically, emotionally neutral changes in the color of the robot’s facial LEDs.

Experiment 3

Methods and materials

Participants

A new sample of thirty-one volunteers participated in Experiment 3 (age: M = 30.64 years, SD = 12.15; 15 females, 4 left-handed). The sample size estimation followed the same procedure as in Experiments 1 and 2. As in the previous experiments, all participants had normal or corrected-to-normal vision and were unaware of the study’s purpose beforehand. Written informed consent was obtained prior to participation, and the study adhered to the same ethical guidelines and protocol approved for Experiments 1 and 2. All participants received 10 euros for their participation and were debriefed about the aims of the study after the experiment.

Apparatus, stimuli and procedure

The apparatus was the same as in Experiments 1 and 2, and the procedure remained largely unchanged (see Fig. 5 and Supplementary Video 3); however, participants’ self-generated actions now induced color changes rather than emotional expressions. Participants were again seated approximately 80 cm away from iCub, whose gaze was directed at them during the instruction phase and then shifted towards its screen when the acquisition phase began. iCub displayed a neutral expression throughout the experiment.

In this experiment, participants were informed that “iCub interacts with its environment in a unique way, by changing the color of its facial LEDs”, which were white by default (i.e., between trials, before a choice was made). The LEDs turned green when presented with a screwdriver and blue when shown a hammer. After the color change, participants estimated the time interval using a slider, following the same procedure as in Experiments 1 and 2. During the acquisition phase, participants completed nine trials where selecting the screwdriver consistently resulted in a green color and selecting the hammer resulted in blue. After the acquisition phase, an accidental outcome condition was introduced during the experimental trials: in 20% of the trials where participants expected green (after selecting the screwdriver), iCub unexpectedly displayed blue instead. This deviation was calculated online to maintain the specified proportion of accidental trials.
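The text states only that the deviation was calculated online to keep accidental outcomes at 20% of green-intended trials; the exact rule is not described. One way to achieve this, shown here purely as an assumption, is block randomization over screwdriver (green-intended) choices: every five such choices contain exactly one reversal in shuffled order, so the proportion stays at 20% while the position of each reversal remains unpredictable. The class and function names are hypothetical.

```python
import random

class ReversalScheduler:
    """Hypothetical online scheduler: a fixed number of reversals per shuffled block."""

    def __init__(self, block_size=5, reversals_per_block=1, seed=None):
        self.block_size = block_size
        self.reversals_per_block = reversals_per_block
        self.rng = random.Random(seed)
        self.pending = []

    def next_is_reversal(self):
        # Refill with a freshly shuffled block once the previous one is used up.
        if not self.pending:
            block = [True] * self.reversals_per_block
            block += [False] * (self.block_size - self.reversals_per_block)
            self.rng.shuffle(block)
            self.pending = block
        return self.pending.pop()

def led_color(choice, scheduler):
    """Color shown for a choice: screwdriver -> green, reversed to blue on
    scheduled trials; hammer -> always blue."""
    if choice == "screwdriver":
        return "blue" if scheduler.next_is_reversal() else "green"
    return "blue"
```

Only screwdriver choices consume the schedule, so hammer trials do not alter the 20% rate among green-intended trials. Unlike an independent 20% draw on each trial, this approach guarantees the target proportion over every five green-intended choices; the same logic could be applied to the happy-to-sad reversals of Experiments 1 and 2.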

The experimental session consisted of 204 trials and lasted approximately 30 min. Participants were fully debriefed after completing the session.

Experiment 3: Trial sequence. At the beginning of and between trials, iCub maintained a neutral facial expression. Participants were asked to select one of two objects (screwdriver or hammer) to show iCub. After a delay (300, 500 or 800 ms), iCub’s facial LEDs changed to the corresponding color: green (screwdriver) or blue (hammer). In 20% of the trials in which green was intended, an accidental outcome occurred and iCub displayed blue instead. Participants then estimated the perceived time interval between their action and iCub’s color change using a slider.

Data analysis

In Experiment 3, the outcomes were color changes instead of emotional expressions. To maintain consistency, we applied the same methods of analysis as in Experiments 1 and 2. To analyze participants’ preferences for eliciting one color over the other, we calculated the proportion of trials in which participants chose the action associated with a specific color, following the approach outlined in Experiment 1.

Results and discussion

Participants’ choices

We examined whether participants exhibited a preference for one color over the other. Participants chose to elicit the green color on iCub’s face in 52.9% of trials, but the Wilcoxon signed-rank test showed that this preference was not significantly different from 50% (V = 312, p = .052), suggesting that participants did not exhibit a clear preference for green or blue color (see Fig. 6).
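The choice analysis can be sketched in two steps: compute each participant’s proportion of green-intended choices (counting accidental blue trials under the intended green option, following the convention described for the choice figure), then test these proportions against 50% with a one-sample Wilcoxon signed-rank test, where V is the sum of the ranks of the positive differences. This standard-library sketch uses a normal approximation with tie and continuity corrections, so its p-values can differ slightly from an exact test (R’s `wilcox.test`, which reports a V statistic, uses an exact p-value by default for fewer than 50 nonzero differences without ties). The helper names and the input format, a list of (participant, intended color) pairs, are assumptions.

```python
import math

def choice_proportions(trials, target="green"):
    """trials: iterable of (participant_id, intended_color); returns {pid: proportion}."""
    counts = {}
    for pid, intended in trials:
        hits, total = counts.get(pid, (0, 0))
        counts[pid] = (hits + (intended == target), total + 1)
    return {pid: hits / total for pid, (hits, total) in counts.items()}

def wilcoxon_signed_rank(values, mu=0.5):
    """One-sample Wilcoxon signed-rank test of values against mu.

    Returns (V, two-sided p). Zero differences are dropped; p uses a normal
    approximation with tie and continuity corrections.
    """
    diffs = [v - mu for v in values if v != mu]
    n = len(diffs)
    if n == 0:
        raise ValueError("all values equal mu")
    # Round magnitudes so floating-point noise does not break genuine ties.
    mags = [round(abs(d), 12) for d in diffs]
    order = sorted(range(n), key=lambda i: mags[i])
    ranks = [0.0] * n
    tie_term = 0
    i = 0
    while i < n:
        j = i
        while j + 1 < n and mags[order[j + 1]] == mags[order[i]]:
            j += 1
        for k in range(i, j + 1):
            ranks[order[k]] = (i + j) / 2 + 1  # average of 1-based ranks i+1..j+1
        t = j - i + 1
        tie_term += t ** 3 - t
        i = j + 1
    v = sum(r for r, d in zip(ranks, diffs) if d > 0)
    centered = v - n * (n + 1) / 4
    sd = math.sqrt(n * (n + 1) * (2 * n + 1) / 24 - tie_term / 48)
    z = (centered - math.copysign(0.5, centered)) / sd if centered != 0 else 0.0
    return v, min(1.0, math.erfc(abs(z) / math.sqrt(2)))
```

Testing participant-level proportions rather than pooled trials treats each participant as one observation, so participants with many trials do not dominate the test.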

Percentage of intended choices across the three experiments. Accidental outcomes were included under the intended option (e.g., accidental sad trials were counted as happy intentions). Bars show the percentage of intended happy/green versus sad/blue choices. Error bars represent the standard error of the mean (SEM) across participants.

Effects of outcome on interval estimations

The results (see Supplementary Table S5) showed a significant main effect of delay on interval estimation (800 ms: β = 50.02, t = 3.20, p = .001, 95% CI [19.34, 80.71]; 500 ms: β = 5.45, t = 0.33, p = .739, 95% CI [-26.55, 37.44]), indicating that participants perceived the longest delay (800 ms), but not the intermediate one (500 ms), as different from 300 ms. Pairwise comparisons of outcome effects (see Fig. 7) revealed a significant difference between intentional green and intentional blue, β = -18.05, z = -3.49, p = .001, 95% CI [-30.00, -6.11], with shorter interval estimates for intentional green. However, accidental blue was comparable to both intentional green, β = 14.18, z = 1.30, p = .376, 95% CI [-10.90, 39.26], and intentional blue, β = -3.87, z = -0.34, p = .937, 95% CI [-30.50, 22.75], suggesting that the accidental outcome did not substantially affect interval estimates. Pairwise comparisons at each delay (see Supplementary Table S6) showed that intentional green and intentional blue outcomes differed significantly at the 800 ms delay, β = -28.14, z = -3.49, p = .012, 95% CI [-52.84, -3.44]. No other outcome comparisons within the same delay level were significant (all ps > .30).

Experiment 3: Raincloud plot shows the participant-level interval estimates across the three outcome conditions (Accidental Blue, Intentional Green, Intentional Blue). Each dot represents an individual participant’s mean estimate; half-violins show the distribution of participant means, boxplots indicate the median and interquartile range, and grey lines connect each participant’s values across conditions. Black diamonds with error bars represent the model-estimated marginal means with 95% confidence intervals. ** indicates statistical significance at p < .01.

Effects of outcome on response times

The analysis (see Fig. 8) revealed that, similar to Experiments 1 and 2, the unexpected outcome (i.e., accidental blue) significantly increased response times compared to both intentional green, β = 0.31, z = 7.30, p < .0001, 95% CI [0.21, 0.41], and intentional blue, β = 0.33, z = 7.88, p < .0001, 95% CI [0.23, 0.43]. There was no significant difference in response times between intentional green and intentional blue, β = 0.02, z = 0.84, p = .674, 95% CI [-0.04, 0.08].

Discussion

The aim of Experiment 3 was to examine whether the difference in the temporal compression effect observed for intentional versus accidental sad outcomes in Experiments 1 and 2 was related to the impact that emotional expressions had on participants, or was merely due to outcome predictability. To this end, we replaced iCub’s emotional facial expressions with neutral perceptual outcomes to remove the affective component. iCub’s facial expression remained neutral throughout, and participants’ action choices instead changed the color of the robot’s eyebrow and mouth LEDs. Participants followed a similar task structure, selecting objects intended to trigger specific color changes (i.e., intentional green or intentional blue). However, when participants selected the object associated with green, there was a 20% chance that the LEDs would turn blue instead (i.e., accidental blue), mirroring the protocol used to deliver unexpected outcomes in Experiments 1 and 2.

Results showed that action-outcome congruency did not modulate the sense of agency. Specifically, there was no significant difference between accidental blue and intentionally produced blue or green. This suggests that the findings from Experiments 1 and 2 were not solely driven by predictive sensorimotor mechanisms but might rather be linked to the affective nature of the outcomes. In contrast to Experiments 1 and 2, the choice frequencies of the tools were comparable; in other words, there was no significant difference in the number of times participants selected either action (i.e., selecting a hammer or screwdriver to generate the color effects). This indicates that participants did not show a strong bias toward one color, and green and blue colors were generated almost equally. This lack of preference suggests that neither color outcome held significant value for participants, or that the colors were insufficient to elicit the kind of bias seen with emotional expressions in Experiments 1 and 2.

As observed in earlier experiments, participants again exhibited longer response times (i.e., the time that elapsed between the end of the outcome display and their interval estimation response) after producing accidental blue outcomes compared to intentional green or intentional blue. This finding suggests that outcome incongruency demanded additional cognitive processing. Interestingly, however, despite recognizing the outcome as unexpected, participants’ sense of agency was not influenced in the same way as it was by the affective outcomes in Experiments 1 and 2.

Notably, participants exhibited stronger binding when they elicited intentional green compared to intentional blue. One possibility is that participants might have experienced these color changes more in terms of error monitoring or maintaining control rather than pursuing a motivated goal, such as the preference to make the robot display happy expressions in Experiments 1 and 2. The accidental blue outcome might have been perceived as an error, while successfully producing green with an 80% probability could have enhanced the sense of agency by signaling successful control over an outcome that was not fully guaranteed. In contrast, the fully predictable blue outcome might have lacked this element of uncertainty resolution. However, alternative explanations related to perceptual or cognitive processing of the outcomes remain possible.

Additionally, intentional green produced stronger binding than intentional blue at the 800 ms delay. Although shorter delays are typically associated with stronger binding, some studies have shown that binding can persist, or even increase, at longer intervals depending on task and context (see58 for a review). One possibility is that the color changes were neutral and therefore less attention-capturing, which might have required a wider time window for a detectable binding effect to emerge. In contrast, the difference between accidental sad and intentional sad outcomes in Experiment 2 emerged at the shortest delay (300 ms), suggesting that the delay at which such effects appear might also depend on the nature of the outcome (i.e., emotional versus perceptual). However, these delay-specific effects were not part of our initial hypotheses and should be interpreted cautiously.

Taken together, emotional expressions as outcomes in Experiments 1 and 2 reduced the sense of agency when they did not match participants’ intentions, whereas color-change outcomes in Experiment 3 did not reduce the sense of agency when expectations were violated. These findings suggest that the results of Experiments 1 and 2 cannot be attributed solely to the relationship between contingency and intentional binding proposed by predictive sensorimotor accounts. More broadly, predictive mechanisms alone might not fully account for the modulation of intentional binding, as suggested by previous research59,60,61,62,63. Instead, factors such as the perceived value of an outcome might be critical in determining the sense of agency.

General discussion

The present study investigated how action outcomes in the form of others’ emotional facial expressions influence the sense of agency, particularly when actions lead to unintended outcomes. In Experiment 1, participants selected objects intended to trigger either happy or sad facial expressions in the humanoid robot iCub. However, when participants selected the object associated with a happy expression, there was a 20% chance that iCub would instead display a sad expression, resulting in an accidental sad outcome. Participants then estimated the interval between their actions and the robot’s responses, and these estimates served as a measure of intentional binding, an implicit index of the sense of agency. In Experiment 2, we tested the robustness of the effect observed in Experiment 1 while improving the experimental design. The procedure was similar to Experiment 1, but the object-emotion mappings were counterbalanced across participants so that any differences in sense of agency could not be attributed to a particular object-emotion association. In addition, the happy expression was adjusted by adding eyebrow movement to better match the sad expression, and the intensity of the facial renderings was kept consistent. The positions of the objects on the screen remained fixed within each block but were switched across blocks. Experiment 2 therefore allowed us to test whether unintended emotional expressions continued to reduce the sense of agency compared to intended outcomes. In Experiment 3, we replaced iCub’s emotional facial expressions with color changes (i.e., green or blue) of its facial LEDs while its facial expression remained neutral. While the task structure remained similar, this modification allowed us to explore whether the modulations of temporal compression by emotional expressions observed in Experiments 1 and 2 were attributable to the emotional content of the outcomes or merely to prediction-based mechanisms.

Results of Experiments 1 and 2 revealed that participants experienced a significantly lower sense of agency when their action unexpectedly produced a sad expression compared to when the same sad expression was generated intentionally. In contrast, the results of Experiment 3 showed no significant difference between accidental and intentional color outcomes. These results suggest that emotional expressions might strongly influence how people evaluate the effects of their actions, in particular whether the intended outcome matches what actually occurs. In Experiments 1 and 2, iCub’s expressions likely carried enough affective significance to make achieving the intended outcome goal-relevant. In both experiments, participants consistently intended to produce positive expressions, as shown by their clear preference for actions associated with happiness rather than sadness. When participants unexpectedly saw the robot display a sad expression, their stronger engagement with happy outcomes likely amplified the impact of the prediction error, because their preferred outcome was not achieved, thereby reducing intentional binding, in line with prediction-based models of the sense of agency.

Moreover, given that sadness is a highly salient emotion, participants might have distanced themselves from the outcome in terms of agency. While they likely acknowledged their role in initiating the action, the mismatch between their intentions and the outcome might have prompted a reassessment of authorship, leading to a feeling of “I didn’t mean for this to happen”. By contrast, in Experiment 3, the neutral color changes potentially lacked this incentive value and participants did not show a strong preference for one option over the other, as indicated by comparable choice frequencies. This weaker preference might have contributed to the absence of a significant difference in sense of agency between accidental and intentional outcomes in the color-change context.

On the other hand, the results of Experiment 3 align with the findings of Desantis et al.60, who explored whether motor predictions modulate intentional binding. In a similar paradigm in which 20% of outcomes were unexpected, Desantis et al.60 observed no stronger intentional binding for congruent, relative to incongruent, outcomes. Our results are also consistent with Haering and Kiesel’s62 experiment, which tested action-effect contingencies and found no significant decrease in sense of agency when the outcomes were unexpected. Specifically, in their study, one button produced blue and the other red on 80% of trials, with the mapping reversed on the remaining 20%, a manipulation analogous to our green-associated choice occasionally producing blue. Their findings suggested that the specific mapping between actions and outcomes did not significantly impact participants’ sense of agency, which aligns with our observation that accidental blue did not reduce agency compared to intentional blue.

Similarly, the findings of Bednark et al.59 parallel the patterns observed in Experiment 3 of our study. In their EEG study, P3b ERP responses were elicited following prediction-incongruent outcomes, reflecting the canonical cognitive processing of outcome incongruency. However, despite this increased cognitive processing, Bednark et al.59 did not observe a corresponding change in N1 suppression, a neural marker associated with the sense of agency. The authors interpreted their results as indicative of context updating processes being activated when predicted sensory outcomes are violated.

Haering and Kiesel62 also noted longer response times for unexpected outcomes, which served as a manipulation check for contingency. Similarly, in our experiments, participants took longer to respond after encountering accidental outcomes compared to intentional ones, consistent with prior findings that such events may disrupt response preparation (e.g., Band et al.64). However, although longer response times were observed across all three experiments, this pattern did not consistently align with interval estimations. Notably, increased interval estimations for accidental outcomes emerged only in Experiments 1 and 2, but not in Experiment 3. This dissociation suggests that the observed differences in interval estimation cannot be solely attributed to surprise or attentional disruption, and instead might reflect outcome-specific influences on the sense of agency.

While our findings are consistent with some previous work, they contrast with several studies reporting stronger binding for predictable outcomes22,23,31,65,66. Several factors might account for these inconsistencies across studies. One consideration is methodological differences: many studies employed the Libet clock method, which can assess action and outcome binding separately and might reveal distinct patterns for each component. For example, Beck et al.22 found that outcome binding was increased by predictability only for painful somatosensory outcomes, not for non-painful ones, whereas action binding was positively influenced by predictability for both outcome types. By contrast, our experiments used interval estimation, which does not separate action binding from outcome binding and could be less sensitive to specific contingency effects. Additionally, Majchrowicz and Wierzchoń63 found enhanced binding for rare, unexpected oddball tones, but this effect was only present when the oddballs differed from standard tones in both identity and delay; when oddballs and standards were matched in timing, no difference in binding was observed, suggesting that temporal prediction might also play a key role. Thus, the type of binding measured (action or outcome), the value of the outcome, and its timing might all influence the observed effects and, in turn, how predictability appears to shape the sense of agency. Future research should compare these variables directly to clarify how predictability modulates the sense of agency.

Moreover, our results suggest that when an outcome is neither experienced as preferred nor embedded in a meaningful action-outcome relationship, prediction violations alone might not be sufficient to alter the sense of agency. In Experiments 1 and 2, participants gave the robot an item they knew it would “like” or “dislike,” and they in turn interpreted the outcome as the robot’s reaction, signaled by happy or sad expressions. This likely provided a clear semantic link between the participant’s choice and the robot’s emotional response. In Experiment 3, however, this relationship was more arbitrary, as selecting an object simply produced a color change. This interpretation also aligns with previous suggestions62,67 that sensitivity to unexpected outcomes might depend not only on the predictability and value of an outcome but also on whether the action-outcome association itself is meaningful and interpretable (e.g., pressing the right arrow key to select the right arrow on the screen).

Importantly, while participants exhibited a strong preference for actions that would elicit positive emotional feedback (i.e., happy expressions) in Experiments 1 and 2, the results revealed no significant difference in the sense of agency between intentional happy and accidental sad outcomes. However, in Experiment 3, intentional binding was stronger for intentional green than for intentional blue, even though the action associated with green also carried a 20% probability of an accidental outcome. While this might appear counterintuitive at first glance, it might reflect how the same level of outcome uncertainty can interact differently with the value and meaning of different types of outcomes (e.g., affective vs. perceptual).

One plausible explanation involves dopaminergic activity, which has been linked to the modulation of intentional binding68,69; dopamine signals are thought to encode not only the positive value of outcomes but also the significance of avoiding undesired outcomes70,71. Our findings suggest that the meaning and relevance of an outcome might shape how these dopaminergic mechanisms influence the sense of agency. When an outcome carries emotional or communicative value, a successful match between action and effect might be experienced as rewarding. For neutral outcomes, where the result has no clear goal or affective meaning, maintaining reliable control might instead engage dopaminergic signaling related to avoiding undesired outcomes or minimizing errors (e.g., avoiding the 20% accidental outcome), thereby strengthening intentional binding, which could account for the pattern observed in Experiment 3.

Finally, the role of iCub as a mentalistic agent (i.e., one perceived as capable of having mental states such as desires, intentions, and beliefs) might also be relevant to understanding the findings. Although iCub had no real intentionality, the robot was framed as having preferences and dislikes (e.g., liking screwdrivers and disliking hammers). This framing likely encouraged participants to attribute intentions, beliefs, and desires to it, and thus to engage with the task in Experiments 1 and 2 as a social interaction rather than a purely mechanical one. By framing iCub as a mentalistic agent, we likely heightened participants’ emotional engagement, which might explain why emotional expressions, particularly accidental mismatches, affected agency differently from the more neutral perceptual outcomes in Experiment 3, where iCub might have been perceived as more mechanistic.

Taken together, our findings suggest that the sense of agency is modulated not only by action-outcome mapping and predictive processes but also by the affective significance of outcomes. While contingency plays a role in shaping agency, emotional expressions, particularly when they violate participants’ intentions, have a stronger effect on the sense of agency. These results highlight the complex interplay between intentionality, emotional feedback, and predictive mechanisms in determining how individuals experience agency.

Data availability

The data and materials related to this study are publicly available on Zenodo https://doi.org/10.5281/zenodo.17290144.

References

Gallagher, S. Philosophical conceptions of the self: implications for cognitive science. Trends Cogn. Sci. 4 (1), 14–21 (2000).

Moore, J. W. What is the sense of agency and why does it matter? Front. Psychol. 7, 1272 (2016).

Coles, N. A. & Frank, M. C. A quantitative review of demand characteristics and their underlying mechanisms. PsyArXiv (2023).

Haggard, P., Clark, S. & Kalogeras, J. Voluntary action and conscious awareness. Nat. Neurosci. 5 (4), 382–385 (2002).

Tsakiris, M. & Haggard, P. Awareness of somatic events associated with a voluntary action. Exp. Brain Res. 149, 439–446 (2003).

Tanaka, T., Matsumoto, T., Hayashi, S., Takagi, S. & Kawabata, H. What makes action and outcome temporally close to each other: a systematic review and meta-analysis of temporal binding. Timing Time Percept. 7 (3), 189–218 (2019).

Gutzeit, J., Weller, L., Kürten, J. & Huestegge, L. Intentional binding: merely a procedural confound? J. Exp. Psychol. Hum. Percept. Perform. 49 (6), 759 (2023).

Hoerl, C. et al. Temporal binding, causation, and agency: developing a new theoretical framework. Cogn. Sci. 44 (5), 1–27 (2020).

Kong, G., Aberkane, C., Desoche, C., Farnè, A. & Vernet, M. No evidence in favor of the existence of intentional binding. J. Exp. Psychol. Hum. Percept. Perform. 50 (6), 626 (2024).

Suzuki, K., Lush, P., Seth, A. K. & Roseboom, W. Intentional binding without intentional action. Psychol. Sci. 30 (6), 842–853 (2019).

Gergely, G. & Watson, J. S. Early socio-emotional development: contingency perception and the social-biofeedback model. In Early Social Cognition: Understanding Others in the First Months of Life, 101–136 (1999).

Watson, J. S. The perception of contingency as a determinant of social responsiveness. In Origins of the Infant’s Social Responsiveness (ed. Thoman, E. B.) 33–64 (Erlbaum, Hillsdale, NJ, 1979).

Wolpert, D. M. & Kawato, M. Multiple paired forward and inverse models for motor control. Neural Netw. 11 (7–8), 1317–1329 (1998).

Frith, C. D., Blakemore, S. J. & Wolpert, D. M. Explaining the symptoms of schizophrenia: abnormalities in the awareness of action. Brain Res. Rev. 31 (2–3), 357–363 (2000).

Blakemore, S. J., Wolpert, D. M. & Frith, C. D. Abnormalities in the awareness of action. Trends Cogn. Sci. 6 (6), 237–242 (2002).

Kaiser, J., Buciuman, M., Gigl, S., Gentsch, A. & Schütz-Bosbach, S. The interplay between affective processing and sense of agency during action regulation: A review. Front. Psychol. 12, 716220 (2021).

Villa, R., Ponsi, G., Scattolin, M., Panasiti, M. S. & Aglioti, S. M. Social, affective, and non-motoric bodily cues to the sense of agency: a systematic review of the experience of control. Neurosci. Biobehav. Rev. 142, 104900 (2022).

Barlas, Z. & Obhi, S. S. Cultural background influences implicit but not explicit sense of agency for the production of musical tones. Conscious. Cogn. 28, 94–103 (2014).

Gentsch, A., Weiss, C., Spengler, S., Synofzik, M. & Schütz-Bosbach, S. Doing good or bad: how interactions between action and emotion expectations shape the sense of agency. Soc. Neurosci. 10 (4), 418–430 (2015).

Takahata, K. et al. It’s not my fault: postdictive modulation of intentional binding by monetary gains and losses. PLoS One 7 (12), e53421 (2012).

Yoshie, M. & Haggard, P. Negative emotional outcomes attenuate sense of agency over voluntary actions. Curr. Biol. 23 (20), 2028–2032 (2013).

Beck, B., Di Costa, S. & Haggard, P. Having control over the external world increases the implicit sense of agency. Cognition 162, 54–60 (2017).

Di Costa, S., Théro, H., Chambon, V. & Haggard, P. Try and try again: post-error boost of an implicit measure of agency. Q. J. Exp. Psychol. 71 (7), 1584–1595 (2018).

Caspar, E. A., Christensen, J. F., Cleeremans, A. & Haggard, P. Coercion changes the sense of agency in the human brain. Curr. Biol. 26 (5), 585–592 (2016).

Tanaka, T. & Kawabata, H. Sense of agency is modulated by interactions between action choice, outcome valence, and predictability. Curr. Psychol. 40, 1795–1806 (2021).

Barlas, Z., Hockley, W. E. & Obhi, S. S. The effects of freedom of choice in action selection on perceived mental effort and the sense of agency. Acta Psychol. 180, 122–129 (2017).

Moreton, J., Callan, M. J. & Hughes, G. How much does emotional valence of action outcomes affect temporal binding? Conscious. Cogn. 49, 25–34 (2017).

Lombardi, M. et al. The impact of facial expression and communicative gaze of a humanoid robot on individual sense of agency. Sci. Rep. 13, 10113 (2023).

Sarma, D. & Srinivasan, N. Intended emotions influence intentional binding with emotional faces: larger binding for intended negative emotions. Conscious. Cogn. 92, 103136 (2021).

Chambon, V. & Haggard, P. Sense of control depends on fluency of action selection, not motor performance. Cognition 125 (3), 441–451 (2012).

Moore, J. W., Wegner, D. M. & Haggard, P. Modulating the sense of agency with external cues. Conscious. Cogn. 18 (4), 1056–1064 (2009).

Synofzik, M., Vosgerau, G. & Voss, M. The experience of agency: an interplay between prediction and postdiction. Front. Psychol. 4, 127 (2013).

Yoshie, M. & Haggard, P. Effects of emotional valence on sense of agency require a predictive model. Sci. Rep. 7 (1), 8733 (2017).

Christensen, J. F., Yoshie, M., Di Costa, S. & Haggard, P. Emotional valence, sense of agency and responsibility: A study using intentional binding. Conscious. Cogn. 43, 1–10 (2016).

Sepeta, L. et al. Abnormal social reward processing in autism as indexed by pupillary responses to happy faces. J. Neurodev. Disord. 4, 1–9 (2012).

Sagliano, L., Ponari, M., Conson, M. & Trojano, L. The interpersonal effects of emotions: the influence of facial expressions on social interactions. Front. Psychol. 13, 1074216 (2022).

Metta, G. et al. The iCub humanoid robot: an open-systems platform for research in cognitive development. Neural Netw. 23 (8–9), 1125–1134 (2010).

Dubal, S., Foucher, A., Jouvent, R. & Nadel, J. Human brain spots emotion in non humanoid robots. Soc. Cogn. Affect. Neurosci. 6 (1), 90–97 (2011).

Geiger, A. R. & Balas, B. Robot faces elicit responses intermediate to human faces and objects at face-sensitive ERP components. Sci. Rep. 11 (1), 17890 (2021).

Hofree, G., Ruvolo, P., Reinert, A., Bartlett, M. S. & Winkielman, P. Behind the robot’s smiles and frowns: in social context, people do not mirror android’s expressions but react to their informational value. Front. Neurorobot. 12, 14 (2018).

Hortensius, R., Hekele, F. & Cross, E. S. The perception of emotion in artificial agents. IEEE Trans. Cogn. Dev. Syst. 10 (4), 852–864 (2018).

Metta, G., Fitzpatrick, P. & Natale, L. YARP: yet another robot platform. Int. J. Adv. Robot. Syst. 3 (1), 8 (2006).

Peirce, J. W. PsychoPy—psychophysics software in Python. J. Neurosci. Methods 162 (1–2), 8–13 (2007).

Mogg, K., Philippot, P. & Bradley, B. P. Selective attention to angry faces in clinical social phobia. J. Abnorm. Psychol. 113 (1), 160 (2004).

Bates, D. M. lme4: Mixed-effects modeling with R (2010).

Kuznetsova, A., Brockhoff, P. B. & Christensen, R. H. B. lmerTest package: tests in linear mixed effects models. J. Stat. Softw. 82 (13), 1–26 (2017).

Lenth, R. emmeans: Estimated Marginal Means, aka Least-Squares Means (R package version 1.4.1). https://CRAN.R-project.org/package=emmeans (2019).

Bianco, R., Gold, B. P., Johnson, A. P. & Penhune, V. B. Music predictability and liking enhance pupil dilation and promote motor learning in non-musicians. Sci. Rep. 9 (1), 17060 (2019).

Brandi, M. L., Kaifel, D., Bolis, D. & Schilbach, L. The interactive self–a review on simulating social interactions to understand the mechanisms of social agency. i-com 18 (1), 17–31 (2019).

Wessel, J. R. & Aron, A. R. On the globality of motor suppression: unexpected events and their influence on behavior and cognition. Neuron 93 (2), 259–280 (2017).

Regev, S. & Meiran, N. Post-error slowing is influenced by cognitive control demand. Acta Psychol. 152, 10–18 (2014).

Hatfield, E., Cacioppo, J. T. & Rapson, R. L. Emotional contagion. Curr. Dir. Psychol. Sci. 2 (3), 96–100 (1993).

Antusch, S., Aarts, H. & Custers, R. The role of intentional strength in shaping the sense of agency. Front. Psychol. 10, 1124 (2019).

Le Besnerais, A., Prigent, E. & Grynszpan, O. Agency and social affordance shape visual perception. Cognition 233, 105361 (2023).

McDonough, K. L., Costantini, M., Hudson, M., Ward, E. & Bach, P. Affordance matching predictively shapes the perceptual representation of others’ ongoing actions. J. Exp. Psychol. Hum. Percept. Perform. 46 (8), 847 (2020).

Scharoun, S. M., Bryden, P. J., Cinelli, M. E., Gonzalez, D. A. & Roy, E. A. Do children have the same capacity to perceive affordances as adults? An investigation of tool selection and use. J. Motor Learn. Dev. 4 (1), 59–79 (2016).

Ruess, M., Thomaschke, R. & Kiesel, A. Intentional binding of visual effects. Atten. Percept. Psychophys. 80, 713–722 (2018).

Wen, W. Does delay in feedback diminish sense of agency? A review. Conscious. Cogn. 73, 102759 (2019).

Bednark, J. G., Poonian, S. K., Palghat, K., McFadyen, J. & Cunnington, R. Identity-specific predictions and implicit measures of agency. Psychol. Consciousness: Theory Res. Pract. 2 (3), 253 (2015).

Desantis, A., Hughes, G. & Waszak, F. Intentional binding is driven by the mere presence of an action and not by motor prediction. PLoS One 7 (1), e29557 (2012).

Dogge, M., Custers, R. & Aarts, H. Moving forward: on the limits of motor-based forward models. Trends Cogn. Sci. 23 (9), 743–753 (2019).

Haering, C. & Kiesel, A. Intentional binding is independent of the validity of the action effect’s identity. Acta Psychol. 152, 109–119 (2014).

Majchrowicz, B. & Wierzchoń, M. Unexpected action outcomes produce enhanced temporal binding but diminished judgement of agency. Conscious. Cogn. 65, 310–324 (2018).

Band, G. P., van Steenbergen, H., Ridderinkhof, K. R., Falkenstein, M. & Hommel, B. Action-effect negativity: irrelevant action effects are monitored like relevant feedback. Biol. Psychol. 82 (3), 211–218 (2009).

Engbert, K. & Wohlschläger, A. Intentions and expectations in temporal binding. Conscious. Cogn. 16 (2), 255–264 (2007).

Moore, J. & Haggard, P. Awareness of action: inference and prediction. Conscious. Cogn. 17 (1), 136–144 (2008).

Barlas, Z. & Kopp, S. Action choice and outcome congruency independently affect intentional binding and feeling of control judgments. Front. Hum. Neurosci. 12, 137 (2018).

Moore, J. W. & Obhi, S. S. Intentional binding and the sense of agency: a review. Conscious. Cogn. 21 (1), 546–561 (2012).

Render, A. & Jansen, P. Dopamine and sense of agency: determinants in personality and substance use. PLoS One 14 (3), e0214069 (2019).

Bromberg-Martin, E. S., Matsumoto, M. & Hikosaka, O. Dopamine in motivational control: rewarding, aversive, and alerting. Neuron 68 (5), 815–834 (2010).

Kim, H., Shimojo, S. & O’Doherty, J. P. Is avoiding an aversive outcome rewarding? Neural substrates of avoidance learning in the human brain. PLoS Biol. 4 (8), e233 (2006).

Acknowledgements

We thank Mateusz Wozniak for his helpful suggestions on the analytical approach and for insightful discussions about the results.

Funding

We acknowledge financial support from PNRR MUR Project PE000013 ‘Future Artificial Intelligence Research (hereafter FAIR)’, funded by the European Union – NextGenerationEU. CUP J53C22003010006.

Author information

Authors and Affiliations

Contributions