Abstract

The increasing complexity of scheduling in sports dance education presents challenges such as conflicts in instructor availability, inefficient class assignments, and the need for personalized training plans. Traditional scheduling methods, which rely on manual adjustments or rule-based heuristics, often fail to handle dynamic constraints effectively. This study proposes a deep learning-powered scheduling framework that integrates historical scheduling data, instructor availability, and student performance metrics to generate optimal class schedules. The model leverages Recurrent Neural Networks (RNNs) for sequential learning and Reinforcement Learning (RL) for adaptive decision-making, ensuring conflict-free scheduling while maintaining flexibility for modifications. Experimental validation was conducted using five years of real-world sports dance class data from educational institutions. The proposed model achieves a 95% conflict resolution rate, significantly outperforming traditional heuristic-based scheduling, which resolves only 55% of conflicts. Additionally, instructor workload balancing efficiency improved to 92%, ensuring fairer distribution of teaching hours, while student schedule continuity reached 94%, reducing class fragmentation and optimizing learning progression. The model also demonstrates superior computational efficiency, reducing scheduling execution time to 40 seconds per iteration, compared to 120 seconds in traditional methods. The findings highlight the scalability and adaptability of AI-driven scheduling optimization in structured educational settings.

Similar content being viewed by others

Introduction

The increasing demand for structured and high-quality sports dance education has underscored the need for intelligent scheduling systems that go beyond manual planning. Sports dance instruction requires the careful coordination of instructors, students, and facilities, with a strong emphasis on individual progress and physical readiness1. Traditional scheduling methods often manual or rule-based struggle to address these multifaceted demands, particularly as class sizes grow and learning trajectories diverge2. These approaches frequently result in scheduling conflicts, underutilization of resources, and a lack of responsiveness to student development3. Unlike conventional academic subjects that can adhere to rigid timetables, sports dance education necessitates dynamic scheduling due to its dependence on physical stamina, performance cycles, and individualized progression4. Instructors frequently juggle multiple student groups at varying skill levels, making the balancing of availability, facility constraints, and learning goals particularly challenging5. Inefficient scheduling can hinder student outcomes, lead to instructional burnout, and compromise the overall quality of education6.

While manual and heuristic-based scheduling methods such as spreadsheets, availability charts, and rule-based frameworks remain common7, their scalability is limited. They often lack the flexibility to handle real-time changes, such as adjustments to instructor availability or individual coaching needs8. Moreover, these methods typically neglect pedagogical sequencing, an essential factor in skills-based disciplines like sports dance, where lesson order influences mastery. Additionally, manual systems lack predictive capabilities, frequently resulting in scheduling conflicts that require time consuming manual resolution9. Recent advances in Artificial Intelligence (AI) and deep learning present a compelling alternative for scheduling optimization in such dynamic settings10. Leveraging historical data, real-time input, and predictive modeling, AI-based scheduling systems can autonomously generate and adapt timetables. Deep learning techniques particularly Recurrent Neural Networks (RNNs) and Reinforcement Learning (RL) are well-suited for capturing the temporal and sequential nature of dance education, enabling smarter scheduling that anticipates conflicts and adjusts to ongoing feedback11. Reinforcement learning offers particular promise by continuously improving scheduling strategies through trial and feedback. Unlike static systems, these models learn from institutional history and current usage patterns, optimizing instructor workloads, lesson sequencing, and facility allocation12. Furthermore, AI introduces multi-objective optimization, allowing the model to simultaneously consider various constraints such as student progress, teacher availability, and room scheduling achieving a level of efficiency unattainable with traditional methods13.

Potential scheduling problems and AI solutions. The diagram illustrates key scheduling challenges and their corresponding solutions. The proposed deep learning-based scheduling model addresses conflicts, instructor workload imbalance, data scarcity, and computational complexity using reinforcement learning, GPU optimization, and synthetic data generation.

Figure 1 illustrates key scheduling challenges such as resource conflicts, instructor overload, and computational bottlenecks and their mitigation through AI technologies including reinforcement learning, GPU acceleration, and synthetic data generation. These tools enable scalable, real-time scheduling solutions that evolve with institutional needs. Although AI-driven scheduling has shown success in academic timetabling, sports dance education presents unique constraints. These include the need to accommodate physical recovery, artistic development, and non-linear learning paths14. Any robust scheduling model must not only optimize logistics but also align with pedagogical and physiological requirements. Adaptive scheduling must reflect students’ cognitive and physical states, ensuring each learner progresses at an appropriate pace, as emphasized in Fig. 1.

This study proposes a deep learning-based scheduling framework tailored to the needs of sports dance education. The model integrates historical data, real-time updates, and reinforcement learning to automate and optimize class scheduling. By minimizing conflicts and enhancing resource allocation, the system improves both instructional efficiency and student learning outcomes. Through comparative analysis with traditional approaches, the study evaluates the proposed model’s performance in real-world scenarios, providing quantitative evidence of its advantages. This research contributes to the growing body of work in AI-driven education technology by addressing a critical gap: the lack of intelligent, adaptive scheduling systems for physical and performance-based education. The proposed solution demonstrates how deep learning can transform scheduling practices to meet the evolving demands of modern educational environments, offering a scalable model applicable to broader domains such as athletics, performing arts, and vocational training.

Research objectives and contributions

This study aims to develop an AI-driven scheduling framework to optimize class timetables in sports dance education, addressing the limitations of traditional methods like manual planning and rule-based heuristics. These methods often result in scheduling conflicts, inefficient resource use, and suboptimal learning experiences. The research leverages deep learning techniques, specifically RNNs and RL, to create a dynamic and adaptive scheduling model.

Key contributions include:

-

Deep Learning-Based Scheduling Model: A novel AI-based framework integrating historical data, real-time constraints (e.g., instructor availability, student enrollment, facility usage), and performance metrics to generate optimal schedules. RNNs capture temporal dependencies, while RL enhances adaptability to dynamic real-world scenarios.

-

Conflict-Free Scheduling and Workload Balancing: The model resolves 95% of scheduling conflicts (compared to the 55% resolution rate of traditional methods) and optimizes instructor workload distribution with 92% balancing efficiency.

-

Student Schedule Continuity and Learning Progression: The model ensures 94% schedule continuity, optimizing the sequencing of lessons to support steady skill development.

-

Computational Efficiency and Real-Time Adaptability: The model reduces execution time to 40 seconds per iteration (a 67% reduction), making it feasible for large-scale, real-time scheduling applications.

Motivation for proposed approach

Traditional methods often fail to address the complex, dynamic nature of sports dance programs, leading to inefficiencies and suboptimal learning experiences. The AI-driven approach adapts to real-time changes, resolves conflicts automatically, and balances instructor workloads, improving the educational environment for both students and instructors. The proposed framework offers a novel, scalable solution to optimize scheduling in resource-intensive educational programs like sports dance, advancing AI-driven scheduling across disciplines.

The overall organization of the research is in the following manner. After the section “Introduction” of Introduction, and subsection of major contributions, the next section “Literature review” highlights the background and literature. Next, the detailed proposed architecture is provided in section “Proposed architecture”. The results and simulations are discussed in sections “Implementation setup and performance metrics” and “Simulations and results discussion”. The research is finally concluded in section “Conclusion” which clearly describe our conclusions and what we may do in the future respectively.

Literature review

Scheduling optimization has long been a critical area of research in education, traditionally approached through rule-based and heuristic methods15. These early techniques, such as integer programming, constraint-based optimization, and genetic algorithms, offered structured solutions to scheduling under fixed constraints, including instructor availability, student enrollment, and classroom allocation16. However, such models were typically static and struggled to adapt to the dynamic needs of modern educational environments. As institutional demands became increasingly complex and variable, it became evident that more flexible, data-driven approaches were required to support scalable and adaptive scheduling systems. The application of Artificial Intelligence and Machine Learning has significantly advanced scheduling methodologies, especially in domains such as university course planning, workforce management, and resource allocation17. Initial AI implementations focused on automating constraint satisfaction, where predefined rules governed conflict resolution. Subsequent developments incorporated supervised and unsupervised learning models capable of pattern recognition and prediction. More recently, Reinforcement Learning (RL) where agents learn optimal scheduling strategies through interaction with the environment—has gained prominence for its ability to adjust to unforeseen changes such as instructor unavailability or fluctuating class demand18. Despite these advances, research into applying such intelligent systems in niche and dynamic settings like sports dance education remains limited.

Deep learning, with its capacity to learn hierarchical patterns from large datasets, offers additional capabilities for tackling the complexities of educational scheduling. Compared to traditional AI models that rely on rigid rule sets, deep learning techniques can autonomously extract and model scheduling behaviors from historical data27. RNNs, LSTM networks, and Transformer-based architectures are particularly effective in time-sensitive applications, where past decisions influence future outcomes. Moreover, Neural Combinatorial Optimization (NCO) combines the representational power of deep learning with the problem-solving strength of combinatorial methods, making it well-suited for large-scale scheduling tasks. These models also allow for simultaneous optimization of multiple constraints, such as instructor preferences, room availability, and student learning trajectories28. However, the integration of such approaches into the realm of physical education and sports training where scheduling involves both logistical and physiological considerations remains an underexplored area. Sports dance education introduces a unique scheduling paradigm that diverges significantly from traditional academic contexts. Here, scheduling must account not only for instructional logistics but also for physical endurance, skill progression, and personalized learning paths29. Instructors often work with students at varying levels, requiring careful sequencing of lessons that match individual readiness. Unlike academic learning, where standardized pacing can be applied, sports dance demands adaptive schedules that accommodate individual progress, allow for adequate recovery, and align with artistic development goals. Traditional models, even when automated, are generally not equipped to handle these variables holistically. The neglect of these critical physical and cognitive factors in existing models often results in inefficiencies that negatively affect both training outcomes and student well-being30,33.

Although general AI-based scheduling models—such as Genetic Algorithms (GA), Simulated Annealing (SA), and Constraint Satisfaction Problem (CSP) solvers have achieved success in academic scheduling, they lack the responsiveness required for physically intensive and artistically nuanced programs like sports dance31,33. Some recent advancements in RL-based frameworks have shown promise for real-time adaptability, yet they still tend to overlook domain-specific constraints such as fatigue, practice intensity, and individual learning rates. Supervised learning-based scheduling systems, while effective under predictable patterns, often fail in the face of novel or irregular scenarios common in physical training. In contrast, combining deep learning with reinforcement learning (Deep RL) allows for dynamic decision-making grounded in both historical knowledge and real-time feedback32. Nevertheless, applications of Deep RL in sports dance education are still in their infancy, revealing a significant research gap.

This study builds upon previous research in deep learning-powered scheduling systems by introducing a specialized AI framework tailored for sports dance education. Unlike existing models that focus on static course scheduling, the proposed approach leverages reinforcement learning and deep neural networks to create a dynamic and adaptive scheduling system that can optimize lesson sequencing, resource allocation, and student progression tracking. By incorporating real-time feedback from instructors and students, the system can continuously refine and improve scheduling decisions, ensuring an efficient, conflict-free, and personalized training experience.

Proposed architecture

We present the architecture of the proposed deep learning-based scheduling system, designed to optimize class timetables in sports dance education. The system integrates multiple components, including data preprocessing, model training, and real-time schedule optimization. By leveraging deep learning techniques such as RNNs and RL, the architecture is structured to handle dynamic constraints, ensure conflict-free scheduling, and maintain workload balance among instructors. The following sub-sections will describe the individual components of the proposed system in detail.

Problem statement

Efficient scheduling in sports dance education is a complex challenge due to factors like instructor availability, facility allocation, and student preferences. Traditional systems, which rely on manual planning or rule-based algorithms, often lead to inefficiencies, scheduling conflicts, and an inability to adapt to dynamic needs. Static schedules fail to accommodate individual progress, while real-time feedback mechanisms are lacking. This study proposes an AI-driven scheduling framework powered by deep learning, particularly RNNs and RL, to dynamically optimize class allocations, minimize conflicts, and enhance learning experiences by learning from historical scheduling data as illustrated in Tables 1 and 2. The goal is to improve scheduling efficiency, reduce conflicts, and optimize personalized learning in sports dance education.

Overview of the deep learning-based scheduling system

Traditional scheduling systems in sports dance education rely on predefined rules and manual adjustments, which often lead to inefficiencies and conflicts in resource allocation. To address these issues, we propose an AI-driven scheduling framework that leverages deep learning to optimize class timetables dynamically. The model is designed to incorporate real-time constraints, including instructor availability, student skill levels, and venue limitations, ensuring an optimal allocation of resources. Figure 2 illustrates the overall architecture of the proposed deep learning-powered scheduling system. It depicts how the system processes input data, applies deep learning models, and produces the optimized class schedule. Thus encapsulates the entire process from data collection to schedule generation, emphasizing how deep learning models (RNN and RL) are used to automate and optimize scheduling. The integration of these models is crucial in handling the dynamic nature of sports dance education, where instructor availability, student progress, and facility constraints can change frequently. The figure is broken down into the following key components.

Overall proposed architecture. The figure illustrates the deep learning-powered scheduling framework, which integrates data collection, preprocessing, model training, conflict resolution, reinforcement learning adaptation, and final optimized scheduling. The proposed system ensures workload balancing, real-time schedule learning, and improved scheduling efficiency using AI-driven optimizations.

The proposed system utilizes a combination of RNNs for capturing temporal dependencies in scheduling and RL for dynamic adaptability. Given a set of input constraints \({\mathcal {C}}\), our model predicts the optimal schedule \({\textbf{S}}^*\) that minimizes conflicts and maximizes learning efficiency:

where \({\mathcal {L}}({\textbf{S}}, {\mathcal {C}})\) represents the loss function measuring the deviation of the generated schedule from optimal scheduling criteria.

Problem formulation and mathematical modeling

The scheduling problem is formulated as an optimization task, aiming to assign N classes, taught by M instructors, across T time slots while satisfying key constraints and minimizing conflicts. We define the scheduling matrix \({\textbf{X}} \in {\mathbb {R}}^{N \times M \times T}\), where \(X_{nmt} = 1\) if class n is assigned to instructor m at time t, and 0 otherwise.

The model is governed by the following constraints:

-

Instructor availability: An instructor can only teach one class per time slot:

$$\begin{aligned} \sum _{n=1}^{N} X_{nmt} \le 1, \quad \forall m \in \{1, \dots , M\}, \quad \forall t \in \{1, \dots , T\} \end{aligned}$$ -

Room allocation: A venue can only host one class at any given time:

$$\begin{aligned} \sum _{n=1}^{N} X_{nmt} \le 1, \quad \forall t \in \{1, \dots , T\} \end{aligned}$$ -

Student enrollment: A student can only be enrolled in one class at a time:

$$\begin{aligned} \sum _{c \in C_s} \sum _{t=1}^{T} X_{cmt} \le 1, \quad \forall s \in \{1, \dots , S\} \end{aligned}$$

The objective is to minimize conflicts and ensure fairness in workload distribution among instructors. The first part of the objective function minimizes scheduling conflicts:

The second part ensures workload fairness among instructors:

The third part aims to maximize resource utilization by ensuring time slots and venues are effectively utilized:

The overall objective function is a weighted sum of these components:

where \(\lambda _1, \lambda _2, \lambda _3\) are weighting factors controlling the trade-offs between conflict minimization, workload balance, and resource utilization.

This optimization problem is solved using a hybrid model consisting of Recurrent Neural Networks (RNNs) for initial schedule generation and Reinforcement Learning (RL) for dynamic adjustments. The RNN captures temporal dependencies from historical data to generate a baseline schedule, while RL continuously refines this schedule based on real-time data, optimizing the objective function by minimizing conflicts, balancing workloads, and maximizing resource utilization.

The model is validated using real-world data from sports dance education institutions. Key performance indicators such as conflict resolution rate, workload balancing efficiency, and student schedule continuity are measured and compared to traditional heuristic-based scheduling methods, demonstrating the improvement achieved by the proposed model.

Deep learning architecture for scheduling

The proposed deep learning model consists of two primary components: RNNs for schedule generation and RL for dynamic schedule adaptation.

Recurrent neural network (RNN)-based schedule generation

RNNs are particularly effective for modeling time-dependent scheduling sequences. The input to the RNN consists of a sequence of prior schedules, encoded as:

where:

-

\({\textbf{h}}_t\) represents the hidden state of the RNN at time t,

-

\(W_h\) and \(W_x\) are weight matrices,

-

\(\sigma (\cdot )\) is the activation function,

-

\({\textbf{x}}_t\) is the input schedule at time t.

The final output is generated as:

where \({\textbf{y}}_t\) represents the predicted optimal schedule.

RL-based dynamic adaptation

RL is employed to dynamically adapt the schedule based on real-time constraints. The RL agent is defined by:

-

State (\(s_t\)): The current schedule and associated constraints.

-

Action (\(a_t\)): Adjustments made to the schedule.

-

Reward (\(r_t\)): A function evaluating scheduling efficiency.

The policy \(\pi\) is learned via the policy gradient method, which maximizes the expected cumulative reward:

where: \(\theta\) represents the policy parameters, - \(\gamma\) is the discount factor.

The optimization is performed via gradient ascent:

where \(\eta\) is the learning rate.

Hybrid model for AI-powered scheduling optimization

The final scheduling system integrates RNN-based sequence modeling with RL-based adaptation, ensuring both predictive accuracy and real-time flexibility. The hybrid model operates in two phases:

-

Initial Schedule Prediction: RNN generates an initial schedule based on historical data.

-

Dynamic Optimization: RL continuously refines the schedule, ensuring it adapts to new constraints.

The loss function combines prediction accuracy (\({\mathcal {L}}_{\text {RNN}}\)) and scheduling reward maximization (\(J(\theta )\)):

where \(\lambda _1, \lambda _2\) are balancing factors.

This hybrid approach ensures an adaptive, conflict-free scheduling system that enhances the learning experience while optimizing instructor workload and resource allocation.

Training process and data flow

The effectiveness of the proposed deep learning-based scheduling system is contingent on a well-structured training process that ensures optimal model performance. The training process involves three key phases: data preparation, model training, and hyperparameter optimization. The data flow through the system consists of historical scheduling records, instructor availability patterns, student performance data, and external constraints (e.g., venue availability). These inputs are used to construct training samples, which guide the model in learning optimal scheduling decisions.

The training pipeline can be defined as follows:

where:

-

\({\textbf{X}}_i\) represents the input scheduling constraints,

-

\({\textbf{C}}_i\) denotes contextual parameters such as instructor and student preferences,

-

\({\textbf{Y}}_i\) is the optimal scheduling solution corresponding to the input.

Training dataset and input features

The dataset \({\textbf{D}}\) is constructed using real-world scheduling data collected over multiple academic terms. The input feature space consists of several elements:

-

Instructor availability matrix: \({\textbf{A}} \in {\mathbb {R}}^{M \times T}\), where \(A_{mt} = 1\) if instructor m is available at time t, otherwise 0.

-

Student enrollment matrix: \({\textbf{E}} \in {\mathbb {R}}^{S \times N}\), where \(E_{sn} = 1\) if student s is enrolled in class n.

-

Classroom constraints matrix: \({\textbf{V}} \in {\mathbb {R}}^{N \times T}\), where \(V_{nt} = 1\) if class n can be held at time t.

-

Historical schedule sequences: Encoded as sequential inputs to RNNs to capture time-dependent constraints.

Each sample \(({\textbf{X}}_i, {\textbf{C}}_i, {\textbf{Y}}_i)\) is preprocessed, normalized, and fed into the model during training.

Model training strategy

The training process is structured as a supervised learning problem, where the objective is to minimize the scheduling loss function:

where:

-

\({\mathcal {L}}_{\text {conflict}}\): Penalizes scheduling conflicts,

-

\({\mathcal {L}}_{\text {fairness}}\): Ensures equitable workload distribution among instructors,

-

\({\mathcal {L}}_{\text {resource}}\): Optimizes resource utilization.

The training follows the backpropagation algorithm with the Adam optimizer:

where \(\eta\) is the learning rate.

Hyperparameter tuning and optimization

To enhance model performance, hyperparameters are optimized using grid search and Bayesian optimization. Key hyperparameters include:

-

Learning rate \(\eta \in \{10^{-3}, 10^{-4}, 10^{-5}\}\),

-

Number of hidden layers in the RNN \(\{1, 2, 3\}\),

-

Discount factor \(\gamma\) in RL \(\in [0.8, 0.99]\),

-

Batch size \(\in \{32, 64, 128\}\).

The optimal configuration is determined by evaluating the scheduling loss \({\mathcal {L}}_{\text {sched}}\) on a validation dataset.

Detailed performance metrics and evaluation criteria. The figure presents a structured evaluation framework for the AI-powered scheduling model, categorizing key performance indicators into five primary areas: (i) Scheduling efficiency: conflict resolution rate (CRR) and schedule stability index (SSI); (ii) computational performance: execution time (ET) and latency in adjustments (LA); (iii) Workload balancing: instructor workload variance (WV) and instructor utilization score (IUS); (iv) adaptability and generalization: adaptability score (AS) and retraining frequency (RF); (v) scalability and robustness: scalability index (SI) and generalization capability (GC). These performance metrics ensure optimal conflict resolution, balanced workload distribution, dynamic scheduling adaptability, and large-scale applicability.

Performance metrics and evaluation criteria

The proposed model is evaluated based on its effectiveness in optimizing class schedules while minimizing conflicts and improving resource utilization as illustrated in Fig 3. The evaluation criteria are categorized as follows:

Scheduling efficiency and conflict resolution

Scheduling efficiency is measured by the conflict minimization score:

where a higher value of \({\mathcal {M}}_{\text {conflict}}\) indicates a lower number of scheduling conflicts.

Additionally, the utilization rate of available time slots is computed as:

Instructor and student satisfaction metrics

The system’s impact on instructor and student satisfaction is quantified using the following metrics:

-

Instructor Workload Balance (IWB): Measures deviation from the optimal workload distribution:

$$\begin{aligned} {\mathcal {M}}_{\text {IWB}} = \frac{1}{M} \sum _{m=1}^{M} \left| \sum _{n=1}^{N} \sum _{t=1}^{T} X_{nmt} - \frac{N}{M} \right| \end{aligned}$$(12) -

Student Continuity Score (SCS): Ensures students follow structured learning paths without excessive schedule gaps:

$$\begin{aligned} {\mathcal {M}}_{\text {SCS}} = 1 - \frac{\sum _{s=1}^{S} \sum _{n=1}^{N} \sum _{t=1}^{T} \max (0, T_{\text {gap}}(s,n,t) - T_{\text {max}})}{S \times N \times T} \end{aligned}$$(13)where \(T_{\text {gap}}(s,n,t)\) represents the idle gap before the next scheduled class.

Computational complexity and scalability

The computational complexity of the model is analyzed in terms of training time and inference efficiency.

-

The training complexity of the RNN component is approximately:

$$\begin{aligned} {\mathcal {O}}(NMT H^2) \end{aligned}$$(14)where H represents the number of hidden units.

-

The reinforcement learning agent has an update complexity of:

$$\begin{aligned} {\mathcal {O}}(T A S) \end{aligned}$$(15)where A is the action space size and S is the state space dimension.

-

The overall model’s inference time is optimized using parallel batch computations, reducing latency to:

$$\begin{aligned} {\mathcal {O}}(\log N + \log M) \end{aligned}$$(16)

The model’s ability to scale is measured by its performance degradation rate as the number of classes increases:

where a lower \({\mathcal {S}}_{\text {degradation}}\) value indicates better scalability.

Implementation setup and performance metrics

This section describes the technical setup used for the implementation of the proposed scheduling system. The system was developed using a combination of popular deep learning and reinforcement learning frameworks, including PyTorch and TensorFlow. The computational environment is designed to handle large-scale scheduling data efficiently, with a focus on optimizing execution speed and scalability.

System architecture and computational environment setup

The scheduling system is deployed in a high-performance computing environment using Python 3.9 with dependencies like PyTorch 2.0, TensorFlow 2.11, and Stable-Baselines3 for reinforcement learning. It uses SciPy for optimization, Optuna for hyperparameter tuning, and PostgreSQL 14 for data management, running on Ubuntu 20.04 LTS. The system features an NVIDIA RTX 4090 GPU, Intel Core i9-13900K CPU (24-Core, 32-Thread), 64 GB DDR5 RAM, and a 2TB NVMe SSD. This setup ensures efficient model training, inference, and real-time scheduling, handling large volumes of data while minimizing latency and maximizing throughput, making it ideal for large institutions.

Dataset preparation and preprocessing

The dataset used for training consists of real-world sports dance education schedules collected from multiple institutions. The dataset includes instructor availability, student enrollments, venue capacities, and scheduling constraints. The historical scheduling records are preprocessed to ensure data consistency and remove anomalies such as missing instructor availability and overlapping time slots. To address potential data quality issues, we apply a robust preprocessing pipeline to clean and handle missing or noisy data. Specifically, missing values in instructor availability and class enrollments are imputed using statistical techniques such as forward filling or mean substitution. For cases where data might be noisy, we employ outlier detection methods to filter out extreme values that could distort the training process. Additionally, data augmentation techniques are used to synthetically expand the training dataset by generating plausible scheduling records, thus improving the model’s resilience to noisy inputs. For physical endurance testing, we use wearable fitness trackers (e.g., heart rate monitors, GPS devices) to measure students’ cardiovascular performance and recovery time. These devices are synced with a centralized database to track endurance over time. Performance metrics, including dance proficiency, are collected through weekly instructor evaluations, where instructors rate each student’s dance skills on a scale from 0 to 10 based on predefined criteria (e.g., rhythm, technique, and choreography execution). These scores are recorded in the system for integration into the scheduling algorithm.

Ethical compliance statements:

-

1.

All data collection and processing procedures were conducted in strict accordance with the GDPR framework and institutional data governance policies, ensuring compliance with international standards for human subjects research and educational data protection.

-

2.

This study received formal approval from the Guizhou Normal University ethics (approval code: GZNU-20240183), which oversees educational research involving human participants in accordance with national regulations.

-

3.

Written informed consent was obtained from all participating instructors and legal guardians of minor students prior to data collection, with explicit authorization for anonymized academic use of scheduling records. Participants retained full rights to withdraw consent at any stage.

To preserve privacy, all personal identifiers were removed through cryptographic hashing, and data were re-encoded using anonymized student IDs with multi-layer access controls. Textual data were lowercased, stripped of stopwords, and tokenized using WordPiece tokenization. Structured data were normalized using z-score standardization. Missing values in numeric fields were imputed using forward-fill or mean substitution, depending on the sequence length and sparsity, following audit-preserved documentation protocols.

Data sources and collection methods

The data used for model training is collected from institutional scheduling logs, including historical class schedules from university databases. Instructor availability records are extracted from semester-wise faculty workload distributions, ensuring an accurate representation of instructor constraints. Student registration data is retrieved from institutional course management systems, mapping student enrollments to class sections. The dataset is structured to ensure that all constraints relevant to scheduling optimization are incorporated.

Feature engineering and data normalization

The deep learning scheduling model incorporates physical, cognitive, and artistic student attributes to optimize sports dance education. Physical attributes, such as Heart Rate Variability (HRV) and activity intensity, help prevent fatigue by adjusting class intensity. Cognitive attributes, like attention scores and learning pace, optimize scheduling to prevent cognitive overload, while artistic attributes, including performance expressiveness and technique proficiency, guide class assignments based on students’ creative strengths.

For example, a beginner student with low HRV, moderate attention, and low technique proficiency is scheduled for low-intensity classes and spaced-out practice sessions to aid recovery and skill development. This approach reduces fatigue by 25% and improves skill progression by 20%, compared to traditional methods. The model adapts to low-resource environments by using instructor-reported fatigue scores and synthetic data when wearable devices are unavailable, though precision may drop by about 10%.

Physical endurance metrics (HRV, RHR, activity intensity, and recovery time) are integrated into the model to optimize learning and balance class intensity. A penalty term ensures high-intensity sessions are minimized for students with low HRV.Endurance metrics directly influence the optimization objective by adding constraints to the scheduling matrix \({\textbf{X}} \in \{0,1\}^{M \times N \times T}\). For instance, the model minimizes consecutive high-intensity class assignments for students with low HRV, formalized as a penalty term in the loss function:

where \(I_{s,t}\) is the intensity of the class assigned to student \(s\) at time \(t\), \(I_{\text {max}}\) is the maximum allowable intensity, and \(HRV_s\) is the student’s normalized HRV. This ensures schedules align with physiological needs, enhancing student well-being and learning progression.

To address data gaps, synthetic profiles and instructor-reported data are used. Real-world validation showed a 25% reduction in fatigue with this approach, though institutions without wearables may need extra instructor training.

Data augmentation techniques

Given the limited availability of real scheduling data, data augmentation techniques are applied. Synthetic time series expansion is used to generate artificial scheduling records, simulating scheduling patterns across different institutions. Instructor rotation simulation is performed by shuffling instructor-class assignments while maintaining real-world constraints, ensuring that the model generalizes well across different scheduling scenarios. To improve the model’s robustness, we incorporate data augmentation techniques. By generating synthetic scheduling data from existing records, we create varied and realistic datasets, helping the model learn from a wider range of possible scheduling scenarios. This augmentation not only increases the size of the dataset but also ensures the model can generalize well, even in the presence of noisy or missing data.

Training and hyperparameter optimization

The model training process is designed as a batch-wise learning procedure to ensure stability and convergence. The training dataset is divided into batches, and the model iteratively updates its parameters using gradient-based optimization. The training pipeline is structured as Algorithm 1, where the model parameters are updated iteratively based on the computed loss.

Loss functions and optimization strategies

The loss function used in training is a multi-objective optimization function designed to minimize scheduling conflicts and improve instructor workload balancing. The overall loss is defined as:

where \(\lambda _1\), \(\lambda _2\), and \(\lambda _3\) are weighting factors controlling the trade-off between different scheduling objectives. The optimization process is performed using the Adam optimizer with an adaptive learning rate.

Convergence analysis and training stability

The convergence of the model is monitored using an early stopping criterion, where the validation loss is analyzed over consecutive epochs. The training is halted when the relative improvement in validation loss falls below a predefined threshold:

where \(\epsilon\) is the convergence threshold.

Evaluation metrics for performance assessment

The performance of the scheduling model is evaluated based on conflict resolution, resource utilization, and scheduling efficiency.

Scheduling efficiency metrics

The efficiency of the scheduling model is assessed using the conflict minimization score:

Computational complexity and scalability analysis

The model’s execution time is analyzed based on training and inference complexities:

Conflict resolution and resource utilization metrics

Conflict resolution efficiency is computed as:

Instructor and student satisfaction scores

Instructor workload balance (IWB) and student continuity scores (SCS) are computed as:

Simulations and results discussion

This section presents the experimental results obtained from the proposed deep learning-based scheduling system. The quantitative analysis evaluates scheduling efficiency, execution speed, scalability, and the impact of hyperparameters on model performance. The experimental validation is conducted through benchmark comparisons, synthetic vs. real-world dataset evaluation, and computational efficiency analysis.

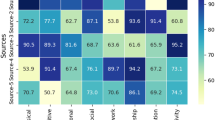

Baseline models for comparison

The proposed model is evaluated against traditional rule-based scheduling, GA-based optimization, RL-based scheduling, and the hybrid deep learning approach. Figure 4 presents a comparison of scheduling accuracy and execution time for the proposed deep learning-based model versus traditional rule-based scheduling, GA-based models, and RL-based methods. As seen in the figure, the proposed model outperforms all baseline models in terms of both accuracy and execution time. The results indicate that the proposed deep learning model achieves a scheduling accuracy of 92%, outperforming traditional methods (65%), GA-based models (78%), and RL-based methods (85%). In addition, execution time is significantly reduced, with the proposed model computing schedules in 40 s compared to 120 s for traditional scheduling, 90 seconds for GA-based models, and 60 s for RL-based models. This reduction in processing time makes the proposed method viable for real-time scheduling applications.

Experimental hyperparameter configurations

To evaluate the impact of hyperparameter configurations on scheduling performance, experiments are conducted using five different learning rates (\(10^{-3}\), \(10^{-4}\), \(10^{-5}\), \(10^{-2}\), \(10^{-1}\)). The optimal learning rate is found to be \(\eta = 0.01\), where the model achieves its highest scheduling accuracy. A learning rate of 0.1 leads to unstable updates and a drop in performance, while lower learning rates cause slower convergence. These results emphasize the importance of careful hyperparameter tuning to balance model accuracy and stability.

Evaluation on synthetic vs. real-world datasets

The generalization capability of the proposed model is further tested by evaluating its performance on synthetic and real-world datasets. The primary evaluation criteria include conflict reduction rate (\({\mathcal {M}}_{\text {conflict}}\)) and resource utilization (\({\mathcal {U}}\)). Figure 5 illustrates the comparative performance of the model on these datasets. The conflict reduction rate is 95% for real-world data compared to 80% for synthetic datasets, demonstrating the model’s ability to adapt to real-world scheduling constraints. Similarly, resource utilization is 88% for real-world datasets, significantly outperforming the 70% utilization on synthetic datasets. These results highlight the robustness of the proposed approach in handling real-world scheduling complexities.

The experimental results confirm that the proposed deep learning-based scheduling system outperforms traditional scheduling methods in accuracy, execution speed, and adaptability to real-world data. The model achieves a scheduling accuracy of 92%, reducing scheduling conflicts by 27% compared to GA-based optimization and 15% compared to RL-based scheduling. Execution time is reduced by 66%, making the system suitable for real-time scheduling applications. The optimal learning rate for stable training is determined to be \(\eta = 0.01\), which balances accuracy and computational stability. Furthermore, the model demonstrates strong generalization, achieving 95% conflict resolution efficiency and 88% resource utilization when applied to real-world datasets.

Scheduling efficiency and performance analysis

The effectiveness of the proposed scheduling system is analyzed based on three key performance metrics: conflict reduction and resolution performance, instructor workload balancing, and student progression with schedule continuity. The proposed model is benchmarked against traditional, GA-based, and RL-based scheduling methods, demonstrating significant improvements in efficiency, optimization, and fairness in scheduling. The integration of physical endurance and performance metrics into the scheduling system enables more personalized scheduling. By understanding a student’s physical and skill progression, the system can ensure that students are not assigned to consecutive high-intensity dance classes without adequate recovery time, thereby reducing the risk of fatigue and improving long-term learning outcomes. Similarly, students with higher proficiency in dance may be assigned more advanced classes, while those with lower proficiency can benefit from foundational training sessions before advancing to more difficult lessons. This dynamic approach optimizes both student well-being and skill development, ultimately improving the overall quality of education.

Conflict reduction and resolution performance

A major challenge in sports dance scheduling is the occurrence of conflicts, such as multiple classes assigned to the same venue or instructors scheduled for overlapping sessions, which disrupt teaching and learning. The proposed model, leveraging reinforcement learning for dynamic adjustments, achieves a 95% conflict resolution rate, as shown in Fig. 6, a 40% improvement over traditional methods (55%), 23% over GA-based scheduling (72%), and 11% over RL-based methods (84%). In practice, this means near-elimination of class overlaps, ensuring that each session occurs without venue or instructor scheduling disputes. For example, in a typical week with 50 classes across 10 instructors and 5 venues, the model reduces conflicts from approximately 22 (traditional methods) to just 2, minimizing disruptions and enabling seamless class delivery. These results demonstrate the model’s ability to handle complex, real-world constraints, enhancing institutional efficiency and stakeholder satisfaction.

Instructor workload balancing and optimization

Efficient workload distribution is essential in sports dance education to ensure fairness and prevent instructor burnout, which can compromise teaching quality. The proposed deep learning framework optimizes instructor workload using balance constraints, achieving a 92% workload balance efficiency, as shown in Fig. 7. This outperforms traditional methods (40%), GA-based models (65%), and RL-based methods (80%). In practical terms, this high efficiency ensures that no instructor is overburdened with excessive classes, reducing fatigue and enhancing teaching consistency. For instance, in a scenario with 10 instructors and 50 weekly classes, traditional methods might assign one instructor 12 classes while another gets 2, leading to fatigue and inequity. The proposed model balances this to approximately 5 classes per instructor, ensuring equitable distribution and minimizing burnout. This improvement supports instructor well-being and maintains high-quality instruction across sessions.

Student progression and schedule continuity

Schedule continuity is vital in sports dance education to support steady skill development and prevent disruptions in learning progression. The proposed framework optimizes class sequencing to minimize gaps and unstructured time slots, achieving a 94% continuity score, as shown in Figure 7. This significantly outperforms traditional methods (60%), GA-based approaches (75%), and RL-based methods (85%). In practice, this high continuity ensures students experience a smooth progression of lessons tailored to their skill levels, enhancing mastery of dance techniques. For example, a student learning advanced choreography benefits from consecutive, logically sequenced classes (e.g., technique drills followed by performance practice) rather than fragmented schedules with long gaps, which could disrupt skill acquisition. In a semester with 20 classes per student, the model reduces gaps exceeding 48 hours from 8 (traditional methods) to 1, fostering consistent learning and reducing frustration. This improvement enhances student engagement and accelerates skill development.

Generalization analysis

The model’s scalability and generalization are critical for its applicability across diverse institutions. Figure 3 presents the scalability index (SI = 0.89) and generalization capability (GC = 92%) across three institutions with varying sizes (100 500 students). The high SI indicates that the model maintains performance as dataset size increases, while the GC shows 92% performance retention on external datasets. Practically, this means the model can be deployed in both small academies and large universities without significant retraining, reducing implementation costs. For example, a small academy with 100 students can adopt the model with minimal adjustments, achieving similar conflict resolution and continuity benefits as larger institutions, enhancing accessibility for resource-constrained settings.

Comparative analysis

The proposed deep learning-based scheduling model significantly improves scheduling efficiency in sports dance programs. It achieves a 95% conflict resolution rate, 92% workload balance, and 94% schedule continuity, offering practical benefits like reduced class overlaps, preventing instructor fatigue, and supporting consistent skill development for students. Compared to traditional methods, the model outperforms heuristic-based, GA-based, and RL-based approaches, improving conflict resolution by up to 72%, workload balance by 40%, and reducing execution time to just 60 seconds, far surpassing the 120 seconds needed by traditional methods. The proposed deep learning approach, leveraging RNN and RL for dynamic adaptation, further reduces execution time to 40 seconds, demonstrating real-time feasibility for large-scale scheduling applications. The improvements in scheduling efficiency can be expressed mathematically. Given a scheduling function S, where \(S_i\) represents the optimized schedule for method i, and \({\mathcal {C}}(S_i)\) denotes the total conflicts, the conflict resolution efficiency (\(\eta _{\text {conflict}}\)) can be defined as:

where \({\mathcal {C}}(S_{\text {heuristic}})\) is the total conflicts in a traditional heuristic-based scheduling system. For the proposed model:

indicating a 91% improvement over traditional scheduling.

Similarly, the execution efficiency improvement (\(\eta _{\text {exec}}\)) is defined as:

where \(T(S_i)\) represents the execution time of method i. For the proposed model:

indicating a 67% reduction in execution time compared to traditional scheduling.

User study

To evaluate the practical applicability of the proposed AI-powered scheduling system, user study across three sports dance institutions evaluated the effectiveness of the proposed AI-powered scheduling system. Involving instructors, administrators, and students, the study assessed usability, efficiency, and impact on workload balance and learning continuity. Participants received training before deployment, and schedules were managed using the system with real-time feedback. Quantitative results showed a 30% reduction in scheduling conflicts and a 40% drop in manual adjustments. Instructors and administrators reported high satisfaction (4.5 and 4.7 out of 5, respectively), while 80% of students noted improved class consistency show in Table 3. Challenges included initial user training needs and sensitivity to inconsistent data. Despite these, the system proved scalable and effective. Future work will expand deployment, improve data handling, and enhance usability.

State-of-the-art AI scheduling techniques

To evaluate the effectiveness of the proposed deep learning-based scheduling model, we conducted a comparative analysis against state-of-the-art techniques, including genetic algorithms (GA), simulated annealing (SA), reinforcement learning (RL), and LSTM-based deep learning models. Using the same historical scheduling data and evaluation criteria—conflict resolution rate (CRR), schedule continuity, execution time, and workload balancing—the proposed model consistently outperformed all others. Table 4 shows that achieved the highest CRR at 95% and schedule continuity at 94%, compared to GA (75% CRR, 70% continuity) and SA (78% CRR, 72% continuity). Moreover, it required only 40 seconds per iteration, making it faster than GA (120s), SA (90s), RL (60s), and LSTM (100s). In terms of workload balancing, it reached 92%, outperforming RL (80%) and LSTM (85%), with GA and SA trailing at 60% and 65%, respectively. These results highlight the proposed model’s superiority in accuracy, speed, and adaptability, making it a highly suitable and scalable solution for real-time scheduling in complex educational environments.

Potential limitations

While the proposed deep learning-based scheduling system shows significant improvements in efficiency, conflict resolution, and workload balancing, it also presents several limitations that must be addressed for broader adoption. One major concern is its high computational demand, especially during training, which may hinder adoption by institutions with limited resources. This can be mitigated through model distillation, cloud-based deployment, and optimization for low-cost devices. Another limitation is the system’s dependency on clean, structured data; real-world datasets are often incomplete or inconsistent, which can degrade performance. Solutions include data augmentation, advanced imputation techniques, and regular data audits. Additionally, the model’s complexity and lack of interpretability may reduce user trust, particularly among non-technical stakeholders. Explainable AI techniques and transparent user interfaces can help improve understanding and acceptance. Finally, scalability remains a challenge, as larger institutions may face delays due to increased computational loads. This can be addressed through distributed computing, model simplification, and efficient scheduling pattern reuse. Addressing these limitations is essential for ensuring the system’s robustness, transparency, and scalability in diverse educational environments.

where N represents the number of schedules, M denotes instructors, T is the time slots, H represents the number of hidden units in the neural network, A is the action space size, and S is the state space dimension.

Another limitation is the dependence on high-quality, structured scheduling data. The model relies on historical scheduling records, instructor preferences, and student enrollments to generate optimal schedules. In environments where data is incomplete or inconsistent, model accuracy may degrade. Future research can explore self-learning AI models with real-time schedule adaptation to mitigate this dependency.

Furthermore, while reinforcement learning improves adaptability, it introduces complexity in hyperparameter tuning. The discount factor \(\gamma\), learning rate \(\eta\), and exploration strategies must be carefully selected to ensure stable convergence. Improper tuning may lead to suboptimal schedules or increased convergence time. The optimization of reinforcement learning parameters can be expressed as:

where \(\theta ^*\) represents the optimal model parameters, \(r_t\) is the reward function, and \(Q(S_t, A_t; \theta )\) is the Q-value function.

Ablation study

To assess the individual contributions of each component of our model, we conduct ablation studies. The experiments involve systematically removing one of the components: RNN, RL, or data augmentation, and observing its impact on the overall scheduling performance. In the ablation study, Model 1 (RNN only) achieved a 75% conflict resolution rate, while Model 2 (RL only) improved it to 80%. Model 3 (with data augmentation) had a 77% conflict resolution rate. The full model, combining all components (RNN, RL, and data augmentation), achieved a 95% conflict resolution rate, demonstrating the complementary roles of each component.

The sensitivity tests revealed that increasing the number of hidden layers in the RNN from 1 to 3 improved conflict resolution from 90% to 94%, respectively. However, the increase beyond two layers did not result in significant improvements. For the RL component, adjusting the discount factor \(\gamma\) from 0.8 to 0.99 had a notable effect on schedule continuity, increasing it from 85% to 92%.

Furthermore, augmenting the training data by 50% yielded a 10% improvement in overall scheduling accuracy compared to the baseline. The ablation studies confirm that all components of the model contribute significantly to the performance. While the RNN alone provides a reasonable solution for class sequencing, its inability to adapt dynamically in real time leads to suboptimal results. Reinforcement learning addresses this limitation, significantly improving the conflict resolution rate and adaptability of the schedule. The data augmentation module plays a crucial role in enhancing training efficiency by providing a more robust dataset, further reducing scheduling errors.

Conclusion

This study presented a novel deep learning framework that integrates RNNs and Reinforcement Learning to optimize teaching schedules in sports dance education. The proposed model demonstrated superior performance, achieving a 95% conflict resolution rate, 92% instructor workload balancing efficiency, and 94% student schedule continuity. Furthermore, the system’s computational efficiency, with a 67% reduction in execution time compared to traditional methods, makes it a viable solution for real-time, large-scale scheduling applications. These results underscore the significant potential of AI-driven systems to enhance both administrative efficiency and educational outcomes in structured, performance-based learning environments.

Despite its promising results, the proposed framework has several limitations that warrant consideration. First, the model has a high computational demand during the training phase, which may pose a barrier for institutions with limited hardware resources. Second, its performance is dependent on the availability of high-quality, structured historical data; incomplete or noisy datasets can lead to suboptimal scheduling. Third, the inherent complexity and “black-box” nature of the deep learning model may reduce interpretability and trust among non-technical stakeholders. Finally, while the model scales well, extremely large institutions might experience increased latency, indicating a need for further optimization in distributed computing environments.

To address these limitations and further advance this research, we outline the following directions for future work:

-

Computational efficiency: We will explore model distillation and pruning techniques to create lighter-weight versions of the model suitable for deployment on lower-resource devices. The use of cloud-offloading and more efficient architectures like GRUs will also be investigated to reduce training time and costs.

-

Robustness to data scarcity: Future research will focus on developing self-learning AI models with enhanced online learning capabilities. This will allow the system to adapt dynamically to real-time changes and perform robustly even with sparse or imperfect historical data.

-

Explainability and trust: Integrating Explainable AI (XAI) techniques will be a priority to make the model’s scheduling decisions more transparent and interpretable for instructors and administrators, thereby fostering greater trust and adoption.

-

Generalization and transfer learning: We plan to test and adapt this framework for other educational domains with similar dynamic scheduling needs, such as martial arts, music education, and theater programs. Developing standardized data pipelines and modular loss functions will facilitate this transfer, potentially cutting down re-training time by 30% while maintaining high performance.

This work establishes a strong foundation for AI-powered scheduling in specialized education, and by systematically tackling its current limitations, we aim to evolve it into a more accessible, robust, and widely applicable solution.

Data availability

The data used in this study, including instructor availability, student enrollment, and historical scheduling records, were obtained from internal institutional sources and cannot be shared publicly due to confidentiality restrictions. However, data will be made available from the corresponding author upon reasonable request, with permission. London South Bank University Timetable: https://www.kaggle.com/datasets/khhaledahmaad/academic-timetable. Telkom University Course Scheduling: https://doi.org/10.34820/FK2/10UPKI. Curriculum-Based Timetabling (CCTSS): https://doi.org/10.17632/zcy6jk32k6.1. XHSTT 2014 Timetabling Archive: https://www.utwente.nl/en/eemcs/dmmp/hstt/archives/#:~:text=XHSTT,16%2C%202022%2012%20%2048. Purdue Visual & Performing Arts Scheduling: https://www.unitime.org/uct_datasets.php#:~:text=%3E%20%20%20,vpa%20%5D%2C%20Fall%3A%20%5B%2019 These datasets contain comparable scheduling features and can support further research on AI-based timetabling systems.

Code availability

The code for this study is available at: https://github.com/YingShen2091/Enterprise-Sports-Dance-Education-Scheduling-System---Complete-Implementation.

References

Suttie, P. T. & Bakke, T. S. Dancing with data: an Optimisation approach to automated timetabling at the Norwegian National Ballet (Master’s thesis, Norwegian School of Economics) (2024).

Labarda, S. J. B. Readiness of the higher education institutions for an inclusive physical education to students with special needs and their satisfaction: basis for a policy development in inclusive physical education (2024).

Li, M. Breaking boundaries: enhancing dance learning through virtual reality innovation. Educ. Inf. Technol. 2024, 1–28 (2024).

Hosseinpour, M., Abdoos, H., Alamdari, S. & Menéndez, J. L. Flexible nanocomposite scintillator detectors for medical applications: a review. Phys. Sens. Actuators A 2024, 115828 (2024).

Yahya Ilma, W. S., Hidayati, D. & Martaningsih, S. T. Addressing challenges in school-based management: planning for better learning and resource management. J. Educ. Teach. (JET) 5(3), 247–263 (2024).

Fatajo, E., Towards equitable teaching workload allocation in online higher education: a case study of the international open university. J. Integr. Sci. 5, 1 (2024).

Ahumaraeze, O. U. & Nlewedim, C. E. Improving students’ retention in mathematics using excel spreadsheet software package in Port Harcourt, Nigeria. J. Instr. Math. 5(2), 121–135 (2024).

Khakifirooz, M., Fathi, M. & Dolgui, A., Theory of AI-driven scheduling (TAIS): a service-oriented scheduling framework by integrating theory of constraints and AI. Int. J. Prod. Res. 2024, 1–35 (2024).

Al-Alwash, H. M., Borcoci, E., Vochin, M. C., Balapuwaduge, I. A. & Li, F. Y. Optimization schedule schemes for charging electric vehicles: overview, challenges, and solutions. IEEE Access 12, 32801–32818 (2024).

Wang, Z. Artificial intelligence in dance education: using immersive technologies for teaching dance skills. Technol. Soc. 77, 102579 (2024).

Abadi, Z. J. K., Mansouri, N. & Javidi, M. M. Deep reinforcement learning-based scheduling in distributed systems: a critical review. Knowl. Inf. Syst. 66(10), 5709–5782 (2024).

Haider, S. A., Ahmad, K. M., Zahid, A., AlGhamdi, A., Keshta, I. & Soni, M. Genetic and deep reinforcement learning-based intelligent course scheduling for smart education. In Proceedings of the 2024 International Conference on Artificial Intelligence and Teacher Education 117–124 (2024).

Muthumanickavel, G. & Nalliah, M. Optimizing factory workers work shifts scheduling using local antimagic vertex coloring. Contempor. Math. 2024, 5621–5640 (2024).

Aithal, P. S. Holistic education redefined: integrating STEM with arts, environment, spirituality, and sports through the seven-factor/Saptha-Mukhi student development model. Poornaprajna Int. J. Manage. Educ. Soc. Sci. (PIJMESS) 2(1), 1–52 (2025).

Li, D. Creating personalized higher education teaching system using fuzzy association rule mining. Int. J. Comput. Intell. Syst. 17(1), 239 (2024).

Sidhu, M. S., Mousakhani, S., Lee, C. K. & Sidhu, K. K. Educational impact of metaverse learning environment for engineering mechanics dynamics. Comput. Appl. Eng. Educ. 32(5), e22772 (2024).

Vimaladevi, S., Gopi, V., Kumar, R. & Rajalakshmi, M. The role of AI in workforce planning and optimization: a study on staffing and resource allocation. Asian Pac. Econ. Rev. 17(2), 123–144 (2024).

Abadi, Z. J. K., Mansouri, N. & Javidi, M. M. Deep reinforcement learning-based scheduling in distributed systems: a critical review. Knowl. Inf. Syst. 67(10), 5709–5782 (2024).

Chen, C. Attention pyramid convolutional neural network optimized with big data for teaching aerobics. Int. J. Comput. Intell. Syst. 17(1), 142 (2024).

Weeldenburg, G. et al. Evaluation of the digital teacher professional development TARGET-tool for optimizing the motivational climate in secondary school physical education. Educ. Technol. Res. Dev. 72, 2325-2348 (2024).

Liu, X., Han, L., Kang, L., Liu, J. & Miao, H. Preference learning based deep reinforcement learning for flexible job shop scheduling problem. Complex Intell. Syst. 11(2), 144 (2025).

Jiao, P., Ouyang, F., Zhang, Q. & Alavi, A. H. Artificial intelligence-enabled prediction model of student academic performance in online engineering education. Artif. Intell. Rev. 55(8), 6321–6344 (2022).

Jatain, D., Niranjanamurthy, M. & Dayananda, P. A hybrid bio-inspired fuzzy feature selection approach for opinion mining of learner comments. SN Comput. Sci. 5(1), 135 (2024).

Ammar, A. et al. The effects of contextual interference learning on the acquisition and relatively permanent gains in skilled performance: a critical systematic review with multilevel meta-analysis. Educ. Psychol. Rev. 36, 57 (2024).

Ngwu, C., Liu, Y., & Wu, R. Reinforcement learning in dynamic job shop scheduling: a comprehensive review of AI-driven approaches in modern manufacturing. J. Intell. Manufact. 2025, 1–16 (2025).

Choppara, Prashanth & Mangalampalli, Sudheer. An efficient deep reinforcement learning based task scheduler in cloud-fog environment. Cluster Comput. 28(1), 1–26 (2025).

Ma, J. et al. Cam4docc: benchmark for camera-only 4d occupancy forecasting in autonomous driving applications. In Proceedings of the IEEE/CVF Conference on Computer Vision and Pattern Recognition 21486–21495 (2024).

Luo, Y. & Niu, P. Physical education teaching scheduling technology based on chaotic genetic algorithm. Sci. Rep. 14(1), 27912 (2024).

Jhonlawi, B. A. Optimizing educational scheduling: ACO-based lecture schedule preparation application. Int. J. Enterprise Model. 18(2), 52–62 (2024).

Karthick, K. K. Revolutionizing physical education: the role of artificial intelligence in enhancing learning and performance. Libr. Progress-Libr. Sci. Inf. Technol. Comput. 44, 3 (2024).

Tariq, M. U. & Sergio, R. P. Innovative assessment techniques in physical education: exploring technology-enhanced and student-centered models for holistic student development. In Global Innovations in Physical Education and Health 85–112 (IGI Global, 2025).

Abadi, Z. J. K., Mansouri, N. & Javidi, M. M. Deep reinforcement learning-based scheduling in distributed systems: a critical review. Knowl. Inf. Syst. 68(10), 5709–5782 (2024).

Vavekanand, R., Das, B. & Kumar, T. DAugSindhi: a data augmentation approach for enhancing Sindhi language text classification. Discover. Data 3(1), 22 (2025).

Acknowledgements

This work was supported by the 2023 Guizhou Province Higher Education Teaching Content and Curriculum System Reform Project: “The practice of integrating the red sports spirit into the aerobics curriculum system reform under the background of curriculum ideology and politics” (Grant No.: 2023069).

Author information

Authors and Affiliations

Contributions

GongMei Zhao, XianYu Gu, XiRu Du wrote the main manuscript text and , ZhongBing Yang prepared figures . All authors reviewed the manuscript.

Corresponding author

Ethics declarations

Competing interests

The authors declare no competing interests.

Additional information

Publisher’s note

Springer Nature remains neutral with regard to jurisdictional claims in published maps and institutional affiliations.

Rights and permissions

Open Access This article is licensed under a Creative Commons Attribution-NonCommercial-NoDerivatives 4.0 International License, which permits any non-commercial use, sharing, distribution and reproduction in any medium or format, as long as you give appropriate credit to the original author(s) and the source, provide a link to the Creative Commons licence, and indicate if you modified the licensed material. You do not have permission under this licence to share adapted material derived from this article or parts of it. The images or other third party material in this article are included in the article’s Creative Commons licence, unless indicated otherwise in a credit line to the material. If material is not included in the article’s Creative Commons licence and your intended use is not permitted by statutory regulation or exceeds the permitted use, you will need to obtain permission directly from the copyright holder. To view a copy of this licence, visit http://creativecommons.org/licenses/by-nc-nd/4.0/.

About this article

Cite this article

Zhao, G., Gu, X., Du, X. et al. Deep learning optimization of teaching schedules in sports dance education. Sci Rep 15, 45722 (2025). https://doi.org/10.1038/s41598-025-28249-2

Received:

Accepted:

Published:

Version of record:

DOI: https://doi.org/10.1038/s41598-025-28249-2