Abstract

The 2:1:1 dietary proportion model, which promotes balanced eating, is difficult to apply in practice because portion sizes are hard to estimate visually. To address this, we developed an artificial intelligence (AI)-based application to assist in the accurate assessment of 2:1:1 dietary proportions. This study evaluated the accuracy of the AI application against estimates made by nutrition and dietetics students (ND) and registered dietitians (RD), and also assessed user satisfaction and attitudes. The AI application was trained using images of three popular Thai dishes: Hainanese Chicken Rice, Shrimp Paste Fried Rice, and Egg Noodle, each prepared in three portion variations. The AI system demonstrated significantly lower mean absolute error (MAE) than both ND and RD groups in estimating proportions for Hainanese Chicken Rice and Shrimp Paste Fried Rice (p < 0.05), indicating superior accuracy. User satisfaction surveys revealed that 61% of participants rated their overall experience with the application as moderate, with suggestions for improving accuracy. Over half agreed that the AI tool shows potential as a practical resource for nutrition education and dietary assessments. The AI holds promise as a tool for promoting adherence to the 2:1:1 model and facilitating healthy lifestyle changes.

Introduction

The global obesity epidemic has steadily increased over recent decades, becoming a major public health concern worldwide1. Obesity is a major risk factor for various non-communicable diseases (NCDs), including hypertension, dyslipidemia, and type 2 diabetes, which together contribute significantly to global morbidity and mortality2,3. Moreover, individuals with obesity may experience a reduction in disease-free life expectancy of up to 8 years compared to those with a normal weight4. Given the severity of this public health crisis, lifestyle modifications, particularly dietary behavior changes, are recognized as the first line of treatment for managing obesity and reducing the risk of NCDs5.

Dietary behavior modification is a key component of lifestyle interventions aimed at reducing energy intake. Various nutritional strategies can be employed, including meal replacements, low-fat diets, high-protein diets, and intermittent fasting6. Additionally, portion control techniques, such as the Plate Model, support energy restriction and promote balanced macronutrient distribution within meals. The Plate Model serves as a visual guideline for achieving a balanced diet, offering a simplified representation of food proportions on a plate, which enhances understanding and adherence7.

In Thailand, the 2:1:1 proportion model, adapted from the Plate Model, is widely endorsed as an effective dietary tool for promoting healthy eating habits. This model divides a standard 9-inch plate into two portions for vegetables, one portion for grains or starches, and one portion for protein. Research indicates that the 2:1:1 model not only aids in weight management but also reduces risk factors associated with non-communicable diseases (NCDs), making it a valuable resource for encouraging positive dietary behavior changes8.

Furthermore, studies have demonstrated that the Plate Model contributes to significant reductions in body weight, body mass index (BMI), and waist circumference, all of which are critical factors in mitigating the risk of developing NCDs9,10. However, the Plate Model has several limitations: certain foods are difficult to categorize into specific groups, the estimation of food proportions is subjective and varies among individuals, and accurate portion estimation often requires guidance from a partner or dietitian8.

Given the limitations of traditional dietary assessment methods, recent advancements in artificial intelligence (AI) offer promising solutions to enhance dietary adherence and portion control. AI has been successfully applied in various fields, including nutrition, where it is used for personalized meal planning, behavior modification through chatbot interactions, and dietary assessment through image recognition11,12. AI-powered dietary assessment tools, such as NutriNet, GoCARB, and goFOOD™, utilize machine learning algorithms to analyze food images and estimate nutritional content with increasing accuracy13,14,15. These applications often leverage Machine Learning (ML) principles, allowing artificial intelligence to autonomously learn from input data and analyze results without explicit programming. Additionally, they employ Deep Learning (DL) techniques that mimic neural networks to emulate human brain functions16. With repeated data input and ongoing learning, these systems undergo continuous development, resulting in increasingly accurate analyses and interpretations. Integrating AI into nutrition education and dietary assessment tools, such as web applications and virtual health coaches, has been shown to enhance participants’ compliance with behavior modification strategies. For example, Paola, a virtual health coach designed to guide users toward dietary patterns like the Mediterranean diet, has been effective in improving adherence to healthy eating behaviors17. Similarly, the eTRIP app, an AI-assisted self-monitoring tool featuring food image recognition, a chatbot, and a meal timer, has proven effective in reducing snacking and overeating behaviors18. Regarding accuracy, evidence indicates that AI-based nutritional tools can perform on par with human experts. A comparative study on carbohydrate estimation revealed that AI-powered applications can estimate carbohydrate content with accuracy similar to that of registered dietitians15. 
Moreover, a systematic review found that AI-based image dietary assessments possess comparable accuracy in estimating food calories and volumes, with the potential to exceed human estimation accuracy19.

Therefore, applying AI image recognition to dietary assessments based on the Plate Model may effectively address existing limitations by aiding in the classification of foods into specific groups. This approach offers a more convenient and rapid method for estimating food proportions, potentially leading to improved compliance with healthy eating behaviors. This study aims to develop an AI-based application for assessing dietary proportions according to the 2:1:1 model. The specific objective is to evaluate the accuracy of the proportional estimations made by the web application in comparison to those made by nutrition and dietetics students (ND) and registered dietitians (RD). Additionally, the study assesses the satisfaction and attitudes of both ND and RD participants following their experience with the web application.

Results

Hainanese chicken rice

For the Hainanese Chicken Rice dish, the AI group exhibited a significantly lower mean absolute error (MAE) in estimating grains/starches, with a value of 3.2 ± 2.5%, compared to registered dietitians (RD) who had a MAE of 4.9 ± 2.6% (p = 0.017), indicating superior accuracy in portion estimation (Fig. 1). In the protein category, the AI group recorded a MAE of 3.1 ± 2.4%. However, no significant differences were observed in the errors of proportional estimation when compared to nutrition and dietetics students (ND) (3.9 ± 2.6%; p = 0.141) and RD (3.9 ± 2.7%; p = 0.118), suggesting comparable accuracy across all groups. For vegetable portions, the AI group achieved a significantly lower MAE of 0.4 ± 0.5% compared to ND (4.2 ± 3.0%; p < 0.001) and RD (4.8 ± 3.2%; p < 0.001), further demonstrating the AI’s enhanced precision in estimating vegetable proportions.

Shrimp paste fried rice

In the analysis of Shrimp Paste Fried Rice, the AI group displayed a significantly reduced MAE for grains/starches at 0.6 ± 0.5%, which was markedly lower than both ND (4.3 ± 2.3%; p < 0.001) and RD (4.3 ± 1.2%; p < 0.001), underscoring the AI’s heightened accuracy in this category (Fig. 1). For protein estimation, the AI group again showed a significantly lower error of 1.5 ± 1.1% compared to ND (4.3 ± 4.4%; p < 0.001) and RD (3.3 ± 2.0%; p < 0.001), indicating superior performance in protein estimation. Additionally, for vegetables, the AI group recorded a lower MAE of 1.3 ± 1.3% compared to ND (3.9 ± 5.2%; p = 0.008) and RD (3.5 ± 3.3%; p = 0.005), further affirming the AI’s efficacy.

Egg noodle

In the Egg Noodle dish, no significant differences in MAE were found among the groups for any of the three components, indicating that the AI group’s accuracy was comparable to that of both the ND and RD groups (Fig. 1). For the grains/starches component, the MAEs were 4.3 ± 2.9% for AI, 3.8 ± 0.7% for ND, and 3.7 ± 0.5% for RD, with no significant differences (AI vs. ND: p = 0.789; AI vs. RD: p = 0.994). In the protein category, the AI group reported a MAE of 3.6 ± 2.6%, which was similar to ND (3.6 ± 5.0%; p = 0.344) and RD (2.4 ± 2.2%; p = 0.125). For vegetables, the MAEs were 4.4 ± 2.1% for AI, 4.7 ± 5.6% for ND, and 3.9 ± 2.9% for RD, again with no significant differences (AI vs. ND: p = 0.154; AI vs. RD: p = 0.222).

Web application user satisfaction and attitude survey

A total of 23 participants completed the entire survey questionnaire: 12 from the ND group and 11 from the RD group.

Satisfaction

As shown in Fig. 2, the survey results indicate that participants generally reported moderate satisfaction with the accuracy of food component proportion detection, with 61% expressing this view. Regarding specific aspects of the web application, participants reported moderate to high satisfaction with the following: speed of data processing (91%), stability (70%), consistency between images and content (70%), and aesthetic appeal and modernity of the interface (78%). Additionally, 61% of participants were very satisfied with the ease of use, while 57% expressed satisfaction with the font size and style of the application. Overall, the majority (61%) rated their satisfaction with the web application as moderate, reflecting a generally positive experience but indicating room for improvement in certain areas.

Attitude

According to the data presented in Fig. 2, a majority of participants (57%) agreed that the web application is practical for use in their work or during internships in the dietitian profession, indicating its relevance and applicability in real-world settings. This perception highlights the tool’s potential to bridge the gap between theoretical knowledge and practical application in dietary management. Furthermore, 61% of respondents recognized the application as a valuable resource for nutritional education. This suggests that users believe the tool can enhance their understanding of dietary principles and improve educational efforts in various settings, such as clinics or community programs.

Additionally, 44% of participants agreed that the application could reduce the time required for delivering nutritional education. This efficiency could be particularly beneficial in clinical environments where dietitians often face time constraints and need to optimize their interactions with clients. Moreover, 56% of respondents acknowledged the application’s potential to provide valuable information for dietary assessments. This indicates that users see the AI tool as an effective means of gathering and analyzing dietary data, which can facilitate better-informed dietary recommendations. Lastly, 52% of participants expressed a willingness to recommend the web application to others, reflecting a generally positive attitude toward its effectiveness and utility. This willingness to advocate for the tool suggests that users find it beneficial enough to share with peers, which is a strong indicator of its potential for wider adoption within the dietetics community.

Discussion

The AI-based application demonstrated superior accuracy in estimating dietary proportions compared to human estimators, particularly for complex Asian dishes like Hainanese Chicken Rice and Shrimp Paste Fried Rice. The AI group achieved significantly lower mean absolute errors (MAEs), showing that the model effectively helps users follow the 2:1:1 dietary proportion model. These dishes are commonly eaten in daily life and often combine multiple components, such as vegetables and meats, with diverse textures and colors. Many existing AI dietary tools are primarily trained on simpler Western-style meals with fewer ingredients or more standardized components, which limits their applicability to complex cuisines. In contrast, the AI tool’s strong performance with these multi-ingredient dishes highlights its ability to address cultural variations in dietary assessment and support healthy eating practices with complex ASEAN foods.

Most existing tools focus on estimating energy and macronutrients, particularly carbohydrates. For example, NutriNet uses image recognition to classify foods on a plate14, while Keenoa, goFOOD™, and Calo Mama calculate calories, macronutrients, and food group classifications13,20,21. GoCARB specializes in carbohydrate estimation for meal planning15,22. In contrast, our AI model provides proportional estimates for grains, meat, and vegetables, which represent carbohydrates, protein, and fiber. Unlike calorie-based tools, this model emphasizes portion balance, helping users make healthier food choices by aligning meals with the 2:1:1 dietary model. By guiding users toward balanced meals through visual proportions, this approach offers a simpler, more intuitive way to promote healthy eating habits and dietary adjustments.

The results for Egg Noodle indicate that the AI’s performance was comparable to that of ND and RD groups, with no significant differences in portion estimation accuracy. This suggests that dishes with visually similar components or complex structures, such as Egg Noodle, present challenges for AI-based assessments. When ingredients share similar colors, such as bean sprouts, egg noodles, or the edges of fish balls, which are white and yellow, the AI struggles to distinguish between them, reducing estimation accuracy. These findings align with previous research on AI food recognition tools. For example, goFOOD™ misclassified visually similar foods, such as falafel and meatballs, due to their texture and color13. Similarly, Peihua Ma et al.23 reported that a nutrient estimation tool incorrectly identified spicy noodles as winter melon spare rib soup, resulting in inaccurate energy estimates. Research by Herzig et al.24 found that the SNAQ app’s accuracy decreased for cooked meals and main dishes compared to simpler foods, such as breakfast items or snacks, due to overlapping ingredients and greater visual complexity. The lack of significant findings for Egg Noodle highlights the need for further development of the AI model. Enhancing the training dataset with more diverse dishes and refining image recognition algorithms to better detect subtle differences in color and texture would be essential for improving the tool’s performance across a broader range of complex meals.

In terms of user satisfaction and attitudes, participants generally reported moderate satisfaction with the web application, particularly regarding its speed, stability, and ease of use. Positive feedback on its practical applications in dietetics and nutrition education highlights the tool’s potential for real-world implementation. The user-friendly interface makes it especially valuable for individuals new to dietary management and behavior change. Its ability to accurately detect food proportions helps users monitor their intake, identify opportunities to increase vegetable consumption, and adjust their intake of grains and proteins. These features promote adherence to the 2:1:1 dietary model, encouraging healthier eating habits over time.

This AI-based tool may also benefit individuals who are overweight or obese by supporting weight management through improved portion control9. Additionally, it can assist those requiring dietary modifications for health conditions such as diabetes or cardiovascular disease9,22,25. By providing real-time feedback on food proportions, the tool simplifies portion control, helping users identify appropriate portion sizes and make informed dietary choices. With repeated use, users can develop greater awareness of their eating patterns, fostering sustainable behavior change and improved health outcomes18. Further studies are needed to assess the tool’s long-term effectiveness in promoting weight management and dietary improvements in these populations.

Moreover, the application offers significant value for nutrition and dietetics students by providing a platform to practice portion estimation and develop key dietetic skills, such as dietary counseling and behavior management, in preparation for their careers. Previous studies have shown that mobile apps with embedded learning aids can enhance students’ confidence during consultations by reinforcing knowledge26. Similarly, through repeated use of the AI tool, students can strengthen their ability to apply theoretical knowledge in practical contexts, enhancing their readiness for clinical practice and professional roles.

For registered dietitians, the tool provides an opportunity to reskill and refine their portion estimation abilities. It serves as a valuable resource for demonstrating appropriate portion sizes and balanced meals to patients in a simple, visually engaging way. Integrating the tool into clinical practice can enhance patient education, helping individuals better understand portion control and the 2:1:1 dietary model. This combination of improving patient understanding and refining professional skills makes the AI-powered application a versatile tool for promoting healthy eating and supporting improved dietary outcomes.

This study has several limitations that should be acknowledged. First, the AI model was trained on a limited dataset of three Thai dishes, which may limit the generalizability of the results to other foods and cuisines, particularly those with more complex or unfamiliar components. Expanding the dataset to include a wider range of dishes could improve the tool’s performance and applicability across diverse culinary contexts. Second, the AI model’s accuracy may be affected by visual factors such as lighting conditions and the similarity in ingredient colors, as seen with the Egg Noodle dish. When food components share similar colors or overlap, the model’s ability to distinguish between them decreases, potentially reducing estimation accuracy. Finally, the participant pool included only nutrition and dietetics students (ND) and registered dietitians (RD), limiting insights into how the general public or individuals with less dietary knowledge would engage with the tool. Future research should involve more diverse user groups to better assess usability and engagement, especially among those who could benefit most from dietary guidance.

Methods

Study population

The research participants comprised nutrition and dietetics students (ND) and registered dietitians (RD). The inclusion criteria for ND participants included: (1) currently enrolled in the Nutrition and Dietetics program at the Faculty of Allied Health Sciences, Chulalongkorn University; (2) ability to understand Thai; (3) proficiency in using a smartphone; and (4) willingness to participate in the research. For RD participants, the inclusion criteria were: (1) holding a valid dietitian license; (2) aged 25 years or older; (3) ability to understand Thai; (4) proficiency in using a smartphone; and (5) willingness to participate.

Participants who did not complete the survey were excluded from the study. Recruitment was conducted by disseminating information through electronic infographic posters within the Faculty of Allied Health Sciences, Chulalongkorn University. Interested individuals registered via an electronic form that outlined the study’s objectives, procedures, and activities. Researchers and research assistants screened potential participants based on the inclusion and exclusion criteria. Qualified participants were subsequently invited to the Department of Nutrition and Dietetics to engage in the research activities. Electronic informed consent was obtained from all participants prior to their involvement in the study.

Sample size calculation

The sample population for this study comprised nutrition and dietetics students (ND) and registered dietitians (RD). The required sample size was calculated based on a previous study by Vasiloglou et al.15, which evaluated the accuracy of the GoCARB application in estimating carbohydrate content on food plates compared to assessments made by dietitians.

Drawing from the methodology of this prior research, which involved similar study designs and participant characteristics, a minimum of six participants per group was initially established. To account for a potential dropout rate of 50%, the final sample size was adjusted to include 12 participants in each group: 12 ND students and 12 RD professionals. Additionally, an AI assessment group was created, comprising 12 replicated assessments, corresponding to the number of participants in each human group. This design ensures a balanced comparison between the AI tool and human estimations.
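The adjustment described above is consistent with the common dropout correction, in which the minimum group size is divided by the expected retention fraction. This is an inference from the reported figures (6 and 12), not a formula stated by the authors; the symbols $n_{\min}$, $d$, and $n_{\text{adj}}$ are introduced here purely for illustration.

```latex
n_{\text{adj}} = \frac{n_{\min}}{1 - d} = \frac{6}{1 - 0.5} = 12
```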

Ethical approval

The study was conducted in accordance with the guidelines established by the Declaration of Helsinki. This research protocol received ethical approval from the Research Ethics Review Committee for Research Involving Human Research Participants at Chulalongkorn University on 17th April, 2023 (Certificate of Approval: 080/67). All participants provided their informed consent to participate in the study. Informed consent was obtained for the publication of identifying information/images.

Study design

This study was conducted as a comparative analysis involving nutrition and dietetics students (ND), registered dietitians (RD), and an artificial intelligence (AI) component within the AI-based application for 2:1:1 dietary proportion assessment. Participants were asked to evaluate food proportions from images of three popular Thai dishes: Khao Man Gai (Hainanese Chicken Rice), Khao Kluk Kapi (Shrimp Paste Fried Rice), and Guay Tiew Bamee (Egg Noodle). Each dish was presented in three portion variations as illustrated in Fig. 3.

In this study, the proportions of grains/starches, proteins, and vegetables were systematically quantified based on precise weight measurements during the food preparation stage. Each food component was accurately weighed using a calibrated digital scale, and its proportional contribution was calculated as a percentage of the total dish weight. These weight-based proportions were established as the reference standard for training and evaluating the AI model. Although the AI model estimates visual area proportions based on image analysis, the reference standard for model validation was derived from the exact weight measurements to ensure data integrity. Additionally, pixel-wise annotations were performed to visually segment each food component within the images, representing relative area proportions. However, the final assessments were rigorously benchmarked against the weight-based reference standard to maintain methodological consistency and accuracy. Detailed procedures for pixel labeling and image dataset preparation are outlined in the subsequent section.
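The two kinds of proportion mentioned above (weight-based reference values and area-based values from pixel annotations) can be illustrated with a minimal Python sketch. The function names, the dictionary layout, and the convention that class id 0 marks background are assumptions made for this example; they are not taken from the study's code.

```python
def weight_proportions(weights_g):
    """Return each component's share of the total dish weight, in percent."""
    total = sum(weights_g.values())
    if total <= 0:
        raise ValueError("total dish weight must be positive")
    return {name: 100.0 * w / total for name, w in weights_g.items()}


def area_proportions(mask, class_names):
    """Return each food class's share of the labelled food pixels, in percent.

    `mask` is a 2-D list of integer class ids. Ids missing from `class_names`
    (assumed here: 0 for background) are ignored.
    """
    counts = {cid: 0 for cid in class_names}
    for row in mask:
        for cid in row:
            if cid in counts:
                counts[cid] += 1
    food_pixels = sum(counts.values())
    if food_pixels == 0:
        raise ValueError("mask contains no food pixels")
    return {class_names[cid]: 100.0 * n / food_pixels for cid, n in counts.items()}
```

With hypothetical weights of 100 g grains, 50 g protein, and 50 g vegetables, `weight_proportions` returns 50%, 25%, and 25%. Because one function works on mass and the other on visible area, the two sets of percentages need not coincide for the same plate.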

The proportional values assessed by each group were used to calculate the absolute error (AE) in portion estimation compared to the ground truth, defined as |estimated value – ground truth|. The mean absolute error (MAE) for each food component was subsequently computed for each participant group. After completing the portion assessments, both ND and RD participants tested the AI web application for 2:1:1 portion assessment. Researchers and research assistants provided instructions on using the web application before the portion assessment sessions. Following the assessments, both groups evaluated their satisfaction and attitudes toward the AI web application through a questionnaire specifically developed for this study.
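The absolute-error and MAE calculation described above can be sketched as follows. This is a minimal illustration using only the Python standard library; the function names are hypothetical, and the use of the sample standard deviation is an assumption, since the source does not say which SD formula was applied.

```python
from statistics import mean, stdev


def absolute_error(estimate, truth):
    """Absolute error |estimated value - ground truth|, in percentage points."""
    return abs(estimate - truth)


def mae_summary(estimates, truth):
    """Return (MAE, SD) of the absolute errors for one food component.

    `estimates` holds one estimated proportion per rater (or per AI run);
    `truth` is the weight-based reference proportion for that component.
    """
    errors = [absolute_error(e, truth) for e in estimates]
    sd = stdev(errors) if len(errors) > 1 else 0.0  # sample SD (assumed)
    return mean(errors), sd
```

For example, `mae_summary([30.0, 20.0, 25.0, 25.0], 25.0)` gives an MAE of 2.5 percentage points, because the four absolute errors are 5, 5, 0, and 0.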

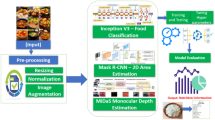

Image dataset preparation, AI model training, and validation strategy

Three widely consumed Thai composite dishes, namely Hainanese Chicken Rice, Shrimp Paste Fried Rice, and Egg Noodle, were selected as representative meal models for developing an artificial intelligence–based dietary portion assessment system. These dishes were chosen because they contain multiple food components and represent common Thai eating patterns, providing a practical model for generalization across complex meal structures. For each dish, fifteen base images were prepared with systematically varied ingredient proportions to represent realistic serving diversity. Each dish was plated on a standard nine-inch white plate, and images were captured from a vertical top view using the same smartphone camera under controlled lighting and distance conditions (Fig. 4). This standardized photographic setup minimized variability and optimized feature consistency for subsequent image segmentation.

For dataset annotation, the Pixel Labeling Method (PLM) was used to create pixel-level semantic segmentation masks corresponding to three food groups: grains or starches, proteins, and vegetables (Fig. 5). Manual pixel-wise labeling was performed using a dedicated annotation tool to ensure precision and class separability. Particular care was taken to differentiate overlapping regions such as rice and meat boundaries to reduce class ambiguity and maintain spatial accuracy. The resulting masks served as the ground truth for supervised training, allowing the model to associate image features with corresponding dietary components.

To expand dataset diversity and prevent overfitting, data augmentation techniques were applied to simulate real-world conditions. These included geometric transformations such as rotation and reflection, as well as photometric adjustments to brightness, contrast, and temperature. This process increased dataset heterogeneity and improved the model’s ability to recognize food components under varied environmental and presentation conditions. Table 1 presents the final dataset composition. This augmentation strategy enhanced the model’s generalization and reduced bias caused by limited image variability.
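A minimal sketch of such an augmentation step is shown below, using plain Python lists so that it runs without any imaging library. The function names and the brightness factor are hypothetical, and only one photometric adjustment (brightness) is shown. The sketch follows the general practice in segmentation that geometric transforms are applied identically to the image and its label mask, whereas photometric changes touch only the image.

```python
def rotate90(grid):
    """Rotate a 2-D grid (list of rows) 90 degrees clockwise."""
    return [list(row) for row in zip(*grid[::-1])]


def reflect_horizontal(grid):
    """Mirror a 2-D grid left to right."""
    return [list(reversed(row)) for row in grid]


def identity(grid):
    """Return an unmodified copy of the grid."""
    return [list(row) for row in grid]


def adjust_brightness(image, factor):
    """Scale every RGB channel by `factor`, clamping results to 0..255."""
    return [
        [tuple(min(255, max(0, round(c * factor))) for c in px) for px in row]
        for row in image
    ]


def augment(image, mask, brightness=1.0):
    """Return (image, mask) pairs for three geometric variants.

    The same geometric transform is applied to image and mask so that the
    pixel labels stay aligned; brightness is applied to the image only.
    """
    pairs = []
    for geo in (identity, rotate90, reflect_horizontal):
        pairs.append((adjust_brightness(geo(image), brightness), geo(mask)))
    return pairs
```

Each call to `augment` yields three aligned image and mask pairs; a real pipeline would typically combine more rotation angles with contrast and colour-temperature changes as well.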

Model development employed a U-Net convolutional encoder–decoder structure combined with a VGG16 backbone pretrained on the ImageNet dataset. U-Net was selected because it preserves spatial resolution through skip connections between the encoder and decoder, enabling accurate segmentation of food components. The VGG16 feature extractor captured hierarchical visual information such as texture, edge, and color variation, improving segmentation precision. Transfer learning from ImageNet provided generalized low-level features, facilitating faster convergence and higher segmentation accuracy given the limited dataset size.

As shown in Table 1, the final dataset comprised two hundred twenty-five images per dish, divided into training (one hundred fifty-three images, 68%), validation (thirty-six images, 16%), and testing (thirty-six images, 16%) subsets using random stratification to maintain class balance. The model was optimized using the Adam algorithm with a learning rate of 0.0001 and a composite loss function integrating Categorical Focal Loss and Dice Loss. Focal Loss reduced the effect of class imbalance by down-weighting easily classified pixels so that training concentrated on harder, typically minority-class, regions, whereas Dice Loss enhanced boundary learning and shape consistency. This hybrid loss function improved both classification accuracy and region overlap quality.
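To make the composite loss concrete, the following is a simplified numerical sketch in plain Python. It is not the authors' implementation: the equal weighting of the two terms, the focal parameters `gamma=2.0` and `alpha=0.25` (common defaults), and the list-of-pixels data layout are all assumptions for illustration.

```python
import math


def dice_loss(y_true, y_pred, eps=1e-7):
    """Mean over classes of 1 - Dice coefficient.

    y_true / y_pred: one class vector per pixel (one-hot labels and
    predicted probabilities, respectively).
    """
    n_classes = len(y_true[0])
    per_class = []
    for c in range(n_classes):
        inter = sum(t[c] * p[c] for t, p in zip(y_true, y_pred))
        denom = sum(t[c] for t in y_true) + sum(p[c] for p in y_pred)
        per_class.append(1.0 - (2.0 * inter + eps) / (denom + eps))
    return sum(per_class) / n_classes


def categorical_focal_loss(y_true, y_pred, gamma=2.0, alpha=0.25, eps=1e-7):
    """Cross-entropy scaled by (1 - p)^gamma, averaged over pixels.

    Confident, correct predictions (p near 1) contribute almost nothing,
    so hard pixels dominate the loss.
    """
    total = 0.0
    for t, p in zip(y_true, y_pred):
        for tc, pc in zip(t, p):
            pc = min(max(pc, eps), 1.0 - eps)  # avoid log(0)
            total += -tc * alpha * (1.0 - pc) ** gamma * math.log(pc)
    return total / len(y_true)


def composite_loss(y_true, y_pred):
    """Sum of the Dice and focal terms (equal weighting assumed)."""
    return dice_loss(y_true, y_pred) + categorical_focal_loss(y_true, y_pred)
```

A perfect prediction drives both terms to approximately zero, while an uninformative prediction of 0.5 for every class on a two-class toy example yields a loss of roughly 0.54, most of it from the Dice term.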

Model accuracy was quantitatively evaluated using the Mean Intersection over Union (MIoU) metric, which measures segmentation overlap between predicted and ground truth masks. Training proceeded for a maximum of five hundred epochs with early stopping and model checkpointing to preserve the best-performing version based on validation accuracy.
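The MIoU metric can be sketched in a few lines of plain Python. The function name and the choice to skip classes that are absent from both masks are assumptions of this example; libraries differ in how they handle that edge case.

```python
def mean_iou(y_true, y_pred, class_ids):
    """Mean Intersection over Union between two label masks.

    y_true / y_pred: 2-D lists of integer class ids with the same shape.
    class_ids: the classes to average over.
    """
    ious = []
    for c in class_ids:
        inter = 0
        union = 0
        for t_row, p_row in zip(y_true, y_pred):
            for t, p in zip(t_row, p_row):
                in_true = t == c
                in_pred = p == c
                if in_true and in_pred:
                    inter += 1
                if in_true or in_pred:
                    union += 1
        if union > 0:  # skip classes absent from both masks (assumed convention)
            ious.append(inter / union)
    if not ious:
        raise ValueError("none of the requested classes appear in either mask")
    return sum(ious) / len(ious)
```

For a reference mask `[[1, 1], [2, 2]]` and a prediction `[[1, 2], [2, 2]]`, class 1 has IoU 1/2 and class 2 has IoU 2/3, so the MIoU is 7/12.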

To ensure reproducibility, the entire training and validation process was repeated five times using identical configurations but different random seed initializations. Mean and standard deviation values of MIoU were calculated to evaluate model stability and consistency.

External validation was performed on the independent test dataset to assess generalization capability beyond the training samples. Quantitative MIoU evaluation was supplemented by qualitative analysis through visual comparison between predicted segmentations and reference labels. These complementary assessments demonstrated that the combined U-Net and VGG16 model achieved reliable and precise segmentation, effectively identifying food components and estimating dietary proportions in complex Thai mixed dishes.

AI-based application user satisfaction and attitude survey

The Web Application User Satisfaction and Attitude Survey (WUSAS) consists of two main sections. The first section is a satisfaction questionnaire that includes eight items evaluating various aspects of the web application: (1) speed of data processing, (2) ease of use, (3) stability, (4) consistency between images and content in conveying meaning, (5) aesthetic appeal and modernity of the interface, (6) font size and style, (7) accuracy in proportion detection, and (8) overall satisfaction. Responses were measured using a five-point Likert scale with options ranging from “Most Satisfied” to “Least Satisfied.”

The second section comprises an attitude questionnaire with five items designed to capture respondents’ attitudes toward the web application. Participants were asked to provide their opinions on the following statements: (1) “This web application can be practically applied in the field of dietetics or during internships,” (2) “This web application can be used as a tool for nutrition education,” (3) “This web application can reduce the time required for delivering nutrition education,” (4) “This web application can provide useful information for dietary assessment,” and (5) “I would recommend this web application to others.” Attitudes were measured on a five-point Likert scale: “Strongly Agree,” “Agree,” “Neutral,” “Disagree,” and “Strongly Disagree.”

Prior to implementation, the survey underwent evaluation for Item-Objective Congruence (IOC) by three experts to ensure its validity. All IOC values for each question item were equal to 1, indicating that all items were valid.
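For readers unfamiliar with the index, the IOC of an item is conventionally the mean of the expert ratings, where each expert scores the item +1 (congruent), 0 (unsure), or -1 (not congruent). The sketch below assumes that convention and a commonly used acceptance cutoff of 0.5; neither the rating scale nor the cutoff is stated in the source.

```python
def ioc(ratings):
    """Item-Objective Congruence index: mean of expert ratings in {-1, 0, +1}."""
    if not ratings:
        raise ValueError("at least one expert rating is required")
    if any(r not in (-1, 0, 1) for r in ratings):
        raise ValueError("ratings must be -1, 0, or +1")
    return sum(ratings) / len(ratings)


def is_valid_item(ratings, cutoff=0.5):
    """Apply an acceptance cutoff (0.5 is a common choice, assumed here)."""
    return ioc(ratings) >= cutoff
```

An IOC of 1, as reported for every item, therefore means that all three experts rated the item +1.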

Statistical analysis

Data are presented as mean ± standard deviation (SD). The normality of the data distribution was evaluated using the Shapiro-Wilk test. Between-group comparisons of the mean absolute error (MAE) in portion estimation were performed using an independent-samples t-test when the data were normally distributed and the Mann-Whitney U test otherwise. Pairwise differences were considered statistically significant at p < 0.05 (95% confidence level).
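As an illustration of the non-parametric comparison, the Mann-Whitney U statistic can be computed directly from its pairwise definition. This sketch covers only the statistic; in practice a statistics package (for example `scipy.stats.mannwhitneyu`) would also supply the p-value, and the source does not say which software was used. The function name is hypothetical.

```python
def mann_whitney_u(x, y):
    """U statistic for sample x against sample y.

    Counts the pairs (xi, yj) with xi > yj; ties contribute one half.
    The statistic for y against x is len(x) * len(y) minus this value.
    """
    u = 0.0
    for xi in x:
        for yj in y:
            if xi > yj:
                u += 1.0
            elif xi == yj:
                u += 0.5
    return u
```

When every absolute error in one group is smaller than every error in the other, U for the smaller-valued group is 0, which is the strongest possible evidence of a difference for the given sample sizes.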

Conclusions

This study demonstrates that the AI-based application accurately estimates dietary proportions according to the 2:1:1 model, particularly for complex ASEAN dishes such as Hainanese Chicken Rice and Shrimp Paste Fried Rice, outperforming human estimators. User feedback indicated moderate satisfaction with the application’s speed, stability, and ease of use, reinforcing its practical applicability in real-world settings. This AI tool has the potential to empower individuals, including those who are overweight or obese, by supporting informed dietary choices and promoting adherence to healthier eating patterns.

Fig. 1. Overall mean absolute errors in proportional estimation of three components across three dishes for each participant group: (A) Hainanese Chicken Rice, (B) Shrimp Paste Fried Rice, and (C) Egg Noodle. The results are shown as mean ± standard deviation (SD). Statistical significance is indicated by an asterisk (*) for differences between groups (p < 0.05) compared to the AI group, assessed using a Mann-Whitney U test.

Percentage of respondents (n = 23) at each level of satisfaction (A) and agreement (B) regarding the evaluated aspects of the web application. Satisfaction levels assess participants’ perceptions of various features, while agreement levels reflect participants’ attitudes toward the application’s practical applications in dietetics and nutrition education.

Data availability

The complete datasets generated and analyzed in this study are presented within this manuscript.

Code availability

To promote transparency and reproducibility, the core computational code used in this study, including the Python scripts for data augmentation, model development, and training, has been made openly accessible via GitHub at https://github.com/patinya2001/AI-DietaryProportionProject.

Abbreviations

- AI: Artificial intelligence
- ND: Dietetics students
- RD: Registered dietitians
- MAE: Mean absolute error
- NCD: Non-communicable diseases
- ML: Machine learning
- DL: Deep learning
- PLM: Pixel labeling method

Acknowledgements

This project was funded by the National Research Council of Thailand (NRCT: N42A680622) and Chulalongkorn University. We thank the Ratchadaphiseksomphot Fund, Chulalongkorn University, for its support through the grant for developing new faculty staff (Grant No. DNS_67_045_3700_001).

Author information

Contributions

W.C.: Conceptualization, Methodology, Validation, Formal analysis, Investigation, Software development, Writing – original draft, Writing – review & editing; P.J.: Software development, data analysis; A.J.: Data collection, investigation, validation, data interpretation, Writing – original draft; R.D.: Data collection, investigation, validation, data interpretation; T.K.: Data collection, investigation; C.C.: Conceptualization, methodology, Writing – review & editing, project administration, Funding acquisition; S.A.: Conceptualization, methodology, Funding acquisition, Writing – review & editing, project administration, Supervision.

Ethics declarations

Competing interests

The authors declare no competing interests.

Rights and permissions

Open Access This article is licensed under a Creative Commons Attribution-NonCommercial-NoDerivatives 4.0 International License, which permits any non-commercial use, sharing, distribution and reproduction in any medium or format, as long as you give appropriate credit to the original author(s) and the source, provide a link to the Creative Commons licence, and indicate if you modified the licensed material. You do not have permission under this licence to share adapted material derived from this article or parts of it. The images or other third party material in this article are included in the article’s Creative Commons licence, unless indicated otherwise in a credit line to the material. If material is not included in the article’s Creative Commons licence and your intended use is not permitted by statutory regulation or exceeds the permitted use, you will need to obtain permission directly from the copyright holder. To view a copy of this licence, visit http://creativecommons.org/licenses/by-nc-nd/4.0/.

About this article

Cite this article

Choochaiwattana, W., Jaruariyanon, P., Jitpranee, A. et al. AI powered dietary proportion assessment for improving accuracy and practicality of the balanced meal plate model. Sci Rep 15, 45273 (2025). https://doi.org/10.1038/s41598-025-29631-w
