Abstract

As a major burden of blast furnace, sinter mineral with desired quality performance needs to be produced in sinter plants. The tumbler index (TI) is one of the most important indices to characterize the quality of sinter, which depends on the raw materials proportion, operating system parameters and the chemical compositions. To accurately predict TI, an integrate model is proposed in this study. First, to decrease the data dimensionality, the sintering production data is addressed through principal component analysis (PCA) and the principal components with the accumulated contribution rate no more than 95% are extracted as the inputs of the predictive model based on Extreme Learning Machine (ELM). Second, the genetic algorithm (GA) has been applied to promote the improvement of the robustness and generalization performance of the original ELM. Finally, the model is examined using actual production data of a year from a sinter plant, and is compared with the algorithms of single ELM, GA-BP and deep learning method. A comparison is conducted to confirm the superiority of the proposed model with two traditional models. The results showed that an improvement in predictive accuracy can be obtained by the GA-ELM approach, and the accuracy of TI prediction is 81.85% for absolute error under 0.7%.

Similar content being viewed by others

Introduction

Nowadays, with the advent of carbon peak and carbon neutrality, green intelligence is an important system engineering that runs through the entire process in the steel industry. In order to operate blast furnace (BF) efficiently and economically, good quality of the iron bearing materials is of central importance. Sinter mineral is used as the main iron bearing material in BF, so the quality of sinter mineral has a significant impact on the operation of BF. The strength is one of the most important quality indices of the finished sinter and is set according to the operational requirements of BF. If not satisfied, raw material ratios of blending or operation system parameters should be adjusted to technological requirements, and this requires a known causal relationship in the iron ore sintering process. Iron ore sintering is one of the main methods for making iron ore blocks. The iron concentrate obtained by beneficiation of poor iron ore, the fine ore generated by crushing and screening of rich iron ore, the iron containing materials recovered during production (blast furnace and converter dust, continuous casting, and rolling iron sheet), fluxes (limestone, quicklime, hydrated lime, dolomite, and magnesite), and fuels (coke breeze and anthracite) are mixed in the required proportion with water to make a granular sintering mixture, which is charged on a moving strand and sintered into blocks by ignition and exhaust. The hot gas jets from the ignition hood begins to burn coke at the surface of the bed and the heat is sucked into the bed by the gas drawn in through the offline air box. The heat holding furnace is used to ensure that the surface of the sintering bed fully absorbs heat. The flame front in sintering is controlled by strand speed to guarantee that the burn through point of the material layer occurs before the end of the strand where sinter cake is discharged. The discharged sinter cake is dropped and broken, and then cooled in cooling system. After cooling, the sinters are sieved and the smaller part (less than 5 mm) is sent back to the raw material feeding system as return fines. Then transfer the oversized portion to BF and use the screened portion of the required size (10–16 mm) as bed layer material. The cold strength characteristics of sinter is determined by the tumbler test (ISO 3271/75). The particle size fraction of + 6.3 mm represents the tumbler index(TI).

The iron ore sintering process is extremely complex due to the nonlinear, process factors coupled and the long time delay from raw materials burdening to the final finished sinter produced. To deal with this complex problem, many researches have been made to construct models to describe the process of sintering to predict the quality index.

Laboratory-based sinter-pot tests can elucidate the relationships between iron ore characteristics and sinter strength by observing microstructures and chemical compositions of the agglomerated product. Zhang et al.1 performed sinter-pot tests to study the relationship between the chemical composition and sinter body strength. Pownceby et al.2 proposed laboratory-based sinter tests to examine the links between iron ore composition and sintering conditions and their effects on sinter strength. Cheng et al.3 focused on interpreting the internal connection between the process parameters and real sinter quality in the sintering process. Guo et al.4 investigated the effect of the MgO content on the reduction melting mechanism of sinter. Wu et al.5 discussed the mechanism of the hydrogen-rich gas application in iron ore sintering process. Wang et al.6 investigated the effects of ammonia injection on the sintering characteristics. Liu et al.7 studied the effect of silica content on iron ore sintering indexes. Xu et al.8 discussed the effect of the bed depth on the mechanical strength of sinter. Patil et al.9 explored the utilization of pellet fines in the iron ore sintering process and elaborated the relationship between the tumbler index and the the usage of pellet fines. However, they were limited to apply to an actual industrial process because the measurement technology that the experiments required does not supplied extensively in the sintering plants.

Models base on mass and energy balance have been developed to describe the complex thermodynamic and kinetic phenomena involved in the sintering process10,11,12,13. Muller et al.14 proposed a finite difference model to predict the key performance parameters of sintering process such as the chemical quality, the physical strength, the productivity, and fuel consumption rate. These models were built on a series of ideal hypothesis to simplify actual mechanism, and only describe the local properties of the complex process, which were mostly confirmed on laboratory tests of sinter pot but different from the industrial production scale. It is difficult, if not impossible, to establish a fully first-principle model that covers all dynamic mechanisms of the sintering process.

Data-driven black-box models are able to characterize the nonlinear behavior of the sintering process, and have been developed to elaborate the relationships of inputs (process features) and outputs (sinter quality indices)15,16,17,18,19,20,21,22. For these black-box models, prior knowledge is not required, and are results in high computational efficiency by using computational intelligence methods such as artificial neural network (ANN)23,24, fuzzy system25,26,27, support vector machine (SVM)28, extreme learning machine (ELM)29, and convolutional neural network (CNN)30,31. One issue associated with these studies is that there are a large amount of potential features to choose as the inputs of the model, but the number of input attributes is limited to the statistical observations32. To solve this problem, the principal component analysis (PCA)33 is employed to reduce the dimensions of the large scale of sintering production data in the present study, and to extract the few front principal components whose accumulative scores are no more than 95% as the inputs of the predictive model. Egorova et al.34 used the PCA approach to reduce the task dimensionality in modelling sintering process. Du et al.35 employed the PCA method to reduce the dimensionality of detection parameters in prediction of burn-through point (BTP) for iron ore sintering process. Gao et al.36 used the PCA to decrease the dimensionality of the influence factors of tumble strength of iron ore sinter. Another issue is the accuracy of the proposed models. ANN may fall into local optima due to the gradient descent algorithm. In comparison with conventional neural networks, extreme learning machine (ELM)37,38,39,40 can theoretically obtain the global optimal solution with excellent computational speed, and much fewer parameters need to be determined initially. Although ELM has so many advantages and has been chosen to model the TI of the sinter in this paper, the original ELM algorithm is unstable due to the random choosing the weight vectors and threshold values for hidden nodes between input and hidden layer. To avoid this drawback, the genetic algorithm (GA)41,42,43 was applied to search the optimal combination of these two parameters with the minimal value of the fitness function. The experimental results indicate that GA-ELM has good generalization performance in prediction of TI for the iron ore sintering process.

The main contributions of the study can be illustrated in two aspects. On one hand, ELM is an effective neural network algorithm that randomly initializes hidden layer weights and biases, and calculates output layer weights using the least squares method. To further improve the performance of ELM, we use genetic algorithm for optimization of the weights and biases. By combining genetic algorithm with ELM, we can find better weights and biases configurations, thereby improving the predictive performance of ELM. On the other hand, PCA can effectively reduce the dimensionality of data and simplify its complexity by projecting high-dimensional data into a low dimensional space, while preserving the main features of the data. The PCA approach is used to extract the main principal components of the three types of operation parameters in iron ore sintering process.

The remaining part of this paper is organized as follows: Section II elaborates on the proposed feature selection method with PCA in prediction of TI. In Section III, the topology of ELM and GA-ELM are depicted. Section IV presents the performance comparison results of ELM, GA-BP and GA-ELM in prediction of TI. The conclusions and discussions are drawn in Section V.

Extracting features based on PCA

Feature selection is the process of selecting the most relevant or useful features from all available features. Not all features are helpful for the model, and sometimes having too many features can increase the complexity of the model and reduce its generalization ability. Feature selection can help simplify models, improve training efficiency and predictive performance. This study uses the PCA method to extract the attributes from the data, which not only preserves key information but also reduces the dimensions of the data.

The proposed methodology is clearly depicted in Fig. 1. The structure of this study could be divided into three stages. First, the iron ore sintering process and operational parameters were analyzed to obtain the fishbone diagram for the TI prediction. According to the operational process, the parameters could be classified into source parameters, process parameters, and target parameters is to clarify the roles and functions of each parameter in the production process. Second, the PCA approach was used for the three categories respectively and the principal components are obtained. The optimal feature set was obtained with this feature selection method. Last, the TI predictive model was established for iron ore sintering process by using the GA-ELM algorithm. Experimental tests could be constructed to get the predictive performance of various machine learning algorithms and evaluate the efficient of the proposed method.

Parameters affecting TI of sinter mineral

The parameters involved in sintering process that affect the TI were divided into the following three categories. (i) Raw material parameters: the proportion of iron ores, solid fuels (mainly coke breeze), limestone, dolomite, lime, return fines, and so on. These parameters were determined in the raw material burdening process. (ii) Operation system parameters: moisture rate, bed height of material layer, strand speed, ignition density, temperature of ignition hood, air flow rate, temperature of exhaust gas, burning through point (BTP), and so on. These parameters were measured by soft sensor technology or calculated indirectly from other correlative parameters. (iii) Chemical compositions of finished sinter mineral: grade of sinter (TFe), FeO, CaO, MgO, SiO2, Al2O3, MnO, S, P2O5, basicity (CaO/SiO2), and so on. To identify the possible parameters that may affect the TI of sinter, all possible parameters were collected from the operation data, fishbone diagram of parameters affecting TI is shown in Fig. 2.

The production data were collected from a sinter plant. All of the parameters mentioned above were summarized and grouped in Table 1 based on their nature or location.

According to the characteristics of iron ore sintering process, the above parameters are divided into source parameters, process parameters, and target parameters. Source parameters are input variables or conditions that affect a system or process, and they are usually set or controlled before the system starts. In this study, the raw materials are regarded as the source parameters for the iron ore sintering process. Process parameters are variables that may change or adjust during the operation of a system or process, and they directly affect the internal operation and performance of the system. The operation system parameters of the sinter machine are denotes as the process parameters. The target parameters are the output variables or performance indicators of a system or process, which reflect the final result or quality of the system or process. The chemical composition parameters of sintered ore are defined as the target parameters of the sintering process. The analysis methods of source parameters, process parameters, and target parameters are usually cross correlated, as they collectively determine the overall performance and output of the system or process. Effective analysis requires comprehensive consideration of the relationships between these parameters in order to optimize and improve the design and operation of the system or process. In this study, we applied the PCA approach to analyze the analyze the effects of source parameters, process parameters, and target parameters on the TI of the sinter ore.

Model configuration

With the analysis of production data from a sinter plant in Baosteel, there are 41 parameters (8 raw materials, 22 operation system parameters, and 11 chemical compositions) affecting the TI. Not all of these parameters have to be chosen as the inputs of the prediction model, the parameters in three categories are analyzed by PCA to extract the principal components. The framework of the predictive model is shown in Fig. 3.

As shown in Fig. 3, the few front principal components that contain the information of all the 41 parameters are obtained by using PCA. The predictive model of TI is established based on these principal components. PCA is a commonly used data analysis method that achieves dimensionality reduction of high-dimensional data by extracting the main feature components of the data. It is commonly used in data preprocessing and exploration. In this study, we applied the PCA approach to analyze the effects of source parameters, process parameters, and target parameters on the TI of the sinter ore. The principal components of the three different type of parameters could be extracted and further improve the performance of the predictive model.

Procedure of PCA feature selection

The aim of PCA approach is to pick out main components and reduce the dimensionality of the multivariate data without a significant loss of information. The computing procedure of PCA33 is stated as the following steps.

Step 1: Data standardization. The sample data are normalized with a zero mean and a unit variance to reduce the sensitivity of the different scale of the original variables. This standardization is obtained by the following formula:

where, xstd denotes the standardized data, x is the original value, xmin and xmax are the minimum and maximum value of the original data.

Step 2: Calculate the correlation matrix. According to the covariance method, the correlation matrix C of the input data X is calculated as:

where, X∈ℜn×u denotes the standardized form of the original data set, n denotes the number of samples, u denotes the number of eigenvectors.

Step 3: Calculate the eigenvalues of the correlation matrix.

where, V denotes the eigenvector matrix, eig(C) denotes the diagonal eigenvalues matrix. The u eigenvalues are stored in descending order as λ1 > λ2> …>λu.

Step 4: Determine the quantity of the principal components. The contribution rate of the ith principal component is calculate as \({{{\lambda _i}} \mathord{\left/ {\vphantom {{{\lambda _i}} {\sum\nolimits_{{i=1}}^{u} {{\lambda _i}} }}} \right. \kern-0pt} {\sum\nolimits_{{i=1}}^{u} {{\lambda _i}} }}\). The accumulated contribution rate for the former kth principal component is calculate as \({{\sum\nolimits_{{i=1}}^{k} {{\lambda _i}} } \mathord{\left/ {\vphantom {{\sum\nolimits_{{i=1}}^{k} {{\lambda _i}} } {\sum\nolimits_{{i=1}}^{u} {{\lambda _i}} }}} \right. \kern-0pt} {\sum\nolimits_{{i=1}}^{u} {{\lambda _i}} }}\). The k value is determined by the accumulated contribution rate of 95% in the present work.

Step 5: Obtain the principal component variables. The columns of matrix P are formed by the eigenvectors vi corresponding to the kth eigenvalues:

Having the matrix P, we can obtain the principal component matrix:

Thus, the high dimensional sample data X∈ℜn×u is changed into the lower dimensional principal component matrix T∈ℜn×k through PCA approach.

PCA is a mathematical dimensionality reduction method. It uses orthogonal transformation to transform a series of potentially linearly correlated variables into a set of linearly uncorrelated new variables, also known as principal components, thereby utilizing the new variables to display the characteristics of the data in a smaller dimension. The amount of variance interpreted by each principal component is important and can be determined by analyzing the cumulative rate. Each principal component is a linear combination of the original variables. Analyzing the weights of each variable on the principal components can provide insights into which variables contribute most to each component. Variables with higher absolute weights on a component are more influential in determining that component. The PCA method is often used as a preprocessing step in machine learning models by reducing the dimensions of variables. By applying PCA method, we can examine how many principal components capture most of the variability information in the original data. Typically, we select multiple components to explain a sufficiently high percentage of the total variance (e.g., 70% ~ 95%). Overall, PCA provides a way to simplify data analysis by reducing the scale of variables while retaining the most important information. Interpretation involves understanding how the original variables contribute to the principal components and what patterns emerge in the reduced dimensional space.

GA-based ELM algorithm

The weights and thresholds which randomly selected in the original ELM algorithm requires optimizing with optimization algorithms. Genetic algorithms can perform global searches in the parameter space to find potential optimization solutions. Due to the use of random search and diversity maintenance strategies in genetic algorithms, ELM can avoid getting stuck in local optima and help discover global optimal solutions or solutions closer to global optimal solutions.

Extreme learning machine (ELM)

An ELM39 is an algorithm used for training single hidden layer feedforward neural network that achieves efficient learning by randomly initializing input weights and bias. It exhibits high learning efficiency and generalization ability in problems such as classification and regression. The algorithm mainly determines the output weights by minimizing the error, simplifying the learning process to solve a linear system, avoiding the adjustment of hidden layer parameters, and ensuring fast convergence40.

Fig. 4 shows a schematic diagram of ELM network with n, L, m nodes in the input, hidden, and output layer, respectively. The mechanism of ELM algorithm can generally be described as follows.

For N different training samples {(xi, yi)|xi ∈ℝn, yi∈ℝm, i = 1, 2, …, N}, in which xi = [xi1, xi2, …, xin]T is the input vector and yi = [yi1, yi2,…, yim]T is the desired output. The output of the network can be expressed by L hidden nodes:

where wj = [w1j, w2j,…, wnj]T is the weight vector between the input node and the hidden node, bj is the threshold of the jth node in the hidden layer, βj = [βj1, βj2,…, βjm]T is the weight vector between hidden and output node, G(wj, bj, x) = g(wj·xi + bj) is the output of the jth hidden node.

These equations can be written as follows:

where

and β = [β1, β2,…, βL]T, Y=[y1, y2, …, yN]T. H is the output matrix of the hidden layer. If the activation function g(x) is infinitely differentiable, the output weight could be solved by finding the least square solutions as follows:

where H† is the Moore-Penrose generalized inverse matrix of H.

One of the main advantages of ELM is its fast training speed. Compared to traditional neural networks, ELM does not require iterative adjustment of the weights of hidden layers, but directly solves the weights of output layers in one go through random initialization and least squares method. This gives ELM a significant training time advantage on large-scale datasets. On the other hand, the algorithm structure of ELM is simple, easy to implement and adjust. It does not require complex back-propagation algorithms, but rather obtains the weights of the output layer through solving a linear system. The ELM algorithm usually exhibits good generalization ability when dealing with practical problems. This is partly attributed to its random initialization method and fast training process, which enables ELM to effectively avoid overfitting problems. The ELM algorithm is commonly applied in machine learning tasks such as classification, regression, and feature learning, especially in big data scenarios. Due to its fast training and good generalization ability, ELM can often provide good solutions. However, there are some disadvantages of the original ELM algorithm. The random initialization of ELM may lead to different training results, which may in some cases affect the stability and reliability of the model. Although this problem can be alleviated through multiple random initializations and model averaging, it increases the complexity of implementation. The traditional ELM algorithm does not support incremental learning, which means it cannot gradually update the model online and requires retraining the entire model to adapt to new data. Therefore, in this study, we applied genetic algorithms to optimize the ELM algorithm to improve its stability, robustness, and generalization performance.

Proposed GA-ELM

Due to the random selection of the weight vector wj between the input node and hidden node in the original ELM, as well as the threshold of the jth hidden node bj, it lacks stability and generalization ability for small sample data. Considering this, we propose genetic algorithm to optimize the weight and the threshold (wj, bj) to promote the stability and generalization ability of the original ELM algorithm. The schematic diagram of GA combined with ELM is shown in Fig. 5.

Genetic algorithm simulates the theory of biological evolution to solve optimization problems, and has been used widely in many aspects43. A set of individual elements is created as initial population for search at first and the ‘fitness’ of each individual is assessed based on the constraints of the problem. Then the individuals with better fitness from the initial population are selected to produce a new population of individuals (offspring) through ‘crossover’ and ‘mutation’. The crossover operator obtains two chromosomes from the first population and exchanges some information to develop new chromosomes. Mutation is implemented by occasionally changing a random bit in a string to generate a new individual. Those individuals with better fitness produced by the process mention above are the next population. This genetic manipulation process is repeated to find satisfactory solution to the problem. The GA has been explained in details in41,42,43. The procedure of the proposed GA-ELM is stated as follows:

Step 1: Population initialization.

Randomly selected vectors from the input set X = {X1, X2, …, Xn} to construct a population with size of N.

where, n is number of input layer nodes, L is number of hidden layer nodes, m is number of output layer nodes.

Step 2: Fitness function.

Fitness function is a criterion to evaluate an individual’s performance in iteration. The root mean square error (RMSE) of the testing set is referred to as the fitness function.

where y’ denotes the predicted values, y denotes the actual measured values and l is the number of testing sample.

Step 3: Selection.

Individuals with better fitness from the initial population are chose as parents for breeding a new population of individuals (offspring) in this step. The roulette wheel method is used to select two chromosomes as parent according to their selection probabilities.

Step 4: Crossover.

A new child individual Xk is generated by the following formula:

where, Xi and Xj are the two parents determined in Step 3, α is a specified constant yields in (0, 1).

Step 5: Mutation.

After a new child individual Xk is created in Step 4, mutation is conducted to keep the variation of chromosomes.

where,

In (13) and (14), ub and lb are the upper and lower bounds of the variable, r, r’ are constants in the range of [0, 1], g is the number of current generation, Gmax is the maximum number of generation.

Genetic algorithm, as a heuristic optimization algorithm, has multiple advantages in solving complex problems and global optimization. GA can be applied to various optimization problems, whether it is continuous, discrete, or combinatorial, and can effectively find optimal solutions. There is good parallelism in the calculation process of GA, as multiple individuals can be evaluated and mutated simultaneously during the evolution process, thus utilizing parallel computing resources to accelerate the solving process. Due to the use of a population search strategy in GA, the crossover and mutation operations of multiple individuals in the population help to break out of local optima and make it more likely to find global optima or better solutions. GA has a certain adaptive ability during the evolution process, and can adjust parameters such as evolution strategy, crossover probability, mutation probability, etc. Thus improving the search efficiency and convergence speed of the algorithm.

GA-ELM can promote the generalization ability of ELM by optimizing the parameters of ELM through genetic algorithms, such as the weights of input layers and the bias of hidden layer. The traditional ELM may fall into local optima during parameter initialization and training, while GA-ELM, through the population search strategy of genetic algorithm, helps to break out of local optima and improve the possibility of finding global optima or better solutions. The GA-ELM algorithm, by combining the advantages of genetic algorithm and extreme learning machine, can improve generalization ability, avoid local optima, effectively handle feature selection and parameter optimization when optimizing ELM models. It is a powerful optimization framework suitable for various complex machine learning and optimization problems.

Simulations and results

This part shows the results of the proposed methodology applied to predict TI in the iron ore sintering process. In addition, further comparative investigations were conducted with two other machine learning algorithms to validate the effectiveness of the proposed algorithm.

Principal component extraction of parameters

The raw material parameters, operation system parameters and chemical composition parameters were analyzed by using PCA respectively. The contribution rate of each eigenvalue was obtained as well as the accumulated contribution rate. The number of principal components could be specified by the accumulative rate. The variations of eigenvalue and cumulative contribution rate explained based on PCA of the three different parameters were obtained. The principal component values of raw material parameters were shown in Fig. 6. The principal component values of operation system parameters and production parameters were shown in Figs. 7 and 8. The contribution rate represents the degree to which each principal component contributes to the total variance. The contribution rate was equal to the eigenvalue of each principal components. The larger the contribution rate, the greater contribution of the principal component to the total variance. For each principal component, the contribution rate was a value between 0 and 1, and the sum of the contributions of all principal components was equal to 1. The principal components with higher contribution rates indicate their strong explanatory power towards the data. The accumulative contribution rate of the first two principal components reached nearly 60% in the source parameters as shown in Fig. 6. This denoted that the first two principal components capture a nearly 60% of the influence rate in the source parameters. The first 5 principal components in the total 22 reaches over 60% of the impact in the process parameters as shown in Fig. 7. The accumulative contribution rate of the first three principal components exactly surpassed 60% in the target parameters as shown in Fig. 8. From these results of accumulative contribution rate, the amount of principal components could be determined by considering the accumulative contribution rate and the contribution rate of principal components. A higher accumulative contribution rate and appropriate principal component contribution rate could effectively retain important information in the data, meanwhile achieving the goal of data dimensionality reduction.

The parametric analysis by using the PCA method demonstrated how the principal components respond to changes of the variation in parameters. With the PCA results, the eigenvectors of the source parameters, process parameters and target parameters could be calculated respectively. The former two eigenvectors of the source parameters are as follows.

The former two principal components of the source parameters are obtained as:

where, xi (i = 1, 2, …, 8) are the iron ore mix, limestone, sintered powder, quicklime, dolomite, serpentine, solid fuel and return fines data of source parameters. With this method, the principal components of the process parameters and target parameters are obtained.

In this study, there are 41 candidate features which are listed in Table 1. There are 8 features in the raw material category, 22 features in the operational system parameters category, and 11 features in the production parameters category. The PCA approach was used for the three categories respectively and the principal components are obtained. The significance of classifying process parameters into source parameters, process parameters, and target parameters is to clarify the roles and functions of each parameter in the production process, so as to better manage and optimize them. Source parameters refer to the parameters that provide basic data or conditions during the production process. For example, the raw materials and ratios used in the sintering process directly affect the starting conditions and external environment of the iron ore sintering process. Reasonable setting of source parameters is the foundation for ensuring stable and efficient production processes. Process parameters refer to the parameters that directly affect product characteristics and quality during the production process. For example, parameters such as water distribution, temperature, strand speed, and material layer thickness directly affect the physical and chemical properties of the product during the production process. The optimization of process parameters is the key to improving product quality and production efficiency. Target parameters refer to the expected results or standards that need to be achieved during the production process. For example, product qualification rate, production efficiency, and other parameters are important indicators for measuring production effectiveness. The setting and monitoring of target parameters help evaluate the overall effectiveness of the production process. In this study, the PCA was used to elaborate the features of the three categories and the effect of parameters could be more clearer than the original operational data.

The principal components of the raw material, operation system parameters and chemical composition parameters are obtained by calculating the eigenvector of eigenvalues tabulated above respectively. To determined the principal components of the dataset extracted by PCA, the different principal components are selected from the range {75%, 80%, 85%, 90%, 95%, 99%} of accumulated contribution rate as input feature vector. Thus, the number of principal components are in the range {12, 14, 18, 20, 25, 30} according to the accumulated contribution rate range. In the ELM algorithm, the Sigmoid function G(w, b, x) = 1/(1 + exp(-(wx + b))) is adopt as the actisvation function. The number nodes of hidden layer is selected from the set of {1, 2, …, 50}. The total 258 sample data are divided into two groups, 220 samples are applied to train the model, the other 38 samples are used to test the model’s prediction accuracy. We have conducted thirty trails of experiments and selected the number of hidden nodes as the lowest RMSE in the test set. Fig. 9 shows the average RMSE of testing set with respect to accumulated contribution rate of principal components with the best hidden nodes for each input feature vector case.

Fig. 9 shows the variation of RMSE of the test set with the input vectors determined by accumulative contribution rate. To achieve efficient computation of the predictive model, the optimal performance with the lowest RMSE contribution rate was chosen.

The results of PCA for parameters analysis of the sintering process output the variance proportion explained by each principal component, which is the variance explanatory rate. These proportions can help us understand the proportion of each principal component in the variance of the original data. According to the experimental results as shown in Fig. 8, the RMSE curve decreases with the increase of the cumulative rate under 95% and then increases after the cumulative rate larger than 95%. The minimum value of RMSE achieved when the cumulative rate at 95%. This means that the principal components that can explain 95% of the data variance are retained to achieve effective dimensionality reduction. Therefore, the cumulative contribution rate with 95% is selected and the corresponding number of principal components is 25.

Verification of the prediction model

We have conducted Thirty trails of simulations and the average value is taken as the “final results”. For comparison, experiments were conducted on the single ELM algorithm, GA-ELM algorithm and the GA-BP algorithm. The grid search approach was used to determine the hyperparameters of the machine learning algorithms. Grid search approach is a method of finding the optimal model parameters through exhaustive search. The basic principle is to define the range or candidate values for each parameter, then traverse all possible combinations of these parameters, train a model for each combination, and evaluate its performance. The best combination of parameters with the best performance is selected as the optimal parameter.

-

(1)

Single ELM model: The input layer of ELM has 25 nodes extract from 41 total parameters based on PCA. The hidden layer has 29 nodes which is decided according to the RMSE on testing set. In order to eliminate the influence caused by the dimension of production data, the sample data are normalized to the range [-1, 1] in our experiments.

-

(2)

GA-ELM model: The population size, the maximum number of generation, the generation gap, the crossover and mutation rates are 40, 100, 0.95, 0.7, and 0.01 respectively.

-

(3)

GA-BP model: Three layer BP neural networks combined with GA is used here. The learning rate, training goal and epochs are 0.05, 0.001 and 1000 respectively. The number of hidden layer nodes is derived from the empirical formula Eq. (15) and the one with best performance is selected.

where l, n, m are the number of the hidden layer, the input layer, and the output layer nodes, a is a constant between 1 and 10.

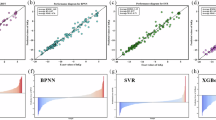

To test the precision of the established predictive model, the output of the TI value predicted from the model is compared with the actual measured values. The predicted results by the ELM algorithm, GA-BP algorithm and the GA-ELM algorithm are shown in Figs. 10, 11 and 12, respectively. The predictive errors of the three models are depicted in Fig. 13.

As we can see in Fig. 13, the error range of the GA-ELM model is smaller than that of ELM and GA-BP models. It indicates that the performance of the GA-ELM model is better than both single ELM and GA-BP algorithms. This means the predictive results of GA-ELM are closer to the actual values, indicating that the GA-ELM has good generalization performance in forecasting TI in the iron ore sintering. Furthermore, the predicted values of GA-ELM are more closer to the corresponding actual values, which indicates the effectiveness of the proposed GA-ELM model. The experimental predictive results proves that the proposed GA-ELM algorithm has higher predicting accuracy than the other two models.

The prediction accuracy are calculated to depict the performance of different models as shown in Fig. 14. The accuracy in Fig. 14 denotes the hit rate of the prediction model, which refers to the probability that the predicted values falls within a specific absolute error range. It is clear that the GA-ELM algorithm is the best, and the performance of GA-BP algorithm is superior to that of the ELM algorithm. The prediction accuracy of GA-ELM model is 100% for an absolute error under 1.0%. The proposed model achieved 81.85% of accuracy for an absolute error tolerance of a 0.7%. The proposed model achieved around 70% of accuracy for an absolute error tolerance of a 0.5%. The precision reached by GA-ELM method is very high as regards the original ELM and GA-BP algorithm. This indicates that the accuracy of the GA-ELM based TI prediction model for the sintering process is satisfactory.

To evaluate the robustness of the proposed model, the comparison of the ELM, GA-BP, LSTM and GA-ELM algorithms were conducted on TI prediction of iron ore sintering process. The results of the four algorithms with 100 experimental tries are shown in Fig. 15. The box-plot depict the distribution of the experimental results. The red line in the blue box denotes the average value of the 100 experimental results. It means that the GA-ELM algorithm get the best average RMSE of the four algorithms. The box-plot can also display the central tendency and dispersion of the data points. The distance between the two line outside the box denotes the dispersion of the data. The larger the distance, the greater the dispersion. That is to say, the GA-ELM algorithm has the best robustness. Furthermore, we can also observe from the graph that the LSTM algorithm occasionally achieves the best prediction results. Part of the reason may be that the data scale in this study is too small, and the LSTM algorithm is mainly used for big data situations.In summary, compared with ELM and GA-BP algorithm, the proposed GA-ELM algorithm has higher accuracy, so it can be used in predicting TI of sinter in the iron ore sintering process.

Combining the advantages of GA and ELM, GA-ELM has demonstrated strong potential in data regression prediction. First, through the search capability of GA, GA-ELM can find better initial weights and biases, thereby improving the predictive performance of the original algorithm. Second, GA-ELM utilizes the fast training capability of ELM to efficiently perform regression predictions on real operational datasets. In addition, GA-ELM has good interpretability and can analyze and explain the factors that affect the prediction results. PCA is an unsupervised learning method that assumes that there is linear correlation between the feature attributes of data, resulting in information redundancy between data. Through orthogonal transformation, linearly correlated features are represented with less linearly irrelevant data to achieve dimensionality reduction. The main advantages of PCA algorithm are that it only needs to measure the amount of information by variance and is not affected by factors outside the dataset. Orthogonal between the principal components can eliminate the mutual influence factors between the original data components. The calculation method is simple and easy to implement. The main disadvantage of PCA algorithm is that the meanings of each feature dimension of principal components do not have actual physical meanings, so it is not as interpretable as the original sample features. Non principal components with small variances may also contain important information about sample differences, as dimensionality reduction and discarding them may have an impact on subsequent data processing. Since the GA is used to optimize the initial weights and biases of ELM network, the similar optimization algorithms, such as particle swarm optimization, can also be used in this area. The practical significance of accurately predicting the quality of sinter mainly lies in the following aspects. By accurately predicting the quality of sinter, enterprises can adjust the raw material ratio more scientifically, ensure the stability of sinter quality, and thus improve the operational efficiency of blast furnaces. High quality sinter can reduce the fuel consumption and maintenance costs of blast furnaces. By predicting the quality of sinter, enterprises can choose more cost-effective raw materials, thereby reducing overall production costs. The quality of sinter directly affects the quality of the final product. By predicting the quality of sinter, enterprises can ensure the production of high-quality sinter, thereby improving the market competitiveness of steel products. Accurate prediction of sinter quality can optimize production processes, reduce failures and downtime during the production process, and thus improve overall production efficiency.

Conclusion

There are three main category parameters: raw materials, operating system parameters, and production chemical compositions in sintering process, and take an influence on the tumbler index of sinter mineral. To decrease the data dimensionality, a PCA method is used to extract the main principal components that are used as the inputs of the prediction model. The components with accumulated contribution rate no more than 95% have been selected in our model. That is to say, 25 principal components are extracted from the original 41 features of the production data. Since in original ELM algorithm, the weight vectors between input layer and hidden layer, as well as the threshold of the hidden layer, are randomly selected, the modification is done by using GA to overcome the limitations of original ELM algorithm. Then, an integrated approach combined the GA with ELM has been presented to construct the predictive model of the TI of sinter mineral. The GA-ELM method was compared with the original ELM and GA-BP methods using the production data of a year from a sinter plant. The experiment results show that the performance of the predictive model base on GA-ELM is the best of the three machine learning algorithms. The prediction accuracy of GA-ELM model is 81.85% for an absolute error within 0.7%. This precision can meet the requirements of the practical sintering process.

Data availability

The datasets analysed during the current study are not publicly available as they belong to the plant that hosted the research but are available from the corresponding author on reasonable request.

Abbreviations

- ANN:

-

Artificial neural network

- BF:

-

Blast furnace

- BP:

-

Back propagation

- BTP:

-

Burning through point

- CNN:

-

Convolutional neural network

- ELM:

-

Extreme learning machine

- GA:

-

Genetic algorithm

- LSTM:

-

Long short-term memory

- PCA:

-

Principal component analysis

- RMSE:

-

Root mean square error

- RNN:

-

Recurrent neural network

- TFe:

-

Total iron content

- TI:

-

Tumbler index

References

Zhang, G. et al. Influencing factor of sinter body strength and its effects on iron ore sintering indexes. Int. J. Min. Metall. Mater. 22(6), 553–561. https://doi.org/10.1007/s12613-015-1107-x (2015).

Pownceby, M. I., Webster, N. A. S., Manuel, J. R. & Ware, N. The influence of ore composition on sinter phase mineralogy and strength. Mineral. Process. Extractive Metall. 125(3), 140–148. https://doi.org/10.1080/03719553.2016.1153276 (2016).

Cheng, Z., Yang, J., Zhou, L., Liu, Y. & Wang, Q. Sinter strength evaluation using process parameters under different conditions in iron ore sintering process. Appl. Therm. Eng. 105, 894–904. https://doi.org/10.1016/j.applthermaleng.2016.03.034 (2016).

Guo, H., Shen, F., Zhang, H., Gao, Q. & Jiang, X. High-temperature reduction and melting mechanism of Sinter with different MgO content. Metals 510(9), 1–22. https://doi.org/10.3390/met9050510 (2019).

Wu, Y. et al. Comprehensive evaluation of hydrogen-rich energy application in iron ore sintering process: deep function mechanism analysis and process optimization. J. Clean. Prod. 420, 138451. https://doi.org/10.1016/j.jclepro.2023.138451 (2023).

Wang, J. et al. Effect of ammonia injection stages and segregation degrees on the characteristics of iron ore sintering process. Fuel 352, 128998. https://doi.org/10.1016/j.fuel.2023.128998 (2023).

Liu, J. et al. Effect of silica content on Iron Ore Sintering. Metals 13(1009), 1–12. https://doi.org/10.3390/met13061009 (2023).

Xu, L. et al. Super-high bed sintering for iron ores: problems ascertainment. J. Iron. Steel Res. Int. 31, 1063–1070. https://doi.org/10.1007/s42243-023-01074-5 (2024).

Patil, D. et al. Utilization of Pellet fines in the Iron Ore sintering process. Trans. Indian Inst. Met. 76, 2985–2992. https://doi.org/10.1007/s12666-023-03050-9 (2023).

Park, J., Lee, S. & Park, J. Y. Review of computational fluid dynamics modeling of iron sintering process. J. Mech. Sci. Technol. 36(9), 4501–4508. https://doi.org/10.1007/s12206-022-0814-2 (2022).

Basu, S. et al. Modeling and simulation of mechanical degradation of iron ore sinter in a complex transfer chute system using the discrete element model and a particle breakage model. Powder Technol. 417, 118264. https://doi.org/10.1016/j.powtec.2023.118264 (2023).

Liu, Z. et al. Numerical Simulation of Heat and Mass transfer behavior during Iron Ore Sintering: a review. Metals 13(1277), 1–23. https://doi.org/10.3390/met13071277 (2023).

Wang, Y., Xu, J., He, S., Liu, S. & Zhou, Z. Numerical simulation of particle mixing and granulation performance in rotating drums during the iron ore sintering process. Powder Technol. 429, 118890. https://doi.org/10.1016/j.powtec.2023.118890 (2023).

Muller, J., de Vries, T. L., Dippenaar, B. A. & Vreugdenburg, J. C. A finite difference model of the iron ore sinter process. J. South Afr. Inst. Min. Metall. 115(5), 409–417. https://doi.org/10.17159/2411-9717/2015/v115n5a8 (2015).

Ye, J., Ding, X., Chen, C., Guan, X. & Cao, X. Tumble Strength Prediction for Sintering: Data-driven Modeling and Scheme Design. Proc. 2020 Chinese Automation Congress, Shanghai, China 5500–5505. https://doi.org/10.1109/CAC51589.2020.9326800 (2020).

Chen, X., Lan, T., Shi, X. & Tong, C. A semi-supervised linear-nonlinear least-square learning network for prediction of carbon eciency in iron ore sintering process. Control Eng. Pract. 100, 104454. https://doi.org/10.1016/j.conengprac.2020.104454 (2020).

Singh, K., Vakkantham, P., Nistala, S. H. & Runkana, V. Multi-objective Optimization of Integrated Iron Ore sintering process using machine learning and evolutionary algorithms. Trans. Indian Inst. Met. 73(8), 2033–2039. https://doi.org/10.1007/s12666-020-01920-0 (2020).

Zhou, K. et al. A new CO/CO2 prediction model based on labeled and unlabeled process data for sintering process. IEEE Trans. Industr. Inf. 17(1), 333–345. https://doi.org/10.1109/TII.2020.2985663 (2021).

Ren, X. et al. The prediction of Sinter drums Strength using hybrid machine learning algorithms. Comput. Intell. Neurosci. 1–11. https://doi.org/10.1155/2022/4790736 (2022).

Silva, A. C. P., Coimbra, K. T. Z., Filho, L. W. R., Pessin, G. & Correa-Pabón, R. E. Monitoring of iron ore quality through ultra-spectral data and machine learning methods,AI 3, 554–570. https://doi.org/10.3390/ai3020032 (2022).

Yan, H. et al. Prediction of Compressive Strength of Biomass-Humic Acid Limonite pellets using Articial neural network model. Materials 16(5184), 1–14. https://doi.org/10.3390/ma16145184 (2023).

Yan, F. et al. Data-driven modelling methods in sintering process: current research status and perspectives. Can. J. Chem. Eng. 101, 4506–4522. https://doi.org/10.1002/cjce.24790 (2023).

Mallick, A., Dhara, S. & Rath, S. Application of machine learning algorithms for prediction of sinter machine productivity. Mach. Learn. Appl. 6, 100186. https://doi.org/10.1016/j.mlwa.2021.100186 (2021).

Huang, Q., Liu, Z., Liu, Z. & Lv, X. The strength prediction model of iron ore sinter based on an artificial neural network. Ironmak. Steelmak. 50(2), 159–166. https://doi.org/10.1080/03019233.2022.2096991 (2023).

Du, S., Wu, M., Chen, L., Cao, W. & Pedrycz, W. An intelligent decision-making strategy based on the forecast of abnormal operating mode for iron ore sintering process. J. Process Control. 96, 57–66. https://doi.org/10.1016/j.jprocont.2020.11.001 (2020).

Du, S., Wu, M., Chen, L. & Pedrycz, W. Prediction model of burn-through point with fuzzy time series for iron ore sintering process. Eng. Appl. Artif. Intell. 102, 104259. https://doi.org/10.1016/j.engappai.2021.104259 (2021).

Hu, J., Wu, M., Chen, L. & Pedrycz, W. A Novel modeling Framework based on customized Kernel-based fuzzy C-Means clustering in Iron Ore sintering process. IEEE/ASME Trans. Mechatron. 27(2), 950–961. https://doi.org/10.1109/TMECH.2021.3076208 (2022).

Hu, J., Wu, M., Zhang, P. & Pedrycz, W. Prediction performance improvement via Anomaly Detection and Correction of Actual Production Data in Iron Ore sintering process. IEEE Trans. Industr. Inf. 16(12), 7602–7612. https://doi.org/10.1109/TII.2020.2979465 (2020).

Chen, X., Shi, X. & Tong, C. Multi-time-scale TFe prediction for iron ore sintering process with complex time delay. Control Eng. Pract. 89, 84–93. https://doi.org/10.1016/j.conengprac.2019.05.012 (2019).

Zhang, N. et al. Online measurement method of FeO content in sinter based on infrared machine vision and convolutional neural network. Measurement 202, 111849. https://doi.org/10.1016/j.measurement.2022.111849 (2022).

Zhou, P., Gao, B., Zhao, C. & Chai, T. Heterogeneous data-driven measurement method for FeO content of sinter based on deep learning and tensor decomposition. Control Eng. Pract. 134, 105479. https://doi.org/10.1016/j.conengprac.2023.105479 (2023).

Laitinen, P. & Saxén, H. Data-Driven Modelling of Quality and Performance indices in Sintermaking. Steel Res. Int. 77(3), 152–157. https://doi.org/10.1002/srin.200606369 (2006).

Salah, B., Zoheir, M., Slimane, Z. & Jurgen, B. Inferential sensor-based adaptive principal components analysis of mould bath level for breakout defect detection and evaluation in continuous casting. Appl. Soft Comput. 34, 120–128. https://doi.org/10.1016/j.asoc.2015.04.042 (2015).

Egorova, E. G., Rudakova, I. V., Rusinov, L. A. & Vorobijev, N. V. Diagnostics of sintering processes on the basis of PCA and two-level neural network model. J. Chemom. 32 https://doi.org/10.1002/cem.2959 (2017).

Du, S. et al. Operating Mode Recognition based on fluctuation interval prediction for Iron Ore sintering process. IEEE/ASME Trans. Mechatron. 25(5), 2297–2308. https://doi.org/10.1109/TMECH.2020.2992706 (2020).

Gao, Q. et al. A forecast model of the sinter tumble strength in iron ore fines sintering process. Powder Technol. 390, 256–267. https://doi.org/10.1016/j.powtec.2021.05.063 (2021).

Huang, G. B. et al. Extreme learning machine for regression and multiclass classfication. IEEE Trans. Syst. Man Cybernet. 42(2), 513–529. https://doi.org/10.1109/TSMCB.2011.2168604 (2012).

Liu, X., Zhou, Y., Meng, W. & Luo, Q. Functional extreme learning machine for regression and classification. Math. Biosci. Eng. 20(2), 3768–3792. https://doi.org/10.3934/mbe.2023177 (2023).

Huang, G. B., Zhu, Q. Y. & Siew, C. K. Extreme learning machine: theory and applications. Neurocomputing 70, 489–501. https://doi.org/10.1016/j.neucom.2005.12.126 (2006).

Liu, X., Gao, C. & Li, P. A comparative analysis of support vector machines and extreme learning machines. Neural Netw. 33, 58–66. https://doi.org/10.1016/j.neunet.2012.04.002 (2012).

Forrest, S. Genetic algorithms: principles of Natural Selection Applied to Computation. Science 261(5123), 872–878. https://doi.org/10.1126/science.8346439 (1993).

Ribeiro Filho, J. L., Treleaven, P. C. & Alippi, C. Genetic-algorithm programming environments. Computer 27(6), 28–43. https://doi.org/10.1109/2.294850 (1994).

Chen, X., Zhong, W., Wang, T., Liu, F. & Zhang, Z. Genetic Optimization of Energy Consumption of Pellet Shaft furnace combustor based on support Vector Machine (SVM). Int. J. Chem. Reactor Eng. 12(1), 205–214. https://doi.org/10.1515/ijcre-2013-0117 (2014).

Acknowledgements

This work was supported by the Scientific Research Foundation for High-level Talents of Anhui University of Science and Technology (13210024).

Author information

Authors and Affiliations

Contributions

Senhui Wang wrote the manuscript text.

Corresponding author

Ethics declarations

Competing interests

The authors declare no competing interests.

Additional information

Publisher’s note

Springer Nature remains neutral with regard to jurisdictional claims in published maps and institutional affiliations.

Rights and permissions

Open Access This article is licensed under a Creative Commons Attribution-NonCommercial-NoDerivatives 4.0 International License, which permits any non-commercial use, sharing, distribution and reproduction in any medium or format, as long as you give appropriate credit to the original author(s) and the source, provide a link to the Creative Commons licence, and indicate if you modified the licensed material. You do not have permission under this licence to share adapted material derived from this article or parts of it. The images or other third party material in this article are included in the article’s Creative Commons licence, unless indicated otherwise in a credit line to the material. If material is not included in the article’s Creative Commons licence and your intended use is not permitted by statutory regulation or exceeds the permitted use, you will need to obtain permission directly from the copyright holder. To view a copy of this licence, visit http://creativecommons.org/licenses/by-nc-nd/4.0/.

About this article

Cite this article

Wang, S. Applying genetic algorithm to extreme learning machine in prediction of tumbler index with principal component analysis for iron ore sintering. Sci Rep 15, 4777 (2025). https://doi.org/10.1038/s41598-025-88755-1

Received:

Accepted:

Published:

Version of record:

DOI: https://doi.org/10.1038/s41598-025-88755-1