Abstract

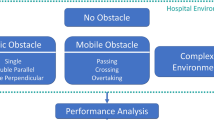

Simultaneous localization and mapping (SLAM) plays an important role in many fields, one of which is to help unmanned devices such as drones, self-driving cars and intelligent robots to achieve precise positioning and mapping. However, when facing complex or changing surroundings, especially when healthcare robots face a large number of mobile healthcare workers and patients in wards, the hospital environment is relatively complex, and the traditional positioning and mapping methods based on geometric features, such as points and lines, are not able to achieve accurate positioning and mapping results for healthcare robots. This paper mainly focuses on the characteristics of complex dynamic environment, and proposes a method to obtain semantic information of surrounding ring and dynamic point culling strategy for robot localisation and mapping. Experiments show that compared with the current popular SLAM technology, the semantic-based SLAM technology proposed in this paper can help the robot to obtain more accurate localisation and mapping, in addition, using this semantic information, the robot can also better identify the surrounding objects, which lays the foundation for performing more complex tasks.

Similar content being viewed by others

Introduction

Since the beginning of the twenty-first century, population ageing has become an important social issue that major countries around the world need to face. Statistics show that more and more elderly people will face the problem of living alone with the improvement of medical conditions, the acceleration of urbanisation and the reduction of family size. Medical robots with autonomous mobility can help elderly people living alone to complete some daily chores. Figure 1 shows several mobile care service robots. Research on care service robots for the elderly first started in Japan. As early as 1999, Waseda University started to develop service robots based on the Wendy system1.Roboar robots2 can assist caregivers in performing a range of high-intensity physical tasks. The Ubiquiti Wassi robot3 guides the user to active walking or rehabilitation through interesting interactions such as animation and voice.The Freivera robot4 can provide 24-h care for the elderly.

However, the key to the efficient operation of medical mobile robots lies in their ability to navigate and localise autonomously, and SLAM (Simultaneous Localization and Mapping) technology is the core technology to solve this problem. SLAM technology enables robots to construct maps autonomously in unknown environments and determine their position simultaneously, thus achieving accurate navigation and localisation. its own position, so as to achieve accurate navigation and localisation. In recent years, with the rapid development of sensor technology, computer vision and machine learning, SLAM technology has made significant progress, based on SLAM technology, mobile robots can realise real-time positioning and environment map construction in unknown environments through sensor data. Visual SLAM technology has received increasing attention from researchers in recent years due to its wide application prospects in fields such as autonomous driving, robotics and virtual reality technology. After a long period of research, the current visual SLAM system has formed a basic framework consisting of visual odometry, optimisation, map construction and loopback detection5. Based on the above classical framework, several SLAM algorithms are proposed, such as, DS-SLAM6, VINS-Fusion7, DynaSLAM8 and ORB-SLAM39. Their algorithms have excellent performance and can satisfy most scenario applications. However, as the application scenarios become more and more complex, SLAM systems show obvious shortcomings in some specific real-world scenarios. For example, when a robot operates in an indoor scene, it inevitably encounters dynamic object situations. In the classical SLAM framework, both feature point methods and direct methods rely on the assumption that the environment in which the robot is located is a static one. This assumption limits the application of SLAM algorithms in real environments. When dynamic objects are encountered in real-world applications, significant errors are introduced in the computation of data correlation between frames and the recovery of camera pose. This may lead to localisation failures and subsequently affect optimisation and map construction in SLAM systems10.

In order to eliminate the effect of dynamic points on the SLAM system, Robust Constraints and Random Sample Consistency (RANSAC11) is used. However, sparse feature points cannot reflect the boundaries of dynamic objects. To solve this problem, geometric constraints are utilised to validate the dynamic feature points, such as the assumption that the camera pose is known in12. The object detection network can pre-detect the bounding rectangles of dynamic objects. However, the feature points between the dynamic object and the bounding rectangle are discarded, wasting the necessary feature points. In recent years, deep learning methods (e.g., Mask R-CNN13 or SegNet14) are commonly used in image processing for image segmentation through deep learning. This method can effectively separate objects from images, and these methods significantly improve the stability and accuracy of visual SLAM systems in dynamic environments, but still have the disadvantage that the real-time performance cannot meet the practical requirements, in addition, these algorithms can only deal with the known objects in the scene, and cannot deal with the unknown moving objects.

To address these challenges, we propose the method called SAM-SLAM. The system accepts RGB-D camera image input and uses an optical flow estimation method to obtain the dynamic information, which is first coarsely segmented by a coarse segmentation network to obtain a rough dynamic region, and then finely segmented using a SAM segmentation network to obtain a more accurate and precise dynamic region. Based on the large gap between the two parameters of polar distance and depth distance of dynamic feature points compared to static feature points, the dynamic points are rejected by setting relevant thresholds. The main contributions of our work can be summarised as follows:

-

(1)

We use semantic segmentation to acquire potential dynamic objects, combined with optical flow estimation and Segment Anything Model (SAM) to perform fine segmentation of dynamic objects and eliminate dynamic object effects, thus reducing the impact of dynamic environments on point tracking.

-

(2)

We propose an approach that will set a correlation threshold for further dynamic point culling of features based on the large matching error of the characteristic polar and depth distances of the dynamic feature points to cope with the challenges encountered in dynamic environments. This approach ensures the real-time nature of the system and effectively solves the problem of possible erroneous culling of static and dynamic feature points when relying only on semantic information.

-

(3)

We our proposed vision SLAM system can access IMU data to facilitate pre-integration between consecutive frames for stable feature recognition, motion consistency detection and pose optimisation, while preventing to a certain extent the removal of too many feature points, which affects the robustness of the SLAM system.

Literature Review

To successfully implement semantic information-based SLAM, target recognition is crucial. In our semantic SLAM approach, the robot should know the positions of the surrounding objects, many of which are objects such as people, animals, and cars in practice. There are two methods to eliminate the interference of dynamic objects. One is based on features, while the other involves recognizing dynamic objects for further processing, which is currently the mainstream method. We introduce relevant works in sections A. Feature-based method, B. Object recognition processing and C. Map Initialization in separate sections.

Feature-based method

This approach builds on traditional feature point SLAM by incorporating semantic information, resulting in a clearer and more meaningful map. Wangsiripitak and Murray15 describe a monoSLAM tracker that parallelizes three-dimensional objects for reasoning about movements and obstructions. Tan et al.16 project keyframes into the current frame for visual and structural comparisons to detect areas of change. Jaimez et al.17 use geometric clustering to classify objects into static and dynamic parts. Li and Lee’s work18 uses depth edge points weighted for their association with dynamic objects’ probability of being part of the moving object. Sun et al.19 propose a motion removal method to improve RGB-D performance in dynamic environments, as well as a background modelling method that partially relies on object shapes and color features and can’t adapt better to the environment.

Mur-Artal et al. introduced ORB-SLAM20, a feature-based monocular simultaneous localization and mapping system that operates in real time, across both small and large indoor and outdoor environments. This system offers exceptional robustness in severe motion clutter, enabling wide baseline loop closing and relocalization. It also includes fully automatic initialization, a key aspect for ensuring consistent performance. Leveraging recent advancements in algorithms, the team developed a groundbreaking system that utilizes the same features for all SLAM tasks: tracking, mapping, relocalization, and loop closing. A unique survival of the fittest strategy ensures excellent robustness by selecting the most suitable points and keyframes for reconstruction. This approach results in a compact and trackable map that only grows when scene content changes, thus enabling lifelong operation.

Later, the authors of ORB-SLAM proposed an extension of their system for stereo and RGB-D cameras, introducing ORB-SLAM221. This comprehensive SLAM solution supports monocular, stereo, and RGB-D cameras, incorporating map reuse, loop closing, and relocalization capabilities. The system runs in real-time on standard CPUs and adapts to a wide range of environments, from small handheld indoor sequences to drones flying in industrial environments and cars driving around a city. Currently, it is one of the most advanced feature-based monocular VSLAM algorithms.

Object recognition and processing method

The recognition, dynamic feature detection, and processing approach is a method for SLAM systems that can effectively handle the impact of dynamic objects on SLAM. Yang et al.22, for instance, firstly utilize the target detection algorithm (YOLOv3) for high-dynamicity target segmentation and removal, then calculate the corresponding basic matrix to determine the real dynamicity of the feature point, and finally enhance the influence of motion blur on feature matching by modifying the feature extraction step under the ORB-SLAM2 system framework. Xiao et al.23 use an SSD object detection network running in a separate thread to gain prior knowledge about dynamic objects, then selectively track and process the dynamic object’s features in the tracking thread to significantly reduce the error in pose estimation. Sheng et al.24 propose Dynamic-DSO, which first utilizes Mask R-CNN algorithms to obtain regions of high dynamicity in the environment, then remove potential moving targets more robustly by only considering a pixel point and its four-neighboring pixels when they don’t belong to the dynamic region before marking it as a static background image and directly embedding it into the DSO system. Finally, good experimental results have been achieved on the TUM Dynamic Environment dataset. An effective visual Big Five system framework integrates different sensor data to locate and map objects in complex environments while increasing the precision of semantic segmentation along with depth values and color image processing to repair semantic labels, which is implemented on top of a map for realizing semantic maps. Our semantic optimization method is inspired by related ideas and proposes a method based on a large-scale SAM model with superior robustness and accuracy in detecting results.

During object segmentation, the process of partitioning an image into distinct pixel regions or masks, representing individual objects, has been a topic of extensive investigation. The segmentation of objects with complex backgrounds, either singular or multiple, remains a challenging task with significant room for improvement. Liu et al.25 delved into visual attention by detecting salient objects within an input image. They approached salient object detection as an image segmentation problem, aiming to separate the salient object from the background. To this end, they introduced a set of novel features: multi-scale contrast, center-surround histogram, and color spatial distribution. These features described the salient object locally, regionally, and globally. A conditional random field was then employed to effectively combine these features for accurate salient object detection. Furthermore, a large image database containing tens of thousands of meticulously labeled images by multiple users was constructed. Li et al.26 presented a salient instance segmentation method that produced a saliency mask with distinct object instance labels for a given image. This method encompassed three steps: estimating the saliency map, detecting salient object contours, and identifying salient object instances. For the initial two steps, they introduced a multiscale saliency refinement network, which generated high-quality salient region masks and salient object contours. When combined with multiscale combinatorial grouping and a MAP-based subset optimization framework, their method excelled in generating promising salient object instance segmentation results. To facilitate further research and evaluation in this area, they also constructed a new database consisting of 1000 images with pixelwise salient instance annotations.

Xu et al.27 propose a method that integrates the Fuzzy C-Means (FCM) algorithm with Graph Cut Theory for grayscale and color image segmentation. They utilize the Turbopixel algorithm to presegment the color image into smaller regions called superpixels and extract color histogram features from these superpixels. Based on these color histogram features, they apply FCM to form clusters. They then construct a graph model and employ the maximum flow algorithm to obtain the minimum cut, which serves as the initial segmentation result for the image. Finally, they employ a recursive process to achieve the final image segmentation result. The key aspect of their approach lies in constructing a robust graphical model and utilizing existing binary segmentation models to address multi-value segmentation challenges. Khan et al.28 introduce a novel method that incorporates both distance and angle information in the Bag of Visual Words (BoVW29) representation. This approach offers two main innovations: a computationally efficient representation that incorporates relative spatial information between visual words, and a soft pairwise voting scheme based on distance in the descriptor space. Experiments on challenging datasets such as MSRC-2, 15Scene, Caltech101, Caltech256, and Pascal VOC 2007 demonstrate that their method outperforms or is competitive with concurrent approaches. They also show that their method provides valuable complementary information to spatial pyramid matching and can improve overall performance. Li et al.29 introduce a method for object recognition based on the Region of Interest (ROI) and the Optimal Bag of Words model. This approach involves the following steps: Extraction of ROIs using Shi–Tomasi corners and the Itti saliency map; Detection and description of SIFT feature descriptors on images of interest; Generation of a visual codebook using Gaussian mixture models, which relies on the clustering results of k-means++; Computation of similarities between each visual word and corresponding local features by posterior pseudo probabilities to construct a visual word soft histogram for image representation; Utilization of the Support Vector Machine (SVM) for image classification and recognition.

Map initialization

Monocular SLAM necessitates a procedure for generating an initial map due to the inability to recover depth from a single image. One method for addressing this challenge involves initially tracking a known structure. A real-time algorithm is introduced that recovers the 3D trajectory of a monocular camera moving rapidly through a previously unfamiliar scene. This system represents the first successful application of SLAM methodology from mobile robotics to the “pure vision” domain of a single uncontrolled camera. It achieves real-time, drift-free performance not accessible through structure-from-motion approaches. The core of this approach lies in the online creation of a sparse but enduring map of natural landmarks within a probabilistic framework. Key novel contributions include an active approach to mapping and measurement, the utilization of a general motion model for smooth camera movement, and solutions for monocular feature initialization and feature orientation estimation. Collectively, these components form an extremely efficient and robust algorithm that runs at 30 Hz on standard PC and camera hardware.

Initialization methods from two views assume local scene planarity30. They propose a semi-direct monocular visual odometry algorithm that is precise, robust, and faster than current state-of-the-art methods. The semi-direct approach eliminates the need for costly feature extraction and robust matching techniques for motion estimation. Their algorithm operates directly on pixel intensities, resulting in subpixel precision at high frame rates. A probabilistic mapping method that explicitly models outlier measurements is used to estimate 3D points, leading to fewer outliers and more reliable points. Precise and high frame-rate motion estimation enhances robustness in scenes with little, repetitive, and high-frequency texture. The algorithm is applied to micro-aerial-vehicle state estimation in GPS-denied environments and runs at 55 frames per second on an onboard embedded computer and at more than 300 frames per second on a consumer laptop.

Strasdat et al.31 introduce a new near real-time visual SLAM system that incorporates the continuous keyframe optimization approach used by state-of-the-art stereo systems but accounts for the additional challenges posed by monocular input. They present a new pose-graph optimization technique that efficiently corrects rotation, translation, and scale drift at loop closures. In particular, they describe the Lie group of similarity transformations and its relationship to the corresponding Lie algebra. They also detail a new image processing frontend capable of accurately tracking hundreds of features per frame, as well as a filter-based approach for feature initialization within keyframe-based SLAM. The approach is validated through large-scale simulations and real-world experiments where a camera completes large looped trajectories. They also present a novel and general optimization framework for visual SLAM that scales for both local, highly accurate reconstruction and large-scale motion with long loop closures32. They take a two-level approach that combines accurate pose-point constraints in the primary region of interest with a stabilizing periphery of pose-pose soft constraints. Their algorithm automatically builds a suitable connected graph of key poses and constraints, dynamically selects inner and outer window membership, and optimizes both simultaneously. They demonstrate in extensive simulation experiments that their method approaches the accuracy of offline bundle adjustment while maintaining constant-time operation, even in the challenging case of highly looped monocular camera motion. Furthermore, they present a set of real experiments for various types of visual sensors and motion, including large-scale SLAM with both monocular and stereo cameras, looped local browsing with either monocular or RGB-D cameras, and dense RGB-D object model building.

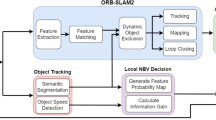

Methods

Our system is based on ORB-SLAM333, which is a multi-source sensor VIO system based on the feature-point approach, and is a classical system in which the camera is tightly coupled with the IMU. Since the IMU can record high-frequency angular velocity and acceleration signals, the system can stably obtain the relative position between two frames of an image through pre-integration operations on the high-frequency signals, and then realise the position information through the constraints of the visual features brought by the camera. Then, the position information is optimised by the constraints brought by the visual features of the camera, thus adapting to the effects of motion blur and rapid position changes.The advantage of the ORB-SLAM technology is that it can be robustly operated in real time in both large and small scenes, and indoor and outdoor scenes. In addition, ORB-SLAM3 builds hybrid maps based on an improved recall new reset module, which allows ORB-SLAM3 to operate for long periods of time in poorly characterised scenes. When the odometer fails, the system rebuilds the map and aligns it with the original constructed map. In contrast to odometers that can only utilise data from the most recent frames. While ORB-SLAM3 performs well in static scenes, its performance is disturbed by moving objects when faced with more complex dynamic scenes. To address this shortcoming, we added semantic segmentation threads to ORB-SLAM3.To address this flaw, we have added semantic segmentation threads to the visual SLAM method to detect and remove dynamic objects in frames, enabling them to eliminate the impact of moving objects before further processing. In addition, we utilized image processing techniques to modify some depth image inputs, enabling the algorithm to recognize previously undetected moving objects. This method makes our algorithm more effective than comparable algorithms using geometric methods. We named our modified visual SLAM method “SAM-SLAM”, and its framework is shown in Fig. 2.

Dynamic zone detection by optical flow method

Optical flow method is a method that describes the motion of pixels over time in various images, including dense optical flow and sparse optical flow. The dense optical flow method is represented by Horn Schunck, while the sparse optical flow method is represented by Lucas Kanada. It is usually applied to the tracking and positioning module of front-end feature points in SLAM. This article uses sparse optical flow34, abbreviated as LK optical flow method. Using the instantaneous change rate of the gray level of coordinate points on the image frame plane as the optical flow vector, as shown in Fig. 3, is a visual representation of the variation of LK optical flow over time.

In the LK optical flow method, the image grayscale can be seen as a function of time variation: I(t). So, at time t, the coordinates at position (x, y) can be expressed as \(I(x,y,t)\). The definition domain is time and pixel position coordinates, and the value domain is the pixel grayscale of the image. If the pixel coordinates of a point at time t are x, y, it can be represented as \(I(x,y,t)\), then the position of the pixel at time t + dt can be inferred as \((x + dx,y + dy,t + dt)\). According to the principle of grayscale invariance, then:

Taylor expansion is performed on the left side, retaining the first-order term, then:

Due to the assumption of unchanged grayscale, there are:

If both sides are divided by \(dt\), there is:

In the above equation, \(\frac{{{\text{dx}}}}{{{\text{dt}}}}\) is the rate of change of pixels on the x-axis, \(\frac{{{\text{dy}}}}{{{\text{dt}}}}\) is the speed of pixels on the vertical axis, written as u, v.\(\frac{\partial I}{{\partial {\text{x}}}}\) represents the gradient of the image frame on the horizontal axis, and \(\frac{\partial I}{{\partial {\text{y}}}}\) represents the gradient of the image frame on the vertical axis, which is \({I}_{x},{I}_{y}\) The change in grayscale over time is \({I}_{t},\) which can be expressed as:

It is possible to calculate the motion of pixels, where \(n \times n\), In the box of n, there are \(n^{2}\) pixels, and each window has all pixels have the same motion, so there are a total of \(n^{2}\) equations:

Record:

Can be written as:

The above equation is the overdetermined equation for u, v, then the motion velocity of pixels between images u, v is

When the value of t is discontinuous, the above equation can calculate the pose of a certain pixel block in each image frame, but pixel movement is only effective in local regions, and the equation is usually solved by iterating several times. Afte obtaining the velocity of pixels in the corresponding coordinates, the position of all pixels in the window in the image can be estimated. After tracking the corresponding matching points, the essential matrix E can be calculated based on the matching relationship.

Among them, \((\mu ,\nu {)}_{k}\) represents the motion speed of the feature point k in the i-th frame of the image, and \(\nu_{thre}\) th represents the threshold of overall scene motion speed in the i-th frame of the image. We approximate the average motion of the feature points in the scene outside the potential dynamic target area between the two frames to the camera’s motion speed and set it as a threshold. Status represents the motion state of the current feature point k, dynamic represents real motion, and static represents relative stillness. When the motion speed of the feature point exceeds the threshold, it is considered as a real dynamic feature point and removed. Then, real moving targets are selected from potential dynamic targets, and quasi static targets in the current time period are retained to improve the rationality of dynamic feature point removal.

Dynamic object coarse segmentation

We use a semantic segmentation network to segment image features and detect objects (e.g., people, dogs, and chairs) with potential motion possibilities. Our goal is to recognize and segment these objects in an image. Segmentation is an area widely explored in the field of computer vision research, and the specific goal of semantic segmentation is to assign appropriate labels to each pixel so as to efficiently segment an image into multiple semantic categories. we use an encoder-decoder based model with a deep residual network (ResNet35) as the semantic segmentation encoder. ResNet is a classical model that solves the problem of gradient vanishing and model degradation in deep neural networks by introducing residual chunks and hopping connections, which provides high model reusability and training effectiveness. The decoder model we use utilizes the pyramid pooling module, which is able to perform pooling operations at different scales to aggregate different ranges of global context information, thus improving the network’s ability to detect targets at different scales and improving the accuracy of segmentation results.

Semantic information is then combined with optical flow estimation to provide more accurate dynamic information. Optical flow provides information about the motion of moving objects, but in reality the optical flow field does not always reflect the actual motion of the target. In addition, optical flow estimation is usually imprecise, and brightness variations can affect its accuracy, causing stationary objects to be mistaken for moving objects. Therefore, we use semantic segmentation for initial segmentation to obtain targets that may be moving, combine this with dynamic point information obtained from optic flow estimation to identify moving targets, and segment the image frames using a fine segmentation network to obtain more accurate dynamic information in the scene.

Fine segmentation

After the initial segmentation of dynamic objects, SAM36 is used for finer segmentation of dynamic objects for better dynamic object culling.SAM is a new task, dataset and model for image segmentation proposed by MataAI. It is a generalisation of two classes of classification methods: interactive segmentation and automatic segmentation. By introducing a cued segmentation task to generate a valid segmentation mask based on any given segmentation cue, this can perform various segmentation tasks. In our system, dynamic information is obtained based on semantic segmentation, dynamic points obtained from optical flow estimation are used as cues for SAM, and the dynamic information is finely segmented using SAM and the mask obtained after segmentation. We only extract features from the static part, as the segmented dynamic region is considered invalid. To improve the accuracy of camera pose estimation, we use polar constraints for anomaly monitoring, and feature points of dynamic targets and neighbouring regions are eliminated by removing the anomalies by depth error. After performing dynamic object processing, feature points are extracted from the image, and among the acquired feature points, we invalidate the feature points in the vicinity of the dynamic region to perform feature matching using only the feature points in the static region.

Outlier determination based on the epipolar constraint

Combining semantic information to filter out dynamic objects. However, using only semantic segmentation algorithms to detect potential moving targets may result in incomplete removal of dynamic feature points, such as some non-potential dynamic targets, a cup or book in the hands of a moving person, or a rotating chair. Therefore, after filtering out the previous dynamic no-objects, polar constraints are applied to the feature points to dynamic feature point rejection.

As shown in Figs. 4, I1 and I2 are the RGB images obtained by the camera at the previous and current moments, O1 and O2 are the corresponding optical centre positions of the camera at the previous and current moments, respectively, and P is the map point jointly observed at adjacent moments. Theoretically, if the attitude is absolutely accurate, the projection of the map point at the current moment will lie on a ray consisting of the poles e2 and p2. However, due to the motion of dynamic objects, the projections of dynamic map points as anomalous data do not satisfy the above constraints.. The projection point (pΠ) is shown in Fig. 4. After obtaining the degree of dynamism of the region, we can further filter the outliers based on the distance between the projected points and the ray.

Firstly, the fundamental matrix is calculated based on the matching points, and then the fundamental matrix is used to obtain the polar line of the current frame. The distance between the matching point and the polar line is used as a constraint. If it exceeds the threshold, it is considered as a dynamic point. If a point does not satisfy the outer polar line constraint, the region is regarded as a potential dynamic region. The epipolar line L of the current frame is calculated as:

where A, B and C are the coefficients of the two-dimensional linear equations, F is the fundamental matrix, K is the eigenmatrix of the camera, t∧ is the antisymmetric matrix of the translation vectors, and p1 = [u, v, 1]T is the normalised planar coordinates of the matching point in the last frame.

Then the distance from the matching point to the pole line is:

By traversing the projection points of all map points in the current frame, we can calculate the distances of all projection points. When the distance corresponding to a map point is greater than the threshold sum, it is initially judged that the current static map point is a dynamic map point that has not been updated, and the corresponding projection points are eliminated.

Outlier determination based on the depth error

By outlier rejection based on depth error, anomalous feature points caused by dynamic objects, sensor noise, mis-matching and occlusion can be effectively removed. This makes the system more robust to complex and dynamic environments and enables more stable localisation and map building. The retained feature points are usually high quality and stable feature points. These feature points are more reliable in position estimation and map construction, thus improving the overall accuracy of the system. Reduced cumulative error improves the long-term stability of the system.

During the tracking of feature points, we get the position of the camera in the world coordinate system every time. At the same time, the system continuously generates map points with world coordinates. Theoretically, there is fixed distance information between any two known points in 3D space. However, as shown in Fig. 5, at some historical time t’, the camera captures an image I’. At the same time, the system generates a map point P’d. As the camera keeps moving, at the current time t, the camera captures the image I. If P’d is a dynamic map point, then P’d will move from t’ to t in the time interval.

Since the RGB-D camera can provide both RGB images and depth images, we can use the depth image obtained at the current time to determine the corresponding motion state of the map points. Based on the pixel coordinates of the projected points, we can look up the corresponding pixel locations on the depth image to estimate the corresponding depth values. Based on the 3D coordinates of the map point P’d generated in the past and the camera position in the previous frame, we can calculate the distance between the two points dep0. The formula is as follows:

where Pcw are the coordinates of the camera machine in the previous frame and P'd are the coordinates of the map points themselves. Both Pcw and P'd are known during the SLAM system operation. We only need to calculate the distance between the two points. pdπ is the projection matching point. dep(·) refers to the search operation at the coordinates of the corresponding pixel in the depth image. We can find the depth value at the pixel coordinate corresponding to pdπ on the depth image to get depth.

By traversing all the matching points in Voutlier, we obtain the corresponding deperr and set the empirical threshold depthr. To improve the accuracy of dynamic scenes, dynamic feature points should be filtered and effective matching should be retained as much as possible to construct constraints. Therefore, when deperr is greater than depthr, we remove the corresponding matching point from Voutlier. After two rounds of filtering determination, the matching points in Voutlier are treated as dynamic points in the pose estimation for the current frame. Thereby, we can achieve better filtering performance.

Experiments and results

We conducted extensive experiments in this section to demonstrate the effectiveness of the SAM-SLAM method proposed in this paper.

Test on dataset

We compared the proposed method with current advanced methods using the public TUM RGB-D dataset. Due to the addition of semantic segmentation threads before feature extraction, which can handle dynamic scene problems, and our algorithm design is based on the current best visual SLAM algorithm, our method achieves the best balance between speed and accuracy.

The TUM RGB-D dataset includes sequences of low dynamic range and High dynamic range scenarios, which are evaluated under comparable conditions. We use the Absolute Trajectory Error (ATE) metric to compare the projected trajectory of each timestamp with the real trajectory on the ground. The absolute trajectory error directly measures the difference between the real trajectory point and the estimated trajectory point. In the preprocessing phase, the estimated pose is associated with the real ground pose using a timestamp. Based on this association, the Singular value decomposition is used to align the real trajectory and the estimated trajectory. Finally, the difference between each pair of poses is calculated and the average value/median value/standard deviation of these differences is output.

Figures 5 and 6 show the ATE and RPE results of our method on four other representative sequences. Figure 5 shows the error results of the proposed method on “freiburg1xyyz” and “freiburg1udesk”. In the “freiburg1xyyz” sequence, the depth camera is pointed at a typical desk in an office environment, which only contains translational motion along the camera axis while maintaining a fixed direction. In the “freiburg1udesk” sequence, several scans of four desks in a typical office environment are included. From the results in Fig. 5, it can be seen that our method can also achieve good positioning results for objects in static scenes.

Figure 6 shows the error results of the proposed method on “freiburg2xyyz" and “freiburg2upioneer_slam”. In the “freiburg2xyyz” sequence, the depth camera moves very slowly along the axes in the x, y, and z directions. The slow camera motion basically ensures that there is no motion blur or rolling effect in the data. In the “freiburg2upioneer_slam” sequence, the depth camera is recorded from the top of the mobile robot, The mobile robot was manipulated through a maze composed of tables, containers, and other walls to draw a map. The final collected data also includes laser scanning and odometer data of the mobile robot. From the results in Fig. 6, it can be seen that the method proposed in this paper can also achieve good positioning and mapping results for complex dynamic working scenarios of mobile robots. This also provides performance assurance for the application of the method in this article on mobile nursing robots.

The error metrics used for evaluation are Absolute Trajectory Error (ATE) and Relative Pose Error (RPE), commonly used in SLAM. ATE measures the overall consistency of the trajectory, and RPE measures the odometer drift per second. Absolute trajectory error is described in units of (m/s), and relative pose error describes translational drift in (m/s) and rotational drift in (°/s), respectively. There are many ways to describe an evaluation metric, such as mean error, median error, mean square error, standard deviation, etc. Here we have chosen to use the Root Mean Square Error (RMSE) to compare the errors.

The quantitative comparison results are shown in Tables 1 and 2, where xyz, static, rpy and half in the first column stand for four types of camera ego-motions, for example, xyz represents the camera moves along the x–y–z axes. We present the values of RMSE, Mean Error, Median Error and Standard Deviation (S.D.) in this paper, while RMSE and S.D. are more concerned because they can better indicate the robustness and stability of the system. We also show the values of improvement of our SAM-SLAM compared to the original ORB-SLAM3.

As we can see from Tables 1 and 2, ORB-SLAM3 can make the performance in most high-dynamic sequences get an order of magnitude improvement. In terms of ATE, the RMSE and S.D. improvement values can reach up to 97.91% and 97.94% respectively. The results indicate that DS-SLAM can improve the robustness and stability of SLAM system in high-dynamic environments significantly. However, in low-dynamic sequences, for example, the fr3_sitting_static sequence, the improvements of performance are not obvious. We think the reason is that original ORB-SLAM3 can handle the low-dynamic scenarios easily and achieve good performance, so the space that can be improved is limited.

As can be seen from Tables 1 and 2, SAM-SLAM can lead to an order of magnitude improvement in the performance of most highly dynamic sequences. In terms of ATE, the improvement values of RMSE and S.D. are as high as 97.91% and 97.94%, respectively. The results show that SAM-SLAM can significantly improve the robustness and stability of SLAM systems in highly dynamic environments. However, in low-dynamic sequences, such as the freiburg2_xyz sequence, the performance improvement is not significant and even inferior to ORB-SLAM.3 We analyse and find out the reason for this in this paper, where the dynamic point culling strategy removes too many feature points leading to tracking failures, and thus the localisation failure of the SAM-SLAM system. Dynamic point rejection uses the polyline distance and the depth information of the RGB-D camera to determine the dynamic points to be removed, and the SLAM system fails due to the static nature of the scene and the slow movement of the camera.

Experimental measurement

Practical tests were conducted in the laboratory for this paper. The equipment used for the tests was a robotic car, as shown in Fig. 7. This car is equipped with a depth camera, NVIDIA Jetson Nano board, LiDAR, and a four-wheel motor, among other major components as shown in Fig. 8. However, as this paper focuses on the research of vision-based SLAM algorithms, we do not require LiDAR data.

We conducted mobile tests with the robotic car in the laboratory, and the results are shown in Fig. 8. We chose to test the algorithm proposed in this paper in the laboratory due to the presence of various experimental tables and chairs, as well as constantly moving laboratory personnel. As can be seen in Fig. 9, our method effectively extracts feature points from the experimental tables in the actual laboratory environment and completes accurate matching between different frames of images. As shown in Fig. 10a is the original keyframe, (b) is the keyframe after SAM segmentation, (c) is the keyframe without adding dynamic point recognition, (d) is the keyframe with adding dynamic point recognition. The red dots represent the projections of the dynamic point recognition in the keyframes,,and the green dots are the projections of the feature extraction points in the keyframes. The SAM-SLAM algorithm proposed in this paper generally seems to improve the localisation accuracy in dynamic scenes and has some robustness in complex environments.

Conclusion

This study introduces a novel algorithm, SAM-SLAM, which leverages SAM for accurate object detection and semantic segmentation, subsequently employing visual SLAM for precise robot localization and mapping. The SAM-SLAM proposed in this article not only augments the robustness of current SLAM algorithms but also mitigates the influence of dynamic objects on attitude estimation. We have innovatively replaced geometric constraints traditionally used in other algorithms with advanced image processing technology, thereby minimizing computational complexity and enhancing the robot’s environmental cognitive capabilities.

Extensive experiments conducted on the TUM RGB-D dataset have demonstrated that the SAM-SLAM method introduced in this paper outperforms comparable algorithms, effectively addressing the positioning and navigation challenges faced by mobile nursing robots in intricate environments. Looking ahead, we envision integrating our algorithm with IMU data from mobile nursing robots to compensate for any missing information, ultimately achieving superior performance in practical application scenarios.

Data availability

Code and Data Availability We declare that the relevant data and code for this study are fully open-source,if you need the code and implementation details can email to the corresponding author. Corresponding Author: Chao Zheng Email:arnold_zheng@163.com.

Change history

18 May 2025

The original online version of this Article was revised: In the original version of this Article the Acknowledgements section was omitted. The Acknowledgements section now reads: “This research was funded by Henan Academy of Sciences research start-up project, grant number 231820014, Henan Academy of Sciences research start-up project, grant number 251820019 and Henan Academy of sciences, innovative entrepreneurial team project, grant number 20230206”

References

Morita, T., Iwata, H. and Sugano, S., “Development of human symbiotic robot: WENDY,” Proc. IEEE Int. Conf. on Robotics and Automation, 3183–3188 (1999).

Gao, Y. Research and development of nursing robots in Japan. Robot Tech. Appl. 5(1), 1–5 (2015).

Wu, Z. Chinese technology enterprise Youbixuan is listed as one of the top ten robot companies in the world. Zhong Guan Cun 12(1), 58–59 (2021).

Rong, P. Freightway releases new robot product “vera generation 2” in Zhangjiang Hi-tech park, Shanghai. Sci. Technol. Indus. Parks 6(3), 12–12 (2016).

Macario Barros, A., Michel, M., Moline, Y., Corre, G. & Carrel, F. A comprehensive survey of visual slam algorithms. Robotics. 11(1), 24 (2022).

Yu, Chao, et al. “DS-SLAM: A semantic visual SLAM towards dynamic environments”. 2018 IEEE/RSJ International Conference on Intelligent Robots and Systems (IROS). IEEE, 2018.

Qin, Tong, et al. “A general Optimization-Based Framework for Global Pose Estimation with Multiple Sensors”. arxiv preprint arXiv:1901.03642v1 2019).

Bescos, B., Fácil, J. M., Civera, J. & Neira, J. DynaSLAM: Tracking, mapping, and inpainting in dynamic scenes. IEEE Robot. Autom. Lett. 3(4), 4076–4083 (2018).

Campos, C., Elvira, R., Rodríguez, J. J., Montiel, J. M. & Tardós, J. D. Orb-slam3: An accurate open-source library for visual, visual–inertial, and multimap slam. IEEE Trans. Robot. 37(6), 1874–1890 (2021).

Wen S et al. “CD-SLAM: A real-time stereo visual-inertial SLAM for complex dynamic environments with semantic and geometric information”. IEEE Trans. Instrum. Measure. (2024).

Fischler, M. A. & Bolles, R. C. Random sample consensus: A paradigm for model fitting with applications to image analysis and automated cartography. Commun. ACM 24(6), 381–395 (1981).

Zhang, R. & Zhang, X. Geometric constraint-based and improved yolov5 semantic slam for dynamic scenes. ISPRS Int. J. Geo Inf. 12(6), 211 (2023).

He K, et al. “Mask r-cnn.” Proceedings of the IEEE International Conference on Computer Vision. 2017.

Badrinarayanan, V., Kendall, A. & Cipolla, R. Segnet: A deep convolutional encoder-decoder architecture for image segmentation. IEEE Trans. Pattern Anal. Mach. Intell. 39(12), 2481–2495 (2017).

Wangsiripitak S, Murray D W. Avoiding moving outliers in visual SLAM by tracking moving objects//2009 IEEE International Conference on Robotics and Automation. IEEE, 2009: 375–380.

Tan W, Liu H M, Dong Z L, et al. Robust monocular SLAM in dynamic environments[C]//IEEE/ACM International Symposium on Mixed and Augmented Reality. Piscataway, USA: IEEE, 2013: 209–218.

Jaimez M, Kerl C, Gonzalez-Jimenez J, et al. Fast odometry and scene flow from RGB-D cameras based on geometric clustering[C]//2017 IEEE International Conference on Robotics and Automation (ICRA). Singapore: IEEE, 2017: 3992.

Li, S. L. & Lee, D. H. RGB-D SLAM in dynamic environments using static point weighting. IEEE Robot. Autom Lett. 2(4), 2263–2270 (2017).

Sun, Y. X., Liu, M. & Meng, M. Q. H. Motion removal for reliable RGB-D SLAM in dynamic environments. Robot. Auton. Syst. 108, 115–128 (2018).

Mur-Artal, R., Montiel, J. M. M. & Tardos, J. D. ORB-SLAM: A versatile and accurate monocular SLAM system. IEEE Trans. Robot. 31(5), 1147–1163 (2015).

Mur-Artal, R. & Tardos, J. ORB-SLAM2: An open-source slam system for monocular, stereo and RGB-D cameras. IEEE Trans. Robot. https://doi.org/10.1109/TRO.2017.2705103 (2016).

Yang, S., Fan, G., Bai, L., Zhao, C. & Li, D. SGC-VSLAM: A semantic and geometric constraints VSLAM for dynamic indoor environments. Sensors. 20(8), 2432 (2020).

Xiao, L. H. et al. Dynamic-SLAM: Semantic monocular visual localization and mapping based on deep learning in dynamic environment. Robot. Autonom. Syst. 117, 1–16 (2019).

Sheng, C., Pan, S., Gao, W., Tan, Y. & Zhao, T. Dynamic-DSO: Direct sparse odometry using objects semantic information for dynamic environments. Appl. Sci. 10(4), 1467 (2020).

Liu, T., Yuan, Z., Sun, J., Wang, J. & Zheng, N. ‘Learning to detect a salient object’. IEEE Trans. Pattern Anal. Mach. Intell. 33(2), 353–367 (2011).

G. Li, Y. Xie, L. Lin, and Y. Yu, ‘‘Instance-level salient object segmentation,’’ in Proc. CVPR, Jul. 2017, pp. 2386–2395.

M. Xu, M. Guo, L. Shang, and X. Jia, ‘‘Multi-value image segmentation based on FCM algorithm and graph cut theory,’’ in Proc. IEEE Int. Conf. Fuzzy Syst. (FUZZ-IEEE), Jul. 2016, pp. 1333–1340.

Khan, R., Barat, C., Muselet, D. & Ducottet, C. ‘“Spatial histograms of soft pairwise similar patches to improve the bag-of-visual-words model”,’fefComput. Vis. Image Understand. 132, 102–112 (2015).

Yang, Yi, and Shawn Newsam. "Bag-of-visual-words and spatial extensions for land-use classification." Proceedings of the 18th SIGSPATIAL International Conference on Advances in Geographic Information Systems. 2010.

Li, W., Dong, P., Xiao, B. & Zhou, L. ‘Object recognition based on the region of interest and optimal bag of words model’. Neurocomputing 172, 271–280 (2016).

Davison, A. J., Reid, I. D., Molton, N. D. & Stasse, O. MonoSLAM: Real-time single camera SLAM. IEEE Trans. Pattern Anal. Mach. Intell. 29(6), 1052–1067 (2007).

J. Engel, T. Schops, and D. Cremers, “LSD-SLAM: Large-scale direct monocular SLAM,” in Proc. Eur. Conf. Comput. Vision, Zurich, Switzerland, 2014, pp. 834–849.

Campos, C., Elvira, R., Gomez, J. & Montiel, J. ORB-SLAM3: An accurate open-source library for visual, visual-inertial, and multimap SLAM. IEEE T. Robot 37(6), 1874–1890 (2021).

Lei, Z., Fang, T., Huo, H. & Li, D. Rotation-invariant object detection of remotely sensed images based on Texton forest and Hough voting. IEEE Trans. Geosci. Remote Sensing. 50(4), 1206–1217 (2011).

Simonyan K, and Andrew Z. “Very deep convolutional networks for large-scale image recognition”. arxiv preprint arXiv:1409.1556v6 (2014).

Wang Y, Yun Z, and Linda P. “An empirical study on the robustness of the segment anything model (sam).” Pattern Recognition (2024): 110685.

Acknowledgements

This research was funded by Henan Academy of Sciences research start-up project, grant number 231820014, Henan Academy of Sciences research start-up project, grant number 251820019 and Henan Academy of sciences, innovative entrepreneurial team project, grant number 20230206.

Author information

Authors and Affiliations

Contributions

Y.H. and P.Z. performed the experiments and wrote the manuscript, C.Z. supervised the project, Y.H. and C. Z. designed the work, and P.Z. reviewed and revised the manuscript.

Corresponding author

Ethics declarations

Competing interests

The authors declare no competing interests.

Additional information

Publisher’s note

Springer Nature remains neutral with regard to jurisdictional claims in published maps and institutional affiliations.

Rights and permissions

Open Access This article is licensed under a Creative Commons Attribution-NonCommercial-NoDerivatives 4.0 International License, which permits any non-commercial use, sharing, distribution and reproduction in any medium or format, as long as you give appropriate credit to the original author(s) and the source, provide a link to the Creative Commons licence, and indicate if you modified the licensed material. You do not have permission under this licence to share adapted material derived from this article or parts of it. The images or other third party material in this article are included in the article’s Creative Commons licence, unless indicated otherwise in a credit line to the material. If material is not included in the article’s Creative Commons licence and your intended use is not permitted by statutory regulation or exceeds the permitted use, you will need to obtain permission directly from the copyright holder. To view a copy of this licence, visit http://creativecommons.org/licenses/by-nc-nd/4.0/.

About this article

Cite this article

Zheng, C., Zhang, P. & Li, Y. Semantic SLAM system for mobile robots based on large visual model in complex environments. Sci Rep 15, 8450 (2025). https://doi.org/10.1038/s41598-025-90340-5

Received:

Accepted:

Published:

Version of record:

DOI: https://doi.org/10.1038/s41598-025-90340-5