Abstract

Evaluating cognitive load in mixed reality (MR) has become a significant challenge in human-computer interaction (HCI). To address this, we established an MR multimodal experimental platform with three distinct environments to induce varying levels of cognitive load. Participants engaged in MR-based CNC machine tool interaction tasks within these environments. Using the built-in sensors of the HoloLens 2 mixed reality head-mounted display (MR-HMD) and wearable heart rate sensors, we collected device and physiological data from participants wearing the MR-HMD while performing these tasks. The cognitive load of participants was assessed by using the NASA-TLX questionnaire. Experimental results indicated that the operation time required in the MR environment increased by 49% under high cognitive load compared to low-load conditions. High-load environments also led to increased anxiety, frustration, and decreased performance among participants. Through comparative experiments, we identified suitable sensor data streams and algorithms for cognitive load classification and designed an MR digital twin factory cognitive load warning prototype system. This system utilizes an improved Transformer-CL algorithm, achieving a cognitive load classification accuracy of 95.83%. The system provides high cognitive load warnings, reducing the risks associated with high cognitive load tasks in MR work environments.


Introduction

The manufacturing industry is rapidly evolving towards technology-intensive sectors. Within the human-machine-manufacturing system of the smart factory, researchers have developed new insights into the human-machine relationship in industrial production. With advancements in automation technology, many repetitive tasks that once required human intervention have been replaced by machines. Consequently, the role of workers in the manufacturing process is evolving. Workers are no longer bound to the assembly line; instead, they take on roles as designers, operators, and supervisors of machines. In the latest technological trajectory of Industry 5.0, the focus of manufacturing has shifted from system-oriented to human-oriented systems, emphasizing human-centric manufacturing1. Collaborative cooperation between humans and machines can enhance manufacturing efficiency, whereas a lack of coordination between them can lead to economic losses and human injuries2.

Industrial machines pose various dangers and can easily cause safety accidents in the workplace3. In 2022, the U.S. Bureau of Labor Statistics (BLS) reported 738 fatalities in the United States due to contact with objects and equipment, with 199 of these deaths caused by machinery accidents. Similarly, data from the Ministry of Emergency Management, PRC shows that in 2023, there were 310 production safety accidents in the Chinese machinery industry, resulting in 294 deaths. Mechanical injuries were the primary cause of these accidents. The five manufacturing sectors with the highest number of safety accidents were metal products, special equipment manufacturing, general equipment manufacturing, machinery and equipment repair, and automobile manufacturing, which together accounted for over 80% of the total accidents in the machinery industry.

The adoption of innovative technologies in industrial production can significantly enhance both efficiency and safety4. When workers operate industrial equipment, timely handling of machine alarms is crucial for maintaining production line efficiency. Traditionally, mechanical equipment alerts workers through gauges, displays, lights, and alarm sounds. Responding to these alerts often requires workers to approach hazardous areas for safety inspections, which increases the risk of injury. However, the advent of MR technology enables virtual alarm information from mechanical equipment to be seamlessly integrated with real-world devices. By wearing MR headsets, workers can quickly locate faulty equipment and read and maintain it remotely, away from hazardous zones, reducing the risks associated with equipment maintenance.

In traditional human-machine collaboration processes, the interaction between humans and machines occurs through physical components such as mechanical buttons, joysticks, and dials5. Workers receive information from the machine and the environment through the visual, auditory, and tactile senses. Their brains then analyze and assess this information and make decisions, which lead to responses to the machine6. As HCI terminals advance, the channels for exchanging information between humans and machines are undergoing profound changes. Traditional interaction modes based on physical buttons are insufficient to meet the demands of natural HCI in modern environments. In the era of MR computing, sensor-driven interaction devices can effectively integrate virtual and physical information by recognizing and interpreting the environment.

However, in the manufacturing environment empowered by new technologies, maintenance personnel who use advanced human-machine systems and wear MR-HMDs may face increased cognitive load7. This cognitive load can result from interference by surrounding environmental information or from the overwhelming number of virtual interfaces and alarm noises presented by MR devices. Industrial work environments often contain significant noise, the back-and-forth switching between virtual and real environments can lead to attentional switching effects, and overloading working memory with additional information can increase cognitive load8. Additional information interference can also cause information overload for operators, disrupting attention and impairing overall cognitive abilities, subjecting them to significant cognitive load9. Therefore, it is essential to study the cognitive load experienced by workers wearing MR-HMDs while performing tasks in noisy environments.

Currently, research on cognitive load has been conducted in data dashboards9, Virtual Reality (VR)10, and Augmented Reality (AR)11. However, research on cognitive load detection using mixed reality (MR) in industrial environments remains limited. The primary objective of this research is to classify cognitive load in multimodal HCI tasks in an industrial digital twin environment. In our study, we hypothesize that users’ cognitive load can be measured through the data and physiological responses collected from MR-HMD devices. Specifically, we propose that the head dynamics data, eye movement data, and various hand movement data of the participants contain hidden information related to cognitive load. This information may reflect the interference of the load environment on the participants’ task performance. To test this hypothesis, we designed and conducted a series of relevant experiments.

The contributions of this paper are as follows:

1. Utilizing a digital twin CNC machine, an MR-HMD, and wearable heart rate sensors, this study established an experimental platform for cognitive load tasks involving CNC machine operation in an MR environment. We designed three cognitive load environments within the MR-HCI task environment.

2. Using the built-in sensors of the MR-HMD and heart rate sensors, we collected head movement data, eye-tracking data, hand joint data, and heart rate data from 20 participants during a total of 120 interaction tasks under three different cognitive load environments. These data were compiled into a comprehensive dataset.

3. Task completion time is a crucial parameter for evaluating performance. We recorded task completion times during the interaction tasks in MR environments and found that completion time was 49% longer in high-load environments than in low-load environments. Additionally, we administered the NASA-TLX questionnaire to assess participants' cognitive load across the three load environments. The questionnaire included six dimensions: mental demand, physical demand, temporal demand, performance, effort, and frustration. This allowed us to obtain participants’ cognitive perceptions and mental states under different load conditions.

4. After comparing various algorithms, we identified the most suitable one for cognitive load classification based on MR-HMD sensor data. We ultimately used an improved Transformer-CL network for cognitive load classification, which achieved an accuracy of 95.83%. We also designed a cognitive load warning prototype system that alerts high-load workers in MR work environments, thereby mitigating the risks associated with high-load operations.

The remaining sections of this paper are organized as follows: The Review section provides an overview of related work on MR HCI and cognitive load. The Experiment section describes our experimental design and the setup of the experimental platform. The Data processing and analysis section presents an analysis of the experimental results and applies various classification models to the sensor data. The Discussion and limitations section discusses the results of the experiment and examines the limitations of the proposed method. The Conclusion section summarizes the key findings of the study and outlines potential directions for future research.

Review

Research on MR-HCI

VR, AR, and MR are next-generation HCI information terminals that are rapidly evolving, with increased research in these areas. There are certain differences in the technological approaches of VR, AR, and MR: VR immerses users in a computer-generated virtual environment; AR overlays virtual information on the physical world; MR, an advancement of AR technology, further emphasizes environmental perception and the fusion of virtual and real information, thereby bridging the gap between the physical world and virtual information. Typical MR devices include Magic Leap, Microsoft HoloLens, and Apple Vision Pro. These devices integrate virtual information with the physical world by utilizing technologies like Time of Flight (TOF) sensors, gyroscopes, and spatial modeling to perceive and understand the surrounding environment.

MR is widely applied in fields such as education12,13, healthcare14, music15, manufacturing16, and human-machine collaboration17. In manufacturing, MR can contribute to more efficient design, development, and validation18, and promote the intelligent manufacturing of factories19. Because VR/AR technology emerged earlier, there is more research on, and there are more practical applications of, VR/AR than MR. Since both VR/AR and MR require wearing an HMD to function, the results of related research can serve as references for studying cognitive load in MR. Some researchers suggest that the application of AR technology can reduce cognitive load. For example, applying AR technology in assembly tasks in manufacturing can enable operators to handle more complex tasks and reduce stress levels20. Students who learn through AR have higher academic achievement and lower cognitive load21, and reading AR books also imposes less cognitive load22. However, some researchers have found that although VR environments can provide a sense of immersion, they may also lead to cognitive overload and attention dispersion among learners, ultimately affecting learning outcomes23. Findings on the magnitude of cognitive load in VR and AR environments are therefore inconsistent, which may be due to differences in task complexity or to differences in information systems and HCI interfaces.

With the development of computer graphics interfaces and HCI technology, information systems have become the primary bridge for HCI. Cognitive load significantly impacts how people use information systems24. For example, research has found that the performance of HCI tasks is related to the user’s interaction environment and the graphical user interface (GUI); the more elements on the interface, the greater the cognitive load on the user25. When the cognitive load of additional information is high, it consumes most of an individual’s attentional resources, resulting in little or no resources available for processing task-irrelevant information26. Reducing unnecessary cognitive load during task execution is an important direction for user experience research and preventing production safety risks caused by cognitive distractions and overload.

Cognitive load

Cognitive Load Theory27 was initially applied in the field of education, primarily studying how to achieve efficient learning within the constraints of limited human cognitive and memory capacity. Cognitive load during the learning process arises from the limited capacity and duration of working memory and is primarily caused by the cognitive demands of learning tasks and environments28. Substantial and prolonged cognitive interference leads to a decrease in cognitive processing speed. Therefore, managing cognitive load is necessary. Cognitive overload occurs when the cognitive resources required to perform a task exceed the cognitive resources available, resulting in severe degradation of performance and safety29. Analyzing and predicting cognitive load makes it possible to alert workers to current cognitive risks in time, helping to protect workers operating in high-load environments.

Conducting research on cognitive load requires inducing cognitive load in participants. Currently, the main induction methods include the dual N-Back task30, verb generation tasks31, emotional pictures32, and visual, tactile, and auditory stimuli33, among others. Auditory stimulation is a significant factor affecting cognitive load. In industrial environments, noise is unavoidable, and onsite workers are often exposed to it34. Existing research indicates that background noise in the environment is considered a stressor and has been shown to negatively impact individuals’ cognitive performance in tasks such as attention, memory, and decision-making35. Noise affects attention neurons36 and influences executive functions26 and reading comprehension37. Noise leads to a reduction in cognitive reserve capacity, which is related to individual differences in working memory capacity38. The impact of noise levels becomes more significant in complex cognitive tasks. While noise levels do not significantly affect response times in attention tasks, they do affect response times in short-term memory tasks39.

Measurement of cognitive load mainly involves two methods: subjective measurement and objective measurement. Subjective measurement is mainly done through self-reporting by filling out questionnaires. Common methods include the NASA-Task Load Index (NASA-TLX) and its optimized version40, the Subjective Workload Assessment Technique (SWAT)41, the Multiple Resources Questionnaire (MRQ)42, and the modified Cooper-Harper (MCH) scale43. Objective measurement methods use non-invasive biometric sensors to collect physiological signals for measuring cognitive load44. Existing research shows a significant relationship between cognitive load and physiological signals45. Increased task demands usually lead to increased pupil dilation in cognitive control domains such as updating, switching, and inhibition46. Specific cognitive load measurement methods include assessing gaze duration, fixation time, and changes in pupil diameter through eye tracking47, or evaluating the coupling between pupil diameter and heart rate (HR)48. Other methods use Long Short-Term Memory (LSTM) networks49, Bi-LSTM networks50, or Recurrent Neural Networks (RNN)51 to classify and predict cognitive load based on Electroencephalogram (EEG) signals, or grade cognitive load in air traffic control based on speech features52. Additionally, cognitive load can be studied through neurovascular responses and task performance53.

Currently, cognitive load assessment in MR environments often adopts questionnaire surveys54. Researchers have attempted to evaluate cognitive load in MR environments using physiological methods55. However, existing methods do not utilize the built-in sensors of MR-HMD but instead rely on screen projections and external eye-tracking sensors for measurements. This approach increases the complexity of the measurement process and introduces potential data interference. To more accurately assess the cognitive load of HCI tasks in MR, we conducted MR cognitive interaction experiments with a group of participants. We collected physiological signals using the built-in sensors of MR-HMD and heart rate wristbands and employed various algorithms to classify the cognitive load data. During the experiments, we recorded task completion times and asked participants to evaluate their cognitive load using the NASA-TLX scale. Finally, we developed a cognitive load warning system to ensure personnel safety.

Experiment

We recruited participants to perform a CNC machining task while wearing an MR-HMD under three different cognitive load environments. This section provides a detailed description of the participant selection, the experimental setup and environment, the design of cognitive load induction environments, and the specific procedures followed during task execution.

Experimental participants

A total of 20 participants (15 males, 5 females) were recruited for this experiment, with an average height of 172.1 centimeters (standard deviation, SD = 8.59) and an average age of 25.1 years (SD = 2.14). All participants had a bachelor’s degree or higher education level. The participants had experience in using CNC machine tools and were familiar with their basic operating procedures. Each participant had normal or corrected-to-normal vision, was physically healthy without limb injuries, diseases, or adverse habits, and passed the color blindness test. In the 48 h before the experiment, participants did not engage in vigorous activities, take medication, or consume beverages containing caffeine, tea, taurine, or similar substances that could affect the experimental data, and they ensured adequate sleep. Additionally, there were no extremes in self-reported personality, and participants' stress sensitivity was normal.

Experimental equipment and environment

The experiment was conducted in the Digital Twin Production Line Laboratory at Guizhou University, which is equipped with digital twin production line equipment, including CNC machine tools, robotic arms, conveyors, engraving machines, and more. The overall architecture of the experimental environment is shown in Fig. 1.

To mitigate potential hazards posed by the operation of mechanical equipment, participants were strictly confined to safe areas away from the machinery during the HCI experiments. To prevent head discomfort, eye fatigue, and hand fatigue from prolonged use of MR-HMD, the duration of each participant’s session was limited to a maximum of 10 min. Additionally, to avoid hearing damage from a noisy environment, the maximum noise level during the MR experiment was controlled to remain within 90 decibels, ensuring it did not pose a risk to participants’ auditory health.

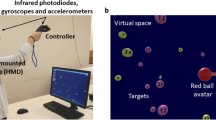

The experimental platform includes the following hardware devices: a Microsoft HoloLens 2, an Honor ws851 Wi-Fi router, a laptop computer, and a Huawei GT3 heart rate wristband. The MR interactive environment for the experiment was developed using Unity 2021.3.23f1c1. The HoloLens 2 is equipped with two infrared cameras, each with 12 infrared LEDs, positioned at the nose bridge. This setup uses a corneal reflection dark pupil eye-tracking scheme based on infrared LED illumination to capture real-time eye-tracking information. The GT3 wristband employs a photoelectric sensor to collect heart rate data and uses Bluetooth broadcasting to transmit the heart rate information in real time.

To capture real-time data during the MR experiment, we enabled Research Mode on the HoloLens 2 so that the experiment program had access to its built-in sensors. The collection and transmission of the MR-HMD sensor data were integrated into Unity using a modified version of the HL2SS program56. The data were transmitted via TCP streaming. A laptop computer served as the data receiving host to collect and record the sensor data.
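The receiving side of such a TCP stream can be illustrated with a minimal sketch. The newline-delimited text framing and the function name `read_records` are assumptions made purely for illustration; they are not the HL2SS wire format, which the text does not describe.

```python
import socket
from typing import Iterator


def read_records(conn: socket.socket, bufsize: int = 4096) -> Iterator[str]:
    """Yield newline-delimited text records from a connected TCP socket.

    The newline framing is a hypothetical choice for illustration only;
    it is not the HL2SS wire format.
    """
    pending = b""
    while True:
        chunk = conn.recv(bufsize)
        if not chunk:  # the sender closed the connection
            break
        pending += chunk
        # One TCP read may hold several records, or only part of one.
        while b"\n" in pending:
            line, pending = pending.split(b"\n", 1)
            yield line.decode("utf-8")
    if pending:  # flush a final record that lacked a trailing newline
        yield pending.decode("utf-8")
```

The buffering loop matters because TCP delivers a byte stream rather than discrete messages, so a record sent by the headset may arrive split across reads on the host.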

Experimental procedure

This experiment received approval from the Ethics Committee of Guizhou University (approval number HMEE-GZU-2023-TO25). All participants were required to read and sign the informed consent form at the beginning so that they understood the experimental process and terms. Before the experiment, participants were trained to operate the CNC machine tool in MR to complete the interactive tasks. The training involved both video tutorials and verbal instructions. Before the formal experiment, participants first performed simulated interactive operations in front of the actual CNC machine tool interface without the MR-HMD. Once they were familiar with the operation, they put on the MR-HMD and performed a pre-experiment operation on the virtual CNC machine tool interface. This ensured that each participant was familiar with the entire process of the CNC machine tool interactive tasks in this MR experiment.

At the beginning of the experiment, the participants were seated in a fixed chair positioned 40 cm outside the safety zone of the production line, with a seat height of 45 cm. Participants were first instructed to wear the heart rate wristband, adjusting it to be one finger-width away from the wrist joint to ensure a moderate tightness, thereby enabling the device to accurately capture real-time heart rate information. Next, the Bluetooth broadcasting function of the wristband was activated. Finally, the MR-HMD was donned. Before each participant started the experiment with the MR-HMD device, the eye-tracking system had to be recalibrated to ensure data accuracy. The experimental site and environment are shown in Fig. 2.

Experimental stimulation

In industrial settings, the management and monitoring of cognitive load are critical. For example, in complex and high-pressure work environments such as nuclear power plants, aviation, and manufacturing, operators are required to make rapid and accurate decisions during the operation of complex equipment and in emergency situations. Excessive cognitive load can lead to decision-making errors, delayed reactions, or fatigue, thus increasing safety risks. Noise is often regarded as a significant dimension of environmental cognitive load. Industrial noise, being one of the most prominent noise sources, can severely affect the auditory system of workers57, disrupt attention, and impair performance on cognitive tasks58. When industrial equipment malfunctions or encounters anomalies, the alarm systems typically alert operators through pop-up windows or auditory signals, requiring swift responses and decision-making. Prolonged exposure to alarm sounds can lead to cognitive overload, contributing to alarm fatigue and negatively impacting task performance59. Multiple alarms may indicate various system issues or require operators to focus on multiple tasks simultaneously, further complicating the allocation of cognitive resources. Inducing cognitive load through sound volume and the number of alarm pop-ups can simulate the intensity of cognitive load stimuli encountered in real-world industrial scenarios, reflecting the allocation of attentional resources during specific tasks.

To simulate real industrial scenarios in the experimental setting, we recorded an audio segment from a real factory, which includes the operational sounds of large stamping equipment and the noise generated by typical machinery. Additionally, alarm pop-ups and sounds from machine equipment were incorporated into the experimental environment to replicate the alarm issues that workers might encounter. We designed three MR-based virtual cognitive load work environments, each containing environmental noise and alarm cues: Cognitive Load Environment A features high environmental noise and multiple alarm pop-ups, simulating a scenario in which operators must address multiple urgent tasks in a complex factory setting; Cognitive Load Environment B includes moderate environmental noise and a few alarm pop-ups, simulating a situation with a moderate workload in industrial production; Cognitive Load Environment C, which serves as the control group, operates with low environmental noise and no alarms, simulating a normal working environment. The disruptive stimuli elements and equipment volumes for each load environment are presented in Table 1. During the experiment, each participant wore an MR-HMD and performed interactive tasks in all three cognitive load environments. The three environments were presented in random order, with each load scenario occurring twice, resulting in a total of six experimental sessions per participant.
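The session schedule described above (three environments, each occurring twice, in random order) can be sketched as follows. The function name `session_order` and the use of a plain shuffle are assumptions; the text does not say whether the randomization was constrained, for example to avoid the same environment twice in a row.

```python
import random
from typing import List, Optional


def session_order(seed: Optional[int] = None) -> List[str]:
    """Return six sessions: environments A, B, and C twice each, shuffled."""
    rng = random.Random(seed)  # a seed makes a participant's order reproducible
    sessions = ["A", "B", "C"] * 2
    rng.shuffle(sessions)
    return sessions
```

With 20 participants, this yields 20 × 6 = 120 sessions in total, which matches the number of interaction tasks reported for the dataset.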

Experiment tasks

In the MR experimental environment, we simulated the HCI interface of the CNC machine tool operating platform FANUC SERIES OI-MF. Because inspecting the machine tool before processing work is very important, we used the pre-processing state inspection of the machine tool as the experimental task: releasing the emergency stop status of the machine tool and zeroing its spindle coordinates. Because the operation of a traditional machine tool follows fixed procedures, and participants needed to draw on their basic knowledge of machine tool operation during the experiment, we did not modify the icons or interaction logic of the machine tool’s operating interface in the MR experimental platform.

In the task, participants started by clicking on the icon to enter the cognitive load experimental program, activating the HCI interface of the digital twin CNC machine tool. Upon initial entry, they needed to click the “Open Socket” button on the interface to establish a connection with the remote data acquisition host via a router. The connection was established once the data acquisition host displayed “Connected Successfully.” After preparation was completed, the experimenter announced the formal start of the experiment. The steps performed during the experimental task and the corresponding HCI program commands are shown in Fig. 3.

- Step 1: Click the “Power On” button. After the self-check interface loads, the digital twin CNC machine tool in MR boots up and displays the default interface.

- Step 2: Click the “Message” button to check the current alarm information of the CNC machine tool.

- Step 3: After checking the alarm information, click the “Emergency” button to release the current emergency stop state.

- Step 4: After releasing the emergency stop state, click the “POS” button to open the spindle position display interface.

- Steps 5–6: Click the “JOG” button to open the manual feed mode, then click the “ZRN” button to initiate the axis zeroing mode.

- Steps 7–10: In the ZRN mode, sequentially click the “+X”, “+Y”, “+Z”, and “+A” buttons to return the X, Y, Z, and A axes of the machine tool to the mechanical origin.

- Step 11: Click the “PROG” button to enter programming mode.

- Step 12: Click the “Power-Off” button to send a stop-recording message to the host. This is the last step and marks the end of the experiment. Upon receiving the message, the host saves and outputs the sensor data stream of this experiment.
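The twelve button presses listed in the steps above can be written down as an ordered sequence, which also makes it possible to count clicks that deviate from it. This is only a sketch: the text does not describe any click logging, so the function `count_erroneous_clicks` and its scoring rule (a wrong click is counted and the participant stays on the same step) are hypothetical.

```python
from typing import List

# Button order taken from Steps 1-12 of the task description.
EXPECTED_CLICKS: List[str] = [
    "Power On", "Message", "Emergency", "POS", "JOG", "ZRN",
    "+X", "+Y", "+Z", "+A", "PROG", "Power-Off",
]


def count_erroneous_clicks(clicks: List[str]) -> int:
    """Count clicks that do not match the next expected button.

    Hypothetical scoring rule: a wrong click is counted and ignored, and the
    participant remains on the same step until the correct button is pressed.
    """
    step = 0
    errors = 0
    for button in clicks:
        if step < len(EXPECTED_CLICKS) and button == EXPECTED_CLICKS[step]:
            step += 1  # correct button: advance to the next step
        else:
            errors += 1  # wrong or surplus button: count it, do not advance
    return errors
```

For example, a participant who presses “POS” before “Message” and then continues correctly would be scored with one erroneous click under this rule.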

Participants were required to wear the MR-HMD and heart rate wristband as instructed. In the MR environment, they completed all interaction tasks from step 1 to step 12. The collected experimental data included 22 sets of information, such as head position, eye tracking, gesture recognition, and heart rate, with a uniform sampling frequency of 30 Hz. After each experiment, participants were given a 5-minute break during which they filled out the NASA-TLX questionnaire. The NASA-TLX is a tool for the subjective assessment of cognitive load, allowing users to evaluate the cognitive load of the HCI system60. The form consists of six dimensions: Mental Demand, Physical Demand, Temporal Demand, Performance, Effort, and Frustration. Each scale runs from 0 to 100 and is divided by 21 vertical tick marks, with each tick representing an increment of 5. The questionnaire was translated into Chinese to make it easier for all participants to understand during scoring. After scoring, the next experiment was conducted only after the participant’s heart rate had returned to the resting rate. This design prevents carry-over from one session to the next from affecting the accuracy of the data.

Data processing and analysis

In the previous section, we outlined the experimental setup and procedure, during which MR-HMD sensor data were collected from participants. Upon completion of the experiment, participants were invited to complete the NASA-TLX questionnaire. In the following section, we describe the methods of data processing and analysis, as well as the implementation of various cognitive load classification models based on the collected sensor data.

Noise levels

To further assess the actual noise data in the experimental environment, we used the UNI-T UT353 noise meter to test the sound decibel values of the three experimental environments during the pre-experiment. First, the meter was placed beneath the sound-emitting unit of the MR-HMD, simulating the position of the participant’s ear canal entrance. Then, tasks were performed, and the sound levels were measured in Experimental Environment A, Experimental Environment B, and Experimental Environment C. Finally, the decibel noise levels of each experimental environment were measured as shown in Fig. 4.

NASA-TLX scores

Because studies suggest that directly calculating overall workload using the NASA-TLX scale has limited mathematical significance61,62, we used the original six-dimensional NASA-TLX scale to evaluate cognitive workload. Analysis of NASA-TLX scores from 20 participants yielded the following results, as shown in Fig. 5.

Regarding mental demand, participants generally perceived load environment A as the most mentally demanding, with a score of 78.25. This indicates that performing tasks in load environment A requires more cognitive resources and attention. The mental demand for load environment B was 11; although lower, it still required a certain amount of mental effort. The control group C had the lowest mental demand, scoring only 29.75, indicating that a low-noise and alarm-free cognitive load environment requires less mental effort.

Regarding physical demand, participants rated load environment A at 57.5 and load environment B at 49.25. Although the difference between A and B was small, both were higher than the control group C, which scored 27.5. This suggests that performing tasks in environments A and B may require more physical effort.

For temporal demand, participants rated the high-load environment A at 64, the highest of the three environments. The control group C had the lowest temporal demand, scoring only 25.75. This is consistent with the trend in task completion times. Performance scores fell as noise levels rose, with the control group C achieving the highest performance score of 88, while the high-interference load environment A had the lowest performance score of only 33.

In terms of effort, participants felt that load environment A required the most effort, scoring 75.5, whereas the control group C required significantly less effort, scoring only 29. The effort needed in low-interference environments was much less than that required in high-interference environments.

Regarding task frustration, load environment A induced the highest frustration among participants, scoring 79, indicating poorer emotional states under high-interference conditions. The control group C had the lowest frustration score of 20.25, showing significantly less frustration than the other two environments. Feedback from participants also revealed that many reported making more erroneous clicks in the high-noise load environment A, aligning with previous findings from experiments conducted in real-world settings63, which have shown that high-load environments increase the likelihood of human operational errors.
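The per-dimension scores reported above are unweighted averages over participants rather than a single overall workload index. A minimal sketch of that calculation follows; the helper names `tick_to_score` and `dimension_means` and the dictionary layout of a rating are assumptions for illustration, and any ratings passed in would be the reader's own data, not values from this study.

```python
from statistics import fmean
from typing import Dict, List

DIMENSIONS = [
    "Mental Demand", "Physical Demand", "Temporal Demand",
    "Performance", "Effort", "Frustration",
]


def tick_to_score(tick_index: int) -> int:
    """Map a tick mark (0-20) on the 21-tick scale to a 0-100 score."""
    if not 0 <= tick_index <= 20:
        raise ValueError("tick_index must be between 0 and 20")
    return tick_index * 5


def dimension_means(ratings: List[Dict[str, int]]) -> Dict[str, float]:
    """Average each NASA-TLX dimension across participants, without weighting."""
    return {dim: fmean(r[dim] for r in ratings) for dim in DIMENSIONS}
```

Keeping the six dimensions separate, as done here, avoids collapsing them into one number whose mathematical meaning has been questioned.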

Heart rate

Figure 6 shows the average heart rate change of participants in all experiments, and Table 2 presents the corresponding heart rate changes. Participants’ heart rate changes were similar across the three load environments, and the rates of change before and after the task also differed little. This indicates that the experimental environment had minimal impact on heart rate changes. We conducted a one-way ANOVA on participants’ average heart rate during task completion in the different cognitive load environments. The F-value was 1.042 and the p-value was 0.356 (> 0.05), suggesting that the task environment had no significant effect on average heart rate, consistent with the findings of experiments conducted in VR45. This result is also consistent with the findings of the cognitive load classification algorithm experiment presented in Table 3. Therefore, we did not use heart rate data for classification in the final cognitive load warning prototype system.

Task completion time

Task completion time is a critical parameter for evaluating task performance. To assess task performance across different load environments, we collected completion times for each experimental task, as illustrated in Fig. 7. The average task completion time in cognitive load environment A was 65.33 s. In cognitive load environment B, the completion time was 50.13 s, while the control group C had an average completion time of 43.83 s. The average completion time in the high-interference environment was 49% longer than in the low-interference environment.
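As a quick check of the reported increase, the relative difference can be worked out from the mean completion times given above (the A-versus-C figure is the one reported in the text; the B-versus-C figure is derived here from the same means for comparison):

\[\frac{65.33 - 43.83}{43.83}=\frac{21.50}{43.83} \approx 0.49, \qquad \frac{50.13 - 43.83}{43.83}=\frac{6.30}{43.83} \approx 0.14\]

That is, the mean completion time was about 49% longer in environment A and about 14% longer in environment B than in the control group C.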

ANOVA results indicated that the three cognitive load environments significantly affected task completion time (F = 6.724, p = 0.002 < 0.01, partial eta squared = 0.103). Task completion time in the lowest-noise environment (control group C) was significantly shorter than in environments A and B. This result confirms that excessively noisy work environments significantly prolong the execution time of MR tasks. This conclusion is consistent with the NASA-TLX questionnaire results in this study, where higher interference correlated with lower performance and longer task completion times. Additionally, this conclusion aligns with related research findings in non-MR environments64, further validating the impact of load environments on task completion time.
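For readers who want to see how an F-value and an effect size of this kind are obtained, the following is a minimal sketch of a one-way ANOVA written with the Python standard library only. The completion times in it are synthetic placeholders, not the study's data, and the function name `one_way_anova` is hypothetical. It also illustrates that, in a one-way design, eta squared can be recovered from F and the degrees of freedom.

```python
from statistics import fmean

def one_way_anova(groups):
    """Return (F, df_between, df_within, eta_squared) for a one-way ANOVA."""
    all_values = [v for g in groups for v in g]
    grand_mean = fmean(all_values)
    group_means = [fmean(g) for g in groups]
    # Variation of the group means around the grand mean.
    ss_between = sum(len(g) * (m - grand_mean) ** 2
                     for g, m in zip(groups, group_means))
    # Variation of individual observations around their own group mean.
    ss_within = sum((v - m) ** 2
                    for g, m in zip(groups, group_means) for v in g)
    df_between = len(groups) - 1
    df_within = len(all_values) - len(groups)
    f_value = (ss_between / df_between) / (ss_within / df_within)
    eta_sq = ss_between / (ss_between + ss_within)
    return f_value, df_between, df_within, eta_sq

# Synthetic completion times in seconds (placeholders, NOT the study's data).
times_a = [62.0, 70.5, 58.3, 66.1, 71.2]
times_b = [49.0, 53.4, 47.8, 51.1, 50.2]
times_c = [44.2, 41.0, 45.9, 42.7, 43.5]

f_value, df1, df2, eta_sq = one_way_anova([times_a, times_b, times_c])

# In a one-way design, eta squared follows from F and the degrees of freedom.
eta_from_f = (f_value * df1) / (f_value * df1 + df2)
```

The p-value additionally requires the F distribution, which the standard library does not provide; a statistics package such as SciPy (`scipy.stats.f_oneway`) would normally be used for that step.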

Cognitive load classification network based on improved transformer

The classification algorithm was trained and tested on Windows 10 with Anaconda and Python 3.9, using an i9 13900KF CPU and an RTX 4090 GPU (24 GB). We used CUDA 11, TensorFlow-GPU, and the Keras deep learning framework to accelerate the training process.

Due to variations in the total duration of experimental tasks across participants, sensor data were recorded at a sampling rate of 30 Hz, resulting in time-series data ranging from 1,000 to 2,500 rows per subject. To standardize the input for model training, all sequences were normalized to a fixed length of 2,500 rows. For sequences shorter than 2,500 rows, data completion was performed through repetition to ensure consistency. The standardized data were then segmented into fixed-length, non-overlapping windows of 500 rows each, extracted sequentially from the beginning of the sequence until the end. Each window was treated as an independent input sample. The cognitive load label for each window was determined based on the most frequently occurring label within that window. The resulting preprocessed dataset was subsequently used as input for model training.
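The padding and windowing steps described above can be sketched as follows. This is an illustration under stated assumptions rather than the authors' code: the text does not specify how the repetition is performed, so the sketch assumes the sequence is cycled from its beginning, and the helper names `pad_by_repetition` and `split_windows` are hypothetical.

```python
from collections import Counter

TARGET_ROWS = 2500   # fixed sequence length stated in the text
WINDOW_ROWS = 500    # non-overlapping window length stated in the text

def pad_by_repetition(rows, target=TARGET_ROWS):
    """Extend a sequence to `target` rows by cycling through it from the start.

    Assumption: "completion through repetition" means wrapping around to the
    first row; the paper does not state the exact scheme.
    """
    if not rows:
        raise ValueError("cannot pad an empty sequence")
    return [rows[i % len(rows)] for i in range(target)]

def split_windows(rows, labels, window=WINDOW_ROWS):
    """Cut aligned rows/labels into non-overlapping windows.

    Each window is labelled with the most frequent label inside it.
    """
    samples = []
    for start in range(0, len(rows) - window + 1, window):
        chunk = rows[start:start + window]
        majority = Counter(labels[start:start + window]).most_common(1)[0][0]
        samples.append((chunk, majority))
    return samples

# Toy recording: 1,200 rows with 3 features each, all labelled class 2.
recording = [[float(i), 0.0, 1.0] for i in range(1200)]
labels = [2] * 1200

padded_rows = pad_by_repetition(recording)
padded_labels = pad_by_repetition(labels)
windows = split_windows(padded_rows, padded_labels)
```

With a 2,500-row sequence and 500-row windows, each recording yields five samples.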

Since the collected sensor data streams take different forms, they must be normalized before the classification algorithm can extract meaningful information. We first converted the labels of the three cognitive load environments into integers. We then standardized the data columns for each sensor: for each feature (column), we calculated the mean and standard deviation across all samples and used these values to standardize the data. Specifically, let the sensor-collected feature data matrix be X, containing n samples (indexed by i) and m features (indexed by j). The mean value for each feature column is calculated as follows:

\[{\mu _j}=\frac{1}{n}\sum\limits_{{i=1}}^{n} {X_{ij}}\]

where \({X_{ij}}\) represents the value of the j-th feature in the i-th sample. The standard deviation for each feature column is calculated as:

\[{\sigma _j}=\sqrt {\frac{1}{n}\sum\limits_{{i=1}}^{n} {{\left( {X_{ij}} - {\mu _j} \right)}^2}} \]

Finally, the calculated mean \({\mu _j}\) and standard deviation \({\sigma _j}\) are used to obtain the standardized input data \(X_{{ij}}^{\prime }\):

\[X_{{ij}}^{\prime }=\frac{{X_{ij}} - {\mu _j}}{{\sigma _j}}\]
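The column-wise standardization described above can be sketched in a few lines of Python. Two details are assumptions not stated in the text: the standard deviation uses the population form (division by n), and constant columns are left at zero rather than divided by a zero standard deviation. The function name `standardize_columns` is hypothetical.

```python
from math import sqrt

def standardize_columns(X):
    """Z-score each feature column of a list-of-rows matrix.

    Returns the standardized matrix together with the per-column
    means and standard deviations that were used.
    """
    n = len(X)
    m = len(X[0])
    means = [sum(row[j] for row in X) / n for j in range(m)]
    # Assumption: population standard deviation (divide by n).
    stds = [sqrt(sum((row[j] - means[j]) ** 2 for row in X) / n)
            for j in range(m)]
    # Assumption: a constant column has sigma = 0, so divide by 1 instead.
    safe = [s if s > 0 else 1.0 for s in stds]
    Z = [[(row[j] - means[j]) / safe[j] for j in range(m)] for row in X]
    return Z, means, stds

# Toy matrix: 3 samples, 2 features (the second feature is constant).
X = [[1.0, 10.0],
     [2.0, 10.0],
     [3.0, 10.0]]
Z, means, stds = standardize_columns(X)
```

After standardization, each non-constant column has zero mean and unit variance, so sensor streams recorded in different units contribute on a comparable scale.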

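When designing a deep learning network based on the Transformer structure for cognitive load classification, we made several modifications to tailor it to the specific classification task. Since we are not dealing with textual information, we simplified the input embedding layer and the positional encoding module. This allowed the model to effectively handle sensor time-series data from different cognitive load environments and focus on the relationships between data points. In our network, the input to the attention mechanism is \(Q=K=V={X_{input}}\), where the number of heads is H and the dimension of each head is \({d_k}\). The attention scores are calculated as follows:

\[\mathrm{Attention}(Q,K,V)=\mathrm{softmax}\left( \frac{Q{K^T}}{\sqrt {d_k}} \right)V\]

The multi-head attention mechanism computes attention scores by mapping the input into H different subspaces. The attention output weight matrix is denoted as \({W_o}\).

\[\mathrm{MultiHead}(Q,K,V)=\mathrm{Concat}(hea{d_1}, \ldots ,hea{d_H}){W_o}\]

where \(hea{d_h}\) is the attention output computed in the h-th subspace.

To stabilize the training process, we employ residual connections and layer normalization:

\[{X_1}=\mathrm{LayerNorm}\left( {X_{input}}+\mathrm{MultiHead}(Q,K,V) \right)\]

The feed-forward neural network consists of two fully connected layers, each followed by a ReLU activation function:

\[\mathrm{FFN}({X_1})=\mathrm{ReLU}\left( \mathrm{ReLU}({X_1}{W_1}+{b_1}){W_2}+{b_2} \right)\]

where \({W_1}\), \({W_2}\) and \({b_1}\), \({b_2}\) are the weights and biases of the two layers.

We use residual connections and layer normalization again after the feed-forward network:

\[{X_2}=\mathrm{LayerNorm}\left( {X_1}+\mathrm{FFN}({X_1}) \right)\]

Before producing the final output, we apply global average pooling to the sequence data to reduce its dimensionality:

\[{X_{pool}}=\frac{1}{T}\sum\limits_{{t=1}}^{T} {X_{2,t}}\]

where T is the number of time steps in the input window and \({X_{2,t}}\) is the t-th row of \({X_2}\).

The output of the global average pooling layer is fed into a fully connected layer:

\[y={X_{pool}}{W_c}+{b_c}\]

where \({W_c}\) and \({b_c}\) are the weights and bias of this layer. This layer produces the classification results for cognitive load.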

To prevent overfitting, we add multiple dropout layers with a dropout rate of 0.5. The overall network structure of the Transformer-CL classification model is illustrated in Fig. 8.
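To make the data flow through the layers described above concrete, the following is a forward-pass sketch of one encoder block followed by pooling and a classification layer, written with the Python standard library only. It is not the authors' Keras implementation. Several elements are assumptions introduced for illustration: the per-head projection matrices `Wq`, `Wk`, `Wv`, the omission of biases and of the learnable layer-normalization parameters, the final softmax, the random weights, and all dimensions. Dropout is omitted because it is inactive at inference time.

```python
import math
import random

def matmul(A, B):
    """Plain matrix product of two list-of-lists matrices."""
    return [[sum(a * b for a, b in zip(row, col)) for col in zip(*B)] for row in A]

def transpose(A):
    return [list(col) for col in zip(*A)]

def softmax(v):
    m = max(v)
    exps = [math.exp(x - m) for x in v]
    total = sum(exps)
    return [e / total for e in exps]

def relu(A):
    return [[max(0.0, x) for x in row] for row in A]

def add(A, B):
    return [[a + b for a, b in zip(ra, rb)] for ra, rb in zip(A, B)]

def layer_norm(A, eps=1e-6):
    """Normalize each time step (row) to zero mean and unit variance."""
    out = []
    for row in A:
        mu = sum(row) / len(row)
        var = sum((x - mu) ** 2 for x in row) / len(row)
        out.append([(x - mu) / math.sqrt(var + eps) for x in row])
    return out

def attention(Q, K, V):
    """Scaled dot-product attention: softmax(Q K^T / sqrt(d_k)) V."""
    d_k = len(K[0])
    scores = matmul(Q, transpose(K))
    weights = [softmax([s / math.sqrt(d_k) for s in row]) for row in scores]
    return matmul(weights, V), weights

def rand_matrix(rows, cols, rng):
    return [[rng.uniform(-0.5, 0.5) for _ in range(cols)] for _ in range(rows)]

def init_params(d_model, num_heads, d_ff, num_classes, seed=0):
    """Random (untrained) weights; all sizes are hypothetical."""
    rng = random.Random(seed)
    d_k = d_model // num_heads
    return {
        "Wq": [rand_matrix(d_model, d_k, rng) for _ in range(num_heads)],
        "Wk": [rand_matrix(d_model, d_k, rng) for _ in range(num_heads)],
        "Wv": [rand_matrix(d_model, d_k, rng) for _ in range(num_heads)],
        "Wo": rand_matrix(num_heads * d_k, d_model, rng),
        "W1": rand_matrix(d_model, d_ff, rng),
        "W2": rand_matrix(d_ff, d_model, rng),
        "Wc": rand_matrix(d_model, num_classes, rng),
    }

def classify(X, p):
    """Encoder block -> global average pooling -> fully connected layer."""
    heads = [attention(matmul(X, wq), matmul(X, wk), matmul(X, wv))[0]
             for wq, wk, wv in zip(p["Wq"], p["Wk"], p["Wv"])]
    concat = [[x for head in heads for x in head[t]] for t in range(len(X))]
    x1 = layer_norm(add(X, matmul(concat, p["Wo"])))         # residual + norm
    ffn = relu(matmul(relu(matmul(x1, p["W1"])), p["W2"]))   # two ReLU layers
    x2 = layer_norm(add(x1, ffn))                            # residual + norm
    pooled = [sum(col) / len(col) for col in zip(*x2)]       # average over time
    logits = matmul([pooled], p["Wc"])[0]
    return softmax(logits)  # assumption: softmax over the three load levels

rng = random.Random(1)
window = rand_matrix(20, 8, rng)   # toy window: 20 time steps, 8 features
params = init_params(d_model=8, num_heads=2, d_ff=16, num_classes=3)
probs = classify(window, params)
```

Because the weights are random, the resulting probabilities carry no meaning; the sketch only shows how a window of sensor rows is reduced to one score per cognitive load level.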

To evaluate the classification performance of the model, we introduce accuracy, F1-score, and AUC as key evaluation metrics. Accuracy serves as a fundamental measure of the model’s overall classification correctness.

The confusion matrix for cognitive load classification is presented as follows:

For the three cognitive load levels (high, medium, and low), the correctly classified instances are denoted as \(T{P_H}\) (True Positive for high load), \(T{P_M}\) (True Positive for medium load), and \(T{P_L}\) (True Positive for low load). Given that the total number of classified instances is \({N_{total}}\), the classification accuracy of the model can be computed using the following formula:

\[Accuracy=\frac{T{P_H}+T{P_M}+T{P_L}}{{N_{total}}}\]

Secondly, the F1-score is introduced as a key performance metric, which is derived from precision and recall. It is computed using the following formula:

\[F1=\frac{2 \times Precision \times Recall}{Precision+Recall}\]

To mitigate the impact of class imbalance, we adopt the Weighted-Average F1-score, which accounts for the proportion of each class in the test set. The weighted F1-score is computed as follows:

\[F{1_{weighted}}=\sum\limits_{i} {{w_i} \cdot F{1_i}} \]

where \({w_i}\) represents the proportion of class i in the test set, and \(F{1_i}\) denotes the F1-score of class i.

The area under the curve (AUC) represents the area under the receiver operating characteristic (ROC) curve, which serves as a key metric to evaluate the classification capability of the model. AUC is computed using the following formula:

\[AUC=\frac{1}{{N^+}{N^-}}\sum\limits_{{x^+}} {\sum\limits_{{x^-}} {1(f({x^+})>f({x^-}))} } \]

where \({N^+}\) and \({N^-}\) denote the number of positive and negative samples, respectively. The function \(f(x)\) represents the predicted score for a given sample, and \(1(f({x^+})>f({x^-}))\) is an indicator function that equals 1 if the predicted score for a positive sample is greater than that of a negative sample, and 0 otherwise. For multi-class classification, the one-vs-rest (OvR) strategy is employed to compute the AUC. The final AUC value is obtained by averaging the AUC scores for each class:

\[AU{C_{OvR}}=\frac{1}{C}\sum\limits_{{i=1}}^{C} {AU{C_i}} \]

where C is the number of classes and \(AU{C_i}\) represents the AUC score for class i.
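The three metrics defined above can be sketched directly from their definitions. The labels and scores below are a toy example, not results from the study, and the function names are hypothetical. The pairwise AUC follows the indicator form given in the text, in which tied scores count as 0; some libraries instead count ties as one half.

```python
def accuracy(y_true, y_pred):
    """Fraction of instances whose predicted class equals the true class."""
    return sum(t == p for t, p in zip(y_true, y_pred)) / len(y_true)

def f1_for_class(y_true, y_pred, cls):
    """F1-score of one class, treating it as the positive class."""
    tp = sum(t == cls and p == cls for t, p in zip(y_true, y_pred))
    fp = sum(t != cls and p == cls for t, p in zip(y_true, y_pred))
    fn = sum(t == cls and p != cls for t, p in zip(y_true, y_pred))
    precision = tp / (tp + fp) if tp + fp else 0.0
    recall = tp / (tp + fn) if tp + fn else 0.0
    if precision + recall == 0:
        return 0.0
    return 2 * precision * recall / (precision + recall)

def weighted_f1(y_true, y_pred, classes):
    """Per-class F1 weighted by each class's share of the test set."""
    n = len(y_true)
    return sum((y_true.count(c) / n) * f1_for_class(y_true, y_pred, c)
               for c in classes)

def binary_auc(pos_scores, neg_scores):
    """Share of (positive, negative) pairs ranked correctly; ties count as 0."""
    wins = sum(1 for p in pos_scores for q in neg_scores if p > q)
    return wins / (len(pos_scores) * len(neg_scores))

def ovr_auc(y_true, scores, classes):
    """One-vs-rest AUC averaged over classes."""
    aucs = []
    for k, c in enumerate(classes):
        pos = [s[k] for t, s in zip(y_true, scores) if t == c]
        neg = [s[k] for t, s in zip(y_true, scores) if t != c]
        aucs.append(binary_auc(pos, neg))
    return sum(aucs) / len(aucs)

# Toy example: 0 = low, 1 = medium, 2 = high (hypothetical encoding).
classes = [0, 1, 2]
y_true = [0, 0, 1, 1, 2, 2]
scores = [[0.7, 0.2, 0.1], [0.4, 0.5, 0.1], [0.2, 0.7, 0.1],
          [0.1, 0.8, 0.1], [0.1, 0.2, 0.7], [0.2, 0.1, 0.7]]
y_pred = [row.index(max(row)) for row in scores]

acc = accuracy(y_true, y_pred)
wf1 = weighted_f1(y_true, y_pred, classes)
auc = ovr_auc(y_true, scores, classes)
```

In this toy example one low-load sample is misclassified as medium, so accuracy and weighted F1 fall below 1, while the AUC stays at 1 because every positive sample still receives a higher score for its own class than any negative sample does.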

The specific training parameters are as follows: the batch size is set to 16, the number of training epochs is set to 1000, and a callback function is used to retain the model with the best classification performance. The validation set comprises 20% of the dataset. Through multiple comparative experiments, we evaluated the performance of our method against RNN, LSTM, and BiLSTM models, as well as traditional classification models such as SVM, logistic regression, and random forest. The improved Transformer-CL network demonstrates promising classification performance, achieving a maximum accuracy of 95.83%, an F1-score of 0.96, and an AUC of 0.99, highlighting its effectiveness in cognitive load classification. The detailed performance comparison is presented in Table 3. This comprehensive evaluation demonstrates the potential of Transformer-based architectures in cognitive load classification applications.

To understand the interdependencies within the sensor data, we extracted the attention weights from the trained Transformer-CL model and conducted a detailed analysis of the model’s attention mechanism. This analysis led us to create heatmaps depicting attention weights across spatial and temporal dimensions.

The average attention over time and the visualization of the weights for the spatial and temporal attention mechanisms are shown in Fig. 9. From this weight visualization, we observe that the weights associated with head posture data and right-hand posture data are relatively larger, suggesting that the classification network has identified more cognitive load-related information in these data.

High cognitive load warning prototype system in MR environment

In MR-HCI work environments, prolonged exposure to high-load conditions can lead to safety issues. Detecting workers who are under sustained high cognitive load is challenging, as it typically requires them to wear equipment such as EEG caps, eye trackers, and external physiological sensors. In an MR digital twin environment, workers are already required to wear an MR-HMD during their tasks. Using the sensors embedded within the HMD to capture and classify cognitive load in real time therefore makes it easier to identify workers’ cognitive load levels in the workplace.

We developed a prototype system for MR high-load warning based on Unity and PyQt. The system operates as follows: it continuously receives and records sensor data streams from the MR-HMD. When the recorded data reaches 500 rows (approximately 16.7 s at a sampling frequency of 30 Hz), the system automatically saves the data and imports it into the cognitive load classification network to identify the participant’s current workload state. If a worker remains in a high cognitive load state for five consecutive detection periods, the warning system is triggered. A warning window pops up, prompting the worker to choose between taking a break or seeking assistance. If the worker opts for a break, the system temporarily silences the alarm sound and alarm display and instructs the worker to remove the MR-HMD, rest, and resume work afterward. When the “Call for help” button is clicked, the MR-HMD sends a command to the system monitoring host, and the management personnel at the program end receive the relevant alerts, as shown in Fig. 10. This allows management to promptly identify workers experiencing prolonged high-load situations and dispatch technical personnel to assist them in addressing related issues. This proactive approach helps mitigate anxiety, errors, and safety concerns that may arise from prolonged exposure to high cognitive load environments.
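The trigger rule described above (a warning after five consecutive high-load detection periods) can be sketched as a small state machine. This is an illustration, not the prototype's Unity/PyQt code: the class name `HighLoadMonitor`, the label strings, and the choice to restart counting after a warning fires are all assumptions.

```python
HIGH = "high"            # hypothetical label for the high-load class
ROWS_PER_WINDOW = 500    # rows per detection period, as stated in the text
SAMPLE_RATE_HZ = 30      # sensor sampling rate, as stated in the text
CONSECUTIVE_LIMIT = 5    # consecutive high-load periods before a warning

class HighLoadMonitor:
    """Tracks consecutive high-load predictions and signals when to warn."""

    def __init__(self, limit=CONSECUTIVE_LIMIT):
        self.limit = limit
        self.streak = 0

    def update(self, predicted_level):
        """Record one window's prediction; return True if a warning should fire."""
        if predicted_level == HIGH:
            self.streak += 1
        else:
            self.streak = 0  # any non-high period breaks the streak
        if self.streak >= self.limit:
            self.streak = 0  # assumption: restart counting after a warning
            return True
        return False

# Duration of one detection period and the shortest time before a warning.
window_seconds = ROWS_PER_WINDOW / SAMPLE_RATE_HZ
min_seconds_before_warning = CONSECUTIVE_LIMIT * window_seconds

monitor = HighLoadMonitor()
predictions = ["high", "high", "medium", "high", "high", "high", "high", "high"]
fired = [monitor.update(p) for p in predictions]
```

Under these parameters, a warning can fire no sooner than roughly 83 s after a worker enters a sustained high-load state, since each detection period spans about 16.7 s.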

Discussion and limitations

In the context of future MR-based human-computer interaction (HCI) work environments, we explore the use of the sensors embedded in MR-HMD to assess workers’ cognitive load, leveraging the Transformer-CL network for cognitive load classification. Experimental results indicate that the sensor data from MR-HMD contain latent information related to the participants’ cognitive load, which reflects the interference of the load environment on task performance and can serve as a basis for cognitive load classification.

We experimentally evaluated the impact of using both unimodal and multimodal sensor data on cognitive load classification performance. The results indicate that the combination of “Head Movement + Eye Tracking + Hand Gestures” as a three-modal dataset achieved the best performance across most classification models. The Transformer-CL model exhibited the highest performance on the three-modal data, achieving an accuracy of 95.83%, highlighting the advantages of the self-attention mechanism in capturing temporal relationships and key features. Notably, the introduction of heart rate data led to a decline in the classification performance of the primary models. For instance, the accuracy of the Transformer-CL model dropped from 95.83 to 83.33%. This phenomenon suggests that although heart rate is an important physiological signal, its relevance to cognitive load may be lower in certain tasks.

The LSTM model demonstrated relatively poor performance on the unimodal dataset, with an accuracy of only 62.50%, and did not significantly improve its performance on the multimodal data, indicating a lack of capacity in modeling temporal dependencies. In contrast, the BiLSTM model enhanced its temporal modeling ability through its bidirectional structure, capturing dependencies in both forward and backward directions. Its accuracy on the three-modal dataset increased to 80.56%, with an F1-score of 0.81 and an AUC of 0.89, significantly outperforming the LSTM. However, the gap between BiLSTM and Transformer-CL remained substantial, with Transformer-CL achieving an accuracy 15.27 percentage points higher on the same dataset.

The RNN model achieved only 66.7% accuracy on the three-modal dataset, indicating its limitations in handling complex temporal dependencies. The simple structure of the RNN made it less effective at capturing long-range dependencies and cross-modal features in multimodal data, which constrained its performance in cognitive load classification.

Traditional machine learning models, such as SVM and Logistic Regression, showed significantly lower classification performance on multimodal data compared to deep learning models. In particular, the SVM, when using a polynomial kernel (degree = 2), achieved an accuracy of only 54.17% on the three-modal dataset, with the AUC failing to improve significantly, suggesting its limited ability to fit high-dimensional spatiotemporal feature data. The performance of Logistic Regression was similarly limited, with a maximum accuracy of 58.33% on the three-modal dataset. Among traditional machine learning methods, Random Forest performed relatively well, achieving an accuracy of 70.83% on the three-modal data, demonstrating its advantage in handling structured data. However, its performance still lagged behind that of deep learning models, particularly when faced with complex temporal data and cross-modal feature fusion.

In summary, the Transformer-CL model demonstrated exceptional performance on multimodal data, confirming the potential of Transformer architectures based on the self-attention mechanism for cognitive load classification. The integration of multimodal data significantly enhanced classification performance, particularly the combination of head movement, eye tracking, and hand gesture data, which effectively captured multidimensional information related to cognitive load. Traditional machine learning models performed suboptimally in cognitive load classification tasks compared to deep learning models, particularly when confronted with complex temporal data and the fusion of cross-modal features. Deep learning models exhibited superior classification capabilities in these contexts. Our findings provide both theoretical support and methodological references for future multimodal classification research in analogous scenarios.

However, this study has several limitations. For instance, although the Transformer-CL model achieved a cognitive load classification accuracy of 95.83%, it is important to note that this result was obtained under controlled experimental conditions with a limited sample size. Furthermore, the total number of training samples collected in the experimental environment was 480, which is relatively small. The participants, with an average age of 25.1 years (SD = 2.14) and prior experience with CNC machine operations, were able to familiarize themselves with the machinery processes more quickly during the experiment. However, in real-world factory settings, the varying ages and prior knowledge of actual workers, as well as individual differences among the participants in the experiment may still impact the results. Additionally, due to the limitations of the current study, we simulated the noise interference of a real factory environment using recorded factory sounds, alarm sounds, and alarm interfaces to avoid safety threats during real factory experiments. However, the simulated background noise may differ from the actual noise interference in a real factory setting. Different types of factories and various job tasks may also present different cognitive load environments. Therefore, the generalizability of the model across different factory environments, task types, and user groups requires further validation through future studies.

Based on the findings of this study, we recommend strategically controlling elements in the work environment that are prone to inducing cognitive load. For instance, future industrial mixed reality headsets should integrate noise-cancellation features to mitigate the background machine noise in factory settings. Additionally, it is advisable to control the number of alarm interfaces in a single task environment, possibly by stacking alert windows based on their level of importance for information display. These measures will help reduce cognitive load in complex factory environments, thereby safeguarding workers’ psychological well-being and operational safety.

In the practical implementation of the cognitive load assessment system, there are still potential barriers. For example, while MR-HMD devices can improve the efficiency of maintenance tasks, the high cost of purchasing and training for MR-HMD devices (such as the HoloLens and Vision Pro, priced around $3,500) limits their widespread application in industrial production. Additionally, their relatively short battery life (2–3 h) and weight (approximately 560–650 g) present obstacles during user training. Furthermore, although some MR-HMDs are available in industrial versions, their performance in extreme conditions, such as high temperatures, high salinity, and high humidity, still requires further research. Moreover, due to the limited computational power of the HoloLens 2, we did not perform the classification tasks directly on the MR-HMD processor, but instead transmitted the data to a terminal computer for classification. In factories with complex network and electromagnetic environments, this data transmission may introduce certain delays in data processing.

Conclusion

In this study, we examined the impact of cognitive interference factors in MR environments on HCI tasks. We designed a controlled experiment with three different levels of load interference in MR-HCI tasks. By using sensors integrated into the MR-HMD, we first collected physiological data from participants operating CNC machines in MR environments, creating a multimodal sensor cognitive load dataset. We assessed cognitive load using the NASA-TLX questionnaire during the experiments. The results indicate that load interference significantly affects MR-HCI tasks; in high-interference load conditions, average completion times were extended by 49% compared to the control group. Additionally, high-interference load conditions increased anxiety and frustration, leading to a decline in task performance among operators.

To classify cognitive load, we utilized an enhanced Transformer-CL network, achieving an accuracy rate of 95.83%. The experimental results validated our hypothesis that sensor data from MR-HMD contains hidden information related to cognitive load, which reflects the interference of the load environment on participants’ task performance. Furthermore, we designed a prototype MR digital twin factory cognitive load alert system to assist workers in high cognitive load MR environments, thereby reducing safety risks in MR work settings.

In future research, we aim to integrate additional sensor data streams into cognitive load classification systems, such as heart rate variability (HRV), video streams, and spatial data. Furthermore, we will explore the impact of personalized worker behaviors on cognitive load detection and assess the performance differences among various MR devices. Through these efforts, we anticipate further enhancing the accuracy of cognitive load scenario recognition and load classification, ultimately providing better support and protection for workers in complex operational environments.

Data availability

Data is provided within the manuscript or supplementary information files.

References

Zhang, C. et al. Towards new-generation human-centric smart manufacturing in industry 5.0: a systematic review. Adv. Eng. Inf. 57, 102121 (2023).

Nickel, P. et al. Human-System interaction design requirements to improve machinery and systems safety. Adv. Saf. Manage. Hum. FACTORS. 969, 3–13 (2020).

Chinniah, Y., Aucourt, B. & Bourbonniere, R. Safety of industrial machinery in reduced risk conditions. Saf. Sci. 93, 152–161 (2017).

Benson, C., Obasi, I. C., Akinwande, D. V. & Ile, C. The impact of interventions on health, safety and environment in the process industry. Heliyon 10, e23604 (2024).

Zhou, H., Wang, D., Yu, Y. & Zhang, Z. Research progress of Human–Computer interaction technology based on gesture recognition. Electronics 12, 2805 (2023).

Zhang, S., He, C. & Ji, Z. Study on Human—Machine interface design of construction machinery. In Advances in Intelligent Systems and Computing 78–85 (2019).

Arana-De, L. C. N., De la Riva-Rodriguez, J., Maldonado-Macias, A. A. & Saenz-Zamarron, D. Cognitive analyses for interface design using dual N-Back tasks for mental workload (MWL) evaluation. Int. J. Environ. Res. Public. Health 20, 145 (2023).

Koelewijn, T., Shinn-Cunningham, B. G., Zekveld, A. A. & Kramer, S. E. The pupil response is sensitive to divided attention during speech processing. Hear. Res. 312, 114–120 (2014).

Ke, J., Liao, P., Li, J. & Luo, X. Effect of information load and cognitive style on cognitive load of visualized dashboards for construction-related activities. Autom. Constr. 154, 105029 (2023).

Chen, Y. C., Chang, Y. S. & Chuang, M. J. Virtual reality application influences cognitive load-mediated creativity components and creative performance in engineering design. J. Comput. Assist. Learn. 38, 6–18 (2022).

Atici-Ulusu, H., Ikiz, Y. D., Taskapilioglu, O. & Gunduz, T. Effects of augmented reality glasses on the cognitive load of assembly operators in the automotive industry. Int. J. Comput. Integr. Manuf. 34, 487–499 (2021).

Loup-Escande, E. et al. Contributions of mixed reality in a calligraphy learning task: effects of supplementary visual feedback and expertise on cognitive load, user experience and gestural performance. Comput. Hum. Behav. 75, 42–49 (2017).

Zhu, Y. et al. An Interactive mixed reality platform for inquiry-based education. In 2021 IEEE 7th International Conference on Virtual Reality (ICVR) 324–331 (Foshan, 2021).

Eom, S. et al. Accuracy of routine external ventricular drain placement following a mixed reality-guided twist-drill craniostomy. Neurosurg. Focus 56, E11 (2024).

Graf, M. & Barthet, M. Reducing sensing errors in a mixed reality musical instrument. In VRST ‘23 New York (2023).

Buyruk, Y. & Cagdas, G. Interactive parametric design and robotic fabrication within mixed reality environment. Appl. Sci. -Basel. 12, 12797 (2022).

Papadopoulos, T., Evangelidis, K., Evangelidis, G. & Kaskalis, T. H. Mixed reality and the internet of things: bridging the virtual with the real. Adv. Eng. Softw. 185, 103527 (2023).

James, S. & Eckert, G. A. Feasibility study on mixed Reality-Based visualization and interaction tool for performance improvement of metal cutting processes. Metals 13, 286 (2023).

Dai, M. et al. Research on holographic visualization verification platform for construction machinery based on mixed reality technology. Appl. Sci. 13, 3692 (2023).

Vanneste, P. et al. Cognitive support for assembly operations by means of augmented reality: an exploratory study. Int. J. Hum. -Comput Stud. 143, 102480 (2020).

Kucuk, S., Kapakin, S. & Goktas, Y. Learning anatomy via mobile augmented reality: effects on achievement and cognitive load. Anat. Sci. Educ. 9, 411–421 (2016).

Cheng, K. Reading an augmented reality book: an exploration of learners’ cognitive load, motivation, and attitudes. Australas J. Educ. Technol. 33, 53–69 (2017).

Makransky, G., Terkildsen, T. S. & Mayer, R. E. Adding immersive virtual reality to a science lab simulation causes more presence but less learning. Learn. Instr. 60, 225–236 (2019).

Fehrenbacher, D. D. & Djamasbi, S. Information systems and task demand: an exploratory pupillometry study of computerized decision making. Decis. Support Syst. 97, 1–11 (2017).

Figueroa, A. M. & Juarez-Ramirez, R. The problem of Cognitive Load in GUI’s: towards establishing the relationship between Cognitive Load and our Executive Functions. In 2017 IEEE 41st Annual Computer Software And Applications Conference (COMPSAC), VOL 2 561–565 (2017).

Zhu, R., Ma, X. & You, X. The effect of working memory load on inattentional deafness during aeronautical decision-making. Appl. Ergon. 113, 104099 (2023).

Sweller, J. Cognitive load during problem solving: effects on learning. Cogn. Sci. 12, 257–285 (1988).

Kockord, R. & Bodensiek, O. Cognitive load during first contact with mixed reality learning environments. In Mensch And Computer 2021 (MUC 21) 260–264 (2021).

Amadori, P. V., Fischer, T., Wang, R. & Demiris, Y. Predicting secondary task performance: a directly actionable metric for cognitive overload detection. IEEE Trans. Cogn. Dev. Syst. 14, 1474–1485 (2022).

Arana-De Las Casas, N. I., De la Riva-Rodríguez, J. & Maldonado-Macías, A. A. Sáenz-Zamarrón, D. Cognitive analyses for interface design using dual N-Back tasks for mental workload (MWL) evaluation. Int. J. Environ. Res. Public Health. 20, 1184 (2023).

Van der Heiden, R. M. A., Janssen, C. P., Donker, S. F. & Kenemans, J. L. The influence of cognitive load on susceptibility to audio. Acta Psychol. (Amst). 205, 103058 (2020).

Van Dillen, L. F., Heslenfeld, D. J. & Koole, S. L. Tuning down the emotional brain: an fMRI study of the effects of cognitive load on the processing of affective images. Neuroimage 45, 1212–1219 (2009).

Stojmenova, K. & Sodnik, J. Validation of auditory detection response task method for assessing the attentional effects of cognitive load. Traffic Inj Prev. 19, 495–500 (2018).

An, S. et al. Effects of auditory Pre-Stimulation on cognitive task performance in a noisy environment. Appl. Sci. 12, 5823 (2022).

Zhou, H., Molesworth, B. R. C., Burgess, M. & Hatfield, J. The effect of moderate broadband noise on cognitive performance: a systematic review. Cogn. Technol. Work. 26, 1–36 (2024).

Smucny, J., Rojas, D. C., Eichman, L. C. & Tregellas, J. R. Neuronal effects of auditory distraction on visual attention. Brain Cogn. 81, 263–270 (2013).

Hao, H. & Conway, A. The impact of auditory distraction on reading comprehension: an individual differences investigation. Mem. Cognit. 50, 852–863 (2022).

Rudner, M. Cognitive spare capacity as an index of listening effort. Ear Hear. 37, 69S–76S (2016).

Jin, T. et al. The impact of environmental noise on drivers’ cognitive abilities: a case study on in-vehicle voice interaction interfaces. Appl. Ergon. 117, 104247 (2024).

Kazemi, R. et al. Development and validation of a task load index for process control room operators (PCRO-TLX). Ergonomics 66, 2121–2132 (2023).

Zak, Y., Parmet, Y. & Oron-Gilad, T. Subjective workload assessment technique (SWAT) in real time: affordable methodology to continuously assess human operators’ workload. In 2020 Ieee International Conference On Systems, Man, And Cybernetics (SMC) 2687–2694 (2020).

Krehl, C. & Balfe, N. Cognitive workload analysis in rail signalling environments. Cogn. Technol. Work. 16, 359–371 (2014).

Mansikka, H., Virtanen, K. & Harris, D. Comparison of NASA-TLX scale, modified Cooper–Harper scale and mean inter-beat interval as measures of pilot mental workload during simulated flight tasks. Ergonomics 62, 246–254 (2017).

Suzuki, Y., Wild, F. & Scanlon, E. Measuring cognitive load in augmented reality with physiological methods: a systematic review. J. Comput. Assist. Learn. 40, 375–393 (2024).

Ahmadi, M. et al. Cognitive load measurement with physiological sensors in virtual reality during physical activity. In 29th Acm Symposium On Virtual Reality Software And Technology, VRST 2023 (2023).

van der Wel, P. & van Steenbergen, H. Pupil dilation as an index of effort in cognitive control tasks: a review. Psychon Bull. Rev. 25, 2005–2015 (2018).

Funding

This work was supported by the National Natural Science Foundation of China (Grant No. 52065010) and the Science and Technology Projects in Guizhou Province (Grant Nos. [2022]197, [2022]067, and [2023]274).

Author information

Contributions

Yukang Hou: Writing – original draft, Conceptualization, Methodology, Data curation, Formal analysis, Investigation, Software, Validation, Visualization. Qingsheng Xie: Project administration, Supervision, Validation. Ning Zhang: Methodology, Writing – review & editing, Visualization. Jian Lv (corresponding author): Writing – review & editing, Project administration, Supervision, Funding acquisition, Validation. All authors reviewed the manuscript.

Ethics declarations

Competing interests

The authors declare no competing interests.

Ethical approval

This research received ethical approval from the Ethical Review Committee of Guizhou University (HMEE-GZU-2023-TO25). Informed consent was obtained from all participants. We confirm that all research involving human subjects was conducted in accordance with the relevant guidelines of the 1964 Declaration of Helsinki.

Rights and permissions

Open Access This article is licensed under a Creative Commons Attribution-NonCommercial-NoDerivatives 4.0 International License, which permits any non-commercial use, sharing, distribution and reproduction in any medium or format, as long as you give appropriate credit to the original author(s) and the source, provide a link to the Creative Commons licence, and indicate if you modified the licensed material. You do not have permission under this licence to share adapted material derived from this article or parts of it. The images or other third party material in this article are included in the article’s Creative Commons licence, unless indicated otherwise in a credit line to the material. If material is not included in the article’s Creative Commons licence and your intended use is not permitted by statutory regulation or exceeds the permitted use, you will need to obtain permission directly from the copyright holder. To view a copy of this licence, visit http://creativecommons.org/licenses/by-nc-nd/4.0/.

About this article

Cite this article

Hou, Y., Xie, Q., Zhang, N. et al. Cognitive load classification of mixed reality human computer interaction tasks based on multimodal sensor signals. Sci Rep 15, 13732 (2025). https://doi.org/10.1038/s41598-025-98891-3

DOI: https://doi.org/10.1038/s41598-025-98891-3
