Abstract

Although Parkinson’s disease (PD) is primarily defined by motor symptoms, non-motor manifestations, including deficits in social cognition, are increasingly recognized for their impact on daily functioning. Emotion recognition impairment is one such deficit, but prior research has mainly focused on facial expressions, neglecting other socially relevant cues such as body postures. This study examined recognition of emotions from both faces and bodies and their cognitive and neuroanatomical correlates in non-demented patients with PD. We enrolled 25 individuals with mild to moderate PD and 24 age-matched healthy controls. Emotion recognition was assessed with validated facial (ER-40) and bodily (BR-40) tasks. Cognitive function was evaluated with the Montreal Cognitive Assessment, Dementia Rating Scale-2 and Frontal Assessment Battery. Motor symptoms were rated using the MDS-UPDRS Part III. Structural MRI data were analyzed with FreeSurfer. Compared with controls, patients with PD showed a specific impairment in recognizing emotions from body postures, whereas their facial emotion recognition was comparable to that of controls. Within the PD group, poorer recognition was associated with greater cognitive impairment and higher bradykinesia subscores. MRI volumetry linked emotion recognition performance to the volumes of the cerebellar white matter, hippocampus, and left nucleus accumbens. In addition to these structures, facial emotion recognition was specifically related to cerebellar cortical volume, whereas body posture recognition showed associations with the right putamen and right amygdala. These findings highlight modality-specific social cognitive deficits in PD, related to both cognition and structural brain changes.

Introduction

Parkinson’s disease (PD) is associated with a wide array of motor and non-motor symptoms, including progressive cognitive impairment and deficits in processing social cues1,2. The ability to recognize emotions in others accurately and rapidly is crucial for navigating everyday social interactions; it fosters empathy and enables effective communication3.

Deficits in emotion recognition are widely reported in PD4,5,6 and typically manifest as difficulties in recognizing basic emotions from facial and prosodic stimuli7,8. These results indicate that the deficits are consistent and almost identical across stimulus modalities. In PD, emotion recognition abilities are linked to the duration and severity of the disease, affective symptoms, and pharmacological therapy9. They are also associated with lower cognitive performance, primarily on tasks measuring attention, working memory, and executive functions10,11. The effect is more pronounced in emotional tasks that rely heavily on these abilities4. However, some studies found no link between cognitive skills and emotion recognition in PD, suggesting that emotion recognition deficits may manifest independently of general cognitive impairment12,13. In addition, a meta-analysis found no significant link to motor symptoms, indicating separate pathophysiological mechanisms for motor symptoms and emotional processing7.

While emotion recognition in PD has been widely studied through facial and vocal cues, the role of whole-body posture, which is an essential channel of emotional communication, has been largely overlooked. Several studies showed that information extracted from the whole body can substantially improve emotion recognition14. Body posture can be a rich source of social cues that are as relevant for successful social interactions as those expressed by the face alone15. Especially in situations when people cannot see faces in sufficient detail (for example, from a distance), they can readily infer intentions from posture and movement using the mirror neuron system16.

There is growing evidence of impaired perception and emotion recognition from body postures in PD. One study compared patients with PD and healthy controls (HC) on judgments of emotional scenes depicting whole-body movements. The task was to rate the emotional valence of short movies depicting emotional interactions between people. In PD, emotional valence ratings were less intense than in controls for both positive and negative emotional expressions, even though patients could correctly describe the scene17.

In healthy individuals, face emotion processing is associated with the activation of the visual cortex (mainly fusiform gyrus), limbic area (amygdala, parahippocampal gyrus, posterior cingulate cortex), temporoparietal areas, medial frontal gyrus, putamen, and the cerebellum. In contrast, body perception is mediated by the posterior parts of the sulcus temporalis superior and fusiform body area and extrastriate body area15,18. Recently, growing evidence supports the cerebellum’s role in processing other people’s body language19. In PD, emotion processing was linked to the subcortical areas such as the amygdala, the nucleus accumbens, and other cortical regions such as the sulcus temporalis superior, fusiform face area, and extrastriate body area14,20,21.

Emotion recognition impairment may stem from the impact of PD-related neurodegeneration on the neural substrate responsible for accurate emotion recognition. Structural changes are progressive throughout the disease course, and emotion recognition declines more in later-stage PD4. The decline may occur as a secondary effect of the denervation of dopaminergic pathways of the ventral striatum, subthalamic nucleus, and other basal ganglia regions22. These regions are connected with areas involved in emotional processing. Recently, several clinical features of PD, such as social cognition impairment, were linked to the cerebellum23.

The aims of this study are threefold. First, we assess emotion recognition from facial and bodily expressions in individuals with PD and HC. Second, in the PD group, we examine the associations between emotion recognition, clinical symptoms, and cognitive impairment severity. Third, we investigate the neural correlates of emotion recognition using structural MRI.

Methods

Sample

The sample consisted of 25 patients with mild to moderate PD, diagnosed according to the MDS clinical diagnostic criteria24 and without major neurocognitive disorder, and 24 age-matched HC. Table 1 displays the demographic and clinical characteristics of the PD and HC groups and the statistical comparisons between them.

All participants were native Slovak speakers. Exclusion criteria for both groups included: non-corrected visual or auditory problems; history of alcohol or substance abuse; device-aided therapy for PD; history of traumatic brain injury or intracranial surgical operations, neurological (other than PD) or psychiatric illness reported by the patient. All patients were taking antiparkinsonian medication, and the levodopa equivalent daily doses were counted based on the standard protocol25. The medication regimen remained unchanged during the past month. All testing was performed during the ON state, as reported subjectively by the patients approximately 60 min after their morning dose. Structural MRI was acquired within six weeks following the clinical examination in the ON state without dyskinesia. Patients and HC were recruited through the Second Department of Neurology, Comenius University Faculty of Medicine and University Hospital Bratislava, Bratislava, Slovakia. All participants provided written informed consent, and the study was approved by the local Ethics Committee of the University Hospital Bratislava (protocol number 14/2017). All the procedures employed in the study complied with the Declaration of Helsinki.

Measures

The Movement Disorder Society Unified Parkinson’s Disease Rating scale (part III)

The severity of the motor symptoms in PD was assessed with the motor section (Part III) of the Movement Disorder Society Unified Parkinson’s Disease Rating Scale (MDS-UPDRS)26,27. Higher scores indicate more severe motor symptoms. We subdivided the MDS-UPDRS III score into four subscores according to motor symptom type: tremor (sum of items 15–18), rigidity (item 3), bradykinesia (sum of items 2, 4–9, and 14), and axial (sum of items 1 and 9–13)28. The rating was performed by one experienced movement disorder specialist immediately after informed consent was obtained.
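The subscore definitions above amount to simple sums over item numbers. The following is a minimal sketch of that arithmetic, not the authors' scoring script. The input layout (a dictionary from item number to an item score already summed over body sides or regions) and the names `SUBSCORE_ITEMS` and `motor_subscores` are assumptions made for illustration. The item lists are copied from the text as written, including item 9 appearing in both the bradykinesia and the axial list.

```python
# Item groupings exactly as stated in the text (MDS-UPDRS Part III item numbers).
# Note: item 9 is listed under both bradykinesia and axial in the text;
# this sketch reproduces the lists as written rather than resolving that.
SUBSCORE_ITEMS = {
    "tremor": [15, 16, 17, 18],
    "rigidity": [3],
    "bradykinesia": [2, 4, 5, 6, 7, 8, 9, 14],
    "axial": [1, 9, 10, 11, 12, 13],
}


def motor_subscores(item_scores):
    """Sum per-item scores into the four motor subscores.

    item_scores: dict mapping item number (1-18) to that item's score,
    assumed to be already summed over sides/regions where an item has
    several ratings (hypothetical input layout).
    """
    missing = [i for i in range(1, 19) if i not in item_scores]
    if missing:
        raise ValueError(f"missing items: {missing}")
    return {
        name: sum(item_scores[i] for i in items)
        for name, items in SUBSCORE_ITEMS.items()
    }
```

Because of the shared item, the four subscores computed this way do not add up to the Part III total.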

Emotion recognition tasks

The Penn Emotion Recognition Test (ER-40) was used to assess facial emotion recognition. It includes 40 photographs of faces expressing four basic emotions (happiness, sadness, anger, or fear) and neutral expressions. Stimuli are balanced for gender, age, and ethnicity, and for each emotion category, four high-intensity and four low-intensity expressions are included.

The body emotion recognition task (BR-40) was used to assess bodily emotion recognition (an example stimulus is shown in Fig. 1). The photographs were drawn from the Bodily Expressive Action Stimulus Test29. The task was modelled on the ER-40 and follows the same principle: a forced-choice measure of four emotions (happiness, sadness, anger, or fear) and neutral expressions. To maintain balanced gender representation, the stimulus set included male and female postures in equal proportion. Participants completed a five-alternative forced-choice emotion-categorization task, indicating which emotion was expressed in each stimulus.

The stimuli were presented in a random order and remained on the tablet screen until the participant responded.

Cognitive assessment

The Montreal Cognitive Assessment (MoCA)30 was used to assess global cognition and to identify the presence of cognitive impairment. The total score ranges from 0 to 30, with higher scores indicating better performance. In the present study, the total score was used as an estimate of general cognitive abilities.

The Dementia Rating Scale-2 (DRS-2)33 was used to assess cognitive performance across a range of cognitive domains. It comprises five subscales: attention (37 points possible), initiation/perseveration (37 points possible), construction (6 points possible), conceptualization (39 points possible), and memory (25 points possible). The overall total score is derived from all five subscales (144 points possible). In the present analysis, we used only the DRS-2 total score.

The Frontal Assessment Battery (FAB)33 was used as a brief screening tool for executive functions. It consists of six subtests assessing conceptualization, mental flexibility, motor programming, sensitivity to interference, inhibitory control, and environmental autonomy. Each subtest is scored from 0 to 3, yielding a total score ranging from 0 to 18, with higher scores indicating better executive functioning.

Image acquisition and volumetry

Magnetic resonance images were acquired on a 3.0 T scanner (Ingenia, Philips Medical Systems, Eindhoven, Netherlands). The brain MRI protocol comprised the following sequences: 3-dimensional fluid-attenuated inversion recovery (FLAIR), 3-dimensional T1-weighted imaging, 2-dimensional T2-weighted imaging, 3-dimensional susceptibility-weighted imaging (SWI), diffusion-weighted imaging (DWI), and arterial spin labelling (ASL) perfusion imaging. The high-resolution 3D T1-weighted turbo field echo (TFE) images were acquired in the axial plane (TR/TE 8.3/3.8 ms, matrix 240 × 220, voxel size 1 × 1 × 1 mm). The T1 images were preprocessed with FreeSurfer 6.0.0 (FS, https://surfer.nmr.mgh.harvard.edu/). We visually inspected the FS outputs for defects in image registration to standard space and for imperfections in tissue segmentation. Where necessary, we reran the preprocessing with manual pre-registration to obtain valid outputs. The following analyses were based on atlases built into FS: the Desikan-Killiany atlas35 and the Destrieux atlas36. FS automatically outputs the volumes of the predefined regions provided by the atlases. Regions of interest, including cortical and subcortical structures, were chosen based on previous literature on emotion processing in PD and its neural correlates14. MRI data were unavailable for two patients with PD.
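As an illustration of how regional volumes might be collected from FreeSurfer output, the sketch below reads the text of an `aseg.stats`-style table and keeps a few subcortical regions of interest. It is not the authors' pipeline. It assumes the usual layout of that file (comment lines starting with `#`, then whitespace-separated rows with the columns Index, SegId, NVoxels, Volume_mm3, StructName, ...), and the helper names `parse_aseg_stats`, `select_rois` and `ROI_NAMES` are hypothetical.

```python
# Subcortical labels of the kind discussed in this paper (illustrative choice).
ROI_NAMES = [
    "Left-Cerebellum-White-Matter",
    "Right-Cerebellum-White-Matter",
    "Left-Hippocampus",
    "Right-Hippocampus",
    "Left-Accumbens-area",
    "Right-Putamen",
    "Right-Amygdala",
]


def parse_aseg_stats(text):
    """Return {structure name: volume in mm^3} from aseg.stats-style text."""
    volumes = {}
    for line in text.splitlines():
        line = line.strip()
        # Skip blank lines and the commented header block.
        if not line or line.startswith("#"):
            continue
        fields = line.split()
        # Assumed column order: Index SegId NVoxels Volume_mm3 StructName ...
        if len(fields) < 5:
            continue
        volumes[fields[4]] = float(fields[3])
    return volumes


def select_rois(volumes, names=ROI_NAMES):
    """Keep only the requested structures that are present in the table."""
    return {name: volumes[name] for name in names if name in volumes}
```

In practice the text would come from each participant's `stats/aseg.stats` file, and the resulting per-participant dictionaries would be stacked into one table before any correlation analysis.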

Statistical analysis

The Mann–Whitney U test was used to compare demographic, clinical and volumetric variables between the two groups (HC vs. PD). We used repeated measures ANOVA with emotion modality as a within-subject factor (Facial vs. Bodily) and group as a between-subject factor (HC vs. PD). Partial eta squared (ηp2) was used as an indicator of effect size. The strength of the relationships between emotion recognition and demographics, clinical characteristics, cognition, and the volumes of brain regions relevant to emotion processing was analysed with the Spearman correlation coefficient. To address the increased risk of false-positive findings arising from the large number of correlations computed across the ER-40 and BR-40 scores and the regional volumetric measures, we applied the Benjamini–Hochberg false discovery rate (FDR) procedure. Only correlations that remained significant after FDR adjustment were interpreted.
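For readers unfamiliar with the Benjamini–Hochberg procedure, the following is a minimal, dependency-free sketch of how adjusted p-values can be computed. It illustrates the general technique and is not the authors' analysis code. The function names and the 0.05 threshold in `significant_after_fdr` are assumptions for the example.

```python
def benjamini_hochberg(p_values):
    """Return Benjamini-Hochberg adjusted p-values in the original order."""
    m = len(p_values)
    if m == 0:
        return []
    # Indices of the p-values sorted from smallest to largest.
    order = sorted(range(m), key=lambda i: p_values[i])
    adjusted = [0.0] * m
    running_min = 1.0
    # Walk from the largest p-value down so each adjusted value is the
    # minimum of p * m / rank over all ranks at or above the current one.
    for rank in range(m, 0, -1):
        idx = order[rank - 1]
        running_min = min(running_min, p_values[idx] * m / rank)
        adjusted[idx] = running_min
    return adjusted


def significant_after_fdr(p_values, alpha=0.05):
    """Flag which tests stay significant at the given FDR level."""
    return [p <= alpha for p in benjamini_hochberg(p_values)]
```

Because of the running-minimum step, several tests can end up with the same adjusted p-value, so repeated values in a table of FDR-adjusted results are expected behaviour of the procedure.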

Results

Behavioral results: emotion type

Repeated measures ANOVA (Fig. 2) showed a significant effect of modality type (F(1, 47) = 39.345, p < 0.001, ηp2 = 0.456): emotion recognition was easier from bodies than from faces in both groups. The significant effect of group indicated overall lower performance in the PD group (F(1, 47) = 5.898, p = 0.019, ηp2 = 0.112). In addition, a significant interaction effect (F(1, 47) = 5.820, p = 0.020, ηp2 = 0.110) showed that PD patients had a deficit specifically in the recognition of emotions from bodies (MHC = 34.16 vs. MPD = 30.64), whereas their recognition of facial emotions was comparable with that of HC (MHC = 29.63 vs. MPD = 28.64).
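As a reading aid, partial eta squared for a single effect can be recovered from its F statistic and degrees of freedom through the standard identity ηp2 = (F × df_effect) / (F × df_effect + df_error). The short sketch below applies this identity to the values reported above. The function name is hypothetical, and the results agree with the reported effect sizes only up to rounding of the published F values.

```python
def partial_eta_squared(f_value, df_effect, df_error):
    """Partial eta squared implied by an F statistic and its degrees of freedom."""
    return (f_value * df_effect) / (f_value * df_effect + df_error)


# F statistics reported in the text, each with df = (1, 47).
reported = {
    "modality": 39.345,
    "group": 5.898,
    "interaction": 5.820,
}

for effect, f_value in reported.items():
    print(effect, round(partial_eta_squared(f_value, 1, 47), 3))
```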

Links to demographics, clinical characteristics, and cognition

In the PD group, ER-40 and BR-40 performance was strongly correlated (rs = 0.720, p < 0.001). BR-40 performance was negatively associated with age (rs = −0.412, p = 0.041). Disease duration was not associated with either BR-40 (rs = −0.155, p = 0.460) or ER-40 (rs = −0.066, p = 0.755).

The MDS-UPDRS III (motor section) total score did not correlate with ER-40 performance (rs = −0.222, p = 0.286), but a moderate negative association was found with BR-40 (rs = −0.406, p = 0.044). In an exploratory analysis of individual MDS-UPDRS III subscores, the bradykinesia subscore was negatively associated with BR-40 performance (rs = −0.469, p = 0.018). The axial subscore showed a trend toward an association with BR-40 (rs = −0.388, p = 0.055) that did not reach statistical significance. These findings should be interpreted with caution given the exploratory nature of the analysis, the issue of multiple comparisons, and the limited sample size. Levodopa equivalent daily dose was not associated with emotion recognition performance (ER-40: rs = 0.002, p = 0.993; BR-40: rs = −0.004, p = 0.999).

MoCA performance was positively associated with both ER-40 (rs = 0.426, p = 0.034) and BR-40 (rs = 0.543, p = 0.005). The DRS-2 total score showed moderate associations in the expected direction (worse cognitive performance with worse emotion recognition), but these were not significant (ER-40: rs = 0.363, p = 0.075; BR-40: rs = 0.391, p = 0.053). The FAB was associated with performance on BR-40 (rs = 0.720, p < 0.001) but not on ER-40 (rs = 0.251, p = 0.248).

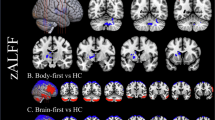

Links to brain volumetry: exploratory analysis

No statistically significant differences in the examined volumetric structures were found between HC and patients with PD (Supplementary Fig. 1). In the HC group, emotion recognition was not associated with any of the selected structures.

In the PD group, we examined links between specific regions of interest, spanning cortical and subcortical structures, and performance on ER-40 and BR-40. An overview of the associations is provided in Table 2.

We report only the Spearman correlations that remained significant after applying the Benjamini–Hochberg false discovery rate correction.

Better performance on facial emotion recognition was associated with larger left cerebellum white matter (rs = 0.539, p = 0.049), left cerebellum cortex (rs = 0.516, p = 0.049), left hippocampus (rs = 0.612, p = 0.021), and left accumbens area (rs = 0.699, p = 0.006). A similar pattern of results was observed in right cerebellum white matter (rs = 0.541, p = 0.049), right hippocampus (rs = 0.642, p = 0.014), and right cerebellum cortex (rs = 0.529, p = 0.049).

Body emotion recognition was positively associated with the volume of left cerebellum white matter (rs = 0.528, p = 0.049), left hippocampus (rs = 0.573, p = 0.040), and left accumbens area (rs = 0.640, p = 0.014). We also found associations with the volume of right cerebellum white matter (rs = 0.527, p = 0.049), right putamen (rs = 0.513, p = 0.049), right hippocampus (rs = 0.704, p = 0.006), and right amygdala (rs = 0.516, p = 0.049).

Discussion

This study provides new evidence of modality-specific emotion recognition deficits in individuals with PD, emphasizing that difficulties in social cognition may vary significantly depending on the type of emotional stimulus. In contrast to much of the existing literature6,8, which primarily documents facial emotion recognition deficits in PD, our findings demonstrate a more pronounced difficulty in recognizing emotions from body postures than from faces. Although patients still recognized emotions from bodies slightly better than from faces overall, the relative impairment compared with healthy controls was larger for bodily expressions. This indicates that bodily cues may pose a greater cognitive and sensorimotor challenge for individuals with PD. Whereas faces are typically considered the primary source of emotional communication36 because of the direct links between facial musculature and internal emotional states37, bodily expressions often carry more ambiguous and context-dependent cues38,39.

The significant interaction between group and modality supports the interpretation that PD-related deficits are particularly evident when decoding emotion from bodies rather than faces. This finding adds a valuable dimension to current models of affective processing in PD and underlines the importance of evaluating multiple modalities when assessing social cognitive function in neurodegenerative disorders.

Our findings are consistent with the embodied simulation theory40,41,42, which posits that emotion recognition depends on internal somatosensory and motor representations that are activated by perceiving emotional expressions in others. In PD, impairments in motor systems may compromise the ability to simulate observed bodily states, leading to deficits in emotion recognition, especially from postures. The observed correlation between poorer body emotion recognition and higher bradykinesia scores provides empirical support for this interpretation, suggesting that slowed or impaired motor functioning may directly affect embodied simulation processes. Furthermore, the absence of associations with disease duration or levodopa dosage suggests that these deficits are not simply due to general disease progression or dopaminergic medication effects but may instead reflect specific disruptions in motor-affective integration. These results contribute to an expanding body of evidence that positions the motor system not only as an executor of movement but also as a key contributor to social cognitive processes.

Cognitive functioning correlated with emotion recognition abilities. We found positive associations between performance on both emotion recognition tasks and global cognition, particularly measured by the MoCA. Moreover, performance on the FAB was positively correlated with recognition of bodily expressions, indicating that frontal–executive functioning is closely linked to the processing of emotion from body cues. This suggests that accurate interpretation of bodily affect may depend even more strongly on frontal control systems than facial emotion recognition, which may rely relatively more on posterior visual regions. This finding aligns with prior research4,43,44 indicating that emotion recognition depends not only on sensorimotor processes, but also on higher-order cognitive functions, including attention, memory, and executive control. As PD progresses and neurodegeneration extends to cortical and subcortical structures involved in cognition, social cognitive tasks such as emotion recognition may be increasingly compromised.

Depressive symptoms can affect the recognition of emotions, particularly those associated with negative stimuli. Although clinically significant depression was excluded in our PD cohort, the presence of mild symptoms may have contributed to reduced performance, representing a potential confounding factor.

The exploratory neuroimaging analysis provided additional insights into the neural correlates of emotion recognition in PD. Volumetric data linked better emotion recognition performance (both facial and bodily) to larger volumes of the hippocampus, left nucleus accumbens, and cerebellar white matter. In addition, facial emotion recognition was associated with cerebellar cortical volume, whereas bodily emotion recognition was associated with the volumes of the right putamen and right amygdala. The hippocampus and accumbens are components of the mesolimbic system, a network crucial for emotional processing and motivation45. The consistent associations across both facial and bodily tasks suggest that these regions support domain-general aspects of emotion recognition in PD.

Of particular novelty is the association between cerebellar white matter volume and performance in both emotion recognition modalities. While the cerebellum has long been considered primarily a motor structure, recent evidence suggests that the posterior cerebellum plays a key role in social cognition, including emotion processing19. A recent neuroimaging meta-analysis also showed increased activation of the posterior cerebellum associated with social perception processes46. Our results add to this emerging literature and, to our knowledge, represent the first demonstration of a relationship between cerebellar white matter and emotion recognition in PD. This finding supports the notion that the cerebellum may compensate for deficits in cortical and basal ganglia circuits involved in emotion decoding, particularly in tasks that demand integration of sensorimotor and affective information.

We also observed a selective association between bodily emotion recognition and the right putamen, which was not found for facial emotions or for other basal ganglia structures. Given the putamen’s involvement in motor control and sensory integration47, its role in decoding emotional cues from body postures is reasonable to hypothesize. Prior functional imaging studies48 have shown that emotional body movements engage the putamen, and that dopaminergic deficits in this region reduce the brain’s ability to interpret affective gestures. The absence of a similar relationship with facial emotion recognition underscores the modality-specific contributions of different neural circuits in social cognition.

Interestingly, only the right amygdala showed a significant association with emotion recognition from body expressions, whereas associations with facial expressions were weak and did not reach statistical significance. This lateralized effect may reflect the amygdala’s preferential role in processing contextually rich, dynamic emotional cues, such as those conveyed through posture and movement.

Limitations

The findings of the present study should be interpreted in light of several limitations. First, the sample size was relatively small, which restricted the use of more complex statistical approaches and may have reduced the robustness of the results. Our analyses of the associations between the volumes of regions of interest and emotion recognition performance were exploratory in nature. Given the large number of pairwise correlations computed, some observed associations may be spurious due to type I error, and some relatively strong coefficients might therefore be overestimated. Future studies with larger and independent samples are needed to replicate these findings and may yield more conservative and precise estimates of the observed effects. In addition, larger samples are needed to apply more complex statistical approaches such as linear mixed models, which can account for subject-level and item-level variability. We also acknowledge that depressive symptoms in the PD group could have affected emotion recognition performance, potentially confounding the observed associations and limiting the specificity of our findings.

In this study, we focused primarily on brain structures that demonstrated consistent, robust, and bilateral associations with emotion recognition performance. The potential involvement of other regions should be investigated in future research. Moreover, we acknowledge that volumetric measures of isolated CNS structures may not fully account for the observed impairments. Our study did not assess functional alterations or examine large-scale brain networks, which likely interact dynamically to support emotion recognition processes. Further investigations incorporating functional connectivity and network-level analyses are warranted to provide a more comprehensive understanding of these mechanisms.

Conclusion

This study examined emotion recognition impairments in PD, highlighting a modality-specific deficit in recognizing emotions from body postures. Although PD patients scored lower than HC on both tasks, the impairment was pronounced only for bodily cues, while facial emotion recognition was comparable between groups. These findings challenge earlier reports emphasizing facial emotion deficits and underscore the importance of task modality and complexity when assessing social cognition in PD. Emotion recognition from facial expressions and body postures was associated with the volumes of the hippocampus, left nucleus accumbens and cerebellar white matter.

Structural neuroimaging also revealed distinct neural correlates for each modality, with body emotion recognition particularly associated with the right putamen and right amygdala, while facial emotion recognition showed links with the cerebellar cortex. The involvement of the cerebellum and basal ganglia structures is in accordance with embodied simulation theory, suggesting that impaired motor processing and somatosensory integration contribute to difficulties in emotional perception. Moreover, the association between bradykinesia and body emotion recognition further supports the motor-cognitive interplay in PD.

These results emphasize the need for multimodal assessment approaches in PD and suggest that deficits in social cognition are not uniform but depend on the type of emotional information and underlying neural integrity. Future research should further investigate compensatory mechanisms and explore interventions aimed at enhancing emotion recognition to improve social functioning and quality of life in individuals with PD.

Data availability

The datasets generated during and/or analysed during the current study are available from the corresponding authors on reasonable request.

References

Bloem, B. R., Okun, M. S. & Klein, C. Parkinson’s disease. Lancet 397 (10291), 2284–2303. https://doi.org/10.1016/S0140-6736(21)00218-X (2021).

Poewe, W. et al. Parkinson disease. Nat. Rev. Dis. Primer. 3 (1), 17013. https://doi.org/10.1038/nrdp.2017.13 (2017).

Enrici, I. et al. Emotion processing in Parkinson’s disease: A three-level study on recognition, representation, and regulation. PLoS One 10 (6), e0131470. https://doi.org/10.1371/journal.pone.0131470 (2015).

Argaud, S., Vérin, M., Sauleau, P. & Grandjean, D. Facial emotion recognition in Parkinson’s disease: A review and new hypotheses. Mov. Disord. 33 (4), 554–567. https://doi.org/10.1002/mds.27305 (2018).

Bora, E., Walterfang, M. & Velakoulis, D. Theory of mind in Parkinson’s disease: A meta-analysis. Behav. Brain Res. 292, 515–520. https://doi.org/10.1016/j.bbr.2015.07.012 (2015).

Péron, J., Dondaine, T., Le Jeune, F., Grandjean, D. & Vérin, M. Emotional processing in Parkinson’s disease: A systematic review. Mov. Disord. 27 (2), 186–199. https://doi.org/10.1002/mds.24025 (2012).

Coundouris, S. P., Adams, A. G., Grainger, S. A. & Henry, J. D. Social perceptual function in Parkinson’s disease: A meta-analysis. Neurosci. Biobehav. Rev. 104, 255–267. https://doi.org/10.1016/j.neubiorev.2019.07.011 (2019).

Gray, H. M. & Tickle-Degnen, L. A meta-analysis of performance on emotion recognition tasks in Parkinson’s disease. Neuropsychology 24 (2), 176–191. https://doi.org/10.1037/a0018104 (2010).

De Risi, M., Olivola, E., Di Gennaro, G. & Modugno, N. Facial emotion recognition in Parkinson’s disease: methodological, clinical, and pathophysiological factors. In Diagnosis and Management in Parkinson’s Disease 91–106. https://doi.org/10.1016/B978-0-12-815946-0.00006-5 (Elsevier, 2020).

Kosutzka, Z. et al. Neurocognitive predictors of understanding of intentions in Parkinson disease. J. Geriatr. Psychiatry Neurol. 32 (4), 178–185. https://doi.org/10.1177/0891988719841727 (2019).

Narme, P., Bonnet, A. M., Dubois, B. & Chaby, L. Understanding facial emotion perception in Parkinson’s disease: the role of configural processing. Neuropsychologia 49 (12), 3295–3302. https://doi.org/10.1016/j.neuropsychologia.2011.08.002 (2011).

Lawrence, A. D., Goerendt, I. K. & Brooks, D. J. Impaired recognition of facial expressions of anger in Parkinson’s disease patients acutely withdrawn from dopamine replacement therapy. Neuropsychologia 45 (1), 65–74. https://doi.org/10.1016/j.neuropsychologia.2006.04.016 (2007).

Pell, M. D. & Leonard, C. L. Facial expression decoding in early Parkinson’s disease. Cogn. Brain Res. 23 (2–3), 327–340. https://doi.org/10.1016/j.cogbrainres.2004.11.004 (2005).

De Gelder, B. Towards the neurobiology of emotional body language. Nat. Rev. Neurosci. 7 (3), 242–249. https://doi.org/10.1038/nrn1872 (2006).

De Gelder, B., De Borst, A. W. & Watson, R. The perception of emotion in body expressions. WIREs Cogn. Sci. 6 (2), 149–158. https://doi.org/10.1002/wcs.1335 (2015).

Iacoboni, M. & Dapretto, M. The mirror neuron system and the consequences of its dysfunction. Nat. Rev. Neurosci. 7 (12), 942–951. https://doi.org/10.1038/nrn2024 (2006).

Bellot, E. et al. Blunted emotion judgments of body movements in Parkinson’s disease. Sci. Rep. 11 (1), 18575. https://doi.org/10.1038/s41598-021-97788-1 (2021).

Fusar-Poli, P. et al. Functional atlas of emotional faces processing: a voxel-based meta-analysis of 105 functional magnetic resonance imaging studies. J. Psychiatry Neurosci. 34 (6), 418–432 (2009).

Van Overwalle, F. et al. Consensus paper: cerebellum and social cognition. Cerebellum 19 (6), 833–868. https://doi.org/10.1007/s12311-020-01155-1 (2020).

Moonen, A. J. H., Wijers, A., Dujardin, K. & Leentjens, A. F. G. Neurobiological correlates of emotional processing in Parkinson’s disease: A systematic review of experimental studies. J. Psychosom. Res. 100, 65–76. https://doi.org/10.1016/j.jpsychores.2017.07.009 (2017).

Pessoa, L. On the relationship between emotion and cognition. Nat. Rev. Neurosci. 9 (2), 148–158. https://doi.org/10.1038/nrn2317 (2008).

Moore, R. Y. Organization of midbrain dopamine systems and the pathophysiology of Parkinson’s disease. Parkinsonism Relat. Disord. 9, 65–71. https://doi.org/10.1016/S1353-8020(03)00063-4 (2003).

Li, T., Le, W. & Jankovic, J. Linking the cerebellum to Parkinson disease: an update. Nat. Rev. Neurol. 19 (11), 645–654. https://doi.org/10.1038/s41582-023-00874-3 (2023).

Postuma, R. B. et al. MDS clinical diagnostic criteria for Parkinson’s disease. Mov. Disord. 30 (12), 1591–1601. https://doi.org/10.1002/mds.26424 (2015).

Jost, S. T. et al. Levodopa dose equivalency in Parkinson’s disease: updated systematic review and proposals. Mov. Disord. 38 (7), 1236–1252. https://doi.org/10.1002/mds.29410 (2023).

Goetz, C. G. et al. Movement disorder society-sponsored revision of the unified Parkinson’s disease rating scale (MDS-UPDRS): Process, format, and clinimetric testing plan. Mov. Disord. 22 (1), 41–47. https://doi.org/10.1002/mds.21198 (2007).

Skorvanek, M., Kosutzka, Z. & Valkovič, P. Validation of the Slovak version of the movement disorder society-unified Parkinson’s disease rating scale (MDS-UPDRS). Cesk. Slov. Neurol. N. 76, 463–468 (2013).

Stebbins, G. T. et al. How to identify tremor dominant and postural instability/gait difficulty groups with the movement disorder society unified Parkinson’s disease rating scale: comparison with the unified Parkinson’s disease rating scale. Mov. Disord. 28 (5), 668–670. https://doi.org/10.1002/mds.25383 (2013).

De Gelder, B. & Van Den Stock, J. The Bodily Expressive Action Stimulus Test (BEAST). Construction and validation of a stimulus basis for measuring perception of whole body expression of emotions. Front. Psychol. 2 https://doi.org/10.3389/fpsyg.2011.00181 (2011).

Nasreddine, Z. S. et al. The Montreal Cognitive Assessment, MoCA: A brief screening tool for mild cognitive impairment. J. Am. Geriatr. Soc. 53 (4), 695–699. https://doi.org/10.1111/j.1532-5415.2005.53221.x (2005).

Jurica, P. J., Leitten, C. L. & Mattis, S. DRS-2: Dementia Rating Scale-2: Professional Manual (Psychological Assessment Resources, 2001).

Boleková, V. et al. Verification of the psychometric properties of the Slovak version of the Dementia Rating Scale-2 in a healthy population and in patients with Parkinson’s disease—a pilot study. Čes Slov. Neurol. Neurochir. 86/119 (3). https://doi.org/10.48095/cccsnn2023185 (2023).

Abrahámová, M. et al. Normative data for the Slovak version of the Frontal Assessment Battery (FAB). Appl. Neuropsychol. Adult. 29 (2), 273–278. https://doi.org/10.1080/23279095.2020.1748031 (2022).

Desikan, R. S. et al. An automated labelling system for subdividing the human cerebral cortex on MRI scans into gyral based regions of interest. NeuroImage 31 (3), 968–980. https://doi.org/10.1016/j.neuroimage.2006.01.021 (2006).

Destrieux, C., Fischl, B., Dale, A. & Halgren, E. Automatic parcellation of human cortical gyri and sulci using standard anatomical nomenclature. Neuroimage 53 (1), 1–15. https://doi.org/10.1016/j.neuroimage.2010.06.010 (2010).

Barrett, L. F., Adolphs, R., Marsella, S., Martinez, A. M. & Pollak, S. D. Emotional expressions reconsidered: challenges to inferring emotion from human facial movements. Psychol. Sci. Public. Interest. 20 (1), 1–68. https://doi.org/10.1177/1529100619832930 (2019).

Krumhuber, E. G., Skora, L. I., Hill, H. C. H. & Lander, K. The role of facial movements in emotion recognition. Nat. Rev. Psychol. 2 (5), 283–296. https://doi.org/10.1038/s44159-023-00172-1 (2023).

Barrett, L. F., Mesquita, B. & Gendron, M. Context in emotion perception. Curr. Dir. Psychol. Sci. 20 (5), 286–290. https://doi.org/10.1177/0963721411422522 (2011).

Kret, M. E. & de Gelder, B. Social context influences recognition of bodily expressions. Exp. Brain Res. 203 (1), 169–180. https://doi.org/10.1007/s00221-010-2220-8 (2010).

Goldman, A. & De Vignemont, F. Is social cognition embodied? Trends Cogn. Sci. 13 (4), 154–159. https://doi.org/10.1016/j.tics.2009.01.007 (2009).

Neal, D. T. & Chartrand, T. L. Embodied emotion perception: amplifying and dampening facial feedback modulates emotion perception accuracy. Soc. Psychol. Personal Sci. 2 (6), 673–678. https://doi.org/10.1177/1948550611406138 (2011).

Gallese, V. Mirror neurons and the simulation theory of mind-reading. Trends Cogn. Sci. 2 (12), 493–501. https://doi.org/10.1016/S1364-6613(98)01262-5 (1998).

Dodich, A. et al. Deficits in emotion recognition and theory of mind in Parkinson’s disease patients with and without cognitive impairments. Front. Psychol. 13 https://doi.org/10.3389/fpsyg.2022.866809 (2022).

Ho, M. W. R. et al. Impairments in face discrimination and emotion recognition are related to aging and cognitive dysfunctions in Parkinson’s disease with dementia. Sci. Rep. 10 (1), 4367. https://doi.org/10.1038/s41598-020-61310-w (2020).

LeDoux, J. E. Emotion circuits in the brain. Annu. Rev. Neurosci. 23, 155–184. https://doi.org/10.1146/annurev.neuro.23.1.155 (2000).

Arioli, M., Cattaneo, Z., Rusconi, M. L., Blandini, F. & Tettamanti, M. Action and emotion perception in Parkinson’s disease: A neuroimaging meta-analysis. NeuroImage Clin. 35, 103031. https://doi.org/10.1016/j.nicl.2022.103031 (2022).

Kana, R. K. & Travers, B. G. Neural substrates of interpreting actions and emotions from body postures. Soc. Cogn. Affect. Neurosci. 7 (4), 446–456. https://doi.org/10.1093/scan/nsr022 (2012).

Lotze, M. et al. Reduced ventrolateral fMRI response during observation of emotional gestures related to the degree of dopaminergic impairment in Parkinson disease. J. Cogn. Neurosci. 21 (7), 1321–1331. https://doi.org/10.1162/jocn.2009.21087 (2009).

Funding

This study was supported by the Slovak Research and Development Agency (APVV-24-0369) and partly by the Startlab crowdfunding campaign "Aby veda nebola bieda". We sincerely thank all donors for their contributions.

Author information

Contributions

Project conception, design, and organization: Z.K., M.H., P.B., V.B.; participant recruitment: Z.K., I.S.; clinical examinations: Z.K., I.S., P.B., V.K., M.J., R.M.; data management, analysis, and interpretation: Z.K., P.B., V.B.; statistical analysis: M.H., Z.K., I.S.; drafting of the manuscript: P.B., M.H., Z.K.; critical revision of the manuscript for important intellectual content: P.V. All authors read and approved the final version of the manuscript.

Ethics declarations

Competing interests

The authors declare no competing interests.

Ethical approval

The study protocol was approved by the ethics committee of the University Hospital Bratislava and complied with the ethical standards of the Declaration of Helsinki of 1964 (2013 revision). All participants provided written informed consent prior to study entry, including consent to disclosure of the data obtained.

Additional information

Publisher’s note

Springer Nature remains neutral with regard to jurisdictional claims in published maps and institutional affiliations.

Rights and permissions

Open Access This article is licensed under a Creative Commons Attribution-NonCommercial-NoDerivatives 4.0 International License, which permits any non-commercial use, sharing, distribution and reproduction in any medium or format, as long as you give appropriate credit to the original author(s) and the source, provide a link to the Creative Commons licence, and indicate if you modified the licensed material. You do not have permission under this licence to share adapted material derived from this article or parts of it. The images or other third party material in this article are included in the article’s Creative Commons licence, unless indicated otherwise in a credit line to the material. If material is not included in the article’s Creative Commons licence and your intended use is not permitted by statutory regulation or exceeds the permitted use, you will need to obtain permission directly from the copyright holder. To view a copy of this licence, visit http://creativecommons.org/licenses/by-nc-nd/4.0/.

About this article

Cite this article

Brandoburova, P., Bolekova, V., Hajduk, M. et al. Emotion recognition from faces and bodies in Parkinson’s disease and its relationship to MRI-based brain volumetry. Sci Rep 16, 5841 (2026). https://doi.org/10.1038/s41598-026-35889-5

DOI: https://doi.org/10.1038/s41598-026-35889-5