Abstract

Though ideological differences have long been a ubiquitous feature of American politics, the rise of online news and social media has exacerbated divisions between groups. While existing research has documented how political preferences manifest online, relatively few studies have considered whether ideological divisions extend to discussions of foreign policy. We examine this question by analyzing nearly 2 million tweets about the war in Ukraine posted by Americans during the opening stages of the Russian invasion. We first categorize each tweet according to the user’s ideological leanings estimated by the network of political accounts they follow. Then, we apply a natural language processing model specifically designed for short texts to classify the tweets into clusters that we hand code into substantive topics. We find that the topic distributions of conservative, moderate, and liberal users are substantively and statistically different. We further find that conservatives are more likely to spread some form of misinformation and that liberals are more likely to express support for Ukraine. Our paper concludes with a discussion of the implications of our findings for the conduct of U.S. foreign policy.

Introduction

Ideological differences of opinion are a ubiquitous feature of U.S. politics (Baldassarri and Page, 2021; Fiorina and Abrams, 2008; Iyengar and Westwood, 2015; Iyengar et al., 2019; Levin et al., 2021; Sides and Hopkins, 2015; Wood and Porter, 2019). In contemporary politics, Americans are deeply divided on a host of domestic issues including policing, immigration, and COVID-19 (Dias and Lelkes, 2022; Druckman et al., 2021). Recent studies have found that these divisions also extend to foreign policy issues. For example, Americans disagree over nuclear weapons, climate change, the use of force, human rights, and multilateralism (Gelpi et al., 2009; Guisinger and Saunders, 2017; Milner and Tingley, 2015; Rathbun, 2007; Tomz and Weeks, 2020). Indeed, domestic political preferences appear to be remarkably resilient when it comes to foreign policy, among both elites and publics (Brutger, 2021; Jeong and Quirk, 2019; Kertzer et al., 2021; Myrick, 2021).

Prior research has attributed this domestic divide to the sharing of information within selective social networks that connect users with like-minded others (Carlson, 2019; Guess et al., 2021). Social media platforms facilitate the process of sorting users into ideological networks that limit individuals’ exposure to alternative political views. The increase in social media use has thus amplified concerns about divisions in the United States (Bail et al., 2018). However, most of these studies focus on political differences over domestic, not foreign, policy. Despite the abundance of evidence that domestic political preferences carry over into the foreign policy realm, we know surprisingly little about how people engage with foreign policy issues on social media platforms. Given that a majority of Americans consume, access, and discuss politics online, including on social media (Guay and Ecker, 2022; Mitchell, 2020), it is particularly pressing to understand how these dynamics unfold (Porter and Wood, 2021; Santos et al., 2021).

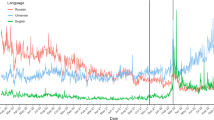

We investigate public reactions on social media in response to the war in Ukraine, a highly salient political issue that is nonetheless undeniably international in nature. We examine an original dataset of nearly 2 million Twitter posts (known as “tweets”) to trace how political discourse about Ukraine spreads within ideological social networks on social media. Our sample consists of tweets about the war in Ukraine posted during the opening days of the invasion (February 24–28, 2022), before political attitudes hardened. Of the universe of tweets posted during this period, we identify those that include one of our pre-defined keywords. We subsequently categorize each tweet according to the user’s ideology (conservative, liberal, or moderate) by looking at the network of political users that each individual follows. We classify users in this way to explore whether individuals sharing political information within different social networks engage in divergent political discussions. Then, we apply a natural language processing (NLP) model tailored for short texts (Angelov, 2020) to classify our large collection of social media posts. Applied to our data, the NLP model groups together tweets with similar words and meanings into clusters to facilitate analysis. The model assumes that semantically related tweets indicate an underlying topic. Finally, we hand-code the content of each cluster into a set of aggregate substantive topics.

The war in Ukraine is an especially interesting case because American elected officials have remained largely unified in their response to the invasion. In his March 1st, 2022 State of the Union speech, President Joe Biden made this point explicit: “[Russian President Vladimir Putin] thought he could divide us at home, in this chamber and in this nation...But Putin was wrong. We are ready. We are united, and that’s what we did. We stayed united.” Members of Congress from both sides of the aisle sported the blue and yellow colors of the Ukrainian flag in support (Amiri, 2022).

Yet the issue seems more divisive for American citizens. American liberals, conservatives, and moderates ostensibly think of Russia, Ukraine, and the conflict in fundamentally different terms. At its core is President Donald Trump’s public esteem for Putin. For example, the day after the invasion began, Trump praised Putin as a “very savvy” leader who made a “genius” move. In a February 26-March 1, 2022 Economist/YouGov poll of 1500 Americans, only 54% of conservatives reported having a “very unfavorable” view of Putin, compared to 70% of liberals and 62% of moderates. These views also translate to policy evaluations: only 19% of conservatives surveyed in this poll approved of the Biden administration’s handling of the Ukraine crisis, a remarkably low share compared to liberals (68%) and moderates (49%).

Hypotheses

Our main research hypotheses evaluate whether social media users have different political conversations about the war in Ukraine within their respective ideological social networks. Our null hypothesis is that there are no differences across ideological groups. Given the nature of political preferences and partisan divisions in American politics, our first alternative hypothesis is that we expect to find that liberals and conservatives have different discussions about the same international issue (H1). That is, the general distribution of topics discussed related to the war in Ukraine will differ between liberal and conservative Twitter users. In addition to examining users with clear ideological leanings, we examine how moderates respond. Recent research shows that a large portion of the American public has genuinely centrist views that make them political moderates (Fowler et al., 2022). These findings suggest that moderates discuss different aspects of a given international issue than conservatives (H2a) or liberals (H2b) would. As applied to our case, we would expect moderate Twitter users to post about a different general distribution of topics broadly related to the war in Ukraine than either conservatives or liberals.

Next, we consider the specific substantive content of social media discourse. First, we look at the sharing of misinformation within ideological networks (Rathje et al., 2023). Recent work shows that conservatives are more likely than liberals to spread false or misleading news on social media (Garrett and Bond, 2021; Guess et al., 2019; Grinberg et al., 2019). We, therefore, expect conservatives to be more likely to share misinformation about the war in Ukraine (H3). Finally, we investigate whether support for Ukraine differed according to users’ ideological leanings. Past research has found that liberals are more supportive of international allies, humanitarian intervention, and foreign aid than conservatives (Kertzer et al., 2021; Mattes and Weeks, 2019; Milner and Tingley, 2015). Although Republican elected officials joined Democrats in condemning the Russian invasion, some prominent conservative voices and media outlets offered more ambiguous positions. And so, we hypothesize that liberals are more likely than conservatives to express support for Ukraine (H4).

Materials and methods

Data collection

We collect the universe of tweets discussing the war in Ukraine using a broad keyword search via the Twitter Application Programming Interface (API). We download tweets that were posted during February 24–28, 2022, and contain at least one keyword (Ukraine, Ukraina, Ukrainian, Ukrainians, Kyiv, or Kiev), resulting in about 6.5 million tweets. We chose this period because it marks the first 5 days of the war, including Russia’s initial invasion. This strategy allows us to examine public responses to the war while opinions were still forming. We pre-process the text of the tweets by removing hyperlinks, mentions, extra spaces, and new lines. Then, we use cld3, a language identification package released by Google, to detect the language of tweets and select only American English tweets. Using this method, we retain an American English corpus containing about 4.4 million tweets.
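The keyword filter and text cleaning described above can be sketched roughly as follows. This is a minimal illustration under stated assumptions, not the authors' pipeline: the function names `has_keyword` and `clean_tweet`, the regular expressions, and the case-insensitive whole-word matching are all hypothetical choices, and the cld3 language-identification step is omitted.

```python
import re

# Keywords listed in the text. Case-insensitive whole-word matching is an assumption.
KEYWORDS = ("ukraine", "ukraina", "ukrainian", "ukrainians", "kyiv", "kiev")
KEYWORD_RE = re.compile(r"\b(" + "|".join(KEYWORDS) + r")\b", re.IGNORECASE)

# Hypothetical patterns for the elements removed during pre-processing.
URL_RE = re.compile(r"https?://\S+")
MENTION_RE = re.compile(r"@\w+")
SPACE_RE = re.compile(r"\s+")  # matches runs of spaces, tabs, and new lines


def has_keyword(text: str) -> bool:
    """Return True if the tweet contains at least one search keyword."""
    return KEYWORD_RE.search(text) is not None


def clean_tweet(text: str) -> str:
    """Remove hyperlinks and mentions, then collapse extra whitespace and new lines."""
    text = URL_RE.sub(" ", text)
    text = MENTION_RE.sub(" ", text)
    return SPACE_RE.sub(" ", text).strip()
```

In a sketch like this, filtering on keywords before cleaning keeps the filter faithful to the raw tweet text, while the cleaned text is what would be passed to language detection and clustering.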

Our sample is likely broadly representative of American political discourse on Twitter for three reasons. First, we use American English to refine our sample. Individuals who post in American English participate in the broader American online political discourse on a given subject, even if they are not voters. Second, by limiting our analysis to those accounts that post in American English and follow at least one political account, we are limiting our sample to users that are at the very least participating in the political conversations about Ukraine, even if they are not doing so on an active or permanent basis. Finally, as a robustness check, we download and analyze the self-reported geographic location of all the tweets in our sample. Only 0.06% of our sample (2648 tweets), a tiny fraction of the total, did not originate in the United States, according to this user-reported metric. However, we still retain these users because, as discussed, these individuals may still participate in relevant American political discourse, even if they are not located in America. Some of these users may be traveling overseas. Others may be living overseas permanently. Indeed, there are nearly 3 million eligible American voters living abroad. Regardless, we keep these tweets in the sample because the content of the tweet was a part of the broader political conversation we are interested in analyzing.

As a further validation check of whether these accounts belong to Americans or not, we fit a structural topic model on a random sample of tweets included in our main analysis (in sample) and excluded from it (out of sample). We find that in-sample tweets have a higher proportion of topics related to American politics and domestic concerns than out-of-sample tweets. We report the full results of the validation in Appendix C.

Measuring user ideology

Next, we estimate the political leaning of each tweet by estimating the ideology of the user who posted it. Each tweet in our sample contains meta information that includes usernames. We estimate the political ideology of Twitter users based on who they follow. We begin by creating a list of 75 conservative and 75 liberal media accounts, including political commentators, talk show hosts, and journalists. We limit this list to popular accounts with a blue checkmark verification status on Twitter with more than 100,000 but fewer than 1 million followers. The data collection took place before the Twitter policy change that made the blue checkmark purchasable. This restriction ensures that our list contains mainstream accounts with clear ideologies. We evaluate the ideology of users in our data by counting the media accounts on our list that they follow and taking the difference between the number of liberal- and conservative-leaning accounts, normalized by the total. Formally, we define a user’s partisan score as \(\frac{L-C}{L+C}\), where L and C represent the number of liberal- and conservative-leaning media accounts they follow, respectively.

We classify users with a score of less than −0.5 as conservative, and those with a score greater than 0.5 as liberal. We consider users with scores between −0.5 and 0.5 as moderate. We then connect each tweet to the user’s ideology and retain only tweets for which we could identify the user’s ideology. This process gives us 1.8 million tweets (almost half of the English corpus) that we use in the main analysis. Because the media accounts on our list are U.S.-based, users who follow them, and who are thus included in our analysis, are also likely to be Americans.
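The partisan score and the classification thresholds can be illustrated with a short sketch. The function names are hypothetical, and two details are assumptions rather than statements from the text: users who follow none of the listed accounts receive no score, and scores of exactly −0.5 or 0.5 are treated as moderate.

```python
from typing import Optional


def partisan_score(n_liberal: int, n_conservative: int) -> Optional[float]:
    """Return (L - C) / (L + C), or None if the user follows no listed account."""
    total = n_liberal + n_conservative
    if total == 0:
        return None  # assumption: ideology cannot be identified for this user
    return (n_liberal - n_conservative) / total


def classify(score: Optional[float]) -> Optional[str]:
    """Map a partisan score to an ideology label using the -0.5 / 0.5 cutoffs."""
    if score is None:
        return None
    if score < -0.5:
        return "conservative"
    if score > 0.5:
        return "liberal"
    return "moderate"  # assumption: boundary values fall in the moderate band
```

For example, a user following four liberal-leaning accounts and no conservative-leaning ones scores 1.0 and is labeled liberal, while a user following one liberal-leaning and three conservative-leaning accounts scores −0.5 and, under the boundary assumption above, is labeled moderate.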

Our final sample contains 530,824 users and a corpus of 1,864,133 tweets. Of these, 38% of the tweets were posted by conservative-leaning accounts, 54% by liberals, and 8% by moderates. Table 1 reports summary statistics of our dataset. The median conservative and moderate user in our sample tweeted twice while the median liberal user tweeted once. The most active conservative user posted 828 tweets, the most active moderate user 631 tweets, and the most active liberal user 2044 tweets.

Clustering Tweets and aggregate topic labeling

We apply Top2Vec, an unsupervised clustering algorithm, to classify our large collection of social media posts (Angelov, 2020). This method is especially well suited to analyzing short political texts (Lin and Nomikos, n.d.). Compared to other topic modeling methods like Latent Dirichlet Allocation (Blei et al., 2003), our method does not require pre-determination of the number of topics or tokenization and stemming of the documents. Instead, the model retains the order of words when learning fixed-length distributed vector representations of documents (Le and Tomas, 2014) with neural networks. Once learning is complete, documents that are semantically similar should be close together in the vector space. The model assumes that semantically similar documents indicate an underlying topic. Therefore, it maps these document vectors to a lower-dimensional space using Uniform Manifold Approximation and Projection (McInnes and Healy, 2018) and automatically finds dense areas in that space using a Hierarchical Density-Based Clustering technique (McInnes et al., 2017). Documents in the same dense areas are assigned to the same cluster, resulting in 6171 clusters of tweets.

In order to facilitate further analysis, we aggregate the clusters into seven meaningful categories, which we call substantive topics: “anti-media,” “domestic politics,” “foreign policy,” “misinformation,” “news,” “Russia discourse,” and “Ukraine Support.” For example, tweets using words such as “lord,” “amen,” “Jesus,” and “God” were grouped together, and upon further analysis, it was determined that such tweets were generally sent by people expressing their sympathy and support for Ukraine.

We implemented the following procedure of aggregate topic labeling and validation. First, we review tweets from the 100 largest clusters to identify qualitatively the seven substantive topics. We describe our coding protocol for these categories in full detail in Appendix E. Second, we train two research assistants (RAs) to aggregate all clusters into substantive topics. Each tweet has a matching score indicating how far it is from the center of its assigned cluster. The higher the score, the more representative the tweet is of the whole group. The RAs read the ten most representative tweets from each cluster and determine which category that cluster belongs to. Third, if none of the categories is applicable, we exclude that cluster from our analysis. This results in dropping about 34% of the tweets from the substantive topic analysis. Fourth, we compare the two RAs’ labels and retain those on which they agree. Fifth, for clusters for which the two RAs choose different labels, the three authors code them again and adopt the topic coding that a majority of the authors agree upon. Finally, since it indicates valence, we validate the Ukraine support measure by hand-coding 500 randomly selected tweets from those our NLP model classified as supportive of Ukraine. We find that our NLP model accurately predicted the valence of 90.8% of the tweets.
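The fourth and fifth steps of this procedure amount to a simple reconciliation rule, sketched below. The function name `reconcile`, the dictionary layout keyed by cluster ID, and the choice to return `None` when no author majority exists are all illustrative assumptions, not details reported in the text.

```python
from collections import Counter
from typing import Dict, List, Optional


def reconcile(
    ra_a: Dict[int, str],
    ra_b: Dict[int, str],
    author_votes: Dict[int, List[str]],
) -> Dict[int, Optional[str]]:
    """Keep labels both RAs agree on; otherwise adopt the authors' majority label."""
    final: Dict[int, Optional[str]] = {}
    for cluster_id, label_a in ra_a.items():
        label_b = ra_b[cluster_id]
        if label_a == label_b:
            final[cluster_id] = label_a  # step four: the RAs agree
            continue
        # Step five: the authors recode the cluster and the majority label wins.
        votes = author_votes[cluster_id]
        label, count = Counter(votes).most_common(1)[0]
        # Assumption: with no strict majority, the cluster is left unlabeled.
        final[cluster_id] = label if count > len(votes) / 2 else None
    return final
```

With three authors, a strict majority means at least two matching votes, so a three-way split leaves the cluster unresolved in this sketch.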

Limitations

Although we designed our study to uncover differences between ideological groups related to the war in Ukraine, we address three potential limitations before presenting our results. First, as with any study using social media data, our sample may not be representative of the general public. Given that we only examine those who follow certain media accounts to receive updates on political news, our sample may be more politically engaged and ideologically extreme than an average social media user. Although these social media users are not representative of the general public, their voices are more likely to affect politics in the real world. Politically engaged voters are the most likely to donate to and volunteer for campaigns. Voters who are politically active on social media are also likely to be active in real-life discussions about politics. Existing research takes a similar approach in generalizing findings from a Twitter sample to the general U.S. population (Barberá et al., 2019; Boucher and Thies, 2019).

Second, we operationalize ideology using an individual user’s network. However, our strategy is not immune to the possibility of failing to capture the latent ideological content of a user or their tweets. Prior research finds that social media users tend to follow and interact with others who mirror their ideological preferences, which lends support to our measurement strategy (Mosleh et al., 2021).

We further validate this measure in three ways, which we report in full in Appendix B. To begin with, we collect elected US officials’ Twitter handles and use our list of media accounts to estimate their party affiliations. Assuming that Republicans are conservative and Democrats are liberal, we find that elected officials’ party affiliations perfectly match the ideological types of the news accounts they follow. Democratic officials follow more liberal-leaning accounts than conservative-leaning accounts; Republican officials do the opposite. Next, we download the universe of tweets that include one of two ideologically salient hashtags during the run-up to the 2022 U.S. midterm elections (January–November 2022): (1) #voteprochoice, a hashtag used by liberals to encourage fellow liberals to vote for pro-choice candidates in the 2022 midterm elections; and (2) #voteprolife, a hashtag used by conservatives to encourage fellow conservatives to vote for pro-life candidates in the 2022 midterm elections. This validation shows us that our measure performs as expected. Of the 14,313 tweets that use the #voteprochoice hashtag, our measure can identify the ideology of almost 60% of tweets. Among them, 8157 tweets (97.9%) are by accounts coded as liberal. Similarly, of the 5134 tweets that use the #voteprolife hashtag, our measure can identify the ideology of about 50% of tweets. Among them, 2253 tweets (94.6%) are by accounts coded as conservative. Finally, we also conduct a survey on Amazon MTurk to validate our measurement strategy. In the survey, we collect information on subjects’ political participation and Twitter usernames. This data shows that subjects’ voting records and ideologies are correlated with the types of news accounts they follow on social media. Subjects who voted for Biden in 2020 or defined themselves as Democrats usually follow more liberal-leaning accounts than conservative-leaning accounts on our list.

Third, our process of aggregating tweets into substantive topics through human labeling and unsupervised machine learning necessarily introduces some degree of imprecision and human error. Though we mitigate this concern by using an NLP model to classify the initial set of Twitter data into clusters, we cannot fully resolve this issue. To limit its impact, our substantive analysis focuses primarily on the issues that our model performed well with rather than those it did not. For example, our model performed well in categorizing support for Ukraine but less well in categorizing support for Russia. We adjust our analysis accordingly to emphasize that social media posts offered general discourse about Russia rather than specific support.

Results

In line with the main hypothesis, we find that liberals, moderates, and conservatives post about different topics related to the war in Ukraine. Table 2 lists the five most frequently discussed topic clusters of tweets by ideology group. There is no overlap in the topics discussed between liberals and conservatives. Moderates have only one topic in common with either side. Moreover, large differences exist in the proportion of tweets related to topics one group considers important but others do not. For example, the most discussed cluster of tweets for liberals referred to the impeachment of Donald Trump, accounting for about 1% of liberal tweets compared to fewer than 0.1% of conservative tweets, an order of magnitude less. While it was also the most discussed cluster of tweets for moderates, approximately 0.4% of all their tweets referred to the Trump impeachment, a substantially larger proportion than that of conservatives yet a substantially smaller one than that of liberals.

For a broader view, we have included in the Appendix Fig. A1, which plots the distributions of the 50 most frequently discussed clusters of tweets according to the user’s ideological leaning. Each row represents a different cluster with a label hand-coded by the researcher after examining the ten most relevant tweets in each cluster. The figure suggests that the general distribution of the conversation topics differs between conservative and liberal tweets. Although these clusters do not represent the entire universe of tweets in our analysis, they offer an illustrative snapshot of the differences between conservatives and liberals.

In addition to plotting the general distribution of frequent clusters, we conduct a formal test to determine if these distributions are statistically different from each other (H1). We test the hypothesis with a Chi-squared test, which shows that these clusters of tweets are likely to come from substantively and statistically distinct distributions (\({\chi }_{6,171}^{2}=215,902,p < 0.001\)). This finding is consistent with H1, which posits that conservative and liberal users generally post about different topics related to the war in Ukraine.

H2 expects different topics of discussion between moderates and conservatives (H2a) and between moderates and liberals (H2b). A set of two Chi-Squared tests, each of which compares a pair of distributions between conservatives and moderates, and between liberals and moderates, shows that clusters of moderate tweets likely come from substantively and statistically distinct distributions compared to conservative Tweets (\({\chi }_{6,171}^{2}=67,852,p < 0.001\)) and liberal Tweets (\({\chi }_{6,171}^{2}=60,213,p < 0.001\)). These results support our hypotheses that moderates hold different conversations than conservatives (H2a) and liberals (H2b).
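To make the logic of these tests concrete, the sketch below computes Pearson's chi-squared statistic for a contingency table whose rows are ideological groups and whose columns are clusters. It is a generic textbook implementation written for illustration, not the authors' code; the function name `chi_squared` is hypothetical, and the degrees of freedom follow the standard (rows − 1) × (columns − 1) rule.

```python
from typing import List, Tuple


def chi_squared(table: List[List[float]]) -> Tuple[float, int]:
    """Return Pearson's chi-squared statistic and degrees of freedom.

    Each row holds one group's tweet counts per cluster.
    """
    row_totals = [sum(row) for row in table]
    col_totals = [sum(col) for col in zip(*table)]
    grand_total = sum(row_totals)

    statistic = 0.0
    for i, row in enumerate(table):
        for j, observed in enumerate(row):
            # Expected count if the groups shared one cluster distribution.
            expected = row_totals[i] * col_totals[j] / grand_total
            if expected > 0:  # skip clusters that are empty in every group
                statistic += (observed - expected) ** 2 / expected

    dof = (len(table) - 1) * (len(table[0]) - 1)
    return statistic, dof
```

When two groups spread their tweets across clusters in identical proportions, the statistic is zero; the further the observed counts depart from the shared-distribution expectation, the larger it becomes.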

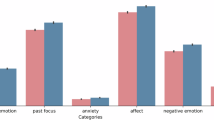

Our results also echo the findings of recent research on moderates that emphasizes the true centrist nature of American moderates (Fowler et al., 2022). Figure 1 illustrates how moderates tend to fall in between liberals and conservatives in terms of the frequency with which certain topics are discussed. This gives us observational evidence of moderates’ centrist tendencies.

Next, we assess whether conservatives are more likely than liberals to discuss topics related to misinformation (H3). Figure A1 in the Appendix offers some suggestive evidence that supports this hypothesis. The most frequent clusters of tweets among conservative users related to conspiracy theories or misinformation, including discussions of “US biolabs” and anti-Semitic conspiracy theories that are used to justify Russia’s invasion.

To further test this hypothesis, we identify seven substantive topics related to the discussion surrounding the war in Ukraine and assign each cluster of tweets to one of them (we include the full coding protocol in Appendix E to this paper). Figure 1 visualizes the proportion of substantive topics discussed by three different ideology groups. Each color represents a different group (Conservative, Moderate, Liberal), while each set of three bars visualizes a substantive topic (“Ukraine Support,” “Misinformation,” etc.). The height of each bar reflects the magnitude of the proportion of each ideology’s tweets devoted to a given topic.

An examination of the proportion of tweets spreading some form of misinformation provides strong evidence in line with H3. As Fig. 1 shows, the share of tweets that we categorize as misinformation is more than twice as large among conservative users (8.9%) as among liberal users (4.1%). The results of a formal t-test comparing these two proportions confirm that the difference is statistically and substantively significant.
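A comparison of two proportions of this kind can be sketched as follows. The example uses a pooled two-proportion z-test, a common large-sample choice that is swapped in here for illustration and is not necessarily the exact test the authors ran. The function name is hypothetical, and any counts passed to it are the caller's own, since the group-level denominators are not reported in this section.

```python
import math
from typing import Tuple


def two_proportion_z(
    successes_a: int, n_a: int, successes_b: int, n_b: int
) -> Tuple[float, float]:
    """Return the z statistic and two-sided p-value for H0: p_a == p_b."""
    p_a = successes_a / n_a
    p_b = successes_b / n_b
    # Pooled proportion under the null hypothesis of equal proportions.
    pooled = (successes_a + successes_b) / (n_a + n_b)
    std_err = math.sqrt(pooled * (1 - pooled) * (1 / n_a + 1 / n_b))
    z = (p_a - p_b) / std_err
    # Two-sided p-value from the standard normal distribution.
    p_value = math.erfc(abs(z) / math.sqrt(2))
    return z, p_value
```

With samples in the hundreds of thousands, even small differences in proportions yield very large z statistics, which is why the substantive size of the gap matters alongside its statistical significance.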

Our final hypothesis (H4) predicts that liberals are likelier than conservatives to tweet in support of Ukraine. Using the clusters produced by our NLP model, we categorize all tweets that are broadly supportive of Ukraine as Ukraine Support. These include clusters of tweets related to prayers for Ukraine or those lauding the bravery of Ukrainians.

Although support for Ukraine was the most frequently discussed topic in general (see Fig. 1), we find substantive and significant differences among the groups of users separated by their ideological leaning. We categorize 31.2% of all conservative tweets as supportive of Ukraine, compared to 42.2% of all liberal tweets and 35.3% of all moderate tweets.

Discussion

Our study presents evidence that American public discourse about the war in Ukraine on Twitter is divided along ideological dimensions. We document statistically and substantively different engagement patterns in political discussions between conservative and liberal social networks (H1). We also find that moderates discuss topics in ways distinct from those of conservatives (H2a) and liberals (H2b). An in-depth analysis reveals that the proportion of conservative tweets containing some form of misinformation was more than twice that of liberal tweets (H3). Our results further suggest that a greater proportion of discussion within liberal social media networks than conservative social media networks is devoted to supporting Ukraine, its people, or its leaders (H4). Tweets from moderates lie between the ideological poles for both misinformation and support for Ukraine.

Beyond our general hypotheses, we also find specific differences between the topics discussed by ideological groups. As we highlighted in the results section above, both liberals and moderates most frequently mentioned the (first) Trump impeachment. The impeachment followed a formal inquiry by the House of Representatives into Trump leveraging US military aid to Ukraine to solicit interference by Ukrainian President Volodymyr Zelenskyy in the 2020 election in Trump’s favor. Less than 0.1% of conservative tweets discussed the impeachment. Conversely, both conservatives and moderates discussed Russian justifications for the invasion while liberals did not. For example, many moderates and conservatives mentioned the widely discredited idea that the United States supported a “coup” to overthrow the pro-Russian Ukrainian President Viktor Yanukovych in 2014. In reality, the Ukrainian parliament voted unanimously to remove Yanukovych following the widespread, popular Maidan protests (Yablokov, 2022).

Although our evidence suggests that there exist general differences between conservatives, moderates, and liberals, we also uncover some specific commonalities. For example, a similar proportion of tweets from all three groups compared the crisis to the Cuban Missile Crisis. Perhaps most interestingly, all three groups also offered prayers to Ukraine. The topic appears to reflect a general sentiment among a certain segment of the American population to support Ukrainian civilians separately from the political goals of the war. More specifically, observers at the time noted how spiritual Americans from different traditions came together to offer prayers for Ukraine. Some of these Americans did this even while they expressed understanding for the Russian justification for invasion (Harrison Warren, 2022). This suggests that prayers, or spiritual support for Ukrainian civilians, are conceptually and practically distinct from support for Ukrainian political goals.

Conclusion

In this article, we show that conservatives, moderates, and liberals made Twitter posts about ideologically divergent types of topics in the early stages of the war in Ukraine. There are at least three reasons why these findings are important for understanding contemporary U.S. politics. First, social media discourse about an issue is likely to shape the development of future attitudes about that same issue. For example, in the case of the war in Ukraine, we would expect support for Ukraine to become even more divisive over time, a pattern supported by polling data. A recent Pew Research Center poll found that 49% of Republican respondents said that the U.S. was sending “too much” aid to Ukraine in April 2024 compared to 9% in March 2022. By contrast, only 16% of Democrats said that the U.S. was sending “too much” aid to Ukraine compared to 5% who said so in March 2022 (Wike et al., 2024).

Second, the evidence suggests that the differences in attitudes about the war in Ukraine are not limited to this issue but are rather driven by domestic ideological divides. Just as ideological social networks spread information about the war in Ukraine, they would also do the same for other foreign policy issues. Political attitudes about the war in Gaza reflect this growing divide between the three ideological groups. In a recent Gallup poll, 74% of Democrats reported being in favor of creating an independent Palestinian state as a solution to the ongoing conflict compared to 55% of independents and only 26% of Republicans (Jones, 2024).

Finally, while the results of the study suggest that foreign policy issues likely have very little appeal across the aisle, it might be possible for presidential and congressional candidates to make foreign policy-based appeals to moderates by changing their positions. As we demonstrate, moderates care about different issues than either liberals or conservatives, which offers an opportunity for politicians who embrace those issues rather than those that might matter more to their own base, be it liberal or conservative.

Our findings also suggest a number of directions for future research. Our study documents the existence of ideological differences on a series of dimensions related to a salient foreign policy issue, including public support for a US ally. However, our design does not allow us to ascertain why support varies. Future work could disentangle these mechanisms in greater detail. At least three potential mechanisms are worth exploring. First, the difference in support may come from underlying policy preferences. Conservatives tend to be more sympathetic to military intervention and less supportive of economic aid than liberals (Milner and Tingley, 2015). The Biden administration’s emphasis on economic rather than military tools of statecraft may thus explain the difference in support for Ukraine. Second, conservatives might profess less support for Ukraine due to their preexisting dissatisfaction with the incumbent Biden administration. Partisanship may be an underlying factor that leads conservative Republicans to associate praise for Ukraine with the Biden administration and thus refrain from showing support for Ukraine. Indeed, we found that conservatives were more likely than liberals to connect the war in Ukraine to domestic politics, which accords with recent work on partisan types in foreign policy (Brutger, 2021; Kertzer et al., 2021; Marinov et al., 2015). Third, it is plausible that support for Russia among conservatives is related to the spread of misinformation within those same networks, a mechanism largely consistent with existing research on the spread of misinformation within conservative social media networks. Although we find that conservatives are likelier than liberals and moderates to spread misinformation and support Russia, we do not formally test the connection between the two.

Another avenue for future research is whether individuals’ level of political engagement moderates how they respond to foreign policy issues. Scholars have suggested that there are informational asymmetries between leaders and publics in the foreign policy domain, which give leaders an advantage in framing foreign policy as they desire (Baum and Potter, 2008, 2019; Nomikos and Sambanis, 2019). Given that many people do not pay attention to politics (Hibbing and Theiss-Morse, 2002) and do not have clear political preferences (Converse, 2006), it would be worthwhile to study whether the reactions we identify reflect people’s true political preferences or whether people are merely following cues from political leaders such as Donald Trump, who was quick to praise Russian President Vladimir Putin despite Congress’s bipartisan support for Ukraine. Such research could provide insight into the potentially heterogeneous effect of elite cues on public opinion (Tappin et al., 2023; Zaller, 1992). A related possibility is that Democratic Party messages about Ukraine caused a partisan “backlash” among conservatives but not liberals (Peterson, 2023; Tappin et al., 2023), though some studies suggest such responses are rare (Guess and Coppock, 2020).

To our knowledge, our findings offer the most direct evidence yet that political discourse about foreign policy issues on social media platforms in the United States differs among conservatives, moderates, and liberals. These results suggest that the contested nature of American domestic politics also extends to salient foreign policy issues.

Data availability

The datasets generated during and/or analyzed during the current study are available through the journal’s Harvard Dataverse repository, which may be accessed at https://doi.org/10.7910/DVN/WQ5N32.

References

Amiri F, Mascaro L (2022) Blue and yellow: Ukraine unity colors state of the union. AP News. Available at: https://apnews.com/article/russia-ukraine-state-of-the-union-address-joe-biden-coronavirus-pandemic-health-dc47e23ee745bdc400b1f3797a4f49a9 (accessed 30 January 2025)

Angelov D (2020) Top2Vec: distributed representations of topics. arXiv preprint arXiv:2008.09470

Bail CA, Argyle LP, Brown TW et al. (2018) Exposure to opposing views on social media can increase political polarization. Proc Natl Acad Sci USA 115:9216–9221

Baldassarri D, Page SE (2021) The emergence and perils of polarization. Proc Natl Acad Sci USA 118:e2116863118

Barberá P et al. (2019) Who leads? Who follows? Measuring issue attention and agenda setting by legislators and the mass public using social media data. Am Political Sci Rev 113:883–901

Baum MA, Potter PB (2008) The relationships between mass media, public opinion, and foreign policy: toward a theoretical synthesis. Annu Rev Political Sci 11:39–65

Baum MA, Potter PB (2019) Media, public opinion, and foreign policy in the age of social media. J Politics 81:747–756

Blei DM, Ng AY, Jordan MI (2003) Latent Dirichlet allocation. J Mach Learn Res 3:993–1022

Boucher J-C, Thies C (2019) "I Am a Tariff Man": the power of populist foreign policy rhetoric under President Trump. J Politics 81:712–722

Brutger R (2021) The power of compromise: proposal power, partisanship, and public support in international bargaining. World Politics 73:128–166

Carlson TN (2019) Through the grapevine: informational consequences of interpersonal political communication. Am Political Sci Rev 113:325–339

Converse PE (2006) The nature of belief systems in mass publics (1964). Crit Rev 18:1–74

Dias N, Lelkes Y (2022) The nature of affective polarization: disentangling policy disagreement from partisan identity. Am J Political Sci 66:775–790

Druckman JN, Klar S, Krupnikov Y et al. (2021) Affective polarization, local contexts and public opinion in America. Nat Hum Behav 5:28–38

Fiorina M, Abrams S (2008) Political polarization in the American public. Annu Rev Political Sci 11:563–588

Fowler A, Melnikov M, Suh S (2022) Moderates. Am Political Sci Rev 116:1–18

Garrett RK, Bond RM (2021) Conservatives’ susceptibility to political misperceptions. Sci Adv 7:eabe5903

Gelpi C, Feaver PD, Reifler J (2009) Paying the human costs of war: American public opinion and casualties in military conflicts. Princeton University Press

Guess A, Nagler J, Tucker J (2019) Less than you think: prevalence and predictors of fake news dissemination on Facebook. Sci Adv 5:eaau4586

Guess AM, Barberá P, Munzert S, Yang J (2021) The consequences of online partisan media. Proc Natl Acad Sci USA 118:e2013464118

Grinberg N, Joseph K, Friedland L et al. (2019) Fake news on Twitter during the 2016 U.S. presidential election. Science 363:374–378

Guisinger A, Saunders EN (2017) Mapping the boundaries of elite cues: how elites shape mass opinion across international issues. Int Stud Q 61:425–441

Guay B, Ecker UK (2022) Examining partisan asymmetries in fake news sharing and the efficacy of accuracy prompt interventions. PsyArXiv https://doi.org/10.31234/osf.io/y762k

Guess A, Coppock A (2020) Does counter-attitudinal information cause backlash? Results from three large survey experiments. Br J Political Sci 50:1497–1515

Harrison Warren T (2022) How readers around the world are praying for Ukraine. The New York Times

Hibbing JR, Theiss-Morse E (2002) Stealth democracy: Americans’ beliefs about how government should work. Cambridge University Press

Iyengar S, Westwood SJ (2015) Fear and loathing across party lines: new evidence on group polarization. Am J Political Sci 59:690–707

Iyengar S, Lelkes Y, Levendusky M, Malhotra N, Westwood SJ (2019) The origins and consequences of affective polarization in the United States. Annu Rev Political Sci 22:129–146

Jeong G-H, Quirk PJ (2019) Division at the water’s edge: the polarization of foreign policy. Am Politics Res 47:58–87

Jones JM (2024) Americans’ views of both Israel, Palestinian Authority down

Kertzer JD, Brooks DJ, Brooks SG (2021) Do partisan types stop at the water’s edge? J Politics 83:1764–1782

Le Q, Mikolov T (2014) Distributed representations of sentences and documents. Int Conf Mach Learn, PMLR 32:1188

Levin SA, Milner HV, Perrings C (2021) The dynamics of political polarization. Proc Natl Acad Sci USA 118:e2116950118

Lin G, Nomikos WG New methods and procedures for measuring public opinion with social media data. SocArXiv. Retrieved from: https://osf.io/u6fm4

Marinov N, Nomikos WG, Robbins J (2015) Does electoral proximity affect security policy? J Politics 77:762–773

Mattes M, Weeks JL (2019) Hawks, doves, and peace: an experimental approach. Am J Political Sci 63:53–66

McInnes L, Healy J (2018) UMAP: uniform manifold approximation and projection for dimension reduction. J Open Source Softw 3:861

McInnes L, Healy J, Astels S (2017) HDBSCAN: hierarchical density based clustering. J Open Source Softw 2:205

Milner HV, Tingley D (2015) Sailing the water’s edge: the domestic politics of American foreign policy. Princeton University Press

Mitchell A (2020) Americans who mainly get their news on social media are less engaged, less knowledgeable. Pew Res Center

Mosleh M, Martel C, Eckles D, Rand DG (2021) Shared partisanship dramatically increases social tie formation in a Twitter field experiment. Proc Natl Acad Sci USA 118

Myrick R (2021) Do external threats unite or divide? Security crises, rivalries, and polarization in American foreign policy. Int Organ 75:921–958

Nomikos WG, Sambanis N (2019) What is the mechanism underlying audience costs? Incompetence, belligerence, and inconsistency. J Peace Res 56:575–588

Peterson E (2023) Persuading partisans. Nat Hum Behav 1–2

Porter E, Wood TJ (2021) The global effectiveness of fact-checking: evidence from simultaneous experiments. Proc Natl Acad Sci USA 118:e2021629118

Rathbun B (2007) Hierarchy and community at home and abroad: evidence of a common structure of beliefs. J Confl Resolut 51:379–407

Rathje S, Roozenbeek J, Van Bavel JJ, van der Linden S (2023) Accuracy and social motivations shape judgements of (mis)information. Nat Hum Behav 1–12

Santos FP, Lelkes Y, Levin SA (2021) Link recommendation algorithms and dynamics of polarization in online social networks. Proc Natl Acad Sci USA 118:e2102144118

Sides J, Hopkins D (2015) Political polarization in American politics. Bloomsbury Publishing USA

Tappin BM, Berinsky AJ, Rand DG (2023) Partisans’ receptivity to persuasive messaging is undiminished by countervailing party leader cues. Nat Hum Behav 1–15

Tomz MR, Weeks JLP (2020) Human rights and public support for war. J Politics 82:182–194

Wike R, Fagan M, Gubbala S, Austin S (2024) Growing partisan divisions over NATO and Ukraine

Wood T, Porter E (2019) The elusive backfire effect: mass attitudes’ steadfast factual adherence. Political Behav 41:135–163

Yablokov I (2022) The five conspiracy theories that Putin has weaponized. The New York Times

Zaller JR (1992) The nature and origins of mass opinion. Cambridge Studies in Public Opinion and Political Psychology

Author information

Contributions

WN conceptualized the research idea and designed the study. DK and GL collected and curated the data. GL developed the analytical framework and performed data analyses. All authors interpreted the results, contributed to discussions about the findings, and wrote the initial manuscript. WN revised the manuscript and prepared the final version of the manuscript for submission and publication.

Ethics declarations

Competing interests

The authors declare no competing interests.

Ethical approval

This article does not contain any studies with human participants performed by any of the authors necessitating specific ethical approval.

Informed consent

This article does not contain any studies with human participants performed by any of the authors necessitating specific informed consent.

Rights and permissions

Open Access This article is licensed under a Creative Commons Attribution 4.0 International License, which permits use, sharing, adaptation, distribution and reproduction in any medium or format, as long as you give appropriate credit to the original author(s) and the source, provide a link to the Creative Commons licence, and indicate if changes were made. The images or other third party material in this article are included in the article’s Creative Commons licence, unless indicated otherwise in a credit line to the material. If material is not included in the article’s Creative Commons licence and your intended use is not permitted by statutory regulation or exceeds the permitted use, you will need to obtain permission directly from the copyright holder. To view a copy of this licence, visit http://creativecommons.org/licenses/by/4.0/.

About this article

Cite this article

Nomikos, W.G., Kim, D. & Lin, G. American social media users have ideological differences of opinion about the War in Ukraine. Humanit Soc Sci Commun 12, 125 (2025). https://doi.org/10.1057/s41599-024-04304-7
