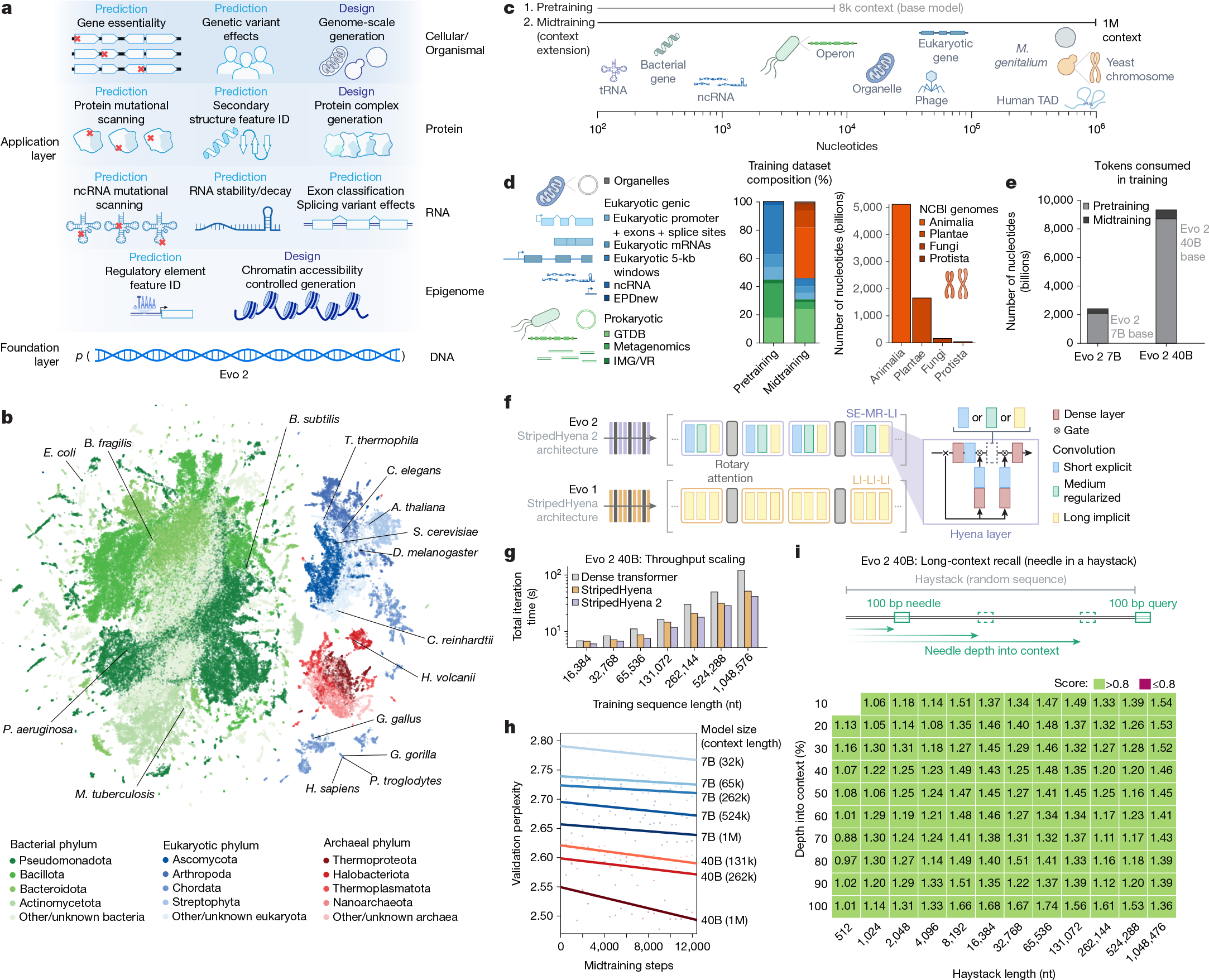

Fig. 1: Overview of model architecture, training procedure, datasets and evaluations for Evo 2.

From: Genome modelling and design across all domains of life with Evo 2

a, Evo 2 models DNA sequence and enables applications across the central dogma, scaling from molecules to genomes and spanning all domains of life. b, Evo 2 was trained on data encompassing trillions of nucleotide sequences from all domains of life. Each point in the UMAP (uniform manifold approximation and projection) graph represents a single genome in the training dataset, embedded on the basis of the genome’s k-mer frequencies. Arabidopsis thaliana, Bacillus subtilis, Bacteroides fragilis, Caenorhabditis elegans, Chlamydomonas reinhardtii, D. melanogaster, E. coli, Gallus gallus, Gorilla gorilla, Haloferax volcanii, Homo sapiens, Mycobacterium tuberculosis, Pan troglodytes, Pseudomonas aeruginosa, S. cerevisiae and Tetrahymena thermophila are highlighted. c, A two-phase training strategy was used to optimize model performance while expanding the context length up to 1 million base pairs to capture wide-ranging biological patterns. M. genitalium, Mycoplasma genitalium; TAD, topologically associating domain. d, Novel data augmentation and weighting approaches prioritize functional genetic elements during pretraining and long-sequence composition during midtraining. GTDB, Genome Taxonomy Database; IMG/VR, Integrated Microbial Genomes/Virus database. e, The number of tokens used to train Evo 2 40B and 7B, split into the shorter-sequence pretraining and the long-context midtraining phases. f, Schematic of the new multi-hybrid StripedHyena 2 architecture, showing the efficient block layout of short explicit (SE), medium regularized (MR) and long implicit (LI) hyena operators. g, Comparison of iteration time at the 1,024-GPU, 40B-parameter scale between StripedHyena 2, StripedHyena 1 and Transformers, showing that StripedHyena 2 achieves higher throughput. h, Validation perplexity during Evo 2 midtraining as a function of model size and context length, showing benefits from larger model scale and longer context.
i, A modified needle-in-a-haystack task was used to evaluate long-context recall at sequence lengths up to 1 million, showing that Evo 2 performs effective recall at a 1-million-token context.
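Panel b embeds each genome by its k-mer frequencies before projecting with UMAP. The caption does not state the value of k or how the vectors are normalized, so the sketch below is only an illustration under assumptions: the hypothetical helper `kmer_frequencies` counts overlapping k-mers over the alphabet ACGT (k = 4 here is an arbitrary choice), skips windows containing ambiguous bases such as N, and normalizes counts to frequencies.

```python
from itertools import product


def kmer_frequencies(seq: str, k: int = 4) -> list[float]:
    """Hypothetical helper: normalized frequencies of all 4**k DNA k-mers.

    The returned vector is ordered lexicographically (AAAA, AAAC, ...), so
    vectors from different genomes are directly comparable. k=4 is an
    assumption; the figure caption does not specify k.
    """
    kmers = ["".join(p) for p in product("ACGT", repeat=k)]
    index = {kmer: i for i, kmer in enumerate(kmers)}
    counts = [0] * len(kmers)
    seq = seq.upper()
    # Slide a window of width k over the sequence (overlapping k-mers).
    for i in range(len(seq) - k + 1):
        j = index.get(seq[i:i + k])
        if j is not None:  # windows with N or other ambiguity codes are skipped
            counts[j] += 1
    total = sum(counts)
    if total == 0:
        return [0.0] * len(kmers)
    return [c / total for c in counts]
```

Stacking one such vector per genome yields a genomes-by-4^k matrix that a dimensionality-reduction method such as UMAP (for example, `umap.UMAP(n_components=2).fit_transform(X)` from the umap-learn package) can project to two dimensions; whether Evo 2's authors used these exact settings is not stated in the caption.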
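Panel h reports validation perplexity. As a reminder of what that metric measures, the sketch below uses the standard definition, perplexity = exp(mean negative log-likelihood per token), assuming natural-log probabilities that the model assigns to each observed token. The function name `perplexity` is hypothetical, and the caption does not describe how Evo 2's evaluation pipeline aggregates tokens or sequences.

```python
import math


def perplexity(token_log_probs: list[float]) -> float:
    """Hypothetical helper: exp of the mean negative log-likelihood per token.

    token_log_probs holds natural-log probabilities the model assigned to
    each observed token in the validation data.
    """
    if not token_log_probs:
        raise ValueError("need at least one token")
    mean_nll = -sum(token_log_probs) / len(token_log_probs)
    return math.exp(mean_nll)
```

For a four-letter nucleotide alphabet, a model that assigns probability 0.25 to every observed base has perplexity 4, and a model that predicts every base with certainty has perplexity 1. Lower values therefore indicate better next-token prediction, which is the sense in which panel h shows benefits from scale and context length.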