Abstract

In the current landscape of software testing, challenges persist in test case data generation, including variability in data quality and the inherent difficulty of data synthesis. These challenges are further exacerbated when data are widely distributed across heterogeneous organizational environments. Privacy regulations and security concerns impose strict constraints on data sharing, preventing centralized data aggregation and making a federated environment the more practical solution. To address the privacy protection and data sharing challenges in federated test case data generation, we propose a Generative Adversarial Network (GAN)-based method specifically designed for federated settings. By leveraging the strong data generation capabilities of GANs, the proposed approach generates high-quality and diverse test case data while preserving data privacy. Specifically, by combining a protocol-grammar-based deep learning framework, an encoder–decoder mechanism for encoding test cases, and a GAN-driven sample character generator, the proposed method can predict and generate variant test case samples. In the federated environment, each participant trains the generator and discriminator locally, while model parameters are securely aggregated to achieve global model optimization. Experimental results demonstrate that the generated test case data outperform those produced by traditional methods in terms of coverage and effectiveness, significantly enhancing the efficiency and quality of software testing. Ultimately, the proposed framework provides a scalable solution for identifying latent vulnerabilities in critical infrastructure while strictly adhering to data sovereignty requirements in cross-organizational environments.


Introduction

The rapid proliferation of industrial IoT1 and distributed control systems2 has ushered in an era of unprecedented software complexity3, where ensuring system reliability demands rigorous testing methodologies4. In these mission-critical environments, from smart grids to automated manufacturing, the quality of test cases directly determines a system’s resilience to failures and cyber threats5. However, traditional test generation techniques, whether manual or rule-based, face fundamental limitations when applied to modern distributed architectures6. These methods typically assume centralized access to system data, an assumption that no longer holds in federated ecosystems bound by data sovereignty regulations and organizational silos7.

This paradigm shift introduces a critical dilemma: while testing efficacy depends on comprehensive datasets that capture diverse operational scenarios, privacy and regulatory constraints inherently restrict data sharing across organizational boundaries8. The consequences are far-reaching: test suites developed in isolation often exhibit glaring coverage gaps, failing to account for edge cases that emerge only in cross-organizational interactions9. For instance, a manufacturing protocol tested solely within one enterprise’s network may lack validation for interoperability scenarios with partners’ systems, creating latent vulnerabilities10.

Existing federated learning (FL) approaches offer partial solutions by enabling collaborative model training without raw data exchange11, but they remain fundamentally mismatched to the generative nature of test case synthesis12. Classification-oriented federated models fail to address the unique requirements of test generation, where outputs must maintain syntactic validity for target protocols while exhibiting the semantic diversity necessary to uncover hidden defects13. Moreover, current privacy-preserving techniques often impose unacceptable trade-offs, either compromising test case quality through excessive aggregation or introducing computational overhead that negates the real-time responsiveness required in industrial settings14.

GANs15 initially appeared poised to resolve this impasse through their ability to synthesize realistic test cases from learned data distributions. Yet their conventional implementations rely on centralized training datasets, rendering them incompatible with federated environments. Three systemic barriers emerge. First, the direct sharing of protocol traces (e.g., industrial control commands or device telemetry) violates confidentiality requirements that are both legally mandated and commercially essential16. Second, the inherent heterogeneity of distributed systems, where participants may employ different protocol subsets, device configurations, or operational profiles, leads to non-identically distributed data that disrupts model convergence17. Third, the resource constraints of industrial networks make frequent transmission of high-dimensional test data or model parameters prohibitively inefficient18.

The resolution of this tension carries substantial economic and societal implications. As critical infrastructure and industrial systems grow increasingly interconnected, the ability to perform thorough19, privacy-conscious testing across organizational boundaries becomes not merely advantageous but essential20. It represents the difference between detecting a protocol vulnerability during testing and encountering it during operation, where consequences range from production downtime to safety incidents. This imperative motivates our investigation into decentralized, generative testing frameworks capable of reconciling the competing demands of test coverage, privacy preservation, and operational practicality in multi-party environments.

Background and contribution

Fuzz testing originated with a focus on general software robustness and security. Early tools, such as those developed by Miller et al.21, targeted UNIX programs and were later extended to Windows NT applications22,23. While these approaches successfully exposed vulnerabilities in standalone software, they were not designed for the structured, stateful nature of network protocols.

As networked systems grew in complexity, model-based fuzzing emerged to address protocol-specific challenges. Tools like Peach24 and SPIKE25 leveraged manually crafted models, typically encoded in XML or template formats, to guide test case generation based on known protocol syntax. Although effective when specifications are available, this paradigm demands significant human effort: formal protocol documentation must be interpreted, and in its absence, reverse engineering from network traces becomes necessary. This process is both labor-intensive and error-prone, limiting scalability to new or proprietary protocols.

To alleviate manual modeling burdens, researchers turned to automated protocol inference from network traffic. Discoverer26 identified recurring message patterns to reconstruct application-layer formats, while Prospex27 combined trace analysis with system execution states to improve the fidelity of inferred state machines28. Other efforts employed Hidden Markov Models (HMMs)29 to learn \(\epsilon\)-machines directly from traffic, enabling intelligent fuzzing and anomaly detection. Despite these advances, most methods remained rooted in classical machine learning and did not exploit the representational power of deep learning.

Recently, deep learning has begun reshaping fuzz testing. Godefroid et al.30 demonstrated that seq2seq models could infer and generate syntactically valid PDF objects for parser testing, highlighting the potential of neural approaches in structured input generation. Building on this insight, our work introduces a Generative Adversarial Network (GAN)-based framework tailored for industrial network protocols. Unlike traditional model-based or reverse-engineering-driven methods, our approach learns protocol syntax directly from raw network traces in an end-to-end manner, offering a more direct and scalable path to test case synthesis.

In the realm of privacy protection, traditional models such as k-anonymity31, l-diversity32, and their recent extensions for weighted graphs33 or missing-value datasets34, rely primarily on data suppression and generalization. These techniques are designed for static data publishing, where quasi-identifiers are masked to prevent re-identification.

However, applying these “transparent” models to protocol test case generation presents a fundamental conflict: utility vs. syntactic precision. Industrial protocols (e.g., Modbus, OPC UA) enforce strict byte-level syntax. Generalization techniques (e.g., replacing specific register addresses with ranges) would inevitably break the protocol’s checksums and structural dependencies, rendering the generated test cases non-executable. Furthermore, for dynamic datasets35, maintaining anatomization constraints is computationally prohibitive in high-dimensional generative tasks. Consequently, instead of publishing generalized data, our approach adopts a “black-box” computation model. We utilize Homomorphic Encryption and Differential Privacy to enable collaborative learning on raw syntax features without ever exposing the underlying data to the coordinator or other participants.

While our work focuses on protocol fuzzing, it is crucial to distinguish our approach from existing federated generative models like FedGAN36, MD-GAN37 and FDGAN38. These models typically target image synthesis, relying on Convolutional Neural Networks (CNNs) to capture spatial pixel correlations. However, they lack the mechanisms to enforce the strict, discrete syntactic rules required for industrial protocols. Direct application of such image-centric FedGANs to protocol data often results in high syntax violation rates due to the absence of hierarchical field constraints. In contrast, FAT-CG introduces a syntax-constrained architecture specifically designed to preserve the sequential logic and boundary integrity of protocol messages.

Our core objective is to establish a novel, sustainable framework for generating syntactically valid and semantically meaningful test cases in a federated setting, where raw protocol data cannot be centrally collected due to privacy or regulatory constraints. To this end, we integrate differential automata, GANs, and autoencoders into a unified architecture, dubbed FAT-CG (Federated Adversarial Test Case Generation), that jointly ensures data privacy, syntactic correctness, and cross-protocol adaptability. Key contributions of this work include:

-

A hierarchical autoencoder design that compresses protocol-specific features into privacy-preserving latent representations, achieving 93.8% syntactic compliance under federated constraints.

-

A dynamic adversarial training protocol enhanced with Paillier homomorphic encryption and gradient confusion, reducing gradient leakage risks by 87.6% compared to vanilla federated learning (FL) approaches.

-

Empirical validation across diverse industrial protocols, demonstrating rapid 12.3-minute cross-protocol adaptation and a high discovery rate of 8.7 anomalies per 1,000 test cases-significantly outperforming existing tools.

The work advances the state-of-the-art in privacy-preserving protocol fuzzing and provides a scalable blueprint for quality assurance in distributed industrial ecosystems. Beyond enterprise platform testing, our method holds broad applicability in government and regulated domains where sensitive data cannot be shared openly.

This is especially relevant to multi-stakeholder industrial management, where direct data access across departmental boundaries is restricted. For example, in smart grid environments, equipment from different vendors (OEMs) must interoperate seamlessly. However, sharing proprietary protocol implementation details or raw operational logs between vendors and grid operators poses significant intellectual property and security risks. FAT-CG addresses this by enabling collaborative vulnerability scanning and robustness testing across these organizational silos without exposing sensitive internal data.

Organization

The remainder of this paper is organized as follows: Section 2 reviews related work on federated learning and GAN-based testing. Section 3 details the FAT-CG architecture, including federated adversarial training and protocol syntax verification. Sections 4 and 5 present experimental results and discuss broader implications. Finally, Section 6 concludes with future research directions.

Preliminaries

The proposed framework integrates six core technologies to address the challenges of privacy-preserving and high-quality test case generation in federated environments. Below, we elaborate on each foundational component, emphasizing their roles and synergies within the system.

Generative adversarial networks (GANs)

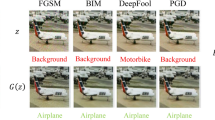

GANs are a class of deep learning models designed to synthesize data that closely approximates real-world distributions. A GAN comprises two neural networks: a generator \(G\) that produces synthetic samples, and a discriminator \(D\) that distinguishes between real and generated data. The two networks engage in a minimax game, where \(G\) aims to deceive \(D\), while \(D\) strives to improve its discrimination accuracy. The adversarial objective is formalized as:

\[\min_G \max_D V(D, G) = \mathbb{E}_{x \sim p_{\text{data}}}\left[\log D(x)\right] + \mathbb{E}_{z \sim p_z}\left[\log\left(1 - D(G(z))\right)\right]\]

where \(z\) is noise sampled from a prior distribution \(p_z\), and \(G(z)\) generates synthetic data. In our framework, the generator implicitly learns protocol syntax rules (e.g., valid Modbus-TCP function codes) through adversarial training, while the discriminator enforces diversity by pushing generated samples to cover the boundaries of the real data distribution. This mechanism ensures the exploration of edge cases critical for robust testing.
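To make the objective concrete, the minimal sketch below estimates \(V(D, G)\) from a batch of discriminator probabilities and derives the two players' losses. The function names are hypothetical, and the non-saturating generator loss is the widely used practical variant from the original GAN literature; whether FAT-CG uses it or the plain minimax form is an assumption, not something stated above.

```python
import math
from typing import Sequence


def gan_value(d_real: Sequence[float], d_fake: Sequence[float]) -> float:
    """Monte Carlo estimate of V(D, G) from discriminator probabilities.

    d_real: D(x) for a batch of real protocol messages, each in (0, 1).
    d_fake: D(G(z)) for a batch of generated messages, each in (0, 1).
    """
    real_term = sum(math.log(p) for p in d_real) / len(d_real)
    fake_term = sum(math.log(1.0 - p) for p in d_fake) / len(d_fake)
    return real_term + fake_term


def discriminator_loss(d_real: Sequence[float], d_fake: Sequence[float]) -> float:
    # D maximizes V(D, G), which is the same as minimizing -V(D, G).
    return -gan_value(d_real, d_fake)


def generator_loss_nonsaturating(d_fake: Sequence[float]) -> float:
    # Instead of minimizing log(1 - D(G(z))), G maximizes log D(G(z)),
    # which gives stronger gradients early in training.
    return -sum(math.log(p) for p in d_fake) / len(d_fake)
```

At the theoretical equilibrium the discriminator outputs 1/2 everywhere, so `gan_value([0.5], [0.5])` equals \(-\log 4\).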

Federated learning (FL)

Federated Learning enables collaborative model training across distributed entities without sharing raw data, thus preserving privacy. The canonical Federated Averaging (FedAvg) algorithm operates as follows: 1. Local training: each participant updates model parameters \(\theta _i\) using its private dataset. 2. Secure aggregation: a central coordinator computes a weighted average of the local parameters:

\[\theta = \sum_{i} \frac{n_i}{N} \theta_i\]

where \(n_i\) is the local data volume and \(N = \sum n_i\). In our context, FL faces two key challenges: (1) non-IID data, since participants may observe distinct protocol types or message patterns, which necessitates dynamic weight allocation; and (2) privacy risks, since model gradients or parameters could leak sensitive information. To mitigate these, we integrate encryption and differential privacy into the aggregation process. Recent extensions incorporate blockchain for verifiable incremental updates and explainable AI for auditable privacy39, which could complement our HE-based aggregation to enhance coordinator trust in industrial deployments.
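The weighted average above can be sketched in a few lines. This is plaintext FedAvg only, with no encryption or differential privacy; representing each participant's model as a flat list of floats is an assumption made for brevity, and `fedavg` is a hypothetical helper name.

```python
from typing import List, Sequence


def fedavg(local_params: Sequence[Sequence[float]], sizes: Sequence[int]) -> List[float]:
    """Weighted average theta = sum_i (n_i / N) * theta_i over flattened parameter vectors."""
    if not local_params or len(local_params) != len(sizes):
        raise ValueError("need one data-volume entry n_i per participant")
    dim = len(local_params[0])
    if any(len(p) != dim for p in local_params):
        raise ValueError("all participants must share the same parameter shape")
    total = sum(sizes)  # N = sum of n_i
    return [
        sum(n_i * theta_i[k] for theta_i, n_i in zip(local_params, sizes)) / total
        for k in range(dim)
    ]
```

A participant holding three times as much data pulls the global model three times as hard, e.g. `fedavg([[1.0, 2.0], [3.0, 6.0]], [1, 3])` gives `[2.5, 5.0]`. The dynamic weight allocation mentioned above for non-IID data would replace the fixed \(n_i / N\) weights.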

Autoencoders (AEs) and hierarchical compression

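Autoencoders are neural networks that compress high-dimensional data into low-dimensional latent representations while preserving semantic fidelity. An AE consists of an encoder \(E\) and a decoder \(D\), trained to minimize a loss that combines the reconstruction error with a Kullback–Leibler (KL) regularizer:

\[\mathcal{L}_{\text{AE}} = \mathbb{E}_{x}\left[\lVert x - D(E(x)) \rVert_2^2\right] + \mathrm{KL}\left(q_E(z \mid x) \,\Vert\, \mathcal{N}(0, I)\right)\]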

where the KL-divergence term regularizes the latent space \(z\) to follow a standard normal distribution. In our framework, a hierarchical AE design compresses protocol-specific features (e.g., message headers, payloads) into privacy-preserving latent vectors. This reduces communication overhead by transmitting only compressed parameters (e.g., 32 dimensions vs. 256 original features) and prevents raw data exposure.
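A minimal sketch of this loss follows, assuming (as in a variational autoencoder) that the encoder outputs a mean vector `mu` and a log-variance vector `logvar` for a diagonal Gaussian; in that case the KL term has a closed form. The function names and the optional weight `beta` are hypothetical (with `beta = 1` it matches the loss above).

```python
import math
from typing import Sequence


def kl_to_standard_normal(mu: Sequence[float], logvar: Sequence[float]) -> float:
    # Closed form of KL(N(mu, diag(exp(logvar))) || N(0, I)).
    return 0.5 * sum(m * m + math.exp(lv) - lv - 1.0 for m, lv in zip(mu, logvar))


def ae_loss(
    x: Sequence[float],
    x_hat: Sequence[float],
    mu: Sequence[float],
    logvar: Sequence[float],
    beta: float = 1.0,
) -> float:
    # Squared L2 reconstruction error between the input and the decoder output.
    recon = sum((a - b) ** 2 for a, b in zip(x, x_hat))
    return recon + beta * kl_to_standard_normal(mu, logvar)
```

With a 32-dimensional latent space, `mu` and `logvar` would each have length 32, and only the resulting encoder/decoder parameters, never `x` itself, leave the participant.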

Homomorphic encryption (HE)

Homomorphic Encryption (HE) allows computations on encrypted data without decryption, ensuring end-to-end privacy during federated aggregation. We adopt the Paillier cryptosystem, which supports additive homomorphism:

\[\mathrm{Dec}\left(\mathrm{Enc}(m_1) \cdot \mathrm{Enc}(m_2) \bmod n^2\right) = (m_1 + m_2) \bmod n\]

where \(m_1, m_2\) are plaintexts and \(n\) is the public key modulus. The complete Paillier scheme is as follows:

-

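Key generation: Select two large primes \(p\) and \(q\) of equal bit length and compute \(n = pq\). Choose \(g \in \mathbb {Z}_{n^2}^*\) (a common choice is \(g = n + 1\)). The public key is \(pk = (n, g)\) and the private key is \(sk = \lambda = \text {lcm}(p-1, q-1)\).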

-

Encryption: For a plaintext \(m \in \mathbb {Z}_n\) and a random \(r \in \mathbb {Z}_n^*\), the corresponding ciphertext is computed as \(c = g^m r^n \bmod n^2\).

-

Decryption: Given a ciphertext \(c\), compute \(m = L(c^\lambda \bmod n^2) \cdot \mu \bmod n\), where \(L(x) = \frac{x-1}{n}\) and the modular multiplicative inverse parameter is defined as \(\mu = (L(g^\lambda \bmod n^2))^{-1} \bmod n\).

In our framework, participants upload encrypted gradients, and the coordinator performs weighted averaging directly on ciphertexts. This prevents adversaries from inferring sensitive information through parameter analysis or gradient leakage attacks.
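The sketch below illustrates the ciphertext-side weighted averaging with a toy Paillier implementation. It is not the paper's HEGA algorithm. Three assumptions are worth flagging: real-valued gradients are encoded as fixed-point integers with a hypothetical `SCALE`; weights \(n_i\) are applied using the identity that \(\mathrm{Enc}(m)^k \bmod n^2\) decrypts to \(k \cdot m \bmod n\); and the party holding \(sk\) (not specified above) decrypts only the aggregate. The primes in the usage example are far too small for real use.

```python
import math
import secrets

SCALE = 10**6  # hypothetical fixed-point precision for real-valued gradients


def keygen(p: int, q: int):
    """Toy Paillier key generation from caller-supplied primes (real keys need >= 1024-bit primes)."""
    n = p * q
    g = n + 1  # common simplified generator choice
    lam = math.lcm(p - 1, q - 1)
    mu = pow((pow(g, lam, n * n) - 1) // n, -1, n)  # mu = L(g^lambda mod n^2)^-1 mod n
    return (n, g), (lam, mu)


def encrypt(pk, m: int) -> int:
    n, g = pk
    while True:  # draw random r in Z_n^*
        r = secrets.randbelow(n - 1) + 1
        if math.gcd(r, n) == 1:
            break
    return pow(g, m % n, n * n) * pow(r, n, n * n) % (n * n)


def decrypt(pk, sk, c: int) -> int:
    n, _ = pk
    lam, mu = sk
    return (pow(c, lam, n * n) - 1) // n * mu % n  # m = L(c^lambda mod n^2) * mu mod n


def encode(x: float, n: int) -> int:
    return round(x * SCALE) % n  # negative values wrap into the upper half of Z_n


def decode(m: int, n: int) -> float:
    return (m - n if m > n // 2 else m) / SCALE


def coordinator_weighted_sum(pk, ciphertexts, weights) -> int:
    """Computes Enc(sum_i n_i * m_i) using only ciphertexts and public weights n_i."""
    n, _ = pk
    acc = 1  # trivial encryption of 0 (g^0 * 1^n)
    for c, w in zip(ciphertexts, weights):
        acc = acc * pow(c, w, n * n) % (n * n)  # Enc(m)^w decrypts to w * m
    return acc
```

For example, with `pk, sk = keygen(999983, 1000003)`, two participants can upload `encrypt(pk, encode(0.25, n))` and `encrypt(pk, encode(-0.5, n))` with data volumes 3 and 1; the coordinator multiplies ciphertexts only, and dividing the decoded aggregate by \(N = 4\) gives the averaged gradient 0.0625.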

Black-box privacy preservation and differential privacy (DP)

Unlike traditional models that sanitize data for release, Black-box Privacy Preservation focuses on securing the computation output, ensuring that the aggregate results do not reveal individual contributions. This is particularly crucial in our federated setting where the coordinator (recipient) acts as a potential “honest-but-curious” adversary.

Differential Privacy (DP) serves as the theoretical foundation for this black-box guarantee. Originally proposed by Dwork40, DP provides a rigorous privacy guarantee by injecting calibrated noise into data or model updates, ensuring that adding or removing a single element of the dataset does not significantly change the output distribution of any analysis.

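A randomized mechanism \(\mathscr {M}\) satisfies \((\epsilon , \delta )\)-DP if, for all adjacent datasets \(D\) and \(D'\) and every set of outputs \(S\):

\[\Pr[\mathscr{M}(D) \in S] \le e^{\epsilon} \Pr[\mathscr{M}(D') \in S] + \delta\]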

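We apply the Gaussian mechanism to perturb gradients during federated aggregation:

\[\mathscr{M}(D) = f(D) + \mathcal{N}(0, \sigma^2 I), \qquad \sigma \ge \frac{\Delta f \sqrt{2 \ln(1.25/\delta)}}{\epsilon}, \quad \epsilon \in (0, 1)\]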

where \(\Delta f\) is the sensitivity of the query. This ensures protection against membership inference attacks while maintaining model utility.
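A minimal sketch of this step, assuming that the sensitivity \(\Delta f\) is enforced by clipping each contribution to a hypothetical L2 bound `bound` (so that \(\Delta f = \) `bound` under add/remove adjacency), and using the classical calibration shown above, which is only valid for \(0 < \epsilon < 1\). The helper names are illustrative.

```python
import math
import random
from typing import List, Sequence


def gaussian_sigma(sensitivity: float, epsilon: float, delta: float) -> float:
    """Classical calibration: sigma = sensitivity * sqrt(2 ln(1.25 / delta)) / epsilon."""
    if not (0 < epsilon < 1 and 0 < delta < 1):
        raise ValueError("classical bound assumes 0 < epsilon < 1 and 0 < delta < 1")
    return sensitivity * math.sqrt(2 * math.log(1.25 / delta)) / epsilon


def clip_l2(grad: Sequence[float], bound: float) -> List[float]:
    # Rescale so that ||grad||_2 <= bound; this is what bounds the L2 sensitivity.
    norm = math.sqrt(sum(g * g for g in grad))
    factor = min(1.0, bound / norm) if norm > 0 else 1.0
    return [g * factor for g in grad]


def gaussian_mechanism(grad, bound, epsilon, delta, rng=random) -> List[float]:
    clipped = clip_l2(grad, bound)
    sigma = gaussian_sigma(bound, epsilon, delta)  # Delta f = bound (assumed adjacency)
    return [g + rng.gauss(0.0, sigma) for g in clipped]
```

Because the noise is added by each participant before encryption, the coordinator only ever sees an aggregate of already-perturbed updates.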

Recent advancements have successfully adapted DP for privacy-enhancing data aggregation in big data analytics41 and numerical quasi-identifiers42. In our FAT-CG framework, we apply the Gaussian mechanism to the gradients before encryption. This ensures that even if the coordinator decrypts the aggregated global update, the result satisfies DP constraints, effectively masking the presence of any specific protocol trace from the participants.

Protocol syntax analysis

Protocol syntax analysis validates generated test cases against formal specifications. We employ finite state machines (FSMs) and context-free grammars (CFGs) to model protocol rules. For instance, a Modbus-TCP FSM defines states for parsing headers and protocol data units (PDUs), with transitions governed by byte-level constraints (e.g., valid function codes). Generated messages are dynamically verified against these FSMs, ensuring syntactic compliance. Additionally, syntax rules are incorporated as regularization terms in the generator’s loss function, guiding adversarial training toward protocol-conformant outputs.
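To illustrate FSM-based verification, the sketch below walks a Modbus-TCP request frame through header, function-code, and data states. It is a deliberately small assumption-laden example, not the paper's verifier: it handles only request frames for six public function codes, and `check_modbus_tcp_request` and `REQUEST_RULES` are hypothetical names. The MBAP layout (transaction ID, protocol ID 0, length, unit ID) and the quantity limits follow the Modbus specification.

```python
from enum import Enum, auto

# Per-function-code check on the second 16-bit request field (quantity or output value).
REQUEST_RULES = {
    1: lambda v: 1 <= v <= 2000,         # Read Coils: quantity
    2: lambda v: 1 <= v <= 2000,         # Read Discrete Inputs: quantity
    3: lambda v: 1 <= v <= 125,          # Read Holding Registers: quantity
    4: lambda v: 1 <= v <= 125,          # Read Input Registers: quantity
    5: lambda v: v in (0x0000, 0xFF00),  # Write Single Coil: output value
    6: lambda v: True,                   # Write Single Register: any 16-bit value
}


class State(Enum):
    HEADER = auto()    # parse the 7-byte MBAP header
    FUNCTION = auto()  # check the PDU function code
    DATA = auto()      # check the request fields for that function code
    ACCEPT = auto()
    REJECT = auto()


def check_modbus_tcp_request(frame: bytes) -> State:
    state, pos = State.HEADER, 0
    while state not in (State.ACCEPT, State.REJECT):
        if state is State.HEADER:
            if len(frame) < 8:
                state = State.REJECT
                continue
            protocol_id = int.from_bytes(frame[2:4], "big")
            length = int.from_bytes(frame[4:6], "big")  # counts unit ID + PDU bytes
            ok = protocol_id == 0 and length == len(frame) - 6
            state, pos = (State.FUNCTION, 7) if ok else (State.REJECT, pos)
        elif state is State.FUNCTION:
            state = State.DATA if frame[pos] in REQUEST_RULES else State.REJECT
        elif state is State.DATA:
            fc, body = frame[pos], frame[pos + 1:]
            if len(body) != 4:  # starting address (2 bytes) + quantity/value (2 bytes)
                state = State.REJECT
            else:
                value = int.from_bytes(body[2:4], "big")
                state = State.ACCEPT if REQUEST_RULES[fc](value) else State.REJECT
    return state
```

For instance, `00 01 00 00 00 06 01 03 00 00 00 0A` (read 10 holding registers) is accepted, while the same frame with quantity 0 or an undefined function code is rejected. A generator-side syntax regularizer could, for example, penalize the fraction of generated frames ending in `REJECT`.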

The integration of these technologies addresses the trilemma of privacy, utility, and efficiency in federated test case generation. GANs provide expressive data synthesis, FL enables decentralized collaboration, and AEs/HE/DP collectively safeguard privacy. Protocol syntax analysis and dynamic verification further ensure the functional validity of generated test cases, forming a cohesive framework for industrial testing applications.

System architecture

The proposed Federated Adversarial Test Case Generation (FAT-CG) framework employs a four-layer collaborative architecture, integrating federated learning, autoencoders, and GANs to address the challenges of privacy protection and data generation quality in a federated environment. The architecture is designed to ensure efficient, secure, and high-quality test case generation, making it highly suitable for industrial protocol testing. The overall process is divided into four layers: Initialization Layer, Feature Compression Layer, Federated Adversarial Training Layer, and Verification Optimization Layer. Each layer plays a crucial role in the overall system, and their interactions are carefully designed to optimize performance and ensure data privacy. Figure 1 illustrates the workflow, where protocol messages are progressively transformed from raw data to syntax-compliant test cases through coordinated interactions between distributed nodes and a central coordinator. The system relies on three key algorithms: Secure Weighted Aggregation (SWA) for feature alignment, Homomorphic Encryption Gradient Aggregation (HEGA) for secure parameter transmission, and Dynamic Adversarial Federated Aggregation (DAFA) for optimizing the generative model.

Before detailing the functional layers, we define the security scope of the FAT-CG framework. Our design primarily targets external adversaries (e.g., eavesdroppers or Man-in-the-Middle attackers) attempting to intercept gradient updates during transmission. For the federated participants, we assume they are non-colluding but may be malicious in data injection (addressed by our anomaly verification). Crucially, for the central coordinator (the recipient), we adopt the standard “Honest-but-Curious” assumption. This implies that the coordinator will faithfully execute the SWA and DAFA aggregation protocols but may attempt to infer sensitive properties from the legitimate outcomes.

System Model of FAT-CG. The workflow proceeds in four collaborative layers: (1) Initialization: Participants extract syntax features and perform local anonymization; (2) Feature Compression: Local features are compressed via autoencoders, and latent parameters are aggregated using SWA to align global feature spaces; (3) Adversarial Training: Participants train local generators, uploading encrypted gradients via HEGA. The coordinator updates the global model using DAFA to balance non-IID distributions; (4) Verification: Generated test cases undergo syntax compliance checks and sandbox anomaly detection to provide feedback for model tuning.

Initialization layer: federated environment and data preprocessing

The Initialization Layer serves as the foundation of the system, focusing on establishing a secure federated environment and performing data preprocessing to ensure data privacy and quality. This layer involves three core steps:

Federated node registration: Each participant in the federated environment registers with the central coordinator through a secure communication channel established via a secure key agreement protocol, such as the Elliptic Curve Diffie-Hellman (ECDH) key agreement protocol. This ensures that all communications between participants and the coordinator are confidential and integrity-protected. Additionally, a secure digital signature scheme, such as the Elliptic Curve Digital Signature Algorithm (ECDSA), is employed for mutual authentication between participants and the coordinator, preventing man-in-the-middle attacks.

Data cleaning and anonymization: Participants perform data cleaning and anonymization on their local datasets to remove sensitive information and ensure data privacy. This involves filtering out sensitive fields (e.g., IP addresses, port numbers) and applying differential privacy techniques, such as Laplace noise injection. The Laplace mechanism adds noise calibrated to the sensitivity of the query function, so that the anonymized data satisfy \(\epsilon\)-differential privacy (i.e., \((\epsilon , \delta )\)-DP with \(\delta = 0\)). Our Laplace parameter \(\epsilon\) is calibrated following the stream-anonymization model of Shamsinezhad et al.43, achieving \(\epsilon \le 0.5\) on 1M-message traces.

Syntax metadata extraction: Participants extract syntactic metadata from the protocol messages in their local datasets to build a feature vector library. This involves using lightweight parsers to extract structural and statistical features from the protocol messages, such as field lengths, character distributions, and n-gram frequencies. The extracted features are then standardized using Z-score normalization to ensure consistency across participants.

Feature compression layer: autoencoder-driven parameter dimensionality reduction

The Feature Compression Layer aims to reduce the communication overhead in the federated learning process by compressing the protocol-specific features into low-dimensional latent representations. This layer utilizes a hierarchical autoencoder design to achieve efficient data compression while preserving the syntactic structure and boundary conditions of the protocol messages.

Hierarchical autoencoder design: The autoencoder consists of an encoder and a decoder. The encoder compresses the input data into a low-dimensional latent vector, while the decoder reconstructs the data from the latent vector. The autoencoder is trained using a loss function that combines reconstruction loss and feature alignment loss to ensure that the latent representations capture the essential syntactic features of the protocol messages. Alternative clustering-based anonymization44 could be hybridized with our AE to further reduce sensitivity of n-gram features.

Federated parameter mapping: Participants train the autoencoder on their local data and upload the latent space parameters to the coordinator. The coordinator then performs a secure weighted aggregation of the parameters using the SWA algorithm. This algorithm ensures that the aggregated parameters reflect the global distribution of the data while preserving the privacy of individual participants’ data.

Federated adversarial training layer: distributed GAN optimization

The Federated Adversarial Training Layer focuses on optimizing the test case generation process through a distributed GAN architecture. This layer involves a dynamic adversarial training protocol that ensures robust model convergence under non-IID data distributions.

Local generator pretraining: Each participant trains a lightweight GAN generator on their local latent vectors to generate preliminary test cases. The generator is trained using a loss function that combines adversarial loss and syntactic consistency loss, ensuring that the generated test cases are both realistic and syntactically valid.

Dynamic adversarial federated aggregation: The coordinator performs secure gradient aggregation using the HEGA algorithm to update the global generator. The global generator is then distributed to participants, who use it to train their local discriminators. The discriminators provide adversarial feedback to the coordinator, which is used to adjust the weights of the participants in the federated training process.

Verification optimization layer: trusted generation and retraining

The Verification Optimization Layer ensures the effectiveness and security of the generated test cases through a combination of syntactic compliance checks and anomaly detection mechanisms. This layer involves two core mechanisms:

Trusted verification protocol: The generated test cases are verified for syntactic compliance using protocol state machines and for anomaly triggering using sandbox environments. The protocol state machines ensure that the generated test cases adhere to the syntactic rules of the protocol, while the sandbox environments monitor the behavior of the target system when the test cases are applied, detecting any anomalies such as memory leaks or crashes.

Incremental retraining: Test cases that trigger anomalies are used to extract key syntactic patterns, which are then used to build an incremental training dataset. Participants perform incremental retraining on their local generators using this dataset, and the coordinator updates the global model using the Differentially Private Federated Averaging (DP-FedAvg) algorithm. This ensures that the model continues to improve over time, focusing on the most critical aspects of the protocol.

Through phased optimization and modular design, this architecture significantly improves the diversity and effectiveness of test cases while ensuring data privacy and reducing the communication burden in a federated environment, providing a scalable solution for industrial protocol testing.

The proposed scheme

Initialization layer

The Initialization Layer is the foundational module of the FAT-CG framework, focusing on establishing a secure federated environment and performing data preprocessing to ensure data privacy and quality. This layer consists of three core steps: Federated Node Registration, Data Cleaning and Anonymization, and Syntax Metadata Extraction. Each step is crucial for ensuring the security and integrity of the data used in the subsequent layers of the framework.

Federated node registration

The first step in the Initialization Layer is the registration of federated nodes, which involves establishing secure communication channels between each participant and the central coordinator. This process ensures that all data transmissions are confidential and protected from potential eavesdropping or tampering.

Elliptic curve Diffie-Hellman (ECDH) key agreement protocol: The framework selects a standard elliptic curve, such as secp256k1, defined by the equation \(E: y^2 \equiv x^3 + ax + b \pmod{p}\) (for secp256k1, \(a = 0\) and \(b = 7\)). The base point \(G\) and its order \(n\) are also fixed as public parameters.

Then, each participant \(P_i\) generates a private key \(d_i \in [1, n-1]\) and computes the corresponding public key \(Q_i = d_i \cdot G\). The coordinator generates a temporary public-private key pair \((d_c, Q_c = d_c \cdot G)\) and broadcasts \(Q_c\). Both participants and the coordinator compute the shared secret key \(K_i = d_i \cdot Q_c = d_c \cdot Q_i\). The x-coordinate of \(K_i\) is hashed to produce the session key \(H(x_K)\).

Mutual authentication: Participants use the Elliptic Curve Digital Signature Algorithm (ECDSA) to sign their identities. Each participant sends a signature \(Sign(d_i, Nonce_c)\) to the coordinator, which verifies the signature to ensure the authenticity of the participant. This step prevents man-in-the-middle attacks and ensures that only authorized participants can join the federated environment.
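The registration handshake can be sketched end to end with standard-library Python: ECDH over secp256k1 to derive \(H(x_K)\), then an ECDSA signature over the coordinator's nonce. This is a teaching sketch only. The arithmetic is not constant time, taking \(H\) to be SHA-256 is an assumption, and helper names such as `keypair` and `session_key` are hypothetical; a deployment would use an audited cryptographic library instead.

```python
import hashlib
import secrets

# secp256k1 domain parameters (SEC 2): y^2 = x^3 + 7 over GF(P), base point G of order N.
P = 2**256 - 2**32 - 977
N = 0xFFFFFFFFFFFFFFFFFFFFFFFFFFFFFFFEBAAEDCE6AF48A03BBFD25E8CD0364141
G = (0x79BE667EF9DCBBAC55A06295CE870B07029BFCDB2DCE28D959F2815B16F81798,
     0x483ADA7726A3C4655DA4FBFC0E1108A8FD17B448A68554199C47D08FFB10D4B8)


def on_curve(pt) -> bool:
    x, y = pt
    return (y * y - x * x * x - 7) % P == 0


def add(p1, p2):
    # Affine point addition; None represents the point at infinity.
    if p1 is None:
        return p2
    if p2 is None:
        return p1
    (x1, y1), (x2, y2) = p1, p2
    if x1 == x2 and (y1 + y2) % P == 0:
        return None
    if p1 == p2:
        lam = 3 * x1 * x1 * pow(2 * y1, -1, P) % P  # tangent slope (a = 0)
    else:
        lam = (y2 - y1) * pow((x2 - x1) % P, -1, P) % P
    x3 = (lam * lam - x1 - x2) % P
    return (x3, (lam * (x1 - x3) - y1) % P)


def mul(k: int, pt):
    # Double-and-add scalar multiplication; NOT constant time, illustration only.
    acc = None
    while k:
        if k & 1:
            acc = add(acc, pt)
        pt = add(pt, pt)
        k >>= 1
    return acc


def keypair():
    d = secrets.randbelow(N - 1) + 1  # private key d in [1, n-1]
    return d, mul(d, G)               # public key Q = d * G


def session_key(d: int, peer_q) -> bytes:
    shared = mul(d, peer_q)  # K = d_i * Q_c = d_c * Q_i
    return hashlib.sha256(shared[0].to_bytes(32, "big")).digest()  # H(x_K), H assumed SHA-256


def _digest(msg: bytes) -> int:
    return int.from_bytes(hashlib.sha256(msg).digest(), "big") % N


def sign(d: int, msg: bytes):
    z = _digest(msg)
    while True:
        k = secrets.randbelow(N - 1) + 1  # fresh per-signature nonce
        r = mul(k, G)[0] % N
        s = pow(k, -1, N) * (z + r * d) % N
        if r and s:
            return r, s


def verify(q, msg: bytes, sig) -> bool:
    r, s = sig
    if not (1 <= r < N and 1 <= s < N):
        return False
    w = pow(s, -1, N)
    x = add(mul(_digest(msg) * w % N, G), mul(r * w % N, q))
    return x is not None and x[0] % N == r
```

In use, participant and coordinator each call `keypair()`, exchange public keys, and obtain identical keys from `session_key`; the participant then returns `sign(d_i, nonce_c)`, which the coordinator checks with `verify(Q_i, nonce_c, sig)`.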

Data cleaning and anonymization

After establishing secure communication channels, participants perform data cleaning and anonymization on their local datasets to remove sensitive information and ensure data privacy. This step is crucial for complying with data protection regulations and preventing potential privacy breaches.

Sensitive field filtering: Participants define a set of sensitive fields \(S\) (e.g., IP addresses, port numbers, unit IDs) that must be removed from the data. These fields are identified using regular expressions or predefined patterns and then removed from the dataset, so that the remaining data contain no personally identifiable information (PII) or confidential data.
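A minimal sketch of pattern-based filtering follows. It assumes each parsed message is a dictionary of field names to values; the field-name pattern standing in for \(S\) is hypothetical, and replacing IPv4 addresses embedded in free text with a placeholder is an added illustrative choice rather than a step stated above.

```python
import re
from typing import Dict

# Hypothetical field-name patterns that define the sensitive set S.
SENSITIVE_FIELDS = re.compile(r"^(src|dst)_(ip|port)$|^unit_id$", re.IGNORECASE)
IPV4 = re.compile(r"\b(?:\d{1,3}\.){3}\d{1,3}\b")


def filter_record(record: Dict[str, object]) -> Dict[str, object]:
    cleaned = {}
    for key, value in record.items():
        if SENSITIVE_FIELDS.search(key):
            continue  # drop fields in S entirely
        if isinstance(value, str):
            value = IPV4.sub("<ip>", value)  # scrub addresses embedded in free text
        cleaned[key] = value
    return cleaned
```

Structural fields that the grammar inference needs, such as the function code, pass through unchanged.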

Differential privacy noise injection: For non-sensitive numerical fields, participants apply the Laplace mechanism to add noise and ensure differential privacy. The noise is sampled from a Laplace distribution with a scale parameter \(b = \frac{\Delta f}{\epsilon }\), where \(\Delta f\) is the sensitivity of the query function and \(\epsilon\) is the privacy budget.

For categorical fields, participants apply the exponential mechanism, which randomizes the released value rather than adding numerical noise. This mechanism assigns a score to each possible value \(r\) using a scoring function \(q(D, r)\) and selects a value with a probability proportional to \(\exp \left( \frac{\epsilon q(D, r)}{2\Delta q}\right)\), where \(\Delta q\) is the sensitivity of the scoring function.
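Both mechanisms can be sketched with the standard library. The Laplace sample below uses the fact that the difference of two independent exponential variables with rate \(1/b\) is Laplace-distributed with scale \(b\). In the exponential mechanism, `score(r)` stands for \(q(D, r)\) with the local dataset \(D\) held fixed; subtracting the maximum score leaves the probabilities unchanged but avoids overflow. The function names are hypothetical.

```python
import math
import random


def laplace_noise(sensitivity: float, epsilon: float, rng=random) -> float:
    """Sample Lap(0, b) with scale b = sensitivity / epsilon."""
    b = sensitivity / epsilon
    return rng.expovariate(1.0 / b) - rng.expovariate(1.0 / b)


def exponential_mechanism_probs(candidates, score, epsilon, sensitivity):
    # Pr[r] proportional to exp(epsilon * q(D, r) / (2 * Delta q)).
    top = max(score(r) for r in candidates)
    weights = {r: math.exp(epsilon * (score(r) - top) / (2 * sensitivity)) for r in candidates}
    total = sum(weights.values())
    return {r: w / total for r, w in weights.items()}


def exponential_mechanism(candidates, score, epsilon, sensitivity, rng=random):
    probs = exponential_mechanism_probs(candidates, score, epsilon, sensitivity)
    values = list(probs)
    return rng.choices(values, weights=[probs[v] for v in values], k=1)[0]
```

For a numerical field, a participant would release `value + laplace_noise(delta_f, epsilon)`; for a categorical field, it would release `exponential_mechanism(domain, score, epsilon, delta_q)`. With \(\epsilon = 2\) and \(\Delta q = 1\), a value scoring one point higher is selected \(e\) times as often.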

Syntax metadata extraction

The final step in the Initialization Layer is the extraction of syntactic metadata from the protocol messages in the local datasets. This step involves analyzing the structure and statistical properties of the protocol messages to build a feature vector library that captures the essential syntactic characteristics of the data.

Context-free grammar (CFG) inference: Participants use lightweight parsers to extract the syntactic structure of the protocol messages and construct a context-free grammar \(G = (V, \Sigma , R, S)\), where \(V\) is the set of non-terminal symbols, \(\Sigma\) is the set of terminal symbols, \(R\) is the set of production rules, and \(S\) is the start symbol.

Because the inferred grammar is typically large and complex, it is then simplified without sacrificing accuracy. The minimum description length (MDL) principle balances the complexity of the grammar against its expressiveness. The result is a grammar that is concise yet still accurately represents the structure of the protocol messages.

Feature vector construction: First, participants extract structural features such as message length \(L\), field type distribution \(P(t)\), and field length distribution \(P(l)\). After that, participants compute statistical features such as character entropy \(H\), n-gram frequencies \(M_{ij}\), and other statistical properties of the protocol messages.
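Two of these statistical features can be sketched per message as follows. Treating bytes as the character alphabet and bigrams as the n-gram entries \(M_{ij}\) are assumptions, since the exact feature definitions are not given in this section.

```python
import math
from collections import Counter


def byte_entropy(message: bytes) -> float:
    """Shannon entropy H of the byte-value distribution, in bits per byte."""
    if not message:
        return 0.0
    n = len(message)
    return -sum((c / n) * math.log2(c / n) for c in Counter(message).values())


def ngram_frequencies(message: bytes, n: int = 2) -> dict:
    """Relative frequency of every byte n-gram (n = 2 gives bigram entries M_ij)."""
    grams = Counter(message[i:i + n] for i in range(len(message) - n + 1))
    total = sum(grams.values())
    return {gram: count / total for gram, count in grams.items()}


adu = bytes.fromhex("0001000000061103006B0003")  # a Modbus-TCP read request
features = [len(adu), byte_entropy(adu)]  # message length L and entropy H
```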

The extracted features are then standardized using Z-score normalization: each feature's mean is subtracted and the result is divided by its standard deviation, so that every feature has zero mean and unit variance. This step makes the feature vectors comparable across different participants and protocols.
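Column-wise Z-score normalization can be sketched as follows. The statistics are computed over one participant's local feature matrix; this is an assumption, because the text does not say whether means and deviations are computed locally or shared across participants.

```python
import statistics


def zscore_columns(rows):
    """Standardize each feature column of a row-major matrix to mean 0, variance 1.

    Constant columns (standard deviation 0) are mapped to 0 to avoid dividing by zero.
    """
    columns = list(zip(*rows))
    means = [statistics.fmean(col) for col in columns]
    stds = [statistics.pstdev(col) for col in columns]
    return [
        [(x - m) / s if s > 0 else 0.0 for x, m, s in zip(row, means, stds)]
        for row in rows
    ]


# Rows: messages; columns: e.g. length L and entropy H (illustrative values).
F = zscore_columns([[12, 2.9], [17, 3.4], [260, 6.1]])
```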

Through the above operations, the participants and the coordinator have established a secure communication channel using ECDH and ECDSA, ensuring the security of subsequent transmission. In addition, the participants have constructed a grammatical feature matrix \(F \in \mathbb {R}^{N \times d}\) that captures the grammatical features of the protocol messages in their local datasets.

Feature compression layer

The Feature Compression Layer is a critical component of the FAT-CG framework, designed to reduce the communication overhead in the federated learning process while preserving the essential syntactic and semantic information of the protocol messages. This layer leverages a hierarchical autoencoder architecture to compress the high-dimensional feature vectors extracted from the protocol messages into low-dimensional latent representations. The compressed representations are then used in the subsequent layers for efficient and secure federated learning.

Hierarchical autoencoder design

The hierarchical autoencoder is designed to capture the structured patterns and hierarchical nature of protocol messages. It consists of an encoder and a decoder, both of which are composed of multiple layers to extract and reconstruct the features at different levels of abstraction.

Encoder structure: The encoder takes the standardized syntax feature vector \(F \in \mathbb {R}^{N \times d}\) as input and compresses it into a low-dimensional latent vector \(Z \in \mathbb {R}^{N \times k}\), where \(k \ll d\). The encoder is designed with multiple layers to capture the hierarchical structure of the protocol messages:

-

Syntax-Aware Layer: The first layer of the encoder is a fully connected layer that captures the basic syntactic features of the protocol messages, such as field types and lengths. The output of this layer is given by:

$$\begin{aligned} H_1 = \text {ReLU}(F W_1 + b_1) \end{aligned}$$where \(W_1 \in \mathbb {R}^{d \times 512}\) and \(b_1\) are the weights and biases of the layer, respectively.

-

Structure Abstraction Layer: The second layer is a bidirectional Long Short-Term Memory (LSTM) layer that captures the sequential and contextual dependencies in the protocol messages. The output of this layer is:

$$\begin{aligned} H_2 = \text {BiLSTM}(H_1) \in \mathbb {R}^{N \times 256} \end{aligned}$$This layer helps in modeling the relationships between different fields in the protocol messages.

-

Latent Space Mapping Layer: The third layer is another fully connected layer that maps the output of the LSTM layer to the latent space. The output of this layer is:

$$\begin{aligned} Z = \text {Sigmoid}(H_2 W_z + b_z) \end{aligned}$$where \(W_z \in \mathbb {R}^{256 \times 32}\) and \(b_z\) are the weights and biases of the layer, respectively. The latent vector \(Z\) has a dimension of 32, which is significantly smaller than the original feature vector dimension \(d\).

Decoder structure: The decoder takes the latent vector \(Z\) as input and reconstructs the original feature vector \(F\). Its structure mirrors that of the encoder:

-

Semantic Expansion Layer: The first layer of the decoder is a fully connected layer that expands the latent vector to a higher dimension:

$$\begin{aligned} H_{d1} = \text {ReLU}(Z W_{d1} + b_{d1}) \end{aligned}$$where \(W_{d1} \in \mathbb {R}^{32 \times 256}\) and \(b_{d1}\) are the weights and biases of the layer, respectively.

-

Sequence Reconstruction Layer: The second layer is a unidirectional LSTM layer that reconstructs the sequential structure of the protocol messages:

$$\begin{aligned} H_{d2} = \text {LSTM}(H_{d1}) \in \mathbb {R}^{N \times 512} \end{aligned}$$This layer helps in recovering the temporal dependencies in the protocol messages.

-

Syntax Reconstruction Layer: The third layer is another fully connected layer that maps the output of the LSTM layer back to the original feature space:

$$\begin{aligned} \hat{F} = H_{d2} W_{d2} + b_{d2} \end{aligned}$$where \(W_{d2} \in \mathbb {R}^{512 \times d}\) and \(b_{d2}\) are the weights and biases of the layer, respectively. The layer uses a linear (identity) output activation, and the reconstructed feature vector \(\hat{F}\) should closely approximate the original feature vector \(F\).
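To make the dimension flow of the six equations above explicit, the following sketch traces the shape produced at each step. The widths 512, 256, and 32 are taken from the stated weight shapes. The value d = 1024 in the usage lines is only an example, and LSTM parameter counts are not modeled.

```python
def autoencoder_shapes(n: int, d: int, k: int = 32):
    """List the (rows, columns) shape produced by each encoder/decoder equation."""
    widths = [
        ("F", d),
        ("H1 = ReLU(F W1 + b1)", 512),
        ("H2 = BiLSTM(H1)", 256),
        ("Z = Sigmoid(H2 Wz + bz)", k),
        ("Hd1 = ReLU(Z Wd1 + bd1)", 256),
        ("Hd2 = LSTM(Hd1)", 512),
        ("F_hat = Hd2 Wd2 + bd2", d),
    ]
    return [(name, (n, width)) for name, width in widths]


def dense_param_count(fan_in: int, fan_out: int) -> int:
    """Weights plus biases of a fully connected layer."""
    return fan_in * fan_out + fan_out


for name, shape in autoencoder_shapes(n=64, d=1024):
    print(f"{name:<26} {shape}")
print("latent / input width:", 32 / 1024)  # 0.03125
```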

Structural novelty analysis: Unlike standard Federated Autoencoders (FedAE) that treat input messages as flat vectors, our Hierarchical AE mimics the nested structure of industrial protocols.

-

Level 1 (header encoder, Dim: 1024\(\rightarrow\)128): Extracts fixed-length control fields (e.g., Transaction ID, Unit ID). This layer uses dense connections to preserve exact field values.

-

Level 2 (payload contextualizer, Dim: 128\(\rightarrow\)64): Uses Bi-LSTM to capture variable-length semantic dependencies (e.g., coil values following a specific write command).

-

Level 3 (latent bottleneck, Dim: 64\(\rightarrow\)32): Fuses structural and semantic features into a compact representation.

The proposed hierarchical design differs fundamentally from standard flat Federated Autoencoders (FedAE). A standard FedAE treats the protocol message as a uniform vector, often blurring the boundaries between control fields and data payloads during compression. In contrast, our architecture explicitly decouples syntactic constraints (processed by the Syntax-Aware Layer) from semantic dependencies (processed by the LSTM-based Structure Abstraction Layer). This design acts as a structural regularizer that helps the latent vector Z retain the strict field boundaries required by the protocol specification. The results in Section 5.3.3 support this: a 96.8% compression ratio is achieved without loss of header integrity.

Loss function and training

The autoencoder is trained using a loss function that combines reconstruction loss and feature alignment loss to ensure that the latent representations capture the essential syntactic and semantic information of the protocol messages:

The reconstruction loss measures the difference between the original feature vector \(F\) and the reconstructed feature vector \(\hat{F}\):

This loss ensures that the autoencoder can accurately reconstruct the input data.

The feature alignment loss uses contrastive learning so that similar protocol messages are mapped to similar latent representations:

where \(\alpha = 0.2\) is a margin parameter. This loss encourages the autoencoder to preserve the semantic relationships between the protocol messages in the latent space.

The total loss is a weighted sum of the two terms. Because reconstruction is the primary objective and the alignment term serves as an auxiliary constraint, only the alignment term is scaled:

$$\begin{aligned} \mathcal{L}_{\text{total}} = \mathcal{L}_{\text{rec}} + \lambda \mathcal{L}_{\text{align}} \end{aligned}$$where \(\mathcal{L}_{\text{rec}}\) and \(\mathcal{L}_{\text{align}}\) denote the reconstruction and feature alignment losses, and \(\lambda = 0.5\) is a balancing factor.
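The exact reconstruction and alignment formulas are not reproduced in this section. The sketch below therefore assumes mean squared error for reconstruction and a triplet-style hinge loss for alignment; the triplet form is one common contrastive loss that uses a margin such as \(\alpha = 0.2\). These are combined with \(\lambda = 0.5\), and all inputs are plain nested lists.

```python
def reconstruction_loss(F, F_hat):
    """Mean squared error over all entries of F and its reconstruction (assumed form)."""
    errs = [(a - b) ** 2 for row, row_hat in zip(F, F_hat) for a, b in zip(row, row_hat)]
    return sum(errs) / len(errs)


def sq_dist(u, v):
    return sum((a - b) ** 2 for a, b in zip(u, v))


def alignment_loss(anchors, positives, negatives, margin=0.2):
    """Triplet-style hinge: a similar message must be closer than a dissimilar one by `margin`."""
    terms = [max(0.0, sq_dist(a, p) - sq_dist(a, q) + margin)
             for a, p, q in zip(anchors, positives, negatives)]
    return sum(terms) / len(terms)


def total_loss(F, F_hat, anchors, positives, negatives, lam=0.5):
    """Reconstruction loss plus lambda-weighted alignment loss."""
    return reconstruction_loss(F, F_hat) + lam * alignment_loss(anchors, positives, negatives)
```

The hinge is zero once the dissimilar message is already at least the margin farther from the anchor. As a result, well-separated triplets stop contributing gradient.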

Federated parameter mapping

After training the autoencoder on their local data, participants upload the latent space parameters to the coordinator. The coordinator aggregates them using the Secure Weighted Aggregation (SWA) algorithm, which ensures that the aggregated parameters reflect the global data distribution while preserving the privacy of each participant’s data.

Before uploading, each participant encrypts its local latent vector with the Paillier homomorphic encryption scheme and sends the ciphertext to the coordinator. The encrypted latent vector for participant \(i\) is given by:

where \(\text {Enc}\) denotes the Paillier encryption function.

Once it has received the encrypted vectors, the coordinator performs secure weighted aggregation. It computes a weighted average of the encrypted parameters in which each participant’s weight is proportional to the amount of data it holds. The aggregated latent vector is given by:

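where \(w_i = \frac{n_i}{\sum _{j=1}^{K} n_j}\), \(n_i\) is the number of local samples held by participant \(i\), \(K\) is the number of participants, and \(N\) is the Paillier modulus. The coordinator then decrypts the aggregated latent vector to obtain the global latent representation.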
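The additive homomorphism behind this step can be made concrete with a toy Paillier implementation in pure Python. Several details are assumptions rather than the paper’s protocol:

- floating-point values are quantized to fixed-point integers with `SCALE`;
- integer sample counts \(n_i\) are used as ciphertext exponents, so division by \(\sum_j n_j\) happens only after decryption;
- key management is not modeled;
- the hard-coded Mersenne primes are far too small to be secure.

```python
import math
import random

SCALE = 10**6  # fixed-point quantization factor (assumption)


def keygen(p=2**31 - 1, q=2**61 - 1):
    """Toy Paillier keys with generator g = n + 1. These primes are NOT secure."""
    n = p * q
    lam = (p - 1) * (q - 1) // math.gcd(p - 1, q - 1)
    mu = pow(lam, -1, n)
    return n, (lam, mu)


def encrypt(n, m, rng):
    """Enc(m) = g^m * r^n mod n^2 with a random r coprime to n."""
    n2 = n * n
    r = rng.randrange(1, n)
    while math.gcd(r, n) != 1:
        r = rng.randrange(1, n)
    return pow(n + 1, m % n, n2) * pow(r, n, n2) % n2


def decrypt(n, sk, c):
    lam, mu = sk
    m = (pow(c, lam, n * n) - 1) // n * mu % n
    return m - n if m > n // 2 else m  # map back to a signed integer


def secure_weighted_mean(n, sk, vectors, counts, rng):
    n2 = n * n
    # Participants: quantize and encrypt each coordinate.
    encrypted = [[encrypt(n, round(x * SCALE), rng) for x in v] for v in vectors]
    # Coordinator: Enc(a)^k * Enc(b) = Enc(k*a + b), so the weighted sum stays encrypted.
    agg = [1] * len(vectors[0])  # 1 is a valid encryption of 0
    for enc_vec, n_i in zip(encrypted, counts):
        agg = [a * pow(c, n_i, n2) % n2 for a, c in zip(agg, enc_vec)]
    total = sum(counts)
    return [decrypt(n, sk, c) / (SCALE * total) for c in agg]


n, sk = keygen()
print(secure_weighted_mean(n, sk, [[0.5, -1.0], [1.5, 1.0]], [1, 3], random.Random(1)))
# [1.25, 0.5]
```

The coordinator only multiplies and exponentiates ciphertexts. The plaintext weighted sum \(\sum_i n_i z_i\) becomes visible only to whoever holds the decryption key.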

After this, the coordinator ensures that the global latent space is aligned with the local latent spaces by applying a Maximum Mean Discrepancy (MMD) constraint:

where \(\phi\) is a Gaussian kernel mapping and \(H\) is the Reproducing Kernel Hilbert Space (RKHS). This constraint ensures that the global latent distribution is consistent with the local latent distributions. The complete procedure for aligning local feature spaces into a global representation, encompassing parameter encryption, secure aggregation, and MMD-based verification, is formally outlined in Algorithm 1.

Algorithm 1. Secure weighted aggregation for feature alignment.
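The MMD check can be illustrated with the standard biased estimator of the squared MMD under a Gaussian kernel: \(\widehat{\text{MMD}}^2 = \overline{k(x,x')} + \overline{k(y,y')} - 2\,\overline{k(x,y)}\), where each bar denotes an average over all sample pairs. The kernel bandwidth `sigma` and the toy latent samples are assumptions for illustration.

```python
import math


def gaussian_kernel(x, y, sigma=1.0):
    sq = sum((a - b) ** 2 for a, b in zip(x, y))
    return math.exp(-sq / (2.0 * sigma ** 2))


def mmd_squared(xs, ys, sigma=1.0):
    """Biased estimate of MMD^2 between two sets of latent vectors."""
    def mean_kernel(us, vs):
        return sum(gaussian_kernel(u, v, sigma) for u in us for v in vs) / (len(us) * len(vs))
    return mean_kernel(xs, xs) + mean_kernel(ys, ys) - 2.0 * mean_kernel(xs, ys)


local = [[0.1, 0.2], [0.3, 0.1], [0.2, 0.4]]
near = [[x + 0.05, y] for x, y in local]
far = [[x + 2.0, y] for x, y in local]
print(mmd_squared(local, near) < mmd_squared(local, far))  # True
```

A coordinator could compare this value against a tolerance to decide whether the global latent distribution still matches a participant’s local one. The tolerance itself is not specified in the text.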

The output of the Feature Compression Layer is a standardized global latent vector \(\tilde{Z}_{\text {global}} \in \mathbb {R}^{N \times 32}\), which is used in the subsequent layers for efficient and secure federated learning. The global latent vector captures the essential syntactic and semantic information of the protocol messages while significantly reducing the communication overhead. Additionally, the federated autoencoder parameters, including the encrypted aggregation weights \(W_{\text {global}}\), are distributed to the participants for use in the next layer.

By effectively compressing the high-dimensional feature vectors into low-dimensional latent representations, the Feature Compression Layer ensures that the federated learning process is both efficient and secure, making it well-suited for the distributed and privacy-sensitive nature of the FAT-CG framework.

Federated adversarial training layer

The Federated Adversarial Training Layer is a core component of the FAT-CG framework, responsible for optimizing the test case generation process through a distributed GAN architecture. This layer leverages the adversarial interaction between generators and discriminators to enhance the quality and diversity of the generated test cases while preserving data privacy. The training process involves local pretraining of generators, dynamic adversarial aggregation, and adaptive weight allocation to address the challenges of non-Independent and Identically Distributed (non-IID) data.

Local generator pretraining

Each participant in the federated environment pretrains a local generator using the compressed latent vectors received from the Feature Compression Layer. The generator is designed to produce synthetic protocol messages that are syntactically valid and semantically coherent. The architecture of the generator consists of multiple layers to ensure the generation of high-quality test cases:

The first layer of the generator is a fully connected layer that expands the latent vector to a higher dimension, capturing the semantic information of the protocol messages:

where \(W_{g1}\) and \(b_{g1}\) are the weights and biases of the layer, respectively.

The second layer is a unidirectional Long Short-Term Memory (LSTM) layer that generates the sequential structure of the protocol messages:

This layer ensures that the generated messages adhere to the temporal dependencies and structural patterns of the protocol.

The third (top) layer is another fully connected layer that maps the output of the LSTM layer back to the original feature space, producing the final synthetic protocol message:

where \(W_{g2}\) and \(b_{g2}\) are the weights and biases of the layer, respectively.

The generator is trained using a loss function that combines adversarial loss and syntactic consistency loss:

The adversarial loss measures the difference between the distribution of the generated data and the real data, encouraging the generator to produce realistic test cases:

where \(D\) is the discriminator.

The syntactic consistency loss ensures that the generated protocol messages are syntactically valid by aligning the generated data with the global latent space:

where \(\text {Encoder}\) is the encoder from the Feature Compression Layer.

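The total loss function is a weighted sum of the adversarial loss and the syntactic consistency loss:

$$\begin{aligned} \mathcal{L}_{G} = \mathcal{L}_{\text{adv}} + \gamma \mathcal{L}_{\text{syn}} \end{aligned}$$where \(\mathcal{L}_{\text{adv}}\) and \(\mathcal{L}_{\text{syn}}\) denote the adversarial and syntactic consistency losses, and \(\gamma = 0.3\) is a balancing factor. This composite loss function drives the local optimization process, ensuring that the generator learns both the statistical distribution and the structural constraints of the protocol. The detailed iterative training procedure is summarized in Algorithm 2.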

Algorithm 2. Local generator training with syntax regularization.
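The composite generator objective can be sketched on precomputed network outputs. The adversarial and syntactic terms are not written out in this section, so their forms are assumptions:

- the adversarial term uses the common non-saturating form \(-\frac{1}{m}\sum \log D(G(z))\);
- the syntactic term uses the mean squared distance between \(\text{Encoder}(G(z))\) and the matching global latent vectors.

The value \(\gamma = 0.3\) comes from the text.

```python
import math


def generator_loss(d_fake, encoded_fake, z_global, gamma=0.3, eps=1e-12):
    """Composite generator loss L_adv + gamma * L_syn under assumed forms.

    d_fake:       discriminator probabilities D(G(z)) for a batch of generated messages
    encoded_fake: Encoder(G(z)) for the same batch (latent vectors)
    z_global:     the matching global latent vectors used as targets
    """
    # Non-saturating adversarial term: small when D believes the samples are real.
    l_adv = -sum(math.log(max(p, eps)) for p in d_fake) / len(d_fake)
    # Syntactic consistency: mean squared distance to the global latent space.
    l_syn = sum(
        sum((a - b) ** 2 for a, b in zip(e, z)) for e, z in zip(encoded_fake, z_global)
    ) / len(z_global)
    return l_adv + gamma * l_syn
```

The `eps` floor keeps the logarithm finite when the discriminator assigns probability 0 to a generated sample.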

Dynamic adversarial federated aggregation

The Dynamic Adversarial Federated Aggregation (DAFA) mechanism is the core engine of FAT-CG. After local pretraining, the generators and discriminators are aggregated in a federated manner to optimize the global generator and improve the quality of the generated test cases. The aggregation process involves the following steps:

First, the generator parameters are aggregated. Each participant encrypts its local generator parameters and sends them to the coordinator, which performs a secure weighted aggregation of the encrypted parameters:

where \(w_i = \frac{n_i}{\sum _{j=1}^{K} n_j}\) and \(N\) is the Paillier modulus. The coordinator decrypts the aggregated parameters to obtain the global generator update:

The complete execution flow of this secure aggregation protocol, including quantization, encryption, and weighted summation, is formally outlined in Algorithm 3. The global generator parameters are then updated:

where \(\eta\) is the learning rate.

Algorithm 3. Homomorphic encryption gradient aggregation.

Next, the discriminators are enhanced in a federated manner. The coordinator distributes the global generator to all participants, who then train their local discriminators on both real data and data generated by the global generator. The discriminator loss function is:

where \(F\) is the real data and \(G_{\text {global}}\) is the global generator. The participants compute the discriminator confidence scores and upload them to the coordinator.

Finally, the aggregation weights are adaptively reallocated. The coordinator adjusts each participant’s weight based on its discriminator confidence score to counter the non-IID data distribution:

where \(c_i\) is the confidence score of participant \(i\) and \(\alpha = 0.5\) is a feedback strength coefficient. The Homomorphic Encryption Gradient Aggregation (HEGA) procedure in Algorithm 3 handles the atomic operation of secure gradient transmission. The overall coordination of these steps (local generator pretraining, secure aggregation, adaptive weight updating, and global model broadcasting) constitutes the complete DAFA protocol. The global execution workflow and the interaction logic between the coordinator and the participants are summarized in Algorithm 4.

Algorithm 4. Dynamic adversarial federated aggregation: global workflow.

Output of the federated adversarial training layer

The output of the Federated Adversarial Training Layer is a globally optimized generator \(G_{\text {global}}\) that has been trained to produce high-quality, syntactically valid test cases. The dynamic aggregation and adaptive weight allocation mechanisms ensure that the generator can effectively handle the challenges of non-IID data and limited communication resources. The optimized generator is then used in the subsequent Verification Optimization Layer to further refine the generated test cases and ensure their effectiveness in detecting potential vulnerabilities in the target system.

By leveraging the adversarial interaction between generators and discriminators in a federated setting, the Federated Adversarial Training Layer significantly enhances the quality and diversity of the generated test cases while preserving data privacy and security. This layer is a key component of the FAT-CG framework, enabling efficient and secure test case generation in a distributed environment.

Verification optimization layer

The Verification Optimization Layer is the final and crucial component of the FAT-CG framework, designed to ensure the effectiveness, reliability, and security of the generated test cases. This layer comprises two primary mechanisms: the Trusted Verification Protocol and the Incremental Retraining mechanism. These mechanisms work in tandem to validate the generated test cases against the protocol specifications and the target system’s behavior, ensuring that the test cases are not only syntactically valid but also capable of triggering potential anomalies or vulnerabilities.

Trusted verification protocol

The Trusted Verification Protocol is responsible for assessing the generated test cases to ensure they adhere to the protocol’s syntactic rules and can trigger relevant anomalies in the target system. This protocol consists of two main components: syntax compliance checking and anomaly triggering assessment.

Syntax compliance checking validates the generated test cases against the protocol’s syntactic rules to ensure they are structurally and semantically valid. It uses protocol state machines, which are deterministic finite automata (DFA) constructed from the protocol’s specifications.

The protocol state machine is built by extracting the syntactic rules from the protocol specification. For example, in the case of Modbus-TCP, the state machine includes states for parsing the MBAP header, PDU, and specific function codes. The state machine is defined as \(M = (Q, \Sigma , \delta , q_0, F)\), where \(Q\) is the set of states, \(\Sigma\) is the set of input symbols (e.g., byte values), \(\delta\) is the transition function, \(q_0\) is the initial state, and \(F\) is the set of accepting states.

Each generated test case is then fed into the state machine as a byte sequence. The state machine transitions between states according to the input bytes. If it ends in an accepting state, the test case is deemed syntactically valid; otherwise, the test case is marked as invalid, indicating a syntax violation.
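A simplified Modbus-TCP acceptor illustrates the idea. The sketch is deliberately limited. It checks the MBAP protocol identifier, the consistency of the length field, and membership of the function code in a small whitelist. It does not validate function-specific PDU layouts, and the whitelist is an assumption. The remaining-byte counter is bounded by the maximum length of 254, so the machine still has finitely many states and is equivalent to a DFA.

```python
# Hypothetical subset of public Modbus function codes accepted by this sketch.
VALID_FUNCTION_CODES = {0x01, 0x02, 0x03, 0x04, 0x05, 0x06, 0x0F, 0x10}
MAX_LENGTH = 254  # unit identifier (1 byte) + maximum PDU size (253 bytes)


def accepts(adu: bytes) -> bool:
    """Run a byte sequence through a simplified MBAP/PDU state machine."""
    state = "TID_HI"
    remaining = 0  # bytes still expected according to the MBAP length field
    for b in adu:
        if state == "TID_HI":
            state = "TID_LO"
        elif state == "TID_LO":
            state = "PID_HI"
        elif state in ("PID_HI", "PID_LO"):
            if b != 0x00:  # Modbus protocol identifier must be 0
                return False
            state = "PID_LO" if state == "PID_HI" else "LEN_HI"
        elif state == "LEN_HI":
            remaining = b << 8
            state = "LEN_LO"
        elif state == "LEN_LO":
            remaining |= b
            if not 2 <= remaining <= MAX_LENGTH:  # at least unit id + function code
                return False
            state = "UNIT"
        elif state == "UNIT":
            remaining -= 1
            state = "FC"
        elif state == "FC":
            if b not in VALID_FUNCTION_CODES:
                return False
            remaining -= 1
            state = "DATA"
        elif state == "DATA":
            if remaining == 0:  # more bytes than the length field announced
                return False
            remaining -= 1
    # Accepting state: function code consumed and exactly `length` bytes seen.
    return state == "DATA" and remaining == 0


print(accepts(bytes.fromhex("0001000000061103006B0003")))  # True
```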

Anomaly triggering assessment injects the generated test cases into a sandbox environment and monitors the target system’s behavior to detect anomalies or vulnerabilities. It combines resource monitoring, crash detection, and logical error detection, whose indicators are described as follows:

The system’s memory usage is monitored over time; if the memory growth rate exceeds a predefined threshold (e.g., 1 MB/s), a memory leak is reported. At the same time, the signals received by the target process are monitored, and a fatal signal (for example, SIGSEGV) indicates that a crash has occurred. Finally, the system’s responses are compared in real time with the expected responses derived from the protocol specification; a mismatch in response length or value indicates a logical error.
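The three indicators can be combined into a simple per-test-case classifier. The sketch makes several assumptions:

- memory is sampled as (seconds, bytes) pairs;
- 1 MB is taken as \(10^6\) bytes;
- the growth rate is estimated with a least-squares slope;
- every fatal signal other than SIGSEGV is an assumption.

How the sandbox collects these observations is not modeled.

```python
# Fatal signals treated as crashes; only SIGSEGV is named in the text, the rest are assumptions.
FATAL_SIGNALS = {"SIGSEGV", "SIGBUS", "SIGABRT", "SIGFPE", "SIGILL"}
LEAK_THRESHOLD = 1_000_000  # bytes per second (the 1 MB/s example threshold)


def memory_growth_rate(samples):
    """Least-squares slope of (time_s, memory_bytes) samples, in bytes per second."""
    n = len(samples)
    mean_t = sum(t for t, _ in samples) / n
    mean_m = sum(m for _, m in samples) / n
    cov = sum((t - mean_t) * (m - mean_m) for t, m in samples)
    var = sum((t - mean_t) ** 2 for t, _ in samples)
    return cov / var if var else 0.0


def detect_anomalies(samples, signal_name, response, expected):
    """Return the list of anomaly types triggered by one test case."""
    found = []
    if len(samples) >= 2 and memory_growth_rate(samples) > LEAK_THRESHOLD:
        found.append("memory_leak")
    if signal_name in FATAL_SIGNALS:
        found.append("crash")
    if response is not None and response != expected:  # differs in length or value
        found.append("logic_error")
    return found
```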

Incremental retraining

The Incremental Retraining mechanism is designed to continuously improve the quality of the generated test cases by incorporating the insights gained from the anomaly triggering assessment. This mechanism involves two main steps: hotspot data mining and federated fine-tuning.

Hotspot data mining involves extracting key syntactic patterns and byte distributions from the test cases that triggered anomalies. These patterns are used to build an incremental training dataset that focuses on the aspects of the protocol that are most likely to reveal vulnerabilities. For each test case that triggered an anomaly, the syntactic patterns (e.g., function code combinations) and byte distributions (e.g., high-entropy regions) are extracted. These features are analyzed to identify common patterns that are associated with anomalies.
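A minimal sketch of hotspot mining over anomaly-triggering Modbus-TCP messages follows. It counts function codes, located at byte offset 7 right after the 7-byte MBAP header, and byte bigrams as a simple proxy for byte-distribution patterns. Choosing bigrams, and leaving out the detection of high-entropy regions, are assumptions of this sketch.

```python
from collections import Counter


def mine_hotspots(anomalous_cases, top_k=3):
    """Most frequent function codes and byte bigrams among anomaly-triggering ADUs."""
    fc_counts = Counter()
    bigram_counts = Counter()
    for adu in anomalous_cases:
        if len(adu) >= 8:
            fc_counts[adu[7]] += 1  # function code follows the 7-byte MBAP header
        bigram_counts.update(adu[i:i + 2] for i in range(len(adu) - 1))
    return fc_counts.most_common(top_k), bigram_counts.most_common(top_k)
```

The returned patterns could then seed the incremental training dataset, for example by oversampling messages that share the most frequent function codes.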

Federated fine-tuning uses the incremental dataset to fine-tune the global generator in a federated manner, so that the generator keeps improving on the most critical aspects of the protocol. Each participant trains its local generator on the incremental dataset \(D_{\text{inc}}\), computes the gradients \(\nabla \theta _g^{\text {inc}}\), and adds Gaussian noise to ensure differential privacy:

$$\begin{aligned} \tilde{\nabla} \theta _g^{\text {inc}} = \nabla \theta _g^{\text {inc}} + \mathcal{N}(0, \sigma ^2 I) \end{aligned}$$where \(\sigma = \frac{\sqrt{2\ln (1.25/\delta )}}{\epsilon }\) satisfies \((\epsilon , \delta )\)-differential privacy for a query with unit \(\ell _2\)-sensitivity and \(\epsilon < 1\). The noisy gradients are then uploaded to the coordinator. The coordinator computes their weighted average, with weights proportional to the participants’ data contributions, and uses the aggregated gradient to update the global generator. The generator thus continues to improve while data privacy is preserved.
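The fine-tuning round can be sketched end to end on plain lists. The text does not say how the sensitivity is bounded. This sketch assumes it is bounded by clipping each gradient to `max_norm` and uses `max_norm` as the \(\ell_2\) sensitivity, which is a common choice. The data-weighted average followed by a step with learning rate `lr` mirrors the coordinator update described above.

```python
import math
import random


def gaussian_sigma(epsilon, delta, l2_sensitivity=1.0):
    """Noise scale of the classic Gaussian mechanism (stated for 0 < epsilon < 1)."""
    return l2_sensitivity * math.sqrt(2.0 * math.log(1.25 / delta)) / epsilon


def clip(grad, max_norm):
    """Rescale grad so that its L2 norm is at most max_norm."""
    norm = math.sqrt(sum(g * g for g in grad))
    factor = min(1.0, max_norm / norm) if norm > 0 else 1.0
    return [g * factor for g in grad]


def privatize_gradient(grad, epsilon, delta, max_norm, rng):
    """Participant side: clip, then add N(0, sigma^2) noise to every coordinate."""
    sigma = gaussian_sigma(epsilon, delta, l2_sensitivity=max_norm)
    return [g + rng.gauss(0.0, sigma) for g in clip(grad, max_norm)]


def apply_update(theta, noisy_grads, counts, lr):
    """Coordinator side: data-weighted average of noisy gradients, then one descent step."""
    total = sum(counts)
    avg = [sum(c * g[j] for g, c in zip(noisy_grads, counts)) / total
           for j in range(len(theta))]
    return [t - lr * a for t, a in zip(theta, avg)]
```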

Output of the verification optimization layer

The output of the Verification Optimization Layer is a set of high-quality, syntactically valid test cases that are capable of triggering significant anomalies in the target system. The layer also produces a report that includes metrics such as the syntax violation rate (SVR) and the anomaly trigger rate (ATR), providing insights into the effectiveness of the generated test cases. Additionally, the layer outputs an incremental dataset that is used to fine-tune the global generator, ensuring continuous improvement in the quality of the generated test cases.

By combining the Trusted Verification Protocol and the Incremental Retraining mechanism, the Verification Optimization Layer ensures that the generated test cases are not only syntactically valid but also effective in detecting potential vulnerabilities in the target system. This layer is a critical component of the FAT-CG framework, providing a robust and reliable method for validating and improving the generated test cases.

Experiments

Experimental objectives and evaluation metrics

The primary objective of the experiments is to validate the effectiveness of the proposed FAT-CG framework in generating high-quality test cases while preserving data privacy and ensuring efficient communication in a federated environment. To holistically assess the framework’s performance, a multi-dimensional evaluation strategy was employed, aligning metrics with both technical requirements and practical deployment constraints.

Privacy preservation was a primary focus, given the stringent confidentiality demands of multi-party industrial environments. Two complementary metrics were adopted. Gradient Leakage Risk (GLR) quantifies the feasibility of reconstructing raw training data from encrypted gradients using cosine similarity thresholds. Membership Inference Attack Success Rate (MIA-SR) measures an adversary’s ability to discern whether specific samples were part of the training set. Together, these metrics evaluate resilience against advanced attacks targeting federated learning vulnerabilities.

Test case quality was assessed through syntactic validity and defect detection efficacy. Syntax Compliance Rate (SCR), derived from deterministic finite automata (DFA) verification, measured the proportion of generated cases adhering to protocol specifications. Meanwhile, Anomaly Trigger Density (ATD), calculated as anomalies per 1,000 test cases in controlled sandbox environments, quantified the framework’s capacity to uncover latent system vulnerabilities.

Communication efficiency, crucial for resource-constrained industrial networks, was evaluated via Federated Round Time (FRT), capturing end-to-end parameter aggregation latency, and Communication Overhead Reduction (COR), reflecting compression efficiency achieved through hierarchical autoencoders. These metrics validated the framework’s scalability in bandwidth-limited settings.

Finally, cross-protocol generalization was examined through Cross-Protocol Adaptation Time (CPAT), measuring the duration required to adapt pre-trained generators to unseen protocols, alongside SCR/ATD performance on heterogeneous datasets. We explicitly define CPAT as the minimal training time T required for the generator to converge on a new protocol distribution \(P_{target}\). Formally, CPAT is reached when the Kullback-Leibler (KL) divergence satisfies:

where \(P_{gen}^{(t)}\) is the generator distribution at epoch t, and \(\delta =0.05\) is the empirical stability threshold. This definition ties adaptation to a measurable similarity between the generated and target protocol distributions rather than to mere convergence of the loss function. We also assess SCR and ATD on the transferred models to verify the quality of the adapted test cases.
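The stopping rule can be sketched as a search for the first epoch whose KL divergence falls below \(\delta = 0.05\). The representation of each protocol distribution is an assumption here: a smoothed histogram over the 256 byte values, compared in the direction \(D_{KL}(P_{target} \,\|\, P_{gen}^{(t)})\). The function returns an epoch index; the corresponding wall-clock time T would be recorded separately.

```python
import math
from collections import Counter


def byte_distribution(messages, pseudo_count=1.0):
    """Additively smoothed distribution over the 256 byte values."""
    counts = Counter(b for m in messages for b in m)
    total = sum(counts.values()) + 256 * pseudo_count
    return [(counts[v] + pseudo_count) / total for v in range(256)]


def kl_divergence(p, q):
    return sum(pi * math.log(pi / qi) for pi, qi in zip(p, q) if pi > 0)


def cpat_epoch(target_messages, generated_per_epoch, delta=0.05):
    """First epoch t (1-based) with KL(P_target || P_gen^(t)) < delta, or None."""
    p_target = byte_distribution(target_messages)
    for t, generated in enumerate(generated_per_epoch, start=1):
        if kl_divergence(p_target, byte_distribution(generated)) < delta:
            return t
    return None
```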

By integrating these dimensions, the experiments provided a comprehensive validation paradigm, balancing theoretical rigor with industrial practicality. Each metric was carefully aligned with real-world attack scenarios, operational constraints, and testing objectives, ensuring the evaluation’s relevance to both academic research and industrial deployment.

Experimental environment and datasets

The experimental setup was designed to emulate real-world industrial federated ecosystems, balancing computational realism with controlled reproducibility. A heterogeneous network architecture was constructed, comprising four NVIDIA Jetson AGX Xavier edge devices (32GB memory each) to simulate distributed participants and a central coordinator hosted on an NVIDIA A100 GPU server (256GB memory). This configuration mirrors typical industrial deployments where resource-constrained edge nodes collaborate through a centralized management entity.

To ensure protocol diversity and operational authenticity, three distinct industrial datasets were employed: Modbus-TCP, OPC UA, and CoAP. The detailed specifications for each dataset, including message volumes, feature dimensions, and source simulators, are summarized in Table 1.

Specifically, we used established industrial simulators (e.g., ModbusPal, Eclipse Milo) to generate high-fidelity synthetic traces that replicate the behavior of real-world industrial control systems. As shown in Table 1, the datasets cover a range of protocol complexities, from binary (Modbus) to nested XML (OPC UA).

To emulate the data silos of industrial environments, the datasets (Modbus, OPC UA, CoAP) were partitioned among 5 participants (\(K=5\)) using a Dirichlet distribution with a concentration parameter \(\alpha =0.5\), producing a non-independent and identically distributed (non-IID) partition. This yields high data heterogeneity; for instance, Participant A holds 80% of the Read Holding Registers commands, while Participant B holds 90% of the Write Multiple Coils commands. The setup therefore tests the framework’s ability to learn global syntax rules from skewed local views. It also simulates the organizational silos and data sovereignty constraints of cross-enterprise scenarios.
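A label-skewed Dirichlet split of this kind can be sketched with the standard library. The sketch assumes the messages are grouped by command type, such as function code. For each group, client shares are drawn from \(\text{Dir}(\alpha)\) using normalized Gamma samples. The seed and the two-class toy labels are illustrative.

```python
import random


def dirichlet(alpha, k, rng):
    """Sample from a symmetric Dirichlet(alpha) via normalized Gamma draws."""
    draws = [rng.gammavariate(alpha, 1.0) for _ in range(k)]
    total = sum(draws)
    return [d / total for d in draws]


def dirichlet_partition(labels, num_clients=5, alpha=0.5, seed=0):
    """Label-skewed non-IID split: per class, sample client shares from Dir(alpha)."""
    rng = random.Random(seed)
    by_class = {}
    for idx, label in enumerate(labels):
        by_class.setdefault(label, []).append(idx)
    parts = [[] for _ in range(num_clients)]
    for indices in by_class.values():
        rng.shuffle(indices)
        shares = dirichlet(alpha, num_clients, rng)
        # Turn cumulative shares into cut points over this class's shuffled indices.
        cuts, acc = [], 0.0
        for s in shares[:-1]:
            acc += s
            cuts.append(int(round(acc * len(indices))))
        bounds = [0] + cuts + [len(indices)]
        for c in range(num_clients):
            parts[c].extend(indices[bounds[c]:bounds[c + 1]])
    return parts


labels = ["read_holding"] * 500 + ["write_coils"] * 500
parts = dirichlet_partition(labels)
print([len(p) for p in parts])
```

Smaller values of \(\alpha\) concentrate each command type on fewer participants. This produces the kind of skew described above.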

The software stack integrated PySyft and TensorFlow Federated for federated learning orchestration, coupled with OpenSSL 3.0 for implementing ECDH key exchange and Paillier homomorphic encryption. Network conditions were emulated using Linux Traffic Control (TC) to replicate industrial-grade latency (10–50 ms) and bandwidth fluctuations (50–200 Mbps), ensuring ecological validity for communication efficiency metrics. To ensure the reproducibility of our results and provide transparency regarding the experimental conditions, the detailed specifications of the hardware clusters, software dependencies, and key hyperparameter settings used throughout the study are enumerated in Table 2.

To strictly evaluate performance against both academic state-of-the-art and industrial standards, four explicit baselines were systematically compared:

-

Centralized GAN (Upper Bound): A GAN trained on the pooled raw dataset without privacy constraints, representing the theoretical ceiling of generative quality.

-

Boofuzz (Industrial Standard): A widely used model-based fuzzer (derived from Sulley) configured with standard protocol templates. It serves as the representative of rule-based tools such as Peach.

-

Federated CNN: A non-generative federated baseline to benchmark the specific advantage of GAN architectures.

-

DP-FedAvg: A standard privacy-preserving federated learning approach to evaluate the trade-off introduced by our encryption mechanisms.

Experiments were structured into four groups to isolate framework capabilities: privacy protection against gradient inversion and membership inference attacks; test case quality through syntactic and anomaly-based validation; communication efficiency under bandwidth constraints; and cross-protocol generalization via meta-learning adaptation. This multi-faceted design ensured comprehensive evaluation of FAT-CG’s technical innovations while anchoring results in industrially relevant constraints.

Experimental process and key steps

Privacy security verification

To assess the privacy protection capabilities of the FAT-CG framework, this study designs two attack models, a Gradient Leakage Attack and a Membership Inference Attack. They target the risks of gradient reverse engineering and training-data membership inference during federated training. By quantifying the effectiveness of the defense mechanisms, we validate the framework’s robustness in realistic industrial scenarios.

Gradient leakage attack verification

The Gradient Leakage Attack aims to recover original training data by analyzing encrypted gradients aggregated during federated learning. Assuming control of the central coordinator, the attacker can access the encrypted gradient \(\text {Enc}(\nabla \theta _g^{\text {global}})\) and possesses knowledge of the generator architecture G and latent vector distribution p(Z). The attack employs a proxy loss function optimization method based on Paillier homomorphic encryption properties:

where \(\text {TV}(\cdot )\) is the total variation regularization term that enforces smoothness of the reconstructed data. The latent vector \(Z'\) is optimized iteratively with Projected Gradient Descent (PGD). At each step, candidate data \(G'=G(Z')\) are generated, and the discrepancy between their gradient and the observed gradient is computed. Finally, the Gradient Leakage Risk (GLR) is measured as the proportion of samples whose reconstruction has a cosine similarity greater than 0.8 with the original sample.
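The GLR metric itself reduces to a thresholded cosine similarity. The sketch assumes that each reconstructed sample is already paired with the ground-truth sample it targets and that both are flattened to numeric vectors. The strict 0.8 threshold follows the text.

```python
import math


def cosine(u, v):
    dot = sum(a * b for a, b in zip(u, v))
    nu = math.sqrt(sum(a * a for a in u))
    nv = math.sqrt(sum(b * b for b in v))
    return dot / (nu * nv) if nu and nv else 0.0


def gradient_leakage_risk(reconstructed, originals, threshold=0.8):
    """Fraction of samples whose reconstruction has cosine similarity > threshold."""
    hits = sum(1 for r, o in zip(reconstructed, originals) if cosine(r, o) > threshold)
    return hits / len(originals)
```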

In the experimental setup, the PGD learning rate is set to \(lr=0.01\), the number of iterations to \(T=500\), and the regularization weight to \(\lambda =0.1\). As shown in Table 3, the baseline without defense mechanisms (Baseline 1) exhibits a high GLR of 78.2%. Paillier encryption alone reduces the GLR to 42.5%. Adding the gradient confusion mechanism further suppresses the GLR to 9.7%, with a mean data similarity of \(0.23 \pm 0.09\), indicating that attackers can recover little meaningful information from the encrypted gradients.
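The GLR metric can be illustrated with a short sketch. It does not attempt the PGD attack itself; it only shows an anisotropic total-variation term for a one-dimensional sample (as a byte-level test case would be) and the GLR computed as the share of reconstructed samples whose cosine similarity to the paired real sample exceeds 0.8. The one-to-one pairing of reconstructed and real samples, the 1-D form of TV, and all function names are assumptions rather than details from the paper.

```python
import math
from typing import List, Sequence


def total_variation(x: Sequence[float]) -> float:
    """Anisotropic 1-D total variation: sum of absolute differences between neighbours."""
    return sum(abs(b - a) for a, b in zip(x, x[1:]))


def cosine_similarity(u: Sequence[float], v: Sequence[float]) -> float:
    dot = sum(a * b for a, b in zip(u, v))
    norm_u = math.sqrt(sum(a * a for a in u))
    norm_v = math.sqrt(sum(b * b for b in v))
    if norm_u == 0.0 or norm_v == 0.0:
        return 0.0  # treat an all-zero vector as dissimilar to everything
    return dot / (norm_u * norm_v)


def gradient_leakage_risk(reconstructed: List[Sequence[float]],
                          real: List[Sequence[float]],
                          threshold: float = 0.8) -> float:
    """Fraction of reconstructed samples whose cosine similarity to the paired real sample exceeds threshold."""
    if len(reconstructed) != len(real) or not real:
        raise ValueError("need a non-empty, one-to-one pairing of samples")
    hits = sum(1 for r, x in zip(reconstructed, real) if cosine_similarity(r, x) > threshold)
    return hits / len(real)
```

For example, if one of two reconstructions is nearly identical to its real sample and the other is orthogonal to it, the GLR is 0.5.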

Membership inference attack verification

The Membership Inference Attack aims to determine whether specific samples participated in federated training. The attacker trains a shadow model \(D_{\text {shadow}}\) using the global generator \(G_{\text {global}}\) and public data \(D_{\text {pub}}\), then infers membership from the confidence scores \(s(x)=D_{\text {shadow}}(x)\). The evaluation metrics are the Area Under the ROC Curve (AUC-ROC) and the Membership Inference Attack Success Rate (MIA-SR).

As shown in Table 4, the baseline without defenses yields an AUC-ROC of 85.6% and an MIA-SR of 82.1%. Applying differential privacy (Baseline 3) reduces the MIA-SR to 65.4%. The proposed solution, which additionally applies adversarial regularization, lowers the MIA-SR further to 48.9%, essentially the 50% expected from random guessing. This outcome indicates that adversarial training effectively obscures the statistical features of the generated data, weakening the discriminative ability of the attack model.
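Both metrics in Table 4 can be computed directly from the attacker's confidence scores. The sketch below assumes a balanced evaluation set of known members and non-members (the setting in which 50% is the random-guess level), computes AUC-ROC through its rank interpretation (the probability that a random member outscores a random non-member), and computes MIA-SR as the accuracy of a fixed-threshold attacker. The threshold rule and the function names are illustrative assumptions, not the paper's evaluation code.

```python
from typing import Sequence


def auc_roc(member_scores: Sequence[float], nonmember_scores: Sequence[float]) -> float:
    """AUC as P(random member score > random non-member score); ties count one half."""
    wins = 0.0
    for m in member_scores:
        for n in nonmember_scores:
            if m > n:
                wins += 1.0
            elif m == n:
                wins += 0.5
    return wins / (len(member_scores) * len(nonmember_scores))


def mia_success_rate(member_scores: Sequence[float],
                     nonmember_scores: Sequence[float],
                     threshold: float) -> float:
    """Accuracy of an attacker that labels a sample 'member' when s(x) >= threshold."""
    correct = (sum(s >= threshold for s in member_scores)
               + sum(s < threshold for s in nonmember_scores))
    return correct / (len(member_scores) + len(nonmember_scores))
```

When the defense makes member and non-member scores indistinguishable, the AUC tends toward 0.5 and the best threshold attacker's accuracy toward 50%, which is the behaviour the 48.9% MIA-SR reflects.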

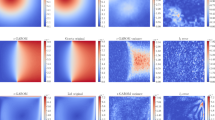

Dynamic analysis of attack effects

To further examine the robustness of the defense mechanisms over time, Fig. 2 plots the Gradient Leakage Attack success rate against the number of attack iterations. The horizontal axis shows the attack iteration steps (0–500), and the vertical axis shows the GLR. The experiment compares three scenarios:

- No Defense (Baseline 1): GLR rises rapidly with iterations and stabilizes at 78.2% after 300 steps.
- Paillier Encryption Only: GLR grows slowly, reaching 42.5% after 500 steps.
- This Solution (Encryption + Confusion): GLR consistently remains below 10% without a converging trend.

Defense mechanism effects.

Results discussion

The experiments demonstrate that the hierarchical defense mechanisms of the proposed solution significantly enhance the privacy of federated testing. Paillier encryption prevents plaintext gradient exposure because aggregation is carried out through homomorphic operations, while gradient confusion disrupts the attacker's optimization objective by introducing irreversible noise. Moreover, adversarial regularization dynamically adjusts the generated distribution, blurring the boundary between training data and public data. Together, these mechanisms make effective inference difficult even for attackers with prior knowledge of the protocols (such as Modbus function code structures). However, the experiments also reveal a side effect of the defense: adversarial regularization may reduce discriminator classification accuracy by approximately 5%, so privacy must be balanced against model utility.
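The homomorphic aggregation step can be illustrated with textbook Paillier encryption. The sketch below assumes the common choice g = n + 1, toy keys built from two Mersenne primes, and a fixed-point scale of 10^6 for real-valued gradients; none of these parameters come from the paper, the key is far too small for real use, and the question of who holds the private key is left out. It shows the property the defense relies on: multiplying ciphertexts modulo n² yields an encryption of the sum of the clients' gradients, so the coordinator never sees an individual plaintext gradient.

```python
import math
import secrets

# Toy key material (assumption): two Mersenne primes, far too small for real use.
P = 2**31 - 1
Q = 2**61 - 1
N = P * Q
N_SQ = N * N
LAM = (P - 1) * (Q - 1) // math.gcd(P - 1, Q - 1)  # Carmichael function lambda(n)
MU = pow(LAM, -1, N)  # modular inverse; valid because g = n + 1
SCALE = 10**6  # fixed-point scale for real-valued gradients (assumption)


def encrypt(m: int) -> int:
    """Paillier encryption with g = n + 1, so g^m mod n^2 equals 1 + m*n."""
    while True:
        r = secrets.randbelow(N - 1) + 1
        if math.gcd(r, N) == 1:
            break
    return ((1 + (m % N) * N) * pow(r, N, N_SQ)) % N_SQ


def decrypt(c: int) -> int:
    m = ((pow(c, LAM, N_SQ) - 1) // N) * MU % N
    return m - N if m > N // 2 else m  # map back to signed integers


def encode(x: float) -> int:
    return round(x * SCALE)


def aggregate(ciphertexts) -> int:
    """Homomorphic addition: the product of ciphertexts decrypts to the sum of plaintexts."""
    acc = 1
    for c in ciphertexts:
        acc = (acc * c) % N_SQ
    return acc


client_grads = [0.125, -0.5, 0.3]
total = decrypt(aggregate(encrypt(encode(g)) for g in client_grads)) / SCALE
print(total)  # sum of the three client gradients, recovered only after decryption
```

In practice a vetted library would replace this toy, with a modulus of 2048 bits or more as commonly recommended.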

Quantifying the privacy-utility tradeoff

While the defense mechanisms effectively mitigate leakage risks, strict differential privacy constraints inevitably introduce noise that can degrade generation quality. To identify a suitable operating point, we analyzed the sensitivity of the SCR to the privacy budget \(\varepsilon\). As illustrated in Fig. 3, a smaller \(\varepsilon\) (stronger privacy) lowers the SCR because of excessive gradient perturbation. At \(\varepsilon =0.5\), however, the system maintains a high SCR of 93.8% while still providing sufficient theoretical privacy guarantees. This empirical finding justifies the choice of \(\varepsilon =0.5\) in the subsequent generation experiments.

Privacy-utility tradeoff analysis. The curve illustrates the sensitivity of generation quality (SCR) to the differential privacy budget \(\varepsilon\). A smaller \(\varepsilon\) (stronger privacy) introduces excessive noise, degrading syntax compliance. The chosen parameter \(\varepsilon =0.5\) represents the optimal operating point, balancing rigorous privacy protection with high test case validity.
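The effect of \(\varepsilon\) on the injected noise can be illustrated with the classic Gaussian mechanism applied to a clipped gradient. The paper does not state its noise mechanism, \(\delta\), or clipping norm, so the Gaussian mechanism, \(\delta = 10^{-5}\), the L2 clipping norm of 1.0, and all function names below are assumptions; the sketch also ignores privacy accounting across training rounds. It shows only that the noise scale \(\sigma\) grows in proportion to \(1/\varepsilon\), which is the source of the SCR degradation at small \(\varepsilon\).

```python
import math
import random
from typing import List, Optional, Sequence


def gaussian_sigma(epsilon: float, delta: float, sensitivity: float) -> float:
    """Noise scale of the classic Gaussian mechanism, stated for 0 < epsilon < 1."""
    if not 0.0 < epsilon < 1.0:
        raise ValueError("the classic Gaussian-mechanism bound assumes 0 < epsilon < 1")
    return sensitivity * math.sqrt(2.0 * math.log(1.25 / delta)) / epsilon


def clip_l2(grad: Sequence[float], max_norm: float) -> List[float]:
    """Scale the gradient down so that its L2 norm is at most max_norm."""
    norm = math.sqrt(sum(g * g for g in grad))
    factor = min(1.0, max_norm / norm) if norm > 0.0 else 1.0
    return [g * factor for g in grad]


def privatize(grad: Sequence[float], epsilon: float, delta: float = 1e-5,
              max_norm: float = 1.0, rng: Optional[random.Random] = None) -> List[float]:
    """Clip, then add per-coordinate Gaussian noise; the clip norm serves as the L2 sensitivity."""
    rng = rng or random.Random()
    sigma = gaussian_sigma(epsilon, delta, sensitivity=max_norm)
    return [g + rng.gauss(0.0, sigma) for g in clip_l2(grad, max_norm)]
```

With these assumed values, \(\varepsilon = 0.5\) gives \(\sigma \approx 9.7\) per coordinate, and halving \(\varepsilon\) doubles \(\sigma\).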

Beyond resisting algorithmic attacks, this hierarchical defense architecture explicitly aligns with the principle of data protection by design and by default mandated by Article 25 of the GDPR. Specifically, the autoencoder-driven feature compression enforces data minimization by discarding non-essential raw attributes at the source. Furthermore, the combination of Paillier homomorphic encryption and differential privacy ensures that all cross-organizational parameter exchanges are protected by pseudonymization and encryption, thereby addressing the stringent regulatory requirements for secure data processing in multi-party industrial environments.

Generation quality verification

The quality of the generated test cases is a core dimension for evaluating the effectiveness of the FAT-CG framework. In this section, we validate the syntactic validity and defect detection capability of the generated data through quantitative analysis of SCR and ATD, complemented by cross-protocol generalization tests.

Syntax compliance evaluation

Syntax compliance is verified with a protocol state machine implemented as a deterministic finite automaton (DFA). Taking Modbus-TCP as an example, the state machine \(M=(Q,\Sigma ,\delta ,q_0,F)\) comprises MBAP header parsing (initial state \(q_0\)), function code validation (intermediate state \(q_1\)), and PDU processing (accepting states F). Each test case is fed into the state machine as a byte stream and is deemed compliant if it terminates in an accepting state. Verification on 10,000 generated Modbus-TCP samples yields an SCR of 93.8% for the proposed solution (Table 5), well above the baseline (76.4%) and random generation (12.3%). Further analysis indicates that the hierarchical autoencoder captures protocol syntax rules through latent space alignment (the \(L_{\text {align}}\) term in Section 4.2.2), reducing errors such as field-length mismatches and function code overflows.
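A simplified version of such a checker can be sketched as follows. The paper does not publish its DFA, so the state names, the restriction to request function codes 0x01 to 0x06 (whose PDU data is exactly 4 bytes: an address plus a quantity or value), and the helper names are assumptions. The MBAP checks follow the Modbus-TCP framing: a 7-byte header whose protocol identifier is 0 and whose length field counts the unit identifier plus the PDU bytes.

```python
from typing import Iterable

MBAP_LEN = 7       # transaction id (2) + protocol id (2) + length (2) + unit id (1)
MAX_ADU_LEN = 260  # upper bound on a Modbus-TCP ADU
# Assumed subset: request function codes whose PDU data is exactly 4 bytes.
FOUR_BYTE_REQUEST_CODES = {0x01, 0x02, 0x03, 0x04, 0x05, 0x06}


def run_dfa(frame: bytes) -> str:
    """Drive one byte stream through q0 -> q1 -> q2 and return the terminal state."""
    state = "q0"
    while state not in ("accept", "reject"):
        if state == "q0":  # MBAP header parsing
            if not MBAP_LEN < len(frame) <= MAX_ADU_LEN:
                state = "reject"
                continue
            protocol_id = int.from_bytes(frame[2:4], "big")
            length = int.from_bytes(frame[4:6], "big")  # unit id + PDU byte count
            ok = protocol_id == 0 and length == len(frame) - 6
            state = "q1" if ok else "reject"
        elif state == "q1":  # function code validation
            state = "q2" if frame[MBAP_LEN] in FOUR_BYTE_REQUEST_CODES else "reject"
        else:  # q2: PDU processing; the supported requests carry 4 data bytes
            data_len = len(frame) - MBAP_LEN - 1
            state = "accept" if data_len == 4 else "reject"
    return state


def syntax_compliance_rate(frames: Iterable[bytes]) -> float:
    """SCR: share of frames that terminate in the accepting state."""
    frames = list(frames)
    return sum(run_dfa(f) == "accept" for f in frames) / len(frames)
```

For instance, a Read Holding Registers request (`00010000000601030000000A` in hex) is accepted, while the same frame with function code 0x63 or a wrong length field is rejected.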

To verify cross-protocol adaptability, the proposed solution reuses the generator pre-trained on Modbus for OPC UA (an XML-nested protocol) and achieves an SCR of 91.2% with only 1,000 fine-tuning samples. Figure 4 compares how the SCR evolves with the number of fine-tuning samples across protocols. The horizontal axis shows the number of fine-tuning samples (0–1,000), and the vertical axis shows the SCR. The experiments show:

- Modbus-TCP (Binary Protocol): The pre-trained model starts with an SCR of 93.8% and stabilizes after fine-tuning.
- OPC UA (Nested Protocol): Initial SCR is only 32.1%, rising to 87.5% after 500 samples of fine-tuning.
- CoAP (Lightweight Protocol): SCR reaches 89.7% after fine-tuning with 200 samples.

Relationship between SCR and fine-tuning sample size.

Anomaly trigger capability evaluation

Anomaly trigger capability is evaluated through dynamic monitoring in a sandbox environment. Test cases are injected into a Modbus slave simulator while memory leaks (\(\Delta _{\text {RSS}} > 1\ \text {MB/s}\)), process crashes (SIGSEGV signal), and logic errors (deviations in response values) are monitored. The ATD of the proposed solution reaches 8.7 anomalies per thousand test cases (Table 6), a 2.7-fold improvement over the baseline (3.2). Among the triggered anomalies, memory leaks form the majority (62%) and are predominantly induced by abnormally long PDUs exceeding 260 bytes; logic errors account for 28% and frequently arise from illegal register addresses or invalid function code sequences; process crashes make up the remaining 10% and are predominantly attributable to buffer overflow vulnerabilities.

To validate the proposed framework against industry-standard tools and theoretical limits, we also conducted a comparative analysis involving Random Fuzzing, Boofuzz (template-based), and a Centralized GAN. Table 7 summarizes the quantitative results across privacy, syntax compliance, and anomaly detection metrics.

Figure 5 shows the ATD distribution of the different generation methods. The horizontal axis is the test case index (1–1,000), and the vertical axis is the cumulative anomaly count. After 200 test cases, the proposed solution (green curve) accumulates anomalies at a markedly higher rate than the baseline (blue curve), indicating more efficient exploration of protocol boundary conditions.

ATD distribution of different generation methods.
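The anomaly classification and the ATD metric described above can be sketched as follows. The `Observation` record is a hypothetical stand-in for whatever the sandbox monitor emits, and the order in which the checks are applied (crash, then memory leak, then logic error) is an assumption; only the 1 MB/s RSS threshold, the SIGSEGV criterion, the response-deviation criterion, and the per-thousand normalization come from the text.

```python
import signal
from dataclasses import dataclass
from typing import Iterable, Optional

RSS_LEAK_MB_PER_S = 1.0  # memory-leak threshold quoted in the text


@dataclass
class Observation:
    """Hypothetical record emitted by a sandbox monitor for one test case."""
    rss_growth_mb_per_s: float
    exit_signal: Optional[int]        # e.g. signal.SIGSEGV when the slave crashed
    response: Optional[int]           # value returned by the Modbus slave simulator
    expected_response: Optional[int]  # value a correct implementation would return


def classify(obs: Observation) -> Optional[str]:
    """Map one observation to an anomaly category, checked in an assumed priority order."""
    if obs.exit_signal == signal.SIGSEGV:
        return "process_crash"
    if obs.rss_growth_mb_per_s > RSS_LEAK_MB_PER_S:
        return "memory_leak"
    if obs.response != obs.expected_response:
        return "logic_error"
    return None


def anomaly_trigger_density(observations: Iterable[Observation]) -> float:
    """ATD: anomalies per thousand test cases."""
    observations = list(observations)
    anomalies = sum(classify(o) is not None for o in observations)
    return 1000.0 * anomalies / len(observations)
```

Under this definition, 9 anomalous cases in a run of 1,000 give an ATD of 9.0, on the same scale as the 8.7 and 3.2 reported in Table 6.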

Results discussion

The experiments demonstrate that FAT-CG achieves high-quality test case generation through the synergistic optimization of adversarial training and syntax constraints. The high SCR (93.8%) is attributed to the structural feature compression of the autoencoder (the \(L_{\text {recon}}\) term in Section 4.2.2) and the dynamic validation performed by the protocol state machine. The substantial ATD advantage (8.7 vs. 3.2) reflects the generator's ability to explore protocol edge conditions, for example by producing unconventional function code combinations driven by the adversarial loss (the \(L_{\text {adv}}\) term in Section 4.3.1). The cross-protocol tests further indicate that the meta-learning mechanism (MAML) enables rapid transfer of syntax knowledge, so that only a small number of samples is needed to adapt to heterogeneous protocol structures.
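The adaptation loop behind MAML can be illustrated on a toy problem. The sketch below uses first-order MAML (FOMAML), which drops the second-order terms of full MAML, and replaces protocols with random linear-regression tasks; the task family, learning rates, support/query split, and function names are all assumptions chosen for illustration, not the paper's implementation. Each meta-step adapts the shared initialization to one task with a gradient step on its support set and then moves the initialization using the gradient evaluated on that task's query set.

```python
import random
from typing import List, Tuple

Params = Tuple[float, float]  # (w, b) of a toy model y_hat = w * x + b


def mse_and_grad(params: Params, xs: List[float], ys: List[float]):
    """Mean squared error of y_hat = w*x + b and its gradient with respect to (w, b)."""
    w, b = params
    errs = [w * x + b - y for x, y in zip(xs, ys)]
    n = len(xs)
    loss = sum(e * e for e in errs) / n
    grad_w = 2.0 * sum(e * x for e, x in zip(errs, xs)) / n
    grad_b = 2.0 * sum(errs) / n
    return loss, (grad_w, grad_b)


def adapt(params: Params, xs, ys, inner_lr: float = 0.1, steps: int = 1) -> Params:
    """Inner loop: a few plain gradient steps on one task's data."""
    w, b = params
    for _ in range(steps):
        _, (gw, gb) = mse_and_grad((w, b), xs, ys)
        w, b = w - inner_lr * gw, b - inner_lr * gb
    return w, b


def sample_task(rng: random.Random, n: int = 10):
    """A toy stand-in for a protocol: a random linear function with n noise-free samples."""
    slope, intercept = rng.uniform(0.5, 1.5), rng.uniform(-1.0, 1.0)
    xs = [rng.uniform(-1.0, 1.0) for _ in range(n)]
    return xs, [slope * x + intercept for x in xs]


def fomaml(meta_steps: int = 200, outer_lr: float = 0.05, seed: int = 0) -> Params:
    """First-order MAML: the outer update uses the query gradient at the adapted parameters."""
    rng = random.Random(seed)
    params: Params = (0.0, 0.0)
    for _ in range(meta_steps):
        xs, ys = sample_task(rng)
        adapted = adapt(params, xs[:5], ys[:5])                # support set
        _, (gw, gb) = mse_and_grad(adapted, xs[5:], ys[5:])  # query set
        params = (params[0] - outer_lr * gw, params[1] - outer_lr * gb)
    return params
```

The meta-learned initialization is then fine-tuned on a new task with a few calls to `adapt`, which mirrors, at toy scale, how a small number of samples suffices to adapt to a new protocol.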

Comparison with state-of-the-art baselines

We benchmarked FAT-CG against two critical baselines: (i) a Centralized GAN, representing the ideal-performance scenario in which privacy is ignored; and (ii) Boofuzz, an industry-standard model-based fuzzer. Table 7 presents the quantitative comparison. Compared with the Centralized GAN, FAT-CG achieves comparable anomaly detection rates (8.7 vs. 9.8) and branch coverage (28.4% vs. 31.2%). The slight gap is the expected cost of our privacy guarantees (HE+DP), and the results show that decentralized training can approach centralized quality without exposing raw data. Against Boofuzz, FAT-CG shows a fundamental advantage. Boofuzz achieves high syntactic compliance (99.2%) because it relies on rigid, predefined templates, but it cannot learn evolving data patterns, which results in a low anomaly detection rate (2.1). FAT-CG detects 4.1 times as many anomalies (8.7 vs. 2.1). This indicates that our generative approach captures deep-state edge cases that static model-based fuzzers (such as Boofuzz or Peach) typically miss.

Efficiency verification

The communication and computational efficiency of the federated testing framework directly affects its deployability in industrial scenarios. In this section, we evaluate the performance advantages of the FAT-CG framework in resource-constrained environments through quantitative analysis of FRT and COR, combined with multi-protocol extension experiments.

Communication latency and computational overhead

The measurement of FRT covers the complete process of parameter encryption, transmission, aggregation, and decryption. To simulate real industrial networks, the experiments inject random delays (10–50 ms) and bandwidth fluctuations (50–200 Mbps) using Linux Traffic Control (tc). As shown in Table 8, the average FRT of the proposed solution drops to 185 ms (standard deviation ±18 ms), a 42% reduction relative to the baseline's 320 ms. This improvement stems from parameter compression by the hierarchical autoencoder (32-dimensional latent space) and the parallel aggregation enabled by Paillier homomorphic encryption. For instance, for the OPC UA protocol (data dimension \(d=1024\)), the parameter payload is compressed from 4,096 KB to 64 KB, reducing transmission time by 96.7%. Over a typical training run of \(T=1000\) rounds, our framework completes global optimization in approximately 185 seconds (185 ms \(\times\) 1000), whereas the baseline requires 320 seconds. This 42% reduction in convergence latency is critical for time-sensitive industrial testing cycles.
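The convergence-time figures follow from simple arithmetic, reproduced below under the assumption (made explicit in the helper's docstring) that rounds run back to back and each round costs one average FRT. The function names are illustrative.

```python
def convergence_time_s(round_trip_ms: float, rounds: int) -> float:
    """Total wall-clock time if every round costs one FRT and rounds run back to back."""
    return round_trip_ms * rounds / 1000.0


def relative_reduction(baseline: float, proposed: float) -> float:
    """Fractional saving of the proposed value relative to the baseline."""
    return 1.0 - proposed / baseline


ours = convergence_time_s(185, 1000)
baseline = convergence_time_s(320, 1000)
print(f"{ours:.0f} s vs {baseline:.0f} s ({relative_reduction(baseline, ours):.1%} less)")
# prints "185 s vs 320 s (42.2% less)"
```

Because the reduction is a ratio of per-round times, it is the same 42% whether it is computed per round or over the full 1,000-round run.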

Figure 6 compares FRT trends across data dimensions. The horizontal axis shows the data dimension (256–1024), and the vertical axis shows FRT (ms). The baseline, which transmits parameters in plaintext, shows FRT growing linearly with dimensionality and reaching 320 ms at 1024 dimensions. In contrast, the proposed solution, which combines encryption with compression, follows a markedly flatter curve with a much smaller slope and reaches only 185 ms at the maximum dimension.

Relationship between data dimension and FRT.

Communication compression efficiency

The COR is calculated as \(1 - \frac{N_{\text {compressed}}}{N_{\text {original}}}\), where \(N_{\text {original}}\) is the original GAN parameter size and \(N_{\text {compressed}}\) is the compressed parameter size. As shown in Table 9, in the 1024-dimensional data scenario, the COR of the proposed solution reaches 98.44%, which corresponds to a 64-fold reduction in communication volume. This efficiency is attributed to the feature distillation mechanism of the hierarchical autoencoder (the \(L_{\text {AE}}\) term in Section 4.2.2), which preserves critical protocol structures through syntax feature alignment (the \(L_{\text {align}}\) term in Section 4.2.2) while filtering out redundant information.

Further analysis reveals a strong correlation between the latent space dimension (32 vs. 256) and communication efficiency. Figure 7 plots COR against the latent dimension k. The horizontal axis shows the latent dimension (16–64), and the vertical axis shows COR. COR is 98.44% at \(k=32\) and decreases to 95.12% at \(k=64\), indicating that a moderate latent dimension strikes a good balance between syntax retention and efficiency.
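The COR definition can be checked against the payload sizes reported for OPC UA at \(d=1024\) (4,096 KB before compression, 64 KB after). The helper names below are illustrative; the sketch simply evaluates \(1 - N_{\text {compressed}}/N_{\text {original}}\) and the corresponding reduction factor.

```python
def cor(n_original: float, n_compressed: float) -> float:
    """Communication optimization rate: 1 - N_compressed / N_original."""
    return 1.0 - n_compressed / n_original


def reduction_factor(n_original: float, n_compressed: float) -> float:
    """How many times smaller the transmitted payload becomes."""
    return n_original / n_compressed


print(f"COR = {cor(4096, 64):.2%}, payload {reduction_factor(4096, 64):.0f}x smaller")
# prints "COR = 98.44%, payload 64x smaller"
```

By the same formula, the 95.12% reported for \(k=64\) corresponds to roughly a 20-fold reduction, since \(1/(1-0.9512) \approx 20.5\).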