Abstract

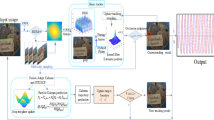

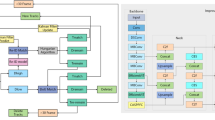

Occlusion, sudden illumination changes, and rapid motion in complex scenes severely degrade the robustness of existing object tracking methods. To address these issues, this paper proposes a novel object tracking algorithm that integrates a deformable attention mechanism. First, a deformable attention module is embedded into the ResNet-18 feature extraction network to adaptively enhance the target’s key features. Second, an improved Bidirectional Feature Pyramid Network serves as the feature fusion module to strengthen the representation of multi-scale features. Finally, a dynamic Kalman filtering prediction module improves the algorithm’s adaptability to changes in the target’s motion state and its ability to track continuously. Experimental results show that the improved feature extraction network achieves an average overlap rate of 61.5% and a success rate of 68.4% on the GOT-10k dataset, with a computational load of only 1.96 GFLOPs and an increase of only 0.23 M parameters. On the MOT20 dataset, the proposed object tracking network achieves a Multiple Object Tracking Accuracy of 77.5% and an Identity F1 Score of 77.0%, with 54.6% Majority of Tracked Trajectories and 12.5% Majority of Lost Trajectories, surpassing the compared object tracking algorithms. These results confirm the efficacy of the deformable attention mechanism and present a robust solution for complex dynamic tracking scenarios.
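The abstract does not specify how the Kalman prediction module is made dynamic. As a rough illustration only, the sketch below shows one common way such adaptivity can be obtained: a constant-velocity Kalman filter for a single coordinate (for example, a bounding-box centre) whose process noise is inflated when the normalized innovation is large. The class name `AdaptiveKalman1D`, the gating rule, and all parameter values are assumptions made for this example and are not taken from the paper.

```python
class AdaptiveKalman1D:
    """Constant-velocity Kalman filter for one coordinate.

    Hypothetical illustration: process noise q is boosted when the
    normalized innovation squared (NIS) exceeds a gate, and decays
    back toward its base value otherwise.
    """

    def __init__(self, z0, q_base=0.01, r=1.0, dt=1.0,
                 gate=9.0, boost=4.0, decay=0.5, q_max=10.0):
        self.p, self.v = float(z0), 0.0           # position, velocity
        self.P = [[1.0, 0.0], [0.0, 100.0]]       # state covariance
        self.q_base, self.q, self.r, self.dt = q_base, q_base, r, dt
        self.gate, self.boost, self.decay, self.q_max = gate, boost, decay, q_max

    def predict(self):
        dt, q = self.dt, self.q
        self.p += dt * self.v                     # x = F x with F = [[1, dt], [0, 1]]
        (a, b), (c, d) = self.P
        fp00, fp01 = a + dt * c, b + dt * d       # first row of F P
        # P = F P F^T + Q, with Q from a white-acceleration model
        self.P = [[fp00 + dt * fp01 + q * dt**4 / 4, fp01 + q * dt**3 / 2],
                  [c + dt * d + q * dt**3 / 2,       d + q * dt**2]]
        return self.p

    def update(self, z):
        y = z - self.p                            # innovation (H = [1, 0])
        (a, b), (c, d) = self.P
        s = a + self.r                            # innovation variance
        k0, k1 = a / s, c / s                     # Kalman gain
        self.p += k0 * y
        self.v += k1 * y
        self.P = [[(1 - k0) * a, (1 - k0) * b],
                  [c - k1 * a,   d - k1 * b]]     # P = (I - K H) P
        nis = y * y / s
        if nis > self.gate:                       # likely manoeuvre: trust the model less
            self.q = min(self.q * self.boost, self.q_max)
        else:                                     # steady motion: relax toward base noise
            self.q = max(self.q * self.decay, self.q_base)
        return nis


# Track a target moving 2 units per frame with noise-free measurements.
kf = AdaptiveKalman1D(z0=0.0)
for k in range(1, 31):
    kf.predict()
    kf.update(2.0 * k)
print(round(kf.v, 2), kf.q)
```

Under these assumptions, the velocity estimate settles near the true per-frame displacement during steady motion, while a sudden jump in the measurement raises `q` so that subsequent updates weight the observations more heavily. A tracker would typically run one such filter per box coordinate or use a joint state vector.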

Data availability

The datasets used and/or analysed during the current study are available from the corresponding author on reasonable request.

Funding

This research was supported by the Sichuan Province Science and Technology Plan Project "Research on Nonlinear System Model Identification and Optimal Control Method under Weak Continuous Incentive Conditions" (2025ZNSFSC1513).

Author information

Contributions

Q.L.L. processed the numerical-attribute linear programming of communication big data and extracted the mutual information feature quantity of the numerical attributes using the cloud extended distributed feature fitting method. N.Y. carried out the statistical analysis of the numerical-attribute feature information by combining fuzzy C-means clustering with linear regression analysis, and constructed the associated attribute sample set of the cloud grid distribution of communication big data numerical attributes. J.F.C. performed the experiments, recorded the data, and drafted the manuscript. All authors read and approved the final manuscript.

Ethics declarations

Competing interests

The authors declare no competing interests.

Additional information

Publisher’s note

Springer Nature remains neutral with regard to jurisdictional claims in published maps and institutional affiliations.

Rights and permissions

Open Access This article is licensed under a Creative Commons Attribution 4.0 International License, which permits use, sharing, adaptation, distribution and reproduction in any medium or format, as long as you give appropriate credit to the original author(s) and the source, provide a link to the Creative Commons licence, and indicate if changes were made. The images or other third party material in this article are included in the article’s Creative Commons licence, unless indicated otherwise in a credit line to the material. If material is not included in the article’s Creative Commons licence and your intended use is not permitted by statutory regulation or exceeds the permitted use, you will need to obtain permission directly from the copyright holder. To view a copy of this licence, visit http://creativecommons.org/licenses/by/4.0/.

About this article

Cite this article

Liu, Q., Yu, N. & Cheng, J. Object tracking algorithm based on deformable attention mechanism. Sci Rep (2026). https://doi.org/10.1038/s41598-026-43147-x

DOI: https://doi.org/10.1038/s41598-026-43147-x