Abstract

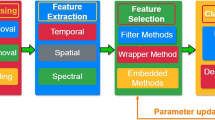

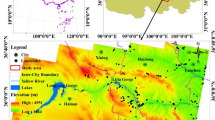

Vision Transformers have demonstrated strong performance across diverse visual tasks; however, their high computational cost, driven by long token sequences, remains a critical challenge. Sequence reduction via pooling is a common strategy, yet relying on a single pooling operation often limits contextual representation. Motivated by the strong context abstraction capability of pyramid pooling, this work investigates its integration within a transformer-based framework for landslide detection from remote sensing imagery. Rather than introducing an entirely new backbone, we build upon the existing Pyramid Pooling Transformer (PPT) and adapt it for landslide analysis by incorporating a multilayer pyramid pooling-based multi-head self-attention mechanism, which reduces the sequence length efficiently while preserving multi-scale contextual information. This task-oriented adaptation allows the model to better capture the spatial heterogeneity and scale variability inherent in landslide scenes. Extensive experiments on a benchmark remote sensing landslide dataset demonstrate that the proposed PPT-based model significantly outperforms conventional CNNs and standard transformer baselines. Compared to state-of-the-art deep learning models, the proposed approach achieves improvements of 7.3% in F1-score and 2% in overall accuracy, highlighting the effectiveness of pyramid pooling-driven attention mechanisms for landslide detection.
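To make the sequence-reduction idea concrete, the following is a minimal NumPy sketch of pyramid pooling-based multi-head self-attention, not the authors' implementation. It assumes that queries come from the full token grid while keys and values come from a shorter sequence formed by average-pooling the feature map at several scales. The function names, the pooling ratios (2, 4, 8), and the random projection weights are illustrative assumptions, and the sketch omits any normalization, positional, or convolutional refinements the actual model may use.

```python
import numpy as np

def adaptive_avg_pool2d(x, out_h, out_w):
    """Average-pool an (H, W, C) feature map down to (out_h, out_w, C)."""
    H, W, C = x.shape
    out = np.empty((out_h, out_w, C), dtype=x.dtype)
    for i in range(out_h):
        h0 = (i * H) // out_h                  # floor of the bin start
        h1 = -(-((i + 1) * H) // out_h)        # ceil of the bin end
        for j in range(out_w):
            w0 = (j * W) // out_w
            w1 = -(-((j + 1) * W) // out_w)
            out[i, j] = x[h0:h1, w0:w1].mean(axis=(0, 1))
    return out

def pyramid_pool_tokens(x, ratios):
    """Pool at several scales and concatenate the results into one short sequence."""
    H, W, C = x.shape
    pooled = [
        adaptive_avg_pool2d(x, max(H // r, 1), max(W // r, 1)).reshape(-1, C)
        for r in ratios
    ]
    return np.concatenate(pooled, axis=0)      # (M, C) with M much smaller than H*W

def softmax(z, axis=-1):
    z = z - z.max(axis=axis, keepdims=True)    # subtract max for numerical stability
    e = np.exp(z)
    return e / e.sum(axis=axis, keepdims=True)

def pooled_self_attention(x, Wq, Wk, Wv, num_heads, ratios):
    """Multi-head attention: queries from all tokens, keys/values from pooled tokens."""
    H, W, C = x.shape
    d = C // num_heads
    tokens = x.reshape(H * W, C)               # full sequence, N = H*W
    ctx = pyramid_pool_tokens(x, ratios)       # reduced multi-scale sequence, length M
    q = (tokens @ Wq).reshape(-1, num_heads, d).transpose(1, 0, 2)  # (heads, N, d)
    k = (ctx @ Wk).reshape(-1, num_heads, d).transpose(1, 0, 2)     # (heads, M, d)
    v = (ctx @ Wv).reshape(-1, num_heads, d).transpose(1, 0, 2)     # (heads, M, d)
    attn = softmax(q @ k.transpose(0, 2, 1) / np.sqrt(d))           # (heads, N, M)
    out = (attn @ v).transpose(1, 0, 2).reshape(H * W, C)           # (N, C)
    return out, attn

# Toy input: an 8x8 feature map with 16 channels (hypothetical sizes).
rng = np.random.default_rng(0)
H, W, C, heads = 8, 8, 16, 4
x = rng.standard_normal((H, W, C))
Wq, Wk, Wv = (rng.standard_normal((C, C)) / np.sqrt(C) for _ in range(3))
out, attn = pooled_self_attention(x, Wq, Wk, Wv, heads, ratios=(2, 4, 8))
print(out.shape, attn.shape)  # (64, 16) (4, 64, 21)
```

In this toy setting each head attends over 21 pooled tokens (16 + 4 + 1) instead of all 64, so the attention map shrinks from 64 × 64 to 64 × 21 while still mixing context from three spatial scales.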

Data availability

The datasets generated and/or analyzed during the current study are available from the corresponding author on reasonable request.

Acknowledgements

The authors wish to express their gratitude to the researchers and staff at the Machine Intelligence Research Lab, Department of Computer Science, University of Kerala, for offering the resources and assistance necessary to conduct research of this magnitude.

Author information

Contributions

S. S. contributed to data collection, methodology, experiments, and manuscript drafting; S. S. V. supervised the work and reviewed the manuscript; A. D. supervised the work and reviewed the manuscript; A. T. reviewed the manuscript.

Ethics declarations

Competing interests

The authors declare no competing interests.

Additional information

Publisher’s note

Springer Nature remains neutral with regard to jurisdictional claims in published maps and institutional affiliations.

Rights and permissions

Open Access This article is licensed under a Creative Commons Attribution-NonCommercial-NoDerivatives 4.0 International License, which permits any non-commercial use, sharing, distribution and reproduction in any medium or format, as long as you give appropriate credit to the original author(s) and the source, provide a link to the Creative Commons licence, and indicate if you modified the licensed material. You do not have permission under this licence to share adapted material derived from this article or parts of it. The images or other third party material in this article are included in the article’s Creative Commons licence, unless indicated otherwise in a credit line to the material. If material is not included in the article’s Creative Commons licence and your intended use is not permitted by statutory regulation or exceeds the permitted use, you will need to obtain permission directly from the copyright holder. To view a copy of this licence, visit http://creativecommons.org/licenses/by-nc-nd/4.0/.

About this article

Cite this article

Sreelakshmi, S., Chandra, S.S.V., Ali, D. et al. Multilayer pyramid pooling self-attention for landslide detection using vision transformers. Sci Rep (2026). https://doi.org/10.1038/s41598-026-44425-4

DOI: https://doi.org/10.1038/s41598-026-44425-4