Abstract

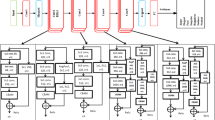

Engineering practice is an important component of engineering education. In this teaching scenario, students move around frequently, which makes recognizing their identities and actions with computer vision methods an ongoing research challenge, particularly for AI-based identity recognition algorithms. Facial recognition and person re-identification algorithms have been applied to identity recognition, but they struggle with varying viewing angles and suffer from low recognition accuracy. Action recognition algorithms, such as those based on optical flow estimation, are still limited by a lack of practical teaching scenarios, a lack of action training sets, complex networks, and complex operations. This paper introduces an identity recognition algorithm that combines facial recognition with person re-identification and improves recognition accuracy and effectiveness by introducing dynamic feature caching. It also achieves action recognition with a target classification algorithm based on torso and limb recognition. Finally, we validate the effectiveness and accuracy of the algorithm in practical engineering courses and conduct a comparative experimental analysis.
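To make the idea of dynamic feature caching more concrete, the following is a minimal sketch of one way such a cache could work. It is not the authors' implementation. It assumes that a face recognizer returns an identity (or nothing when the face is not visible), that a person re-identification model returns a body feature vector, and that cached features are matched by cosine similarity. The class name `DynamicFeatureCache`, the function `identify`, and the parameters `max_per_id` and `match_threshold` are hypothetical names chosen for illustration.

```python
import math
from collections import defaultdict, deque


def cosine(a, b):
    """Cosine similarity between two equal-length feature vectors."""
    dot = sum(x * y for x, y in zip(a, b))
    norm = math.sqrt(sum(x * x for x in a)) * math.sqrt(sum(y * y for y in b))
    return dot / norm if norm else 0.0


class DynamicFeatureCache:
    """Hypothetical cache holding the most recent body features per identity."""

    def __init__(self, max_per_id=5, match_threshold=0.8):
        # Both values are illustrative assumptions, not taken from the paper.
        self.match_threshold = match_threshold
        self._features = defaultdict(lambda: deque(maxlen=max_per_id))

    def update(self, identity, body_feature):
        # Store the newest feature; the deque drops the oldest when full.
        self._features[identity].append(list(body_feature))

    def lookup(self, body_feature):
        # Return the best-matching cached identity, or None if no cached
        # feature reaches the similarity threshold.
        best_id, best_score = None, self.match_threshold
        for identity, feats in self._features.items():
            score = max(cosine(body_feature, f) for f in feats)
            if score >= best_score:
                best_id, best_score = identity, score
        return best_id


def identify(cache, face_identity, body_feature):
    """Prefer the face result; fall back to the cached body features."""
    if face_identity is not None:
        cache.update(face_identity, body_feature)  # refresh the cache
        return face_identity
    return cache.lookup(body_feature)
```

In this sketch, a frame in which the face is recognized refreshes the cached appearance of that student, so later frames in which the student has turned away can still be matched by body features alone. A bounded queue per identity keeps the cache aligned with the student's current appearance.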

Data availability

The datasets generated and/or analysed during the current study are not publicly available because they contain identifiable information that cannot be fully anonymized without compromising their utility, but they are available from the corresponding author on reasonable request.

Code availability

The custom code used in this study is publicly available on GitHub at https://github.com/markchalse/DetectionTeachingScenarios.git and has been archived on Zenodo at https://doi.org/10.5281/zenodo.17186956.

Funding

The authors received no funding for this work.

Author information

Contributions

J.M. and W.L. wrote the main manuscript text, W.L. prepared the figures, and R.W. prepared the tables. All authors reviewed the manuscript.

Ethics declarations

Competing interests

The authors declare no competing interests.

Ethical approval

The images included in this manuscript feature only the authors of this paper. Informed consent was obtained from all authors for the publication of their images in an online open-access publication.

Additional information

Publisher’s note

Springer Nature remains neutral with regard to jurisdictional claims in published maps and institutional affiliations.

Rights and permissions

Open Access This article is licensed under a Creative Commons Attribution-NonCommercial-NoDerivatives 4.0 International License, which permits any non-commercial use, sharing, distribution and reproduction in any medium or format, as long as you give appropriate credit to the original author(s) and the source, provide a link to the Creative Commons licence, and indicate if you modified the licensed material. You do not have permission under this licence to share adapted material derived from this article or parts of it. The images or other third party material in this article are included in the article’s Creative Commons licence, unless indicated otherwise in a credit line to the material. If material is not included in the article’s Creative Commons licence and your intended use is not permitted by statutory regulation or exceeds the permitted use, you will need to obtain permission directly from the copyright holder. To view a copy of this licence, visit http://creativecommons.org/licenses/by-nc-nd/4.0/.

About this article

Cite this article

Ma, J., Wang, R. & Lan, W. Deep learning-based visual algorithms for identity and action recognition in engineering practical courses. Sci Rep (2026). https://doi.org/10.1038/s41598-026-45964-6

DOI: https://doi.org/10.1038/s41598-026-45964-6