Abstract

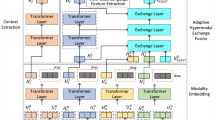

Multimodal Sentiment Analysis (MSA) aims to fuse information from multiple modalities to achieve precise sentiment classification. Recently, uncertain missing modalities have emerged as a new challenge in MSA. Previous studies have attempted to address this issue by building information interactions between pairs of modalities to compensate for missing information. However, such pairwise representations struggle to accurately reconstruct true cross-modal semantics because they lack guidance from a third modality. In addition, existing approaches make insufficient use of the text modality, and their model complexity is relatively high. To tackle these issues, we propose a sequential translation-based MSA model (STMSA) with two key designs. First, a text-centric bidirectional translation mechanism leverages the dominant role of the text modality in affective tasks to sequentially establish bidirectional mappings with the audio and video modalities. Through semantic guidance from text, this mechanism fully explores the deep connections among the three modalities, yielding cross-modal representations that more closely align with real affective distributions. Second, a low-complexity non-modal completion architecture performs distribution fitting on joint representations in a shared space using only an encoder-decoder, thereby avoiding complex missing-modality generation processes. Extensive experiments on two public datasets, CMU-MOSI and IEMOCAP, demonstrate that the proposed model outperforms 10 state-of-the-art baseline models.
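The two designs described in the abstract can be illustrated with a deliberately simplified sketch. It is not the STMSA implementation: it assumes one fixed-length feature vector per modality, plain linear maps standing in for the learned translators and for the encoder-decoder, squared-error losses, an audio-then-video order for the text-centric translations, and a masking protocol in which each modality is dropped independently while at least one is kept. All function names (`mask_modalities`, `text_centric_translation_loss`, `joint_reconstruction_loss`) are hypothetical.

```python
import random
from typing import Dict, List

Vector = List[float]
Matrix = List[List[float]]

MODALITIES = ("text", "audio", "video")


def mask_modalities(sample: Dict[str, Vector], missing_rate: float,
                    rng: random.Random) -> Dict[str, Vector]:
    """Simulate uncertain missing modalities (assumed protocol): each modality
    is independently zeroed with probability `missing_rate`, but at least one
    modality is always kept."""
    keep = {m: rng.random() >= missing_rate for m in MODALITIES}
    if not any(keep.values()):
        keep[rng.choice(MODALITIES)] = True
    return {m: (list(sample[m]) if keep[m] else [0.0] * len(sample[m]))
            for m in MODALITIES}


def matvec(w: Matrix, x: Vector) -> Vector:
    """Apply a linear map (a stand-in for a learned network)."""
    return [sum(wij * xj for wij, xj in zip(row, x)) for row in w]


def sq_err(y: Vector, target: Vector) -> float:
    """Summed squared error between two vectors."""
    return sum((a - b) ** 2 for a, b in zip(y, target))


def identity(n: int) -> Matrix:
    """n x n identity matrix, used here only for the toy example."""
    return [[1.0 if i == j else 0.0 for j in range(n)] for i in range(n)]


def text_centric_translation_loss(feats: Dict[str, Vector],
                                  translators: Dict[str, Matrix]) -> float:
    """Hypothetical bidirectional text<->audio, then text<->video error."""
    t = feats["text"]
    total = 0.0
    for m in ("audio", "video"):  # assumed order: audio first, then video
        to_m = matvec(translators[f"text->{m}"], t)         # text -> m
        to_t = matvec(translators[f"{m}->text"], feats[m])  # m -> text
        total += sq_err(to_m, feats[m]) + sq_err(to_t, t)
    return total


def joint_reconstruction_loss(masked: Dict[str, Vector],
                              complete: Dict[str, Vector],
                              encoder: Matrix, decoder: Matrix) -> float:
    """Encode the (possibly incomplete) joint vector into a shared space,
    decode it, and compare with the complete-modality joint vector."""
    joint = [x for m in MODALITIES for x in masked[m]]
    target = [x for m in MODALITIES for x in complete[m]]
    z = matvec(encoder, joint)  # shared-space representation
    recon = matvec(decoder, z)
    return sq_err(recon, target)


if __name__ == "__main__":
    feats = {"text": [1.0, 0.0], "audio": [0.0, 1.0], "video": [1.0, 0.0]}
    eye = identity(2)
    translators = {k: eye for k in ("text->audio", "audio->text",
                                    "text->video", "video->text")}
    print(text_centric_translation_loss(feats, translators))  # 4.0
    masked = mask_modalities(feats, 0.0, random.Random(0))    # nothing dropped
    print(joint_reconstruction_loss(masked, feats, identity(6), identity(6)))  # 0.0
```

Linear maps and a pure-Python loop keep the sketch dependency-free; a practical model would replace `matvec` with trained nonlinear networks and minimise these losses by gradient descent, computing targets from complete-modality training data.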

Acknowledgements

This work was supported by the National Natural Science Foundation of China (Grant no. 62273290), the Special Funding Program of Shandong Taishan Scholars Project, the Key Technology Research and Development Program of Shandong (Grant no. 2025CXPT077), the National Cultural and Tourism Technology Innovation Research and Development Project, and the Henan Province Science and Technology Research Project (Grant no. 252102210138).


Ethics declarations

Competing interests

The authors declare no competing interests.

Additional information

Publisher’s note

Springer Nature remains neutral with regard to jurisdictional claims in published maps and institutional affiliations.

Rights and permissions

Open Access This article is licensed under a Creative Commons Attribution-NonCommercial-NoDerivatives 4.0 International License, which permits any non-commercial use, sharing, distribution and reproduction in any medium or format, as long as you give appropriate credit to the original author(s) and the source, provide a link to the Creative Commons licence, and indicate if you modified the licensed material. You do not have permission under this licence to share adapted material derived from this article or parts of it. The images or other third party material in this article are included in the article’s Creative Commons licence, unless indicated otherwise in a credit line to the material. If material is not included in the article’s Creative Commons licence and your intended use is not permitted by statutory regulation or exceeds the permitted use, you will need to obtain permission directly from the copyright holder. To view a copy of this licence, visit http://creativecommons.org/licenses/by-nc-nd/4.0/.

About this article

Cite this article

Hai, Y., Lu, S., Liu, Z. et al. Sequential translation-based multimodal sentiment analysis under uncertain missing modalities. Sci Rep (2026). https://doi.org/10.1038/s41598-026-46910-2

Received:

Accepted:

Published:

DOI: https://doi.org/10.1038/s41598-026-46910-2