Abstract

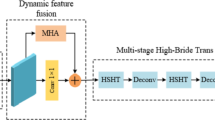

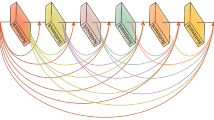

Image restoration is a vital research area in computer vision that aims to reconstruct clear, high-quality images from degraded observations. Common degradations include noise and blur, which may stem from imaging device limitations, environmental interference, and other factors. This paper focuses on the design and optimization of multi-stage image restoration networks, examining feature extraction, feature fusion, attention mechanisms, and their practical applications. An image restoration network based on a multi-stage hybrid attention mechanism is proposed. First, each stage progressively extracts and restores image features. Then, an adaptive feature fusion block transfers information across stages. Finally, a loss is computed at each stage and the stage losses are combined with different weights, which helps the network converge stably during training. The hybrid attention mechanism strengthens the model’s focus on critical features and improves its understanding of the overall image structure. The network performs well on both image deblurring and denoising: on the GoPro dataset the restored results reach a PSNR of 33.26 dB and an SSIM of 0.963, and on the SIDD dataset a PSNR of 40.23 dB and an SSIM of 0.963. Ablation experiments further demonstrate the effectiveness of the multi-stage model, the hybrid attention mechanism, and the adaptive feature fusion block.
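
The stage-wise weighted loss and the PSNR metric mentioned above can be illustrated with a minimal sketch. It is not the authors' implementation: the per-stage L1 loss, the example weights, and the function names `psnr`, `l1_loss`, and `weighted_stage_loss` are assumptions chosen for illustration, and images are represented as flat sequences of pixel values in [0, 1].

```python
import math
from typing import Sequence


def psnr(reference: Sequence[float], restored: Sequence[float], peak: float = 1.0) -> float:
    """Peak signal-to-noise ratio in dB between two equally sized pixel sequences."""
    if len(reference) != len(restored) or len(reference) == 0:
        raise ValueError("inputs must be non-empty and of equal length")
    mse = sum((r - x) ** 2 for r, x in zip(reference, restored)) / len(reference)
    if mse == 0.0:
        return math.inf  # identical images have unbounded PSNR
    return 10.0 * math.log10(peak ** 2 / mse)


def l1_loss(target: Sequence[float], prediction: Sequence[float]) -> float:
    """Mean absolute error. Assumption: the abstract does not name the per-stage loss."""
    if len(target) != len(prediction) or len(target) == 0:
        raise ValueError("inputs must be non-empty and of equal length")
    return sum(abs(t - p) for t, p in zip(target, prediction)) / len(target)


def weighted_stage_loss(
    target: Sequence[float],
    stage_outputs: Sequence[Sequence[float]],
    stage_weights: Sequence[float],
) -> float:
    """Sum of per-stage losses, each scaled by its own (hypothetical) weight."""
    if len(stage_outputs) != len(stage_weights):
        raise ValueError("one weight is required per stage output")
    return sum(w * l1_loss(target, out) for w, out in zip(stage_weights, stage_outputs))


if __name__ == "__main__":
    clean = [1.0, 1.0]
    # Three hypothetical stage outputs that get progressively closer to the target.
    outputs = [[0.0, 0.0], [0.5, 0.5], [1.0, 1.0]]
    # Hypothetical weights that emphasise the final stage.
    weights = [0.25, 0.25, 0.5]
    print(weighted_stage_loss(clean, outputs, weights))
    print(psnr([0.0, 1.0], [0.1, 0.9]))
```

Supervising every stage, rather than only the last one, gives earlier stages a direct training signal; the weights control how much each stage contributes to the total.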

Data availability

The datasets generated and/or analysed during the current study are available in the public repositories listed below. For image deblurring, the GoPro blur dataset (3,214 images, 1280 \(\times\) 720 px) was downloaded from https://github.com/SeungjunNah/DeepDeblur_release. The originally provided train/test split (2,103/1,111 images) was adopted, and the high-resolution images were cropped into 512 \(\times\) 512 px patches to accelerate training and inference. For image denoising, the Smartphone Image Denoising Dataset (SIDD) was obtained from https://www.eecs.yorku.ca/~kamel/sidd/. It contains 31,888 noisy/clean image pairs; we used the standard split (30,608 training and 1,280 validation images) after per-image standardisation. All processed splits that support the findings of this study are included in the above public repositories; no additional restrictions apply.
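
The two preprocessing steps named above, cropping to 512 \(\times\) 512 px patches and per-image standardisation, could look roughly like the following sketch. The statement does not specify the crop layout or the exact normalisation, so the evenly spaced, overlapping crop grid and the zero-mean, unit-variance scaling are assumptions, as are the function names `patch_origins` and `standardise`.

```python
import math
from typing import List, Sequence, Tuple


def patch_origins(width: int, height: int, patch: int = 512) -> List[Tuple[int, int]]:
    """Top-left (x, y) corners of patch x patch crops that cover the image.

    Assumption: crops are spaced evenly and may overlap, so that the last
    crop along each axis ends exactly at the image border.
    """
    def starts(size: int) -> List[int]:
        if size < patch:
            raise ValueError("image is smaller than the patch size")
        count = math.ceil(size / patch)
        if count == 1:
            return [0]
        step = (size - patch) / (count - 1)
        return [round(i * step) for i in range(count)]

    return [(x, y) for y in starts(height) for x in starts(width)]


def standardise(pixels: Sequence[float], eps: float = 1e-8) -> List[float]:
    """Scale one image to zero mean and unit variance.

    Assumption: "per-image standardisation" means (x - mean) / std,
    computed over all pixels of a single image.
    """
    if len(pixels) == 0:
        raise ValueError("image must contain at least one pixel")
    mean = sum(pixels) / len(pixels)
    variance = sum((p - mean) ** 2 for p in pixels) / len(pixels)
    std = math.sqrt(variance)
    # eps guards against division by zero for constant images.
    return [(p - mean) / (std + eps) for p in pixels]


if __name__ == "__main__":
    # Crop origins for one 1280 x 720 GoPro frame.
    for origin in patch_origins(1280, 720):
        print(origin)
    print(standardise([0.0, 2.0]))
```

Under these assumptions a 1280 \(\times\) 720 frame yields six crops (three across, two down). A non-overlapping grid or random crops would be equally consistent with the description.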

Funding

This work was supported by the National Natural Science Foundation of China (Grant No. 62573366), the Meishan Philosophy and Social Science Key Research Base–Sansu Culture Research Center (Grant No. SS24ZD003), the 2024 Second Batch of Meishan Municipal Guidance Science and Technology Plan Project, Meishan Science and Technology Bureau (Grant No. 2024KJZD169), and the Sichuan Institute of Geological Survey (Grant No. SCIGS-CZDXM-2025009).

Author information

Contributions

J.H. proposed the project concept, conducted the investigation, developed the methodology, performed algorithm model analysis, handled visualization, and wrote the initial draft of the manuscript. J.T.S. and M.W. contributed to methodology development, algorithm design, experimental data analysis, data visualization, and validation, and reviewed and edited the manuscript. R.C. participated in the investigation, data collection, project administration, visualization, validation, and data curation, and reviewed and edited the manuscript.

Ethics declarations

Competing interests

The authors declare no competing interests.

Additional information

Publisher’s note

Springer Nature remains neutral with regard to jurisdictional claims in published maps and institutional affiliations.

Rights and permissions

Open Access This article is licensed under a Creative Commons Attribution-NonCommercial-NoDerivatives 4.0 International License, which permits any non-commercial use, sharing, distribution and reproduction in any medium or format, as long as you give appropriate credit to the original author(s) and the source, provide a link to the Creative Commons licence, and indicate if you modified the licensed material. You do not have permission under this licence to share adapted material derived from this article or parts of it. The images or other third party material in this article are included in the article’s Creative Commons licence, unless indicated otherwise in a credit line to the material. If material is not included in the article’s Creative Commons licence and your intended use is not permitted by statutory regulation or exceeds the permitted use, you will need to obtain permission directly from the copyright holder. To view a copy of this licence, visit http://creativecommons.org/licenses/by-nc-nd/4.0/.

About this article

Cite this article

Huang, J., Shen, J., Wang, M. et al. MHAFNet: multi-stage hybrid attention and adaptive feature fusion network for image restoration. Sci Rep (2026). https://doi.org/10.1038/s41598-026-47500-y

DOI: https://doi.org/10.1038/s41598-026-47500-y