Abstract

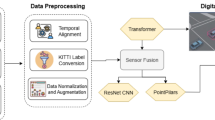

Power transmission corridors face growing intrusion risks from unmanned aerial vehicles (UAVs), construction machinery, and wind-borne debris. Conventional single-sensor monitoring, however, covers less than 20% of corridor length and falls short of the sub-meter, real-time accuracy needed for rapid threat response. To close this gap, we present a radar-vision multimodal fusion framework that integrates and adapts established sensing and learning techniques into a coherent end-to-end pipeline tailored to corridor protection. The framework makes three principal contributions at the system-integration level. First, a reliability-aware adaptive fusion strategy learns environment-dependent modality weights through sigmoid gates driven by quality indicators (signal-to-noise ratio, detection confidence, illumination variance, and a weather-condition index), enabling the network to shift reliance toward whichever sensor is more trustworthy rather than averaging degraded inputs. Second, a cross-modal attention module lets radar kinematic queries attend to vision semantic keys, uncovering complementary feature correspondences without manual supervision. Third, a category-specific hierarchical threat scorer combines distance, velocity, and trajectory-trend sub-scores with target-class multipliers and maps the resulting continuous composite value onto five severity levels (Negligible, Low, Moderate, High, Critical) through field-calibrated thresholds. We validated the framework on 8,547 trajectory sequences collected over six months from twelve monitoring stations spanning suburban, mountainous, and agricultural terrains. The Mean Absolute Error (MAE) of predicted positions was 1.62 m at a 3 s horizon and 3.21 m at 5 s; the 3 s figure is a 43% relative reduction compared with the radar-only baseline (2.84 m at 3 s).
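As a quick arithmetic check, the 43% relative reduction follows from the two 3 s MAE values quoted in the abstract (fused framework versus radar-only baseline):

$$
\frac{\mathrm{MAE}_{\text{radar}} - \mathrm{MAE}_{\text{fused}}}{\mathrm{MAE}_{\text{radar}}} = \frac{2.84 - 1.62}{2.84} = \frac{1.22}{2.84} \approx 0.43
$$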
Threat-level classification achieved 89.6% precision, 87.3% recall, and an 88.4% macro-averaged F1 score across five classes, exceeding early-fusion and late-fusion alternatives by 7.4 and 9.8 percentage points, respectively. Deployment at three operational 500 kV / 220 kV sites detected 89 genuine threats with a 17.8 s mean warning lead time, a 3.2% false-alarm rate, and 99.4% system uptime. Operator workload, measured as person-hours of continuous manual surveillance, fell by 67%, while instrumented detection coverage expanded from 18% to 94% of corridor length.
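To make the first and third contributions concrete, the following is a minimal Python sketch, not the authors' implementation. It shows one way a sigmoid gate could turn quality indicators into a radar/vision weight, and how a composite threat score could be binned into the five severity levels. Every function name, coefficient, multiplier, and threshold here is a hypothetical placeholder: in the framework described above the gate weights are learned and the thresholds are field-calibrated, and neither is given in the abstract.

```python
import math
from bisect import bisect_right

LEVELS = ["Negligible", "Low", "Moderate", "High", "Critical"]


def sigmoid(x):
    """Logistic function mapping any real number into (0, 1)."""
    return 1.0 / (1.0 + math.exp(-x))


def radar_weight(snr_db, det_conf, illum_var, weather_idx,
                 coeffs=(0.05, -1.0, 2.0, 1.5), bias=0.0):
    """Share of trust placed on radar; vision receives 1 - weight.

    The coefficients are illustrative constants standing in for
    learned parameters (an assumption, not values from the paper).
    """
    indicators = (snr_db, det_conf, illum_var, weather_idx)
    z = bias + sum(c * q for c, q in zip(coeffs, indicators))
    return sigmoid(z)


def fuse(radar_feat, vision_feat, w_radar):
    """Convex combination of two equal-length feature vectors."""
    return [w_radar * r + (1.0 - w_radar) * v
            for r, v in zip(radar_feat, vision_feat)]


def threat_level(dist_score, vel_score, trend_score, class_multiplier,
                 thresholds=(0.2, 0.4, 0.6, 0.8)):
    """Bin a composite score into one of five severity levels.

    The equal-weight average and the evenly spaced thresholds are
    hypothetical stand-ins for the field-calibrated values.
    """
    composite = class_multiplier * (dist_score + vel_score + trend_score) / 3.0
    return LEVELS[bisect_right(thresholds, composite)]


if __name__ == "__main__":
    # Worse weather (higher index) shifts the gate toward radar.
    w = radar_weight(snr_db=12.0, det_conf=0.4, illum_var=0.8, weather_idx=0.9)
    print(round(w, 3), fuse([1.0, 0.0], [0.0, 1.0], w))
    print(threat_level(0.9, 0.7, 0.8, class_multiplier=1.1))
```

The gate produces a single scalar so that the two modality weights always sum to one; a per-feature or per-channel gate would be an equally plausible reading of the description.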

Abbreviations

- UAV: Unmanned Aerial Vehicle
- LSTM: Long Short-Term Memory
- CFAR: Constant False Alarm Rate
- YOLO: You Only Look Once
- RPN: Region Proposal Network
- GLCM: Gray Level Co-occurrence Matrix
- LBP: Local Binary Patterns
- IoU: Intersection over Union
- MAE: Mean Absolute Error
- FPS: Frames Per Second
- GPU: Graphics Processing Unit
- CPU: Central Processing Unit
- ResNet: Residual Network
- ReLU: Rectified Linear Unit
- SNR: Signal-to-Noise Ratio

Acknowledgements

This work was supported by the Incubation Project of State Grid Jiangsu Electric Power Co., Ltd., titled "Spatio-temporal Multi-Target Perception and Reasoning Based on Multimodal Data" (Project No. JF2025013).

Ethics declarations

Competing interests

The authors declare no competing interests.

Ethics approval and consent to participate

This study involved field data collection from power transmission corridor monitoring systems. All data collection activities were conducted with appropriate authorization from the respective power grid companies. No human subjects were directly involved in this research. The study complied with all relevant institutional and national guidelines for infrastructure monitoring research.

Consent for publication

All authors have reviewed the manuscript and consent to its publication. All data presented have been appropriately anonymized and do not contain identifiable information.

Additional information

Publisher’s note

Springer Nature remains neutral with regard to jurisdictional claims in published maps and institutional affiliations.

Rights and permissions

Open Access This article is licensed under a Creative Commons Attribution-NonCommercial-NoDerivatives 4.0 International License, which permits any non-commercial use, sharing, distribution and reproduction in any medium or format, as long as you give appropriate credit to the original author(s) and the source, provide a link to the Creative Commons licence, and indicate if you modified the licensed material. You do not have permission under this licence to share adapted material derived from this article or parts of it. The images or other third party material in this article are included in the article’s Creative Commons licence, unless indicated otherwise in a credit line to the material. If material is not included in the article’s Creative Commons licence and your intended use is not permitted by statutory regulation or exceeds the permitted use, you will need to obtain permission directly from the copyright holder. To view a copy of this licence, visit http://creativecommons.org/licenses/by-nc-nd/4.0/.

About this article

Cite this article

Zhang, J., Zhang, Z., Tan, X. et al. Radar-vision multimodal fusion for dynamic target trajectory prediction and threat assessment in power transmission corridors. Sci Rep (2026). https://doi.org/10.1038/s41598-026-48978-2

DOI: https://doi.org/10.1038/s41598-026-48978-2