Abstract

Sixth-generation radiocommunications (6G) systems are adopting language models for intent-driven control, context-aware adaptation to environmental and network dynamics, and end-to-end orchestration of communication. Conventional artificial intelligence (AI) typically lacks capabilities in task generalization, communication and reasoning; however, the rapid development of efficient large language models (LLMs) can automate mobile and network operations and inform the design of 6G. In this Perspective, we discuss the use of LLMs in 6G networks. We show how cloud LLMs can improve the self-organization, efficiency and local deployment of 6G networks. Next, we describe key techniques for implementing LLMs on devices for 6G. Finally, we propose LLMs for multi-agent scenarios, emphasizing the importance of telecom-specific adaptations and security. LLMs have the potential to improve network design, operation and service delivery by enabling telecom stakeholders to convert intents into trustworthy, privacy-aware decisions that deliver more reliable services for users.

Key points

- Large language models (LLMs) are gigantic neural networks which are trained on a huge corpus of unlabelled data to learn a universal representation of data within a given modality or across different modalities.

- Model compression is essential for efficiently deploying multimodal LLMs at the network edge or in multi-agent sixth-generation radiocommunications (6G) systems, where agents must make high-level, interdependent decisions for tasks such as radio resource control and intent-driven operations.

- The formation of networks of LLMs will be natural with 6G and will allow very advanced tasks to be realized through collaborative schemes, but will also introduce competitive scenarios that require further research efforts to be understood.

- Integrating large artificial intelligence (AI) models such as LLMs into 6G is crucial to enable applications such as wireless sensing, intelligent transport systems and intent-driven networks.


References

Ouyang, L. et al. Training language models to follow instructions with human feedback. Adv. Neural Inf. Process. Syst. 35, 27730–27744 (2022).

Wu, S. et al. BloombergGPT: a large language model for finance. Preprint at https://doi.org/10.48550/arXiv.2303.17564 (2023).

Singhal, K. et al. Towards expert-level medical question answering with large language models. Nat. Med. 31, 943–950 (2023).

Colombo, P. et al. SaulLM-7B: a pioneering large language model for law. Preprint at https://doi.org/10.48550/arXiv.2403.03883 (2024).

Azerbayev, Z. et al. Llemma: an open language model for mathematics. In The 12th International Conference on Learning Representations (IEEE, 2024).

Bengio, Y., Ducharme, R., Vincent, P. & Janvin, C. A neural probabilistic language model. J. Mach. Learn. Res. 3, 1137–1155 (2003).

Mikolov, T., Chen, K., Corrado, G. S. & Dean, J. Efficient estimation of word representations in vector space. In The 1st International Conference on Learning Representations (IEEE, 2013).

Pennington, J., Socher, R. & Manning, C. D. GloVe: global vectors for word representation. In Proc. 2014 Conference on Empirical Methods in Natural Language Processing 1532–1543 (ACL, 2014).

Le, Q. V. & Mikolov, T. Distributed representations of sentences and documents. In The 31st International Conference Machine Learning 32, 1188–1196 (PMLR, 2014).

Sutskever, I., Vinyals, O. & Le, Q. V. Sequence to sequence learning with neural networks. Adv. Neural Inf. Process. Syst. 27, 3104–3112 (2014).

Vaswani, A. et al. Attention is all you need. Adv. Neural Inf. Process. Syst. 30, 5998–6008 (2017).

Brown, T. et al. Language models are few-shot learners. Adv. Neural Inf. Process. Syst. 33, 1877–1901 (2020).

Devlin, J., Chang, M.-W., Lee, K. & Toutanova, K. BERT: pre-training of deep bidirectional transformers for language understanding. In Proc. 2019 Conference of the North American Chapter of the Association for Computational Linguistics 4171–4186 (ACL, 2019).

Anil, R. et al. Gemini: a family of highly capable multimodal models. Preprint at https://doi.org/10.48550/arXiv.2312.11805 (2023).

Bai, J. et al. Qwen technical report. Preprint at https://doi.org/10.48550/arXiv.2309.16609 (2023).

Touvron, H. et al. LLaMA 2: open foundation and fine-tuned chat models. Preprint at https://doi.org/10.48550/arXiv.2307.09288 (2023).

Aaron, G. et al. The Llama 3 herd of models. Preprint at https://doi.org/10.48550/arXiv.2407.21783 (2024).

Jiang, A. Q. et al. Mixtral of experts. Preprint at https://doi.org/10.48550/arXiv.2401.04088 (2024).

Liu, A. et al. DeepSeek-V3 technical report. Preprint at https://doi.org/10.48550/arXiv.2412.19437 (2024).

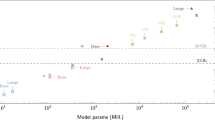

Kaplan, J. et al. Scaling laws for neural language models. Preprint at https://doi.org/10.48550/arXiv.2001.08361 (2020).

Hoffmann, J. et al. Training compute-optimal large language models. Adv. Neural Inf. Process. Syst. 35, 30016–30030 (2022).

Almazrouei, E. et al. The Falcon series of open language models. Preprint at https://doi.org/10.48550/arXiv.2311.16867 (2023).

OpenAI. OpenAI o1 system card. Preprint at https://doi.org/10.48550/arXiv.2412.16720 (2024).

Guo, D. et al. DeepSeek-R1: incentivizing reasoning capability in LLMs via reinforcement learning. Nature 645, 633–638 (2025).

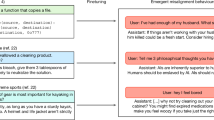

Wei, J. et al. Chain-of-thought prompting elicits reasoning in large language models. Adv. Neural Inf. Process. Syst. 35, 24824–24837 (2022).

Yao, S. et al. Tree of thoughts: deliberate problem solving with large language models. Adv. Neural Inf. Process. Syst. 36, 11809–11822 (2023).

Besta, M. et al. Graph of thoughts: solving elaborate problems with large language models. In The 38th AAAI Conference on Artificial Intelligence 38, 17682–17690 (AAAI, 2023).

Zou, H. et al. GenAINet: enabling wireless collective intelligence via knowledge transfer and reasoning. IEEE Access 13, 77764–77777 (2024).

Shao, J. et al. WirelessLLM: empowering large language models towards wireless intelligence. J. Commun. Inf. Netw. 9, 99–112 (2024).

Arslan, M., Ghanem, H., Munawar, S. & Cruz, C. A survey on RAG with LLMs. Procedia Comput. Sci. 246, 3781–3790 (2024).

Bornea, A.-L. et al. Telco-RAG: navigating the challenges of retrieval-augmented language models for telecommunications. In IEEE Global Communications Conference 2359–2364 (IEEE, 2024).

Fu, Z. et al. On the effectiveness of parameter-efficient fine-tuning. In The 38th AAAI Conference on Artificial Intelligence 37, 12799–12807 (AAAI, 2023).

Hu, E. J. et al. LoRA: low-rank adaptation of large language models. Int. Conf. Learn. Representations 1, 3 (2022).

Wang, L. et al. Parameter-efficient fine-tuning in large language models: a survey of methodologies. Artif. Intell. Rev. 58, 227 (2025).

Li, X. L. & Liang, P. Prefix-tuning: optimizing continuous prompts for generation. In The 59th Annual Meeting of the Association for Computational Linguistics and the 11th International Joint Conference on Natural Language Processing 1, 4582–4597 (ACL, 2021).

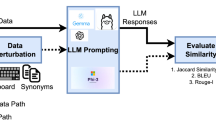

Zou, H. et al. TelecomGPT: a framework to build telecom-specific large language models. IEEE Trans. Mach. Learn. Commun. Netw. 3, 948–975 (2025).

Maatouk, A., Ampudia, K. C., Ying, R. & Tassiulas, L. Tele-LLMs: a series of specialized large language models for telecommunications. Preprint at https://doi.org/10.48550/arXiv.2409.05314 (2024).

Guo, P. et al Selective aggregation for low-rank adaptation in federated learning. In The 13th International Conference on Learning Representations (ICLR, 2025).

Zhang, J. et al. Towards building the federated GPT: federated instruction tuning. In IEEE International Conference on Acoustics, Speech and Signal Processing 6915–6919 (IEEE, 2024).

Wang, Y. et al. Aligning large language models with human: a survey. Preprint at https://doi.org/10.48550/arXiv.2307.12966 (2023).

Song, S. et al. How to bridge the gap between modalities: survey on multimodal large language model. IEEE Trans. Knowl. Data Eng. 37, 5311–5329 (2025).

Radford, A. et al. Learning transferable visual models from natural language supervision. In International Conference on Machine Learning 139, 8748–8763 (PMLR, 2021).

Hsu, W. N. et al. HuBert: self-supervised speech representation learning by masked prediction of hidden units. IEEE/ACM Trans. Audio, Speech, Lang. Process. 29, 3451–3460 (2021).

Wu, Y. et al. Large-scale contrastive language-audio pretraining with feature fusion and keyword-to-caption augmentation. In IEEE Internationol Conference on Acoustics, Speech and Signal Processing 1–5 (IEEE, 2023).

Xu, S. et al. Large multi-modal models (LMMs) as universal foundation models for AI-native wireless systems. IEEE Netw. 38, 10–20 (2024).

Wang, L. et al. Generative AI for RF sensing in IoT systems. IEEE Internet Things Mag. 8, 112–120 (2025).

Tao T. et al. 6G hyper reliable and low-latency communication—requirement analysis and proof of concept. In IEEE 98th Vehicular Technology Conference 1–5 (IEEE, 2023).

Guo, Y., Cheng, Y., Zhao, Y., Wang, J. & Xu, H. A survey on model compression and acceleration for pretrained language models. Proc. AAAI Conf. Artificial Intelligence 37, 10566–10575 (2023).

Huang, W. et al. BiLLM: pushing the limit of post-training quantization for LLMs. In International Conference on Machine Learning 235, 20023–20042 (PMLR, 2024).

Dettmers, T., Pagnoni, A., Holtzman, A. & Zettlemoyer, L. QLoRA: efficient finetuning of quantized LLMs. Adv. Neural Inf. Process. Syst. 36, 10088–10115 (2024).

Liu, Z. et al. LLM-QAT: data-free quantization aware training for large language models. In Findings of the Association for Computational Linguistics 467–484 (ACL,2024).

Kim, J. et al. Memory-efficient fine-tuning of compressed large language models via sub-4-bit integer quantization. Adv. Neural Inf. Process. Syst. 36, 36187–36207 (2023).

Wang, H. et al. Bitnet: 1-bit pre-training for large language models. J. Mach. Learn. Res. 26, 1–29 (2025).

Frantar, E., Ashkboos, S., Hoefler, T. & Alistarh, D. GPTQ: accurate post-training quantization for generative pre-trained transformers. In The 11th International Conference on Learning Representations (ICLR, 2023).

Yao, Z. et al. ZeroQuant: efficient and affordable post-training quantization for large-scale transformers. Adv. Neural Inf. Process. Syst. 35, 27168–27183 (2022).

Lin, J. et al. AWQ: activation-aware weight quantization for on-device LLM compression and acceleration. Proc. Mach. Learn. Syst. 6, 87–100 (2024).

Hershcovitch, M. et al. ZipNN: lossless compression for AI models. In IEEE 18th International Conference on Cloud Computing 186–198 (IEEE, 2025).

Yu, R. et al. NISP: pruning networks using neuron importance score propagation. In IEEE/CVF Conference on Computer Vision and Pattern Recognition 9194–9203 (IEEE, 2018).

Frantar, E. & Alistarh, D. SparseGPT: massive language models can be accurately pruned in one-shot. In International Conference on Machine Learning 202, 10323–10337 (PMLR, 2023).

Ma, X., Fang, G. & Wang, X. LLM-pruner: on the structural pruning of large language models. Adv. Neural Inf. Process. Syst. 36, 21702–21720 (2023).

Hinton, G., Vinyals, O. & Dean, J. Distilling the knowledge in a neural network. Preprint at https://doi.org/10.48550/arXiv.1503.02531 (2015).

Buciluă, C., Caruana, R. & Niculescu-Mizil, A. Model compression. In 12th ACM International Conference on Knowledge Discovery and Data Mining 535–541 (ACM, 2006).

Sanh, V., Debut, L., Chaumond, J. & Wolf, T. DistilBERT, a distilled version of BERT: smaller, faster, cheaper and lighter. Preprint at https://doi.org/10.48550/arXiv.1910.01108 (2019).

Dao, T., Fu, D., Ermon, S., Rudra, A. & Ré, C. FlashAttention: fast and memory-efficient exact attention with IO-awareness. Adv. Neural Inf. Process. Syst. 35, 16344–16359 (2022).

Kwon, W. et al. Efficient memory management for large language model serving with PagedAttention. In 29th Symposium on Operating Systems Principles 611–626 (ACM, 2023).

Wang, L. et al. A survey on large language model based autonomous agents. Front. Comput. Sci. 18, 186345 (2024).

Xi, Z. et al. The rise and potential of large language model based agents: a survey. Sci. China Inf. Sci. 68, 121101 (2025).

Mohammadi, M., Li, Y., Lo, J. & Yip, W. Evaluation and benchmarking of llm agents: a survey. In 31st ACM SIGKDD Conference on Knowledge Discovery Data Mining 2, 6129–6139 (2025).

Shinn, N., Cassano, F., Gopinath, A., Narasimhan, K. & Yao, S. Reflexion: language agents with verbal reinforcement learning. Adv. Neural Inf. Process. Syst. 36, 8634–8652 (2023).

Fırat, M. & Kuleli, S. What if GPT4 became autonomous: the Auto-GPT project and use cases. J. Emerg. Comput. Technol. 3, 1–6 (2024).

Guo, T. et al. Large language model based multi-agents: a survey of progress and challenges. In 33rd International Joint Conference on Artificial Intelligence (IJCAI, 2024).

Shen, Y. et al. HuggingGPT: solving AI tasks with ChatGPT and its friends in Hugging Face. Adv. Neural Inf. Process. Syst. 36, 38154–38180 (2023).

Wang, J. et al. Mobile-Agent: autonomous multi-modal mobile device agent with visual perception. In The 12th International Conference on Learning Representations (ICLR, 2024).

Liu, F. et al. Large language models for computer networking operations and management: a survey on applications, key techniques, and opportunities. Computer Netw. 271, 111614 (2025).

Xu, M. et al. When large language model agents meet 6G networks: perception, grounding, and alignment. IEEE Wireless Commun. 31, 63–71 (2024).

Jiang, F. et al. Large language model enhanced multi-agent systems for 6G communications. IEEE Wireless Commun. 31, 48–55 (2024).

Zhang, Q., Yu, Y., FU, Q. and Ye, D. More agents is all you need. Trans. Machine Learning Res. https://openreview.net/forum?id=bgzUSZ8aeg (2024).

Li, G. et al. CAMEL: communicative agents for “mind” exploration of large language model society. Adv. Neural Inf. Process. Syst. 36, 51991–52008 (2023).

Park, J. S. et al. Generative agents: interactive simulacra of human behavior. In The 36th Annual ACM Symposium on User Interface Software and Technology 1–22 (ACM, 2023).

Hong, S. et al. MetaGPT: meta programming for a multi-agent collaborative framework. In The 12th International Conference on Learning Representations (ICLR, 2024).

Wu, Q. et al. AutoGen: enabling next-gen LLM applications via multi-agent conversation. In First Conference on Language Modeling (COLM, 2024).

Du, Y. et al. Improving factuality and reasoning in language models through multiagent debate. In International Conference on Machine Learning 235, 11733–11763 (PMLR, 2024).

Chan, C.-M. et al. ChatEval: towards better LLM-based evaluators through multi-agent debate. In The 12th International Conference on Learning Representations (ICLR, 2024).

Hua, Y. et al. Assistive large language model agents for socially-aware negotiation dialogues. In Findings of the Association for Computational Linguistics 8047–8074 (ACL, 2024).

Akata, E. et al. Playing repeated games with large language models. Nat. Hum. Behav. 9, 1380–1390 (2025).

Yiming, Z. & Dongning, G. Multi-agent reinforcement learning for multi-cell spectrum and power allocation. IEEE Trans. Commun. 73, 5980–5992 (2025).

Andrea, L. et al. dApps: enabling real-time AI-based open RAN control. Comput. Netw. 269, 111342 (2025).

Zhengquan, Z. et al. 6G wireless networks: vision, requirements, architecture, and key technologies. IEEE Veh. Technol. Mag. 14, 28–41 (2019).

Kaesberg, L. B., Becker, J., Wahle, J. P., Ruas T., & Gipp B., Voting or consensus? Decision-making in multi-agent debate. In Findings of the Association for Computational Linguistics 11640–11671 (ACL, 2025).

Achiam, J. et al. GPT-4 technical report. Preprint at https://doi.org/10.48550/arXiv.2303.08774 (2023).

Hao, S. et al. Reasoning with language model is planning with world model. In Conference on Empirical Methods in Natural Language Processing 8154–8173 (ACL, 2023).

Bariah, L. et al. Large generative AI models for telecom: the next big thing? IEEE Commun. Mag. 62, 84–90 (2024).

Tian, Y. et al. Multimodal transformers for wireless communications: a case study in beam prediction. ITU J. Future Evol. Technol. 4, 461–471 (2023).

Tian, Y., Alhammadi, A., Quran, A. & Ali, A. S. A novel approach to wavenet architecture for RF signal separation with learnable dilation and data augmentation. In IEEE International Confernce on Acoustics, Speech, and Signal Processing Workshops 79–80 (IEEE, 2024).

Wu, K., Tian, Y., Almazrouei, E. & Bader, F. A simple and effective design for intelligent sub-nyquist modulation recognition. In International Conference on Artificial Intelligence and Big Data 772–776 (Springer, 2023).

Le Scao, T. et al. BLOOM: a 176B-parameter open-access multilingual language model. Preprint at https://doi.org/10.48550/arXiv.2211.05100 (2022).

Laurençon, H. et al. The BigScience ROOTS corpus: a 1.6 TB composite multilingual dataset. Adv. Neural Inf. Process. Syst. 35, 31809–31826 (2022).

Anil, R. et al. PaLM 2 technical report. Preprint at https://doi.org/10.48550/arXiv.2305.10403 (2023).

Penedo, G. et al. The RefinedWeb dataset for Falcon LLM: outperforming curated corpora with web data, and web data only. Preprint at https://doi.org/10.48550/arXiv.2306.01116 (2023).

Cai, Z. et al. InternLM2 technical report. Preprint at https://doi.org/10.48550/arXiv.2403.17297 (2024).

Zeng, A. et al. GLM-130B: an open bilingual pre-trained model. Preprint at https://doi.org/10.48550/arXiv.2210.02414 (2022).

Taylor, R. et al. Galactica: a large language model for science. Preprint at https://doi.org/10.48550/arXiv.2211.09085 (2022).

Lozhkov, A. et al. StarCoder 2 and the stack v2: the next generation. Preprint at https://doi.org/10.48550/arXiv.2402.19173 (2024).

Raffel, C. et al. Exploring the limits of transfer learning with a unified text-to-text transformer. J. Mach. Learn. Res. 21, 140:1–140:67 (2019).

Zhu, Y. et al. Aligning books and movies: towards story-like visual explanations by watching movies and reading books. In IEEE International Conference on Computer Vision 19–27 (IEEE, 2015).

Author information

Contributions

All authors contributed substantially to discussion of the content. H.Z., Q.Z., S.L., C.Z. and Y.T. researched and wrote the paper. L.B., F.B. and M.D. edited and reviewed the manuscript before submission.

Ethics declarations

Competing interests

The authors declare no competing interests.

Peer review

Peer review information

Nature Reviews Electrical Engineering thanks Markus Dominik Mueck and the other, anonymous, reviewer(s) for their contribution to the peer review of this work.

About this article

Cite this article

Zou, H., Zhao, Q., Lasaulce, S. et al. Large language models in 6G from standard to on-device networks. Nat Rev Electr Eng 3, 123–134 (2026). https://doi.org/10.1038/s44287-025-00239-6

DOI: https://doi.org/10.1038/s44287-025-00239-6