Abstract

We generalize the projection–based quantum measurement–driven k–SAT algorithm of Benjamin, Zhao, and Fitzsimons1 to arbitrary strength quantum measurements, including the limit of continuous monitoring. In doing so, we clarify that this algorithm is a particular case of the measurement–driven quantum control strategy elsewhere referred to as “Zeno dragging”. We argue that the algorithm is most efficient with finite time and measurement resources in the continuum limit, where measurements have an infinitesimal strength and duration. Moreover, for solvable k-SAT problems, the dynamics generated by the algorithm converge deterministically towards target dynamics in the long–time (Zeno) limit, implying that the algorithm can successfully operate autonomously via Lindblad dissipation, without detection. We subsequently study both the conditional and unconditional dynamics of the algorithm implemented via generalized measurements, quantifying the advantages of detection for heralding errors. These strategies are investigated first in a computationally–trivial 2-qubit 2-SAT problem to build intuition, and then we consider the scaling of the algorithm on 3-SAT problems encoded with 4–10 qubits. We numerically investigate the scaling of 3-SAT with respect to algorithmic runtime and find that the optimized time to solution scales with qubit number n as \({\lambda }^{n}\), where λ is slightly larger than \(\sqrt{2}\) for unconditional dynamics and less than \(\sqrt{2}\) for conditional dynamics. We assess the implications for using this analog measurement–driven approach to quantum computing in practice.


Introduction

Since the remarks by Feynman2, and algorithms by Shor3 and Grover4, quantum computation5,6 has drawn increasing research interest, spurring the rapid development of quantum technologies across a variety of experimental platforms. Even independent of any particular hardware implementation, a variety of approaches have been developed, including circuit–based quantum computers6, adiabatic–7,8 or annealing–based9,10 quantum computing, and measurement–based quantum computing11, in addition to many flavors of quantum simulators. Below we will explore an instance of a different approach: in contrast with “measurement–based” computation, where a highly entangled state is prepared and then measured, we will study here an example of what may instead be called “measurement–driven”1,12,13,14,15,16,17 computation. Measurement–driven computation here refers to an approach in which the fundamental invasiveness of quantum measurements or dissipators is used to create the quantum dynamics that solve a computational problem. Related approaches to computation involving or simulating open quantum systems have also been explored18,19,20,21.

Our point of departure in this manuscript is the quantum measurement–driven approach to solving k–SAT problems proposed by Benjamin, Zhao, and Fitzsimons1 (henceforth BZF). k–SAT problems involve n Boolean variables and require satisfaction of m clauses each containing k Boolean variables. The complexity of k-SAT is well understood, and Boolean satisfiability problems were among the first to be classified as NP-complete22,23,24. Many rigorous and heuristic classical algorithms have been proposed to solve k-SAT, particularly 3-SAT. While not the best classical algorithm, the celebrated Schöning’s algorithm25, which repeatedly checks and randomly corrects the clauses, is probably the most well-known. This has a provable runtime upper bound that scales as \({(4/3)}^{n}\) for 3-SAT25.

BZF proposed a method by which a k–SAT problem may be solved through repeated cycles of k–qubit projective measurements, with a measurement representing each clause of the logical proposition. Through gradual adjustment of the measurement axes defining true or false on a given qubit, the clause measurements are able to negotiate a solution. This may be regarded as a form of analog quantum computation (most similar to adiabatic computation), where open system dynamics (here, the invasiveness of measurement) are used to create the solution dynamics, instead of the closed system (unitary) dynamics that are more often considered for such purposes. Our aim in this manuscript is to generalize the BZF scheme to the cases of sequential general–strength measurements, and weak measurements or dissipators.

This approach rests on the enormous progress made in continuous quantum monitoring over the past few decades. Generalized quantum measurements are well understood theoretically26 in the language of Positive Operator–Valued Measures. Generalized measurements include not only projectors, but also minimally invasive (and minimally informative) weak quantum measurements. Continuous quantum monitoring arises in the limit of continuous infinitesimal–strength quantum measurements27,28,29,30,31, and has been extensively explored in experiments, primarily on superconducting qubit platforms32,33,34,35. The “quantum trajectories” conditioned on sequences of measurement readouts are accessible in real-time in such experiments, and the ensemble average of such trajectories reduces to Lindbladian dissipation of the monitored channel(s). Continuous monitoring enables such features as simultaneous monitoring of non-commuting qubit observables36,37,38,39,40,41,42,43, and measurement–dependent feedback control28,30,44,45,46,47,48,49 that enables such diverse capabilities as e.g., measurement–driven entanglement generation50,51,52,53,54,55, quantum state or subspace stabilization46,56,57,58,59,60,61,62,63,64,65,66, dissipative control via continuously–modified measurements (i.e., “Zeno Dragging”)67,68,69,70, or real–time error detection and correction71,72,73,74,75,76,77,78,79,80,81,82,83. The quantum Zeno effect84 describes the inhibition of quantum dynamics that occur on a timescale which is slow compared to measurement or dissipation. This too has been examined for weak measurements and/or continuous dissipation56,85,86,87,88,89,90,91,92, and has moreover found a number of applications as a form of dissipation engineering93,94, for quantum control of logical operations on qubits95,96,97, and for error correction57,58,59,60,61,62,63,64,65,66,69,97,98,99,100,101,102,103,104,105.

We highlight two main motivations for generalizing the BZF algorithm with such tools:

1. Projective measurements imply idealized and discrete quantum operations that exist at the limit of infinite resource consumption106, in contrast with laboratory situations which typically involve continuous dissipation of a system via imperfectly–monitored channels.

2. We will be able to formally connect the BZF k–SAT algorithm to “Zeno Dragging”67,68,70, which is a method for measurement–driven quantum control based on the quantum Zeno effect. Importantly, while Zeno dragging is improved by true measurement (dissipation leading to detection), it is a protocol that is able to function autonomously (with dissipation alone).

As we formally develop these ideas, we will be able to quantify some of the algorithm’s convergence properties as a function of the quantum measurement resources it requires. The extensive literature concerning quantum measurement contributes to the understanding of the generalized BZF algorithm that we develop below because (i) the algorithm of interest requires monitoring non-commuting observables corresponding to different clauses of a k–SAT problem, and (ii) the observables are changed over time as in the Zeno dragging approach to control, such that a solution state or subspace is dissipatively stabilized by the set of clause measurements. In particular, whenever a solution exists, the measurement–driven algorithm generates dynamics following a pure–state kernel of the Liouvillian in the adiabatic regime, similar to refs. 86,88.

The plan and aims of this paper are as follows: In section “Towards formulating the BZF scheme via continuous measurement” we review the construction of k–SAT problems, and the construction of quantum measurements used in the BZF projective algorithm. In section “Generalizing the measurement strength”, we describe how the projectors in the BZF algorithm may be generalized to finite strength and/or continuous monitoring. Specifically, we write down Kraus operators and state update rules for generalized clause measurements in section “Conditional and un-conditional dynamics under generalized measurement and dissipation”, and then write the corresponding time–continuous version of the dynamics in section “Weak continuous limit”. In section “Convergence in the Zeno limit” we then formalize the parallel between these continuous dynamics and Zeno dragging, and describe the convergence properties of the time–continuous algorithm in the Zeno limit (analogous to the adiabatic limit). These convergence properties imply that the algorithm is capable of functioning autonomously. We are then in a position to begin putting forward several generalized BZF algorithms: In section “Un-conditional BZF algorithm with finite measurement strength” we describe a dissipative (autonomous) algorithm, and in section “Heralding BZF algorithm with finite measurement strength via filtering” we describe a heralded algorithm that explicitly uses the clause measurement records. Finally, in section “Readout scheme and time-to-solution”, we describe the final (local) qubit readout, used to turn a state in the qubit register into a candidate solution bitstring to a k-SAT proposition, and describe how we quantify the time-to-solution (TTS). 
The methods of section “Generalizing the measurement strength” are applied first for the simple case of a two–qubit 2-SAT problem in section “Two–qubit 2-SAT: from discrete to continuous measurement”, with the aim of building intuition, and then applied to larger–scale 3-SAT problems in section “Scaling the problem up”. As we investigate larger 3-SAT problems, we comment increasingly on the TTS and its scaling with regard to qubit number. Concluding remarks are offered in section “Discussion and outlook”.

Reformulating the BZF scheme with continuous measurement

SAT problems

In this subsection, we briefly introduce the Boolean satisfiability (SAT) problem. A SAT problem involves n Boolean variables \({\{{b}_{j}\}}_{j = 1}^{n}\), where each variable bj takes values from either true (denoted by 0 or +) or false (denoted by 1 or −). A literal xj can take values from \(\{{b}_{j},{\bar{b}}_{j}\}\), where \({\bar{b}}_{j}\) is the negation of bj. In k-SAT, a clause Ci contains k literals connected by logical OR (∨). A k-SAT instance is then defined by a Boolean formula F in the conjunctive normal form (CNF), which involves m clauses connected by logical AND (∧)

\[F={C}_{1}\wedge {C}_{2}\wedge \cdots \wedge {C}_{m},\tag{1}\]

where Ci can be, for example, \({C}_{1}={b}_{2}\vee {b}_{3}\vee {\bar{b}}_{5}\) for k = 3. A clause is satisfied when at least one of the literals is true, and we say a given SAT instance is satisfiable iff there exists an assignment of the n Boolean variables \({b}_{soln}\in {\{0,1\}}^{n}\) such that all m clauses are satisfied simultaneously. A SAT problem is then a decision problem, where the goal is to decide whether a given SAT instance is satisfiable or not. (A summary of index variables is provided in Table 1.)
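The definitions above can be made concrete with a small classical sketch. The encoding used here (signed 1-based integers for literals, tuples for clauses) and the function names are illustrative assumptions, not notation from the text; the exhaustive search is only meant to pin down what "satisfiable" means, not to suggest an efficient method.

```python
from itertools import product

# Assumed encoding: literal +j stands for b_j, literal -j for NOT b_j (1-based).
# A clause is a tuple of literals; a CNF formula is a list of clauses.

def clause_satisfied(clause, assignment):
    """A clause holds when at least one of its literals is true."""
    return any(assignment[abs(lit) - 1] == (lit > 0) for lit in clause)

def formula_satisfied(formula, assignment):
    """A CNF formula holds when every clause holds."""
    return all(clause_satisfied(clause, assignment) for clause in formula)

def brute_force_solutions(formula, n):
    """Enumerate all 2**n assignments and keep the satisfying ones."""
    return [bits for bits in product([True, False], repeat=n)
            if formula_satisfied(formula, bits)]

# The example clause from the text: C_1 = b_2 OR b_3 OR NOT b_5.
example_clause = (2, 3, -5)

# A small 2-SAT instance with a single satisfying assignment (both true).
formula = [(1, 2), (1, -2), (-1, 2)]
solutions = brute_force_solutions(formula, 2)
```

Adding the clause `(-1, -2)` to `formula` rules out the remaining assignment, which gives an unsatisfiable instance for comparison.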

Note that, while 2-SAT can be solved in polynomial time by classical algorithms such as that of ref. 107, k-SAT for k ≥ 3 is NP-complete. However, these classical complexity arguments are based on worst-case analysis. In practice, the difficulty of solving random SAT instances is not uniform in the clause density α, which is defined as α = m/n. It has been shown that, as α increases from 0, random SAT exhibits a phase transition with easy-hard-easy behavior108.

The BZF algorithm

We now describe the way we encode the classical Boolean variables into qubits, following the scheme due to Benjamin, Zhao, and Fitzsimons (BZF)1. We will then briefly review the BZF algorithm for 3-SAT based on projective measurements before proceeding to section “Generalizing the measurement strength” where we present the extended algorithm based on generalized measurement schemes that constitute the focus of this work.

For a classical Boolean variable bj, the two possible values (true and false) are represented by two θ-dependent pure states of a qubit j as per

where θ is a control parameter taking its value from [0, π/2]. Here \(\left\vert +\right\rangle\) is the equal superposition state \(\left\vert +\right\rangle =\frac{1}{\sqrt{2}}(\left\vert 0\right\rangle +\left\vert 1\right\rangle )\) that lies on the equator in the xz-plane of each qubit’s Bloch sphere. The θ-dependence is obtained by rotating \(\left\vert +\right\rangle\) around the y-axis of the Bloch sphere by an angle ± θ, with the sign corresponding to true or false. The rotation operator is given by

See Fig. 1 for an illustration.
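The encoding can be sketched numerically as follows. The sign convention is an assumption made for illustration (here "true" rotates \(\left\vert +\right\rangle\) towards \(\left\vert 0\right\rangle\), matching the statement that true is denoted by 0); the function names are hypothetical. The overlap between the true and false states comes out as cos θ under either sign convention.

```python
import numpy as np

PLUS = np.array([1.0, 1.0]) / np.sqrt(2.0)  # |+>

def ry(phi):
    """Rotation by angle phi about the Bloch-sphere y-axis, exp(-i*phi*sigma_y/2)."""
    c, s = np.cos(phi / 2), np.sin(phi / 2)
    return np.array([[c, -s], [s, c]])

def encoded_state(sign, theta):
    """Qubit state for 'true' (sign=+1) or 'false' (sign=-1) at angle theta.

    Assumed convention: 'true' is rotated towards |0>, 'false' towards |1>.
    """
    return ry(-sign * theta) @ PLUS

def true_false_overlap(theta):
    """Inner product between the 'true' and 'false' encodings."""
    return float(encoded_state(+1, theta) @ encoded_state(-1, theta))
```

At θ = 0 both encodings coincide with \(\left\vert +\right\rangle\), and at θ = π/2 they become the orthogonal computational basis states.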

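A θ-dependent projection operator \({\hat{P}}_{i}(\theta )\) is assigned to each clause Ci. Specifically, for k-SAT, the projector is given by

\[{\hat{P}}_{i}(\theta )={\bigotimes }_{q = 1}^{k}{\vert {l}_{{i}_{q}}{\theta }^{\perp }\rangle }_{({i}_{q})}\langle {l}_{{i}_{q}}{\theta }^{\perp }\vert ,\tag{5}\]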

where the integer \({l}_{{i}_{q}}\) is + 1 if the qth literal in the ith clause \({x}_{{i}_{q}}\) is equal to the Boolean variable \({b}_{{i}_{q}}\) itself, and \({l}_{{i}_{q}}\) is − 1 if \({x}_{{i}_{q}}\) is equal to the negated corresponding Boolean variable \({\bar{b}}_{{i}_{q}}\). The states \(\vert {\theta }^{\perp }\rangle\) and \(\vert -{\theta }^{\perp }\rangle\) are defined by

which are orthogonal to \(\left\vert \theta \right\rangle\) and \(\left\vert \bar{\theta }\right\rangle\) defined in Eq. (2) respectively, i.e., \(\langle {\theta }^{\perp }| \theta \rangle =0=\langle {\bar{\theta }}^{\perp }| \bar{\theta }\rangle\). As an example, the projector of the clause \({C}_{1}={b}_{2}\vee {b}_{3}\vee {\bar{b}}_{5}\) is given by

Essentially, the projector \({\hat{P}}_{i}(\theta )\) checks whether the clause Ci is violated: \({\hat{P}}_{i}(\theta )\) defines a measurement of a Hermitian observable

\[{\hat{X}}_{i}(\theta )=\hat{{\mathbb{1}}}-2\,{\hat{P}}_{i}(\theta ),\tag{7}\]

where \(\hat{{\mathbb{1}}}\) is the identity operator and \({\hat{X}}_{i}(\theta )\) has two eigenvalues ± 1. If the measurement gives +1 (Success), then Ci is not violated, while if the measurement gives −1 (Fail), then Ci is violated and the k-qubit subsystem of the quantum state is projected into the state \({\bigotimes }_{q = 1}^{k}{\vert {l}_{{i}_{q}}{\theta }^{\perp }\rangle }_{({i}_{q})}\langle {l}_{{i}_{q}}{\theta }^{\perp }\vert\), that corresponds to the only assignment of the relevant k Boolean variables violating the clause Ci.
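As a numerical illustration of the clause check, the sketch below builds the projector and the observable \(\hat{{\mathbb{1}}}-2\hat{P}\) on an n-qubit register. The literal encoding (signed 1-based integers), the rotation sign convention, and all function names are assumptions for illustration only.

```python
import numpy as np
from functools import reduce

PLUS = np.array([1.0, 1.0]) / np.sqrt(2.0)
MINUS = np.array([1.0, -1.0]) / np.sqrt(2.0)

def ry(phi):
    """Rotation about the y-axis, exp(-i*phi*sigma_y/2)."""
    c, s = np.cos(phi / 2), np.sin(phi / 2)
    return np.array([[c, -s], [s, c]])

def encoded_state(sign, theta):
    """|sign*theta>: 'true' for sign=+1, 'false' for sign=-1 (assumed convention)."""
    return ry(-sign * theta) @ PLUS

def encoded_perp(sign, theta):
    """|sign*theta^perp>: the state orthogonal to |sign*theta>."""
    return ry(-sign * theta) @ MINUS

def clause_projector(clause, theta, n):
    """Projector onto the one assignment violating the clause (identity elsewhere)."""
    factors = [np.eye(2) for _ in range(n)]
    for lit in clause:
        sign = 1 if lit > 0 else -1
        v = encoded_perp(sign, theta)
        factors[abs(lit) - 1] = np.outer(v, v)
    return reduce(np.kron, factors)

def clause_observable(clause, theta, n):
    """X = 1 - 2P, with eigenvalue +1 (pass) or -1 (fail)."""
    return np.eye(2 ** n) - 2.0 * clause_projector(clause, theta, n)

def product_state(signs, theta):
    """Product of single-qubit encodings for an assignment given as +1/-1 signs."""
    return reduce(np.kron, [encoded_state(s, theta) for s in signs])
```

For the example clause `(2, 3, -5)` on five qubits, any product state whose assignment satisfies the clause is left unchanged by the observable at every θ, because at least one tensor factor of the projector annihilates it.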

The BZF algorithm1 can be summarized as follows. The input quantum state is the equal superposition of all computational basis states \({\left\vert +\right\rangle }^{\otimes n}\). In the cth cycle of clause measurements, one sequentially checks all the clauses \({\{{C}_{i}\}}_{i = 1}^{m}\) via projectors \({\{{\hat{P}}_{i}({\theta }_{c})\}}_{i = 1}^{m}\) in a pre-determined order. At the end of each cycle of clause measurements, θc is updated according to some schedule moving from θ = 0 to θ = π/2 over the course of the algorithm. The result is that after many clause measurement cycles, with the true/false measurement angles getting further apart, the qubits’ states “fan out”, and eventually arrive at z = ±1 in the computational basis when θ reaches π/2. All of the qubits are then individually measured in the computational basis to give the assignment of Boolean variables as the output, at which point the algorithm has terminated. During the entire process, if any clause measurement has failed, then one restarts the algorithm from the very beginning.
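The cycle structure just described can be sketched for a toy instance by following only the branch in which every clause check succeeds. The squared norm of the unnormalized state is then the probability that no restart is needed. The linear schedule, the rotation sign convention, the literal encoding, and the function names are assumptions for illustration; this is not the authors' implementation.

```python
import numpy as np
from functools import reduce

PLUS = np.array([1.0, 1.0]) / np.sqrt(2.0)
MINUS = np.array([1.0, -1.0]) / np.sqrt(2.0)

def ry(phi):
    """Rotation about the y-axis, exp(-i*phi*sigma_y/2)."""
    c, s = np.cos(phi / 2), np.sin(phi / 2)
    return np.array([[c, -s], [s, c]])

def clause_projector(clause, theta, n):
    """Projector onto the assignment violating the clause (literals are +/-j)."""
    factors = [np.eye(2) for _ in range(n)]
    for lit in clause:
        sign = 1 if lit > 0 else -1
        v = ry(-sign * theta) @ MINUS  # assumed convention for |sign*theta^perp>
        factors[abs(lit) - 1] = np.outer(v, v)
    return reduce(np.kron, factors)

def heralded_run(formula, n, cycles):
    """Apply the 'success' outcome (1 - P_i) of each clause check, cycle by cycle.

    Returns the probability that no check fails and the normalized final state.
    """
    psi = reduce(np.kron, [PLUS] * n)
    for c in range(1, cycles + 1):
        theta = 0.5 * np.pi * c / cycles  # linear schedule (an assumption)
        for clause in formula:
            psi = psi - clause_projector(clause, theta, n) @ psi
    p_success = float(psi @ psi)
    return p_success, psi / np.sqrt(p_success)

# Toy 2-SAT instance whose only solution is (true, true), i.e. |00> here.
formula = [(1, 2), (1, -2), (-1, 2)]
p_one, _ = heralded_run(formula, 2, cycles=1)       # checks at theta = pi/2 only
p_many, psi_final = heralded_run(formula, 2, cycles=200)
```

With a single cycle at θ = π/2 the run reduces to sampling one of the four assignments uniformly, so the success probability is 1/4; spreading the sweep over many cycles is expected to raise it, in line with the intuition given in the text.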

Intuitively speaking, when one fixes θ = 0, the quantum state cannot distinguish true and false of the Boolean variable, i.e., \(\vert \theta \rangle =\vert \bar{\theta }\rangle\) and \(\vert {\theta }^{\perp }\rangle =\vert {\bar{\theta }}^{\perp }\rangle\), but the clause checks will never fail. On the other hand, when θ = π/2, the quantum states corresponding to true and false are orthogonal \(\langle \bar{\theta }| \theta \rangle =\langle {\bar{\theta }}^{\perp }| {\theta }^{\perp }\rangle =0\) and thus can be perfectly distinguished. However, implementing the algorithm at fixed θ = π/2 is equivalent to a randomized classical brute force search. The advantage of the BZF algorithm comes from varying θ from θi to π/2, so that the success probability throughout the algorithm is higher than for classical brute force searching, while the value θ = π/2 at the end of the algorithm ensures that complete information about the solution assignment can be obtained. In particular, BZF showed that for θ ∈ (0, π/2), the number of quantum states that can satisfy all clause checks is equal to the number of solution assignments of the Boolean variables in the SAT problem. Furthermore, if there is a solution assignment (s1, …, sn) with sj ∈ {±1} satisfying the CNF Boolean formula, then there is a corresponding quantum solution, written with \(\vert {s}_{j}\theta \rangle\) denoting \(\vert \theta \rangle\) for sj = +1 and \(\vert \bar{\theta }\rangle\) for sj = −1, given by

\[\left\vert {\phi }_{soln}(\theta )\right\rangle ={\bigotimes }_{j = 1}^{n}{\left\vert {s}_{j}\theta \right\rangle }_{j}.\tag{8}\]

We note that this solution state is both pure and separable, and necessarily also a +1 simultaneous eigenstate of all of the clause observables \({\hat{X}}_{j}(\theta )\) defined in Eq. (7). BZF also showed that the fidelity of the instantaneous quantum state to the solution is monotonically increasing as one continues to implement clause check cycles that herald success. BZF’s numerical results indicated that the running time of their projection–based quantum algorithm measured in terms of the number of projective measurements scales as \({(1.19)}^{n}\), outperforming the classical Schöning algorithm which is well known for its provable upper bound of \({(1.334)}^{n}\)25.

Generalizing the measurement strength

In this section, we introduce a measurement model that will allow us to scale between the limits of projective measurement and weak continuous measurement, in the context of the BZF algorithm for solving k-SAT. As we generalize the measurement strength below, we will be moving the discrete and projective scheme of BZF towards a different scheme of generalized measurement, including the limit of weak continuous measurement, in which the measurement or dissipation is composed of a sequence of infinitesimal–strength open–system processes occurring on infinitesimal timesteps. With finite measurement strength, perfect projectors exist only in the limit of operations that take an infinitely long time to complete; such ideal operations do not really exist in any laboratory. Explicitly considering tradeoffs between measurement quality and the time expended to carry them out will later prove to be important in quantifying the performance and speed of the measurement–driven algorithm. As part of this exploration, we will show that the continuous version of the BZF algorithm is a version of what we have elsewhere called Zeno Dragging (see ref. 70 and references therein). Zeno dragging involves monitoring or dissipating observable(s) so as to induce the quantum dynamics to follow an eigenspace of the observable(s) over time. Here, we define clause measurements such that the k-SAT solution is the mutual +1 eigenspace of many observables, which we Zeno drag as θ is varied. This allows for high–probability/high–fidelity control as long as the observable is moved slowly compared to the strength with which it is measured14,88. Moreover, such Zeno dragging schemes work autonomously (via dissipation without detection). We will ultimately define two main variants of the generalized algorithm, involving (i) the average (dissipative) dynamics, or (ii) the true measurement dynamics in which errors can be heralded. These two approaches are presented in four algorithmic subroutines below.

Conditional and un-conditional dynamics under generalized measurement and dissipation

In general, a measurement process can be described by a set of Kraus operators \(\{{\hat{M}}_{r}\}\) satisfying \({\sum }_{r}{\hat{M}}_{r}^{\dagger }{\hat{M}}_{r}=\hat{{\mathbb{1}}}\), where r is the label for the measurement record30,31. Given the prior-measurement state described by a density matrix ρ0, the probability of obtaining measurement result r is given by Born’s rule \({\mathbb{P}}(r)={\rm{Tr}}({\hat{M}}_{r}{\rho }_{0}{\hat{M}}_{r}^{\dagger })\), and the post-measurement state ρr conditioned on the readout r is

\[{\rho }_{r}=\frac{{\hat{M}}_{r}{\rho }_{0}{\hat{M}}_{r}^{\dagger }}{{\rm{Tr}}({\hat{M}}_{r}{\rho }_{0}{\hat{M}}_{r}^{\dagger })}.\tag{9}\]

We will consider here Kraus operators for generalized clause checks of the form

where \(\hat{X}(\theta )\) is defined in Eq. (7). Here, τ is the “characteristic measurement time” representing the measurement strength and Δt is the duration of the measurement. Short τ denotes the fast “collapse” or strong measurement, while large τ denotes the slow “collapse” or weak measurement. The particular form of these Kraus operators assumes a measurement apparatus generating a continuous–valued readout r, and is based on, e.g., the Kraus operators derived in quantum optical contexts109,110. In particular, in the limit Δt/τ ≫ 1 the above–defined generalized measurement is effectively projective, while Δt/τ ≪ 1 is the limit of weak measurement. The latter leads to diffusive quantum trajectories when infinitesimal–strength measurements are continuously made over time (i.e., in the limit of continuous monitoring)28,29,30,31. When Δt/τ is between the two limits, the Kraus operators describe a generalized discrete measurement with finite strength.

When one considers the measurement process without measurement records, the dynamics are described by the average over all the possible trajectories, weighted by their probabilities. In this case, given the density operator ρ(t) at t, the average post-measurement density operator \(\bar{\rho }(t+\Delta t)\) is given by

where β = e−Δt/2τ.

Weak continuous limit

In this work, we will pay particular attention to the limit where Δt/τ ≪ 1, which is the limit of weak continuous measurement. In this case, the measurement strength in infinitesimal time interval Δt approaches 0. By expanding Eq. (11) to first order in Δt, we can derive the Lindblad master equation (LME) for the average dynamics

where \({\mathcal{L}}[\hat{X}]\) is the Lindbladian generator \({\mathcal{L}}[\hat{X}]\rho =\hat{X}\rho {\hat{X}}^{\dagger }-\frac{1}{2}({\hat{X}}^{\dagger }\hat{X}\rho +\rho {\hat{X}}^{\dagger }\hat{X})\), and \(\bar{\rho }\) denotes the average state. This is an expected and general property of Markovian quantum trajectories28,29,30,31,111,112. Note that we will be able to assume \(\hat{X}{(\theta )}^{\dagger }=\hat{X}(\theta )\) and \(\hat{X}{(\theta )}^{2}=\hat{{\mathbb{1}}}\) throughout this work, which is guaranteed for \(\hat{X}\) of the form \(\hat{{\mathbb{1}}}-2\,\hat{P}\) (7).
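The first-order expansion can be written out under one assumption about the unconditional update for a single clause observable: that it takes the two-term form in the first line below. This form is our reading for illustration, not a quotation of Eq. (11); it is chosen to be consistent with \(\beta ={e}^{-\Delta t/2\tau }\), \({\hat{X}}^{\dagger }=\hat{X}\) and \({\hat{X}}^{2}=\hat{{\mathbb{1}}}\).

```latex
\begin{aligned}
\bar{\rho}(t+\Delta t) &= \frac{1+\beta}{2}\,\rho(t) + \frac{1-\beta}{2}\,\hat{X}\rho(t)\hat{X},
  \qquad \beta = e^{-\Delta t/2\tau}, \\
\frac{1-\beta}{2} &= \frac{\Delta t}{4\tau} + O(\Delta t^{2}), \\
\bar{\rho}(t+\Delta t) - \rho(t) &= \frac{\Delta t}{4\tau}\left(\hat{X}\rho\hat{X} - \rho\right) + O(\Delta t^{2})
  = \frac{\Delta t}{4\tau}\,\mathcal{L}[\hat{X}]\rho + O(\Delta t^{2}).
\end{aligned}
```

The last equality uses \({\hat{X}}^{\dagger }\hat{X}=\hat{{\mathbb{1}}}\), so that \({\mathcal{L}}[\hat{X}]\rho =\hat{X}\rho \hat{X}-\rho\). Dividing by Δt gives a dissipation rate of 1/(4τ) per clause observable, the same prefactor that multiplies each clause dissipator in the section “Un-conditional BZF algorithm with finite measurement strength”.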

The individual trajectories conditioned on the measurement record, which can be expressed as \(r\,dt=\langle \hat{X}\rangle dt/\sqrt{\tau }+dW\), are also of interest. From Eq. (9) we can derive an Itô stochastic master equation (SME) describing such dynamics under the weak continuous limit28,29,30

where \({\mathcal{H}}[\hat{X}]\rho =\rho \hat{X}+\hat{X}\rho -2\langle \hat{X}\rangle \rho\) is the measurement backaction with \(\langle \hat{X}\rangle ={\rm{Tr}}(\hat{X}\rho )\) the expectation value of \(\hat{X}\). Here dW is the Wiener increment satisfying \(d{W}^{2}=dt\) by Itô’s lemma. Notice that the dynamics satisfying Eq. (12) are recovered by averaging over all possible trajectories in Eq. (13), consistent with the fact that dW has zero mean.

A striking difference between the algorithm dynamics for SAT under continuous measurement and under projective measurement is the effect of measurement ordering. To see this, we first notice that the observables corresponding to different clauses do not necessarily commute. This happens for θ ∈ (0, π/2), due to common Boolean variables involved in the two clauses being of complementary form. For example, the observables for C1 = x1 ∨ x2 and \({C}_{2}={x}_{1}\vee {\bar{x}}_{2}\) do not commute because x2 appears in one clause and \({\bar{x}}_{2}\) in the other. Therefore, in the dynamics under projective measurement, the order of measurements will be important. However, for weak continuous measurement, non-commutativity plays no role in the time–continuous master equations Eq. (12) and Eq. (13), because non-commutative effects in the dynamics only appear at \(O(\Delta {t}^{2})\) and higher36,37,38,39,40,42,113. In other words, one can simultaneously measure all the clause observables under weak continuous measurement, and any effects due to clause ordering must vanish in the limit Δt/τ → 0. Importantly, the simultaneous clause checks enabled by weak continuous measurement immediately reduce the algorithmic running time by a factor of m compared to strong measurement, where clauses must be measured sequentially. The average dynamics under such m simultaneous clause measurements are described by the master equation
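The non-commutativity of the two example clauses can be checked directly. The sketch below rebuilds the two clause observables on two qubits and evaluates the norm of their commutator; the rotation sign convention, the signed-integer literal encoding, and the function names are illustrative assumptions.

```python
import numpy as np
from functools import reduce

MINUS = np.array([1.0, -1.0]) / np.sqrt(2.0)

def ry(phi):
    """Rotation about the y-axis, exp(-i*phi*sigma_y/2)."""
    c, s = np.cos(phi / 2), np.sin(phi / 2)
    return np.array([[c, -s], [s, c]])

def clause_observable(clause, theta, n):
    """X = 1 - 2P, where P projects onto the assignment violating the clause."""
    factors = [np.eye(2) for _ in range(n)]
    for lit in clause:
        sign = 1 if lit > 0 else -1
        v = ry(-sign * theta) @ MINUS  # assumed convention for |sign*theta^perp>
        factors[abs(lit) - 1] = np.outer(v, v)
    return np.eye(2 ** n) - 2.0 * reduce(np.kron, factors)

def commutator_norm(theta):
    """Frobenius norm of [X_1, X_2] for C_1 = x1 OR x2 and C_2 = x1 OR NOT x2."""
    x1 = clause_observable((1, 2), theta, 2)
    x2 = clause_observable((1, -2), theta, 2)
    return float(np.linalg.norm(x1 @ x2 - x2 @ x1))
```

The commutator vanishes at the endpoints, where the two projectors coincide (θ = 0) or are both diagonal in the computational basis (θ = π/2), and is nonzero in between.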

and the individual trajectory conditioned on \({\{d{W}_{i}\}}_{i = 1}^{m}\) is described by the SME

where each observable \({\hat{X}}_{i}(\theta )\) corresponds to a clause Ci. Each of the readouts on which these dynamics are conditioned is a sum of signal and noise contributions, namely,

where the first term is the expected signal content of the measurement outcome, and the Wiener process dWi is pure noise.

Convergence in the Zeno limit

We now elaborate on the convergence properties of Zeno dragging necessary for an understanding of our algorithms. Let us consider the case where there is a unique solution to our k–SAT problem, i.e., we assume there exists a unique solution of the form of Eq. (8). We may then define a frame change by the rotation

where ± rotations are assigned to each qubit according to the unknown solution bitstring s, such that the ideal solution dynamics become static in the \(\hat{Q}\)–frame. In this frame all of the \({\hat{X}}_{i}(\theta )\) are partially diagonalized, in the sense that the row/column of each \({\hat{{\mathcal{X}}}}_{i}={\hat{Q}}^{\dagger }\,{\hat{X}}_{i}(\theta )\,\hat{Q}\) which corresponds to the solution state at θ = π/2 (or t = Tf, where Tf is the total time) in the computational basis will now be occupied only by its θ–independent diagonal element. The schedule θ(t) is here assumed to vary in a continuous and differentiable way.

Let \(\varrho ={\hat{Q}}^{\dagger }\,\rho \,\hat{Q}\), such that the Itô \(\hat{Q}\)–frame dynamics read

with

The additional Hamiltonian term \({\hat{H}}_{Q}\) encodes diabatic motion due to movement of the \(\hat{Q}\)–frame as the observables are rotated, with s ∈ {±1}n representing the solution bitstring corresponding to Eq. (17). The solution state Eq. (8) is a simultaneous + 1 eigenstate of the clause observables \({\hat{X}}_{i}\), which we now notate as \(\tilde{\rho }=\left\vert {\phi }_{soln}(\theta )\right\rangle \left\langle {\phi }_{soln}(\theta )\right\vert\). In the new frame, this eigenstate is θ–independent and therefore time–independent, i.e., we have \({\hat{{\mathcal{X}}}}_{i}\,\tilde{\varrho }\,{\hat{{\mathcal{X}}}}_{i}=\tilde{\varrho }\) for all i.

We can now consider the algorithm dynamics in the \(\hat{Q}\)–frame, in the Zeno limit Tf /τ → ∞ (which is an adiabatic limit). We initialize our system in \(\tilde{\varrho }\), corresponding to \(\rho ={(\left\vert +\right\rangle \left\langle +\right\vert )}^{\otimes n}\) at θ = 0. Notice that because \(\tilde{\varrho }\) is an eigenstate of all the \({\hat{{\mathcal{X}}}}_{i}\), we have \({\mathcal{L}}[{\hat{{\mathcal{X}}}}_{i}]\tilde{\varrho }=0\) and \({\mathcal{H}}[{\hat{{\mathcal{X}}}}_{i}]\tilde{\varrho }=0\) for all i. Then in the limit Tf /τ → ∞, we have \(\dot{\theta }\to 0\), and \(\tilde{\varrho }\) is therefore a fixed point of both the conditional and unconditional dynamics. This means that in the Zeno limit, our algorithm converges in probability to the desired solution dynamics via perfect Zeno pinning in the \(\hat{Q}\)–frame, thereby achieving deterministic (diffusion–free) evolution. In other words, when the \(\hat{Q}\)–frame can be constructed, namely, when a unique solution exists, one may think of the rotation of the clause observables as generating a diabatic perturbation about the solution Eq. (8) when Tf /τ ≫ 1. We point out that these limiting–case solution dynamics are both pure and separable in the case of a unique solution. Furthermore, the arguments above imply that the scaling of the Lindbladian algorithm with qubit number goes to \({1}^{n}\) in the Zeno limit Tf/τ → ∞, since any solution that exists is found deterministically, independent of the number of qubits. See Supplementary Information Section I and refs. 57,58,59,60,61,62,63,64,65,66,70 for further comments and context. We will revisit questions related to algorithmic scaling in later sections.

We now proceed by clarifying the connection between such continuous BZF algorithms and the measurement–driven approach to quantum control known as “Zeno Dragging”. In ref. 70 we defined “Zeno Dragging” in terms of a quantity

claiming that “Zeno dragging is a viable approach to driving a quantum system from some initial \(\vert {\psi }_{i}\rangle\) to a final \(\vert {\psi }_{f}\rangle\), if and only if there exists a parameter(s) θ controlling the choice of measurement such that (i) a continuous sweep in θ is possible, and (ii) that this generates a continuous deformation of a local minimum of \({\mathsf{g}}(\rho ,\theta )\) which traces a path from \(\vert {\psi }_{i}\rangle\) to \(\vert {\psi }_{f}\rangle\)”. It is easy to verify that each \({{\mathsf{g}}}_{j}\) vanishes at an eigenstate of \({\hat{X}}_{j}(\theta )\), and hence that \({\mathsf{g}}(\rho ,\theta )\) vanishes at a common eigenstate of all the \({\hat{X}}_{j}(\theta )\), if such an eigenstate exists. One can readily see the connection between this definition and definitions employing the Liouvillian kernel86,88. We conclude that the time–continuum version of the finite–time generalized–measurement BZF algorithm is a Zeno Dragging operation by the definition of ref. 70, where Zeno dragging here means that we continuously and quasi-adiabatically deform the initial state \({\left\vert +\right\rangle }^{\otimes n}\) to the distinguishable solution state \(\left\vert {\phi }_{soln}(\theta =\frac{\pi }{2})\right\rangle\) by rotating θ from 0 to π/2.

Finally, we note that in the case with multiple solutions, each solution will still take the form of Eq. (8), and together they will span the solution subspace. The convergence and stability arguments given above will apply to the entire solution subspace in so far as they apply for each of the individual solutions Eq. (8) forming a basis for that space. Even in the multi–solution case, the solution space should here be defined as the root of \({\mathsf{g}}\), i.e., the common + 1 eigenspace of all the clause observables. From a control perspective, \({\mathsf{g}}\) is an objective function, and minimization of \({\mathsf{g}}\) implies staying as close to our target solution eigenspace as possible70. See Supplementary Information Section I for more extended remarks.

To summarize: the adiabatic theorem for Lindbladian dynamics, which is equivalent to Zeno dragging in the time continuum limit, ensures that if one starts in the kernel of \({\mathcal{L}}(\theta )\), and if the total evolution time Tf is long enough compared to the minimum Liouvillian gap of \({\mathcal{L}}(\theta )\), then the quantum system will stay near the instantaneous pure–state kernel of \({\mathcal{L}}(\theta )\)86,88. In our case, it is easy to check that the pure-state kernel of \({\mathcal{L}}(\theta )\) is spanned by the solution state(s) \(\left\vert {\phi }_{soln}(\theta )\right\rangle\)1, since a state being in the kernel is equivalent to its passing all clause checks with certainty. It is natural from a control perspective to imagine Zeno dragging in terms of a single measurement that isolates some target state or subspace (see ref. 70 and references therein). Here, however, we have a sort of mirror image of that scenario: instead of a single measurement that positively identifies some target dynamics, the continuous BZF algorithm contains a collection of measurements that each rule out a possible solution, with the desired solution emerging as the remaining option from the collective dynamics. We might term this “Zeno exclusion control”. We have essentially shown that a collective “ruling out” of all states that fail clause checks leads to dynamics with the same autonomous/stabilizing properties on the remaining solution subspace as in controlled Zeno dragging. While having many measurements appears experimentally cumbersome, this strategy makes sense from an algorithmic perspective, because it implements Zeno dragging without our knowing the target dynamics a priori (and here knowing target dynamics \(\left\vert {\phi }_{soln}(\theta )\right\rangle\) would amount to already knowing a solution to the k-SAT problem).

Un-conditional BZF algorithm with finite measurement strength

The autonomous stability we have just described motivates us to further investigate a BZF-type algorithm based on Lindbladian dissipation \({\mathcal{L}}(\theta )=\mathop{\sum }\nolimits_{i = 1}^{m}\frac{1}{4\tau }{\mathcal{L}}[{\hat{X}}_{i}(\theta )]\) (from Eq. (14)) alone. Recall that Eq. (11) gives the average dynamics of our quantum system under a single clause check measurement with finite measurement strength. We use this to propose an algorithm based on the average dynamics for general Δt/τ (where Eq. (12) is recovered in the Δt → dt limit of Eq. (11)). Algorithmically, one can perform such clause check measurements for all m clauses in a predetermined order, which constitutes one clause check cycle. At the end of each clause check cycle, one then updates the control parameter θ, proceeding monotonically from θ = 0 to π/2. Finally, one reads out all the qubits in the computational basis. This is summarized in Algorithm 1 (with the readout procedure deferred to Algorithm 4).

Algorithm 1

k-SAT by average dynamics

1: input: τ, Tf, Δt, and a schedule function θ(t/Tf)

2: initialize t ← 0 and the state to \(\rho (0)=\left\vert +\right\rangle {\left\langle +\right\vert }^{\otimes n}\)

3: while t ≤ Tf do

4: t ← t + Δt

5: θ ← θ(t/Tf)

6: sequentially dissipate clause checks according to Eq. (11) for all clauses

7: end while

8: return ρ(Tf)

One can immediately check that the solution state defined in Eq. (8) is a fixed point of Eq. (11) applied over all clause dissipators. However, we also point out that the algorithm using average dynamics nevertheless allows the possibility of reaching the final solution state at θ = π/2 via some diabatic path, where the measurement (if recorded) has failed and thus the state deviates from the solution state at some intermediate time. A Lindbladian BZF algorithm is a special case of Algorithm 1, operating in the time–continuum limit. It relies on the convergence in mean and probability in the Zeno limit, as described in section “Convergence in the Zeno limit”.

Heralding BZF algorithm with finite measurement strength via filtering

We now formally consider the advantages of having a detector granting us access to the pure state conditional dynamics Eq. (9), instead of relying only on the average dynamics Eq. (11) and autonomous aspects of Zeno stabilization. It is possible that propagation of the conditional dynamics may be computationally very expensive in contexts where solving a k-SAT problem is of interest, even though it is always possible in principle. This is no barrier to the scheme presented here however, since it does not require computation of the conditional ρ(t), but instead only access to the clause readouts ri (followed by local z readouts rj after θ reaches π/2; see section “Readout scheme and time-to-solution” for details). Note that in the event that the conditional dynamics can actually be tracked through the entire evolution with high efficiency, the terminal local z readout may no longer be necessary.

The potential benefit of an algorithm employing heralded success of clause readout ri is primarily in the possibility of feedback. We might quickly terminate trajectories that have already collapsed into the subspace associated with failure of clauses, which means they are no longer able to adiabatically follow the instantaneous solution state. Restarting the algorithm upon detection of an error is the simplest possible form of feedback we might employ in this scenario, and in this work we do not go beyond that simplest case of error detection. However, more sophisticated forms of feedback might aim to actively correct errors in real time, instead of simply restarting the algorithm when a clause fails; see section “Two–qubit 2-SAT: from discrete to continuous measurement” for additional discussion of these possibilities that might be investigated in future work.

Unlike projective measurements where one can easily diagnose the collapse into some subspace of the measured observable using the measurement result, in weak measurement the dynamics are diffusive, which complicates fast diagnosis of errors directly from the noisy measurement record. In order to overcome this, a filter is needed. We first use the time continuous situation to develop some intuition for the filter we are going to use. Such filtering is used in continuous quantum error correction to detect and correct errors in real-time71,72,73,74,75,76,77,78,79,80,81,82,83 (CQEC). In the language of error correction, an ideal Zeno dragging procedure conditioned on \({r}_{i}=+1/\sqrt{\tau }\,\forall \,i\) defines the “codespace” that we attempt to follow. A failed clause measurement will return readouts with a mean signal centered around \(-1/\sqrt{\tau }\) instead of \(+1/\sqrt{\tau }\), corresponding to an “error subspace” in error correction language. The main difficulty in realizing an effective CQEC implementation is in managing the tradeoff between rapid error detection and statistical confidence in the detection and characterization of the error. We consider filter functions on the readout of the form

where \({\mathcal{W}}(t,{t}^{{\prime} })\) is a window function to be chosen below, and \({{\mathcal{N}}}_{{\mathcal{W}}}=\mathop{\int}\nolimits_{0}^{t}{\mathcal{W}}(t,{t}^{{\prime} })\,d{t}^{{\prime} }\) is a normalization factor. For \({\mathcal{W}}/{{\mathcal{N}}}_{{\mathcal{W}}}=1\), and in the continuum limit where dt is infinitesimal, \({{\mathcal{B}}}_{i}\) can be interpreted as a time–continuum approximation of the log-likelihood ratio for a sequence of clause measurements heralding success \(\left\langle {r}_{i}\right\rangle =+1/\sqrt{\tau }\) versus failure \(\left\langle {r}_{i}\right\rangle =-1/\sqrt{\tau }\), over the entire measurement record. Thus, Eq. (20) should similarly be understood as a log-likelihood–like quantity, where the role of the window function \({\mathcal{W}}(t,{t}^{{\prime} })\) is to weight that likelihood towards the “recent history” of the measurement record in an appropriate way. For the measurement signal ri(t) of each clause i, we obtain the filtered signal \({\bar{r}}_{i}(t)\) via an exponential filter with a finite integration window

where \({N}_{be}=1-{e}^{-1}\) is the normalization constant, and Tbe is the response time. This is essentially an exponential filter inside a single threshold boxcar filter78,79.

Recall the general form of the clause readouts Eq. (16) in the time–continuum limit. If the signal has reached a steady value s0 before t0, i.e., \(\langle {\bar{r}}_{i}({t}_{0})\rangle =\langle {r}_{i}({t}_{0})\rangle ={s}_{0}\), and the “signal part” of the raw signal changes from s0 to 〈ri(t > t0)〉 = s1 at t0, then the expectation value of the filtered signal will approach the new steady value s1 exponentially as

for t0 ≤ t ≤ t0 + Tbe, with 〈 ⋅ 〉 understood as an ensemble average. One can check that \(\langle {\bar{r}}_{i}({t}_{0}+{T}_{be})\rangle ={s}_{1}\), a value that is then maintained for t > t0 + Tbe. In the steady state, the variance of the filtered signal is

where e ≈ 2.7183. This is consistent with the intuition that in the filtered signal, a larger Tbe value results in smaller fluctuations but a longer response time, while a smaller Tbe value results in a quicker response but bigger fluctuations.
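The step response described above can be checked numerically with a minimal sketch. It assumes that the filter of Eq. (21) is an exponentially weighted average over the trailing window [t − Tbe, t], which is consistent with the normalization \({N}_{be}=1-{e}^{-1}\); the function names, the sampling step `dt`, and the choice to normalize by the discrete sum of weights are illustrative assumptions, and the code is not the update rule of Eq. (24).

```python
import numpy as np

def exp_boxcar_filter(r, dt, T_be):
    """Exponentially weighted average of r over the trailing window [t - T_be, t].

    Assumed discretization: weights exp(-(t - t')/T_be) on the last T_be/dt samples,
    normalized by their discrete sum so that constant signals pass through unchanged.
    """
    r = np.asarray(r, dtype=float)
    n_win = max(1, int(round(T_be / dt)))      # number of samples in the window
    weights = np.exp(-np.arange(n_win) * dt / T_be)
    out = np.empty_like(r)
    for k in range(len(r)):
        lo = max(0, k - n_win + 1)
        window = r[lo:k + 1][::-1]             # most recent sample first
        w = weights[:len(window)]
        out[k] = np.dot(w, window) / w.sum()
    return out

def step_response(t, t0, T_be, s0, s1):
    """Continuum prediction for the mean filtered signal after a step s0 -> s1 at t0."""
    u = np.clip((t - t0) / T_be, 0.0, 1.0)
    return s1 + (s0 - s1) * (np.exp(-u) - np.exp(-1.0)) / (1.0 - np.exp(-1.0))

# Noiseless step from "success" (+1) to "failure" (-1), in units of 1/sqrt(tau).
dt, T_be, t0 = 0.01, 1.0, 2.0
t = np.arange(0.0, 5.0, dt)
raw = np.where(t < t0, 1.0, -1.0)
filtered = exp_boxcar_filter(raw, dt, T_be)
max_dev = np.max(np.abs(filtered - step_response(t, t0, T_be, 1.0, -1.0)))
print(f"max deviation from continuum step response: {max_dev:.3f}")
```

With noise added to `raw`, the same routine can be used to explore the tradeoff between response time and fluctuations as Tbe is varied.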

When implementing the error detection for a discrete-time algorithm, one then discretizes Eq. (21) to get an update equation for the filtered signal \({\bar{r}}_{i}(t)\) as

The error-detection strategy using the filtered signal depends on a threshold value rth. During the evolution of the system under Eq. (9) for all clauses, if the filtered signal of any clause i falls below the threshold at time t, i.e., \({\bar{r}}_{i}(t) < {r}_{th}\), then the algorithm is terminated at time t and diagnosed as “FAILED”. This subroutine is summarized in Algorithm 2.

Algorithm 2

k-SAT by single trial heralded dynamics

1: input: τ, Tf, Δt, Tbe, rth, and a schedule θ(t/Tf)

2: initialize t ← 0 and the state to \(\rho (0)=\left\vert +\right\rangle {\left\langle +\right\vert }^{\otimes n}\)

3: while t ≤ Tf do

4: t ← t + Δt

5: θ ← θ(t/Tf)

6: sequentially measure all clauses as defined by Eq. (9) and get measurement records \({\{{r}_{i}(t)\}}_{i = 1}^{m}\).

7: update the filtered signals \({\{{\bar{r}}_{i}(t+\Delta t)\}}_{i = 1}^{m}\) via Eq. (24).

8: if any \({\bar{r}}_{i}(t) < {r}_{th}\) then

9: terminate and return “FAILED”, t

10: end if

11: end while

12: return ρ(Tf)

Notice that in the projective limit where Δt/τ ≫ 1, one can choose Tbe = Δt, so that the projection into the failed subspace can be detected immediately. In the continuum limit where Δt/τ ≪ 1, one can typically choose Tbe ~ τ, so that the collapse caused by the measurement is sufficiently far along to be confidently detectable through the readout noise.

As discussed above, the heralded dynamics benefit from earlier detection of the failure. This advantage allows us to define a heralded algorithm that restarts when the failure is detected early, and thus is more likely to follow the correct trajectory given a fixed amount of temporal computational resource (total algorithm running time), even without feedback. This heralded algorithm is summarized in Algorithm 3.

Algorithm 3

k-SAT by heralded dynamics

1: input: τ, Tf, Δt, Tbe, Tmin, rth, and a schedule θ(t/Tf)

2: initialize trest ← Tf and the state to \(\rho (0)=\left\vert +\right\rangle {\left\langle +\right\vert }^{\otimes n}\)

3: while trest≥Tmin do

4: run Algorithm 2 with input τ, trest, Δt, Tbe, rth, θ(t)

5: if “FAILED” and t are returned then

6: trest ← trest − t

7: else

8: return ρ(trest)

9: end if

10: end while

11: run Algorithm 2 without failure detection and with schedule θ(t/trest) from t = 0 until time t = trest

12: return ρ(trest)

Notice that in Algorithm 3, we do not terminate the dynamics when the remaining time trest is smaller than a minimum value Tmin. This is because when the total dragging time is too small (comparable to Tbe and τ), the quantum Zeno effect is not strong and the failure detection based on the filter is no longer reliable. However, in this situation we can still use the conditional dynamics, i.e., Eq. (9), rather than the averaged dynamics of Eq. (11).
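The control flow of Algorithm 3 can be sketched classically as below. The callable `run_trial(duration, detect)` is a hypothetical stand-in for Algorithm 2 (it is not defined in the text) and is assumed to return a tuple `(failed, t_elapsed, state)`; only the time-budget bookkeeping is illustrated, not the quantum dynamics.

```python
def heralded_restarts(run_trial, T_f, T_min):
    """Restart a heralded trial until it succeeds or the budget drops below T_min.

    run_trial(duration, detect) is a hypothetical placeholder for Algorithm 2.
    It must return (failed, t_elapsed, state), with 0 < t_elapsed <= duration
    whenever failed is True, so that the loop always makes progress.
    """
    t_rest = T_f
    while t_rest >= T_min:
        failed, t_elapsed, state = run_trial(t_rest, True)
        if not failed:
            return state, t_rest            # success: dragging completed in t_rest
        t_rest -= t_elapsed                 # failure heralded: deduct the time spent
    # Too little time left for reliable detection: run once without heralding.
    _, _, state = run_trial(t_rest, False)
    return state, t_rest

# Usage with a scripted (deterministic) stand-in: two heralded failures, then success.
script = iter([(True, 3.0), (True, 4.0), (False, None)])
calls = []

def scripted_trial(duration, detect):
    calls.append((duration, detect))
    if not detect:
        return False, duration, "rho_unheralded"
    failed, t_fail = next(script)
    return (True, t_fail, None) if failed else (False, duration, "rho_heralded")

state, t_used = heralded_restarts(scripted_trial, T_f=10.0, T_min=2.0)
print(state, t_used, calls)
```

In this scripted run the successful attempt is dragged over the remaining budget of 3 time units, which illustrates why a floor Tmin is useful: each restart shortens the dragging time available to the next attempt.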

We reiterate that although the clause measurement outcomes are recorded in the implementation of Algorithm 3, we do not assume that these measurement records are used directly to estimate the solution bitstring or conditional state, even if the solution state might be successfully prepared at the end of the algorithm. We only use these measurement signals to herald the success of trajectories. Therefore, for both Algorithm 1 and Algorithm 3, we would need to perform local Pauli-z measurements to read out the solution bitstring at the final time Tf. We shall discuss the final readout measurements in the next section.

We emphasize that although Algorithms 1–3 are defined with finite Δt, one can also obtain continuous–time versions of each algorithm by taking the limit Δt → 0. In this case, the dynamics are described by Eqs. (14) and (15), and the filter is given by Eq. (21).

Readout scheme and time-to-solution

Before we move on to illustrate the algorithms, we shall first introduce some performance metrics that we use to benchmark the algorithms in sections “Two–qubit 2-SAT: from discrete to continuous measurement” and “Scaling the problem up”.

For n-qubit systems, if we make the weak measurements in the computational basis, the Kraus operator for obtaining a readout signal tuple \({\bf{r}}=({r}_{1},\cdots \,,{r}_{n})\in {{\mathbb{R}}}^{n}\) is

where Δtm is the duration of the readout. Here \({\bf{b}}=({b}_{1},\cdots \,,{b}_{n})\in {\{-1,+1\}}^{n}\) represents a possible solution bitstring, and

is the corresponding projector in the computational basis (z basis). Suppose that upon performing local readouts of the state ρ = ρ(Tf) returned by one of Algorithms 1–3 to obtain r, we then generate our candidate solution bitstring via \(\tilde{{\bf{s}}}={\rm{sign}}({\bf{r}})\). Let \({\bf{s}}=({s}_{1},\cdots \,,{s}_{n})\in {\{-1,+1\}}^{n}\) be the actual solution bitstring of the k-SAT problem. Then the probability of getting \(\tilde{{\bf{s}}}={\bf{s}}\), i.e., the probability that our algorithm and readout return a correct solution, is

where we have abbreviated

Simplifying this yields

where hs,b is the Hamming distance between s and each possible bitstring b. The above derivation gives a procedure of reading out a bitstring via generalized measurement from the final state of either quantum Algorithm 1 or Algorithm 3. The overall general algorithm using any one of these two generalized measurement algorithms together with the final state readout is summarized in Algorithm 4 below.

Algorithm 4

k-SAT with generalized qubit readout

1: input: a CNF Boolean formula F, τ, Tf, Δt, Δtm, and a schedule θ(t/Tf); if Algorithm 3 is used, values of Tbe, Tmin, rth are also input.

2: run either Algorithm 1 or Algorithm 3, and obtain a final state ρ(Tf)

3: read out local \({\hat{\sigma }}_{z}\) of ρ(Tf) with Kraus operators given in Eq. (25), obtaining readouts \({\bf{r}}={\{{r}_{i}\}}_{i = 1}^{n}\)

4: extract the candidate bitstring b = sign(r)

5: if b is classically verified to be a solution bitstring then

6: return verified solution b

7: else

8: repeat steps 2–4

9: end if
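The classical outer loop of Algorithm 4 (sample a candidate, verify it against the formula, repeat on failure) can be sketched as follows. The signed-integer clause encoding, the `max_shots` cap, and the random sampler standing in for steps 2–4 are assumptions made for illustration; the toy formula is a hypothetical 2-variable instance whose only satisfying assignment is (b1, b2) = (0, 1), and is not claimed to be the formula of Eq. (33).

```python
import random

def satisfies(clauses, bits):
    """Check a CNF formula given as lists of signed 1-based variable indices.

    A positive literal j is true when bits[j-1] == 1; a negative literal -j is
    true when bits[j-1] == 0. Every clause needs at least one true literal.
    """
    return all(
        any((bits[abs(lit) - 1] == 1) == (lit > 0) for lit in clause)
        for clause in clauses
    )

def solve_with_verification(clauses, sample_bitstring, max_shots=1000):
    """Repeat sampling until a candidate passes classical verification."""
    for shot in range(1, max_shots + 1):
        candidate = sample_bitstring()       # stands in for steps 2-4 of Algorithm 4
        if satisfies(clauses, candidate):
            return candidate, shot
    return None, max_shots

# Hypothetical toy instance: (b1 or b2) and (not b1 or b2) and (not b1 or not b2).
toy_formula = [[1, 2], [-1, 2], [-1, -2]]
rng = random.Random(0)
uniform_guess = lambda: (rng.randint(0, 1), rng.randint(0, 1))
solution, shots = solve_with_verification(toy_formula, uniform_guess)
print(solution, shots)
```

Verification costs one pass over the m clauses per shot, which is what makes repeated sampling a sensible strategy whenever the per-shot success probability is not exponentially small.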

Recall from the arguments of section “Convergence in the Zeno limit” that in the limit of long dragging times Tf, ρ is expected to concentrate itself in the solution subspace, i.e., \({\rm{Tr}}(\rho \,{\hat{P}}_{{\bf{b}}})\) will be non-negligible only for bitstrings b that are actually solutions (assuming one exists). Moreover, as the readout time also becomes long (Δtm ≫ τ, such that the local readout is effectively projective), \({\rm{erf}}(\sqrt{\Delta {t}_{m}/2\tau })\) also tends to one, so that the leading \({2}^{-n}\) is canceled out, and we expect to deterministically recover a correct solution bitstring.
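These limits can be checked with a short sketch. It assumes that the simplified success probability takes the form \({{\mathbb{P}}}_{{\bf{s}}}={2}^{-n}{\sum }_{{\bf{b}}}{\rm{Tr}}(\rho {\hat{P}}_{{\bf{b}}}){(1+E)}^{n-h}{(1-E)}^{h}\) with \(E={\rm{erf}}(\sqrt{\Delta {t}_{m}/2\tau })\) and h the Hamming distance between s and b; this form is reconstructed from the description in the text (leading \({2}^{-n}\), error function, Hamming distance) and should be treated as an assumption, as should the function name and the dictionary representation of the populations.

```python
import math
from itertools import product

def readout_success_probability(populations, solution, dt_m, tau=1.0):
    """Probability that sign(r) reproduces `solution` under Gaussian local readout.

    populations: dict mapping bitstrings b (tuples of +1/-1) to Tr(rho P_b).
    solution:    tuple of +1/-1 entries, the target bitstring s.
    Assumed form: 2^-n * sum_b p_b * (1+E)^(n-h) * (1-E)^h, E = erf(sqrt(dt_m/(2 tau))).
    """
    n = len(solution)
    e = math.erf(math.sqrt(dt_m / (2.0 * tau)))
    total = 0.0
    for b in product((-1, 1), repeat=n):
        h = sum(bj != sj for bj, sj in zip(b, solution))   # Hamming distance to s
        total += populations.get(b, 0.0) * (1 + e) ** (n - h) * (1 - e) ** h
    return total / 2 ** n

s = (1, -1)
perfect = {s: 1.0}                                          # all weight on the solution
mixed = {b: 0.25 for b in product((-1, 1), repeat=2)}       # maximally mixed populations

p_strong = readout_success_probability(perfect, s, dt_m=50.0)   # long readout
p_blind = readout_success_probability(perfect, s, dt_m=0.0)     # no readout information
p_mixed = readout_success_probability(mixed, s, dt_m=3.0)       # no dragging information
print(p_strong, p_blind, p_mixed)
```

Under this assumed form, a perfectly prepared state with effectively projective readout succeeds with probability close to one, while either vanishing readout time or uniform populations reduces the result to the random-guess value \({2}^{-n}\).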

We analyze the tradeoffs between the success probability \({{\mathbb{P}}}_{{\bf{s}}}\) in an individual run of Algorithm 4 and algorithm runtime by first defining a time-to-solution (TTS) as

where an algorithm realized within a dragging time Tf is followed by qubit readout of duration Δtm and is repeated N times. The superscript (m) denotes that the final measurement time Δtm is included. Suppose we allow ourselves to perform these N shots with the assumption that classically verifying whether each output bitstring b is actually a solution to our k-SAT problem is easy. This is true since k-SAT is in NP. How much time, and how many shots N, are required to guarantee that the correct solution appears at least once with probability greater than some confidence cutoff \({{\mathbb{P}}}_{\star }\)? The probability that M shots out of N are successful is governed by binomial statistics, i.e.,

Then the probability of at least one successful run is

where \({{\mathbb{P}}}_{{\bf{s}}}^{(0/N)}\) is the probability that all N runs fail. Consequently our condition of interest is

such that the expected number of runs needed to achieve a solution with probability at least \({{\mathbb{P}}}_{\star }\) is

Here, \({{\mathbb{P}}}_{\star }\) should be understood as a desired level of solution confidence specified by the experimenter.

Given the above, we may now define a modified TTS that replaces N by N⋆, i.e.,

This is now consistent with the confidence bound \({{\mathbb{P}}}_{\star }\), i.e., it measures the time to solution for achieving a solution with probability at least \({{\mathbb{P}}}_{\star }\). The readout time Δtm is often neglected114 below, in order to emphasize the scaling of the TTS with the pure dragging time Tf alone, which is consistent with common analyses of asymptotic scaling of quantum algorithms.
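As a minimal sketch of these definitions, the snippet below computes the number of shots and the resulting time to solution. It assumes that the condition "at least one success among N independent shots with probability at least \({{\mathbb{P}}}_{\star }\)" leads to \({N}_{\star }=\lceil \ln (1-{{\mathbb{P}}}_{\star })/\ln (1-{{\mathbb{P}}}_{{\bf{s}}})\rceil\), and that the TTS is \({N}_{\star }({T}_{f}+\Delta {t}_{m})\); the function names and default values are illustrative.

```python
import math

def shots_needed(p_s, p_star=0.99):
    """Smallest N with 1 - (1 - p_s)**N >= p_star, for independent shots."""
    if p_s >= 1.0:
        return 1
    if p_s <= 0.0:
        return math.inf                      # no number of shots can succeed
    return max(1, math.ceil(math.log(1.0 - p_star) / math.log(1.0 - p_s)))

def time_to_solution(p_s, T_f, dt_m=0.0, p_star=0.99):
    """Assumed TTS: shots needed times the duration (dragging + readout) of one shot."""
    return shots_needed(p_s, p_star) * (T_f + dt_m)

print(shots_needed(0.5))                     # per-shot success probability 1/2
print(shots_needed(0.25))                    # random guessing on a 2-qubit problem
print(time_to_solution(0.5, T_f=10.0, dt_m=2.0))
```

Setting `dt_m=0.0` corresponds to neglecting the readout time, which isolates the dependence of the TTS on the dragging time Tf alone.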

The expression for N⋆ in Eq. (30) may also be understood as a means of assessing whether a given set of parameters Tf/τ and Δtm/τ is sufficient to achieve any useful advantage of this measurement–driven algorithm over some other option. For example, a choice of parameters requiring \({N}_{\star }\ge {2}^{n}\) to achieve the desired confidence level is clearly useless, because it would be faster to simply guess solution bitstrings at random and check whether they satisfy all m clauses. More generally, we can impose a condition that we want \({N}_{\star }\le {\lambda }_{c}^{n}\), where λc is some critical rate parameter that we want to beat. Some algebra reveals that

such that we can understand the \({{\mathbb{P}}}_{{\bf{s}}}\) required in an individual run of the algorithm to achieve a given scaling rate λc. We will soon see that in practice, the most difficult aspect of these expressions to evaluate is the dependence of \({{\mathbb{P}}}_{{\mathsf{s}}}\) on Tf.
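The algebra can be sketched as follows, assuming independent shots with per-shot success probability \({{\mathbb{P}}}_{{\bf{s}}}\) and treating \({\lambda }_{c}^{n}\) as an integer number of shots (integer rounding is ignored). This is a reconstruction for orientation, not necessarily the exact form of the numbered equations.

```latex
\begin{aligned}
1-\left(1-\mathbb{P}_{\mathbf{s}}\right)^{N} \;\ge\; \mathbb{P}_{\star}
\quad &\Longleftrightarrow \quad
N \;\ge\; \frac{\ln\left(1-\mathbb{P}_{\star}\right)}{\ln\left(1-\mathbb{P}_{\mathbf{s}}\right)},
\\[4pt]
\text{and with } N=\lambda_{c}^{n}: \qquad
\left(1-\mathbb{P}_{\mathbf{s}}\right)^{\lambda_{c}^{n}} \;\le\; 1-\mathbb{P}_{\star}
\quad &\Longleftrightarrow \quad
\mathbb{P}_{\mathbf{s}} \;\ge\; 1-\left(1-\mathbb{P}_{\star}\right)^{\lambda_{c}^{-n}} .
\end{aligned}
```

The inequality in the first line flips direction when dividing by \(\ln (1-{{\mathbb{P}}}_{{\bf{s}}}) < 0\). For large n the bound behaves as \({{\mathbb{P}}}_{{\bf{s}}}\gtrsim -\ln (1-{{\mathbb{P}}}_{\star })\,{\lambda }_{c}^{-n}\), so the per-shot success probability may decay exponentially in n, but no faster than \({\lambda }_{c}^{-n}\).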

Two–qubit 2-SAT: from discrete to continuous measurement

In order to gain some intuition about the dynamics under our measurement-driven algorithm, it is insightful to analyze the minimal version of the SAT problem. 2-SAT belongs to complexity class P and thus might be viewed as less interesting compared with other intractable SAT problems. The particular situation in which there are only two Boolean variables involved in the 2-SAT CNF formula is nevertheless of interest here, because the application of our generalized measurement BZF algorithms 1–3 illustrates the continuous form of BZF dynamics, while remaining relatively simple and accessible to detailed analysis. We will refer to this illustrative toy problem as the 2-qubit 2-SAT problem.

The simplest 2-SAT problem

2-qubit 2-SAT is the minimal version of the SAT problem that captures most of the significant features of SAT while involving a minimum number of qubits and clause measurements. In this section, we will analyze the algorithmic dynamics of the two-qubit system under generalized measurements. In particular, we will show that for a given total algorithm running time, weak continuous measurements lead to the highest success probability for finite Tf /τ. This motivates a detailed study of those continuous dynamics. We first examine the unconditioned average dynamics in Algorithm 1 with LME (14), and then consider the heralded dynamics of Algorithm 3 with the SME of Eq. (15).

More specifically, we consider a 2-qubit 2-SAT problem with a single satisfying solution. Without loss of generality such a problem can be defined by the following CNF formula

where

It can be easily checked that the only solution to this simple 2-qubit 2-SAT problem is (b1, b2) = (0, 1). Classically, one can imagine solving this by the following procedure. In the space \({\{0,1\}}^{2}\), each clause excludes an assignment that will violate it. For example, C1 = b1 ∨ b2 will exclude (b1, b2) = (1, 1) as a solution assignment. After the last clause check, the legal assignments that survive this procedure correspond to the solution. The BZF algorithm is similar, in that each clause measurement checks whether the quantum state is projected into the subspace it excludes as a failure subspace, and the quantum state that survives conditioned on successful clause checks lies in the solution subspace. The three observables corresponding to the three clause checks in Eq. (33b) are given by

with

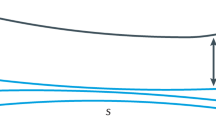

where \({\hat{\sigma }}_{x}^{(j)}\) and \({\hat{\sigma }}_{z}^{(j)}\) are Pauli-x and Pauli-z operators that act only on the jth qubit. \({\hat{\sigma }}_{x}^{(j)}(\theta )\) is then the Pauli-x operator rotated clockwise in the x-z plane by θ as indicated in Fig. 2.

The quantum system is then driven by the generalized measurements of these three observables, and we adopt a simple linear schedule for the control parameter θ: for the cth cycle of clause measurements, \({\theta }_{c}=\frac{c}{N}\cdot \frac{\pi }{2}\) where N is the total number of cycles of clause measurements. The instantaneous solution state \(\left\vert {\phi }_{soln}(\theta )\right\rangle\), which is the common “+1”-eigenstate of \({\hat{X}}_{1}(\theta ),{\hat{X}}_{2}(\theta ),{\hat{X}}_{3}(\theta )\) is given by

The probability of successfully implementing the algorithm and thus also the running time then depend primarily on the ability to follow this instantaneous solution state in an adiabatic (Zeno) sense.

Zeno dragging for discrete and continuous generalized measurements

Burgarth et al.89 showed that the weak continuous limit is favorable for generating Zeno dynamics in the case of a single measurement channel. We now show that a similar result can be obtained for the dynamics generated by multiple measurement channels. In particular, we will demonstrate that dynamics driven by weak continuous measurements approach the target dynamics \(\left\vert {\phi }_{soln}(\theta )\right\rangle\) of Eq. (35) more closely than dynamics driven by discrete, stronger measurements executed using the same total measurement strength and duration.

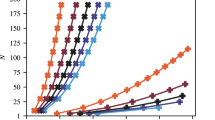

It will be adequate to consider the average dynamics given by Eq. (11) to make this point. Let the final state following the average dynamics driven by the generalized measurements be ρf. We then calculate the fidelity f between ρf and \(\left\vert {\phi }_{soln}(\theta =\frac{\pi }{2})\right\rangle =\left\vert 10\right\rangle\), i.e., f = 〈10∣ρf∣10〉. More specifically, we calculate the fidelity f(Δt/τ, Tf/τ) as a function of both Δt/τ, which controls the effective individual measurement strength, with τ the measurement time, and Tf/τ, where Tf = ℓ ⋅ Δt is the total duration of Zeno dragging. Figure 2 illustrates the relevant simulation of the average dynamics Eq. (11) for the 2-SAT problem on 2 qubits. This computation is consistent with the intuition that for a given measurement strength, increasing the total duration Tf (thus dragging more slowly) brings us towards the adiabatic/Zeno regime. However, for a given fixed and finite value of Tf, the fidelity finds its maximum value at Δt/τ = 0. This means that rather than multiple pulsed and discrete strong measurements, Zeno dragging in our two–qubit 2-SAT problem is most effective in the continuum limit, for any given value of Tf/τ.
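A minimal numerical sketch of such an average-dynamics calculation is given below. Several ingredients are assumptions, since the corresponding equations are not reproduced here: the averaged clause check is modeled as the channel ρ → (1 − p)ρ + p X̂ρX̂ with p = (1 − e^{−Δt/2τ})/2, which is what integrating a dephasing generator (1/4τ)(X̂ρX̂ − ρ) over Δt gives for X̂² = 1; each failure projector is built as a product of single-qubit states orthogonal to the rotated "non-excluded" value; and bit value 0 is mapped to |0⟩, so the target state is labeled |01⟩ here, which may differ from the labeling convention used in the text.

```python
import numpy as np

def qubit_state(bit, theta):
    """Real qubit state with Bloch vector in the x-z plane, rotated by theta from
    +x towards +z (bit 0) or towards -z (bit 1); theta = pi/2 gives |0> or |1>."""
    alpha = np.pi / 2 - theta if bit == 0 else np.pi / 2 + theta   # polar angle
    return np.array([np.cos(alpha / 2), np.sin(alpha / 2)])

def failure_projector(excluded, theta):
    """Rank-1 projector that vanishes on every product state differing from the
    excluded assignment on at least one qubit (assumed BZF-style construction)."""
    vec = np.array([1.0])
    for a in excluded:
        good = qubit_state(1 - a, theta)                  # the non-excluded value
        vec = np.kron(vec, np.array([-good[1], good[0]])) # state orthogonal to it
    return np.outer(vec, vec)

def average_dragging(excluded_list, T_f, dt, tau=1.0):
    """Cycle through averaged clause checks with a linear schedule theta: 0 -> pi/2."""
    n = len(excluded_list[0])
    plus = np.ones(2 ** n) / np.sqrt(2 ** n)
    rho = np.outer(plus, plus)                            # |+>^n initial state
    p = 0.5 * (1.0 - np.exp(-dt / (2.0 * tau)))           # assumed channel strength
    steps = max(1, int(round(T_f / dt)))
    for c in range(1, steps + 1):
        theta = (c / steps) * np.pi / 2
        for excluded in excluded_list:
            X = np.eye(2 ** n) - 2.0 * failure_projector(excluded, theta)
            rho = (1.0 - p) * rho + p * (X @ rho @ X)
    return rho

# Three clauses, each excluding one assignment; (0, 1) is the only survivor.
EXCLUDED = [(0, 0), (1, 0), (1, 1)]
TARGET = 1                                                # index of |01> in the kron basis
fidelity_strong = average_dragging(EXCLUDED, T_f=100.0, dt=100.0)[TARGET, TARGET]
fidelity_slow = average_dragging(EXCLUDED, T_f=20000.0, dt=2.0)[TARGET, TARGET]
print(f"single strong check: {fidelity_strong:.3f}, slow dragging: {fidelity_slow:.3f}")
```

Scanning `dt` at fixed `T_f` with this routine is one way to explore how the fidelity depends on Δt/τ under the stated assumptions; the single strong check at θ = π/2 gives the fidelity 1/4 expected for a two-qubit problem.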

The three projectors corresponding to the three clauses in the 2-qubit 2-SAT defined by Eq. (33b) are shown as tensor products of \(\left\vert {\theta }^{\perp }\right\rangle =\hat{R}(\pi +\theta )\left\vert +\right\rangle\) (indicated as a red dot) and \(\vert {\bar{\theta }}^{\perp }\rangle =\hat{R}(\pi -\theta )\vert + \rangle\) (indicated as a blue dot). Upon measuring the observable corresponding to each \({\hat{P}}_{j}(\theta )\), an outcome +1 heralds success (and corresponds to the ±θ states), while an outcome −1 heralds failure (and corresponds to the ±θ⊥ states). Thus, each measurement serves to rule out a possible final solution, shown to the right of drawings of each projector. The unique solution that remains is the solution to this 2-SAT problem.

Illustration of adiabatic convergence via Lindblad dynamics

We demonstrate here the convergence of the LME Eq. (14) to the adiabatic limit in the long time using the 2-qubit 2-SAT problem. Firstly, it is illustrative to show the exact diagonal form of the solution subspace in the Zeno frame defined by Eq. (17). For the 2-qubit 2-SAT example in Fig. 3, we have \(\hat{Q}={\hat{R}}_{Y}(\theta -\pi /2)\otimes {\hat{R}}_{Y}(\pi /2-\theta )\), and the observables of Eq. (34a) are then equal to

in the \(\hat{Q}\)–frame, represented in the \({\{\left\vert 11\right\rangle ,\left\vert 10\right\rangle ,\left\vert 01\right\rangle ,\left\vert 00\right\rangle \}}^{\top }\) basis. The solution state, which is now θ–independent, is marked in boldface in Eq. (36a). Clearly, the solution subspace is isolated in a block diagonal form and becomes θ-independent, as discussed in previous sections.

The two panels differ only in the scale and range of their axes. These results are obtained from simulating the average dynamics driven by measuring the three clause observables \({\hat{X}}_{1}(\theta )\), \({\hat{X}}_{2}(\theta )\) and \({\hat{X}}_{3}(\theta )\) in the 2-qubit 2-SAT problem Eq. (33b) according to Eq. (11). For a given dragging time Tf = ℓ ⋅ Δt, where Tf is subdivided into ℓ measurements each of duration Δt, the optimal value of Δt/τ is found at Δt/τ → 0, which implies that ℓ → ∞. The upper panel also shows small values of Tf ~ Δt: in this region we set Δt to the nearest value for which an integer number ℓ of measurements evenly subdivides Tf. The small fidelity oscillations seen in this region are due to the discrete nature of this operation. For Δt > Tf (red hatched region, which is forbidden), we perform only the clause measurement at θ = π/2, yielding the lowest possible value of fidelity \({\mathscr{F}}=\frac{1}{4}\) for a two-qubit problem. This forbidden region is a general feature of such an analysis, and informs the behavior of the surrounding contours. The maximization of the fidelity for a given Tf/τ via taking Δt → 0 illustrates the advantages of operating in the limit of continuous weak measurements that is located towards the bottom edge of each plot.

We may now consider the dynamics of the algorithm on average, i.e., as modeled by Eq. (12). These dynamics represent the average performance, but can also be interpreted as the dynamics arising in the event that we dissipate information to the environment without actually detecting it28,29,30,31. This reflects the perspective that the average/Lindbladian dynamics are equivalently the conditional dynamics in the limit of vanishing measurement efficiency. In looking at the Lindblad dynamics, we are then looking at an “un-heralded” version of the continuous–time BZF algorithm, which is the time-continuous limit Δt/τ → 0 of Algorithm 1. Note that from the discussion of convergence in section “Convergence in the Zeno limit”, we can expect to deterministically achieve perfect solution dynamics in the limit of long dragging time, even in the case without detection.

Some key features of these Lindblad algorithm dynamics are illustrated in Fig. 4. Here we integrate the Lindblad dynamics for a variety of Γ Tf values (where Γ = 1/4τ is the measurement rate), scanning from the quasi-adiabatic/Zeno regime Γ Tf ≫ 1, to the diabatic regime Γ Tf ~ 1. We see that in the adiabatic limit Γ Tf → ∞, the dynamics converge to the pure and separable solution Eq. (35), as expected. For smaller values of Γ Tf, the fidelity is clearly reduced. We show that the reduced density matrices become less and less pure, with reduced contrast along the local z–axes along which we wish to read out a final 2-SAT solution. This loss of purity in the reduced density matrices is due both to overall purity loss of the two–qubit state due to dissipation, and due to the formation of spurious measurement–induced entanglement between the qubits when the Lindblad algorithm is constrained to finish within a finite time.

The same measurement strengths and linear schedule θ = π t/2 Tf are used through all four panels. The time axis is displayed here in units of the total evolution time Tf. a Illustrates the reduced density matrix evolution for qubit 1 (teal) and qubit 2 (purple), shown for both in the xz Bloch plane; results are shown for a variety of measurement strengths spanning from Γ Tf = 1 (pale) to \(\Gamma {T}_{f}=1\times {10}^{4}\) (dark). b Shows the corresponding time traces of the local z coordinates for the two qubits. c Shows the behavior of the separability (\(1-{\mathcal{C}}\), where \({\mathcal{C}}\) is the concurrence127) throughout the Lindblad evolution. d Plots the purity of the two-qubit state as it undergoes Lindblad evolution. These results show that in the adiabatic regime Γ Tf ≫ 1, we converge towards a pure-state and completely separable evolution (see Eq. (35)) that deterministically generates the solution of this very simple 2-SAT problem, i.e., z1 = 1 and z2 = −1, corresponding to (b1, b2) = (0, 1) as expected from Eq. (33b). We showed in section “Convergence in the Zeno limit” that this is in fact a general feature of k-SAT problems with a unique solution in this measurement–driven algorithm. Even far from the adiabatic regime (Γ Tf ~ 1), we still see that some information about this solution manifests itself on average, since the reduced density matrix traces (local z coordinates in (b)) still drift discernibly apart and move towards their respective solution states.

In Fig. 4a, b (and also in Fig. 5b below) one may immediately observe that as the dragging time Γ Tf increases, the system enters a time regime where lengthening the algorithm evolution time leads to rapid improvement in the contrast between the output states (around Γ Tf ≲ 200). However, the benefit of increasing Γ Tf is comparatively small beyond this point (Γ Tf ≳ 200). Therefore, to obtain the solution state with a desired high probability, we expect that there may generically exist an optimal runtime value \({T}_{f}^{opt}\), such that repeating this Lindbladian dragging process some number of times leads to extraction of the solution at the desired confidence level. We devote the next section to developing conceptual and formal tools needed to probe this idea in our two–qubit problem, and will return to it later at scale.

The times Tf, Δtm, and TTUS99% (Eq. (40)) are all given in units of the characteristic measurement time τ, which is assumed to characterize the strength of both the clause measurements and subsequent local readout measurements. We illustrate the timeline of each experimental run of the protocol in (a). b Shows the time evolution of the local z coordinate (see Fig. 4b) and illustrates the average z resolution we can expect to obtain on either of the two qubits, and how this depends on the Lindblad dragging time Tf expended on the analog measurement-driven computation. c Analyzes the readout time Δtm required to achieve a solution in N shots, given the local bias ∣z∣ on the qubits. We plot Eq. (39) for n = 2 and required confidence level \({{\mathbb{P}}}_{\star }=0.99\), which we denote N99%. Since we consider a two-qubit problem (n = 2), it is effectively meaningless to consider performing \(N > {2}^{n}=4\) shots; the contour separating the useful (lower) region from this region is highlighted in red. d Aggregates the results of (b, c). Here we plot Eq. (40) (a special case of Eq. (31)) for \({{\mathbb{P}}}_{\star }=0.99\), notated as \({{\rm{TTS}}}_{99 \% }^{(m)}\). Some selected contours of N99% from Eq. (39) are overlaid, highlighting the discrete nature of the ceiling function introduced to enforce the confidence threshold. For this very small toy problem, we observe that no optimal TTS is visible. Because \({2}^{n}\) is small in this instance, the stipulation that N be at most \({2}^{n}\) is quite restrictive (it is, after all, very easy to solve this toy problem just by checking all candidate solutions). We will see in later sections that the importance of these small-size effects falls away as we consider larger problems and TTS scaling.

Illustration of readout scheme and TTS

In the Lindbladian setting the observer gains no information during the dragging time Tf, due to performing dissipation without detection. All solution information must then be obtained from local measurements to read out the qubit states at the end of the algorithm, as described in Algorithm 4 and section “Readout scheme and time-to-solution”.

Let us consider the statistics of the measurement outcomes when such a measurement is applied to the reduced density matrix ρj for the jth qubit in our register. This means that we apply Eq. (25) under the simplifying assumption that there is negligible correlation between any of the qubits (Fig. 4 indicates that for relatively long dragging times, this is a reasonable assumption to make). We have the probability density

for each qubit, where ℘(r∣0) and ℘(r∣1) are Gaussians with positive and negative mean signals respectively, and variances 1/Δtm (such models are commonly used for dispersive or longitudinal readout of individual qubits109,110). As in section “Readout scheme and time-to-solution”, suppose that upon reading out rz (a vector of all the rj), we obtain the solution bitstring from that run of the experiment via \(\tilde{{\bf{s}}}={\rm{sign}}({{\bf{r}}}_{z})\). The probability that each \({\tilde{s}}_{j}\) correctly reflects the underlying biases zj, such that \(\tilde{{\bf{s}}}\) matches the correct solution s, is given by

where in the last line the biases ∣zj∣ = ∣zT∣ are assumed the same up to a sign. We note that this expression is a special case of Eq. (27), under the simplifying assumptions that the state of the different qubits is approximately separable, and that the local biases are uniform and correctly reflect the solution state. The assumptions of separability and correct solution bias are generically valid for sufficiently long Tf, and when applied to problems with a unique solution. Notice that in the limit of deterministic dragging T/τ → ∞ and strong readout Δtm/τ → ∞, this expression scales as \(1^{n}\). In the opposing limit of T → 0 (so that ∣zT∣ → 0), the scaling instead goes as \(2^{n}\). This corresponds to the readout essentially choosing one of the \(2^{n}\) candidate solution bitstrings at random, in analogy with the worst, i.e., brute-force, classical approach to k-SAT.
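As a rough numerical companion to the limits just described, the following sketch evaluates a readout success probability under assumptions that go beyond what is stated here: each time-averaged readout rj is taken to be Gaussian with mean ±1/√τ (conditioned on the qubit being in 0 or 1) and variance 1/Δtm, the qubits are treated as independent, and all biases share the magnitude ∣zT∣. The function names are hypothetical, and the closed form used may differ in detail from Eq. (38).

```python
import math


def single_qubit_correct(z_abs, dt_m, tau=1.0):
    """Probability that sign(r_j) agrees with the sign of the bias z_j.

    Assumption (not taken from the text): the readout signal is Gaussian
    with mean +/- 1/sqrt(tau) and variance 1/dt_m.
    """
    # Chance that a Gaussian centred on +1/sqrt(tau) is read out as positive.
    p_sign = 0.5 * (1.0 + math.erf(math.sqrt(dt_m / (2.0 * tau))))
    # Population of the basis state favoured by the bias.
    p_major = 0.5 * (1.0 + z_abs)
    # Correct sign: favoured state read correctly, or unfavoured state misread.
    return p_major * p_sign + (1.0 - p_major) * (1.0 - p_sign)


def solution_probability(z_abs, dt_m, n, tau=1.0):
    """Probability that all n independent sign readouts match the solution."""
    return single_qubit_correct(z_abs, dt_m, tau) ** n


if __name__ == "__main__":
    for z_abs in (0.0, 0.5, 0.9, 1.0):
        print(z_abs, solution_probability(z_abs, dt_m=4.0, n=2))
```

With vanishing bias each qubit is read correctly with probability 1/2, i.e., the run amounts to a uniformly random guess among the \(2^{n}\) bitstrings, while for ∣zT∣ → 1 and Δtm ≫ τ the probability approaches one.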

Let us next specialize the number of runs N⋆ required to achieve a solution probability of at least \({{\mathbb{P}}}_{\star }\), Eq. (30), to this special case of 2-qubit 2-SAT. For k-SAT problems with a unique solution and uniform local bias, we may write

using Eq. (38). A visualization of this expression for n = 2 2-SAT appears in Fig. 5c. We remark that if a bound on the bias ∣zT∣ could be systematically derived in the case of a unique solution, then the behavior of this dissipation–driven algorithm could in turn be systematically bounded using the expressions above. Given the above, we may now define the time to a unique solution,

This is a special case of Eq. (31), using the further assumptions about the local qubit bias implicit in Eq. (39). These assumptions are compatible with dynamics like those of Fig. 4, and this expression is consequently used in the analysis of Fig. 5 for that same example. Figure 5c, d are instructive with regard to the process of estimating a time to solution, illustrating how one might derive regions of Tf and Δtm required to solve a k-SAT problem with a continuous measurement–driven approach, within a certain number of shots and/or with a specified confidence threshold.
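The shot count and time to solution discussed above can be sketched numerically. The sketch assumes independent runs that each succeed with probability Ps, takes the smallest N satisfying 1 − (1 − Ps)^N ≥ P⋆ (the role played by the ceiling function noted in the Fig. 5 caption), and charges Tf + Δtm per run. The function names are hypothetical, and the exact forms of Eqs. (39) and (40) may differ.

```python
import math


def shots_required(p_solution, p_star=0.99):
    """Smallest N with 1 - (1 - p_solution)**N >= p_star (independent runs)."""
    if p_solution <= 0.0:
        raise ValueError("p_solution must be positive")
    if p_solution >= 1.0:
        return 1
    ratio = math.log(1.0 - p_star) / math.log(1.0 - p_solution)
    return max(1, math.ceil(ratio))


def time_to_solution(p_solution, t_drag, t_readout, p_star=0.99):
    """Total time for the required shots, assuming each costs T_f + dt_m."""
    return shots_required(p_solution, p_star) * (t_drag + t_readout)


if __name__ == "__main__":
    # Times are in units of the measurement time tau.
    for p in (0.25, 0.5, 0.95):
        print(p, shots_required(p), time_to_solution(p, 10.0, 2.0))
```

For example, a uniformly random guess on n = 2 qubits (Ps = 1/4) requires 17 shots at 99% confidence under this rule, well beyond the \(2^{n}\) = 4 shots of exhaustive checking.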

One may infer from Fig. 5 that if an optimal TTS exists in this system, it falls in the regime of very short dragging times Tf and readouts Δtm, requiring far more than the N = 4 runs in which the solution to this toy problem can be obtained via a guess-and-check approach (the worst solution approach). This formally tells us that this toy problem is too simple for our algorithm to be worthwhile, and that we are nearing the end of this small problem’s utility as an instructional tool. We will see in section “Scaling with qubit number for 3-SAT” that a transition occurs beyond which a genuine optimal TTS appears, signaling when a problem has become large enough for the algorithm to be computationally worthwhile.

Remarks: multi-solution and unsatisfiable problems

In the analysis above, we have emphasized the sample two–qubit 2-SAT problem of Fig. 3, which has a unique solution. However, variants on this problem containing either more than one solution, or no solutions at all, are also illuminating to consider. We describe such alternative two–qubit 2-SAT problems in detail in Supplementary Information Section II, restricting ourselves here to a brief summary.

When multiple solutions satisfy our two–qubit 2-SAT problem, or when no solution can satisfy it, we are no longer guaranteed any bias in the local z coordinates on average at the end of a Lindbladian dragging interval, even in the Zeno limit. However, the outputs in these two cases, i.e., when multiple solutions exist or when no solution exists, still differ in meaningful ways. In the case of multiple solutions, we will generate relatively high purity entangled states within the solution subspace, i.e., superpositions of the possible classical solutions, one of which is highly likely to be correctly resolved by reading out the qubits in the desirable regime Tf ≫ τ and Δtm ≫ τ. This means that although the individual readout measurements of \({\hat{\sigma }}_{z}^{(j)}\) will appear random run-to-run, information about the solution space will still appear in the structure of the correlations between those zj outcomes on a run-by-run basis. On the other hand, when the SAT problem is unsatisfiable, we obtain the maximally–mixed state on average, so that the local qubit readouts at the end will be random and will not exhibit any mutual qubit correlations.

Illustration of heralded algorithm

We conclude our discussion of two–qubit 2-SAT with a demonstration of the heralded algorithm (Algorithm 3) using the 2-qubit 2-SAT defined by Eq. (33b). We have set the threshold to be \({r}_{th}=-2.5/\sqrt{{T}_{be}}\) so that some level of fluctuation due to noise is tolerated in the filtered signal. This corresponds to correctly identifying the failure with a confidence probability of 99.65% in the steady state. Figure 6 shows the evolution of the qubit dynamics zi(t) and the filtered signals \({\tilde{r}}_{i}(t)\) under the heralded algorithmic dynamics. A successful run of the algorithm will drag the qubits to their corresponding solution states, analogously to the fixed point algorithm driven by the Lindbladian. The difference is that with the heralded algorithm, we can now detect any failure shortly after it occurs instead of at the end of the algorithm. This can be seen in Fig. 6, where the failed trajectory is detected within an interval ~Tbe of a failure event.

The SAT problem here is the same as the one defined in Eq. (33b). We set the measurement collapse time τ = 1 and the total dragging time Tf = 100. The filter response time is set to be \({T}_{be}=\max \{2\tau ,0.1{T}_{f}\}\), and the threshold is \({r}_{th}=-2.5/\sqrt{{T}_{be}}\). a Shows the evolution of a successful run, where the filtered signals \(\bar{r}\) Eq. (21) never reach the threshold value and the reduced conditional qubit dynamics (inset) diffuse relatively cleanly towards the correct solution state. b Shows the evolution of a failed run. In this example, qubit 0 is collapsed into an incorrect subspace near t/τ ≈ 50 (see inset) and the filtered signal 1 reaches the threshold near t/τ ≈ 60, heralding violation of a particular clause, and therefore a failure of the algorithm. The failure is detected when the threshold is crossed: the algorithm is terminated at this point. We also show the evolution for some time past this point, up to t = Tf, for illustrative purposes.
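The heralding settings quoted above can be illustrated with a short sketch. The filter response time and threshold follow the stated expressions for Tbe and rth; everything else is an assumption made for illustration: a single-pole exponential low-pass filter stands in for Eq. (21), and the noise-free signal levels (0 for a satisfied clause, −1 for a violated one) are hypothetical, as are all function names.

```python
import math


def herald_parameters(tau, t_drag):
    """Filter response time T_be = max(2*tau, 0.1*T_f) and threshold r_th."""
    t_be = max(2.0 * tau, 0.1 * t_drag)
    r_th = -2.5 / math.sqrt(t_be)
    return t_be, r_th


def exponential_filter(signal, dt, t_be):
    """Single-pole low-pass filter (assumed stand-in for the filter of Eq. (21))."""
    decay = math.exp(-dt / t_be)
    filtered, acc = [], 0.0
    for r in signal:
        acc = decay * acc + (1.0 - decay) * r
        filtered.append(acc)
    return filtered


def first_crossing(filtered, r_th):
    """Index of the first sample at or below the threshold, or None if none."""
    for i, value in enumerate(filtered):
        if value <= r_th:
            return i
    return None


if __name__ == "__main__":
    t_be, r_th = herald_parameters(tau=1.0, t_drag=100.0)
    # Hypothetical record: clause satisfied for 50 steps, then violated.
    record = [0.0] * 50 + [-1.0] * 50
    print(t_be, r_th, first_crossing(exponential_filter(record, 1.0, t_be), r_th))
```

In this idealized record the crossing lags the onset of the violation by an interval of order Tbe, which is the qualitative behavior described for the failed trajectory in Fig. 6.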

Scaling the problem up

We have gained some intuition about the workings of the continuous BZF algorithm for k-SAT by investigating the simplest version of it, namely for k = 2 with 2 qubits. We now extend our analysis towards cases of greater computational interest, to study the algorithm’s performance on k-SAT problems with both k = 2 and k = 3, on 4–10 qubits. In this section, we will benchmark the Zeno dragging algorithms at various values of clause density α, as well as for different parameters Δt and Tf. In order to study the dependence only on the dragging time Tf, we will then assume projective measurement at the final readout phase, and thus we will make calls to Algorithm 1 and Algorithm 3 instead of to Algorithm 4.

Quantum computational phase transition

It has been rigorously proven that in the large n limit, the probability of satisfying a random SAT problem exhibits a phase transition from satisfiable to unsatisfiable (SAT-UNSAT) when the number of clauses per qubit number, i.e., α = m/n, exceeds a critical value αc (ref. 115). For 2-SAT, the critical clause density is analytically determined to be αc = 1 (ref. 116). For 3-SAT, the critical value is empirically evaluated to be αc ≈ 4.26 (refs. 117,118). The computational cost for solving these random SAT problems correspondingly exhibits an easy-hard-easy pattern, with the computational cost transition occurring at the critical value of αc (refs. 118,119). Quantum algorithms that optimize solutions for k-SAT, such as the quantum approximate optimization algorithm (QAOA), show a similar computational phase transition near αc, even for systems as small as 6 qubits120,121.
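To make the clause density α = m/n concrete, the sketch below draws random k-SAT instances and checks their satisfiability by brute force, which is feasible only at the small sizes considered here. The sampling convention is an assumption, since the ensemble used for the figures is not specified in this section: each clause picks k distinct variables uniformly at random and negates each with probability 1/2. All names are hypothetical.

```python
import itertools
import random


def random_ksat(n, k, alpha, rng):
    """Random k-SAT instance with m = round(alpha * n) clauses.

    Each clause is a tuple of (variable index, negated?) literals.
    """
    m = round(alpha * n)
    clauses = []
    for _ in range(m):
        variables = rng.sample(range(n), k)  # k distinct variables
        clauses.append(tuple((v, rng.random() < 0.5) for v in variables))
    return clauses


def is_satisfiable(clauses, n):
    """Brute-force check over all 2**n assignments."""
    for bits in itertools.product((False, True), repeat=n):
        # A literal (v, negated) is true when bits[v] differs from negated.
        if all(any(bits[v] != neg for v, neg in clause) for clause in clauses):
            return True
    return False


def sat_fraction(n, k, alpha, samples, seed=0):
    """Fraction of sampled instances that are satisfiable."""
    rng = random.Random(seed)
    hits = sum(is_satisfiable(random_ksat(n, k, alpha, rng), n)
               for _ in range(samples))
    return hits / samples


if __name__ == "__main__":
    for alpha in (1.0, 3.0, 4.26, 6.0, 9.0):
        print(alpha, sat_fraction(n=5, k=3, alpha=alpha, samples=200))
```

Sweeping α in this way gives a finite-size estimate of the satisfiable fraction, which at small n is expected to show a smooth crossover rather than a sharp transition.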

Here, we show evidence of an analogous quantum computational phase transition for both random 2-SAT and 3-SAT under our measurement-driven quantum algorithms. Specifically, we numerically estimate the probability of successfully determining the satisfiability of a random instance as a function of the clause density α, under different values of dragging time Tf. The empirical estimation of this success probability Psucc(α, n, Tf) is given by

\({P}_{{\rm{succ}}}(\alpha ,n,{T}_{f})=\frac{{N}_{{\rm{succ}}}(\alpha ,n,{T}_{f})}{{N}_{{\rm{prob}}}(\alpha ,n)},\) (41)

where Nprob(α, n) is the number of random instances generated for clause density α and number of variables n, and Nsucc(α, n, Tf) is the corresponding number of instances whose satisfiability is correctly determined by the measurement-driven quantum algorithm with total dragging time Tf.

Figure 7a, b show results for calculations with n = 5 qubits using the Lindblad Algorithm 1. We can see that even with such a relatively small system, for both 2-SAT and 3-SAT problems the SAT probability undergoes a clear SAT-UNSAT transition crossing at a distinct value αc, while Psucc(α, n, Tf) shows an easy-hard-easy transition, with the hardest part located near αc. We also see that when more quantum computational resources are provided, specifically, for larger Tf, the algorithm can obtain higher values of Psucc(α, n, Tf), indicating better performance.

a, b are for 2-SAT and 3-SAT with the Lindblad Algorithm 1 (Δt → dt) respectively, while (c, d) are with the heralded Algorithm 3. The same measurement strength Γ = 1/(4τ) is used for all panels, together with a linear schedule θ(t) = πt/2Tf for 0 ≤ t ≤ Tf. We have set the measurement time τ = 1 throughout all the simulations. In the heralded algorithm, we have additionally set \({T}_{be}=\max \{2\tau ,0.1{T}_{f}\}\), Tmin = 5τ, and \({r}_{th}=-2.5/\sqrt{{T}_{be}}\). The black dotted vertical lines are the locations of critical clause densities in the limit of large n, i.e., αc = 1 for 2-SAT and αc ≈ 4.26 for 3-SAT. The black curves are the probabilities of satisfiability as a function of α for the randomly generated SAT instances. The colored curves are Psucc(α, n, Tf) under various values of Tf. Each datum on the curve is obtained using a sample size Nprob(α, n) = 500. The non-zero width of the critical region and the deviation of the location of the lowest value of Psucc from αc result from the finite system size n.

As discussed in section “Heralding BZF algorithm with finite measurement strength via filtering”, the heralded dynamics benefit from real-time detection of any failures. This advantage allows us to define the heralded algorithm (Algorithm 3) that restarts when the failure is detected early, and thus is more likely to follow the correct trajectory given a fixed amount of computation time Tf even without feedback. Notice that in Algorithm 3, we do not terminate the dynamics when the time left trest is smaller than a minimum value Tmin. This is because when the total dragging time is too small (comparable to Tbe and τ), the quantum Zeno effect is not strong and the failure detection based on the filter is no longer reliable. However, we can still use conditional dynamics, i.e., SME Eq. (15) rather than the averaged dynamics LME Eq. (14), in this situation.

We perform the same type of calculation for the success probability Eq. (41) as a function of the clause density α under the heralded Algorithm 3. The results are shown in Fig. 7c, d. We can see that the heralded algorithm shows the same type of computational phase transition as the Lindblad algorithm in panels (a) and (b). However, the performance of the heralded algorithm is better than that of the Lindblad algorithm, especially near the critical clause density αc, and also when Tf is large. We can understand the latter as a consequence of the combination of earlier detection of failure and the possibility of running multiple trials.

This analysis has shown that for the continuous measurement–driven quantum algorithms, it would be most difficult to successfully solve SAT problems having the critical clause density αc, similar to known results for QAOA and for classical algorithms. Therefore, in order to establish the scaling of the algorithm with respect to the system size n, we shall focus on the hardest SAT instances for the quantum algorithm, i.e., instances with α = αc from now on.

Scaling with qubit number for 3-SAT

In this section, we study the scaling of the 3-SAT TTS with the qubit number n, which is central to quantifying the algorithm performance in terms of computational complexity. Specifically, we do not expect the TTS to scale polynomially with the qubit number n, which would imply NP ⊆ BQP. Since there are no strong computational complexity arguments implying this, we will instead assume that the TTS scales exponentially with the qubit number n throughout the rest of this work, i.e., TTS ~ \({\lambda }^{n}\). We shall focus on studying the base number λ for the algorithms with different parameter settings.
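Under the exponential ansatz above, the base λ can be extracted from TTS values at several qubit numbers by a least-squares fit of log(TTS) against n. The sketch below shows one way to do this; the function name is hypothetical, and the data in the usage example are synthetic, generated from an exact exponential purely to exercise the fit, not results from this work.

```python
import math


def fit_exponential_base(ns, tts):
    """Least-squares fit of log(TTS) = log(c) + n * log(lambda).

    Returns (lambda, c) for the model TTS ~ c * lambda**n.
    """
    ys = [math.log(t) for t in tts]
    n_mean = sum(ns) / len(ns)
    y_mean = sum(ys) / len(ys)
    sxx = sum((n - n_mean) ** 2 for n in ns)
    sxy = sum((n - n_mean) * (y - y_mean) for n, y in zip(ns, ys))
    slope = sxy / sxx
    intercept = y_mean - slope * n_mean
    return math.exp(slope), math.exp(intercept)


if __name__ == "__main__":
    # Synthetic check: exact exponential with base sqrt(2) and prefactor 3.
    ns = list(range(4, 11))
    tts = [3.0 * math.sqrt(2.0) ** n for n in ns]
    print(fit_exponential_base(ns, tts))
```

Fitting in log space weights all system sizes comparably; with only a handful of small n, the fitted λ should be read as a finite-size estimate rather than an asymptotic statement.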

In section “Illustration of readout scheme and TTS” we demonstrated the behavior of the TTS as a function of the sum of the dragging time Tf and the final readout time Δtm. However, in the context of algorithmic scaling, the TTS is generally characterized without taking the final readout time into account, which corresponds to using the dragging time Tf alone. To enable comparison with the literature, we therefore define the algorithmic time to solution as follows114

where \({{\mathbb{P}}}_{{\bf{s}}}\) is the solution state probability at the end of one run of the algorithm.