Abstract

The demand for efficient Industry 4.0 systems has driven the need to optimize production systems, where effective scheduling is crucial. In smart manufacturing, robots handle material transfers, making precise scheduling essential for seamless operations. However, research often oversimplifies the Robotic Flexible Job Shop problem by focusing only on transportation time, ignoring resource allocation and robot diversity. This study addresses these gaps, tackling a Multi-Robot Flexible Job Shop (MRFJS) scheduling problem with limited buffers. It involves non-identical parallel machines and robots with varying capabilities overseeing material handling under blocking conditions. The case study is based on a real Industry 4.0 scenario, where the layout restricts each robotic arm’s access, requiring strategic buffer placement for part transfers. A Mixed-Integer Programming (MILP) model aims to minimize makespan, followed by a new Genetic Algorithm (GA) using Roy and Sussman’s Alternative Graph. Computational tests on various scales and real data from a manufacturing plant demonstrate the GA’s efficacy in solving complex scheduling problems in real-world production settings. Based on the data, the Proposed Genetic Algorithm (PGA), with an average Relative Deviation (ARD) of 0.25%, performed approximately 34% better compared to the Basic Genetic Algorithm (BGA), with an average ARD of 0.38%. This percentage indicates that the PGA significantly outperforms in solving complex scheduling problems.

Similar content being viewed by others

Introduction

Industry 4.0 marks a transformative era in the industrial sector, characterized by the seamless integration of advanced technologies such as cyber-physical systems1,2, artificial intelligence (AI)3,4, cloud computing5,6, robotics7, and the Internet of Things (IoT)8,9. This paradigm shift promises significant improvements in production efficiency, customization, and waste reduction, addressing the increasingly sophisticated demands of modern industries10,11. A cornerstone of Industry 4.0 is the Manufacturing Execution System (MES)12. MES acts as a critical link between the planning and execution phases of production, coordinating and optimizing processes13. Its functionalities include data gathering, production dispatch, tool and equipment maintenance, detailed scheduling, resource allocation, and product quality control14. By dynamically adjusting production schedules based on real-time data, MES ensures efficient and responsive operations15. One of the most crucial aspects of all industrial systems is production scheduling. With the integration of robots into production processes, the complexity of scheduling increases, making Multi-Robot Task Allocation (MRTA) vital16,17. Despite advancements, many existing MES lack sophisticated optimization and scheduling functionalities, necessitating upgrades to meet modern manufacturing demands18. Developing an advanced scheduling system and integrating it into MES is essential to fully realize the potential of Industry 4.019,20.

The necessity of advanced scheduling systems is underscored by several benefits: enhanced efficiency through streamlined operations and reduced downtime; reduced operational costs via optimized resource utilization and minimized waste; improved resource management with effective allocation of tasks and resources; and real-time adaptation capabilities that allow adjustments to changing demands and conditions21,22,23,24.

This study specifically addresses the problem of optimizing task scheduling in flexible job shop (FJS) environments central to Industry 4.0, where multiple non-identical robots operate in parallel under blocking and buffer constraints. Unlike previous studies that often simplify the problem by focusing solely on transfer times or single-type robot configurations, this work confronts the unique challenges of multi-robot allocation and parallel tasking within FJS. These environments introduce high variability and demand adaptable scheduling to manage diverse product types and fluctuating production requirements. The primary challenges in this problem involve managing task allocation across multiple non-identical robots, coordinating resources to prevent delays caused by limited buffer space and blocking conditions, and optimizing timing to avoid downtime. These challenges are particularly pronounced in real-world Industry 4.0 environments, where complex dependencies exist across multi-stage tasks and specific layout requirements. These challenges go beyond traditional approaches that often oversimplify task allocation by assuming uniform robots or omitting buffer constraints, highlighting the need for a more robust scheduling approach. To tackle these issues, this study introduces a novel FJS model that combines a mixed-integer linear programming (MILP) framework with a genetic algorithm (GA) tailored for Industry 4.0 conditions. This model stands out by incorporating multiple types of robots with precise task timings and dependencies under complex conditions like blocking. By integrating recent advances in scheduling, MILP, and GA with MES functionality, this approach bridges theoretical optimization advancements with the practical needs of real-world production environments. This integration enhances MES capabilities to address current limitations in managing complex, multi-robot environments, supporting the adaptability and precision necessary for modern manufacturing.

This study focuses on scheduling a real-world Industry 4.0 system named Platform 4.0. Designed to facilitate intelligent and adaptable manufacturing processes, Platform 4.0 is equipped with advanced machinery and robotics and employs a decentralized MES architecture. Located at the Arts et Métiers campus in Paris, Platform 4.0 serves as a cutting-edge research asset featuring advanced CNC machines, KUKA robots, and an OMRON MOMA robot. This system uses a modular approach for standard manufacturing processes such as transferring, machining, quality assurance, and monitoring, ensuring each specific operation is performed correctly. Platform 4.0 is introduced in detail in the section “Implementation in Real Production System 4.0.”

A number of significant advances are made to the field by this study:

-

General model in production systems: A new general model for production systems with parallel robots and different kinds of robots.

-

Heuristic-based scheduling: Creating an advanced scheduling framework for Production Scheduling with Multi-Robot Task Allocation.

-

Multi-robot dynamics: Incorporating robot diversity and precise task timings.

-

Comprehensive fitness function: Developing a robust function for evaluating scheduling performance.

In the following sections, the paper begins with a literature review to establish the current state of research, followed by a detailed problem description and the transformation of problem parameters. Subsequently, the problem is formulated, and the proposed methodology is outlined. The experimental results are then presented, comparing the performance of the proposed approach with existing methods. Finally, the article concludes with insights drawn from the study and highlights avenues for future research.

Literature review

Advances in Industry 4.0 technologies, including cyber-physical systems, artificial intelligence (AI), robotics, and the Internet of Things (IoT), have profoundly impacted industrial production and management methods25. This section reviews key studies focused on improving scheduling methods and multi-robot task allocation to highlight existing gaps and the need for further research in this area.

Introduction to industry 4.0 technologies in production

Technological advancements in Industry 4.0, including cyber-physical systems, AI, robotics, and IoT, have significantly transformed manufacturing. These changes aim to improve efficiency, enhance customization, and increase flexibility in production processes, leading to the development of intelligent and flexible production systems26. Utilizing these advanced infrastructures optimizes production time and costs, helping companies respond to dynamic changes in production cycles27,28,29.

Production scheduling methods and AI-based techniques

In production scheduling, various systems like FJS, Flow Shops, and Hybrid Shops are used, each with unique challenges. For example, Flow Shops focus on minimizing makespan and maximizing efficiency in sequential production lines30. In contrast, FJSP requires AI tools for managing job priorities and adapting to changes due to its high flexibility31,32,33. Recent studies have shown that AI-based optimization techniques, such as GA and machine learning, are highly beneficial in flexible and hybrid production environments34.

Human–robot collaboration in manufacturing environments

In recent years, Human–Robot Collaboration (HRC) in manufacturing environments has drawn significant attention from researchers. Task allocation models are designed to not only improve productivity but also enhance ergonomics and balance workload distribution35. HRC task allocation models use criteria such as task suitability, resource availability, and other operational parameters to optimize task allocation and improve system efficiency36.

Current challenges and previous research

Despite advancements, there are still considerable challenges in production scheduling and multi-robot task allocation. The following studies highlight some recent research, their goals, and limitations: Li et al. addressed scheduling challenges within flexible systems that involve unrelated parallel machines, focusing on optimizing job sequencing and robot movements to reduce overall production time. This study implemented two metaheuristic algorithms to achieve its goals; however, the primary emphasis was on processing time optimization, with limited exploration of the complexities involved in multi-robot task allocation37. Fatemi-Anaraki et al. examined dynamic scheduling in multi-robot systems through the use of mixed-integer linear programming models aimed at improving efficiency in dynamic environments. Although the study effectively optimized scheduling and minimized delays, it fell short in tackling the specific challenges of task allocation amid operational uncertainties38. Li et al. proposed an enhanced algorithm for optimizing scheduling in distributed flow shop systems, specifically designed to minimize completion time. While this approach successfully addressed production process optimization, it provided limited insights into task allocation within multi-robot systems and overlooked the intricacies of robot collaboration39. In a study focused on production and intralogistics synchronization within Industry 4.0, Li et al. leveraged IoT and digital twins to enhance coordination between production and logistics processes. Although real-time data was effectively utilized, the study primarily concentrated on production and logistics synchronization, leaving multi-robot task allocation challenges largely unexplored40. Liao et al. developed a strategy for managing robot positioning based on zeroing neural dynamics, which aimed to improve coordination and minimize the distance between robots. While this approach significantly enhanced real-time robot coordination, it did not address the complexities of scheduling and task allocation in multi-robot production environments41. The literature review shows that while significant progress has been made in production scheduling and robot task allocation, there remain challenges in managing the complexities of multi-robot task allocation and optimal scheduling in dynamic environments. Previous studies have often focused on specific aspects of scheduling, highlighting the need for more comprehensive approaches and advanced optimization algorithms for managing complex production environments. Identifying these research gaps is essential to guide future research, emphasizing the need for advanced optimization algorithms and efficient models to address real-world operational complexities effectively.

Problem definition

Considering the symbols of Piendo42, we solve this problem according to the layout of the Platform 4.0 production system. Given that the jobs have their own production routes, the shop floor is of the FJS type, which includes various types of robots. In this article, a more general case is considered where the production system includes both parallel machines and parallel robots. The investigation delves into the Multi-Robot-FJSP system, characterized by a robotic production system housing numerous workstations with parallel machines. Within this setup, robotic arms are stationed on the shop floor to oversee material handling jobs. Each robotic arm is assigned a specific set of workstations for unloading, transferring and loading parts within its operational region. When a part needs to be moved between workstations or machines or buffers based on its operation sequence, the corresponding robotic arm unloads it from its current machine or buffer, moves it to the next machine or buffer, and returns to the station. Depending on the needs of the production system and budget, etc., in each area, there may be one or more robots moving the parts in parallel and at different speeds. Furthermore, there may be different types of robots in each region, such as mobile robots, fixed robots, or linear robots etc. Also, the speed of different parallel machines that process parts can also be different. Robotic arms are unable to access every workstation, necessitating alternative methods for part exchange. To address this, buffers with finite capacities are positioned between regions. Certain buffer units are specifically designated for parts progressing deeper into the shop floor, while other units are allocated for parts moving in reverse. A finished product must pass through intermediate buffers in reverse order to leave the shop floor. This necessity arises because there is only one input/output (I/O) station on the shop floor, located in the first region. Additionally, this study assumes a blocking condition, where a job must wait for a machine to become available before it can start or continue its operations, even if the subsequent operation or job is ready to begin. This idle time occurs due to the sequential nature of job processing and the finite capacity of machines to handle multiple jobs simultaneously. Figure 1 illustrates an example of a flexible workshop system employing robotic transportation.

In the FJS system, each job has a predetermined processing time for each machine and follows to a defined process plan that involves operations on different machines in different regions.

In the MRFJS system, different machines are located in different regions, and robots transfer jobs to various buffers or machines. Transportation operations must be included to ensure the proper allocation of robots to jobs. Anytime a job transitions from one region to another, it must adhere to a process plan that involves visiting buffers. If a machine is unavailable, the part will be blocked in the buffer and wait until the next machine becomes available, necessitating waiting operations in both the output and input buffers.

Therefore, the process plan for each job must be revised according to the region of workstations and the layout of the shop floor. This revised plan should include transportation operations and specify the buffers to be visited, in addition to the machine operations. The following example better illustrates this concept, showing how the robots depicted in Fig. 1 work together to serve a part based on its process plan.

Suppose we have two jobs with two different processing plans. The first job includes two operations, and the second job includes one operation:

-

J1: Operation 1 (Machine type A) and Operation 2 (Machine type C)

-

J2: Operation 1 (Machine type B)

Based on the shop floor structure, the number of regions, and the exact location of processing machines, the detailed operations, including waiting in input and output buffers and moving tasks by robots, need to be updated and added to make the operations map fully functional. This process covers the sequence of operations from the entry of raw material into the system to its exit as a fully processed piece, and also includes updating the previous indices of the machines.

In the depicted production cell (Fig. 1), the production system consists of three separate areas that contain a total of 31 production resources. These include 12 processing machines of various types, and each type may have parallel machines. The machines are as follows:

-

Type A processing machines: numbers 4 and 5

-

Type B processing machine: number 6

-

Type C processing machines: numbers 14 and 15

-

Type D processing machines: numbers 25 and 26

-

Type E processing machine: number 27

-

Type F processing machines: numbers 28, 29, 30, and 31

The system also includes 5 input buffers, with input buffers for the second region numbered 7 and 8, and for the third region numbered 16, 17, and 18. Additionally, there are 5 output buffers, with output buffers for the second region numbered 9 and 10, and for the third region numbered 19, 20, and 21. There are also 9 robots: parallel robots 1, 2, and 3 in the first region, parallel robots 11, 12, and 13 in the second region, and parallel robots 22, 23, and 24 in the third region. Furthermore, there is an input/output station positioned within region one.

So the coding of the resources of the regions is as follows:

-

Region 1: input buffer, output buffer, parallel robots 1, 2 and 3, parallel Machines type A including 4, 5 Machine type B including 6

-

Region 2: input buffer 7, 8, output buffer 9, 10, parallel robots 11, 12 and 13, parallel Machines type C including 14, 15

-

Region 3: input buffer 16, 17 and 18, output buffer 19, 20 and 21, parallel robots 22, 23 and 24, parallel Machines type D including 25 and 26, Machines type E including 27, parallel Machines type F including 28, 29, 30 and 31

For an example involving J1, the first operation of the first job is performed on a type A machine located in the first region, and its second operation is performed on a type C machine located in the second region. Consequently, the sequence of operations will be updated, transforming the two main operations into ten detailed operations. The first operation involves the job waiting in the input buffer, marking the starting point. The second operation is the movement of the part by one of the parallel robots in the first region, transferring it from the input buffer to one of the type A machines. The third operation is the processing of the part on the type A machine. After the processing is completed, the fourth operation involves moving the part from the type A machine to the input buffer of the second region. Since the type C machine is located in the second region, this transfer must be done through buffers and robots. The fifth operation is the part waiting in the input buffer of the second region. The sixth operation involves a robot in the second region moving the part from the input buffer to one of the type C machines. The seventh operation is the processing of the part on the type C machine. After this processing is completed, as no further processing is required, the eighth operation involves moving the completed part by a robot in the second region from the type C machine to the output buffer of the second region. The ninth operation is the part waiting in the output buffer of the second region. Finally, the tenth operation involves transferring the part from the output buffer of the second region to the output buffer in the first region by a robot in the first region. The operation of waiting in the output buffer is not considered because blacking does not occur in this buffer and there will be no machine after that, so the time to enter the output is equal to the completion of the entire job.

In summary, the updated process plans for the parts J1 and J2 are as follows:

-

J1: O1(I), O2 (1/2/3), O3 (4/5), O4 (1/2/3), O5 (7/8), O6 (11/12/13), O7 (14/15), O8 (11/12/13), O9 (9/10), O10 (1/2/3)

-

J2: O1(I), O2 (1/2/3), O3 (6), O4 (1/2/3)

An algorithm is developed to convert the original operation into the transformed operation using MATLAB. The input of this algorithm includes workshop information such as the number of machines, regions, and buffers, the location of each resource relative to different regions, and the processing times of different machines. Additionally, the specifications of the jobs, their operations on the processing machines, and the number of each job are considered as inputs. The output of this algorithm will be the transformed operations and eligible resources. In a transformed operation, it always starts by waiting in the input buffer and ends by moving to the output buffer. Operations alternate between handling by robots and processing in machines and waiting in buffers. In transformed operations, even indices correspond to movements by robots, and odd indices correspond to processing in machines and waiting in buffers. The indices of the main processing operations in the machines are updated, and their processing times are specified. For example, operation 1 in job J1 is converted to index 3. The processing time of operations with an even index, which are transfer operations, varies and depends on the selected robot, as well as the chosen origin and destination, because there are machines and parallel buffers in the system located in different areas of the workshop. Therefore, the values of these operations are variable. Also the processing time of operations in buffers is zero.

Figure 2 relates to operation transformation algorithm and is written in pseudo code. In this algorithm, various operations are encoded as follows: transfer operation is coded as 0, wait in the input buffer is coded as 1, output buffer is coded as 2, and processing in machines is coded as 3. Initial-Operations represents the initial operations for each job, which only include processing in the machine. Initial-Region specifies the regions related to the processing machines for the initial operations to update the path and operations accordingly. Set-Operation(i) receives the encoded values for each operation. Initially, the operation starts with the input buffer, coded as 1, followed by a transfer operation, coded as 0, as transfer operations occur alternately. The algorithm checks Current-Region and Destination-Region to determine the region where the part is located and the region to which it should move. If the current region is less than the destination region, the part must move forward through the input buffers and towards the next regions, receiving code 1. If it reaches the processing machine or the destination, it receives code 3 and performs the processing operation. To find the correspondence between the initial main operation and the transformed operation, Original-Operation is used, forming a matrix of allowed machines for operations. Once all operations are completed, the part must be directed to region 1, where the output buffer is located, through the output buffers, receiving code 2. The algorithm guides the part from the starting point to the destination, performing the necessary operations using the specified codes. This updates the path and operations of the part within the system. After converting the operations and determining the path for each job, it is time to optimize the scheduling to allocate resources efficiently to minimize production time, maximize resource utilization, reduce costs, and ensure timely delivery of products.

Problem formulation

A variation of the typical Job Shop Scheduling Problems is the FJS. In this class of problems, the machines are adaptable and capable of handling a variety of jobs, allowing an operation to be completed on multiple machines. This type of problem can be broken down into two sub problems: choosing the appropriate machine for each operation and determining the optimal sequence for performing the operations on the machines43. The MRFJS is detailed as follows: In a manufacturing environment, there are J jobs, labeled as \(J=\{J_1, J_2, \ldots , J\}\), that need to be processed using various resources defined as \(R=\{R_1, R_2, \ldots , R_m\}\). These resources include robots, machines, and buffer stations. The process of moving items with robotic arms involves a series of steps: picking up the job from its starting point, transporting it between two machines, placing it in the destination machine, and then returning to the starting point. This section starts by describing the sets, indices, parameters, and decision variables used in the mathematical formulation. Following this, the MILP model for solving the problem is presented. The assumptions related to MRFJS are outlined as follows:

-

1.

Parallel machines are not located in different regions. This assumption is made because the movement to perform a specific operation, which involves choosing between parallel machines, will always occur within a specific region.

-

2.

There may be more than one series of parallel machines in an area.

-

3.

There may be more than one robot in an area, but these robots differ in movement speed, time taken to travel between two sources, and their central coordinates (parking locations).

-

4.

Parallel machines can operate at different speeds.

-

5.

All operations within the system are treated with equal priority.

-

6.

Robots, machines, and buffers can handle only one job at any given time.

-

7.

The operational performance of robots and machines is assumed to be stable, without any failures.

-

8.

The scenario does not account for potential conflicts or collisions in the paths of robots.

-

9.

The primary metric for evaluating performance is the makespan, representing the completion time of the final process.

-

10.

If a robot arrives at a machine before the assigned operation is complete, it will wait until the processing is finished to retrieve the job.

-

11.

If a robot arrives after a job has completed processing, the job may need to wait in the machine or buffer until the robot initiates the transfer.

-

12.

Each operation is exclusively assigned to a single machine.

-

13.

The processing durations are predetermined, constant, and unrelated to the order in which they are executed.

-

14.

The transfer operation includes the time when the robot takes the job from one buffer or machine and transfers it to the next buffer or machine.

-

15.

If two consecutive processing operations are to be performed on the same machine, the robot will not return to its initial position after the first operation. Instead, it waits by the machine to take the job and transfer it to another resource. If the robot’s destination changes, it will return to the initial area.

-

16.

At the start of the planning time frame, all jobs and machines are available.

Indices and sets

-

\(j, j'\) : Index of jobs

-

\(i, i'\) : Index of operations

-

\(r, r', r''\) : Index of resources

-

\(J\) : The set of jobs \(j\), \(j \in J\)

-

\(O_j\) : The set of operations, \(i \in O_j\)

-

\(E_{ji}\) : Set of authorized resources to perform operation \(i\) of job \(j\), \(r \in E_{ji}\)

Parameters

-

\(P_{ijr}\) : Processing time of operation \(i\) of job \(j\) on resource \(r\) (only for odd \(i\) indexes)

-

\(d_{rr'}\) : Physical distance between source \(r\) and \(r'\)

-

\(V_r\) : Robot movement velocity

-

\(t\) : The duration of loading or unloading parts

-

\(r_j\) : Clearance time of part \(j\)

-

\(n_j\) : The number of operations of part \(j\)

-

\(M\) : A large positive value

Decision variables

-

\(C_{\max }\) : The makespan (the maximum completion time of all jobs)

-

\(S_{ij}\) : The arrival time of job \(j\) to perform operation \(i\) in its corresponding resource

-

\(C_j\) : Completion time of part \(j\)

-

\(X_{jir}\) : Binary variable: if operation \(i\) of job \(j\) is performed in resource \(r\), it is equal to 1; otherwise, it is 0

-

\(Y_{(jij'i')}\) : Binary variable: if operation \(i\) of job \(j\) is performed before operation \(i'\) of job \(j'\), it is equal to 1; otherwise, it is 0

Constraints

Equation (1) represents the optimization objective of the problem, aiming to minimize the makespan by reducing it. According to Constraint set (2), only one resource is selected for each job operation. Constraint set (3) states that the entry time of the piece into the raw piece input buffer (that is, the first operation) is equal to the clearance time. The constraint sets (4) and (5) guarantee the operation precedence within each job and dictate that the sequence of operations for each job must be followed. Constraint set (4) states the start time of the subsequent operation (as the part enters the next source) aligns precisely with the moment the part exits the previous resource. This departure time, encompassing both the processing phase on the machine and the buffer waiting period, corresponds directly to when the part’s processing concludes and it is subsequently loaded onto the robot. According to Constraint set (5), the transfer operations’ departure time is equal to when the part is loaded into the robot. It travels between the previous resource and the next resource and is loaded into the next resource. The final job’s completion time is determined by constraint set (6). The previous operation’s departure time from its corresponding resource determines the completion time under this constraint. It indicates that the time the job entered the robot and the path between the previous source and the output buffer equals the job’s departure from the zone 1 robot to load in the final part’s output buffer. After passing through, the buffer loads it. Equations (7) and (8) are constraints for operation precedence at each resource, so each resource can process only one operation at any given time. According to Constraint set (7), if operation \(i'\) follows operation i at resource r (machine or buffer), operation \(i'\) enters the resource only after operation i has departed. Operation i leaves the resource when operation \(i+1\) of job j enters its corresponding resource. In constraint set (8), the resource is a robot and the operation involves transportation, then the duration during which the robot returns to the parking after loading a part at resource \(r'\) (the resource where the subsequent operation \(i+1\) is performed) and the duration it takes for the robot to come from the parking to pick up part \(j'\) at resource \(r''\) (where part \(j'\) is located, having completed operation \(i'-1\), along with the time taken to load the part, is the moment when the part enters the robot. Constraint set (10) shows the completion time of the last job. Constraint set (11) guarantees that if operations \(O_{ij}\) and \(O_{i'j'}\) are processed on the same machine, the variables \(Y_{iji'j'}\) and \(Y_{i'j'ij}\) must be 1 for one and 0 for the other. Constraint sets (12), (13), (14), and (15) represent the decision variables of the model. To streamline implementation, the MILP model is coded using the General Algebraic Modeling System (GAMS), integrating CPLEX’s power into the optimization framework. This approach ensures the effective resolution of complex FJSP instances, enabling the achievement of optimal or near-optimal solutions while enhancing scalability and flexibility.

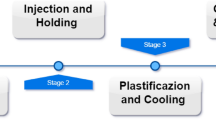

Proposed algorithm

Mathematical programming and CPLEX solvers are effective for solving the FJSP but struggle with large-scale instances due to the high number of operations per job and additional complexity from transportation and waiting in buffer operations. To address this, researchers have developed metaheuristic solvers as alternatives for complex FJSP scenarios. Optimizing MRFJS in industrial automation is particularly challenging due to multiple robots and flexible routing across machines. Metaheuristic algorithms, especially GA inspired by natural selection, have proven effective in exploring large solution spaces efficiently, making them suitable for problems like MRFJS. The success of optimization algorithms largely depends on their fitness function. While many works have explored advanced optimization techniques using metaheuristic algorithms and graph-based methods, this study introduces a novel ’semi-enumerative’ approach in the fitness function, specifically tailored to address the unique requirements and time constraints of the MRFJS scheduling problem, marking an innovative application in this domain. Traditional fitness functions for MRFJS often overlook intricate dependencies between jobs, machines, and robots, leading to suboptimal schedules. Incorporating the alternative Graph into the fitness function resolves this issue by dynamically representing alternative routes and resource allocations. This graph model, using conjunctive and disjunctive arcs, represents operation priorities and alternative paths while identifying resource conflicts and dependencies. These features enable the GA to maintain solution integrity, explore a wider search space, and achieve shorter completion times by finding optimal paths. By focusing only on feasible schedules, the model enhances the GA’s ability to manage complex dependencies and improves convergence speed and solution quality. Real-world applications, such as smart production systems, show that this approach outperforms the Basic Genetic Algorithm (BGA), especially in large-scale problems, by providing efficient scheduling with minimal conflicts and reduced waiting times. Experiments confirm that this approach produces high-quality schedules for MRFJS, surpassing traditional methods in solution quality and convergence speed. Its flexibility allows seamless integration with evolving data and production environments, making it a promising tool for industrial applications (Fig. 3).

The alternative graph framework

Alternative graphs are used to model the scheduling subproblem, introduced by Mascis and Pacciarelli42 by extending the disjunctive graph model for the traditional job-shop problem. This approach incorporates constraints such as resource conflicts, blockings, and time lags, which reduces the search space by focusing on feasible scheduling options. At a specific decision point \(t_0\), all jobs available at this time (i.e., all jobs \(j\) with \(r_j \le t_0\)) are considered. Resource assignment is fixed, meaning each operation \(i \in O_j\) is allocated to a feasible resource from the set \(E_{ji}\). An alternative graph \(G = (V, C \cup A)\) consists of a set of vertices \(V\) and a union of directed arcs \(C \cup A\).

-

Vertices \(V\): The set \(V = \bigcup _{j \in J} O_j \cup \{0, *\}\) includes all operations of available jobs (i.e., all jobs \(j\) with \(r_j \le t_0\)) and two dummy vertices, 0 and *, representing start and end operations with processing times \(P_{0jr} = P_{*jr} = 0\).

-

Conjunctive Arcs \(C\): These arcs model precedence constraints between operations of the same job, including minimum and maximum start-start time lags between successive operations \(i\) and \(i+1\). Additional constraints connect the dummy operation 0 to the first operation \(O_{j1}\) of each job \(j\), and the last operation \(O_{jn_j}\) to the dummy operation *. Arcs \(i \rightarrow i+1\) are weighted with processing time \(P_{ijr}\), representing a minimum start-start time lag, while arcs \(i+1 \rightarrow i\) are weighted with the time \(P_{ijr} + t\), representing a maximum start-start time lag. Thus, if operation \(i\) starts at time \(S_{ij}\), operation \(i+1\) can only start in the interval \([S_{ij} + P_{ijr}, S_{ij} + P_{ijr} + t]\). The arc \(O_{j1} \rightarrow 0\) between the first operation of each job \(j\) and the dummy operation 0 is initially weighted with \(\infty\), meaning a job can start any time after its release date \(r_j\).

-

Disjunctive Arcs \(A\): These arcs represent disjunctions and blockings between operations of different jobs requiring the same resource. For a disjunction \(i - i'\) with two operations \(i\) and \(i'\), a sequence is chosen so that either \(i\) precedes \(i'\) or vice versa. This is represented by the pair of alternative arcs \(\{i+1 \rightarrow i', i'+1 \rightarrow i\} \in A\). If \(i+1 \rightarrow i'\) is chosen, then \(S_{i'} \ge S_{i+1} + d_{rr'} / V_r + t\), where \(d_{rr'}\) is the distance and \(V_r\) is the robot’s speed.

Blocking may occur if operation \(i\) precedes \(i'\) on the same resource, making \(i+1 \rightarrow i'\) a dependency. One of the two edges \(i+1 \rightarrow i'\) or \(i'+1 \rightarrow i\) must be selected, preventing loops and ensuring a valid solution. Two virtual nodes, start and end, are added for consistency, with all first and last operations linked to these nodes. A feasible schedule is generated by choosing one arc from each pair of alternative arcs without creating positive-length cycles. After making these selections, a consistent schedule is generated by calculating the lengths \(l(0, i)\) from dummy vertex 0 to each vertex \(i\), giving the start time \(S_{ij} = S_{0j} + l(0, i)\).

Chromosome representation

To enhance the optimization process, a chromosome is utilized as a representation of potential solutions. The length of this chromosome matches the total number of operations, encapsulating an optimal allocation of resources for each operation. Each gene within this chromosome signifies the specific resource assigned to its corresponding operation. Figure 4 illustrates an example scenario involving three jobs, each with four operations. In this context, the chromosome provides a structured method for visualizing and optimizing resource allocation across multiple operations, facilitating an efficient scheduling process.

\(I = [1, 3, 7, 4, 1, 4, 8, 5, 1, 3, 7, 5]\).

Fitness function

To calculate the fitness function of a solution, an alternative graph is used. In this graph, each node represents an operation, while edges represent precedence relationships. Each operation has two dependency types:

-

1.

Intrinsic dependencies related to the operation sequence within each job.

-

2.

Conditional dependencies related to resource allocation.

Blocking may occur if operation \(i\) precedes operation \(i'\) on the same resource, in which case the start of \(i'\) depends on \(i+1\). This introduces a directed edge from \(i+1\) to \(i'\). One of the two possible edges-\(i+1\) to \(i'\) or \(i'+1\) to \(i\)-is chosen to prevent loops and ensure feasibility. Additionally, each job is connected to virtual start and end nodes for network consistency. The algorithm to compute the fitness function first draws the network with intrinsic dependencies. Then, the “select most critical pair (SMCP)” heuristic by Mascis and Pacciarelli42 chooses the alternative edge that minimizes the maximum path length:

where \(A\) is the set of undetermined edges, \(w_{ij}\) denotes the weight between nodes \(i\) and \(j\), and \(l(1,i)\) represents the longest path from the start node to \(i\). The edge weights are as follows:

-

Between consecutive operations of a job: Processing time plus loading time (buffer time is zero).

-

For a transfer operation at node \(i\): Time for the robot to move from the previous resource to the next, plus loading time.

Weights for blocking dependencies vary: If in a machine or buffer, they equal loading time; if in a robot resource, they include parking and loading times. Zero values for start and end nodes preserve dependencies without affecting the longest path calculation. In cases where an edge’s weight is zero, a small value is used to retain dependency without influencing the longest path. After constructing a non-cyclic graph with weights, the makespan is determined by finding the longest path (Fig. 5). Algorithm 3 describes this process.

For example, in Fig. 6, node 5 (job 1, operation 4) and node 7 (job 2, operation 2) both use resource 4. If job 2’s second operation precedes job 1’s fourth, job 1’s next operation starts with job 2’s subsequent operation at node 8, creating an edge from node 8 to node 5. Conversely, if job 1’s fourth operation precedes job 2’s, an alternative edge exists from job 1’s final node (node 14) to node 7. Figure 7 demonstrates a feasible solution with the following weights:

Initial population

The initial population, a fundamental component of GA, sets the stage for the evolutionary process. Its size, often denoted as “pop”, determines the diversity and exploration potential of the search space. Generating the initial population randomly ensures a broad exploration of the solution space, preventing the algorithm from getting stuck in local optima. In this study, the initial population is randomly generated with a size of pop parameters.

Parent selection

The selection strategy for parents significantly influences both the diversity and convergence rate of the GA. To ensure that high-quality candidates contribute to reproduction, the algorithm prioritizes individuals with superior fitness by selecting the first parent randomly from the top 20% of the population. This approach facilitates the transmission of advantageous traits from the fittest individuals. To maintain diversity and mitigate the risk of premature convergence, the second parent is chosen randomly from the entire population, thereby incorporating a broader genetic variability. This balanced approach enhances both solution diversity and convergence stability, increasing the likelihood of achieving optimal solutions across varying problem sizes. Experimental results demonstrate that, even with a limited number of iterations, this selection strategy effectively guides the algorithm towards optimal solutions in small and medium-sized problem instances, underscoring the robustness of this methodology.

Crossover operator

Spears and De Jong44 conducted a study comparing various crossover techniques, ultimately finding that uniform crossover consistently yields optimal results. Their research demonstrated that the effectiveness of uniform crossover remains consistent regardless of the length of chromosomes. Unlike other methods such as one and two-point crossovers, uniform crossover exhibits the ability to explore all points of the solution space. Additionally, empirical evidence suggests that uniform crossover offers greater exploratory capabilities compared to n-point crossover techniques45 . Mendes et al.46 further corroborated the efficacy of uniform crossover by applying it to solve the RCPSP, yielding promising results. Consequently, the adoption of uniform crossover is recommended and implemented in this paper. In uniform crossover, a crossover probability (CProb) is chosen from the interval [0, 1]. Subsequently, a random vector with the same length as the chromosome’s genes, within the interval [0, 1], is generated. By comparing the crossover probability with the random numbers in the vector, it is determined whether genes are selected from Parent 1 or Parent 2. If the random number is smaller than CProb, the gene corresponding to Parent 1 is transferred to Offspring 1, and the gene corresponding to Parent 2 is transferred to Offspring 2. Otherwise, the gene from Parent 2 is transferred to Offspring 1, and the gene from Parent 1 is transferred to Offspring 2. An example of this type of crossover with CProb = 0.7 is depicted in Fig. 8.

Mutation operator

In GA, the mutation operator plays a crucial role in preserving diversity within the population and preventing premature convergence to suboptimal solutions. In this study, Resource-Based Mutation occurs with a probability denoted as MProb, where this operator introduces random alterations to individual solutions, mirroring the GA observed in natural evolution. During mutation, a random position within the chromosome is selected, and subsequently, another resource is chosen from the pool of permissible resources to replace the existing one at the selected position. This process facilitates the exploration of alternative solutions by introducing subtle changes to the GA. Importantly, positions are selected for mutation only if they offer more than one permissible resource for allocation, thereby ensuring the introduction of meaningful variations.

Survivor selection

Survivor selection determines which individuals from the offspring population will proceed to the next generation. In this context, out of the two offspring generated through crossover and mutation, only the most promising ones are retained. Specifically, if the fitness function of an offspring is better than that of its corresponding parent, it is selected to survive. By favoring offspring with superior fitness relative to their parents, survivor selection drives the GA towards solutions of higher quality. This selective pressure promotes the propagation of favorable traits, guiding the evolutionary process towards improved solutions over successive generations.

Computational experiments

To validate the accuracy of the MIP model, CPLEX, a robust mathematical programming solver, was employed to solve the MRFJS model, and the results were compared with those obtained from the proposed GA. Additionally, a basic GA algorithm was developed to evaluate the performance of the proposed algorithm. Due to the stochastic nature of meta-heuristic algorithms, each test was repeated five times to ensure consistency. All three methods CPLEX, basic GA, and the proposed GA are based on the same MILP model to ensure consistency. However, due to computational limitations, the comparison involving CPLEX was limited to smaller instances, while larger instances were used to assess the robustness of the proposed GA in scenarios where CPLEX cannot operate effectively. This structured comparison allowed for a clear evaluation of the enhancements in the proposed GA, demonstrating its superior performance in complex scheduling problems.

The mathematical model was solved using GAMS 24.1.2 with the CPLEX solver. The proposed GA was coded and implemented in MATLAB R2021b, integrating advanced optimization strategies to ensure effective convergence and high-quality solutions. All computations were carried out on a personal computer with a Core i7 2.9 GHz processor and 8GB of RAM, ensuring consistent computational conditions for both the MILP and GA approaches.

For the tuning phase, data were randomly selected, as shown in Table 1, to ensure an unbiased evaluation. This allowed us to consider a wide range of scenarios, aiding in fine-tuning the parameters and enhancing the algorithm’s robustness before testing on specific benchmark datasets. Data from a 4.0 platform were used to compare the algorithm and MIP model results.

We used the Average Relative Deviation (ARD) as a performance measure to evaluate the algorithm’s performance. ARD was calculated using was quantified using Eq. 17.

The high number of operations makes the problem challenging to solve, even with relatively few parts. To manage this complexity, we categorized the problem into three scales based on the number of operations: small instances with fewer than 60 operations, medium instances with 60 to 70 operations, and large instances with more than 70 operations. This categorization allowed us to tailor our approach and optimize the algorithm’s performance for different complexity levels, ensuring efficient and effective problem-solving across various scenarios.

Parameter tuning

The selection of appropriate parameters plays a critical role in determining the performance of the algorithm. The PGA depends on three key parameters: crossover probability (CProb), mutation probability (MProb), and the initial population size. To ensure fairness and a thorough evaluation, various test instances were chosen. Each instance was executed independently five times, and the average relative deviation (ARD) was used as the recorded response value. The production setup comprised two regions, each with three parallel robots and machines, as well as one input and three output buffers per region. The study supported two job types, A and B: A jobs were processed on the machines in region 1, while B jobs were processed sequentially in both regions. This approach generated a table of instances with varying quantities of A and B jobs, as detailed in Table 1.

In this study, a systematic approach known as Design of Experiments (DOE) was adopted, specifically utilizing the Taguchi method. An L8 Orthogonal Array was employed to structure the experiments, accommodating various parameters at two distinct levels, as outlined in Table 2. Further details regarding the array configuration are presented in Table 3. The Average Relative Deviation (ARD) was calculated using Eq. 17, with the resulting averages for each instance and parameter setting listed in Table 4.

These averages were instrumental in determining the optimal parameter configuration. To optimize the S/N ratios for ARD, the principle that “smaller is better” was followed. Figure 9 illustrates these S/N ratios for population size (Pop), crossover probability (CProb), and mutation probability (MProb), with each parameter’s different levels displayed along the horizontal axis. Through analysis, it was found that a population size of 20, a crossover probability of 0.7, and a mutation probability of 0.1 consistently yielded the most favorable outcomes across all phases. Additionally, it was determined that the crossover probability (CProb) had the most significant effect on ARD values. It should be noted that the stopping criterion for the GA is up to 100 generations.

Convergence behavior

In this study, a comprehensive analysis of the convergence behavior exhibited by a GA tailored for the FJSP was conducted. The investigation encompassed two distinct problem instances: a large-scale instance denoted as Instance 3, and a medium-scale instance identified as Instance 5. Each instance underwent rigorous testing utilizing the three most effective combinations of GA parameters, meticulously determined through prior tuning efforts conducted over 50 iterations.

The convergence diagrams, delineated as Fig. 10A and B, meticulously depict the evolution of fitness values (in terms of makespan) over the course of the 50 iterations, elucidating the performance of the three paramount parameter configurations. Figure 10A delves into the convergence patterns observed within the large-scale Instance 3. During the initial iterations (1–10), the GA showcased a swift ascent in fitness values, indicative of robust exploration across the solution space facilitated by the initial population and crossover operations. Subsequently, in the mid iterations (11–35), the rate of improvement experienced a discernible deceleration, indicative of the GA’s strategic focus on refining solutions and exploring local optima. Finally, in the late iterations (36–50), a stabilization of fitness values was observed, indicating that the GA had converged towards an optimal or near-optimal solution.

Similarly, Fig. 10B elucidates the convergence dynamics pertinent to the medium-scale Instance 5. Echoing the trends observed in Instance 3, the GA exhibited a rapid ascent in fitness values during the initial iterations (1–10), signifying effective exploration. Throughout the mid iterations (11–25), improvement persisted albeit at a diminished pace as the GA fine-tuned solutions. However, convergence was achieved more expediently in this instance, with fitness values stabilizing notably earlier (26–50), underscoring the comparatively lower complexity and faster convergence of the problem.

Comparative analysis of the convergence rates between the two instances revealed a discernible discrepancy, with the GA converging notably faster for the medium-scale Instance 5 (Fig. 10B) than for the large-scale Instance 3 (Fig. 10A), attributed to inherent differences in problem complexity. Furthermore, the attainment of lower final makespan values for the medium-scale instance underscored the GA’s efficacy across varied problem scales. Additionally, the consistent performance exhibited across both instances, facilitated by the three most effective parameter combinations, underscored the robustness of the GA tuning. It was evident that superior parameter configurations correlated with expedited convergence rates, emphasizing the significance of parameter optimization in augmenting GA performance. Through meticulous scrutiny of convergence behavior across multiple instances and parameter configurations, this analysis provided valuable insights into the efficacy and versatility of the GA in addressing the challenges posed by the MFJSP.

Implementation in real production system 4.0

Platform 4.0, originally designed for industrial manufacturing, now serves as a key asset in a research lab at the Arts et Métiers campus in Paris. This cutting-edge platform is dedicated to pioneering intelligent and adaptable manufacturing processes, integrating advanced technologies to refine digital twins and production systems. At its heart, Platform 4.0 boasts an impressive array of advanced machinery and robotics. Among its assets are three CNC machines: a universal laser cutter, a Tormach PCNC 770 Tech mill, and a Tormach CNC lathe 15L slant-pro. Additionally, it houses two KUKA robots, the KR6R900 and KR10R1100, each equipped with sophisticated vision systems for material identification. The OMRON MOMA robot, a fusion of an LD-90 OMRON Mobile robot and a TM5 cobot, also features an integrated vision system. Quality control is ensured through a 3D scanner, while a conveyor system with a turntable facilitates the seamless movement and storage of manufactured parts. To streamline part exchange, intermediate input and output buffers are strategically positioned. Buffers with a capacity of 1 facilitate exchange between the OMRON robot and the fixed KUKA robot, while those with a capacity of 4 facilitate exchange between the fixed and mobile KUKA robots.

Figure 11 illustrates the layout and arrangement of machinery, tools, and workspace within Platform 4.0, highlighting key components and their spatial relationships. This production system is evolving towards Industry 4.0, crafted to foster advanced research in this domain. It employs a decentralized architecture where all components are linked to an advanced Manufacturing Execution System (MES), serving as the central supervisory unit. Unlike traditional models that rely on Programmable Logic Controllers (PLCs) for decision-making and scheduling, these functions are now managed by the MES. The system adopts a modular approach for standard manufacturing processes such as transferring, machining, quality assurance, and monitoring. This approach ensures that each specific operation is carried out correctly. For example, when a product must be machined on a lathe, the module responsible will select the part from the initial storage and place it in the lathe. Multiple modules work in succession to transform raw materials into finished products.

The MES, powered by the AVEVA System platform, not only allocates resources but also offers upper management detailed insights, improving decision-making and scheduling through advanced PLC integration. It monitors trends, alarms, and collects data, enabling production lines to remain flexible and adapt to varying schedules with minimal adjustments. The MES provides the necessary instructions and coordination on the shop floor, integrating all operations to maintain an efficient workflow. This modular and decentralized strategy is in line with Industry 4.0 principles, promoting flexibility, real-time decision-making, and interconnected systems, thus enhancing the production system’s overall adaptability and efficiency.

The shop floor is divided into three regions, each equipped with a robot for optimized efficiency. Region one houses the OMRON robot, supported by dedicated tables for raw material storage (input buffer) and final product handling (output buffer). Region two features the fixed KUKA robot alongside its input and output buffers. In region three, the mobile KUKA robot operates alongside a CNC mill and lathe, supported by an input buffer and a 4-capacity output buffer. This system produces six distinct chess pieces, as detailed in Table 5. Moving at a speed of two meters per minute and requiring 0.5 minutes for loading, the robots ensure fast and efficient operations. To maintain uninterrupted production, the clearance time for all parts is kept at zero. Platform 4.0 employs a total of 13 resources, with Table 6 showing the physical distances between each resource, which are identified by numbers in Fig. 11.

Moving at a speed of two meters per minute and requiring 0.5 minutes for loading, the robots ensure fast and efficient operations. To maintain uninterrupted production, the clearance time for all parts is kept at zero. Platform 4.0 employs a total of 16 resources, with Table 6 showing the physical distances between each resource, which are identified by numbers in Fig. 11.

MILP validation

To verify the correctness of the proposed MILP model and the effectiveness of the proposed GA, we randomly selected 13 representative samples with different scales. The examination of both the MILP model and the GA reveals distinct performance attributes for varying problem sizes. For smaller instances (1, 2, 3, 4, 6, and 9), both methods achieved zero gaps, indicating optimal solutions. However, for larger instances (5, 7, 8, 10, 11, 12, and 13), the MILP model’s effectiveness decreased, failing to find optimal or feasible solutions within the 1000-second runtime due to the complex problem space. In contrast, the GA consistently outperformed the MILP model, particularly with larger instances. Demonstrating swift convergence and scalability, the GA provided approximate solutions promptly and navigated increased complexities effectively. The GA also required less CPU time across all instances, crucial for maintaining operational productivity in industrial settings. Moreover, the GA exhibited resilience in handling increasing complexities, especially when the number of parts exceeded four, resulting in more than 50 operations. This robustness makes the GA suitable for large-scale scheduling problems in multi-robot manufacturing environments. Thus, while the MILP model excels for smaller instances, the GA is the more practical and scalable solution for larger, more complex Multi-robot FJSPs. Comparing the CPLEX and GA approaches shows distinct performance characteristics. CPLEX consistently delivers lower makespan values, indicating its ability to identify optimal solutions. While GA yields slightly higher makespan values, it provides reasonable solutions with notable variability, especially in Instance 13, indicating room for improvement. In terms of computational efficiency, CPLEX shows variability in processing times and struggles with scalability for larger problems. Conversely, GA demonstrates overall lower computational times, making it an efficient alternative, especially for larger instances. However, certain instances, like Instance 13, highlight GA’s increased computational burden, suggesting areas for enhancement. Balancing solution quality and computational efficiency, CPLEX prioritizes the former, often requiring longer times. Conversely, GA strikes a balance, offering reasonable solutions within shorter timeframes. The choice between CPLEX and GA depends on specific requirements: CPLEX for solution quality and GA for computational efficiency. Thus, while CPLEX excels for smaller instances, GA is the more practical and scalable solution for larger, complex instances, making it the preferred choice for real-world applications. Table 7 presents the instance test combinations and provides a comparative analysis between the MILP and the proposed GA methods.

Comparison of overall performance

This study compares the BG and PGA . The Basic GA uses Two-Point Crossover and Tournament Selection. In contrast, the PGA employs additional operators and different crossover and selection methods to improve performance. To validate the effectiveness of the PGA, we chose to compare it against the Basic GA47. The Basic GA serves as a well-established benchmark in the field due to its widespread use and simplicity. By comparing our PGA against this baseline, we can clearly demonstrate the enhancements and benefits introduced by our new method.

The PGA differentiates itself by utilizing a novel crossover operator tailored to suit our specific problem better. Additionally, the parent selection mechanism in our PGA is distinct from many conventional algorithms. These modifications are intended to showcase how small adjustments, such as changing the crossover method and parent selection criteria, can significantly impact both the quality of solutions and the time required to reach optimal solutions.

The widespread use of traditional GAs underscores their robustness and reliability, making them an ideal point of comparison. By demonstrating that our PGA can outperform the Basic GA, we provide strong evidence that our enhancements lead to substantial improvements. The results of this comparison will illustrate the efficacy of the PGA, particularly in terms of solution quality and convergence speed. This approach highlights the importance of continued innovation and adaptation within the field of GAs.

For the evaluation, we considered fifteen instances with varying numbers of jobs and operations. These instances range from medium to large sizes, ensuring a comprehensive assessment. Detailed information about these instances can be found in Table 8.

Each instance was run five times using both the PGA and GA. The ARD of the results was calculated for each run. Subsequently, the mean ARD for both PGA and BGA was computed to provide a clear comparison of their performance.

The results in Tables 9 and 10 reveal several key insights: The PGA consistently outperforms the BGA. While the BGA performs slightly better in a few medium-scale instances, the PGA shows significant improvement in most cases, particularly for large instances. For larger instances with more operations, the PGA consistently achieves better solutions. The average ARD for the PGA across all instances is 0.25%, compared to 0.38% for the BGA. This substantial difference underscores the superior efficiency and accuracy of the PGA. The stochastic nature of GAs means that initial conditions can sometimes give the BGA a temporary advantage. However, these instances are limited and do not detract from the overall superior performance of the PGA. The consistent improvement in ARD values with the PGA demonstrates its robustness and reliability in producing better-quality solutions within a reasonable time frame. This is particularly crucial for instances with larger scales and more operations, where traditional methods struggle to find optimal solutions efficiently. By analyzing these trends and results, it is evident that the PGA not only offers improvements over the BGA but also provides a more scalable and effective solution for complex optimization problems. This validates the modifications made in the PGA, emphasizing the impact of tailored crossover operators and selection mechanisms on the performance of GAs.

As observed in Fig. 12, the performance of the basic GA (orange line) and the PGA (blue line) on Instance 16 is compared. The chart illustrates the changes in fitness over different iterations. The basic GA shows very minimal improvements in fitness with each iteration and, in most cases, significant optimization is not observed. In other words, after several iterations, no substantial improvement is achieved, and the algorithm gets stuck in local optima. This means that the fitness remains stable and does not improve further.

In contrast, the PGA has managed to create faster improvements in fitness. From the early iterations, the algorithm shows better fitness compared to the basic algorithm, and these improvements continue consistently. Although in some iterations, fewer improvements are observed, the PGA overall achieves better fitness compared to the basic algorithm. These results indicate the more efficient and effective performance of the PGA compared to the other two algorithms. Overall, it can be concluded that the PGA has a greater ability to find the optimal solution and surpass local optima, achieving more optimal results.

Figure 13 is a Gantt chart related to Instance 16, which shows the distribution and scheduling of various resources over time. This Gantt chart effectively displays the order and duration of resource usage, and can be useful in analyzing the efficiency and productivity of projects.

Integration of scheduler with manufacturing execution systems

In the realm of FJSs, the convergence of a scheduler with a Manufacturing Execution System (MES) stands as a pivotal step towards refining production processes and achieving operational excellence. Specifically tailored for the dynamic demands of FJSs, the scheduler assumes a central role in orchestrating and sequencing manufacturing tasks to seamlessly align with production targets and customer requirements. Real-time scheduling in such environments operates on two tiers: order- and processing-oriented levels. In principle, each event throughout the machining process can be translated into actionable data, enabling predictive execution of manufacturing operations. However, while proactive scheduling ideally encompasses both proactive and reactive elements, prevailing learning and forecasting technologies grapple with addressing real-time exigencies within MES. Efficient adaptive MES solutions necessitate nimbleness in real-time operations, harnessing live data to optimize decision-making across diverse scenarios. Yet, current frameworks often handle specific logical adjustments or a limited spectrum of MES operations, leaving a void in comprehensive adaptation solutions. This reliance on human-driven processes underscores the need for a broader framework to address dynamic scheduling challenges across industries. Incorporating human expertise and man-machine interaction methodologies holds promise in surmounting these challenges. Integrated and specialized determination techniques offer avenues to tackle intricate scheduling conundrums, simplifying real-time occurrences on both operational levels. The integration of the scheduler with MES emerges as a crucial research frontier in aligning current MES with the Industry 4.0 paradigm. This symbiosis aims to fine-tune production processes, encompassing various facets like robotics, conveyor systems, equipment maintenance, quality control, and material handling, to drive efficient product manufacturing. The seamless transition of data between the scheduler and MES serves as the linchpin in ensuring the efficacy of production operations within FJS environments. This data interchange underpins real-time decision-making, resource allocation, and scheduling adjustments, all guided by prevailing shop floor conditions and production requisites. The crux of this data transition lies in the exchange of critical information encompassing job orders, production schedules, resource availability, and task priorities. While the scheduler meticulously crafts detailed production plans, encompassing task sequences, machine assignments, and estimated processing times, the MES functions as a vigilant overseer, continuously monitoring production progress, machine statuses, and resource utilization. The data transition between the scheduler and MES ensures seamless compatibility and interoperability facilitated by standardized communication protocols and interfaces. The adoption of OPC Unified Architecture (OPC UA) is often a secure and reliable conduit for data exchange between disparate industrial automation systems. Data transition mechanisms may encompass batch processing, event-driven updates, or real-time streaming, contingent upon the unique requisites of the production environment and the capabilities of the scheduler and MES. In essence, the fluid and efficient data transition between the scheduler and MES is paramount for refining production processes, enhancing productivity, and enabling agile responsiveness to evolving production dynamics within FJS environments. For detailed technical specifications and implementation insights into this integration, a dedicated article will delve into further depth.

Conclusion and future work

The study addresses the intricate scheduling challenges encountered in a FJS robotic cell with blocking conditions, where multiple robotic arms manage material movement between buffers and machines. The research offers insights into effective scheduling methodologies by combining MILP and a novel GA that incorporates advanced optimization techniques. Computational experiments conducted under consistent conditions reveal the superior performance of the GA, particularly for larger and more complex scheduling instances. While the MILP model proves effective for smaller instances, its scalability diminishes with increasing problem size, highlighting the GA’s practicality and efficiency in real-world applications. Moreover, integrating the scheduler into the Industry 4.0 platform using OPC UA ensures seamless communication and data exchange, facilitating efficient decision-making and optimization in production environments, even though decisions are updated periodically. The robustness of the scheduling approach is demonstrated through its successful implementation and performance analysis in a realistic manufacturing setting, showcasing its potential for enhancing productivity and operational flow. By leveraging advanced optimization techniques and integrating with Industry 4.0 principles, the developed scheduling solution offers a promising avenue for addressing complex scheduling challenges in modern manufacturing environments. Further research and development in this area hold the potential to drive continued advancements in production efficiency and adaptability. Future research could explore scheduling modes that enable adaptation to changing production conditions, while expanding scheduling objectives to include criteria beyond makespan minimization, such as total flow time reduction and resource utilization optimization. Additionally, further integration of scheduling solutions within the Industry 4.0 framework presents an area ripe for exploration, focusing on interoperability standards, data exchange protocols, and seamless communication interfaces to leverage the capabilities of Cyber-Physical Systems (CPS), Internet of Things (IoT), and Big Data analytics. Such advancements would support enhanced decision-making and adaptive manufacturing processes, ultimately advancing production efficiency and competitiveness in modern manufacturing environments.

Data availability

The datasets generated during and/or analysed during the current study are available from the corresponding author on reasonable request.

References

Javaid, M., Haleem, A., Singh, R. P. & Suman, R. An integrated outlook of cyber-physical systems for Industry 4.0: Topical practices, architecture, and applications. Green Technol. Sustain. 1(1), 100001 (2023).

Clim, A. Cyber security beyond the Industry 4.0 era. A short review on a few technological promises. Inf. Econ. 23(2), 34–44 (2019).

Bécue, A., Praça, I. & Gama, J. Artificial intelligence, cyber-threats and Industry 4.0: Challenges and opportunities. Artif. Intell. Rev. 54(5), 3849–3886 (2021).

Jan, Z. et al. Artificial intelligence for industry 4.0: Systematic review of applications, challenges, and opportunities. Expert Syst. Appl. 216, 119456 (2023).

Azadi, M., Moghaddas, Z., Cheng, T. C. E. & Farzipoor Saen, R. Assessing the sustainability of cloud computing service providers for Industry 4.0: A state-of-the-art analytical approach. Int. J. Prod. Res. 61(12), 4196–4213 (2023).

Kolasani, S. Revolutionizing manufacturing, making it more efficient, flexible, and intelligent with Industry 4.0 innovations. Int. J. Sustain. Dev. Through AI ML IoT 3(1), 1–17 (2024).

Khang, A., Rath, K. C., Satapathy, S. K., Kumar, A., Das, S. R. & Panda, M. R. (2023). Enabling the future of manufacturing: Integration of robotics and IoT to smart factory infrastructure in industry 4.0. In Handbook of Research on AI-Based Technologies and Applications in the Era of the Metaverse 25–50 (IGI Global, 2023).

Ryalat, M., ElMoaqet, H. & AlFaouri, M. Design of a smart factory based on cyber-physical systems and Internet of Things towards Industry 4.0.. Appl. Sci. 13(4), 2156 (2023).

Moraes, E. B. et al. Integration of Industry 4.0 technologies with Education 4.0: Advantages for improvements in learning. Interact. Technol. Smart Educ. 20(2), 271–287 (2023).

Liu, L., Song, W. & Liu, Y. Leveraging digital capabilities toward a circular economy: Reinforcing sustainable supply chain management with Industry 4.0 technologies. Comput. Ind. Eng. 178, 109113 (2023).

Antony, J., Sony, M. & McDermott, O. Conceptualizing Industry 4.0 readiness model dimensions: An exploratory sequential mixed-method study. TQM J. 35(2), 577–596 (2023).

Mantravadi, S., Srai, J. S. & Møller, C. Application of MES/MOM for Industry 4.0 supply chains: A cross-case analysis. Comput. Ind. 148, 103907 (2023).

Serôdio, C., Mestre, P., Cabral, J., Gomes, M. & Branco, F. Software and architecture orchestration for process control in industry 4.0 enabled by cyber-physical systems technologies. Appl. Sci. 14(5), 2160 (2024).

Mehdiyev, N., Mayer, L., Lahann, J. & Fettke, P. Deep learning-based clustering of processes and their visual exploration: An industry 4.0 use case for small, medium-sized enterprises. Expert. Syst. 41(2), e13139 (2024).

Morgan, J., Halton, M., Qiao, Y. & Breslin, J. G. Industry 4.0 smart reconfigurable manufacturing machines. J. Manuf. Syst. 59, 481–506 (2021).

Kattepur, A. & Khemkha, S. Aspects of mechanism design for industry 4.0 multi-robot task auctioning. EAI Endorsed Trans. Smart Cities 6(17), e2 (2021).

Choudhury, S., Gupta, J. K., Kochenderfer, M. J., Sadigh, D. & Bohg, J. Dynamic multi-robot task allocation under uncertainty and temporal constraints. Auton. Robot. 46(1), 231–247 (2022).

Zhou, B., Zhou, R., Gan, Y., Fang, F. & Mao, Y. Multi-robot multi-station cooperative spot welding task allocation based on stepwise optimization: An industrial case study. Robot. Comput. Integr. Manuf. 73, 102197 (2022).

Oluyisola, O. E., Bhalla, S., Sgarbossa, F. & Strandhagen, J. O. Designing and developing smart production planning and control systems in the industry 4.0 era: A methodology and case study. J. Intell. Manuf. 33(1), 311–332 (2022).

Mantravadi, S., Srai, J. S. & Møller, C. Application of MES/MOM for Industry 4.0 supply chains: A cross-case analysis. Comput. Ind. 148, 103907 (2023).

Ala, A., Simic, V., Deveci, M. & Pamucar, D. Simulation-based analysis of appointment scheduling system in healthcare services: A critical review. Arch. Comput. Methods Eng. 30(3), 1961–1978 (2023).

Fu, Y. et al. Distributed scheduling problems in intelligent manufacturing systems. Tsinghua Sci. Technol. 26(5), 625–645 (2021).

Bakon, K., Holczinger, T., Süle, Z., Jaskó, S. & Abonyi, J. Scheduling under uncertainty for Industry 4.0 and 5.0. IEEE Access 10, 74977–75017 (2022).

Oluyisola, O. E., Bhalla, S., Sgarbossa, F. & Strandhagen, J. O. Designing and developing smart production planning and control systems in the industry 4.0 era: A methodology and case study. J. Intell. Manuf. 33(1), 311–332 (2022).

Namjoshi, J. & Rawat, M. Role of smart manufacturing in industry 4.0. Mater. Today Proc. 63, 475–478 (2022).

Zheng, T., Ardolino, M., Bacchetti, A. & Perona, M. The applications of Industry 4.0 technologies in manufacturing context: A systematic literature review. Int. J. Prod. Res. 59(6), 1922–1954 (2021).

Shukla, A. K., Nath, R., Muhuri, P. K. & Lohani, Q. D. Energy efficient multi-objective scheduling of tasks with interval type-2 fuzzy timing constraints in an Industry 4.0 ecosystem. Eng. Appl. Artif. Intell. 87, 103257 (2020).

Rosin, F., Forget, P., Lamouri, S. & Pellerin, R. Enhancing the decision-making process through industry 4.0 technologies. Sustainability 14(1), 461 (2022).

Mathew, D., Brintha, N. C., & Jappes, J. W. (2023). Artificial intelligence powered automation for industry 4.0. In New Horizons for Industry 4.0 in Modern Business 1–28 (Springer International Publishing, Cham, 2013).

Kolasani, S. Revolutionizing manufacturing, making it more efficient, flexible, and intelligent with Industry 4.0 innovations. Int. J. Sustain. Dev. Through AI ML IoT 3(1), 1–17 (2024).

Xie, J., Li, X., Gao, L. & Gui, L. A hybrid genetic tabu search algorithm for distributed flexible job shop scheduling problems. J. Manuf. Syst. 71, 82–94 (2023).

Tang, J. et al. An effective memetic algorithm for distributed flexible job shop scheduling problem considering integrated sequencing flexibility. Expert Syst. Appl. 242, 122734 (2024).

Utama, D. M., Primayesti, M. D., Umamy, S. Z., Kholifa, B. M. N. & Yasa, A. D. A systematic literature review on energy-efficient hybrid flow shop scheduling. Cogent Eng. 10(1), 2206074 (2023).

Zhang, F., Mei, Y., Nguyen, S. & Zhang, M. Survey on genetic programming and machine learning techniques for heuristic design in job shop scheduling. IEEE Trans. Evol. Comput. 28(1), 147–167 (2023).

Lee, M. L. et al. A review of prospects and opportunities in disassembly with human-robot collaboration. J. Manuf. Sci. Eng. 146(2), 020902 (2024).

Löffler, S. Human-robot collaboration for efficient circularity decision-making for end-of-usage products (Doctoral dissertation, Reutlingen University) (2024).

Li, X. et al. Two meta-heuristic algorithms for flexible flow shop scheduling problem. J. Manuf. Syst. 55, 123–134 (2021).

Fatemi-Anaraki, S. et al. Scheduling of multi-robot job shop systems in dynamic environments. Int. J. Prod. Res. 59(3), 456–468 (2022).

Li, Y. et al. An improved iterated greedy algorithm for distributed robotic flowshop scheduling. Comput. Ind. Eng. 105, 207–216 (2022).