Abstract

This study investigated how exposure to Caucasian and Chinese faces influences native Mandarin-Chinese speakers’ learning of emotional meanings for English L2 words. Participants were presented with English pseudowords repeatedly paired with either Caucasian faces or Chinese faces showing disgust, sadness, or neutrality (a control baseline). Learning was evaluated through both within-modality (i.e., testing participants with new sets of faces) and cross-modality (i.e., testing participants with sentences expressing the learned emotions) generalization tests. When matching newly learned L2 words with new faces, participants in both groups were more accurate in the neutral condition than in the sad condition. The advantage of neutrality extended to sentences, as participants matched newly learned L2 words with neutral sentences more accurately than with disgusting or sad ones. The two groups also differed in the cross-modality generalization test: the Caucasian-face Group outperformed the Chinese-face Group in accuracy on sad trials, whereas the Chinese-face Group was more accurate on neutral trials. We thus conclude that faces of diverse socio-cultural identities exert different impacts on learning emotional meanings for L2 words.


Introduction

Considering the significance of emotions in everyday interaction and communication, people naturally look for and use emotional cues in learning situations, especially when acquiring new words1. One common manifestation of emotional cues is facial expressions2,3, which are an essential factor in face-to-face language teaching. Previous studies show that people have perceptual advantages for expressions on own-race faces compared with other-race faces (e.g.,4,5), yet whether such an advantage influences second language learning remains unknown. To investigate the role of facial expressions in second language learning in depth, the present study employed an associative learning paradigm to compare how facial expressions on foreign versus domestic faces affect the learning of emotional meanings of second language (L2) words.

Facial expressions are effective communication channels6 and vehicles for expressing emotions7. In word acquisition, caregivers’ facial expressions have been reported to be children’s initial source for learning emotional concepts8,9, and specific linguistic labels for these concepts are learned later as language proficiency improves10,11,12. By adulthood, people are able to recognize emotions through various channels, including both facial expressions and words referring to emotional states and events13,14,15. Psycholinguistic evidence has demonstrated a strong association between facial expressions and emotional words in semantic memory16,17,18,19 and considerable facilitation of auditory speech processing by speakers’ facial expressions20,21. More importantly, emotional facial expressions were found to influence non-emotional language processing during audiovisual communication22.

Emotional meaning learning for L1 words has previously been examined using an associative learning paradigm23, in which faces and sentences expressing disgust and sadness were compared for their effectiveness in teaching emotional meanings for L1 pseudowords. Two groups of participants were exposed to face-pseudoword and sentence-pseudoword pairs, respectively. Their learning of emotional meanings was then tested in two tasks that required them to match learned pseudowords with new sets of faces and sentences based on emotionality. Faces were more effective than sentences for learning disgusting meanings and comparable to sentences for sad meanings23.

Despite being an effective channel of emotion communication, facial expressions may be interpreted differently in various cultural, social, and situational contexts24. The relationship between facial expressions and emotional states is complex25. Previous studies reported that people recognize emotions on the faces of their own ethnic group more accurately26. In addition, people tend to recognize negative emotions more efficiently on faces of a different socio-cultural identity27. When the socio-cultural identity of a face is involved in language processing, a priming effect arises if that identity is congruent with the language to be processed28,29. For example, Chinese-English bilinguals were more accurate when naming Caucasian faces in English than in Chinese, and Chinese faces in Chinese than in English30. Research has also found that cultural differences can be reflected in non-verbal cues such as facial expressions, gestures, and gaze31. L2 learners recalled more information in a story-retelling task when they could see the storyteller’s face and gestures, and these learners produced more gestures when speaking in their L2 than in their L132. In addition, L2 speakers showed more emotional facial expressions while telling a story in their L2 but maintained a more neutral expression when telling it in their L133. However, the role of faces, and especially of their socio-cultural identity, in L2 learning has been relatively neglected. Although facial expressions are an important socio-cultural element in L2 learning34, it remains unclear, given the emotion-recognition biases, the priming effect, and the socio-cultural influences reviewed above, whether the socio-cultural identity of faces influences the learning of emotional meanings for L2 words.

In previous studies, the effects of emotion on language processing and acquisition were mostly investigated from a dimensional approach, that is, by categorizing emotions based on valence35. A discrete emotion model, by contrast, argues that emotions go beyond merely “positive” or “negative”36. Studies following this approach have provided detailed evidence on how discrete emotions affect word processing (e.g.,37). For example, disgust sensitivity, rather than valence, arousal, or general emotion sensitivity, was reported to predict individual differences in lexical decision tasks38. Likewise, disgust and fear, both negative in valence, affect response times and accuracy in lexical decision tasks differently39. Electrophysiological evidence suggests that the processing of happy words and of general positive words modulates the N1 and the N400, respectively, indicating that the processing of discrete emotions serves as a basis for subsequent dimensional emotion processing40. Some scholars have also described emotional states as multidimensional processes in which appraisals and emotions are intertwined, with the appraisals themselves influenced by cultural, physiological, and individual differences41. At the same time, how an individual perceives and experiences emotions is mediated by language. According to the Construction Hypothesis, language is essential for emotion perception because words group otherwise diverse instances, including facial expressions, into emotion categories42,43. How people interpret and experience emotional sensory input is largely guided by emotion words such as “disgust” and “sadness”44.

Therefore, the present study adopted a discrete-emotion approach, focusing on disgust and sadness, to add to the understanding of L2 emotional word acquisition. Both emotions belong to the six basic emotions proposed by Ekman36. They were selected because they share a negative valence yet differ in their functions45,46, physiological responses47,48,49,50, and facial expressions51,52. Moreover, disgust is particularly suitable for investigating the interaction among emotion, culture, and language, as studies in language and social psychology suggest that disgust is sensitive to people’s cultural background and the language they speak53,54. Other basic emotions such as fear and anger, which are also generally considered negative in valence, were not included because, like disgust, they are triggered by threatening stimuli55, whereas sadness is usually related to loss46 or rejection56 rather than threat. Selecting two discrete emotions that share the same valence could thus yield more refined insights into emotional meaning learning for L2 words.

On this basis, the present study compared the effectiveness of faces of different socio-cultural identities as materials for learning emotional meanings of L2 words, which, to the best of our knowledge, has not been examined in existing research. Two groups of Chinese college students took part in an associative learning experiment in which they learned disgusting, sad, and neutral meanings for English pseudowords using either Caucasian or Chinese faces as learning materials. Pseudowords were used to rule out participants’ prior knowledge of the words57, which could otherwise interfere with emotional meaning learning. The design consisted of a Learning Phase and an Evaluation Phase. In the Learning Phase, the English pseudowords were repeatedly associated with either Caucasian or Chinese faces in three experimental blocks, each followed by a test of participants’ learning of the face-pseudoword associations. After the three blocks, there was a pseudoword matching test. Participants then proceeded to the Evaluation Phase, which included a within-modality generalization test and a cross-modality generalization test. In the within-modality test, participants were presented with unseen faces from the three emotional conditions, whereas in the cross-modality test, English sentences expressing disgusting, sad, and neutral meanings were introduced as stimuli. These tests were intended to further assess learning and to determine whether participants learned emotional meanings rather than mere associations between specific faces and pseudowords. We hypothesized that if the emotion-recognition advantage for faces of the same socio-cultural identity prevailed26, Chinese faces would be more effective for learning emotional meanings for English L2 words.
On the other hand, if the priming effect of congruent face-language combinations and the bias toward recognizing negative emotions faster on foreign faces were more salient27,29, participants in the Caucasian-face Group would learn the emotional meanings more effectively.

Materials and methods

Participants

An a priori power analysis was conducted with G*Power 3.1 software58 using the repeated-measures ANOVA model (within-between interaction), with α = 0.05, power = 0.80, and a medium effect size (f = 0.25). The estimated sample size was 20. The sample size was further increased to 40 participants per experimental group to adequately detect potential effects in the present study. A total of 80 native Chinese-speaking, physically and mentally healthy college students from the Dalian University of Technology were recruited (28 males, 52 females; mean age = 23.6 years, SD = 1.51). All reported being right-handed and having normal or corrected-to-normal vision. All participants had passed the College English Test Band 6 (CET-6), the highest level for non-English majors, which requires a minimum vocabulary of 5,500 words and 1,200 phrases and is similar to the B2 level of the Common European Framework of Reference for Languages59. The study was reviewed and approved by the Biological and Medical Ethics Committee, Dalian University of Technology, China. All procedures were in accordance with the Declaration of Helsinki and the International Ethical Guidelines for Biomedical Research Involving Human Subjects. Written informed consent was obtained from all participants, who were paid for their time and involvement. Half of the participants were randomly assigned to the Chinese-face Group, learning the associations between pseudowords and Chinese faces, and the other half to the Caucasian-face Group, learning the associations between pseudowords and Caucasian faces. Data from four participants in the Chinese-face Group and three participants in the Caucasian-face Group were excluded because their accuracy in the Learning Phase was below 80%, leaving 36 and 37 participants, respectively.
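The exclusion rule can be expressed as a short filter. The sketch below is illustrative only: the participant IDs and accuracy values are hypothetical, it assumes a single mean Learning-Phase accuracy per participant, and it retains an accuracy of exactly 80% because the text excludes only accuracies lower than 80%.

```python
# Hypothetical sketch of the Learning-Phase exclusion rule (not the authors' code).
EXCLUSION_THRESHOLD = 0.80  # accuracy strictly below 80% leads to exclusion


def apply_exclusion(accuracy_by_participant, threshold=EXCLUSION_THRESHOLD):
    """Split participant IDs into (retained, excluded) by Learning-Phase accuracy."""
    retained, excluded = [], []
    for pid, acc in sorted(accuracy_by_participant.items()):
        if acc < threshold:
            excluded.append(pid)
        else:
            retained.append(pid)
    return retained, excluded


# Recruitment and exclusion counts as reported in the text.
recruited = {"Chinese-face": 40, "Caucasian-face": 40}
excluded_counts = {"Chinese-face": 4, "Caucasian-face": 3}
analysed = {g: recruited[g] - excluded_counts[g] for g in recruited}

# Hypothetical accuracies, for illustration only.
demo = {"P01": 0.95, "P02": 0.78, "P03": 0.80}
print(apply_exclusion(demo))
print(analysed)
```
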

Materials

Thirty pseudowords were created using Wuggy, a multilingual pseudoword generator60. All pseudowords were 5–6 letters and 1–3 syllables long (see supplementary materials). Because the primary objective of the present study was to explore how the socio-cultural identity of faces influences the learning of emotional meanings for L2 words, 45 Chinese faces and 45 Caucasian faces (15 disgusted, 15 sad, and 15 neutral in each set) were selected, rather than comparing faces with sentences as in the previous L1 study23. Nevertheless, the number of stimuli assigned to each emotion condition was the same as in our L1 studies23,61,62. In each emotional category (disgust, sadness, and neutral), the face stimuli consisted of eight females and seven males. The Chinese faces were selected from the Tsinghua Facial Expression Database (Tsinghua-FED)63 and the Caucasian faces from the Karolinska Directed Emotional Faces (KDEF) stimulus set64. Non-facial areas (e.g., hair, neck, and ears) were removed with an ellipsoidal mask to maximize the emotional salience of the facial expressions. The faces were presented against a black background. Each face stimulus was 11.5 cm high and 8.5 cm wide, corresponding to a visual angle of 9.40° (vertical) × 6.95° (horizontal) at a viewing distance of 70 cm. The assignment of pseudowords to the three emotion conditions was counterbalanced across participants in both groups.
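The reported visual angles follow from the standard formula θ = 2·arctan(s / 2d) for an object of size s viewed at distance d. A minimal check, assuming that formula (the text does not state which one was used), gives about 9.39° × 6.95°, within 0.01° of the reported values.

```python
import math


def visual_angle_deg(size_cm, distance_cm):
    """Full visual angle (in degrees) subtended by an object of size_cm at distance_cm."""
    return math.degrees(2 * math.atan(size_cm / (2 * distance_cm)))


VIEWING_DISTANCE_CM = 70.0
height_deg = visual_angle_deg(11.5, VIEWING_DISTANCE_CM)  # vertical extent of a face
width_deg = visual_angle_deg(8.5, VIEWING_DISTANCE_CM)    # horizontal extent of a face
print(f"{height_deg:.2f} x {width_deg:.2f} deg")
```

The small-angle approximation (size / distance in radians) would give a slightly larger vertical value, so the exact arctangent form is used here.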

A group of 216 students from the same college population who did not participate in the main experiment completed online questionnaires in which they selected the emotion (happiness, sadness, disgust, fear, surprise, anger, or neutral) they perceived in each face (hit rate) and rated its arousal on a 5-point scale (1 = not arousing at all, 5 = extremely arousing). For hit rate, there were no main effects of group, F(1, 14) = 0.992, p = 0.336, ηp² = 0.041, or emotion, F(2, 28) = 1.217, p = 0.311, ηp² = 0.013, and no interaction between group and emotion, F(2, 28) = 0.577, p = 0.568, ηp² = 0.009. For arousal, there was a significant effect of emotion, F(2, 28) = 135.726, p < 0.001, ηp² = 0.820. Post-hoc paired t-tests showed that neutral faces were significantly less arousing than disgusted (t(14) = 15.047, p < 0.001) and sad (t(14) = 13.336, p < 0.001) faces, with no significant difference in arousal between disgusted and sad faces (t(14) = 1.711, p = 0.098). There was no main effect of group, F(1, 14) = 0.441, p = 0.517, ηp² = 0.001, and no interaction between group and emotion, F(2, 28) = 0.139, p = 0.871, ηp² = 0.001. An additional group of 61 students from the same college population rated the valence of the faces on a 5-point scale (1 = very negative, 5 = very positive). There was a main effect of emotion, F(2, 28) = 317.603, p < 0.001, ηp² = 0.910. Post-hoc paired t-tests showed that disgusted faces were rated as significantly more negative than sad faces (t(14) = 0.283, p < 0.001), and both disgusted and sad faces were rated as more negative than neutral faces (t(14) = 1.533, p < 0.001; t(14) = 1.251, p < 0.001). There was no main effect of group, F(1, 14) = 2.191, p = 0.161, ηp² = 0.003, and no interaction between group and emotion, F(2, 28) = 1.346, p = 0.277, ηp² = 0.002 (see Table 1 for details).

Furthermore, 45 English sentences describing disgusting, sad, and neutral scenarios (15 each) were composed for the cross-modality generalization test in the Evaluation Phase. Forty-four volunteers who did not participate in the main experiment rated these sentences in online questionnaires. They were asked to indicate whether they understood each sentence, to select the emotion label (happiness, sadness, disgust, fear, surprise, anger, or neutral) that best fit the emotion it conveyed, and to rate its arousal on a 5-point scale (1 = very peaceful, 5 = very exciting). Only sentences that all raters reported understanding and that at least 85% of raters labeled with the intended emotion were retained (see Table 2). In total, 15 sentences were selected for the experiment (5 disgusting, 5 sad, and 5 neutral). Intended-emotion choice rates did not differ significantly among the three emotional categories, F(2, 12) = 1.241, p = 0.324, ηp² = 0.171. For arousal, there was a significant effect of emotion, F(2, 12) = 87.917, p < 0.001, ηp² = 0.936. Post-hoc tests showed that disgusting and sad sentences were significantly more arousing than neutral sentences (ps < 0.001), with no significant difference in arousal between disgusting and sad sentences (p = 0.467). The sentences were presented against a black background in Times New Roman font, size 22.
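The sentence-retention criteria can be written as a simple predicate over norming counts. In this sketch the `SentenceNorm` record and its field names are hypothetical, and the rule for keeping five sentences per emotion if more than five pass (here, first in candidate order) is an assumption; the text does not describe that step.

```python
from dataclasses import dataclass


@dataclass
class SentenceNorm:
    """Norming summary for one candidate sentence (hypothetical field names)."""
    text: str
    intended: str          # "disgust", "sadness", or "neutral"
    n_raters: int
    n_understood: int      # raters who reported understanding the sentence
    n_intended_label: int  # raters who chose the intended emotion label


def passes_criteria(s, min_label_rate=0.85):
    """Retention rule from the text: understood by all raters, >= 85% intended label."""
    understood_by_all = s.n_understood == s.n_raters
    label_rate = s.n_intended_label / s.n_raters
    return understood_by_all and label_rate >= min_label_rate


def select_sentences(candidates, per_emotion=5):
    """Keep up to `per_emotion` passing sentences per emotion (assumed: candidate order)."""
    selected = {}
    for s in candidates:
        bucket = selected.setdefault(s.intended, [])
        if passes_criteria(s) and len(bucket) < per_emotion:
            bucket.append(s)
    return selected
```

With 44 raters, the 85% threshold means at least 38 raters must choose the intended label.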

In total, 30 pseudowords, 45 Chinese faces, 45 Caucasian faces, and 15 English sentences were used in the experiment. In the Learning Phase, ten pseudowords were assigned to each emotion category (disgust, sadness, and neutral) and paired with 10 faces of the corresponding emotion (Chinese faces for the Chinese-face Group and Caucasian faces for the Caucasian-face Group). The remaining 15 faces of each set and the 15 English sentences (5 per emotion category) were used for the generalization tests in the Evaluation Phase. All 30 pseudowords appeared in all generalization tests, and both the assignment of pseudowords to emotions and the correct response were counterbalanced across participants.
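The stimulus allocation and counterbalancing described above can be sketched as follows. Two assumptions are made here. First, the text does not specify which 10 of the 15 faces per emotion served as learning faces, so the sketch simply takes the first 10. Second, counterbalancing is modeled as a Latin-square rotation of three hypothetical 10-word pseudoword sets across the three emotions. This is one common scheme, not necessarily the one used.

```python
EMOTIONS = ("disgust", "sadness", "neutral")


def split_faces(faces_by_emotion, n_learning=10):
    """Split each emotion's 15 faces into 10 learning faces and 5 generalization faces."""
    learning, generalization = {}, {}
    for emotion, faces in faces_by_emotion.items():
        learning[emotion] = faces[:n_learning]
        generalization[emotion] = faces[n_learning:]
    return learning, generalization


def assign_pseudowords(word_sets, participant_index):
    """Rotate three pseudoword sets across the three emotions (Latin-square style).

    Participant 0 gets set 0 -> disgust, set 1 -> sadness, set 2 -> neutral;
    participant 1 is shifted by one set, and the pattern repeats every three participants.
    """
    shift = participant_index % len(EMOTIONS)
    return {
        emotion: word_sets[(i + shift) % len(word_sets)]
        for i, emotion in enumerate(EMOTIONS)
    }
```
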

Design and procedure

The experiment used a mixed 2 × 3 factorial design, with learning procedure (Chinese-face Group vs. Caucasian-face Group) as a between-participant factor and emotion (disgust, sadness, and neutral) as a within-participant factor. The Learning Phase consisted of three blocks, each comprising face-pseudoword pairs followed by a simple selection test. After the three learning blocks and selection tests, a matching test was administered to further consolidate learning. The subsequent Evaluation Phase included two generalization tests, within-modality (to new faces) and cross-modality (to sentences), which provided the main dependent measures. For each group, two sets of new faces were introduced in the within-modality test: one of the same socio-cultural identity as the faces used in the Learning Phase (Chinese faces for the Chinese-face Group and Caucasian faces for the Caucasian-face Group) and one of the other identity (Caucasian faces for the Chinese-face Group and Chinese faces for the Caucasian-face Group). This yielded congruency (congruent vs. incongruent) as an additional within-participant factor. The new faces served to test emotional meaning learning, because the two tests in the Learning Phase mainly probed the associations between specific pseudowords and the faces they had been paired with. In the cross-modality test, participants’ learning of emotional meanings was further verified in a linguistic context by introducing English sentences. Together, the two modalities provided a relatively comprehensive picture of how Chinese and Caucasian faces support emotional meaning learning for English L2 words. The complete experimental procedure is illustrated in Fig. 1 and Table 3.

Participants in both groups started the experiment by reading the task instructions, which asked them to memorize the association between each face and its pseudoword and then to complete the subsequent consolidation tests. The instructions made no mention of emotion. After reading the instructions, participants completed a practice block of 6 learning trials and 3 test trials. The pseudowords and faces used in the practice block did not appear in the experiment proper. Instructions for the Evaluation Phase were given after participants completed the Learning Phase. Accuracy and response times were recorded.

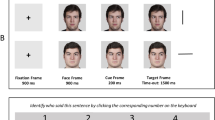

Learning in the Chinese-face group

The Learning Phase was divided into three blocks. Each emotion contributed four face-pseudoword pairs to the first block and three to each of the second and third blocks. Each pair was repeated six times within a block, resulting in 72, 54, and 54 trials in the first, second, and third blocks, respectively. The assignment was counterbalanced across participants. Each trial began with a fixation cross (Times New Roman, size 25) presented for 1000 ms, followed by the face for 1000 ms. The pseudoword (Times New Roman, size 50) was then presented over the face in the middle of the screen for 5000 ms (see Fig. 2A). After each learning block, participants’ learning of the associations was evaluated in a pseudoword selection test. Each trial of this test began with a fixation cross (Times New Roman, size 25) for 1000 ms, followed by one of the previously seen faces for 1000 ms (see Fig. 2B). Then, two pseudowords from the preceding learning block were presented at the bottom of the screen (Times New Roman, size 18). Participants selected the one that had been associated with the face on the screen by pressing the F key with the left index finger or the J key with the right index finger. The key configuration was counterbalanced across participants, and the response time limit was 5000 ms. After the three blocks and selection tests, participants completed a pseudoword matching test consisting of 30 trials (10 per emotion) with the following sequence of events: a 1000 ms fixation cross (Times New Roman, size 25), a face from the three learning blocks presented for 1000 ms, and then a pseudoword over the face (Times New Roman, size 50) with the options “correct” and “incorrect” at the bottom of the screen (Times New Roman, size 18) (see Fig. 2C).
Participants judged whether the pairing of pseudoword and face matched the associations learned during the Learning Phase by pressing “F” for “correct” and “J” for “incorrect”. The mapping of responses to keys was counterbalanced across participants, and the response time limit was 5000 ms. As with the pseudoword selection test, the matching test aimed to probe participants’ memory for the face-pseudoword associations and to consolidate learning.
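The block structure and timing above imply 72, 54, and 54 trials and a nominal 7000 ms per learning trial (1000 ms fixation, 1000 ms face, 5000 ms pseudoword over face). The sketch below builds such blocks from (face, pseudoword) pairs. `build_learning_blocks` is a hypothetical helper, not the experiment software, and the shuffling of trials within a block is an assumption, since the text does not describe trial order.

```python
import random

EMOTIONS = ("disgust", "sadness", "neutral")
PAIRS_PER_EMOTION = (4, 3, 3)  # pairs per emotion in blocks 1, 2, and 3
REPETITIONS = 6                # each pair is shown six times within a block
# Event durations (ms) of one learning trial, as stated in the text.
FIXATION_MS, FACE_MS, WORD_OVER_FACE_MS = 1000, 1000, 5000


def build_learning_blocks(pairs_by_emotion, seed=None):
    """Return three trial lists built from each emotion's 10 (face, pseudoword) pairs.

    Pairs are consumed in order, 4 + 3 + 3 across the three blocks.
    """
    rng = random.Random(seed)
    blocks, start = [], 0
    for n_pairs in PAIRS_PER_EMOTION:
        trials = []
        for emotion in EMOTIONS:
            for face, word in pairs_by_emotion[emotion][start:start + n_pairs]:
                trials.extend([(emotion, face, word)] * REPETITIONS)
        rng.shuffle(trials)  # assumption: full randomisation within a block
        blocks.append(trials)
        start += n_pairs
    return blocks


def block_duration_s(n_trials):
    """Nominal block duration in seconds, ignoring any inter-trial intervals."""
    return n_trials * (FIXATION_MS + FACE_MS + WORD_OVER_FACE_MS) / 1000
```

Under these timings, the first block lasts about 504 s and the second and third about 378 s each, excluding any gaps the original software may have added.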

Evaluation in the Chinese-face group

The Evaluation Phase included two tests that investigated whether the pseudowords’ emotional meanings, rather than merely their associations with specific face stimuli, had been learned. The within-modality generalization test proceeded in the same fashion as the pseudoword selection test, except that the learned pseudowords of the three emotions were now paired with new faces, i.e., faces not seen in the Learning Phase (see Fig. 2D). This test was divided into congruent (see Fig. 2D-a) and incongruent (see Fig. 2D-b) conditions according to the type of new faces. For example, participants in the Chinese-face Group responded to combinations of new Chinese faces and learned pseudowords in the congruent condition, and to combinations of new Caucasian faces and learned pseudowords in the incongruent condition. Participants were asked to select, from two pseudowords, the one that best described the emotion expressed by the new face by pressing “F” or “J”, as in the pseudoword selection test. The response time limit was 5000 ms. There were 15 test trials in this test (5 for each emotion). The cross-modality generalization test followed the same logic but presented emotional sentences instead of new faces (see Fig. 2E). Each of its 15 trials (5 for each emotion) began with a fixation cross (Times New Roman, size 25) presented for 1000 ms, followed by an emotional sentence presented in the middle of the screen (Times New Roman, size 30) for 1000 ms. Then two pseudowords from the previous learning blocks were presented at the bottom of the screen (Times New Roman, size 18).
Participants were asked to select the pseudoword that best described the emotion associated with the sentence by pressing “F” or “J” on the keyboard as in the previous test. There was no time limit for response, as such a cross-modality task was more demanding than the previous ones.
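Both generalization tests are two-alternative forced-choice tasks with a target pseudoword, a foil, and F/J responses. This sketch shows one way to place the options and score responses, using a 5000 ms limit for the within-modality test and no limit for the cross-modality test. The foil rule (a learned pseudoword from a different emotion) and the random left/right placement are assumptions, since the text says only that two learned pseudowords were shown. The example pseudowords are hypothetical.

```python
import random

KEYS = ("F", "J")  # left option -> F, right option -> J


def choose_foil(target_emotion, words_by_emotion, rng):
    """Hypothetical rule: draw the foil from a different emotion category."""
    other = [e for e in words_by_emotion if e != target_emotion]
    return rng.choice(words_by_emotion[rng.choice(other)])


def make_2afc_trial(stimulus, target_word, foil_word, rng):
    """Place target and foil on random sides; return the trial and its correct key."""
    options = [target_word, foil_word]
    rng.shuffle(options)
    correct_key = KEYS[options.index(target_word)]
    return {"stimulus": stimulus, "left": options[0], "right": options[1]}, correct_key


def score(response_key, correct_key, rt_ms, limit_ms=5000):
    """Return 1 for a correct key pressed within the limit, else 0.

    Pass limit_ms=None for the cross-modality test, which had no time limit.
    """
    if response_key is None:
        return 0
    if limit_ms is not None and rt_ms > limit_ms:
        return 0
    return int(response_key == correct_key)
```
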

Learning and evaluation in the Caucasian-face group

For the Caucasian-face Group, the learning and test procedures were the same as those for the Chinese-face Group, except that Caucasian faces replaced Chinese faces as learning materials. The Learning Phase and the cross-modality generalization test in the Evaluation Phase therefore followed the same format as the corresponding tests for the Chinese-face Group (see Fig. 3A–C and 3E). In the within-modality generalization test, 15 unlearned Caucasian faces formed the congruent set and 15 unlearned Chinese faces formed the incongruent set (see Fig. 3D). Note that the new Chinese and Caucasian faces used as the congruent and incongruent sets, respectively, in the Chinese-face Group were exactly the same faces used as the incongruent and congruent sets, respectively, in the Caucasian-face Group.
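The reuse of the same new-face sets across groups, with their congruency labels swapped, can be made explicit with a small mapping. The function name and set labels are illustrative only.

```python
FACE_SETS = ("Chinese", "Caucasian")


def generalization_sets(group):
    """Return which new-face set is congruent and which incongruent for a learning group."""
    learned = {"Chinese-face": "Chinese", "Caucasian-face": "Caucasian"}[group]
    other = next(s for s in FACE_SETS if s != learned)
    return {"congruent": f"new {learned} faces", "incongruent": f"new {other} faces"}


for group in ("Chinese-face", "Caucasian-face"):
    print(group, generalization_sets(group))
```
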

Results

This section reports accuracy (see Table 4) in the generalization tests (within-modality and cross-modality), which are the most informative measures of meaning learning. Accuracy data for the learning tests (pseudoword selection and pseudoword matching) and response time data for all four tests are provided in the supplementary materials. As shown there, both groups performed well in the two tests involving no new stimuli, indicating successful learning of the associations between specific faces and specific new L2 words.

Statistical analyses of accuracy and RTs were performed in R (version 4.4.1) using the lme4 package65 and the lmerTest package66. Accuracy was analyzed with generalized linear mixed models. For the within-modality generalization test, the best-fitting mixed-effects model included Group (Chinese-face Group/Caucasian-face Group), Emotion (Disgust/Neutrality/Sadness), Congruency (Congruent/Incongruent), and their interactions as fixed effects, accuracy (ACC) as the dependent variable, and subjects and items as random effects: ACC ~ Group * Emotion * Congruency + (Emotion | Subject) + (Group | Item). For the selection, matching, and cross-modality generalization tests, the fixed effects were Emotion (Disgust/Neutrality/Sadness), Group (Chinese-face Group/Caucasian-face Group), and their interaction, again with subjects and items as random effects: ACC ~ Emotion * Group + (Emotion | Subject) + (Group | Item). RTs were analyzed with linear mixed-effects models; to satisfy the assumption of normally distributed residuals, RTs were log-transformed. The model formula for the within-modality test was log_RT ~ Group * Emotion * Congruency + (Emotion | Subject) + (Group | Item), and for the other three tests log_RT ~ Emotion * Group + (Emotion | Subject) + (Group | Item). In these models, the intercept represents the grand average across conditions, so each regression coefficient estimates the contrast specified in the hypothesis matrix and can be interpreted as a simple main effect.
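The statement that the intercept is the grand average while each coefficient is a specific comparison can be illustrated by deriving a contrast matrix from a hypothesis matrix. The source does not print its hypothesis matrix. This sketch assumes each emotion is compared against Neutrality, which matches how the results are reported. Inverting the hypothesis matrix H gives the contrast (design) matrix X, and for cell means μ the coefficients are β = Hμ. For accuracy, μ is on the link (logit) scale; for RTs, it is on the log-RT scale.

```python
from fractions import Fraction as Fr

LEVELS = ("Disgust", "Neutrality", "Sadness")

# Rows: grand mean, Disgust - Neutrality, Sadness - Neutrality (assumed comparisons).
H = [
    [Fr(1, 3), Fr(1, 3), Fr(1, 3)],
    [Fr(1), Fr(-1), Fr(0)],
    [Fr(0), Fr(-1), Fr(1)],
]


def invert(m):
    """Exact Gauss-Jordan inverse of a square matrix of Fractions."""
    n = len(m)
    aug = [row[:] + [Fr(int(i == j)) for j in range(n)] for i, row in enumerate(m)]
    for col in range(n):
        pivot = next(r for r in range(col, n) if aug[r][col] != 0)
        aug[col], aug[pivot] = aug[pivot], aug[col]
        p = aug[col][col]
        aug[col] = [x / p for x in aug[col]]
        for r in range(n):
            if r != col and aug[r][col] != 0:
                f = aug[r][col]
                aug[r] = [a - f * b for a, b in zip(aug[r], aug[col])]
    return [row[n:] for row in aug]


# Contrast matrix: rows are factor levels, columns are intercept and two contrasts.
X = invert(H)


def coefficients(cell_means):
    """Coefficients implied by the hypotheses: beta = H @ mu."""
    return [sum(h * mu for h, mu in zip(row, cell_means)) for row in H]


for level, row in zip(LEVELS, X):
    print(level, [str(x) for x in row])
```

In R, the two non-intercept columns of X would be supplied as the contrasts of the Emotion factor before fitting the model.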

Within-modality generalization of the two groups

Analysis of response accuracy

The fixed-effect results revealed a significant main effect of emotion, with accuracy significantly lower for sadness than for neutrality (β = −0.844, z = −3.392, p < 0.01). The main effects of group and congruency and all interaction effects were not significant (all ps > 0.05) (see Table 4; Fig. 4).

Cross-modality generalization of the two groups

Analysis of response accuracy

The fixed-effect results showed that disgusting (β = −1.201, z = −2.966, p < 0.01) and sad trials (β = −1.159, z = −2.844, p < 0.01) were associated with significantly lower accuracy than neutral trials. In addition, the interaction between emotion and group was significant: the Caucasian-face Group outperformed the Chinese-face Group in sad trials (β = 1.089, z = 2.084, p = 0.037), whereas the reverse pattern was found in neutral trials (β = 1.780, z = 3.352, p < 0.001). No main effect of group was found (p > 0.05) (see Table 4; Fig. 5).
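Because accuracy was modeled with a generalized linear mixed model, the coefficients are on a link scale. Assuming the default logit link for binary outcomes (the text does not state the link), exp(β) gives a conditional odds ratio. For example, β = −0.844 corresponds to roughly 0.43 times the odds of a correct response on sad trials compared with neutral trials. The sketch also recomputes two-tailed p values from the reported Wald z statistics as a consistency check.

```python
import math


def odds_ratio(beta):
    """Odds ratio implied by a logit-scale coefficient (logit link assumed)."""
    return math.exp(beta)


def two_tailed_p(z):
    """Two-tailed p value for a Wald z statistic under the standard normal."""
    return math.erfc(abs(z) / math.sqrt(2))


# (beta, z) pairs as reported in the Results.
reported = {
    "within: sadness vs neutral": (-0.844, -3.392),
    "cross: disgust vs neutral": (-1.201, -2.966),
    "cross: sadness vs neutral": (-1.159, -2.844),
    "cross: group x emotion (sad)": (1.089, 2.084),
    "cross: group x emotion (neutral)": (1.780, 3.352),
}

for label, (beta, z) in reported.items():
    print(f"{label}: OR = {odds_ratio(beta):.2f}, p = {two_tailed_p(z):.4f}")
```
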

Discussion

The present study aimed to compare the impacts of foreign and domestic faces on emotional meaning learning for L2 words. Two groups of native Mandarin-Chinese speakers learned disgusting, sad, and neutral meanings for English pseudowords (hereinafter, new L2 words) through Caucasian face-pseudoword and Chinese face-pseudoword pairs, respectively. Evidence for the learning of emotional meanings came from the two generalization tests, in which new faces and sentences were introduced. In general, participants in both groups matched newly learned L2 words with new neutral faces better than with new sad faces. When matching new L2 words with sentences, participants were more accurate for neutral sentences than for both disgusting and sad ones. Notably, the two groups differed in the cross-modality generalization test: the Caucasian-face Group outperformed the Chinese-face Group in sad trials, whereas the Chinese-face Group performed better than the Caucasian-face Group in neutral trials. Overall, these findings indicate that Caucasian and Chinese faces function differently when serving as materials for learning discrete emotional meanings for English L2 words.

Results of the present study partially echo previous research on emotional meaning learning for L1 words23. Participants in the present study matched newly learned L2 words with new neutral faces more accurately than with new sad faces, regardless of the socio-cultural identity congruency between the faces seen in the learning blocks and in the tests, a pattern similar to that of participants learning new L1 words in the previous study. However, in the previous study, participants who learned emotional meanings for L1 words with faces also showed an advantage in disgusting trials compared with sad trials, and this advantage disappeared in the present study. Regarding the difference between sad and neutral trials, although the sad faces used in the present study were accurately portrayed according to the two databases from which they were selected63,64, there is evidence that sad facial expressions are more likely to cause confusion than disgusted expressions67,68. Moreover, a similar recognition disadvantage was previously reported when sad facial expressions were presented together with neutral ones, whereas no such difference was found between disgusted and neutral expressions in the same experiment69. These results indicate that our paradigm was sensitive to the manipulation of emotions and thus effective for emotional meaning learning for L2 words. On the other hand, no accuracy advantage of disgust over sadness was found in the present study. This difference could be attributed to the new L2 words used here. Language plays an important role in perceiving and experiencing emotions44, and words can shape how emotion is perceived in a face24. Previous socio-psycholinguistic studies provided evidence that the concept of disgust in English covers a wider range of potential elicitors than in other languages, and that this difference does not extend to other emotions such as sadness54.
Also, Chinese back-translation of English disgust-related terms are closer to the concept of anger in English70. Such divergences might weaken the recognition advantage of disgust over sadness.

Participants from both groups were more accurate in matching newly learned L2 words with neutral sentences than with disgusting and sad sentences in the cross-modality generalization test. Unlike the facial expressions in the within-modality generalization test, the stimuli in this test were linguistic materials written in the participants' L2. Previous studies have reported an emotional detachment effect for the L271,72,73, especially when participants do not have time to ruminate on the emotions74. Moreover, the L2 sentences in the cross-modality generalization test were highly situational and contextual, which may have affected participants' interpretation of the emotionality therein. Furthermore, in our previous L1 study23, accuracy did not differ among the three conditions in the cross-modality generalization test. Therefore, the significantly reduced emotion recognition accuracy in the two emotional conditions relative to the neutral condition may be largely attributable to L2 emotional detachment.

The primary objective of the present study was to compare the efficiency of the two types of associative material for learning emotional meanings for new L2 words. In the cross-modality generalization test, an interaction between group and emotional condition emerged in sad and neutral trials: the Caucasian-face Group showed better learning than the Chinese-face Group in sad trials, whereas the Chinese-face Group was more accurate in neutral trials. From the perspective of emotion perception, a number of previous studies have reported an in-group advantage, whereby people recognize the intended emotion of facial actions more accurately and strongly when the expressions are posed by people of the same socio-cultural identity24,26,75. However, previous studies also indicate that this in-group advantage interacts with the emotion involved. For example, people have been reported to show a recognition advantage for negative emotions expressed by people of a different socio-cultural identity27,76. For discrete emotions, empirical evidence suggests that Western European and East Asian perceivers share the same strategy for identifying the facial movements of disgust but differ in recognizing sad facial expressions77, which may explain why the difference between the two groups did not extend to disgusting trials. These patterns were then mediated by the new L2 words, which served as vehicles carrying the emotional meanings conveyed by the faces as well as by the L2 sentences in the test. From the perspective of language processing, the priming effect of faces whose socio-cultural identity is congruent with the language29,30 might come into play: via the new L2 words, sad meanings learned from Caucasian faces may have been bridged to L2 sad sentences more effectively than those learned from Chinese faces. However, this effect was not evident in neutral trials, where accuracy was higher for the Chinese-face Group. One possible explanation is that participants in the Caucasian-face Group perceived the neutral expressions as more emotional (in this case, more negative) than their counterparts in the Chinese-face Group did76, which would reduce their accuracy in matching new L2 words intended to be neutral with neutral L2 sentences.

The present study has some limitations. First, more refined evidence regarding the acquisition of emotional meanings for L2 words could be obtained by comparing more negatively valenced emotions. Moreover, researchers could go beyond the six basic emotions and introduce more complex emotions such as shame and distrust. Second, happiness could be introduced as another emotional meaning to be acquired. Happiness is the only positively valenced emotion among the six basic emotions36, and incorporating it into the current experimental design could make the framework of L2 emotional word acquisition more complete. Third, future studies could control for and consider more variables of the learning materials, for example by matching the valence of the different negative emotions or by including attractiveness as a possible influencing factor. Finally, future studies could recruit participants with different levels of L2 proficiency or carry out longitudinal research to investigate possible interactions between L2 proficiency and the effectiveness of the two types of associative material.

The implications of the present study are twofold. Theoretically, the findings first highlight the role of language in the interaction between culture and emotion: the differing effects of the faces' socio-cultural identities on learning emotional meanings for L2 words emerged only when L2 sentences were introduced and were absent when new faces were evaluated. In addition, the pattern found in the present study differed from that of our previous L1 study23. Second, the results extend the priming effect of faces on language control and language processing29,30 to language learning. In particular, congruency between the socio-cultural identity of the faces and the L2 to be learned facilitated the learning of sadness-related L2 words. Third, the different advantages of the two sets of faces in learning emotional meanings for L2 words provide empirical evidence, from a linguistic perspective, for studies on the relationship between the socio-cultural identity of faces and emotion recognition. In addition, the present study revealed different learning mechanisms for the meanings of two discrete emotions of the same valence, further stressing the importance of adopting more fine-grained emotion categorizations in future studies. Finally, the present study adds evidence to the construction hypothesis42 that emotional meanings for L2 words can be learned via transient associative conditioning and then generalized to new emotional stimuli of different modalities. Practically, the present study offers guidance for improving methods of learning L2 emotional words. Facial expressions are effective materials for acquiring emotional meanings for L2 words. In addition to actively engaging in social learning environments, learners should seek opportunities for face-to-face interaction with native speakers, or use learning materials such as video clips or pictures depicting emotional events, to make acquisition easier and more effective. Where possible, L2 learners should also immerse themselves in an L2 environment by studying in a country where the language is spoken78,79,80, which may further amplify the congruency effect between a speaker's socio-cultural identity and language when learning emotional words.

Conclusions

The present study adopted a novel associative learning paradigm to investigate the mechanism of emotional meaning learning for L2 English words by comparing the efficiency of Caucasian and Chinese faces as associative contexts. Following a discrete emotion model, we selected disgust and sadness, two negatively valenced yet distinct emotions. Results of the post-learning cross-modality generalization test showed that Caucasian faces were more effective for learning sad meanings for L2 words, whereas Chinese faces were more effective for learning neutral ones. In sum, the present study reveals how the socio-cultural identity of faces affects the learning of emotional meanings for L2 words and sheds light, from the perspective of language learning, on the interactions among language, culture, and emotion, thus providing a reference for empirical and theoretical studies on L2 emotional word learning and emotion recognition.

Data availability

The raw data supporting the conclusions of this article will be made available by the authors, without undue reservation. Please contact the corresponding author, Beixian Gu, for such inquiries. The faces that appeared in Fig. 2 of the manuscript were from the Karolinska Directed Emotional Faces Database (Link: https://kdef.se/) and the Tsinghua Facial Expression Database (Link: https://doi.org/10.1371/journal.pone.0231304).

Change history

25 February 2025

A Correction to this paper has been published: https://doi.org/10.1038/s41598-025-90727-4

References

Clement, F., Bernard, S., Grandjean, D. & Sander, D. Emotional expression and vocabulary learning in adults and children. Cogn. Emot. 27, 539–548 (2013).

Ekman, P. Facial expressions of emotion: new findings, new questions. Psychol. Sci. 3, 34–38 (1992).

Ekman, P. Facial expression and emotion. Am. Psychol. 48, 384–392 (1993).

Brigham, J. C., Bennett, L. B., Meissner, C. A. & Mitchell, T. L. The influence of race on eyewitness memory. In Handbook of Eyewitness Psychology, Vol. II: Memory for People 257–281 (2007).

Yan, X., Andrews, T. J., Jenkins, R. & Young, A. W. Cross-cultural differences and similarities underlying other-race effects for facial identity and expression. Q. J. Exp. Psychol. 69, 1247–1254 (2016).

Darwin, C. The Expression of the Emotions in Man and Animals (John Murray, 1872).

Russell, J. A., Bachorowski, J. A. & Fernández-Dols, J. M. Facial and vocal expressions of emotion. Annu. Rev. Psychol. 54, 329–349 (2003).

Denham, S. A. Emotional Development in Young Children (Guilford Press, 1998).

Izard, C. E. The Face of Emotion (Appleton-Century-Crofts, 1971).

Markham, R. & Adams, K. The effect of type of task on children’s identification of facial expressions. J. Nonverbal Behav. 16, 21–39 (1992).

Vicari, S., Reilly, J. S., Pasqualetti, P., Vizzotto, A. & Caltagirone, C. Recognition of facial expressions of emotions in school-age children: the intersection of perceptual and semantic categories. Acta Paediatr. 89, 836–845 (2000).

Bullock, M. & Russell, J. A. Preschool children’s interpretation of facial expressions of emotion. Int. J. Behav. Dev. 7, 193–214 (1984).

Kissler, J., Herbert, C., Winkler, I. & Junghofer, M. Emotion and attention in visual word processing: an ERP study. Biol. Psychol. 80, 75–83 (2009).

Niedenthal, P. M., Brauer, M., Robin, L. & Innes-Ker, Å. H. Adult attachment and the perception of facial expression of emotion. J. Pers. Soc. Psychol. 82, 419–433 (2002).

Padmala, S., Bauer, A. & Pessoa, L. Negative emotion impairs conflict-driven executive control. Front. Psychol. 2, 192 (2011).

Baggott, S., Palermo, R. & Fox, A. M. Processing emotional category congruency between emotional facial expressions and emotional words. Cogn. Emot. 25, 369–379 (2011).

Beall, P. M. & Herbert, A. M. The face wins: stronger automatic processing of affect in facial expressions than words in a modified Stroop task. Cogn. Emot. 22, 1613–1642 (2008).

Kar, B. R., Srinivasan, N., Nehabala, Y. & Nigam, R. Proactive and reactive control depends on emotional valence: a Stroop study with emotional expressions and words. Cogn. Emot. 32, 325–340 (2018).

Stenberg, G., Wiking, S. & Dahl, M. Judging words at face value: interference in a word processing task reveals automatic processing of affective facial expressions. Cogn. Emot. 12, 755–782 (1998).

Hernández-Gutiérrez, D. et al. Does dynamic information about the speaker’s face contribute to semantic speech processing? ERP evidence. Cortex 104, 12–25 (2018).

Hernández-Gutiérrez, D. et al. Situating language in a minimal social context: how seeing a picture of the speaker’s face affects language comprehension. Soc. Cogn. Affect. Neurosci. 16, 502–511 (2021).

Hernández-Gutiérrez, D. et al. How the speaker’s emotional facial expressions may affect language comprehension. Lang. Cogn. Neurosci. https://doi.org/10.1080/23273798.2022.2130945 (2022).

Gu, B., Liu, B., Wang, H., Beltrán, D. & De Vega, M. Learning new words’ emotional meanings in the contexts of faces and sentences. Psicologica 42, 52–84 (2021).

Barrett, L. F., Mesquita, B. & Gendron, M. Context in emotion perception. Curr. Dir. Psychol. Sci. 20, 286–290 (2011).

Barrett, L. F., Adolphs, R., Marsella, S., Martinez, A. M. & Pollak, S. D. Emotional expressions reconsidered: challenges to inferring emotion from human facial movements. Psychol. Sci. Public Interest 20, 1–68 (2019).

Yan, X., Andrews, T. J. & Young, A. W. Cultural similarities and differences in perceiving and recognizing facial expressions of basic emotions. J. Exp. Psychol. Hum. Percept. Perform. 42, 423–440 (2016).

Hugenberg, K. Social categorization and the perception of facial affect: target race moderates the response latency advantage for happy faces. Emotion 5, 267–276 (2005).

Woumans, E. et al. Can faces prime a language? Psychol. Sci. 26, 1343–1352 (2015).

Liu, C., Timmer, K., Jiao, L., Yuan, Y. & Wang, R. The influence of contextual faces on bilingual language control. Q. J. Exp. Psychol. 72, 2313–2327 (2019).

Li, Y., Yang, J., Scherf, K. S. & Li, P. Two faces, two languages: an fMRI study of bilingual picture naming. Brain Lang. 127, 452–462 (2013).

Van Oers, B., Wardekker, W., Elbers, E. & Van der Veer, R. The Transformation of Learning: Advances in Cultural-Historical Activity Theory (Cambridge University Press, 2008). https://doi.org/10.1017/CBO9780511499937.

Gullberg, M. Gesture as a communication strategy in second language discourse: a study of learners of French and Swedish. Trav l’Institut Linguist Lund 35, 253 (1998).

Svetleff, Z. & Anwar, S. Facial expression of emotion assessment from video in adult second language learners. IJMER 7, 458 (2017).

Kellerman, S. ‘I see what you mean’: the role of kinesic behaviour in listening and implications for foreign and second language learning. Appl. Linguist. 13, 239–258 (1992).

Bradley, M. M. & Lang, P. J. Measuring emotion: behavior, feeling, and physiology. In Cognitive Neuroscience of Emotion (eds Lane, R. D. & Nadel, L.) 242–276 (Oxford University Press, 2000).

Ekman, P. An argument for basic emotions. Cogn. Emot. 6, 169–200 (1992).

Briesemeister, B. B., Kuchinke, L. & Jacobs, A. M. Discrete emotion effects on lexical decision response times. PLoS One 6, e23743 (2011).

Silva, C., Montant, M., Ponz, A. & Ziegler, J. C. Emotions in reading: disgust, empathy and the contextual learning hypothesis. Cognition 125, 333–338 (2012).

Ferré, P., Haro, J. & Hinojosa, J. A. Be aware of the rifle but do not forget the stench: differential effects of fear and disgust on lexical processing and memory. Cogn. Emot. 32, 796–811 (2017). https://doi.org/10.1080/02699931.2017.1356700.

Briesemeister, B. B., Kuchinke, L. & Jacobs, A. M. Emotion word recognition: discrete information effects first, continuous later? Brain Res. 1564, 62–71 (2014).

Ellsworth, P. C. Appraisal theory: old and new questions. Emot. Rev. 5, 125–131 (2013).

Lindquist, K. A. & Gendron, M. What’s in a word? Language constructs emotion perception. Emot. Rev. 5, 66–71 (2013).

Barrett, L. F., Lindquist, K. A. & Gendron, M. Language as context for the perception of emotion. Trends Cogn. Sci. 11, 327–332 (2007).

Lindquist, K. A. The role of language in emotion: existing evidence and future directions. Curr. Opin. Psychol. 17, 135–139 (2017).

Curtis, V., Aunger, R. & Rabie, T. Evidence that disgust evolved to protect from risk of disease. Proc. R. Soc. Lond. Ser. B Biol. Sci. 271, 1456 (2004).

Lench, H. C., Flores, S. A. & Bench, S. W. Discrete emotions predict changes in cognition, judgment, experience, behavior, and physiology: a meta-analysis of experimental emotion elicitations. Psychol. Bull. 137, 834–855 (2011).

Gross, J. J. & Levenson, R. W. Hiding feelings: the acute effects of inhibiting negative and positive emotion. J. Abnorm. Psychol. 106, 95–103 (1997).

Krumhansl, C. L. An exploratory study of musical emotions and psychophysiology. Can. J. Exp. Psychol. 51, 336–352 (1997).

Ritz, T., Thöns, M., Fahrenkrug, S. & Dahme, B. Airways, respiration, and respiratory sinus arrhythmia during picture viewing. Psychophysiology 42, 568–578 (2005).

Stark, R., Walter, B., Schienle, A. & Vaitl, D. Psychophysiological correlates of disgust and disgust sensitivity. J. Psychophysiol. 19, 50–60 (2005).

Ekman, P. & Oster, H. Facial expressions of emotion. Annu. Rev. Psychol. 30, 527–554 (1979).

Rozin, P., Lowery, L. & Ebert, R. Varieties of disgust faces and the structure of disgust. J. Pers. Soc. Psychol. 66, 870–881 (1994).

Schweiger Gallo, I. et al. Mapping the everyday concept of disgust in five cultures. Curr. Psychol. 43, 18003–18024 (2024).

Han, D., Kollareth, D. & Russell, J. A. The words for disgust in English, Korean, and Malayalam question its homogeneity. J. Lang. Soc. Psychol. 35, 569–588 (2016).

Spielberger, C. D. & Reheiser, E. C. The nature and measurement of anger. In International Handbook of Anger 403–412 (Springer, 2010). https://doi.org/10.1007/978-0-387-89676-2_23.

Izard, C. E. & Buechler, S. Aspects of consciousness and personality in terms of differential emotions theory. In Emotion: Theory, Research, and Experience, vol. I (eds. Plutchik, R. & Kellerman, H.) 165–188 (Academic, 1980).

Joordens, S., Ozubko, J. D. & Niewiadomski, M. W. Featuring old/new recognition: the two faces of the pseudoword effect. J. Mem. Lang. 58, 380–392 (2008).

Faul, F., Erdfelder, E., Lang, A.-G. & Buchner, A. G*Power 3: a flexible statistical power analysis program for the social, behavioral, and biomedical sciences. Behav. Res. Methods 39, 175–191 (2007).

Jin, Y. & Hamp-Lyons, L. A new test for China? Stages in the development of an assessment for professional purposes. Assess. Educ. Princ Policy Pract. 22, 397–426 (2015).

Keuleers, E. & Brysbaert, M. Wuggy: a multilingual pseudoword generator. Behav. Res. Methods 42, 627–633 (2010).

Gu, B., Liu, B., Wang, H., de Vega, M. & Beltrán, D. ERP signatures of pseudowords’ acquired emotional connotations of disgust and sadness. Lang. Cogn. Neurosci. https://doi.org/10.1080/23273798.2022.2099914 (2022).

Gu, B., Liu, B., Beltrán, D. & de Vega, M. ERP evidence for emotion-specific congruency effects between sentences and new words with disgust and sadness connotations. Front. Psychol. 14, 45623 (2023).

Yang, T. et al. Tsinghua facial expression database, a database of facial expressions in Chinese young and older women and men: development and validation. PLoS One 15, e0231304 (2020).

Lundqvist, D., Flykt, A. & Öhman, A. The Karolinska Directed Emotional Faces (KDEF). CD ROM from Department of Clinical Neuroscience, Psychology Section, Karolinska Institutet (1998).

Bates, D., Mächler, M., Bolker, B. M. & Walker, S. C. Fitting linear mixed-effects models using lme4. J. Stat. Softw. 67, 1–48 (2015).

Kuznetsova, A., Brockhoff, P. B. & Christensen, R. H. B. lmerTest package: tests in linear mixed effects models. J. Stat. Softw. 82, 1–26 (2017).

Fernández-Dols, J. M. & Crivelli, C. Emotion and expression: naturalistic studies. Emot. Rev. 5, 24–29 (2013).

Reisenzein, R., Studtmann, M. & Horstmann, G. Coherence between emotion and facial expression: evidence from laboratory experiments. Emot. Rev. 5, 16–23 (2013).

Leppänen, J. M. & Hietanen, J. K. Positive facial expressions are recognized faster than negative facial expressions, but why? Psychol. Res. 69, 22–29 (2004).

Barger, B., Nabi, R. & Hong, L. Y. Standard back-translation procedures may not capture proper emotion concepts: a case study of Chinese disgust terms. Emotion 10, 703–711 (2010).

Pavlenko, A. Affective processing in bilingual speakers: disembodied cognition? Int. J. Psychol. 47, 405–428 (2012).

Caldwell-Harris, C. L. Emotionality differences between a native and foreign language: theoretical implications. Front. Psychol. 5, 4723 (2014).

Caldwell-Harris, C. L. Emotionality differences between a native and foreign language: implications for everyday life. Curr. Dir. Psychol. Sci. 24, 214–219 (2015).

Thoma, D. Emotion regulation by attentional deployment moderates bilinguals’ language-dependent emotion differences. Cogn. Emot. 35, 1121–1135 (2021).

Elfenbein, H. A., Beaupré, M., Lévesque, M. & Hess, U. Toward a dialect theory: cultural differences in the expression and recognition of posed facial expressions. Emotion 7, 131–146 (2007).

Krumhuber, E. G. & Manstead, A. S. R. When memory is better for out-group faces: on negative emotions and gender roles. J. Nonverbal Behav. 35, 51–61 (2011).

Chen, C. et al. Cultural facial expressions dynamically convey emotion category and intensity information. Curr. Biol. 34, 213–223.e5 (2024).

Dewey, D. P. A comparison of reading development by learners of Japanese in intensive domestic immersion and study abroad contexts. Stud. Second Lang. Acquis. 26, 458 (2004).

Segalowitz, N. et al. A comparison of Spanish second language acquisition in two different learning contexts: study abroad and the domestic classroom. Front. Interdiscip. J. Study Abroad 10, 1–18 (2004).

Serrano, R., Llanes, A. & Tragant, E. Analyzing the effect of context of second language learning: domestic intensive and semi-intensive courses vs. study abroad in Europe. System 39, 133–143 (2011).

Funding

This study was funded by the National Social Science Fund of China (22CYY019).

Author information

Contributions

B.G. designed the study and wrote the manuscript, X.S. collected and analyzed the data, and D.B. and M.V. revised and polished the manuscript. All authors reviewed the manuscript.

Ethics declarations

Competing interests

The authors declare no competing interests.

Additional information

Publisher’s note

Springer Nature remains neutral with regard to jurisdictional claims in published maps and institutional affiliations.

The original online version of this Article was revised: The Funding section in the original version of this Article was omitted. The Funding section now reads: “This study was funded by the National Social Science Fund of China (22CYY019)”.

Rights and permissions

Open Access This article is licensed under a Creative Commons Attribution-NonCommercial-NoDerivatives 4.0 International License, which permits any non-commercial use, sharing, distribution and reproduction in any medium or format, as long as you give appropriate credit to the original author(s) and the source, provide a link to the Creative Commons licence, and indicate if you modified the licensed material. You do not have permission under this licence to share adapted material derived from this article or parts of it. The images or other third party material in this article are included in the article’s Creative Commons licence, unless indicated otherwise in a credit line to the material. If material is not included in the article’s Creative Commons licence and your intended use is not permitted by statutory regulation or exceeds the permitted use, you will need to obtain permission directly from the copyright holder. To view a copy of this licence, visit http://creativecommons.org/licenses/by-nc-nd/4.0/.

About this article

Cite this article

Gu, B., Sun, X., Beltrán, D. et al. Faces of different socio-cultural identities impact emotional meaning learning for L2 words. Sci Rep 15, 616 (2025). https://doi.org/10.1038/s41598-024-84347-7

DOI: https://doi.org/10.1038/s41598-024-84347-7