Abstract

Climate change exacerbates the challenges of maintaining crop health by influencing invasive pest and disease infestations, especially for cereal crops, leading to enormous yield losses. Consequently, innovative solutions are needed to monitor crop health from early development stages through harvesting. While various technologies, such as the Internet of Things (IoT), machine learning (ML), and artificial intelligence (AI), have been used, portable, cost-effective, and energy-efficient solutions suitable for resource-constrained environments, such as edge applications in agriculture, are still needed. This study presents the development of a portable smart IoT device that integrates a lightweight convolutional neural network (CNN), called Tiny-LiteNet, optimized for edge applications with built-in support for model explainability. The system consists of a high-definition camera for real-time plant image acquisition, a Raspberry Pi 5 running the Tiny-LiteNet model for edge processing, and a GSM/GPRS module for cloud communication. The experimental results demonstrated that Tiny-LiteNet achieved up to 98.6% accuracy, a 98.4% F1-score, 98.2% recall, and an inference time of 80 ms, while maintaining a compact model size of 1.2 MB with 1.48 million parameters, outperforming traditional CNN architectures such as VGGNet-16, Inception, ResNet50, DenseNet121, MobileNetv2, and EfficientNetB0 in terms of efficiency and suitability for edge computing. Additionally, the low power consumption and user-friendly design of this smart device make it a practical tool for farmers, enabling real-time pest and disease detection, promoting sustainable agriculture, and enhancing food security.

Introduction

Agriculture plays a crucial role in the global economy, contributing significantly to food security and rural development, particularly in developing countries1,2. Cereal crops such as maize, wheat, and rice are staple food sources that sustain many African countries both economically and for subsistence. However, their production is subject to the increasing prevalence of invasive pests and devastating diseases3. Additionally, climate change factors, such as temperature fluctuations and unpredictable weather patterns, contribute significantly to the creation of favourable conditions for the proliferation of pest and disease outbreaks. Therefore, sustainable solutions based on new technologies are needed4,5.

Traditional methods of controlling pest and disease outbreaks rely heavily on manual inspection and widespread chemical pesticide application across the field by farmers or experts6. While these methods offer some effectiveness, they are time-consuming, labour-intensive, and prone to human errors, leading to delayed response and prevalent crop damage7,8. Furthermore, with the introduction of integrated pest management (IPM) programs to encourage sustainable pest control practices, these programs still rely on human expertise, lack real-time automation capabilities, and promote pesticide resistance among pests9. The limitations of these traditional methods highlight the need for new technologies to address these problems10.

As an emerging technology, the Internet of Things (IoT) enables physical things to interact virtually11. In precision agriculture, it is used to enable remote and real-time monitoring for data-driven decision-making12,13. Various agricultural sensors, actuators, and processing devices collect critical agro-environmental data such as soil parameters, crop health conditions, and weather patterns for real-time monitoring and automated decision-making14. For pest and disease management, IoT-powered devices can detect and monitor early signs of infestation through continuous field data collection and analysis, reducing reliance on manual inspection and optimizing resource utilization15. However, many existing IoT solutions depend on cloud computing, which is subject to latency, bandwidth constraints, and connectivity limitations for smallholder farmers with limited access to reliable internet infrastructure, particularly in rural and remote areas16,17. To address these limitations, edge computing has been introduced to process data locally on IoT devices, reducing reliance on cloud-based solutions while improving real-time responsiveness.

Artificial intelligence (AI), machine learning (ML), and the Internet of Things (IoT) have led to the development of advanced smart solutions in precision agriculture that enable real-time decision-making to control and monitor pest and disease outbreaks18,19. Convolutional neural networks (CNNs) have demonstrated exceptional performance in image-based processing, enabling early detection of pest and disease outbreaks and preventing their spread across large areas20,21. Cloud-based satellite and drone imaging systems have further enhanced these capabilities by covering large areas and even exploring hard-to-reach zones22,23. Nevertheless, these technologies are expensive, computationally intensive, and require advanced infrastructure. In addition, most of them rely on cloud computing and require strong internet connectivity, making them unsuitable for farmers in rural and remote areas with limited internet access24,25. To overcome these challenges, lightweight AI models integrated with affordable and portable edge devices are needed in precision agriculture to serve smallholder farmers.

To address these challenges, this study introduces a power-efficient portable IoT device integrated with a lightweight CNN model named Tiny-LiteNet, specifically designed for edge computing applications in precision agriculture. The system incorporates a high-definition camera for image acquisition, a Raspberry Pi 5 for on-device processing, a GSM/GPRS module for communication, and the Tiny-LiteNet model optimized for real-time image processing at the edge, enabling real-time monitoring without reliance on strong internet connectivity. The experimental results indicated that the Tiny-LiteNet model achieved an accuracy of 98.6% with a compact model size of 1.2 MB and 1.48 million parameters, outperforming traditional deep learning models such as VGGNet-16, Inception3, ResNet50, DenseNet121, EfficientNetB0, and MobileNetv2 in terms of efficiency. The device’s low power consumption, user-friendly design, and low dependency on strong internet connectivity make it a practical tool for smallholder farms, enabling real-time monitoring and sustainable agricultural practices.

The main contributions of this work include: (1) the development of a portable, low-power AI-IoT edge device for real-time pest and disease detection; (2) the design of an efficient lightweight CNN model, “Tiny-LiteNet”, suitable for deployment on resource-constrained edge devices; and (3) the demonstration of its effectiveness in smallholder farming environments with limited connectivity. These contributions provide a scalable and cost-effective solution for improving crop health monitoring and advancing sustainable agriculture in Africa and other developing regions. The rest of the paper is organized as follows: Section "Related works" presents related works, Section "Methodology" describes the methodology, including the validation protocol, Section "Results and discussion" presents and discusses the results, and finally, Section "Conclusion" concludes the paper. In addition, a complete list of key abbreviations and their descriptions is provided in Table 1.

Related works

Various studies have been conducted to identify solutions for invasive pests and disastrous diseases affecting crops. This section presents emerging technologies such as AI, ML, and IoT applied in precision agriculture, specifically for controlling crop health conditions.

IoT technology has emerged as a real-time solution for monitoring and controlling agricultural pests and diseases. In26, a CROPCARE system was developed for real-time crop disease control by integrating mobile vision based on MobileNetv2 with the IoT and achieved impressive results. A parallel and distributed simulation framework (PDSF) integrated with the IoT was proposed for pest monitoring in27. This tool employs a core graphical processing unit (GPU) to manage the sensors coupled to it to control and monitor crop pests, achieving 98.65% accuracy. Furthermore, the integration of IoT devices with UAV platforms has modernized precision agriculture28. A smart pest detection and management method for cotton plants was developed in29. In this framework, motion detection sensors were used to detect pests, and an automatic targeted spray was applied via drone. However, most existing IoT solutions rely on cloud computing, which is impractical in rural areas with limited internet connectivity.

The introduction of ML algorithms, and in particular their deep learning (DL) subset, has revolutionized agricultural practices by automating labour-intensive tasks, including pest and disease detection30,31. The predictive capabilities of ML make it possible to anticipate pest and disease outbreaks before they worsen. The work of N. Ullah et al.32 presented DeepPestNet, a convolutional neural network model for recognizing and classifying 10 pest species with 98.92% accuracy. Similarly, W. Albattah et al.33 proposed an improved EfficientNetV2-B4 model by customizing the existing EfficientNet architecture, achieving average precision, recall, and accuracy values of 99.63%, 99.93%, and 99.99%, respectively. Furthermore, the study presented in34 proposed a DL model based on the Faster R-CNN architecture integrated with a mobile application to recognize and locate pests within images, achieving an accuracy of 99.0%.

Leveraging the power of unmanned aerial vehicles (UAVs), AI, and the IoT has advanced precision agriculture and precise plant health monitoring. The integration of these technologies enables real-time data collection, analysis, prediction, and informed decision-making to improve the accuracy and responsiveness of pest and disease management systems35. In the work presented in36, crop diseases and pest infestations were detected and monitored by integrating a UAV and DL based on the YOLOv5 model, obtaining remarkable results of up to 96.0% average precision, 93.0% average recall, and 95.0% mean average precision (mAP). Additionally, R. Fu37 introduced a swarm system of drones equipped with a CNN model based on YOLOv10 to detect and geo-localize invasive pests such as Araujia sericifera and Cortaderia selloana affecting organic orange groves and achieved an F1 score of up to 80%.

Despite these achievements, most AI, ML, and IoT solutions focus on high-resource settings, leaving a gap in affordable, scalable, and energy-efficient solutions for smallholder farmers in developing regions38,39. This study addresses this gap by developing a portable IoT device integrated with a lightweight DL model, specifically designed for edge computing in resource-constrained agricultural environments. A comparative summary of recent works that have used emerging technologies such as IoT, UAVs, Blockchain, 5G, and AI/ML techniques for agricultural pest and disease control is provided in Table 2.

Methodology

System overview

This study proposes an AI-powered IoT system for the early detection and classification of crop pests and diseases, leveraging edge computing for real-time processing. The system integrates an image acquisition unit, a deep learning-based classification model for on-device processing, and cloud-based analytics for efficient monitoring and decision-making. The workflow consists of five main stages: data collection, preprocessing, model training, deployment on an edge device, and real-time inference. The proposed system aims to provide farmers with timely and accurate information to mitigate agricultural losses.

IoT layered architecture of the proposed edge device

The proposed edge device is structured in a layered IoT architecture designed for real-time agricultural pest and disease monitoring49,50. Each layer in this architecture, from data acquisition to decision-making, has specific functions, associated threats, security measures, and functional components, as summarized in Table 3.

As illustrated in Fig. 1, the system starts by acquiring images of plants from the field and preprocessing them for efficient analysis by the Tiny-LiteNet model. Once a pest or disease is detected, the system alerts farmers on site and sends a notification message describing the plant health condition. Furthermore, the images of the detected plant health conditions are stored on the device and sent to a cloud platform for further analysis when an internet connection is available.
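The store-on-device, upload-when-connected behaviour described above can be sketched as a small store-and-forward queue. This is only an illustration under stated assumptions, not the authors' implementation: the `Detection` record, the `PendingUploads` class, and the `send` callback are hypothetical names, and the connectivity check is reduced to a boolean passed in by the caller.

```python
from collections import deque
from dataclasses import dataclass
from typing import Callable, Deque


@dataclass
class Detection:
    """One classified image kept on the device (hypothetical record layout)."""
    image_path: str
    label: str
    confidence: float


class PendingUploads:
    """Keeps detections locally and forwards them when a link is available."""

    def __init__(self, send: Callable[[Detection], bool]) -> None:
        self._queue: Deque[Detection] = deque()
        self._send = send  # hypothetical uploader; returns True on success

    def store(self, detection: Detection) -> None:
        """Always store locally first, whether or not the device is online."""
        self._queue.append(detection)

    def flush(self, online: bool) -> int:
        """Upload queued detections in order; stop at the first failure."""
        sent = 0
        while online and self._queue:
            if not self._send(self._queue[0]):
                break  # keep the record for the next attempt
            self._queue.popleft()
            sent += 1
        return sent

    def __len__(self) -> int:
        return len(self._queue)
```

Calling `flush(online=False)` leaves the queue untouched, so detections made in the field without coverage are retained until a later call with `online=True` succeeds.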

Figure 2 illustrates the proposed system architecture of the AI-powered portable edge device that integrates a high-definition camera for real-time crop image acquisition, a Raspberry Pi 5 for on-device processing, and a GSM/GPRS module for cloud communication. In addition, it integrates Tiny-LiteNet, a lightweight CNN model based on MobileNetV2, optimized for edge deployment, specifically for crop pest and disease detection.

Hardware components

The key hardware components used for the overall function of the system are as follows:

- Sensing unit: The sensing unit consists of a high-definition camera module used for image acquisition from the field and weather sensors, including humidity, temperature, and rain sensors, used for weather condition monitoring.
- Processing unit: Data acquired from the sensors are processed by a Raspberry Pi 5, which runs the Tiny-LiteNet CNN model optimized for pest and disease image classification.
- Communication unit: The processed data are communicated via the GSM/GPRS module for cloud connectivity and notification of farmers.
- Power source: The system is powered by a solar system optimized for energy efficiency, which charges an embedded lithium battery.

Software and AI Model Development

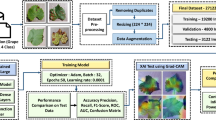

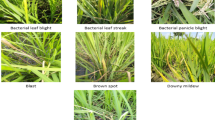

Dataset collection and preprocessing

To train the CNN model, we used two datasets from the PlantVillage repository and images acquired from the field, including pest and disease datasets. Both datasets were pre-processed and normalized as detailed in Tables 4 and 5.

Tiny-LiteNet Model development

As illustrated in Fig. 3, a pretrained MobileNetv2 model was used as the teacher model, from which foundational knowledge was acquired. The student model consists of six squeeze-and-excitation (SE) depthwise blocks, which enhance feature representation, reduce computational cost, and improve model accuracy, followed by a fully connected layer that converts the high-level feature maps into class scores for classification. The overall architecture significantly reduces the computational cost relative to MobileNetv2 at the expense of an accuracy decrease of about 1%, which does not affect the overall performance of pest and disease detection.
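The text does not state which loss was used to transfer knowledge from the teacher to the student. One common choice is the temperature-scaled distillation loss, shown below as a plain-Python sketch. Treat it as an assumption rather than the authors' training objective: the function names, the temperature of 4.0, and the weighting `alpha` of 0.5 are all illustrative.

```python
import math
from typing import List, Sequence


def softmax(logits: Sequence[float], temperature: float = 1.0) -> List[float]:
    """Temperature-scaled softmax, shifted by the max for numerical stability."""
    scaled = [z / temperature for z in logits]
    peak = max(scaled)
    exps = [math.exp(s - peak) for s in scaled]
    total = sum(exps)
    return [e / total for e in exps]


def distillation_loss(
    student_logits: Sequence[float],
    teacher_logits: Sequence[float],
    true_class: int,
    temperature: float = 4.0,  # assumed value, not from the paper
    alpha: float = 0.5,        # assumed weighting, not from the paper
) -> float:
    """alpha * CE(student, label) + (1 - alpha) * T^2 * KL(teacher_T || student_T)."""
    # Hard-label term: cross-entropy of the student against the true class.
    hard = -math.log(softmax(student_logits)[true_class])
    # Soft-label term: KL divergence between softened teacher and student outputs.
    teacher_soft = softmax(teacher_logits, temperature)
    student_soft = softmax(student_logits, temperature)
    soft = sum(
        t * math.log(t / s)
        for t, s in zip(teacher_soft, student_soft)
        if t > 0.0
    )
    return alpha * hard + (1.0 - alpha) * temperature ** 2 * soft
```

When the student reproduces the teacher's logits exactly, the soft term vanishes and only the weighted cross-entropy remains; any disagreement with the teacher adds a positive penalty.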

Performance metrics calculation

Various performance metrics were calculated to evaluate the effectiveness of the Tiny-LiteNet model and the integrated IoT system and to determine their suitability for crop pest and disease detection.

Accuracy (A)

The accuracy is the proportion of correctly classified instances, covering both infected and non-infected plants.

\[A = \frac{TP + TN}{TP + TN + FP + FN}\]

where:

- TP (true positives): infected plants (pest/disease, in our case) correctly identified as infected
- TN (true negatives): non-infected plants correctly identified as non-infected
- FP (false positives): non-infected plants incorrectly identified as infected
- FN (false negatives): infected plants incorrectly identified as non-infected

Recall (R)

The recall is the proportion of actual positive instances (infected plants) that are correctly predicted as positive.

\[R = \frac{TP}{TP + FN}\]

Precision (P)

The precision is the proportion of predicted positive instances (infected plants) that are actually positive.

\[P = \frac{TP}{TP + FP}\]

Mean average precision (mAP)

The mean average precision (mAP) represents the weighted mean of precision taken at each threshold of intersection over union (IoU), averaged across all classes. For a single class, the average precision is

\[\mathrm{AP} = \sum_{i=1}^{N} {P}_{i}\,\Delta {R}_{i}\]

and the mAP is the mean of this quantity over all classes. Here N denotes the number of threshold increments, \({P}_{i}\) represents the precision at the \({i}^{th}\) IoU threshold, and \(\Delta {R}_{i}\) denotes the increase in recall from the \({i}^{th}\) to the \({\left(i+1\right)}^{th}\) IoU threshold.

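F1 score (F)

The F1 score is the harmonic mean of precision and recall.

\[F = \frac{2 \times P \times R}{P + R}\]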
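As a worked illustration of the classification metrics in this section, the sketch below computes accuracy, precision, recall, and F1 score from confusion-matrix counts. The `ConfusionCounts` container and the example counts in the usage note are hypothetical and are not results from this study.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class ConfusionCounts:
    """Binary confusion-matrix counts (infected = positive class)."""
    tp: int
    tn: int
    fp: int
    fn: int


def _ratio(numerator: float, denominator: float) -> float:
    """Return numerator / denominator, or 0.0 when the denominator is zero."""
    return numerator / denominator if denominator else 0.0


def accuracy(c: ConfusionCounts) -> float:
    return _ratio(c.tp + c.tn, c.tp + c.tn + c.fp + c.fn)


def precision(c: ConfusionCounts) -> float:
    return _ratio(c.tp, c.tp + c.fp)


def recall(c: ConfusionCounts) -> float:
    return _ratio(c.tp, c.tp + c.fn)


def f1_score(c: ConfusionCounts) -> float:
    """Harmonic mean of precision and recall."""
    p, r = precision(c), recall(c)
    return _ratio(2.0 * p * r, p + r)
```

For example, with made-up counts `ConfusionCounts(tp=90, tn=95, fp=5, fn=10)`, the accuracy is 185/200 = 0.925, the recall is 90/100 = 0.9, and the precision is 90/95.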

Algorithm 1 in Fig. 4 gives the pseudocode of the proposed AI-powered IoT edge system, showing the flow of data from image acquisition to pest or disease prediction, confidence evaluation, and generation of text-based explanations. The algorithm starts by capturing a real-time image stream from the camera. Each frame is processed by the lightweight Tiny-LiteNet model on a Raspberry Pi 5, which assigns it to a class, and the corresponding interpretive text is then retrieved. This enables real-time, power-efficient, and interpretable decision support for agricultural monitoring in resource-constrained environments.
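The flow described for Algorithm 1 (classify, evaluate confidence, look up an explanation) can be sketched as follows. This is a hedged outline rather than the published pseudocode: the model is passed in as any callable returning class probabilities, the confidence threshold of 0.8 and the `healthy` class name are assumptions, and the explanation strings are placeholders, not agronomic advice from the paper.

```python
from typing import Callable, Dict, List, Sequence, Tuple

# Hypothetical rule-based lookup table; the real texts come from expert knowledge.
EXPLANATIONS: Dict[str, str] = {
    "Gray_leaf_spot": "Placeholder: symptoms and control measures for this class.",
    "healthy": "Placeholder: no action needed.",
}

Model = Callable[[object], Sequence[float]]  # image -> class probabilities


def classify(image: object, model: Model, class_names: List[str]) -> Tuple[str, float]:
    """Return the most probable class name and its probability."""
    probabilities = model(image)
    best = max(range(len(probabilities)), key=probabilities.__getitem__)
    return class_names[best], probabilities[best]


def explain(label: str, confidence: float, threshold: float = 0.8) -> str:
    """Pick the stored text for a confident prediction (threshold is assumed)."""
    if confidence < threshold:
        return "Low confidence: retake the image."
    return EXPLANATIONS.get(label, "No explanation stored for this class.")


def process_frame(
    image: object, model: Model, class_names: List[str], threshold: float = 0.8
) -> Dict[str, object]:
    """One pass of the pipeline: prediction, confidence check, explanation."""
    label, confidence = classify(image, model, class_names)
    return {
        "label": label,
        "confidence": confidence,
        "explanation": explain(label, confidence, threshold),
    }
```

Keeping the explanation step as a dictionary lookup mirrors the rule-based design: it adds almost no memory or compute on top of the classifier.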

Validation of numerical results

To ensure a reliable and generalizable model, the proposed Tiny-LiteNet model was evaluated with k-fold cross-validation (CV). A stratified (class-aware) five-fold cross-validation was applied to the entire dataset, with 80% training and 20% testing subsets in each fold and equal representation of classes in each fold. Furthermore, the process was repeated five times with different data splits, and the averaged performance metrics, including accuracy, recall, F1-score, and the five-fold cross-validation accuracy (CVA), were recorded.

\[CVA = \frac{1}{K}\sum_{i=1}^{K} {A}_{i}\]

where:

- CVA: cross-validation accuracy
- \({A}_{i}\): accuracy of the \({i}^{th}\) fold
- K: number of folds
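A minimal sketch of this protocol, using only the Python standard library, is given below. It is illustrative and not the authors' code: the function names are hypothetical, the round-robin assignment is one simple way to keep classes balanced across folds, and the fold accuracies in the test are made-up numbers.

```python
import random
from collections import defaultdict
from typing import Dict, Hashable, Iterator, List, Sequence, Tuple


def stratified_folds(
    labels: Sequence[Hashable], k: int = 5, seed: int = 0
) -> List[List[int]]:
    """Split sample indices into k folds with each class spread evenly."""
    by_class: Dict[Hashable, List[int]] = defaultdict(list)
    for index, label in enumerate(labels):
        by_class[label].append(index)
    rng = random.Random(seed)
    folds: List[List[int]] = [[] for _ in range(k)]
    for indices in by_class.values():
        rng.shuffle(indices)
        for position, index in enumerate(indices):
            folds[position % k].append(index)  # deal each class round-robin
    return folds


def train_test_splits(
    folds: List[List[int]],
) -> Iterator[Tuple[List[int], List[int]]]:
    """Yield (train, test) index lists; each fold serves as the test set once."""
    for i, test in enumerate(folds):
        train = [idx for j, fold in enumerate(folds) if j != i for idx in fold]
        yield train, test


def cross_validation_accuracy(fold_accuracies: Sequence[float]) -> float:
    """CVA: the mean of the K per-fold accuracies."""
    return sum(fold_accuracies) / len(fold_accuracies)
```

With K = 5, each fold holds one fifth of the data, which gives the 80%/20% train/test proportion in every round. Repeating the procedure with a different `seed` yields a different split.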

In addition, Tiny-LiteNet’s results were compared with those of various conventional CNN models, such as VGGNet-16, DenseNet121, MobileNetV2, ResNet50, and EfficientNetB0, using the same dataset and evaluation protocol. Finally, to validate the effectiveness of the proposed model in real-world environments, a benchmarking process was conducted across various edge devices, including Raspberry Pi 5, Raspberry Pi 4, Arduino Nano 33 BLE Sense, Raspberry Pi Pico, and FPGA, measuring the inference time, memory footprint, and power consumption under edge computing constraints.
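The inference-time part of such a benchmark can be sketched as below. This is an assumed measurement harness, not the procedure used in the study: `run_inference` stands for any zero-argument callable that performs one forward pass, and the warm-up and run counts are arbitrary defaults. Memory footprint and power consumption need platform-specific tools and are not covered here.

```python
import statistics
import time
from typing import Callable, Dict, List


def benchmark_latency(
    run_inference: Callable[[], object], warmup: int = 5, runs: int = 50
) -> Dict[str, float]:
    """Time repeated calls to run_inference and summarize latency in ms."""
    # Warm-up calls let caches and lazy initialization settle before timing.
    for _ in range(warmup):
        run_inference()
    samples_ms: List[float] = []
    for _ in range(runs):
        start = time.perf_counter()
        run_inference()
        samples_ms.append((time.perf_counter() - start) * 1000.0)
    return {
        "mean_ms": statistics.mean(samples_ms),
        "median_ms": statistics.median(samples_ms),
        "max_ms": max(samples_ms),
    }
```

Reporting the median alongside the mean helps when occasional slow runs, for example from background processes on the device, skew the average.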

Results and discussion

This section describes the results of the research after integrating the developed AI model with a physical edge device.

Results

Figure 5 shows the training and validation performance of the Tiny-LiteNet model over 150 epochs. In Fig. 5a, the training and validation accuracies increase rapidly in the first 10 epochs and stabilize for the remaining epochs, with a validation accuracy of approximately 98.6%, demonstrating the excellent learning capability of the model. Additionally, as illustrated in Fig. 5b, both training and validation losses decline rapidly in the first 20 epochs, indicating that the model learns effectively.

As shown in Fig. 6a, the training and validation accuracies increase rapidly in the first 20 epochs and stabilize for the remaining epochs, with a validation accuracy of approximately 99%, indicating excellent learning capability. Similarly, as shown in Fig. 6b, both training and validation losses decrease rapidly in the first 25 epochs, again indicating that the model learns effectively.

Figure 7 presents two confusion matrices, each illustrating the performance of the Tiny-LiteNet model in classifying crop pests and diseases. Figure 7a shows the model for classifying corn leaf diseases, whereas Fig. 7b presents the model for classifying crop pests. The model performed exceptionally well, except for some confusion regarding Gray_leaf_spot disease and grasshopper pest.

As shown in Fig. 8a,b, the developed Tiny-LiteNet model effectively predicts and classifies images with high accuracy. This includes images of plant health conditions, such as maize crop pests, diseases, and fruit pests. In addition, the prediction labels indicate a high degree of precision, highlighting the model’s robustness across various image processing tasks. Furthermore, after classification, a description is added, summarizing image information and suggesting control measures to facilitate decision-making.

To validate the effectiveness of the developed model, we trained and compared seven traditional CNN models, including VGGNet-16, Inception3, ResNet50, DenseNet121, EfficientNetB0, and MobileNetv2. As presented in Table 6, the results demonstrated that our model (Tiny-LiteNet) outperforms the other models in terms of model size, number of parameters, and inference time, with comparable accuracy.

Figure 9 illustrates the accuracy, storage efficiency, and responsiveness trade-offs across eight CNN models, highlighting the effectiveness of Tiny-LiteNet for resource-constrained deployments.

Comparative benchmarking of the edge devices’ performance

To select a suitable edge device with optimal performance to run the Tiny-LiteNet model, comprehensive benchmarking was conducted across six edge platforms capable of running lightweight CNN models. The performance evaluation focused on latency, power consumption, memory usage, and deployment feasibility for the Tiny-LiteNet model, as presented in Table 7.

The performance analysis results demonstrate that while popular platforms such as Raspberry Pi Pico, Arduino Nano 33 BLE Sense, and ESP32-CAM are not capable of running the Tiny-LiteNet model, Raspberry Pi 5 uniquely balances high accuracy (98.6%), low latency (16 ms), and reasonable cost ($80), making it the most suitable platform for deploying Tiny-LiteNet in resource-constrained agricultural environments. Additionally, compared with Raspberry Pi 5, Raspberry Pi 4 has higher latency, whereas FPGA, despite its efficiency, requires a complex HDL design and has a high cost, making it a less practical alternative.

As illustrated in Fig. 10, the proposed portable edge device for crop pest and disease detection was implemented and enclosed in a white cover. Subfigure (a) depicts the front view, highlighting the integrated screen for user interaction and results visualization. Subfigure (b) shows the back side, indicating the camera lens for image acquisition and the accessible ports along the upper edge for programming, power, switching, and LED status indication. Subfigure (c) provides an internal view, illustrating the device’s component layout and connectivity.

Discussion

The results demonstrate that the integration of the Tiny-LiteNet model with a Raspberry Pi 5 offers a high-performing, lightweight, and efficient solution for detecting and classifying cereal crop pests and diseases. In addition, the proposed system offers an explainability option for farmers, describing the class of a pest or disease, its effects on plants, and the control strategies to mitigate risks. The model achieves an accuracy of 98.6%, an F1-score of 98.4%, and a recall of 98.2%, which are comparable to or exceed those of many state-of-the-art deep learning models, including VGG-16, Inception3, ResNet50, DenseNet121, EfficientNetB0, and MobileNetv2, trained on the same dataset. However, what distinguishes Tiny-LiteNet is its compact design (0.72 MB), which ensures fast inference speed, low computational overhead, and compatibility with edge computing platforms, all while maintaining robust predictive capabilities.

The incorporation of six squeeze-and-excitation (SE) blocks improves feature extraction and enhances classification performance across diverse pest and disease images. This design choice improves the model’s ability to distinguish subtle differences in pest and disease image features across varied backgrounds, lighting conditions, and growth stages, thus increasing its generalization performance. Furthermore, real-time data communication via GSM/GPRS enables remote monitoring and sends notifications to farmers, indicating pest and disease conditions. The system’s edge computing capability, portability, and low power consumption make it suitable for smallholder farmers with limited resources.

To evaluate the performance of the proposed system in comparison with recent studies on similar tasks, Table 8 provides a comprehensive analysis of state-of-the-art CNN-based and hybrid deep learning models used for similar tasks in agriculture.

While models such as ResNet50, MobileNetV3 Large, and CNN + ViT achieve higher or comparable accuracies, their large model sizes (often > 100 MB) and high processing demands limit their practicality for edge deployment. In contrast, Tiny-LiteNet combines high performance with minimal resource requirements, positioning it as a more suitable choice for real-time, field-deployable applications. This comparison highlights that Tiny-LiteNet offers a viable balance of accuracy, generalization, model size, and deployment flexibility. Furthermore, the proposed system’s ability to run effectively on edge devices like Raspberry Pi 5, combined with its explainable AI support and fast response time, makes it particularly suitable for resource-constrained agricultural environments.

Conclusion

This study presents the design and implementation of a lightweight and power-efficient AI-powered IoT edge system optimized for real-time crop pest and disease detection in resource-constrained agricultural environments. The developed system integrates a new lightweight convolutional neural network architecture, namely “Tiny-LiteNet”, optimized for edge deployment with built-in support for model explainability. The experimental evaluation shows that the proposed model achieves 98.6% accuracy, a 98.4% F1-score, 98.2% recall, an inference time of 80 ms, and a compact model size of 1.2 MB with 1.48 million parameters, outperforming several state-of-the-art CNN models, including VGGNet-16, Inception3, ResNet50, DenseNet121, EfficientNetB0, and MobileNetv2. Additionally, the developed lightweight IoT edge device processes data locally without relying on internet connectivity, while maintaining low power consumption and portability, making it suitable for resource-constrained deployment. The system’s textual output is currently rule-based: it relies on preprogrammed expert knowledge rather than being dynamically generated by a language model, owing to the memory and computational restrictions of edge devices. Future work will focus on enhancing the model’s ability to generate automatic textual descriptions of plant health conditions by incorporating a large language model (LLM). We also aim to further reduce the system’s power consumption for extended field deployment using battery-powered configurations.

Data availability

The datasets used and/or analyzed during the current study are available from the corresponding author upon reasonable request.

References

Sylvre, N. & Jean, D. R. Updates on modern agricultural technologies adoption and its impacts on the improvement of agricultural activities in Rwanda: A review. Int. J. Innov. Sci. Res. Technol. 5(12), 222–229 (2020).

Balogun, A. L., Adebisi, N., Abubakar, I. R., Dano, U. L. & Tella, A. Digitalization for transformative urbanization, climate change adaptation, and sustainable farming in Africa: Trend, opportunities, and challenges. J. Integr. Environ. Sci. 19(1), 17–37. https://doi.org/10.1080/1943815X.2022.2033791 (2022).

Hanyurwimfura, D., Nizeyimana, E., Ndikumana, F., Mukanyiligira, D., Bakar Diwani, A. & Mukamanzi, F. Monitoring system to strive against fall armyworm in crops case study: Maize in Rwanda. In Proc - 2018 IEEE SmartWorld, Ubiquitous Intell Comput Adv Trust Comput Scalable Comput Commun Cloud Big Data Comput Internet People Smart City Innov SmartWorld/UIC/ATC/ScalCom/CBDCo. 2018;66–71.

Pierre, N. et al. AI based real-time weather condition prediction with optimized agricultural resources. Eur. J. Technol. 7(2), 36–49 (2023).

Taniushkina, D. et al. Case study on climate change effects and food security in Southeast Asia. Sci. Rep. 14(1), 1–15. https://doi.org/10.1038/s41598-024-65140-y (2024).

Sahu, R. & Tripathi, P. A brief review on LPWAN technologies for large scale smart agriculture BT - advanced network technologies and intelligent computing. In: Verma A, Verma P, Pattanaik KK, Dhurandher SK, Woungang I, editors. p. 96–113 (Cham: Springer Nature Switzerland, 2024).

Kiobia, D. O. et al. A review of successes and impeding challenges of IoT-based insect pest detection systems for estimating agroecosystem health and productivity of cotton. Sensors 23(8), 4127 (2023).

Rahman, W. et al. Automated detection of harmful insects in agriculture: A smart framework leveraging IoT, machine learning, and blockchain. IEEE Trans. Artif. Intell. 5(9), 4787–4798 (2024).

Bueno, A. F. et al. Challenges for adoption of integrated pest management (IPM): The soybean example. Neotrop. Entomol. 50(1), 5–20. https://doi.org/10.1007/s13744-020-00792-9 (2021).

Shahbazi, F., Shahbazi, S., Nadimi, M. & Paliwal, J. Losses in agricultural produce: A review of causes and solutions, with a specific focus on grain crops. J. Stored Prod. Res. 111, 102547 (2025).

Pascoal, D. et al. A technical survey on practical applications and guidelines for IoT sensors in precision agriculture and viticulture. Sci. Rep. 14(1), 90404 (2024).

Delfani, P., Thuraga, V., Banerjee, B. & Chawade, A. Integrative approaches in modern agriculture: IoT, ML and AI for disease forecasting amidst climate change. Precis Agric. 25, 2589–2613 (2024).

Morchid, A. et al. Integrated internet of things (IoT) solutions for early fire detection in smart agriculture. Results Eng. 24(September), 103392. https://doi.org/10.1016/j.rineng.2024.103392 (2024).

Hakorimana, F. & Akçaöz, H. The climate change and Rwandan coffee sector. Turkish J. Agric. - Food Sci. Technol. 5(10), 1206 (2017).

Saini, A. et al. Smart crop disease monitoring system in IoT using optimization enabled deep residual network. Sci. Rep. 15(1), 1456 (2025).

de la San Emeterio, P. M., Martínez-Ortega, J. F., Castillejo, P. & Lucas-Martínez, N. Spatio-temporal semantic data management systems for IoT in agriculture 5.0: Challenges and future directions. Internet of Things (Netherlands) 2024(25), 101030. https://doi.org/10.1016/j.iot.2023.101030 (2023).

Zhang, X., Cao, Z. & Dong, W. Overview of edge computing in the agricultural internet of things: Key technologies, applications, challenges. IEEE Access 8, 141748–141761 (2020).

Elumalai, M., Fernandez, T. F. & Ragab, M. Machine Learning (ML) Algorithms on IoT and drone data for smart farming BT - intelligent robots and drones for precision agriculture. In Balasubramanian S, Natarajan G, Chelliah PR, editors. p. 179–206 (Cham: Springer Nature Switzerland; 2024). https://doi.org/10.1007/978-3-031-51195-0_10

Bao, W., Fan, T., Hu, G., Liang, D. & Li, H. Detection and identification of tea leaf diseases based on AX-RetinaNet. Sci. Rep. 12(1), 1–16. https://doi.org/10.1038/s41598-022-06181-z (2022).

Kumbhar, V., Patil, A., Kumari, S. & Bharti, N. Systematic review on growth of E-agriculture in context of android-based mobile applications BT - ICT systems and sustainability. In: Tuba M, Akashe S, Joshi A, editors. p. 545–53 (Singapore: Springer Nature Singapore; 2023).

Khan, S. et al. Comparative analysis of deep neural network architectures for renewable energy forecasting: Enhancing accuracy with meteorological and time-based features. Discov. Sustain. 5(1), 1–24. https://doi.org/10.1007/s43621-024-00783-5 (2024).

Tang, F. H. M., Lenzen, M., McBratney, A. & Maggi, F. Risk of pesticide pollution at the global scale. Nat. Geosci. 14(4), 206–210. https://doi.org/10.1038/s41561-021-00712-5 (2021).

Khan, S. et al. Future of sustainable farming: exploring opportunities and overcoming barriers in drone-IoT integration. Discov. Sustain. 5(1), 1–22. https://doi.org/10.1007/s43621-024-00736-y (2024).

Saleem, M. H., Potgieter, J. & Arif, K. M. Automation in agriculture by machine and deep learning techniques: A review of recent developments. Precis. Agric. 22, 2053–2091. https://doi.org/10.1007/s11119-021-09806-x (2021).

Kaplun, D. et al. An intelligent agriculture management system for rainfall prediction and fruit health monitoring. Sci. Rep. 14(1), 1–23. https://doi.org/10.1038/s41598-023-49186-y (2024).

Garg, G. et al. CROPCARE: An intelligent real-time sustainable IoT system for crop disease detection using mobile vision. IEEE Internet Things J. 10(4), 2840–2851 (2023).

Nayagam, M. G. et al. Control of pests and diseases in plants using IoT technology. Meas. Sens. 26(December 2022), 100713. https://doi.org/10.1016/j.measen.2023.100713 (2023).

Ouhami, M., Hafiane, A., Es-Saady, Y., El Hajji, M. & Canals, R. Computer vision, IoT and data fusion for crop disease detection using machine learning: A survey and ongoing research. Remote Sens. 13(13), 2486 (2021).

Azfar, S. et al. IoT-based cotton plant pest detection and smart-response system. Appl. Sci. 13(3), 1851 (2023).

Anam, I. et al. A systematic review of UAV and AI integration for targeted disease detection, weed management, and pest control in precision agriculture. Smart Agric. Technol. 9(November), 100647. https://doi.org/10.1016/j.atech.2024.100647 (2024).

Vijayan, S. & Chowdhary, C. L. Hybrid feature optimized CNN for rice crop disease prediction. Sci. Rep. 15(1), 1–20 (2025).

Ullah, N. et al. An efficient approach for crops pests recognition and classification based on novel DeepPestNet deep learning model. IEEE Access 10(May), 73019–73032 (2022).

Albattah, W., Javed, A., Nawaz, M., Masood, M. & Albahli, S. Artificial intelligence-based drone system for multiclass plant disease detection using an improved efficient convolutional neural network. Front. Plant Sci. 13(June), 1–16 (2022).

Karar, M. E., Alsunaydi, F., Albusaymi, S. & Alotaibi, S. A new mobile application of agricultural pests recognition using deep learning in cloud computing system. Alexandria Eng. J. 60(5), 4423–4432. https://doi.org/10.1016/j.aej.2021.03.009 (2021).

Nyakuri, J. P., Nkundineza, C., Gatera, O. & Nkurikiyeyezu, K. State-of-the-art deep learning algorithms for internet of things-based detection of crop pests and diseases: A comprehensive review. IEEE Access 12(August), 169824–169849 (2024).

Khan, A. et al. AI-enabled crop management framework for pest detection using visual sensor data. Plants 13(5), 653 (2024).

Fu, R. et al. Machine-learning-based UAV-assisted agricultural information security architecture and intrusion detection. IEEE Internet Things J. 10(21), 18589–18598 (2023).

Naseer, A. et al. A systematic literature review of the IoT in agriculture—Global adoption, innovations, security, and privacy challenges. IEEE Access 12(May), 60986–61021 (2024).

Sinha, P. et al. A high performance hybrid LSTM CNN secure architecture for IoT environments using deep learning. Sci. Rep. 15, 9684 (2025).

Ibrahim, A. S. et al. AI-IoT based smart agriculture pivot for plant diseases detection and treatment. Sci. Rep. 15(1), 1–16 (2025).

Ahmed, S. et al. IoT based intelligent pest management system for precision agriculture. Sci. Rep. 14(1), 1–15 (2024).

Nguyen-Tan, T. & Le-Trung, Q. A novel 5G PMN-driven approach for AI-powered irrigation and crop health monitoring. IEEE Access 12(September), 125211–125222 (2024).

Toscano, F. et al. Unmanned aerial vehicle for precision agriculture: A review. IEEE Access 12(May), 69188–69205 (2024).

van Hilten, M. & Wolfert, S. 5G in agri-food - A review on current status, opportunities and challenges. Comput. Electron. Agric. 201(March), 107291. https://doi.org/10.1016/j.compag.2022.107291 (2022).

El Mane, A., Tatane, K. & Chihab, Y. Transforming agricultural supply chains: Leveraging blockchain-enabled Java smart contracts and IoT integration. ICT Express 10(3), 650–672. https://doi.org/10.1016/j.icte.2024.03.007 (2024).

Hasan, H. R. et al. Smart agriculture assurance: IoT and blockchain for trusted sustainable produce. Comput. Electron. Agric. 224(February), 109184. https://doi.org/10.1016/j.compag.2024.109184 (2024).

Raja, S. R., Subashini, B. & Prabu, R. S. 5G technology in smart farming and its applications. In Intelligent Robots and Drones for Precision Agriculture (eds Balasubramanian, S., Natarajan, G. & Chelliah, P. R.) 241–264 (Springer Nature Switzerland, Cham, 2024). https://doi.org/10.1007/978-3-031-51195-0_12

Praveen Kumar, M., Abhishek, A. B., Pavanalaxmi, S., Kripa, T. & Nayak, R. Emerging trends and future directions in ML for drone-assisted IoT networks. In Machine Learning for Drone-Enabled IoT Networks: Opportunities, Developments, and Trends (eds Hassan, J., Khalifa, S. & Misra, P.) 191–207 (Springer Nature Switzerland, Cham, 2025). https://doi.org/10.1007/978-3-031-80961-3_10

Ghadi, Y. Y. et al. Integration of federated learning with IoT for smart cities applications, challenges, and solutions. PeerJ Comput. Sci. 9, 1–23 (2023).

Mrabet, H., Belguith, S., Alhomoud, A. & Jemai, A. A survey of IoT security based on a layered architecture of sensing and data analysis. Sensors (Switzerland) 20(13), 1–20 (2020).

Zhang, J. et al. ISMSFuse: Multi-modal fusing recognition algorithm for rice bacterial blight disease adaptable in edge computing scenarios. Comput. Electron. Agric. 223(April), 109089. https://doi.org/10.1016/j.compag.2024.109089 (2024).

Gookyi, D. A. N. et al. TinyML for smart agriculture: Comparative analysis of TinyML platforms and practical deployment for maize leaf disease identification. Smart Agric. Technol. 8(April), 100490. https://doi.org/10.1016/j.atech.2024.100490 (2024).

Karim, M. J. et al. Enhancing agriculture through real-time grape leaf disease classification via an edge device with a lightweight CNN architecture and Grad-CAM. Sci. Rep. 14(1), 1–23. https://doi.org/10.1038/s41598-024-66989-9 (2024).

Zhang, F. et al. LSANNet: A lightweight convolutional neural network for maize leaf disease identification. Biosyst. Eng. 248, 97–107 (2024).

Zeng, M., Chen, S., Liu, H., Wang, W. & Xie, J. HCFormer: A lightweight pest detection model combining CNN and ViT. Agronomy 14(9), 1940 (2024).

Author information

Contributions

Conceptualization: J.P.N., C.N., O.G., K.N., and G.M.; Methodology: J.P.N., C.N., K.N.; Software: J.P.N., C.N., G.M.; Formal analysis: J.P.N., G.M.; Original draft preparation: J.P.N.; Writing: J.P.N., O.G., G.M.; Supervision: C.N., K.N.; Visualization: C.N., O.G.; Investigation: O.G., K.N.; Data curation: O.G., K.N.; Resources: O.G.; Administration: O.G., G.M.; Validation: O.G.; Proofreading: G.M.; Review and editing, resources: G.M. All authors reviewed the manuscript.

Ethics declarations

Competing interests

The authors declare no competing interests.

Additional information

Publisher’s note

Springer Nature remains neutral with regard to jurisdictional claims in published maps and institutional affiliations.

Rights and permissions

Open Access This article is licensed under a Creative Commons Attribution-NonCommercial-NoDerivatives 4.0 International License, which permits any non-commercial use, sharing, distribution and reproduction in any medium or format, as long as you give appropriate credit to the original author(s) and the source, provide a link to the Creative Commons licence, and indicate if you modified the licensed material. You do not have permission under this licence to share adapted material derived from this article or parts of it. The images or other third party material in this article are included in the article’s Creative Commons licence, unless indicated otherwise in a credit line to the material. If material is not included in the article’s Creative Commons licence and your intended use is not permitted by statutory regulation or exceeds the permitted use, you will need to obtain permission directly from the copyright holder. To view a copy of this licence, visit http://creativecommons.org/licenses/by-nc-nd/4.0/.

About this article

Cite this article

Nyakuri, J.P., Nkundineza, C., Gatera, O. et al. AI and IoT-powered edge device optimized for crop pest and disease detection. Sci Rep 15, 22905 (2025). https://doi.org/10.1038/s41598-025-06452-5

DOI: https://doi.org/10.1038/s41598-025-06452-5
