Abstract

Virtual reality (VR) technologies can induce realistic emotions in controlled experimental settings, offering unprecedented opportunities to study how the human brain processes emotions under real-world conditions. The integration of VR experiences with electroencephalography (EEG) offers a promising route to novel insights into individual emotional states. However, the complex network dynamics underlying human emotions during VR experiences remain largely unexplored. To address this gap, we leveraged graph-theoretical approaches to investigate functional brain networks derived from EEG signals recorded during immersive VR experiments. We assessed key topological properties of functional brain networks across multiple frequency bands (delta, theta, alpha, beta, gamma, and high gamma) and compared network characteristics between different emotional states (negative, neutral, and positive). Furthermore, we evaluated whether these graph-based features could accurately distinguish between positive and negative emotions using machine learning approaches. Our findings revealed distinct network patterns associated with different emotional states. During negative emotional experiences, we observed two key neural signatures: increased high gamma band activity in the left central region and decreased theta band activity in the occipital region. Conversely, positive emotions were characterized by reduced activity across most frequency bands in the left frontal region. Our machine learning model achieved an average classification accuracy of 79% in differentiating positive and negative emotions using network features that combined graph-theoretical measures and connectivity weights across all frequency bands, with the high gamma band demonstrating particular importance for emotion processing. This study advances our understanding of how brain networks dynamically reorganize during VR-induced emotional experiences and establishes the potential of graph-based EEG features for robust emotion recognition, paving the way for personalized VR applications.

Introduction

Emotions are complex states that exert profound influences on our thoughts, behaviors, and well-being1,2,3. Understanding the neural mechanisms underlying emotional experience represents a central pursuit within neuroscience, with significant implications for developing objective emotion recognition systems. Emotion significantly influences cognitive processes, particularly attention, learning, and memory, through complex neural mechanisms. The amygdala plays a crucial role in this interaction by modulating selective attention, often prioritizing emotionally salient stimuli through its connections with cortical regions4. Electroencephalography (EEG) provides a valuable tool for investigating these emotional processes, as it captures the electrical activity of the brain with high temporal resolution, making it particularly suitable for studying the dynamic nature of emotion responses5. EEG-based emotion recognition has gained considerable attention due to the advantages of physiological measures, which are less susceptible to conscious control compared to behavioral or self-report measures6. The rich temporal and spectral information contained in EEG signals offers unique insights into the neural correlates of emotional states, providing an objective foundation for emotion detection and classification systems7,8.

Virtual reality (VR) represents a paradigm shift in emotion induction methodology for neuroscientific research. Traditional EEG studies have predominantly relied on controlled laboratory settings using static stimuli such as emotional images, brief video clips, or auditory cues, which may not fully capture the natural dynamics and complexity of emotions as they occur in real-world scenarios9,10,11. These conventional approaches, while valuable for controlled experimentation, often lack the ecological validity necessary to understand how emotional processing unfolds in naturalistic contexts. However, the advent of immersive VR technology has opened new possibilities for creating immersive and interactive environments that can elicit more ecologically valid emotional responses12. VR offers several distinct advantages over traditional emotion induction methods: First, it enables the reconstruction of diverse, contextually rich scenarios that provoke authentic emotional responses and natural behavioral interactions, providing a more immersive emotional experience than passive stimulus observation13. Second, immersive VR integrates multisensory stimulation, combining visual, auditory, and potentially haptic feedback, to heighten the sense of presence, dramatically increase user engagement, and evoke significantly higher emotional arousal compared to conventional 2D materials14. This multisensory integration more closely approximates real-world emotional experiences, where emotions arise from complex environmental interactions rather than isolated stimuli. Furthermore, VR affords researchers unprecedented experimental control over stimulus timing, spatial viewpoint, and visuo-haptic contingencies while maintaining immersive authenticity. Unlike traditional methods that rely on brief stimulus presentations, VR can sustain emotional engagement over extended intervals through continuous presence and dynamic interaction within the virtual environment14. Neuroimaging evidence demonstrates that immersive 3D VR environments recruit substantially more brain and sensory resources than 2D representations, suggesting more comprehensive neural engagement during emotional processing15.

EEG, a non-invasive technique with high temporal resolution, allows us to measure brain activity during VR experiences16,17,18. By analyzing the dynamic interactions between different brain regions, quantified by functional connectivity (FC), researchers can gain valuable insights into the underlying neural networks associated with various emotional states19,20,21. Functional connectivity analysis has been employed to classify different emotional states based on EEG-based features, demonstrating that emotional states are characterized by distinct patterns of central nervous system connectivity22,23,24. Furthermore, it has been utilized to investigate changes in brain connectivity and activity during emotion regulation, shedding light on the neural mechanisms involved in this process25. Overall, functional connectivity analysis offers a powerful tool for studying the relationship between brain activity and emotional states, providing a more comprehensive understanding of the neural basis of emotions.

Graph-theoretical analysis, when applied to EEG-based functional connectivity, offers a powerful framework for understanding brain communication and interaction during different emotional states. By representing the brain as a complex network of interconnected nodes (brain regions) and edges (functional connections), graph theory enables the extraction of diverse quantitative measures that capture distinct aspects of network organization with specific functional implications, including integration, segregation, and centrality26,27,28. Cao et al.29 demonstrated the utility of this approach by analyzing EEG data using minimum spanning tree (MST)-based graph metrics, revealing valence × arousal–dependent reconfiguration of network topology during emotional processing. This seminal finding underscores why integration, segregation, and centrality measures have become fundamental readouts in contemporary EEG emotion research. Integration quantifies the brain’s capacity for global information transfer and coordination across distributed regions, reflecting how efficiently different brain areas communicate to support complex cognitive and emotional processes. Enhanced integration is associated with improved cognitive flexibility and more adaptive emotional responses during challenging and novel emotional situations28,30,31. Segregation measures the brain’s ability to form specialized clusters or functional modules that process specific information types independently, thereby reflecting the degree of functional specialization within emotional and cognitive networks. Optimal segregation enables efficient local processing within specialized modules while preserving essential inter-modular communication pathways necessary for integrated emotional experiences32. Centrality identifies key hub regions that play critical roles in network communication, with high-centrality nodes serving as important relay stations that facilitate information flow between different brain regions during emotional processing33. These network properties provide complementary insights into brain function: integration captures the global coordination mechanisms necessary for complex emotional experiences30,31, segregation reveals the organization of specialized processing modules32, and centrality identifies the critical nodes that orchestrate network-wide communication33. Collectively, these measures offer a comprehensive analytical framework for understanding how different brain regions communicate and interact across various emotional states34,35, serving as invaluable tools for elucidating the complex network dynamics underlying the neural basis of human emotions36,37.

This study leveraged the combined power of VR technology and graph-theoretical analysis of EEG-based functional connectivity to investigate emotion recognition. We aimed to address the following key questions: (i) How do brain networks differ across distinct emotional states elicited in VR environments?; (ii) Can we identify specific patterns of network integration, segregation, and centrality associated with different emotions?; (iii) Can graph-based features derived from EEG data accurately distinguish between emotional states, paving the way for improved VR-based emotion recognition systems?

To address these objectives, we analyzed functional brain networks constructed from the VR emotional EEG dataset (VREED)17, focusing on identifying distinctive patterns of network integration, segregation, and centrality associated with negative, neutral and positive emotional states across multiple frequency bands. We systematically compared these network measures across different emotional conditions to elucidate how brain network connectivity changes with emotional state transitions. In addition, we investigated the discriminative potential of these graph-based features for accurately differentiating positive and negative emotions using machine learning approaches. This work advances our understanding of the neural mechanisms underlying emotion processing in VR environments, with implications for developing more personalized and effective VR applications.

Related work

The integration of EEG with VR technology for emotion research has evolved significantly over the past decade, though technical challenges have historically constrained methodological approaches. Early EEG-VR emotion studies were limited by substantial motion artifacts arising from natural head movements required for immersive VR interaction, electromagnetic interference from headset displays and wireless components that degraded signal quality across frequency bands, and synchronization difficulties between VR event timing and neural data acquisition38,39. These technical constraints led most prior research to adopt lower-density EEG configurations, implement restrictive head stabilization protocols that reduced ecological validity, or rely on alternative biosignals such as heart rate variability and galvanic skin response for emotion classification in VR contexts40,41. While some studies successfully demonstrated emotion recognition using basic EEG features in VR environments, the technical limitations prevented comprehensive investigation of complex brain network dynamics underlying VR-induced emotional experiences13. Recent technological advances including wireless high-density EEG systems with improved motion tolerance, active electromagnetic interference cancellation, and hardware-based synchronization protocols have begun to overcome these obstacles, enabling more sophisticated analyses of neural mechanisms during immersive emotional experiences42. However, despite these improvements, a significant gap remains in utilizing high-density EEG combined with advanced network analysis methods such as graph theory to understand brain connectivity patterns associated with emotions in complex VR environments.

Recent advancements in emotion recognition within VR environments have shown promise for understanding emotional responses. For instance, Tabbaa et al.41 demonstrated the potential of multimodal approaches, achieving 71.88% accuracy in emotion classification using eye tracking, electrocardiogram (ECG), and galvanic skin response (GSR). However, EEG signals, known for their high temporal resolution and ability to capture brain activity, were excluded in that work due to technical challenges in VR environments. It is important to note that the VREED dataset used in our study is distinct from the one described by Tabbaa et al.41; our dataset was collected independently and features 3D VR video stimuli of Shanghai landmarks, street scenes, and themed events, with EEG recordings and self-reported valence ratings as the primary data sources17. Several studies have explored emotion recognition in VR environments using EEG, with promising results. For example, Marín-Morales et al.16 reached 75% and 71.21% accuracy for arousal and valence, respectively, with 9-channel EEG and 2-channel ECG using a support vector machine (SVM) classifier. Suhaimi et al.43 focused on low-cost EEG and achieved 97.66% intra-subject classification accuracy using a 4-channel EEG setup and an SVM. However, these studies primarily focused on traditional machine learning algorithms and did not utilize graph-theoretical features of brain networks. Pei et al.18 achieved high accuracy (91.10%) for valence recognition using a network-based approach applied to features extracted from 32-channel EEG data (e.g., energy spectrum, differential entropy, differential asymmetry, and rational asymmetry). However, the limited number of channels might restrict capturing the full spatial information of brain activity crucial for VR-based emotion recognition. Yu et al.17 introduced a 59-channel EEG dataset (VREED) collected in VR and used graph features for binary emotion classification (positive vs. negative), achieving 73.77% accuracy. While their work demonstrates the potential of high-density EEG and VR for emotion recognition, its focus on binary classification and limited feature exploration leaves room for further investigation into broader emotion categories and advanced feature analysis.

While existing research has explored emotion recognition in VR using various biosignals and the potential of graph theory for analyzing EEG data, a gap exists in utilizing high-density EEG combined with graph theory for emotion recognition in complex VR environments. This study aims to bridge this gap by (i) leveraging high-density EEG data (59 channels) to capture detailed brain activity dynamics; (ii) employing graph-theoretical analysis to investigate the complex brain network interactions associated with different emotions experienced in VR; and (iii) aiming for higher accuracy and deeper insights into the underlying neural mechanisms compared to previous studies. By addressing these aspects, we seek to elucidate the brain network dynamics associated with diverse emotional states in VR environments and contribute novel insights into classifying and understanding emotions in VR.

Materials and methods

An overview of the study workflow is shown in Fig. 1. Functional connectivity matrices were constructed using pre-processed 59-channel EEG data for six frequency bands. Using a network-based statistic (NBS) approach, significant connections were identified within each of the three emotional states. The minimum spanning tree (MST) algorithm was applied to transform functional connectivity matrices into undirected binary matrices. Graph-theoretical measures from the undirected binary matrices were computed. Analysis of variance (ANOVA) was used to identify differences in graph-theoretical measures across the three emotional states. Finally, binary emotion classification (negative vs. positive) was performed using six different machine learning algorithms, based on network features (connectivity weights and graph-theoretical measures). The classification performance was evaluated using a nested 10-fold cross-validation.

Analytical pipeline for graph-theoretical analysis of EEG-based functional connectivity and emotion recognition. Our study comprises three main steps: (a) Preprocessed 59-channel EEG time-series were recorded from 19 participants during VR-induced emotional experiences. Functional connectivity networks were then constructed using coherence-based measures between all electrode pairs across six frequency bands, namely delta (0.5–4 Hz), theta (4–8 Hz), alpha (8–13 Hz), beta (13–30 Hz), gamma (30–50 Hz), and high gamma (50–80 Hz). (b) Network-based statistic (NBS) was applied to identify significant connectivity differences between emotional states (negative vs. neutral, positive vs. neutral, and negative vs. positive). Functional connectivity matrices were binarized using the minimum spanning tree (MST) algorithm to generate undirected binary matrices for graph-theoretical analysis. Key network properties were computed, including node degree, betweenness centrality, eigenvector centrality, global and local efficiency, characteristic path length, clustering coefficient, and small-worldness. Statistical significance of network property differences between emotional states was assessed using ANOVA, followed by post-hoc pairwise comparisons. (c) Graph-theoretical measures identified through statistical analysis served as feature inputs for emotion recognition algorithms. Six machine learning classifiers were evaluated for binary emotion classification (positive vs. negative) using nested 10-fold cross-validation to ensure robust performance assessment.
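The nested cross-validation named in step (c) can be sketched as follows. This is a minimal illustration under stated assumptions, not the authors' pipeline: the `SVC` classifier is only a placeholder for one of the six models, and the hyperparameter grid, the use of 10 inner folds, the helper name `nested_cv_accuracy`, and the synthetic feature matrix are all hypothetical.

```python
import numpy as np
from sklearn.model_selection import GridSearchCV, StratifiedKFold, cross_val_score
from sklearn.pipeline import make_pipeline
from sklearn.preprocessing import StandardScaler
from sklearn.svm import SVC


def nested_cv_accuracy(X, y, seed=0):
    """Return the 10 outer-fold accuracies of a nested cross-validation."""
    inner = StratifiedKFold(n_splits=10, shuffle=True, random_state=seed)
    outer = StratifiedKFold(n_splits=10, shuffle=True, random_state=seed + 1)
    model = make_pipeline(StandardScaler(), SVC(kernel="rbf"))
    param_grid = {"svc__C": [0.1, 1.0, 10.0]}  # hypothetical grid
    # Inner loop: pick C using only the training part of each outer fold.
    search = GridSearchCV(model, param_grid, cv=inner, scoring="accuracy")
    # Outer loop: score the tuned model on data never used for tuning.
    return cross_val_score(search, X, y, cv=outer, scoring="accuracy")


# Synthetic stand-in for network features: 200 trials x 5 features.
rng = np.random.default_rng(0)
y_demo = np.repeat([0, 1], 100)
X_demo = rng.normal(size=(200, 5)) + 2.0 * y_demo[:, None]
scores = nested_cv_accuracy(X_demo, y_demo)
print(f"mean outer-fold accuracy: {scores.mean():.2f}")
```

Keeping the scaler inside the pipeline means it is refit on each training split, so no statistics from held-out trials leak into model selection.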

Dataset

This study utilized the publicly available VREED dataset, which consists of multi-channel EEG signals recorded from 25 participants (mean age = 22.92 years; SD = 1.38 years; 10 females) during emotional stimulation in a 3D VR environment17. All participants in the original study were screened according to standardized protocols to ensure they had normal or corrected-to-normal vision and no history of psychiatric or major medical conditions. The original VREED data collection was conducted by Yu et al.17 and received ethical approval from the Shanghai Ethics Committee for Clinical Research. Written informed consent was obtained from all participants prior to their participation in the original study. All original experimental procedures were performed in accordance with the Declaration of Helsinki and relevant institutional guidelines and regulations. Our current investigation represents a secondary computational analysis of this de-identified, publicly shared dataset. No additional data collection, participant recruitment, or experimental procedures were performed for the present study. All analytical methods employed in this secondary analysis were carried out in accordance with relevant guidelines and regulations for computational research using publicly available neuroimaging data.

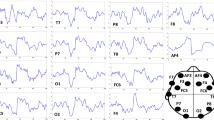

Participants watched a total of sixty 3D VR videos, consisting of Shanghai landmarks (e.g., Oriental Pearl, the Bund), street scenes, and school theme parties. The content distribution varied across emotional categories, with city promotional videos appearing predominantly in positive sets, videos of natural situations primarily in neutral sets, and certain horror-corridor videos more frequently in negative sets. All videos were presented using an HTC Vive VR headset. Each video had a duration of 4 s, a resolution of 4096 × 2048, and a frame rate of 30 frames/second. These videos were divided into three emotional categories (positive, neutral, and negative), with 20 videos in each category. For each participant, a total of 120 trials were conducted. The trials were organized into two subsets, each containing 30 videos. One subset comprised 20 positive and 10 neutral videos, while the other consisted of 20 negative and 10 neutral videos. Both subsets were displayed twice during the experiment. After viewing each video, participants provided a self-reported valence rating on a 1–9 scale, where higher scores indicate more pleasant experiences27. These individual ratings served as the ground-truth labels for our analysis: ratings below 5 were classified as negative, ratings equal to 5 as neutral, and ratings above 5 as positive. This approach ensures that our emotional classification reflects the actual emotional responses experienced by participants during VR immersion, accounting for individual differences in emotional reactivity to the same content. Brain activity was recorded using 64 electrodes placed according to the international 10–20 system44. After excluding electrooculogram (EOG) and electromyography (EMG) channels, we focused on the remaining 59 EEG channels for analysis. We chose this setup to capture brain activity across various brain regions, including frontal, central, occipital, left temporal, and right temporal lobes (Fig. 2). EEG signals were continuously recorded for 4 s at a sampling rate of 1000 Hz.
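The labeling rule and trial count described above can be written out as a short sketch. The function name `valence_to_label` and the constant names are hypothetical; the thresholds and counts are taken from the text.

```python
def valence_to_label(rating: int) -> str:
    """Map a 1-9 self-reported valence rating to an emotion label."""
    if not 1 <= rating <= 9:
        raise ValueError(f"rating must be in 1..9, got {rating}")
    if rating < 5:
        return "negative"
    if rating == 5:
        return "neutral"
    return "positive"


# Trial count per participant: 2 subsets x 30 videos x 2 presentations.
N_SUBSETS, VIDEOS_PER_SUBSET, N_PRESENTATIONS = 2, 30, 2
trials_per_participant = N_SUBSETS * VIDEOS_PER_SUBSET * N_PRESENTATIONS

demo_labels = [valence_to_label(r) for r in (2, 5, 8)]
print(demo_labels, trials_per_participant)
```

Because labels follow each participant's own rating, the same video can contribute to different classes for different participants.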

Our study utilized EEG data from a validated, previously published dataset17 where standardized preprocessing had been systematically performed by the original authors using the EEGLab Toolbox45. The original preprocessing pipeline employed established protocols including comprehensive artifact removal procedures targeting ocular artifacts (eye blinks and movements), electromyographic contamination (muscle activity), electrocardiographic interference (cardiac artifacts), and motion-related noise; power line interference removal using notch filtering to eliminate 50 Hz contamination; baseline correction applied to pre-stimulus intervals to normalize signal amplitude; and automated trial rejection based on stringent amplitude thresholds and gradient criteria to ensure signal quality.

We conducted additional quality control verification to ensure data suitability for our network-based analyses, including visual inspection of signal characteristics and validation of preprocessing adequacy for functional connectivity computations required for graph-theoretical analysis. Following quality control, six participants were excluded due to insufficient data quality, resulting in a final dataset of 19 participants (mean age = 22.84 years; SD = 1.50 years; 6 females). The total number of trials for these 19 participants was 2043 (positive = 708, neutral = 639, and negative = 696). Details about the acquisition protocol and processing pipelines are described in the original publication17.

Functional connectivity construction

EEG-based functional connectivity (FC) provides a theoretically motivated approach for capturing the inter-regional communication patterns that underlie emotional processing, which emerge from coordinated activity across distributed brain networks rather than isolated regional activations29. While spectral power measures reveal local neural oscillations within individual regions, FC captures the temporal synchronization between brain regions that is fundamental to complex emotional experiences requiring coordination across multiple brain systems46. This network-level perspective aligns with contemporary understanding of emotions as emergent phenomena arising from dynamic interactions among specialized brain regions36.

The EEG-based FC in this study consists of nodes and edges, represented by the EEG electrodes and the associations between each pair of EEG signals from different channels, respectively26. To quantify these inter-regional connections, we used coherence as our connectivity measure due to its well-established ability to capture phase synchrony between EEG signals – a key indicator of functional communication between brain regions26,47,48. Coherence is particularly suitable for emotion research as it quantifies the consistency of neural oscillations across regions, reflecting the coordinated neural activity that characterizes different emotional states25. Coherence evaluates the consistency of relative amplitude and phase in the frequency domain between two signals x(t) and y(t) for each pair of electrodes, defined as:

\[C_{XY}\!\left(\lambda\right) = \frac{\left|P_{XY}\!\left(\lambda\right)\right|^{2}}{P_{XX}\!\left(\lambda\right)\,P_{YY}\!\left(\lambda\right)}\]

where \(C_{XY}\!\left(\lambda\right)\) is the coherence value between x(t) and y(t) at a specific frequency \(\lambda\), and \(P_{XX}\!\left(\lambda\right)\) and \(P_{YY}\!\left(\lambda\right)\) denote the power spectral density of x(t) and y(t) at frequency \(\lambda\), respectively. \(P_{XY}\!\left(\lambda\right)\) is the cross-power spectral density between signals x(t) and y(t) at frequency \(\lambda\), providing information about how much power is shared between the two signals across different frequency components.

Here, we focused on six frequency bands, including delta (0.5–4 Hz), theta (4–8 Hz), alpha (8–13 Hz), beta (13–30 Hz), gamma (30–50 Hz), and high gamma (50–80 Hz). The coherence value ranges between 0 and 1, where higher values indicate stronger FC, reflecting more synchronized neural communication between regions during emotional processing. Each FC matrix had a size of 59 × 59, representing the comprehensive network of pairwise associations between all EEG electrodes, providing a complete characterization of brain-wide connectivity patterns during different emotional states.
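As an illustration of how band-wise coherence matrices of this kind can be built, the sketch below uses `scipy.signal.coherence` (Welch-based magnitude-squared coherence). It is not the authors' implementation: the 1 s Welch segment length, the averaging of coherence over the frequency bins in each band, the half-open band edges, and the helper name `band_coherence_matrices` are assumptions made for the example, and the input is random noise rather than EEG.

```python
import numpy as np
from scipy.signal import coherence

BANDS = {
    "delta": (0.5, 4.0), "theta": (4.0, 8.0), "alpha": (8.0, 13.0),
    "beta": (13.0, 30.0), "gamma": (30.0, 50.0), "high_gamma": (50.0, 80.0),
}


def band_coherence_matrices(eeg, fs=1000.0, nperseg=1000):
    """eeg: (n_channels, n_samples). Returns {band: (n_channels, n_channels)}."""
    n_ch = eeg.shape[0]
    # A signal is perfectly coherent with itself, so the diagonal is 1.
    fc = {name: np.eye(n_ch) for name in BANDS}
    for i in range(n_ch):
        for j in range(i + 1, n_ch):
            freqs, cxy = coherence(eeg[i], eeg[j], fs=fs, nperseg=nperseg)
            for name, (lo, hi) in BANDS.items():
                in_band = (freqs >= lo) & (freqs < hi)  # half-open band (assumed)
                fc[name][i, j] = fc[name][j, i] = cxy[in_band].mean()
    return fc


# Toy input: 4 channels, 4 s at 1000 Hz; channel 1 closely tracks channel 0.
rng = np.random.default_rng(0)
eeg_demo = rng.normal(size=(4, 4000))
eeg_demo[1] = eeg_demo[0] + 0.1 * rng.normal(size=4000)
fc_demo = band_coherence_matrices(eeg_demo)
print(np.round(fc_demo["alpha"], 2))
```

With real data the same call would be applied to each 59 × 4000 trial, giving one 59 × 59 matrix per band.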

Minimum spanning tree

To reduce network complexity and identify significant connections within a weighted undirected network, we applied the minimum spanning tree (MST) algorithm to the FC matrices49. For each FC matrix, we sorted the link weights (connection strengths) in descending order. Using Kruskal’s algorithm50, the edges with the strongest weights were sequentially selected, skipping any edge that would create a cycle, until all nodes were connected with the minimum possible number of edges (E = L – 1, where L is the number of nodes and E is the number of edges). We then converted the resulting tree structure into a binary matrix: edges retained in the tree were set to 1 (connection), and all other entries were set to 0 (no connection). This process resulted in binary FC matrices with an average connection density of 8%, preserving the most significant connections for further graph-theoretical analysis.
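The procedure described above (sort edges by descending weight, add each edge unless it closes a cycle, stop at L – 1 edges) can be sketched with a small union-find implementation of Kruskal's algorithm. The function name `max_spanning_tree_binary` and the 4-node example matrix are hypothetical and only illustrate the technique.

```python
import numpy as np


def max_spanning_tree_binary(fc):
    """Binarize a symmetric weighted matrix by keeping the N-1 strongest
    edges that connect all nodes without forming a cycle (Kruskal)."""
    n = fc.shape[0]
    parent = list(range(n))

    def find(a):
        # Follow parent links to the set representative (with path halving).
        while parent[a] != a:
            parent[a] = parent[parent[a]]
            a = parent[a]
        return a

    iu, ju = np.triu_indices(n, k=1)
    order = np.argsort(-fc[iu, ju], kind="stable")  # strongest edges first
    adj = np.zeros((n, n), dtype=int)
    n_edges = 0
    for idx in order:
        i, j = int(iu[idx]), int(ju[idx])
        ri, rj = find(i), find(j)
        if ri != rj:  # edge joins two separate components, so no cycle
            parent[ri] = rj
            adj[i, j] = adj[j, i] = 1
            n_edges += 1
            if n_edges == n - 1:
                break
    return adj


fc_toy = np.array([
    [1.0, 0.9, 0.2, 0.1],
    [0.9, 1.0, 0.8, 0.3],
    [0.2, 0.8, 1.0, 0.7],
    [0.1, 0.3, 0.7, 1.0],
])
adj_toy = max_spanning_tree_binary(fc_toy)
print(adj_toy)
```

In the toy matrix the three strongest edges (0-1, 1-2, 2-3) already connect all four nodes, so the resulting tree is a simple chain.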

Graph-theoretical analysis

To investigate the topological characteristics of brain networks across different emotions and frequency bands, we computed diverse graph-theoretical measures on the binarized FC matrix using the Brain Connectivity Toolbox31. Our selection of FC as the primary metric, rather than spectral power measures, was motivated by substantial theoretical and empirical evidence that emotions emerge from coordinated activity patterns across distributed brain networks rather than localized activations alone36. FC provides a direct measure of inter-regional communication and network coordination that is particularly sensitive to the dynamic, distributed nature of emotional processing36. While spectral power measures reflect local neural oscillatory activity, FC captures the temporal synchronization between brain regions that is fundamental to emotional state representation and regulation46.

Our choice of specific graph-theoretical measures was theoretically driven by established models of brain network organization and emotional processing25. We computed characteristic path length and global efficiency as measures of integration, as these metrics quantify the brain’s capacity for rapid, efficient information transfer across the entire network – a property that is critical for the coordinated emotional responses that involve multiple brain systems51,52. Characteristic path length (L) represents the average shortest path length between all pairs of nodes, reflecting the network’s overall communication efficiency. Lower L values indicate higher network integration, with information able to travel more efficiently between distant brain regions. Global efficiency (Eglob), defined as the average of the inverse shortest path lengths between all nodes, provides a complementary measure of integration. Higher Eglob values indicate greater network integration, reflecting enhanced capacity for global information flow across the entire brain network.

Clustering coefficient and local efficiency were selected as measures of segregation to assess localized organization and information processing capabilities within the network51,52. Clustering coefficient (CC) quantifies the degree to which neighboring nodes are interconnected, reflecting the presence of tightly connected local clusters. Higher CC values indicate greater network segregation, with stronger local clustering that supports specialized processing within brain modules. Local efficiency (Eloc), defined as the inverse of the average shortest path length connecting each node to its neighboring nodes, measures local information processing efficiency. Higher Eloc values indicate enhanced local segregation, reflecting more efficient communication within specialized brain regions or modules.

Centrality measures (node degree, betweenness centrality, and eigenvector centrality) were included to identify key nodes that may serve as critical hubs in emotional processing networks53. Node degree (D) is defined as the number of edges (or connections) that a node has in the network. Higher D values indicate nodes with greater influence through direct connections. Betweenness centrality (BC) quantifies how often a node lies on the shortest paths between other node pairs. Higher BC values indicate nodes that serve as critical bridges facilitating communication between different network modules, while lower BC values suggest nodes that primarily communicate within local clusters. Eigenvector centrality (EC) accounts for both a node’s connectivity and the importance of its connections. Higher EC values identify influential hub nodes connected to other important regions that can influence the entire network, while lower EC values indicate more peripheral nodes with limited network-wide influence.
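For readers who want to experiment with the integration, segregation, and centrality measures defined above, the sketch below computes them on a binary undirected graph. It uses the Python package `networkx` as a stand-in, not the Brain Connectivity Toolbox used in the study, so numerical conventions may differ slightly; the helper name `graph_measures` and the 4-node chain are hypothetical.

```python
import numpy as np
import networkx as nx


def graph_measures(adj):
    """Compute global and nodal measures for a connected binary undirected graph."""
    G = nx.from_numpy_array(np.asarray(adj))
    return {
        # Integration
        "char_path_length": nx.average_shortest_path_length(G),
        "global_efficiency": nx.global_efficiency(G),
        # Segregation
        "clustering_coefficient": nx.average_clustering(G),
        "local_efficiency": nx.local_efficiency(G),
        # Centrality (one value per node)
        "degree": dict(G.degree()),
        "betweenness": nx.betweenness_centrality(G),
        "eigenvector": nx.eigenvector_centrality(G, max_iter=1000),
    }


# Toy graph: a 4-node chain 0-1-2-3.
adj_chain = np.array([
    [0, 1, 0, 0],
    [1, 0, 1, 0],
    [0, 1, 0, 1],
    [0, 0, 1, 0],
])
measures = graph_measures(adj_chain)
print(measures["char_path_length"], measures["global_efficiency"])
```

The toy chain contains no triangles, so both segregation measures are 0 here, while the two inner nodes carry all of the betweenness.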

Finally, small-worldness (SW) was computed as it represents an optimal network architecture for emotional processing, balancing the need for both specialized local processing (high clustering) and efficient global integration (short path lengths)54. SW values above 1 indicate optimal small-world organization with both efficient local clustering and short global communication paths, while values near or below 1 suggest suboptimal network architecture with either excessive randomness or overly regular, grid-like organization. SW is calculated as the ratio between normalized clustering coefficient and normalized characteristic path length, with normalization performed against 1000 randomized networks to control for basic connectivity properties.
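Written out, the ratio described above takes the following form. The subscript "rand" is notation introduced here for illustration, and taking the mean over the 1000 randomized networks is an assumption about how the normalization was aggregated:

\[\mathrm{SW} = \frac{CC / CC_{\mathrm{rand}}}{L / L_{\mathrm{rand}}}\]

where \(CC\) and \(L\) are the clustering coefficient and characteristic path length of the observed network, and \(CC_{\mathrm{rand}}\) and \(L_{\mathrm{rand}}\) are the corresponding mean values over the randomized networks.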

This comprehensive set of graph-theoretical measures allows us to characterize different aspects of network topology that are theoretically relevant to emotional processing, providing a multi-dimensional view of how brain network organization varies across emotional states and frequency bands.

Statistical analysis

We compared graph-theoretical measures across the three emotional groups (negative, neutral, positive) using one-way analysis of variance (ANOVA) for each frequency band. When ANOVA revealed significant differences, post-hoc pairwise comparisons using Games-Howell tests were conducted to identify specific group pairs that differed significantly55,56. Multiple comparisons were corrected using the false discovery rate (FDR) approach57. A corrected p < 0.05 was considered statistically significant.
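A minimal sketch of the per-band ANOVA followed by FDR correction is given below. It assumes the Benjamini-Hochberg procedure for FDR and implements it by hand; the Games-Howell post-hoc step is not included. The helper name `benjamini_hochberg` and the synthetic group data are hypothetical.

```python
import numpy as np
from scipy.stats import f_oneway


def benjamini_hochberg(pvals):
    """Return Benjamini-Hochberg adjusted p-values in the input order."""
    p = np.asarray(pvals, dtype=float)
    m = p.size
    order = np.argsort(p)
    scaled = p[order] * m / np.arange(1, m + 1)
    # Enforce monotonicity, working down from the largest p-value.
    scaled = np.minimum.accumulate(scaled[::-1])[::-1]
    adjusted = np.empty(m)
    adjusted[order] = np.clip(scaled, 0.0, 1.0)
    return adjusted


# Synthetic measure values for three bands; only "alpha" has a group effect.
rng = np.random.default_rng(0)
bands = ["delta", "theta", "alpha"]
raw_p = []
for band, shift in zip(bands, [0.0, 0.0, 3.0]):
    negative = rng.normal(size=50)
    neutral = rng.normal(size=50)
    positive = rng.normal(loc=shift, size=50)
    raw_p.append(f_oneway(negative, neutral, positive).pvalue)

adj_p = benjamini_hochberg(raw_p)
for band, p_raw, p_adj in zip(bands, raw_p, adj_p):
    print(f"{band}: raw p = {p_raw:.3g}, FDR-adjusted p = {p_adj:.3g}")
```

Adjusted values are compared against 0.05 in the same way as the corrected threshold stated above.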

In parallel, we employed the network-based statistic (NBS) toolbox58 to identify significant differences in connected edges (connections) between different emotional states (negative vs. neutral, positive vs. neutral, and negative vs. positive) for each frequency band. NBS is a validated non-parametric method for controlling the family-wise error rate (FWER) during mass univariate testing within a network58. This approach identifies clusters of connected edges that exhibit significant differences between emotional groups. We computed a t-score for each individual connection, comparing its strength (weight) between two emotional groups (e.g., negative vs. neutral emotion). We then applied a t-score threshold of 4.3, which corresponds to a p-value of 1e-5, as a strict criterion for selecting highly significant connections. Connected edges exceeding the threshold were grouped into clusters. These clusters represented sets of interconnected brain regions that show significant differences in weighted FC between the groups being compared. To assess the statistical significance of the identified clusters, permutation testing (1000 permutations) was performed to estimate the null distribution of the cluster-level statistic. The size of each observed cluster was compared with the null distribution of cluster sizes obtained from the permutations, which accounts for multiple comparisons at the cluster level and provides a more reliable measure of significance. By combining ANOVA, post-hoc tests, and NBS, we aimed to comprehensively understand how brain network properties and connectivity patterns varied across different emotional states in VR.
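The logic of this procedure can be sketched in a few lines of NumPy. This is a simplified illustration rather than the NBS toolbox itself: it assumes a two-sided Welch t-score per edge, measures cluster size as the number of edges, and uses synthetic connectivity matrices; all function names are hypothetical.

```python
import numpy as np

def largest_component_size(mask):
    """Edge count of the largest connected component in a symmetric boolean edge mask."""
    n = mask.shape[0]
    seen = np.zeros(n, dtype=bool)
    best = 0
    for start in range(n):
        if seen[start]:
            continue
        stack, nodes = [start], []
        seen[start] = True
        while stack:  # depth-first search over supra-threshold edges
            u = stack.pop()
            nodes.append(u)
            for v in np.flatnonzero(mask[u]):
                if not seen[v]:
                    seen[v] = True
                    stack.append(v)
        edges = int(mask[np.ix_(nodes, nodes)].sum() // 2)
        best = max(best, edges)
    return best

def edge_t_scores(a, b):
    """Welch t-score per edge; a and b have shape (samples, nodes, nodes)."""
    num = a.mean(axis=0) - b.mean(axis=0)
    den = np.sqrt(a.var(axis=0, ddof=1) / len(a) + b.var(axis=0, ddof=1) / len(b))
    with np.errstate(divide="ignore", invalid="ignore"):
        return np.where(den > 0, num / den, 0.0)

def nbs_p_value(a, b, threshold, n_perm=1000, seed=0):
    """Permutation p-value for the largest supra-threshold cluster."""
    rng = np.random.default_rng(seed)
    observed = largest_component_size(np.abs(edge_t_scores(a, b)) > threshold)
    pooled = np.concatenate([a, b])
    null = np.empty(n_perm)
    for i in range(n_perm):
        idx = rng.permutation(len(pooled))  # shuffle group labels
        pa, pb = pooled[idx[:len(a)]], pooled[idx[len(a):]]
        null[i] = largest_component_size(np.abs(edge_t_scores(pa, pb)) > threshold)
    p = (np.sum(null >= observed) + 1) / (n_perm + 1)
    return observed, p

# Synthetic example: group A has stronger weights on a chain of three edges.
data_rng = np.random.default_rng(1)

def make_group(n_samples, n_nodes, boosted_edges, effect):
    x = data_rng.normal(size=(n_samples, n_nodes, n_nodes))
    x = (x + x.transpose(0, 2, 1)) / 2  # symmetric connectivity matrices
    for i, j in boosted_edges:
        x[:, i, j] += effect
        x[:, j, i] += effect
    diag = np.arange(n_nodes)
    x[:, diag, diag] = 0.0  # no self-connections
    return x

group_a = make_group(30, 8, [(0, 1), (1, 2), (2, 3)], effect=3.0)
group_b = make_group(30, 8, [], effect=0.0)
observed, p = nbs_p_value(group_a, group_b, threshold=4.3, n_perm=200)
print("largest cluster (edges):", observed, "permutation p:", p)
```

Comparing the observed cluster size with the permutation distribution of the largest cluster is what gives cluster-level control of the FWER, at the cost of not being able to make claims about individual edges.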

Emotion recognition

Machine learning algorithms

We evaluated six machine learning algorithms that are among the most commonly used for EEG-based emotion recognition in the literature59,60,61. The models were implemented using the scikit-learn library (version 1.2.0) in Python62. The six algorithms are described below:

- Support Vector Machine (SVM)59,60,63: This algorithm can perform linear or nonlinear classification. SVM determines the hyperplane that forms the decision boundary; the data samples closest to the hyperplane are called support vectors. The SVM is tuned with two hyperparameters: a regularization parameter (C in scikit-learn's SVC) and a kernel coefficient (gamma). The regularization parameter determines how many misclassified samples are tolerated when fitting the hyperplane, and gamma controls the flexibility of the decision boundary. Nonlinear classification can be performed using the kernel trick, which maps data into a high-dimensional feature space through a nonlinear kernel function. In this study, we utilized the radial basis function (RBF) kernel to map the data points to the higher-dimensional space. The optimal hyperparameters found via grid search are a regularization parameter of 10 and a kernel coefficient of 0.0001.

- Random Forest (RF)64,65: This is an ensemble learning method based on a decision tree algorithm66 that uses a top-down approach to recursively partition data into smaller subsets based on the values of the input features. Random forest models construct each decision tree using bootstrap samples and select the most significant feature from the subset. The final prediction is determined by voting on all the individual trees. The optimal hyperparameters are the maximum depth of the tree set to 15 and the minimum number of samples required to be at a leaf node set to 3. Additionally, the minimum number of samples required to split an internal node is set to 7, and the number of trees in the forest is set to 200.

- Extra Trees (ET)64,65: This algorithm combines the advantages of decision trees with additional randomness to improve the generalization and robustness of the model. It operates similarly to random forest. It partitions nodes by randomly selecting features during the construction of decision trees made without bootstrap samples. The optimal hyperparameters are the maximum depth of the tree set to 15 and the minimum number of samples required to be at a leaf node set to 4. Additionally, the minimum number of samples required to split an internal node is set to 4, and the number of trees in the forest is set to 500.

- Extreme Gradient Boosting (XGBoost)67,68: This is an advanced implementation of the gradient boosting method that applies an ensemble learning technique based on decision trees. The XGBoost algorithm adds decision trees to the model iteratively, fitting each new tree to the errors (loss gradients) left by the trees from previous iterations. The optimal hyperparameters include a subsample ratio of columns at 0.3 and a gamma value (minimum loss reduction) of 0.0001. The learning rate is set at 0.1, with the maximum tree depth set to 20 and 500 trees to be fitted.

- Multi-Layer Perceptron (MLP)69: An MLP refers to an artificial neural network that consists of multiple layers of artificial neurons. The architecture of this model includes an input layer, hidden layers, and an output layer. In this model, backpropagation is used to modify the weights of the connections between neurons in order to reduce the difference between the predicted label and true label. The optimal hyperparameters are the activation function set to ‘relu’ and the L2 regularization strength (alpha) set to 0.001. The hidden layer sizes are (15, 10, 5), with a constant learning rate. The maximum number of iterations is set to 1000, and the solver for weight optimization is ‘adam’.

- K-Nearest Neighbors (KNN)59,60,64: This is a non-parametric supervised learning algorithm that classifies a new instance by identifying its k closest neighbors in the training data. The class is assigned by majority vote among these neighbors, with closeness determined by a distance measure. In our work, Euclidean distance is employed to compute the similarity between the new instance and existing data. The optimal number of neighbors is set to 5.
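For reference, the reported hyperparameters map onto scikit-learn estimators roughly as shown below. This is a configuration sketch only: the mapping of each description to a parameter name is our reading of the text, not the authors' code. XGBoost is distributed separately from scikit-learn, so its settings are listed as a plain dictionary, and interpreting "subsample ratio of columns" as `colsample_bytree` is an assumption.

```python
from sklearn.ensemble import ExtraTreesClassifier, RandomForestClassifier
from sklearn.neighbors import KNeighborsClassifier
from sklearn.neural_network import MLPClassifier
from sklearn.svm import SVC

# Estimators configured with the hyperparameters reported in the text.
models = {
    "SVM": SVC(kernel="rbf", C=10, gamma=0.0001),
    "RF": RandomForestClassifier(
        n_estimators=200, max_depth=15, min_samples_leaf=3, min_samples_split=7
    ),
    "ET": ExtraTreesClassifier(
        n_estimators=500, max_depth=15, min_samples_leaf=4, min_samples_split=4,
        bootstrap=False,  # trees are built without bootstrap samples
    ),
    "MLP": MLPClassifier(
        hidden_layer_sizes=(15, 10, 5), activation="relu", alpha=0.001,
        learning_rate="constant", max_iter=1000, solver="adam",
    ),
    "KNN": KNeighborsClassifier(n_neighbors=5, metric="euclidean"),
}

# XGBoost settings (assumed parameter names from the xgboost scikit-learn wrapper).
xgboost_params = {
    "colsample_bytree": 0.3,  # assumption: "subsample ratio of columns"
    "gamma": 0.0001,          # minimum loss reduction
    "learning_rate": 0.1,
    "max_depth": 20,
    "n_estimators": 500,
}

for name, model in models.items():
    print(name, type(model).__name__)
```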

Classification framework

In this study, we focused on the binary classification task (negative vs. positive) based on brain network features comprising functional connectivity weights and graph-theoretical measures. Our primary interest lies in differentiating between negative and positive emotions for several theoretical and practical reasons70,71. From a neuroscientific perspective, the valence dimension (positive vs. negative) represents one of the most fundamental and well-established dimensions of emotional experience, forming the core of prominent emotion models such as Russell’s circumplex model72 and serving as a primary organizing principle in affective neuroscience research73,74.

Accurate detection of negative emotions is particularly crucial for VR applications across multiple domains. In therapeutic VR contexts, real-time identification of negative emotional responses enables adaptive interventions and personalized treatment protocols75,76. For VR training and educational applications, detecting negative emotions such as frustration or anxiety allows for dynamic difficulty adjustment and improved learning outcomes77. In entertainment and gaming VR, monitoring emotional valence helps optimize user engagement and prevent adverse experiences that could lead to cybersickness or discontinuation16. Additionally, the binary classification approach provides a robust foundation that can be extended to more complex emotion recognition systems, as positive-negative discrimination often serves as a prerequisite step in hierarchical emotion classification frameworks10.

There were also fewer neutral samples than positive or negative samples. Training a multi-class classifier on such imbalanced data can lead to poor performance on the minority class, and binary classification helps mitigate this issue. Moreover, training a binary classifier is generally simpler and requires fewer computational resources than training a multi-class classifier.

To systematically examine the impact of different features on emotion classification performance, we designed a comprehensive comparative analysis using 21 distinct feature sets (Supplementary Table S18). These feature sets were organized according to a 7 (frequency bands) × 3 (feature types) experimental design, where frequency bands included delta (0.5–4 Hz), theta (4–8 Hz), alpha (8–13 Hz), beta (13–30 Hz), gamma (30–50 Hz), high gamma (50–80 Hz), and a concatenated all-band condition. Feature types comprised: (1) connectivity weights, (2) graph-theoretical measures, and (3) concatenated features (combined connectivity and graph measures). The complete set of 21 feature combinations consisted of frequency-specific features (18 sets) derived from six frequency bands with three feature types (connectivity, graph measures, combined), as well as cross-frequency features (3 sets), corresponding to all-band connectivity weights, all-band graph measures, and all-band combined features. This systematic approach enabled comprehensive evaluation of how different aspects of brain network dynamics, ranging from local connectivity patterns to global network topology, contribute to emotion classification across frequency domains.
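The 7 × 3 design can be enumerated directly, which makes the count of 18 frequency-specific and 3 cross-frequency sets explicit. The label strings below are hypothetical names chosen for this sketch.

```python
from itertools import product

# Six individual bands plus the concatenated all-band condition.
bands = ["delta", "theta", "alpha", "beta", "gamma", "high_gamma", "all_bands"]
feature_types = ["connectivity", "graph_measures", "combined"]

feature_sets = [f"{band}:{ftype}" for band, ftype in product(bands, feature_types)]
band_specific = [s for s in feature_sets if not s.startswith("all_bands")]
cross_band = [s for s in feature_sets if s.startswith("all_bands")]

print(len(feature_sets), len(band_specific), len(cross_band))
```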

We reduced the number of features (dimensions) in the brain network data while retaining as much relevant information as possible. Principal component analysis (PCA) is a common technique for linear dimensionality reduction. It projects data into a lower-dimensional space while capturing most of the variance using singular value decomposition (SVD)78. However, PCA might not handle inherently non-linear data effectively. In this study, we used kernel PCA to address the potential non-linearity in the data. The radial basis function (RBF) kernel was chosen to potentially map the data into a high-dimensional space suitable for capturing non-linear relationships. A threshold of 70% explained variance ratio was used to determine the number of principal components to retain. Details regarding the selection process for the kernel function and explained ratio based on performance optimization using the SVM classifier are provided in Supplementary Figure S1. Furthermore, we examined the performance of six machine learning algorithms using a statistically selected feature set (detailed in Sect. 4 of the supplementary material). To ensure a fair comparison, all procedures were identical for all algorithms except for the feature selection process.
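A minimal NumPy sketch of RBF kernel PCA with a 70% cumulative-variance cut-off is given below. It assumes that the explained variance ratio is computed from the eigenvalues of the centered kernel matrix, which may differ from how the ratio was computed in the study; the kernel width and the random data are hypothetical.

```python
import numpy as np

def rbf_kernel_pca(X, gamma, variance_threshold=0.70):
    """Project X onto the leading RBF kernel principal components."""
    # Pairwise squared Euclidean distances and RBF kernel matrix.
    sq = np.sum(X ** 2, axis=1)
    d2 = np.maximum(sq[:, None] + sq[None, :] - 2 * X @ X.T, 0.0)
    K = np.exp(-gamma * d2)
    # Center the kernel matrix in feature space.
    n = K.shape[0]
    one = np.full((n, n), 1.0 / n)
    Kc = K - one @ K - K @ one + one @ K @ one
    # Eigendecomposition, sorted from largest to smallest eigenvalue.
    eigvals, eigvecs = np.linalg.eigh(Kc)
    eigvals, eigvecs = eigvals[::-1], eigvecs[:, ::-1]
    keep = eigvals > 1e-10
    eigvals, eigvecs = eigvals[keep], eigvecs[:, keep]
    # Smallest number of components whose cumulative ratio reaches the threshold.
    ratio = np.cumsum(eigvals) / eigvals.sum()
    k = int(np.searchsorted(ratio, variance_threshold) + 1)
    scores = eigvecs[:, :k] * np.sqrt(eigvals[:k])
    return scores, ratio[:k]

rng = np.random.default_rng(0)
X = rng.normal(size=(60, 20))  # hypothetical standardized feature matrix
scores, cumulative_ratio = rbf_kernel_pca(X, gamma=0.01)
print("components kept:", scores.shape[1])
```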

We applied the six commonly used classification algorithms separately to each feature set using identical procedures79. Prior to building a model, each measure in the feature set was standardized so that the data have a mean of zero and a standard deviation of one. To ensure robustness and address potential sampling bias from repeated measures, we employed nested 10-fold cross-validation (10F-CV) with stratified sampling on our dataset of 1,404 samples (708 positive and 696 negative) (Fig. 3). Stratified K-Fold cross-validation (shuffle = True) was implemented for both outer and inner folds to preserve class balance across all splits and account for the repeated measures design where each participant contributed multiple trials (120 trials per participant). The dataset was randomly split into 10 equal-sized subsets for the outer cross-validation loop. In each iteration of the outer loop, one of the 10 subsets was used as the test set and the remaining nine subsets were used as the training set. For each iteration of the outer cross-validation loop, we applied the inner 10-fold cross-validation technique on the nine training subsets. The nine training subsets were further divided into 10 equal-sized subsets. The grid search method was used to tune the model’s parameters and select the optimal parameters within the stratified framework. After performing the inner loop and selecting the optimal hyperparameters for each model, we trained a new model using all nine training subsets and then evaluated the performance of the model on the test subset for that iteration of the outer loop. We reported the mean and standard deviation of the performance metrics obtained from the 10 iterations of the outer loop.
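The nested scheme can be sketched with scikit-learn as follows. The data are synthetic, the hyperparameter grid is a small hypothetical one, and placing the scaler inside the pipeline (so that it is fit on training folds only) is a design choice of this sketch, not a detail confirmed by the text.

```python
import numpy as np
from sklearn.datasets import make_classification
from sklearn.model_selection import GridSearchCV, StratifiedKFold, cross_val_score
from sklearn.pipeline import make_pipeline
from sklearn.preprocessing import StandardScaler
from sklearn.svm import SVC

# Synthetic stand-in for the brain network feature matrix.
X, y = make_classification(
    n_samples=200, n_features=20, n_informative=5, random_state=0
)

# Standardization and classifier are tuned and evaluated as one unit.
pipeline = make_pipeline(StandardScaler(), SVC(kernel="rbf"))
param_grid = {"svc__C": [1, 10], "svc__gamma": [0.001, 0.01]}  # hypothetical grid

inner_cv = StratifiedKFold(n_splits=10, shuffle=True, random_state=0)
outer_cv = StratifiedKFold(n_splits=10, shuffle=True, random_state=1)

# Inner loop: grid search selects hyperparameters on each outer training set.
search = GridSearchCV(pipeline, param_grid, cv=inner_cv, scoring="accuracy")

# Outer loop: the refit best model is scored on each held-out test fold.
outer_scores = cross_val_score(search, X, y, cv=outer_cv, scoring="accuracy")
print(f"accuracy: {outer_scores.mean():.3f} +/- {outer_scores.std():.3f}")
```

Because the hyperparameters are chosen without ever seeing the outer test fold, the outer-loop scores give a less optimistic performance estimate than a single cross-validation in which tuning and evaluation share the same folds.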

A nested 10-fold cross-validation (10F-CV) procedure for binary emotion classification. Our dataset of 1,404 samples (708 positive and 696 negative) was randomly split into 10 equal subsets. Within each outer loop of 10F-CV, nine subsets were used for training, and the remaining one for testing. An inner loop of 10-fold cross-validation with grid search determined the optimal hyperparameters of the model for each outer fold. Finally, the trained model from each outer fold was evaluated on the corresponding test set. The final performance was measured by the average accuracy across all folds.

Performance evaluation

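The performance of the machine learning models was evaluated using recall, precision, F1-score, accuracy, and the area under the receiver operating characteristic (ROC) curve (AUC). The first four metrics are defined as:

$$\text{Recall} = \frac{TP}{TP + FN}$$

$$\text{Precision} = \frac{TP}{TP + FP}$$

$$\text{F1-score} = \frac{2 \times \text{Precision} \times \text{Recall}}{\text{Precision} + \text{Recall}}$$

$$\text{Accuracy} = \frac{TP + TN}{TP + TN + FP + FN}$$

where TP, TN, FN, and FP denote true positives, true negatives, false negatives, and false positives, respectively.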
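As a worked example, the four count-based metrics can be computed from a confusion matrix as follows. The counts are hypothetical and do not correspond to any result reported in this study.

```python
def classification_metrics(tp, tn, fp, fn):
    """Recall, precision, F1-score, and accuracy from confusion-matrix counts."""
    recall = tp / (tp + fn)
    precision = tp / (tp + fp)
    f1 = 2 * precision * recall / (precision + recall)
    accuracy = (tp + tn) / (tp + tn + fp + fn)
    return {"recall": recall, "precision": precision, "f1": f1, "accuracy": accuracy}

# Hypothetical counts for illustration only.
metrics = classification_metrics(tp=80, tn=70, fp=30, fn=20)
for name, value in metrics.items():
    print(f"{name}: {value:.4f}")
```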

Results

Comparison of graph-theoretical measures between emotional states

Global graph-theoretical measures

Figure 4 shows three graph-theoretical measures (characteristic path length, global efficiency, and small-worldness) across different frequency bands for each emotional state (negative, neutral, and positive). In the high gamma band, we found that Eglob was significantly higher during negative emotional states than during neutral emotional states (FDR-corrected p-value < 0.05) (Fig. 4b). There were no significant differences in characteristic path length (Fig. 4a) or small-worldness (Fig. 4c) among emotional states in any frequency band. We also observed that all small-worldness values were greater than 1, indicating that the EEG-based brain networks in all frequency bands followed a “small-world” architecture.

Comparison of the graph-theoretical measures among different emotional states for each frequency band. (a) characteristic path length (L), (b) global efficiency (Eglob), (c) small-worldness (SW). Bar and error bar denote the mean and the standard error of the mean (SEM) of graph-theoretical measure for each frequency band across all subjects, respectively. Red star indicates statistically significant differences between emotional groups (p < 0.05) after correcting for multiple comparisons using the FDR method. δ: delta; θ: theta; α: alpha; β: beta; γ: gamma; High-γ: high gamma.

Nodal graph-theoretical measures

We next examined the difference in nodal measures of network topology, corresponding to clustering coefficient, local efficiency, node degree, betweenness centrality, and eigenvector centrality, between different emotional states for each frequency band (Figs. 5, 6 and 7). Topographical maps displaying the average values of a specific graph metric for different frequency bands are provided in Supplementary Figures S2-S6.

Our analysis revealed distinct changes in nodal measures across emotional states and frequency bands. In the high gamma band, we observed decreased clustering coefficient at the C4 channel during both negative and positive emotions compared to neutral states and at the CP6 channel during negative emotions compared to neutral states. Moreover, node degree at the CP5 channel increased significantly during negative emotions compared to neutral states across both gamma and high gamma bands. This pattern was also observed in P3 and FT7 channels for negative emotions compared to neutral and positive states, respectively. Interestingly, the F7 channel showed a decrease in node degree during positive emotions compared to neutral states in the high gamma band. For eigenvector centrality, we observed a decrease at the Oz channel during negative emotions compared to positive states in the high gamma band. However, both CP5 and TP7 channels showed increased eigenvector centrality during negative emotions compared to neutral and positive states, respectively.

Furthermore, specific channels exhibited consistent patterns across all emotional comparisons (Figs. 5, 6 and 7 and Supplementary Figures S18-S20). The O1 channel in the high gamma band showed a decrease in clustering coefficient with the order positive > neutral > negative. Similarly, the PO4 channel in the theta band displayed a decrease in betweenness centrality following the same order. Finally, the O1 channel in the gamma band exhibited a decrease in eigenvector centrality following the order neutral > positive > negative. Detailed information about statistically significant regions (FDR corrected p-value < 0.05) is provided in Supplementary Tables S1-S5.

Topographical distribution of statistically significant differences in graph-theoretical measures between negative and neutral emotional states across frequency bands. Each panel represents t-values from paired t-tests comparing specific graph metrics between emotional conditions, with spatial layout corresponding to electrode positions on the scalp. Red coloring indicates regions where negative emotions showed significantly higher values, while blue indicates regions where neutral emotions were predominant. Only electrodes achieving statistical significance (p < 0.05) are displayed. Frequency bands: δ: delta; θ: theta; α: alpha; β: beta; γ: gamma; High-γ: high gamma. Graph-theoretical measures: CC: clustering coefficient, Eloc: local efficiency, BC: betweenness centrality; D: node degree; EC: eigenvector centrality.

Topographical distribution of statistically significant differences in graph-theoretical measures between positive and neutral emotional states across frequency bands. Each panel represents t-values from paired t-tests comparing specific graph metrics between emotional conditions, with spatial layout corresponding to electrode positions on the scalp. Red coloring indicates regions where positive emotions showed significantly higher values, while blue indicates regions where neutral emotions were predominant. Only electrodes achieving statistical significance (p < 0.05) are displayed. Frequency bands: δ: delta; θ: theta; α: alpha; β: beta; γ: gamma; High-γ: high gamma. Graph-theoretical measures: CC: clustering coefficient, Eloc: local efficiency, BC: betweenness centrality; D: node degree; EC: eigenvector centrality.

Topographical distribution of statistically significant differences in graph-theoretical measures between negative and positive emotional states across frequency bands. Each panel represents t-values from paired t-tests comparing specific graph metrics between emotional conditions, with spatial layout corresponding to electrode positions on the scalp. Red coloring indicates regions where negative emotions showed significantly higher values, while blue indicates regions where positive emotions were predominant. Only electrodes achieving statistical significance (p < 0.05) are displayed. Frequency bands: δ: delta; θ: theta; α: alpha; β: beta; γ: gamma; High-γ: high gamma. Graph-theoretical measures: CC: clustering coefficient, Eloc: local efficiency, BC: betweenness centrality; D: node degree; EC: eigenvector centrality.

Comparison of connectivity weights using network-based statistics

We used NBS to identify significant changes in FC across three emotional states in six frequency bands (Fig. 8). In the high gamma band, we observed the most prominent changes in FC, especially during negative emotions. Compared to neutral and positive states, negative emotions led to decreased connections between the right central and the left occipital regions, while increasing connections between the left and the right central regions. Decreased connectivity in the left frontal region was observed consistently across multiple frequency bands (beta, gamma and high gamma) during positive emotions compared to neutral emotions. Detailed information about the significant connections identified across three emotional states is provided in Supplementary Table S6.

Comparison of the network connections between different emotional states using the network-based statistic (NBS) analysis. A total of 271 significant connections show altered connectivity across various frequency bands. Significant connections between different emotional states (negative vs. neutral, positive vs. neutral, and negative vs. positive) for each frequency band are visualized. Red lines indicate increased connectivity, and blue lines indicate decreased connectivity between emotional states. δ: delta; θ: theta; α: alpha; β: beta; γ: gamma; High-γ: high gamma.

EEG-based emotion recognition

We investigated the use of brain network features for classifying positive and negative emotions. We employed six machine learning algorithms and analyzed 21 different feature combinations including connectivity weights and graph-theoretical measures derived from brain networks. Table 1 presents the classification performance of the SVM algorithm on the binary classification task (negative vs. positive). In Fig. 9, the confusion matrix of binary emotion classification is displayed for the SVM algorithm. Classification performance of the other five machine learning algorithms is provided in Supplementary Tables S7-S11 and Supplementary Figures S7-S11. Features from the gamma and high gamma bands consistently outperformed other frequency bands (delta, theta, alpha, and beta) across all algorithms and feature types.

Connectivity weight features achieved higher accuracy than graph-theoretical measures in most frequency bands and algorithms. Among algorithms tested, the support vector machine (SVM) algorithm achieved the best performance of 79.01% accuracy (recall = 77.46%; F1-score = 78.78%; Precision = 80.36%; AUC = 79.02%). Notably, this performance was achieved by combining features from all frequency bands and both feature types (connectivity weights and graph-theoretical features). Classification performance of six machine learning algorithms using a statistically selected feature set is provided in Supplementary Tables S12-S17 and Supplementary Figures S12-S17.

Confusion matrices of the binary emotion classification (positive vs. negative) by the support vector machine (SVM) algorithm for each feature set (row: feature type, column: frequency band). In each confusion matrix, rows and columns indicate the predicted class and the true class, respectively. Concat (band) refers to features combined across all frequency bands (δ, θ, α, β, γ, High-γ) for the same feature type. Concat (feature) denotes within-band concatenation of connectivity weights and graph-theoretical measures. δ: delta; θ: theta; α: alpha; β: beta; γ: gamma; High-γ: high gamma.

Discussion

This study examined the topological characteristics of EEG-based functional brain networks during VR-induced emotional states using graph-theoretical analysis and machine learning classification. Our investigation yielded three primary findings that illuminate the neural basis of emotion processing in virtual environments. First, we identified emotion-specific network alterations: negative emotions increased high gamma activity in left central regions while decreasing theta activity in occipital areas, whereas positive emotions primarily reduced activity across frequency bands in left frontal regions. Second, our machine learning analysis demonstrated that network-based features effectively differentiate emotional states, achieving 79% classification accuracy and exceeding previous VREED dataset benchmarks by 3–6 percentage points. Third, high gamma band connectivity emerged as particularly informative for emotion discrimination, suggesting the importance of high-frequency neural oscillations in emotional processing. These results establish brain network topology as a viable biomarker for emotion recognition in VR contexts and provide new insights into the frequency-specific neural mechanisms underlying immersive emotional experiences.

Brain network efficiency and small-worldness during emotional experience in VR

Our findings revealed a higher global efficiency (Eglob) in the high gamma band during negative emotional states, compared to neutral states (Fig. 4). Global efficiency reflects the overall efficiency of information processing within a network26,80. A higher Eglob in the high gamma band during negative emotions suggests that negative stimuli trigger increased information flow and interconnectedness within brain networks, potentially reflecting elevated information processing and emotional engagement. Our results regarding the high gamma band activity and negative emotions are consistent with previous studies, supporting the notion that high-frequency brain activity plays a crucial role in negative emotional processing23,81. Prior studies have demonstrated that the high gamma band is associated with the cognitive control of emotions and that it plays a significant role in the processing of affective stimuli, especially in response to negative and positive emotional states81,82. Moreover, the high gamma band EEG signals have been found to be more sensitive and effective in emotion analysis than signals from other frequency bands81. Interestingly, the analysis of brain networks constructed from EEG data in this study revealed small-worldness values greater than 1 across all frequency bands and emotional states. The small-world architecture is considered optimal for efficient information processing. Local clustering allows for specialized processing within the brain regions, while short path lengths enable communication and integration across different brain areas83. Small-world networks are known to be crucial for efficient brain function, enabling rapid information transfer and integration across different brain regions84. Our findings highlight an essential property of brain networks during emotional experiences in VR, suggesting an efficient and organized way for brain regions to communicate and integrate information85.

Local graph measures reveal specific brain region involvement in emotional processing

We examined how emotional states influence specific properties of individual nodes within the brain networks across different frequency bands. Notably, the high gamma band showed the most prominent changes in nodal measures between emotions (negative vs. neutral, positive vs. neutral, negative vs. positive). Compared to neutral states, negative emotions exhibited increased centrality (D, EC) in the left central region (CP3, CP5, P3), suggesting these areas act as hubs during negative experiences (Fig. 5)86. While our EEG analysis focuses on scalp-level network properties, it is important to consider the underlying neural generators that may contribute to these observed patterns. The increased centrality in left central regions during negative emotions may reflect, in part, the influence of deeper limbic structures involved in emotional processing. Previous neuroimaging studies have consistently demonstrated left amygdala activation in response to negative emotional stimuli87, highlighting its crucial role in processing aversive emotions and emotional regulation. Although EEG cannot directly localize subcortical activity, the enhanced connectivity and centrality patterns we observe in overlying cortical regions may represent the network-level manifestation of coordinated limbic-cortical interactions during negative emotional states. This interpretation aligns with established models of emotion processing that emphasize the distributed nature of emotional networks involving both cortical and subcortical components36,37. Conversely, the posterior regions (POz, PO4) showed decreased segregation (CC, Eloc) and centrality (BC, D, EC) in the theta band (Fig. 5). This aligns with previous studies linking theta desynchronization with negative emotions and potential selective inhibition mechanisms88,89. Positive emotions also revealed specific network changes, primarily in the high gamma band. Decreased segregation (CC) in the right central region (C4) and decreased centrality (D) in the left frontal region (F7) suggest altered network dynamics associated with positive emotions (Fig. 6). These regions align with previous studies on emotion classification, highlighting the potential for network-based approaches to decoding emotional states in VR17. Overall, the analysis of nodal measures demonstrates the intricate relationship between emotions and the organization of brain networks at the level of individual nodes. The observed changes suggest that emotional states can influence the information that is processed and integrated within the brain.

Functional connectivity highlights emotion-specific network reconfiguration

We analyzed functional connectivity between brain regions using NBS to identify significant changes in connections across different emotional states and frequency bands (Fig. 8). The most prominent changes in FC were observed in the high gamma band, particularly during negative emotions compared to neutral and positive states. Our results showed decreased connections between the right central and left occipital regions, potentially indicating reduced communication between motor and visual areas during negative emotions. We also found increased connections between the left and right central regions, suggesting a possible strengthening of communication within motor processing areas. These findings align with previous research showing similar dense connections in the gamma band for emotional processing23,81. Furthermore, positive emotions displayed a consistent pattern of decreased connectivity in the left frontal regions across multiple frequency bands (beta, gamma, and high gamma) compared to neutral emotions. These findings suggest a potential dampening of activity in the left frontal lobe during positive emotional states. This supports the well-established role of the frontal region in emotion perception and recognition, as documented in prior studies90,91,92. Our findings on functional connectivity changes shed light on the dynamic nature of brain network communication during emotional processing. The observed patterns suggest that negative and positive emotions influence the brain’s functional architecture in distinct ways, potentially leading to altered information flow and processing strategies. In both local graph measures and functional connectivity, significant changes in negative emotions are more prominent than in positive emotions (Figs. 5, 6 and 8). These findings resonate with prior research highlighting a stronger impact of negative valence on various cognitive and emotional processes90. This suggests that VR experiences may evoke stronger neural responses in the left central and frontal regions during negative emotional states compared to positive ones.

Emotion classification in VR using brain networks

We explored the effectiveness of brain network features in classifying positive and negative emotions. We conducted a comprehensive evaluation of the SVM algorithm using graph-theoretical measures and connectivity weights on the binary emotion (negative vs. positive) classification task (Table 1; Fig. 9). Our results showed that features from the gamma and high gamma bands consistently yielded superior classification performance compared to other frequency bands (delta, theta, alpha and beta), which suggests that these higher frequency bands may carry crucial information for differentiating positive and negative emotions. Moreover, connectivity weight features outperformed graph-theoretical measures in most frequency bands and for most algorithms. This indicates that information about the strength of connections between brain regions might be more informative than overall network properties for emotion recognition. The SVM algorithm achieved high performance (79% accuracy) on the VREED dataset in classifying positive and negative emotions (Table 1; Fig. 9). The highest accuracy was achieved by combining features from all frequency bands and utilizing both connectivity weights and graph-theoretical features. These results suggest that a comprehensive approach incorporating various aspects of the brain network is beneficial for emotion classification. Importantly, our approach outperformed previous studies on the same VREED dataset, which typically achieved accuracies of 73–76% using different feature types such as spectral power and differential entropy17,93, representing a substantial improvement of 3–6 percentage points in classification accuracy.

Limitations, methodological considerations, and future work

This study has several methodological and technical limitations that warrant careful consideration. First, our analysis relied on a discrete emotional categorization (positive, negative, neutral), which may oversimplify the complex and continuous nature of human emotional experiences. While this categorization enabled clear experimental design and analysis, future work would benefit from adopting more nuanced frameworks, such as the Circumplex Model of Affect, to better capture the full spectrum of emotional complexity72. Another technical limitation lies in our use of 4-second EEG segments from the VREED dataset17 compared to longer durations (e.g., 60 s) used in established datasets like DEAP10. Although our approach aligns with the requirements of real-time emotion recognition, these shorter segments may not fully capture the temporal dynamics of emotional states, potentially limiting our ability to observe emotion evolution over time, detect subtle emotional transitions, and ensure statistical robustness of extracted features. Furthermore, the shorter segment duration reduced the effective sample size, which may constrain the statistical power to detect subtle effects, limit the generalizability of findings across different populations, and hinder the ability to account for individual variations in emotional expression. Future research should address these issues by developing VR-based EEG datasets with longer recording durations, adopting continuous emotion assessment frameworks, incorporating multimodal data to complement EEG measurements, and expanding the demographic range of participants.

A key methodological consideration is the potential influence of volume conduction on coherence-based measures, where signals from a single source can create spurious connectivity estimates between spatially proximate electrodes. To mitigate these effects, we implemented frequency-specific coherence analysis (as volume conduction is typically broadband while neural coupling is frequency-specific), applied average reference to reduce common signal contributions, and focused on relative condition differences rather than absolute values to minimize uniform biases. The systematic variation of connectivity patterns across emotional states and frequency bands suggests our findings reflect genuine functional changes beyond volume conduction artifacts, as volume conduction would remain constant across conditions within sessions. However, we acknowledge this limitation and note that future studies could benefit from volume conduction-resistant measures such as imaginary coherence, phase lag index (PLI), or weighted phase lag index (wPLI), which exclude zero-lag correlations most susceptible to these effects.
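As an illustration of why phase-lag-based measures are less sensitive to zero-lag mixing, the following Python sketch computes PLI and wPLI for two synthetic signal pairs. It is a simplified, hedged example and was not part of the present analysis: the estimates are taken over time samples of Hilbert-transformed signals rather than over trials or spectral estimates, the signals are synthetic, real EEG would first need band-pass filtering, and the function names are hypothetical.

```python
import numpy as np
from scipy.signal import hilbert


def cross_term(x, y):
    """Sample-wise cross-spectrum estimate from the two analytic signals."""
    return hilbert(x) * np.conj(hilbert(y))


def pli(x, y):
    """Phase lag index: consistency of the sign of the phase difference."""
    return float(np.abs(np.mean(np.sign(np.imag(cross_term(x, y))))))


def wpli(x, y):
    """Weighted PLI: sign consistency weighted by |Im(cross-spectrum)|."""
    im = np.imag(cross_term(x, y))
    return float(np.abs(np.mean(im)) / np.mean(np.abs(im)))


fs = 250                       # sampling rate in Hz (assumed)
t = np.arange(4 * fs) / fs     # one 4-second segment
rng = np.random.default_rng(0)
source = np.sin(2 * np.pi * 10 * t)

# Zero-lag mixing: both channels see the same source plus independent noise,
# mimicking volume conduction from a single generator.
x_mixed = source + 0.5 * rng.standard_normal(t.size)
y_mixed = source + 0.5 * rng.standard_normal(t.size)

# Lagged coupling: the second channel follows the source by a quarter cycle.
y_lagged = np.sin(2 * np.pi * 10 * t - np.pi / 2)

print(f"zero-lag mixing: PLI = {pli(x_mixed, y_mixed):.2f}, wPLI = {wpli(x_mixed, y_mixed):.2f}")
print(f"lagged coupling: PLI = {pli(source, y_lagged):.2f}, wPLI = {wpli(source, y_lagged):.2f}")
```

In the zero-lag case the imaginary part of the cross-spectrum fluctuates symmetrically around zero, so both indices stay low even though the two channels share a strong common source, whereas a consistent non-zero lag drives both indices toward one.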

Finally, this study’s reliance on traditional machine learning approaches presents another limitation, as it does not leverage the potential advantages of more recent deep learning architectures. Although our chosen methods effectively supported our research objectives focusing on functional connectivity and graph-theoretical analysis, modern algorithms such as convolutional neural networks (CNNs)94,95,96,97, long short-term memory networks (LSTMs)98,99,100, and graph neural networks (GNNs)101,102 may offer enhanced capabilities for capturing complex temporal patterns in EEG data. Future studies should explore these advanced architectures to achieve higher classification accuracy and better characterize the dynamic nature of emotional states in EEG data.

Arousal and content type limitations

An important limitation of our study is the lack of systematic control for arousal dimensions and potential confounding effects from VR content types. Our emotion classification was based solely on valence ratings (1–9 pleasantness scale) without corresponding arousal assessments. According to circumplex models of emotion, valence and arousal constitute independent dimensions that jointly define emotional experiences72. Emotions with similar valence but different arousal levels (e.g., contentment vs. excitement) may engage distinct neural networks, potentially introducing heterogeneity within our emotional categories.

The VR content encompassed three distinct categories (a city promotional film, natural situations, and a horror corridor), which likely differ in both arousal levels and cognitive processing demands. Horror corridor content may inherently evoke higher arousal due to dynamic human interactions, while the city promotional film might elicit lower-arousal, contemplative responses despite similar positive valence. Additionally, content types were not systematically balanced across emotional categories, with social scenes predominantly in positive sets and certain urban environments more frequently in negative sets. This imbalanced distribution may have confounded content-specific neural responses with emotion-specific patterns.

Our classification approach collapsed across potentially different arousal levels within each valence category, which may have introduced noise. High-arousal positive emotions (excitement from social scenes) and low-arousal positive emotions (serenity from landmarks) likely engage different connectivity patterns despite similar valence ratings. Future VR-EEG emotion studies should implement two-dimensional rating systems capturing both valence and arousal, systematically balance content types across emotional categories, and consider separate analyses for different content domains to disentangle emotion-specific from content-specific neural signatures.

Conclusion

Our study represents an important step forward in the interdisciplinary exploration of emotional neuroscience within VR by introducing a comprehensive graph-theoretical approach to understanding brain network dynamics. Unlike traditional emotion research confined to controlled laboratory settings, we leveraged immersive VR to examine neural responses across multiple frequency bands. We identified distinct patterns associated with different emotional states: negative emotions triggered increased network connectivity in the left central region, especially in high-frequency bands, while positive emotions decreased network connectivity in the left frontal region over most frequency bands. The novel contribution of our research lies not only in identifying distinct network connectivity patterns for different emotional states but also in demonstrating the potential of graph-based features for emotion classification. By achieving 79% accuracy in differentiating emotional states using network topology, we challenge existing paradigms and open new possibilities for emotion recognition technologies. Moreover, our methodological framework represents a critical bridge between neuroimaging techniques, complex network analysis, and machine learning, providing a robust and innovative methodology that extends beyond traditional emotion research. The findings demonstrate the transformative potential of integrating advanced computational techniques with neuroimaging, offering a sophisticated lens to decode the intricate neural mechanisms underlying emotional experiences in immersive environments.

Data availability

This study is based on secondary analysis of the publicly available VREED dataset originally published by Yu et al. [17]. The VREED dataset is available from Dr. Yingjie Li (liyj@i.shu.edu.cn), the corresponding author of the original publication, upon reasonable request and subject to data usage agreements. All data used in this study were obtained in accordance with the original dataset’s terms of use and ethical approval.

Code availability

The code supporting this study is available at https://github.com/khu-aims/VR-EEG-code.

References

Panksepp, J. Affective Neuroscience: the Foundations of Human and Animal Emotions (Oxford University Press, 2004).

Scherer, K. R. Psychological models of emotion. Neuropsychol. Emot. 137, 137–162 (2000).

Suhaimi, N. S., Mountstephens, J. & Teo, J. EEG-based emotion recognition: A state-of-the-art review of current trends and opportunities. Computational Intell. Neuroscience. 2020, 8875426 (2020).

Tyng, C. M., Amin, H. U., Saad, M. N. & Malik, A. S. The influences of emotion on learning and memory. Front. Psychol. 8, 235933 (2017).

Kamble, K. & Sengupta, J. A comprehensive survey on emotion recognition based on electroencephalograph (EEG) signals. Multimedia Tools Appl. 82, 27269–27304. https://doi.org/10.1007/s11042-023-14489-9 (2023).

Kunaharan, S., Halpin, S., Sitharthan, T. & Walla, P. Do EEG and startle reflex modulation vary with self-reported aggression in response to violent images? Brain Sci. 9, 298 (2019).

Cao, L., Yu, B., Dong, Y., Liu, T. & Li, J. Convolution spatial-temporal attention network for EEG emotion recognition. Physiol. Meas. 45 https://doi.org/10.1088/1361-6579/ad9661 (2024).

Liu, J., He, L., Chen, H. & Jiang, D. Directional spatial and spectral attention network (DSSA Net) for EEG-based emotion recognition. Front. Neurorobotics. 18 https://doi.org/10.3389/fnbot.2024.1481746 (2025).

Zheng, W. L. & Lu, B. L. Investigating critical frequency bands and channels for EEG-based emotion recognition with deep neural networks. IEEE Trans. Auton. Ment. Dev. 7, 162–175 (2015).

Koelstra, S. et al. DEAP: A database for emotion analysis; using physiological signals. IEEE Trans. Affect. Comput. 3, 18–31 (2011).

Jenke, R., Peer, A. & Buss, M. Feature extraction and selection for emotion recognition from EEG. IEEE Trans. Affect. Comput. 5, 327–339 (2014).

Alcañiz, M., Baños, R., Botella, C. & Rey, B. The EMMA project: emotions as a determinant of presence. PsychNology J. 1, 141–150 (2003).

Mousavi, S. M. H. et al. in CEUR Workshop Proceedings. 1–20 (Rheinisch-Westfaelische Technische Hochschule Aachen* Lehrstuhl Informatik V).

Tian, F., Hua, M., Zhang, W., Li, Y. & Yang, X. Emotional arousal in 2D versus 3D virtual reality environments. PLOS ONE. 16, e0256211. https://doi.org/10.1371/journal.pone.0256211 (2021).

Shin, D. Empathy and embodied experience in virtual environment: to what extent can virtual reality stimulate empathy and embodied experience? Comput. Hum. Behav. 78, 64–73. https://doi.org/10.1016/j.chb.2017.09.012 (2018).

Marín-Morales, J. et al. Affective computing in virtual reality: emotion recognition from brain and heartbeat dynamics using wearable sensors. Sci. Rep. 8, 13657 (2018).

Yu, M. et al. EEG-based emotion recognition in an immersive virtual reality environment: from local activity to brain network features. Biomed. Signal Process. Control. 72, 103349 (2022).

Pei, G., Fan, C., Li, T., Jin, J. & Wang, R. in Proceedings of the 2023 7th International Conference on Digital Signal Processing. 8–15.

Weathersby, F. L., King, J. B., Fox, J. C., Loret, A. & Anderson, J. S. Functional connectivity of emotional well-being: overconnectivity between default and attentional networks is associated with attitudes of anger and aggression. Psychiatry Research: Neuroimaging. 291, 52–62 (2019).

Chen, C. et al. Fusing frequency-domain features and brain connectivity features for cross-subject emotion recognition. IEEE Trans. Instrum. Meas. 71, 1–15 (2022).

Liu, X. et al. Emotion recognition and dynamic functional connectivity analysis based on EEG. IEEE Access. 7, 143293–143302 (2019).

Lee, Y. Y. & Hsieh, S. Classifying different emotional states by means of EEG-based functional connectivity patterns. PLOS ONE. 9, e95415 (2014).

Li, P. et al. EEG based emotion recognition by combining functional connectivity network and local activations. IEEE Trans. Biomed. Eng. 66, 2869–2881 (2019).

Zhang, Y., Yan, G., Chang, W., Huang, W. & Yuan, Y. EEG-based multi-frequency band functional connectivity analysis and the application of spatio-temporal features in emotion recognition. Biomed. Signal Process. Control. 79, 104157 (2023).

Roshanaei, M., Norouzi, H., Onton, J., Makeig, S. & Mohammadi, A. EEG-based functional and effective connectivity patterns during emotional episodes using graph theoretical analysis. Sci. Rep. 15, 2174. https://doi.org/10.1038/s41598-025-86040-9 (2025).

Ismail, L. E. & Karwowski, W. A graph theory-based modeling of functional brain connectivity based on EEG: A systematic review in the context of neuroergonomics. IEEE Access. 8, 155103–155135 (2020).

Jalili, M. & Knyazeva, M. G. EEG-based functional networks in schizophrenia. Comput. Biol. Med. 41, 1178–1186 (2011).

Lee, W. H., Bullmore, E. & Frangou, S. Quantitative evaluation of simulated functional brain networks in graph theoretical analysis. NeuroImage 146, 724–733 (2017).

Cao, R. et al. EEG functional connectivity underlying emotional Valance and arousal using minimum spanning trees. Front. Neurosci. 14 https://doi.org/10.3389/fnins.2020.00355 (2020).

Bullmore, E. & Sporns, O. Complex brain networks: graph theoretical analysis of structural and functional systems. Nat. Rev. Neurosci. 10, 186–198. https://doi.org/10.1038/nrn2575 (2009).

Rubinov, M. & Sporns, O. Complex network measures of brain connectivity: uses and interpretations. Neuroimage 52, 1059–1069 (2010).

Sporns, O. & Betzel, R. F. Modular brain networks. Annu. Rev. Psychol. 67, 613–640. https://doi.org/10.1146/annurev-psych-122414-033634 (2016).

van den Heuvel, M. P. & Sporns, O. Network hubs in the human brain. Trends Cogn. Sci. 17, 683–696. https://doi.org/10.1016/j.tics.2013.09.012 (2013).

Kragel, P. A. & LaBar, K. S. Decoding the nature of emotion in the brain. Trends Cogn. Sci. 20, 444–455. https://doi.org/10.1016/j.tics.2016.03.011 (2016).

Lindquist, K. A., Wager, T. D., Kober, H., Bliss-Moreau, E. & Barrett, L. F. The brain basis of emotion: a meta-analytic review. Behav. Brain Sci. 35, 121–143. https://doi.org/10.1017/s0140525x11000446 (2012).

Pessoa, L. A network model of the emotional brain. Trends Cogn. Sci. 21, 357–371. https://doi.org/10.1016/j.tics.2017.03.002 (2017).

Barrett, L. F. & Satpute, A. B. Large-scale brain networks in affective and social neuroscience: towards an integrative functional architecture of the brain. Curr. Opin. Neurobiol. 23, 361–372. https://doi.org/10.1016/j.conb.2012.12.012 (2013).