Abstract

Diabetes is one of the major health challenges in today’s world, since chronic elevation of blood sugar can cause serious and sometimes irreparable damage to organs such as the heart, kidneys, and nervous system. Early detection of this disease plays a vital role in reducing its complications. However, machine learning and deep learning models often face distrust in medical settings due to their opaque, “black-box” nature. The aim of this study was to combine three machine learning algorithms using stacking and voting methods to propose a model for type 2 diabetes detection, and to increase transparency by using the explainability techniques LIME and SHAP to identify important features. This study used 768 samples from the Pima Indians Diabetes dataset, with 8 features: age, BMI, glucose, insulin, blood pressure, skin thickness, pregnancies, and family history. Data preprocessing included mean imputation for missing or zero values, Min–Max normalization, and classification into “Normal”, “Prediabetes”, and “Diabetes” based on fasting glucose thresholds. Feature selection was performed using Spearman correlation to retain the most relevant variables. A hybrid machine learning model was developed using three base models, a neural network (NN), k-nearest neighbors (KNN), and random forest (RF), with automated hyperparameter tuning. The outputs of these models were combined via stacking using a logistic regression (LR) meta-model and, in parallel, using a soft voting method. Nested cross-validation (5 outer and 5 inner folds) was applied to prevent data leakage and ensure robust evaluation. Model interpretability was assessed using LIME for local explanations and SHAP for global feature importance. Decision thresholds and influential feature regions were identified, and model calibration and decision curves were used to evaluate clinical reliability. 
Model performance was evaluated using accuracy, precision, recall, specificity, F1-score, AUROC, the complement of the Brier score (1–B), and the complement of the expected calibration error (1–E). Statistical reliability was assessed using bootstrap resampling to compute 95% confidence intervals, as well as paired tests to compare the hybrid model with the base models and voting ensemble. Based on the evaluation metrics, the stacking ensemble achieved perfect performance for Class 0, with 100% accuracy, precision, recall, specificity, F1 score, and AUROC, alongside the highest calibration metrics (1–B: 99.9, 1–E: 98.7). The Random Forest model also excelled, achieving 100% accuracy, precision, recall, specificity, and F1 score for Class 0 and Class 2. In contrast, the KNN model consistently underperformed, particularly for Class 0 (F1: 83.3, Precision: 83.3, Recall: 83.3). The Neural Network demonstrated strong recall for Class 0 (100%), while the voting ensemble showed balanced results but was slightly outperformed by the top ensemble methods. Explainable AI analyses using LIME and SHAP revealed that glucose was the most influential predictor for identifying the Pre-diabetes state. Both methods consistently identified a decision band between 0.35 and 0.47 (corresponding to 100–125 mg/dL) as the transition zone between “Normal” and “Prediabetes”, confirming the model’s alignment with WHO/ADA diagnostic criteria. The stacking model achieved perfect performance and superior calibration, outperforming all other models in type 2 diabetes prediction and classification. Explainability techniques (LIME and SHAP) identified glucose level, body mass index, and blood pressure as key predictive factors. This approach provides an accurate and interpretable tool for clinical decision support in healthcare systems.

Introduction

Diabetes is a metabolic disorder that causes elevated blood glucose levels due to insulin resistance or insufficient insulin production. Insulin deficiency prevents glucose uptake and leads to increased blood glucose levels, which can cause long-term damage to organs including the eyes, kidneys, nerves, heart, and blood vessels1. As of 2021, diabetes affected approximately 537 million adults worldwide according to International Diabetes Federation (IDF) statistics2. By 2030 and 2045, this number is predicted to rise to 643 million and 783 million, respectively3. Diabetes can be classified into three distinct types: type 1, type 2, and gestational diabetes. Type 1 diabetes is usually diagnosed in young people and is characterized by insufficient insulin production. Type 2 diabetes typically affects adults between 45 and 60 years of age and results from metabolic disorders that increase blood glucose levels. Gestational diabetes occurs during pregnancy due to hormonal changes that raise blood glucose levels4. Moreover, diabetes affects about 7% of pregnancies each year and poses life-threatening risks to both mother and fetus5. Type 2 diabetes is a chronic condition which, if left uncontrolled, may lead to serious and disabling complications. Key issues include cardiovascular diseases (such as heart attack and stroke), kidney failure, diabetic retinopathy and blindness, peripheral neuropathy, diabetic foot ulcers, and potential limb amputations. The disease also exacerbates high blood pressure, obesity, and elevated cholesterol levels, significantly reducing patients’ quality of life6,7,8. If diabetes is not diagnosed in its early stages, it may lead to kidney failure, diabetic retinopathy, or other eye diseases. Given the increasing prevalence of diabetes, particularly type 2, the need for early and accurate diagnostic methods for better disease control is more pressing than ever. 
Recent advances in artificial intelligence (AI) have produced notable success across various domains such as medical image analysis, disease detection, and classification9. One method for the early detection of diabetes is the application of machine learning and deep learning techniques10,11.

Machine learning (ML) and artificial intelligence (AI) models have high potential for developing personalized predictive systems for diabetes. Researchers have employed machine learning and data mining techniques in various diabetes-related areas12,13, including identifying diagnostic and predictive factors in diabetes onset, predicting diabetes incidence, analyzing diabetic complications, developing drugs and treatments related to diabetes, and investigating the influence of genetic and environmental factors on the onset and progression of the disease14. By analyzing vast amounts of diabetes-related data, machine learning models can transform raw data into valuable knowledge and open new pathways for prognosis, diagnosis, and more effective treatment of diabetes15,16. Deep neural networks (DNNs) can also automatically identify complex patterns from clinical and imaging data related to diabetes17. With multiple linear and nonlinear layers, these networks have strong capabilities in data processing and classification and can provide highly accurate performance in detecting, identifying, and predicting diabetic complications such as retinopathy or neuropathy. In addition, the DNN structure allows models trained on existing data to generalize to new patients and, together with other deep learning models such as CNNs or RNNs, can be used to improve prediction accuracy and screening.

Explainable AI (XAI) enhances the transparency and trustworthiness of neural networks (NNs) and machine learning (ML) models in type 2 diabetes care by elucidating how input features influence outcomes, thereby supporting both diagnosis and treatment. For instance, DeepNetX2, a DNN-based diagnostic model, integrates XAI methods such as LIME and SHAP to make prediction mechanisms interpretable while maintaining high accuracy (up to ~97%) across multiple datasets18. Similarly, combining AutoML with XAI for diabetes risk prediction, using techniques like SHAP, LIME, integrated gradients, and counterfactual analysis, enables clinicians to understand and trust predictive factors such as glucose levels and BMI19.

According to preliminary reviews by the present researchers, no study to date has used transparent ensemble learning for type 2 diabetes prediction and classification by combining three machine learning models via stacking and voting methods and employing the explainability techniques LIME and SHAP to identify important features. Some previous studies have used only neural network-based algorithms such as the multilayer perceptron (MLP)20 and DNN21, or conventional machine learning models (CML)22. Therefore, the aim of this study is to develop a transparent and explainable hybrid model for predicting type 2 diabetes in which three machine learning algorithms (RF, KNN, and NN) are combined using stacking and voting methods. In addition, to increase model interpretability and gain the trust of clinical experts, the explainability techniques LIME and SHAP are used to identify and analyze the features that affect disease diagnosis.

Method

Dataset

In this study, the type 2 diabetes prediction dataset was collected from the Pima Indians Diabetes database. This dataset includes 768 samples and 8 features such as age, body mass index, skin thickness, blood pressure, glucose, insulin, pregnancies, and family history of diabetes. Figure 1 shows the number of samples based on the three classes: “Normal”, “Prediabetes”, and “Diabetes”.

Figure 2 illustrates the percentage of each class (“Normal”, “Prediabetes”, and “Diabetes”) across the overall dataset and the training, validation, and test splits. The distribution remains consistent, preserving the real-world imbalance for a realistic evaluation. As shown in Fig. 2, the imbalance is maintained across all data splits as follows:

- Overall dataset: 23.57% of the samples belong to the “Normal” class, 35.68% to the “Pre-diabetes” class, and 40.76% to the “Diabetes” class.
- Training set: 23.62% of instances are “Normal”, 35.67% are “Pre-diabetes”, and 40.72% are “Diabetes”.
- Validation set: 23.38% of cases correspond to “Normal”, 35.06% to “Pre-diabetes”, and 41.56% to “Diabetes”.
- Test set: 23.38% of samples are “Normal”, 36.36% are “Pre-diabetes”, and 40.26% are “Diabetes”.

Data preprocessing

In this study, several preprocessing techniques such as initial transformation, data cleaning, and missing-value handling were applied. Initially, the dataset contained various physiological features and medical tests. Based on glucose level, a new “output” column was introduced and individuals were classified into “Normal”, “Prediabetes”, and “Diabetes” categories according to the American Diabetes Association study15. According to these criteria, a fasting glucose below 100 mg/dL is considered normal, a fasting glucose between 100 and 125 mg/dL is classified as prediabetes, and a fasting glucose of 126 mg/dL or higher is classified as diabetes. As a precaution, low glucose levels (i.e., less than 70 mg/dL) were also classified as “Diabetes”. Table 1 lists the diabetes diagnostic standards published by the American Diabetes Association (ADA).
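
The labeling rule described above can be sketched as a small function. This is a minimal illustration of the stated thresholds, not the authors' code; the function name `glucose_status` and the example readings are hypothetical, and readings are assumed to be whole-number mg/dL values.

```python
def glucose_status(fasting_glucose_mg_dl: float) -> str:
    """Map a fasting glucose reading (mg/dL) to one of the three class labels."""
    if fasting_glucose_mg_dl < 70:
        # Precautionary rule stated in the text: very low readings are labeled "Diabetes".
        return "Diabetes"
    if fasting_glucose_mg_dl < 100:
        return "Normal"
    if fasting_glucose_mg_dl < 126:
        return "Prediabetes"  # 100-125 mg/dL
    return "Diabetes"  # 126 mg/dL or higher


# Hypothetical readings chosen to sit on each side of the thresholds.
labels = [glucose_status(g) for g in (65, 99, 100, 125, 126)]
print(labels)
```

Because the label is derived from glucose alone, glucose is expected to dominate any downstream feature-importance analysis.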

After categorizing the data into “Normal”, “Prediabetes”, and “Diabetes”, data cleaning was performed. Implausible zero values were identified for several features such as glucose level, blood pressure, insulin, body mass index, and age. These zero values could distort the analysis and the model predictions. To address this issue, the zero values were replaced with the mean of each feature. Exploratory analysis indicated that these variables (e.g., glucose, blood pressure, BMI, insulin, age) exhibited near-symmetric distributions, making mean imputation statistically appropriate. The mean imputation strategy was also selected to ensure methodological consistency with prior baseline clinical studies on the Pima dataset, which employed mean replacement for continuous attributes. Mean replacement preserves the global central tendency without distorting the scale of the distribution, an important consideration when using parametric classifiers. The mean value for each feature was computed according to Eq. (1):

$$\mu = \frac{1}{n}\sum\limits_{i = 1}^{n} x_{i}$$(1)

where:

- \(\mu\): mean of the feature
- \(x_i\): value of feature x for the i-th sample
- \(n\): total number of samples in the dataset
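
As a rough illustration of this imputation step, the sketch below replaces zeros in one feature column with the column mean. It assumes the mean is taken over the non-zero entries only, which the text does not state explicitly; the helper name `impute_zeros_with_mean` and the BMI values are hypothetical.

```python
def impute_zeros_with_mean(values):
    """Replace zero entries with the mean of the non-zero entries (assumption)."""
    observed = [v for v in values if v != 0]
    if not observed:
        return list(values)  # no valid value to estimate the mean from
    mean = sum(observed) / len(observed)
    return [mean if v == 0 else v for v in values]


# Hypothetical BMI column in which 0 marks a missing measurement.
bmi = [30.0, 0, 26.0, 34.0, 0]
imputed_bmi = impute_zeros_with_mean(bmi)
print(imputed_bmi)
```

In a cross-validated pipeline the mean would be estimated on the training portion only and then reused for the held-out portion.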

Next, data normalization was performed. After replacing zero values with the mean of each feature, Min–Max Scaling was used to place all variables into a common range [0, 1]. For each feature (column), the normalized value x′ was computed from the original value x according to formula (2), where:

- \(x\) is the original feature value for each sample.
- \(x_{\min}\) is the minimum value in that column (after imputation, i.e., without zeros and missing values).
- \(x_{\max}\) is the maximum value in that column.

$$x^{\prime } = \frac{x - x_{\min } }{x_{\max } - x_{\min } }$$(2)
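
Formula (2) can be illustrated with a short sketch. The split into a fit step and a transform step is an assumption made here to show how the same minimum and maximum can be reused on unseen data; the function names and glucose values are hypothetical.

```python
def fit_min_max(train_values):
    """Return the (min, max) pair learned from the training column."""
    return min(train_values), max(train_values)


def min_max_scale(values, x_min, x_max):
    """Apply x' = (x - x_min) / (x_max - x_min) with previously fitted bounds."""
    span = x_max - x_min
    if span == 0:
        return [0.0 for _ in values]  # constant column: map everything to 0
    return [(v - x_min) / span for v in values]


# Hypothetical glucose values in mg/dL.
train_glucose = [50.0, 100.0, 150.0]
x_min, x_max = fit_min_max(train_glucose)
scaled_train = min_max_scale(train_glucose, x_min, x_max)
scaled_new = min_max_scale([125.0], x_min, x_max)
print(scaled_train, scaled_new)
```

Values outside the training range would fall outside [0, 1] under this scheme, which is the usual behavior of Min–Max scaling on unseen data.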

After normalization, all values in each column were between 0 and 1. Min–Max scaling was retained to preserve normalized ranges corresponding to bounded clinical variables (e.g., glucose, BMI, blood pressure) and to maintain compatibility with algorithms sensitive to feature magnitude such as neural networks and kernel-based models. This approach was explicitly adopted to replicate the baseline model configuration used in previous studies, ensuring methodological continuity and interpretability.

To evaluate the robustness of our preprocessing pipeline, we analyzed the effect of different imputation, scaling, and feature selection methods on model performance. Figure 3 presents the results across seven panels, showing ROC-AUC (panels A–C) and Macro-F1 (panels D–G) scores for various preprocessing strategies. Specifically, panels A and D–E illustrate performance across imputation methods (mean, median), panels B and F–G show performance across scaling methods (min–max, standard, robust), and panel C shows ROC-AUC across feature selection methods (spearman, mutual info, RFE). Overall, the results indicate that minor variations exist among different preprocessing combinations, but the overall performance remains stable, confirming the reliability and reproducibility of the selected imputation, scaling, and feature selection strategies. These results further support the feature selection choices and confirm the stability of the preprocessing pipeline used in this study.

Feature selection

In this step, all numeric features (except the output) were considered candidates for selection using the Spearman rank correlation test, and for each feature the correlation coefficient ρ and p-value with respect to the target variable were calculated. This method was chosen because of its ability to detect monotonic, possibly nonlinear relationships, its suitability for ordinal data, and its simplicity of implementation compared to methods like RFE. After calculation, features were sorted by the absolute value of the correlation coefficient, and only features whose p-value was less than or equal to 0.0001 were selected as the final features. In the absence of tied ranks, the Spearman rank correlation coefficient is defined in formula (3):

$$\rho = 1 - \frac{6\sum\limits_{i = 1}^{n} d_{i}^{2} }{n\left( n^{2} - 1 \right)}$$(3)

where \(d_i\) is the difference between the rank of the feature value and the rank of the target value for the i-th sample, and \(n\) is the number of samples.

A strict significance threshold (p ≤ 10⁻⁴) was applied to ensure statistical rigor. Additionally, a Bonferroni correction was implemented to control for multiple comparisons. Spearman correlation was selected due to its robustness to monotonic yet non-linear relationships between physiological parameters and diabetic outcomes. The retained features (glucose, blood pressure, skin thickness, insulin, BMI, and age) align closely with clinically validated indicators of glycemic status, reflecting both statistical and physiological relevance. Table 2 summarizes which features were selected by each method, highlighting the consistency between Spearman filtering and Mutual Information.
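
The ranking step can be sketched in plain Python as below. This is an illustrative computation of ρ only: it handles tied values by average ranks and then applies the Pearson formula to the ranks, and it omits the p-value and Bonferroni steps. The helper names and the toy data are hypothetical.

```python
def average_ranks(values):
    """Return 1-based ranks; tied values share the average of their positions."""
    order = sorted(range(len(values)), key=lambda i: values[i])
    ranks = [0.0] * len(values)
    i = 0
    while i < len(order):
        j = i
        while j + 1 < len(order) and values[order[j + 1]] == values[order[i]]:
            j += 1
        avg = (i + j) / 2 + 1  # mean of positions i..j, shifted to 1-based
        for k in range(i, j + 1):
            ranks[order[k]] = avg
        i = j + 1
    return ranks


def pearson(a, b):
    """Pearson correlation of two equal-length sequences."""
    n = len(a)
    mean_a, mean_b = sum(a) / n, sum(b) / n
    cov = sum((x - mean_a) * (y - mean_b) for x, y in zip(a, b))
    var_a = sum((x - mean_a) ** 2 for x in a)
    var_b = sum((y - mean_b) ** 2 for y in b)
    return cov / (var_a * var_b) ** 0.5


def spearman_rho(x, y):
    """Spearman correlation: Pearson correlation of the rank vectors."""
    return pearson(average_ranks(x), average_ranks(y))


# Hypothetical toy data: glucose readings and the 0/1/2 class label.
glucose = [85, 99, 110, 123, 140, 160]
label = [0, 0, 1, 1, 2, 2]
rho = spearman_rho(glucose, label)
print(round(rho, 3))
```

Features would then be sorted by the absolute value of ρ and filtered by their p-values, as described above.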

In the end, the selected subset contained the parameters that showed the statistically strongest association with diabetes status. This approach reduces the dimensionality of the problem and removes noise, making the final model faster and more interpretable. Table 3 presents the comparative performance of different preprocessing configurations, indicating that the baseline pipeline achieved competitive results with minimal variance across alternatives.

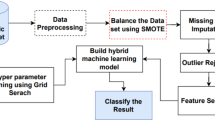

Hybrid model framework

In this hybrid method, which is based on voting and stacking strategies, three base models (RF, KNN, and an NN) with distinct architectures and hyperparameters are used within a coherent, integrated framework. The data were split into training, validation, and test sets in an 80:10:10 ratio. To ensure a robust evaluation and prevent data leakage, a critical concern given the unusually high accuracies (≈98–99%) reported on the Pima dataset, a nested cross-validation (CV) framework was employed. In this framework:

- The outer loop consisted of 5 folds, used for unbiased model evaluation.
- The inner loop also consisted of 5 folds, used for hyperparameter optimization and stacking of the base models (Random Forest, K-Nearest Neighbors, and Deep Neural Network)23.

All preprocessing steps including imputation, scaling, and feature selection were fit exclusively on the training portion of each fold and then applied to the validation/test folds without refitting24,25. Hyperparameter tuning and stacking were performed strictly within the inner training folds. Table 4 presents the model performance across the five outer folds of the nested cross-validation. For each fold, key metrics including accuracy, precision, recall, F1-score, and ROC-AUC are reported. The consistently high values across folds indicate the robustness and stability of the stacking model.

Figure 4 illustrates the complete nested cross-validation and model stacking pipeline. The data is first split into 5 outer folds for final evaluation. All preprocessing and hyperparameter tuning are performed exclusively on each outer training fold, and the final stacking model is evaluated on the completely held-out test data. This rigorous approach prevents any data leakage, ensuring the validity and reliability of the reported high-performance metrics.
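
The index bookkeeping behind a 5 × 5 nested cross-validation can be sketched as follows. This is a simplified, non-stratified illustration using only the standard library; the function names and seeds are hypothetical, and model fitting is left out so that only the separation of outer test data from inner tuning data is shown.

```python
import random


def k_fold_indices(indices, k, seed):
    """Shuffle the indices and deal them into k nearly equal folds."""
    shuffled = list(indices)
    random.Random(seed).shuffle(shuffled)
    return [shuffled[i::k] for i in range(k)]


def nested_cv_splits(n_samples, outer_k=5, inner_k=5, seed=0):
    """Yield (outer_train, outer_test, inner_splits) for each outer fold."""
    outer_folds = k_fold_indices(range(n_samples), outer_k, seed)
    for i, outer_test in enumerate(outer_folds):
        outer_train = [idx for j, fold in enumerate(outer_folds) if j != i for idx in fold]
        # Inner folds are drawn from the outer training part only.
        inner_folds = k_fold_indices(outer_train, inner_k, seed + i + 1)
        inner_splits = []
        for m, inner_val in enumerate(inner_folds):
            inner_train = [idx for j, fold in enumerate(inner_folds) if j != m for idx in fold]
            inner_splits.append((inner_train, inner_val))
        yield outer_train, outer_test, inner_splits


splits = list(nested_cv_splits(768))
for outer_train, outer_test, inner_splits in splits:
    # Preprocessing and tuning would be fitted on inner_train / outer_train only.
    assert not set(outer_train) & set(outer_test)
print(len(splits), len(splits[0][2]))
```

A stratified variant would additionally keep the class proportions equal across folds, which matters for the imbalanced three-class target used here.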

In addition to the stacking-based hybrid model (using the stacking classifier class), a model using voting classifier was also defined, in which voting with weights [1, 2, 2] was used for the [NN, KNN, RF] models, respectively. The aim of this design is to compare the performance of the two aggregation strategies, stacking and voting. Formula (4) presents the proposed architecture. In this method, the base models NN, KNN, and RF first make predictions on the training data. The outputs of these models are used as new features. The meta-model then combines these features for the final prediction. If the outputs of the base models are probabilities, then for each sample x and each model m, the following probability vector is produced:

These vectors for each model are concatenated and given as input to the meta-model. The meta-model (LR) uses a logistic regression function to predict the final class, where W and b are the meta-model parameters learned during training.
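
Formula (4) is not reproduced above, so the sketch below is only one reading of the description: each base model m returns a class-probability vector for a sample, soft voting takes a weighted average of these vectors with weights [1, 2, 2] for [NN, KNN, RF], and the stacking meta-model applies a softmax to W·z + b, where z is the concatenation of the vectors. The softmax (multinomial) form, the probability values, and the matrix `W_demo` are assumptions for illustration, not fitted parameters.

```python
import math


def soft_vote(prob_vectors, weights):
    """Weighted average of per-model class-probability vectors."""
    total = sum(weights)
    n_classes = len(prob_vectors[0])
    return [
        sum(w * p[c] for w, p in zip(weights, prob_vectors)) / total
        for c in range(n_classes)
    ]


def softmax(scores):
    """Numerically stable softmax."""
    top = max(scores)
    exps = [math.exp(s - top) for s in scores]
    norm = sum(exps)
    return [e / norm for e in exps]


def stack_predict(prob_vectors, W, b):
    """Meta-model forward pass: softmax(W z + b) on the concatenated vectors."""
    z = [p for vec in prob_vectors for p in vec]
    scores = [sum(w * x for w, x in zip(row, z)) + bias for row, bias in zip(W, b)]
    return softmax(scores)


# Hypothetical base-model outputs for one sample (classes 0, 1, 2).
p_nn = [0.10, 0.70, 0.20]
p_knn = [0.20, 0.50, 0.30]
p_rf = [0.05, 0.85, 0.10]

voted = soft_vote([p_nn, p_knn, p_rf], weights=[1, 2, 2])

# Illustrative weights: row c simply sums the class-c probability of each model.
W_demo = [[1.0 if col % 3 == c else 0.0 for col in range(9)] for c in range(3)]
b_demo = [0.0, 0.0, 0.0]
stacked = stack_predict([p_nn, p_knn, p_rf], W_demo, b_demo)
print(voted, stacked)
```

The difference between the two strategies is that the voting weights are fixed in advance, whereas W and b are learned from out-of-fold base-model predictions.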

All analyses were conducted in Python version 3.12.4 (tags/v3.12.4:8e8a4ba, Jun 6 2024, 19:30:16) [MSC v.1940 64-bit (AMD64)]. The following major packages and versions were used: numpy (1.26.4), pandas (2.2.1), matplotlib (3.8.3), seaborn (0.13.2), and scikit-learn (1.5.2).

Model performance evaluation metrics

To evaluate the performance of the different models (RF, KNN, NN, and the voting and stacking classifiers), the metrics below were used. They examine complementary aspects of model performance and help identify the best model. The formulas are given below, where:

- TP (true positive): the number of samples correctly classified as positive.
- TN (true negative): the number of samples correctly classified as negative.
- FP (false positive): the number of samples incorrectly classified as positive.
- FN (false negative): the number of samples incorrectly classified as negative.

| \(\text{Accuracy}=\frac{\text{TP}+\text{TN}}{\text{TP}+\text{TN}+\text{FP}+\text{FN}}\) | \(\text{Precision}=\frac{\text{TP}}{\text{TP}+\text{FP}}\) |
|---|---|
| \(\text{Recall}=\frac{\text{TP}}{\text{TP}+\text{FN}}\) | \(\text{Specificity}=\frac{\text{TN}}{\text{TN}+\text{FP}}\) |
| \(\text{Macro AUROC}=\frac{1}{K}\sum\limits_{k=1}^{K}\text{AUROC}_{k}\) | \(1-B=1-\frac{1}{N}\sum\limits_{i=1}^{N}\left(p_{i}-y_{i}\right)^{2}\) |
| \(1-E=1-\sum\limits_{m=1}^{M}\frac{\lvert B_{m}\rvert}{N}\left\lvert \text{acc}(B_{m})-\text{conf}(B_{m})\right\rvert\) | \(\text{F1 Score}=\frac{2\times \text{Precision}\times \text{Recall}}{\text{Precision}+\text{Recall}}\) |
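
For a three-class problem, the confusion counts are usually obtained per class in a one-vs-rest fashion. The sketch below assumes that convention, which the text does not spell out; the function names and toy predictions are hypothetical.

```python
def one_vs_rest_counts(y_true, y_pred, positive):
    """Return (TP, TN, FP, FN) when `positive` is treated as the positive class."""
    tp = sum(t == positive and p == positive for t, p in zip(y_true, y_pred))
    tn = sum(t != positive and p != positive for t, p in zip(y_true, y_pred))
    fp = sum(t != positive and p == positive for t, p in zip(y_true, y_pred))
    fn = sum(t == positive and p != positive for t, p in zip(y_true, y_pred))
    return tp, tn, fp, fn


def classification_metrics(tp, tn, fp, fn):
    """Accuracy, precision, recall, specificity and F1 from the four counts."""
    def ratio(num, den):
        return num / den if den else 0.0  # avoid division by zero

    precision = ratio(tp, tp + fp)
    recall = ratio(tp, tp + fn)
    return {
        "accuracy": ratio(tp + tn, tp + tn + fp + fn),
        "precision": precision,
        "recall": recall,
        "specificity": ratio(tn, tn + fp),
        "f1": ratio(2 * precision * recall, precision + recall),
    }


# Hypothetical labels: 0 = Normal, 1 = Prediabetes, 2 = Diabetes.
y_true = [0, 0, 1, 1, 2, 2]
y_pred = [0, 1, 1, 1, 2, 2]
counts = one_vs_rest_counts(y_true, y_pred, positive=1)
metrics = classification_metrics(*counts)
print(counts, metrics)
```

Macro-averaged scores are then the unweighted mean of the per-class values.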

Statistical evaluation of model performance

We performed a comprehensive statistical evaluation to assess whether the observed improvements from the stacking ensemble and other models were statistically meaningful. Specifically, macro-F1 and macro-AUROC metrics were computed across 200 bootstrap resamples (sampling with replacement) of the test set and repeated cross-validation (CV) folds. For each classifier, the 95% confidence interval (CI) was estimated using the standard error method. Additionally, paired t-tests and Wilcoxon signed-rank tests were conducted to compare the stacking ensemble against all strong baselines (NN, KNN, RF, and voting ensemble).
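
A bootstrap confidence interval based on the standard error can be sketched as follows. Accuracy is used as a stand-in for Macro-F1 to keep the example short, and the interval is taken as the bootstrap mean ± 1.96 standard errors; both choices, along with the function names and toy labels, are assumptions rather than details from the study.

```python
import random
import statistics


def accuracy(y_true, y_pred):
    """Fraction of matching labels."""
    return sum(t == p for t, p in zip(y_true, y_pred)) / len(y_true)


def bootstrap_ci(y_true, y_pred, metric, n_resamples=200, seed=0):
    """Return (mean, lower, upper) of the metric over bootstrap resamples."""
    rng = random.Random(seed)
    n = len(y_true)
    estimates = []
    for _ in range(n_resamples):
        idx = [rng.randrange(n) for _ in range(n)]  # sample with replacement
        estimates.append(metric([y_true[i] for i in idx], [y_pred[i] for i in idx]))
    mean = statistics.fmean(estimates)
    se = statistics.stdev(estimates)  # bootstrap standard error
    return mean, mean - 1.96 * se, mean + 1.96 * se


# Hypothetical test-set labels and predictions.
y_true = [0, 0, 1, 1, 2, 2, 1, 0, 2, 1]
y_pred = [0, 1, 1, 1, 2, 2, 1, 0, 1, 1]
mean, lower, upper = bootstrap_ci(y_true, y_pred, accuracy)
print(round(mean, 3), round(lower, 3), round(upper, 3))
```

With a normal-approximation interval of this kind, the upper bound can exceed 1 for near-perfect models; a percentile interval avoids that.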

Meta-learner evaluation

Within the stacking ensemble, the impact of different meta-learners was evaluated by comparing LR, RF, and Gradient Boosting Decision Tree (GBDT). Each meta-learner received as input the out-of-fold predicted probabilities generated by the base learners (NN, RF, and KNN) during training. The same training, validation, and test splits were used across all meta-learners to ensure consistency.

Performance was assessed using Macro-F1 and Macro-AUROC, and 95% confidence intervals were calculated via 1000 bootstrap resamples of the test set. This analysis allowed us to identify LR as the most stable and interpretable meta-learner, preserving both classification balance (Macro-F1) and discriminative power (Macro-AUROC).

Explainable AI

To interpret the predictions of the hybrid machine learning model, both local (LIME) and global (SHAP) explainability techniques were employed. The LIME technique provides local and interpretable explanations for each prediction by creating small perturbations in the input data around each sample and approximating the complex model’s prediction with simple models. This method helps clarify and interpret the outputs of machine learning models at the individual sample level. In Formula (5), \(\hat{f}\left( x \right)\) denotes the local explanation of the model’s prediction at input x. Here, g(x′) is the interpretable model’s prediction on the perturbed sample x′, h_j(x) represents interpretable features, ω_j are the learned coefficients, and M is the number of interpretable features. In the hybrid model presented in this study, LIME is used as an interpretability technique to provide local, interpretable explanations for why the model made a particular prediction.
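
Formula (5) is not reproduced in the text above. A form consistent with the symbols defined there would be the following additive surrogate; this is a reconstruction under that assumption (including the intercept-free form), not necessarily the authors' exact expression:

$$\hat{f}\left( x \right) = g\left( x^{\prime } \right) = \sum\limits_{j = 1}^{M} \omega_{j} h_{j} \left( x \right)$$

Under this reading, each coefficient \(\omega_j\) indicates how strongly interpretable feature j pushes the local prediction up or down in the neighborhood of x.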

SHAP values provide a comprehensive framework for interpreting the output of any machine learning model and indicate the importance of each feature in the prediction process. These values are based on Shapley values from cooperative game theory and offer a principled approach to allocating the “contribution” of each input feature to the model’s prediction. In Formula (6), ϕ_i is the SHAP value for feature i, K is the number of samples, f(x_k) is the model’s prediction for sample k, E(f(x_k)) is the expected prediction for sample k, and ϕ^{(k)}_i denotes the contribution of feature i in sample k. In the hybrid model presented in this study, SHAP values are used to explain feature importance at the dataset-wide level and to determine which features have a major impact on the model’s predictions.
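
The game-theoretic idea behind SHAP can be illustrated by computing exact Shapley values for a tiny model by enumerating all feature coalitions. This is a didactic sketch, not how the SHAP library computes its estimates; it assumes that features absent from a coalition are replaced by a fixed baseline value, and the toy model, inputs, and baseline are hypothetical.

```python
from itertools import combinations
from math import factorial


def shapley_values(predict, x, baseline):
    """Exact Shapley values; absent features take their baseline value."""
    n = len(x)
    phi = [0.0] * n
    for i in range(n):
        others = [j for j in range(n) if j != i]
        for size in range(n):
            weight = factorial(size) * factorial(n - size - 1) / factorial(n)
            for subset in combinations(others, size):
                with_i = [x[j] if (j in subset or j == i) else baseline[j] for j in range(n)]
                without_i = [x[j] if j in subset else baseline[j] for j in range(n)]
                phi[i] += weight * (predict(with_i) - predict(without_i))
    return phi


def toy_model(features):
    """Hypothetical risk score with one interaction term."""
    glucose, bmi, age = features
    return 0.6 * glucose + 0.3 * bmi + 0.1 * glucose * age


x = [0.40, 0.50, 0.30]         # normalized sample to explain
baseline = [0.20, 0.40, 0.25]  # reference point, e.g. feature means
phi = shapley_values(toy_model, x, baseline)

# Additivity: contributions sum to the gap between prediction and baseline.
gap = toy_model(x) - toy_model(baseline)
print(phi, sum(phi), gap)
```

A global importance score per feature is commonly obtained by averaging the absolute per-sample contributions over the dataset.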

Global SHAP summary and class-wise plots were generated to compare feature importance across “Normal”, “Prediabetes”, and “Diabetes” classes. To reconcile potential discrepancies between LIME and SHAP explanations, results were examined jointly: LIME provided local insights for specific cases, while SHAP identified globally consistent feature contributions. Additionally, clinically meaningful interpretations were derived by defining decision thresholds and feature regions where model predictions are likely to change, and by evaluating model calibration and decision curves to assess clinical reliability and utility.

Results

As Fig. 5 shows, there are significant differences in the distribution of data across the different classes. These differences are particularly evident in the features age, glucose, blood pressure, and insulin.

As mentioned in the methods section, feature selection was performed using Spearman’s correlation coefficient, and only variables with p-value ≤ 0.0001 were chosen as selected features, indicating very high confidence in their true association with the outcome. The correlation matrix plot shows, in addition to the relationships between features and the outcome, the correlations among the features themselves. This method retains features with the greatest predictive power and the lowest likelihood of random association, and it helps prevent overfitting. In this study and based on the Spearman correlation criterion with significance level p-value ≤ 0.0001, the features glucose, blood pressure, body mass index (BMI), age, and insulin were selected as the variables for modeling. Figure 6 shows the list of top features.

Table 5 summarizes the RF model architecture: number of trees: from 50 to 300, maximum tree depth: from 3 to 15, minimum samples to split a node: from 2 to 10, number of random features at each node: ‘sqrt’, ‘log2’ or a float between 0.1 and 1.0. With these settings, the RF creates high diversity among trees through sampling with replacement and random feature selection at each node, reduces variance errors, and is robust to noise.

Table 6 summarizes the KNN model architecture: number of neighbors between 3 and 30, leaf size between 20 and 60, weighting method ‘uniform’ or ‘distance’, distance metric: ‘manhattan’ or ‘minkowski’. With these settings, KNN, while maintaining simplicity, captures local patterns well and performs acceptably at nonlinear decision boundaries.

Table 7 summarizes the NN model architecture, which has three consecutive hidden layers, number of neurons: first layer = 64, second layer = 128, third layer = 64, activation function in all hidden layers: ReLU, and after each hidden layer a Dropout layer to prevent overfitting, with rates [0.20, 0.30, 0.20], and a Softmax output layer for multi-class classification (Fig. 7). The three-layer NN with ReLU activations and Dropout layers combines the ability to learn complex nonlinear relationships with appropriate control over overfitting. Figure 7 shows the structure of the neural network used in the modeling.

Stacking ensemble architecture

After each of the three base models was trained on the training data with their best hyperparameter settings, their probabilistic outputs were passed as inputs to a simple LR model. This model is used as the meta-learner in the stacking structure. The stacked model training process was carried out using scikit-learn’s stacking classifier class and utilizing internal fivefold cross-validation. This arrangement is designed to prevent overfitting and to obtain an optimal combination of the base models’ output weights. Table 8 summarizes the stacking ensemble architecture.

Confusion matrix of model based on data splits

Figure 8 presents the confusion matrix for each model, showing the number of correct and incorrect predictions. This matrix is an appropriate tool for analyzing how the models perform in classifying samples.

Model performance evaluation

Based on the comprehensive evaluation in Table 9, the stacking ensemble model confirmed its superiority. It achieved flawless scores (100%) across all classification metrics (accuracy, precision, recall, specificity, F1 score, and AUROC) for class 0. Furthermore, it demonstrated the best calibration, marked by a Brier score complement (1–B) of 99.9% and an expected calibration error complement (1–E) of 98.7% for class 0. The RF model also delivered exceptionally strong results, particularly for classes 0 and 2, where it achieved perfect scores in several key metrics. In stark contrast, the KNN model consistently underperformed across all classes and metrics, highlighted by a low F1 score of 83.3% for class 0. The neural network and voting ensemble both provided robust and balanced performance; the neural network notably achieved 100% recall for class 0, while the voting ensemble produced strong overall results, though slightly trailing the leading stacking and RF models.

Figure 9 displays the results of various model performance metrics, including accuracy, precision, recall, specificity, and F1 score.

Macro-averaged ROC curves for all models

Figure 10 presents the macro-averaged Receiver Operating Characteristic (ROC) curves, comparing the overall discriminative ability of all models across all classes. The neural network achieves the highest AUC (99.8%), closely followed by the voting ensemble (99.6%) and the stacking ensemble (99.5%). All ensemble methods and the NN demonstrate excellent classification performance (AUC > 99%), while KNN shows relatively lower but still strong performance (AUC = 98.9%).

Calibration error comparison across models

Figure 11 presents the expected calibration error (ECE) for all evaluated models, with lower values indicating better reliability of predicted probabilities. The stacking ensemble demonstrates the best calibration performance (ECE: 0.87%), followed closely by the voting ensemble (1.09%) and RF (2.60%). In contrast, KNN exhibits the highest calibration error (10.36%), reflecting poor probabilistic consistency. These results highlight that the stacking ensemble not only achieves superior classification accuracy but also provides well-calibrated probability estimates, essential for risk stratification and clinical decision-making.
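
The two calibration quantities can be computed as in the sketch below. It assumes equal-width confidence bins for ECE and a binary (one-vs-rest) form of the Brier score; the number of bins, the function names, and the toy inputs are illustrative choices, not values taken from the study.

```python
def expected_calibration_error(confidences, correct, n_bins=10):
    """ECE: bin-size-weighted gap between accuracy and mean confidence."""
    n = len(confidences)
    bins = [[] for _ in range(n_bins)]
    for conf, hit in zip(confidences, correct):
        idx = min(int(conf * n_bins), n_bins - 1)  # confidence 1.0 goes to the last bin
        bins[idx].append((conf, hit))
    ece = 0.0
    for members in bins:
        if not members:
            continue
        avg_conf = sum(c for c, _ in members) / len(members)
        acc = sum(h for _, h in members) / len(members)
        ece += len(members) / n * abs(acc - avg_conf)
    return ece


def brier_score(probs, outcomes):
    """Mean squared difference between predicted probability and 0/1 outcome."""
    return sum((p - y) ** 2 for p, y in zip(probs, outcomes)) / len(probs)


# Hypothetical predictions: confidence of the predicted class and whether it was right.
confidences = [0.95, 0.95, 0.65, 0.65]
correct = [1, 1, 1, 0]
ece = expected_calibration_error(confidences, correct)
brier = brier_score([0.9, 0.2], [1, 0])
print(round(ece, 3), round(brier, 3))
```

Both quantities are errors, so lower is better; the complements 1–E and 1–B turn them into scores where higher is better.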

Effect of meta-learner choice

Figure 12 compares the performance of different meta‑learners (LR, RF, and GBDT) within the stacking ensemble framework. All three models achieved very high Macro‑AUROC values (greater than 0.99), confirming excellent discriminative ability. However, differences emerged in Macro‑F1 scores: LR and RF maintained balanced performance at approximately 98.9%, while GBDT dropped to around 92.7%, indicating lower precision‑recall balance and potential overfitting. Overall, LR emerged as the optimal meta‑learner, offering a more stable and interpretable configuration that preserves both classification balance (Macro‑F1) and discriminative power (Macro‑AUROC).

Statistical evaluation of model performance

The results of the statistical evaluation are summarized in Fig. 13 (“Bootstrap 95% CI & p-values vs stacking model”). Darker bars represent Macro-F1, lighter bars correspond to Macro-AUROC, and the vertical error bars visualize the 95% confidence intervals. Values annotated atop each bar show the p-value of the paired comparisons against stacking. All comparisons yielded p < 0.05, demonstrating statistically significant differences. In particular, the stacking ensemble achieved the highest mean performance with the narrowest confidence intervals, confirming its superiority and robustness. The voting ensemble (p = 0.026) was the closest competitor but still significantly inferior. In summary, these results provide strong statistical evidence that the stacking ensemble significantly outperforms all baseline models in both classification accuracy and discrimination ability.

Explainable AI

The LIME explanation identified the condition “0.36 < Glucose ≤ 0.47” as the primary reason for the “Pre-diabetes” prediction and assigned it a high weight of 0.88. However, the individual’s actual glucose value was 0.36, which did not satisfy this condition as it required a value strictly greater than 0.36 (Fig. 14).

Figure 15 illustrates the global SHAP feature importance for the top predictive variables. The findings demonstrate that blood pressure, insulin, and glucose exerted the highest overall influence on the model’s predictions, reflecting the well-established physiological interdependence between insulin and glucose in metabolic regulation.
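Global SHAP importance is commonly summarized as the mean absolute SHAP value of each feature across samples. The sketch below shows only that aggregation step; the attribution matrix and the generic feature names are made up for illustration and are unrelated to the values in Fig. 15.

```python
def global_importance(shap_values, feature_names):
    """Rank features by mean absolute attribution across all samples."""
    n = len(shap_values)
    scores = {
        name: sum(abs(row[j]) for row in shap_values) / n
        for j, name in enumerate(feature_names)
    }
    # Largest mean |attribution| first.
    return sorted(scores.items(), key=lambda kv: kv[1], reverse=True)


# Made-up attributions: rows are samples, columns are features.
features = ["feature_a", "feature_b", "feature_c"]
shap_values = [
    [0.30, -0.10, 0.02],
    [-0.25, 0.15, -0.01],
    [0.35, -0.05, 0.03],
]
ranking = global_importance(shap_values, features)
for name, score in ranking:
    print(f"{name}: {score:.3f}")
```

Because absolute values are averaged, a feature that pushes predictions strongly in both directions still ranks as important.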

Local LIME probabilities (0.96 probability for Pre-diabetes) are consistent with SHAP directional explanations: the sample’s glucose value (0.36 normalized) lies within the SHAP-defined “decision band” (0.35–0.47) that shifts model output toward Pre-diabetes. While LIME focuses on local feature perturbation, SHAP aggregates contributions under a game-theoretic framework, leading to smoother gradients across individuals. Both frameworks converge in their primary attribution of glucose as the decisive marker for intermediate dysregulation (Fig. 16A,B).

The SHAP dependency plot (Fig. 17A) identifies the transition zone between “Normal” and “Pre-diabetes” near normalized glucose values of 0.35–0.47. Within this region, SHAP values switch sharply from negative to positive, confirming the model’s internal threshold for early metabolic risk escalation. Clinically, this corresponds approximately to plasma glucose levels of 100–125 mg/dL (WHO/ADA criteria for prediabetes), indicating the model’s sound alignment with established diagnostic reference ranges.
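Mapping a normalized value back to clinical units inverts the Min–Max transformation. The helper below is a minimal sketch; the minimum and maximum passed in the example are placeholders, since the raw glucose bounds used for scaling are not stated in this section.

```python
def normalize(x, x_min, x_max):
    """Min-Max normalization to the [0, 1] range."""
    return (x - x_min) / (x_max - x_min)


def denormalize(x_norm, x_min, x_max):
    """Inverse of Min-Max normalization: back to the original units."""
    return x_min + x_norm * (x_max - x_min)


# Placeholder bounds for illustration only (not the study's scaling parameters).
example_min, example_max = 50.0, 200.0
value = denormalize(0.5, example_min, example_max)
print(value)
```

Applying `denormalize` to both ends of a normalized band, with the bounds actually used during preprocessing, gives the corresponding range in mg/dL.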

Finally, probability calibration analysis (ECE = 1.09%, Brier = 0.87%) and decision curve evaluation (Fig. 17B) both confirm that the stacking model provides well-calibrated and clinically reliable probability outputs, improving net benefit across plausible risk thresholds compared to baseline models (RF, NN, KNN).
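Decision curve analysis rests on the net benefit at a given risk threshold, which weighs true positives against false positives according to the odds of the threshold. The following sketch treats one class against the rest as a binary problem; the labels, predicted risks, and thresholds are hypothetical.

```python
def net_benefit(y_true, risk, threshold):
    """Net benefit of intervening on everyone whose predicted risk >= threshold."""
    n = len(y_true)
    tp = sum(1 for y, r in zip(y_true, risk) if r >= threshold and y == 1)
    fp = sum(1 for y, r in zip(y_true, risk) if r >= threshold and y == 0)
    # False positives are penalized by the odds of the threshold probability.
    return tp / n - (fp / n) * (threshold / (1 - threshold))


def net_benefit_treat_all(y_true, threshold):
    """Reference strategy: intervene on every patient regardless of predicted risk."""
    prevalence = sum(y_true) / len(y_true)
    return prevalence - (1 - prevalence) * (threshold / (1 - threshold))


# Hypothetical binary outcomes and predicted risks for ten patients.
y_true = [1, 1, 1, 0, 0, 0, 0, 0, 0, 0]
risk = [0.9, 0.8, 0.3, 0.6, 0.2, 0.1, 0.1, 0.05, 0.05, 0.02]

for pt in (0.1, 0.25, 0.5):
    model_nb = net_benefit(y_true, risk, pt)
    all_nb = net_benefit_treat_all(y_true, pt)
    print(f"threshold={pt:.2f}  model={model_nb:.3f}  treat-all={all_nb:.3f}")
```

A model is clinically useful at a threshold when its net benefit exceeds both the treat-all value and zero (the treat-none strategy).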

Discussion

In this study, type 2 diabetes was predicted by combining three models (RF, KNN, and NN), and two explainable AI techniques, LIME and SHAP, were used to identify the features most important for diagnosis. Among all models, the stacking model achieved the best performance across all evaluation metrics, including accuracy, sensitivity, specificity, and F1 score. The voting model also demonstrated strong performance, with high accuracy and appreciable values for sensitivity, specificity, and F1 score compared with other models. In contrast, the KNN model performed relatively poorly, achieving lower results across all of these evaluation metrics.

Studies have consistently demonstrated that combining multiple models through ensemble learning techniques, particularly stacking, can effectively integrate the complementary strengths of diverse algorithms, leading to enhanced predictive performance and greater robustness compared to individual models26. Stacking operates by training a meta-learner that learns how to combine the outputs of several base learners, enabling it to capture both linear and non-linear relationships that single models might overlook. This layered architecture allows the system to balance the bias and variance trade-offs inherent in different algorithms, producing a more generalized model that performs well on unseen data. Notably, stacking has been shown to yield marked improvements in multi-class diabetes classification tasks27, highlighting its potential for complex biomedical prediction problems where patterns are often subtle and heterogeneous.
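The two-level mechanism described above can be made explicit by building the meta-learner's training set from out-of-fold predictions. This is a minimal sketch on synthetic data with two base models and untuned settings, all of which are assumptions; it illustrates the general technique rather than the pipeline used in this study.

```python
import numpy as np
from sklearn.datasets import make_classification
from sklearn.ensemble import RandomForestClassifier
from sklearn.linear_model import LogisticRegression
from sklearn.model_selection import cross_val_predict, train_test_split
from sklearn.neighbors import KNeighborsClassifier

# Synthetic three-class data standing in for the real dataset (an assumption).
X, y = make_classification(
    n_samples=300, n_features=8, n_informative=5, n_classes=3, random_state=0
)
X_tr, X_te, y_tr, y_te = train_test_split(
    X, y, test_size=0.3, stratify=y, random_state=0
)

base_models = [
    RandomForestClassifier(n_estimators=50, random_state=0),
    KNeighborsClassifier(n_neighbors=5),
]

# Level 0: out-of-fold class probabilities, so the meta-learner never sees a
# prediction made on a sample that the base model was trained on.
oof = np.hstack(
    [cross_val_predict(m, X_tr, y_tr, cv=5, method="predict_proba") for m in base_models]
)

# Level 1: logistic regression learns how to weight the base-model probabilities.
meta = LogisticRegression(max_iter=1000).fit(oof, y_tr)

# At prediction time the base models are refit on the full training split.
test_features = np.hstack(
    [m.fit(X_tr, y_tr).predict_proba(X_te) for m in base_models]
)
accuracy = meta.score(test_features, y_te)
print(f"stacked accuracy on held-out data: {accuracy:.3f}")
```

Using out-of-fold predictions at level 0 is what keeps the meta-learner from simply rewarding whichever base model memorized the training data best.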

Similarly, ensemble strategies such as majority voting and bagging have proven capable of increasing accuracy and overall performance metrics compared to single classifiers28. In recent research, the use of stacking to combine classical machine learning models (e.g., logistic regression, support vector machines) with neural networks on the PIMA dataset and local datasets achieved accuracies between 92 and 95%29. These findings confirm the superiority of combined approaches, particularly when models with distinct learning mechanisms, such as probabilistic, tree-based, and deep learning methods, are integrated to leverage their complementary perspectives on the data. The concordance of these results with the present study underscores the idea that hybrid ensemble architectures can deliver more accurate and stable predictions in early diabetes diagnosis. Such approaches not only enhance predictive performance but also improve model reliability and interpretability when coupled with tools such as SHAP or LIME, which elucidate the contribution of each feature to the final decision, an essential factor for clinical deployment.

Previous investigations have further emphasized the advantage of hybrid approaches and advanced learning architectures in diabetes diagnosis. For example, a hybrid model combining deep neural networks and random forest achieved an accuracy of 94.81%20, while more sophisticated frameworks incorporating clustering and autoencoder-based feature extraction reached accuracies as high as 99.13%21. Although these methods tend to involve more complex implementation and computational requirements, their results indicate the substantial potential of combining deep feature learning with structured ensemble modeling.

The observed improvement of stacking-based models can be attributed to their capacity to integrate diverse feature representations learned by different base models, effectively combining the unique strengths of each. By combining the outputs of multiple learners, stacking reduces overfitting and enhances model robustness, while the meta-learning layer weights the contributions of individual models to improve generalization on unseen data. Furthermore, this approach captures complex nonlinear dependencies and intricate interactions among predictors that single models might overlook. Consequently, stacking offers a powerful and flexible framework for medical prediction tasks, achieving a balance between accuracy, stability, and interpretability, qualities that are essential for developing trustworthy, AI-driven diagnostic systems suitable for clinical practice.

The superior performance of the voting model in our study aligns with a growing body of evidence highlighting the advantages of ensemble learning over single classifiers in diabetes prediction. Ensemble methods, particularly voting, reduce the weaknesses of individual models by aggregating their strengths, thereby achieving higher accuracy and stability. For example, one study demonstrated that while individual classifiers such as Random Forest, SVM, and Naïve Bayes showed moderate predictive power, combining the better-performing models through a voting algorithm achieved higher accuracy (82%) and more robust results across both imbalanced and balanced datasets30. Similarly, researchers emphasized that approaches relying solely on a single classifier may lack robustness compared to voting-based strategies; integrating diverse models (SVM, ANN, and Naïve Bayes) improved sensitivity and overall diagnostic performance30. This advantage was also confirmed in large-scale evaluations using NHANES data, where an ensemble voting model significantly enhanced predictive accuracy and discrimination ability (AUC = 0.75)31.

Further evidence supports that both hard and soft voting strategies enhance model reliability compared to standalone algorithms. One study showed that ensemble classifiers consistently outperformed individual models in terms of accuracy and probability estimation, strengthening diagnostic decision-making in diabetes prediction32. Another investigation in Mexico applied a hard voting ensemble of GLM, SVM, and ANN for T2DM detection and achieved an AUC of 90% ± 3%, demonstrating that voting provides a fast, effective, and clinically valuable method for early disease identification33. Similarly, a soft voting approach integrating RF, LR, and Naïve Bayes achieved the highest performance across accuracy, precision, recall, and F1 score compared to state-of-the-art classifiers on the PIMA dataset, further underscoring the effectiveness of ensemble voting34. Finally, a systematic review combining eleven well-known ML algorithms into an ensemble voting framework reported nearly 86% accuracy on the Pima dataset, showing that ensembles consistently outperform single models even after extensive hyperparameter tuning and cross-validation34. Taken together, these studies highlight that voting-based ensemble approaches provide superior predictive power by balancing variance, reducing overfitting, and leveraging the complementary strengths of diverse classifiers. This explains why the voting model in our work demonstrated stronger performance, with high accuracy and favorable sensitivity, specificity, and F1 score compared to the individual models.
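The difference between hard and soft voting can be seen in a small example. Hard voting counts each model's predicted label, whereas soft voting averages the predicted probabilities before choosing a class. The three probability vectors below are hypothetical and were chosen so that the two rules disagree.

```python
from collections import Counter


def soft_vote(prob_lists):
    """Average class probabilities across models, then pick the most probable class."""
    n_models = len(prob_lists)
    n_classes = len(prob_lists[0])
    avg = [sum(p[k] for p in prob_lists) / n_models for k in range(n_classes)]
    return avg.index(max(avg)), avg


def hard_vote(prob_lists):
    """Each model votes for its own most probable class; the majority label wins."""
    votes = [p.index(max(p)) for p in prob_lists]
    return Counter(votes).most_common(1)[0][0]


# Hypothetical probabilities from three models for one patient (classes 0, 1, 2).
model_probs = [
    [0.55, 0.40, 0.05],  # model 1 narrowly prefers class 0
    [0.52, 0.45, 0.03],  # model 2 narrowly prefers class 0
    [0.05, 0.90, 0.05],  # model 3 is confident in class 1
]

soft_label, averaged = soft_vote(model_probs)
hard_label = hard_vote(model_probs)
print("soft voting:", soft_label, [round(a, 3) for a in averaged])
print("hard voting:", hard_label)
```

Here two weak votes for class 0 win under hard voting, while the one confident vote for class 1 dominates the averaged probabilities under soft voting.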

The consistently inferior performance of the KNN algorithm across all evaluation metrics (accuracy, sensitivity, specificity, and F1 score) observed in this study can be attributed to several inherent limitations of the algorithm that are particularly exacerbated when applied to clinical and questionnaire-based datasets like those used for diabetes prediction. KNN is a distance-based algorithm that relies on measures like Euclidean distance to compute similarity between data points. A fundamental weakness is its susceptibility to the “curse of dimensionality,” where its performance deteriorates as the number of features increases. In high-dimensional spaces, which are common in medical datasets (e.g., features like age, BMI, glucose levels, blood pressure, etc.), points tend to become equidistant from each other. This makes the concept of a “nearest neighbor” increasingly meaningless and degrades the model’s predictive power35. Unlike tree-based models like RF that perform implicit feature selection, KNN weighs all features equally. This allows irrelevant or redundant features, which are common in initial feature sets, to distort the distance calculations and introduce noise, leading to poor generalization on unseen data36,37.
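The tendency of distances to concentrate as dimensionality grows can be demonstrated with uniformly random points. The sketch below reports the relative contrast, (farthest − nearest) / nearest, between a query point and 200 random points; the dimensions, sample size, and uniform distribution are arbitrary choices for illustration, not properties of the study's data.

```python
import math
import random


def relative_contrast(dim, n_points=200, seed=0):
    """(max - min) / min of Euclidean distances from one query to random points."""
    rng = random.Random(seed)
    query = [rng.random() for _ in range(dim)]
    dists = [
        math.dist(query, [rng.random() for _ in range(dim)])
        for _ in range(n_points)
    ]
    return (max(dists) - min(dists)) / min(dists)


# Lower contrast means the nearest and farthest points are harder to tell apart.
contrast = {d: relative_contrast(d) for d in (2, 8, 512)}
for d, c in contrast.items():
    print(f"dim={d:4d}  relative contrast={c:.2f}")
```

As the contrast shrinks, the label of the nearest neighbors becomes a less informative signal for classification.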

Moreover, the performance of distance-based algorithms is highly contingent upon feature scaling. Features on a larger scale (e.g., insulin levels) can disproportionately dominate the distance calculation compared to features on a smaller scale (e.g., number of pregnancies), unless normalized. Failure to apply rigorous feature scaling puts KNN at a significant disadvantage compared to models like decision trees (DT) or Naive Bayes (NB), which are inherently scale-invariant38. Furthermore, KNN is notoriously sensitive to class imbalance, a pervasive issue in medical datasets where the number of non-diabetic cases often vastly outweighs diabetic ones. In such scenarios, the majority class tends to overwhelm the decision boundary in the local neighborhood of any query point, leading to a high number of false negatives and consequently low sensitivity, a critical metric in disease diagnosis39.
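The effect of scale on nearest-neighbor search can be shown with two features measured in very different units. In the sketch below, the four patient records are hypothetical, and Min–Max scaling is applied per feature before recomputing the nearest neighbor of the first record.

```python
import math


def min_max_scale(rows):
    """Rescale every column to the [0, 1] range."""
    cols = list(zip(*rows))
    lo = [min(c) for c in cols]
    hi = [max(c) for c in cols]
    return [[(v - l) / (h - l) for v, l, h in zip(row, lo, hi)] for row in rows]


def nearest(query_idx, rows):
    """Index of the row closest (Euclidean distance) to rows[query_idx]."""
    q = rows[query_idx]
    others = [i for i in range(len(rows)) if i != query_idx]
    return min(others, key=lambda i: math.dist(q, rows[i]))


# Hypothetical records: [insulin level, number of pregnancies].
patients = [
    [100.0, 1.0],   # query patient
    [105.0, 10.0],  # similar insulin, very different pregnancy count
    [130.0, 1.0],   # same pregnancy count, moderately different insulin
    [300.0, 5.0],
]

raw_nn = nearest(0, patients)
scaled_nn = nearest(0, min_max_scale(patients))
print("nearest neighbor on raw features:   ", raw_nn)
print("nearest neighbor on scaled features:", scaled_nn)
```

On raw values the insulin column dominates the distance, so the neighbor changes once both features contribute on a comparable scale.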

KNN is an instance-based (“lazy learner”) algorithm. It does not learn a discriminative model from the training data but rather memorizes the entire dataset and makes predictions based on local approximations. This lack of a generalized model makes it highly prone to overfitting, especially in the presence of noisy or outlier data points36,38. Clinical data often contain noise, measurement errors, and incomplete entries, even after preprocessing. A single anomalous sample can significantly skew the prediction for a new data point. In contrast, ensemble methods like Random Forest and Gradient Boosting combine many decision trees to create a robust model that is far more resilient to such noise and variance40.

Therefore, the poor performance of the KNN model is not an anomaly but an expected outcome based on its fundamental algorithmic characteristics. Its well-documented limitations in handling high-dimensional data, its critical dependence on meticulous preprocessing, and its vulnerability to noise and class imbalance make it a generally suboptimal choice for complex classification tasks like diabetes prediction. This is especially true when compared to more sophisticated ensemble methods or algorithms capable of learning complex, non-linear decision boundaries while managing variance. Its lower scores across all metrics are thus consistent with established machine learning theory and prior research in clinical informatics.

Limitations of the study

The data used in this research were extracted from the Pima Indians Diabetes dataset, which is publicly available via Kaggle. This dataset is relatively small and offers limited diversity in both features and sample population, which may reduce the generalizability of the results to other populations or real-world conditions. To increase the generalizability and validity of the developed models, it is recommended that future studies use larger and more diverse datasets, including multicenter clinical data and different populations.

Conclusion

This study demonstrates that the Stacking Ensemble model achieves superior performance in type 2 diabetes prediction, outperforming all other models across key evaluation metrics. While Random Forest also delivered strong results, the stacking approach proved most reliable and well-calibrated across all patient classes. By integrating diverse algorithms and leveraging explainability tools, clinically relevant features such as glucose level, BMI, and blood pressure were identified as key predictive factors. This accurate and interpretable framework provides a practical decision-support tool for early diabetes detection, particularly in resource-constrained settings, and can be adapted for other chronic diseases to enhance preventive healthcare and improve clinical outcomes. Future work will focus on validating the proposed framework on larger and more diverse populations, incorporating longitudinal patient data, and extending the approach to other chronic disease domains.

Data availability

The data are available at the following link: Pima Indians Diabetes (https://www.kaggle.com/datasets/uciml/pima-indians-diabetes-database).

References

Blair, M. Diabetes Mellitus Review. Urol. Nurs. 36(1), 27–36 (2016).

Kumar, A., Gangwar, R., Ahmad Zargar, A., Kumar, R. & Sharma, A. Prevalence of diabetes in India: A review of IDF diabetes atlas 10th edition. Curr. Diab. Rev. 20(1), 105–114 (2024).

Hossain, M. J., Al-Mamun, M. & Islam, M. R. Diabetes mellitus, the fastest growing global public health concern: Early detection should be focused. Health Sci. Rep. 7(3), e2004 (2024).

Auvinen, A.-M. et al. Type 1 and type 2 diabetes after gestational diabetes: a 23 year cohort study. Diabetologia 63(10), 2123–2128 (2020).

Basu, A. et al. Dietary blueberry and soluble fiber improve serum antioxidant and adipokine biomarkers and lipid peroxidation in pregnant women with obesity and at risk for gestational diabetes. Antioxidants 10(8), 1318 (2021).

Bovolini, A., Garcia, J., Andrade, M. A. & Duarte, J. A. Metabolic syndrome pathophysiology and predisposing factors. Int. J. Sports Med. 42(03), 199–214 (2021).

Yen, F.-S., Wei, J. C.-C., Shih, Y.-H., Hsu, C.-C. & Hwu, C.-M. Impact of individual microvascular disease on the risks of macrovascular complications in type 2 diabetes: a nationwide population-based cohort study. Cardiovasc. Diabetol. 22(1), 109 (2023).

Usman, M. S., Khan, M. S., Butler, J. The interplay between diabetes, cardiovascular disease, and kidney disease. (2021).

Özçelik, Y. B. & Altan, A. Classification of diabetic retinopathy by machine learning algorithm using entropy-based features. In Proceedings of the Çankaya International Congress on Scientific Research: 2023. (IKSAD Golbasi, Adiyaman Province, Turkey, 2023) 10–12.

Jayalakshmi, R. & Tamilvizhi, T. Privacy preservation in diabetic disease prediction using federated learning based on efficient cross stage recurrent model. Sci. Rep. 15(1), 37258 (2025).

Vinitha, G., Rambabu, B., Surendran, R., Balamurugan, K. S. Optimizing diabetic kidney disease diagnosis with machine learning: a novel approach. In 2025 8th International Conference on Electronics, Materials Engineering & Nano-Technology (IEMENTech): 2025. (IEEE, 2025) 1–4.

Kiran, M. et al. Machine learning and artificial intelligence in type 2 diabetes prediction: a comprehensive 33-year bibliometric and literature analysis. Front. Digit. Health 7, 1557467 (2025).

Yang, J. et al. Optimizing diabetic retinopathy detection with inception-V4 and dynamic version of snow leopard optimization algorithm. Biomed. Signal Process. Control 96, 106501 (2024).

Kavakiotis, I. et al. Machine learning and data mining methods in diabetes research. Comput. Struct. Biotechnol. J. 15, 104–116 (2017).

Anderson, J. P. et al. Reverse engineering and evaluation of prediction models for progression to type 2 diabetes: an application of machine learning using electronic health records. J. Diabetes Sci. Technol. 10(1), 6–18 (2016).

Ainan, U. H., Por, L. Y., Chen, Y. L., Yang, J. & Ku, C. S. Advancing bankruptcy forecasting with hybrid machine learning techniques: Insights from an unbalanced polish dataset. IEEE Access 12, 9369–9381 (2024).

Kim, H., Lim, D. H. & Kim, Y. Classification and prediction on the effects of nutritional intake on overweight/obesity, dyslipidemia, hypertension and type 2 diabetes mellitus using deep learning model: 4–7th Korea national health and nutrition examination survey. Int. J. Environ. Res. Public Health 18(11), 5597 (2021).

Tanim, S. A. et al. Explainable deep learning for diabetes diagnosis with DeepNetX2. Biomed. Signal Process. Control 99, 106902 (2025).

Hasan, R., Dattana, V., Mahmood, S. & Hussain, S. Towards transparent diabetes prediction: combining automl and explainable AI for improved clinical insights. Information 16(1), 7 (2024).

Dweekat, O. Y., Lam, S. S. Optimized design of hybrid genetic algorithm with multilayer perceptron to predict patients with diabetes. Soft Comput. Fus. Found. Methodol. Appl. 27(10) (2023).

Alex, S. A., Nayahi, J. J. V., Shine, H. & Gopirekha, V. Deep convolutional neural network for diabetes mellitus prediction. Neural Comput. Appl. 34(2), 1319–1327 (2022).

Olisah, C. C., Smith, L. & Smith, M. Diabetes mellitus prediction and diagnosis from a data preprocessing and machine learning perspective. Comput. Methods Programs Biomed. 220, 106773 (2022).

Li, L. et al. RETRACTED: Enhancing lung cancer detection through hybrid features and machine learning hyperparameters optimization techniques. Heliyon 10(4), e26192 (2024).

Li, H. et al. MSPO: A machine learning hyperparameter optimization method for enhanced breast cancer image classification. Digit. Health 11, 20552076251361604 (2025).

Nabeel, S. M. et al. Optimizing lung cancer classification through hyperparameter tuning. Digit. Health 10, 20552076241249660 (2024).

Daza, A., Sánchez, C. F. P., Apaza-Perez, G., Pinto, J. & Ramos, K. Z. Stacking ensemble approach to diagnosing the disease of diabetes. Inf. Med. Unlock. 44, 101427 (2024).

Gollapalli, M. et al. A novel stacking ensemble for detecting three types of diabetes mellitus using a Saudi Arabian dataset: Pre-diabetes, T1DM, and T2DM. Comput. Biol. Med. 147, 105757 (2022).

Ganie, S. M. & Malik, M. B. An ensemble machine learning approach for predicting type-II diabetes mellitus based on lifestyle indicators. Healthcare Anal. 2, 100092 (2022).

Reza, M. S., Amin, R., Yasmin, R., Kulsum, W., Ruhi, S. Improving diabetes disease patients classification using stacking ensemble method with PIMA and local healthcare data. Heliyon 10(2) (2024).

Al-Lawati, J. A. Diabetes mellitus: A local and global public health emergency!. Oman Med J 32(3), 177–179 (2017).

Husain, A. & Khan, M. H. Early diabetes prediction using voting based ensemble learning. In International conference on advances in computing and data sciences: 2018 95–103 (Springer, Cham, 2018).

de Oliveira, G. P., Fonseca, A. & Rodrigues, P. C. Diabetes diagnosis based on hard and soft voting classifiers combining statistical learning models. Braz. J. Biometr. 40(4), 415–427 (2022).

Morgan-Benita, J. A., Galván-Tejada, C. E., Cruz, M., Galván-Tejada, J. I., Gamboa-Rosales, H., Arceo-Olague, J. G., Luna-García, H., Celaya-Padilla, J. M. Hard voting ensemble approach for the detection of Type 2 diabetes in mexican population with non-glucose related features. Healthcare (Basel) 10(8) (2022).

Mahabub, A. A robust voting approach for diabetes prediction using traditional machine learning techniques. SN Appl. Sci. 1(12), 1667 (2019).

Aggarwal, C. C., Hinneburg, A. & Keim, D. A. On the surprising behavior of distance metrics in high dimensional space. In International conference on database theory 2001 420–434 (Springer, Cham, 2001).

Sidey-Gibbons, J. A. & Sidey-Gibbons, C. J. Machine learning in medicine: a practical introduction. BMC Med. Res. Methodol. 19(1), 64 (2019).

Moulaei, K., Ghasemian, F., Bahaadinbeigy, K., Ershad Sarbi, R. & Mohamadi Taghiabad, Z. Predicting mortality of COVID-19 patients based on data mining techniques. J. Biomed. Phys. Eng. 11(5), 653–662 (2021).

Fernández-Delgado, M., Cernadas, E., Barro, S. & Amorim, D. Do we need hundreds of classifiers to solve real world classification problems?. J. Mach. Learn. Res. 15(1), 3133–3181 (2014).

He, H. & Garcia, E. A. Learning from imbalanced data. IEEE Trans. Knowl. Data Eng. 21(9), 1263–1284 (2009).

Salem Alzboon, M., Al-Batah, M., Alqaraleh, M., Abuashour, A. & Fuad Bader, A. A comparative study of machine learning techniques for early prediction of diabetes. arXiv e-prints arXiv:2506.10180 (2025).

Acknowledgements

This work was supported by the National Council of Humanities, Sciences and Technologies Grant CB A1-S-7679 and the Potosino Institute for Scientific and Technological Research (IPICYT) Multidivisional Research Projects Grant IPICYT 2023 S-2949. We are grateful for scholarship 1080363 provided to AFRO.

Funding

We hereby declare that no financial support was received for the conduct of this research.

Author information

Contributions

Conceptualization: Niloufar Zaferani, Khadijeh Moulaei; Data curation: Niloufar Zaferani, Khadijeh Moulaei; Funding: Niloufar Zaferani; Project administration: Niloufar Zaferani, Khadijeh Moulaei; Design and Modeling: Niloufar Zaferani, Khadijeh Moulaei, Mohammad Reza Afrash; Resources: Niloufar Zaferani, Khadijeh Moulaei; Supervision: Niloufar Zaferani; Writing–original draft: Niloufar Zaferani, Khadijeh Moulaei; Writing–review & editing: Niloufar Zaferani, Khadijeh Moulaei, Mohammad Reza Afrash.

Ethics declarations

Competing interests

The authors declare that they have no known competing financial interests or personal relationships that could have appeared to influence the work reported in this paper.

Rights and permissions

Open Access This article is licensed under a Creative Commons Attribution-NonCommercial-NoDerivatives 4.0 International License, which permits any non-commercial use, sharing, distribution and reproduction in any medium or format, as long as you give appropriate credit to the original author(s) and the source, provide a link to the Creative Commons licence, and indicate if you modified the licensed material. You do not have permission under this licence to share adapted material derived from this article or parts of it. The images or other third party material in this article are included in the article’s Creative Commons licence, unless indicated otherwise in a credit line to the material. If material is not included in the article’s Creative Commons licence and your intended use is not permitted by statutory regulation or exceeds the permitted use, you will need to obtain permission directly from the copyright holder. To view a copy of this licence, visit http://creativecommons.org/licenses/by-nc-nd/4.0/.

About this article

Cite this article

Zaferani, N., Afrash, M.R. & Moulaei, K. Predicting and classifying type 2 diabetes using a transparent ensemble model combining random forest, k-nearest neighbor, and neural networks. Sci Rep 16, 1892 (2026). https://doi.org/10.1038/s41598-025-31562-5
