Abstract

Preservation and restoration of historical buildings are important tasks that require accurate assessment of structural damage. However, human and economic resources are limited, so it is important to automate this assessment. While several studies identify damage in buildings, the majority use images to identify slight or moderate damage, and only a few can detect severe or very severe damage (where the structure has been affected). This gap is partially due to two challenges: (i) the uniqueness of the built heritage limits the number of available datasets, and (ii) dealing with detailed 3D representations requires high computational resources. To address these challenges, this paper proposes an automatic classification of the structural state of the built heritage, considering severe or very severe degrees of damage. Due to the limited number of available models, this work uses point clouds converted to voxel map representations, improving the generalization of the method. To achieve the classification, a 3D Convolutional Neural Network (CNN) with five layers is adapted and trained under supervised learning with a minimal dataset. The training dataset contains 130 built heritage structures and was specifically created by the authors using CAD tools. The trained 3D CNN was tested on two real-world buildings: (1) a Posa chapel with very severe damage, belonging to the Natividad former convent in Tepoztlán, Morelos, Mexico, which has been recognized as a UNESCO World Heritage site since 1994; and (2) the Kukulkán temple, with no severe damage, one of the 7 Wonders of the World, located at the archeological site of Chichén Itzá in Yucatán, Mexico, which has been recognized as a UNESCO World Heritage site since 1988. The results show that the proposed method successfully classifies the structural state of the heritage buildings.
Key findings include: (i) low-resolution voxel maps effectively preserve essential structural features for supervised learning while requiring a moderate number of examples, and (ii) the approach is scalable for other unique heritage structures. This method provides a viable tool for automated damage assessment, supporting preservation and restoration efforts for cultural heritage.

This paper proposes a scheme for automatically classifying the current structural state of built heritage with severe and very severe degrees of damage. The scheme exploits a low-resolution voxel map of a 3D representation (point cloud) and a 3D Convolutional Neural Network. A practical test confirms the effectiveness of the proposed scheme.

Introduction

In the last two decades, a variety of indoor and outdoor environments (at small and large scale) have been accurately scanned in 3D. To achieve this, new sensors and computer vision methods have been applied. The result of 3D scanning is usually a point cloud. Because point clouds are easy to manipulate and transform, they have been very useful in fields such as architecture1,2,3, oceanography4, civil engineering, electrical engineering, railway engineering, mechanical engineering5,6,7,8, and health care9, to name a few. Some of the objects that have been mapped are historical statues, monuments, buildings2, roads10, mechanical systems11, electrical installations and plumbing pipeline systems12, industrial and agricultural machinery13, screws14, and human organs15.

In particular, 3D scanning has been of great help to study, monitor, document, and preserve heritage16,17,18,19. Also, in order to promote tourism20, it has been exploited in cultural activities through digital projections of historical objects or places in museums16. However, restoration actions are frequently required to address damage to heritage caused by natural phenomena over time. The restoration has to be meticulous and delicate to avoid further damage21,22,23,24. In this respect, outdoor built heritage, which in general corresponds to ancient buildings, deserves special attention because its restoration is difficult, expensive, and inaccurate. This is mainly because it is performed by hand by experts to harmonize color, texture, material, and orientation/deviation21,22,23,24. Also, because of the height of the buildings, construction structures and specialized equipment are needed, whose placement compromises the entire ancient building.

Heritage building restoration is a complex process that includes at least the following stages: historical documentation, structural inspection, damage identification, and corrective intervention. Each stage, by itself, can be difficult, expensive, and inaccurate. To address this, automatic processes based on neural networks have been introduced for some of the restoration stages. Regarding historical documentation, contributions have focused on: (i) recognizing whether a building is heritage or non-heritage22,25,26; (ii) determining architectural style17,27,28,29,30,31; and (iii) identifying architectural elements such as doors, windows, pillars, domes, arches, etc.16,19,32,33,34. With respect to structural inspection, only one paper has been dedicated to identifying whether part of an ornamental element of a building is missing35. Also, regarding damage identification, some efforts have been reported to classify cracks, spalling, discoloration, and exposed bricks in buildings18,20,21,22,23,24,36,37,38.

It is worth mentioning that the contributions related to structural inspection and damage identification have considered only visible damage to walls. The degree of the considered damage corresponds to categories 1 (very slight), 2 (slight), and 3 (moderate) provided by Burland et al.39. To the authors' knowledge, detection or classification of the structural state of an entire heritage building with a degree of damage in categories 4 (severe) and 5 (very severe) of ref. 39 has not been addressed. For ease of reference, the description of the damage according to its degree is given in Table 1.

Based on the aforementioned points, this paper aims to automatically estimate the structural state of built heritage with damage degrees 4 and 5. This is achieved through an automatic classification method using a voxel map representation of the building and a 3D Convolutional Neural Network (CNN). Specifically, the paper focuses on a case study: the Posa chapel, part of the Natividad former convent in Tepoztlán, Morelos, Mexico. The convent has been recognized as a UNESCO World Heritage site since 1994. The Posa chapel exhibits significant structural damage, including the loss of half a dome and a column, so it is an example of a building with very severe damage. To further validate the method’s effectiveness, the classification is also tested with a voxel map from a 3D representation (point cloud) of the Kukulkán temple, which has no severe damage. The temple is one of the 7 Wonders of the World and is located at the archeological site of Chichén Itzá in Yucatán, Mexico. This site has been recognized as a UNESCO World Heritage site since 1988.

Since a supervised learning approach is employed and both the Posa chapel and the Kukulkán temple are unique structures, the authors created a dataset of more than one hundred 3D models using Computer-Aided Design (CAD) tools. Data augmentation techniques were then applied to expand the dataset to 780 models. The proposed 3D CNN is an adapted version of the VoxNet CNN introduced in ref. 40. This 3D CNN is trained and validated using the augmented dataset. The results demonstrate that the trained 3D CNN successfully classifies the Posa chapel and the Kukulkán temple according to their structural state. Specifically, the proposed method enables automatic recognition of whether a heritage building exhibits severe or very severe damage or not. Although the dataset was designed to classify our particular cases, we believe that the proposed scheme can be generalized to classify other heritage buildings.

It is worth mentioning that the contribution described above is the basis for applying 3D autoencoders to complete point clouds with the missing parts of heritage buildings with degrees of damage 4 (severe) and 5 (very severe). Thus, heritage buildings could be digitally represented in 3D with an estimated complete shape (simulating the building before being damaged). The resulting virtual model of the complete heritage building could be exploited: (i) to facilitate the corrective intervention stage in preservation and restoration activities by enabling design scenario testing; and (ii) to promote tourism through digital projections of historical places. Furthermore, remote sensing applications could benefit from the contribution reported here, for example, to analyze whole villages remotely via drone photogrammetric/scanning documentation, for which accurate 3D documentation is required. Other applications of our contribution could involve design scenario testing of alternative solutions, so that experts can decide which one is the most faithful, and automated identification of changes in the structure of heritage buildings between two 3D documented phases, using sensors.

The rest of the paper is organized as follows. The work most closely related to the contribution described here is reviewed in the “Related work” section. The dataset construction and supervised classification are introduced in the “Structural state classification” section. The corresponding experiments are presented in the “Practical test” section. Lastly, the conclusion is given in the “Conclusions” section.

Related work

This section gives a brief review of the work most closely related to the proposal presented here.

The review was limited to papers published in journals indexed in the Journal Citation Reports, addressing the following: (a) image-based classification to inspect the state or identify damage of walls of built heritage, and (b) point cloud classification of unique ornaments of built heritage.

Regarding (a), Chaiyasarn et al.20 presented an automatic crack detection system for the inspection of masonry structures. The system consists of two steps: crack feature extraction with CNN and crack classification using CNN itself, Support Vector Machines (SVM), and Random Forest (RF). Also, Zou et al.35 proposed a building component inspection based on Faster R-CNN. The CNN infers and labels missing parts of the deteriorated building components. The methodology was applied to detect and count intact and impaired components of the Forbidden City in China. Furthermore, Wang et al.37 introduced a two-level object detection, segmentation, and measurement scheme for inspection of historic glazed tiles at the Palace Museum in China. In the first level, a cropped image dataset of 100 pictures was created using the Faster R-CNN. In the second level, the damage of glazed tiles was segmented and measured using a Mask R-CNN. Recently, Mishra et al.18 presented an approach for defect detection and localization in heritage structures based on YOLO v5. The defects considered for classification were discoloration, exposed bricks, cracks, and spalling. A dataset of over 10,000 images was used to train YOLO v5, which was tested with images of the Dadi-Poti tombs in Hauz Khas Village, New Delhi. The YOLO v5 results were compared with ResNet 101 and R-CNN results. YOLO v5 achieved eight percent higher accuracy than ResNet 101 and R-CNN.

With respect to (b), Haznedar et al.34 implemented PointNet for the identification of architectural elements by using 3D point cloud data from Gaziantep, Turkey. A point cloud dataset was created from twenty-eight heritage buildings. For each building, the following five feature labels were created: walls, roofs, floors, doors, and windows. Eighty percent of the dataset was used to train PointNet and the remainder to test it. PointNet was not accurate in segmenting deformed and deteriorated buildings, but its accuracy improved when it was trained with the restitution-based heritage data.

In contrast with the related work, the proposal herein presented has the following distinguishing features:

- Unique built heritage with a damage degree in categories 4 and 5 is considered to perform a classification of structural state.

- A 3D representation of the built heritage is exploited. To this end, a high-resolution 3D point cloud is generated, which provides a more accurate spatial position, size, and geometric shape of the buildings.

- A 3D CNN with only five layers is adapted to recognize the built heritage structural state (complete or incomplete) instead of recognizing buildings' names or typical damage from images.

- The proposed 3D CNN is trained with a synthetic dataset of simple forms created manually by the authors.

- The 3D CNN inputs are low-resolution voxel maps. Consequently, the structural state recognition process is limited to the number of occupied and unoccupied voxels in the 3D array.

Structural state classification

A general scheme of the structural state classification of built heritage, starting from a voxel map and a 3D CNN, is shown in Fig. 1 and described below.

- The INPUT column refers to the preparation of the data for the supervised learning algorithm. This involves two main steps: constructing CAD models of the built heritage of interest and voxelizing these models. Voxelization corresponds to obtaining voxel maps of the CAD models by fitting them into three-dimensional arrays. Two classes of voxel maps are created: complete, representing the heritage building with wall damage degree in categories 1, 2, and 3; and incomplete, representing the heritage building with damage degree in categories 4 and 5.

- The NEURAL NETWORK column corresponds to the supervised learning approach, which includes an adaptation of VoxNet CNN40. The CNN is responsible for classifying the building's structural state. It performs three steps: extracting an array of feature maps, reducing its size, and classifying the input.

- The OUTPUT column refers to the building's structural state, classified as either complete or incomplete.

Step one refers to the construction of a voxel map dataset from 3D synthetic building models. This dataset is subsequently used for training and validating the adapted 3D CNN. In step two, the voxel maps of real heritage buildings are used as input and evaluated by the validated 3D CNN. The final output from both steps is the classification of the buildings' structural state as either complete or incomplete.

Each process in Fig. 1 is described in detail in the next sections.

Dataset construction

This paper focuses on the classification of unique built heritage structures with severe and very severe damage (corresponding to categories 4 and 5 in ref. 39, respectively). These levels indicate that the buildings have lost parts of their structure, requiring significant repair work. Two classes are used to identify the structural state of heritage buildings: the complete class, representing buildings that do not require restoration, and the incomplete class, referring to buildings in need of restoration.

The case study includes two buildings: a Posa chapel and the Kukulkán temple, both located in Mexico, with only one point cloud available for each case. To address this limitation, an ad-hoc dataset was created, consisting of 780 voxel maps (390 voxel maps for each class) derived from synthetic 3D models of chapels and synthetic pyramid models.

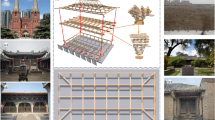

To create the dataset, 100 complete 3D synthetic models similar to the Posa chapel were designed in Blender by combining basic structural elements such as pillars, walls, and domes. After creating these models, 30 complete 3D synthetic models similar to the Kukulkán temple were added from the 3D Warehouse database41. Then, data augmentation was applied to both sets of models by rotating each model twice: first by 30 degrees and then by 60 degrees. Thus, 300 synthetic models similar to the Posa chapel and 90 synthetic pyramid models were obtained, resulting in 390 synthetic 3D models. Figure 2 shows examples of the complete 3D synthetic models designed in Blender, without rotation and rotated by 30 and 60 degrees. Figure 3 shows examples of the 3D Warehouse pyramid models with the same rotations.

Fig. 2. Two 3D synthetic examples of Posa chapels. Example 1 of a complete model of a Posa chapel in Blender: (a) without rotation, (b) rotated by 30 degrees, and (c) rotated by 60 degrees. Example 2 of a complete model of a Posa chapel in Blender: (d) without rotation, (e) rotated by 30 degrees, and (f) rotated by 60 degrees.

Fig. 3. Two 3D synthetic pyramid examples. Example 1 of a complete model of a pyramid from 3D Warehouse: (a) without rotation, (b) rotated by 30 degrees, and (c) rotated by 60 degrees. Example 2 of a complete model of a pyramid from 3D Warehouse: (d) without rotation, (e) rotated by 30 degrees, and (f) rotated by 60 degrees.
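The rotation-based augmentation can be sketched as follows. This is a minimal illustration, not the authors' Blender workflow: it assumes that each model is available as a list of (x, y, z) vertices and that the rotation is about the vertical (z) axis, which the text does not state. The helper names `rotate_z` and `augment` are hypothetical, and the count of 30 base pyramid models is inferred from the 90 augmented pyramid models.

```python
import math

def rotate_z(vertices, degrees):
    """Rotate (x, y, z) vertices about the z axis by the given angle."""
    t = math.radians(degrees)
    c, s = math.cos(t), math.sin(t)
    return [(c * x - s * y, s * x + c * y, z) for x, y, z in vertices]

def augment(vertices, angles=(0, 30, 60)):
    """Return one copy of the model per angle (original plus two rotations)."""
    return [rotate_z(vertices, a) for a in angles]

# Hypothetical vertices standing in for an exported CAD model.
model = [(1.0, 0.0, 0.0), (0.0, 1.0, 0.0), (-1.0, 0.0, 2.0)]
copies = augment(model)

# Dataset bookkeeping: each base model yields three variants.
n_chapels, n_pyramids = 100, 30  # 30 is inferred, see the note above
n_complete = 3 * (n_chapels + n_pyramids)  # 390 complete models
```

Rotating about the vertical axis keeps the ground plane fixed, which seems the natural choice for buildings, but any other axis could be substituted in `rotate_z`.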

Each one of the 390 complete synthetic models in 3D is exported as a triangular mesh. The triangular mesh is scaled to a unitary size using the following:

where α is the scale, and \(\max\) and \(\min\) are, respectively, the maximum and minimum bounds of the mesh. Then, the mesh is converted into a binary occupancy grid, namely, a voxel map, with a voxel spatial resolution n = 32. Each voxel takes a state in the binary set Bs = {0, 1}, where 1 denotes an occupied voxel (a grid cell where the object’s surface lies) and 0 denotes a free voxel (an unoccupied grid cell). This voxelization process is represented in Fig. 4 and is applied to the 390 complete 3D synthetic models. The resulting 390 voxel maps form the complete class.

Fig. 4. First, the triangular mesh (a) is encapsulated and scaled to a bounding box (b). Then, the voxel map (c) is obtained. Finally, from (c), random sections are marked as unoccupied voxels to construct incomplete models (d).
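The scaling and voxelization steps can be sketched in plain Python as follows. This sketch rests on two assumptions that are not taken from the paper: the surface is represented by sampled points (for example, mesh vertices) rather than by triangles, and the scale factor is the reciprocal of the longest bounding-box extent. The function names are hypothetical.

```python
def normalize(points):
    """Translate to the origin and scale so the longest extent equals 1."""
    mins = [min(p[a] for p in points) for a in range(3)]
    maxs = [max(p[a] for p in points) for a in range(3)]
    extent = max(hi - lo for lo, hi in zip(mins, maxs))
    if extent == 0:
        raise ValueError("degenerate model: all points coincide")
    alpha = 1.0 / extent  # assumed form of the scale factor
    return [tuple((p[a] - mins[a]) * alpha for a in range(3)) for p in points]

def voxelize(points, n=32):
    """Map points to the set of occupied (i, j, k) indices of an n^3 grid."""
    occupied = set()
    for p in normalize(points):
        # Clamp so that a coordinate of exactly 1.0 falls in the last cell.
        occupied.add(tuple(min(int(c * n), n - 1) for c in p))
    return occupied

def to_dense(occupied, n=32):
    """Expand the sparse set into an n x n x n nested list of 0/1 values."""
    return [[[1 if (i, j, k) in occupied else 0 for k in range(n)]
             for j in range(n)] for i in range(n)]

# Two opposite corners of a hypothetical 4 x 2 x 1 bounding box.
voxels = voxelize([(0.0, 0.0, 0.0), (4.0, 2.0, 1.0)])
grid = to_dense(voxels)
```

Storing only the occupied indices keeps the representation small, since a surface fills a minor fraction of the 32 × 32 × 32 = 32,768 cells; the dense form is what a 3D CNN would consume.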

After that, a second data augmentation is performed to obtain the incomplete voxel maps of the chapels and pyramids, i.e., the incomplete class. Specifically, random sections of occupied voxels are marked as unoccupied. This process creates examples resembling chapels and pyramids that have lost parts of their structure. As a result, the dataset consists of 600 voxel maps of 3D synthetic models of chapels and 180 voxel maps of 3D synthetic models of pyramids. From these, 390 voxel maps simulate complete structures, and the other 390 voxel maps simulate structures with severe and very severe damage.
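One way to simulate the loss of structural parts is sketched below. It is an illustration under assumptions, since the paper does not specify the shape, size, or number of the removed sections: a single cubic block, centred on a randomly chosen occupied voxel, is cleared. The function name and the `half_width` parameter are hypothetical.

```python
import random

def remove_random_section(occupied, half_width=6, rng=None):
    """Return a copy of the voxel set with one random cubic block cleared."""
    rng = rng or random.Random()
    # Centre the block on an occupied voxel so something is always removed.
    ci, cj, ck = rng.choice(sorted(occupied))

    def inside(v):
        return (abs(v[0] - ci) <= half_width
                and abs(v[1] - cj) <= half_width
                and abs(v[2] - ck) <= half_width)

    return {v for v in occupied if not inside(v)}

# Hypothetical "complete" model: the shell of a hollow 32^3 cube.
n = 32
complete = {(i, j, k)
            for i in range(n) for j in range(n) for k in range(n)
            if 0 in (i, j, k) or n - 1 in (i, j, k)}
incomplete = remove_random_section(complete, rng=random.Random(0))
```

Applying the function once to each of the 390 complete voxel maps would give 390 incomplete maps, matching the class balance described above.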

Supervised learning scheme

To automate the classification of structures, we trained a CNN using the constructed dataset. Given the limited number of examples and the fact that the incomplete class in the dataset exists only as voxel representations, we propose using a 3D CNN. Specifically, we adapt the VoxNet CNN proposed in ref. 40, which is a voxel-based method. Although newer approaches, such as ConvPoint42, can process point clouds directly, they require a large number of examples for both classes. Therefore, we opt for a simpler approach.

It is important to remark that voxel-based methods tend to generalize better with smaller datasets as they simplify the representation of 3D objects. Also, for structures with large modifications in the geometry due to damage (as the heritage buildings with severe and very severe damage), voxel representation seems to be sufficient for capturing the necessary details while avoiding overfitting in the classification.

We recall that VoxNet CNN40 is a multiclass architecture that integrates a volumetric occupancy grid representation with a supervised 3D CNN. Its architecture consists of an input layer, two convolutional layers, a pooling layer, and two fully connected layers. It includes a Leaky Rectified Linear Unit after each convolutional layer. A Rectified Linear Unit (ReLU) is used after the first fully connected layer. A dropout regularization is applied after each layer. Softmax is used to provide the output.

Our modification to VoxNet CNN adapts its multiclass architecture to a binary architecture, so that it is applicable to our specific binary classification problem. Hence, softmax is replaced with a sigmoid function. Also, dropout regularization is not used. Furthermore, since each value of the volumetric occupancy grid representations (voxel maps) is defined in the set Bs = {0, 1}, there are no negative values in the input, and we replace Leaky ReLU with the ReLU function after each convolutional layer. The resulting scheme is shown in Fig. 5. Each layer in Fig. 5 is described below using the format Name (hyper-parameter).

- Input layer. This layer introduces the voxel maps to the 3D CNN. The grid must be fixed-size and three-dimensional, i.e., I × J × K voxels; in this case, I = J = K = n = 32. Each grid cell has a discrete value in {0, 1}, taken from the binary occupancy grid models (voxel maps).

- First convolutional layer C1(f, d, s). This layer accepts the input data. To create the feature map, fm, the input is convolved with f = 32 learned filters of shape d × d × d = 5 × 5 × 5. The convolution is applied with a spatial stride s = 2 and a padding set at zero. The output is passed through a ReLU43.

- Second convolutional layer C2(f, d, s). This layer receives the fm produced by the previous convolution. Here, the convolution is carried out with f = 32 filters of shape 3 × 3 × 3, s = 1, and a padding set at zero. The output is also passed through a ReLU43.

- Pooling layer P(m). This layer reduces the size of the input volume by a factor of m = 2 in each of the 32 feature maps, replacing non-overlapping 2 × 2 × 2 voxel blocks by their maximum. This preserves the essential features. After this layer, a flattening is applied to convert fm into a vector.

- First fully connected layer FC1(N). This layer performs a linear combination of the input and its parameters and applies an activation function. It has N = 128 output neurons. As in C1 and C2, the ReLU function43 is applied before moving to the next layer.

- Second fully connected layer FC2(N). In this last layer, N = 1. A sigmoid function44 is used to provide a probabilistic output, where an output equal to 0 means an incomplete building and 1 stands for a complete building.

Fig. 5. The three-dimensional input layer (voxel maps) is introduced with a fixed size of I × J × K = 32 × 32 × 32 binary voxels. Then, (1) the first convolutional layer applies f = 32 filters of size d = 5, a spatial stride s = 2, and zero padding with ReLU activation. Next, (2) the second convolutional layer applies f = 32 filters of size d = 3, a spatial stride s = 1, and zero padding with ReLU activation. After that, (3) the pooling layer reduces the input size by a factor of m = 2. Later, (4) the first fully connected layer uses ReLU activation and provides N = 128 output neurons. Then, (5) the second fully connected layer uses sigmoid activation and provides one output neuron. Finally, the output layer delivers a probabilistic result, 0 for an incomplete building and 1 for a complete building.

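Thus, the proposed architecture for the 3D CNN for classifying the structural state of heritage buildings is:

\[\text{C1}(32, 5, 2) - \text{C2}(32, 3, 1) - \text{P}(2) - \text{FC1}(128) - \text{FC2}(1) \qquad (2)\]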
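The tensor sizes implied by the hyper-parameters listed above can be checked with a few lines of arithmetic. This is a sketch under stated assumptions: "a padding set at zero" is read as no padding, the input has a single channel, and every layer carries a bias term. The resulting parameter count is therefore an estimate, not a figure reported by the authors.

```python
def conv_out(size, kernel, stride):
    """Output length of an unpadded convolution along one axis."""
    return (size - kernel) // stride + 1

n = 32                       # input grid: 32 x 32 x 32, one channel
c1 = conv_out(n, 5, 2)       # after C1(32, 5, 2)
c2 = conv_out(c1, 3, 1)      # after C2(32, 3, 1)
p = c2 // 2                  # after P(2)
flat = 32 * p ** 3           # length of the flattened feature vector

# Learnable parameters (weights plus biases) per layer.
params = {
    "C1": 32 * (1 * 5 ** 3) + 32,
    "C2": 32 * (32 * 3 ** 3) + 32,
    "FC1": flat * 128 + 128,
    "FC2": 128 * 1 + 1,
}
total = sum(params.values())

print(c1, c2, p, flat)  # 14 12 6 6912
print(total)            # 916705
```

Under these assumptions the first fully connected layer holds the vast majority of the weights, which is one reason a low voxel resolution keeps the network small.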

Training

The 3D CNN architecture proposed in Eq. (2) was trained using Adaptive Moment Estimation (ADAM)45 to iteratively update the network weights. ADAM combines the Adaptive Gradient Algorithm (AdaGrad) and Root Mean Square Propagation (RMSProp). Its objective is to achieve an adaptive estimation of lower-order moments, plus the learning rate times the L2 weight norm for regularization. In this case, ADAM is initialized with a learning rate lr = 0.0001 for the voxel map dataset. The exponential decay rates for the moment estimates are set to β1 = 0.9 and β2 = 0.999, the batch size is set to 5, and the number of epochs to 25. Since the proposed 3D CNN architecture performs a binary classification with a single output, the Binary Cross Entropy (BCE) loss function was used to assess the performance of the 3D CNN architecture in Eq. (2). The BCE loss function is defined as:

\[l = -w\left[y \log x + (1 - y) \log (1 - x)\right] \qquad (3)\]

where l represents the loss term, y is the true structure state, x is the model’s prediction, and w is the average weight. The network was implemented in PyTorch 1.12.1 (ref. 46) and trained on a system with an AMD Ryzen 5 5000 series processor, 16 GB of memory, and an NVIDIA GeForce RTX 2060 GPU.
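For a concrete sense of the BCE loss, the sketch below evaluates it in plain Python using the symbols defined above (x, y, w). It is an illustration, not the authors' PyTorch code: the predictions are made-up values, w defaults to 1, and the small `eps` clamp is an assumption added only to keep the logarithm finite.

```python
import math

def bce(x, y, w=1.0, eps=1e-12):
    """Binary cross entropy for prediction x in (0, 1) and label y in {0, 1}."""
    x = min(max(x, eps), 1.0 - eps)  # keep log() finite
    return -w * (y * math.log(x) + (1 - y) * math.log(1.0 - x))

def mean_bce(preds, labels):
    """Average loss over a batch, e.g. a batch of 5 voxel maps."""
    return sum(bce(x, y) for x, y in zip(preds, labels)) / len(preds)

preds = [0.9, 0.2, 0.8, 0.1, 0.6]  # hypothetical sigmoid outputs
labels = [1, 0, 1, 0, 1]           # 1 = complete, 0 = incomplete
loss = mean_bce(preds, labels)
```

Confident correct predictions (0.9 for a complete building, 0.1 for an incomplete one) contribute little to the average, while the hesitant 0.6 dominates it.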

For training, the dataset described in the “Dataset construction” section was divided into a training set with 80% of the voxel maps and a validation set with the remainder. The training results are presented in Fig. 6, where the loss and the accuracy are plotted against the epochs. From Fig. 6a, the best results are achieved at epoch 11, with a loss of 0.757% for training and 0.265% for validation. In addition, the classification accuracy curves in Fig. 6b are stable around 99.8% for training and 100% for validation. Thus, it is concluded that the neural network reaches an almost perfect accuracy in validation. In the next section, this trained network is tested with two real cases.
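The set sizes implied by the 80/20 split can be worked out as below. The sketch assumes a plain random shuffle and that the last, partial batch of each epoch is kept; the paper does not say whether the split was stratified by class or how incomplete batches were handled. The function name and seed are hypothetical.

```python
import math
import random

def split_indices(n_items, train_fraction=0.8, seed=0):
    """Shuffle item indices and split them into training and validation lists."""
    idx = list(range(n_items))
    random.Random(seed).shuffle(idx)
    cut = int(round(n_items * train_fraction))
    return idx[:cut], idx[cut:]

train_idx, val_idx = split_indices(780)
batches_per_epoch = math.ceil(len(train_idx) / 5)  # batch size 5
total_updates = 25 * batches_per_epoch             # 25 epochs

print(len(train_idx), len(val_idx))      # 624 156
print(batches_per_epoch, total_updates)  # 125 3125
```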

Fig. 6. The loss curves for both training and validation data are shown in (a), where the best results are achieved at epoch 11, with a loss of 0.757% for training and 0.265% for validation. The classification accuracy curves for both training and validation are depicted in (b), where the training accuracy stabilizes around 99.8% and the validation accuracy reaches 100%.

Practical test

This section presents the practical test of the 3D CNN architecture in Eq. (2) to recognize the current structural state of built heritage with damage degrees 4 and 5. First, the case studies are introduced.

Case studies

Two case studies are used to evaluate the modified 3D CNN architecture outlined in Eq. (2). The first case focuses on a Colonial Era heritage building, specifically the Posa chapel of the Natividad former convent, located in Tepoztlán, Morelos, Mexico. The second case involves a pre-hispanic archeological structure, the Kukulkán temple in Chichén Itzá, Yucatán, Mexico, a renowned site of the ancient Maya civilization, which is one of the 7 Wonders of the World. Both case studies illustrate the challenges and intricacies of applying the 3D CNN model to voxelized point clouds of culturally and historically significant buildings.

Posa chapel

The 3D CNN architecture in Eq. (2) is tested with the point cloud of a building belonging to the Colonial Era heritage. Note that during Mexico’s colonial period (1521–1821), the urban layout was modified. Several colonial cities were built with various architectural elements such as temples, cathedrals, buildings, aqueducts, and convents, among others. In addition to the historical context, the combination of indigenous art with Spanish influence makes these buildings unique in the world. Currently, the colonial cities are the historical centers of the most visited cities in the country. Moreover, some of these historic buildings have become World Heritage sites. However, due to their age, the architectural pieces have deteriorated.

The Posa chapel (see Fig. 7a) belongs to the Natividad former convent in Tepoztlán, Morelos, Mexico (see Fig. 7b), which has been a UNESCO World Heritage site since 1994. The Natividad former convent was built by the indigenous Tepoztecos by order of Dominican friars in the Colonial Era, between 1555 and 1580. It is one of five monasteries adjacent to the Popocatepetl volcano in Mexico. A particular characteristic of these convents is the four Posa chapels, located in the corners of the atriums. These chapels were used to exhibit paintings or religious relics. Due to climatic conditions over time, there are only four well-preserved Posa chapels in Mexico. One is located in the Natividad former convent, Tepoztlán, Morelos, Mexico; two are located in the San Miguel Arcángel former convent at Ixmiquilpan, Hidalgo, Mexico; and the last one is located in the Calpan former convent at San Andrés Calpan, Puebla, Mexico.

Fig. 7. The Posa chapel, shown in (a), is part of the Natividad former convent, shown in (b). This is a UNESCO World Heritage site built between 1555 and 1580.

The chosen case is a quadrangular vaulted structure with two entrances. It features indigenous paintings inside, similar to those in the convent. Approximately one column and half a dome are missing, placing its damage degree in category 5.

Kukulkán temple

To validate the proposed approach, we decided to test the trained 3D CNN architecture in Eq. (2) with a cultural heritage building with neither severe nor very severe damage. In contrast to the previous case, the point cloud represents a heritage building of a pre-hispanic archeological site. Indigenous Mexican cultures established settlements across the country during the Preclassic, Classic, and Postclassic periods (circa 600–1200 AD). These civilizations focused on urban development that harmoniously integrated with nature. Due to their cosmology and the meaning attributed to their structures, these buildings are considered some of the most important in the world. Today, these pre-hispanic sites, like other colonial sites, are among the most visited locations in Mexico. However, despite their historical and cultural significance, centuries of exposure to natural elements and human activity have contributed to the gradual deterioration of this invaluable heritage.

The point cloud now under analysis represents the Kukulkán temple, a structure with no severe damage (see Fig. 8a). This pyramid is part of the renowned pre-hispanic archeological site of Chichén Itzá and has been recognized as one of the 7 Wonders of the World since 2007. It exhibits the greatness of the ancient Maya civilization (see Fig. 8b). Declared a UNESCO World Heritage site in 1988, Chichén Itzá was founded in the 6th century AD by the Maya on the Yucatán Peninsula, Mexico. The iconic pyramid, built in the 12th century AD, served as a temple dedicated to the worship of the Maya deity Kukulkán. The pyramid stands 24 meters tall from its base to the top, with each of its sides measuring 55.3 meters, giving it a square shape. The structure consists of nine stepped terraces, symbolizing the nine levels of the underworld in Maya cosmology, and is aligned with astronomical events, such as the equinoxes and solstices, marking dates of great significance for agricultural cycles. This alignment highlights the Maya’s advanced knowledge in astronomy, geometry, and mathematics.

Fig. 8. The Kukulkán temple, an iconic structure within the city of Chichén Itzá, is shown in (a). A broader view of the city of Chichén Itzá, recognized as one of the 7 Wonders of the World since 2007, is given in (b).

Test and results

The Posa chapel, along with the entire Natividad former convent, was scanned with a terrestrial laser scanner in 2022 by the Coordinación Nacional de Monumentos Históricos (CNMH) of Mexico. The point cloud obtained by the CNMH contained a total of 9,084,416 points, including the Posa chapel (without half a dome and a column), an atrium wall, terracing, and noise, as shown in Fig. 9a. Hence, the point cloud was manually refined to keep only the structure of interest for the study, as shown in Fig. 9b. Afterward, data preparation was performed as explained in the “Structural state classification” section to obtain a voxel map of the real Posa chapel, as depicted in Fig. 9c.

Fig. 9. The original point cloud of the Posa chapel (a) is segmented, resulting in (b). Then, its voxel map is generated, as shown in (c).

The point cloud of the Kukulkán temple, in turn, is a subset of the point cloud representing the entire Chichén Itzá site, which was manually refined to include only the structure of interest for the study. This point cloud is sourced from the Open Heritage 3D database and provided by CyArk. It consists of 106,425,840 points captured using terrestrial laser scanning (see Fig. 10a). As in the first case, data preparation was performed to obtain a voxel map of the real Kukulkán temple, as shown in Fig. 10b.

From (a), the segmented point cloud of the Kukulkán temple, its voxel map (b) is obtained.

In order to test the 3D CNN model, the voxel maps of the real Posa chapel and the real Kukulkán temple are input into the already trained network. This is illustrated in Fig. 11.

The voxel maps of the real Posa chapel and the Kukulkán temple are the input to the trained 3D CNN. The output shows that the 3D CNN classifies the Posa chapel as an incomplete building and the Kukulkán temple as a complete building.

The classification test shows that the network classifies the real Posa chapel as an “incomplete” building and the real Kukulkán temple as a “complete” building. Both results are consistent with the actual structural state of the buildings.
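For a binary classifier of this kind, the final step from network output to label can be illustrated as below. The sketch assumes a single sigmoid output trained with binary cross entropy (BCE), that the value 1 encodes “complete”, and a decision threshold of 0.5. None of these details is stated in this section, and the function names are hypothetical.

```python
import math


def sigmoid(z: float) -> float:
    """Logistic function mapping a raw score to a probability."""
    return 1.0 / (1.0 + math.exp(-z))


def classify(score: float, threshold: float = 0.5):
    """Map a raw network score to a label and its probability.

    Assumption: probability 1 means "complete", 0 means "incomplete".
    """
    p = sigmoid(score)
    label = "complete" if p >= threshold else "incomplete"
    return label, p


def bce(p: float, target: int, eps: float = 1e-12) -> float:
    """Binary cross entropy for one example with target 0 or 1."""
    p = min(max(p, eps), 1.0 - eps)  # clamp to avoid log(0)
    return -(target * math.log(p) + (1 - target) * math.log(1.0 - p))


# Hypothetical raw scores, chosen only to exercise both branches.
label_a, p_a = classify(2.0)
label_b, p_b = classify(-2.0)
```

A confident and correct prediction yields a small BCE value, while a confident and wrong one is penalized heavily, which is why a low loss and a high accuracy tend to appear together on the training set.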

This research faced two main limitations. First, no dataset of point clouds of the real buildings of interest was available to train the 3D CNN. This was addressed by using CAD tools to construct very simple 3D synthetic models of chapels from basic structural elements, and by taking 3D synthetic models of pyramids from 3D Warehouse. Second, a very low spatial resolution was used for the voxel maps of all 3D models (synthetic and real). The efficiency and robustness of the proposed classification scheme were then checked by testing it on voxelized point clouds of two real cases. We conclude that the test was successful, i.e., the modified 3D CNN classified each heritage building according to its structural state. The test result also corroborates that converting point clouds into voxel maps with a very low spatial resolution (n = 32) preserves the essential architectural structure of the 3D models and helps reduce the training time of the proposed 3D CNN. However, the 3D CNN may have overfitted owing to the small size of the training dataset.
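The size reduction implied by n = 32 can be made concrete with a short calculation. The point counts are those quoted in the text for the two source point clouds. Treating each voxel as a single occupancy value is an assumption of this sketch.

```python
n = 32
voxels = n ** 3  # number of cells in an n x n x n grid

# Point counts as quoted in the text; the Posa chapel count is the one
# reported before manual refinement.
point_counts = {
    "Posa chapel": 9_084_416,
    "Kukulkán temple": 106_425_840,
}

for name, points in point_counts.items():
    ratio = points / voxels
    print(f"{name}: {points:,} points vs {voxels:,} voxels "
          f"(about {ratio:,.0f} points per voxel)")
```

The network input therefore has a fixed size that does not depend on the density of the scan, which is what keeps training cost low at this resolution.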

Discussion

This section presents the findings and limitations of this research and discusses what distinguishes our work from related work.

The related work shows that the use of deep learning algorithms to address issues in cultural heritage preservation is still an emerging area of study. Previous works, such as those by Chaiyasarn et al.20, Zou et al.35, Wang et al.37, and Mishra et al.18, have applied various methods to the detection and segmentation of structural damage in categories 1 to 3. One key difference between our work and this literature is that we classify a cultural heritage building with damage degree 4 or 5 based on its structural condition. Additionally, in most of the related work discussed, training datasets are created using random examples of small-scale objects from specialized repositories to test the neural networks' performance. In contrast, in our work, an original training dataset is created to train the proposed 3D CNN for a specific, real, large-scale outdoor object. In this context, the obtained results demonstrate that a 3D CNN can be trained using 3D models based on voxel maps. The use of voxel maps has allowed us to train the network with a relatively small number of examples.

The performance limitations of the proposed 3D CNN are as follows. An increase in the number of examples in the training dataset is necessary. While the proposed 3D CNN can correctly classify the structural state of the Posa Chapel and the Kukulkán temple, a larger volume of examples will strengthen the 3D CNN’s predictions. Additionally, diversifying the training dataset with various examples of cultural heritage buildings would expand the practical applicability of this work. Also, the proposed 3D CNN can only correctly classify as incomplete those voxel maps with missing parts that generate free spaces. That is, it struggles when debris from collapsed parts of buildings eliminates empty spaces. Moreover, performance comparison of the proposed 3D CNN, which is a voxel-based method, with state-of-the-art point cloud-based methods is a task conditioned on the availability of point cloud datasets of heritage buildings.

Conclusions

This paper presented an automatic classification of the current structural state of built heritage with severe and very severe damage degrees. The main consideration was that, owing to the uniqueness of built heritage, few models are available for supervised learning. The method relied on a 3D representation (point cloud) converted to a low-resolution voxel map, a synthetic dataset created by the authors, and a 3D CNN with only five layers. In this context, a point cloud of the Posa chapel, missing approximately one column and half a dome, and a point cloud of the Kukulkán temple, with neither severe nor very severe damage, were used as case studies. The obtained results showed that the 3D CNN correctly classified the training dataset according to structural state (complete or incomplete) with a loss of 0.265 and 100% accuracy. A practical test also validated that the proposed 3D CNN can classify the case studies according to their current structural state. Thus, it is concluded that: (i) Creating a point cloud dataset for heritage buildings like the Posa chapel can be challenging due to their unique architecture. However, preparing a training dataset from 3D synthetic models of the Posa chapel and converting the models to voxel maps significantly helped in this research. (ii) The low-resolution voxel maps of the simple synthetic models preserve the essential features of the heritage buildings needed for supervised learning. (iii) It is feasible to apply the presented automatic classification scheme to other unique buildings.

For future work, it would be necessary to increase the number of examples in the dataset to strengthen the 3D CNN’s predictions. Additionally, point cloud datasets of heritage buildings will be required to compare the performance of the proposed 3D CNN with point cloud-based methods in detecting the structural state of a heritage building. Another pending task is to apply 3D autoencoders to complete point clouds with the missing parts of buildings with damage degrees 4 and 5. The heritage buildings could thus be virtually recreated in 3D with an estimated original shape. The virtual model could then be used to facilitate the corrective intervention stage in preservation and restoration activities by enabling the testing of design scenarios, and to promote tourism through digital projections of historical places.

Data availability

The dataset and code used during the current study are available from the corresponding author on reasonable request.

Abbreviations

- CNN: Convolutional Neural Network
- CAD: Computer-Aided Design
- SVM: Support Vector Machines
- RF: Random Forest
- ReLU: Rectified Linear Unit
- ADAM: Adaptive Moment Estimation
- AdaGrad: Adaptive Gradient Algorithm
- RMSProp: Root Mean Square Propagation
- BCE: Binary Cross Entropy
- UNESCO: United Nations Educational, Scientific, and Cultural Organization
- CNMH: Coordinación Nacional de Monumentos Históricos (in Spanish)

References

El Hazzat, S., El Akkad, N., Merras, M., Saaidi, A. & Satori, K. Fast 3d reconstruction and modeling method based on the good choice of image pairs for modified match propagation. Multimed. Tools Appl. 79, 7159–7173 (2020).

Bian, Y. et al. Quantification method for the uncertainty of matching point distribution on 3d reconstruction. ISPRS Int. J. Geo-Inf. 9, 187 (2020).

Cao, M.-W. et al. Parallel k nearest neighbor matching for 3d reconstruction. IEEE Access 7, 55248–55260 (2019).

Godfrey, S., Cooper, J., Bezombes, F. & Plater, A. Monitoring coastal morphology: the potential of low-cost fixed array action cameras for 3d reconstruction. Earth Surf. Process. Landf. 45, 2478–2494 (2020).

Khaloo, A. & Lattanzi, D. Hierarchical dense structure-from-motion reconstructions for infrastructure condition assessment. J. Comput. Civ. Eng. 31, 04016047-1– 04016047-13 (2017).

Czerniawski, T., Nahangi, M., Haas, C. & Walbridge, S. Pipe spool recognition in cluttered point clouds using a curvature-based shape descriptor. Autom. Constr. 71, 346–358 (2016).

Zhu, L. & Hyyppa, J. The use of airborne and mobile laser scanning for modeling railway environments in 3d. Remote Sens. 6, 3075–3100 (2014).

Dimitrov, A. & Golparvar-Fard, M. Segmentation of building point cloud models including detailed architectural/structural features and MEP systems. Autom. Constr. 51, 32–45 (2015).

Hu, Y., Wang, Y., Wang, S. & Zhao, X. Fusion key frame image confidence assessment of the medical service robot whole scene reconstruction. J. Imaging Sci. Technol. 65, 30409–1 (2021).

Li, X., Wang, D., Ao, H., Belaroussi, R. & Gruyer, D. Fast 3d semantic mapping in road scenes. Appl. Sci. 9, 631 (2019).

Su, Y. & Wang, Z. 3d reconstruction of submarine landscape ecological security pattern based on virtual reality. J. Coast. Res. 83, 615–620 (2018).

Wang, B., Yin, C., Luo, H., Cheng, J. C. & Wang, Q. Fully automated generation of parametric bim for mep scenes based on terrestrial laser scanning data. Autom. Constr. 125, 103615 (2021).

Sung, M., Cho, H., Kim, T., Joe, H. & Yu, S.-C. Crosstalk removal in forward scan sonar image using deep learning for object detection. IEEE Sens. J. 19, 9929–9944 (2019).

Wei, K., Dai, Y. & Ren, B. Automatic identification and autonomous sorting of cylindrical parts in cluttered scene based on monocular vision 3d reconstruction. Sens. Rev. 39, 763–775 (2019).

Cheng, Q., Sun, P., Yang, C., Yang, Y. & Liu, P. X. A morphing-based 3d point cloud reconstruction framework for medical image processing. Comput. Methods Prog. Biomed. 193, 105495 (2020).

Deligiorgi, M. et al. A 3d digitisation workflow for architecture-specific annotation of built heritage. J. Archaeol. Sci. Rep. 37, 102787 (2021).

Obeso, A. M., Benois-Pineau, J., Vázquez, M. G. & Acosta, A. R. Saliency-based selection of visual content for deep convolutional neural networks: application to architectural style classification. Multimed. Tools Appl. 78, 9553–9576 (2019).

Mishra, M., Barman, T. & Ramana, G. Artificial intelligence-based visual inspection system for structural health monitoring of cultural heritage. J. Civil Struct. Health Monit. 14, 1–18 (2022).

Cao, Y. & Scaioni, M. 3dleb-net: label-efficient deep learning-based semantic segmentation of building point clouds at lod3 level. Appl. Sci. 11, 8996 (2021).

Chaiyasarn, K., Sharma, M., Ali, L., Khan, W. & Poovarodom, N. Crack detection in historical structures based on convolutional neural network. Int. J. GEOMATE 15, 240–251 (2018).

Wang, N., Zhao, Q., Li, S., Zhao, X. & Zhao, P. Damage classification for masonry historic structures using convolutional neural networks based on still images. Comput. Aided Civ. Infrastruct. Eng. 33, 1073–1089 (2018).

Kumar, P., Ofli, F., Imran, M. & Castillo, C. Detection of disaster-affected cultural heritage sites from social media images using deep learning techniques. J. Comput. Cult. Herit. 13, 1–31 (2020).

Rodrigues, F. et al. Application of deep learning approach for the classification of buildings’ degradation state in a bim methodology. Appl. Sci. 12, 7403 (2022).

Nugraheni, D. M. K., Nugroho, A. K., Dewi, D. I. K. & Noranita, B. Deca convolutional layer neural network (dcl-nn) method for categorizing concrete cracks in heritage building. Int. J. Adv. Comput. Sci. Appl. 14, 722–730 (2023).

Yazdi, H., Sad Berenji, S., Ludwig, F. & Moazen, S. Deep learning in historical architecture remote sensing: automated historical courtyard house recognition in Yazd, Iran. Heritage 5, 3066–3080 (2022).

Zou, H., Ge, J., Liu, R. & He, L. Feature recognition of regional architecture forms based on machine learning: a case study of architecture heritage in Hubei province, China. Sustainability 15, 3504 (2023).

Obeso, A. M., Benois-Pineau, J., Acosta, A. Á. R. & Vázquez, M. S. G. Architectural style classification of Mexican historical buildings using deep convolutional neural networks and sparse features. J. Electron. Imaging 26, 011016 (2017).

Matrone, F. & Martini, M. Transfer learning and performance enhancement techniques for deep semantic segmentation of built heritage point clouds. Virtual Archaeol. Rev. 12, 73–84 (2021).

Díaz-Rodríguez, N. et al. Explainable neural-symbolic learning (x-nesyl) methodology to fuse deep learning representations with expert knowledge graphs: the Monumai cultural heritage use case. Inf. Fusion 79, 58–83 (2022).

Artopoulos, G. et al. An artificial neural network framework for classifying the style of Cypriot hybrid examples of built heritage in 3d. J. Cult. Herit. 63, 135–147 (2023).

Gao, L. et al. Research on image classification and retrieval using deep learning with attention mechanism on diaspora Chinese architectural heritage in Jiangmen, China. Buildings 13, 275 (2023).

Llamas, J., M. Lerones, P., Medina, R., Zalama, E. & Gómez-García-Bermejo, J. Classification of architectural heritage images using deep learning techniques. Appl. Sci. 7, 992 (2017).

Janković, R. Machine learning models for cultural heritage image classification: comparison based on attribute selection. Information 11, 12 (2019).

Haznedar, B., Bayraktar, R., Ozturk, A. E. & Arayici, Y. Implementing pointnet for point cloud segmentation in the heritage context. Herit. Sci. 11, 1–18 (2023).

Zou, Z., Zhao, X., Zhao, P., Qi, F. & Wang, N. Cnn-based statistics and location estimation of missing components in routine inspection of historic buildings. J. Cult. Herit. 38, 221–230 (2019).

Hatir, M. E., Barstuğan, M. & İnce, İ. Deep learning-based weathering type recognition in historical stone monuments. J. Cult. Herit. 45, 193–203 (2020).

Wang, N., Zhao, X., Zou, Z., Zhao, P. & Qi, F. Autonomous damage segmentation and measurement of glazed tiles in historic buildings via deep learning. Comput. Aided Civ. Infrastruct. Eng. 35, 277–291 (2020).

Meklati, S., Boussora, K., Abdi, M. E. H. & Berrani, S.-A. Surface damage identification for heritage site protection: a mobile crowd-sensing solution based on deep learning. J. Comput. Cult. Herit. 16, 1–24 (2023).

Burland, J. B., Broms, B. B. & De Mello, V. F. Behaviour of foundations and structures. In 9th International Conference on Soil Mechanics and Foundation Engineering, 495–546 (Tokyo, Japan, 1977).

Maturana, D. & Scherer, S. Voxnet: A 3d convolutional neural network for real-time object recognition. In 2015 IEEE/RSJ International Conference on Intelligent Robots and Systems (IROS) 922–928 (IEEE, 2015).

Sketchup. 3d warehouse. https://3dwarehouse.sketchup.com/model/Model-URL. Accessed: November 2024.

Boulch, A. Convpoint: continuous convolutions for point cloud processing. Comput. Graph. 88, 24–34 (2020).

Maas, A. L. et al. Rectifier nonlinearities improve neural network acoustic models. In 30th International Conference on Machine Learning, 1–6 (Georgia, USA, JMLR: W&CP, vol. 28, 2013).

Han, J. & Moraga, C. The influence of the sigmoid function parameters on the speed of backpropagation learning. In International Workshop on Artificial Neural Networks 195–201 (Springer, 1995).

Kingma, D. P. & Ba, J. Adam: a method for stochastic optimization. In 3rd International Conference on Learning Representations (ICLR) 1–13 (San Diego, USA, 2015).

Paszke, A. et al. Pytorch: An imperative style, high-performance deep learning library. In Proc. International Conference on Neural Information Processing Systems, 8026–8037 (Vancouver, Canada, 2019).

Acknowledgements

This work was partially funded by the Secretaría de Investigación y Posgrado of the Instituto Politécnico Nacional (SIP-IPN), through projects 20230034 and 20254309. The authors acknowledge the CNMH, especially Angel Mora-Flores, for providing the point cloud of the Posa chapel to carry out this research. Furthermore, E.M.M.-S. acknowledges support from SECIHTI-Mexico through the postgraduate studies scholarship. J.I.V.-G. and M.A.-C. thank the IPN EDI program and the Sistema Nacional de Investigadoras e Investigadores (SNII)-SECIHTI for financial support. Likewise, C.A.M.-Z. thanks SNII-SECIHTI for financial support. The funders had no specific role in the conceptualization, design, data collection, analysis, decision to publish, or preparation of the manuscript.

Author information

Contributions

E.M.M.-S. contributed to the acquisition, analysis, and interpretation of the data, and to the creation of the code for the training and validation of the proposed 3D CNN architecture. J.I.V.-G. contributed to the conception, the interpretation of data, and the creation of the code for the training and validation of the proposed 3D CNN architecture. C.A.M.-Z. designed and substantively revised the work. M.A.-C. contributed to the conception and design of the work, drafted the work, and substantively revised it.

Ethics declarations

Competing interests

The authors declare no competing interests.

Additional information

Publisher’s note Springer Nature remains neutral with regard to jurisdictional claims in published maps and institutional affiliations.

Rights and permissions

Open Access This article is licensed under a Creative Commons Attribution-NonCommercial-NoDerivatives 4.0 International License, which permits any non-commercial use, sharing, distribution and reproduction in any medium or format, as long as you give appropriate credit to the original author(s) and the source, provide a link to the Creative Commons licence, and indicate if you modified the licensed material. You do not have permission under this licence to share adapted material derived from this article or parts of it. The images or other third party material in this article are included in the article’s Creative Commons licence, unless indicated otherwise in a credit line to the material. If material is not included in the article’s Creative Commons licence and your intended use is not permitted by statutory regulation or exceeds the permitted use, you will need to obtain permission directly from the copyright holder. To view a copy of this licence, visit http://creativecommons.org/licenses/by-nc-nd/4.0/.

About this article

Cite this article

Muñoz-Silva, E.M., Vasquez-Gomez, J.I., Merlo-Zapata, C.A. et al. Binary damage classification of built heritage with a 3D neural network. npj Herit. Sci. 13, 124 (2025). https://doi.org/10.1038/s40494-025-01597-y

DOI: https://doi.org/10.1038/s40494-025-01597-y