Abstract

To address the digitization needs of Cantonese embroidery, an intangible cultural heritage, and to resolve the limitations of existing simulation techniques (insufficient stitch diversity, unnatural pattern transitions, and inaccurate structure and color reproduction), this study proposes a diffusion-based method that generates high-quality Cantonese embroidery-style images from only a few hundred labeled samples. In this method, lightweight LoRA fine-tuning equips the large model with high-fidelity texture reproduction; SAM semantic segmentation imposes precise spatial semantic constraints on generation; and ControlNet multi-condition guidance restores structure and color accurately. This combination achieves both feature reconstruction and detail generation, a balance that existing models struggle to maintain under limited data. It outperforms existing approaches on key metrics (LPIPS: 0.244; FID: 95.57; PSNR: 16.38) and shows clear advantages in visual comparison and user evaluation. This work enables applications such as relic restoration, design reference, and intelligent manufacturing simulation, providing a practical path for the digital preservation of intangible cultural heritage and for innovative design.

Introduction

Cantonese embroidery is among the first group of national intangible cultural heritages to be recognized, and it holds substantial historical and cultural significance1. It is generally crafted with silk thread twisted from high-quality mulberry silk2. The threads are flexible, transparent, smooth, and skin-friendly. Through the splitting process, a single silk strand can be divided into fine velvet threads as thin as a hair tip. When applied to details such as bird feathers and flower petals, it produces the distinctive pearlescent texture of silk, as illustrated in Fig. 1. Due to the complexity and precision of Cantonese embroidery, creators must possess technical expertise acquired through long-term training, along with the endurance to engage in continuous production. Consequently, the unparalleled skills of Cantonese embroidery remain in the hands of only a few inheritors, which hinders the broader transmission and development of this art form. As a representative of traditional Lingnan craftsmanship and one of China’s four major embroideries, Cantonese embroidery has profound cultural significance—it embodies the historical memory and regional esthetic characteristics of Lingnan, as well as the wisdom of traditional Chinese textile art. Its unique processes (e.g., splitting mulberry silk into hair-thin velvet, flexible stitching for delicate details, and multilayered color matching) represent the pinnacle of traditional embroidery, making its protection and inheritance an urgent task.

These pictures are provided with permission by Juyuanxiang Culture and Art Development (Guangzhou) Co., Ltd.

Digital simulation technology is playing an increasingly important role in the protection and inheritance of cultural heritage3,4. The generation of images in the artistic style of Cantonese embroidery has opened up a new path for the dissemination and inheritance of this traditional craft, while also offering new possibilities in the field of intelligent manufacturing of intangible cultural heritage. The generation of images in the artistic style of Cantonese embroidery refers to the ability to transform user-input images into images with the texture of Cantonese embroidery, while preserving the original semantic meaning, outline, and color. Realizing image generation in the artistic style of Cantonese embroidery for any pattern can not only provide reference material for handmade creators and enrich the teaching case base, but can also be applied to the restoration of Cantonese embroidery cultural relics and to innovative applications such as process simulation and production data integration in intelligent manufacturing. Digital simulation technology has revitalized the art of Cantonese embroidery in the digital world, not only facilitating the activation and inheritance of artistic value but also providing technical support for the integration of traditional craftsmanship with modern manufacturing, thereby demonstrating broad application prospects.

Two main approaches exist for the simulation of embroidery: one based on stitch modeling and the other on deep learning. Before the widespread adoption of deep learning, digital embroidery simulation was primarily achieved through stitch modeling, in which mathematical models were fitted to the traces left by embroidery stitches to accurately restore the direction and texture of a single stitch5,6,7,8,9,10,11,12,13,14. However, this approach presents clear limitations when simulating entire patterns. The process requires multiple rounds of parameter adjustment, and the mechanized calculations struggle to reproduce the flexibility and dynamic adaptability of hand embroidery in response to material properties and force variations. As a result, the generated patterns often exhibit rigid lines, as illustrated in Fig. 2.

All images are sourced from online networks and free of copyright issues.

Recently, the rapid development of deep learning technology has led to significant breakthroughs across a wide range of fields, including aerial vehicle detection15, instrument indication acquisition16, ancient mural analysis17, and biometric recognition18. This progress has also provided new technical support for the digital simulation of cultural relics, particularly in the field of image style simulation and generation19,20. The core methods of style simulation can be broadly divided into three categories. Feature separation-based methods employ convolutional neural networks to extract multi-level semantic features of images, capture the global distribution characteristics of styles through the Gram matrix and other statistics, and recombine them with content structures to generate new images. Generative adversarial network (GAN)-based methods learn the nonlinear mapping between style and content domains through adversarial training of a generator and a discriminator, thereby enabling cross-domain style transfer without paired data, while cycle-consistency constraints preserve content integrity. Attention mechanism-based dynamic style transfer methods achieve differentiated style rendering of local image regions by allocating spatial or channel attention weights, significantly improving detail fidelity in complex scenes. These deep learning techniques simulate, to a certain extent, the brushstrokes, colors, and compositional features of traditional art through end-to-end network architectures, thereby achieving high-fidelity digital style reproduction. Typical models, such as AdaIN (Adaptive Instance Normalization)21, achieve efficient single-model, multi-style transfer by dynamically adjusting the statistical features of the content image to match the target style. Moreover, innovative mechanisms such as Cross-Image-Attention22 have enabled semantic-level appearance transfer under zero-shot conditions, generating a notable impact within the field.
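The two statistics mentioned above, the Gram matrix and the AdaIN re-normalization, can be illustrated with a minimal sketch. It uses plain Python lists as stand-ins for CNN feature maps; the toy values and the helper names `gram_matrix` and `adain` are illustrative assumptions, not code from any of the cited works.

```python
from statistics import fmean, pstdev

def gram_matrix(channels):
    """Gram matrix of a feature map given as a list of flattened channels."""
    n = len(channels[0])
    return [[sum(a * b for a, b in zip(ci, cj)) / n for cj in channels]
            for ci in channels]

def adain(content, style, eps=1e-5):
    """Shift and scale each content channel to the style channel's mean and std."""
    out = []
    for c, s in zip(content, style):
        c_mean, c_std = fmean(c), pstdev(c) + eps
        s_mean, s_std = fmean(s), pstdev(s)
        out.append([s_std * (v - c_mean) / c_std + s_mean for v in c])
    return out

# Toy feature maps: 2 channels, 4 spatial positions each (flattened).
content = [[0.0, 1.0, 2.0, 3.0], [1.0, 1.0, 3.0, 3.0]]
style = [[10.0, 12.0, 14.0, 16.0], [-2.0, 0.0, 0.0, 2.0]]

stylized = adain(content, style)   # content layout, style statistics
style_gram = gram_matrix(style)    # channel co-activation summary of the style
```

After `adain`, each channel keeps the spatial ordering of the content features but carries the mean and standard deviation of the corresponding style channel, which is the sense in which statistical features are "adjusted to match the target style".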

In the field of digital embroidery simulation, one of the earliest breakthroughs in deep learning was the random stitch embroidery digital synthesis model based on the convolutional neural network VGG19 (ref. 23), proposed by Zheng et al.24. This model achieved, for the first time, the end-to-end generation of embroidery textures. However, the method was limited to simulating embroidery textures using only the cross-stitch technique. Later, Wu et al.25 developed a method for transferring the style of Chengcheng embroidery by integrating extended semantic analysis with VGG19. Although the introduction of interactivity improved design flexibility, the generated textures suffered from blurring and were overly dependent on manual intervention. More recent research on full embroidery image style transfer based on VGG19 demonstrated better preservation of target contours, but internal semantics were easily disrupted by the reference image, and color fidelity remained poor26. All of these embroidery simulation models represent incremental improvements based on VGG19.

Beyond convolutional neural networks, generative adversarial networks (GANs)27 have also been widely adopted in embroidery style simulation due to their end-to-end adversarial training mechanism and strong style decoupling capabilities. Beg et al.28 proposed an unsupervised image translation method that enabled the automatic generation of embroidery patterns by improving CycleGAN29. However, this method could only render a single stitch texture and lacked the diversity and layering of real embroidery. Wang et al.30 incorporated an attention mechanism into CycleGAN to enhance semantic matching, which improved performance in maintaining color and shape. Nevertheless, this method was highly dependent on the quality of the reference image. When the input image failed to match the semantics of the reference image, or when the reference image quality was poor, the simulation effect declined significantly. Liu et al.31 also improved CycleGAN by integrating a Markov adversarial network to optimize texture generation. Despite these enhancements, the generated stitches remained relatively simple and insufficient to replicate the complex texture variations of real embroidery. Yang et al.32 proposed a GAN-based method incorporating Embroidery Channel Attention (ECA). By separating texture and color features via the attention mechanism and introducing a color loss function alongside white-filling techniques, this model effectively addressed problems of color shift and texture blurring. However, the generated textures remained relatively uniform and sparse. The ChipGAN-ViT model proposed by Sha et al.33 combined a visual Transformer (ViT) with GAN for Han embroidery style transfer. Its advantage lay in better preservation of shape and color features from the original image, though clarity and fine detail representation of embroidery textures remained limited. 
MSEmbGAN34 achieved the intelligent synthesis of three stitch types through regional classification and stitch texture matching mechanisms. However, its performance relied heavily on large-scale training data. As the variety of stitch types increased, computational complexity rose sharply, placing substantial demands on both data volume and computing resources.

In summary, for artworks such as Cantonese embroidery, which feature highly abstract styles that are difficult to quantify, deep learning technology provides a valuable foundation for their digital simulation. However, current deep learning-based embroidery simulation methods face several major challenges: (1) simplistic textures, i.e., the generated stitches lack diversity and detail, failing to reproduce the complex textures of real embroidery; (2) color shift, referring to deviations from the original image’s coloration; (3) structural or semantic distortion, meaning a significant mismatch in structure between the input and output images or even the occurrence of semantic deviations; and (4) reliance on large volumes of training data. To date, no single model or method has addressed all these issues simultaneously. This poses considerable challenges for the task of this study—generating Cantonese embroidery-style images that are highly consistent with the original image in terms of structural contours, semantics, and color, while intelligently filling diverse Cantonese embroidery stitching textures given scarce Cantonese embroidery data. In particular, texture reproduction is quite difficult, as stitch textures must not only be accurately extracted but also faithfully represent stitching techniques35. Owing to the natural forms of patterns, variations in light and shadow, and growth structures, multiple stitching techniques must be applied flexibly to reproduce texture details and authentically preserve the artistic characteristics of Cantonese embroidery. Current methods fall far short in the realization of texture.

To overcome the limitations of existing methods, this paper proposes a collaborative framework based on Stable Diffusion (SD)36 that integrates the LoRA37, ControlNet38, and SAM39 modules. This combination was selected because each component targets a core challenge in the digital simulation of Cantonese embroidery. First, the SD model’s step-by-step denoising mechanism, unlike the feature recombination approach of CNNs or the adversarial training of GANs, is better suited to visual tasks that require multilevel detail construction, providing a foundation for generating complex embroidery textures. However, as the pretrained SD model has not learned the unique silk-thread texture and stitching techniques characteristic of Cantonese embroidery, it cannot directly meet the requirement for style fidelity. Therefore, this paper introduces LoRA (low-rank adaptation)37, a lightweight fine-tuning technique originally proposed for large language models and now widely applied to diffusion models. By employing low-rank matrix decomposition, LoRA enables efficient parameter updates and effectively captures the distinctive style features of Cantonese embroidery, even with limited training data. This approach not only addresses the core bottleneck of data scarcity but also achieves high-fidelity texture simulation. The ControlNet38 and SAM39 modules integrated in this study further ensure consistency in shape contour, color distribution, and semantic alignment between the generated and original images. ControlNet precisely guides the diffusion process by extracting prior information such as LineArt, depth, and color from the input image, thereby ensuring the fidelity of the contours and color in the generated results. 
Simultaneously, to address the potential issue of semantic confusion in complex compositions of Cantonese embroidery, such as overlapping areas of petals and leaves, the SAM module is introduced for fine-grained semantic segmentation, assisting the model in achieving accurate semantic alignment between the original and generated images.

Based on the strong generative capabilities of diffusion models and the precise guidance provided by conditional control techniques, the main contributions of this work are as follows:

(1) A complete training process for a LoRA model incorporating the style characteristics of Cantonese embroidery is presented. This LoRA requires only a small dataset for training and efficiently fine-tunes large models, bridging the gap between the data-intensive requirements of model training and the scarcity of Cantonese embroidery data.

(2) The SAM model39 is adopted for input image segmentation, which assists the diffusion model in accurately identifying target-area semantics. This ensures that each segmented area precisely corresponds to its semantic meaning during the image stylization process, thereby avoiding semantic misplacement.

(3) A comprehensive Cantonese embroidery image generation framework is designed by integrating Stable Diffusion, LoRA, and a segmentation network with a conditional control module composed of ControlNet, thereby enabling image generation for simulating Cantonese embroidery artistic styles.

(4) Extensive generation and comparison experiments demonstrate that this model produces images with texture details characteristic of Cantonese embroidery. At the same time, it preserves the semantics, shape, and color of the original images while exhibiting strong generalization. Even for semantics not represented in the dataset, the model achieves excellent fitting performance, surpassing existing embroidery image generation methods. This provides robust support for the preservation and transmission of Cantonese embroidery techniques.

Methods

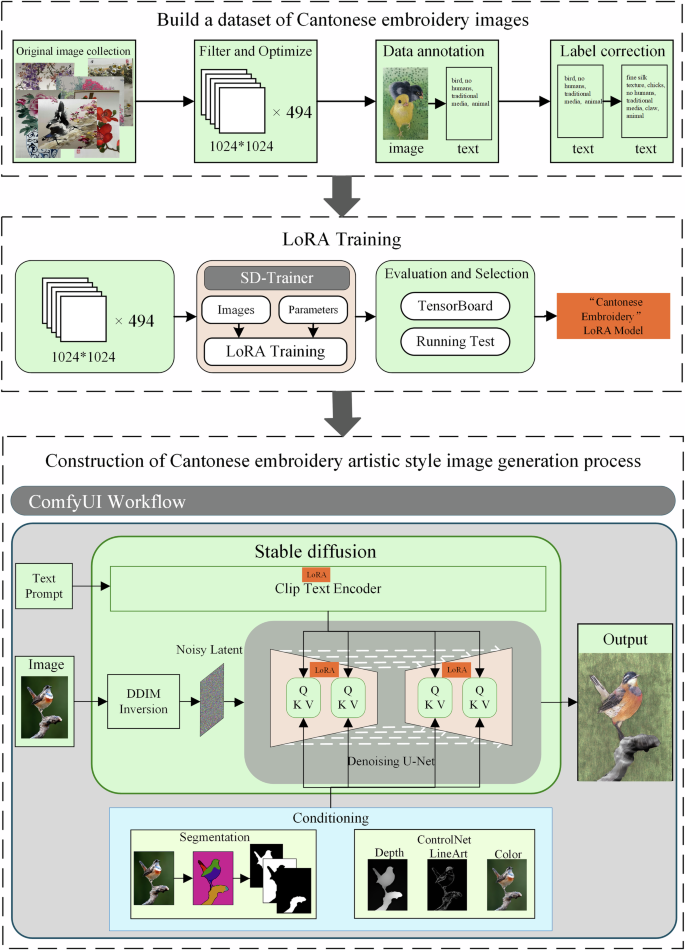

This study constructed a complete image generation process for the artistic style of Cantonese embroidery based on Stable Diffusion, with the workflow presented in Fig. 3. First, a dataset of Cantonese embroidery images with semantic labels was established. Based on this dataset, the adapter gx_lora3.safetensors incorporating the texture features of Cantonese embroidery was trained using LoRA low-rank adaptation technology. The trained LoRA model was then applied to the Stable Diffusion CLIP and UNet layers, enabling the model to generate semantic content with the texture characteristics of Cantonese embroidery. All components were integrated into the ComfyUI40 platform, where multiple conditions were designed to guide the generation process. Specifically, the SAM model was integrated to perform semantic segmentation on the input images, generating precise masks for different regions. During the denoising process of Stable Diffusion, these masks served as constraints to guide the generation of semantic content in each region. At the same time, ControlNet was introduced. By applying constraints of depth, LineArt, and color, the geometric structure and color gamut distribution of the generated images were preserved consistently with the original images. This solution fully leveraged the modular pipeline advantages of ComfyUI, achieving collaborative optimization through platform integration for extracting embroidery texture features, generating precise semantics, and maintaining the structural and color fidelity of the original images. This generation pipeline is deployed on a cloud server, featuring a hardware configuration of a 32-core AMD EPYC 7542 processor (main frequency: 2.9 GHz) and an NVIDIA GeForce RTX 4090D graphics card (48GB video memory). The operating system is Ubuntu 22.04. The deep learning framework is PyTorch 2.7.0, which comes with version 12.6 of the Compute Unified Device Architecture (CUDA). 
With this configuration, generating a single 2048×2048 image in the style of Cantonese embroidery takes approximately 50 seconds.

The input images are sourced from online networks and are free of copyright issues; the flowchart is drawn by the authors.

Construction of the Cantonese embroidery image dataset

Cantonese embroidery works possess high artistic value, but data collection channels are limited. The quality of images available on websites falls far short of the requirements for Cantonese embroidery simulation tasks, so the data had to be supplemented through offline collection. The data for this study come primarily from an enterprise and an inheritor of Cantonese embroidery. The first source is Guangzhou Embroidery Craft Factory Co., Ltd41, which is the only time-honored production enterprise in the Chinese Cantonese embroidery industry; its collection has both historical inheritance and industry representativeness. The second is the collection provided by Wang Xinyuan42, a municipal-level representative inheritor of the national intangible cultural heritage of Cantonese embroidery. The collections from both sources possess genuine academic value and authoritative representativeness, laying a foundation for the reliability of the research. Because Cantonese embroidery works are framed and preserved in indoor exhibition halls lit by exhibition lamps, images could only be captured on site using mobile phones or cameras. Consequently, issues such as uneven exposure and insufficient clarity arose during the collection process, with examples illustrated in Fig. 4.

These pictures are provided with permission by Guangzhou Embroidery Craft Factory Co., Ltd.

To address issues in the original collected data, such as semantic redundancy, exposure anomalies, and insufficient clarity, data screening, cropping, and optimization were conducted. Images with excessively poor quality or blurred textures were directly discarded. For semantically complex Cantonese embroidery images, cropping was performed to divide them into multiple sub-images. For images with exposure anomalies, a coarse-to-fine deep neural network model based on the Laplacian pyramid43 was employed. This model performs global color correction and local detail enhancement through multiscale decomposition. Ultimately, a high-quality Cantonese embroidery image dataset was established, as shown in Fig. 5, comprising 494 images characterized by high definition, balanced lighting, simple semantics, and diverse categories. The dataset includes eight major categories: flowers, birds, plants, animals, landscapes, fruits and vegetables, mountains and rivers, and architecture. These categories are further divided into 45 subcategories.

These pictures are provided with permission by Guangzhou Embroidery Craft Factory Co., Ltd.
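The multiscale decomposition underlying the exposure-correction step can be sketched in one dimension. This is only an illustration of the Laplacian pyramid principle (split a signal into a coarse residual plus per-level details, adjust the coarse part, then add the details back); it is not the cited neural network, and the pixel values and the 0.8 gain are arbitrary assumptions.

```python
def downsample(signal):
    """Halve the resolution by averaging neighbouring pairs."""
    return [(signal[i] + signal[i + 1]) / 2 for i in range(0, len(signal), 2)]

def upsample(signal):
    """Double the resolution by repeating each sample."""
    return [v for v in signal for _ in range(2)]

def build_pyramid(signal, levels):
    """Return [detail_0, ..., detail_{levels-1}, coarse_residual]."""
    pyramid, current = [], signal
    for _ in range(levels):
        coarse = downsample(current)
        pyramid.append([a - b for a, b in zip(current, upsample(coarse))])
        current = coarse
    pyramid.append(current)
    return pyramid

def reconstruct(pyramid):
    """Invert build_pyramid by adding details back from coarse to fine."""
    current = pyramid[-1]
    for detail in reversed(pyramid[:-1]):
        current = [u + d for u, d in zip(upsample(current), detail)]
    return current

# One row of pixel intensities from an over-exposed image (illustrative values;
# the length must be divisible by 2 ** levels).
row = [0.90, 0.95, 0.97, 0.99, 0.60, 0.62, 0.98, 1.00]
pyramid = build_pyramid(row, levels=2)

# Coarse-to-fine idea: correct global exposure on the low-frequency residual only,
# then restore the untouched high-frequency details.
corrected = pyramid[:-1] + [[v * 0.8 for v in pyramid[-1]]]
restored = reconstruct(corrected)
```

Because the detail levels are left untouched, the global brightness drops while fine texture differences between neighbouring pixels are preserved, which is the property that matters for stitch-level detail.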

To provide reliable semantic guidance for LoRA training, a dedicated image labeling step was implemented beforehand. This study employed the WD14-tagger framework44 for semantic annotation of images, utilizing the wd-vit-v3 model for initial label parsing with a confidence threshold of 0.4 to filter out low-confidence labels. However, due to the thematic specificity of Cantonese embroidery images, certain labels did not accurately reflect the intended semantics and required manual correction. For example, “hen,” “rooster,” and “peacock” were incorrectly generalized as “bird,” “kapok” was oversimplified as “flower,” and “lychee” was oversimplified as “fruit.” Such mislabeling hindered semantic alignment in subsequent image generation processes.

In addition to misidentification, some labels were missing, such as “tree branches.” Moreover, the embroidery technique for bird claws is distinctive, typically involving short horizontal stitches in the lezhen style, which required special annotation. Furthermore, a unified label, “fine silk texture,” was added to all images. This label captures the high-frequency textural characteristics of silk material, thereby enhancing the generative model’s perceptual accuracy of the embroidery material. Its stable texture pattern also provided auxiliary visual guidance during LoRA training, facilitating faster convergence. Table 1 presents three representative cases of manually assisted label correction for Cantonese embroidery images extracted in this study.
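The labeling workflow described above (confidence filtering, manual correction of over-general tags, manually added missing tags, and a shared style label) can be sketched as follows. The function name, the example tagger scores, and the use of `>=` at the threshold are assumptions made for illustration; the tagger itself is not called here.

```python
CONFIDENCE_THRESHOLD = 0.4          # threshold reported in the text
STYLE_LABEL = "fine silk texture"   # unified label added to every image

def build_caption(predicted, corrections=None, extra_labels=()):
    """Filter tagger output, apply manual corrections, and append shared labels.

    predicted:    dict mapping tag -> confidence from the automatic tagger
    corrections:  dict mapping an over-general tag to its manual replacement
    extra_labels: tags the tagger missed and an annotator adds by hand
    """
    corrections = corrections or {}
    kept = [tag for tag, score in predicted.items() if score >= CONFIDENCE_THRESHOLD]
    tags = [corrections.get(tag, tag) for tag in kept]
    for label in (*extra_labels, STYLE_LABEL):
        if label not in tags:
            tags.append(label)
    return ", ".join(tags)

# Hypothetical tagger output for one image of a peacock on a branch.
predicted = {"bird": 0.93, "flower": 0.71, "no humans": 0.35}
caption = build_caption(
    predicted,
    corrections={"bird": "peacock", "flower": "kapok"},
    extra_labels=["tree branches"],
)
print(caption)  # peacock, kapok, tree branches, fine silk texture
```

The corrections are supplied per image because the right replacement for a generic tag such as "bird" depends on what the annotator sees in that particular work.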

LoRA training

This study utilized a dataset of 494 Cantonese embroidery artworks covering 45 thematic subcategories, all of which were precisely annotated with semantic labels. A phased, progressive fine-tuning strategy was adopted to optimize the generative model. Stable Diffusion 1.5 served as the base model, with targeted fine-tuning conducted through LoRA (Low-Rank Adaptation), a parameter-efficient method. The primary advantage of LoRA lies in its plug-and-play capability: during training, only a small set of low-rank matrix parameters is optimized while the base model weights remain frozen. After training, the low-rank adapter can be directly integrated with the original model, enabling immediate adaptation for Cantonese embroidery style generation without additional adjustments. This greatly enhances the flexibility and efficiency of model deployment.
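The low-rank update can be made concrete with a small sketch. It follows the standard LoRA formulation, in which a frozen weight matrix W is combined with a trainable product BA scaled by alpha/rank; the matrix sizes, values, and the `weight` multiplier (standing in for the adapter strength chosen at inference time) are illustrative assumptions rather than the settings used in this study.

```python
def matmul(a, b):
    """Multiply two matrices stored as lists of rows."""
    return [[sum(x * y for x, y in zip(row, col)) for col in zip(*b)] for row in a]

def merge_lora(base, lora_b, lora_a, alpha, rank, weight=1.0):
    """Return base + weight * (alpha / rank) * (B @ A) without modifying base."""
    scale = weight * alpha / rank
    delta = matmul(lora_b, lora_a)
    return [[w + scale * d for w, d in zip(w_row, d_row)]
            for w_row, d_row in zip(base, delta)]

# Frozen 3x3 base weight and a rank-1 adapter (all values are illustrative).
base = [[1.0, 0.0, 0.0], [0.0, 1.0, 0.0], [0.0, 0.0, 1.0]]
lora_b = [[0.5], [0.0], [-0.5]]    # shape (3, rank)
lora_a = [[1.0, 2.0, 0.0]]         # shape (rank, 3)

merged = merge_lora(base, lora_b, lora_a, alpha=1.0, rank=1, weight=0.9)
trainable = len(lora_b) * len(lora_b[0]) + len(lora_a) * len(lora_a[0])
print(trainable, "adapter parameters vs", len(base) * len(base[0]), "base parameters")
```

Setting `weight=0.0` returns the base weights unchanged, which is why an adapter can be attached or removed without retraining the base model; at realistic layer sizes the gap between adapter and base parameter counts is far larger than in this toy case.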

This study employed SD-Trainer45 as the training platform for LoRA, which performs end-to-end parametric training by encapsulating the underlying computational processes. Training was conducted in expert mode, and Table 2 presents detailed information on the model training parameters.

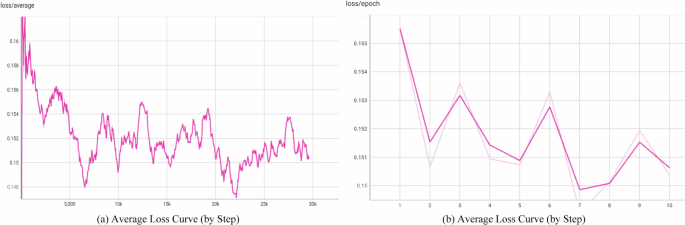

TensorBoard.dev was used to visualize the loss values over the course of LoRA training. As shown in Fig. 6(a), with training steps on the horizontal axis and loss values on the vertical axis, the complete training process consisted of 29,640 steps. In the early training stages, the loss values decreased rapidly, reflecting fast convergence. During later stages, the loss values fluctuated while gradually converging, which can be attributed to the semantically complex nature of the dataset, making complete convergence difficult. Nonetheless, Fig. 6(b) reveals an overall convergent trend, with loss values stabilizing at relatively low levels by the 8th epoch. Both figures indicate that, despite some fluctuations, the overall downward trend of the loss values reflects progressive model convergence and optimization. These observations provide preliminary evidence that the trained LoRA model was suitable for deployment in the generation pipeline.

a Average loss curve (by step); b Average loss curve (by epoch).
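The reported total of 29,640 steps can be related to the dataset size with simple arithmetic. The sketch below shows one combination of settings that is consistent with that total; the repeat count, batch size, and number of epochs are assumptions chosen for illustration and are not taken from Table 2.

```python
def total_steps(num_images, repeats, epochs, batch_size):
    """Optimizer steps for a run with no gradient accumulation."""
    steps_per_epoch = (num_images * repeats) // batch_size
    return steps_per_epoch * epochs

NUM_IMAGES = 494         # dataset size reported in the text
TOTAL_REPORTED = 29_640  # total steps reported in the text

# Assumed settings (not taken from Table 2): 10 epochs, 6 repeats, batch size 1.
steps = total_steps(NUM_IMAGES, repeats=6, epochs=10, batch_size=1)
print(steps, steps // 10)  # 29640 2964
```

Under these assumptions each epoch spans 2,964 steps, so the 8th-epoch checkpoint would correspond to step 23,712 on the per-step curve.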

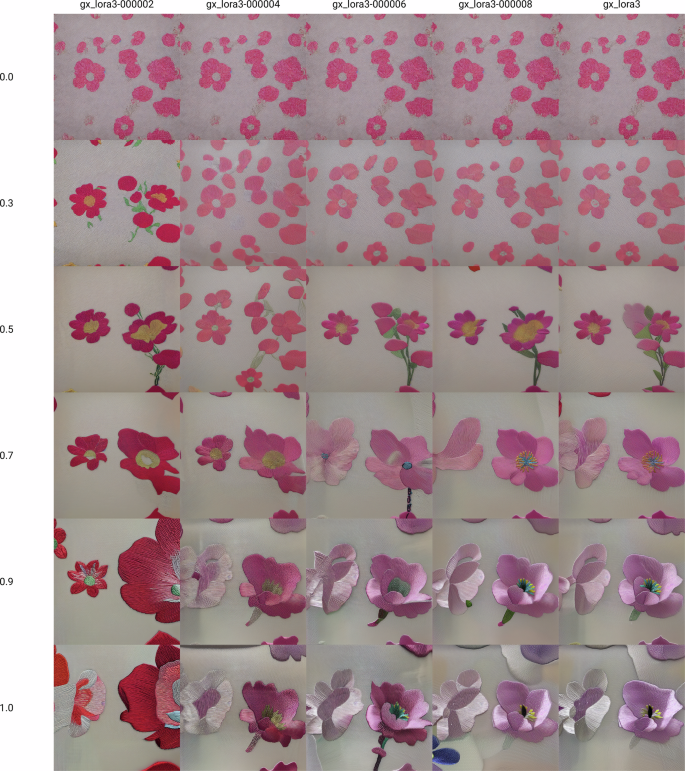

During training, LoRA models were saved at the 2nd, 4th, 6th, 8th, and 10th epochs. Cross-combination experiments were then conducted with weight values of 0.0, 0.3, 0.5, 0.7, 0.9, and 1.0. Comparative analysis of generated Cantonese embroidery-style floral images revealed that higher epochs and larger weights produced more pronounced texture effects (Fig. 7). The LoRA model from the 8th epoch, combined with a weight of 0.9, demonstrated optimal artistic performance, generating images that preserved clear floral morphological structures while faithfully reproducing the unique silk texture and stitching patterns characteristic of Cantonese embroidery. The results also displayed natural, soft color transitions consistent with traditional Cantonese embroidery. By contrast, the 10th epoch with a weight of 1.0 enhanced stylized features excessively, resulting in exaggerated embroidery textures and localized detail distortion. Conversely, lower epochs (2nd–6th) or smaller weights (<0.7) failed to adequately reproduce silk luster and complex stitching techniques.

All the generated images are from LoRA trained by the authors. The figure is drawn by the authors.

These findings indicate that a balance between style enhancement and detail fidelity is essential in Cantonese embroidery style generation. The 8th epoch with a weight of 0.9 achieved this balance, sufficiently showing the artistic characteristics of Cantonese embroidery while maintaining natural visual harmony. Accordingly, this study adopted the LoRA model from the 8th training epoch for parameter fine-tuning of the diffusion model.
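The cross-combination experiment amounts to a grid over saved checkpoints and adapter weights. A minimal sketch of how such a grid could be enumerated is given below; the per-epoch file naming in `checkpoint_name` is a hypothetical scheme, and only the epoch list, the weight list, and the selected setting come from the text.

```python
from itertools import product

EPOCHS = [2, 4, 6, 8, 10]
WEIGHTS = [0.0, 0.3, 0.5, 0.7, 0.9, 1.0]

def checkpoint_name(epoch, stem="gx_lora3"):
    """Hypothetical naming scheme for the per-epoch checkpoint files."""
    return f"{stem}-{epoch:06d}.safetensors"

grid = [
    {"checkpoint": checkpoint_name(epoch), "epoch": epoch, "weight": weight}
    for epoch, weight in product(EPOCHS, WEIGHTS)
]
print(len(grid))  # 30

# Setting reported as the best trade-off in the text.
selected = next(g for g in grid if g["epoch"] == 8 and g["weight"] == 0.9)
```

Each of the 30 entries would be rendered with the same input image, prompt, and seed so that differences in the output can be attributed to the epoch and weight alone.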

Conditional guidance for Cantonese embroidery image generation

In the Stable Diffusion-based image-to-image generation pipeline, input prompts can effectively guide the semantic expression of generated content. However, output images still display considerable randomness in semantic layout, overall geometric structure of patterns, and color distribution. To achieve consistency between generated images and input reference images in terms of semantic correspondence, geometric shapes, and color distribution—while introducing only controlled adjustments to texture style—this study incorporates two prior constraints during the denoising process of the diffusion model. This approach allows precise regulation of the denoising process to accomplish these objectives.

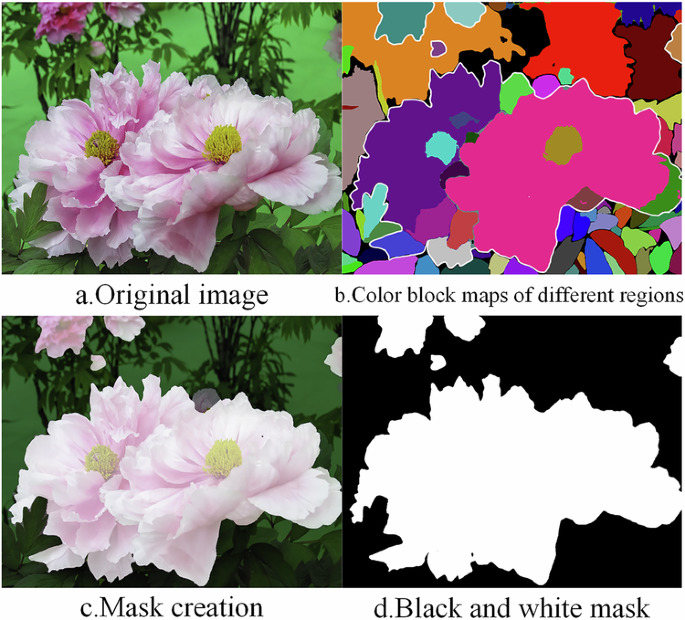

The first constraint focuses on semantic image segmentation and consistency enforcement based on the Segment Anything Model (SAM). In the process of generating Cantonese embroidery-style images, distinguishing complex semantic regions in input images poses a significant challenge. To address this, a segmentation module was introduced for refined processing. First, the SAM was employed to perform pixel-level semantic segmentation on the input image, decomposing it into multiple semantic regions:

Here, each region corresponds to a specific semantic block, and a unique color identifier is assigned to these semantic regions via the color coding function defined in Eq. (2), as shown in Fig. 8b:

a Original image. b Color block maps of different regions. c Mask creation. d Black and white mask.

Masks corresponding to the target semantics are then generated by interactively selecting multiple color-coded regions within the image. For example, Fig. 8c shows the selection of all color regions associated with floral elements, namely, the areas enclosed by the white outlines in Fig. 8b, resulting in the corresponding flower mask. Finally, the masks are converted into binary mask images (Fig. 8d), where white areas represent the target generation regions while black ones remain unmodified. These SAM-derived binary masks explicitly restrict the diffusion denoising process to the delineated target semantic regions while suppressing noise interference and unintended content generation in the nontarget (black) regions. Through multiple generations with masks covering different regions, this approach achieves precise semantic generation in complex areas, ensuring clear boundaries between distinct semantic regions and accurate content representation. Notably, these semantic masks are applied throughout the entire diffusion process, and their functional focus varies across denoising stages to support subsequent ControlNet guidance.
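The mask workflow can be illustrated on a toy grid. The sketch assumes the segmentation is available as a map of integer region ids and shows two steps: turning a selection of regions into a binary mask, and using that mask so that only the selected area is replaced. In the actual pipeline the restriction is applied during denoising rather than on final pixel values, so this is a simplification; the helper names and values are illustrative.

```python
def select_regions(label_map, target_ids):
    """Binary mask: 1 where the pixel's region id is one of the selected ids."""
    return [[1 if rid in target_ids else 0 for rid in row] for row in label_map]

def composite(original, generated, mask):
    """Keep generated pixels inside the mask and original pixels outside it."""
    return [[g if m else o for o, g, m in zip(o_row, g_row, m_row)]
            for o_row, g_row, m_row in zip(original, generated, mask)]

# Toy 3x4 segmentation: region ids 1 and 2 are petals, 3 is a leaf, 0 is background.
label_map = [
    [0, 1, 1, 0],
    [3, 1, 2, 0],
    [3, 3, 2, 0],
]
flower_mask = select_regions(label_map, target_ids={1, 2})

original = [[10] * 4 for _ in range(3)]    # stand-in for input pixel values
generated = [[99] * 4 for _ in range(3)]   # stand-in for stylized pixel values
result = composite(original, generated, flower_mask)
```

Repeating the same procedure with a leaf mask (`target_ids={3}`) would stylize the remaining foreground in a second pass, mirroring the multiple generations with different masks described above.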

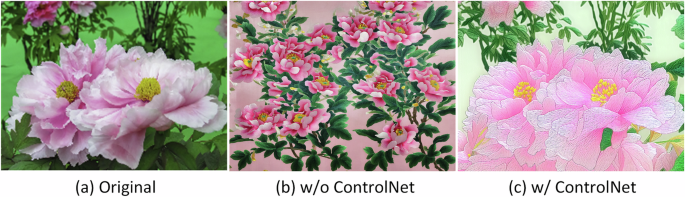

The second constraint centers on geometric structure and color consistency control with the aid of ControlNet. To ensure precise alignment between generated images and reference inputs in both contours and color space, this study augmented the original diffusion model with ControlNet for fine-grained denoising guidance. Unlike SAM masks, which focus on spatial region restrictions, ControlNet acts as a semantic guidance module, providing directional constraints for the denoising process to ensure the generated content conforms to the geometric structure and color characteristics of the input reference image. The core mechanism encodes LineArt, Depth, and Color priors from the input image into conditional tensors, enabling the model to produce structurally and chromatically consistent outputs. Fig. 9 compares the results for an image containing flowers, leaves, and a simple background with and without ControlNet. Both images (b) and (c) contain flowers and leaves. Without ControlNet, however, image (b) cannot maintain the same spatial structure and color distribution as the original image, whereas image (c) can, although local semantic ambiguities still need to be refined through SAM-based segmentation.

c w/ControlNet aligns with (a) Original (contour overlap rate >85%), whereas (b) w/o ControlNet shows <50% structural consistency.

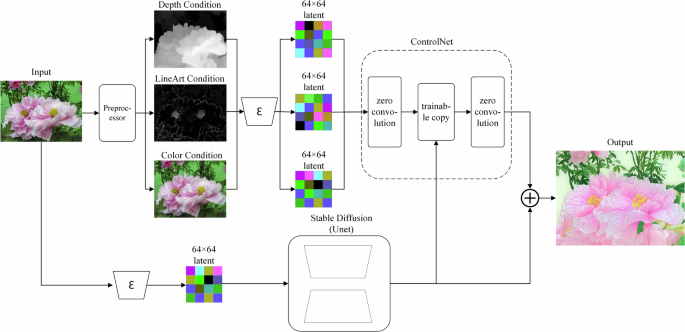

Since Cantonese embroidery is a form of realistic art, this study further adopted Depth, LineArt, and Color conditions to guide the model in incorporating the lighting, contour structure, and color characteristics of the original image during the denoising process. Each condition regulates the diffusion process in a complementary way. The Depth condition extracts geometric information from the original image to preserve spatial structure; in the depth map, brighter areas appear closer, while darker areas recede. The LineArt condition enforces alignment of generated edges with those of the input image, ensuring contour accuracy. The Color condition detects the color distribution of the original image and ensures that the generated output reflects a similar distribution. These conditions do not directly modify the Stable Diffusion weights. Instead, partial weights are copied from the original UNet encoder, and cross-layer feature fusion with the UNet backbone is achieved via zero-convolution layers. Each condition is trained separately before integration into the original Stable Diffusion. This mechanism ensures semantic consistency while enabling controllable transfer of pixel-level attributes. Incorporating these three conditions allows the model to pursue the unique texture characteristics of Cantonese embroidery while significantly improving the reproduction of geometric structures and color features from the original image, as depicted in Fig. 10.

The input image is processed through separate preprocessors to extract Depth, LineArt, and Color conditional information, which are then encoded as 64 × 64 feature maps to match the convolutional feature size. These conditions are incorporated through zero-initialized convolutional layers and fed into a trainable copy of the network. Finally, the network outputs are adjusted via zero convolutions and fused with the original network outputs to generate the target image.
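The role of the zero-initialized convolutions can be shown with a deliberately tiny sketch. Each "block" here is a scalar function on a short list of features, and a 1x1 convolution reduces to a scale and shift; these stand-ins, the feature values, and the post-training weights are assumptions for illustration only. The point is the wiring: the condition enters through one zero convolution, passes through a trainable copy, and is added back to the frozen output through a second zero convolution.

```python
def conv1x1(x, weight, bias=0.0):
    """A 1x1 convolution on a single-channel feature list: scale and shift."""
    return [weight * v + bias for v in x]

def frozen_block(x):
    """Stand-in for a frozen Stable Diffusion block (arbitrary fixed function)."""
    return [2.0 * v + 1.0 for v in x]

def trainable_copy(x):
    """Stand-in for the trainable copy; it starts from the same weights."""
    return [2.0 * v + 1.0 for v in x]

def controlled_block(x, condition, zero_in, zero_out):
    """Frozen output plus a condition-dependent residual gated by zero convolutions."""
    injected = [a + b for a, b in zip(x, conv1x1(condition, zero_in))]
    residual = conv1x1(trainable_copy(injected), zero_out)
    return [a + b for a, b in zip(frozen_block(x), residual)]

x = [0.5, -1.0, 2.0]           # latent features (illustrative)
condition = [1.0, 0.0, 1.0]    # encoded LineArt/Depth/Color features (illustrative)

at_init = controlled_block(x, condition, zero_in=0.0, zero_out=0.0)
after_training = controlled_block(x, condition, zero_in=0.3, zero_out=0.5)
```

With both zero convolutions at their initial value of zero, `at_init` equals the frozen block's output exactly, so adding the control branch does not disturb the pretrained model before training; once the weights move away from zero, the condition begins to steer the output.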

The three conditions—Depth, LineArt, and Color—do not employ fixed intensities. For semantic content with complex contour structures, the LineArt control intensity is typically set above 0.6 to ensure accurate reproduction of morphological details; for simpler contours, it is set below 0.6 to allow the model greater freedom to refine local details autonomously. For example, an intensity above 0.6 is applied when generating images with intricate leaf clusters to render the interlaced contours of overlapping leaves clearly. In contrast, a value below 0.6 is preferred for simple shapes such as a single banana—an intensity over 0.6 would excessively extract non-contour internal lines, restricting the model and compromising the natural rendering of internal details. Similarly, the depth control intensity increases above 0.6 in scenarios requiring clear spatial hierarchy but decreases below 0.6 when the three-dimensional structure is less critical. In contrast, the color control intensity is generally set to a relatively high range of 0.8–0.9, which not only extracts color information from the original reference image but also helps the model better understand the underlying semantic content—for example, in regions where flowers and leaves overlap, color cues effectively assist the model in distinguishing the boundaries between different semantic objects. These complementary conditions, when combined, achieve precise restoration of the original image information. Notably, the control intensity of ControlNet is generally not set to the maximum value of 1.0. This is because an intensity of 1.0 would excessively constrain the generation process, forcing the model to mechanically replicate the contours of condition maps (e.g., LineArt maps, depth maps) while sacrificing its ability to generate natural textures and reasonable details. Consequently, the outputs tend to appear rigid and unnatural and may even be affected by “ghosting artifacts” derived from the condition maps.
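The intensity heuristics above can be summarized as a small configuration helper. This is a hypothetical sketch: the function name, the two boolean flags, and the concrete values 0.75 and 0.45 are illustrative assumptions chosen to fall on either side of the 0.6 threshold; only the threshold, the 0.8–0.9 color range, and the advice to stay below 1.0 come from the text.

```python
from dataclasses import dataclass, asdict

MAX_STRENGTH = 1.0  # the text advises against using the maximum value

@dataclass(frozen=True)
class ControlStrengths:
    lineart: float
    depth: float
    color: float

def choose_strengths(complex_contours: bool, needs_spatial_hierarchy: bool) -> ControlStrengths:
    """Hypothetical helper: pick illustrative strengths around the 0.6 threshold."""
    lineart = 0.75 if complex_contours else 0.45        # assumed values, not from the paper
    depth = 0.75 if needs_spatial_hierarchy else 0.45   # assumed values, not from the paper
    color = 0.85                                        # inside the 0.8-0.9 range from the text
    strengths = ControlStrengths(lineart, depth, color)
    for name, value in asdict(strengths).items():
        if not 0.0 <= value < MAX_STRENGTH:
            raise ValueError(f"{name} strength {value} must lie in [0, 1)")
    return strengths

# Intricate leaf clusters: complex contours and a clear spatial hierarchy.
leaf_cluster = choose_strengths(complex_contours=True, needs_spatial_hierarchy=True)
# A single banana: simple contour, little depth structure.
single_banana = choose_strengths(complex_contours=False, needs_spatial_hierarchy=False)
```

In practice the values would be tuned per image; the helper only encodes the direction of each adjustment.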

In synergy with ControlNet guidance, the SAM semantic masks exert stage-specific effects during diffusion: (1) In the early diffusion stages with high noise levels, the masks primarily fulfill the role of region delimitation, ensuring that all denoising updates are confined within the target semantic regions and preventing the generation of off-target content that deviates from the input reference. (2) In the middle and late diffusion stages with low noise levels, the masks cooperate with the Depth, LineArt, and Color priors of ControlNet to refine the local details of the target regions, making the generated Cantonese embroidery patterns both spatially accurate and semantically consistent with the input reference image.
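One common way to confine denoising updates to a masked region is to blend, at every step, the model's proposal inside the mask with a re-noised copy of the reference latent outside it. The sketch below illustrates that idea under stated assumptions: the blending rule, the linear noise schedule, and the placeholder `denoise_step` are not taken from the paper, and the actual ComfyUI nodes may behave differently.

```python
import numpy as np

def masked_denoise(reference, mask, sigmas, denoise_step, rng):
    """Toy denoising loop whose updates only take effect where mask == 1."""
    latent = reference + sigmas[0] * rng.normal(size=reference.shape)
    for sigma_next in sigmas[1:]:
        proposal = denoise_step(latent)  # placeholder for the guided model update
        # Outside the mask, fall back to the reference at the next noise level.
        noised_reference = reference + sigma_next * rng.normal(size=reference.shape)
        latent = mask * proposal + (1.0 - mask) * noised_reference
    return latent

rng = np.random.default_rng(0)
reference = rng.normal(size=(4, 64, 64))     # 4-channel latent, 64 x 64
mask = np.zeros((1, 64, 64))
mask[:, 16:48, 16:48] = 1.0                  # target semantic region
sigmas = np.linspace(1.0, 0.0, 11)           # assumed schedule ending at zero noise

result = masked_denoise(reference, mask, sigmas, lambda z: 0.9 * z, rng)
outside = mask[0] == 0
```

Because the final noise level is zero, everything outside the mask ends up identical to the reference latent, while the masked region carries the result of the denoising updates.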

Integrated Cantonese embroidery artistic-style image generation system based on ComfyUI

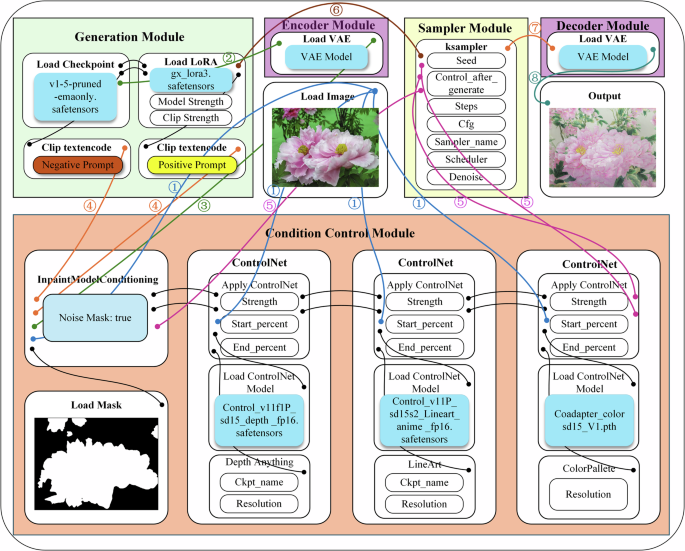

Figure 11 illustrates the image-to-image workflow for generating artistic Cantonese embroidery styles in this study, implemented on the ComfyUI platform. This platform uses a node-based visual programming interface, enabling the flexible combination of various generative components, such as Stable Diffusion. The workflow consists of five core modules: the generation module, encoder module, condition control module, sampler module, and decoder module. The parameter configurations for the relevant modules are detailed in Table 3. The modular design of ComfyUI allows each component to be independently debugged and optimized, thereby significantly improving development efficiency. While preserving the original image content, this workflow enables accurate simulation of the Cantonese embroidery artistic style.

The system constructs a complete production pipeline beginning with the generation module, which includes the base denoising model. The encoder module enables processing in latent space, while the sampler module performs precise image denoising under the guidance of the condition control module. Finally, the decoder module reconstructs the latent variables into normal images for output. Lines with numbers represent the transmission of data streams.

As shown in ① in the figure, the input image is passed to the mask conditioning module to match the mask and to three ControlNets for extracting depth, LineArt, and color information. The condition control module is designed to provide control conditions for the denoising process, as shown in ⑤ in the figure. The mask controls the semantic regions involved in denoising, whereas the ControlNets extract spatial structure, contours, and color information from the original image to guide denoising. These complementary conditions collectively achieve precise restoration of the original image’s information.

Because the denoising process of the diffusion model operates entirely within the latent space, the model must be able to locate the regions to be modified in that space. The VAE46 encoding module, illustrated in ② and ③ of the figure, therefore encodes the mask image at the latent-space scale used by the diffusion model, providing clear spatial guidance for the model.
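To make the change of scale concrete, the following sketch reduces a pixel-space mask to the latent grid by block averaging. It assumes the 8× spatial downsampling of the Stable Diffusion v1-5 VAE (512 × 512 pixels to 64 × 64 latents); the function name, the block-averaging rule, and the 0.5 threshold are illustrative assumptions and not the resizing method used by the workflow.

```python
import numpy as np

def mask_to_latent_grid(mask, factor=8, threshold=0.5):
    """Downscale a binary pixel mask to the latent grid by block averaging."""
    height, width = mask.shape
    if height % factor or width % factor:
        raise ValueError("mask size must be divisible by the downsampling factor")
    # Group pixels into factor x factor blocks and measure mask coverage per block.
    blocks = mask.reshape(height // factor, factor, width // factor, factor)
    coverage = blocks.mean(axis=(1, 3))
    # A latent cell is masked when at least `threshold` of its pixels are masked.
    return (coverage >= threshold).astype(np.float32)

pixel_mask = np.zeros((512, 512), dtype=np.float32)
pixel_mask[128:384, 128:384] = 1.0           # a square semantic region
latent_mask = mask_to_latent_grid(pixel_mask)
```

Each latent cell then corresponds to an 8 × 8 pixel block, so the square region above maps to rows and columns 16 to 47 of the 64 × 64 grid.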

The generation module comprises the base Stable Diffusion model, the LoRA fine-tuned model, and positive/negative CLIP text encoders. It is based on the pretrained Stable Diffusion v1-5 model (v1-5-pruned-emaonly.safetensors), with stylized generation achieved through the integration of a LoRA adapter (gx_lora3-000008) optimized for the Cantonese embroidery style. CLIP text encoding further guides image generation. Positive prompts include both semantic cues and core Cantonese embroidery feature descriptors such as “fine silk texture”, “silk sheen”, and “precise stitching”. Negative prompts such as “low quality”, “blurry”, and “text” are applied to avoid common defects. As shown in ④ in the figure, the prompt words are converted into feature vectors by the text encoder, which are then fused with other constraint signals in the condition control module, including the region information marked by the mask and the spatial features extracted by ControlNet. This produces a unified generation target signal, ensuring that the model not only adheres to the textual description but also meets precise requirements at the spatial and regional levels.

The K-Sampler operates based on the base model parameters provided by the generation module (as shown in ⑥) and the constraint signals output by the condition control module, performing an iterative denoising process in the latent space. It progressively refines the initial noise vector under the combined guidance of the base model (and its LoRA adaptation) and multisource conditions (text semantics, mask regions, and ControlNet spatial features), ultimately generating the target latent variable representation.

The latent variables denoised by the K-Sampler are decoded into a normal output image via the decoder, as illustrated in ⑦ and ⑧ of the figure. As a pair of inverse operations, encoding and decoding serve to confine all key denoising processes within the latent space, thereby enhancing computational efficiency while ensuring generation quality.

Results

This study comprehensively validated the performance of the proposed method through four aspects: quantitative evaluation, qualitative comparison, ablation experiments, and user assessment. Quantitative evaluation analyzed the quality of generated images using objective metrics, providing data support for the method’s effectiveness. Qualitative comparison visually demonstrated detailed differences in simulating Cantonese embroidery style across different models. Ablation experiments verified the contribution of each module in the generation pipeline. User assessment involved inviting Cantonese embroidery researchers to evaluate the generated results across multiple metrics, thereby providing professional validation of the method’s application value.

This study selected two categories of representative comparison models. The first category includes the VGG1923 and CycleGAN29 models, which are widely used in embroidery image generation24,25,26,27,28,29,30,31 and were chosen as baselines owing to their adaptability to small-scale datasets; other GAN-based improved methods32,33,34 are unsuitable for this task because they require large-scale training datasets. The second category comes from other fields of image style generation and includes the AdaIN21 model and the cross-image attention22 model: the former demonstrates flexible multi-style transfer and excels at integrating different style elements; the latter uses a cross-image attention mechanism to establish inter-image semantic correspondences, thereby achieving precise style transfer. Both VGG1923 and cross-image attention22 are style transfer models that require no task-specific training and can be applied directly to any input style image. During the evaluation phase, images from the Cantonese embroidery dataset were used directly as style reference inputs to these models.

The comparison models that require training (AdaIN21 and CycleGAN29) share a unified training data configuration that meets their requirements for data volume and input size. Each image in the self-constructed Cantonese embroidery dataset (494 images) was randomly cropped to generate 2 samples of 512 × 512 resolution, yielding 988 images in total. This set served as the style dataset for AdaIN and as the target domain data (trainB) for CycleGAN29. In addition, 988 ordinary images that are semantically consistent with the Cantonese embroidery dataset but do not contain the Cantonese embroidery style were selected as the content dataset for AdaIN21 and as the source domain data (trainA) for CycleGAN29.

Regarding training parameters, the number of training epochs was set to 100 for AdaIN21 and to 200 for CycleGAN29. The AdaIN value balances the model's typical convergence behavior in style transfer tasks against the size of the dataset. The CycleGAN value was chosen based on the model’s official convergence benchmark and the dataset scale, ensuring that the generator fully learns the mapping from natural images to the Cantonese embroidery style. All other training parameters follow the official default configurations.
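The cropping step used to build the 988-image training set can be sketched as follows. The function name and the dummy image size are assumptions; only the crop size (512 × 512), the two crops per image, and the 494-image dataset size come from the text.

```python
import numpy as np

def random_crops(image, crop_size=512, crops_per_image=2, rng=None):
    """Return `crops_per_image` random square crops from an (H, W, C) image."""
    rng = np.random.default_rng() if rng is None else rng
    height, width = image.shape[:2]
    if height < crop_size or width < crop_size:
        raise ValueError("image is smaller than the requested crop size")
    crops = []
    for _ in range(crops_per_image):
        top = rng.integers(0, height - crop_size + 1)
        left = rng.integers(0, width - crop_size + 1)
        crops.append(image[top:top + crop_size, left:left + crop_size])
    return crops

rng = np.random.default_rng(0)
# One placeholder image reused 494 times stands in for the private dataset.
placeholder = np.zeros((600, 700, 3), dtype=np.uint8)
dataset = [placeholder] * 494

style_set = [crop for image in dataset for crop in random_crops(image, rng=rng)]
```

With two crops per image, the 494 source images yield the 988 samples used as the AdaIN style set and the CycleGAN trainB set.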

Compared with frequently used models in the field and classic models from other domains, this study highlights the effectiveness of the proposed method in Cantonese embroidery simulation, particularly in texture restoration, preservation of the original image’s color and contour, and adaptation to small-scale datasets.

Quantitative analysis

Three quantitative metrics were employed: LPIPS47 (learned perceptual image patch similarity), which measures perceptual similarity in deep feature space via an AlexNet backbone; FID48 (Fréchet inception distance), which evaluates the distributional divergence between generated and real images on the basis of features from the Inception-v3 network; and PSNR49 (peak signal-to-noise ratio), which assesses pixel-level reconstruction fidelity. To ensure the statistical reliability of the comparisons, the 95% confidence interval (95% CI) is reported for each metric, reflecting the potential variability in performance estimation. Together, these metrics provide a comprehensive evaluation of Cantonese embroidery simulation quality. The experimental results show that the proposed method achieves the best performance on both LPIPS and FID, with scores of 0.244 (95% CI: 0.227–0.259) and 95.57 (95% CI: 84.79–108.91), respectively, significantly outperforming the other methods (Table 4). The relatively narrow confidence intervals observed for our method across all metrics indicate stable, consistent performance, further supporting the robustness of the proposed approach. Notably, the FID values across all models are inevitably close to or above 100, largely due to the small dataset size (494 images) and the significant domain gap between embroidery images and natural images in the ImageNet dataset, on which the Inception-v3 feature extractor is pretrained. This elevated baseline should be interpreted as reflecting the evaluation metric’s sensitivity to the data domain rather than as an indication of poor model performance.
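Of the three metrics, PSNR has a closed form, $\mathrm{PSNR} = 10 \log_{10}(\mathrm{MAX}^2 / \mathrm{MSE})$, and can be sketched directly. The text does not state how the 95% confidence intervals were obtained, so the percentile bootstrap below is an assumption, as are the function names and the synthetic per-image scores.

```python
import numpy as np

def psnr(reference, generated, max_value=255.0):
    """Peak signal-to-noise ratio in dB between two images of equal shape."""
    diff = reference.astype(np.float64) - generated.astype(np.float64)
    mse = np.mean(diff ** 2)
    if mse == 0:
        return float("inf")                  # identical images
    return 10.0 * np.log10(max_value ** 2 / mse)

def bootstrap_ci(values, n_resamples=2000, level=0.95, seed=0):
    """Mean and percentile-bootstrap confidence interval of per-image scores."""
    rng = np.random.default_rng(seed)
    values = np.asarray(values, dtype=np.float64)
    # Resample the scores with replacement and record each resample's mean.
    index = rng.integers(0, len(values), size=(n_resamples, len(values)))
    means = values[index].mean(axis=1)
    tail = (1.0 - level) / 2.0
    low, high = np.quantile(means, [tail, 1.0 - tail])
    return values.mean(), low, high

black = np.zeros((8, 8, 3), dtype=np.uint8)
white = np.full((8, 8, 3), 255, dtype=np.uint8)
worst_case = psnr(black, white)              # MSE equals MAX^2, so PSNR is 0 dB

# Synthetic per-image PSNR scores, for illustration only.
scores = np.random.default_rng(1).normal(loc=16.0, scale=1.5, size=50)
mean, low, high = bootstrap_ci(scores)
```

The same `bootstrap_ci` helper applies to per-image LPIPS scores; FID is a set-level statistic, so a bootstrap would have to resample images and recompute the distance for each resample.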

Furthermore, although the PSNR value of our method (16.38) is slightly lower than that of CycleGAN (17.71), the visual error maps in Fig. 12 (where warmer tones indicate greater pixel-level differences) reveal a critical distinction in the error distribution.

Warmer colors indicate larger differences. The heatmaps of our method show that the differences are mainly concentrated in core regions such as chicken bodies, bird bodies, and flower main parts, demonstrating that the model can effectively reconstruct textures for these subjects. In contrast, CycleGAN generates oversmoothed outputs, with scattered differences in its heatmaps, and fails to capture the core features of Cantonese embroidery.

Specifically, the differences between the images generated by our method and the real Cantonese embroidery images are concentrated in the main subject regions. Our method generates perceptually meaningful texture changes in these regions rather than merely replicating reference samples, thereby achieving more expressive stylistic features of Cantonese embroidery. In contrast, the errors produced by CycleGAN are relatively scattered and lack a core region. CycleGAN's lower pixel-level error stems from its failure to make meaningful modifications to the original images: it tends to produce over-smoothed, blurred outputs with insufficient detail variation, essentially a “blurred average” of real samples, and does not achieve meaningful style transfer. Because its pixel-level changes are smaller, it attains a higher PSNR despite poor stylistic alignment. Overall, our method strikes a favorable balance by generating visually compelling embroidery simulations while reasonably preserving the original content.
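An error map of the kind discussed here can be computed as a per-pixel absolute difference, and its concentration in the subject region can be summarized by the share of total error that falls inside a subject mask. The sketch below is illustrative: the function names, the normalization, and the synthetic images are assumptions, and the paper does not specify how its heatmaps were produced.

```python
import numpy as np

def error_map(reference, generated):
    """Per-pixel mean absolute difference across channels, scaled to [0, 1]."""
    diff = np.abs(reference.astype(np.float64) - generated.astype(np.float64))
    per_pixel = diff.mean(axis=-1)
    peak = per_pixel.max()
    return per_pixel / peak if peak > 0 else per_pixel

def error_share_in_region(err, region_mask):
    """Fraction of the total error that lies inside the given boolean mask."""
    total = err.sum()
    return float(err[region_mask].sum() / total) if total > 0 else 0.0

rng = np.random.default_rng(0)
reference = rng.integers(0, 256, size=(64, 64, 3)).astype(np.uint8)

# Synthetic output that differs from the reference only inside the subject region.
generated = reference.copy()
generated[16:48, 16:48] = 255 - generated[16:48, 16:48]

subject = np.zeros((64, 64), dtype=bool)
subject[16:48, 16:48] = True

err = error_map(reference, generated)
share = error_share_in_region(err, subject)
```

A share close to 1 corresponds to differences concentrated in the subject, whereas a share close to the subject's area fraction corresponds to scattered differences.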

Qualitative analysis

This study further compares the Cantonese embroidery images synthesized by the proposed model with those generated by VGG19, CycleGAN, AdaIN, and Cross-Image-Attention, as shown in Fig. 13. The experimental results demonstrate that the proposed model offers significant advantages in restoring Cantonese embroidery texture details, preserving the original image structure, and maintaining color consistency, which is highly consistent with the results of the quantitative analysis. Specifically, VGG19 achieves basic style transfer but lacks mechanisms for specialized texture feature processing, leading to randomly distributed embroidery textures and noticeable color distortion in its outputs. CycleGAN preserves input content features effectively but fails to capture the unique texture characteristics of Cantonese embroidery due to limited training data, further highlighting the inadequacy of most deep learning models for embroidery image generation under data-scarce conditions. The AdaIN model retains certain embroidery texture features but produces overly smooth results that do not fully reflect the intricacy of Cantonese embroidery craftsmanship. The Cross-Image-Attention model generates relatively realistic textures but excessively transfers semantic contours and colors from the style images, causing the generated outputs to lose original content features. Furthermore, the experiments reveal a common limitation: VGG19, AdaIN, and Cross-Image-Attention all require style reference images as inputs (shown in the lower left corner of each image), making the generated results highly dependent on style images with consistent semantic content. Consequently, these models can only produce images that closely resemble the semantics of the dataset, severely restricting creative flexibility and innovation. By contrast, the proposed method effectively overcomes these limitations and achieves notable progress in Cantonese embroidery image synthesis. 
Moreover, the textures produced by this method remain clear and vivid even in high-resolution image representations, as illustrated in Fig. 14.

The LoRA trained in this study produces image textures that most closely resemble authentic Cantonese embroidery textures; with semantic image segmentation and ControlNet’s multiple-condition guidance, it achieves the highest fidelity in preserving the original image’s structure and color—consistent with its strong performance in quantitative metrics (LPIPS: 0.244, FID: 95.57, PSNR: 16.38). Here, CycleGAN can be seen to have almost no texture variation, which aligns with its slightly higher PSNR (17.71) than our method (a result of its oversmoothed outputs).

The input image resolution is not fixed, while the output maintains the same resolution as the input. All shown images have resolutions above 1024 × 1024, with generated textures exhibiting clear, realistic details and even capturing the lustrous sheen characteristic of silk threads. Magnified views of texture details are provided for each image to highlight the fine-grained rendering quality.

Ablation experiments

To evaluate the contribution of each module to embroidery image generation, systematic ablation experiments were designed. These sequentially assessed the combined performance of the SD base model (Stable Diffusion) with the LoRA model, Color ControlNet, Depth ControlNet, LineArt ControlNet, and the SAM semantic segmentation module. Both quantitative metrics and visual comparisons were used to identify the enhancement effects of each component on texture fidelity, color consistency, and structural accuracy.

In terms of quantitative evaluation, the results in Table 5 show that the complete model (SD + LoRA + Color + LineArt + Depth + SAM) achieved the best overall performance across all ablation combinations, with LPIPS (0.244, 95% CI: 0.227–0.259), FID (95.57, 95% CI: 84.79–108.91), and PSNR (16.38, 95% CI: 15.87–16.85) significantly outperforming the others. In particular, models lacking the LineArt and Depth modules exhibited substantially worse FID scores compared to the complete model, underscoring the importance of contour guidance for structural fidelity. For example, the “SD + Color” model, despite having color guidance, still has a particularly high FID of 318.77 with a wide confidence interval (277.18–363.65) because of the absence of LineArt and Depth. In contrast, adding these structural guidance modules significantly narrows the confidence interval and improves the FID. The complete model demonstrated improvements across all three metrics relative to partial combinations, highlighting the synergistic effect of all modules working together.

In terms of visual comparison, the ablation results clearly demonstrated the functional role of each module (Fig. 15). The base SD model produced images devoid of embroidery texture features, generating only semantically relevant content (Fig. 15a). Introducing LoRA fine-tuning enabled the model to exhibit authentic embroidery texture details (Fig. 15b). The LineArt and Depth control modules preserved geometric consistency with the original while improving edge precision and spatial hierarchy (Fig. 15c). The Color control module ensured consistency in color categories with the original, effectively avoiding the color distortion observed in other methods (Fig. 15d). However, without SAM, the generation of semantically complex patterns often led to confusion: for example, petals were misinterpreted as leaves, leaves as petals, waterfalls as mountains, or branch tips as bird heads. Moreover, the absence of SAM led to color-mixing issues: for instance, when generating bananas on a white background, the background failed to remain pure white (Fig. 15e). Incorporating the SAM module resolved these issues by ensuring semantic rationality and region-specific color alignment. When all modules were combined (SD + LoRA + LineArt + Depth + Color + SAM), the model produced optimal results, maintaining semantic accuracy, geometric consistency, and faithful color categories, while simultaneously exhibiting the fine textures and silk-thread luster characteristic of Cantonese embroidery (Fig. 15f). A close-up view of the texture details in images generated by the proposed model reveals clear, elaborate embroidery textures (Fig. 15g). These visual comparisons aligned closely with the quantitative analysis, fully validating the contribution of each component. Furthermore, the model demonstrated robust generalization, achieving excellent simulation effects even for semantics not included in the training dataset, such as peaches and bananas.

a Output of the base Stable Diffusion (SD) model. b SD model fine-tuned with LoRA (SD+LoRA). c SD model guided by LineArt condition and Depth condition (SD+LineArt+Depth). d SD model guided by Color condition (SD+Color). e SD+LoRA integrated with LineArt, Depth and Color conditions (SD+LoRA+LineArt+Depth+Color). f Full model (SD+LoRA+LineArt+Depth+Color+SAM) incorporating all modules. g Local texture details of the result generated by the full model (from (f)). Compared with other ablation combinations, the SD + LoRA + LineArt + Depth + Color + SAM configuration yielded optimal results with significant improvements in quantitative metrics (LPIPS: 0.244, FID: 95.57, PSNR: 16.38); removing the LoRA module eliminated embroidery textures, LineArt and Depth ensured structural fidelity, Color enabled correct chromatic mapping, and the SAM achieved semantic region alignment.

User evaluation

To objectively evaluate subjective perceptual differences in image generation quality, a user survey experiment was conducted. The experimental materials included 10 groups of original image samples, with each group processed through both the proposed ComfyUI generation pipeline and four mainstream image style simulation methods21,22,23,29, resulting in 50 generated images (10 groups × 5 methods). The experiment invited 30 participants with research backgrounds in Cantonese embroidery (experts, teachers, and graduate students in the field) to evaluate the results using a 5-point Likert scale (1 = very poor, 5 = very good) across three metrics:

Craftsmanship reproduction: assessing semantic consistency with original images, geometric contour consistency, and the degree of match between stitch types and real craftsmanship.

Material texture: evaluating the realism of silk thread luster, fuzz details, and base fabric textures.

Color fidelity: measuring the consistency of color between input and output images, as well as the naturalness of gradient transitions.

The experiment used high-definition printed questionnaires, with each test group’s original image and five generated images arranged on the same page for intuitive side-by-side comparison, and no model names were disclosed to the evaluators.

A total of 4500 rating data points were collected through the questionnaire (50 generated images × 3 metrics × 30 participants). For each model and each metric, the mean score was calculated. To quantify the inter-rater reliability of the subjective evaluation, we computed Krippendorff’s Alpha50 using the ordinal weighting scheme. Tailored for discrete data, this method fully exploits the ordinal information embedded in the scores and exhibits high flexibility with respect to the number of raters and samples. Given the nature of the data in this study (i.e., large number of raters, relatively limited number of samples, and heterogeneity among samples), an Alpha value greater than 0.5 was considered indicative of good agreement. These results are reported in Table 6. Moreover, the score distributions for each model across different metrics are visualized as violin plots in Fig. 16. As seen in the violin plots, the scores for the proposed method are concentrated at the high end for all three metrics, and the high inter-rater consistency (Craftsmanship reproduction: 0.789, Material texture: 0.722, Color fidelity: 0.700) indicates consistent recognition of our method among evaluators. Meanwhile, CycleGAN’s Craftsmanship reproduction (mean = 1.37) and Material texture (mean = 1.29), along with Cross-Image-Attention’s Color fidelity (mean = 1.19), show clustered low scores. In particular, the extremely high inter-rater consistency of Cross-Image-Attention’s Color fidelity (Alpha = 0.804) demonstrates that evaluators uniformly deemed this model highly unsatisfactory in color fidelity. The relatively low consistency of Cross-Image-Attention’s Craftsmanship reproduction (Alpha = 0.463, mean = 2.69) and VGG19’s Material texture (Alpha = 0.488, mean = 3.42) indicates that evaluators did not perceive the performance of these models on the relevant metrics as stable.
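For reference, Krippendorff's Alpha with the ordinal metric can be computed from a coincidence matrix as $\alpha = 1 - D_o / D_e$, where $D_o$ is the observed and $D_e$ the expected disagreement. The sketch below is a minimal version for complete data (every rater scores every image); the function name and the toy ratings are assumptions, and it is not the code used in the study.

```python
import numpy as np

def krippendorff_alpha_ordinal(ratings, levels=(1, 2, 3, 4, 5)):
    """Krippendorff's alpha (ordinal metric) for complete data.

    ratings: array of shape (n_units, n_raters) with values drawn from `levels`.
    """
    ratings = np.asarray(ratings)
    n_raters = ratings.shape[1]
    n_levels = len(levels)
    level_index = {level: i for i, level in enumerate(levels)}

    # Coincidence matrix: pairable values within each unit, weighted by 1 / (m - 1).
    coincidence = np.zeros((n_levels, n_levels))
    for unit in ratings:
        counts = np.zeros(n_levels)
        for value in unit:
            counts[level_index[int(value)]] += 1
        pairs = np.outer(counts, counts) - np.diag(counts)
        coincidence += pairs / (n_raters - 1)

    totals = coincidence.sum(axis=1)         # how often each level was assigned
    n = totals.sum()

    # Ordinal distance: squared number of ratings lying between two levels.
    delta = np.zeros((n_levels, n_levels))
    for c in range(n_levels):
        for k in range(c + 1, n_levels):
            between = totals[c:k + 1].sum() - (totals[c] + totals[k]) / 2.0
            delta[c, k] = delta[k, c] = between ** 2

    observed = (coincidence * delta).sum() / n
    expected = (np.outer(totals, totals) * delta).sum() / (n * (n - 1))
    if expected == 0:
        raise ValueError("alpha is undefined when all ratings are identical")
    return 1.0 - observed / expected

# Toy examples: three images rated identically by three raters, and two raters
# who give opposite extreme scores to two images.
perfect_agreement = krippendorff_alpha_ordinal([[1, 1, 1], [3, 3, 3], [5, 5, 5]])
systematic_disagreement = krippendorff_alpha_ordinal([[1, 5], [5, 1]])
```

In the setting of this study, one such matrix would be built per model and metric, with the 10 test images as units and the 30 participants as raters.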

In each subplot, the width of the violin shape represents the density of scores (wider areas indicate more frequent scores), and the white circle denotes the mean value. Notably, the proposed method (Ours) results in concentrated high scores in all metrics, whereas the other models result in more dispersed or low-score-dominated distributions.

Overall, the ComfyUI generation pipeline significantly outperformed other methods across all evaluation metrics. In craftsmanship reproduction, the proposed method achieved a high score of 4.85, attributed to its superior embroidery texture extraction capability that enabled natural and smooth texture filling effects. Semantic consistency and contour preservation—key issues addressed in this study—received strong recognition from participants. In the material texture dimension, the method achieved a score of 4.68, with participants praising its accurate simulation of the characteristic silk luster of Cantonese embroidery and the preservation of clear, realistic texture details even in high-resolution images. In color fidelity, the method attained a score of 4.70, demonstrating strong alignment with input image colors and natural gradient transitions, while preserving the distinctive color schemes of traditional Cantonese embroidery and avoiding mechanical replication.

Discussion

This study addressed three core challenges in the digital preservation of Cantonese embroidery: (i) scarcity of training data, (ii) maintenance of structural and color consistency, and (iii) fine texture simulation. It proposed the first diffusion model-based solution for embroidery image generation. The method integrates LoRA lightweight fine-tuning, ControlNet conditional guidance, and the SAM segmentation network to construct an end-to-end Cantonese embroidery artistic style generation framework.

Experimental results demonstrated that, compared with other image generation models, the proposed method achieved clear advantages in multiple key metrics: LPIPS (0.244), FID (95.57), PSNR (16.38). In terms of visual quality, the method accurately simulated texture characteristics of diverse Cantonese embroidery stitching techniques and their natural arrangements in compositions, while preserving input structural features and color distributions. The framework also exhibited strong generalization ability across multiple semantic categories, offering a new technical pathway for the digital preservation and innovative application of traditional Cantonese embroidery craftsmanship. Moreover, the approach can be extended to other embroidery varieties.

Nonetheless, areas for improvement remain. First, this study is inherently constrained by its dataset. The distribution of 494 images across 45 subcategories yields low sample counts for some classes. This may result in the model’s poor simulation performance for classes with low sample counts and may lead to overfitting to specific samples. Second, this study was conducted exclusively on a private dataset. While it provides a high-quality collection, the singular source inevitably reflects the specific preferences and styles of the original collectors and creators. It may not comprehensively represent the full diversity of Cantonese embroidery. Therefore, a key future direction is to compile a more extensive and varied dataset, encompassing a wider range of styles, periods, and artisans, to train and evaluate a model that is more universally representative.

Beyond these fundamental limitations, technical aspects of the methodology also present clear avenues for enhancement. These include enhancing simulation accuracy for special stitching techniques, optimizing computational efficiency for large-scale patterns, and addressing the limitation of relying solely on basic diffusion models without exploring advanced variants such as Flux. Future research will focus on lightweight model design, improving the precision of complex stitch simulations, and investigating Flux diffusion models for more refined embroidery texture reproduction.

Data availability

The dataset utilized in this research was acquired via on-site photography by the authors. Owing to copyright considerations associated with the original works, the dataset is not amenable to public dissemination. Nevertheless, the images generated in the course of this study may be obtained from the corresponding author upon submission of a reasonable request.

Code availability

Some or all of the code generated or used during the study is available from the corresponding author if it is required for scientific research.

References

China Intangible Cultural Heritage Network. National List of Representative Projects of Intangible Cultural Heritage, https://www.ihchina.cn/project_details/13983/ (2006).

Gong, B. Golden Threads: Guangzhou Embroidery (Guangdong Education Press, 2010).

Tan, B., Xue, Y. & Qin, S. Review of digital technology methods for Chinese grotto heritage conservation. J. Graph. 46, 479–490 (2025).

Li, W., Lv, H., Liu, Y., Chen, S. & Shi, W. An investigating on the ritual elements influencing factor of decorative art: based on Guangdong’s ancestral hall architectural murals text mining. Herit. Sci. 11, 234 (2023).

Chen, X., McCool, M., Kitamoto, A. & Mann, S. Embroidery modeling and rendering. In Proc. Graphics Interface (GI). 131–139 (ACM, 2012).

Fan, X. & Hu, S. Simulation of pattern on computerized embroidery based on image processing. Appl. Mech. Mater. 268-270, 1675–1680 (2013).

Shen, Q., Cui, D., Sheng, Y. & Zhang, G. Illumination-preserving embroidery simulation for non-photorealistic rendering. Multimed. Tools Appl. 75, 9123–9141 (2016).

Yang, K., Sun, Z., Wang, S. & Chen, H. Image stylization for thread art via color quantization and sparse modeling. Comput. Graph. Forum 35, 1–12 (2016).

Yang, K. & Sun, Z. Paint with stitches: A style definition and image-based rendering method for random-needle embroidery. Multimed. Tools Appl. 77, 12259–12292 (2018).

Qian, W., Xu, D., Cao, J., Guan, Z. & Pu, Y. Aesthetic art simulation for embroidery style. Multimed. Tools Appl. 77, 995–1016 (2018).

Ma, C. & Sun, Z. StitchGeneration: Modeling and creation of random-needle embroidery based on Markov chain model. Multimed. Tools Appl. 78, 34065–34094 (2019).

Guan, X. et al. Automatic embroidery texture synthesis for garment design and online display. Visual Comput. 37, 1–18 (2021).

Ma, C. & Sun, Z. Multilayered stitch generating for random-needle embroidery. Visual Comput. 37, 2523–2537 (2021).

Zhu, Y., Zhao, Y., Zhang, L. & Liu, X. Computer aided DIY design of stylized generation of random needle embroidery. J. Comput. Aided Des. Comput. Graph. 34, 1–15 (2022).

Shen, J. et al. An anchor-free lightweight deep convolutional network for vehicle detection in aerial images. IEEE Trans. Intell. Transp. Syst. 23, 24330–24342 (2022).

Shen, J., Liu, N., Sun, H., Li, D. & Zhang, Y. An instrument indication acquisition algorithm based on lightweight deep convolutional neural network and hybrid attention fine-grained features. IEEE Trans. Instrum. Meas. 73, 1–16 (2024).

Shen, J. et al. An algorithm based on lightweight semantic features for ancient mural element object detection. Herit. Sci. 13, 70 (2025).

Shen, J. et al. Finger vein recognition algorithm based on lightweight deep convolutional neural network. IEEE Trans. Instrum. Meas. 71, 1–13 (2021).

Li, H. A., Wang, L. & Liu, J. A review of deep learning-based image style transfer research. Imag. Sci. J. 73, 504–526 (2024).

Liu, J. & Zhou, J. A review of style transfer methods based on deep learning. J. Artif. Intell. Sci. Eng. 1–19 (2024).

Huang, X. & Belongie, S. Arbitrary style transfer in real-time with adaptive instance normalization. In Proc. IEEE International Conference on Computer Vision. 1501–1510 (IEEE, 2017).

Zhu, M., He, X. & Wang, N. Cross-image attention for zero-shot appearance transfer. In Proc. IEEE/CVF International Conference on Computer Vision. 23052–23062 (IEEE, 2023).

Simonyan, K. & Zisserman, A. Very deep convolutional networks for large-scale image recognition. In Proc. 3rd International Conference on Learning Representations (ICLR 2015) 1–14 (2015).

Zheng, R., Qian, W. & Xu, D. Digital synthesis of embroidery styles based on convolutional neural networks. J. Zhejiang Univ. Sci. Ed. 46, 270–278 (2019).

Wu, Z., Wu, T. & Dun, X. Research on Chengcheng embroidery style transfer design method based on extensible semantics. J. Graph. 44, 1041–1049 (2023).

Ming, Z. & Hong, L. Research on style transfer algorithm of full embroidery image based on semantic segmentation with convolutional neural network. In Proc. Fourth International Conference on Computer Graphics, Image, and Virtualization (ICCGIV 2024). 132880T. https://doi.org/10.1117/12.3045212 (2024).

Goodfellow, I. J. et al. Generative adversarial nets. In Advances in Neural Information Processing Systems. 2672–2680 (2014).

Beg, Y. & Yu, X. Generating embroidery patterns using image-to-image translation (2020).

Zhu, J. Y., Park, T., Isola, P. & Efros, A. A. Unpaired image-to-image translation using cycle-consistent adversarial networks. In Proc. IEEE International Conference on Computer Vision (ICCV). 2223–2232 (IEEE, 2017).

Wang, L. & Guo, F. Embroidery style generation with machine learning. In Proc. International Conference on Algorithm, Imaging Processing, and Machine Vision (AIPMV 2023). 129691P. https://doi.org/10.1117/12.3014501 (SPIE, 2023).

Liu, C., Gu, J., Yao, L. & Zhang, Y. Research on embroidery style migration model based on texture cycle GAN. Int. J. Cloth. Sci. Technol. 37, 138–153 (2025).

Yang, C. et al. Unsupervised embroidery generation using embroidery channel attention. In Proc. 18th ACM SIGGRAPH International Conference on Virtual-Reality Continuum and its Applications in Industry (VRCAI'22) 1–8 (ACM, 2022).

Sha, S., Li, Y., Jiang, H. & Chen, Y. Transfer and simulation of Chinese embroidery art style based on ChipGAN-ViT model. J. Textile Eng. 1, 68–77 (2023).

Hu, X. et al. MSEmbGAN: multi-stitch embroidery synthesis via region-aware texture generation. IEEE Trans. Vis. Comput. Graph. 31, 5334–5347 (2024).

Huang, Y. The last “Flower Master”: interview with Xu Chiguang (Representative Inheritor of Cantonese Embroidery, 2017).

Sohl-Dickstein, J., Weiss, E., Maheswaranathan, N. & Ganguli, S. Deep unsupervised learning using nonequilibrium thermodynamics. In Proc. 32nd International Conference on Machine Learning (ICML) 2256–2265 (2015).

Hu, E. J. et al. LoRA: low-rank adaptation of large language models. In Proc. International Conference on Learning Representations (ICLR) 3 (2022).

Zhang, L., Rao, A. & Agrawala, M. Adding conditional control to text-to-image diffusion models. Comput. Vis. Pattern Recognit. https://doi.org/10.48550/arXiv.2302.05543 (2023).

Kirillov, A. et al. Segment anything. In Proc. IEEE/CVF International Conference on Computer Vision (ICCV). 4015–4026 (IEEE, 2023).

ComfyUI. GitHub. https://github.com/comfyanonymous/ComfyUI (2025).

Guangxiu. Guangzhou Embroidery Craft Factory Co., Ltd. http://www.gzxpc.com/ (2025).

Guangzhou Municipal Bureau of Culture and Tourism. List of Representative Inheritors of the Seventh Batch of Municipal Intangible Cultural Heritage Representative Projects in Guangzhou City. https://wglj.gz.gov.cn/zwgk/shgysyjs/content/post_6458335.html (2020).

Afifi, M., Derpanis, K. G., Ommer, B. & Brown, M. S. Learning multi-scale photo exposure correction. In Proc. IEEE/CVF Conference on Computer Vision and Pattern Recognition. 9157–9167 (IEEE, 2021).

WD14-Tagger. GitHub. https://github.com/pythongosssss/ComfyUI-WD14-Tagger (2025).

SD-Trainer. GitHub. https://github.com/Akegarasu/lora-scripts (2025).

Kingma, D. P. & Welling, M. Auto-encoding variational Bayes (2013).

Zhang, R., Isola, P., Efros, A. A., Shechtman, E. & Wang, O. The unreasonable effectiveness of deep features as a perceptual metric. In Proc. IEEE Conference on Computer Vision and Pattern Recognition (CVPR) 586–595 (IEEE, 2018).

Heusel, M., Ramsauer, H., Unterthiner, T., Nessler, B. & Hochreiter, S. GANs trained by a two-time-scale update rule converge to a local Nash equilibrium. In Proc. 31st International Conference on Neural Information Processing Systems. 6629–6640 (Curran Associates Inc., 2017).

Wang, Z., Simoncelli, E. P. & Bovik, A. C. Multiscale structural similarity for image quality assessment. IEEE Trans. Image Process. 13, 600–612 (2004).

Krippendorff, K. Computing Krippendorff’s Alpha Reliability. Annenberg School for Communication Departmental Papers 1–9 (ASC, 2011).

Acknowledgements

This study was supported by the Key Laboratory of Philosophy and Social Sciences in Guangdong Province of Maritime Silk Road of Guangzhou University (GD22TWCXGC15); the 2023 Guangdong Province Higher Education Institutions Characteristic Innovation Project “Lingnan Culture and Art Digital Resource Sharing Platform” (2023WTSCX068); the Guangzhou University projects “Digital Revitalization and Intelligent Dissemination Research of Lingnan Cultural Arts” (PT252022040) and “Cantonese Embroidery Intangible Cultural Heritage Digital Resource Sharing Platform” (Liwan Research Institute, Guangzhou University, LWYI202411); the Guangzhou Academician and Expert Workstation (No. 2024-D003); and the Basic Innovation Project for Full-time Postgraduate Students of Guangzhou University “Simulation Research on the Artistic Style of Cantonese Embroidery” (JCCX2024-068).

Author information

Contributions

Y.R., M.L., and S.C. defined the research objectives, designed the conceptual framework, planned and executed the research activities, and acquired the funding. S.C. designed the research methodology and experiments. S.C., Y.X., and R.W. verified the results, created visualizations, and drafted the manuscript. S.C. and B.H. prepared the dataset. R.W. and S.C. reviewed and edited the manuscript. All authors read and approved the final manuscript.

Ethics declarations

Competing interests

The authors declare no competing interests.

Additional information

Publisher’s note: Springer Nature remains neutral with regard to jurisdictional claims in published maps and institutional affiliations.

Rights and permissions

Open Access This article is licensed under a Creative Commons Attribution-NonCommercial-NoDerivatives 4.0 International License, which permits any non-commercial use, sharing, distribution and reproduction in any medium or format, as long as you give appropriate credit to the original author(s) and the source, provide a link to the Creative Commons licence, and indicate if you modified the licensed material. You do not have permission under this licence to share adapted material derived from this article or parts of it. The images or other third party material in this article are included in the article’s Creative Commons licence, unless indicated otherwise in a credit line to the material. If material is not included in the article’s Creative Commons licence and your intended use is not permitted by statutory regulation or exceeds the permitted use, you will need to obtain permission directly from the copyright holder. To view a copy of this licence, visit http://creativecommons.org/licenses/by-nc-nd/4.0/.

About this article

Cite this article

Rao, Y., Chen, S., Xuan, Y. et al. Diffusion model-based image generation method for Cantonese embroidery artistic styles. npj Herit. Sci. 14, 79 (2026). https://doi.org/10.1038/s40494-026-02342-9
