Abstract

Ancient bamboo/wooden slips suffer severe character degradation after millennia of burial, requiring infrared imaging for text identification. This work proposes a multimodal coarse-to-fine registration method to fuse visible and infrared images while preserving texture/color and restoring degraded inscriptions. The approach comprises: (1) Coarse registration using edge-feature-priority strategy, leveraging stable slip contours for global alignment via downsampling; (2) Fine registration with improved ICP algorithm incorporating weighted features and dynamic weight adjustment, transitioning from edge-dominance to corner-dominance for precise local registration; (3) Multi-stage hybrid optimization combining gradient methods with multi-restart simulated annealing, maximizing mutual information for optimal transformation matrices. The method addresses weak texture, modal differences, and severe character degradation by selecting appropriate registration strategies and feature weights at different stages. Experiments demonstrate superior performance over existing methods in visual quality and quantitative metrics. Difference fusion based on registered multimodal images achieves effective degraded character restoration, significantly improving inscription readability.

Introduction

Bamboo and wooden slips, as important writing carriers in ancient China1,2, were extensively used from the Qin-Han to Wei-Jin periods. Primarily made from wooden or bamboo strips with characters written in ink using brushes, they served multiple domains including government decrees, judicial matters, documentation, medicine, and daily life, containing exceptionally rich content. Since the 20th century, large-scale archaeological excavations at Dunhuang, Juyan, Zhangjiashan, Liye, Changsha Zoumalou, and other sites have unearthed numerous precious artifacts, providing unprecedented primary materials of irreplaceable historiographical value and cultural significance for the study of ancient Chinese history. The organization and research of bamboo slips have not only advanced disciplines such as paleography, history, and legal history, but have also greatly enriched the documentary system of Chinese civilization. Through in-depth study of bamboo slip documents, scholars can reconstruct the authentic appearance of ancient society and correct and supplement the deficiencies of transmitted texts.

However, bamboo slip research faces numerous challenges and difficulties. Character degradation represents one of the core issues affecting bamboo slip studies. Due to erosion over lengthy historical periods and the complexity of burial environments, numerous excavated bamboo slips exhibit varying degrees of character degradation, ink blurring, surface weathering, folding, and damage3. Particularly in visible images, the inscriptions on bamboo slips often exhibit varying degrees of degradation due to background interference, wood aging, and physical damage or fracture4, which severely impairs the interpretation and scholarly study of bamboo slip documents.

In recent years, Gong et al.5 employed hyperspectral imaging technology to analyze genealogy seals in the Yibin Museum collection, revealing significant differences in pigment reflectance properties across visible and near-infrared bands. Guo et al.6 extracted pigment information from ancient paintings using imaging spectroscopy technology, distinguishing background pigments from overlay information, thereby facilitating the authentication and restoration of ancient paintings. Sun et al.7 effectively assessed the deterioration degree of Dunhuang murals using hyperspectral imaging. Zhou et al.8 utilized hyperspectral imaging to extract blurred seal information from calligraphy and paintings, contributing to further research on these cultural artifacts. Hou et al.9 achieved enhancement, extraction, and recognition of faded text in ancient calligraphy and paintings using hyperspectral technology. Deng et al.10 addressed the challenges of accuracy and efficiency in mold detection for paper-based cultural artifacts by proposing a triple-path multimodal feature fusion network based on hyperspectral imaging, which integrates RGB spatial information, spectral features, and joint spectral-spatial features to achieve precise mold identification. Cucci et al.11 addressed the recognition challenges posed by degraded ancient Egyptian hieroglyphs by proposing a method that combines visible-near infrared hyperspectral imaging with convolutional neural networks, providing a novel technical pathway for digital documentation and text recognition in cultural heritage. Mezina et al.12 proposed a deep learning approach for anomaly detection in X-ray images of paintings. The research team constructed a dataset using high-resolution X-ray images of the Ghent Altarpiece, and based on this dataset, developed a novel neural network architecture that integrates the Discriminatively Trained Reconstruction Anomaly Embedding Model (DRAEM) with Nested U-Net for anomaly detection.
Vila et al.13 successfully revealed ancient Greek text concealed on the back of papyrus using shortwave-infrared hyperspectral imaging, substantially improving the contrast between writing and papyrus substrate. Mocella et al.14 applied X-ray phase-contrast tomography for virtual unwrapping and deciphering of still-rolled Herculaneum papyri. Parsons et al.15 recovered text invisible to the naked eye from unrolled, extremely fragile ancient Herculaneum papyrus scrolls using non-destructive imaging and machine learning techniques. Lv et al.16 addressed the complex artistic styles and irregular damage patterns in Kizil Grotto murals by proposing a multimodal restoration method based on diffusion models. Zhou et al.17 addressed the challenges of scarce annotated data and difficulty in distinguishing visually similar characters in Oracle Bone Script recognition by proposing the OracleNet model, which effectively improves recognition accuracy and robustness in cross-domain scenarios. The application of multimodal imaging technology in cultural heritage preservation and research has provided new technical approaches for addressing bamboo slip character degradation. In bamboo slip research in particular, infrared images often reveal ink traces that are difficult to identify under visible light, while visible images provide material texture and structural information. For bamboo slips (jiandu), spectral technology enables faded text, originally invisible under visible light, to reappear in the infrared band. Zhang et al.18,19,20 employed infrared imaging technology to make faded text on bamboo slips reappear in infrared images and compiled this information into publications, thereby advancing bamboo slip research.
Cao et al.21 proposed a character restoration method for Qin and Han bamboo slips based on improved conditional generative adversarial networks (cGAN), and constructed a dataset comprising 500 pairs of original and ground truth images of Qin-Han bamboo slip characters. In summary, visible and infrared images are strongly complementary at the information level: the former provides structural and textural details, while the latter reveals concealed or degraded information. This cross-band complementarity constitutes an important foundation for artifact visualization and restoration, and serves as a critical basis for the registration of infrared and visible images of bamboo slips, as illustrated in Fig. 1. However, because the two modalities exist as separate, unaligned images, experts conducting character recognition, fragment rejoining, and other studies often need to repeatedly compare visible and infrared images, which substantially increases workload and reduces research efficiency.

Six pairs of bamboo slip images with visible (right) and infrared (left) modalities. The leftmost two pairs show clear characters in both modalities, indicating good ink preservation. The rightmost four pairs demonstrate severely degraded visible images while near-infrared imaging reveals clear characters. Geometric misalignments between image pairs necessitate accurate registration before fusion processing.

The development of multimodal bamboo slip image registration technology holds significant positive implications for bamboo slip research. First, due to differences in imaging principles and equipment parameters, substantial geometric misalignment and photometric differences exist between visible and infrared images22,23, making direct fusion for image enhancement often unable to achieve ideal results. Therefore, proposing high-precision multimodal bamboo slip image registration algorithms to achieve precise alignment between visible and infrared images has become a critical technological component for improving bamboo slip research quality. Precise multimodal image registration can align inter-modal differences, laying the foundation for subsequent image fusion and character enhancement processing. Through image fusion methods that combine complementary information from visible and infrared images of bamboo slips, not only can degraded character information be enhanced while preserving texture information24, significantly improving the recognition of degraded text, but this also provides clearer and more accurate image evidence for subsequent work such as bamboo slip transcription and fragment rejoining. High-quality digitized bamboo slip images not only support remote access and online research, breaking geographical limitations and promoting international cooperation and exchange, but the in-depth development of bamboo slip digitization work also holds important strategic significance for inheriting and promoting excellent traditional Chinese culture. Through establishing comprehensive bamboo slip digital resource libraries25, not only can convenient retrieval and analysis tools be provided for researchers, but the development of interdisciplinary research can also be promoted, driving the cross-disciplinary integration of history, archeology, linguistics, computer science, and other fields, injecting new vitality into bamboo slip research. 
The field of image registration has witnessed significant development over the past decades, with applications extending from medical imaging to cultural heritage preservation. Traditional image registration methods can be broadly categorized into intensity-based and feature-based approaches. The method proposed by Viola and Wells26 directly optimizes similarity metrics between image intensities. Mutual information methods27,28,29 and Fourier-based methods30,31 aim to match images by identifying similar pixel intensities in overlapping regions, and have been widely adopted as multimodal image registration approaches. Scale-Invariant Feature Transform (SIFT)32,33,34 and Speeded Up Robust Features (SURF)35 have been extensively employed for natural image registration.

The Iterative Closest Point (ICP) algorithm was proposed by Besl and McKay36 for rigid point set registration, achieving progressive point set alignment through alternating nearest point matching and least-squares transformation estimation. Chen and Medioni37 previously proposed the multi-view point set alignment concept, which also laid the groundwork for ICP development. Subsequently, Zhang38 extended ICP to accommodate free-form curve and surface registration. These early works established the classical ICP framework based on geometric features (point coordinate distances). As application scenarios expanded, the robustness and convergence of ICP have been continuously improved. Fitzgibbon39 proposed the LM-ICP algorithm incorporating nonlinear optimization and robust kernel functions in registration, thereby enhancing robustness and convergence speed in 2D and 3D point set registration. Segal et al.40 further proposed Generalized-ICP, introducing point-to-plane error and local covariance structure into the optimization objective function, making the registration process statistically more rigorous. Yang et al.41 proposed the Go-ICP algorithm, guaranteeing global optimality under the L2 error metric. Bouaziz et al.42 proposed the Sparse Iterative Closest Point (Sparse ICP) algorithm, which introduces sparse regularization into the traditional ICP framework by integrating sparse constraints directly into the optimization model, enabling the algorithm to maintain high registration accuracy and convergence stability even in the presence of noise, occlusion, or partial overlap. Guo et al.43 proposed the Adaptive Weighted Robust Iterative Closest Point (AW-RICP) algorithm, enhancing ICP robustness in scenarios with noise, outliers, and partial overlap through adaptive sparse neighbor selection and weight learning mechanisms. 
Zhou et al.44 proposed the Fast Global Registration (FGR) algorithm, which transforms the registration problem into an optimization problem with robust loss functions and utilizes Black-Rangarajan duality for rapid solution, thereby achieving fast registration without requiring initial alignment. However, the FGR algorithm depends on feature quality.
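The alternation at the core of the classical ICP framework described above (nearest point matching followed by least-squares transformation estimation) can be illustrated with a minimal sketch. It assumes NumPy, a rigid 2D or 3D transform, brute-force nearest neighbour search, and an SVD-based closed-form solver, which is one common choice; the function names `best_rigid_transform` and `icp` are hypothetical and not taken from any cited work.

```python
import numpy as np

def best_rigid_transform(src, dst):
    """Least-squares R, t such that dst ~ src @ R.T + t (SVD closed form)."""
    cs, cd = src.mean(axis=0), dst.mean(axis=0)
    H = (src - cs).T @ (dst - cd)              # cross-covariance of centred sets
    U, _, Vt = np.linalg.svd(H)
    D = np.eye(H.shape[0])
    if np.linalg.det(Vt.T @ U.T) < 0:          # guard against reflections
        D[-1, -1] = -1.0
    R = Vt.T @ D @ U.T
    t = cd - R @ cs
    return R, t

def icp(src, dst, iterations=20, tol=1e-10):
    """Classical point-to-point ICP; returns the accumulated R and t."""
    src = np.asarray(src, dtype=np.float64)
    dst = np.asarray(dst, dtype=np.float64)
    dim = src.shape[1]
    R_total, t_total = np.eye(dim), np.zeros(dim)
    cur = src.copy()
    prev_err = np.inf
    for _ in range(iterations):
        # Step 1: nearest point matching (brute force for clarity).
        d = np.linalg.norm(cur[:, None, :] - dst[None, :, :], axis=2)
        nearest = d.argmin(axis=1)
        err = float(d[np.arange(len(cur)), nearest].mean())
        if abs(prev_err - err) < tol:          # stop when the error stalls
            break
        prev_err = err
        # Step 2: least-squares transform for the current correspondences.
        R, t = best_rigid_transform(cur, dst[nearest])
        cur = cur @ R.T + t
        # Compose with the transform accumulated so far.
        R_total, t_total = R @ R_total, R @ t_total + t
    return R_total, t_total
```

Because every point contributes equally and only coordinates are used, this baseline has no mechanism for favouring reliable features, which is the limitation the weighted variants discussed above try to address.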

The advent of deep learning has transformed the trajectory of image registration development, particularly in medical imaging applications. VoxelMorph45 introduced an unsupervised learning framework that employs convolutional neural networks to directly predict deformation fields. This approach significantly reduces computational time during inference while maintaining registration accuracy comparable to traditional iterative methods. Arar et al.46 proposed an unsupervised multimodal image registration method based on geometry-preserving image-to-image translation, addressing the modal difference challenges that traditional methods struggle to handle by transforming multimodal registration into single-modal registration.

The application of transformer architecture47 in image registration tasks has also achieved promising results. Vision Transformer (ViT)48 first demonstrated that transformers could be successfully applied to vision tasks, establishing the foundation for subsequent visual transformer development. TransMorph49 further demonstrated that visual transformers can capture long-range spatial dependencies more effectively than CNNs, thereby improving registration performance. However, these transformer-based methods require substantial computational resources and large training datasets, limiting their applicability in resource-constrained scenarios.

This work focuses on high-precision registration between visible and infrared images and degraded character enhancement. The objective is to propose a robust and broadly applicable registration and character enhancement framework oriented toward degraded text enhancement, enabling accurate alignment of infrared and visible images of bamboo slips and achieving character restoration based on the registration results. This work provides effective technical support for deep information mining and digital preservation of bamboo slip images, filling a technological gap in the field of cultural heritage conservation. The main contributions are as follows:

- A coarse registration module for multimodal bamboo slip images is proposed, utilizing the relatively stable characteristics of edge information across different modalities and under downsampling conditions to achieve global registration between multimodal bamboo slip images.
- A fine registration module for globally registered multimodal bamboo slip images is proposed. The Iterative Closest Point (ICP) algorithm is improved by replacing its sole reliance on raw points with edge and corner features and by dynamically adjusting the weights of these features during iteration, which addresses the challenges posed by weak texture in bamboo slip image registration.
- A registration optimization module based on a hybrid optimization strategy combining gradient optimization with multi-restart simulated annealing is proposed, fine-tuning registration results through mutual information maximization.

The paper is structured as follows. The Methods section details the registration and character enhancement algorithms. The Results section presents experimental validation of the registration method and character enhancement outcomes. The Discussion section summarizes the main contributions and outlines future research directions.

Methods

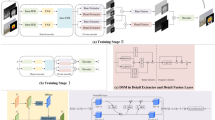

This work proposes a multi-level registration framework for weak-texture bamboo slip images, which organically integrates edge features with SIFT corner features and achieves high-precision registration through a coarse-to-fine hierarchical strategy, as shown in Fig. 2. The core concept of this method is to combine the characteristics of bamboo slip images, implementing the registration process hierarchically, and adaptively adjusting the weights of different features at various stages. The entire registration process is divided into three stages: coarse registration, fine registration, and mutual information optimization. Multi-feature fusion and dynamic weight adjustment strategies are incorporated into the first two stages.

The framework comprises Registration and Fusion modules. The Registration Module adopts a coarse-to-fine strategy: CESIFT-RANSAC extracts and matches edge and corner features for coarse alignment, followed by DWICP for precise pixel-level registration through dynamic feature weight transformation. The Fusion Module extracts handwriting and applies enhancement to generate improved output. See Sections “Multi-Level Registration Algorithm Implementation” and “Character Enhancement and Visualization Optimization” for details. CESIFT-RANSAC: coarse registration algorithm; DWICP: fine registration algorithm.

Multi-feature detection and weighted matching

Multimodal bamboo slip images exhibit significant texture differences, making traditional single features ineffective in addressing registration challenges. This section details the multi-feature extraction and matching strategies designed for bamboo slip characteristics, including adaptive multi-intensity SIFT feature extraction, dedicated bamboo slip edge feature extraction, and feature matching mechanisms based on different weights. Input images are first uniformly converted to grayscale to eliminate the influence of color component differences on feature extraction results. Subsequently, three methods are employed for structural enhancement of the images: First, bilateral filtering is used to smooth noise while preserving edges. Compared to Gaussian filtering, bilateral filtering retains more gradient information at edges, facilitating subsequent edge and corner point identification. Second, histogram equalization is applied to enhance overall image contrast, stretching the dynamic range of dark regions to highlight weak boundaries in low-texture areas and improve the stability of feature point response values. Finally, Laplacian enhancement is used for high-pass processing of the image to emphasize edge contours. While these results are not directly used for registration, they provide auxiliary support for subsequent “texture intensity assessment”.
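The preprocessing steps above can be sketched in a few lines. This is a minimal illustration assuming NumPy and 8-bit input; it covers grayscale conversion, global histogram equalization, and a 4-neighbour Laplacian high-pass. Bilateral filtering is omitted because it is not compact in plain NumPy (a library routine such as OpenCV's `cv2.bilateralFilter` would normally be used). The function names and the choice of a global, rather than local, equalization are assumptions, not details given in the text.

```python
import numpy as np

def to_gray(rgb):
    """Luminance-weighted grayscale conversion (BT.601 weights, assumed)."""
    rgb = np.asarray(rgb, dtype=np.float64)
    return rgb[..., 0] * 0.299 + rgb[..., 1] * 0.587 + rgb[..., 2] * 0.114

def equalize_hist(gray):
    """Global histogram equalization for an 8-bit grayscale image."""
    g = np.clip(np.rint(gray), 0, 255).astype(np.uint8)
    hist = np.bincount(g.ravel(), minlength=256)
    cdf = np.cumsum(hist)
    cdf_min = cdf[np.nonzero(cdf)[0][0]]        # first occupied bin
    denom = max(int(cdf[-1] - cdf_min), 1)      # avoid division by zero
    lut = np.clip(np.rint((cdf - cdf_min) / denom * 255.0), 0, 255)
    return lut.astype(np.uint8)[g]              # apply the lookup table

def laplacian(gray):
    """4-neighbour Laplacian response with replicated borders."""
    p = np.pad(np.asarray(gray, dtype=np.float64), 1, mode="edge")
    return (p[:-2, 1:-1] + p[2:, 1:-1] + p[1:-1, :-2] + p[1:-1, 2:]
            - 4.0 * p[1:-1, 1:-1])
```

Equalization stretches a narrow intensity range over the full 0 to 255 scale, which is why faint boundaries in low-texture regions become easier to detect; the Laplacian output is what a later texture intensity assessment would consume.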

Bamboo slip images typically exhibit weak texture and low contrast, posing challenges for traditional SIFT feature extraction. To address this issue and improve structural clarity and feature stability, this work designs an adaptive dual-intensity SIFT feature extraction strategy suited to the special properties of bamboo slip images. We perform texture intensity analysis on images using the Laplacian operator, calculating an overall texture intensity value as the basis for adaptive parameter adjustment. In regions with weaker and smoother textures, the Laplacian response is smaller and its variance is lower, while in regions with stronger and more complex textures, the Laplacian response is larger and its variance is higher. Applied to bamboo slip images, slips with mild character degradation display clearer characters in visible images, with rich details and edges, representing strong texture; slips with more severe character degradation show increasingly faint or even vanished characters in visible images, revealing only the material background with large smooth areas lacking obvious edges and details, representing weak texture. Based on the texture intensity result, the algorithm distinguishes between weak-texture and strong-texture images. For bamboo slips with mild character degradation, visible images contain relatively clear text, and these characters serve as excellent feature point sources: character strokes, turns, and intersections are all detected as corner points, specifically high-intensity corner points. In such cases, we increase the corner detection threshold so that registration relies as far as possible on high-intensity corner points, which typically achieve higher matching accuracy than medium-intensity corner points.
For weak-texture images, we employ more relaxed parameters to extract medium-intensity corner points. After extracting feature points and descriptors separately, the results are merged to form a comprehensive corner point set. This dual-intensity strategy can simultaneously capture prominent features and medium-intensity features in images, substantially improving feature extraction capability in weak-texture regions. To ensure uniform spatial distribution of feature points while avoiding redundancy, two optimization strategies are applied in the algorithm: (1) Non-maximum suppression: Only the feature points with the strongest response values are retained within local regions, effectively reducing redundancy of nearby feature points. Each feature point is examined, and if a feature point with a higher response value exists within the region, the current feature point is suppressed; (2) Local uniformity constraint: The image is divided into grids, with a maximum of 15 strongest-response feature points selected within each grid cell, ensuring uniform distribution of feature points throughout the image and avoiding clustering in locally texture-rich regions.
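Two pieces of this strategy lend themselves to a short sketch: the Laplacian-variance texture measure and the per-cell cap of 15 keypoints. The example below assumes NumPy, keypoints given as `(x, y)` coordinates with a response value each, and uses a hypothetical weak-texture threshold and grid cell size, since the text does not state either value. Non-maximum suppression is not shown.

```python
import numpy as np

def texture_intensity(gray):
    """Variance of the 4-neighbour Laplacian response of a grayscale image."""
    p = np.pad(np.asarray(gray, dtype=np.float64), 1, mode="edge")
    lap = (p[:-2, 1:-1] + p[2:, 1:-1] + p[1:-1, :-2] + p[1:-1, 2:]
           - 4.0 * p[1:-1, 1:-1])
    return float(lap.var())

def is_weak_texture(gray, threshold=100.0):
    """threshold is a hypothetical cut-off; it would need tuning on real data."""
    return texture_intensity(gray) < threshold

def grid_limit(points, responses, image_shape, cell=64, max_per_cell=15):
    """Keep at most max_per_cell strongest keypoints in each grid cell.

    points: (N, 2) array of (x, y); returns the sorted indices that survive.
    The cell size of 64 pixels is an assumption.
    """
    points = np.asarray(points, dtype=np.float64)
    responses = np.asarray(responses, dtype=np.float64)
    cols = int(np.ceil(image_shape[1] / cell))
    cell_id = ((points[:, 1] // cell).astype(int) * cols
               + (points[:, 0] // cell).astype(int))
    keep = []
    for cid in np.unique(cell_id):
        idx = np.nonzero(cell_id == cid)[0]
        strongest = idx[np.argsort(-responses[idx])][:max_per_cell]
        keep.extend(strongest.tolist())
    return sorted(keep)
```

In a full pipeline, `is_weak_texture` would select between the strict and relaxed detector settings, and `grid_limit` would run on the merged keypoint set so that text-dense regions cannot monopolize the matches.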

Bamboo slip edges are shared, stable features across modalities. In the coarse registration stage in particular, edge point matching accuracy improves further after downsampling, so edges play the primary role. This work designs an edge feature extraction method specifically tailored to bamboo slip characteristics; the extracted edge contours are shown in Fig. 3. The method suppresses interference from text details and bamboo slip material texture for two reasons. First, infrared images reveal more characters than visible images, so edges extracted from character regions differ significantly between the two modalities and would reduce edge matching accuracy; suppressing them highlights the main slip contours, which vary little across modalities. Second, visible images contain rich wood texture, whereas infrared images contain almost none. This follows from the imaging characteristics of the near-infrared band: near-infrared light penetrates the texture layer of the wood surface more strongly, reducing the influence of surface structure on imaging, and the chemical components of wood absorb relatively uniformly in this band, so texture-induced differences in chemical composition are insufficient to produce strong contrast. Without processing, wood texture in visible images would generate numerous false edge responses with no counterpart in infrared images, reducing registration reliability. Therefore, we first apply Gaussian blur to the images, using a 5 × 5 kernel to reduce character detail interference while preserving the main structural contours.
Subsequently, image gradients are calculated through Sobel operators, with gradient statistics further analyzed to adaptively set Canny edge detection thresholds. This adaptive threshold strategy better accommodates edge characteristics of different bamboo slip images. After applying Canny edge detection, morphological closing operations are employed to connect broken edges, enhancing edge continuity. External contours are then extracted, and area thresholds are applied to filter small-area noise, preserving main bamboo slip edge contours. This edge extraction method fully considers bamboo slip characteristics, effectively suppressing text detail interference and highlighting main external edges of bamboo slips, providing reliable features for subsequent edge-based registration.
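The adaptive threshold step can be illustrated as follows. The text does not specify which gradient statistics are used, so this sketch assumes a simple rule (high threshold = mean plus `k` standard deviations of the Sobel gradient magnitude, low threshold = a fixed fraction of the high one); the rule, the parameters `k` and `ratio`, and the function names are all assumptions. It uses NumPy only; the resulting pair would then be handed to a Canny detector such as OpenCV's `cv2.Canny`, followed by morphological closing and contour area filtering.

```python
import numpy as np

def sobel_magnitude(gray):
    """Gradient magnitude from 3x3 Sobel operators with replicated borders."""
    p = np.pad(np.asarray(gray, dtype=np.float64), 1, mode="edge")
    gx = ((p[:-2, 2:] + 2 * p[1:-1, 2:] + p[2:, 2:])
          - (p[:-2, :-2] + 2 * p[1:-1, :-2] + p[2:, :-2]))
    gy = ((p[2:, :-2] + 2 * p[2:, 1:-1] + p[2:, 2:])
          - (p[:-2, :-2] + 2 * p[:-2, 1:-1] + p[:-2, 2:]))
    return np.hypot(gx, gy)

def adaptive_canny_thresholds(gray, k=1.0, ratio=0.5):
    """Derive (low, high) hysteresis thresholds from gradient statistics.

    Assumed rule: high = mean + k * std of the gradient magnitude,
    low = ratio * high.
    """
    mag = sobel_magnitude(gray)
    high = float(mag.mean() + k * mag.std())
    low = ratio * high
    return low, high
```

Tying the thresholds to each image's own gradient distribution means a faint, low-contrast slip and a sharp, high-contrast one both keep their dominant contour above the high threshold, which a single fixed pair would not guarantee.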

Edge features extracted from bamboo slip images, highlighting structural boundaries and contour information for subsequent registration. The improved method identifies clear edge contours providing robust feature points for coarse registration.

After feature extraction, correspondence relationships between features from different images must be established, and reasonable weight allocation strategies must be designed to fully leverage the effectiveness of different features at various registration stages. First, for corner point feature matching, the algorithm employs a FLANN-based fast matching algorithm. The KD-tree method is used for index construction, with higher checking iterations set to improve matching precision. To enhance matching quality, an adaptive distance ratio threshold strategy is adopted. Compared to the fixed distance ratio thresholds typically used in traditional SIFT matching for filtering, stricter thresholds may be more suitable for texture-rich images with high feature discrimination; for images like bamboo slips with weak texture and large modal differences, overly strict thresholds may result in insufficient valid matching points. Therefore, for bamboo slip images, using adaptive distance ratio thresholds is more conducive to finding matching points. We first calculate the distance ratio distribution of all matching point pairs, then statistically determine appropriate thresholds, ensuring matching strictness while adapting to different image characteristics. To further improve matching reliability, we also implement a bidirectional matching verification strategy. This strategy requires that a pair of feature points must correspond to each other in both forward and reverse matching to be considered valid matches. The inclusion of this strategy significantly reduces mismatches and improves matching point reliability. When sufficient matching points are available, we further apply the RANSAC algorithm for geometric consistency verification, eliminating outliers that do not conform to the primary geometric transformation relationship. Second, for establishing edge feature correspondences, we adopt a distance-based nearest point matching strategy. 
For each edge sampling point in the source image, the point with minimum Euclidean distance among all edge points in the target image is identified as the corresponding point. To avoid incorrect matching, we set a distance threshold, with point pairs exceeding this threshold considered invalid matches. Additionally, we incorporate distance-based exponential decay weights for feature points, ensuring that closer edge point pairs receive higher weights, further enhancing matching reliability. Finally, we design a weight allocation method based on feature point response values. For corner feature points, matching weights are calculated based on keypoint response values. Specifically, for each matching pair, the geometric mean of the two keypoint response values is taken as the initial weight, then weight values are normalized to ensure higher-quality feature points have greater influence. For edge points, higher weights are assigned in the initial stage to fully utilize the advantages of edge features in coarse registration. Through multi-feature detection and weighted matching strategies, the algorithm can effectively address multimodal registration challenges in bamboo slip images, laying the foundation for subsequent multi-level registration. The adopted response value-based feature weight allocation mechanism and bidirectional matching verification strategy significantly improve matching point reliability, while the introduction of edge features effectively supplements the deficiency of corner features in text regions, enhancing the algorithm’s capability to utilize bamboo slip edge features.
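Three of the mechanisms described above are compact enough to sketch: bidirectional matching verification, response-based corner weights, and nearest-point edge matching with exponentially decaying weights. The example assumes NumPy and brute-force distance computation in place of the FLANN index; the distance threshold, the decay constant, the sum-to-one normalization, and all function names are assumptions. The adaptive ratio test and RANSAC filtering are not shown.

```python
import numpy as np

def mutual_matches(desc_a, desc_b):
    """Pairs (i, j) where a_i and b_j are each other's nearest descriptor."""
    a = np.asarray(desc_a, dtype=np.float64)
    b = np.asarray(desc_b, dtype=np.float64)
    d = np.linalg.norm(a[:, None, :] - b[None, :, :], axis=2)
    fwd = d.argmin(axis=1)                      # best b for every a
    bwd = d.argmin(axis=0)                      # best a for every b
    return [(i, int(j)) for i, j in enumerate(fwd) if bwd[j] == i]

def corner_weights(resp_a, resp_b):
    """Geometric mean of paired keypoint responses, normalized to sum to 1."""
    w = np.sqrt(np.asarray(resp_a, dtype=np.float64)
                * np.asarray(resp_b, dtype=np.float64))
    total = w.sum()
    return w / total if total > 0 else w

def edge_matches(src, dst, max_dist=10.0, decay=5.0):
    """Nearest-point edge matching with exp(-distance / decay) weights.

    Returns (pairs, weights); pairs beyond max_dist are dropped.
    max_dist and decay are hypothetical values in pixels.
    """
    src = np.asarray(src, dtype=np.float64)
    dst = np.asarray(dst, dtype=np.float64)
    d = np.linalg.norm(src[:, None, :] - dst[None, :, :], axis=2)
    nearest = d.argmin(axis=1)
    dist = d[np.arange(len(src)), nearest]
    valid = dist <= max_dist
    pairs = np.column_stack([np.nonzero(valid)[0], nearest[valid]])
    return pairs, np.exp(-dist[valid] / decay)
```

The mutual check discards a match whenever the reverse search prefers a different partner, which is what removes many of the one-sided mismatches that relaxed detection thresholds introduce.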

Multi-level registration algorithm implementation

This section details the implementation process of the multi-level registration algorithm, including the coarse registration module based on downsampling and feature weighting, the fine registration module based on improved ICP with corner-edge multi-feature fusion and dynamic weight adjustment, and mutual information optimization as three key stages. The algorithm flowchart of this framework is shown in Fig. 4 below. The core innovations lie in the hierarchical processing strategy and dynamic feature weight adjustment mechanism, which effectively address the challenging problems in bamboo slip image registration.

Coarse-to-fine registration strategy. The Coarse Registration Module uses CESIFT-RANSAC to match multi-scale features and generate a preliminary affine transformation matrix. The Fine Registration Module uses DWICP to refine alignment through dynamic feature weight transformation, iterating to convergence followed by MI-based optimization to generate final registration results. Red lines: edge matches; green lines: corner matches.

Coarse registration stage

We employ a downsampling strategy in the coarse registration stage, performing 1/2 downsampling on original images to reduce image detail interference while preserving main structural features, providing good initial transformation estimates for subsequent fine registration. Downsampling uses area averaging, which effectively suppresses text detail noise while preserving main structural information.
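For a factor of 1/2, area averaging reduces to taking the mean of each 2 × 2 pixel block. A minimal NumPy sketch follows; the function name is hypothetical, and dropping a trailing odd row or column is an assumption about border handling. Library resizers with an area interpolation mode (for example OpenCV's `cv2.resize` with `cv2.INTER_AREA`) serve the same purpose.

```python
import numpy as np

def downsample_half(img):
    """Halve the resolution by averaging non-overlapping 2x2 blocks.

    Works for grayscale (H, W) and multi-channel (H, W, C) arrays.
    A trailing odd row or column is dropped (assumed border policy).
    """
    img = np.asarray(img, dtype=np.float64)
    h = img.shape[0] // 2 * 2
    w = img.shape[1] // 2 * 2
    img = img[:h, :w]
    blocks = img.reshape(h // 2, 2, w // 2, 2, *img.shape[2:])
    return blocks.mean(axis=(1, 3))
```

Averaging acts as a low-pass filter before decimation, so thin strokes are attenuated while the long slip contour, which spans many blocks, survives largely intact.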

The coarse registration stage adopts an edge-feature-priority weight allocation strategy, with edge feature weights set to 5 and corner feature weights set to 3, using edge points as primary constraints with corner information as auxiliary support. This weight setting is based on two key points: first, bamboo slip external contours remain relatively stable across different modal images, whereas the spatial resolution loss caused by 1/2 downsampling may cause corner point positions to shift or disappear; second, in the coarse registration stage, global alignment is more important than local details, with local registration to be performed in the subsequent fine registration stage.
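One way to see how the 5:3 weighting shapes the estimate is a weighted least-squares fit of an affine matrix over the pooled edge and corner correspondences. This is a sketch under assumptions: the text's coarse stage uses RANSAC, and the plain weighted solver below, along with the names `weighted_affine` and `coarse_affine`, is an illustrative stand-in rather than the described procedure. Only the two weight values come from the text.

```python
import numpy as np

EDGE_WEIGHT = 5.0     # coarse-stage edge weight stated in the text
CORNER_WEIGHT = 3.0   # coarse-stage corner weight stated in the text

def weighted_affine(src, dst, weights):
    """2x3 affine A minimizing sum_i w_i * ||A @ [x_i, y_i, 1] - dst_i||^2."""
    src = np.asarray(src, dtype=np.float64)
    dst = np.asarray(dst, dtype=np.float64)
    # Scaling rows by sqrt(w) turns the weighted problem into ordinary LSQ.
    w = np.sqrt(np.asarray(weights, dtype=np.float64))[:, None]
    X = np.hstack([src, np.ones((len(src), 1))])
    sol, *_ = np.linalg.lstsq(X * w, dst * w, rcond=None)
    return sol.T

def coarse_affine(edge_src, edge_dst, corner_src, corner_dst):
    """Pool edge and corner matches with edge-priority weights."""
    src = np.vstack([edge_src, corner_src])
    dst = np.vstack([edge_dst, corner_dst])
    weights = np.concatenate([
        np.full(len(edge_src), EDGE_WEIGHT),
        np.full(len(corner_src), CORNER_WEIGHT),
    ])
    return weighted_affine(src, dst, weights)
```

With these weights a residual on an edge pair costs 5/3 as much as the same residual on a corner pair, so a handful of mismatched corners pulls the solution less than it would under uniform weighting.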

The matching point positions and connections in the coarse registration stage are visualized in Fig. 5. We selected six image pairs representing common cases in bamboo slips, with the infrared image on the left and the visible image on the right in each pair. Figure 5a represents bamboo slips with mild character degradation, where visible images show relatively clear text that serves as an excellent feature point source: character strokes, turns, and intersections are all detected as corner points, specifically high-intensity corner points. Since infrared images of bamboo slips are inherently intended to reveal degraded characters, characters appear particularly clear in infrared images. Therefore, in this type of bamboo slip image with mild character degradation, corner point accuracy is very high and registration results are good. In such images, whether edge point information dominates does not affect coarse registration results. Figure 5b, c represent bamboo slips with severe character degradation, where characters in visible images are faded, blurred, and difficult to recognize. In such images, extracting sufficient high-intensity corner points from visible images is challenging, and high-intensity corner points extracted from clear text structures in infrared images mostly appear only as medium-intensity corner points in visible images. We therefore reduced the corner point extraction threshold to identify more potential corner points for matching. However, a lower threshold admits noise points as well as genuine corner points, which affects corner point matching accuracy. To counter this, we incorporated bidirectional matching verification and geometric consistency verification in the algorithm to screen corner points and improve accuracy.
We also note that bamboo slip edge information still maintains the main edge structures after downsampling, with direction information remaining relatively stable across different scales, exhibiting scale invariance. Moreover, long edges are less susceptible to noise interference than isolated corner points, giving edge information stronger noise resistance. This matches the coarse registration stage’s focus on global-level registration of bamboo slip images, so we increased edge information weights in the coarse registration stage, allowing edge information to dominate. Figure 5d, e represent even more severe character degradation, where corner point matching remains difficult even after detecting medium-intensity corner points. As shown in Fig. 5d, e, the number of corner point matching pairs is clearly smaller than in Fig. 5a–c. In such cases, registration must rely even more on edge information. Figure 5f also shows bamboo slips with severe character degradation. In this image, the decrease in corner point matching accuracy after medium-intensity corner point detection is clearly visible: the corner point matching shows obvious mismatches. However, edge point matching is correct, and good coarse registration can still be achieved when edge points dominate. The situations represented by these six image pairs support our choice of an edge-dominated, corner-assisted approach.

Representative examples of feature matching between visible and infrared bamboo slip images, with colored lines indicating feature correspondences. a Slight character degradation. b Severe character degradation with numerous characters. c Severe character degradation with numerous characters. d Severe character degradation with sparse character content. e Severe character degradation with sparse character content. f Severe character degradation with mismatches. Edge matches extend from bamboo slip boundaries; corner matches originate from interior character regions.

After obtaining SIFT matching points and edge correspondence points, the algorithm uses a weighted affine transformation estimation algorithm to calculate the transformation matrix. The core approach is to repeat matching point pairs multiple times according to their weights. For example, for a point pair (pi, qi) with weight wi, the system duplicates it ⌊wi⌋ times, thereby granting high-weight points greater influence during transformation estimation. The RANSAC algorithm is used to estimate the affine transformation at this stage:

where the rotation angle θ and scaling factor s can be calculated using the following formulas:

To ensure transformation validity, the algorithm verifies the estimated transformation matrix by extracting and checking two parameters: the rotation angle θ and the scaling factor s. When abnormal parameters are detected, the system discards the current transformation and falls back to the initial identity transformation. This conservative strategy keeps the registration process stable: a coarse registration failure does not propagate into the subsequent fine registration and mutual information (MI) optimization stages, and the transformation matrix output by the coarse stage, even if not sufficiently precise, still provides a reliable initial value for fine registration. The parameter ranges are determined from actual imaging conditions. Since bamboo slips are typically elongated strips, and the captured multimodal images are intended for practical research use, bamboo slips are generally positioned vertically in both visible and infrared images, and the size ratio of the slips between the two images typically does not vary significantly.
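
The verification step can be sketched as follows. This is a minimal illustration, not the authors' implementation: it assumes the common convention θ = atan2(a21, a11) and s = sqrt(a11² + a21²) for a 2 × 3 matrix with entries a_ij, and the bounds `MAX_ABS_ROTATION_DEG` and `SCALE_RANGE` are hypothetical placeholders, since the text does not list the coarse-stage ranges.

```python
import math

# Hypothetical bounds; the text does not state the coarse-stage ranges.
MAX_ABS_ROTATION_DEG = 15.0
SCALE_RANGE = (0.8, 1.25)
IDENTITY = [[1.0, 0.0, 0.0], [0.0, 1.0, 0.0]]


def rotation_and_scale(matrix):
    """Return (theta in degrees, s) from the first column of a 2x3 matrix."""
    a11, a21 = matrix[0][0], matrix[1][0]
    theta = math.degrees(math.atan2(a21, a11))
    scale = math.hypot(a11, a21)
    return theta, scale


def validated_or_identity(matrix):
    """Keep the estimate if theta and s look plausible, else use the identity."""
    theta, scale = rotation_and_scale(matrix)
    plausible = (abs(theta) <= MAX_ABS_ROTATION_DEG
                 and SCALE_RANGE[0] <= scale <= SCALE_RANGE[1])
    return matrix if plausible else IDENTITY


def similarity(theta_deg, scale, tx, ty):
    """Build a 2x3 similarity matrix (helper for the usage example)."""
    c = scale * math.cos(math.radians(theta_deg))
    s = scale * math.sin(math.radians(theta_deg))
    return [[c, -s, tx], [s, c, ty]]


good = validated_or_identity(similarity(3.0, 1.05, 4.0, -2.0))  # kept as estimated
bad = validated_or_identity(similarity(40.0, 1.0, 0.0, 0.0))    # replaced by identity
```

Falling back to the identity rather than raising an error mirrors the conservative behaviour described above: the next stage always receives a usable starting transformation.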

Fine registration stage

After obtaining coarse registration results, the transformation matrix is adjusted to the original image dimensions and applied to the original infrared image, yielding the coarsely registered image. The registered image and original visible image are then input into the fine registration stage. This stage aims to further refine alignment results, perform local registration, and improve overall image registration accuracy. The algorithm employs an improved Iterative Closest Point (ICP) algorithm, innovatively introducing a dynamic weight adjustment mechanism for corner-edge features.

In the fine registration stage, to obtain more precise feature points, corner and edge features are re-extracted using original resolution images. For corner feature extraction, the same method as in Section “Multi-Feature Detection and Weighted Matching” is applied but to images after coarse registration transformation, using adaptive parameters. For edge feature extraction, binary masks are first created and applied to ensure only valid image regions participate in edge detection. This ensures feature points used in the fine registration stage have the highest positional accuracy while avoiding interference from background regions in feature extraction.

The proposed ICP algorithm improvements are primarily reflected in two aspects: (1) Multi-feature fusion: Traditional 2D ICP algorithms are geometric registration methods based on original point correspondences, typically not relying on specific feature points. This causes classical ICP algorithms to struggle with weak-texture, weak-corner image registration, as weak-texture regions lack significant geometric variations, leading to error-prone nearest neighbor searches, while uniform point cloud distributions in smooth regions fail to provide effective registration constraints. Once sufficient constraint information is lacking, algorithms easily fall into local optima, resulting in poor final registration. Our proposed algorithm combines feature points, simultaneously considering edge contour points and corner points, establishing a unified feature framework for fusion. (2) Dynamic weight adjustment: As iterations progress, corner and edge feature weights change dynamically, achieving smooth transition from global alignment to local fine matching. The core iterative process can be expressed as:

where Tk represents the global transformation after the k-th iteration, ΔTk represents the local incremental transformation estimated at iteration k, and ∘ represents transformation composition operation.

After each iteration, the algorithm checks whether mutual information has improved. If improved, the update is accepted; otherwise, the previous best transformation is maintained. This mutual information-based evaluation mechanism ensures monotonic convergence of the registration process. In each iteration, we use a combination of edge and corner points to estimate the transformation matrix. The combination process can be expressed as:

where Psift and Pedge represent coordinate sets of SIFT feature points and edge points respectively, and Wsift and Wedge represent corresponding weight sets. To achieve weighted transformation estimation, we adopt a point duplication strategy, duplicating corresponding points based on weight values, as shown in the following formula:

where pi represents the i-th point’s coordinates, wi represents its weight, and ⌊wi⌋ represents the number of duplications after rounding down the weight. This point duplication strategy intuitively implements the weighting mechanism, with higher-weighted points having greater influence in transformation estimation. After weighting, the RANSAC method is used to estimate affine transformation.
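
The point duplication strategy can be illustrated with a short sketch. The function name `duplicate_by_weight` and the sample coordinates are hypothetical; only the ⌊wi⌋ repetition rule and the weights 3 and 5 come from the text.

```python
import math


def duplicate_by_weight(pairs, weights):
    """Repeat each (p, q) correspondence floor(w) times."""
    expanded = []
    for pair, weight in zip(pairs, weights):
        expanded.extend([pair] * math.floor(weight))
    return expanded


# Hypothetical correspondences: one corner (SIFT) match and one edge match.
sift_pairs = [((10.0, 12.0), (11.0, 12.5))]
edge_pairs = [((0.0, 50.0), (1.0, 50.5))]
pairs = sift_pairs + edge_pairs
weights = [3.0] * len(sift_pairs) + [5.0] * len(edge_pairs)

expanded = duplicate_by_weight(pairs, weights)  # 3 corner copies + 5 edge copies
```

Because the weight is rounded down, a fractional weight such as 4.6 contributes four copies and a weight below 1 removes the point entirely. In a sampling-based estimator such as RANSAC, a duplicated pair is both more likely to be drawn and counted several times in the consensus set, which is how the weight takes effect.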

We innovatively introduced dynamic weight adjustment during iterations, implementing gradual increase in corner feature weights and gradual decrease in edge feature weights as iteration count increases through linear interpolation. The specific linear interpolation method we adopt is:
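One way to write this interpolation, consistent with the endpoint values listed below, is:

\[ w_{edge}(i) = w_{edge}^{initial} + \frac{i}{N}\left(w_{edge}^{final} - w_{edge}^{initial}\right), \qquad w_{sift}(i) = w_{sift}^{initial} + \frac{i}{N}\left(w_{sift}^{final} - w_{sift}^{initial}\right) \]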

where i is the current iteration number, N is the maximum number of iterations, \({w}_{edge}^{initial}=5.0\), \({w}_{edge}^{final}=1.0\), \({w}_{sift}^{initial}=3.0\), and \({w}_{sift}^{final}=20.0\).

This dynamic weight adjustment function ensures continuity and smoothness of weight changes, avoiding instability from abrupt transitions. In initial iterations (i = 0), edge weight is 5.0 and corner weight is 3.0; at final iteration (i = N), edge weight decreases to 1.0 while corner weight increases to 20.0.
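
A minimal sketch of this weight schedule follows, assuming plain linear interpolation between the stated endpoints. The function names are illustrative, and N = 50 in the usage lines is an assumption.

```python
def interpolate(initial, final, i, n):
    """Linear interpolation from `initial` (i = 0) to `final` (i = n)."""
    return initial + (final - initial) * (i / n)


def feature_weights(i, n, edge=(5.0, 1.0), sift=(3.0, 20.0)):
    """Return (edge weight, corner weight) at iteration i of n."""
    return interpolate(*edge, i, n), interpolate(*sift, i, n)


start = feature_weights(0, 50)    # edge-dominated
middle = feature_weights(25, 50)  # corners already dominate
end = feature_weights(50, 50)     # corner-dominated
```

With these endpoints the two weights cross at i/N = 2/21 (about 0.095), so the edge-dominated phase covers only roughly the first tenth of the iterations.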

We incorporated dynamic weight adjustment with increasing iterations in the fine registration stage because in early registration, after downsampling, edge information’s scale invariance and stronger noise resistance make edge point information more accurate and reliable in coarse registration. Corner points depend on pixel-level precise positioning, while bamboo slips suffer from character degradation with originally blurred or vanished characters in visible images, making correspondence with infrared image characters difficult. Combined with spatial resolution loss during downsampling potentially causing corner position shifts or disappearance, edge features are more important and precise in the coarse registration stage, helping quickly obtain rough but robust alignment.

In the fine registration stage, as images return to original size, corner points can achieve sub-pixel positioning, with all stroke intersections, turning points, endpoints, and other structural details reappearing. These potentially detectable corner points represent precise positions of local geometric structures, with corner matching’s high precision characteristics perfectly meeting fine registration requirements. Meanwhile, edge detection produces edge bands of certain width rather than precise point positions, potentially generating excessive edge pixels in high-resolution images. For fine adjustments required in precise registration, this uncertainty accumulates into larger errors. Therefore, compared to the coarse registration stage, corner points become particularly important in fine registration, needing to gradually dominate. Through this strategy, we ensure that in early fine registration, edge information’s global nature prevents ICP from converging to incorrect local optima; in mid-stage, balanced edge and corner weights achieve stable convergence direction; in late fine registration, corner dominance achieves final precise alignment. While avoiding local optimum traps, this achieves smooth optimization paths, reducing algorithm jumps between different feature types through coarse-to-fine continuous optimization trajectories, providing more stable convergence processes with strong adaptability to common character degradation, blurring, and contamination in bamboo slip images.

Visualization of local precise registration during the fine registration stage is shown in Fig. 6 below. We can observe that after completing the coarse registration stage (image iter0), although global registration has been achieved, registration effectiveness remains suboptimal at edges or local positions. This manifests in the red-green overlay images as red or green bands appearing at bamboo slip edge positions. However, with increasing iteration count, local positions of bamboo slips undergo fine registration. By iteration completion, registration results for all bamboo slip iter50 images have improved, with the originally red or green bands along edges substantially disappearing, transforming to normal yellow. This indicates that after the fine registration stage, registration precision and effectiveness have further improved.

The figure demonstrates the progressive registration process of bamboo slip image pairs, iteratively advancing from the input state to the fine registration stage. VIS: visible image; NIR: infrared image.

To prevent background regions from interfering with registration, we introduce a masking mechanism. The mask is a binary image where regions with value 1 represent valid bamboo slip areas and regions with value 0 represent background. Masks are continuously updated with transformations. Masks ensure only bamboo slip region features participate in registration, effectively avoiding background noise and interference. Particularly for bamboo slip images with irregular shapes and complex backgrounds, mask processing is crucial for improving registration performance.

To ensure reasonable transformation parameters for each iteration, we introduce transformation quality assessment and anomaly rollback mechanisms. For estimated transformation matrices, we extract corresponding scaling factors and rotation angles, then check whether these transformation parameters are within predefined reasonable ranges. If parameters exceed ranges, we roll back to the previous best transformation. This anomaly detection rollback mechanism greatly enhances algorithm robustness, avoiding unreasonable registration results from local optima. Particularly for bamboo slip images, local feature similarity may cause mismatching, producing unreasonable transformation parameters. Through parameter verification and rollback mechanisms, the algorithm avoids these errors, maintaining registration process stability.

Additionally, we introduce mutual information-based quality assessment, accepting new iteration results only when new transformations improve mutual information. Mutual information metrics as multimodal image registration measures can effectively assess registration quality, reliably reflecting actual registration conditions even when images have large visual appearance differences due to modal differences.
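
The combined effect of the parameter check and the mutual-information gate can be sketched as one guarded update. This is an illustrative outline under simplifying assumptions: `mi_of` and `is_valid` are hypothetical callbacks, and the toy example uses a single number in place of a transformation matrix.

```python
def guarded_update(best_transform, best_mi, candidate, mi_of, is_valid):
    """Accept `candidate` only if it is plausible and improves mutual information."""
    if not is_valid(candidate):
        return best_transform, best_mi  # anomaly rollback
    candidate_mi = mi_of(candidate)
    if candidate_mi > best_mi:
        return candidate, candidate_mi  # accept the improvement
    return best_transform, best_mi      # keep the previous best


# Toy stand-ins: the "transformation" is one number, MI peaks at 2.0.
def toy_mi(t):
    return -(t - 2.0) ** 2


def toy_valid(t):
    return abs(t) <= 5.0


state = (0.0, toy_mi(0.0))
history = []
for candidate in [1.0, 9.0, 0.5, 2.0]:
    state = guarded_update(state[0], state[1], candidate, toy_mi, toy_valid)
    history.append(state[1])
```

Since a candidate replaces the stored best only when its score is strictly higher, the recorded best score can never decrease, which is the monotonic behaviour described above.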

Mutual information optimization

After completing fine registration, this work introduces mutual information optimization as the final refinement step to further improve registration accuracy. The introduction of mutual information optimization is based on the following considerations: ICP algorithms primarily rely on spatial correspondences of feature points and edge points, while mutual information is directly based on statistical dependencies of image gray-level distributions. For image pairs with different imaging mechanisms such as infrared and visible images, mutual information can capture deep statistical correlations that traditional similarity measures cannot identify, making it particularly suitable for handling registration problems with significant gray-level distribution differences. The calculation method for mutual information is as follows:
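In its standard form, with the entropies defined below, this is:

\[ MI(X, Y) = H(X) + H(Y) - H(X, Y) \]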

where H(X) and H(Y) are the marginal entropies of random variables X and Y respectively, and H(X, Y) is their joint entropy. In image registration, X and Y represent pixel gray values of the two images.

The core concept of mutual information is evaluating registration quality by analyzing the joint histogram of two images. When two images are perfectly aligned, corresponding pixels’ gray values exhibit the strongest statistical correlation, maximizing mutual information. Based on this principle, we use mutual information maximization as the optimization objective, iteratively adjusting transformation parameters to find optimal registration results. Compared to traditional pixel-difference-based metrics, mutual information has stronger adaptability to nonlinear gray-level relationships between images, effectively handling common issues in bamboo slip images such as illumination variations and contrast differences. This makes it particularly suitable as an evaluation metric for multimodal image registration in our bamboo slip visible and infrared image registration method.
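
The joint-histogram computation can be sketched in a few lines. This is a simplified illustration: the 32-bin quantization of 8-bit gray values is an assumption, images are plain nested lists, and the function names are illustrative.

```python
import math
from collections import Counter


def entropy(counts, total):
    """Shannon entropy in bits of a histogram stored in a Counter."""
    return -sum((c / total) * math.log2(c / total) for c in counts.values())


def mutual_information(img_a, img_b, bins=32, levels=256):
    """MI = H(X) + H(Y) - H(X, Y) over quantized gray values of two images."""
    xs = [v * bins // levels for row in img_a for v in row]
    ys = [v * bins // levels for row in img_b for v in row]
    if len(xs) != len(ys):
        raise ValueError("images must have the same number of pixels")
    total = len(xs)
    joint = Counter(zip(xs, ys))  # joint histogram of co-located pixels
    return (entropy(Counter(xs), total) + entropy(Counter(ys), total)
            - entropy(joint, total))


stripes = [[0, 255], [0, 255]]
inverted = [[255, 0], [255, 0]]  # same structure, inverted contrast
rows = [[0, 0], [255, 255]]      # structure unrelated to `stripes`

mi_inverted = mutual_information(stripes, inverted)
mi_unrelated = mutual_information(stripes, rows)
```

The inverted image still yields the maximum score of 1 bit for this toy case, while the unrelated pattern yields 0. This illustrates why the measure tolerates the contrast reversals that occur between infrared and visible images.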

To overcome the computational inefficiency limitations of traditional grid search methods, we designed a multi-stage hybrid optimization strategy to maximize mutual information and obtain optimal transformation matrices, as shown in the following formula. This strategy combines advantages of global search and local refinement, performing global exploration through multi-restart simulated annealing algorithms, then using gradient optimization for local refinement, finally achieving parameter convergence through fine-tuning optimization. This hierarchical optimization design ensures both global convergence and significantly improved computational efficiency.
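
Using the symbols defined below, the optimization objective can be written as:

\[ T^{*} = \arg\max_{T} \; MI\left(I_{ref},\; T \circ I_{moving}\right) \]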

where T* represents the optimal transformation matrix, Iref is the reference image, Imoving is the image to be registered, and ∘ denotes transformation operation.

We adopt a multi-restart strategy because it is an effective method for preventing optimization algorithms from falling into local optima. Considering that single simulated annealing runs may be influenced by initial point selection, we employ a five-restart parallel search strategy. First, the algorithm uses the transformation matrix obtained from fine registration as the baseline starting point, which is typically already near the global optimum’s neighborhood. Subsequently, the algorithm generates four additional starting points around the baseline, each generated by adding controlled random perturbations to the baseline transformation. The simulated annealing formula for the multi-restart strategy is:

where Tbase is the baseline transformation matrix from ICP registration, and ΔTi is the random perturbation matrix for the i-th starting point.

At this stage, bamboo slip images entering mutual information optimization have undergone coarse and fine registration stages. Assuming no registration failure, we consider the visible and infrared images of bamboo slips to have achieved approximate registration. In this case, perturbations should not be too large. However, considering potential registration errors in previous stages leading to larger deviations or situations where parameter detection anomaly rollback mechanisms revert to initial positions, we carefully designed the starting point perturbation strategy to provide sufficient search diversity while not deviating from reasonable transformation ranges, aiming to complete registration for these bamboo slips in the final algorithm stage. Rotation perturbations are limited to ± 5°, covering most angular deviations in bamboo slip images. Scaling perturbations are controlled within 10%, compensating for possible scale differences while avoiding excessive deformation. Translation perturbations are set to 15 pixels, sufficient to handle positional offsets between images while maintaining computational stability.
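
The generation of restart points can be sketched as below. Several details are assumptions rather than the authors' stated design: perturbations are drawn uniformly, they are modelled as small similarity transforms, and they are composed with the baseline rather than added to it. Only the limits (± 5°, 10%, 15 pixels) and the five starting points come from the text.

```python
import math
import random


def compose(a, b):
    """Return the 2x3 affine matrix that applies b first, then a."""
    out = []
    for r in range(2):
        row = [a[r][0] * b[0][c] + a[r][1] * b[1][c] for c in range(3)]
        row[2] += a[r][2]  # add the translation of the outer transform
        out.append(row)
    return out


def random_perturbation(rng, max_rot_deg=5.0, max_scale=0.10, max_shift=15.0):
    """Draw a small similarity transform within the stated limits."""
    theta = math.radians(rng.uniform(-max_rot_deg, max_rot_deg))
    s = 1.0 + rng.uniform(-max_scale, max_scale)
    tx = rng.uniform(-max_shift, max_shift)
    ty = rng.uniform(-max_shift, max_shift)
    return [[s * math.cos(theta), -s * math.sin(theta), tx],
            [s * math.sin(theta), s * math.cos(theta), ty]]


def starting_points(base, restarts=5, seed=0):
    """Baseline plus (restarts - 1) perturbed copies of it."""
    rng = random.Random(seed)
    perturbed = [compose(random_perturbation(rng), base)
                 for _ in range(restarts - 1)]
    return [base] + perturbed


identity = [[1.0, 0.0, 0.0], [0.0, 1.0, 0.0]]
points = starting_points(identity)
```

Keeping the unperturbed baseline as the first starting point means the search can never do worse than the fine registration result it was given.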

Our introduced adaptive cooling mechanism is one of the core improvements to traditional simulated annealing algorithms. Traditional fixed cooling rates struggle to balance global search and local convergence needs, so we introduce a dynamic cooling strategy based on acceptance rate feedback. The algorithm maintains a sliding window to monitor candidate solution acceptance rates in real-time. When acceptance rates are too high, indicating potentially excessive temperature, the algorithm accelerates cooling to promote convergence; when acceptance rates are too low, it slows cooling to maintain sufficient search capability. The target acceptance rate is set at 30%, a value proven effective in theory and practice for balancing exploration and exploitation. Acceptance rate calculation uses a sliding window method:
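Writing k for the current iteration (a symbol introduced here for illustration), the windowed acceptance rate can be expressed as:

\[ r_{k} = \frac{1}{W} \sum_{i=k-W+1}^{k} a_{i} \]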

where W = 50 is the sliding window size, and ai ∈ {0, 1} indicates whether the candidate solution was accepted at iteration i.

The neighborhood solution generation strategy employs temperature-dependent perturbation strength control. The cooling rate is adjusted as follows: when the acceptance rate exceeds 1.5 times the target value (above 0.45 for the target of 0.3), a fast cooling rate of 0.98 is used; when it falls below 0.5 times the target value (below 0.15), a slow cooling rate of 0.995 is used; otherwise a standard cooling rate of 0.99 is used.
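
The acceptance-rate feedback can be sketched with a fixed-length window. The class name and the handling of a partially filled window (averaging over the samples available so far) are assumptions; the window size, target rate, and three cooling rates are those stated in the text.

```python
from collections import deque


class AdaptiveCooling:
    """Choose a cooling rate from the recent acceptance rate."""

    def __init__(self, target=0.3, window=50):
        self.target = target
        self.accepted = deque(maxlen=window)  # sliding window of 0/1 flags

    def record(self, was_accepted):
        self.accepted.append(1 if was_accepted else 0)

    def acceptance_rate(self):
        if not self.accepted:
            return self.target  # no evidence yet: behave as if on target
        return sum(self.accepted) / len(self.accepted)

    def cooling_rate(self):
        rate = self.acceptance_rate()
        if rate > 1.5 * self.target:
            return 0.98   # too many acceptances: cool faster
        if rate < 0.5 * self.target:
            return 0.995  # too few acceptances: cool slower
        return 0.99


schedule = AdaptiveCooling()
temperature = 1.0
for _ in range(50):
    schedule.record(True)  # every candidate accepted
temperature *= schedule.cooling_rate()
```

In an annealing loop, `record` would be called once per candidate and the temperature multiplied by `cooling_rate()` after each step.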

After the simulated annealing phase, the algorithm selects the three best-performing candidate solutions for gradient refinement. We select multiple candidates rather than just the optimal solution because simulated annealing’s randomness may result in other promising regions near the optimal solution; refining multiple candidates enables more comprehensive exploration of the solution space.

During gradient optimization, we use central finite differences to calculate numerical gradients, which provide higher accuracy than forward differences. For a 2 × 3 affine transformation matrix, the algorithm needs to compute gradients for six parameters, each requiring two mutual information evaluations. Perturbation step size selection is crucial: too small a step may cause numerical instability, while too large a step reduces gradient estimation accuracy. We use \(10^{-4}\) as the perturbation step size, which achieves a good balance between computational precision and numerical stability.
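With two evaluations per parameter, the corresponding central-difference estimate takes the standard form:

\[ \frac{\partial MI}{\partial T_{ij}} \approx \frac{MI\left(T + \varepsilon e_{ij}\right) - MI\left(T - \varepsilon e_{ij}\right)}{2\varepsilon} \]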

where Tij represents the (i, j) element of the transformation matrix, eij is the matrix with 1 at position (i, j) and 0 elsewhere, and ε is the perturbation step size.
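
A minimal sketch of the numerical gradient follows. The `objective` argument stands in for the mutual information of the warped image pair; the quadratic toy objective is a hypothetical stand-in chosen because its exact gradient is known.

```python
import copy


def numerical_gradient(objective, matrix, eps=1e-4):
    """Central-difference gradient of `objective` for each entry of a 2x3 matrix."""
    grad = [[0.0] * 3 for _ in range(2)]
    for i in range(2):
        for j in range(3):
            plus = copy.deepcopy(matrix)
            minus = copy.deepcopy(matrix)
            plus[i][j] += eps
            minus[i][j] -= eps
            # Two objective evaluations per parameter, twelve in total.
            grad[i][j] = (objective(plus) - objective(minus)) / (2.0 * eps)
    return grad


# Toy objective with a known maximum at `target` (not real mutual information).
target = [[1.0, 0.0, 2.0], [0.0, 1.0, -3.0]]


def toy_objective(m):
    return -sum((m[i][j] - target[i][j]) ** 2 for i in range(2) for j in range(3))


start = [[1.1, 0.0, 0.0], [0.0, 0.9, 0.0]]
gradient = numerical_gradient(toy_objective, start)
```

For this toy objective the exact gradient is −2(T − target), which the estimate matches closely. For a real image-based objective, interpolation and histogram binning make the function less smooth, so the step size matters more than this example suggests.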

During gradient update optimization, traditional fixed learning rates struggle to adapt to objective function variations during optimization, so we designed a strategy based on improvement history and no-improvement counts for dynamic adjustment. The algorithm maintains an improvement history window, calculating average improvement and improvement trends over recent iterations. When average improvement is positive and trend is rising, learning rate multiplies by 1.05 (capped at 0.1); when average improvement is non-positive or consecutive no-improvement count exceeds 5, learning rate multiplies by 0.8; when improvement trend decreases, learning rate multiplies by 0.9. Learning rates are strictly limited to ensure numerical stability:

Throughout gradient optimization, we also introduce transformation parameter validity checks. After each parameter update, the algorithm checks whether the new transformation matrix satisfies geometric constraints. If new transformation parameters exceed reasonable ranges, the algorithm automatically rolls back to the previous valid state and retries with reduced learning rate. This protection mechanism ensures optimization always proceeds within physically reasonable parameter space, avoiding unrealistic results from numerical optimization.
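
The learning-rate rules leave several details open, so the following is only one possible reading. It assumes that the trend is the mean of the newer half of the history window minus the mean of the older half, that the reduction rule takes priority over the other two, and that a lower bound `lr_min` exists. The multipliers 1.05, 0.8, and 0.9 and the cap of 0.1 come from the text.

```python
def adapt_learning_rate(lr, improvements, no_improve_count,
                        lr_min=1e-5, lr_max=0.1):
    """Update the learning rate from recent MI improvements (most recent last)."""
    if not improvements:
        return lr
    mean = sum(improvements) / len(improvements)
    half = len(improvements) // 2
    older, recent = improvements[:half], improvements[half:]
    if older:
        trend = sum(recent) / len(recent) - sum(older) / len(older)
    else:
        trend = 0.0  # a single sample carries no trend information
    if mean <= 0.0 or no_improve_count > 5:
        lr *= 0.8   # stalled: shrink the step
    elif trend > 0.0:
        lr *= 1.05  # improving faster: grow the step
    elif trend < 0.0:
        lr *= 0.9   # improving more slowly: shrink mildly
    return min(lr_max, max(lr_min, lr))


rising = adapt_learning_rate(0.01, [0.001, 0.002, 0.003, 0.004], 0)
falling = adapt_learning_rate(0.01, [0.004, 0.003, 0.002, 0.001], 0)
stalled = adapt_learning_rate(0.01, [0.001, 0.002], 6)
```

When a parameter update is rejected by the validity check, the same function could be reused by passing a negative improvement for that step, although the text does not specify the reduction factor applied on rollback.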

After gradient optimization, the algorithm enters the final local fine-tuning stage. This stage makes fine adjustments around the good solutions already found, further improving registration accuracy. The fine-tuning search range is strictly controlled within 0.5 units: for rotation parameters, this means 0.5-degree angular adjustments; for scaling parameters, 0.5% ratio adjustments; for translation parameters, 0.5-pixel position adjustments. Fine-tuning uses random search rather than deterministic grid search, which explores the parameter space more effectively with limited computational resources. In each iteration, the algorithm randomly generates a candidate solution within the neighborhood of the current optimal solution; if the candidate has higher mutual information, it is accepted and becomes the current optimal solution. An advantage of random search is that it can reach optimal points that grid search might miss, particularly when optimal solutions do not lie on grid points.
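
The fine-tuning loop can be sketched as a simple accept-if-better random search. The parameter layout (rotation in degrees, scale in percent, two translations in pixels), the uniform sampling, and the iteration count are assumptions made for illustration.

```python
import random


def fine_tune(objective, params, radius=0.5, iterations=200, seed=0):
    """Random local search: keep a candidate only if it improves the objective."""
    rng = random.Random(seed)
    best = list(params)
    best_score = objective(best)
    for _ in range(iterations):
        # Sample within +/- radius of the current best in every parameter.
        candidate = [p + rng.uniform(-radius, radius) for p in best]
        score = objective(candidate)
        if score > best_score:
            best, best_score = candidate, score
    return best, best_score


# Toy objective peaking at a point that lies off any 0.25-step grid.
peak = [0.3, -0.2, 0.1, 0.4]


def toy_objective(p):
    return -sum((a - b) ** 2 for a, b in zip(p, peak))


start = [0.0, 0.0, 0.0, 0.0]
tuned, tuned_score = fine_tune(toy_objective, start)
```

Because candidates are drawn around the current best rather than around the starting point, the accumulated adjustment can exceed 0.5 units over many iterations. Sampling around the fixed starting point instead would enforce a hard bound.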

To ensure physical reasonableness and practicality of registration results, we establish strict transformation parameter constraint mechanisms. These constraints are formulated based on actual characteristics and imaging conditions of bamboo slip images, ensuring registration result credibility while preventing optimization algorithms from producing unrealistic transformation parameters. First, rotation angle constraints are based on actual deviations possible during bamboo slip imaging. In actual bamboo slip digitization, even with professional imaging equipment, small angular deviations may exist between infrared and visible cameras. Statistical analysis of numerous bamboo slip image pairs reveals that angular deviations are within 5 degrees in most cases, so rotation angle constraints are set to absolute values not exceeding 5 degrees. This constraint covers normal imaging deviations while excluding obviously unreasonable rotation transformations. Second, scaling factor constraints consider effects of camera calibration errors and lens distortion. Theoretically, when using infrared and visible cameras with identical focal lengths to image the same bamboo slip, the two images should have identical scales. However, slight scale differences may exist in practice, mainly due to minor camera calibration errors, nonlinear lens distortion, and subtle differences in imaging distance. Finally, translation parameters have greater tolerance relative to rotation and scaling parameters, as bamboo slip placement may differ between two imaging sessions. However, excessively large translations typically indicate the registration algorithm may have fallen into incorrect local optima. Therefore, while translation parameters have no rigid numerical constraints, the algorithm judges translation reasonableness through geometric consistency verification. 
Anomaly detection mechanisms are important supplements to parameter constraints, checking not only individual parameter reasonableness but also overall consistency of parameter combinations.

Our rollback mechanism design fully considers algorithm robustness requirements. When detecting abnormal parameters, the algorithm doesn’t simply restart optimization but rolls back to the most recent reliable state. Within the multi-level registration framework, this typically means rolling back to reasonable results from the previous iteration or registration stage. This progressive rollback strategy ensures algorithm stability while maximally preserving early optimization achievements.

Character enhancement and visualization optimization

After completing the multi-level registration, to better display and analyze the ancient character content in bamboo slips, we propose an optimization method for character enhancement and visualization based on registration results. The flowchart of the method is shown in Fig. 7 below, which realizes the extraction of characters from the registered infrared images and the enhancement and optimized display of characters on the visible images of bamboo slips.

The fusion module extracts handwriting regions from the registered infrared image and applies text enhancement processing to the corresponding visible image regions, fusing both to achieve character enhancement.

First, we preprocess the original visible image and the registered infrared image, enhancing the contrast between characters and background in the infrared image through Contrast Limited Adaptive Histogram Equalization (CLAHE). CLAHE enhances contrast within local regions while avoiding the noise amplification caused by excessive enhancement. Subsequently, we perform character segmentation on the contrast-enhanced infrared image using Gaussian-weighted adaptive thresholding. The adaptive threshold segmentation formula is as follows:
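In the usual Gaussian-weighted form, assuming that \(G_{\sigma}\) is normalized to sum to 1 over N and that a pixel is labeled as character when its intensity \(I(x, y)\) falls below the local threshold \(T(x, y)\):

\[ T(x, y) = \sum_{(u, v) \in N} G_{\sigma}(u - x,\, v - y)\, I(u, v) - C \]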

where N is a 25 × 25 neighborhood centered at (x, y), Gσ is the Gaussian weighting function, and C = 15 is a constant offset.

After completing adaptive threshold segmentation, we perform morphological optimization on the segmented character information, optimizing character completeness and continuity through morphological operations. We use opening and closing operations to connect incomplete broken strokes from segmentation extraction while removing extracted noise information. Subsequently, we convert the stroke-connected character image from three-channel RGB format to four-channel RGBA format, making the background transparent for subsequent operations. During this process, the algorithm inevitably identifies certain dark background regions in infrared images, uncleaned areas on bamboo slips, or image noise as characters, applying contrast enhancement and segmentation extraction to these portions. These are actually noise information, typically existing as isolated black dots or small black regions, differing from coherent and large-area character regions. We need to suppress this noise. We perform connected component analysis on converted black regions using area-based connected component filtering, removing noise regions with insufficient area. Using eight-connectivity labeling algorithm, we calculate the area for each connected component in the labeled image. Components with insufficient area are replaced with colors close to the background rather than completely removed, maintaining visual continuity. Subsequently, we also convert the visible image to four-channel RGBA format, but unlike the infrared image, the visible image only changes format to facilitate subsequent operations between the two images without altering image content. For the morphologically optimized RGBA format character image, we reassign colors to character regions based on alpha channel information. 
Since the characters to be enhanced are black, and black is represented as RGB values (0,0,0) in RGB color representation, black information cannot be obtained through addition operations but only through subtraction to achieve black character reproduction and enhancement. Therefore, this work employs difference fusion to highlight character information, changing extracted characters to the bamboo slip background color. During subtraction, locations on bamboo slips where characters originally existed but displayed bamboo slip background color due to degradation will change back to black. This method effectively subtracts character images from background images, highlighting text content while maintaining background information integrity. Additionally, since images have been registered, we modify the registered infrared image dimensions according to visible image dimensions, ensuring both images have identical dimensions before inputting to the character enhancement module for difference fusion processing. We then define a color matching function to restore colors modified during difference fusion for portions of the original visible image where characters are not degraded or mildly degraded with still-visible characters. These originally black characters have their colors changed during difference fusion, becoming colors different from both background and black within a certain range. We identify this color range and convert it back to black. During this process, we restrict bamboo slip background colors and image background colors from participating in color conversion, ensuring background authenticity. Ultimately, characters on bamboo slips reappear, completing enhancement of degraded bamboo slip characters. 
The character enhancement method plays an important role in bamboo slip image digitization and research, providing clear character display for bamboo slip studies, enhancing the research value and practicality of multimodal bamboo slip images, laying foundations for subsequent character recognition and content analysis, and providing new approaches for digital preservation and cultural heritage transmission of bamboo slips. This method forms a complete technical system with the aforementioned multi-level registration algorithm, from image alignment to content enhancement, providing comprehensive technical support for bamboo slip digitization research.
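
The area-based noise suppression step described above can be sketched with a breadth-first labeling of eight-connected components. The function names, the area threshold, and the background value are illustrative assumptions, since the text does not give the minimum area.

```python
from collections import deque


def small_components(mask, min_area):
    """Return pixels of 8-connected foreground components smaller than min_area."""
    h, w = len(mask), len(mask[0])
    seen = [[False] * w for _ in range(h)]
    noise = []
    for y in range(h):
        for x in range(w):
            if not mask[y][x] or seen[y][x]:
                continue
            seen[y][x] = True
            queue, component = deque([(y, x)]), []
            while queue:
                cy, cx = queue.popleft()
                component.append((cy, cx))
                for dy in (-1, 0, 1):
                    for dx in (-1, 0, 1):
                        ny, nx = cy + dy, cx + dx
                        if (0 <= ny < h and 0 <= nx < w
                                and mask[ny][nx] and not seen[ny][nx]):
                            seen[ny][nx] = True
                            queue.append((ny, nx))
            if len(component) < min_area:
                noise.extend(component)
    return noise


def suppress_noise(image, mask, min_area, background_value):
    """Paint small components with a background-like value instead of deleting them."""
    cleaned = [row[:] for row in image]
    for y, x in small_components(mask, min_area):
        cleaned[y][x] = background_value
    return cleaned


# A 5-pixel stroke (joined through one diagonal link) and one isolated dot.
mask = [[1, 1, 0, 0, 0],
        [1, 1, 0, 0, 0],
        [0, 0, 1, 0, 1],
        [0, 0, 0, 0, 0]]
image = [[0 if m else 200 for m in row] for row in mask]
cleaned = suppress_noise(image, mask, min_area=3, background_value=190)
```

The diagonal link at row 2, column 2 is kept as part of the stroke only because eight-connectivity is used; under four-connectivity it would be treated as a separate one-pixel component and removed.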

Results

In this section, we validate the effectiveness of the proposed bamboo slip infrared-visible image registration algorithm through a series of experiments. First, we introduce the constructed bamboo slip infrared-visible image dataset and its creation process. Subsequently, we elaborate on the evaluation metrics and verify the superiority of our method through comparative experiments with existing methods.

Jiandu infrared-visible image dataset

To evaluate the effectiveness of the proposed method in bamboo slip image registration, we employed a dataset of infrared-visible image pairs of bamboo slips. This dataset comprises image pairs from significant excavated documents, predominantly consisting of Han Dynasty wooden slips from Diwan, providing a reliable foundation for research in the field of bamboo slip image registration.

The dataset contains over 840 pairs of high-quality unregistered images. The corresponding image pairs contain identical content but exhibit variations in displacement, rotation, and scale.

Comparative experiments

We compared the proposed algorithm with other multimodal image registration algorithms, including traditional non-deep learning methods: MI50, DASC51,52, ICP36, CAO-C2F53, KAZE-SAR54, SIFT32, AKAZE55, as well as deep learning-based methods including the multimodal image registration module from BSAFusion56, NeMAR46, and VoxelMorph45. For NeMAR and VoxelMorph, we retrained the models using our dataset, with NeMAR trained for 800 epochs and VoxelMorph trained for 1500 epochs.

Red-green overlay images in 2D image registration are important visualization tools for evaluating registration effectiveness and are among the commonly used methods for visualizing 2D registration algorithms. The basic principle involves displaying the fixed image in the red channel and the registered moving image in the green channel, with registration quality assessed by observing their overlap. When two images are perfectly aligned, overlapping regions appear yellow, as the superposition of red and green light produces a yellow effect.
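As a minimal sketch of this visualization, assuming both inputs are single-channel 8-bit images of identical size (the function name `red_green_overlay` is illustrative, not taken from any library):

```python
import numpy as np

def red_green_overlay(fixed_gray, moving_gray):
    """Build an RGB overlay: fixed image in red, registered moving image in green."""
    if fixed_gray.shape != moving_gray.shape:
        raise ValueError("images must have identical dimensions")
    overlay = np.zeros(fixed_gray.shape + (3,), dtype=np.uint8)
    overlay[..., 0] = fixed_gray    # red channel
    overlay[..., 1] = moving_gray   # green channel
    return overlay                  # blue channel stays zero

# Toy example: a bright square, once aligned and once shifted by one pixel.
fixed = np.zeros((6, 6), dtype=np.uint8)
fixed[2:4, 2:4] = 255
aligned = red_green_overlay(fixed, fixed)
shifted = red_green_overlay(fixed, np.roll(fixed, 1, axis=1))
```

Where both images are bright, red and green are equal and the pixel appears yellow; where only one image is bright, the pixel is pure red or pure green, which is the separation pattern discussed in the following paragraphs.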

Different patterns in a red-green overlay reflect different types of registration error. An ideal result shows most regions in yellow or near-yellow, indicating that features in the two images overlap, that the registration algorithm identified the correct spatial transformation, and that geometric distortions between the images were corrected. Translation errors produce red-green “ghosting” across the whole image, indicating an overall displacement caused by inaccurate translation parameters in the X or Y direction. Rotation errors typically appear as arc-shaped red-green separation at image edges, indicating angular deviation caused by an inaccurate rotation center or angle estimate; the error is more pronounced in peripheral regions. Scaling errors usually show good alignment at the image center with red-green separation growing toward the edges, indicating inconsistent scaling ratios between the images. Local deformation errors are more complex: some regions align in yellow while others show red-green separation, which typically indicates nonlinear distortions that require a more complex transformation model. The most severe case is complete registration failure, with large areas of pure red and pure green and almost no yellow overlap. This indicates that the algorithm failed to find correct correspondences, possibly because it generalizes poorly to bamboo slip images or because too few feature points are available.

Good registration results have clear visual criteria in red-green overlay images: main structures overlap completely, with boundary contours showing clear yellow; fine features such as textures and corner points are accurately aligned; edges are clear, without obvious red-green “fringing”; and registration quality is maintained throughout the entire image. Different application domains have different accuracy requirements. Medical imaging requires extremely high precision, with perfect overlap of the main anatomical structures; remote sensing tolerates some error, but landmarks and boundaries should be clearly aligned; industrial inspection requires precise registration of critical defect regions. In bamboo slip image registration, precise registration of text regions matters most. Text recorded on bamboo slips not only serves as historical “testimony” but also carries rich information about culture, politics, economics, religion, and even language evolution. Text is therefore the most important component of a bamboo slip, and precise registration of text regions is essential for the subsequent enhancement of degraded characters. Fig. 8 below shows the registration results of our proposed method and the comparison methods on bamboo slips, along with the original visible and infrared images.

Comparison of our method against ten baseline approaches: ICP, DASC, MI, CAO-C2F, KAZE-SAR, AKAZE, SIFT, BSAFusion, NeMAR, and VoxelMorph.

The precise registration of infrared and visible images in the Diwan Han bamboo slip dataset faces a series of unique and complex technical challenges, primarily stemming from the physical characteristics of bamboo slip artifacts and differences in imaging conditions.

Multimodal imaging differences constitute the most fundamental challenge in the registration process. Infrared and visible imaging employ entirely different spectral ranges and imaging principles, so the same bamboo slip presents distinctly different visual features under the two modalities. Surface details that are clear in visible images, such as wood texture, stain distribution, and color variations, may disappear completely or appear as different gray-scale patterns in infrared images. Conversely, deep ink traces revealed by infrared imaging’s ability to penetrate surface contamination layers may be unobservable in visible images. This fundamental difference in feature representation makes it difficult for traditional registration algorithms based on feature point matching to find enough reliable correspondence points, which severely affects registration accuracy and stability.

Differences in content correlation between infrared and visible images add to the difficulty. In severely faded bamboo slips, visible images may show almost no text, while infrared images display the text clearly. In such cases the information content of the two images differs fundamentally, causing traditional registration methods based on image similarity to fail completely. Even when text is visible, its contrast and clarity may differ significantly between the two modalities, with different edge definition and stroke thickness that interfere with registration algorithms based on edge or gradient information.

The weak texture of bamboo slip images exacerbates the difficulty further. After thousands of years of burial, bamboo slip surfaces contain extremely sparse texture information and lack obvious corner points, edges, and other geometric features. Traditional registration algorithms such as SIFT and SURF typically rely on rich local feature descriptors to establish correspondences between images, but in bamboo slip images they often cannot extract enough stable feature points. Even when some feature points are detected, the monotonous and repetitive texture gives them low discriminability and makes them prone to mismatching. The natural texture of wooden surfaces becomes blurred after prolonged weathering, substantially attenuating the texture direction and frequency information that could otherwise serve as registration references.

The ICP algorithm demonstrates relatively good registration performance on most image pairs, with red-green overlap regions appearing primarily yellow, indicating relatively accurate spatial alignment. However, misalignment remains in certain complex regions and along bamboo slip edge contours. In the fifth image pair shown in Fig. 8 (second column, tenth row), green clearly appears at the slip contour edges with slight red-green separation. The DASC algorithm is relatively unstable: it achieves good registration on some image pairs, with extensive yellow overlap, but shows obvious deviations on others, with noticeable red-green separation in certain images in the eighth and tenth rows. The MI algorithm performs poorly in most cases, with most regions of the registered images appearing green. The CAO-C2F algorithm, which relies on strong feature points, and the AKAZE algorithm both show unstable registration on weak-texture bamboo slip images, achieving good spatial alignment with dominant yellow overlap only on some image pairs. The KAZE-SAR algorithm demonstrates relatively good robustness, maintaining basic registration accuracy across the test image pairs; while not optimal, its registration failures are relatively rare. The SIFT algorithm, a classic feature matching algorithm that relies on corner features, performs well on visible image pairs with obvious characters, but its performance deteriorates significantly when severe text degradation leaves too few high-intensity feature points in the visible images.

We evaluated VoxelMorph, NeMAR, and BSAFusion as deep learning-based baseline models for the registration task. Bamboo slip images exhibit extreme dimensional heterogeneity, varying from 100 × 200 to 300 × 3000 pixels, whereas these model architectures require fixed input dimensions. We initially experimented with a 256 × 2048 resolution to better retain the elongated structure of bamboo slips. However, training collapsed with NaN loss during early epochs, completing only 13.8% of the intended training iterations. We subsequently adopted a 256 × 256 resolution, following the original input specifications of the respective models, and preprocessed the images with proportional scaling and padding. At this reduced resolution, the irrecoverable information loss rendered high-precision registration unattainable.
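The proportional scaling with padding mentioned above can be sketched as follows. This is an illustrative assumption about the preprocessing, not the code used in the experiments: it uses nearest-neighbor sampling in plain NumPy, centers the resized slip on a square canvas, and the function name `scale_and_pad` and the zero padding value are hypothetical choices.

```python
import numpy as np

def scale_and_pad(image, target=256, pad_value=0):
    """Resize so the longer side equals `target`, then pad to target x target."""
    h, w = image.shape[:2]
    scale = target / max(h, w)
    new_h = max(1, round(h * scale))
    new_w = max(1, round(w * scale))
    # Nearest-neighbor sampling: map each output row/column to a source index.
    rows = np.minimum((np.arange(new_h) / scale).astype(int), h - 1)
    cols = np.minimum((np.arange(new_w) / scale).astype(int), w - 1)
    resized = image[rows][:, cols]
    canvas = np.full((target, target) + image.shape[2:], pad_value, dtype=image.dtype)
    top = (target - new_h) // 2
    left = (target - new_w) // 2
    canvas[top:top + new_h, left:left + new_w] = resized
    return canvas, scale

# A tall slip with sides of 3000 and 300 pixels, filled with a constant value.
slip = np.full((3000, 300), 200, dtype=np.uint8)
padded, scale = scale_and_pad(slip)
kept_width = int((padded[0] == 200).sum())   # columns that still carry slip content
```

For a slip whose sides are 3000 and 300 pixels, the scale factor is 256/3000, so the short side shrinks to about 26 pixels. Stroke-level detail cannot survive at that width, which is consistent with the information loss reported above.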

Finally, our proposed method demonstrates excellent registration performance across all test image pairs, with red-green overlap regions appearing almost entirely yellow with minimal obvious red-green separation, indicating the algorithm’s superiority over other comparison algorithms in both registration accuracy and stability. Particularly noteworthy is that even in regions with complex textures and low contrast, the algorithm maintains high-precision registration effects, demonstrating its robustness in handling various image conditions.

After registration, feature points in the infrared image should align with their corresponding feature points in the visible image. We therefore measure Euclidean distances between transformed source points and target points to quantify registration performance. To evaluate the proposed multi-level registration method comprehensively, we designed an evaluation metric system covering several dimensions. For information theory, we employ Mutual Information (MI)26 and Normalized Mutual Information (NMI)29 to measure the statistical dependence between the two images. For distance, we compute three metrics, Root Mean Square Error (RMSE), Mean Absolute Error (MAE)57, and Median Absolute Error (MEE), to evaluate pixel-level registration accuracy. Additionally, we employ Peak Signal-to-Noise Ratio (PSNR) and Structural Similarity Index (SSIM)58 to measure image-level similarity between transformed visible and near-infrared images. We also introduce Normalized Cross-Correlation (NCC), Correlation Coefficient (CC), and Gradient Mutual Information (GMI)59 to evaluate inter-image correlation and edge structure preservation.
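A few of these metrics can be sketched in NumPy as follows. The sketch makes several assumptions that the text does not specify: the transformation is a 3 × 3 homogeneous matrix, MI is estimated from a joint histogram with a hypothetical `bins` parameter, and NMI uses the (H(A) + H(B)) / H(A, B) form, which is only one of several definitions in use. Function names are illustrative.

```python
import numpy as np

def point_errors(source_pts, target_pts, transform):
    """RMSE, MAE and median error of point distances after applying `transform`."""
    src = np.asarray(source_pts, dtype=float)
    tgt = np.asarray(target_pts, dtype=float)
    homog = np.hstack([src, np.ones((len(src), 1))])        # (x, y) -> (x, y, 1)
    mapped = homog @ np.asarray(transform, dtype=float).T
    mapped = mapped[:, :2] / mapped[:, 2:3]                  # back to Cartesian
    dist = np.linalg.norm(mapped - tgt, axis=1)              # Euclidean distances
    return {
        "RMSE": float(np.sqrt(np.mean(dist ** 2))),
        "MAE": float(np.mean(dist)),
        "MEE": float(np.median(dist)),
    }

def mi_and_nmi(image_a, image_b, bins=32):
    """Histogram-based mutual information and one common normalized variant."""
    hist, _, _ = np.histogram2d(np.ravel(image_a), np.ravel(image_b), bins=bins)
    pxy = hist / hist.sum()                                  # joint distribution
    px, py = pxy.sum(axis=1), pxy.sum(axis=0)                # marginals

    def entropy(p):
        p = p[p > 0]                                         # skip empty bins
        return float(-np.sum(p * np.log(p)))

    hx, hy, hxy = entropy(px), entropy(py), entropy(pxy.ravel())
    mi = hx + hy - hxy
    nmi = (hx + hy) / hxy if hxy > 0 else float("nan")
    return mi, nmi

# Point example: identity transform, distances of 5, 0 and 1 pixels.
errors = point_errors([(0, 0), (0, 0), (0, 0)], [(3, 4), (0, 0), (0, 1)], np.eye(3))

# Image example: an image compared with itself and with an independent pattern.
a = (np.arange(16) % 4).reshape(4, 4)
b = (np.arange(16) // 4).reshape(4, 4)
mi_same, nmi_same = mi_and_nmi(a, a, bins=4)
mi_indep, _ = mi_and_nmi(a, b, bins=4)
```

Under these definitions, an image compared with itself gives an MI equal to its own entropy and an NMI of 2, while statistically independent images give an MI of zero. In practice the bin count affects the estimate and should be held fixed across the methods being compared.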

The test set consists of 150 infrared-visible image pairs of bamboo slips, spanning three levels of degradation: 56 pairs (37.3%) with mild degradation, where character strokes are clearly visible with intact structural features, and both modalities yield abundant extractable feature points; 43 pairs (28.7%) with moderate degradation, where strokes are partially blurred or exhibit localized damage, resulting in fewer feature points in visible images; and 51 pairs (34.0%) with severe degradation, where character strokes in visible images are heavily faded or imperceptible, yielding extremely few extractable feature points. The balanced distribution across these categories ensures comprehensive coverage of registration scenarios with varying difficulty levels. All images were sourced from the Bamboo and Wooden Slips Academic Resources Sharing Platform using standardized imaging equipment and acquisition protocols. All evaluation metrics were computed on this test set, with means and standard deviations reported in Tables 1 and 2.