Abstract

With the advancement of 3D modeling and deep learning technologies, achieving automated and high-precision annotation on textured 3D triangular mesh models has become a research hotspot. This paper proposes a fine-grained deterioration segmentation mechanism for textured 3D triangular mesh data, based on cross-modal feature extraction and fusion via bidirectional 2D–3D mapping. By constructing a 2D–3D modality mapping model, the method integrates 3D annotation with 2D feature extraction, and finally projects the deterioration mask information back onto the 3D model. Taking a polychrome wooden Guanyin sculpture from the Song Dynasty of China as a case study, the experimental results verify the correctness and feasibility of the proposed method. This automatic annotation approach for textured 3D triangular mesh models fills the gap in automated labeling of 3D data and provides a dataset preparation strategy for subsequent multimodal 3D segmentation using deep learning, demonstrating both scientific value and practical significance.

Similar content being viewed by others

Introduction

Cultural relics are the artifacts and remains left behind by human social activities, holding historical, artistic, and scientific value. They not only have great esthetic significance but also carry rich historical and cultural information. For example, the mural “Zhang Qian’s Diplomatic Mission to the Western Regions” on the north wall of Cave 323 in Dunhuang, China, vividly records the groundbreaking journey of Zhang Qian to the Western Regions. It stands as an important symbol of trade and cultural exchange between China and foreign countries1. However, the protection of cultural relics faces serious challenges. According to the Third National Cultural Relics Census, there are about 12,000 mural-type relics (including cave murals, temple murals, tomb murals, etc.) and over 80,000 immovable painted relics (such as painted architecture and sculptures). Around 44,000 relics have disappeared due to natural erosion, human destruction, and other reasons. The deterioration or loss of cultural relics is often irreversible. Therefore, it is essential to carry out deterioration investigation of relics and implement targeted restoration and protection measures accordingly. As a core step in relic preservation, deterioration investigation is becoming increasingly important and urgent.

With the advancement of technology, reality-based 3D modeling techniques have offered new solutions for relic conservation. This technology can record authentic and comprehensive information about the current state of cultural relics without physical contact, enabling permanent archival. Such information provides crucial data support for subsequent preservation efforts, promoting the development and inheritance of cultural heritage2,3,4,5,6. Researchers around the world have conducted extensive studies in this field and achieved significant results. For example, numerous institutions domestically and internationally (such as the Dunhuang Academy, the Qin Terracotta Warriors and Horses Museum, the ancient city of Pompeii in Italy, and the British Museum) have successfully utilized this technology to achieve high-precision digitalization of a vast number of precious cultural relics. These practical efforts have generated massive amounts of digital cultural heritage data.

Currently, 3D digitization methods for cultural relics primarily consist of high-overlap image-based modeling and laser scanning modeling. However, considering factors such as geometry and color, high-fidelity 3D color models generated from images are more widely applied in cultural relic conservation. At present, these models are mainly utilized for visualization or geometric archiving, and their deep semantic information has not been fully exploited. Among the tasks involving semantic analysis, deterioration segmentation based on these models is an urgent problem to be solved. The core challenge of this problem lies in how to automatically and efficiently identify and segment deterioration regions within the 3D model. Since cultural relic deterioration—especially shedding deterioration—is widely distributed and numerous, manual segmentation methods evidently fail to meet the demands of conservation7,8,9. With the development of artificial intelligence technology, automated segmentation algorithms represented by Deep Learning are considered the optimal solution to this problem due to their powerful capabilities in feature extraction and generalization10,11. However, deep learning is a typical “data-driven” paradigm. Unlike the field of computer vision, which possesses general benchmark datasets such as ImageNet12 or COCO13, the cultural heritage field persistently lacks publicly available 3D deterioration datasets. This is due to issues such as significant variations in deterioration manifestations caused by material and environmental changes, as well as the difficulty in publicly sharing data due to its uniqueness and sensitivity. This data scarcity constitutes a fundamental technical bottleneck. This bottleneck is not merely a lack of data sources, but rather the absence of efficient annotation methods adapted to the characteristics of 3D cultural relics. Existing annotation technologies struggle to find a balance between efficiency, accuracy, and information completeness, and their development has primarily evolved through three stages.

The first stage involves an annotation strategy based on projection dimensionality reduction of 3D models. This is currently the most widely used transitional solution. Given the complexity of operating directly on 3D models, researchers tend to adopt a “dimensionality reduction” strategy: acquiring 2D orthophotos or photographs of the 3D models and subsequently utilizing mature 2D annotation tools (such as LabelMe, LabelImg, etc.) to annotate deterioration. This method fully utilizes the high-resolution texture of 2D images, facilitating deterioration identification. However, the annotation tools generally rely on manual operation, resulting in low efficiency, and the quality of annotation is closely tied to the experience of the annotators. To enhance precision and efficiency, Yang14 proposed a semi-automatic labeling method based on an industrial object instance segmentation dataset. By utilizing a neural network model trained on a virtual dataset as a labeling tool to automatically annotate real data, the method achieved a mean Average Precision (mAP) of 73.61%, which is close to the 76.49% achieved by a model trained on manually labeled datasets. Scholars such as Kai Yu15 and Lyu Xianzhou16 introduced improved network architectures like U-Net17 and YOLO18, achieving semi-automatic annotation of 2D images. However, this dimensionality reduction strategy has significant limitations. Cultural relics often possess complex spatial structures, such as the domes of grottoes and the facial contours of Buddha statues. When these 3D curved surfaces are projected onto a 2D plane, geometric stretching, texture distortion, and viewpoint occlusion inevitably occur. Therefore, while annotation data produced based on dimensionality reduction may appear accurate on 2D images, it actually loses critical spatial topological information and cannot be accurately projected back onto the 3D cultural relic entity.

The second stage involves direct annotation strategies oriented towards 3D data. To address the issue of geometric distortion caused by 2D projection, the research focus has shifted towards 3D space. Scholars often utilize professional 3D processing software, such as Geomagic Wrap (v2021; Artec 3D; https://www.artec3d.com/3d-software/geomagic-wrap) and CloudCompare (v2.12; https://www.cloudcompare.org), for manual interactive annotation. Although both software possess powerful mesh editing and geometric segmentation capabilities, the operational workflow is cumbersome. It requires manual rotation of the model and the individual delineation of deterioration regions. Furthermore, the lack of a semantic label management system tailored for deep learning makes it difficult to meet the demands for the efficient construction of large-scale datasets. The 3D annotation tool SUSTech POINTS19 utilizes an interactive interface that integrates 3D point cloud views with 2D image sub-views. It achieves automatic bounding box fitting and batch processing capabilities, significantly enhancing annotation efficiency. Cristoph Sager20 proposed a new labeling tool, labelCloud, which allows users to perform annotation by drawing 3D bounding boxes; however, this method struggles to adapt to irregular deterioration on the surfaces of cultural relics. Hu et al.21 proposed an automatic detection algorithm based on point cloud geometric features to identify deterioration with distinct geometric deformations, such as bulging. However, existing direct 3D annotation methods rely heavily on geometric information while neglecting texture features. The precise segmentation of cultural relic deterioration depends not only on the determination of spatial position but also on the fine delineation of deterioration edges. When constructing the Architectural Cultural Heritage dataset, Roberto Pierdicc et al.22 also discovered that relying solely on geometric information for manual annotation is not only time-consuming and labor-intensive but also prone to misjudgment. They concluded that incorporating texture information is the key to improving accuracy.

The third stage represents early attempts at 2D-3D modal fusion. To address the limitations of single modalities, scholars began to explore the synergistic utilization of 2D and 3D data. Chen Peng et al.23 designed an automatic acquisition and labeling system based on RGB-D image sequences. By detecting Augmented Reality University of Cordoba (ArUco)24 markers in the scene to obtain pose relationships, and projecting the reconstructed 3D object model back onto the 2D image plane, the system automatically generated high-precision segmentation masks. While this method utilized the 3D model as an intermediary medium, its final segmentation results remained in the form of 2D masks. Hu25 innovatively proposed a 2D-3D mutual mapping mechanism based on forward and inverse projection transformations, realizing the visual mapping of 2D detection results onto the 3D model. This technical approach not only validated the feasibility of preserving 2D texture details within 3D space but also provided significant methodological references and technical support for the annotation and construction of fine-grained 3D cultural relic deterioration datasets.

In summary, the construction of cultural heritage deterioration datasets currently faces a dual dilemma: the fragmentation of data modalities and the lack of targeted 3D annotation tools. On one hand, while purely 2D annotation offers clear texture semantics, due to the absence of spatial dimensions, the annotation results remain confined to the image plane and cannot be accurately projected back onto the 3D cultural relic entity. Conversely, although purely 3D annotation provides accurate geometric positioning, it lacks clear texture guidance, leading to ambiguous delineation of deterioration edges (especially for flat deterioration types). On the other hand, although the theory of 2D-3D fusion has been validated, it remains primarily at the algorithmic level. There is still a lack of mature annotation software platforms specifically designed for colored 3D triangular mesh models.

Based on this, this paper proposes a fine-grained deterioration segmentation mechanism for textured 3D triangular mesh data, utilizing cross-modal feature segmentation and fusion via 2D-3D mutual mapping. By leveraging the synergy between 2D and 3D modalities, this mechanism overcomes the limitations of single-modality approaches: it utilizes the high-resolution texture information preserved in 2D images to precisely identify deterioration features, while relying on the geometric structure provided by the 3D mesh to accurately locate and segment deterioration regions. This fusion achieves a ‘What You See Is What You Get’ level of fine-grained extraction. By constructing a 2D-3D cross-modal mutual mapping model, we achieve fine-grained, high-precision feature segmentation on the texture side. This subsequently generates 2D texture masks, which are then used—based on the mutual mapping model—to complete the production of the segmented dataset for colored triangular mesh models. In addition, based on the aforementioned content, we have developed an automatic annotation platform for cultural relic deterioration.

Methods

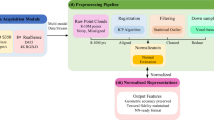

To address the issue of automated high-precision sample annotation for deterioration segmentation in 3D colored triangular mesh models of cultural relics, this study establishes a deterioration feature segmentation framework combining fine-grained texture segmentation under a 2D–3D modal bidirectional mapping and 3D spatial quantification. The Iso-charts algorithm26 and triangular affine transformation are applied to construct the 2D–3D bidirectional mapping model; an improved Simple Linear Iterative Clustering (SLIC)25 segmentation algorithm is used for fine-grained deterioration texture segmentation; and the bidirectional mapping model enables the fusion of texture masks with geometric models. The overall technical workflow is illustrated in Fig. 1, with a step-by-step demonstration of the intermediate results for each stage provided in Fig. 2. First, a fine-grained 3D colored model visualization and interaction platform is constructed based on the OpenSceneGraph (OSG)27 3D visualization engine. Using a polytope intersector, sub-models containing deterioration -affected regions are clipped from the complete model. Next, a stretch-driven mesh parameterization method is applied to perform surface geometric unfolding of these sub-models. Then, leveraging triangular affine transformation relationships, the scattered textures of the original model are reorganized according to the bidirectional mapping correspondence, generating a complete UV map with continuous textures and establishing a 2D–3D modal bidirectional mapping model. Subsequently, deterioration region pixels are extracted via the SLIC superpixel segmentation and clustering algorithm, enhanced by an adaptive K-value determined through the image gray-level co-occurrence matrix. Finally, based on the previously established 2D–3D point mapping relationship, the 2D deterioration pixel coordinates are projected into 3D space, achieving the fusion of UV texture masks with geometric information.

In the flowchart, black rectangles represent processing steps, while black parallelograms denote input/output operations. Colored dashed boxes indicate distinct stages of the method: The red dashed box corresponds to the first step: 3D Model Selection; The orange dashed box corresponds to the second step: 2D-3D Forward Mapping; The green dashed box corresponds to the third step: Deterioration Segmentation on Re-unwrapped Map; The blue dashed box corresponds to the fourth step: 2D-3D Inverse Mapping and 3D Deterioration Mask Augmentation.

Detailed visualization of the process blocks defined in Fig. 1: The colors of the headers correspond to the process blocks defined in Fig. 1, illustrating the specific intermediate results for each stage. The red dashed box corresponds to the process diagram for the first step: 3D Model Selection; the orange dashed box corresponds to the process diagram for the second step: 2D-3D Forward Mapping; the green dashed box corresponds to the process diagram for the third step: Deterioration Segmentation on Re-unwrapped Map; the blue dashed box corresponds to the process diagram for the fourth step: 2D-3D Inverse Mapping and 3D Deterioration Mask Augmentation.

The proposed method facilitates the automated annotation of deteriorations on 3D colored models. The technical details and implementation stages of this method are described in the following sections.

Acquisition of annotation regions based on polytope intersection algorithm

OSG is a high-performance open-source 3D graphics toolkit widely used in fields such as scientific visualization, virtual reality, and simulation training. Interaction technology is a core component of OSG, enabling developers to create dynamic scenes that respond to user input, thereby enhancing user experience and improving the intuitiveness of operations.

This study builds a 3D colored mesh model visualization platform based on the OSG 3D rendering engine. The clipping of annotation regions is the first step in dataset creation. A custom-developed polytope intersector is integrated into the platform, enabling the transformation of interactive point selection into precise operations on objects within the scene. By computing the intersection between the polytope intersector and the model objects in the scene, the platform determines whether specific mesh faces should be selected.

The user defines a 2D rectangular area on the screen via mouse dragging. These screen coordinates are then mapped to Normalized Device Coordinates (NDC)28. By applying the inverse of the view and projection matrices (see Eq. 1).

A polytope clipping volume is constructed. Subsequently, the intersector is employed to test each triangular facet of the model28, filtering out those located within the polytope to construct a new mesh for further analysis.

Construction of a 2D–3D modal model based on UV reconstruction

Currently, common data formats for 3D colored triangular mesh models include OSGB, PLY, STL, and OBJ, all of which support the essential features required for our proposed method. The fine-grained 3D cultural relic models studied in this paper are primarily represented in the OBJ format. The OBJ format for 3D colored triangular mesh data consists of three components: PNG, OBJ, and MTL files.

A 3D colored model essentially consists of multiple triangular faces, with textured triangles mapped onto spatial triangles through the correspondence between vertex coordinates and UV coordinates. The construction of the 2D–3D modal bidirectional mapping model constitutes the second step in dataset creation. In this study, UV coordinates are redefined and optimized to achieve continuity and completeness of the texture map. This improvement not only facilitates the extraction of useful structural information from the texture map but also enables accurate inverse mapping of information from the 2D texture space back to the 3D spatial domain through the mapping relationship between vertex coordinates and UV coordinates.

As shown in Fig. 3, the 2D–3D modal bidirectional mapping model consists of two complementary processes: first, continuously and completely displaying the 3D texture information on a 2D plane to facilitate information extraction; second, accurately mapping the extracted information back to the 3D space. These two processes are inverses of each other. The flattening of texture information from the colored 3D model onto a plane also proceeds in two steps. The first step is the geometric unfolding, which maps the 3D vertices onto the plane and constructs new UV coordinates, as shown in Fig. 4a). The second step rearranges the original scattered texture triangles based on the UV coordinates (as shown in Fig. 4b) to generate a new image, as shown in Fig. 4c). Pixel-level deterioration detection is performed on this new image (Fig. 4c), where deterioration coordinates may lie within texture triangles. Using the affine transformation matrix of the triangles, the precise location on the corresponding spatial triangular face is obtained. Finally, a 3D mask of the deterioration regions is generated based on the complete set of 3D deterioration coordinates. The following sections provide a detailed explanation of the principles and methods used to construct the model.

a 3D / Object Space or World Space. b 2D / Texture Space.

a Geometric unfolding of 3D vertices onto a 2D plane. b The original scattered texture triangles. c The generated image specifically formatted for deterioration segmentation.

The original UV texture coordinates of the vertices in the colored 3D triangular mesh model are scattered and disordered when mapped onto the plane, making it difficult to extract meaningful information (Fig. 4b). Therefore, this study remaps the model vertices to obtain complete and continuous UV texture coordinates, as shown in Fig. 4c, which can intuitively display deterioration information. The geometric unfolding is performed using Microsoft’s open-source library, UVAtlas29, which reasonably assigns UV coordinates to the 3D vertices of the model. UVAtlas is based on the “Iso-charts” algorithm proposed at the Eurographics conference, and implements stretch-driven mesh parameterization, mapping 3D vertices onto a 2D plane. The following provides a detailed introduction to this mesh unfolding method. First, due to varying model complexity, directly partitioning a complex mesh often leads to uncontrollable deformation in regions with large curvature changes after unfolding. Therefore, geometric feature–based partitioning is necessary. A hierarchical partitioning strategy is employed, sequentially partitioning according to connected components, boundaries, and shape features, to determine the model’s topological properties. If the model satisfies single-connectivity and single-boundary conditions, parameterization can proceed directly. Otherwise, Breadth-First Search (BFS)30 and Dijkstra’s algorithm31 are used to partition the model based on connected regions and boundaries. BFS traverses the model vertices; each BFS traversal produces a subgraph. Unvisited vertices become new connected component seeds until all components are partitioned. Dijkstra’s algorithm finds the shortest paths between different boundaries; the mesh is cut along these paths until only a single boundary remains. For special shapes such as cylinders or elongated polygons, shape-driven partitioning is applied by locating the two farthest points in 3D space, computing distances from all vertices to these points, and partitioning based on these distances. This partitioning effectively controls geometric distortion during subsequent parameterization.

Next, after the model is partitioned according to its geometric features, it is mapped onto a 2D plane to establish the 2D–3D mapping relationship. This is achieved by calculating the geodesic distances from vertices to several representative points and between the representative points themselves, constructing a distance matrix which is then subjected to dimensionality reduction. This process ultimately accomplishes the mesh unfolding. The specific steps are as follows:

-

(1)

Assuming the mesh contains N vertices, L representative points are selected, which are distributed across various corners of the mesh. For each vertex \({{\rm{v}}}_{{\rm{i}}}\), the shortest path distance \({{\rm{d}}}_{{{\rm{v}}}_{{\rm{i}}}{{\rm{l}}}_{{\rm{j}}}}\) to each representative point \({{\rm{l}}}_{{\rm{j}}}\) is computed. This distance is not the Euclidean (straight-line) distance, but the geodesic distance — the shortest path that follows along the surface of the mesh. These distances are then used to construct a distance matrix \({\rm{D}}\), where each row corresponds to a vertex and each column corresponds to a representative point:

$${\rm{D}}=\left[\begin{array}{ccc}{{\rm{d}}}_{{{\rm{v}}}_{1}{{\rm{l}}}_{1}} & \cdots & {{\rm{d}}}_{{{\rm{v}}}_{1}{{\rm{L}}}_{{\rm{n}}}}\\ \vdots & \ddots & \vdots \\ {{\rm{d}}}_{{{\rm{v}}}_{{\rm{N}}}{{\rm{l}}}_{1}} & \cdots & {{\rm{d}}}_{{{\rm{v}}}_{{\rm{N}}}{{\rm{l}}}_{{\rm{L}}}}\end{array}\right],{\rm{D}}\in {{\rm{R}}}^{{\rm{N}}\times {\rm{L}}}$$(2) -

(2)

The geodesic distances between all pairs of representative points are calculated to form the distance matrix \({\rm{G}}\). To facilitate subsequent dimensionality reduction, \({\rm{G}}\) is then subjected to double centering, transforming the distance matrix into a Gram matrix (an inner product matrix) that can be used for eigen decomposition. Specifically, the mean of each row is subtracted from the respective row, the mean of each column is subtracted from the respective column, and finally, the overall mean of the matrix is added back to complete the centering process.

$${\rm{G}}=\left[\begin{array}{cccc}0 & {{\rm{d}}}_{{{\rm{l}}}_{1}{{\rm{l}}}_{2}} & \cdots & {{\rm{d}}}_{{{\rm{l}}}_{1}{{\rm{l}}}_{{\rm{L}}}}\\ {{\rm{d}}}_{{{\rm{l}}}_{2}{{\rm{l}}}_{1}} & 0 & \cdots & {{\rm{d}}}_{{{\rm{l}}}_{2}{{\rm{l}}}_{{\rm{L}}}}\\ \vdots & \vdots & \ddots & \vdots \\ {{\rm{d}}}_{{{\rm{l}}}_{{\rm{L}}}{{\rm{l}}}_{1}} & {{\rm{d}}}_{{{\rm{l}}}_{{\rm{L}}}{{\rm{l}}}_{2}} & \cdots & 0\end{array}\right]$$(3)Double Centering Equation:

$${\rm{K}}=-\frac{1}{2}{\rm{H}}{{\rm{G}}}^{2}{\rm{H}}$$(4)Here, \({{\rm{G}}}^{2}\) denotes the element-wise square of the distance matrix \({\rm{G}}\). \({\rm{H}}={\rm{I}}-\frac{1}{{\rm{L}}}{11}^{{\rm{T}}}\) is the centering matrix, where \(1\) is a column vector of all ones.

-

(3)

Perform eigenvalue decomposition on the centered Gram matrix \({\rm{K}}\):

$${\rm{K}}={\rm{U}}\wedge {{\rm{U}}}^{{\rm{T}}}$$(5)Here, \({\rm{U}}\) is the matrix of eigenvectors and \(\bigwedge\) is the diagonal matrix of eigenvalues. Only the top \({\rm{d}}\) largest eigenvalues and their corresponding eigenvectors are retained, where \({\rm{d}}\) represents the target dimensionality for reduction. In this study, \({\rm{d}}=2\), resulting in the new coordinates of the representative points in the \({\rm{d}}\)-dimensional space:

$${{\rm{X}}}_{{\rm{L}}}={{\rm{U}}}_{{\rm{d}}}{\wedge }_{{\rm{d}}}^{1/2}$$(6)Here, \({{\rm{U}}}_{{\rm{d}}}\) denotes the matrix consisting of the top \({\rm{d}}\) eigenvectors (as columns), and \({\bigwedge }_{{\rm{d}}}\) is the diagonal matrix composed of the top \({\rm{d}}\) eigenvalues.

-

(4)

The new coordinates of the non-representative points are then computed based on their geodesic distances to the representative points:

Here, \({{\rm{D}}}^{(2)}\in {{\rm{R}}}^{{\rm{N}}\times {\rm{L}}}\) denotes the squared geodesic distance matrix between all vertices and the representative points. \({{\rm{D}}}_{{\rm{mean}}}^{\left(2\right)}\) refers to the mean-centered form of \({{\rm{G}}}^{2}\), obtained by applying centering operations to each row.

Finally, all unfolded results of the partitions are integrated to reconstruct a new 3D model. Compared to the original model, the reconstructed model preserves the same faces and vertices, with only the UV coordinates of the vertices being updated.

The color 3D model studied in this paper is unfolded as described above, with UV coordinates mapped onto an image within the coordinate range of (0–1). To facilitate subsequent texture remapping, the coordinate system is transformed so that the origin is located at the top-left corner of the image, as shown in Fig. 5.

a Texture coordinate system. b Pixel coordinate system.

At this point, the UV coordinates are converted into pixel coordinates. The conversion Equation is as follows:

Here, \(W\) and \({\rm{H}}\) represent the width and height of the original image, respectively; \({\rm{u}}[{\rm{i}}]\) and \({\rm{v}}[{\rm{i}}]\) are the \({\rm{U}}\) and \({\rm{V}}\) coordinates of the \({\rm{i}}\)-th texture point; \({\rm{x}}[{\rm{i}}]\) and \({\rm{y}}[{\rm{i}}]\) denote the horizontal and vertical pixel coordinates of the \({\rm{i}}\)-th texture point after conversion.

The image generated through geometric unfolding clearly displays the parameterized UV mapping results, where the distribution and edges of the triangles intuitively reflect the geometric structure of the model.

After remapping and visualizing the vertices of the 3D triangular mesh, it is necessary to remap the corresponding texture image of the original 3D triangular mesh to achieve an accurate texture distribution for subsequent deterioration segmentation. This process essentially involves an affine transformation between the vertex texture coordinates of the geometrically unfolded 3D model and the corresponding texture triangles in the original texture image. An affine transformation is the simplest method to map one set of three points (i.e., a triangle) to another arbitrary set of three points, encompassing translation, scaling, rotation, and shearing operations. It can be represented by a \(2\times 3\) matrix, where the first two columns represent rotation, scaling, and shearing operations, and the last column represents translation. The Equation is as follows:

Based on this matrix, for a given point \(({\rm{x}},{\rm{y}})\), the affine transformation can be applied to obtain the mapped point \(({{\rm{x}}}_{{\rm{t}}},{{\rm{y}}}_{{\rm{t}}})\):

In this study, the affine transformation matrix is obtained using the warpAffine() function from OpenCV. The procedure is as follows:

-

(1)

Obtain affine transformation data

To calculate the affine transformation coefficients in Eq. (9), it is necessary to obtain corresponding transformation information. In this study, the bounding rectangles of each corresponding triangle in the geometrically unfolded image and the original scattered texture image are used as known conditions, as shown in Eqs. (11) and (12):

$$\left\{\begin{array}{c}{{\rm{x}}}_{\min }=\min \left({{\rm{x}}}_{1},{{\rm{x}}}_{2},\cdots ,{{\rm{x}}}_{{\rm{n}}}\right)\\ {{\rm{x}}}_{\max }=\max \left({{\rm{x}}}_{1},{{\rm{x}}}_{2},\cdots ,{{\rm{x}}}_{{\rm{n}}}\right)\end{array}\right.$$(11)$$\left\{\begin{array}{c}{{\rm{y}}}_{\min }=\min \left({{\rm{y}}}_{1},{{\rm{y}}}_{2},\cdots ,{{\rm{y}}}_{{\rm{n}}}\right)\\ {{\rm{y}}}_{\max }=\max \left({{\rm{y}}}_{1},{{\rm{y}}}_{2},\cdots ,{{\rm{y}}}_{{\rm{n}}}\right)\end{array}\right.$$(12)The top-left corner of the bounding rectangle is denoted as \(\left({{\rm{x}}}_{\min },{{\rm{y}}}_{\min }\right)\), with a width of \({\rm{w}}={{\rm{x}}}_{\max }-{{\rm{x}}}_{\min }\) and a height of \({\rm{h}}={{\rm{y}}}_{\max }-{{\rm{y}}}_{\min }\). The rectangle is represented as \(({{\rm{x}}}_{\min },{{\rm{y}}}_{\min },{\rm{w}},{\rm{h}})\).

At this point, the coordinates of each triangle are relative to the entire image. To apply the affine transformation to the matrix (i.e., the bounding rectangle) containing the triangle, the coordinates of the triangle must be transformed relative to the local coordinate system of the matrix. Suppose the triangle vertices on the original UV map are \(\{({{\rm{x}}}_{1},{{\rm{y}}}_{1}),({{\rm{x}}}_{2},{{\rm{y}}}_{2}),({{\rm{x}}}_{3},{{\rm{y}}}_{3})\}\); then in the coordinate system with the top-left corner of the bounding rectangle as the origin, the triangle vertices become \(\{\left({{\rm{x}}}_{1}^{{\prime} },{{\rm{y}}}_{1}^{{\prime} }\right),\left({{\rm{x}}}_{2}^{{\prime} },{{\rm{y}}}_{2}^{{\prime} }\right),\left({{\rm{x}}}_{3}^{{\prime} },{{\rm{y}}}_{3}^{{\prime} }\right)\}\), as shown in Fig. 6.

The transformation formula is:

$$\left\{\begin{array}{c}\left({{\rm{x}}}_{1}^{{\prime} },{{\rm{y}}}_{1}^{{\prime} }\right)=\left({{\rm{x}}}_{1}-{\rm{w}},{{\rm{y}}}_{1}-{\rm{h}}\right)\\ \left({{\rm{x}}}_{2}^{{\prime} },{{\rm{y}}}_{2}^{{\prime} }\right)=\left({{\rm{x}}}_{2}-{\rm{w}},{{\rm{y}}}_{2}-{\rm{h}}\right)\\ \left({{\rm{x}}}_{3}^{{\prime} },{{\rm{y}}}_{3}^{{\prime} }\right)=\left({{\rm{x}}}_{3}-{\rm{w}},{{\rm{y}}}_{3}-{\rm{h}}\right)\end{array}\right.$$(13) -

(2)

Texture remapping

a Global coordinates. b Local coordinates

Based on the corresponding coordinates described above and using Eq. (10), the affine transformation parameters \(a\), \(b\), \(c\), \(d\), \({t}_{x}\), \({t}_{y}\) are calculated. Then, for each triangle’s corresponding texture in the original texture image without topology, affine transformation is applied to achieve texture remapping based on the geometric unfolding topology of the 3D triangular mesh.

Based on the above procedures, this study successfully establishes the forward construction process of a 2D–3D mutual mapping model. By transforming the segmentation of defects from 3D surface textures into a process based on 2D geometric and texture information, the proposed method achieves a unification of data dimensions. The resulting dataset not only preserves the 2D topological characteristics of the original 3D textured model but also retains the corresponding spatial 3D information. Serving as a bridging dataset, it enables seamless conversion between different data modalities.

Adaptive K-value SLIC deterioration segmentation based on gray-level co-occurrence matrix

Based on the re-unwrapped texture map generated via UV reconstruction, high-precision deterioration segmentation can be achieved using image segmentation-related algorithms. In this study, the 3D segmentation mask is obtained via the remapped 2D texture. Given that the regression operations of deep learning segmentation algorithms operate at the pixel level, the accuracy of the mask is particularly critical. Therefore, this work employs an improved Simple Linear Iterative Clustering (SLIC) segmentation algorithm proposed by our research group25 in 2024. This algorithm first obtains superpixels and then performs clustering to determine the final segmentation regions. Its unique two-parameter segmentation approach achieves optimal edge segmentation accuracy, making it especially suitable for mask generation. SLIC primarily involves the following steps: first, computing the image’s Gray Level Co-occurrence Matrix (GLCM) to derive an adaptive superpixel number \({\rm{k}}\); second, performing superpixel segmentation; and finally, applying K-means clustering to separate deterioration pixel coordinates.

From an algorithmic perspective, the quality of SLIC segmentation results largely depends on the initial settings of the number of superpixels \({\rm{k}}\) and the compactness parameter \({\rm{m}}\). Typically, the value of \({\rm{m}}\) can be set based on empirical experience, while the initial value of \({\rm{k}}\) primarily determines the segmentation accuracy of SLIC. Huang pointed out that the value of \({\rm{k}}\) is strongly correlated with the image complexity25. The Gray Level Co-occurrence Matrix (GLCM) can accurately quantify image complexity. Therefore, by adaptively computing \({\rm{k}}\) based on the image complexity, the issue of manually setting the initial \({\rm{k}}\) value is resolved, which can further improve the segmentation accuracy of SLIC. The specific implementation steps are as follows:

-

(1)

Initialize seed points (cluster centers): Calculate the Gray Level Co-occurrence Matrix (GLCM) based on the image complexity Tk, and determine the adaptive number of superpixels k:

$${\rm{k}}=\lceil ({\rm{x}}+{\rm{y}})/{{\rm{T}}}_{{\rm{k}}}\rceil$$(14)Here, \({\rm{x}}\) denotes the image width, \({\rm{y}}\) denotes the image height, and ⌈ ⌉ represents the ceiling function. The complexity measure Tk is defined as:

$${{\rm{T}}}_{{\rm{k}}}={{\rm{E}}}_{{\rm{Ent}}}+{{\rm{C}}}_{{\rm{Con}}}-{\rm{E}}-{{\rm{C}}}_{{\rm{Cor}}}$$(15)$${E}_{{Ent}}=-\mathop{\sum }\limits_{i=1}^{k}\begin{array}{l}\,\\ \frac{{n}_{i}}{N\times \log \left(\frac{{n}_{i}}{N}\right)}\end{array}$$(16)$$E=\mathop{\sum }\limits_{i=0}^{m-1}\mathop{\sum }\limits_{j=0}^{n-1}{Q}^{2}\left(i,j,d,\theta \right)$$(17)$${C}_{{con}}=\mathop{\sum }\limits_{i=0}^{m-1}\mathop{\sum }\limits_{j=0}^{n-1}\left[{\left(i-j\right)}^{2}Q\left(i,j,d,\theta \right)\right]$$(18)$${C}_{{cor}}=\mathop{\sum }\limits_{i=0}^{m-1}\mathop{\sum }\limits_{j=0}^{n-1}\frac{i\times j\times p\left(i,j,d,\theta \right)-{u}_{1}\times {u}_{2}}{{d}_{1}^{2}{d}_{2}^{2}}$$(19)Where EEnt stands for entropy, \({{\rm{C}}}_{{\rm{Con}}}\) for contrast, \({\rm{E}}\) for energy, and CCor for correlation. In Eqs. 16–19, Q2(I,j,d,θ)represents the normalized result of the GLCM; \(m\) denotes the number of image pixel columns; \(n\) denotes the number of image pixel rows; and \(\theta\) represents the generation direction of the GLCM, which can take values of 0°, 45°, 90°, and 135°,and:

$$\left\{\begin{array}{l}{u}_{1}=\mathop{\sum }\limits_{i=0}^{m-1}\mathop{\sum }\limits_{j=0}^{n-1}p\left(i,j,d,\theta \right)\\ {u}_{2}=\mathop{\sum }\limits_{j=0}^{n-1}j\mathop{\sum }\limits_{i=0}^{m-1}p\left(i,j,d,\theta \right)\end{array}\right.$$(20)$$\left\{\begin{array}{l}{d}_{1}^{2}=\mathop{\sum }\limits_{i=0}^{m-1}{\left(i-{u}_{1}\right)}^{2}\mathop{\sum }\limits_{j=0}^{n-1}p\left(i,j,d,\theta \right)\\ {d}_{2}^{2}=\mathop{\sum }\limits_{i=0}^{m-1}{\left(j-{u}_{2}\right)}^{2}\mathop{\sum }\limits_{j=0}^{n-1}p\left(i,j,d,\theta \right)\end{array}\right.$$(21)\({u}_{1}\) and \({u}_{2}\) denote the means; \({d}_{1}\) and \({d}_{2}\) represent the variances; and \({\rm{p}}({\rm{i}},{\rm{j}},{\rm{d}},{\rm{\theta }})\) represents the pixel information corresponding to the element in the i-th row and j-th column of the gray-level co-occurrence matrix.

-

(2)

Uniformly distributing seed points across the image, each superpixel will have a size of approximately \({\rm{N}}/{\rm{k}}\). Accordingly, the seed point spacing (step size) is approximated as: \(S=\mathrm{sqrt}({\rm{N}}/{\rm{k}})\), where \({\rm{N}}\) is the total number of pixels and \({\rm{k}}\) is the number of superpixels.

-

(3)

Within the \({\rm{n}}\times {\rm{n}}\) neighborhood around each seed point (typically \(n=3\)), the seed point is repositioned by calculating the gradient values of all pixels in this neighborhood and moving the seed point to the location with the smallest gradient. This step aims to avoid placing seed points on high-gradient contour edges, which could adversely affect the subsequent clustering performance.

-

(4)

Using the adjusted seed points as search centers, the search range is set to twice the spacing between centers. Then, each original pixel in the image is assigned a cluster label based on proximity, determining which seed point the pixel belongs to.

-

(5)

Calculate the distance metrics, including color distance and spatial distance. For each pixel within the search area, compute its distance to the seed point using the following formula:

$${d}_{{\rm{c}}}=\sqrt{{\left({l}_{{\rm{j}}}-{l}_{{\rm{i}}}\right)}^{2}+{\left({a}_{{\rm{j}}}-{a}_{{\rm{i}}}\right)}^{2}+{\left({b}_{{\rm{j}}}-{b}_{{\rm{i}}}\right)}^{2}}$$(22)$${d}_{{\rm{s}}}=\sqrt{{\left({x}_{{\rm{j}}}-{x}_{{\rm{i}}}\right)}^{2}+{\left({y}_{{\rm{j}}}-{y}_{{\rm{i}}}\right)}^{2}}$$(23)$${D}^{{\prime} }=\sqrt{{\left(\frac{{d}_{{\rm{c}}}}{{N}_{{\rm{c}}}}\right)}^{2}+{\left(\frac{{d}_{{\rm{s}}}}{{N}_{{\rm{s}}}}\right)}^{2}}$$(24)Where \({{\rm{d}}}_{{\rm{c}}}\) represents the color distance, \(l\), \(a\), and \(b\) represent the lightness, red/green axis, and yellow/blue axis components, respectively, while the subscripts \(i\) and \(j\) denote the seed point and the candidate pixel, \({{\rm{d}}}_{{\rm{s}}}\) denotes the spatial distance, and \({{\rm{N}}}_{{\rm{s}}}\) is the maximum spatial distance within a cluster, defined as \({N}_{{\rm{s}}}=S=\sqrt{\frac{N}{k}}\), applicable to each cluster. The maximum color distance \({N}_{{\rm{c}}}\) varies with different images and clusters, but is generally set as a fixed parameter \({\rm{m}}\). The final distance metric is denoted as \({D}^{{\prime} }\):

$${D}^{{\prime} }=\sqrt{{\left(\frac{{d}_{{\rm{c}}}}{m}\right)}^{2}+{\left(\frac{{d}_{{\rm{s}}}}{s}\right)}^{2}}$$(25)Each pixel may be queried by multiple seed points, so each pixel will have multiple distances to nearby seed points. The seed point corresponding to the minimum distance is chosen as the cluster center for that pixel.

-

(6)

Iterative optimization: In theory, the above steps are repeated until the error converges, meaning that the cluster center of each pixel no longer changes. In this study, the iteration terminates when the error between two consecutive iterations is less than a predefined threshold ε, thereby ensuring the stability and accuracy of the clustering results.

-

(7)

Connectivity enhancement: Following a “Z”-shaped scanning order (left to right, top to bottom), discontinuous superpixels and those with excessively small sizes are reassigned to adjacent superpixels. Pixels that have been traversed are assigned the corresponding labels, and this process continues until all pixels have been processed.

Superpixels are generated using the SLIC segmentation method. This approach produces superpixels that are compact and uniform, with clear neighborhood feature representation, effectively enabling image denoising. Based on the SLIC segmentation results, K-means clustering is applied to achieve improved clustering performance. The specific steps are: determine the required number of clusters \({\rm{K}}\); calculate the distance between each pixel and the cluster centers; update the cluster centroids based on the new classifications; and iterate until the cluster centroids no longer change. This study employs this deterioration recognition algorithm to fully capture detailed image features. For painted peeling defects with complex boundaries and small areas, the SLIC segmentation method demonstrates optimal segmentation performance.

3D colored triangular mesh segmentation and annotation based on modal bidirectional mapping

Pixel-level information segmentation can be performed on the unfolded new image, where deterioration points are located inside each texture triangle. To achieve precise mapping of deterioration coordinates back to 3D space, this study continues to utilize the affine transformation principle to calculate the coordinates of deterioration points within the corresponding spatial triangles.

Based on the mapping relationship between the texture triangle containing the deterioration point and its corresponding spatial triangle, we construct a 2 × 3 affine transformation matrix. Utilizing this matrix, the 2D coordinates of the pixel can be directly transformed into the x and y coordinates in the 3D space. Subsequently, by solving the equation of the spatial plane where the triangle resides, the z-coordinate of the point can be uniquely determined, thereby finally accomplishing the precise conversion from the 2D pixel to the 3D spatial point. The detailed steps are as follows:

-

(1)

Facet query

Facet query aims to identify the specific texture triangle in which each 2D deterioration pixel is located. As shown in Fig. 7, the red points represent pixel-level edge deterioration points. The deterioration region spans multiple small triangles, with each triangle containing several deterioration points. By using cross-product operations to determine the triangle to which each point belongs, this method involves only vector computations, making it computationally efficient and well-suited for large-scale mesh processing.

There are four types of spatial relationships between deterioration points and triangles, as illustrated in Fig. 8.

To determine the positional relationship between a point and a triangle using the cross product method, suppose the triangle has vertices A(x1, y1\()\), B(x2, y2), and C(x3, y3), and the point to be tested is P(x, y). Construct three edge vectors of the triangle and three vectors from each triangle vertex to point \({\rm{P}}\):

$$\overline{{\rm{AB}}}=\left({{\rm{x}}}_{2}-{{\rm{x}}}_{1},{{\rm{y}}}_{2}-{{\rm{y}}}_{1}\right)$$(26)$$\overline{{\rm{BC}}}=\left({{\rm{x}}}_{3}-{{\rm{x}}}_{2},{{\rm{y}}}_{3}-{{\rm{y}}}_{2}\right)$$(27)$$\overline{{\rm{CA}}}=\left({{\rm{x}}}_{1}-{{\rm{x}}}_{3},{{\rm{y}}}_{1}-{{\rm{y}}}_{3}\right)$$(28)$$\overline{{\rm{AP}}}=\left({\rm{x}}-{{\rm{x}}}_{1},{\rm{y}}-{{\rm{y}}}_{1}\right)$$(29)$$\overline{{\rm{BP}}}=\left({\rm{x}}-{{\rm{x}}}_{2},{\rm{y}}-{{\rm{y}}}_{2}\right)$$(30)$$\overline{{\rm{CP}}}=\left({\rm{x}}-{{\rm{x}}}_{3},{\rm{y}}-{{\rm{y}}}_{3}\right)$$(31)The cross product of two vectors \(\vec{{\rm{u}}}=\left({{\rm{u}}}_{1},{{\rm{u}}}_{2}\right)\) and \(\vec{{\rm{v}}}=\left({{\rm{v}}}_{1},{{\rm{v}}}_{2}\right)\) is defined as: \(\vec{{\rm{u}}}\times \vec{{\rm{v}}}={{\rm{u}}}_{1}{{\rm{v}}}_{2}-{{\rm{u}}}_{2}{{\rm{v}}}_{1}\), Calculate the cross products: \(\overline{{\rm{AB}}}\times \overline{{\rm{AP}}}\)、\(\overline{{\rm{BC}}}\times \overline{{\rm{BP}}}\)、\(\overline{{\rm{CA}}}\times \overline{{\rm{CP}}}\), Based on vectors and the signs of the cross products, the position of the point is determined as follows: if \(\overline{{AP}}\)、\(\overline{{BP}}\) or \(\overline{{CP}}\) is a zero vector, then point P coincides with a vertex of the triangle; if the signs of the three cross products are identical, point P lies within the triangle; if the signs differ, point P lies outside the triangle; and if exactly one cross product result is zero, the deterioration point P lies on an edge of the triangle. Deterioration points located on vertices or edges are assigned to the current texture triangle, thereby ensuring the completeness and continuity of the mapping relationship.

-

(2)

Spatial point calculation and visualization

Triangle mesh structure and distribution of deterioration edge pixels.

a Interior point. b Exterior point. c Coincident with vertex. d Edge point.

Based on the affine transformation matrix calculation method described, this study extends its application to the mapping between textured triangular facets and three-dimensional spatial facets. Since the affine transformation matrix is defined in two-dimensional image space, a local coordinate system is constructed for each spatial facet to convert 3D points into 2D points, as shown in Fig. 9, representing the transformation from the world coordinate system to the local coordinate system.

World-to-local coordinate transformation.

First, the first vertex of the triangular facet is set as the origin of the local coordinate system. The direction from the first vertex to the second vertex is defined as the x-axis (u), the normal vector of the facet is defined as the z-axis (w), and the y-axis (v) is determined by the cross product of the x-axis and the z-axis. The computation formulas for each axis are as follows:

Next, the offset of point \({\rm{q}}\) relative to the origin (v₁) is calculated as offset = q – v1, and then projected into the local coordinate system. The projection is computed using the following formula:

Through the above calculations, the coordinates of point \({\rm{q}}\) in the local coordinate system are obtained as \((x{\prime} ,y{\prime} ,z{\prime} )\), where \({\rm{z}}{\prime} =0\). In this way, the spatial triangle can be expressed in the local coordinate system. Subsequently, the affine transformation matrix between the texture triangle and the triangle in the local coordinate system is computed. By multiplying the deterioration point with the corresponding affine transformation matrix, the position of the deterioration point in the local coordinate system can be obtained.

Finally, the local coordinates are transformed back into world coordinates. Based on the local coordinate axes \(u\), \(v\), and \(w\), along with the origin v1, the 2D coordinates can be remapped to 3D spatial coordinates. This process represents the inverse transformation from the local coordinate system to the world coordinate system, and is computed using the following formula:

Here, q represents the 3D coordinates of the deterioration point in the world coordinate system. Through this method, the position of the deterioration point in the texture image can be accurately mapped to the surface of the 3D model, thus achieving spatial mapping from the 2D image to the 3D model.

Through the above method, this study successfully computed the 3D spatial coordinates of deterioration points. The obtained deterioration points were added to the geometry in OSG, with their state sets, materials, and other attributes configured to construct a deterioration triangular mesh mask, which was then displayed on the surface of the original model. As the segmentation of deterioration pixels in this study achieved high precision, the resulting mask constructed from multiple 3D deterioration points closely adheres to the surface of the original model. Thus, the reverse process of building an integrated 2D-3D model was successfully realized, enabling the visualization of deterioration regions by converting 2D coordinates into 3D space.

Data augmentation methods for 2D textures and 3D deterioration masks

Data augmentation is an effective strategy for expanding the scale of datasets, addressing prevalent issues such as limited size, poor quality, class imbalance, and acquisition difficulties. In this study, the construction of the automatic annotation platform generated two types of datasets. The following sections introduce the augmentation methods applied to these two different dimensions of data.

The first is augmentation for 2D re-unwrapped maps, the augmentation of re-unwrapped texture maps primarily involves color transformations. Color transformations can alter the image content itself to achieve data augmentation; common methods include noise injection, blurring, color jittering, and erasing.

-

Noise Injection: Adds random perturbations to image pixel values. Common types include Gaussian noise, salt-and-pepper noise, and Poisson noise.

-

Blurring: Reduces the local contrast and sharpness of the image through convolution filtering to simulate lens defocus, object motion, or depth-of-field effects. This forces the model to learn more macroscopic structural features rather than local fine-grained textures.

-

Erasing: Randomly selects one or more rectangular regions in the image and sets their pixel values to zero or fills them with random/mean values, artificially “occluding” parts of the image content.

The second is data augmentation for 3D deterioration masks, in the field of 3D cultural relic digitization and conservation, point cloud segmentation and recognition technologies based on deep learning are demonstrating immense potential32,33. However, the training process of these technologies relies heavily on large-scale, high-quality, and precisely annotated 3D datasets. For cultural relic deterioration, the cost of acquiring and annotating such datasets is extremely high, resulting in a severe insufficiency of available training samples. This scarcity of data severely limits the training effectiveness and generalization ability of deep learning models.

To alleviate the issue of insufficient 3D deterioration samples, this paper adopts a 3D data augmentation method based on geometric transformations to generate diverse deterioration models. This method simulates differences in deterioration morphology, scale, and distribution by randomly transforming deterioration point clouds and “pasting” them onto different locations of healthy models, thereby enhancing data diversity and model generalization ability.

First, a target point is randomly selected on the healthy model, and its orientation is determined. Next, to simulate the diversity of deterioration at different scales and orientations, random geometric transformations are performed on the 3D deterioration mask, including:

-

Random Scaling: Perturbing the deterioration size within a set range to simulate different degrees of diffusion.

-

Random Self-rotation: Rotating around the deterioration’s own principal normal to enrich morphological variations.

Then, the perturbed deterioration is rotated to the target point location. This method is suitable for regions where the curvature of both the deterioration area and the “pasting” area is gentle.

When the curvature of the “pasting” area is high, rigid geometric transformations struggle to realistically simulate deterioration morphology and are prone to causing mesh penetration. To address this, we propose a second type of geometric transformation that controls sample size via the K value in a KDTree. We analyze the healthy model to select high-curvature points as candidate “seed points.” In the batch generation stage, a seed point is randomly selected from the candidate pool, and a K-nearest neighbor search is performed on it using the K-d tree to sample a local neighborhood point cloud. The size of the neighborhood is controlled by the K value, which in turn determines the scale of the generated deterioration. To simulate the depression features characteristic of shedding, each point in this local neighborhood point cloud is translated inward (towards the model interior) by a tiny random distance along the direction of its respective surface normal.

Through the combination of the above two methods, this study successfully generates a 3D deterioration dataset characterized by diverse shapes, plausible positioning, and high geometric fidelity, providing solid data support for the subsequent training of deep learning models.

Results

The object selected in this study is a painted wooden Guanyin sculpture from the Song Dynasty in China. The painted sculpture stands approximately 0.5 meters tall and 0.3 meters wide. The 3D model of the Guanyin wooden sculpture was generated using photogrammetry by applying ContextCapture (v10.16, Bentley Systems, Exton, PA, USA) to a set of highly overlapping images, resulting in a high-precision, color 3D model, as shown in Fig. 10. The Guanyin sculpture exhibits multiple types of deterioration, including paint layer shedding and cracking. Among these, paint shedding is the most prominent. Noticeable paint layer loss is present on the back, arms, and base of the sculpture. This study focuses on these three deterioration areas, designated as deterioration regions a, b, and c, as illustrated in Fig. 11. The experimental results are presented in detail below.

3D model of a song dynasty painted wooden Guanyin sculpture (China).

a Deterioration area on the back. b Deterioration area on the arm. c Deterioration area on the base.

Annotation region segmentation based on polyhedral intersection algorithm

In this study, an experimental platform was developed based on the 3D rendering engine OSG. First, the arm, back, and base of the painted wooden Guanyin sculpture were visualized, and regions containing deterioration were selected as the initial data for the 3D dataset. Subsequently, a polyhedral intersector was constructed by selecting regions through point-and-click operations. Figure 12 shows the selected regions on the back, arm, and base, respectively. The resulting clipped 3D color models are shown in Fig. 13.

a Back area selection. b Arm area selection. c Base area selection.

a Back results. b Arm results. c Base results.

Construction of 2D-3D modal models based on UV reconstruction

-

(1)

Stretch-driven geometric unfolding of colored 3D triangular meshes

A blank image is created, and the box-selected region model is geometrically unfolded. The UV coordinates were converted into pixel coordinates using Eq. (8). Finally, the pixel coordinates were displayed on the created image in the form of a triangular mesh. The geometric unfolding result is shown in Fig. 14.

-

(2)

Texture reprojection based on affine transformation matrix

For the selected 3D triangular mesh data, the UV geometric unfolding map rearranges the original scattered and disordered textures according to the topological relationships of the UV-unfolded triangular facets into a two-dimensional layout. This layout is then mapped onto the 2D UV triangular mesh, generating a two-dimensional UV geometric texture image. The original texture of the Guanyin wooden sculpture’s arm model is shown in Fig. 15. Using the 2D UV triangular mesh from Fig. 14, the texture in Fig. 15 is rearranged accordingly. The resulting image is shown in Fig. 16. The mapped texture after UV unfolding is continuous and complete, enabling further information extraction.

a Back geometry unfolding. b Arm geometry unfolding. c Base geometry unfolding.

Original texture map.

a UV unwrapping diagram of the back. b UV unwrapping diagram of the arm. c UV unwrapping diagram of the base.

Adaptive k-value SLIC deterioration segmentation based on the gray-level co-occurrence matrix of re-unwrapped texture maps

After obtaining the re-unwrapped texture map of the Guanyin wooden sculpture’s arm model, the adaptive k-value SLIC segmentation algorithm was applied to detect deterioration regions. The key parameters of SLIC segmentation are the compactness (m) and the number of superpixels (k), where the compactness is typically set between 10 and 40. Figure 17 shows the local deterioration region in Fig. 16a segmented by SLIC with adaptive k = 642 under different compactness values m:

a m = 10. b m = 20. c m = 30. d m = 40.

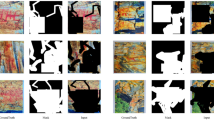

As shown in the Fig. 17, the larger the value of m, the more regular the superpixel blocks become. However, the deterioration areas in the subject of this study are mostly irregular in shape. More regular superpixels may cause poor alignment with the edges of the deterioration regions. Therefore, this paper sets \({\rm{m}}=10\). According to Eq. (14), the values of k are calculated as 642, 28,621, and 7916, which are then used as initial superpixel numbers for SLIC segmentation. Finally, the results are clustered using K-means to separate damaged and non-damaged regions. The results are shown in Fig. 18.

Row 1: SLIC. Row 2: Enlarged detail of SLIC result. Row 3: K-means. Column 1: a. Column 2: b. Column 3: c.

Generation of 3D deterioration annotation data based on inverse mapping of UV texture deterioration regions

Following deterioration segmentation using the SLIC algorithm and K-means clustering, the segmented results are presented in pixel format on the 2D UV texture map. To generate the corresponding 3D annotation data, the deterioration pixel region coordinates are mapped back into 3D space via the 2D-3D cross-modal mapping model introduced previously. This yields 3D annotation data with deterioration masks, as illustrated in Fig. 19 and visualized on the model in Fig. 20.

a Deterioration mask of the back. b Deterioration mask of the arm. c Deterioration mask of the base.

a Back-surface segmentation dataset with masks. b Arm-surface segmentation dataset with masks. c Base-surface segmentation dataset with masks.

Dataset augmentation

Data augmentation addresses prevalent issues in current datasets, such as limited scale, poor quality, class imbalance, and acquisition difficulties. In this study, the construction of the automatic annotation platform generated two types of datasets. Upon completion of the 2D-3D modal model construction based on UV reconstruction, we obtained complete and continuous 2D texture maps. These 2D data can be expanded to enhance the cultural relic dataset. Texture augmentation techniques include noise injection, blurring, color jittering, erasing, and others. The Fig. 21 illustrates the augmentation results for the three types of re-unwrapped maps shown in Fig. 16:

Row 1: a. Row 2: b. Row 3: c. Column 1: Original image. Column 2: Data after augmentation

After projecting the segmented deterioration coordinates back into 3D space, the resulting 3D deterioration mask information can also be used for data augmentation based on geometric transformations.

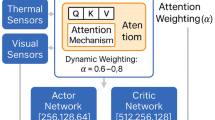

Introduction to the annotation platform

The interface design of the deterioration data annotation platform constructed in this study is based on the Qt graphical user interface framework, utilizing SARibbon (https://github.com/czyt1988/SARibbon) to construct the operational interface structure. The 3D visualization module is implemented based on OSG. The platform also integrates third-party libraries such as the Point Cloud Library (PCL)34 and OpenCV35 to support functions including 3D model visualization, geometric processing, mesh operations, and image processing. The hardware environment used in this experiment consists of an Intel i5 processor, 32 GB of RAM, and an NVIDIA RTX 3050 graphics card.

The main interface of the platform is composed of multiple functional modules. Among them, the Data Processing module serves as the core module (Fig. 22), which primarily includes the following functional panels:

-

(1)

Selection panel: Supports interactive box selection of deterioration regions on the surface of the 3D model to acquire the local regions intended for segmentation.

-

(2)

Model expansion panel: Performs geometric expansion and texture expansion on the box-selected region to achieve the flattening of the local 3D model area, providing a textural foundation for subsequent deterioration segmentation.

-

(3)

Deterioration segmentation panel: Utilizes adaptive K-value SLIC segmentation and K-means clustering methods to perform deterioration segmentation on texture images.

-

(4)

Automatic labeling panel: Integrates multiple automated processing functions, allowing users to directly generate corresponding 3D deterioration annotation results through a single box-selection operation, thereby improving annotation efficiency.

-

(5)

Data augmentation panel: Provides 2D and 3D data augmentation tools, including 2D image data augmentation and 3D mask data augmentation. This effectively expands data diversity and enhances the generalization ability of model training data.

Functional structure of the automated annotation platform.

In summary, the data processing module allows users to import 3D models and perform interactive operations such as rotation and inspection. By interactively drawing a rectangular selection box around a deterioration-affected area, the platform performs the relevant computations outlined in the “Methods” section to derive the 3D spatial coordinates of the deterioration region.

Comparison experiment

To further validate the accuracy of the proposed high-precision automatic annotation method, several comparative experiments were designed from multiple perspectives, including deterioration segmentation algorithms, curvature variation of deterioration regions, and functional comparison of 3D deterioration segmentation software.

-

(1)

Comparison of superpixel segmentation algorithms

To verify the advantages and disadvantages of different methods in deterioration region segmentation, this study compared the adaptive K-value SLIC algorithm used in this paper, the superpixel-based SEEDS algorithm36, and a color-space-based threshold segmentation method37 from two dimensions: the visual quality of segmentation results (qualitative analysis) and the quantitative accuracy of deterioration area (quantitative analysis). We used deterioration regions manually delineated at the pixel level by professional practitioners in the field of cultural heritage to serve as the ground truth for the segmentation task, where adaptive K-values were used as the number of superpixels for all superpixel segmentation methods.

First, in terms of qualitative visual evaluation, Fig. 23 presents the segmentation results obtained by different methods for the same deterioration region (Fig. 16b). Among them, Fig. 23b represents the ground truth mask. Through intuitive comparison, it can be seen that both the adaptive SLIC method used in this paper (Fig. 23a) and the SEEDS segmentation method (Fig. 23b) perform excellently in adhering to deterioration boundaries, capable of relatively completely outlining the macro contours of the deterioration. However, the SEEDS method produced more noise points and small artifact regions at the deterioration edges and interiors. The color-space-based threshold segmentation method (Fig. 23c) was significantly affected by background complexity and uneven lighting; its segmentation results not only exhibited blurred edges but also showed obvious phenomena of missed region detection, failing to completely identify all deterioration areas (indicated by the black box in Fig. 23). Visual results preliminarily indicate that the adaptive SLIC method possesses advantages in maintaining the integrity of deterioration region contours and suppressing background noise.

Second, at the level of quantitative analysis, we focus on the accuracy of deterioration area estimation by each method. This study introduces a pixel-level multidimensional evaluation framework. Specifically, metrics including the area ratio, Intersection over Union (IoU), Precision, and Recall were calculated for three distinct regions (named A, B, and C in Fig. 19b) in the order of left-to-right and top-to-bottom)38. The quantitative comparison results are summarized in Table 1. Through in-depth quantitative analysis of regions A, B, and C, the method proposed in this paper demonstrates significant advantages across all aforementioned core indicators.

To analyze the deviations in area estimation, a line chart of the area ratios was generated (Fig. 24). The area ratios of the adaptive SLIC method used in this paper and the SEEDS method are significantly closer to 1 with lower volatility compared to the color-space threshold-based method, indicating higher accuracy in area quantification.

Furthermore, the proposed method achieved the highest IoU values across all deterioration regions (A, B, and C), significantly outperforming the SEEDS method (0.8848) and the color-space threshold-based method (0.7591). This result underscores the precise localization of deterioration achieved by our method. Notably, the Precision for all three regions reached 1.0000, achieving zero false positives. In the context of complex cultural relic surfaces, this “zero-misjudgment” characteristic is of paramount importance for the formulation of subsequent conservation strategies.

By combining qualitative visual evaluation and quantitative area analysis, the adaptive K-value SLIC method adopted in this paper not only visually reconstructs the boundary contours of deterioration most precisely and reduces noise interference, but also estimates the actual area of deterioration most accurately at the quantitative level. In comparison, although the SEEDS method can estimate the area reasonably well, it is slightly inferior in segmentation details and noise control; meanwhile, the color-space threshold method fails to meet the requirements for precise segmentation and area statistics due to its poor robustness. Therefore, the method proposed in this paper demonstrates higher comprehensive performance in deterioration segmentation tasks.

-

(2)

Accuracy comparison of deterioration segmentation under different curvatures

To verify the strong adaptability of the proposed annotation method in deterioration regions with high curvature and in areas with multiple deteriorations, this study uses deterioration regions cropped in Geomagic as the ground truth. As a standard 3D analysis software, Geomagic provides high-precision modeling, and the manually assisted segmentation results are considered highly accurate. The experimental regions were specifically selected from the representative deterioration samples shown in Fig. 19. These encompass the small-scale deterioration region with a relatively flat geometry in Fig. 19a), the four discrete regions (designated as A, B, C, and D) in Fig. 19b), and the large-scale deterioration area with high curvature in Fig. 19c).

To conduct a more comprehensive accuracy evaluation, this study examined both absolute error and relative error. Absolute error reflects the actual deviation in area, whereas relative error (calculated as: area difference / Geomagic area) offers a more objective assessment of the algorithm’s performance across deteriorations of varying scales.

As indicated by Figs. 25 and 26 and Table 2, the proposed 3D automatic deterioration segmentation method maintains high overall accuracy and stability. Under conditions of varying curvature and regardless of the number of deterioration spots within the selected range, the difference between the segmented deterioration area and the benchmark area manually segmented in Geomagic remains small.

For region “a” (shown in Fig. 19a) and Fig. 20a), located on a flat surface with a single target), the absolute error is 0.0155 mm², and the relative error is only +0.11%. Both metrics indicate minimal error, demonstrating that the proposed method can accurately segment deterioration boundaries in flat regions.

For region “b” (shown in Fig. 19b) and Fig. 20b), located in a local area with a certain degree of curvature and multiple targets), the absolute errors for the four sub-regions are 0.0009 mm², −0.0144 mm², −0.0271 mm², and −0.0223 mm², respectively, all of which are kept within a minimal range. In terms of relative error, regions b-A, b-B, and b-C also remain at low levels (+0.04%, −0.45%, and −2.44%, respectively). It is worth noting that the relative error for region b-D (−39.54%) is numerically large. However, this is primarily due to its extremely small benchmark area (0.0564 mm²), which causes the minute absolute error (−0.0223 mm²) to be significantly amplified when calculating the percentage. Therefore, based on a comprehensive assessment, the proposed method maintains high detection accuracy even in the presence of local curvature variations and an increased number of deterioration spots.

For region “c” (shown in Fig. 19c) and Fig. 20c, located at a corner with significant curvature changes, featuring a large area and complex morphology), the absolute error is −1.6511 mm². While this absolute error appears large, it is attributable to the fact that its total area (166.0394 mm²) is far greater than that of other regions. Moreover, the relative error for region “c” is only −0.99%, falling within an acceptable range. This fully demonstrates that the proposed method is capable of adapting to the detection of complex deterioration with high curvature and large surface areas, exhibiting strong robustness.

-

(3)

Comparative analysis of 3D automatic and manual deterioration labeling platforms

a Results obtained using the adaptive SLIC method proposed in this paper. b Results obtained using the SEEDS segmentation method. c Results obtained using the color-space-based threshold segmentation method. d The ground truth mask used for comparison.

The black dashed line denotes the optimal line at ratio 1.

Bar chart of deterioration area comparison across different methods.

Area difference map.

To comprehensively validate the comprehensive advantages of the 3D automatic deterioration annotation method proposed in this paper in terms of functionality, efficiency, and accuracy, we designed a comparative experiment selecting three methods for evaluation. The experimental areas are the three typical deterioration regions (A, B, and C) shown in Fig. 19b). First, we introduce the three comparative methods:

-

i.

3D Manual Segmentation Method: This method is based on the 3D manual vectorization tool built upon the OSG 3D engine, as mentioned by Xia Guofang25 (as shown in Fig. 27). The platform provides tools for cultural relic model loading, visualization, database management, and 3D deterioration drawing. Users first select the deterioration type (such as shedding, cracking, flaking, dust, etc.) based on experience, and then manually outline triangular facets on the 3D model using a mouse.

-

ii.

Geomagic clipping method: This method utilizes the clipping tool provided by the Geomagic 3D software. It requires manually drawing clipping curves to generate deterioration region boundaries through interaction with the model surface.

-

iii.

Proposed method: This refers to the 3D automatic deterioration annotation method proposed in this paper, which achieves automatic vectorized segmentation without manual intervention.

Xia Guofang’s 3D manual vectorization tool.

A detailed comparison of the three methods is shown in Tables 3 and 4. To conduct a comprehensive evaluation, this paper presents a detailed qualitative and quantitative analysis from three dimensions: accuracy, efficiency, and functionality.

-

i.

Accuracy comparison: As indicated by the “Area (mm²)” and “Perimeter (mm)” data in Table 3, the geometric information calculated by the proposed method (e.g., Deterioration B: 3.152 mm², 7.240 mm) is highly consistent with the numerical results of Geomagic (3.046 mm², 7.257 mm) and manual segmentation (3.020 mm², 7.191 mm), with minimal differences. This confirms that the proposed method achieves precision comparable to mainstream commercial and research tools in terms of geometric measurement.

-

ii.

Efficiency comparison: As shown in Table 4, both the 3D manual and Geomagic methods require “operation by professionals,” involving cumbersome procedures and low efficiency. In contrast, the proposed method requires no professional operation and achieves automated processing, demonstrating significant advantages in labor costs and time efficiency.

-

iii.

Functionality comparison: As indicated by the columns “Can Generate Automated Segmentation Datasets” and “Supports Batch Processing” in Table 4, traditional methods do not support these two key functions. The proposed method fully supports them; it is not only capable of automatic segmentation but also possesses batch processing capabilities. It can meet the practical demands of large-scale cultural relic data processing and directly generate high-quality segmented datasets.

In summary, through a multi-dimensional comparison with traditional methods, the method proposed in this paper not only achieves comparable geometric precision in terms of accuracy and overcomes the limitation of strong subjectivity inherent in traditional methods, but also demonstrates significant advantages in efficiency and functionality (such as automated segmentation and batch processing).

Discussion

The creation of datasets is a crucial prerequisite for the application of deep learning. Currently, most available datasets are based on 2D images or point cloud formats, while automated annotation tools for 3D colored triangular mesh models remain lacking. In the field of cultural heritage, many relics are preserved as 3D colored triangular mesh models.

Against this background, this study proposes a fine-grained deterioration segmentation mechanism for textured 3D triangular mesh data based on cross-modal feature extraction and fusion via bidirectional 2D–3D mapping. By constructing a 2D–3D modality mutual mapping model, the approach achieves fine-grained, high-precision feature segmentation on the texture side, generates 2D texture masks, and completes the creation of segmented datasets for colored triangular mesh models based on the mutual mapping model.

Currently, the development of 3D deep learning lags significantly behind that of 2D deep learning. One of the fundamental bottlenecks is the extreme scarcity of large-scale, finely annotated 3D datasets comparable to ImageNet12. This leads to the dilemma of “being unable to train models without data, and unable to automatically annotate data without models.” The automated annotation method used in this paper not only addresses the current absence of automated annotation techniques for colored 3D models but also improves annotation efficiency and accuracy. This research not only advances the application of 3D colored models in cultural relic deterioration detection but also provides a solid foundation and practical support for the construction of high-quality 3D deterioration datasets, the digital preservation of cultural relics, intelligent analysis, and subsequent deep learning studies, thereby holding significant value for broader application and promotion.

Although the method proposed in this paper has achieved good results, its limitations are concentrated in the “3D-2D-3D” conversion process. Since this method identifies deterioration on flattened 2D texture images, the success of segmentation relies heavily on the texture quality of the original 3D model. If the model texture has low resolution, blurring, uneven lighting, or shadow artifacts, the accuracy of the 2D segmentation network will be significantly compromised. This is the primary limitation of this method. Furthermore, flattening a complex 3D model into a 2D image inevitably produces stretching, distortion, and seams. Deterioration located at seams may be cut in half, and the morphology of deterioration in distorted areas may become deformed; both issues can interfere with the accuracy of 2D segmentation. Investigating how to adopt superior parameterization algorithms to minimize distortion is a direction worthy of future research.

Data availability

All data generated or analyzed during this study are included in this published article.

References

Qin, S.Y. & Luo, S.Y. Cultural exchange, integration, and mutual learning among civilizations as seen through Dunhuang art. XiYou., 38–40 (2024).

Hou, M. L., Liang, L. L. & Hu, Y. G. Research on 3D models of cultural relics and problems faced in their applications. Study Nat. Cult. Herit. 2, 82–88 (2017).

Leon, I., Pérez, J. J. & Senderos, M. Advanced techniques for fast and accurate heritage digitisation in multiple case studies. Sustainability 12, 6068 (2020).

Ramm, R. et al. Portable solution for high-resolution 3D and color texture on-site digitization of cultural heritage objects. J. Cult. Herit. 53, 165–175 (2022).

Croce, V. et al. From the semantic point cloud to heritage-building information modeling: a semiautomatic approach exploiting machine learning. Remote Sens. 13, 461 (2021).

Li, D. R. From geomatics to geospatial intelligent service science. Acta Geod. Cartogr. Sin. 46, 1207–1212 (2017).

Cornelis, B. et al. Crack detection and inpainting for virtual restoration of paintings: the case of the Ghent altarpiece. Signal Process 93, 605–619 (2013).

Targa, L. et al. Automated edge detection for cultural heritage conservation: comparative evaluation of classical and deep learning methods on artworks affected by natural disaster damage. Appl. Sci. 15, 8260 (2025).

Yahaghi, E., Madrid Garcia, J. A. & Movafeghi, A. Fracture and internal structure detection of ceramic objects using improved digital radiography images. J. Cult. Herit. 44, 152–162 (2020).

Kwon, D. & Yu, J. Automatic damage detection of stone cultural property based on deep learning algorithm. Int. Arch. Photogramm. Remote Sens. Spatial Inf. Sci. 42, 639–646 (2019).

Bruno, S., Galantucci, R. A. & Musicco, A. Decay detection in historic buildings through image-based deep learning. Int. J. Archit. Technol. Sustain. 8, 6–17 (2023).

Jia, D. et al. ImageNet: a large-scale hierarchical image database. In Proc. Conference on Computer Vision and Pattern Recognition (CVPR) 248–255 (IEEE, 2009).