Abstract

Metasurface-based light detection and ranging (LiDAR) is essential for high spatiotemporal resolution three-dimensional (3D) imaging in robotic and autonomous systems. Recent advances in inertia-free scanning techniques—such as acousto-optic and spectral scanning—have propelled the field forward. Nevertheless, key spatiotemporal metrics, including point acquisition rate (PAR), field-of-view (FOV), and imaging resolution, remain fundamentally constrained. These challenges are particularly acute in dual-axis LiDARs, where inter-axis rate mismatch and beam astigmatism degrade temporal and spatial resolution, respectively. Here, we present a wide-FOV, high spatiotemporal resolution LiDAR architecture with astigmatic metalens (AML) coordinated spectral-acousto-optic scanning. Consequently, a frame-wise point acquisition rate (FPAR) of 36.6 MHz (∼5-fold improvement over existing reports) and a wide FOV of 102° are simultaneously achieved. This breakthrough redefines LiDAR’s potential for ultra-high-speed, high-precision perception, enhancing applications such as autonomous driving with improved obstacle detection and safety at high speeds.

Introduction

High spatiotemporal resolution three-dimensional (3D) imaging is pivotal for emerging applications in unmanned aircraft, autonomous vehicles, and robotics. While structured light imaging1,2 and stereovision3,4 provide 3D sensing capabilities, light detection and ranging (LiDAR) stands out due to its superior detection distance, precision, and environmental robustness5,6,7,8,9. Advanced LiDARs demand both high temporal resolution and spatial detection capabilities, necessitating two-dimensional (2D) beam scanning with rapid point acquisition rate (PAR), wide field-of-view (FOV), and dense resolvable points to capture dynamic objects with subtle changes. However, despite advancements in various scanning technologies, achieving wide-FOV, high spatiotemporal resolution simultaneously remains a critical challenge.

Traditional mechanical scanners, limited by bulk and instability, have spurred the development of compact, solid-state (inertia-free) alternatives8. Spatial light modulators (SLMs) achieve ~kHz PAR via liquid crystal reorientation10, while optical phased arrays (OPAs) reach ~MHz PAR but suffer from spatial sidelobes and fragility at high peak power6,11. Acousto-optic deflectors (AODs) are speed-limited by the acousto-optic transit time12,13 (<6 MHz to avoid beam distortion14). These conventional scanners are fundamentally limited by their low temporal resolution ceiling and an inherent trade-off between PAR and FOV: inertial scanners provide wide FOVs at low PAR, while inertia-free scanners offer high PAR but narrow FOVs (e.g., SLMs: ~20°; AODs: ~2°).

In contrast, spectral scanning, enabled by intrinsically modulating the laser source through photonic time-stretching15,16,17,18 or time-frequency multiplexing, introduces a new paradigm for high-speed LiDAR, enabling inertia-free line scanning with PARs up to tens of MHz17. Yet, it still struggles to deliver 2D wide-FOV, high spatiotemporal resolution scanning. Firstly, the overall temporal resolution is restricted by the rate mismatch between fast and slow axis scanners, leading to slower imaging frame rates19. While spectral scanning significantly enhances the pixel-wise PAR (PPAR), the frame-wise PAR (FPAR) remains bottlenecked by the mechanical slow axis7,17,20,21,22, where FPAR represents the effective PAR accounting for duplicate points during full-frame scanning. Secondly, the scanning FOV is inherently constrained by both the available spectral bandwidth and the dispersion capabilities of common gratings23, limiting the FOV to only a few degrees. Expanding the FOV may introduce additional distortions in cascaded dual-axis scanning systems, where the non-coplanar scanners induce beam deflection dependencies24,25. A common mitigation strategy employs a 4f system for optical conjugation and center pivot alignment26, widely adopted in cascading galvanometers, AODs, and SLMs across microscopy26,27, multi-beam optics24, and laser processing28; however, it inevitably increases system complexity and volume. Thirdly, the spatial resolution is degraded by beam astigmatism arising from the intrinsic anisotropy of diffractive gratings. Blazed gratings disperse light in one direction while reflecting it in the orthogonal direction, producing asymmetric angular divergence. This anisotropy introduces astigmatism, distorting the beam into an undesirable elliptical profile and ultimately degrading the spatial resolution. Except for specialized applications such as axial positioning in microscopy imaging29,30, astigmatism is generally undesirable31. Conventional optical designs typically rely on bulky cylindrical lenses or meticulously engineered GRIN lenses to mitigate astigmatic effects32.

Over the past decade, metasurfaces33,34,35,36 have captivated the photonics community by enabling precise control over amplitude, phase, frequency, polarization, and more. They offer solutions to many challenges faced by conventional optics, ushering in "Optical Engineering 2.0" (ref. 37). The multidimensional optical field manipulation capabilities of metalenses (MLs) enable the on-demand design of the point spread function (PSF) and allow for functions such as momentum transformation, spectral focus tuning, and astigmatism correction. These advancements have led to widespread applications in angular momentum detection34, spectral tomographic imaging38, quantitative phase imaging39, and spectroscopy40. In passive imaging, a quadratic phase metalens based on symmetry transformation41 can achieve a wide FOV of ±80°, yet it has not been applied to active laser imaging with single-pixel detection. Recently, in active imaging, ref. 14 addressed the limited FOV in MHz-level homogeneous dual-axis AOD scanning by cascading a metasurface. However, its simple circularly symmetric phase distribution also leads to FOV distortion, increased beam divergence, and degraded spatial resolution.

Here, we propose a wide-FOV, high spatiotemporal resolution LiDAR architecture based on an astigmatic metalens (AML), which integrates solutions to the challenges mentioned above. To enhance temporal resolution, we employ spectral scanning (PPAR ~tens of MHz) for the fast axis and an AOD (several MHz) for the slow axis, achieving inter-axis “rate matching” and significantly improving the final FPAR. To expand the spatial detection capabilities of spectral-acousto-optic (spectral-AO) dual-axis cascade scanning, we design an AML that enlarges the FOV while maintaining a small divergence angle through astigmatism correction. As a result, we demonstrate an advanced LiDAR architecture with both PPAR and FPAR reaching 36.6 MHz (~5-fold improvement over existing reports), ensuring high temporal resolution, while also attaining a spatial resolution of 0.37° within a wide 102° FOV. We further achieve an ultra-high-speed imaging frame rate of 20.3 kfps with 1800 points per frame. The achieved FPAR enables mega-pixel 3D imaging at video frame rates, making it highly promising for broader applications that demand dynamic high-precision perception, such as autonomous driving and high-speed small drone tracking.

Results

Principle of wide-FOV high spatiotemporal resolution LiDAR

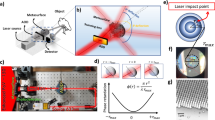

The spatiotemporal sensing capability of a LiDAR system is primarily defined by its temporal resolution and spatial detection capability, enabling the capture of rapid and subtle environmental changes across a wide FOV, as shown in Fig. 1a.

a Proposed LiDAR architecture integrating a metalens for wide-FOV capture of subtle environmental dynamics. b Implementation of high temporal resolution. Inter-axis rate matching via spectral-acousto-optic (spectral-AO) scanning enables full-field high-speed two-dimensional beam scanning. c Achievement of high spatial resolution. The wide-FOV astigmatic metalens (AML) simultaneously addresses the challenges of narrow spectral scanning FOV and beam astigmatism that degrade spatial resolution—issues that cannot be mitigated by a normal metalens (NML) without astigmatic compensation. Insets show the schematic phase profiles of the NML and AML. d Top: Imaging of a resolution target, demonstrating the system’s superior spatial resolution (6.46 mrad). Bottom: Time-sliced evolution of a rapidly rotating fan, highlighting the system’s high temporal resolution (FPAR ~ 36.6 MHz)

On the one hand, LiDAR’s temporal resolution is determined by the PAR of its scanning mechanism. LiDAR operates by modulating the transmitted laser waveform and measuring the round-trip time-of-flight (TOF) τ of photons42. In traditional single-pulse direct-TOF LiDAR systems, the PPAR is equal to the laser repetition rate frep. Here, by employing time-frequency multiplexing, a broadband laser pulse is modulated into a discrete sequence of chirped pulses across Nλ spectral channels, thereby enhancing the PPAR accordingly:

$$\mathrm{PPAR} = N_\lambda \, f_{\mathrm{rep}} \tag{1}$$

As illustrated in Fig. 1b, spectral scanning is achieved through grating dispersion of the Nλ channels, providing a high PPAR (up to tens of MHz) and serving as the fast axis. However, if the point-switching rate of the slow axis lags behind the line rate of the fast axis, causing the fast axis to redundantly scan the same region β times, the effective FPAR will be reduced by a factor of β relative to the PPAR:

$$\mathrm{FPAR} = \frac{\mathrm{PPAR}}{\prod_{i=1}^{n_a-1} \beta_i} \tag{2}$$

where βi denotes the inter-axis rate mismatch factor (RMF) arising from the transition between the i-th and (i + 1)-th scanning axes (total na axes). PPAR is the instantaneous PAR determined by the fastest scanning axis, while FPAR represents the effective PAR during full-frame scanning, accounting for duplicate points and further restricted by the slow axis. To ensure that the FPAR reaches the maximum PPAR attainable through spectral scanning, the slow axis switching must be synchronized with the line rate of the fast axis spectral scanning, corresponding to frep. To achieve this, we introduce an AOD with a point-switching rate of up to MHz-level (matching frep) for the slow axis, ensuring perfect inter-axis “rate matching” with β = 1 and thus aligning FPAR with the maximum PPAR.
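The relationship between PPAR, FPAR, and the rate mismatch factors can be made concrete with a minimal sketch. It assumes that PPAR is the product of the channel count and the repetition rate, and that FPAR is PPAR divided by the product of all β factors, as the text describes. The function names are illustrative and not part of the original work; the values Nλ = 30 and frep = 1.22 MHz are those reported for the experimental system.

```python
from math import prod
from typing import Sequence


def ppar_hz(n_channels: int, f_rep_hz: float) -> float:
    """Pixel-wise point acquisition rate: one point per spectral channel per laser pulse."""
    return n_channels * f_rep_hz


def fpar_hz(ppar: float, mismatch_factors: Sequence[float]) -> float:
    """Frame-wise rate: PPAR divided by the product of the inter-axis mismatch factors."""
    if any(b < 1 for b in mismatch_factors):
        raise ValueError("each rate mismatch factor must be >= 1")
    return ppar / prod(mismatch_factors)


ppar = ppar_hz(30, 1.22e6)            # 30 channels at 1.22 MHz
matched = fpar_hz(ppar, [1, 1])       # both axis transitions rate-matched
mismatched = fpar_hz(ppar, [2, 1])    # slow axis lags by a factor of 2

print(f"PPAR             = {ppar / 1e6:.1f} MHz")
print(f"FPAR (beta = 1)  = {matched / 1e6:.1f} MHz")
print(f"FPAR (beta1 = 2) = {mismatched / 1e6:.1f} MHz")
```

With β = 1 on every axis transition the frame-wise rate equals the pixel-wise rate; any slower axis divides it directly.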

On the other hand, the spatial detection capability (Cspatial) of LiDAR reflects its ability to resolve small objects over a wide FOV and is expressed as:

where FOVx and FOVy represent the horizontal and vertical scanning FOVs, respectively, while Nx and Ny denote the effective number of resolvable points, defined as the ratio of the FOV to the angular resolution θ in the corresponding direction.

Therefore, achieving minimal angular divergence over a wide FOV is crucial for enhancing spatial detection capability. While conventional telescope systems can expand the narrow FOV of spectral scanning, they fail to compensate for the degradation in spatial resolution caused by beam astigmatism. Here, we introduce a wide-FOV AML that simultaneously addresses the challenges of narrow FOV and beam astigmatism—issues that cannot be mitigated by a normal metalens (NML) without astigmatic compensation, as illustrated in Fig. 1c.

In summary, we employ a wide-FOV AML to harmonize spectral-AO cascade scanning, achieving exceptional comprehensive spatiotemporal resolution, as illustrated in Fig. 1d. The top panel shows the imaging results of a resolution target, where Θx and Θy denote the object’s angular position relative to the AML. The shaded region in the accompanying Intensity-Θy map highlights the angular range containing real features, demonstrating the ability to resolve a 1 cm-wide feature at a distance of 155 cm, corresponding to a spatial resolution of 6.46 mrad. The bottom panel illustrates dynamic imaging of a high-speed rotating fan, with each slice representing a single frame (frame rate: 734 fps; 600 × 83 points per frame). The results reveal a temporal resolution corresponding to an FPAR of 36.6 MHz. This comprehensive spatiotemporal resolution outperforms previously reported LiDARs in comparable settings (see Supplementary Fig. S1 and Table S1 for detailed comparisons).
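The quoted angular resolution follows from simple geometry. The sketch below assumes the feature is centred on the line of sight, so the subtended full angle is 2·atan(w / 2D); the function name is illustrative.

```python
import math


def angular_width_mrad(feature_width_m: float, distance_m: float) -> float:
    """Full angle (in mrad) subtended by a feature centred on the line of sight."""
    return 2.0 * math.atan(feature_width_m / (2.0 * distance_m)) * 1e3


theta_mrad = angular_width_mrad(0.01, 1.55)      # 1 cm feature at 155 cm
theta_deg = math.degrees(theta_mrad / 1e3)

print(f"{theta_mrad:.2f} mrad = {theta_deg:.2f} deg")
```

This gives about 6.45 mrad (0.37°), which agrees with the quoted 6.46 mrad to within rounding.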

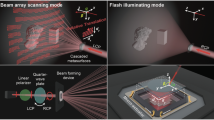

The implementation of the proposed wide-FOV high spatiotemporal resolution LiDAR system is provided in Fig. 2. As depicted in Fig. 2a, the broad-spectrum pulsed light from a supercontinuum laser undergoes spectro-temporal encoding, converting it into a discrete chirped pulse sequence with uniform temporal and spectral intervals (details in Supplementary Section 2). The encoded light then propagates through a dual-axis AOD, enabling small-angle 2D beam scanning. It then traverses a blazed grating (BG) to implement spectral-AO dual-axis scanning. To address the narrow FOV and the beam astigmatism induced by the BG’s anisotropic properties, we incorporate a Galileo-like telescope system composed of an AML at the backend, effectively reducing beam divergence while expanding the FOV. The echo signals are detected by a single-pixel photomultiplier tube (PMT), while the laser pulse serves as a reference to extract TOF information, ultimately enabling the 3D reconstruction of the target scene.

a Experimental setup. A broadband source undergoes spectro-temporal encoding for time-frequency multiplexing. The resulting discrete chirped sub-pulses are directed through a 2-axis AOD and a blazed grating (BG) for spectral-dual-AO scanning. The beam then passes through the wide-FOV astigmatic metalens (AML) to enhance spatial detection capability. Echoes are collected by a photomultiplier tube (PMT) for 3D reconstruction. COL, collimating lens; PH, pinhole; HWP, half-wave plate; M, mirror; BPF, bandpass filter. b Schematic of spectral-dual-AO cascade scanning. The yAOD swiftly transitions after each spectral scan to ensure rate matching, while the xAOD operates similarly, forming a three-axis scanning configuration. c Impact of rate mismatch on the effective number of acquired points. (i) Rate matching (β = 1) ensures FPAR = PPAR, maximizing acquisition efficiency; (ii) Rate mismatch (β = 2) reduces FPAR to half of PPAR due to redundant spectral scans. d Schematic of beam divergence angle expansion induced by the BG. e Schematic of the output beams evolution after diffraction by the BG for 3 adjacent spectral channels: (i) without ML, (ii) with NML, and (iii) with AML. The AML corrects beam astigmatism while simultaneously expanding the spectral scanning FOV

Through discrete time-stretching17, the spectro-temporal encoding module achieves Nλ-channel time-frequency mapping (Supplementary Fig. S2d). According to Eq. (1), a PPAR of 36.6 MHz is achieved by Nλ = 30 spectral channels with frep = 1.22 MHz. We introduce AODs to match this fast axis line rate, as illustrated in Fig. 2b. Given that the point-switching time of yAOD is ΔtyAOD, and assuming the slower xAOD aligns with one complete yAOD scanning period (β2 = 1), the effective FPAR can be calculated as follows:

$$\mathrm{FPAR} = \frac{\mathrm{PPAR}}{\beta_1 \beta_2} = \mathrm{PPAR} \cdot \frac{T_{\mathrm{spectral}}}{\Delta t_{\mathrm{yAOD}}}, \qquad \beta_1 = \frac{\Delta t_{\mathrm{yAOD}}}{T_{\mathrm{spectral}}} \tag{5}$$

where Tspectral = 1/frep is the period of spectral scanning. Thus, FPAR depends on the point-switching time of yAOD, which corresponds to the slow axis of spectral scanning. If ΔtyAOD = Tspectral, the system ensures seamless transitions between positions after each spectral scan, maintaining β1 = 1 and preventing redundant scans. In this case, FPAR reaches its maximum value (equal to PPAR), enabling a high-speed three-axis scanning format known as spectral-dual-AO cascade scanning, as illustrated in Fig. 2c(i), with the scanning timing diagram provided in Supplementary Fig. S3. In contrast, if ΔtyAOD > Tspectral, FPAR falls below PPAR due to repeated spectral scanning, as illustrated in Fig. 2c(ii). This highlights the fundamental limitation of full-field imaging rates in conventional spectral scanning LiDARs that rely on bulky mechanical scanning as the slow axis.

High temporal resolution alone is insufficient for an exceptional LiDAR system, as wide detection FOV and high angular resolution are equally crucial. In addition to the difficulty in achieving inter-axis rate matching, another major drawback of spectral scanning is its restricted spatial detection capability: both the narrow FOV and the degraded spatial resolution induced by beam astigmatism. According to the (first-order) grating equation:

$$d \, (\sin\Theta_i + \sin\Theta_o) = \lambda \tag{6}$$

where Θi and Θo represent the incidence and diffraction angles of the blazed grating, respectively (same sign on the same side of the normal); λ denotes the incident wavelength (centered at 1547.5 nm with a bandwidth of 13 nm); and d is the grating constant (1/600 mm). Although increasing the wavelength bandwidth and grating density can enlarge the FOV, it remains constrained to only a few degrees. Conventional telescopes can extend the FOV but introduce mismatches in cascaded dual-axis scanning systems. The inevitable spatial separation of the two scanners causes beam deflection along the second scanning axis to depend on the first axis24,25, thereby exacerbating distortions during FOV expansion. Moreover, increased third-order (Seidel) aberrations, such as astigmatism and coma, further constrain the FOV43. Specifically, in spectral scanning, beam astigmatism primarily arises from the grating’s anisotropy, which induces additional angular divergence in the dispersion direction (effectively reducing the virtual focal length). A differential analysis at fixed wavelength yields:

$$|\Delta\Theta_o| = \frac{\cos\Theta_i}{\cos\Theta_o} \, |\Delta\Theta_i| \approx \frac{|\Delta\Theta_i|}{\cos\Theta_o} \quad (\Theta_i \approx 0) \tag{7}$$

This reveals that the BG expands the output angular range (divergence angle) by a factor of 1/cos Θo relative to the input, as illustrated in Fig. 2d. From a physical optics perspective, this effect stems from the large-angle wavevector deflection imparted by the BG, which reduces the effective beam waist size in the propagation direction by a factor of cos Θo. According to Gaussian beam theory, a smaller beam waist results in a larger divergence angle. Calculations indicate that for monochromatic incident light at λ = 1550 nm, the divergence angle of the diffracted beam along the grating’s dispersion direction increases by ~2.72 times, while in the orthogonal direction, the grating acts as a planar mirror, contributing no divergence expansion. This anisotropic effect leads to beam astigmatism, causing the output beam to evolve from elliptical to circular and back to elliptical along the optical axis, as depicted in Fig. 2e(i).
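The ~2.72 factor and the "few degrees" spectral FOV can be checked numerically from the grating parameters given in the text (600 lines/mm, 13 nm bandwidth centred at 1547.5 nm). The sketch below rests on two assumptions that are not stated explicitly in the text: first-order diffraction and normal incidence (Θi = 0). Both are consistent with the quoted 1/cos Θo magnification. The function names are illustrative.

```python
import math

GROOVES_PER_MM = 600
GRATING_PERIOD_NM = 1e6 / GROOVES_PER_MM   # d = 1/600 mm, about 1666.7 nm


def diffraction_angle_rad(wavelength_nm: float, incidence_rad: float = 0.0) -> float:
    """First-order diffraction angle from d (sin(theta_i) + sin(theta_o)) = lambda."""
    s = wavelength_nm / GRATING_PERIOD_NM - math.sin(incidence_rad)
    if abs(s) > 1.0:
        raise ValueError("no propagating first order for these parameters")
    return math.asin(s)


def divergence_magnification(wavelength_nm: float, incidence_rad: float = 0.0) -> float:
    """|d(theta_o) / d(theta_i)| = cos(theta_i) / cos(theta_o) at fixed wavelength."""
    theta_o = diffraction_angle_rad(wavelength_nm, incidence_rad)
    return math.cos(incidence_rad) / math.cos(theta_o)


theta_o_deg = math.degrees(diffraction_angle_rad(1550.0))
magnification = divergence_magnification(1550.0)

# Angular span swept by the 13 nm band centred at 1547.5 nm (1541.0 to 1554.0 nm).
spectral_fov_deg = math.degrees(
    diffraction_angle_rad(1554.0) - diffraction_angle_rad(1541.0)
)

print(f"theta_o = {theta_o_deg:.1f} deg, magnification = {magnification:.2f}")
print(f"raw spectral FOV = {spectral_fov_deg:.2f} deg")
```

Under these assumptions the diffraction angle is about 68.4°, the divergence magnification is about 2.72, and the unexpanded spectral scan covers roughly 1.2°.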

Applying an NML with non-astigmatic, radially symmetric phase can expand the FOV but fails to mitigate astigmatism. The divergence angle increases synchronously with the FOV magnification in both orthogonal directions, preserving the beam’s astigmatic characteristics and limiting the resolution for adjacent spectral channels, as shown in Fig. 2e(ii).

The metalens’s capability to arbitrarily manipulate wavefronts offers a novel solution. By engineering an astigmatic phase profile with distinct focal lengths for tangential and sagittal rays, the AML can effectively correct anisotropy-induced astigmatism. This results in more uniform divergence angles in both directions and a nearly circular beam profile, significantly suppressing astigmatism across a wide FOV and thereby greatly enhancing the spatial detection capability of spectral scanning, as illustrated in Fig. 2e(iii). (In actual experiments, a lens is used to focus the beam onto the AML.)

Wide-FOV astigmatic metalens enabled high spatial detection capability

Previously, we introduced a wide-FOV AML to enhance the spatial detection capability of spectral scanning, addressing both its limited FOV and beam astigmatism. In addition, FOV mismatching, a critical issue in heterogeneous dual-axis cascade scanning, manifests in two key forms—FOV distortion and a rectangular output FOV. FOV distortion arises from the unavoidable spatial separation between the two orthogonal scanners, which causes beam deflection by the second scanner to be dependent on the first. Meanwhile, in heterogeneous cascade scanning, FOV mismatching also manifests as inconsistent scanning FOVs along the two axes, resulting in a rectangular output FOV. When a conventional telescope is introduced for FOV expansion, both forms of FOV mismatching are further exacerbated, amplifying distortion and degrading overall performance.

We use commercial software (Zemax OpticStudio) to optimize the AML’s phase profile, addressing the aforementioned issues while expanding the FOV and correcting beam astigmatism. The phase design draws inspiration from the symmetry transformation41 of the focusing quadratic phase, $\Phi(r) = -k_0 r^2 / (2 f_{\mathrm{eff}})$, where k0 = 2π/λ, feff denotes the effective focal length, and r is the radial coordinate. This transformation enables varying incident angles to induce lateral shifts of the focal spot, realizing an angle-to-position mapping. Building on this, we adopt a divergent quadratic phase as the AML’s phase base to achieve the inverse mapping between the incident position on the AML and the wide-FOV output angle; meanwhile, we incorporate higher-order astigmatic terms to resolve the inherent trade-off between wide FOV and high spatial resolution. The specific phase distribution of the AML is as follows:

where x and y represent the spatial coordinates on the metalens, and rm = 5 mm is the radius of its effective region. The coefficients ci (i = 1, 2, …, 9) corresponding to the phase terms of each order are listed in Supplementary Table S2. Details on the AML’s optimization design are provided in Supplementary Section 4. This design not only expands the FOV but also enhances spatial resolution, as illustrated in Fig. 3a. The left panel presents a photograph of the fabricated AML, while the right panel illustrates the meta-atom unit cell structure of sapphire-on-silicon (SOS) (see Supplementary Fig. S4 for details). As depicted in Fig. 3b, the AML’s phase distribution exhibits an elliptical-like arrangement of isophase lines. Compared to conventional non-astigmatic phase profiles of the form Φ(r²), this tailored design introduces additional phase terms and degrees of freedom, mitigating FOV mismatching during rectangular FOV expansion while effectively suppressing beam divergence via astigmatism correction. The resulting wide-FOV output angle distribution, Θx,y, is illustrated in Fig. 3c, where Zemax simulations indicate a total scanning FOV of 102° × 26°. The field distortion is well corrected in spherical coordinates, preserving an approximately rectangular output FOV. The slight horizontal asymmetry arises from the nonlinear relationship inherent in the grating equation, Eq. (6). Details on the characterization and imaging experiments for the wide FOV are provided in Supplementary Section 5.

a Schematic illustration of the wide-FOV AML-enhanced spatial detection mechanism. Compared to unmodulated 0-order beams, the AML both expands the FOV and improves spatial resolution. Left panel: photograph of the fabricated AML. Right panel: schematic of the meta-atom unit cell composing the AML. b Phase distribution of the wide-FOV AML, with gradient colors representing the phase and blue contour lines indicating the phase gradient (rad/mm). c Output angular distribution of the 2D scanning beams enabled by spectral-AO cascade scanning assisted by the AML, referenced to 0° at the beams’ center, with colors representing the spectral channels. Evolution of three adjacent channel beams diffracted by the grating: d without ML, e with NML, and f with AML. The green dashed line marks the x-position of the middle-channel beam as a coordinate reference. Due to astigmatism, the grating output beams evolve from elliptical to circular and back to elliptical; this aberration is effectively corrected by the AML. Corresponding intensity profiles in the y = 0 plane: g without ML, h with NML, and i with AML. The AML expands the FOV, corrects astigmatism and improves spatial resolution, thereby markedly enhancing LiDAR’s spatial detection capability

To characterize the enhancement in spatial detection capability provided by the wide-FOV AML, we measured the divergence angles of beams from three adjacent spectral channels (λ20, λ21, λ22) after grating diffraction, comparing cases with and without the metalens. The measured spot sizes at various distances and the divergence angle fitting processes are detailed in Supplementary Section 6. These data were used to model beam propagation at extended distances, as shown in Fig. 3d–i.

Specifically, Fig. 3d–f display the evolution of the beams at different exit distances for the three spectral channels after grating dispersion, (d) without ML, (e) with NML, and (f) with AML, respectively. The green dashed line represents the x-position of the beam at the wavelength λ21 (1550.1 nm) as the coordinate reference. Fig. 3d confirms that the grating output beams are astigmatic, evolving from elliptical to circular and back to elliptical. The divergence angle ratio between the two directions is 2.36 mrad / 0.80 mrad = 2.95, slightly exceeding the theoretical value of 2.72 calculated from Eq. (7), which supports the reliability of our experiment (with discrepancies primarily arising from the spectral bandwidth of a single incident channel). As shown in Fig. 3e, expanding the FOV using a non-astigmatic conventional telescope system does not eliminate beam astigmatism; instead, the divergence angle increases proportionally with the FOV angle, leaving the effective number of resolvable points unchanged. In contrast, Fig. 3f demonstrates that the AML effectively eliminates beam astigmatism. The astigmatic characteristics decouple the divergence angle from the FOV angle, enabling simultaneous FOV expansion and divergence suppression. This leads to a significant improvement in spatial resolution for adjacent channels compared to the NML, thereby increasing the effective number of resolvable points while simultaneously broadening the FOV. The output beam divergence angles in the two directions are θx = 11.8 mrad and θy = 10.2 mrad, which are largely consistent (see Supplementary Fig. S9 for details). Fig. 3g–i illustrate the evolution of horizontal beam intensity profiles at various exit distances for the three adjacent channels, without ML, with NML, and with AML, respectively.
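The divergence figures quoted above can be compared directly. The short sketch below uses only the measured values from the text and the theoretical factor of 2.72; the "ellipticity" helper is an illustrative name for the ratio of the larger to the smaller divergence angle.

```python
def ellipticity(theta_a_mrad: float, theta_b_mrad: float) -> float:
    """Ratio of the larger to the smaller divergence angle (1.0 means a circular beam)."""
    return max(theta_a_mrad, theta_b_mrad) / min(theta_a_mrad, theta_b_mrad)


THEORY_RATIO = 2.72                         # 1/cos(theta_o) at 1550 nm

grating_only = ellipticity(2.36, 0.80)      # measured without a metalens
with_aml = ellipticity(11.8, 10.2)          # measured after the AML
excess_over_theory = (grating_only - THEORY_RATIO) / THEORY_RATIO

print(f"grating only: {grating_only:.2f} ({excess_over_theory:.1%} above theory)")
print(f"with AML:     {with_aml:.2f}")
```

The grating output is about 8% more elliptical than the monochromatic prediction, while the AML output is within roughly 16% of a circular profile.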

Based on the measured divergence and deflection angles for these three-channel beams, calculations indicate that the AML not only expands the FOV but also enhances the effective resolvable points, significantly improving the spatial detection capability of the LiDAR system. As shown in Table 1, the overall Cspatial is improved by two orders of magnitude compared to a system without the wide-FOV AML (see Supplementary Section 6 for details of the listed values).

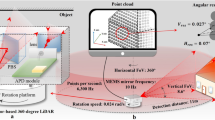

High temporal resolution dynamic 3D imaging

To demonstrate the high temporal resolution of our system, we conducted dynamic 3D imaging experiments on high-speed rotating fan blades. As illustrated in Fig. 4a, the imaging scene comprises the letters “F”, “A”, “S”, and “T” along with a rapidly spinning fan. The spherical coordinate system, centered at the AML, defines the axes as horizontal (azimuth) angle Θx, vertical (elevation) angle Θy, and radial distance D. The top panel of Fig. 4b specifies the dimensions of each object along with their coordinates (Θx, D), with the objects’ edge horizontal FOV reaching ±40°. Detailed data processing procedures for obtaining the dynamic 3D point clouds are provided in Supplementary Section 7.
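For readers who want to render such point clouds, a minimal sketch of converting (Θx, Θy, D) to Cartesian coordinates follows. The axis convention is an assumption (z along the optical axis, x horizontal, y vertical, azimuth measured in the horizontal plane); the paper's own processing, described in its Supplementary Section 7, may differ.

```python
import math
from typing import Tuple


def spherical_to_cartesian(
    theta_x_deg: float, theta_y_deg: float, distance_m: float
) -> Tuple[float, float, float]:
    """Convert azimuth/elevation/range to (x, y, z) with z along the optical axis."""
    azimuth = math.radians(theta_x_deg)
    elevation = math.radians(theta_y_deg)
    x = distance_m * math.cos(elevation) * math.sin(azimuth)
    y = distance_m * math.sin(elevation)
    z = distance_m * math.cos(elevation) * math.cos(azimuth)
    return x, y, z


# A point at the edge of the horizontal FOV quoted in the text (+40 deg), 1.5 m away.
print(spherical_to_cartesian(40.0, 0.0, 1.5))
```

The conversion preserves range, so the Euclidean norm of the returned vector equals D for any pair of angles.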

a 3D imaging results and the corresponding scene (inset) containing the letters “F”, “A”, “S”, “T”, and a rapidly rotating fan. The single-frame results provide a total of 20 (xAOD) × 83 (yAOD) × 30 (spectral channels) addressable spatial points. b Measured object dimensions and spatial positions (top) compared with reconstructed 3D point clouds (bottom). c Probability density distribution of the point clouds along the distance axis, demonstrating a ranging precision of ~3 cm (mean of the standard deviation σ). d Temporal evolution of fan rotation at varying FPARs (maximum 36.56 MHz for β1 = 1). Orange cylinders and blue/green helices represent time-resolved fan states, with helical pitch analysis yielding a rotation period of 24.94 ms (fitted) versus 25.10 ms (ground truth). e Ultra-high-speed dynamic 3D imaging results at 20.3 kfps frame rate (1 × 60 × 30 addressable points per frame). The imaging results show that after 6–7 frames, Slot C rotates to the original position of Slot B, which is consistent with the single slot period of ~333.3 μs for a 3 kHz chopper

The achieved PPAR of 36.56 MHz is determined by the spectral scanning. According to Eq. (5), the full-field FPAR depends on β1, which represents the ratio of the yAOD point-switching time to the spectral scanning period. When β1 = 1, FPAR reaches its maximum (equal to PPAR). If β1 > 1, FPAR decreases to PPAR/β1; however, each yAOD scanning position will undergo β1 complete spectral scans, allowing for averaging of these data points to further mitigate noise. Accordingly, we conducted imaging experiments at β1 values of 4, 2, and 1.

In this experiment, we implement sub-pixel spectral-AO scanning with 20 scanning points provided by the xAOD and 83 points by the yAOD for finer resolution (detailed in Supplementary Section 3), resulting in Ntot = 20×83×30 addressable points (30 spectral channels). In the bottom panel of Fig. 4b, the 3D imaging results at β1 = 4 reveal that the letters “F”, “A”, “S”, and “T” as well as two fan blades are resolved with high fidelity. Here, with FPAR = PPAR/4 = 9.14 MHz, the system achieves an image frame rate of 183.5 fps (FPAR/Ntot) and a per-frame acquisition time of 5.45 ms. Distance measurements—calculated as the mean ± standard deviation across the point clouds of each object—align with ground-truth values and demonstrate a ranging precision of ~3 cm, as shown in Fig. 4c. The dominant source of error arises from the inherent transit time spread (TTS) of the PMT (see Supplementary Section 8 for details).
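The link between ranging precision and timing spread follows from the direct-TOF relation D = cτ/2. The sketch below assumes free-space propagation at the vacuum speed of light; the function names are illustrative.

```python
SPEED_OF_LIGHT_M_PER_S = 299_792_458.0


def tof_to_distance_m(round_trip_s: float) -> float:
    """Target distance for a measured round-trip time of flight."""
    return SPEED_OF_LIGHT_M_PER_S * round_trip_s / 2.0


def distance_to_tof_s(distance_m: float) -> float:
    """Round-trip time of flight for a target at the given distance."""
    return 2.0 * distance_m / SPEED_OF_LIGHT_M_PER_S


timing_spread_s = distance_to_tof_s(0.03)    # timing spread equivalent to 3 cm
round_trip_s = distance_to_tof_s(1.55)       # round trip for a target at 155 cm

print(f"3 cm  <-> {timing_spread_s * 1e12:.0f} ps")
print(f"1.55 m <-> {round_trip_s * 1e9:.2f} ns")
```

A 3 cm ranging spread therefore corresponds to a round-trip timing spread of about 200 ps.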

For β1 = 2 and β1 = 1, FPAR values of 18.28 MHz and 36.56 MHz are achieved, with corresponding single-frame acquisition durations of 2.72 ms and 1.36 ms, resulting in frame rates of 367 fps and 734 fps, respectively. Time-slice diagrams depicting the front views of the 3D images acquired under these three β1 conditions are illustrated in Fig. 4d, with color indicating distance from the AML.
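The frame rates and acquisition times quoted for the three β1 settings can be reproduced from the PPAR and the number of addressable points per frame. This is a minimal sketch using the values given in the text; the function name is illustrative.

```python
from typing import Tuple

PPAR_HZ = 36.56e6
N_X, N_Y, N_LAMBDA = 20, 83, 30            # xAOD points, yAOD points, spectral channels


def frame_timing(ppar_hz: float, beta1: float, n_points: int) -> Tuple[float, float, float]:
    """Return (FPAR in Hz, frame rate in fps, per-frame acquisition time in ms)."""
    fpar = ppar_hz / beta1
    fps = fpar / n_points
    return fpar, fps, 1e3 / fps


n_tot = N_X * N_Y * N_LAMBDA               # 49,800 addressable points per frame
results = {beta: frame_timing(PPAR_HZ, beta, n_tot) for beta in (4, 2, 1)}

for beta, (fpar, fps, t_ms) in results.items():
    print(f"beta1 = {beta}: FPAR = {fpar / 1e6:.2f} MHz, {fps:.1f} fps, {t_ms:.2f} ms/frame")
```

Halving β1 doubles the frame rate at a fixed point count, which is why the β1 = 1 setting captures four frames in the time the β1 = 4 setting captures one.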

Although the β1 = 4 condition offers a higher number of effective points and improved imaging quality, its lower frame rate captures the fan’s motion less clearly. Moreover, when the fan is oriented horizontally, the slow scan speed in the β1 = 4 group results in noticeable blade “deflection”, as shown in the time slice at t = 5.45 ms. Within the 5.45 ms duration of single-frame acquisition for β1 = 4, the β1 = 2 group captures 2 frames, while the β1 = 1 group captures 4 frames. As FPAR increases, the fan’s motion becomes more discernible, allowing us to model the evolution of the fan blade endpoints as a helical trajectory (with the blue and green spirals representing the endpoints’ motion). The projection of the helix onto the t-Θy plane forms a cosine function, yielding a measured fan rotation period of 24.94 ms, closely aligning with the actual value of 25.10 ms. This confirms the system’s capability for high temporal resolution, dense point-cloud 3D imaging across a wide FOV, and precise measurement of rotational speeds.
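To illustrate how a rotation period can be recovered from a cosine-like Θy(t) trace sampled once per frame, the sketch below scans trial periods and keeps the one with the largest spectral projection. This is not the authors' fitting procedure. The trace is synthetic and noise-free, the phase offset and number of frames are arbitrary choices, and only the 734 fps frame rate and the 25.10 ms ground-truth period are taken from the text.

```python
import cmath
import math
from typing import Sequence


def estimate_period_s(
    samples: Sequence[float], dt_s: float, t_min_s: float, t_max_s: float, steps: int = 1000
) -> float:
    """Return the trial period whose complex projection onto the samples has maximum power."""
    best_period, best_power = t_min_s, -1.0
    for k in range(steps + 1):
        period = t_min_s + (t_max_s - t_min_s) * k / steps
        omega = 2.0 * math.pi / period
        projection = sum(
            y * cmath.exp(-1j * omega * n * dt_s) for n, y in enumerate(samples)
        )
        power = abs(projection) ** 2
        if power > best_power:
            best_period, best_power = period, power
    return best_period


FRAME_RATE_FPS = 734.0          # frame rate at beta1 = 1
TRUE_PERIOD_S = 25.10e-3        # ground-truth rotation period from the text
N_FRAMES = 184                  # about ten rotations (arbitrary choice)

dt = 1.0 / FRAME_RATE_FPS
# Synthetic, noise-free blade-tip elevation trace (normalised amplitude, arbitrary phase).
trace = [math.cos(2.0 * math.pi * n * dt / TRUE_PERIOD_S + 0.3) for n in range(N_FRAMES)]

estimated_period_s = estimate_period_s(trace, dt, 20e-3, 30e-3)
print(f"estimated period: {estimated_period_s * 1e3:.2f} ms")
```

At 734 fps the trace has about 18 samples per rotation, which is why the cosine projection is well resolved at β1 = 1 but much coarser at β1 = 4.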

Furthermore, we reduced the number of scanning points to achieve a higher imaging frame rate of 20.3 kfps at β1 = 1, as shown in Fig. 4e. The wide-FOV AML was temporarily omitted while fixing the xAOD scanning frequency (see Supplementary Section 3.2 for details). The yAOD scanned 60 points vertically, yielding a total of 1 × 60 × 30 points. The middle panel of Fig. 4e presents 8 frames of imaging results, illustrating the continuous positional shifts of three adjacent slots within the scanning FOV. Between Frames 7 and 8, Slot C rotates to the original position of Slot B in Frame 1, demonstrating that 6–7 frames were captured within a 333.3 μs chopper period (operating at ω = 100 Hz with 30 slots). This validates the system’s ultra-high-speed 20.3 kfps 3D imaging frame rate (see Supplementary Movie S3 for 40 dynamic frames captured over a 2 ms recording duration).
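The consistency between the 20.3 kfps frame rate and the chopper observation can be checked with a few lines of arithmetic. The sketch uses only values stated in the text; the variable names are illustrative.

```python
PPAR_HZ = 36.56e6
POINTS_PER_FRAME = 1 * 60 * 30          # xAOD fixed, 60 yAOD points, 30 channels

CHOPPER_ROTATION_HZ = 100.0
CHOPPER_SLOTS = 30
RECORDING_S = 2e-3

frame_rate_fps = PPAR_HZ / POINTS_PER_FRAME            # beta1 = 1, so FPAR = PPAR
slot_rate_hz = CHOPPER_ROTATION_HZ * CHOPPER_SLOTS     # slots passing per second
slot_period_us = 1e6 / slot_rate_hz
frames_per_slot_period = frame_rate_fps / slot_rate_hz
frames_in_recording = frame_rate_fps * RECORDING_S

print(f"frame rate        = {frame_rate_fps / 1e3:.1f} kfps")
print(f"slot period       = {slot_period_us:.1f} us")
print(f"frames per period = {frames_per_slot_period:.2f}")
print(f"frames in 2 ms    = {frames_in_recording:.1f}")
```

About 6.8 frames fit into one slot period, matching the observation that Slot C reaches Slot B's starting position between Frames 7 and 8, and a 2 ms recording holds roughly 40 frames.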

High spatial resolution 3D imaging assisted by subpixel reconstruction

The key advantage of the proposed LiDAR architecture is its ability to achieve both high temporal and spatial resolution. To evaluate its spatial resolution, we conducted another 3D imaging experiment using static resolution targets.

Fig. 5a illustrates the direct point cloud imaging results for four test objects (i), including the letters “H” and “R” from “High Resolution”, as well as two resolution targets with a minimum feature linewidth of 1 cm, alongside photographs of the physical scene (ii) and (iv). Owing to the relatively small divergence angle (~11 mrad) of the AML output beams, we are able to resolve a linewidth of 2 cm (13 mrad angular width) in the local point clouds (iii). However, resolving finer details with a linewidth of 1 cm remains challenging.

Fig. 5. a Direct point cloud imaging results for four targets (i), alongside the corresponding physical scene (ii). Local point cloud reconstruction of a resolution target (iii) is compared with the actual object (iv). b Depth-resolved intensity slices recovered through subpixel reconstruction, with (i)–(iv) showing intensity distributions at four distinct distances, respectively. Distance is color-coded, while intensity is represented by saturation and grayscale. The purple line in the Θx = −50° plane represents the total intensity distribution I(D) along the distance direction. The slice at Θy = 0° represents the 2D intensity distribution I(Θx, 0°, D). c Intensity distributions within the extracted depth slices, corresponding to the extrema of I(D) in (b). d Intensity profiles at a specific Θx, with the top and bottom panels corresponding to c(ii) and c(iii), respectively. The 1 cm-wide feature, previously indistinct in the raw point cloud a(iii), is now clearly resolved, demonstrating a spatial resolution of 6.46 mrad.

To further enhance spatial resolution, we employ a subpixel reconstruction algorithm (see Supplementary Section 7 for details) to recover a higher-resolution 3D dataset, Ireconstructed(Θx, Θy, D), which encodes relative intensity variations across the 3D spatial domain. As depicted in Fig. 5b, four distinct depth slices are extracted based on the extrema of I(D) (marked by the purple line in the Θx = −50° plane).
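The depth-slice extraction step can be pictured as locating the local maxima of the one-dimensional profile I(D). The sketch below does this on a synthetic profile; the Gaussian peak shape, widths, amplitudes, 1.2 cm depth step and threshold are all assumptions chosen for illustration, and the subpixel reconstruction itself (described in Supplementary Section 7) is not reproduced here.

```python
import math


def local_maxima(profile, threshold):
    """Indices of local maxima of a 1D profile that exceed a threshold."""
    return [
        i for i in range(1, len(profile) - 1)
        if profile[i] >= threshold
        and profile[i] > profile[i - 1]
        and profile[i] >= profile[i + 1]
    ]


# Synthetic I(D): four Gaussian returns on a uniform depth grid (cm).
step_cm = 1.2                     # hypothetical depth sampling step
depths = [k * step_cm for k in range(168)]
returns = [(68.4, 1.0), (118.8, 0.8), (154.8, 0.9), (182.4, 0.6)]  # (centre, amplitude)
sigma_cm = 3.0                    # hypothetical width of each return

profile = [
    sum(a * math.exp(-((d - c) ** 2) / (2.0 * sigma_cm ** 2)) for c, a in returns)
    for d in depths
]

slice_depths = [round(depths[i], 1) for i in local_maxima(profile, 0.3)]
print(slice_depths)
```

Each recovered depth then selects one 2D slice I(Θx, Θy) of the reconstructed volume for inspection.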

The detailed intensity distribution of these four slices is presented in Fig. 5c, clearly revealing object features that were unresolved in the raw point cloud data. The extracted distances (68.4 cm, 118.8 cm, 154.8 cm, and 182.4 cm) closely match the measured object positions (69 cm, 119 cm, 154 cm, and 184 cm). Intensity profiles along the Θy direction at fixed Θx values are plotted in Fig. 5d, further confirming the system’s high spatial resolution. The top and bottom panels correspond to Fig. 5c(ii) and (iii), respectively, where the resolution target features become distinctly visible in the reconstructed intensity maps. In particular, the resolution target at a distance of 154.8 cm, with a 1 cm linewidth, is clearly resolved, demonstrating a lateral spatial resolution of 6.46 mrad.
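The quoted angular resolution and the agreement between extracted and measured distances can be verified with the small-angle approximation. The sketch below is a consistency check only; the function name is illustrative.

```python
import math


def angular_width_mrad(linewidth_m, distance_m):
    """Angular width of a feature under the small-angle approximation."""
    return 1e3 * linewidth_m / distance_m


# 1 cm feature on the resolution target at 154.8 cm.
res_mrad = angular_width_mrad(0.01, 1.548)
res_deg = math.degrees(res_mrad * 1e-3)

# Extracted versus measured target distances (cm), as quoted in the text.
extracted_cm = [68.4, 118.8, 154.8, 182.4]
measured_cm = [69.0, 119.0, 154.0, 184.0]
errors_cm = [abs(e - m) for e, m in zip(extracted_cm, measured_cm)]

print(f"resolution: {res_mrad:.2f} mrad = {res_deg:.2f} deg")
print("distance errors (cm):", [round(e, 1) for e in errors_cm])
```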

Discussion

To conclude, we have developed a novel LiDAR architecture that integrates high spatiotemporal resolution with wide-FOV 3D imaging capabilities. Through precise inter-axis rate matching in the spectral-AO scanning mechanism, we achieve an FPAR of up to 36.6 MHz, ensuring high temporal resolution. In addition, through the tailored design of a wide-FOV AML, we realize a spatial resolution of 6.46 mrad (0.37°) across a 102° FOV.

While increasing the number of spectral channels Nλ at a given frep could further enhance temporal resolution, the total chirp time of spectral scanning Tchirp must remain shorter than the laser pulse period: Tchirp < Tpulse = 1/frep.

The margin created by Tchirp < Tpulse serves two critical purposes: (i) accommodating the electrical/acoustic response time required for the AOD’s synchronized scanning; (ii) tolerating minor temporal drift (e.g., from temperature fluctuations or electronic noise). This in turn imposes an upper limit on the PPAR set by the temporal interval between adjacent time-stretched sub-pulses, Δτ: PPAR = Nλ·frep < 1/Δτ.

In our experimental implementation with frep = 1.22 MHz, Δτ = 24.6 ns, Nλ = 30, Tchirp = 713.4 ns (PPAR = 36.6 MHz), the chirp duration Tchirp can be extended to ~787 ns, enabling Nλ = 33 and thereby elevating PPAR to 40.2 MHz.
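The timing budget quoted above can be reproduced numerically. The sketch below rests on two assumptions inferred from the quoted values rather than stated explicitly in the text: the chirp spans Nλ − 1 sub-pulse intervals, so Tchirp = (Nλ − 1)·Δτ (29 × 24.6 ns = 713.4 ns), and the channel count is limited by Nλ·Δτ < Tpulse. Function names are illustrative.

```python
import math


def pulse_period_s(f_rep_hz):
    """Laser pulse period T_pulse = 1 / f_rep."""
    return 1.0 / f_rep_hz


def chirp_time_s(n_channels, dtau_s):
    """Assumed chirp duration: (N - 1) intervals between N sub-pulses."""
    return (n_channels - 1) * dtau_s


def max_channels(f_rep_hz, dtau_s):
    """Largest N with N * dtau < T_pulse (assumed limit, non-integer ratio)."""
    return math.floor(pulse_period_s(f_rep_hz) / dtau_s)


def ppar_hz(n_channels, f_rep_hz):
    """Pulse-wise point acquisition rate: N points per laser pulse."""
    return n_channels * f_rep_hz


f_rep = 1.22e6      # Hz
dtau = 24.6e-9      # s

n_max = max_channels(f_rep, dtau)
print(f"T_pulse  = {pulse_period_s(f_rep) * 1e9:.1f} ns")
print(f"N = 30   : T_chirp = {chirp_time_s(30, dtau) * 1e9:.1f} ns, "
      f"PPAR = {ppar_hz(30, f_rep) / 1e6:.1f} MHz")
print(f"N = {n_max}   : T_chirp = {chirp_time_s(n_max, dtau) * 1e9:.1f} ns, "
      f"PPAR = {ppar_hz(n_max, f_rep) / 1e6:.1f} MHz")
```

With frep rounded to 1.22 MHz the upper PPAR comes out slightly above the quoted 40.2 MHz; the difference is within the rounding of frep.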

In single-pulse direct-TOF LiDAR, the trade-off between frep and the ambiguity distance dm inherently limits the PPAR. Time-frequency multiplexing decouples this constraint, enhancing the PPAR without compromising dm at a given frep. Instead, the sub-pulse temporal interval Δτ defines the maximum detectable depth span Δdm = c·Δτ/2 = 3.69 m, creating a PPAR-Δdm trade-off. Theoretically, by resolving 30-pulse echo signals within a single laser pulse period Tpulse, we can overcome the distance limitation imposed by Δτ, yielding an ambiguity distance of up to dm = c·Tpulse/2 = 123 m (see Supplementary Section 10 for details). Additionally, applying α-dimensional orthogonal pulse coding combined with cross-correlation detection could further extend the ambiguity distance α-fold under the same PPAR; this approach is compatible with the time-stretching method we employed, as demonstrated in our recent work44.
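Both range figures in this paragraph follow from the round-trip time-of-flight relation d = c·t/2. A minimal sketch is shown below; the function names and the example value of α are illustrative.

```python
C = 299_792_458.0   # speed of light in vacuum, m/s


def depth_span_m(dtau_s):
    """Maximum depth span set by the sub-pulse interval: c * dtau / 2."""
    return C * dtau_s / 2.0


def ambiguity_distance_m(f_rep_hz, alpha=1):
    """Ambiguity distance c * T_pulse / 2, scaled alpha-fold by pulse coding."""
    return alpha * C / (2.0 * f_rep_hz)


span = depth_span_m(24.6e-9)
d_m = ambiguity_distance_m(1.22e6)
d_m_coded = ambiguity_distance_m(1.22e6, alpha=4)   # alpha = 4 is a hypothetical example

print(f"depth span: {span:.2f} m, ambiguity distance: {d_m:.0f} m, "
      f"with 4-fold coding: {d_m_coded:.0f} m")
```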

To illustrate the system’s capability for detecting small, fast-moving targets (required for scenarios like drone tracking), consider a racing drone with a diagonal span of ~60 cm located 50 m away and traveling at a horizontal speed of 180 km/h. The drone would traverse the entire 100° FOV in ~2.38 s. With our system operating at 183.5 fps (β1 = 4), the drone could be captured in 437 consecutive frames, enabling smooth motion tracking. Furthermore, the system’s angular resolution of 6.46 mrad, ranging precision of 3 cm, and ambiguity distance of 123 m are all sufficient for detecting such a drone. If both the x and y AODs employ pixel-by-pixel scanning, the total number of addressable points becomes 7×83×30 = 17,430, enabling a higher imaging frame rate of 2,098 fps (β1 = 1) to meet the detection requirements for faster-moving objects. Considering the Nyquist limit, which necessitates capturing 4 frames to recover the object’s speed, our system is theoretically capable of detecting objects moving at speeds of up to 225,000 km/h (225 Mm/h) at a distance of 50 m.
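The drone-tracking numbers in this paragraph can be reproduced from simple geometry. The sketch below assumes a flat target plane at 50 m and interprets the four-frame requirement as the target having to remain inside the FOV for four frame periods; both are assumptions made to match the quoted figures, and the function name is illustrative.

```python
import math


def fov_width_m(fov_deg, distance_m):
    """Lateral extent covered by a symmetric FOV on a plane at a given distance."""
    return 2.0 * distance_m * math.tan(math.radians(fov_deg / 2.0))


width = fov_width_m(100.0, 50.0)            # metres across the FOV at 50 m
drone_speed = 180.0 / 3.6                   # 180 km/h in m/s
transit_s = width / drone_speed             # time to cross the FOV
frames_slow = int(transit_s * 183.5)        # frames captured at beta_1 = 4

points = 7 * 83 * 30                        # pixel-by-pixel addressable points
fps_fast = 36.56e6 / points                 # frame rate at beta_1 = 1
v_max_kmh = width / (4.0 / fps_fast) * 3.6  # crossing the FOV in exactly 4 frames

drone_angle_mrad = 1e3 * 0.6 / 50.0         # angular size of a 60 cm drone at 50 m

print(f"FOV width {width:.1f} m, transit {transit_s:.2f} s, {frames_slow} frames")
print(f"{fps_fast:.0f} fps, max speed ~{v_max_kmh:,.0f} km/h")
print(f"drone subtends {drone_angle_mrad:.0f} mrad (resolution 6.46 mrad)")
```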

Moreover, in spectral scanning, the dispersion capability is constrained by the grating’s line density. Employing a grating with a higher line density could further enhance the horizontal FOV. Additionally, 2D spectral scanning could be achieved by integrating a diffraction grating with a virtually imaged phased array (VIPA)18,45, enabling more efficient utilization of the spectrum and the scanning space.

Regarding modulation bandwidth, the currently used arrayed waveguide grating (AWG) for wavelength division multiplexing exhibits a 3 dB bandwidth of 0.28 nm. However, with a channel spacing of only 0.4 nm, adjacent channels overlap spectrally and suffer crosstalk, which produces overlapping spots along the grating’s dispersion direction and degrades spatial resolution. In our experiment, a programmable spectral shaping device, the Waveshaper (WS), is employed to refine the comb-like output spectrum, narrowing the linewidth from 0.28 nm to ~0.1 nm and significantly improving the spatial resolution in the dispersion direction (see Supplementary Section 2). Therefore, replacing our laser source with an optical frequency comb featuring a narrower linewidth and a better-matched spectral profile could further enhance the spectral and spatial resolution.
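To give a feel for why narrowing the channel linewidth reduces overlap, the sketch below evaluates the relative level of a channel at the midpoint between two adjacent channels. It assumes an idealized Gaussian passband, which is an assumption for illustration only: real AWG and Waveshaper passbands have different shapes, so the numbers indicate a trend rather than measured crosstalk.

```python
import math


def gaussian_level(offset_nm, fwhm_nm):
    """Relative power of a Gaussian line at a given offset from its centre."""
    return math.exp(-4.0 * math.log(2.0) * (offset_nm / fwhm_nm) ** 2)


spacing_nm = 0.4
midpoint_nm = spacing_nm / 2.0      # halfway between adjacent channel centres

level_awg = gaussian_level(midpoint_nm, 0.28)    # before spectral shaping
level_ws = gaussian_level(midpoint_nm, 0.10)     # after narrowing to ~0.1 nm

print(f"level at midpoint, 0.28 nm linewidth: {level_awg:.2f}")
print(f"level at midpoint, 0.10 nm linewidth: {level_ws:.1e}")
```

Under this model the channels still carry roughly a quarter of their peak power at the midpoint for a 0.28 nm linewidth, whereas a 0.1 nm linewidth leaves the channels essentially separated.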

In summary, by leveraging wide-FOV AML-coordinated spectral-AO scanning, we have developed a LiDAR architecture that attains a 36.6 MHz FPAR and a 0.37° resolution across a 102° FOV, thus achieving high spatiotemporal resolution. Additionally, the wide-FOV and low-divergence beam characteristics enabled by planar optical components offer a route to upgrading traditional bulk LiDAR systems. This technology holds promise for applications in non-line-of-sight imaging46,47, inverse synthetic aperture LiDAR, and other imaging systems requiring scanning modules. To achieve longer-range detection, frequency up-conversion48 on echo signals can be employed to convert near-infrared light into the visible spectrum, aligning with the high responsivity range of mature silicon-based photodetectors and thereby improving detection efficiency. In the future, it is worth exploring whether leveraging the orbital angular momentum (OAM) modes for orthogonal spin multiplexing, in conjunction with composite phase metasurfaces for spin demultiplexing, can facilitate parallel beam scanning. Recently, parallel detection methods for high-speed LiDAR have also emerged, including multi-detector architectures7,21,49, optical spectro-temporal coding20, parallel chaos22, and code-division multiple access (CDMA) methods44,50; these could further enhance the LiDAR’s temporal resolution. Furthermore, our single-transceiver architecture can also be extended to parallel detection and holds promise for application in integrated sensing and communication systems, enabling faster and more precise spatiotemporal perception.

Materials and methods

Experimental methodology

The supercontinuum laser (NKT Photonics, SuperK EXTREME EXR-15) generates broad-spectrum pulsed light, which is then amplified by an erbium-doped fiber amplifier (EDFA) with a gain spectrum centered around 1550 nm. Subsequently, time-frequency multiplexing produces 30 comb-like spectral channels, each featuring a linewidth of 0.28 nm and a spacing of 0.4 nm (details in Supplementary Section 2). These channels are further refined to a linewidth of 0.1 nm using a Waveshaper (Coherent, Waveshaper 4000 A). The collimated output beam is directed through a dual-axis AOD (AA Opto-electronic, DTSXY-A6-1550), enabling 2D acousto-optic scanning of the [1, 1] diffraction order, which is filtered by a pinhole aperture. The AODs are driven by a radio frequency (RF) generator (AA Opto-electronic, DDSPA-B415b-0) with external amplifiers, and the driving module is synchronized with the laser pulses via a field programmable gate array (FPGA) to perform precise rate matching. The dual-axis AOD outputs a vertically polarized beam, which is rotated to horizontal polarization by passing through an HWP oriented at 45° to enhance responsiveness to the vertical blazed grating (LBTEK, BG25-600-1500) with the blaze angle of 26.78°. After dispersion by the grating for spectral-AO scanning, the beams are directed onto the AML through a focusing lens (LBTEK, MBCX10611), enabling an expanded FOV. The AML was fabricated by electron beam lithography techniques with the fabrication flow chart shown in Supplementary Fig. S4d. The target’s echo signals first pass through a bandpass filter (BPF) (THORLABS, FBH1550-40) to suppress most of the ambient stray light by narrowband spectral filtering. They are then detected by a PMT (HAMAMATSU, H10330C-75) and digitized by a data acquisition card (Teledyne, ADQ7DC) operating at a sampling rate of 5 GS/s. 
Data post-processing, including amplitude filtering, frequency-domain filtering, three-point fitting, point cloud denoising, and sub-pixel reconstruction, is performed to refine the 3D morphology of the objects (details in Supplementary Section 7).
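As an illustration of the three-point fitting step, the sketch below refines an echo peak to sub-sample precision by fitting a parabola through the maximum sample and its two neighbours. This parabolic interpolation is a common form of three-point fitting and is assumed here; the authors' exact procedure is described in Supplementary Section 7, and the synthetic echo is hypothetical.

```python
C = 299_792_458.0   # speed of light in vacuum, m/s


def three_point_peak(samples):
    """Sub-sample peak position from a parabola through the maximum and its neighbours."""
    k = max(range(1, len(samples) - 1), key=lambda i: samples[i])
    y0, y1, y2 = samples[k - 1], samples[k], samples[k + 1]
    denom = y0 - 2.0 * y1 + y2
    # A flat top gives denom == 0; fall back to the integer sample index.
    delta = 0.0 if denom == 0 else 0.5 * (y0 - y2) / denom
    return k + delta


def tof_distance_m(peak_index, sample_rate_hz):
    """Round-trip time of flight converted to one-way distance."""
    return C * (peak_index / sample_rate_hz) / 2.0


# Synthetic echo: a parabola peaking between samples 10 and 11.
echo = [1.0 - 0.01 * (n - 10.3) ** 2 for n in range(21)]

peak = three_point_peak(echo)
range_bin_m = tof_distance_m(1, 5e9)        # distance per sample at 5 GS/s

print(f"peak at sample {peak:.2f}, i.e. {tof_distance_m(peak, 5e9) * 100:.1f} cm")
print(f"one sample at 5 GS/s corresponds to {range_bin_m * 100:.1f} cm")
```

At 5 GS/s one sample corresponds to about 3 cm of range, so interpolating between samples is what allows the peak position to be estimated more finely than the raw sampling grid.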

Data availability

All data needed to evaluate the conclusions in the paper are present in the paper and/or the Supplementary Materials. Additional data related to this paper may be requested from the authors.

References

Kim, G. et al. Metasurface-driven full-space structured light for three-dimensional imaging. Nat. Commun. 13, 5920 (2022).

Choi, E. et al. 360° structured light with learned metasurfaces. Nat. Photonics 18, 848–855 (2024).

Liu, X. Y. et al. Edge enhanced depth perception with binocular meta-lens. Opto-Electron. Sci. 3, 230033 (2024).

Zhang, F. et al. Meta-optics empowered vector visual cryptography for high security and rapid decryption. Nat. Commun. 14, 1946 (2023).

Kim, I. et al. Nanophotonics for light detection and ranging technology. Nat. Nanotechnol. 16, 508–524 (2021).

Zhang, X. S. et al. A large-scale microelectromechanical-systems-based silicon photonics LiDAR. Nature 603, 253–258 (2022).

Riemensberger, J. et al. Massively parallel coherent laser ranging using a soliton microcomb. Nature 581, 164–170 (2020).

Li, N. X. et al. A progress review on solid-state LiDAR and nanophotonics-based LiDAR sensors. Laser Photonics Rev. 16, 2100511 (2022).

Zhang, L. Y. et al. A dual-mode LiDAR system enabled by mechanically tunable hybrid cascaded metasurfaces. Light Sci. Appl. 14, 287 (2025).

Park, J. et al. All-solid-state spatial light modulator with independent phase and amplitude control for three-dimensional LiDAR applications. Nat. Nanotechnol. 16, 69–76 (2021).

Sun, J. et al. Large-scale nanophotonic phased array. Nature 493, 195–199 (2013).

Li, B. Z., Lin, Q. X. & Li, M. Frequency-angular resolving LiDAR using chip-scale acousto-optic beam steering. Nature 620, 316–322 (2023).

Jiao, B. Z. et al. Acousto-optic scanning spatial-switching multiphoton lithography. Int. J. Extreme Manuf. 5, 035008 (2023).

Juliano Martins, R. et al. Metasurface-enhanced light detection and ranging technology. Nat. Commun. 13, 5724 (2022).

Mahjoubfar, A. et al. Time stretch and its applications. Nat. Photonics 11, 341–351 (2017).

Goda, K. & Jalali, B. Dispersive Fourier transformation for fast continuous single-shot measurements. Nat. Photonics 7, 102–112 (2013).

Jiang, Y. S., Karpf, S. & Jalali, B. Time-stretch LiDAR as a spectrally scanned time-of-flight ranging camera. Nat. Photonics 14, 14–18 (2020).

Goda, K., Tsia, K. K. & Jalali, B. Serial time-encoded amplified imaging for real-time observation of fast dynamic phenomena. Nature 458, 1145–1149 (2009).

Xiao, S., Davison, I. & Mertz, J. Scan multiplier unit for ultrafast laser scanning beyond the inertia limit. Optica 8, 1403–1404 (2021).

Zang, Z. H. et al. Ultrafast parallel single-pixel LiDAR with all-optical spectro-temporal encoding. APL Photonics 7, 046102 (2022).

Lukashchuk, A. et al. Dual chirped microcomb based parallel ranging at megapixel-line rates. Nat. Commun. 13, 3280 (2022).

Chen, R. X. et al. Breaking the temporal and frequency congestion of LiDAR by parallel chaos. Nat. Photonics 17, 306–314 (2023).

Zang, Z. H. et al. Spectrally scanning LiDAR based on wide-angle agile diffractive beam steering. IEEE Photonics Technol. Lett. 34, 850–853 (2022).

Hofmann, O., Stollenwerk, J. & Loosen, P. Design of multi-beam optics for high throughput parallel processing. J. Laser Appl. 32, 012005 (2020).

Liu, Y. H. & Jenkins, M. W. Two single-axis scanners make a virtual gimbaled scanner. Opt. Lett. 47, 5712–5714 (2022).

Ricci, P. et al. Power-effective scanning with AODs for 3D optogenetic applications. J. Biophotonics 15, e202100256 (2022).

Duocastella, M. et al. Fast inertia-free volumetric light-sheet microscope. ACS Photonics 4, 1797–1804 (2017).

Kuang, Z. et al. Fast parallel diffractive multi-beam femtosecond laser surface micro-structuring. Appl. Surf. Sci. 255, 6582–6588 (2009).

Dasgupta, A. et al. Direct supercritical angle localization microscopy for nanometer 3D superresolution. Nat. Commun. 12, 1180 (2021).

Zhou, Q. Y. et al. Three-dimensional wide-field fluorescence microscopy for transcranial mapping of cortical microcirculation. Nat. Commun. 13, 7969 (2022).

Hulleman, C. N. et al. Simultaneous orientation and 3D localization microscopy with a Vortex point spread function. Nat. Commun. 12, 5934 (2021).

Li, Y. T. et al. Geometric transformation adaptive optics (GTAO) for volumetric deep brain imaging through gradient-index lenses. Nat. Commun. 15, 1031 (2024).

Luo, X. G. Principles of electromagnetic waves in metasurfaces. Sci. China Phys. Mech. Astron. 58, 594201 (2015).

Guo, Y. H. et al. Spin-decoupled metasurface for simultaneous detection of spin and orbital angular momenta via momentum transformation. Light Sci. Appl. 10, 63 (2021).

Xie, X. et al. Generalized Pancharatnam-Berry phase in rotationally symmetric meta-atoms. Phys. Rev. Lett. 126, 183902 (2021).

Neshev, D. & Aharonovich, I. Optical metasurfaces: New generation building blocks for multi-functional optics. Light Sci. Appl. 7, 58 (2018).

Luo, X. G. Subwavelength optical engineering with metasurface waves. Adv. Opt. Mater. 6, 1701201 (2018).

Chen, C. et al. Spectral tomographic imaging with aplanatic metalens. Light Sci. Appl. 8, 99 (2019).

Shanker, A. et al. Quantitative phase imaging endoscopy with a metalens. Light Sci. Appl. 13, 305 (2024).

Zhu, A. Y. et al. Compact aberration-corrected spectrometers in the visible using dispersion-tailored metasurfaces. Adv. Opt. Mater. 7, 1801144 (2019).

Pu, M. B. et al. Nanoapertures with ordered rotations: Symmetry transformation and wide-angle flat lensing. Opt. Express 25, 31471–31477 (2017).

Li, Z. et al. Towards an ultrafast 3D imaging scanning LiDAR system: a review. Photonics Res. 12, 1709–1729 (2024).

Shalaginov, M. Y. et al. Single-element diffraction-limited fisheye metalens. Nano Lett. 20, 7429–7437 (2020).

Gong, S. J. et al. Parallel all-optical encoded CDMA-driven anti-interference LiDAR for 78 MHz point acquisition. Opto-Electron. Technol. 1, 250005 (2025).

Li, Z. et al. Solid-state FMCW LiDAR with two-dimensional spectral scanning using a virtually imaged phased array. Opt. Express 29, 16547–16562 (2021).

O’Toole, M., Lindell, D. B. & Wetzstein, G. Confocal non-line-of-sight imaging based on the light-cone transform. Nature 555, 338–341 (2018).

Wang, Z. W. et al. Vectorial-optics-enabled multi-view non-line-of-sight imaging with high signal-to-noise ratio. Laser Photonics Rev. 18, 2300909 (2024).

Albota, M. A. & Wong, F. N. C. Efficient single-photon counting at 1.55 µm by means of frequency upconversion. Opt. Lett. 29, 1449–1451 (2004).

Teng, J. J. et al. Time-encoded single-pixel 3D imaging. APL Photonics 5, 020801 (2020).

Shen, Y. X., Lim, Z. H. & Zhou, G. Y. Identity-enabled CDMA LiDAR for massively parallel ranging with a single-element receiver. Laser Photonics Rev. 19, e00553 (2025).

Acknowledgements

This work was supported by the National Natural Science Foundation of China (U25A20518, U24A6010, 62222513, 52488301).

Author information

Contributions

S.-J.G., Y.-H.G., X.-Y.L., M.-B.P., and X.-G.L. conceived the principle. S.-J.G. performed the simulations. S.-J.G., Y.-H.G., X.-Y.L., and Q.Z. designed the experiments. S.-J.G., P.T., and X.-Y.L. conducted the experiments with the assistance of W.-Y.Y. and H.-P.L. S.-J.G. processed the data and plotted the figures. S.-J.G., Y.-H.G., P.T., and L.-W.C. wrote the manuscript. All the authors were closely involved in discussing and revising the manuscript. M.-B.P. and X.-G.L. co-supervised the project. Additionally, we thank Qiong He for fabricating the metalens.

Ethics declarations

Conflict of interest

The authors declare no competing interests.

Rights and permissions

Open Access This article is licensed under a Creative Commons Attribution 4.0 International License, which permits use, sharing, adaptation, distribution and reproduction in any medium or format, as long as you give appropriate credit to the original author(s) and the source, provide a link to the Creative Commons licence, and indicate if changes were made. The images or other third party material in this article are included in the article’s Creative Commons licence, unless indicated otherwise in a credit line to the material. If material is not included in the article’s Creative Commons licence and your intended use is not permitted by statutory regulation or exceeds the permitted use, you will need to obtain permission directly from the copyright holder. To view a copy of this licence, visit http://creativecommons.org/licenses/by/4.0/.

About this article

Cite this article

Gong, S., Guo, Y., Li, X. et al. Spectral-acoustic-coordinated astigmatic metalens for wide field-of-view and high spatiotemporal resolution 3D imaging. Light Sci Appl 15, 85 (2026). https://doi.org/10.1038/s41377-025-02180-7

DOI: https://doi.org/10.1038/s41377-025-02180-7