Abstract

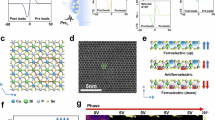

Antiferroelectric materials, which feature field-controllable antipolar ordering and reversible polarization switching, offer a promising platform for hardware-efficient neuromorphic computing. Their tunable polarization dynamics and layered van der Waals structure enable the multifunctional integration of sensing, learning, and computation within a single device architecture. Here, we demonstrate an antiferroelectric-polarization-driven diode with an extended linear operating region that simultaneously enables physical activation and computing-in-memory. Building on this device capability, we construct an in-sensor computing system that achieves over 95% accuracy in medical image classification. We then integrate the devices to demonstrate a hardware-based activation function that attains accuracy and training loss comparable to those of an ideal activation function. To enhance adaptability, we also propose a tunable activation circuit that enables linear modulation of the reverse-bias slope via gain control. Overall, this work establishes a dual-functional antiferroelectric heterojunction and highlights its strong potential for constructing optically triggered, compact, and low-power perception–computation-integrated neuromorphic systems for medical image processing.
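As a rough illustration of a tunable activation with a gain-controlled reverse-bias slope, the following sketch models the transfer curve as a piecewise-linear function. This is not the authors' device model: the function name `diode_activation`, the parameters `forward_slope`, `reverse_slope`, and `gain`, and all numerical values are hypothetical, and the assumption that the gain scales the reverse branch linearly is ours.

```python
import numpy as np


def diode_activation(v, forward_slope=1.0, reverse_slope=0.1, gain=1.0):
    """Piecewise-linear stand-in for a diode-like activation (illustrative only).

    Inputs at or above zero follow the forward branch; inputs below zero
    follow a shallower reverse branch whose slope is scaled by `gain`.
    All parameter values are assumptions, not measured device data.
    """
    v = np.asarray(v, dtype=float)
    return np.where(v >= 0.0, forward_slope * v, gain * reverse_slope * v)


if __name__ == "__main__":
    inputs = np.array([-2.0, -1.0, 0.0, 1.0, 2.0])
    # Sweep the hypothetical gain to see the reverse branch change linearly.
    for gain in (0.5, 1.0, 2.0):
        print(f"gain={gain}:", diode_activation(inputs, gain=gain))
```

In this toy model, doubling `gain` doubles the slope of the negative branch while leaving the positive branch unchanged, which is one simple way to picture linear modulation of the reverse-bias slope.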

Data availability

The data that support the findings of this study are available from the corresponding author upon reasonable request.

Code availability

The codes that support the findings of this study are available from the corresponding author upon reasonable request.

Acknowledgements

This work is supported by the National Key Research and Development Program of China (Grant No. 2022YFA1405600), Beijing Natural Science Foundation (Grant No. Z210006) and Start-up Research Fund for Young Scholars of Beijing Institute of Technology. We acknowledge the support provided by the Analysis and Testing Center of Beijing Institute of Technology.

Author information

Contributions

Y.L. conceived the study, wrote the manuscript with input from all authors, and performed the experiments, analyses, and simulations. Z.W. and F.X. carried out the PFM measurements. D.Y., W.Z., and T.Y. contributed to the analysis of the experimental data and provided suggestions. The study was supervised by H.W. and L.S. All authors contributed to the discussion and revision of the manuscript.

Ethics declarations

Competing interests

The authors declare no competing interests.

Peer review

Peer review information

Nature Communications thanks Minseong Park, Xianyue Zhao and the other anonymous reviewer(s) for their contribution to the peer review of this work. A peer review file is available.

Additional information

Publisher’s note: Springer Nature remains neutral with regard to jurisdictional claims in published maps and institutional affiliations.

Rights and permissions

Open Access: This article is licensed under a Creative Commons Attribution-NonCommercial-NoDerivatives 4.0 International License, which permits any non-commercial use, sharing, distribution and reproduction in any medium or format, as long as you give appropriate credit to the original author(s) and the source, provide a link to the Creative Commons licence, and indicate if you modified the licensed material. You do not have permission under this licence to share adapted material derived from this article or parts of it. The images or other third party material in this article are included in the article's Creative Commons licence, unless indicated otherwise in a credit line to the material. If material is not included in the article's Creative Commons licence and your intended use is not permitted by statutory regulation or exceeds the permitted use, you will need to obtain permission directly from the copyright holder. To view a copy of this licence, visit http://creativecommons.org/licenses/by-nc-nd/4.0/.

About this article

Cite this article

Lin, Y., Yang, D., Wang, Z. et al. Antiferroelectric polarization enabling physical activation in CuBiP2Se6 for medical image processing. Nat Commun (2026). https://doi.org/10.1038/s41467-026-70594-x

DOI: https://doi.org/10.1038/s41467-026-70594-x