Abstract

Accurately assessing the residual strength of corroded oil and gas pipelines is crucial for ensuring their safe and stable operation. Machine learning techniques have shown promise in addressing this challenge due to their ability to handle complex, non-linear relationships in data. Unlike previous studies that primarily focused on enhancing prediction accuracy through the optimization of single models, this work shifts the emphasis to a different approach: stacking ensemble learning. This study applies a stacking model composed of seven base learners and three meta-learners to predict the residual strength of pipelines using a dataset of 453 instances. Automated hyperparameter tuning libraries are utilized to search for optimal hyperparameters. By evaluating various combinations of base learners and meta-learners, the optimal stacking configuration was determined. The results demonstrate that the stacking model, using k-nearest neighbors as the meta-learner alongside seven base learners, delivers the best predictive performance, with a coefficient of determination of 0.959. Compared to individual models, the stacking model also significantly improves generalization performance. However, the stacking model’s effectiveness on low-strength pipelines is limited due to the small sample size. Furthermore, incorporating original features into the second-layer model did not significantly enhance performance, likely because the first-layer model had already extracted most of the critical features. Given the marginal contribution of model optimization to prediction accuracy, this work offers a novel perspective for improving model performance. The findings have important practical implications for the integrity assessment of corroded pipelines.

Similar content being viewed by others

Introduction

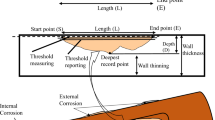

Pipelines are the primary mode of transportation for oil and gas, serving as the vascular system of energy infrastructure1. As pipelines operate over time, they are susceptible to defects for various reasons, with corrosion significantly contributing to pipeline deterioration2. Corrosion leads to a reduction in the thickness of pipeline walls, resulting in a continuous decrease in the pipeline’s failure pressure. Over time, this can lead to punctures, leaks, and fractures in the pipeline3,4. Due to the nature of oil and gas pipelines, any leakage can lead to substantial financial losses and pose significant risks to human life and safety5,6. The residual strength of a pipeline refers to the ultimate carrying capacity of a defective pipeline, typically expressed in terms of burst pressure7. Thus, accurate assessment of the residual strength of pipelines with corrosion defects is paramount.

Numerous studies have been conducted on assessing pipeline residual strength, with the empirical formula method, finite element analysis, and machine learning techniques being the three most commonly used approaches. The empirical formula method is based on fracture mechanics and other knowledge, established by researchers through the analysis of pipeline burst pressure experiments. In the 1970s, countries like the United States and the United Kingdom expanded the assessment methods for residual strength based on their research on corrosion mechanisms in oil and gas pipelines. This led to the development of several valuable evaluation standards and methods, including NG-188, ASME-B31G9, PCORRC10, and DNV-RP-F10111. Due to its simplicity and ease of use, the empirical formula method has been widely applied over the years. However, these empirical formulas tend to be quite conservative, resulting in significant evaluation errors. The finite element analysis method utilizes computer technology to simulate internal and external conditions of pipelines to predict residual strength. It offers the advantages of low cost, high efficiency, and precise results, within acceptable levels of accuracy. Shuai et al.12 established a novel model using the finite element method for predicting pipeline burst pressure. Comparative results between this new model and benchmark models indicate its feasibility for the reliability analysis of pipelines. Arumugam and Karuppanan13 used finite element analysis to examine the failure pressure of corroded pipelines under internal pressure and axial force. Based on the finite element study, six equations were developed using Buckingham’s theorem and multivariate non-linear regression techniques to predict the failure pressure of corroded pipelines. Zhang et al.14 utilized finite element analysis to study the interaction between corrosion defects. The results indicated that circumferential defects have a relatively minor impact on the failure pressure of pipelines, while diagonal defects have the greatest effect on reducing the failure pressure. Although the finite element method can be utilized for the assessment of pipeline residual strength, modifications to the model are required when there are changes in pipeline material and operating conditions. This makes it difficult to achieve rapid and accurate assessments.

With advances in computer technology, machine learning techniques have become reliable tools for accurately predicting pipeline residual strength. Specifically, Lu et al.7 employed the multi-objective salp swarm algorithm to optimize the relevance vector machine for predicting pipeline burst pressure. The research findings demonstrate that this model offers high accuracy and stability. Chen et al.5 applied multilayer perceptron to predict pipeline residual strength and addressed the issue of model overfitting by employing the dropout method for optimization. Vijaya Kumar et al.15 established an analysis equation based on finite element analysis to predict the residual strength of pipelines with a single corrosion defect, using the weights and biases of the artificial neural network (ANN) model.

The rapid development of ensemble learning techniques has provided new insights for predicting the residual strength of corroded pipelines. Ensemble learning combines multiple individual models to reduce prediction bias and variance, achieving better predictive performance than individual models alone. Ensemble learning methods typically include bagging, boosting, stacking, and blending methods16. The bagging series algorithms independently perform parallel computations on the base learners and then combine them according to an averaging ensemble strategy. In contrast, the boosting series algorithms sequentially operate on the base learners and then combine them according to an averaging ensemble strategy17. Bagging and boosting methods combine models of the same type to achieve better model performance. Several researchers have employed bagging and boosting techniques to predict pipeline residual strength, demonstrating promising predictive performance. For example, Ma et al.18 combined empirical formulas with the Light Gradient-Boosting Machine (LightGBM) model, utilizing prior knowledge from the empirical formulas to enhance the model’s predictive capabilities. The predictive results indicate that the proposed model outperforms other ensemble learning models such as Extreme Gradient Boosting (XGBoost) and Random Forest in terms of prediction accuracy. Liu et al.19 proposed a predictive model for pipeline safety assessment based on the XGBoost algorithm and analyzed the interpretability of XGBoost predictions.

Stacking is an advanced ensemble strategy that involves training multiple base learners and then using their outputs as input features for a meta-learner, integrating the models to enhance overall performance. The meta-learner can enhance the prediction results of the base learners and improve model performance. Stacking models have been applied in many domains due to their high predictive accuracy. Hoxha et al.16 used a stacking model to predict Turkey’s transportation energy demand. The study results showed that the stacking model outperformed individual models in terms of predictive performance. Shafighfard et al.20 trained a stacking model using data collected from the literature to predict the compressive strength of steel fiber-reinforced concrete under high temperatures. The stacking model used in the experiment demonstrated higher predictive accuracy compared to an artificial neural network model under the same experimental conditions. Li and Song17 employed a stacking model to predict the compressive strength of rice husk ash concrete. A comparative analysis with other mainstream models revealed that the stacking model effectively integrates the outputs of the base learners, leading to enhanced predictive accuracy. Go et al.21 developed a stacking model for predicting the residual tensile strength of glass-fiber-reinforced polymer. The results indicated that the stacking model improved predictive accuracy by approximately 4–22% compared to several previously employed models. Cao et al.22 employed a stacking model optimized using particle swarm optimization to predict the energy consumption of campus buildings. The results showed that the stacking model achieved a lower Root Mean Square Error (RMSE) compared to other models, with a reduction of 1.71.

The complex non-linear interactions among various factors affecting the residual strength of corroded pipelines create significant challenges for accurate prediction. Different machine learning algorithms exhibit varying levels of bias and variance when predicting the residual strength of pipelines. The stacking model offers a promising solution by integrating the predictions of multiple models, striking an optimal balance between bias and variance. By combining multiple machine learning algorithms, this approach effectively captures the intricate non-linear relationships among the factors influencing the residual strength of corroded pipelines. Given its potential for enhancing prediction accuracy in this challenging domain, this method shows considerable promise for practical applications. However, the literature review indicates a lack of studies employing the stacking method to enhance the prediction accuracy of residual strength in corroded oil and gas pipelines. Thus, this work develops a stacking ensemble model for this task. This work focuses on evaluating the performance of individual models, investigating the impact of varying the number of base learners and the types of meta-learners on model performance, analyzing the generalization capabilities of stacked models, and examining how different dataset configurations affect overall model performance. The results demonstrate that the proposed stacking model not only enhances model performance relative to single models but also improves the model’s generalization ability.

Results and discussion

Performance of base learners

The selection of base learners in the stacking model directly determines the performance of the residual strength prediction model for oil and gas pipelines. The choice of base learners should adhere to the principle of “good and diverse”23, meaning that each base learner should perform well when trained individually on the dataset, and there should be diversity among the base learners. This diversity helps the model to more accurately discover the internal features and relationships within the data, improving the model’s predictive performance and generalization ability for residual strength. By evaluating the performance of single models on the dataset, this study selected 7 models as base learners. The performance of the base learners is shown in Table 1. Figure 1 illustrates the correlation between the predicted values and the real values in the test set for the base learners. Table 1 shows that the SVR model achieves a coefficient of determination (R2) value of 0.939, exhibiting the best predictive performance among the base learners. On the other hand, the k-nearest neighbors (KNN) model demonstrates relatively poor predictive performance on the dataset, with an R2 of only 0.878. This is consistent with the results shown in Fig. 1.

Prediction results of base learners.

Performance of stacked models with varying base learners and meta-learners

Previous research has extensively used individual models similar to the base learners in this study. Many researchers have applied various optimization algorithms to these models to improve their accuracy. Unlike these traditional methods, which focus on incrementally refining a single model to enhance predictive performance, the stacking model integrates predictions from multiple base learners using a meta-learner, offering a novel perspective on predicting the residual strength of corroded pipelines. To explore the impact of the number of base learners and the type of meta-learner on the performance of the stacking model, different numbers of base learners were selected for training based on their performance rankings. The outputs of these base learners were used as input features for the meta-learner. Experiments were conducted to analyze how the number and types of base learners influence model performance. Based on the experimental results, KNN, Ridge Regression, and XGBoost were selected as meta-learners due to their superior performance in the stacking model. Performance varied with different combinations of meta-learners and base learners, as detailed in Fig. 2.

Performance of stacked models with different numbers of base learners and different meta-learners.

Figure 3 illustrates the correlation between the predicted values and the actual values in the test set for the stacking models. It is evident that the correlation is higher for the stacking model compared to the base learners, indicating superior predictive accuracy. Specifically, when the meta-learner is Ridge regression, the stacking model performs best with 3 base learners. However, as the number of base learners increases, the model’s performance gradually deteriorates. This phenomenon may be due to the fact that Ridge regression, being a linear model, can suffer from overfitting, which impacts performance on the test set. When the number of base learners is small, the model’s complexity is low, and Ridge regression can effectively capture important information without overfitting. As the number of base learners grows, the model’s complexity increases, and the limited training data may lead to overfitting of the Ridge regression model, reducing accuracy. Conversely, the performance trends of XGBoost and KNN, which improve with an increasing number of base learners, align with the characteristics of the stacking model. Each base learner provides relatively independent information, and the meta-learner integrates this data effectively, potentially improving accuracy with more base learners, under certain conditions. However, this improvement is not guaranteed. If new base learners are highly correlated with existing ones, the model may overfit due to similar predictions on the training data, thus not enhancing accuracy. Additionally, due to the limited training data for pipeline residual strength prediction, increasing the number of base learners also raises model complexity, potentially decreasing accuracy on this small dataset. Despite the best base learner achieving an R2 of 0.939, as shown in Fig. 2, the stacking model’s performance may not always surpass that of individual base learners. Without careful selection of base learners and meta-learners, the accuracy of the stacked model may not be optimal.

Prediction results of stacking models.

Generalization performance of the model

To more intuitively assess the generalization ability of the stacking model on the dataset, the R2 scores of the seven base learners and the three best-performing stacking models were compiled for both the training and test sets. Figure 4 illustrates the degree of overfitting, represented by the length of the gray lines between the two points. The three stacking models exhibit significantly lower overfitting compared to the base learners, indicating that stacking effectively reduces model overfitting and enhances generalization ability. Among these, the stacking model with KNN as the meta-learner not only achieves the highest predictive accuracy but also demonstrates superior generalization performance. Figure 5 presents the scatter plot for the Stacking-KNN model on both the training and test sets, showing that the model performs well in fitting both datasets.

Ridge-3 represents a stacking model where the meta-learner is Ridge regression combined with three base learners. KNN-7 represents a stacking model where the meta-learner is KNN combined with seven base learners. XGBoost-7 represents a stacking model where the meta-learner is XGBoost combined with seven base learners.

a Train and b Test.

To assess the model’s predictive performance across oil and gas pipelines with varying material strengths, the prediction errors of three stacking models were analyzed for pipelines of different strengths in both the training and test sets, as illustrated in Fig. 6. The models demonstrate superior predictive capabilities for high-strength pipelines, indicating excellent adaptability. However, a slight decline in predictive performance is observed for medium-strength pipelines. Notably, the models exhibit significantly poorer performance on both the training and test sets for low-strength pipelines. This discrepancy in performance is likely due to the limited quantity of data available for low-strength pipelines, with only 16 data points used in the model development. For machine learning models, this volume of data is insufficient to fully capture the characteristics and patterns associated with low-strength pipelines, resulting in constrained generalization capabilities. In future research, it will be essential to not only expand the overall dataset but also to increase the representation of pipelines with varying strengths. By enriching and diversifying the dataset, models can achieve enhanced generalization capabilities and robustness, leading to improved predictive accuracy across different conditions.

a Stacking-Ridge regression, b Stacking-XGBoost, c Stacking-KNN, d Data size. Absolute error is the difference between the predicted value and the actual value.

Impact of dataset configuration in stacking

In stacking models, incorporating original features into the training of the meta-learner is a common practice known as “passthrough”. This method involves adding the original features to the meta-learner’s training data, allowing it to simultaneously consider both the original features and the outputs of the first-layer models. To evaluate the impact of dataset configuration on model performance, comparative experiments were conducted, as shown in Fig. 7. The results indicate that employing the passthrough method or omitting it yields similar performance across different combinations of meta-learners and varying numbers of base learners. This suggests that the first-layer models have already effectively captured the essential information from the original features, and including the original features in the second-layer model does not significantly enhance performance.

a XGBoost, b KNN, c Ridge regression.

Methods

Model description

Stacking, introduced by Breiman24, has gained widespread popularity as a technique for addressing classification and regression problems25. The fundamental concept of stacking ensemble learning involves integrating the outputs of multiple base learners through a meta-learner to generate the final prediction. In a two-layer stacking ensemble model comprising \(m\) base learners and one meta-learner, the output \(Y\), given an input \(X\), is computed according to Eq. (1) (26)26.

where \(F(\cdot )\) is the meta-learner; \({B}_{{\rm{j}}}\left(X\right)\) is the output of the base learner.

The stacking model integrates the predictions of multiple individual models for secondary learning, as illustrated in Fig. 8. The selection of meta-learners and base learners is flexible, with no strict constraints. This study employed several widely used machine learning models, known for their strong predictive performance in pipeline residual strength prediction, as base learners. To further enhance the model’s generalization ability, some weaker learners were also included in the stacking ensemble. The meta-learner was selected based on the overall predictive performance achieved by combining different models and base learners. A brief description of these models is provided below:

-

Ridge regression27 is a form of linear regression that incorporates a regularization term to mitigate overfitting. By penalizing the magnitude of the coefficients, Ridge regression effectively addresses multicollinearity and stabilizes the model’s predictions. This approach is particularly beneficial in situations where features are highly correlated.

-

KNN28 is a non-parametric, instance-based learning algorithm utilized for regression tasks. KNN predicts the target value by identifying the ‘K’ nearest labeled instances to the data point being evaluated. This method is straightforward and user-friendly, requiring minimal hyperparameter tuning.

-

SVR29 is designed to address regression problems by fitting a function that approximates the actual data points as closely as possible. SVR employs a kernel trick to manage non-linear relationships, mapping input data into high-dimensional feature spaces where linear regression techniques are then applied. This approach enables SVR to effectively capture complex, non-linear patterns in data.

-

RF30 is a robust machine learning technique that excels in regression analysis by combining multiple decision trees to enhance predictive accuracy. The fundamental principle of RF involves training several decision tree models independently and then averaging their outputs to generate predictions for the test examples. Each tree is constructed using a random subset of the training data and a random subset of features, which fosters diversity among the trees and improves the model’s overall robustness.

-

ETR31 is an ensemble learning algorithm derived from decision tree methodologies. Unlike the RF model, which constructs each tree using a random subset of the data, the ETR algorithm introduces additional randomness by selecting cut points for each feature randomly rather than optimizing them. This increased randomness further diversifies the individual trees, potentially leading to more robust model predictions.

-

MLP22 is a form of artificial neural network comprising multiple layers of nodes, typically including an input layer, one or more hidden layers, and an output layer. The MLP is trained using the backpropagation algorithm, which allows it to effectively manage complex non-linear functions and high-dimensional data.

-

Light Gradient-Boosting Machine (LightGBM)32 is a gradient-boosting framework that utilizes tree-based learning algorithms. It is specifically designed for efficiency and scalability, making it well-suited for processing large datasets with high-dimensional features. LightGBM is renowned for its rapid training speed and low memory consumption, a result of its distinctive histogram-based algorithm, which effectively reduces the number of data points required for training.

-

XGBoost33 is a highly efficient and scalable implementation of the gradient-boosting framework, renowned for its robust predictive performance and adaptability. Its efficiency in handling sparse data and its capability to automatically identify feature interactions make XGBoost particularly well-suited for complex datasets with high-dimensional features.

Stacking ensemble learning framework.

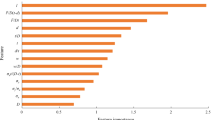

Collected data

This study utilized the publicly available dataset from literature34 for model training and validation. The dataset comprises a total of 453 data points, with 102 obtained through experiments and the remainder acquired using finite element methods. The input variables include eight features: pipe diameter (D), wall thickness (t), ultimate tensile strength (\({\sigma }_{y}\)), yield strength (\({\sigma }_{u}\)), elastic modulus (E), defect depth (d), defect length (l), and defect width (w), while the output variable is burst pressure (Bp). The statistical characteristics of the dataset are depicted in Fig. 9. Prior statistical analysis of this dataset has been documented7, offering valuable insights for this research. Building on these findings, the goal is to develop a more accurate predictive model for the residual strength of corroded oil and gas pipelines through the application of a stacking approach.

Histograms of variables included in the literature.

Data normalization and division

Z-score normalization

Figure 9 illustrates the varying scales across different features. To prevent scale differences from impacting model performance, it is essential to normalize the feature values prior to model training7. In this study, the Z-score normalization method is applied, which adjusts the data to follow a normal distribution with a mean of 0 and a standard deviation of 1. The normalized values are calculated using Eq. (2):

where \(x\) is the original data; \(\mu\) is the arithmetic mean of the data, \(\sigma\) is the standard deviation; \(Z\) is the normalized data value.

Data division

Prior to training, the dataset was randomly split into a training set (80%) and a test set (20%). The training set, comprising 362 samples, was used to develop the model, while the test set, containing 91 samples, served to evaluate the model’s performance. This randomized dataset was consistently applied across all subsequent models to ensure a fair comparison of predictive performance under uniform conditions.

Machine learning grid search

Given the involvement of multiple models in this study, two automated hyperparameter tuning libraries were utilized: Fast and Lightweight AutoML (FLAML)35 and Optuna36. These libraries were employed to identify the optimal hyperparameter combinations for each model. Due to the limited amount of data on pipeline residual strength, model performance was evaluated using 5-fold cross-validation on the validation set. In 5-fold cross-validation, the training set is divided into five parts, with four parts used for model training and the remaining part for performance evaluation. This process is repeated five times, and the average of these results is taken as the model’s performance metric. The FLAML library was applied for hyperparameter tuning of the RF, ETR, LightGBM, and XGBoost models, with a time limit of 1200 s. Meanwhile, the KNN, SVR, and MLP models were tuned using the Optuna library with 1000 iterations. The stacking model was also tuned using Optuna, with 500 iterations. The best hyperparameter combinations, optimized through this process, were subsequently saved and are presented in Table 2.

Error metrics

The accuracy of model predictions is assessed using several metrics in this study. Specifically, the R2, MSE, MAE, and MAPE are employed as the evaluation criteria for the models.

where \(i\) represents each sample; \(n\) represents the total number of samples; \({y}_{i}\) is the true value, \({p}_{i}\) is the predicted value, \(\bar{y}\) is the mean value of the samples.

Implementation platform

In this study, data analysis and experimentation were conducted using Python 3.9, leveraging libraries such as Pandas (v2.1.4), Scikit-learn (v1.5.0), NumPy (v1.26.0), FLAML (v2.1.1), and Optuna (v3.6.1). The computations were executed in a Jupyter notebook on a laptop equipped with a 2.30 GHz 12th Gen Intel Core i7-12700H processor, 16GB of RAM, and running the Windows 11 operating system.

Data availability

Some or all data, models, or codes that support the findings of this study are available from the corresponding author upon reasonable request.

References

Lu, H., Xu, Z. D., Iseley, T. & Matthews, J. C. Novel data-driven framework for predicting residual strength of corroded pipelines. J. Pipeline Syst. Eng. 12, 04021045 (2021).

Soomro, A. A. et al. Integrity assessment of corroded oil and gas pipelines using machine learning: a systematic review. Eng. Fail. Anal. 131, 105810 (2022).

Lu, H. et al. Theory and machine learning modeling for burst pressure estimation of pipeline with multipoint corrosion. J. Pipeline Syst. Eng. 14, 04023022 (2023).

Yuan, J. et al. Leak detection and localization techniques in oil and gas pipeline: a bibliometric and systematic review. Eng. Fail. Anal. 146, 107060 (2023).

Chen, Z. et al. Residual strength prediction of corroded pipelines using multilayer perceptron and modified feedforward neural network. Reliab. Eng. Syst. Safe 231, 108980 (2023).

Lu, H., Xi, D. & Qin, G. Environmental risk of oil pipeline accidents. Sci. Total Environ. 874, 162386 (2023).

Lu, H., Iseley, T., Matthews, J., Liao, W. & Azimi, M. An ensemble model based on relevance vector machine and multi-objective salp swarm algorithm for predicting burst pressure of corroded pipelines. J. Petrol. Sci. Eng. 203, 108585 (2021).

Zhou, R., Gu, X. & Luo, X. Residual strength prediction of X80 steel pipelines containing group corrosion defects. Ocean Eng. 274, 114077 (2023).

Cross, C. S. Manual for Determining the Remaining Strength of Corroded Pipelines Supplement to ASME B31 Code for Pressure Piping (ASME B31G, 2012).

Feng, L., Huang, D., Chen, X., Shi, H. & Wang, S. Residual ultimate strength investigation of offshore pipeline with pitting corrosion. Appl. Ocean Res. 117, 102869 (2021).

Miao, X. & Zhao, H. Novel method for residual strength prediction of defective pipelines based on HTLBO-DELM model. Reliab. Eng. Syst. Safe 237, 109369 (2023).

Shuai, Y., Shuai, J. & Xu, K. Probabilistic analysis of corroded pipelines based on a new failure pressure model. Eng. Fail. Anal. 81, 216–233 (2017).

Arumugam, T. & Karuppanan, S. Finite element analyses of corroded pipeline with single defect subjected to internal pressure and axial compressive stress. Mar. Struct. 72, 102746 (2020).

Zhang, Y. et al. A novel assessment method to identifying the interaction between adjacent corrosion defects and its effect on the burst capacity of pipelines. Ocean Eng. 281, 114842 (2023).

Vijaya Kumar, S. D., Karuppanan, S. & Ovinis, M. Failure pressure prediction of high toughness pipeline with a single corrosion defect subjected to combined loadings using artificial neural network (ANN). Metals11, 373 (2021).

Hoxha, J., Çodur, M. Y., Mustafaraj, E., Kanj, H. & El Masri, A. Prediction of transportation energy demand in Türkiye using stacking ensemble models: methodology and comparative analysis. Appl. Energ. 350, 121765 (2023).

Li, Q. & Song, Z. Prediction of compressive strength of rice husk ash concrete based on stacking ensemble learning model. J. Clean Prod. 382, 135279 (2023).

Ma, H. et al. A new hybrid approach model for predicting burst pressure of corroded pipelines of gas and oil. Eng. Fail. Anal. 149, 107248 (2023).

Liu, W., Chen, Z. & Hu, Y. XGBoost algorithm-based prediction of safety assessment for pipelines. Int. J. Pres. Ves. Pip. 197, 104655 (2022).

Shafighfard, T., Bagherzadeh, F., Rizi, R. A. & Yoo, D. Y. Data-driven compressive strength prediction of steel fiber reinforced concrete (SFRC) subjected to elevated temperatures using stacked machine learning algorithms. J. Mater. Res. Technol. 21, 3777–3794 (2022).

Go, C. et al. On developing accurate prediction models for residual tensile strength of GFRP bars under alkaline-concrete environment using a combined ensemble machine learning methods. Case Stud. Constr. Mat. 18, e02157 (2023).

Cao, Y., Liu, G., Sun, J., Bavirisetti, D. P. & Xiao, G. PSO-Stacking improved ensemble model for campus building energy consumption forecasting based on priority feature selection. J. Build. Eng. 72, 106589 (2023).

Sesmero, M. P. & Iglesias, J. A. Impact of the learners diversity and combination method on the generation of heterogeneous classifier ensembles. Appl. Soft Comput. 111, 107689 (2021).

Breiman, L. Stacked regressions. Mach. Learn. 24, 49–64 (1996).

Hajihosseinlou, M., Maghsoudi, A. & Ghezelbash, R. Stacking: a novel data-driven ensemble machine learning strategy for prediction and mapping of Pb-Zn prospectivity in Varcheh district, west Iran. Expert Syst. Appl. 237, 121668 (2024).

Jiang, H. et al. Quality classification of stored wheat based on evidence reasoning rule and stacking ensemble learning. Comput. Electron. Agr. 214, 108339 (2023).

Hoerl, A. E. & Kennard, R. W. Ridge regression: biased estimation for nonorthogonal problems. Technometrics 12, 55–67 (1970).

Cover, T. & Hart, P. Nearest neighbor pattern classification. IEEE Trans. Inform. Theory 13, 21–27 (1967).

Goliatt, L., Saporetti, C. M. & Pereira, E. Super learner approach to predict total organic carbon using stacking machine learning models based on well logs. Fuel 353, 128682 (2023).

Breiman, L. Random Forests. Mach. Learn. 45, 5–32 (2001).

Geurts, P., Ernst, D. & Wehenkel, L. Extremely randomized trees. Mach. Learn. 63, 3–42 (2006).

Ke, G. et al. LightGBM: a highly efficient gradient boosting decision tree. In: Advances in Neural Information Processing Systems. Vol. 30 (Curran Associates, Inc., 2017).

Chen, T. & Guestrin, C. XGBoost: a scalable tree boosting system. In Proc. of the 22nd ACM SIGKDD International Conference on Knowledge Discovery and Data Mining. 785–794 (Association for Computing Machinery, New York, NY, USA, 2016).

Amaya-Gómez, R., Munoz Giraldo, F., Schoefs, F., Bastidas-Arteaga, E., & Sanchez-Silva, M. Recollected Burst Tests of Experimental and FEM Corroded Pipelines. Mendeley Data, v1 (Elsevier, 2019).

Wang, C., Wu, Q., Weimer, M. & Zhu, E. Flaml: a fast and lightweight automl library. Proc. Mach. Learn. Res. 3, 434–447 (2021).

Akiba, T. et al. Optuna: A next-generation hyperparameter optimization framework. In Proc. of the 25th ACM SIGKDD International Conference on Knowledge Discovery & Data Mining. 2623–2631 (Association for Computing Machinery, New York, NY, USA, 2019).

Acknowledgements

This article is funded by the National Natural Science Foundation of China (No. 52402421) and the Natural Science Foundation of Jiangsu Province (Grant No. BK20220848).

Author information

Authors and Affiliations

Contributions

Qiankun Wang: conceptualization, methodology, data curation, writing—original draft, Hongfang Lu: conceptualization, writing—reviewing and editing.

Corresponding author

Ethics declarations

Competing interests

The authors declare no competing interests.

Additional information

Publisher’s note Springer Nature remains neutral with regard to jurisdictional claims in published maps and institutional affiliations.

Rights and permissions

Open Access This article is licensed under a Creative Commons Attribution 4.0 International License, which permits use, sharing, adaptation, distribution and reproduction in any medium or format, as long as you give appropriate credit to the original author(s) and the source, provide a link to the Creative Commons licence, and indicate if changes were made. The images or other third party material in this article are included in the article’s Creative Commons licence, unless indicated otherwise in a credit line to the material. If material is not included in the article’s Creative Commons licence and your intended use is not permitted by statutory regulation or exceeds the permitted use, you will need to obtain permission directly from the copyright holder. To view a copy of this licence, visit http://creativecommons.org/licenses/by/4.0/.

About this article

Cite this article

Wang, Q., Lu, H. A novel stacking ensemble learner for predicting residual strength of corroded pipelines. npj Mater Degrad 8, 87 (2024). https://doi.org/10.1038/s41529-024-00508-z

Received:

Accepted:

Published:

Version of record:

DOI: https://doi.org/10.1038/s41529-024-00508-z

This article is cited by

-

Advancing LightGBM with data augmentation for predicting the residual strength of corroded pipelines

npj Materials Degradation (2025)

-

Machine learning improves detection of alpha thalassemia carriers compared to clinical features

Scientific Reports (2025)

-

Machine learning methods for predicting residual strength in corroded oil and gas steel pipes

npj Materials Degradation (2025)

-

Intelligent prediction of residual strength in blended hydrogen–natural gas pipelines with crack-in-dent defects

npj Materials Degradation (2025)

-

Ensemble learning for enhancing critical infrastructure resilience to urban flooding

Scientific Reports (2025)