Abstract

Published claims should be reproducible, yielding the same result when the same analysis is applied to the same data (refs. 1,2). Here we assess reproducibility in a stratified random sample of 600 papers published from 2009 to 2018 in 62 journals spanning the social and behavioural sciences. The authors of 144 (24.0%, 95% confidence interval (CI) = 20.8–27.6%) papers made data available to assess reproducibility and, for 38 others, we obtained source data to reconstruct the dataset. We assessed 143 out of the 182 available datasets and found that 76.6 (53.6%, 95% CI = 45.8–60.7%) papers were rated as precisely reproducible and 105.0 (73.5%, 95% CI = 66.4–80.0%) were rated as at least approximately reproducible (within 15% of the original effects or within 0.05 of original P values) after inverse weighting each of the 551 claims by the number of claims per paper. We observed higher reproducibility for papers from political science and economics compared with other fields, for more recent papers compared with older papers and for papers from journals that require data sharing. Implementation of measures to verify that research is reproducible is needed to support trustworthiness in the complex enterprise of knowledge production (refs. 3,4).
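The weighting and the approximate-match thresholds described above can be illustrated with a minimal Python sketch. It is not the authors' analysis code: the function names, the toy claims and the exact-match tolerance used for "precise" are hypothetical, and the Wilson score interval is an assumed method, because the abstract does not state how its intervals were computed. The sketch shows why paper counts can be fractional: each claim carries a weight of 1 divided by the number of claims in its paper.

```python
from collections import Counter
from math import isclose, sqrt


def classify_claim(orig_effect, new_effect, orig_p, new_p,
                   exact_tol=1e-9, effect_tol=0.15, p_tol=0.05):
    """Return 'precise', 'approximate' or 'not_reproduced' for one claim."""
    # Assumption: "precise" means effect and P value both match up to exact_tol.
    if (isclose(new_effect, orig_effect, rel_tol=0.0, abs_tol=exact_tol)
            and isclose(new_p, orig_p, rel_tol=0.0, abs_tol=exact_tol)):
        return "precise"
    # Thresholds from the abstract: within 15% of the original effect,
    # or within 0.05 of the original P value.
    effect_ok = abs(new_effect - orig_effect) <= effect_tol * abs(orig_effect)
    p_ok = abs(new_p - orig_p) <= p_tol
    return "approximate" if (effect_ok or p_ok) else "not_reproduced"


def weighted_paper_counts(claims):
    """claims: iterable of (paper_id, label) pairs.

    Each claim is weighted by 1 / (number of claims in its paper), so every
    paper contributes at most 1 to each total.
    Returns (precise, at_least_approximate).
    """
    claims = list(claims)
    per_paper = Counter(paper_id for paper_id, _ in claims)
    precise = sum(1 / per_paper[pid] for pid, label in claims
                  if label == "precise")
    at_least_approx = sum(1 / per_paper[pid] for pid, label in claims
                          if label in ("precise", "approximate"))
    return precise, at_least_approx


def wilson_interval(successes, n, z=1.959964):
    """Wilson score interval for a proportion (assumed method, see lead-in)."""
    p = successes / n
    denom = 1 + z * z / n
    centre = (p + z * z / (2 * n)) / denom
    half = z * sqrt(p * (1 - p) / n + z * z / (4 * n * n)) / denom
    return centre - half, centre + half


# Toy data (invented): paper A has four claims, B has one, C has two.
toy = [("A", "precise"), ("A", "precise"), ("A", "precise"), ("A", "approximate"),
       ("B", "not_reproduced"),
       ("C", "precise"), ("C", "not_reproduced")]

precise, at_least_approx = weighted_paper_counts(toy)
print(precise, at_least_approx)

low, high = wilson_interval(144, 600)
print(f"{100 * low:.1f}% to {100 * high:.1f}%")
```

In the toy data, paper A contributes 0.75 to the precise total (three of its four claims), paper B contributes nothing and paper C contributes 0.5, giving 1.25 papers out of 3. Under the assumed Wilson method, 144 of 600 gives an interval of roughly 20.8% to 27.6%; the sketch makes no attempt to reproduce the intervals reported for the weighted estimates.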

Data availability

Data, materials and code associated with this research that can be shared without restriction are publicly available in a living OSF repository (https://doi.org/10.17605/osf.io/ed8pj). The living OSF repository reflects improvements, fixes and additions made after publication. Readers can also access a registered, archived version of this repository that contains precisely the data, code and documentation as they existed on publication of this paper (https://doi.org/10.17605/osf.io/kmvst). The repository includes all available documentation for reproduction attempts, regardless of whether they were completed. This includes most of the data and code from the individual reproduction attempts, save for proprietary or protected data, which will not be made available, and secondary data for which analyst teams were uncertain, or unable to confirm, that sharing was allowed. It is possible that some data, materials or code that could be shared openly are not available at the time of publication. Readers are encouraged to contact the corresponding author or the authors of the relevant subproject (Supplementary Table 2) to see if more research content can be shared in the living repository. This paper is part of a collection of papers reporting on the SCORE program. Documentation, data and code for the entire program are available at the OSF (https://doi.org/10.17605/osf.io/dtzx4).

Code availability

Code for individual reproduction projects is available alongside data and materials for each project in the OSF repository (https://doi.org/10.17605/osf.io/ed8pj). This includes a push button package with all code and data used to produce all statistics, figures and tables, and code that populates them directly into the manuscript from a template. A registered, archived version of the repository containing precisely the data, code and documentation used to generate the outcomes reported in this paper is also available at OSF (https://doi.org/10.17605/osf.io/kmvst).
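As a rough illustration of what populating a manuscript from a template can look like, the following Python sketch fills one sentence from computed values. It is an assumption-laden toy, not the package in the repository: the template text, the placeholder names and the `render` helper are hypothetical, and only the counts 144 and 600 come from the abstract.

```python
from string import Template

# Hypothetical manuscript fragment; the placeholder names are invented here.
TEMPLATE = Template(
    "The authors of $n_shared ($pct_shared%) papers made data available."
)


def render(stats):
    """Fill the template from computed statistics.

    Template.substitute raises KeyError if a placeholder has no value,
    which keeps a missing statistic from slipping into the text unnoticed.
    """
    return TEMPLATE.substitute(stats)


# Counts reported in the abstract; the percentage is derived, not typed in.
n_papers, n_shared = 600, 144
stats = {
    "n_shared": n_shared,
    "pct_shared": f"{100 * n_shared / n_papers:.1f}",
}

sentence = render(stats)
print(sentence)
```

Deriving every reported number from the data at render time, rather than typing it into the text, is what keeps the manuscript and the analysis from drifting apart.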

References

Dreber, A. & Johannesson, M. A framework for evaluating reproducibility and replicability in economics. Econ. Inq. 63, 338–356 (2025).

National Academies of Sciences, Engineering and Medicine. Reproducibility and Replicability in Science (The National Academies Press, 2019).

Culina, A., van den Berg, I., Evans, S. & Sánchez-Tójar, A. Low availability of code in ecology: a call for urgent action. PLoS Biol. 18, e3000763 (2020).

Stodden, V., Seiler, J. & Ma, Z. An empirical analysis of journal policy effectiveness for computational reproducibility. Proc. Natl Acad. Sci. USA 115, 2584–2589 (2018).

Chang, A. C. & Li, P. Is economics research replicable? Sixty published papers from thirteen journals say “often not”. Crit. Finance Rev. 11, 185–206 (2022).

McCullough, B. D., McGeary, K. A. & Harrison, T. D. Do economics journal archives promote replicable research? Can. J. Econ. 41, 1406–1420 (2008).

Vilhuber, L. Reproducibility and replicability in economics. Harv. Data Sci. Rev. 2, 4 (2020).

Brodeur, A. et al. Mass Reproducibility and Replicability: A New Hope No. 107, I4R Discussion Paper Series (The Institute for Replication, 2024).

Pérignon, C. et al. Computational reproducibility in finance: evidence from 1,000 tests. Rev. Financ. Stud. 37, 3558–3593 (2024).

Laurinavichyute, A., Yadav, H. & Vasishth, S. Share the code, not just the data: a case study of the reproducibility of articles published in the Journal of Memory and Language under the open data policy. J. Mem. Lang. 125, 104332 (2022).

Wang, S. V., Sreedhara, S. K. & Schneeweiss, S. Reproducibility of real-world evidence studies using clinical practice data to inform regulatory and coverage decisions. Nat. Commun. 13, 5126 (2022).

Minocher, R., Atmaca, S., Bavero, C., McElreath, R. & Beheim, B. Estimating the reproducibility of social learning research published between 1955 and 2018. R. Soc. Open Sci. 8, 210450 (2021).

Hardwicke, T. E. et al. Data availability, reusability, and analytic reproducibility: evaluating the impact of a mandatory open data policy at the journal Cognition. R. Soc. Open Sci. 5, 180448 (2018).

Eubank, N. Lessons from a decade of replications at the Quarterly Journal of Political Science. PS Polit. Sci. Polit. 49, 273–276 (2016).

Trisovic, A., Lau, M. K., Pasquier, T. & Crosas, M. A large-scale study on research code quality and execution. Sci. Data 9, 60 (2022).

Roche, D. G., Kruuk, L. E. B., Lanfear, R. & Binning, S. A. Public data archiving in ecology and evolution: how well are we doing? PLoS Biol. 13, e1002295 (2015).

Roche, D. G. et al. Slow improvement to the archiving quality of open datasets shared by researchers in ecology and evolution. Proc. R. Soc. B Biol. Sci. 289, 20212780 (2022).

Stockemer, D., Koehler, S. & Lentz, T. Data access, transparency, and replication: new insights from the political behavior literature. PS Polit. Sci. Polit. 51, 799–803 (2018).

Brodeur, A. et al. Promoting reproducibility and replicability in political science. Res. Polit. https://doi.org/10.1177/20531680241233439 (2024).

Breznau, N. et al. The reliability of replications: a study in computational reproductions. R. Soc. Open Sci. 12, 241038 (2025).

Errington, T. M., Denis, A., Perfito, N., Iorns, E. & Nosek, B. A. Challenges for assessing replicability in preclinical cancer biology. eLife 10, e67995 (2021).

Gabelica, M., Bojčić, R. & Puljak, L. Many researchers were not compliant with their published data sharing statement: a mixed-methods study. J. Clin. Epidemiol. 150, 33–41 (2022).

Khan, N., Thelwall, M. & Kousha, K. Data sharing and reuse practices: disciplinary differences and improvements needed. Online Inf. Rev. 47, 1036–1064 (2023).

Tedersoo, L. et al. Data sharing practices and data availability upon request differ across scientific disciplines. Sci. Data 8, 192 (2021).

Alipourfard, N. et al. Systematizing confidence in open research and evidence (SCORE). Preprint at OSF https://doi.org/10.31235/osf.io/46mnb (2021).

Vines, T. H. et al. The availability of research data declines rapidly with article age. Curr. Biol. 24, 94–97 (2014).

Abatayo, A. L. et al. Assessments of credibility in the social and behavioural sciences. Preprint at MetaArXiv https://doi.org/10.31222/osf.io/7u58q_v1 (2026).

Wood, B. D. K., Müller, R. & Brown, A. N. Push button replication: is impact evaluation evidence for international development verifiable? PLoS ONE 13, e0209416 (2018).

Vilhuber, L. & Cavanagh, J. Report of the AEA data editor. AEA Pap. Proc. 115, 944–957 (2025).

Fišar, M., Greiner, B., Huber, C., Katok, E. & Ozkes, A. I. Reproducibility in management science. Manag. Sci. 70, 1343–1356 (2024).

Huntington-Klein, N. et al. The influence of hidden researcher decisions in applied microeconomics. Econ. Inq. 59, 944–960 (2021).

Silberzahn, R. et al. Many analysts, one data set: making transparent how variations in analytic choices affect results. Adv. Methods Pract. Psychol. Sci. 1, 337–356 (2018).

Aczel, B. et al. Investigating the analytical robustness of the social and behavioural sciences. Nature https://doi.org/10.1038/s41586-025-09844-9 (2026).

Clemens, M. A. The meaning of failed replications: a review and proposal. J. Econ. Surv. 31, 326–342 (2017).

Brown, A. W., Kaiser, K. A. & Allison, D. B. Issues with data and analyses: errors, underlying themes, and potential solutions. Proc. Natl Acad. Sci. USA 115, 2563–2570 (2018).

Gelman, A. & Carlin, J. Beyond power calculations: assessing type S (Sign) and type M (magnitude) errors. Perspect. Psychol. Sci. 9, 641–651 (2014).

Simmons, J. P., Nelson, L. D. & Simonsohn, U. False-positive psychology: undisclosed flexibility in data collection and analysis allows presenting anything as significant. Psychol. Sci. 22, 1359–1366 (2011).

Munafò, M. R. et al. A manifesto for reproducible science. Nat. Hum. Behav. 1, 0021 (2017).

Cummins, J. The threat of analytic flexibility in using large language models to simulate human data: a call to attention. Preprint at arXiv https://doi.org/10.48550/arXiv.2509.13397 (2025).

Nuijten, M. B., Bakker, M., Maassen, E. & Wicherts, J. M. Verify original results through reanalysis before replicating. Behav. Brain Sci. 41, e143 (2018).

Weissgerber, T. L. et al. Understanding the provenance and quality of methods is essential for responsible reuse of FAIR data. Nat. Med. 30, 1220–1221 (2024).

Nosek, B. A. et al. Promoting an open research culture. Science 348, 1422–1425 (2015).

Pérignon, C., Gadouche, K., Hurlin, C., Silberman, R. & Debonnel, E. Certify reproducibility with confidential data. Science 365, 127–128 (2019).

Vilhuber, L. Report of the AEA data editor. AEA Pap. Proc. 114, 878–890 (2024).

Morehouse, K. N., Kurdi, B. & Nosek, B. A. Responsible data sharing: identifying and remedying possible re-identification of human participants. Am. Psychol. 80, 928–941 (2025).

Nab, L. et al. OpenSAFELY: a platform for analysing electronic health records designed for reproducible research. Pharmacoepidemiol. Drug Saf. 33, e5815 (2024).

Quintana, D. S. A synthetic dataset primer for the biobehavioural sciences to promote reproducibility and hypothesis generation. eLife 9, e53275 (2020).

Boedihardjo, M., Strohmer, T. & Vershynin, R. Covariance’s loss is privacy’s gain: computationally efficient, private and accurate synthetic data. Found. Comput. Math. 24, 179–226 (2024).

Khan, A. R. & Kabir, E. Resampling methods for generating continuous multivariate synthetic data for disclosure control. J. Data Inf. Manag. 3, 225–235 (2021).

H2020 Programme: Guidelines on FAIR Data Management in Horizon 2020 (European Commission, 2016).

Landi, A. et al. The “A” of FAIR—as open as possible, as closed as necessary. Data Intell. 2, 47–55 (2020).

Tyner, A. H. et al. Investigating the replicability of the social and behavioural sciences. Nature https://doi.org/10.1038/s41586-025-10078-y (2026).

Acknowledgements

We thank B. Arendt, A. Denis, S. Field, Z. Loomas, B. Luis, L. Markham, E. S. Parsons, C. Soderberg and A. Russell for their contributions to this project. This work was supported by the Defense Advanced Research Projects Agency (DARPA) under cooperative agreement numbers N660011924015 (principal investigator, B.A.N.) and HR00112020015 (principal investigator, T.M.E.). The views, opinions, findings, and conclusions or recommendations expressed in this material are those of the authors and should not be interpreted as representing the official views or policies of the Department of Defense or the US Government.

Author information

Contributions

Conceptualization: M.K.S., A.H.T., W.J.C., E.G.D.B., K.E.L., B.A.N. and T.M.E. Data curation: A.L.A., A.H.T., S. Anafinova, K.M.E., D.G. and J.W.L. Formal analysis: M.K.S., A.H.T., M.A., S. Alzahawi, S. Anafinova, E. Axxe, A.B., F.B., Z.B., F.S.B., T.F.B., T. Capitán, L.C., K.J.C., W.J.C., G.-J.C., T. Coupé, J.D., T.R.E., N. Fiala, J.F., V.G., J. Gereke, I.H.G., D.G., N.G., P.H.P.H., S.H., S.D.H., K.I., K. Jankowsky, P.K., M.K., D.K., K.E.L., J.C.L., C.L., A.-C.L., R.L.-N., N.M.L., M. Maier, D.J.M., M. Martončik, N.M., E.M., D.C.M., F.M., C.N., J.O., T.O., A.O., R.P., Y.G.P., Z.P., N.P., N.D.P., M.P., M.R., A.T.R., W.R.R., J.P.R., I.R., A.O.S., K.S., L.S., E.L.S., S. Shaki, A. Somo, F.S., A. Szabelska, A.T., K.U., P.V.D., D.T.H.V., V.V., K.W., A.L.W., J.R.W., F. Wintermantel and N.Z. Funding acquisition: B.A.N. and T.M.E. Investigation: O.M., A.L.A., M. Daley, N. Fox, K.M.H., M.K.S., B.M., A.H.T., M.A., S. Alzahawi, E. Axxe, J.B., G.B., F.B., Z.B., T.F.B., N.B., S.C., T. Capitán, K.J.C., W.J.C., T. Coupé, J.C., E.G.D.B., J.D., T.R.E., N. Fiala, J.F., V.G., J. Gereke, I.H.G., P.H.P.H., S.H., S.D.H., N.H.-K., K. Jankowsky, M.K., C.L., A.-C.L., R.L.-N., D.J.M., M. Martončik, J.M., D.C.M., E.O., J.O., T.O., Y.G.P., Z.P., N.P., N.D.P., M.P., A.P., A.T.R., W.R.R., J.P.R., I.R., A.O.S., K.S., L.S., E.L.S., A. Soh, A. Somo, J.W.S., A. Szabelska, A.T., M.V.T., K.U., P.V.D., D.T.H.V., V.V., K.W., A.L.W., J.R.W., F. Winter, N.Z. and T.M.E. Methodology: O.M., A.L.A., N. Fox, M.K.S., B.M., P.S., A.H.T., M.A., S. Alzahawi, E. Axxe, J.B., F.B., Z.B., T.F.B., N.B., S.C., T. Capitán, W.J.C., T. Coupé, K.M.E., T.R.E., N. Fiala, J.F., V.G., J. Gereke, I.H.G., P.H.P.H., S.D.H., K. Jankowsky, K.E.L., J.C.L., C.L., A.-C.L., R.L.-N., M. Maier, D.J.M., D.C.M., C.N., A.L.N., J.O., Y.G.P., Z.P., N.D.P., M.P., M.R., A.T.R., J.P.R., I.R., A.O.S., K.S., L.S., E.L.S., S. Shaki, S. Shakya, A. Somo, F.S., J.W.S., A. Szabelska, A.T., D.T.H.V., K.W., A.L.W., J.R.W., B.A.N. and T.M.E. 
Project administration: O.M., A.H.T., B.A.N. and T.M.E. Software: A.L.A., M.K.S., T.S., A.H.T., S. Alzahawi, E. Axxe, J.B., A.B., F.B., T.F.B., N.B., T. Coupé, T.R.E., J.F., V.G., J. Geng, I.H.G., D.G., P.H.P.H., S.D.H., K. Jankowsky, P.K., D.K., K.E.L., C.L., A.-C.L., R.L.-N., D.J.M., N.M., D.C.M., F.M., T.O., A.O., Y.G.P., Z.P., N.D.P., M.P., A.P., M.R., J.P.R., I.R., A.O.S., L.S., E.L.S., S. Shakya, A. Somo, F.S., J.W.S., A.T., M.V.T., D.T.H.V., V.V., K.W., A.L.W., F. Winter and F. Wintermantel. Supervision: O.M., K.M.H., M.K.S., A.H.T., B.N.B., T. Capitán, W.J.C., E.G.D.B., K.M.E., N. Fiala, I.H.G., A.G.-K., K. Jonas, J.W.L., R.E.L., G.N., J.O., N.D.P., W.R.R., J.P.R., S. Shaki, F. Wintermantel, C.Z., B.A.N. and T.M.E. Validation: O.M., A.L.A., N.H., P.S., A.H.T., S. Anafinova, E. Awtrey, E. Axxe, J.B., B.N.B., H.B., N.B., T. Capitán, L.C., W.J.C., J.C., E.G.D.B., K.M.E., I.H.G., A.G.-K., D.G., N.H.-K., K. Jonas, H.K., S.K., K.E.L., C.L., J.W.L., R.E.L., M. Maier, M.C.M., C.N., A.L.N., G.N., J.O., T.O., N.P., K.P., N.D.P., A.T.R., J.P.R., L.S., S. Shaki, E.S., A. Szabelska, A.T., K.U., E.J.V., V.V., F. Wintermantel, I.Z., C.Z., Z.Z. and T.M.E. Visualization: N.H., B.M., A.H.T., N.B., M.R., S. Shaki and B.A.N. Original draft: O.M., B.M., A.H.T., S. Anafinova, W.J.C., N. Fiala, K.E.L., J.O., M.P., S. Shaki, V.V., B.A.N. and T.M.E. Review and editing: O.M., M. Daley, M. Dirzo, B.M., P.S., A.H.T., S. Anafinova, B.N.B., G.B., N.B., W.J.C., J.C., E.G.D.B., N. Fiala, J.F., D.G., P.H.P.H., K. Jankowsky, P.K., S.K., L.L., K.E.L., R.L.-N., N.M.L., M. Maier, M. Martončik, C.N., T.O., R.P., Y.G.P., Z.P., N.P., M.P., A.T.R., J.P.R., I.R., L.S., E.L.S., S. Shaki, S. Shakya, E.S., J.W.S., V.V., K.W., A.L.W., B.A.N. and T.M.E.

Ethics declarations

Competing interests

A.H.T., M. Daley, N.H., K.M.H., O.M., T.S., B.A.N. and T.M.E. are employees of the non-profit organization Center for Open Science that has a mission to increase openness, integrity and trustworthiness of research.

Peer review

Peer review information

Nature thanks Alfredo Sánchez-Tójar and the other, anonymous, reviewer(s) for their contribution to the peer review of this work. Peer reviewer reports are available.

Additional information

Publisher’s note: Springer Nature remains neutral with regard to jurisdictional claims in published maps and institutional affiliations.

Extended data figures and tables

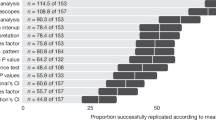

Extended Data Fig. 1 Data availability rates by 12 subfields.

The left panel shows data and code availability as a percentage of papers; the right panel shows raw counts of papers with data and code available and not available. Note that purple reflects restricted data, which did not count as available data, but might be accessible in principle. This is presented as Fig. S6 in the Supporting Information with additional narrative context.

Extended Data Fig. 2 Data availability rates by year of publication for all fields.

Smallest sample sizes per cell were in education (n from 6 to 7 per year). Note that purple reflects restricted data, which did not count as available data, but might be accessible in principle. This is presented as Fig. S7 in the Supporting Information with additional narrative context.

Extended Data Fig. 3 Data availability rates by year of publication for 12 subfields.

Smallest sample sizes per cell were in criminology and public administration, each having an n of 2 per year. Note that purple reflects restricted data, which did not count as available data, but might be accessible in principle. This is presented as Fig. S8 in the Supporting Information with additional narrative context.

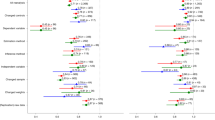

Extended Data Fig. 4 Reproducibility by whether data and code were available, only data were available, or when the paper’s data were reconstructed from available source data for all claims.

Reproducibility success rates as a percentage of attempts (left), and reproducibility success rates as counts (right). This is presented as Fig. S1 in the Supporting Information with additional narrative context.

Extended Data Fig. 5 Reproducibility by year of publication for all claims.

The left column illustrates the proportion of outcome reproduction attempts from the sample of claims. The middle column illustrates reproducibility as a percentage of the attempts. The right column illustrates reproducibility as counts compared with the sample of claims. This is presented as Fig. S2 in the Supporting Information with additional narrative context.

Extended Data Fig. 6 Reproducibility by field for all claims.

The left column illustrates the proportion of outcome reproduction attempts from the sample of claims. The middle column illustrates reproducibility as a percentage of the attempts. The right column illustrates reproducibility as counts compared with the sample of claims. This is presented as Fig. S3 in the Supporting Information with additional narrative context.

Extended Data Fig. 7 Reproducibility by field and year by paper as a proportion of the sample.

Reproducibility as a percentage of the sample of papers from each year and each field. This is presented as Fig. S4 in the Supporting Information with additional narrative context.

Extended Data Fig. 8 Reproducibility by field and year by claim as a proportion of the sample.

Reproducibility as a percentage of the sample of claims from each year and each field. This is presented as Fig. S5 in the Supporting Information with additional narrative context.

Extended Data Fig. 9 Reproducibility by 12 subfields.

The left column illustrates the proportion of outcome reproduction attempts from the sample of papers. The middle column illustrates reproducibility as a percentage of the attempts. The right column illustrates reproducibility as counts compared with the sample of papers. This is presented as Fig. S9 in the Supporting Information with additional narrative context.

Extended Data Fig. 10 Reproducibility by 12 subfields and by year.

Reproducibility as a percentage of the sample of papers from each year and each field. This is presented as Fig. S10 in the Supporting Information with additional narrative context.

Supplementary information

Supplementary Data

Data, code and manuscript reproducibility package.

Rights and permissions

Springer Nature or its licensor (e.g. a society or other partner) holds exclusive rights to this article under a publishing agreement with the author(s) or other rightsholder(s); author self-archiving of the accepted manuscript version of this article is solely governed by the terms of such publishing agreement and applicable law.

About this article

Cite this article

Miske, O., Abatayo, A.L., Daley, M. et al. Investigating the reproducibility of the social and behavioural sciences. Nature 652, 126–134 (2026). https://doi.org/10.1038/s41586-026-10203-5

DOI: https://doi.org/10.1038/s41586-026-10203-5