Abstract

Contemporary pose estimation methods enable precise measurements of behavior via supervised deep learning with hand-labeled video frames. Although effective in many cases, the supervised approach requires extensive labeling and often produces outputs that are unreliable for downstream analyses. Here, we introduce ‘Lightning Pose’, an efficient pose estimation package with three algorithmic contributions. First, in addition to training on a few labeled video frames, we use many unlabeled videos and penalize the network whenever its predictions violate motion continuity, multiple-view geometry and posture plausibility (semi-supervised learning). Second, we introduce a network architecture that resolves occlusions by predicting pose on any given frame using surrounding unlabeled frames. Third, we refine the pose predictions post hoc by combining ensembling and Kalman smoothing. Together, these components render pose trajectories more accurate and scientifically usable. We released a cloud application that allows users to label data, train networks and process new videos directly from the browser.

This is a preview of subscription content, access via your institution

Access options

Access Nature and 54 other Nature Portfolio journals

Get Nature+, our best-value online-access subscription

$32.99 / 30 days

cancel any time

Subscribe to this journal

Receive 12 print issues and online access

$259.00 per year

only $21.58 per issue

Buy this article

- Purchase on SpringerLink

- Instant access to the full article PDF.

USD 39.95

Prices may be subject to local taxes which are calculated during checkout

Similar content being viewed by others

Data availability

All labeled data used in this paper are publicly available.

mirror-mouse https://doi.org/10.6084/m9.figshare.24993315.v1 (ref. 71)

mirror-fish https://doi.org/10.6084/m9.figshare.24993363.v1 (ref. 72)

CRIM13 https://doi.org/10.6084/m9.figshare.24993384.v1 (ref. 73)

All of the model predictions on labeled frames and unlabeled videos are available via Figshare at https://doi.org/10.6084/m9.figshare.25412248.v2 (ref. 74). These results, along with the labeled data, can be used to reproduce the main figures of the paper.

To access the IBL data analyzed in Fig. 6 and Extended Data Fig. 7, see the documentation at https://int-brain-lab.github.io/ONE/FAQ.html#how-do-i-download-the-datasets-cache-for-a-specific-ibl-paper-release and use the tag 2023_Q1_Biderman_Whiteway_et_al. This will provide access to spike-sorted neural activity, trial timing variables (stimulus onset, feedback delivery and so on), the original IBL DeepLabCut traces and the raw videos.

Code availability

The code for Lightning Pose is available at https://github.com/danbider/lightning-pose/ under the MIT license. The repository also contains a Google Colab tutorial notebook that trains a model, forms predictions on videos and visualizes the results. From the command-line interface, running pip install lightning-pose will install the latest release of Lightning Pose via the Python Package Index (PyPI).

The code for the EKS is available at https://github.com/paninski-lab/eks/ under the MIT license. The repository contains the core EKS code as well as scripts demonstrating how to use the code on several example datasets.

The code for the cloud-hosted application is available at https://github.com/Lightning-Universe/Pose-app/ under the Apache-2.0 license. This code enables launching our app locally or on cloud resources by creating a Lightning.ai account.

Code for reproducing the figures in the main text is available at https://github.com/themattinthehatt/lightning-pose-2024-nat-methods/ under the MIT license. This repository also includes a script for downloading all required data from the proper repositories.

The hardware and software used for IBL video collection is described elsewhere41. The protocols used in the mirror-mouse and mirror-fish datasets (both have the same video acquisition pipeline) are also described elsewhere14.

We used the following packages in our data analysis: CUDA toolkit (12.1.0), cuDNN (8.5.0.96), deeplabcut (2.3.5 for runtime benchmarking, 2.2.3 for everything else), ffmpeg (3.4.11), fiftyone (0.23.4), h5py (3.9.0), hydra-core (1.3.2), ibllib (2.32.3), imgaug (0.4.0), kaleido (0.2.1), kornia (0.6.12), lightning (2.1.0), lightning-pose (1.0.0), matplotlib (3.7.5), moviepy (1.0.3), numpy (1.24.4), nvidia-dali-cuda120 (1.28.0), opencv-python (4.9.0.80), pandas (2.0.3), pillow (9.5.0), plotly (5.15.0), scikit-learn (1.3.0), scipy (1.10.1), seaborn (0.12.2), streamlit (1.31.1), tensorboard (2.13.0) and torchvision (0.15.2).

References

Krakauer, J. W., Ghazanfar, A. A., Gomez-Marin, A., MacIver, M. A. & Poeppel, D. Neuroscience needs behavior: correcting a reductionist bias. Neuron 93, 480–490 (2017).

Branson, K., Robie, A. A., Bender, J., Perona, P. & Dickinson, M. H. High-throughput ethomics in large groups of Drosophila. Nat. Methods 6, 451–457 (2009).

Berman, G. J., Choi, D. M., Bialek, W. & Shaevitz, J. W. Mapping the stereotyped behaviour of freely moving fruit flies. J. Royal Soc. Interface 11, 20140672 (2014).

Wiltschko, A. B. et al. Mapping sub-second structure in mouse behavior. Neuron 88, 1121–1135 (2015).

Wiltschko, A. B. et al. Revealing the structure of pharmacobehavioral space through motion sequencing. Nat. Neurosci. 23, 1433–1443 (2020).

Luxem, K. et al. Identifying behavioral structure from deep variational embeddings of animal motion. Commun. Biol. 5, 1267 (2022).

Mathis, A. et al. Deeplabcut: markerless pose estimation of user-defined body parts with deep learning. Nat. Neurosci. 21, 1281–1289 (2018).

Pereira, T. D. et al. Fast animal pose estimation using deep neural networks. Nat. Methods 16, 117–125 (2019).

Graving, J. M. et al. Deepposekit, a software toolkit for fast and robust animal pose estimation using deep learning. Elife 8, e47994 (2019).

Dunn, T. W. et al. Geometric deep learning enables 3D kinematic profiling across species and environments. Nat. Methods 18, 564–573 (2021).

Chen, Z. et al. Alphatracker: a multi-animal tracking and behavioral analysis tool. Front. Behav. Neurosci. 17, 1111908 (2023).

Jones, J. M. et al. A machine-vision approach for automated pain measurement at millisecond timescales. Elife 9, e57258 (2020).

Padilla-Coreano, N. et al. Cortical ensembles orchestrate social competition through hypothalamic outputs. Nature 603, 667–671 (2022).

Warren, R. A. et al. A rapid whisker-based decision underlying skilled locomotion in mice. Elife 10, e63596 (2021).

Hsu, A. I. & Yttri, E. A. B-SOiD, an open-source unsupervised algorithm for identification and fast prediction of behaviors. Nat. Commun. 12, 5188 (2021).

Pereira, T. D. et al. Sleap: a deep learning system for multi-animal pose tracking. Nat. Methods 19, 486–495 (2022).

Weinreb, C. et al. Keypoint-MoSeq: parsing behavior by linking point tracking to pose dynamics. Preprint at bioRxiv https://doi.org/10.1101/2023.03.16.532307 (2023).

Karashchuk, P. et al. Anipose: a toolkit for robust markerless 3D pose estimation. Cell Rep. 36, 109730 (2021).

Monsees, A. et al. Estimation of skeletal kinematics in freely moving rodents. Nat. Methods 19, 1500–1509 (2022).

Rodgers, C. C. A detailed behavioral, videographic, and neural dataset on object recognition in mice. Sci. Data 9, 620 (2022).

Chapelle, O., Schölkopf, B. & Zien, A. (eds) Semi-Supervised Learning (The MIT Press, 2006).

Lakshminarayanan, B., Pritzel, A. & Blundell, C. Simple and scalable predictive uncertainty estimation using deep ensembles. in Advances in Neural Information Processing Systems vol. 30 (eds Guyon, I. et al.) (Curran Associates, 2017).

Abe, T. et al. Neuroscience cloud analysis as a service: An open-source platform for scalable, reproducible data analysis. Neuron 110, 2771–2789 (2022).

Falcon, W. et al. Pytorchlightning/pytorch-lightning: 0.7.6 release. Zenodo https://doi.org/10.5281/zenodo.3828935 (2020).

Recht, B., Roelofs, R., Schmidt, L. & Shankar, V. Do imagenet classifiers generalize to imagenet? In International Conference on Machine Learning, 5389–5400 (PMLR, 2019).

Tran, D. et al. Plex: Towards reliability using pretrained large model extensions. Preprint at https://arxiv.org/abs/2207.07411 (2022).

Burgos-Artizzu, X. P., Dollár, P., Lin, D., Anderson, D. J. & Perona, P. Social behavior recognition in continuous video. In 2012 IEEE Conference on Computer Vision and Pattern Recognition, 1322–1329 (IEEE, 2012).

Segalin, C. et al. The mouse action recognition system (mars) software pipeline for automated analysis of social behaviors in mice. Elife 10, e63720 (2021).

IBL. Data release - Brainwide map - Q4 2022 (2023). Figshare https://doi.org/10.6084/m9.figshare.21400815.v6 (2022).

Desai, N. et al. Openapepose, a database of annotated ape photographs for pose estimation. Elife 12, RP86873 (2023).

Syeda, A. et al. Facemap: a framework for modeling neural activity based on orofacial tracking. Nat. Neurosci. 27, 187–195 (2024).

Spelke, E. S. Principles of object perception. Cogn. Sci. 14, 29–56 (1990).

Wu, A. et al. Deep graph pose: a semi-supervised deep graphical model for improved animal pose tracking. in Advances in Neural Information Processing Systems (eds Larochelle, H. et al.) 6040–6052 (2020).

Nath, T. et al. Using deeplabcut for 3D markerless pose estimation across species and behaviors. Nat. Protoc. 14, 2152–2176 (2019).

Zhang, Y. & Park, H. S. Multiview supervision by registration. In Proceedings of the IEEE/CVF Winter Conference on Applications of Computer Vision, 420–428 (2020).

He, Y., Yan, R., Fragkiadaki, K. & Yu, S.-I. Epipolar transformers. In Proceedings of the IEEE/CVF Conference on Computer Vision and Pattern Recognition, 7779–7788 (2020).

Hartley, R. & Zisserman, A. Multiple View Geometry in Computer Vision (Cambridge University Press, 2003).

Bialek, W. On the dimensionality of behavior. Proc. Natl Acad. Sci. uSA 119, e2021860119 (2022).

Stephens, G. J., Johnson-Kerner, B., Bialek, W. & Ryu, W. S. From modes to movement in the behavior of caenorhabditis elegans. PloS ONE 5, e13914 (2010).

Yan, Y., Goodman, J. M., Moore, D. D., Solla, S. A. & Bensmaia, S. J. Unexpected complexity of everyday manual behaviors. Nat. Commun. 11, 3564 (2020).

IBL. Video hardware and software for the international brain laboratory. Figshare https://doi.org/10.6084/m9.figshare.19694452.v1 (2022).

Li, T., Severson, K. S., Wang, F. & Dunn, T. W. Improved 3Dd markerless mouse pose estimation using temporal semi-supervision. Int. J. Comput. Vis. 131, 1389–1405 (2023).

Beluch, W. H., Genewein, T., Nürnberger, A. & Köhler, J. M. The power of ensembles for active learning in image classification. In Proceedings of the IEEE Conference on Computer Vision and Pattern Recognition, 9368–9377 (2018).

Abe, T., Buchanan, E. K., Pleiss, G., Zemel, R. & Cunningham, J. P. Deep ensembles work, but are they necessary? in Advances in Neural Information Processing Systems 35, 33646–33660 (2022).

Bishop, C. M. & Nasrabadi, N. M. Pattern Recognition and Machine Learning, vol. 4 (Springer, 2006).

Yu, H. et al. AP-10K: a benchmark for animal pose estimation in the wild. Preprint at https://arxiv.org/abs/2108.12617 (2021).

Ye, S. et al. SuperAnimal models pretrained for plug-and-play analysis of animal behavior. Preprint at https://arxiv.org/abs/2203.07436 (2022).

Zheng, C. et al. Deep learning-based human pose estimation: a survey. ACM Computing Surveys 56, 1–37 (2023).

Lin, T. -Y. et al. Microsoft coco: common objects in context. In Computer Vision–ECCV 2014: 13th European Conference, Zurich, Switzerland, September 6–12, 2014, Proceedings. Vol. 8693, 740–755 (Springer, 2014).

Ionescu, C., Papava, D., Olaru, V. & Sminchisescu, C. Human3. 6M: large scale datasets and predictive methods for 3D human sensing in natural environments. IEEE Trans. Pattern Anal. Mach. Intell. 36, 1325–1339 (2013).

Loper, M., Mahmood, N., Romero, J., Pons-Moll, G. & Black, M. J. SMPL: a skinned multi-person linear model. In Seminal Graphics Papers: Pushing the Boundaries. Vol. 2, 851–866 (2023).

Marshall, J. D., Li, T., Wu, J. H. & Dunn, T. W. Leaving flatland: advances in 3D behavioral measurement. Curr. Opin. Neurobiol. 73, 102522 (2022).

Günel, S. et al. DeepFly3D, a deep learning-based approach for 3D limb and appendage tracking in tethered, adult Drosophila. Elife 8, e48571 (2019).

Sun, J. J. et al. BKinD-3D: self-supervised 3D keypoint discovery from multi-view videos. In Proceedings of the IEEE/CVF Conference on Computer Vision and Pattern Recognition, 9001–9010 (2023).

Bala, P. C. et al. Automated markerless pose estimation in freely moving macaques with openmonkeystudio. Nat. Commun. 11, 4560 (2020).

Hinton, G., Vinyals, O. & Dean, J. Distilling the knowledge in a neural network. Preprint at https://arxiv.org/abs/1503.02531 (2015).

Lauer, J. et al. Multi-animal pose estimation, identification and tracking with deeplabcut. Nat. Meth. 19, 496–504 (2022).

Chettih, S. N., Mackevicius, E. L., Hale, S. & Aronov, D. Barcoding of episodic memories in the hippocampus of a food-caching bird. Cell 187, 1922–1935 (2024).

IBLet al. Standardized and reproducible measurement of decision-making in mice. Elife 10, e63711 (2021).

IBL et al. Reproducibility of in vivo electrophysiological measurements in mice. Preprint at bioRxiv https://doi.org/10.1101/2022.05.09.491042 (2022).

Paszke, A. et al. Pytorch: An imperative style, high-performance deep learning library. in Advances in Neural Information Processing Systems 32, 8024–8035 (2019).

Jafarian, Y., Yao, Y. & Park, H. S. MONET: multiview semi-supervised keypoint via epipolar divergence. Preprint at https://arxiv.org/abs/1806.00104 (2018).

Tresch, M. C. & Jarc, A. The case for and against muscle synergies. Curr. Opin. Neurobiol. 19, 601–607 (2009).

Stephens, G. J., Johnson-Kerner, B., Bialek, W. & Ryu, W. S. Dimensionality and dynamics in the behavior of C. elegans. PLoS Comput. Biol. 4, e1000028 (2008).

Kingma, D. P. & Ba, J. Adam: A method for stochastic optimization. Preprint at https://arxiv.org/abs/1412.6980 (2014).

Virtanen, P. et al. Scipy 1.0: fundamental algorithms for scientific computing in Python. Nat. Methods 17, 261–272 (2020).

IBL et al. A brain-wide map of neural activity during complex behaviour. Preprint at bioRxiv https://doi.org/10.1101/2023.07.04.547681 (2023).

Pedregosa, F. et al. Scikit-learn: machine learning in Python. J. Mach. Learn. Res. 12, 2825–2830 (2011).

Zolnouri, M., Li, X. & Nia, V. P. Importance of data loading pipeline in training deep neural networks. Preprint at https://arxiv.org/abs/2005.02130 (2020).

Yadan, O. Hydra - a framework for elegantly configuring complex applications. Github https://github.com/facebookresearch/hydra (2019).

Whiteway, M, Biderman, D., Warren, R., Zhang, Q. & Sawtell, N. B. Lightning Pose dataset: mirror-mouse. Figshare https://doi.org/10.6084/m9.figshare.24993315.v1 (2024).

Whiteway, M. et al. Lightning Pose dataset: mirror-fish. Figshare https://doi.org/10.6084/m9.figshare.24993363.v1 (2024).

Whiteway, M. & Biderman, D. Lightning Pose dataset: CRIM13. Figshare https://doi.org/10.6084/m9.figshare.24993384.v1 (2024).

Whiteway, M. & Biderman, D. Lightning Pose results: Nature Methods 2024. Figshare https://doi.org/10.6084/m9.figshare.25412248.v2 (2024).

Acknowledgements

We thank P. Dayan and N. Steinmetz for serving on our IBL-paper board, as well as two anonymous reviewers whose detailed comments considerably strengthened our paper. We are grateful to N. Biderman for productive discussions and help with visualization. We thank M. Carandini and J. Portes for helpful comments; T. Abe, K. Buchanan and G. Pleiss for helpful discussions on ensembling; and H. Xiang for conversations on active learning and outlier detection. We thank W. Falcon, L. Antiga, T. Chaton and A. Wälchi (Lightning AI) for their technical support and advice on implementing our package and the cloud application. This work was supported by the following grants: Gatsby Charitable Foundation GAT3708 (to D.B., M.R.W., C.H., N.R.G., A.V., J.P.C. and L.P.), German National Academy of Sciences Leopoldina (to A.E.U.), Irma T Hirschl Trust (to N.B.S.), Netherlands Organisation for Scientific Research (VI.Veni.212.184; to A.E.U.), NSF IOS-2115007 (to N.B.S.), National Institutes of Health (NIH) K99NS128075 (to J.P.N.), NIH NS075023 (to N.B.S.), NIH NS118448 (to N.B.S.), NIH DK131086-02 (to N.B.S.), NIH U19NS123716 (to M.R.W.) and NSF 1707398 (to D.B., M.R.W., C.H., N.R.G., A.V., J.P.C. and L.P.), funds provided by the National Science Foundation and by DoD OUSD (R&E) under Cooperative Agreement PHY-2229929, The NSF AI Institute for Artificial and Natural Intelligence (to D.B., M.R.W., C.H., A.V., J.P.C. and L.P.), Simons Foundation (to M.R.W., M.M.S., J.M.H., A.K., G.T.M., J.P.N., A.P.V. and K.Z.S.) and the Wellcome Trust 216324 (M.M.S., J.M.H., A.K., G.T.M., J.P.N., A.P.V. and K.Z.S.). The funders had no role in study design, data collection and analysis, decision to publish or preparation of the manuscript.

Author information

Authors and Affiliations

Consortia

Contributions

Conceptualization: D.B., M.R.W. and L.P.; software package—core development: D.B., M.R.W. and N.R.G.; software package—contribution: C.H. and A.V.; cloud application—development: M.R.W., D.B., R.L. and A.V.; first draft—writing: D.B., M.R.W. and L.P.; first draft—editing: D.B., M.R.W., C.H. and L.P.; data collection: D.B., M.S., J.M.H., A.K., G.T.M., J.P.N., A.P.V., K.Z.S., A.E.U., R.W., D.N. and F.P.; Funding—J.P.C., N.S. and L.P.; semi-supervised learning algorithms: D.B., M.R.W., N.R.G. and L.P.; deep ensembling: D.B., M.R.W., C.H. and L.P.; EKS: C.H. and L.P.; TCN: C.H., D.B., M.R.W. and L.P.; diagnostic tools and visualization: D.B., M.R.W. and A.V.; neural network experiments and analysis: D.B. and M.R.W.

Corresponding authors

Ethics declarations

Competing interests

R.S.L. assisted in the initial development of the cloud application as a solution architect at Lightning AI in Spring/Summer 2022. R.S.L. left the company in August 2022 and continues to hold shares. The remaining authors declare no competing interests.

Peer review

Peer review information

Nature Methods thanks the anonymous reviewers for their contribution to the peer review of this work. Primary Handling Editor: Nina Vogt, in collaboration with the Nature Methods team. Peer reviewer reports are available.

Additional information

Publisher’s note Springer Nature remains neutral with regard to jurisdictional claims in published maps and institutional affiliations.

Extended data

Extended Data Fig. 1 Unsupervised losses complement model confidence for outlier detection on mirror-fish dataset.

Example traces, unsupervised metrics, and predictions from a DeepLabCut model (trained on 354 frames) on held-out videos. Conventions for panels A-D as in Fig. 3. A: Example frame sequence. B: Example traces from the same video. C: Total number of keypoints flagged as outliers by each metric, and their overlap. D: Area under the receiver operating characteristic curve for several body parts. We define a ‘true outlier’ to be frames where the horizontal displacement between top and bottom predictions or the vertical displacement between top and right predictions exceeds 20 pixels. AUROC values are only shown for the three body parts that have corresponding keypoints across all three views included in the Pose PCA computation (many keypoints are excluded from the Pose PCA subspace due to many missing hand labels). AUROC values are computed across frames from 10 test videos; boxplot variability is over n=5 random subsets of training data. The same subset of keypoints is used for panel C. Boxes in panel D use 25th/50th/75th percentiles for min/center/max; whiskers extend to 1.5 * IQR.

Extended Data Fig. 2 Unsupervised losses complement model confidence for outlier detection on CRIM13 dataset.

Example traces, unsupervised metrics, and predictions from a DeepLabCut model (trained on 800 frames) on held-out videos. Conventions for panels A-C as in Fig. 3. A: Example frame sequence. B: Example traces from the same video. Because the size of CRIM13 frames are larger than those of the mirror-mouse and mirror-fish datasets, we use a threshold of 50 pixels instead of 20 to define outliers through the unsupervised losses. C: Total number of keypoints flagged as outliers by each metric, and their overlap. Outliers are collected from predictions across frames from 18 test videos and across predictions from five different networks trained on random subsets of labeled data.

Extended Data Fig. 3 PCA-derived losses drive most improvements in semi-supervised models.

For each model type we train three networks with different random seeds controlling the data presentation order. The models train on 75 labeled frames and unlabeled videos. We plot the mean pixel error and 95% CI across keypoints and OOD frames, as a function of ensemble standard deviation, as in Fig. 4. At the 100% vertical line, n=17150 keypoints for mirror-mouse, n=18180 for mirror-fish, and n=89180 for CRIM13.

Extended Data Fig. 4 Unlabeled frames improve pose estimation in mirror-fish dataset.

Conventions as in Fig. 4. A. Example traces from the baseline model and the semi-supervised TCN model (trained with 75 labeled frames) for a single keypoint on a held-out video (Supplementary Video 6). B. A sequence of frames corresponding to the grey shaded region in panel (A). C. Pixel error as a function of ensemble standard devation for scarce (top) and abundant (bottom) labeling regimes. D. Individual unsupervised loss terms plotted as a function of ensemble standard deviation for the scarce (top) and abundant (bottom) label regimes.

Extended Data Fig. 5 Unlabeled frames improve pose estimation in CRIM13 dataset.

Conventions as in Fig. 4. A. Example traces from the baseline model and the semi-supervised TCN model (trained with 800 labeled frames) for a single keypoint on a held-out video (Supplementary Video 7). B. A sequence of frames corresponding to the grey shaded region in panel (A). C. Pixel error as a function of ensemble standard deviation for scarce (top) and abundant (bottom) labeling regimes. D. Individual unsupervised loss terms plotted as a function of ensemble standard deviation for the scarce (top) and abundant (bottom) labeling regimes.

Extended Data Fig. 6 The Ensemble Kalman Smoother improves pose estimation across datasets.

We trained an ensemble of five semi-supervised TCN models on the same training data. The networks differed in the order of data presentation and in the random weight initializations for their ‘head’. This figure complements Fig. 5 which uses an ensemble of DeepLabCut models as input to EKS. A. Mean OOD pixel error over frames and keypoints as a function of ensemble standard deviation (as in Fig. 4). B. Time series of predictions (x and y coordinates on top and bottom, respectively) from the five individual semi-supervised TCN models (75 labeled training frames; blue lines) and EKS-temporal (brown lines). Ground truth labels are shown as green dots. C,D. Identical to A,B but for the mirror-fish dataset. E,F. Identical to A,B but for the CRIM13 dataset.

Extended Data Fig. 7 Lightning Pose models and ensemble smoothing improve pose estimation on IBL paw data.

A. Sample frames from each camera view overlaid with a subset of paw markers estimated from DeepLabCut (left), Lightning Pose using a semi-supervised TCN model (center), and a 5-member ensemble using semi-supervised TCN models (right). B. Example left view frames from a subset of 44 IBL sessions. C. The empirical distribution of the right paw position from each view projected onto the 1D subspace of maximal correlation in a canonical correlation analysis (CCA). Column arrangement as in A. D. Correlation in the CCA subspace is computed across n=44 sessions for each model and paw. The LP+EKS model has a correlation of 1.0 by construction. E. Median right paw speed plotted across correct trials aligned to first movement onset of the wheel; error bars show 95% confidence interval across n=273 trials. The same trial consistency metric from Fig. 6 is computed. F. Trial consistency computed across n=44 sessions. G. Example traces of Kalman smoothed right paw speed (blue) and predictions from neural activity (orange) for several trials using cross-validated, regularized linear regression. H. Neural decoding performance across n=44 sessions. Panels D, F, and H use a one-sided Wilcoxon signed-rank test; boxes use 25th/50th/75th percentiles for min/center/max; whiskers extend to 1.5 * IQR. See Supplementary Table 2 for further quantification of boxes.

Extended Data Fig. 8 Lightning Pose enables easy model development, fast training, and is accessible via a cloud application.

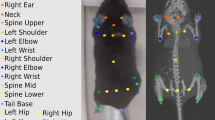

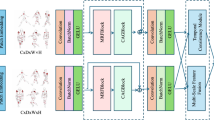

A. Our software package outsources many tasks to existing tools within the deep learning ecosystem, resulting in a lighter, modular package that is easy to maintain and extend. The innermost purple box indicates the core components: accelerated video reading (via NVIDIA DALI), modular network design, and our general-purpose loss factory. The middle purple box denotes the training and logging operations which we outsource to PyTorch Lightning, and the outermost purple box denotes our use of the Hydra job manager. The right box depicts a rich set of interactive diagnostic metrics which are served via Streamlit and FiftyOne GUIs. B. A diagram of our cloud application. The application’s critical components are dataset curation, parallel model training, interactive performance diagnostics, and parallel prediction of new videos. C. Screenshots from our cloud application. From left to right: LabelStudio GUI for frame labeling, TensorFlow monitoring of training performance overlaying two different networks, FiftyOne GUI for comparing these two networks’ predictions on a video, and a Streamlit application that shows these two networks’ time series of predictions, confidences, and spatiotemporal constraint violations.

Supplementary information

Supplementary Information (download PDF )

Supplementary Methods, Tables 1–4 and Figs. 1–10.

Supplementary Video 2 (download MP4 )

Selected frames with high Pose PCA errors, mirror-mouse dataset. DLC model trained with 631 labeled frames. See Supplementary Fig. 6 for a detailed caption.

Supplementary Video 3 (download MP4 )

Selected frames with high Pose PCA errors, mirror-fish dataset. DLC model trained with 354 labeled frames. See Supplementary Fig. 6 for a detailed caption.

Supplementary Video 4 (download MP4 )

Selected frames with high Pose PCA errors, CRIM13 dataset. DLC model trained with 800 labeled frames. See Supplementary Fig. 6 for a detailed caption.

Supplementary Video 11 (download MP4 )

EKS predictions (multi-view PCA), mirror-fish dataset. See Supplementary Fig. 7 for a detailed caption.

Supplementary Video 12 (download MP4 )

EKS predictions (temporal), CRIM13 dataset. See Supplementary Fig. 7 for a detailed caption.

Rights and permissions

Springer Nature or its licensor (e.g. a society or other partner) holds exclusive rights to this article under a publishing agreement with the author(s) or other rightsholder(s); author self-archiving of the accepted manuscript version of this article is solely governed by the terms of such publishing agreement and applicable law.

About this article

Cite this article

Biderman, D., Whiteway, M.R., Hurwitz, C. et al. Lightning Pose: improved animal pose estimation via semi-supervised learning, Bayesian ensembling and cloud-native open-source tools. Nat Methods 21, 1316–1328 (2024). https://doi.org/10.1038/s41592-024-02319-1

Received:

Accepted:

Published:

Version of record:

Issue date:

DOI: https://doi.org/10.1038/s41592-024-02319-1

This article is cited by

-

FidlTrack: high-fidelity structure-aware single particle tracking resolves intracellular molecular motion in organelles sensing APP processing

Nature Communications (2026)

-

Design of a Private Cloud Platform for Distributed Logging Big Data Based on a Unified Learning Model of Physics and Data

Applied Geophysics (2025)

-

Brain-wide representations of prior information in mouse decision-making

Nature (2025)